Generative grammar

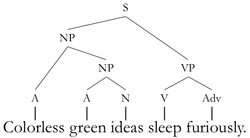

Generative grammar is a research tradition in linguistics that aims to explain the cognitive basis of language by formulating and testing explicit models of humans' subconscious grammatical knowledge. Generative linguists tend to share certain working assumptions such as the competence–performance distinction and the notion that some domain-specific aspects of grammar are partly innate in humans. These assumptions are often rejected in non-generative approaches such as usage-based models of language. Generative linguistics includes work in core areas such as syntax, semantics, phonology, psycholinguistics, and language acquisition, with additional extensions to topics including biolinguistics and music cognition.

Generative grammar began in the late 1950s with the work of Noam Chomsky, having roots in earlier approaches such as structural linguistics. The earliest version of Chomsky's model was called Transformational grammar, with subsequent iterations known as Government and binding theory and the Minimalist program. Other present-day generative models include Optimality theory, Categorial grammar, and Tree-adjoining grammar.

Generative grammar is an umbrella term for a variety of approaches to linguistics. What unites these approaches is the goal of uncovering the cognitive basis of language by formulating and testing explicit models of humans' subconscious grammatical knowledge.

Generative grammar studies language as part of cognitive science. Thus, research in the generative tradition involves formulating and testing hypotheses about the mental processes that allow humans to use language.

Like other approaches in linguistics, generative grammar engages in linguistic description rather than linguistic prescription.

Generative grammar proposes models of language consisting of explicit rule systems, which make testable, falsifiable predictions. This differs from traditional grammar, where grammatical patterns are often described more loosely. These models are intended to be parsimonious, capturing generalizations in the data with as few rules as possible. As a result, empirical research in generative linguistics often seeks to identify commonalities between phenomena, and theoretical research seeks to provide them with unified explanations. For example, Paul Postal observed that English imperative tag questions obey the same restrictions that second person future declarative tags do, and proposed that the two constructions are derived from the same underlying structure. This hypothesis was able to explain the restrictions on tags using a single rule.

Particular theories within generative grammar have been expressed using a variety of formal systems, many of which are modifications or extensions of context-free grammars.
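As a rough illustration of what an explicit rule system looks like, the sketch below encodes a tiny context-free grammar and enumerates every sentence it generates. The grammar, the vocabulary, and the names `GRAMMAR` and `expand` are hypothetical and chosen only for this example; they are not drawn from any particular generative theory.

```python
from itertools import product

# Hypothetical toy grammar: each nonterminal maps to its possible
# right-hand sides. Any symbol that is not a key is a terminal (a word).
GRAMMAR = {
    "S": [["NP", "VP"]],
    "NP": [["Det", "N"]],
    "VP": [["V", "NP"], ["V"]],
    "Det": [["the"]],
    "N": [["linguist"], ["grammar"]],
    "V": [["studies"], ["sleeps"]],
}


def expand(symbol):
    """Return every word sequence (as a tuple) derivable from `symbol`.

    This terminates only because the toy grammar has no recursive rules.
    """
    if symbol not in GRAMMAR:  # terminal symbol: it derives only itself
        return [(symbol,)]
    results = []
    for rhs in GRAMMAR[symbol]:
        # Expand each right-hand-side symbol, then combine the pieces
        # in every possible way while preserving their order.
        parts = [expand(s) for s in rhs]
        for combo in product(*parts):
            results.append(tuple(word for part in combo for word in part))
    return results


sentences = [" ".join(words) for words in expand("S")]

if __name__ == "__main__":
    for sentence in sentences:
        print(sentence)
```

Because the rules are explicit, the grammar's predictions can be checked directly. It generates "the linguist studies the grammar", but it also generates "the linguist sleeps the grammar", the kind of overgeneration that would prompt a revision of the rules.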

Generative grammar generally distinguishes between linguistic competence and linguistic performance. Competence is the collection of subconscious rules that one knows when one knows a language; performance is the system that puts these rules to use. This distinction is related to the broader notion of Marr's levels used in other cognitive sciences, with competence corresponding to Marr's computational level.
