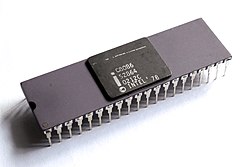

Intel 8086

The 8086 (also called iAPX 86) is a 16-bit microprocessor chip released by Intel on June 8, 1978, after development began in early 1976. It was followed in 1979 by the Intel 8088, a slightly modified chip with an external 8-bit data bus, which allowed fewer and cheaper supporting ICs to be used.

The 8086 gave rise to the x86 architecture, which eventually became Intel's most successful line of processors. On June 5, 2018, Intel released a limited-edition CPU celebrating the 40th anniversary of the Intel 8086, called the Intel Core i7-8086K.

In 1972, Intel launched the 8008, its first 8-bit microprocessor. It implemented an instruction set designed by Datapoint Corporation with programmable CRT terminals in mind, which also proved to be fairly general-purpose. The device needed several additional ICs to produce a functional computer, partly because it was packaged in a small 18-pin "memory package" that ruled out a separate address bus (Intel was primarily a DRAM manufacturer at the time).

Two years later, Intel launched the 8080, which used the new 40-pin DIL packages originally developed for calculator ICs to enable a separate address bus. Its extended instruction set was source-compatible (but not binary-compatible) with the 8008 and also included some 16-bit instructions to make programming easier. The 8080 was eventually replaced by the depletion-load-based 8085 (1977), which used a single +5 V power supply instead of the three different operating voltages of earlier chips. Other well-known 8-bit microprocessors that emerged during these years include the Motorola 6800 (1974), General Instrument PIC16X (1975), MOS Technology 6502 (1975), Zilog Z80 (1976), and Motorola 6809 (1978).

The 8086 project started in May 1976 and was originally intended as a temporary substitute for the ambitious and delayed iAPX 432 project. It was also an attempt to draw attention away from the less-delayed 16-bit and 32-bit processors of other manufacturers (Motorola, Zilog, and National Semiconductor).

Although the 8086 was a 16-bit microprocessor, its architecture was similar to that of Intel's 8-bit microprocessors (8008, 8080, and 8085), which allowed assembly language programs written for the 8-bit chips to be migrated with little rewriting. New instructions and features were added, such as signed integers, base+offset addressing, and self-repeating operations. Instructions were also added to help compile nested functions in ALGOL-family languages such as Pascal and PL/M; according to principal architect Stephen P. Morse, this reflected a more software-centric design approach. Other enhancements included microcoded multiply and divide instructions. The designers also anticipated coprocessors such as the 8087 and 8089, so the bus structure was designed to be flexible.
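Base+offset addressing and self-repeating operations can be illustrated with a small Python sketch. On the 8086, an operand's effective address can be formed by adding a base register (BX or BP), an index register (SI or DI), and a displacement, with the result wrapping at 16 bits. The sketch assumes that "self-repeating operations" refers to the REP-prefixed string instructions such as `REP MOVSB`, which repeat a byte copy while decrementing the count register CX. For simplicity it uses one flat 64 KiB memory instead of the 8086's separate DS and ES segments and assumes the direction flag is clear. The names `effective_address` and `rep_movsb` and the register dictionary are hypothetical illustrations, not part of any real emulator.

```python
MASK16 = 0xFFFF  # 8086 registers and offsets are 16 bits wide


def effective_address(base: int = 0, index: int = 0, disp: int = 0) -> int:
    """Base + index + displacement, wrapped to 16 bits like an 8086 offset."""
    return (base + index + disp) & MASK16


def rep_movsb(regs: dict, memory: bytearray) -> None:
    """Copy regs["CX"] bytes from offset SI to offset DI, one byte per step.

    Hypothetical simplification: one flat 64 KiB memory stands in for the
    DS:SI source and ES:DI destination, and the direction flag is assumed
    clear, so SI and DI increment after each byte.
    """
    while regs["CX"] != 0:  # REP stops when CX reaches zero
        memory[regs["DI"]] = memory[regs["SI"]]
        regs["SI"] = (regs["SI"] + 1) & MASK16
        regs["DI"] = (regs["DI"] + 1) & MASK16
        regs["CX"] = (regs["CX"] - 1) & MASK16


mem = bytearray(0x10000)  # 64 KiB, the reach of one 16-bit offset
mem[0x100:0x105] = b"HELLO"
regs = {"SI": 0x100, "DI": 0x200, "CX": 5}
rep_movsb(regs, mem)
print(bytes(mem[0x200:0x205]))  # b'HELLO'
print(hex(effective_address(base=0x1000, index=0x20, disp=4)))  # 0x1024
print(hex(effective_address(base=0xFFFF, disp=2)))  # 0x1 (wraps around)
```

The copy is modelled byte by byte, so one prefixed instruction stands in for what an 8-bit program would write as an explicit loop with its own counter and branch.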

The first revision of the instruction set and high-level architecture was ready after about three months. Because almost no CAD tools were used, four engineers and 12 layout people worked on the chip simultaneously. The 8086 took a little more than two years from idea to working product, which was considered fast for a complex design in the 1970s.

The 8086 was sequenced using a mixture of random logic and microcode and was implemented in depletion-load nMOS circuitry with approximately 20,000 active transistors (29,000 counting all ROM and PLA sites). It was soon moved to a refined nMOS manufacturing process called HMOS (High-performance MOS), which Intel had originally developed for fast static RAM products. This was followed by HMOS-II and HMOS-III versions and, eventually, a fully static CMOS version for battery-powered devices, manufactured using Intel's CHMOS processes. The original chip measured 33 mm², and its minimum feature size was 3.2 μm. Because the MUL and DIV instructions were microcoded, they were slow, so programmers often replaced multiplication and division by constants (particularly powers of two) with shift and add instructions.
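Replacing MUL and DIV with shifts can be sketched in Python, modelling 16-bit registers by masking with `0xFFFF`. Multiplying by 10 becomes `(x << 3) + (x << 1)`, and unsigned division by a power of two becomes a logical right shift (like `SHR`). The function names are hypothetical, and this illustrates the arithmetic only; it is not a cycle-accurate model of the 8086.

```python
MASK16 = 0xFFFF  # model 16-bit registers such as AX


def mul10_shift_add(x: int) -> int:
    """Compute x * 10 as (x << 3) + (x << 1), truncated to 16 bits."""
    x &= MASK16
    return ((x << 3) + (x << 1)) & MASK16


def udiv_pow2(x: int, k: int) -> int:
    """Unsigned x // 2**k via a logical right shift (like SHR)."""
    return (x & MASK16) >> k


def to_signed16(x: int) -> int:
    """Interpret a 16-bit pattern as a two's-complement signed value."""
    x &= MASK16
    return x - 0x10000 if x & 0x8000 else x


def sar16(x: int, k: int) -> int:
    """Arithmetic right shift of a 16-bit value (like SAR); rounds toward -infinity."""
    return to_signed16(x) >> k


print(mul10_shift_add(1234))  # 12340
print(mul10_shift_add(7000))  # 4464, since 70000 wraps modulo 65536
print(udiv_pow2(1000, 3))     # 125
print(sar16(-7 & MASK16, 1))  # -4
```

Shifts are not a drop-in replacement for signed division: an arithmetic right shift rounds toward negative infinity, while `IDIV` truncates toward zero. The results therefore differ when a negative dividend is not an exact multiple of the divisor: `sar16(-7 & 0xFFFF, 1)` gives -4, whereas -7 divided by 2 with truncation gives -3.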
