Content moderation

Content moderation, in the context of websites that facilitate user-generated content, is the systematic process of identifying, reducing, or removing user contributions that are irrelevant, obscene, illegal, harmful, or insulting. This process may involve either direct removal of problematic content or the application of warning labels to flagged material. As an alternative approach, platforms may enable users to independently block and filter content based on their preferences. This practice operates within the broader domain of trust and safety frameworks.

Many types of Internet sites permit user-generated content such as posts, comments, and videos. These include Internet forums, blogs, and news sites powered by software such as phpBB, a wiki, or PHP-Nuke. Depending on the site's content and intended audience, the site's administrators decide what kinds of user comments are appropriate, then delegate the responsibility of sifting through comments to subordinate moderators. Most often, moderators attempt to eliminate trolling, spamming, or flaming, although this varies widely from site to site.

Major platforms use a combination of algorithmic tools, user reporting, and human review. Social media sites may also employ content moderators to manually flag or remove content reported for hate speech, incivility, or other objectionable material. Other content issues include revenge porn, graphic content, child abuse material, and propaganda. Some websites must also make their content hospitable to advertisements.

In the United States, content moderation is governed by Section 230 of the Communications Decency Act, and several cases concerning the issue have reached the United States Supreme Court, such as Moody v. NetChoice, LLC.

Content moderation can result in a range of outcomes, including blocking and visibility moderation such as shadow banning.
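The difference between these outcomes can be sketched as a visibility check. This is a minimal illustration, not any platform's actual logic: the `Visibility` states, the `Post` type, and the `visible_to` function are hypothetical names, and the sketch assumes one common reading of shadow banning, in which the author still sees their own post while other users do not.

```python
from dataclasses import dataclass
from enum import Enum


class Visibility(Enum):
    """Hypothetical moderation outcomes for a single post."""
    PUBLIC = "public"
    SHADOW_BANNED = "shadow_banned"
    BLOCKED = "blocked"


@dataclass
class Post:
    author: str
    text: str
    visibility: Visibility = Visibility.PUBLIC


def visible_to(post: Post, viewer: str) -> bool:
    """Return True if `viewer` should be shown `post`."""
    if post.visibility is Visibility.BLOCKED:
        # Blocked content is hidden from everyone, including its author.
        return False
    if post.visibility is Visibility.SHADOW_BANNED:
        # Assumption: a shadow-banned post stays visible only to its author.
        return viewer == post.author
    return True
```

Under these assumptions, a shadow-banned post by `alice` is shown when the viewer is `alice` and hidden when the viewer is anyone else, whereas a blocked post is hidden from both.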

Content moderation, together with parental controls, can help parents filter content by age appropriateness for their children.

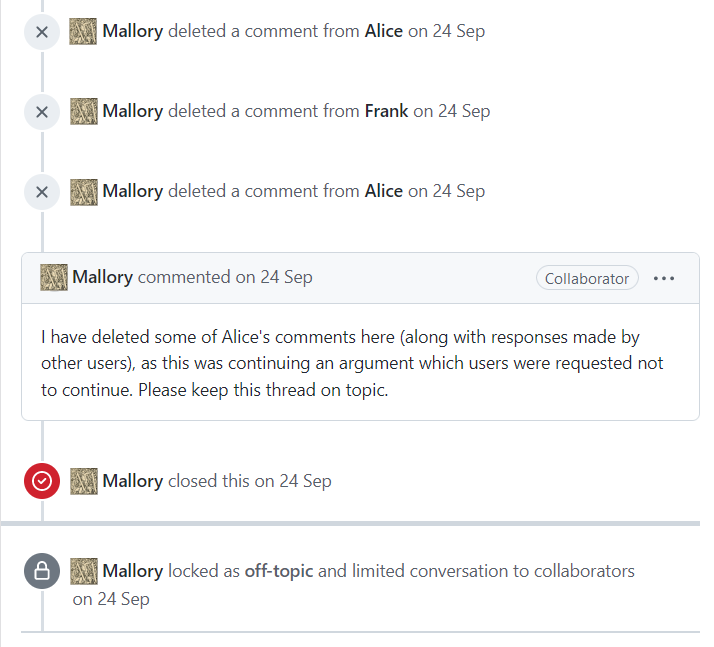

Unilateral moderation is a kind of moderation system often seen on Internet forums. A group of people is chosen by the site's administrators (usually on a long-term basis) to act as delegates, enforcing the community rules on their behalf. These moderators are given special privileges to delete or edit others' contributions and/or exclude people based on their e-mail address or IP address, and they generally attempt to remove negative contributions throughout the community.
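Exclusion by e-mail address or IP address can be sketched with a small ban list. This is an illustrative sketch only: `BanList` and its methods are hypothetical names rather than part of any forum software, and it assumes e-mail addresses are compared case-insensitively and that IP bans may cover whole ranges. It uses Python's standard `ipaddress` module.

```python
import ipaddress
from dataclasses import dataclass, field


@dataclass
class BanList:
    """Hypothetical moderator ban list keyed by e-mail and IP range."""
    emails: set = field(default_factory=set)
    networks: list = field(default_factory=list)

    def ban_email(self, email: str) -> None:
        # Assumption: e-mail matching ignores case and surrounding spaces.
        self.emails.add(email.strip().lower())

    def ban_ip(self, cidr: str) -> None:
        # Accepts a single address ("203.0.113.7") or a range ("203.0.113.0/24").
        self.networks.append(ipaddress.ip_network(cidr, strict=False))

    def is_excluded(self, email: str, ip: str) -> bool:
        """Return True if either the e-mail or the IP address is banned."""
        if email.strip().lower() in self.emails:
            return True
        addr = ipaddress.ip_address(ip)
        return any(addr in net for net in self.networks)
```

For example, after `ban_ip("203.0.113.0/24")`, any user connecting from an address in that range is excluded regardless of the e-mail address supplied. IP-based exclusion is coarse, since several users can share one address.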

Commercial Content Moderation is a term coined by Sarah T. Roberts to describe the practice of "monitoring and vetting user-generated content (UGC) for social media platforms of all types, in order to ensure that the content complies with legal and regulatory exigencies, site/community guidelines, user agreements, and that it falls within norms of taste and acceptability for that site and its cultural context".
