History of the graphical user interface

The history of the graphical user interface, understood as the use of graphic icons and a pointing device to control a computer, covers a five-decade span of incremental refinements built on a few constant core principles. Several vendors have created their own windowing systems based on independent code, but with basic elements in common that define the WIMP ("window, icon, menu and pointing device") paradigm.

There have been important technological achievements, and general interaction has improved in small steps over previous systems. A few breakthroughs have significantly changed how these interfaces are used, but the same organizational metaphors and interaction idioms remain in use. Desktop computers are often controlled with a mouse, a keyboard, or both, while laptops often have a pointing stick or touchpad, and smartphones and tablet computers have a touchscreen. This account omits the influence of game computers and joystick operation.

Early dynamic information devices such as radar displays, where input devices were used for direct control of computer-created data, set the basis for later improvements of graphical interfaces. Some early cathode-ray-tube (CRT) screens used a light pen, rather than a mouse, as the pointing device.

The concept of a multi-panel windowing system was introduced by the first real-time graphic display systems for computers: the SAGE Project and Ivan Sutherland's Sketchpad.

In the 1960s, Douglas Engelbart's Augmentation of Human Intellect project at the Augmentation Research Center at SRI International in Menlo Park, California, developed the oN-Line System (NLS). The system incorporated a mouse-driven cursor and multiple windows used to work on hypertext. Engelbart had been inspired, in part, by the memex, a desk-based information machine suggested by Vannevar Bush in 1945.

Much of the early research was based on how young children learn. The design therefore relied on the childlike characteristics of hand–eye coordination rather than on command languages, user-defined macro procedures, or automated transformation of data, as later used by adult professionals.

Engelbart publicly demonstrated this work at the Association for Computing Machinery / Institute of Electrical and Electronics Engineers (ACM/IEEE) Computer Society's Fall Joint Computer Conference in San Francisco on December 9, 1968. The presentation became known as "The Mother of All Demos".

The development of computers with multiple overlapping and resizable windows on a "desktop" is commonly, and incorrectly, attributed to Xerox PARC and its Alto. The Xerox Alto's windowing system was inspired by the overlapping multi-window system of DNLS (Display NLS), which was operational by early 1973 and used at several ARPA locations. In DNLS, overlapping windows were referred to as "display areas" and could store multiple lines of strings.

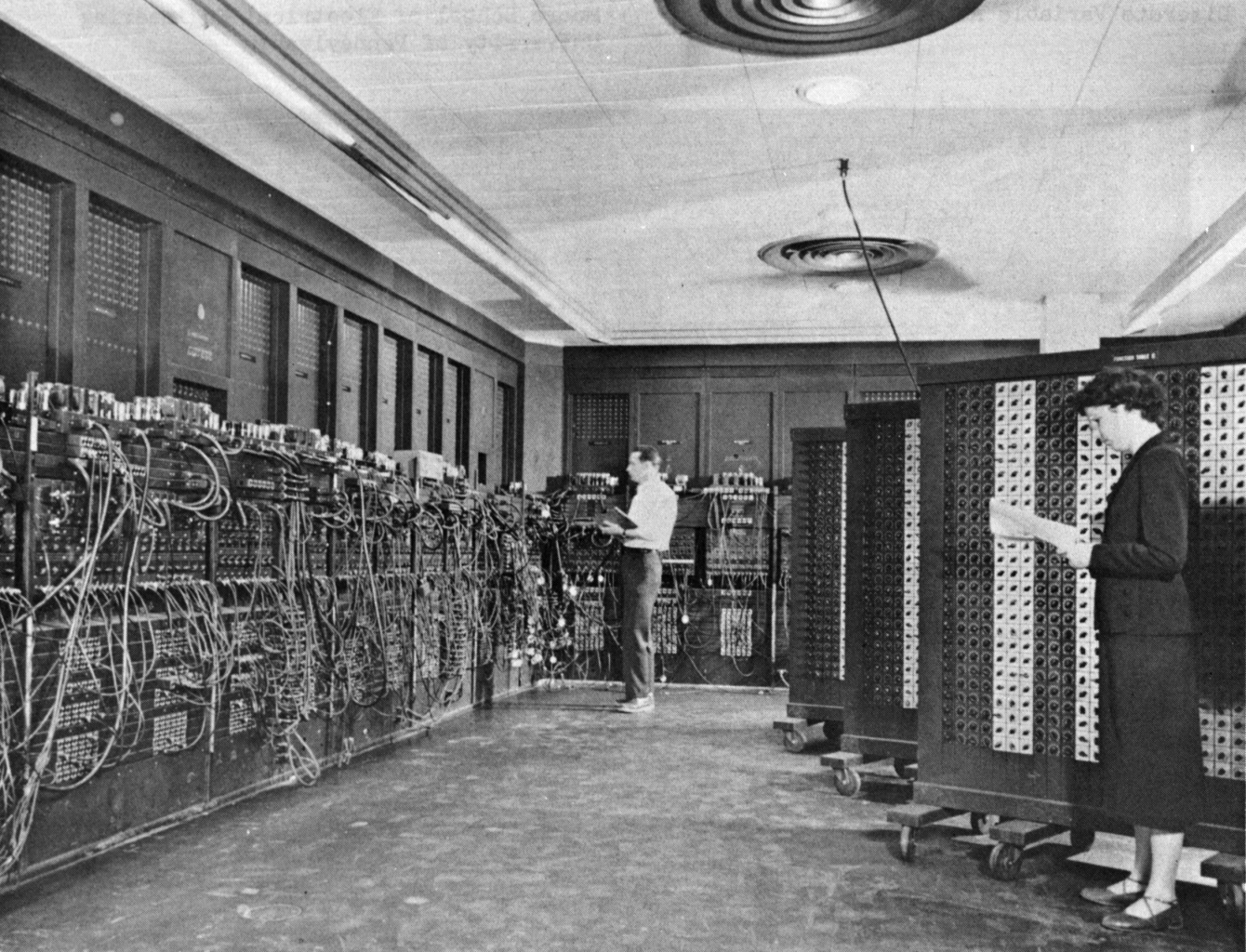
