Autoencoder

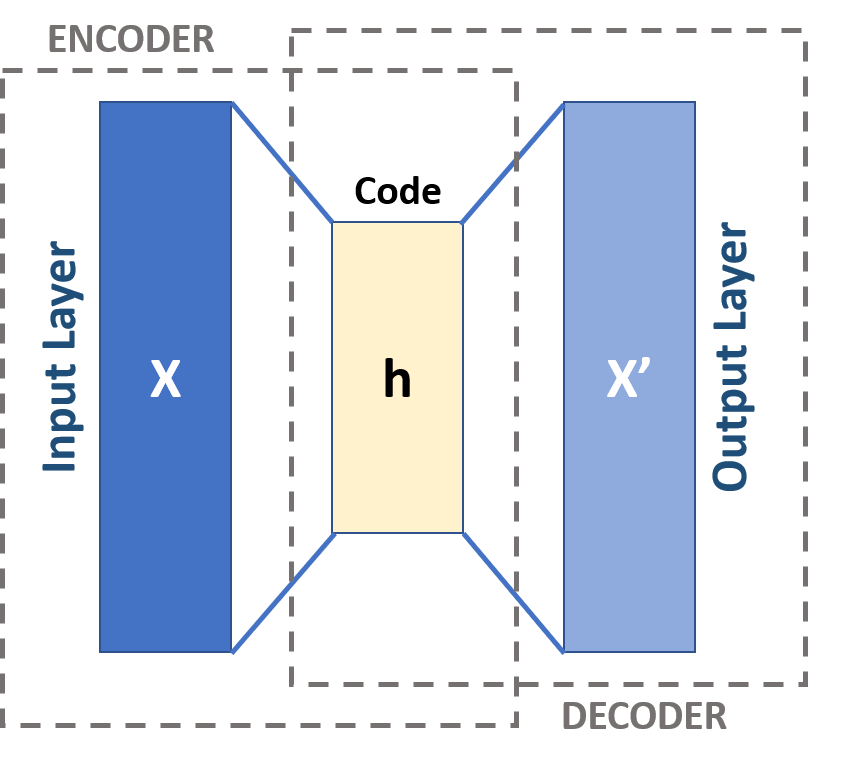

An autoencoder is a type of artificial neural network used to learn efficient codings of unlabeled data (unsupervised learning). An autoencoder learns two functions: an encoding function that transforms the input data, and a decoding function that recreates the input data from the encoded representation. The learned representation (encoding) is typically used for dimensionality reduction, producing lower-dimensional embeddings for subsequent use by other machine learning algorithms.

Several variants aim to give the learned representations useful properties. Examples are regularized autoencoders (sparse, denoising and contractive autoencoders), which are effective in learning representations for subsequent classification tasks, and variational autoencoders, which can be used as generative models. Autoencoders are applied to many problems, including facial recognition, feature detection, anomaly detection, and learning the meaning of words. They can also be used for data synthesis, randomly generating new data that is similar to the input (training) data.

An autoencoder is defined by the following components:

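Two sets: the space of decoded messages $\mathcal{X}$; the space of encoded messages $\mathcal{Z}$. Typically $\mathcal{X}$ and $\mathcal{Z}$ are Euclidean spaces, that is, $\mathcal{X} = \mathbb{R}^m$, $\mathcal{Z} = \mathbb{R}^n$ with $m > n$.

Two parametrized families of functions: the encoder family $E_\phi : \mathcal{X} \to \mathcal{Z}$, parametrized by $\phi$; the decoder family $D_\theta : \mathcal{Z} \to \mathcal{X}$, parametrized by $\theta$.

For any $x \in \mathcal{X}$, we usually write $z = E_\phi(x)$, and refer to it as the code, the latent variable, latent representation, latent vector, etc. Conversely, for any $z \in \mathcal{Z}$, we usually write $x' = D_\theta(z)$, and refer to it as the (decoded) message.

Usually, both the encoder and the decoder are defined as multilayer perceptrons (MLPs). For example, a one-layer-MLP encoder $E_\phi$ is:

$$E_\phi(x) = \sigma(Wx + b)$$

where $\sigma$ is an element-wise activation function, $W$ is a "weight" matrix, and $b$ is a "bias" vector.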
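As a rough illustration of the one-layer encoder described above, the following sketch computes a code as an element-wise activation applied to a weight matrix times the input plus a bias, and then maps the code back to a message. It is a minimal sketch under stated assumptions, not a reference implementation: the logistic sigmoid activation, the linear decoder, the random initialisation, the dimensions (4 and 2), and the helper names `affine`, `make_layer`, `encode` and `decode` are all chosen here for illustration and do not come from the text.

```python
import math
import random


def sigmoid(t):
    """Logistic activation for a single number (assumed choice of activation)."""
    return 1.0 / (1.0 + math.exp(-t))


def affine(weights, bias, vec):
    """Compute W @ vec + b, with the matrix W stored as a list of rows."""
    return [sum(w * v for w, v in zip(row, vec)) + b
            for row, b in zip(weights, bias)]


def make_layer(n_out, n_in, rng):
    """Return (W, b) with small random weights and zero bias (hypothetical init)."""
    weights = [[rng.uniform(-0.5, 0.5) for _ in range(n_in)]
               for _ in range(n_out)]
    bias = [0.0] * n_out
    return weights, bias


def encode(phi, x):
    """One-layer encoder: activation applied element-wise to W x + b."""
    weights, bias = phi
    return [sigmoid(t) for t in affine(weights, bias, x)]


def decode(theta, z):
    """One-layer decoder with a linear output (an assumption made here)."""
    weights, bias = theta
    return affine(weights, bias, z)


rng = random.Random(0)
m, n = 4, 2                    # message dimension m, code dimension n < m
phi = make_layer(n, m, rng)    # encoder parameters (W, b)
theta = make_layer(m, n, rng)  # decoder parameters

x = [0.2, -1.0, 0.5, 0.3]      # an input message
z = encode(phi, x)             # code / latent vector of length n
x_rec = decode(theta, z)       # decoded message of length m
print(len(z), len(x_rec))      # prints "2 4"
```

The parameters here are random and untrained, so `x_rec` is not expected to resemble `x`; the sketch only shows the shapes involved and how the encoder and decoder compose.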
