Recent from talks

Nothing was collected or created yet.

Recursive least squares filter

View on WikipediaRecursive least squares (RLS) is an adaptive filter algorithm that recursively finds the coefficients that minimize a weighted linear least squares cost function relating to the input signals. This approach is in contrast to other algorithms such as the least mean squares (LMS) that aim to reduce the mean square error. In the derivation of the RLS, the input signals are considered deterministic, while for the LMS and similar algorithms they are considered stochastic. Compared to most of its competitors, the RLS exhibits extremely fast convergence. However, this benefit comes at the cost of high computational complexity.

Motivation

[edit]RLS was discovered by Gauss but lay unused or ignored until 1950 when Plackett rediscovered the original work of Gauss from 1821. In general, the RLS can be used to solve any problem that can be solved by adaptive filters. For example, suppose that a signal is transmitted over an echoey, noisy channel that causes it to be received as

where represents additive noise. The intent of the RLS filter is to recover the desired signal by use of a -tap FIR filter, :

where is the column vector containing the most recent samples of . The estimate of the recovered desired signal is

The goal is to estimate the parameters of the filter , and at each time we refer to the current estimate as and the adapted least-squares estimate by . is also a column vector, as shown below, and the transpose, , is a row vector. The matrix product (which is the dot product of and ) is , a scalar. The estimate is "good" if is small in magnitude in some least squares sense.

As time evolves, it is desired to avoid completely redoing the least squares algorithm to find the new estimate for , in terms of .

The benefit of the RLS algorithm is that there is no need to invert matrices, thereby saving computational cost. Another advantage is that it provides intuition behind such results as the Kalman filter.

Discussion

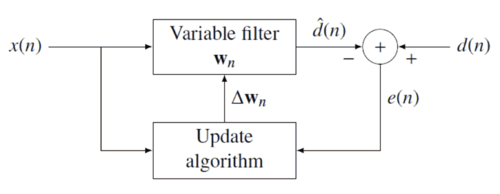

[edit]The idea behind RLS filters is to minimize a cost function by appropriately selecting the filter coefficients , updating the filter as new data arrives. The error signal and desired signal are defined in the negative feedback diagram below:

The error implicitly depends on the filter coefficients through the estimate :

The weighted least squares error function —the cost function we desire to minimize—being a function of is therefore also dependent on the filter coefficients:

where is the "forgetting factor" which gives exponentially less weight to older error samples.

The cost function is minimized by taking the partial derivatives for all entries of the coefficient vector and setting the results to zero

Next, replace with the definition of the error signal

Rearranging the equation yields

This form can be expressed in terms of matrices

where is the weighted sample covariance matrix for , and is the equivalent estimate for the cross-covariance between and . Based on this expression we find the coefficients which minimize the cost function as

This is the main result of the discussion.

Choosing λ

[edit]The smaller is, the smaller is the contribution of previous samples to the covariance matrix. This makes the filter more sensitive to recent samples, which means more fluctuations in the filter co-efficients. The case is referred to as the growing window RLS algorithm. In practice, is usually chosen between 0.98 and 1.[1] By using type-II maximum likelihood estimation the optimal can be estimated from a set of data.[2]

Recursive algorithm

[edit]The discussion resulted in a single equation to determine a coefficient vector which minimizes the cost function. In this section we want to derive a recursive solution of the form

where is a correction factor at time . We start the derivation of the recursive algorithm by expressing the cross covariance in terms of

where is the dimensional data vector

Similarly we express in terms of by

In order to generate the coefficient vector we are interested in the inverse of the deterministic auto-covariance matrix. For that task the Woodbury matrix identity comes in handy. With

is -by- is -by-1 (column vector) is 1-by- (row vector) is the 1-by-1 identity matrix

The Woodbury matrix identity follows

To come in line with the standard literature, we define

where the gain vector is

Before we move on, it is necessary to bring into another form

Subtracting the second term on the left side yields

With the recursive definition of the desired form follows

Now we are ready to complete the recursion. As discussed

The second step follows from the recursive definition of . Next we incorporate the recursive definition of together with the alternate form of and get

With we arrive at the update equation

where is the a priori error. Compare this with the a posteriori error; the error calculated after the filter is updated:

That means we found the correction factor

This intuitively satisfying result indicates that the correction factor is directly proportional to both the error and the gain vector, which controls how much sensitivity is desired, through the weighting factor, .

RLS algorithm summary

[edit]The RLS algorithm for a p-th order RLS filter can be summarized as

| Parameters: | filter order |

| forgetting factor | |

| value to initialize | |

| Initialization: | , |

| , | |

| where is the identity matrix of rank | |

| Computation: | For |

|

| |

| . |

The recursion for follows an algebraic Riccati equation and thus draws parallels to the Kalman filter.[3]

Lattice recursive least squares filter (LRLS)

[edit]The lattice recursive least squares adaptive filter is related to the standard RLS except that it requires fewer arithmetic operations (order N).[4] It offers additional advantages over conventional LMS algorithms such as faster convergence rates, modular structure, and insensitivity to variations in eigenvalue spread of the input correlation matrix. The LRLS algorithm described is based on a posteriori errors and includes the normalized form. The derivation is similar to the standard RLS algorithm and is based on the definition of . In the forward prediction case, we have with the input signal as the most up to date sample. The backward prediction case is , where i is the index of the sample in the past we want to predict, and the input signal is the most recent sample.[5]

Parameter summary

[edit]- is the forward reflection coefficient

- is the backward reflection coefficient

- represents the instantaneous a posteriori forward prediction error

- represents the instantaneous a posteriori backward prediction error

- is the minimum least-squares backward prediction error

- is the minimum least-squares forward prediction error

- is a conversion factor between a priori and a posteriori errors

- are the feedforward multiplier coefficients.

- is a small positive constant that can be 0.01

LRLS algorithm summary

[edit]The algorithm for a LRLS filter can be summarized as

| Initialization: | |

| For | |

| (if for ) | |

| End | |

| Computation: | |

| For | |

| For | |

| Feedforward filtering | |

| End | |

| End | |

Normalized lattice recursive least squares filter (NLRLS)

[edit]The normalized form of the LRLS has fewer recursions and variables. It can be calculated by applying a normalization to the internal variables of the algorithm which will keep their magnitude bounded by one. This is generally not used in real-time applications because of the number of division and square-root operations which comes with a high computational load.

NLRLS algorithm summary

[edit]The algorithm for a NLRLS filter can be summarized as

| Initialization: | |

| For | |

| (if for ) | |

| End | |

| Computation: | |

| For | |

| (Input signal energy) | |

| (Reference signal energy) | |

| For | |

| Feedforward filter | |

| End | |

| End | |

See also

[edit]References

[edit]- Hayes, Monson H. (1996). "9.4: Recursive Least Squares". Statistical Digital Signal Processing and Modeling. Wiley. p. 541. ISBN 0-471-59431-8.

- Simon Haykin, Adaptive Filter Theory, Prentice Hall, 2002, ISBN 0-13-048434-2

- M.H.A Davis, R.B. Vinter, Stochastic Modelling and Control, Springer, 1985, ISBN 0-412-16200-8

- Weifeng Liu, Jose Principe and Simon Haykin, Kernel Adaptive Filtering: A Comprehensive Introduction, John Wiley, 2010, ISBN 0-470-44753-2

- R.L.Plackett, Some Theorems in Least Squares, Biometrika, 1950, 37, 149–157, ISSN 0006-3444

- C.F.Gauss, Theoria combinationis observationum erroribus minimis obnoxiae, 1821, Werke, 4. Gottinge

Notes

[edit]- ^ Emannual C. Ifeacor, Barrie W. Jervis. Digital signal processing: a practical approach, second edition. Indianapolis: Pearson Education Limited, 2002, p. 718

- ^ Steven Van Vaerenbergh, Ignacio Santamaría, Miguel Lázaro-Gredilla "Estimation of the forgetting factor in kernel recursive least squares", 2012 IEEE International Workshop on Machine Learning for Signal Processing, 2012, accessed June 23, 2016.

- ^ Welch, Greg and Bishop, Gary "An Introduction to the Kalman Filter", Department of Computer Science, University of North Carolina at Chapel Hill, September 17, 1997, accessed July 19, 2011.

- ^ Diniz, Paulo S.R., "Adaptive Filtering: Algorithms and Practical Implementation", Springer Nature Switzerland AG 2020, Chapter 7: Adaptive Lattice-Based RLS Algorithms. https://doi.org/10.1007/978-3-030-29057-3_7

- ^ Albu, Kadlec, Softley, Matousek, Hermanek, Coleman, Fagan "Implementation of (Normalised) RLS Lattice on Virtex" Archived 2016-03-04 at the Wayback Machine, Digital Signal Processing, 2001, accessed December 24, 2011.

Recursive least squares filter

View on GrokipediaIntroduction and Background

Overview and Motivation

The recursive least squares (RLS) filter is an adaptive filtering algorithm that recursively updates the coefficients of a linear model to minimize a weighted least squares cost function, enabling real-time estimation in dynamic systems. This approach processes incoming data sequentially, making it particularly effective for applications where system parameters evolve over time, such as in signal processing and control systems.[4] Developed primarily in the 1970s and 1980s, RLS emerged as an enhancement to batch least squares methods, which require recomputing solutions from all historical data and are impractical for online applications. Key contributions came from researchers like Lennart Ljung and Torsten Söderström, who formalized recursive identification techniques in system modeling, providing a theoretical foundation for adaptive estimation. Early parallels to RLS concepts appeared in the 1960s through Kalman filtering for state estimation, but the distinct formulation of RLS for adaptive filtering gained prominence around 1980 with efficient implementations for signal processing tasks.[2] The primary motivation for RLS lies in its ability to address the rigidity of static least squares by incorporating a forgetting factor, typically denoted as λ (where 0 < λ ≤ 1), which exponentially discounts older data to emphasize recent observations. This mechanism allows the filter to track time-varying conditions, such as fluctuating noise or channel distortions, without accumulating outdated information that could degrade performance.[1] For instance, in acoustic echo cancellation, RLS adapts to changing room impulses and signal paths during a conversation, ensuring effective suppression of echoes in real-time telephony systems.[5] Compared to simpler methods like the least mean squares (LMS) algorithm, RLS achieves faster convergence, though at higher computational cost, making it ideal for scenarios demanding precise tracking.[2]Mathematical Prerequisites

The recursive least squares (RLS) filter relies on foundational concepts from linear algebra, particularly in the realm of signal estimation, where signals are represented as vectors in finite-dimensional vector spaces. In this context, an inner product defines the geometry of the space, enabling the measurement of similarity between signal vectors through the dot product, which for complex-valued signals generalizes to the Hermitian inner product . Matrix inverses play a crucial role in solving systems of linear equations that arise in estimation problems, assuming the matrix is invertible, which requires it to be positive definite or full rank in practice for signal data matrices. Projection operators are essential for finding the best linear approximation of a desired signal onto the subspace spanned by input regressors, minimizing the estimation error in a mean-square sense. Least squares estimation addresses the problem of finding the optimal weights that minimize the squared error between observed data and model predictions. Consider a batch of observations where the desired signal (an vector) is modeled as , with an data matrix of input regressors, an weight vector, and noise. The cost function to minimize is . To derive the solution, take the derivative with respect to and set it to zero: , yielding the normal equations . Assuming is invertible, the batch least squares solution is , which projects onto the column space of . This solution is unbiased and has minimum variance under Gaussian noise assumptions. An extension to weighted least squares incorporates a positive definite diagonal weighting matrix to emphasize certain data points, such as more recent observations in sequential processing. The weighted cost function becomes , and the solution follows similarly from the normal equations , giving , provided the weighted Gram matrix is invertible. This formulation allows for unequal treatment of errors, improving robustness to outliers or varying data reliability. In the stochastic setting relevant to RLS, signals are modeled as realizations of random processes, often assumed stationary for theoretical analysis, meaning statistical properties like mean and autocorrelation do not change over time. The autocorrelation matrix captures second-order statistics of the input vector , while the cross-correlation relates input to the desired signal , both expectations taken over the joint distribution. The Wiener solution, which minimizes the mean-square error , solves the Wiener-Hopf equations , yielding under the assumption that is positive definite. For time-varying systems where stationarity does not hold, such as in tracking changing signal environments, exponentially weighted data provides a mechanism to discount older observations. This involves assigning weights that decay geometrically with time lag, effectively prioritizing recent data to adapt to non-stationarities while maintaining computational tractability in estimation.Core RLS Formulation

Weighted Least Squares Problem

The weighted least squares problem underlying the recursive least squares (RLS) filter seeks to determine the optimal coefficient vector that minimizes a time-weighted cost function based on accumulated errors up to time . Specifically, the cost function is defined as where denotes the desired signal at time , is the input data vector, is the filter coefficient vector of length at time , and (with ) is the forgetting factor that applies exponentially decaying weights to past observations.[1] This weighting scheme emphasizes recent data over older samples, enabling the filter to adapt to time-varying environments; when , the formulation reduces to the standard unweighted least squares problem, treating all observations equally.[1] The batch solution for at each time step involves solving the normal equations: where is the inverse of the weighted correlation matrix and is the weighted cross-correlation vector between the input and desired signal.[1] This direct approach requires inverting an matrix at every iteration, incurring a computational complexity of operations per update, which becomes prohibitive for high-order filters or real-time applications and thus motivates the development of recursive formulations.[1] In the RLS context, two key error signals are defined to quantify prediction accuracy: the a priori error , which uses the previous coefficient vector, and the a posteriori error , which employs the updated coefficients.[1]Recursive Derivation

The recursive least squares (RLS) filter derives its efficient update mechanism from the batch weighted least squares solution by exploiting the structure of sequential data arrivals and applying the matrix inversion lemma, also known as the Sherman-Morrison formula, to avoid full matrix recomputation at each step. Consider the weighted least squares problem at time , where the cost function is , with forgetting factor to emphasize recent data. The minimizer is , where is the weighted autocorrelation matrix and is the cross-correlation vector.[3][6] To derive the recursive form, first update the inverse correlation matrix . Note that , so . Applying the Sherman-Morrison formula, which states that for invertible and vectors , with , , and , yields: [3][7] This rank-one update computes in operations, where is the filter order, compared to for direct inversion of the batch .[8] Next, derive the recursive updates for and . The cross-correlation vector updates as , a simple vector addition. The weight vector then becomes . Substituting the update for and using the expression for , this simplifies to , where the gain vector is . This form resembles the Kalman filter update, with weighting the prediction error .[7][6] To verify equivalence, prove by induction that the recursive minimizes . For the base case , clearly minimizes the scalar cost. Assume true for : . For , the minimizer satisfies the normal equations . Substituting the recursive forms shows , confirming the normal equations hold and thus minimizes . By induction, the recursion is equivalent to the batch solution.[3][9] For numerical stability in high dimensions, the Sherman-Morrison update can be generalized using the Woodbury matrix identity for low-rank adjustments, though implementation details are deferred to algorithmic variants. Overall, the recursive structure achieves complexity per iteration, enabling real-time adaptation in applications like signal processing, far superior to the cost of batch recomputation over samples.[8][7]Standard RLS Algorithm

Update Equations

The standard recursive least squares (RLS) algorithm updates the filter coefficients and associated matrices at each time step using a sequence of four key equations, derived from the matrix inversion lemma to avoid direct matrix inversion and enable efficient recursion.[1] First, the gain vector is computed as where is the inverse covariance matrix from the previous step, is the input vector, and (typically ) is the forgetting factor.[2] Next, the a priori error , which represents the prediction error before the update, is calculated as with denoting the desired signal and the prior coefficient vector.[7] The coefficient vector is then updated via incorporating the gain-weighted error to adjust the filter taps.[1] Finally, the inverse covariance matrix is updated using a rank-1 modification: where is the identity matrix; this form leverages the Sherman-Morrison formula for computational efficiency.[2] To reduce computational complexity, particularly for the denominator in the gain vector, a scalar intermediate quantity is often introduced, yielding ; the subsequent covariance update then proceeds via the rank-1 form above.[7] These updates form the core iteration of the RLS algorithm, typically implemented in a loop from to , where is the number of data samples; a pseudocode outline is as follows:For n = 1 to N:

Compute α(n) = x^T(n) P(n-1) x(n)

Compute K(n) = P(n-1) x(n) / (λ + α(n))

Compute ε(n) = d(n) - w^T(n-1) x(n)

Update w(n) = w(n-1) + K(n) ε(n)

Update P(n) = [I - K(n) x^T(n)] P(n-1) / λ

For n = 1 to N:

Compute α(n) = x^T(n) P(n-1) x(n)

Compute K(n) = P(n-1) x(n) / (λ + α(n))

Compute ε(n) = d(n) - w^T(n-1) x(n)

Update w(n) = w(n-1) + K(n) ε(n)

Update P(n) = [I - K(n) x^T(n)] P(n-1) / λ

Initialization and Parameter Selection

The initialization of the recursive least squares (RLS) filter involves setting the initial coefficient vector and covariance matrix to establish starting conditions that balance prior knowledge with adaptation speed. Typically, the initial coefficient vector is set to the zero vector if no prior information about the filter coefficients is available, allowing the algorithm to learn from incoming data without bias from assumptions. Alternatively, small random values may be used when slight perturbations are desired to avoid potential stagnation in flat error surfaces. This choice promotes unbiased convergence in stationary environments.[2] The initial covariance matrix is commonly initialized as , where is a small scalar (e.g., 0.01 to 1) and is the identity matrix of appropriate dimension. This formulation introduces high initial uncertainty, enabling fast adaptation during startup by yielding large initial gains in the update equations; smaller amplifies the inverse scaling, increasing the emphasis on early data for rapid convergence. The value of is often tuned relative to the input signal variance to ensure numerical stability and appropriate gain scaling.[2][10] The forgetting factor is a critical parameter that weights recent observations more heavily in the exponentially weighted cost function, with typical values ranging from 0.95 to 0.995 for moderate forgetting in many applications. Values closer to 1 (e.g., 0.99) promote slow adaptation and greater stability by retaining more historical data, suitable for nearly stationary processes, while smaller (e.g., 0.95) enables faster tracking of time-varying parameters at the expense of increased sensitivity to noise and potential instability. Selection criteria for often consider the eigenvalue spread of the input correlation matrix , where larger spreads necessitate smaller to mitigate ill-conditioning, or the desired effective memory length approximated as , which quantifies the number of past samples influencing the estimate (e.g., 20 to 200 samples for to 0.995). Additionally, is chosen to balance responsiveness against noise sensitivity, with application-specific tuning via simulation or analysis of parameter variation rates.[2][10][11] The filter order , which determines the dimension of the coefficient vector, is selected to achieve an application-specific trade-off between bias and variance in the model. Common approaches include information-theoretic criteria such as the Akaike Information Criterion (AIC) or Bayesian Information Criterion (BIC), which penalize higher orders to avoid overfitting while minimizing prediction error, or cross-validation techniques that evaluate performance on held-out data subsets. For instance, in autoregressive modeling with RLS estimation, order selection via AIC balances model complexity against residual fit, often yielding values from 2 to 10 depending on signal correlation structure.[12]Variant Algorithms

Lattice Recursive Least Squares (LRLS)

The lattice recursive least squares (LRLS) algorithm provides a modular, order-recursive formulation of the RLS filter, structured as a ladder network that processes signals stage by stage without maintaining the full covariance matrix. This design leverages reflection coefficients computed from backward prediction errors, enabling efficient adaptation to increasing filter orders while preserving the optimality of the least squares solution. Introduced in seminal work on ladder estimation, the LRLS facilitates implementations in adaptive signal processing by decoupling computations across stages, where each stage corresponds to a prediction order at time .[13] Central to the LRLS are the forward prediction errors , which represent the error in predicting the input signal using past samples, and the backward prediction errors , which predict the signal from future samples. These are complemented by partial correlation (PARCOR) coefficients , defined as which quantify the correlation between forward and backward errors at the previous stage and serve as the reflection coefficients for order updates.[13] The core updates in LRLS proceed in two phases: time recursion to incorporate the forgetting factor and new data, followed by order recursion using . The time-updated prediction errors satisfy \begin{align*} f_m(n) &= \lambda f_m(n-1) + \kappa_m(n) b_{m-1}(n-1), \ b_m(n) &= \lambda b_{m-1}(n-1) + \kappa_m(n) f_m(n-1), \end{align*} where these relations propagate errors stage-wise, ensuring the filter coefficients align with the exponentially weighted least squares criterion. To interface with transversal filter forms, the lattice gains are converted to equivalent transversal coefficients via a lower triangular conversion matrix derived from the Levinson-Durbin recursion, allowing seamless integration with standard RLS outputs.[13]| Parameter | Description | Recursive Relation |

|---|---|---|

| Reflection (PARCOR) coefficient at stage , time | ||

| Forward prediction error at stage , time | (time update); order update via stage recursion from | |

| Backward prediction error at stage , time | (time update); order update via stage recursion from |

Normalized Lattice Recursive Least Squares (NLRLS)

The Normalized Lattice Recursive Least Squares (NLRLS) algorithm introduces normalization into the lattice structure to enhance numerical stability and robustness against variations in input signal amplitudes. Unlike the standard lattice RLS, which can suffer from coefficient drift in finite-precision implementations due to fluctuating input energies, the NLRLS normalizes internal signals to maintain bounded magnitudes, typically close to unity. This normalization is particularly beneficial for adaptive filtering applications where input signals exhibit time-varying power levels, ensuring consistent performance without excessive sensitivity to scaling.[15] Central to the NLRLS are the energy estimates Δ_m(n), which approximate the expected squared magnitude of the forward prediction errors: where λ is the forgetting factor (0 < λ ≤ 1), and f_m(n) is the m-th stage forward prediction error at time n. These estimates serve as scaling factors for normalization. The normalized forward error is defined as γ_m(n) = f_m(n) / √Δ_m(n), and a similar normalization applies to the backward prediction error b_m(n), yielding a normalized backward error β_m(n) = b_m(n) / √Δ_m(n). This approach ensures that the normalized errors have approximately unit energy, mitigating amplification or attenuation effects from input variations.[15] The reflection coefficients in NLRLS are updated using the normalized quantities to preserve orthogonality and stability. Specifically, the m-th stage reflection coefficient evolves as where * denotes complex conjugate, and the update incorporates the previous normalized reflection coefficient weighted by λ. The normalized recursions then propagate the errors across stages while maintaining the unit energy constraint: for the forward path, and similarly for the backward path, These recursions ensure that the energy of the normalized errors remains approximately 1, preventing numerical overflow or underflow in fixed-point implementations.[15] The full NLRLS algorithm proceeds in a modular, order-recursive manner, starting with the zeroth-stage estimates and building up to the desired filter order M. Initialization sets Δ_0(-1) = ε (a small positive constant, e.g., 0.01) for all stages, with initial reflection coefficients κ_m(-1) = 0 and normalized errors to zero. For each time step n ≥ 0:- Update the input energy Δ_0(n) = λ Δ_0(n-1) + |x(n)|^2, where x(n) is the input sample, and compute the initial normalized error γ_0(n) = x(n) / √Δ_0(n).

- For m = 0 to M-1, compute the reflection coefficient κ_m(n) using the formula above, leveraging the previous stage's errors.

- Apply the normalized forward and backward recursions to obtain γ_m(n) and β_m(n) for each stage.

- Update the energy estimates Δ_m(n) = λ Δ_m(n-1) + |f_m(n)|^2, where f_m(n) = γ_m(n) √Δ_m(n) recovers the unnormalized error if needed.

- For filter output estimation, incorporate the desired signal d(n) via joint process extensions: compute the a priori error e(n) = d(n) - ∑_{m=0}^M g_m(n) γ_m(n), where g_m(n) are the joint process coefficients derived from the lattice stages (updated similarly via κ_m(n)). The posterior error and coefficient adjustments follow standard RLS principles, scaled by the normalization. This structure allows efficient order recursion, with complexity O(M) per update.[15]

Applications and Extensions

Practical Applications

The recursive least squares (RLS) filter finds extensive application in telecommunications, particularly for channel equalization in modems where it adapts to time-varying channel distortions to recover transmitted signals in high-speed data links.[16] In adaptive beamforming for antenna arrays, RLS enables interference suppression by dynamically adjusting weights to null out unwanted signals, enhancing signal-to-interference ratios in wireless communication environments.[17] In audio processing, RLS is employed for acoustic echo cancellation in hands-free communication systems, where it rapidly models the echo path to subtract unwanted reflections and improve speech clarity during teleconferencing or mobile calls.[18] For noise reduction in hearing aids, RLS-based multichannel Wiener filters adaptively suppress environmental noise while preserving speech signals, leveraging frequency-domain implementations to handle correlated noise sources effectively.[19] Within control systems, RLS supports system identification in adaptive control frameworks by estimating unknown plant parameters in real-time, enabling controllers to adjust to dynamic process variations in industrial automation.[20] In robotics, it facilitates tracking applications, such as kinematic calibration of manipulators, where RLS recursively updates pose estimates to maintain accuracy during motion amidst sensor noise and environmental changes.[21] In finance, RLS is utilized for time-series prediction of non-stationary data, such as stock prices, by incorporating a forgetting factor to emphasize recent observations and discount outdated information, thereby improving forecasts in volatile markets.[22] A notable example is the deployment of RLS in digital subscriber line (DSL) modems for far-end crosstalk mitigation, where it models interfering signals from adjacent lines to cancel them, achieving reported convergence rates up to 10 times faster than least mean squares (LMS) methods in adaptive equalization tasks. Recent developments since 2020 have integrated RLS with deep learning in hybrid adaptive networks for 5G and 6G systems, combining RLS's rapid parameter updates with neural networks for enhanced channel estimation and self-interference cancellation in full-duplex massive MIMO setups.[23] This fusion leverages the forgetting factor in RLS for superior tracking of fast-fading channels in beyond-5G scenarios.Comparisons and Numerical Considerations

The recursive least squares (RLS) filter provides faster convergence and lower steady-state error compared to the least mean squares (LMS) algorithm, but at the expense of increased computational complexity. The LMS algorithm updates filter coefficients using a stochastic gradient descent approach with O(M) operations per iteration, where M is the filter length, making it suitable for low-power applications. In contrast, the standard RLS requires O(M²) operations due to the matrix inversion or rank-one updates involved in maintaining the inverse correlation matrix. This higher complexity limits RLS to scenarios where rapid adaptation justifies the resource demands. Despite the cost, RLS converges in approximately M to 2M iterations for well-conditioned inputs, achieving near-optimal performance quickly, whereas LMS convergence typically requires 1/(μ λ_min) iterations, often hundreds or more, with μ as the step size and λ_min the smallest eigenvalue of the input correlation matrix. RLS also demonstrates lower misadjustment—remaining below 1% in steady state for typical parameters—independent of input statistics, while LMS misadjustment is approximately μ trace(R)/2 and increases with μ to achieve faster convergence. Furthermore, RLS exhibits superior tracking ability in non-stationary environments, adapting to parameter changes within a few iterations, compared to LMS, which relies on smaller μ for stability at the cost of slower tracking.| Performance Metric | LMS | RLS |

|---|---|---|

| Computational Complexity | O(M) | O(M²) |

| Convergence Iterations | ~1/(μ λ_min), often 100s–1000s | ~M to 2M |

| Steady-State Misadjustment | Higher (~μ trace(R)/2) | Lower (<1%, input-independent) |

| Tracking in Non-Stationary | Moderate (μ-dependent) | Excellent (λ-tunable) |

![{\displaystyle \mathbf {x} _{n}=[x(n)\quad x(n-1)\quad \ldots \quad x(n-p)]^{T}}](https://wikimedia.org/api/rest_v1/media/math/render/svg/09eb921b307dabe5f3f396085912215d09c02114)

![{\displaystyle \sum _{i=0}^{n}\lambda ^{n-i}\left[d(i)-\sum _{\ell =0}^{p}w_{n}(\ell )x(i-\ell )\right]x(i-k)=0\qquad k=0,1,\ldots ,p}](https://wikimedia.org/api/rest_v1/media/math/render/svg/19ddcbc3bdad707cdb76a5d5371bc1bee9872174)

![{\displaystyle \sum _{\ell =0}^{p}w_{n}(\ell )\left[\sum _{i=0}^{n}\lambda ^{n-i}\,x(i-\ell )x(i-k)\right]=\sum _{i=0}^{n}\lambda ^{n-i}d(i)x(i-k)\qquad k=0,1,\ldots ,p}](https://wikimedia.org/api/rest_v1/media/math/render/svg/e5289d4da73b7a149fdf9a53cbd8c80a11c0dfe6)

![{\displaystyle \mathbf {x} (i)=[x(i),x(i-1),\dots ,x(i-p)]^{T}}](https://wikimedia.org/api/rest_v1/media/math/render/svg/dab2ea44a54d82de74ac9f6d26e3f5f6c2a44d8a)

![{\displaystyle \left[\lambda \mathbf {R} _{x}(n-1)+\mathbf {x} (n)\mathbf {x} ^{T}(n)\right]^{-1}}](https://wikimedia.org/api/rest_v1/media/math/render/svg/f12c798517a6c530e616e5915429e3e16370785e)

![{\displaystyle =\lambda ^{-1}\left[\mathbf {P} (n-1)-\mathbf {g} (n)\mathbf {x} ^{T}(n)\mathbf {P} (n-1)\right]\mathbf {x} (n)}](https://wikimedia.org/api/rest_v1/media/math/render/svg/3baaff4ae27f9cfc51e54ada85a9de0999978262)

![{\displaystyle =\lambda \left[\lambda ^{-1}\mathbf {P} (n-1)-\mathbf {g} (n)\mathbf {x} ^{T}(n)\lambda ^{-1}\mathbf {P} (n-1)\right]\mathbf {r} _{dx}(n-1)+d(n)\mathbf {g} (n)}](https://wikimedia.org/api/rest_v1/media/math/render/svg/d1dfdfb80807912183bd47c28d76f65ea9d4c553)

![{\displaystyle =\mathbf {P} (n-1)\mathbf {r} _{dx}(n-1)+\mathbf {g} (n)\left[d(n)-\mathbf {x} ^{T}(n)\mathbf {P} (n-1)\mathbf {r} _{dx}(n-1)\right]}](https://wikimedia.org/api/rest_v1/media/math/render/svg/9800c914543c33d7b8ebd7f295efc9fdc51b57e7)

![{\displaystyle =\mathbf {w} _{n-1}+\mathbf {g} (n)\left[d(n)-\mathbf {x} ^{T}(n)\mathbf {w} _{n-1}\right]}](https://wikimedia.org/api/rest_v1/media/math/render/svg/78ebe76268d69116b2d676aab45f0272977a6df2)

![{\displaystyle \mathbf {x} (n)=\left[{\begin{matrix}x(n)\\x(n-1)\\\vdots \\x(n-p)\end{matrix}}\right]}](https://wikimedia.org/api/rest_v1/media/math/render/svg/d85a8706d78172d40a299b2bb60ae26b22a21f6c)

![{\displaystyle {\overline {e}}(k,i+1)={\frac {1}{\sqrt {(1-{\overline {e}}_{b}^{2}(k,i))(1-{\overline {\delta }}_{D}^{2}(k,i))}}}[{\overline {e}}(k,i)-{\overline {\delta }}_{D}(k,i){\overline {e}}_{b}(k,i)]}](https://wikimedia.org/api/rest_v1/media/math/render/svg/580293031a5a01b0b256042558d14b8ae206561d)