Frequency modulation synthesis

Frequency modulation synthesis (or FM synthesis) is a form of sound synthesis in which the frequency of a waveform (the carrier) is varied by a second signal (the modulator). The instantaneous frequency of an oscillator is altered in accordance with the amplitude of a modulating signal.[1]
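As a rough illustration of this definition, the following Python sketch renders a tone by accumulating phase from an instantaneous frequency that follows a sinusoidal modulator, and compares it with the closed-form phase obtained by integrating that frequency analytically. The function names, the `deviation_hz` parameter (peak frequency deviation) and the chosen values are assumptions made for this example, not part of any particular synthesizer.

```python
import math

def fm_by_phase_accumulation(carrier_hz, modulator_hz, deviation_hz,
                             sample_rate, num_samples):
    """Render FM by summing the instantaneous frequency sample by sample."""
    phase = 0.0
    samples = []
    for k in range(num_samples):
        samples.append(math.sin(phase))
        t = k / sample_rate
        # Instantaneous frequency follows the amplitude of the modulator.
        inst_freq = carrier_hz + deviation_hz * math.sin(2.0 * math.pi * modulator_hz * t)
        # Forward-Euler step of the phase integral (an approximation).
        phase += 2.0 * math.pi * inst_freq / sample_rate
    return samples

def fm_closed_form(carrier_hz, modulator_hz, deviation_hz,
                   sample_rate, num_samples):
    """Same signal using the analytically integrated phase."""
    index = deviation_hz / modulator_hz  # modulation index
    samples = []
    for k in range(num_samples):
        t = k / sample_rate
        phase = (2.0 * math.pi * carrier_hz * t
                 - index * (math.cos(2.0 * math.pi * modulator_hz * t) - 1.0))
        samples.append(math.sin(phase))
    return samples

if __name__ == "__main__":
    a = fm_by_phase_accumulation(440.0, 110.0, 220.0, 48000, 4800)
    b = fm_closed_form(440.0, 110.0, 220.0, 48000, 4800)
    print(max(abs(x - y) for x, y in zip(a, b)))
```

The two renderings differ only by the small error of the step-wise integration, which shrinks as the sample rate increases.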

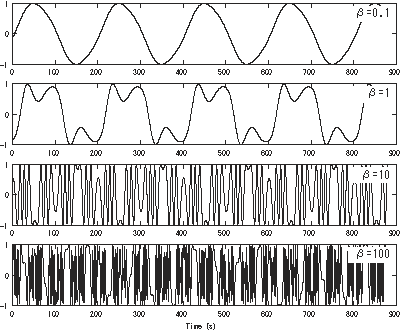

FM synthesis can create both harmonic and inharmonic sounds. To synthesize harmonic sounds, the modulating signal must have a harmonic relationship to the original carrier signal. As the amount of frequency modulation increases, the sound grows progressively more complex. By using modulators whose frequencies are non-integer multiples of the carrier frequency (i.e. inharmonic), bell-like and percussive inharmonic spectra can be created.
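The difference between harmonic and inharmonic ratios can be sketched by listing where the sidebands fall. The example below assumes the two-operator result from the spectral analysis later in this article, namely that components appear at the carrier frequency plus or minus integer multiples of the modulator frequency, and that negative frequencies fold back to their absolute value. The helper names and the frequencies used are hypothetical.

```python
import math

def sideband_frequencies(carrier_hz, modulator_hz, max_order=4):
    """Sorted, de-duplicated |fc + n*fm| for n in -max_order..max_order."""
    freqs = {round(abs(carrier_hz + n * modulator_hz), 6)
             for n in range(-max_order, max_order + 1)}
    return sorted(freqs)

def is_harmonic(freqs, fundamental_hz, tol=1e-6):
    """True if every frequency is an integer multiple of fundamental_hz."""
    return all(abs(f / fundamental_hz - round(f / fundamental_hz)) < tol
               for f in freqs)

if __name__ == "__main__":
    # Carrier-to-modulator ratio of 2:1: every component is a multiple of 100 Hz.
    harmonic = sideband_frequencies(200, 100)
    print(harmonic, is_harmonic(harmonic, 100))

    # Modulator at sqrt(2) times the carrier: no common fundamental.
    inharmonic = sideband_frequencies(200, 200 * math.sqrt(2))
    print(inharmonic, is_harmonic(inharmonic, 200))
```

This only locates the components; their amplitudes depend on the modulation index.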

FM synthesis using analog oscillators may result in pitch instability.[2] However, FM synthesis can also be implemented digitally, which is more stable and became standard practice.

Applications

In synthesizers

Digital FM synthesis (equivalent to phase modulation using the time integration of instantaneous frequency) was the basis of several musical instruments beginning as early as 1974. Yamaha built the first prototype digital synthesizer in 1974, based on FM synthesis,[3] before commercially releasing the Yamaha GS-1 in 1980.[4] The Synclavier I, manufactured by New England Digital Corporation beginning in 1978, included a digital FM synthesizer, using an FM synthesis algorithm licensed from Yamaha.[5] Yamaha's groundbreaking Yamaha DX7 synthesizer, released in 1983, brought FM to the forefront of synthesis in the mid-1980s.[6]

In PCs, arcades, game consoles, and mobile phones

FM synthesis was also the standard sound source for games and software until the mid-1990s. Sound cards for IBM PC compatible systems, such as the AdLib and Sound Blaster, popularized Yamaha chips like the OPL2 and OPL3. Other computers, such as the Sharp X68000 and MSX (Yamaha CX5M computer unit), used the OPM sound chip (with later CX5M units using the OPP sound chip). The NEC PC-88 and PC-98 computers used either the OPN or the OPNA sound chip.

For arcade systems and game consoles, OPM was used in many arcade boards from the 1980s and 1990s (including Sega's System 16 and Capcom's CP System arcade boards); OPN was also used in some arcade boards in the 1980s. OPNB was notably used in SNK's Neo Geo arcade (MVS) and home console (AES) machines, as well as being used as the main basic sound generator in Taito's arcade boards (with a variant of the OPNB being used in the Taito Z System board). The related OPN2 was used in Sega's Mega Drive (Genesis), Fujitsu's FM Towns Marty, and some of Sega's arcade boards (e.g. Sega System C-2 and Sega System 32) as one of its sound generator chips.

FM synthesis was also used on a wide range of mobile phones in the 2000s to play ringtones and other sounds, using the Yamaha SMAF format.

History

Don Buchla (mid-1960s)

Don Buchla implemented FM on his instruments in the mid-1960s, prior to Chowning's patent. His 158, 258 and 259 dual oscillator modules had a specific FM control voltage input,[7] and the model 208 (Music Easel) had a modulation oscillator hard-wired to allow FM as well as AM of the primary oscillator.[8] These early applications used analog oscillators, and the capability was later adopted by other modular and portable synthesizers, including the Minimoog and ARP Odyssey.

John Chowning (late-1960s–1970s)

By the mid-20th century, frequency modulation (FM), a means of carrying sound, had been understood for decades and was being used to broadcast radio transmissions. FM synthesis was developed from 1967 at Stanford University, California, by John Chowning, through his exploration of digital synthesis and spatialization, inspired by the new possibilities of digital sound as described by Max Mathews[citation needed]. His algorithm[citation needed] was licensed to the Japanese company Yamaha in 1973.[3] The implementation commercialized by Yamaha (U.S. Patent 4,018,121, April 1977[9][10]) is actually based on phase modulation[citation needed], but the results are mathematically equivalent, as both are essentially special cases of quadrature amplitude modulation[citation needed].[11]

1970s–1980s

Expansions by Yamaha

Yamaha's engineers began adapting Chowning's algorithm for use in a commercial digital synthesizer, adding improvements such as the "key scaling" method to avoid the introduction of distortion that normally occurred in analog systems during frequency modulation[citation needed], though it would take several years before Yamaha released their FM digital synthesizers.[12] In the 1970s, Yamaha were granted a number of patents, under the company's former name "Nippon Gakki Seizo Kabushiki Kaisha", evolving Chowning's work.[10] Yamaha built the first prototype FM digital synthesizer in 1974.[3] Yamaha eventually commercialized FM synthesis technology with the Yamaha GS-1, the first FM digital synthesizer, released in 1980.[4] FM synthesis was the basis of some of the early generations of digital synthesizers, most notably those from Yamaha, as well as New England Digital Corporation under license from Yamaha.[5]

Yamaha's DX7 synthesizer, released in 1983, was ubiquitous throughout the 1980s. Several other models by Yamaha provided variations and evolutions of FM synthesis during that decade.[13]

Yamaha had patented its hardware implementation of FM in the 1970s,[10] allowing it to nearly monopolize the market for FM technology until the mid-1990s.

Related development by Casio

Casio developed a related form of synthesis called phase distortion synthesis, used in its CZ range of synthesizers. It had a similar (but slightly differently derived) sound quality to the DX series.

1990s

Free use of FM after the patent expiration

With the expiration of the Stanford University FM patent in 1995, digital FM synthesis could be implemented freely by other manufacturers. The FM synthesis patent brought Stanford $20 million before it expired, making it (in 1994) "the second most lucrative licensing agreement in Stanford's history".[14]

Today, FM is mostly found in software-based synths such as Native Instruments' FM8 or Image-Line's Sytrus plug-ins, but it has also been incorporated into the synthesis repertoire of some modern digital synthesizers, usually coexisting as an option alongside other methods of synthesis such as subtractive, sample-based synthesis, additive synthesis, and other techniques. The degree of complexity of the FM in such hardware synths may vary from simple 2-operator FM, to the highly flexible 6-operator engines of the Korg Kronos and Alesis Fusion, to creation of FM in extensively modular engines such as those in the latest synthesisers by Kurzweil Music Systems.[citation needed]

Later use of FM and other technologies: Realtime Convolution & Modulation (AFM + Sample) and Formant Shaping Synthesis

The Yamaha SY99[15] and FS1R[16] synthesizers marketed their highly powerful FM abilities as counterparts to sample-based synthesis and formant synthesis respectively. New hardware synths specifically marketed for their FM capabilities disappeared from the market after the release of the FS1R in 1999. However, well-developed FM synthesis options are a feature of the Nord Lead synths manufactured by Clavia, the Alesis Fusion range, the Korg Oasys and Kronos, and the Modor NF-1. Various other synthesizers offer limited FM abilities to supplement their main engines.[citation needed]

The FS1R had 16 operators, 8 standard FM operators and 8 additional operators that used a noise source rather than an oscillator as its sound source. By adding in tuneable noise sources the FS1R could model the sounds produced in the human voice and in a wind instrument, along with making percussion instrument sounds. The FS1R also contained an additional wave form called the Formant wave form. Formants can be used to model resonating body instrument sounds like the cello, violin, acoustic guitar, bassoon, English horn, or human voice. Formants can even be found in the harmonic spectrum of several brass instruments.[17]

2000s–present

Additional improvements: Variable Phase Modulation, FM-X Synthesis, Altered FM, etc.

In 2016, Korg released the Korg Volca FM, a 3-voice, 6-operator FM iteration of the Korg Volca series of compact, affordable desktop modules.[18] Korg has also released the opsix (2020) and opsix SE (2023), integrating 6-operator FM synthesis with subtractive, analogue modeling, additive, semi-modular and waveshaping synthesis.

Yamaha released the Montage in 2016, which combines a 128-voice sample-based engine with a 128-voice FM engine. This iteration of FM is called FM-X and features 8 operators; each operator has a choice of several basic wave forms, and each wave form has several parameters to adjust its spectrum.[19] It was followed by the more affordable Yamaha MODX in 2018, with a 64-voice, 8-operator FM-X architecture in addition to a 128-voice sample-based engine.[20] The MODX+, released in 2022, increased the number of voices of the FM-X engine to 128, the same as the Montage.[21] The Montage was succeeded by the Montage M in 2023, which uses the same 128-voice, 8-operator FM-X engine alongside a 128-voice sample-based engine and a newly introduced 16-voice, 3-oscillator analog-based engine known as AN-X.[22]

Elektron launched the Digitone in 2018, an 8-voice, 4-operator FM synth featuring Elektron's sequencer.[23]

FM-X synthesis was first introduced with the Yamaha Montage synthesizers in 2016. FM-X uses 8 operators. Each FM-X operator has a set of multi-spectral wave forms to choose from, which means each FM-X operator can be equivalent to a stack of 3 or 4 DX7 FM operators. The list of selectable wave forms includes sine waves, the All1 and All2 wave forms, the Odd1 and Odd2 wave forms, and the Res1 and Res2 wave forms. The sine wave selection works the same as the DX7 wave forms. The All1 and All2 wave forms are sawtooth wave forms. The Odd1 and Odd2 wave forms are pulse or square waves. These two types of wave forms can be used to model the basic harmonic peaks in the bottom of the harmonic spectrum of most instruments. The Res1 and Res2 wave forms move the spectral peak to a specific harmonic and can be used to model either triangular or rounded groups of harmonics further up in the spectrum of an instrument. Combining an All1 or Odd1 wave form with multiple Res1 (or Res2) wave forms (and adjusting their amplitudes) can model the harmonic spectrum of an instrument or sound.[17][citation needed]

Combining sets of 8 FM operators with multi-spectral wave forms was first introduced in the FS1R, released in 1999 by Yamaha. It was able to achieve similar results to that of FM-X using 8 noise operators.

Spectral analysis

There are multiple variations of FM synthesis, including:

- Various operator arrangements (known as "FM Algorithms" in Yamaha terminology)

  - 2 operators

  - Serial FM (multiple stages)

  - Parallel FM (multiple modulators, multiple carriers)

  - Mix of them

- Various waveforms of operators

  - Sinusoidal waveform

  - Other waveforms

- Additional modulation

  - Linear FM

  - Exponential FM (preceded by the anti-logarithm conversion for CV/oct. interface of analog synthesizers)

  - Oscillator sync with FM

etc.
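To make the serial and parallel arrangements concrete, here is a minimal Python sketch of three sinusoidal operators wired in two different ways. It assumes a phase-modulation formulation, in which a modulator's output is added to the phase of the operator it drives. The `operator`, `serial_fm`, `parallel_fm` and `render` helpers and all parameter names are hypothetical and do not correspond to any specific Yamaha algorithm number.

```python
import math

def operator(freq_hz, t, phase_mod=0.0, level=1.0):
    """One sinusoidal operator: level * sin(2*pi*f*t + phase_mod)."""
    return level * math.sin(2.0 * math.pi * freq_hz * t + phase_mod)

def serial_fm(t, fc, f1, f2, index1, index2):
    """Serial FM: operator 2 modulates operator 1, which modulates the carrier."""
    m2 = operator(f2, t, level=index2)
    m1 = operator(f1, t, phase_mod=m2, level=index1)
    return operator(fc, t, phase_mod=m1)

def parallel_fm(t, fc, f1, f2, index1, index2):
    """Parallel FM: two independent modulators are summed into one carrier."""
    m = operator(f1, t, level=index1) + operator(f2, t, level=index2)
    return operator(fc, t, phase_mod=m)

def render(voice, num_samples, sample_rate, **params):
    """Evaluate a voice function at num_samples consecutive sample times."""
    return [voice(k / sample_rate, **params) for k in range(num_samples)]

if __name__ == "__main__":
    params = dict(fc=220.0, f1=220.0, f2=440.0, index1=1.5, index2=0.8)
    serial = render(serial_fm, 480, 48000, **params)
    parallel = render(parallel_fm, 480, 48000, **params)
    print(serial[:4])
    print(parallel[:4])
```

With both indices set to zero, the two arrangements reduce to the same unmodulated sine wave; they diverge as the modulation levels increase.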

As the basis of these variations, the spectrum of the 2-operator case (linear FM synthesis using two sinusoidal operators) is analyzed below.

2 operators

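The spectrum generated by FM synthesis with one modulator is expressed as follows:[24][25]

For modulation signal $m(t) = B\,\sin(\omega_m t)$, the carrier signal is:[note 1]

$$
\begin{aligned}
FM(t) &= A\,\sin\left(\int_0^t \left(\omega_c + B\,\sin(\omega_m\,\tau)\right)d\tau\right)\\
&= A\,\sin\left(\omega_c\,t - \frac{B}{\omega_m}\left(\cos(\omega_m\,t) - 1\right)\right)\\
&= A\,\sin\left(\omega_c\,t + \frac{B}{\omega_m}\left(\sin(\omega_m\,t - \pi/2) + 1\right)\right)
\end{aligned}
$$

If we were to ignore the constant phase terms on the carrier, $\phi_c = B/\omega_m$, and on the modulator, $\phi_m = -\pi/2$, we would finally get the following expression, as seen in Chowning 1973 and Roads 1996, p. 232:

$$
\begin{aligned}
FM(t) &\approx A\,\sin\left(\omega_c\,t + \beta\,\sin(\omega_m\,t)\right)\\
&= A\left(J_0(\beta)\sin(\omega_c\,t) + \sum_{n=1}^{\infty} J_n(\beta)\left[\sin((\omega_c + n\,\omega_m)\,t) + (-1)^n \sin((\omega_c - n\,\omega_m)\,t)\right]\right)\\
&= A\sum_{n=-\infty}^{\infty} J_n(\beta)\,\sin((\omega_c + n\,\omega_m)\,t)
\end{aligned}
$$

where $\omega_c$ and $\omega_m$ are the angular frequencies ($\omega = 2\pi f$) of the carrier and modulator, $\beta = B/\omega_m$ is the frequency modulation index, and the amplitudes $J_n(\beta)$ are the $n$-th Bessel functions of the first kind.[note 2]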
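The Bessel-function result can be checked numerically. The Python sketch below assumes the two-operator form, a sine carrier whose phase is offset by the modulation index times a sine modulator, and measures the amplitude of each sideband at the carrier frequency plus n times the modulator frequency by projecting the rendered signal onto a sine of that frequency. The Bessel values are computed from Bessel's integral with a simple midpoint rule rather than a library call. All function names and test parameters are assumptions chosen for illustration; the frequencies are integers in hertz and the window is exactly one second so that the projections are orthogonal.

```python
import math

def bessel_j(n, beta, steps=2000):
    """J_n(beta) from Bessel's integral (1/pi) * int_0^pi cos(n*tau - beta*sin(tau)) dtau."""
    h = math.pi / steps
    total = 0.0
    for k in range(steps):
        tau = (k + 0.5) * h  # midpoint of each sub-interval
        total += math.cos(n * tau - beta * math.sin(tau))
    return total * h / math.pi

def fm_signal(carrier_hz, modulator_hz, beta, sample_rate, num_samples):
    """sin(wc*t + beta*sin(wm*t)) sampled at sample_rate."""
    out = []
    for k in range(num_samples):
        t = k / sample_rate
        out.append(math.sin(2.0 * math.pi * carrier_hz * t
                            + beta * math.sin(2.0 * math.pi * modulator_hz * t)))
    return out

def sine_component(signal, freq_hz, sample_rate):
    """Amplitude of the sin(2*pi*freq*t) component, found by projection."""
    n = len(signal)
    acc = sum(x * math.sin(2.0 * math.pi * freq_hz * k / sample_rate)
              for k, x in enumerate(signal))
    return 2.0 * acc / n

if __name__ == "__main__":
    sr, fc, fm, beta = 8000, 1000.0, 100.0, 2.0
    sig = fm_signal(fc, fm, beta, sr, sr)  # one second of audio
    for n in range(-3, 4):
        measured = sine_component(sig, fc + n * fm, sr)
        print(n, round(measured, 6), round(bessel_j(n, beta), 6))
```

For each n, the measured sideband amplitude and the computed Bessel value should agree closely, including the sign flip on odd-numbered lower sidebands.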

See also

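References

Footnotes

1. Note that the modulation signal $m(t)$, as the instantaneous frequency, is converted to the phase of the carrier signal $FM(t)$ by the time integral over $[0,t]$.

2. The above expression is transformed using the trigonometric addition formulas and the following Bessel function identities (Source: Kreh 2012):

$$
\begin{aligned}
\cos(\beta \sin\theta) &= J_0(\beta) + 2\sum_{n=1}^{\infty} J_{2n}(\beta)\cos(2n\theta)\\
\sin(\beta \sin\theta) &= 2\sum_{n=0}^{\infty} J_{2n+1}(\beta)\sin((2n+1)\theta)
\end{aligned}
$$

as follows:

$$
\begin{aligned}
&\sin\left(\theta_c + \beta\,\sin(\theta_m)\right)\\
&= \sin(\theta_c)\cos(\beta\sin(\theta_m)) + \cos(\theta_c)\sin(\beta\sin(\theta_m))\\
&= \sin(\theta_c)\left[J_0(\beta) + 2\sum_{n=1}^{\infty} J_{2n}(\beta)\cos(2n\theta_m)\right] + \cos(\theta_c)\left[2\sum_{n=0}^{\infty} J_{2n+1}(\beta)\sin((2n+1)\theta_m)\right]\\
&= J_0(\beta)\sin(\theta_c) + J_1(\beta)\,2\cos(\theta_c)\sin(\theta_m) + J_2(\beta)\,2\sin(\theta_c)\cos(2\theta_m) + J_3(\beta)\,2\cos(\theta_c)\sin(3\theta_m) + \dots\\
&= J_0(\beta)\sin(\theta_c) + \sum_{n=1}^{\infty} J_n(\beta)\left[\sin(\theta_c + n\theta_m) + (-1)^n \sin(\theta_c - n\theta_m)\right]\\
&= \sum_{n=-\infty}^{\infty} J_n(\beta)\,\sin(\theta_c + n\theta_m) \qquad (\because\ J_{-n}(x) = (-1)^n J_n(x))
\end{aligned}
$$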

Citations

1. Dodge & Jerse 1997, p. 115

2. McGuire, Sam; Matějů, Zbyněk (2020-12-28). The Art of Digital Orchestration. CRC Press. ISBN 978-1-000-28699-1.

3. "[Chapter 2] FM Tone Generators and the Dawn of Home Music Production". Yamaha Synth 40th Anniversary - History. Yamaha Corporation. 2014. Archived from the original on 2017-05-11.

4. Curtis Roads (1996). The computer music tutorial. MIT Press. p. 226. ISBN 0-262-68082-3. Retrieved 2011-06-05.

5. "1978 New England Digital Synclavier". Mix. Penton Media. September 1, 2006.

6. "The top 10 classic synth presets (and where you can hear them)". MusicRadar. Retrieved October 19, 2018.

7. Dr. Hubert Howe (1960s). Buchla Electronic Music System: Users Manual written for CBS Musical Instruments (Buchla 100 Owner's Manual). Educational Research Department, CBS Musical Instruments, Columbia Broadcasting System. p. 7. "At this point we may consider various additional signal modifications that we may wish to make to the series of tones produced by the above example. For instance, if we would like to add frequency modulation to the tones, it is necessary to patch another audio signal into the jack connected by a line to the middle dial on the Model 158 Dual Sine-Sawtooth Oscillator. ..."

8. Allen Strange (1974). Programming and Metaprogramming in the Electro-Organism - An Operating Directive for the Music Easel. Buchla and Associates.

9. "U.S. Patent 4018121 Apr 1977". patft.uspto.gov. Retrieved 2017-04-30.

10. "Patent US4018121 - Method of synthesizing a musical sound - Google Patents". Retrieved 2017-04-30.

11. Rob Hordijk. "FM synthesis on Modular". Nord Modular & Micro Modular V3.03 tips & tricks. Clavia DMI AB. Archived from the original on 2007-04-07. Retrieved 2013-03-23.

12. Holmes, Thom (2008). "Early Computer Music". Electronic and experimental music: technology, music, and culture (3rd ed.). Taylor & Francis. pp. 257–8. ISBN 978-0-415-95781-6. Retrieved 2011-06-04.

13. Gordon Reid (September 2001). "Sounds of the '80s Part 2: The Yamaha DX1 & Its Successors (Retro)". Sound on Sound. Archived from the original on 17 September 2011. Retrieved 2011-06-29.

14. Stanford University News Service (06/07/94), Music synthesis approaches sound quality of real instruments

15. "Yamaha SY99 spec". Yamaha Corporation (in Japanese).

16. Poyser, Debbie; Johnson, Derek (1998). "Yamaha FS1R - FM Synthesis / Formant-shaping Tone Generator". Sound on Sound. No. December 1998.

17. Zollinger, W. Thor (Dec 2017). "FM_Synthesis_of_Real_Instruments" (PDF). Archived (PDF) from the original on 2017-09-25.

18. Volca FM product page

19. Yamaha Montage Product Features Page

20. Yamaha MODX Product Features Page

21. MODX8+, MODX7+, and MODX6+ Features

22. MONTAGE M Synthesizer

23. Digitone product page

24. Chowning 1973, pp. 1–2

25. Doering, Ed. "Frequency Modulation Mathematics". Retrieved 2013-04-11.

Bibliography

- Chowning, J. (1973). "The Synthesis of Complex Audio Spectra by Means of Frequency Modulation" (PDF). Journal of the Audio Engineering Society. 21 (7).

- Chowning, John; Bristow, David (1986). FM Theory & Applications - By Musicians For Musicians. Tokyo: Yamaha. ISBN 4-636-17482-8.

- Dodge, Charles; Jerse, Thomas A. (1997). Computer Music: Synthesis, Composition and Performance. New York: Schirmer Books. ISBN 0-02-864682-7.

- Kreh, Martin (2012), "Bessel Functions" (PDF), The Pennsylvania State University, pp. 5–6, archived from the original (PDF) on 2017-11-18, retrieved 2014-08-22

- Roads, Curtis (1996). The Computer Music Tutorial. MIT Press. ISBN 978-0-262-68082-0.

External links

- An Introduction To FM, by Bill Schottstaedt

- FM tutorial

- Synth Secrets, Part 12: An Introduction To Frequency Modulation, by Gordon Reid

- Synth Secrets, Part 13: More On Frequency Modulation, by Gordon Reid

- Paul Wiffens Synth School: Part 3

- F.M. Synthesis including complex operator analysis mirror site of F.M. Synthesis, 2019

![{\displaystyle {\begin{aligned}FM(t)&\ \approx \ A\,\sin \left(\omega _{c}\,t+\beta \,\sin(\omega _{m}\,t)\right)\\&\ =\ A\left(J_{0}(\beta )\sin(\omega _{c}\,t)+\sum _{n=1}^{\infty }J_{n}(\beta )\left[\,\sin((\omega _{c}+n\,\omega _{m})\,t)\ +\ (-1)^{n}\sin((\omega _{c}-n\,\omega _{m})\,t)\,\right]\right)\\&\ =\ A\sum _{n=-\infty }^{\infty }J_{n}(\beta )\,\sin((\omega _{c}+n\,\omega _{m})\,t)\end{aligned}}}](https://wikimedia.org/api/rest_v1/media/math/render/svg/2245df5dbe5a6c9f04835df2d4e89f07728a81e1)

![{\displaystyle [0,t]}](https://wikimedia.org/api/rest_v1/media/math/render/svg/37d2d2fa44908c699e2b7b7b9e92befc8283f264)

![{\displaystyle {\begin{aligned}&\sin \left(\theta _{c}+\beta \,\sin(\theta _{m})\right)\\&\ =\ \sin(\theta _{c})\cos(\beta \sin(\theta _{m}))+\cos(\theta _{c})\sin(\beta \sin(\theta _{m}))\\&\ =\ \sin(\theta _{c})\left[J_{0}(\beta )+2\sum _{n=1}^{\infty }J_{2n}(\beta )\cos(2n\theta _{m})\right]+\cos(\theta _{c})\left[2\sum _{n=0}^{\infty }J_{2n+1}(\beta )\sin((2n+1)\theta _{m})\right]\\&\ =\ J_{0}(\beta )\sin(\theta _{c})+J_{1}(\beta )2\cos(\theta _{c})\sin(\theta _{m})+J_{2}(\beta )2\sin(\theta _{c})\cos(2\theta _{m})+J_{3}(\beta )2\cos(\theta _{c})\sin(3\theta _{m})+...\\&\ =\ J_{0}(\beta )\sin(\theta _{c})+\sum _{n=1}^{\infty }J_{n}(\beta )\left[\,\sin(\theta _{c}+n\theta _{m})\ +\ (-1)^{n}\sin(\theta _{c}-n\theta _{m})\,\right]\\&\ =\ \sum _{n=-\infty }^{\infty }J_{n}(\beta )\,\sin(\theta _{c}+n\theta _{m})\qquad (\because \ J_{-n}(x)=(-1)^{n}J_{n}(x))\end{aligned}}}](https://wikimedia.org/api/rest_v1/media/math/render/svg/75361ca6bdbbe660138b6b060475a5284a9d7b9b)