Recent from talks

Contribute something to knowledge base

Content stats: 0 posts, 0 articles, 1 media, 0 notes

Members stats: 0 subscribers, 0 contributors, 0 moderators, 0 supporters

Subscribers

Supporters

Contributors

Moderators

Hub AI

Ray tracing (graphics) AI simulator

(@Ray tracing (graphics)_simulator)

Hub AI

Ray tracing (graphics) AI simulator

(@Ray tracing (graphics)_simulator)

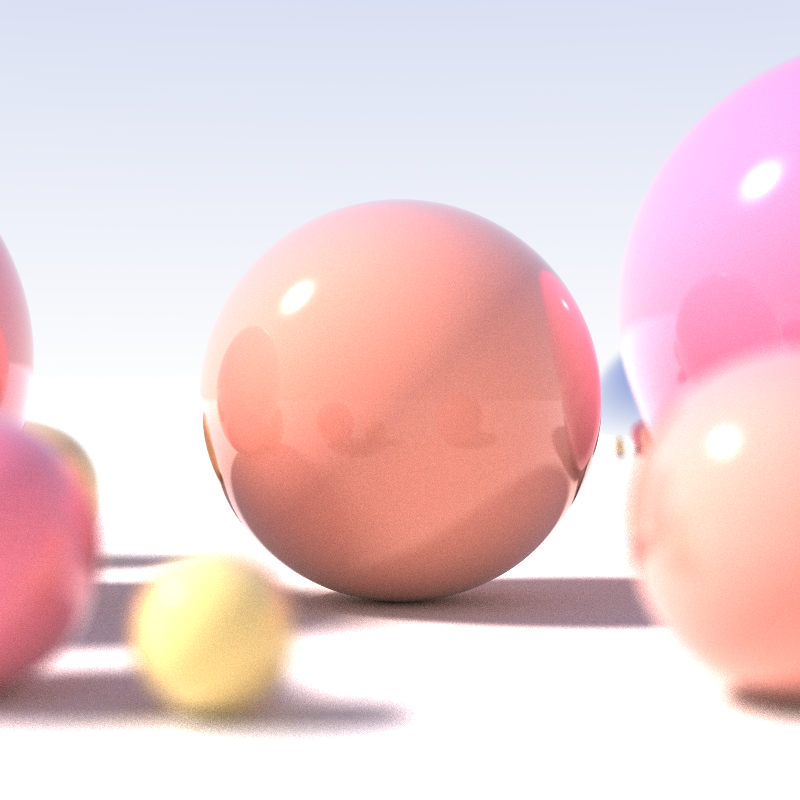

Ray tracing (graphics)

In 3D computer graphics, ray tracing is a technique for modeling light transport for use in a wide variety of rendering algorithms for generating digital images.

On a spectrum of computational cost and visual fidelity, ray tracing-based rendering techniques, such as ray casting, recursive ray tracing, distribution ray tracing, photon mapping and path tracing, are generally slower and higher fidelity than scanline rendering methods. Thus, ray tracing was first deployed in applications where taking a relatively long time to render could be tolerated, such as CGI images, and film and television visual effects (VFX), but was less suited to real-time applications such as video games, where speed is critical in rendering each frame.

Since 2018, however, hardware acceleration for real-time ray tracing has become standard on new commercial graphics cards, and graphics APIs have followed suit, allowing developers to use hybrid ray tracing and rasterization-based rendering in games and other real-time applications with a lesser hit to frame render times.

Ray tracing is capable of simulating a variety of optical effects, such as reflection, refraction, soft shadows, scattering, depth of field, motion blur, caustics, ambient occlusion and dispersion phenomena (such as chromatic aberration). It can also be used to trace the path of sound waves in a similar fashion to light waves, making it a viable option for more immersive sound design in video games by rendering realistic reverberation and echoes. In fact, any physical wave or particle phenomenon with approximately linear motion can be simulated with ray tracing.

Ray tracing-based rendering techniques that involve sampling light over a domain generate rays or using denoising techniques.[incomprehensible]

The idea of ray tracing comes from as early as the 16th century, when it was described by Albrecht Dürer, who is credited for its invention. Dürer described multiple techniques for projecting 3-D scenes onto an image plane. Some of these project chosen geometry onto the image plane, as is done with rasterization today. Others determine what geometry is visible along a given ray, as is done with ray tracing.

Using a computer for ray tracing to generate shaded pictures was first accomplished by Arthur Appel in 1968. Appel used ray tracing for primary visibility (determining the closest surface to the camera at each image point) by tracing a ray through each point to be shaded into the scene to identify the visible surface. The closest surface intersected by the ray was the visible one. This non-recursive ray tracing-based rendering algorithm is today called "ray casting". His algorithm then traced secondary rays to the light source from each point being shaded to determine whether the point was in shadow or not.

Later, in 1971, Goldstein and Nagel of MAGI (Mathematical Applications Group, Inc.) published "3-D Visual Simulation", wherein ray tracing was used to make shaded pictures of solids. At the ray-surface intersection point found, they computed the surface normal and, knowing the position of the light source, computed the brightness of the pixel on the screen. Their publication describes a short (30-second) film "made using the University of Maryland's display hardware outfitted with a 16mm camera. The film showed the helicopter and a simple ground-level gun emplacement. The helicopter was programmed to undergo a series of maneuvers including turns, take-offs, and landings, etc., until it eventually is shot down and crashed." A CDC 6600 computer was used. MAGI produced an animation video called MAGI/SynthaVision Sampler in 1974.

Ray tracing (graphics)

In 3D computer graphics, ray tracing is a technique for modeling light transport for use in a wide variety of rendering algorithms for generating digital images.

On a spectrum of computational cost and visual fidelity, ray tracing-based rendering techniques, such as ray casting, recursive ray tracing, distribution ray tracing, photon mapping and path tracing, are generally slower and higher fidelity than scanline rendering methods. Thus, ray tracing was first deployed in applications where taking a relatively long time to render could be tolerated, such as CGI images, and film and television visual effects (VFX), but was less suited to real-time applications such as video games, where speed is critical in rendering each frame.

Since 2018, however, hardware acceleration for real-time ray tracing has become standard on new commercial graphics cards, and graphics APIs have followed suit, allowing developers to use hybrid ray tracing and rasterization-based rendering in games and other real-time applications with a lesser hit to frame render times.

Ray tracing is capable of simulating a variety of optical effects, such as reflection, refraction, soft shadows, scattering, depth of field, motion blur, caustics, ambient occlusion and dispersion phenomena (such as chromatic aberration). It can also be used to trace the path of sound waves in a similar fashion to light waves, making it a viable option for more immersive sound design in video games by rendering realistic reverberation and echoes. In fact, any physical wave or particle phenomenon with approximately linear motion can be simulated with ray tracing.

Ray tracing-based rendering techniques that involve sampling light over a domain generate rays or using denoising techniques.[incomprehensible]

The idea of ray tracing comes from as early as the 16th century, when it was described by Albrecht Dürer, who is credited for its invention. Dürer described multiple techniques for projecting 3-D scenes onto an image plane. Some of these project chosen geometry onto the image plane, as is done with rasterization today. Others determine what geometry is visible along a given ray, as is done with ray tracing.

Using a computer for ray tracing to generate shaded pictures was first accomplished by Arthur Appel in 1968. Appel used ray tracing for primary visibility (determining the closest surface to the camera at each image point) by tracing a ray through each point to be shaded into the scene to identify the visible surface. The closest surface intersected by the ray was the visible one. This non-recursive ray tracing-based rendering algorithm is today called "ray casting". His algorithm then traced secondary rays to the light source from each point being shaded to determine whether the point was in shadow or not.

Later, in 1971, Goldstein and Nagel of MAGI (Mathematical Applications Group, Inc.) published "3-D Visual Simulation", wherein ray tracing was used to make shaded pictures of solids. At the ray-surface intersection point found, they computed the surface normal and, knowing the position of the light source, computed the brightness of the pixel on the screen. Their publication describes a short (30-second) film "made using the University of Maryland's display hardware outfitted with a 16mm camera. The film showed the helicopter and a simple ground-level gun emplacement. The helicopter was programmed to undergo a series of maneuvers including turns, take-offs, and landings, etc., until it eventually is shot down and crashed." A CDC 6600 computer was used. MAGI produced an animation video called MAGI/SynthaVision Sampler in 1974.