Absolute threshold of hearing

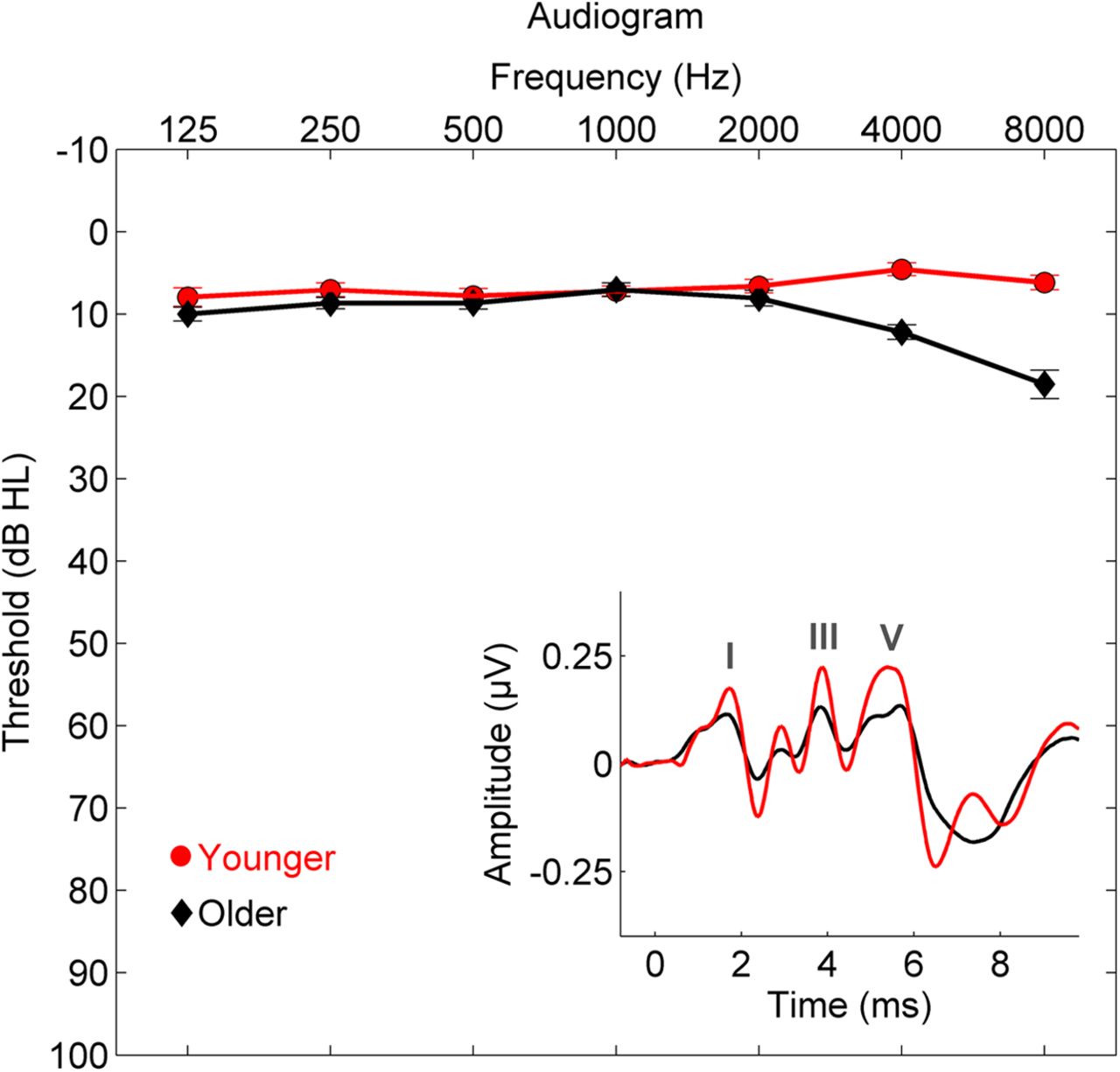

The absolute threshold of hearing (ATH), also known as the absolute hearing threshold or auditory threshold, is the minimum sound level of a pure tone that an average human ear with normal hearing can hear with no other sound present. The absolute threshold relates to the sound that can just be heard by the organism. The absolute threshold is not a discrete point and is therefore classed as the point at which a sound elicits a response a specified percentage of the time.

The threshold of hearing is generally reported in reference to the RMS sound pressure of 20 micropascals, i.e. 0 dB SPL, corresponding to a sound intensity of 0.98 pW/m² at 1 atmosphere and 25 °C. It is approximately the quietest sound a young human with undamaged hearing can detect at 1 kHz. The threshold of hearing is frequency-dependent: the ear's sensitivity is best at frequencies between 2 kHz and 5 kHz, where the threshold reaches as low as −9 dB SPL.
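The figures in this paragraph can be checked with a short calculation. The sketch below assumes the usual definition of sound pressure level, 20·log10(p/p_ref), and a plane-wave relation between pressure and intensity, I = p²/(ρc). The air density and speed of sound are assumed round values for 25 °C and 1 atmosphere, and the function names are illustrative only.

```python
import math

P_REF = 20e-6  # reference RMS sound pressure in pascals (0 dB SPL)

def pressure_to_db_spl(p_rms):
    """Convert an RMS sound pressure in pascals to a level in dB SPL."""
    return 20.0 * math.log10(p_rms / P_REF)

def db_spl_to_pressure(level_db):
    """Convert a level in dB SPL back to an RMS sound pressure in pascals."""
    return P_REF * 10.0 ** (level_db / 20.0)

def plane_wave_intensity(p_rms, rho=1.184, c=346.1):
    """Intensity in W/m^2 of a plane wave with RMS pressure p_rms.

    rho (kg/m^3) and c (m/s) are assumed values for air at 25 degrees C, 1 atm.
    """
    return p_rms ** 2 / (rho * c)

print(pressure_to_db_spl(20e-6))    # 0.0
print(plane_wave_intensity(20e-6))  # about 9.8e-13 W/m^2, i.e. 0.98 pW/m^2
print(db_spl_to_pressure(-9.0))     # about 7.1e-6 Pa
```

Under these assumptions, the −9 dB SPL threshold quoted for the most sensitive frequency range corresponds to an RMS pressure of roughly 7 micropascals.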

Measurement of the absolute hearing threshold provides some basic information about our auditory system. The tools used to collect such information are called psychophysical methods. Through these, the perception of a physical stimulus (sound) and our psychological response to the sound are measured.

Several psychophysical methods can measure absolute threshold. These vary, but certain aspects are identical. First, the test defines the stimulus and specifies the manner in which the subject should respond. The test then presents the sound to the listener and manipulates the stimulus level in a predetermined pattern. Finally, the absolute threshold is defined statistically, often as an average of all obtained hearing thresholds.
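As a rough illustration of defining the threshold as an average of obtained thresholds, the sketch below assumes a set of runs in which the level is stepped down or up in a preset pattern. Each run's threshold is taken as the midpoint between the two levels where the listener's response changes. The run data and function names are made up for illustration and do not come from any particular test protocol.

```python
def run_threshold(levels, responses):
    """Return the midpoint between the two levels where the response first flips.

    levels: presented levels in dB, in presentation order.
    responses: matching booleans, True when the listener reported hearing the tone.
    Returns None if the response never changes during the run.
    """
    for i in range(1, len(levels)):
        if responses[i] != responses[i - 1]:
            return (levels[i] + levels[i - 1]) / 2.0
    return None

def mean_threshold(runs):
    """Average the per-run thresholds, skipping runs with no response change."""
    estimates = [run_threshold(levels, responses) for levels, responses in runs]
    estimates = [e for e in estimates if e is not None]
    return sum(estimates) / len(estimates)

# Hypothetical data: one descending run and one ascending run.
runs = [
    ([20, 15, 10, 5, 0], [True, True, True, False, False]),  # flips between 10 and 5
    ([0, 5, 10, 15], [False, False, False, True]),           # flips between 10 and 15
]
print(mean_threshold(runs))  # 10.0
```

Averaging over both directions is one common way to reduce the bias a listener shows when the level only ever moves one way.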

Some procedures use a series of trials, with each trial using the 'single-interval "yes"/"no" paradigm'. This means that sound may be present or absent in the single interval, and the listener has to say whether they thought the stimulus was there. When the interval does not contain a stimulus, it is called a "catch trial".
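Catch trials make it possible to separate genuine detections from a tendency to say "yes". A minimal sketch, assuming each trial is recorded as a pair (stimulus present, listener said yes), computes the proportion of "yes" responses on stimulus trials and on catch trials separately. The trial data and names below are hypothetical.

```python
def yes_no_rates(trials):
    """Return (hit_rate, false_alarm_rate) for a list of yes/no trials.

    trials: list of (stimulus_present, said_yes) boolean pairs.
    A rate is None when there are no trials of the corresponding kind.
    """
    on_signal = [said_yes for present, said_yes in trials if present]
    on_catch = [said_yes for present, said_yes in trials if not present]
    hit_rate = sum(on_signal) / len(on_signal) if on_signal else None
    false_alarm_rate = sum(on_catch) / len(on_catch) if on_catch else None
    return hit_rate, false_alarm_rate

# Hypothetical session: four stimulus trials and four catch trials.
trials = [
    (True, True), (True, True), (True, False), (True, True),
    (False, False), (False, True), (False, False), (False, False),
]
print(yes_no_rates(trials))  # (0.75, 0.25)
```

A high rate of "yes" responses on catch trials suggests that the listener is guessing, so the responses on stimulus trials should be interpreted with caution.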

Classical methods date back to the 19th century and were first described by Gustav Theodor Fechner in his work Elements of Psychophysics. Three methods are traditionally used for testing a subject's perception of a stimulus: the method of limits, the method of constant stimuli, and the method of adjustment.
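To make one of these concrete: in the method of constant stimuli, a fixed set of levels is presented many times in random order, and the proportion of "yes" responses is tabulated per level. The sketch below estimates the level at which the tone is heard a specified percentage of the time by linear interpolation between tabulated points. The data, the 50% target, and the function name are assumptions for illustration; in practice a smooth psychometric function is often fitted instead.

```python
def threshold_from_proportions(levels, p_yes, target=0.5):
    """Interpolate the level at which the proportion of 'yes' responses reaches target.

    levels: stimulus levels in dB, in ascending order.
    p_yes: proportion of 'yes' responses at each level.
    Returns None if the proportions never cross the target.
    """
    for i in range(1, len(levels)):
        lower_p, upper_p = p_yes[i - 1], p_yes[i]
        if lower_p < target <= upper_p:
            fraction = (target - lower_p) / (upper_p - lower_p)
            return levels[i - 1] + fraction * (levels[i] - levels[i - 1])
    return None

# Hypothetical tabulated results.
levels = [0, 2, 4, 6, 8]
p_yes = [0.05, 0.2, 0.4, 0.8, 1.0]
print(threshold_from_proportions(levels, p_yes))  # about 4.5 dB
```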

In forced-choice methods, two intervals are presented to a listener, one with a tone and one without a tone. The listener must decide which interval had the tone in it. The number of intervals can be increased, but this may cause problems for the listener, who has to remember which interval contained the tone.
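Because the listener must always pick an interval, some correct answers come from guessing alone. The sketch below assumes a simple guessing model, which is not part of the description above: the listener either detects the tone with probability p_detect or guesses uniformly among the intervals. Under that assumption, chance performance with two intervals is 50% correct. The function names are illustrative.

```python
def expected_proportion_correct(p_detect, n_intervals=2):
    """Expected proportion correct when undetected trials are pure guesses."""
    return p_detect + (1.0 - p_detect) / n_intervals

def detection_from_proportion_correct(p_correct, n_intervals=2):
    """Invert the guessing model; results below chance are clipped to 0."""
    chance = 1.0 / n_intervals
    return max(0.0, (p_correct - chance) / (1.0 - chance))

print(expected_proportion_correct(0.0))         # 0.5, pure guessing
print(expected_proportion_correct(0.5))         # 0.75
print(detection_from_proportion_correct(0.75))  # 0.5
```

Under this model, a threshold criterion of 75% correct in a two-interval task corresponds to detecting the tone on about half of the trials.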

Unlike the classical methods, where the pattern for changing the stimuli is preset, in adaptive methods the subject's response to the previous stimuli determines the level at which a subsequent stimulus is presented.
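One widely used adaptive scheme is the up-down staircase. The sketch below is a minimal two-down, one-up variant under stated assumptions: the level drops after two consecutive detections, rises after any miss, and the threshold estimate is the mean level at the reversals. The `listener` callable, the step size, and the stopping rule are all hypothetical choices for illustration.

```python
def run_staircase(listener, start_level=40.0, step=2.0,
                  n_reversals=8, max_trials=1000):
    """Two-down, one-up staircase.

    listener(level) is a hypothetical callable returning True when the tone is heard.
    Returns the mean level at the reversals, or the final level if none occurred.
    """
    level = start_level
    heard_in_a_row = 0
    last_direction = 0  # -1 = last move was down, +1 = up, 0 = no move yet
    reversals = []
    trials = 0
    while len(reversals) < n_reversals and trials < max_trials:
        trials += 1
        if listener(level):
            heard_in_a_row += 1
            if heard_in_a_row < 2:
                continue  # repeat the same level before stepping down
            heard_in_a_row = 0
            direction = -1
        else:
            heard_in_a_row = 0
            direction = 1
        if last_direction != 0 and direction != last_direction:
            reversals.append(level)  # the track changed direction at this level
        last_direction = direction
        level += direction * step
    if not reversals:
        return level
    return sum(reversals) / len(reversals)

# Idealized listener who hears every tone at or above 13 dB and none below.
estimate = run_staircase(lambda level: level >= 13.0)
print(estimate)  # 13.0
```

With this idealized listener the track settles into alternating between 12 dB and 14 dB, so the reversal average lands on 13 dB. A real listener responds probabilistically, and the level a staircase converges to depends on the up-down rule chosen.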
