Wikipedia bots

Wikipedia bots are Internet bots (computer programs) that perform simple, repetitive tasks on Wikipedia. One prominent example of an internet bot used in Wikipedia is Lsjbot, which has generated millions of short articles across various language editions of Wikipedia.[1]

Activities

Computer programs, called bots, have often been used to automate simple and repetitive tasks, such as correcting common misspellings and stylistic issues, or to start articles, such as geography entries, in a standard format from statistical data.[2][3][4] Additionally, there are bots designed to automatically notify editors when they make common editing errors (such as unmatched quotes or unmatched parentheses).[5]

Anti-vandalism bots like ClueBot NG, created in 2010, are programmed to detect and revert vandalism quickly.[3] Bots are able to flag edits from particular accounts or IP address ranges, as occurred after the shooting down of flight MH17 in July 2014, when edits were reported to have been made via IP addresses controlled by the Russian government.[6]

Bots on Wikipedia must be approved before activation.[7]

A bot once created up to 10,000 articles on the Swedish Wikipedia in a day.[8] According to Andrew Lih, the current expansion of Wikipedia to millions of articles would be difficult to envision without the use of such bots.[9] The Cebuano, Swedish and Waray Wikipedias are known to have large amounts of bot-created content.[10]

One notable development in recent years has been the use of bots to perform vandalism-fighting chores in place of human labor. According to recent estimates, 50% of all vandalism is already eliminated by bots. Human patrollers have congratulated the bots on their accuracy and speed in a number of remarks posted on their talk pages.[11]

Bot policy

The best method for reducing hazards without compromising functionality is Wikipedia's bot policy.[citation needed] Bots that update metatags and fix spelling "must be harmless and useful, have approval, use separate user accounts, and be operated responsibly," according to the guidelines.[7] Only once their application has been accepted by the platform and they have been publicly registered online can Wikipedia bots go live.[7]

Interactions

On Wikipedia, bots typically engage in more reciprocal and prolonged conversations than humans. However, bots in various cultural contexts may act differently, much like people. According to research, even comparatively "dumb" bots have the potential to produce complex relationships, which has important consequences for the study of artificial intelligence. Comprehending the factors that influence bot-bot interactions is essential for effective performance.[12]

Types of bots

One way to sort bots is by what activities they perform:[13][14]

- content creation, such as by procedural generation

- fixing errors, such as by copy editing or addressing link rot

- adding connectors, such as with hyperlinks to content elsewhere

- tagging content with labels

- repairing vandalism, such as ClueBot NG[15]

- clerk, updating reports

- archiving old discussions or tasks

- moderation systems to combat spam or misconduct

- recommender systems to encourage users

- notifications, such as with push technology and pull technology

- repairing broken external links, such as InternetArchiveBot

References

- ^ Gulbrandsson, Lennart (17 June 2013). "Swedish Wikipedia surpasses 1 million articles with aid of article creation bot". Wikimedia Blog. Archived from the original on 24 February 2018. Retrieved 24 February 2018.

- ^ "Wikipedia:Bots". English Wikipedia.

- ^ a b Daniel Nasaw (July 24, 2012). "Meet the 'bots' that edit Wikipedia". BBC News.

- ^ Halliday, Josh; Arthur, Charles (July 26, 2012). "Boot up: The Wikipedia vandalism police, Apple analysts, and more". The Guardian. Retrieved September 5, 2012.

- ^ Aube (March 23, 2009). "Abuse Filter is enabled". Wikipedia Signpost. Retrieved July 13, 2010.

- ^ Negin (July 21, 2014). "MH17 Wikipedia entry edited from Russian government IP address". The Stream - Al Jazeera English. Archived from the original on November 16, 2016. Retrieved July 22, 2014.

- ^ a b c Wikipedia:Bot policy

- ^ Jervell, Ellen Emmerentze (July 13, 2014). "For This Author, 10,000 Wikipedia Articles Is a Good Day's Work". The Wall Street Journal. Retrieved August 18, 2014.

- ^ Andrew Lih (2009). The Wikipedia Revolution, chapter Then came the Bots, pp. 99–106.

- ^ Wilson, Kyle (11 February 2020). "The World's Second Largest Wikipedia Is Written Almost Entirely by One Bot". Vice.

- ^ de Laat, Paul B. (2015). "The use of software tools and autonomous bots against vandalism: eroding Wikipedia's moral order?". Ethics and Information Technology. 17 (3): 175–188. doi:10.1007/s10676-015-9366-9. ISSN 1388-1957.

- ^ Tsvetkova, Milena; García-Gavilanes, Ruth; Floridi, Luciano; Yasseri, Taha (2017-02-23). Gómez, Sergio (ed.). "Even good bots fight: The case of Wikipedia". PLOS ONE. 12 (2) e0171774. arXiv:1609.04285. Bibcode:2017PLoSO..1271774T. doi:10.1371/journal.pone.0171774. ISSN 1932-6203. PMC 5322977. PMID 28231323.

- ^ Zheng, Lei (Nico); Albano, Christopher M.; Vora, Neev M.; Mai, Feng; Nickerson, Jeffrey V. (7 November 2019). "The Roles Bots Play in Wikipedia". Proceedings of the ACM on Human-Computer Interaction. 3 (CSCW): 1–20. doi:10.1145/3359317.

- ^ Dormehl, Luke (20 January 2020). "Meet the 9 Wikipedia bots that make the world's largest encyclopedia possible". Digital Trends.

- ^ "This machine kills trolls". 18 February 2014.

External links

- Wikipedia:Bots

- Wikipedia:Bot policy

- Wikipedia:History of Wikipedia bots

- Wikidata item for Wikipedia:Bots, listing all Wikipedia:Bots project pages

Wikipedia bots

Definition and Purpose

Overview of Functionality

Wikipedia bots operate as automated software scripts that execute predefined algorithms to perform routine, high-volume editing and maintenance tasks on the platform, interfacing with the MediaWiki API to read page content, apply rule-based modifications, and submit changes programmatically.[2][7] These scripts simulate human editing workflows but at scales unattainable manually, focusing on tasks such as detecting patterns indicative of errors or abuse through heuristics like edit timing, IP analysis, or content similarity checks.[8] Core functionalities include rapid reversion of vandalism, where bots like ClueBot NG scan recent edits for malicious alterations—such as nonsensical insertions or profanities—and undo them within seconds, often handling thousands of such interventions daily to preserve content integrity.[9] Other routine operations encompass formatting standardization, including the insertion of missing citation templates, category assignments, or infoboxes based on article content analysis; spell and grammar correction across multilingual entries; and automated creation of interlanguage links by cross-referencing titles across Wikipedia language editions.[8][10] Bots also support administrative efficiency by enforcing policies, such as archiving inactive talk page discussions, monitoring and blocking banned users' attempts, importing structured data from external databases (e.g., for geographic coordinates or biographical dates), and mining pages for copyright violations via hash comparisons or keyword filters.[8] To mitigate server strain and alert overload, approved bots receive a "bot" flag, which suppresses their edits from human-monitored recent changes feeds unless flagged for review, enabling sustained operation without overwhelming volunteer oversight.[2] This automation cluster—encompassing article organization, editor support, and inter-bot coordination—collectively accounts for a substantial fraction of Wikipedia's edit volume, freeing 
human contributors for substantive content development.[11]

Contributions to Editorial Efficiency

Bots automate repetitive and mundane maintenance tasks on Wikipedia, such as reverting vandalism, fixing broken links, and updating categories, thereby reducing the workload on human editors and enabling them to prioritize content creation and quality improvements.[12][13] These automated processes handle approximately 16% of all edits on the English Wikipedia, with bots comprising 17 of the top 20 most prolific editors by edit volume.[12] By performing tasks like signing unsigned comments or importing structured data—such as HagermanBot's over 5,000 edits in its first five days in December 2006—bots enforce editorial norms efficiently without human intervention.[12] Anti-vandalism bots exemplify efficiency gains through rapid detection and reversion; ClueBot NG identifies and removes inappropriate edits, such as defacements to high-profile articles, often within seconds of occurrence.[9] This automation polices content continuously, reverting violations that would otherwise require manual patrols, and supports administrative processes like monitoring the Three Revert Rule.[12] Similarly, bots like AnomieBOT conduct routine fixes for reference errors and dating tags across thousands of pages, with over 20,000 edits demonstrating minimal need for human corrections due to high accuracy.[13] Bots also accelerate initial content scaling; Rambot generated approximately 30,000 stub articles on U.S. 
towns in 2002 using Census data, providing a foundation for later human elaboration at rates of thousands per day.[9] Maintenance bots further streamline operations by updating interwiki links (AvicBot, ~6,000 edits) or statistics tables (Cyberbot I, ~8,000 edits with 97% self-reversions for precision), ensuring real-time accuracy while minimizing persistent changes.[13] Overall, these contributions mitigate editor burnout from tedious work, sustaining the encyclopedia's scale amid limited active human participation of around 77,000 editors making five or more edits monthly as of 2012.[9][12]

History

Inception and Early Bots (2001–2005)

The inception of bots on Wikipedia coincided closely with the project's launch on January 15, 2001, as the rapid accumulation of content necessitated automation for repetitive tasks such as data imports from public domain sources.[4] Early efforts included semi-automated scripts to incorporate entries from the 1897 Easton's Bible Dictionary starting in August–October 2001, marking the initial use of bot-like tools to bulk-import encyclopedic material and expand the nascent database.[4] These operations, often run by individual contributors via IP addresses or basic programs, focused on seeding articles with verifiable, non-original content to bootstrap growth, though they lacked the sophistication of later bots.[4] A landmark development occurred in October 2002 with Rambot, operated by user Derek Ramsey (known as Ram-Man), which generated approximately 30,000 stub articles on U.S. cities and counties using U.S. Census Bureau data.[9] [4] Operating over eight days from October 18 to 26, the bot created pages at a high volume—thousands per day—incorporating demographic statistics into templated prose, which boosted Wikipedia's English article count by roughly 40% to over 70,000.[9] [14] This mass generation, while drawing from empirical public records, produced uniform, minimally elaborated content that critics argued diluted quality and verifiability, prompting immediate community backlash over automation's role in core content creation.[15] [4] The controversy surrounding Rambot—including debates on whether bot-generated stubs met neutral point of view standards or overburdened human editors—catalyzed early guidelines on bot operations, emphasizing consensus approval and harm avoidance to prevent unchecked proliferation.[4] Following Rambot, bot development accelerated in 2003–2005, shifting toward maintenance and linking tasks. 
In summer 2003, Rob Hooft developed an interwiki bot in Python, initially for the Dutch Wikipedia, to automate detection and addition of cross-language links by parsing articles and querying sister projects.[4] This tool, later adapted as Robbot and operated by users like André Engels, corrected missing interwiki references across Wikipedias, enhancing navigational efficiency without altering substantive content.[4] By 2004–2005, additional bots emerged for tasks like template standardization and disambiguation fixes, reflecting growing recognition of automation's utility for scalability amid Wikipedia's expansion to millions of edits annually.[8] These early bots, often coded by volunteer developers using basic scripting languages, operated under ad hoc community oversight rather than formalized policy, laying groundwork for structured approvals while highlighting tensions between efficiency gains and editorial integrity.[4]

Proliferation and Key Milestones (2006–2012)

During the years 2006 to 2012, Wikipedia bots proliferated significantly, transitioning from niche tools to essential components of content protection and generation, driven by the platform's rapid expansion and rising vandalism pressures. Bots and semi-automated "cyborg" systems—human-guided scripts—assumed an increasingly dominant role in reverting damaging edits starting in early 2006, compensating for growing human editor workloads amid Wikipedia's article count surpassing 1 million by 2006. This shift marked a causal turning point, as manual patrolling became infeasible against surging anonymous edits, with bots handling repetitive reversions to maintain article integrity. By 2012, hundreds of bots operated across tasks, reflecting formalized oversight through groups like the Bot Approvals Group, established earlier but actively managing approvals and reconfirmations during this era to mitigate risks like edit floods.[16] Key milestones highlighted advancements in anti-vandalism capabilities. In November 2010, ClueBot NG initiated edits on the English Wikipedia, employing statistical heuristics and machine learning to detect vandalism patterns, such as anomalous edit behaviors, achieving rapid deployment and high reversion rates with minimal false positives.[17] This bot exemplified the era's technical maturation, building on prior systems to process Recent Changes feeds in real-time and preemptively safeguard pages. 
Earlier, in 2008, generator bots leveraged public datasets like NASA's to automate thousands of stub articles on minor celestial bodies, demonstrating bots' potential for scalable content importation despite later quality critiques requiring human cleanup.[18] A notable 2012 development was the launch of Lsjbot, operated by physicist Sverker Johansson, which programmatically created over 454,000 articles on the Swedish Wikipedia by mid-2013 (nearly half the edition's total), drawing from aggregated data sources to populate entries on localities and species.[19] Similar efforts extended to Cebuano and other languages, fueling debates on depth versus volume in bot-generated content, as these stubs were often thin but expanded coverage in underrepresented languages. This period's bot growth underscored empirical efficiencies in handling mundane tasks, though it also prompted scrutiny over automation's limits in ensuring encyclopedic standards without human oversight.[20]

Maturation and Policy Evolution (2013–Present)

Following the proliferation of bots in the preceding period, their maturation from 2013 onward involved greater specialization and integration with emerging Wikimedia infrastructure, particularly Wikidata, launched in 2012 but whose impact expanded significantly thereafter. Interwiki bots, previously responsible for a substantial portion of automated edits—such as maintaining cross-language links—saw their roles curtailed by the centralization of these links in Wikidata. This shift began with the Hungarian Wikipedia enabling Wikidata-provided interlanguage links on January 14, 2013, followed by broader rollout across projects, including the English Wikipedia's adoption of centralized interwiki functionality on February 11, 2013.[21][22] As a result, bots like Addbot were repurposed to remove residual hidden interwiki links from Wikipedia articles post-migration, reducing redundant edits and edit volumes in this category.[23] Wikidata's structure facilitated this by storing relational data centrally, allowing bots to focus on data import, validation, and maintenance rather than siloed link management, thereby enhancing overall efficiency while minimizing inter-bot conflicts over interwiki tasks. Empirical analyses highlight how this period marked a decline in bot-induced edit wars, which peaked prior to 2013 due to overlapping tasks like interwiki maintenance. A 2017 study of over 11 million edits by 11 prominent bots on the English Wikipedia from 2007 to 2015 identified 59 sterile conflicts, many resolved by Wikidata-related policy adjustments that eliminated duplicate efforts.[8] These conflicts, though comprising less than 0.2% of total bot edits, underscored the need for coordinated bot operations, prompting developers to refine algorithms for better task delineation and human oversight. 
Maturation also manifested in expanded bot functions, including patrolling for tagging, anti-vandalism, and content generation, with bots forming collaborative teams alongside human editors for tasks like data import and quality assurance.[24] By 2019, unsupervised learning taxonomies classified bots into nine functional categories, reflecting their evolution from basic reverters to sophisticated maintainers integral to Wikipedia's knowledge ecosystem.[25]

Policy evolution emphasized risk mitigation and procedural efficiency amid growing bot reliance. The global bot policy, enforced variably across projects, streamlined approvals by introducing automatic processes for low-risk tasks, such as interlanguage linking and double-redirect fixes, provided bots demonstrated at least 100 edits or one week of activity without disruption.[26] A November 12, 2022, resolution via requests for comment established that new content wikis default to permitting global bot access, reducing barriers for cross-project automation while requiring two-week community discussions for flag requests on established wikis.[27] These updates addressed overuse concerns, mandating separate bot accounts, edit throttling to avoid overwhelming recent changes patrols, and steward oversight to prevent conflicts, with policies explicitly allowing continuation of specialized interwiki bots only where Wikidata could not accommodate technical or policy exceptions.[28] Controversies, including prolonged bot-bot reversions documented in peer-reviewed work, informed these refinements, prioritizing harmlessness and utility without assuming source neutrality on bot efficacy.[8] By the mid-2020s, over 100 projects had adopted aligned policies, reflecting a consensus-driven maturation that balanced automation's scale (capable of edits far exceeding human capacity) with editorial integrity.[26]

Technical Foundations

Architecture and Programming

Wikipedia bots are implemented as automated scripts that interface with the MediaWiki application programming interface (API) to query, parse, and edit wiki content programmatically.[7] This client-side architecture enables bots to mimic human editing workflows, such as logging in with bot credentials, retrieving page data via GET requests, applying logic-based transformations, and submitting changes through POST actions like edit or move.[7] Core operations rely on API endpoints for actions including listing pages, searching revisions, and handling namespaces, with error handling for rate limits and conflicts to prevent disruptions.[29]
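
The read-then-write workflow described above can be sketched as two parameter sets for the MediaWiki Action API. This is a minimal illustration, not a complete bot: the helper names (`build_read_params`, `build_read_url`, `build_edit_params`) are hypothetical, login and token retrieval are omitted, and nothing is sent over the network. The parameter names themselves (`action`, `prop`, `rvslots`, `bot`, `token`, `maxlag`) are standard Action API parameters.

```python
from typing import Dict
from urllib.parse import urlencode

# Action API endpoint of the English Wikipedia.
API_URL = "https://en.wikipedia.org/w/api.php"


def build_read_params(title: str) -> Dict[str, str]:
    """Parameters for a GET request that fetches the current wikitext of a page."""
    return {
        "action": "query",
        "prop": "revisions",
        "rvprop": "content",
        "rvslots": "main",
        "titles": title,
        "format": "json",
        "maxlag": "5",  # ask the server to refuse the request when replication lag is high
    }


def build_read_url(title: str) -> str:
    """Full GET URL for the read request (hypothetical helper, for illustration)."""
    return API_URL + "?" + urlencode(build_read_params(title))


def build_edit_params(title: str, text: str, summary: str, csrf_token: str) -> Dict[str, str]:
    """Parameters for a POST request that saves new wikitext.

    The CSRF token is assumed to have been obtained beforehand in a
    logged-in session (action=query&meta=tokens).
    """
    return {
        "action": "edit",
        "title": title,
        "text": text,
        "summary": summary,
        "bot": "1",  # mark the edit as a bot edit (only honoured for accounts with the bot right)
        "token": csrf_token,
        "format": "json",
        "maxlag": "5",
    }
```

In a real bot these dictionaries would be passed to an HTTP client inside an authenticated session, and the JSON response would be checked for `error` entries such as edit conflicts or rate limiting before the next request.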

Programming languages suitable for bot development include Python, Perl, PHP, Java, JavaScript (via Node.js), and Microsoft .NET, selected for their HTTP client libraries and parsing capabilities.[7] Python dominates due to the Pywikibot framework, a comprehensive library originating from early Wikipedia automation efforts and now maintained by the Wikimedia Foundation.[29] Pywikibot encapsulates API interactions through classes like Page for content manipulation, Site for wiki-specific configurations, and Bot subclasses for task-specific scripts, supporting features such as dry-run modes for testing and configurable delays to simulate human pacing.[30] It requires MediaWiki API version 1.31 or higher and includes utilities for tasks like interwiki linking, categorization, and template replacement.[31]
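
The dry-run and pacing features mentioned above can be illustrated independently of any framework. The sketch below is not Pywikibot's API: `PacedEditor`, its parameters, and the injected `save` callback are all hypothetical names chosen for illustration, and the default interval is an arbitrary assumption.

```python
import time
from typing import Callable, List, Optional, Tuple


class PacedEditor:
    """Hypothetical helper that spaces out writes and supports a dry-run mode."""

    def __init__(
        self,
        save: Callable[[str, str], None],
        min_interval: float = 10.0,
        dry_run: bool = True,
        clock: Callable[[], float] = time.monotonic,
        sleep: Callable[[float], None] = time.sleep,
    ) -> None:
        self.save = save                  # callback that performs the real write
        self.min_interval = min_interval  # minimum seconds between two writes
        self.dry_run = dry_run            # when True, nothing is ever saved
        self.clock = clock
        self.sleep = sleep
        self._last_write: Optional[float] = None
        self.log: List[Tuple[str, str]] = []  # every requested edit, saved or not

    def edit(self, title: str, new_text: str) -> bool:
        """Record the edit and save it unless in dry-run mode. Returns True if saved."""
        self.log.append((title, new_text))
        if self.dry_run:
            return False
        if self._last_write is not None:
            remaining = self.min_interval - (self.clock() - self._last_write)
            if remaining > 0:
                self.sleep(remaining)  # wait out the rest of the interval
        self.save(title, new_text)
        self._last_write = self.clock()
        return True
```

Injecting `clock` and `sleep` keeps the pacing logic testable without real waiting; defaulting `dry_run` to `True` means a misconfigured script logs its intended edits instead of publishing them.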

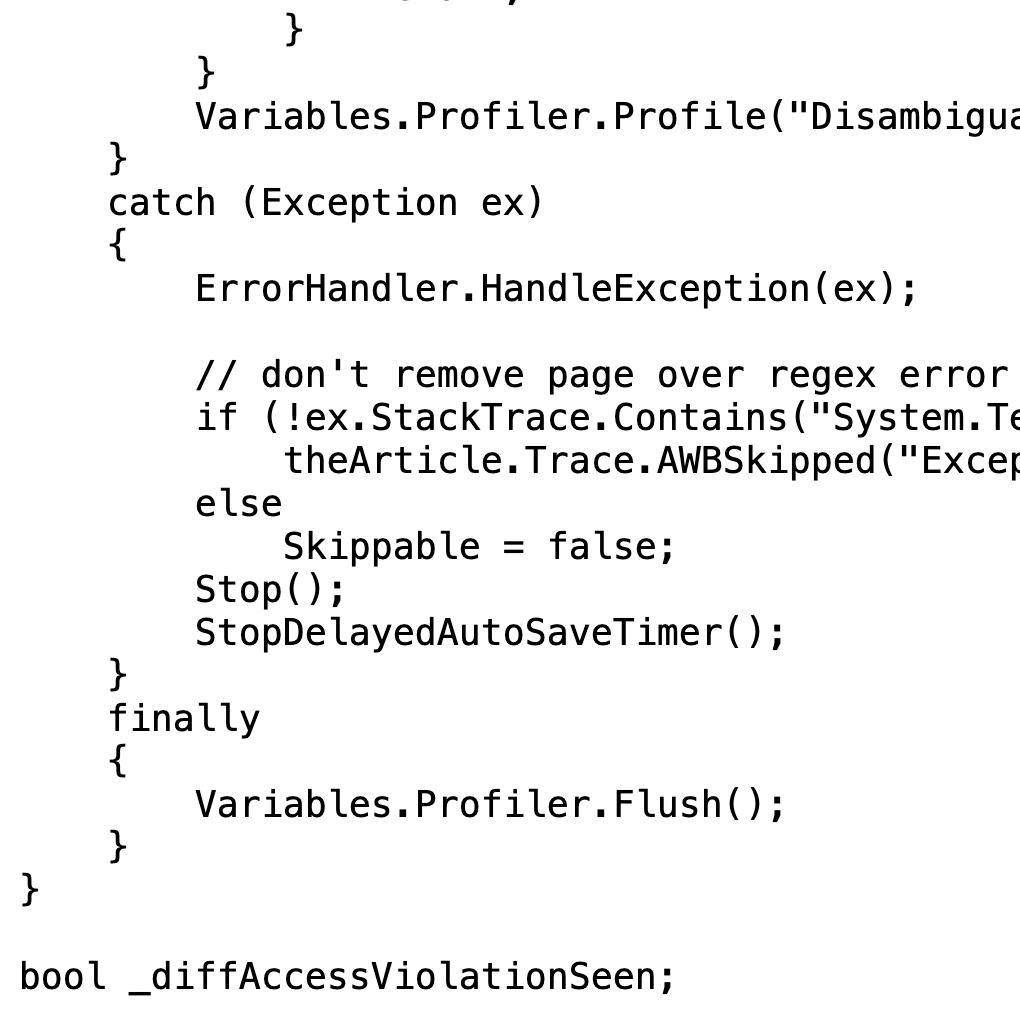

Alternative frameworks cater to specific environments; for instance, mwbot in Rust provides a modular structure for MediaWiki tools with built-in async support for concurrent operations.[32] In graphical contexts, tools like AutoWikiBrowser (AWB) offer a .NET-based interface for semi-automated edits, allowing scriptable regex replacements and list processing via a user-friendly GUI.[7] Bots typically execute in batch or looped modes, with logic to check edit summaries, recent changes, and approval flags before committing alterations, ensuring compliance with operational guidelines.[2] Hosting occurs on developer-controlled servers or Wikimedia's Toolforge platform, where jobs are scheduled via cron-like systems for periodic runs, though persistent execution demands robust error recovery to maintain reliability.[29]
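
One common way to provide the error recovery that long-running jobs need is to retry a failed task with exponentially growing delays. The following is a generic sketch under that assumption; `run_with_retries` and its parameters are hypothetical and are not taken from any of the frameworks named above.

```python
import time
from typing import Callable, TypeVar

T = TypeVar("T")


def run_with_retries(
    task: Callable[[], T],
    max_attempts: int = 4,
    base_delay: float = 1.0,
    sleep: Callable[[float], None] = time.sleep,
) -> T:
    """Run task(), retrying on any exception with delays of base_delay * 1, 2, 4, ...

    The last exception is re-raised once max_attempts calls have failed.
    """
    if max_attempts < 1:
        raise ValueError("max_attempts must be at least 1")
    attempt = 1
    while True:
        try:
            return task()
        except Exception:
            if attempt >= max_attempts:
                raise  # give up and surface the final error
            sleep(base_delay * 2 ** (attempt - 1))
            attempt += 1
```

A scheduled job could wrap each API call or each page-processing step in `run_with_retries`, so that a transient network failure delays the run rather than aborting it. A production bot would likely catch only specific, retryable exception types instead of every `Exception`.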

Tools and Hosting Platforms

Pywikibot serves as a primary Python library for automating tasks on MediaWiki sites, including Wikipedia, by interfacing with the API to perform edits, queries, and maintenance operations.[29] Developed initially for Wikipedia, it supports API versions 1.31 and higher, encompassing scripts for tasks such as page generation, categorization, and link repairs.[30] The framework operates via command-line tools and customizable modules, enabling developers to handle repetitive edits efficiently without graphical interfaces.[33] AutoWikiBrowser (AWB), a .NET-based application, functions as a semi-automated editor tailored for Windows environments, streamlining bulk operations like find-and-replace across articles and null edits for cache updates.[34] It incorporates features for regular expression processing, custom modules, and integration with Wikipedia's edit filters, reducing manual intervention in vandalism reversion and formatting corrections.[35] While less flexible for fully autonomous operations compared to scripting frameworks, AWB's user-friendly interface has supported thousands of edits by approved operators since its inception.[34] Other frameworks include Java-based options like the Java Wiki Bot Framework for object-oriented bot development and emerging libraries such as mwbot-rs in Rust, which prioritize performance for high-volume tasks.[7] These tools generally leverage the MediaWiki API for authentication and data manipulation, ensuring compatibility across Wikimedia projects.[7] Wikimedia Toolforge provides the principal hosting platform for Wikipedia bots, offering scalable infrastructure including job queues via HTCondor, persistent storage, and web service endpoints managed by the Wikimedia Foundation.[36] Launched as an evolution of prior labs environments, it hosts numerous bots for activities like citation management and anti-vandalism, with over 1,000 active tools reported in community directories as of 2024.[37] Operators deploy bots using 
containerized environments like Kubernetes for webservices or lightweight virtual machines for continuous tasks, mitigating the need for personal hardware.[38] Alternatively, bots may run on self-managed servers or third-party cloud services, though Toolforge's integration with Wikimedia's authentication systems enhances reliability and oversight.[36]

Classification of Bots

Reversion and Anti-Vandalism Bots

Reversion and anti-vandalism bots constitute a class of automated tools on Wikipedia that monitor incoming edits via the recent changes patrol and programmatically revert those classified as vandalism, thereby preserving article integrity against malicious or disruptive changes. These bots typically integrate machine learning algorithms, including neural networks, trained on labeled corpora of past edits to evaluate features such as edit length, user history, linguistic patterns, and contextual anomalies indicative of damage like obscenity insertion, factual distortion, or blanking.[39] Early implementations relied on rule-based heuristics, such as blacklists of profane terms, but contemporary systems favor probabilistic models that adapt to evolving vandalism tactics while adhering to bot approval policies mandating low false positive rates to avoid erroneous reverts of constructive contributions.[17] ClueBot NG exemplifies this category, having launched in November 2010 as a volunteer-developed system capable of processing all English Wikipedia edits in real time, often reverting suspected vandalism within 5 seconds of publication. 
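
The early rule-based approach mentioned above can be sketched as a small scoring function over a few of the listed features (added terms, blanking, user history). This is a toy illustration only: the weights, threshold, and word list are invented placeholders, and it does not reproduce ClueBot NG's model or any deployed bot's rules.

```python
import re
from dataclasses import dataclass

# Placeholder terms for illustration; real blacklists are far larger.
BLACKLIST = {"poop", "stupid"}


@dataclass
class Edit:
    old_text: str
    new_text: str
    editor_edit_count: int  # how many edits the author has made before


def _words(text: str) -> set:
    return set(re.findall(r"[a-z']+", text.lower()))


def vandalism_score(edit: Edit) -> int:
    """Sum of integer points for each heuristic that fires (weights are assumptions)."""
    score = 0
    added = _words(edit.new_text) - _words(edit.old_text)
    if added & BLACKLIST:
        score += 5  # a blacklisted term was introduced
    if edit.old_text and len(edit.new_text) < 0.1 * len(edit.old_text):
        score += 4  # most of the page was removed (blanking)
    if edit.editor_edit_count < 10:
        score += 2  # little or no editing history
    return score


def should_revert(edit: Edit, threshold: int = 6) -> bool:
    """Revert only when the combined score reaches the threshold."""
    return vandalism_score(edit) >= threshold
```

Requiring several signals to coincide before reverting is one simple way to keep false positives low; the probabilistic models described in this section replace such fixed weights with parameters learned from labeled edits.
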
Employing Bayesian neural networks, it assesses edit damage against Wikipedian norms, targeting overt issues like spam links, nonsensical additions, and promotional insertions, and has cumulatively executed over 3 million reversions since inception, contributing to the collective elimination of approximately 50% of detected vandalistic edits amid roughly 9,000 malicious daily submissions.[17][39] Downtime analyses reveal its pivotal role, as median reversion times for vandalism nearly doubled during outages, from 12.4 minutes to 21.4 minutes, though human patrollers and auxiliary bots partially compensated, underscoring the bot's efficiency in scaling quality control beyond manual capacity.[17]

Other autonomous reversion bots, such as SentryBot and CVNBot1, operate on similar principles, scanning post-publication edits and issuing automated warnings alongside reverts, though ClueBot NG dominates in volume and sophistication on the English Wikipedia.[39] These systems' effectiveness stems from rapid deployment and data-driven thresholds, yet they face challenges including algorithmic blind spots to subtle vandalism, intermittent bot-bot edit wars when conflicting assessments arise, and the need for ongoing retraining to counter adaptive vandals.[39][8] Policy frameworks, including pre-approval trials, enforce safeguards like configurable revert delays and human oversight appeals to balance automation's speed with accountability.[39]

Content Maintenance and Fixer Bots

Content maintenance and fixer bots on Wikipedia automate the correction of formatting inconsistencies, typographical errors, template standardization, citation cleanup, and other non-substantive edits aimed at enhancing article readability and compliance with style guidelines. These bots target repetitive issues that human editors might overlook or find tedious, such as repairing malformed dates, resolving duplicate parameters in infoboxes, or standardizing reference formats, thereby reducing maintenance backlogs without altering factual content.[40][25] Their operations rely on predefined rules and pattern matching to scan pages continuously, applying fixes only when criteria are met to minimize disruptions.[5] A prominent example is Citation bot, which processes citation templates by querying external databases for missing metadata, such as DOIs, PMIDs, and ISBNs, to populate fields like journal volumes, page ranges, and access dates, while reformatting inconsistent entries to adhere to Wikipedia's citation standards.[41] Introduced around 2013 and iteratively updated, it operates in modes ranging from quick scans to thorough verifications, handling millions of references across articles to combat incomplete or erroneous sourcing that could undermine verifiability.[42] Bots like this have been credited with improving citation completeness, though they occasionally require human oversight for ambiguous cases, such as non-standard sources.[25] Yobot exemplifies general fixer functionality, utilizing the AutoWikiBrowser framework to execute "genfixes"—automated corrections including relocating hatnotes to article tops, standardizing date formats per manual of style, and tagging pages for issues like orphaned references or uncited claims.[43] By 2015, Yobot had amassed over 3.7 million edits, focusing on maintenance categories to streamline human review processes.[43] Similarly, bots such as SieBot and VolkovBot specialize in link maintenance, repairing interwiki 
connections and removing spam-induced redirects, which prevents content fragmentation across language editions.[5] These bots collectively contribute to Wikipedia's operational efficiency, performing tasks that academic analyses describe as essential for sustaining the encyclopedia's scale, with maintenance edits comprising a significant portion of bot activity amid interactions that can lead to revert loops if uncoordinated.[5][8] However, their effectiveness depends on community-approved parameters and periodic audits to address over-editing or false positives, as evidenced by studies noting conflicts among fixer bots over sequential changes to the same elements.[5] As of 2017, over 2,100 approved bots included numerous maintainers, underscoring their role in upholding content quality amid growing article volumes.[3]

Generator and Import Bots

Generator bots automate the creation of new Wikipedia articles or content elements through procedural methods, typically drawing from structured external datasets such as geographical coordinates, biological taxonomies, or lists of entities to populate standardized templates. These bots enable rapid expansion of coverage in niche or underrepresented topics but often produce concise stub articles that require subsequent human elaboration for depth and verifiability.[44][45] A leading example is Lsjbot, developed by Swedish physicist Sverker Johansson starting around 2007, which employs algorithms to generate articles on settlements, species, and other catalogable items using data from sources like GeoNames and taxonomic databases. By 2023, Lsjbot had authored over 7 million articles across languages including Swedish, Cebuano, and Waray-Waray, accounting for roughly 80% of the Swedish Wikipedia's total articles and inflating the Cebuano edition to become the second-largest Wikipedia by count despite limited human contributions.[46][47][48] The bot operates at scale, creating approximately 10,000 articles daily in its active phases, primarily through template-based assembly that includes basic infoboxes, coordinates, and minimal prose derived from input data.[45] Import bots, in contrast, specialize in transferring pre-existing content or metadata from external compatible sources—such as public domain or GFDL-licensed databases—into Wikipedia, often in batches to populate categories, interlanguage links, or structured data like Wikidata items. These bots mitigate manual drudgery for large-scale data migration but demand rigorous licensing checks to avoid copyright violations, with operations typically throttled to prevent server overload. 
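
The template-based assembly attributed to generator bots above can be sketched as filling a fixed wikitext template from one structured record. This is a minimal illustration, not Lsjbot's actual code: the `Settlement` fields, the template wording, and the sample values used with it are all invented for the example.

```python
from dataclasses import dataclass


@dataclass
class Settlement:
    name: str
    country: str
    population: int
    lat: float
    lon: float


# Doubled braces are literal braces in str.format, so "{{{{stub}}}}" renders as "{{stub}}".
STUB_TEMPLATE = (
    "'''{name}''' is a settlement in {country}. "
    "It has a population of {population:,}.\n\n"
    "{{{{coord|{lat}|{lon}|display=title}}}}\n"
    "{{{{stub}}}}"
)


def render_stub(record: Settlement) -> str:
    """Produce stub wikitext for one record from the structured dataset."""
    return STUB_TEMPLATE.format(
        name=record.name,
        country=record.country,
        population=record.population,
        lat=record.lat,
        lon=record.lon,
    )
```

Because every article comes from the same template, the output is uniform and only as reliable as the input dataset, which is the trade-off between coverage and depth that the surrounding text describes.
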
Examples include scripts integrated with tools like Pywikibot for importing XML dumps or request-driven imports from databases, though specific high-profile instances remain less documented than generators due to their narrower, utility-focused scope.[7][49]

Both categories have faced scrutiny for potentially diluting encyclopedic quality: generator outputs like Lsjbot's stubs, while factually grounded in sourced data, frequently lack contextual analysis or citations beyond the input dataset, prompting community debates on their value versus human-authored content. Import bots risk introducing unvetted or outdated data if source validation falters, underscoring the need for post-import reviews. Despite approvals via Wikimedia's Bot Approvals Groups, these bots' contributions highlight tensions between automation's efficiency in scaling knowledge bases and the imperative for substantive, verifiable editing.[47][48]

Administrative and Specialized Bots

Administrative bots on Wikipedia facilitate governance-related processes by automating tagging of articles for maintenance, updating statistical trackers, and archiving discussions, thereby supporting policy enforcement and workflow efficiency without requiring constant human oversight. These bots, often granted elevated permissions akin to administrative tools, handle repetitive oversight tasks that align with community guidelines, such as applying templates for speedy deletion nominations or resolving expired discussions. As of February 2019, analysis of 1,601 active bots identified roles like "Tagger" and "Clerk," where Tagger bots add administrative markers (e.g., AnomieBot applying status templates to track article quality) and Clerk bots maintain project-wide metrics (e.g., WP 1.0 bot assessing content readiness for release versions).[25] Specialized bots target domain-specific functions beyond general maintenance, such as detecting conflicts of interest or validating technical content. For instance, Protector-role bots like COIBot scan edits for potential undisclosed paid editing or spam links, flagging violations based on predefined blacklists and external database cross-checks, which has helped mitigate undisclosed advertising attempts since its deployment in the mid-2000s.[25] Advisor bots, another specialized category, offer targeted guidance to editors; Mathbot, for example, processes and renders mathematical formulas in articles, ensuring TeX code compliance and notifying users of errors to prevent formatting disruptions. Notifier bots, such as those in the Ralbot series, deliver automated alerts for policy reminders or edit suggestions, reducing manual communication burdens. 
These roles collectively contribute to approximately 10% of English Wikipedia's total edits, enabling scalability in a platform with millions of revisions annually.[25]

Adminbots, a subset with administrator-level access (limited to about 11 such flagged accounts as of recent categorizations), perform privileged actions like mass page protections or IP range blocks in response to coordinated vandalism surges, though their use is tightly regulated to prevent overreach. Deployment requires community consensus via bot approval groups, emphasizing error rates below 0.1% for high-impact tasks. Specialized implementations extend to niche areas, including Archiver bots like Lowercase sigmabot, which systematically close inactive talk page sections after predefined inactivity thresholds (e.g., 6 months), preserving discussion history while decluttering interfaces. Such bots underscore Wikipedia's reliance on automation for administrative resilience, with ongoing evaluations ensuring alignment with neutral point of view and verifiability policies.[25]

Governance and Policies

Approval Mechanisms

The approval of bots on Wikipedia is overseen by the Bot Approvals Group (BAG), a committee comprising experienced bot developers, editors, and users responsible for evaluating proposals to ensure compliance with established policies emphasizing harmlessness, reliability, and utility.[16][12] Operators must submit detailed proposals outlining the bot's purpose, technical implementation, and anticipated edits, often including proof-of-concept demonstrations to verify functionality before full deployment.[12] This process prioritizes bots that address clear maintenance needs, such as error correction or formatting standardization, while minimizing risks like erroneous edits or disruption to human contributions.[16] Approval decisions rely on a consensus-driven model conducted through structured online discussions, where BAG members assess factors including the bot's demonstrated usefulness (e.g., number of accurate edits in trials), potential benefits relative to operational costs, and operational mode—automatic bots receive higher approval odds compared to manual ones due to their efficiency in routine tasks.[16] Trials are typically required, allowing evaluation of real-world performance, such as error rates below thresholds that could justify rejection.[12] For instance, early bots like those handling comment signing were approved rapidly—within hours—if initial outputs showed high precision, but subsequent issues prompted refinements like mandatory opt-out compliance via templates to exclude specific pages.[12] Consensus requires broad agreement; lack thereof leads to denial or suspension, enforcing accountability through ongoing monitoring post-approval.[3] Governance emphasizes human oversight in bot development and maintenance, with operators responsible for arguing the bot's value and adapting based on feedback, reflecting a decentralized approach that integrates bot activities into Wikipedia's broader editorial ecosystem.[24] Policies mandate exclusion 
mechanisms to handle objections, formalized after early incidents revealed gaps in initial approvals, ensuring bots do not override user preferences without recourse.[12] According to analyses covering over 1,600 active bots, this framework has sustained large-scale operations by vetting for reliability, though it depends on volunteer expertise, potentially introducing variability in stringency.[10]

Operational Guidelines and Flags

Operational guidelines for Wikipedia bots emphasize minimizing disruption to site performance and human editing workflows. Bots are required to implement the maxlag parameter with a maximum value of 5 seconds in API requests to prevent server overload during high-latency periods; if unsupported, operators should limit requests to no more than 10 per minute.[7] Edit rates are further constrained by best practices that prioritize consolidating multiple changes into single edits where feasible, using HTTP persistent connections and compression for efficiency, and employing exponential backoff delays on errors to avoid exacerbating load issues.[7] All bots must set a custom User-Agent header compliant with Wikimedia standards, log in with assertion tokens for security, and include mechanisms for manual disablement, such as via a dedicated control page or talk page coordination, to allow rapid halting in case of malfunction.[7]
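
The maxlag and backoff practices above can be sketched as follows. This is a minimal illustration using only the Python standard library, not a reference implementation: the endpoint URL, the User-Agent string, and the helper names (`backoff_delays`, `build_url`, `api_get`) are assumptions made for the example. It relies on the MediaWiki API's `maxlag` parameter and on the API reporting an error with code `maxlag` when the servers are lagged.

```python
import json
import time
import urllib.parse
import urllib.request

API_URL = "https://en.wikipedia.org/w/api.php"  # assumed target wiki
# Hypothetical identification string; a real bot would name itself and its operator.
USER_AGENT = "ExampleBot/0.1 (https://example.org/bot; operator@example.org)"


def backoff_delays(base=1.0, factor=2.0, cap=60.0, retries=5):
    """Yield exponentially growing delays in seconds, never exceeding `cap`."""
    delay = base
    for _ in range(retries):
        yield min(delay, cap)
        delay *= factor


def build_url(params, maxlag=5):
    """Attach format=json and the maxlag limit to a set of query parameters."""
    query = dict(params, format="json", maxlag=str(maxlag))
    return API_URL + "?" + urllib.parse.urlencode(query)


def api_get(params, opener=urllib.request.urlopen, sleep=time.sleep):
    """Send a GET request with maxlag=5; back off and retry while the API reports lag."""
    url = build_url(params)
    for delay in backoff_delays():
        request = urllib.request.Request(url, headers={"User-Agent": USER_AGENT})
        with opener(request) as response:
            data = json.load(response)
        if data.get("error", {}).get("code") != "maxlag":
            return data
        sleep(delay)  # servers are lagged: wait before trying again
    raise RuntimeError("server still lagged after all retries")
```

Passing `opener` and `sleep` as arguments is a design choice for the sketch: it lets the retry logic be exercised without network access or real waiting.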

The primary technical flag for approved bots is the "bot" user right, which suppresses the visibility of their edits in default recent changes feeds, watchlists, and related patrol tools, thereby reducing clutter for human contributors without eliminating oversight options.[7] To invoke this suppression, bot operators must explicitly set bot=True in API edit parameters (e.g., via PageObject.edit(..., bot=True) in frameworks like Pywikibot), ensuring only qualifying automated actions benefit from the flag's effects.[7] Additional flags, such as those enabling higher API query limits or autoreview capabilities, may be granted selectively to mature bots based on demonstrated reliability, though these are secondary to the core bot flag and require ongoing compliance monitoring.[7] Non-compliance with flag usage or guidelines can result in flag revocation, underscoring the emphasis on verifiable low-impact operation.[7]
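
As a rough illustration of marking an edit as a bot edit, the sketch below builds the POST parameters for the MediaWiki `action=edit` API module, which accepts a `bot` parameter and an `assert` parameter. The function name and its arguments are hypothetical, the CSRF token is assumed to have been obtained in an earlier request, and the framework-level call named in the paragraph above is not reproduced here.

```python
def build_edit_params(title, text, summary, csrf_token, flagged_bot=True):
    """Return POST parameters for a MediaWiki action=edit request.

    `flagged_bot` is an illustrative switch: when True, the edit is marked
    as a bot edit and the request asserts that the account holds the bot right.
    """
    params = {
        "action": "edit",
        "format": "json",
        "title": title,
        "text": text,
        "summary": summary,
        "maxlag": "5",        # same lag limit as for read requests
        "token": csrf_token,  # assumed to come from a prior token query
    }
    if flagged_bot:
        params["bot"] = "1"       # mark the edit as a bot edit
        params["assert"] = "bot"  # fail if the account lacks the bot right
    else:
        params["assert"] = "user"  # fail if the session is logged out
    return params
```

With `assert=bot`, a request made from an account without the bot right is rejected by the API instead of being saved as an ordinary, unflagged edit.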

Enforcement and Recent Adjustments

Enforcement of Wikipedia bot operations relies on a combination of administrative oversight, technical flags, and operator accountability to prevent disruptions. Bot accounts must obtain approval through processes such as the Bot Approvals Group (BAG) for English Wikipedia or steward requests for global status, ensuring tasks align with project guidelines before deployment.[28] Violations, including excessive edit rates or unapproved tasks, trigger immediate blocks on bot accounts until resolution, with operators required to monitor and halt malfunctioning bots promptly.[28] Global bot flags can be revoked for misuse or prolonged inactivity (defined as no edits for over one year), following notification to the operator.[28] Operational guidelines mandate separate bot accounts labeled with "bot" suffixes, edit delays of at least five seconds between actions when flagged (or one minute unflagged), and reduced rates during peak hours to allow human review via recent changes patrol.[28] Operators bear primary responsibility for compliance, including declaring autonomy levels and responding to community reports of issues; failure to do so may result in escalated blocks or task restrictions.[28] Technical enforcement includes rate-limiting on APIs and site access, with blocks for threats to server stability, as outlined in robot access policies that prioritize efficient data handling like dumps over live scraping.[50] Recent adjustments have focused on streamlining approvals and expanding access efficiency. 
In November 2022, a request for comments led to global bots being enabled by default on new content wikis, reducing setup barriers for multi-project operations while maintaining local opt-out options.[27] Implementation policies now automate approvals for low-impact tasks, such as double-redirect fixes after a one-week trial or 100 edits, bypassing full community elections for bots operating across hundreds of wikis if multi-site consensus exists.[26] These changes aim to balance scalability with oversight, though projects retain authority to enforce stricter local rules, as seen in varying adoption rates across language editions.[26] No major overhauls have been documented since 2022, with policies emphasizing continued supervision amid rising automated editing volumes.[28]

Core Activities

Routine Editing Tasks

Routine editing tasks on Wikipedia involve automated processes that address repetitive maintenance activities, such as correcting structural inconsistencies, standardizing references, and organizing content elements without altering substantive information. These tasks free human editors from mundane labor, enabling focus on content creation and verification. Bots in this domain typically operate under strict approval mechanisms to minimize disruption, targeting issues like malformed templates, outdated identifiers, or navigational aids.[24] Fixer bots exemplify routine corrections by repairing hyperlinks, resolving parameter errors in templates, and standardizing formatting. For example, bots like Xqbot systematically identify and mend broken internal or external links, while others adjust infobox or citation parameters to conform to manual of style guidelines. Citation-focused automation, often handled by connector bots, retrieves metadata from databases to populate fields such as DOIs, PMIDs, or ISBNs in reference templates, thereby enhancing traceability and reducing manual data entry. These operations occur across millions of articles, with individual bots accumulating over 1 million edits in some cases.[24][51][10] Tagger bots contribute by appending categories, maintenance templates, or quality assessments to pages, facilitating discoverability and workflow tracking. AnomieBOT, for instance, applies status tags based on predefined criteria, such as adding "needs infobox" banners or category assignments derived from article content analysis. Interwiki linking automation further supports routine connectivity by appending language version pointers, often propagating changes across sister projects via scripts like interwiki.py. 
Such tasks collectively represent a core subset of bot functions, comprising part of the approximately 10% of English Wikipedia edits performed by bots as of 2019, down from higher shares in earlier years due to refined operations and human oversight.[24][52]

Scale of Operations and Metrics

Bots on the English Wikipedia comprise hundreds of active flagged accounts, enabling automated operations across diverse tasks such as vandalism reversion, template maintenance, and data imports. A 2019 analysis identified 1,601 registered bot accounts, though active usage concentrates among fewer instances with sustained editing privileges.[25] These bots collectively generate substantial edit volumes, with individual high-activity bots like those for anti-vandalism accumulating millions of reverts annually; for instance, specialized reversion bots detect and undo a significant share of malicious changes, often exceeding 40% of detected vandalism cases through low false-positive algorithms.[5]

Edit contributions by bots represent 10-20% of total activity on the English Wikipedia, varying by period and methodology in empirical studies. Early 2010s estimates placed the figure at around 5%, reflecting conservative deployment amid governance scrutiny, while more recent quantitative reviews report 16.5% overall, rising to approximately 20% in 2023 data focused on maintenance-heavy namespaces.[52][25][53] This scale underscores bots' efficiency in scaling repetitive workloads, where they process edits at rates far exceeding human capacity, often thousands per day per bot, while comprising less than 0.1% of total editor accounts.[5] Across broader Wikimedia projects, bot edits approach half of all submissions, highlighting their foundational role in sustaining platform volume amid declining human participation.[54]

| Metric | Estimate (English Wikipedia) | Time Frame | Source Notes |
|---|---|---|---|
| Active/Registered Bots | ~300 active; 1,601 registered | 2019 | Derived from flagged accounts and registration logs; active subset handles bulk operations.[25] |
| Percentage of Total Edits | 16.5-20% | 2018-2023 | Varies by inclusion of maintenance edits; higher in non-article namespaces.[25][53] |
| Vandalism Reversions | >40% detected by top bots | Ongoing | Low-error anti-vandalism bots dominate detection metrics.[5] |
| Daily Edit Rate | Thousands per bot; ~10-20% aggregate | Recent | Enables causal scaling of mundane tasks without human fatigue.[53] |

Interactions and Dynamics

Human-Bot Collaborations

Human operators play a central role in Wikipedia bot operations by developing scripts, seeking approvals, and providing ongoing oversight to ensure bots perform repetitive tasks without disrupting editorial processes. These operators, typically experienced editors, create bot accounts distinct from their personal ones and monitor activity to address errors or conflicts, as bots lack independent judgment for complex decisions. For instance, early bots like Rambot, deployed in 2002, generated 30,000 articles but introduced 2,000 errors, prompting human interventions that refined approval policies and emphasized testing on dedicated servers provided by the Wikimedia Foundation.[55] Assisted editing tools represent a key form of human-bot collaboration, enabling editors to semi-automate routine fixes while retaining control over changes. AutoWikiBrowser (AWB), a widely used Windows-based program, allows users to apply general fixes—such as formatting corrections or link repairs—to batches of pages, with each edit requiring human review unless the account is flagged as a bot. Studies indicate that such tools accounted for approximately 12% of edits in administrative tasks during early analyses (2009 data), combining with fully automated bots to comprise nearly 28% of total edits, thus augmenting human efficiency in tasks like vandalism reversion via tools such as Huggle.[56][34] Community mechanisms further facilitate collaboration, including opt-out features for affected users and human review boards that evaluate bot proposals against criteria like harmlessness and utility. Tools like ClueBot NG detect potential vandalism algorithmically, but human operators using assisted interfaces confirm and revert edits, balancing automation with accountability. 
This hybrid approach mitigates risks observed in cases like HagermanBot (2006), where unchecked automation led to social backlash, resulting in policy adjustments for greater transparency and intervention options.[55][56]

Bot-on-Bot Conflicts

Bot-on-bot conflicts on Wikipedia arise when automated scripts, intended for maintenance tasks such as link corrections or vandalism reversion, repeatedly revert each other's edits, creating cycles of mutual undoing.[5] A 2017 analysis of over 11 million edits by 2,443 bots from 2001 to 2010 identified 793 such conflicts, primarily involving reverts on interlanguage links and article titles, where bots lacked coordination mechanisms.[5] These incidents, though often limited in scale, could persist for extended periods; for instance, one pair of bots engaged in over 1,000 mutual reverts spanning years.[57]

Specific examples include disputes over nomenclature, such as bots oscillating between "Palestine" and "State of Palestine" in infoboxes, or "Persian Gulf" versus alternative regional designations.[58] Anti-vandalism bots have also formed feedback loops, where one bot's reversion of suspected vandalism triggers another bot's counter-reversion, amplifying minor errors into repetitive edit chains.[59] Bots specializing in interwiki links, like Xqbot, EmausBot, SieBot, and VolkovBot, were frequent participants due to asynchronous updates across language versions without shared state awareness.[5]

Causal factors include independent programming without inter-bot communication protocols and overlapping task scopes, leading to emergent antagonism despite benevolent intents.[5] While media reports framed these as "wars" implying systemic failure, subsequent Wikimedia investigations emphasized that most conflicts were low-impact, self-resolving via human oversight or bot flags, and did not significantly degrade content quality.[3] By 2013, many such loops had ceased through policy refinements, including enhanced Bot Approvals Group scrutiny for revert-prone scripts.[60] Replication studies confirmed the patterns but advocated nuanced metrics distinguishing benign reverts from disruptive cycles, underscoring the need for coordination frameworks rather than alarmism.[61]

Controversies and Critiques

Edit Wars and Systemic Failures

Bots designed to automate routine tasks on Wikipedia have periodically entered into mutual revert cycles, where one bot systematically undoes changes made by another, creating patterns akin to edit wars. A peer-reviewed analysis of edits spanning 2001 to 2010 documented an increase in bot-bot reverts, averaging 105 such instances per bot on the English-language Wikipedia, with similar trends in German (24 per bot) and Portuguese (185 per bot) editions.[5] These conflicts often stemmed from uncoordinated operations, such as discrepancies in interlanguage link formatting or naming conventions across language versions, involving bots like Xqbot, EmausBot, SieBot, and VolkovBot.[5] In extreme cases, revert cycles exhibited a characteristic one-month response time and could extend over years, particularly on niche articles in fields like linguistics, highlighting gaps in preemptive coordination among independent bot developers.[5] Such interactions expose systemic vulnerabilities in Wikipedia's decentralized bot ecosystem, including insufficient mechanisms for anticipating cross-bot interference during approval processes and over-reliance on post-hoc human intervention for resolution.[3] For example, a 2010 clash between SmackBot and Yobot arose from overlapping revert logics on article maintenance, while self-induced loops in bots like the RFC bot demonstrated how rigid scripting could amplify minor errors into repetitive failures without built-in escalation halts.[3] Although comprising a small fraction of overall bot activity—amid over 2,100 approved bots on English Wikipedia—these episodes underscore causal failures in scalability, where autonomous agents optimized for speed prioritize individual tasks over holistic site stability, occasionally necessitating temporary bot suspensions or flag adjustments.[3] Later examinations reveal persistent issues from malfunctions rather than deliberate opposition, as seen with RonBot's erroneous categorization of articles, 
which triggered 429 human reverts out of approximately 8,500 edits, and Cyberbot I's 13% revert rate on 8,000 edits due to flawed template updates.[13] These cases illustrate broader systemic shortcomings, such as inadequate adaptability to evolving content structures and error propagation in high-volume operations, though self-revert features in bots like AvicBot and AnomieBOT mitigate some risks by routinely correcting their own outputs.[13] The 2013 launch of Wikidata centralized interwiki management, reducing link-related disputes, yet decentralized development continues to foster isolated failures without comprehensive simulation testing for multi-bot environments.[5] Overall, while governance via the Bot Approvals Group has curbed escalation, the persistence of uncoordinated reverts points to underlying design flaws in assuming bot behaviors remain benign in aggregate.[3]

Quality and Bias Concerns

Automated editing by bots on Wikipedia has raised concerns over the introduction of errors due to limitations in their programming, which may fail to handle novel or edge-case scenarios effectively. For instance, anti-vandalism bots like ClueBot NG, responsible for detecting 40-55% of vandalism, achieve approximately 90% accuracy in classifying edits but can produce false positives, reverting legitimate changes.[62] Such errors occur when bots encounter circumstances beyond their predefined rules, leading to unnecessary disruptions in article content.[9] Bot-on-bot interactions exacerbate quality issues, as these programs frequently engage in prolonged conflicts by undoing each other's edits, averaging 105 reverts per bot compared to just 3 for human editors between 2001 and 2010. These disputes, often involving interlanguage link bots differing on naming conventions, can persist for months or years, creating inefficiencies and potential impasses that degrade edit quality without human intervention.[5] While bots perform up to 15% of edits on English Wikipedia, their lack of adaptive coordination highlights risks of over-reliance on automation for maintenance tasks.[5][63] Regarding bias, bots programmed by Wikipedia's predominantly left-leaning editor base—itself subject to systemic participation biases—tend to enforce and perpetuate prevailing content norms that embed political skews, as evidenced by analyses showing left-oriented sentiment associations in articles.[64][65] By automating routine tasks like link additions and vandalism reversions on biased source material, bots reinforce these imbalances rather than neutralizing them, with human oversight often insufficient to correct for underlying algorithmic adherence to flawed policies.[64] Critics, including Wikipedia co-founder Larry Sanger, argue this dynamic sustains a liberal tilt, amplified by bots' scale in edit volume.[66] Empirical studies underscore that such automation does not mitigate, and may 
entrench, selection and groupthink biases inherent in the platform's content generation.[64]

External Pressures from AI Scraping

The proliferation of automated scraping bots operated by AI developers has exerted considerable strain on Wikipedia's server infrastructure, with these external agents primarily harvesting content for training large language models. In April 2025, the Wikimedia Foundation reported a marked increase in request volume from such crawlers, which disproportionately target less popular articles and contribute to 65% of the platform's most expensive outbound internet traffic.[67] [68] This surge, estimated at a 50% rise in overall bandwidth consumption, elevates hosting costs and risks operational instability, as the bots often disregard established protocols like robots.txt files designed to regulate automated access.[69] [70] These pressures indirectly affect Wikipedia's internal bot ecosystem by competing for finite computational resources, potentially delaying routine bot tasks such as vandalism reversion or template maintenance amid heightened server loads. Site administrators have responded with ad hoc measures, including case-by-case rate limiting and IP-based bans on identified scrapers, though these interventions require manual oversight rather than fully automated bot enforcement.[70] Critics argue that the absence of more proactive, bot-driven defenses—such as dynamic detection algorithms—exposes systemic vulnerabilities, diverting engineering focus from enhancing editing bots to reactive traffic management.[69] To alleviate the scraping incentive, the Wikimedia Foundation partnered with Kaggle in April 2025 to release an optimized, machine-readable dataset of Wikipedia content, encouraging AI developers to utilize this structured alternative instead of live queries that burden production servers.[71] [72] Despite this initiative, persistent non-compliance by some actors underscores ongoing external demands, prompting debates over whether Wikipedia's volunteer-driven bot policies adequately safeguard against commercial data extraction in an AI-dominated 
landscape.[68]

References

- https://meta.wikimedia.org/wiki/Bot

- https://www.mediawiki.org/wiki/Manual:Bots

- https://www.mediawiki.org/wiki/Manual:Creating_a_bot

- https://meta.wikimedia.org/wiki/Requests_for_comment/Wikidata_rollout_and_interwiki_bots

- https://meta.wikimedia.org/wiki/Bot_policy/Implementation

- https://meta.wikimedia.org/wiki/Requests_for_comment/Make_all_new_wikis_global_bot_wikis

- https://meta.wikimedia.org/wiki/Bot_policy

- https://www.mediawiki.org/wiki/Manual:Pywikibot

- https://www.mediawiki.org/wiki/Project:AutoWikiBrowser

- https://meta.wikimedia.org/wiki/Toolforge

- https://wikitech.wikimedia.org/wiki/Portal:Toolforge

- https://wikitech.wikimedia.org/wiki/Robot_policy