Recent from talks

Nothing was collected or created yet.

Canonical normal form

View on WikipediaThis article has multiple issues. Please help improve it or discuss these issues on the talk page. (Learn how and when to remove these messages)

|

In Boolean algebra, any Boolean function can be expressed in the canonical disjunctive normal form (CDNF),[1] minterm canonical form, or Sum of Products (SoP or SOP) as a disjunction (OR) of minterms. The De Morgan dual is the canonical conjunctive normal form (CCNF), maxterm canonical form, or Product of Sums (PoS or POS) which is a conjunction (AND) of maxterms. These forms can be useful for the simplification of Boolean functions, which is of great importance in the optimization of Boolean formulas in general and digital circuits in particular.

Other canonical forms include the complete sum of prime implicants or Blake canonical form (and its dual), and the algebraic normal form (also called Zhegalkin or Reed–Muller).

Minterms

[edit]For a boolean function of variables , a minterm is a product term in which each of the variables appears exactly once (either in its complemented or uncomplemented form). Thus, a minterm is a logical expression of n variables that employs only the complement operator and the conjunction operator (logical AND). A minterm gives a true value for just one combination of the input variables, the minimum nontrivial amount. For example, a b' c, is true only when a and c both are true and b is false—the input arrangement where a = 1, b = 0, c = 1 results in 1.

Indexing minterms

[edit]There are 2n minterms of n variables, since a variable in the minterm expression can be in either its direct or its complemented form—two choices per variable. Minterms are often numbered by a binary encoding of the complementation pattern of the variables, where the variables are written in a standard order, usually alphabetical. This convention assigns the value 1 to the direct form () and 0 to the complemented form (); the minterm is then . For example, minterm is numbered 1102 = 610 and denoted .

Minterm canonical form

[edit]Given the truth table of a logical function, it is possible to write the function as a "sum of products" or "sum of minterms". This is a special form of disjunctive normal form. For example, if given the truth table for the arithmetic sum bit u of one bit position's logic of an adder circuit, as a function of x and y from the addends and the carry in, ci:

| ci | x | y | u(ci,x,y) |

|---|---|---|---|

| 0 | 0 | 0 | 0 |

| 0 | 0 | 1 | 1 |

| 0 | 1 | 0 | 1 |

| 0 | 1 | 1 | 0 |

| 1 | 0 | 0 | 1 |

| 1 | 0 | 1 | 0 |

| 1 | 1 | 0 | 0 |

| 1 | 1 | 1 | 1 |

Observing that the rows that have an output of 1 are the 2nd, 3rd, 5th, and 8th, we can write u as a sum of minterms and . If we wish to verify this: evaluated for all 8 combinations of the three variables will match the table.

Maxterms

[edit]For a boolean function of n variables , a maxterm is a sum term in which each of the n variables appears exactly once (either in its complemented or uncomplemented form). Thus, a maxterm is a logical expression of n variables that employs only the complement operator and the disjunction operator (logical OR). Maxterms are a dual of the minterm idea, following the complementary symmetry of De Morgan's laws. Instead of using ANDs and complements, we use ORs and complements and proceed similarly. It is apparent that a maxterm gives a false value for just one combination of the input variables, i.e. it is true at the maximal number of possibilities. For example, the maxterm a′ + b + c′ is false only when a and c both are true and b is false—the input arrangement where a = 1, b = 0, c = 1 results in 0.

Indexing maxterms

[edit]There are again 2n maxterms of n variables, since a variable in the maxterm expression can also be in either its direct or its complemented form—two choices per variable. The numbering is chosen so that the complement of a minterm is the respective maxterm. That is, each maxterm is assigned an index based on the opposite conventional binary encoding used for minterms. The maxterm convention assigns the value 0 to the direct form and 1 to the complemented form . For example, we assign the index 6 to the maxterm (110) and denote that maxterm as M6. The complement is the minterm , using de Morgan's law.

Maxterm canonical form

[edit]If one is given a truth table of a logical function, it is possible to write the function as a "product of sums" or "product of maxterms". This is a special form of conjunctive normal form. For example, if given the truth table for the carry-out bit co of one bit position's logic of an adder circuit, as a function of x and y from the addends and the carry in, ci:

| ci | x | y | co(ci,x,y) |

|---|---|---|---|

| 0 | 0 | 0 | 0 |

| 0 | 0 | 1 | 0 |

| 0 | 1 | 0 | 0 |

| 0 | 1 | 1 | 1 |

| 1 | 0 | 0 | 0 |

| 1 | 0 | 1 | 1 |

| 1 | 1 | 0 | 1 |

| 1 | 1 | 1 | 1 |

Observing that the rows that have an output of 0 are the 1st, 2nd, 3rd, and 5th, we can write co as a product of maxterms and . If we wish to verify this:

evaluated for all 8 combinations of the three variables will match the table.

Minimal PoS and SoP forms

[edit]It is often the case that the canonical minterm form is equivalent to a smaller SoP form. This smaller form would still consist of a sum of product terms, but have fewer product terms and/or product terms that contain fewer variables. For example, the following 3-variable function:

| a | b | c | f(a,b,c) |

|---|---|---|---|

| 0 | 0 | 0 | 0 |

| 0 | 0 | 1 | 0 |

| 0 | 1 | 0 | 0 |

| 0 | 1 | 1 | 1 |

| 1 | 0 | 0 | 0 |

| 1 | 0 | 1 | 0 |

| 1 | 1 | 0 | 0 |

| 1 | 1 | 1 | 1 |

has the canonical minterm representation , but it has an equivalent SoP form . In this trivial example, it is obvious that , and the smaller form has both fewer product terms and fewer variables within each term. The minimal SoP representations of a function according to this notion of "smallest" are referred to as minimal SoP forms. In general, there may be multiple minimal SoP forms, none clearly smaller or larger than another.[2] In a similar manner, a canonical maxterm form can be reduced to various minimal PoS forms.

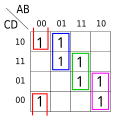

While this example was simplified by applying normal algebraic methods [], in less obvious cases a convenient method for finding minimal PoS/SoP forms of a function with up to four variables is using a Karnaugh map. The Quine–McCluskey algorithm can solve slightly larger problems. The field of logic optimization developed from the problem of finding optimal implementations of Boolean functions, such as minimal PoS and SoP forms.

Application example

[edit]The sample truth tables for minterms and maxterms above are sufficient to establish the canonical form for a single bit position in the addition of binary numbers, but are not sufficient to design the digital logic unless your inventory of gates includes AND and OR. Where performance is an issue (as in the Apollo Guidance Computer), the available parts are more likely to be NAND and NOR because of the complementing action inherent in transistor logic. The values are defined as voltage states, one near ground and one near the DC supply voltage Vcc, e.g. +5 VDC. If the higher voltage is defined as the 1 "true" value, a NOR gate is the simplest possible useful logical element.

Specifically, a 3-input NOR gate may consist of 3 bipolar junction transistors with their emitters all grounded, their collectors tied together and linked to Vcc through a load impedance. Each base is connected to an input signal, and the common collector point presents the output signal. Any input that is a 1 (high voltage) to its base shorts its transistor's emitter to its collector, causing current to flow through the load impedance, which brings the collector voltage (the output) very near to ground. That result is independent of the other inputs. Only when all 3 input signals are 0 (low voltage) do the emitter-collector impedances of all 3 transistors remain very high. Then very little current flows, and the voltage-divider effect with the load impedance imposes on the collector point a high voltage very near to Vcc.

The complementing property of these gate circuits may seem like a drawback when trying to implement a function in canonical form, but there is a compensating bonus: such a gate with only one input implements the complementing function, which is required frequently in digital logic.

This example assumes the Apollo parts inventory: 3-input NOR gates only, but the discussion is simplified by supposing that 4-input NOR gates are also available (in Apollo, those were compounded out of pairs of 3-input NORs).

Canonical and non-canonical consequences of NOR gates

[edit]A set of 8 NOR gates, if their inputs are all combinations of the direct and complement forms of the 3 input variables ci, x, and y, always produce minterms, never maxterms—that is, of the 8 gates required to process all combinations of 3 input variables, only one has the output value 1. That's because a NOR gate, despite its name, could better be viewed (using De Morgan's law) as the AND of the complements of its input signals.

The reason this is not a problem is the duality of minterms and maxterms, i.e. each maxterm is the complement of the like-indexed minterm, and vice versa.

In the minterm example above, we wrote but to perform this with a 4-input NOR gate we need to restate it as a product of sums (PoS), where the sums are the opposite maxterms. That is,

| |||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||

|

In the maxterm example above, we wrote but to perform this with a 4-input NOR gate we need to notice the equality to the NOR of the same minterms. That is,

| |||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||

|

Design trade-offs considered in addition to canonical forms

[edit]One might suppose that the work of designing an adder stage is now complete, but we haven't addressed the fact that all 3 of the input variables have to appear in both their direct and complement forms. There's no difficulty about the addends x and y in this respect, because they are static throughout the addition and thus are normally held in latch circuits that routinely have both direct and complement outputs. (The simplest latch circuit made of NOR gates is a pair of gates cross-coupled to make a flip-flop: the output of each is wired as one of the inputs to the other.) There is also no need to create the complement form of the sum u. However, the carry out of one bit position must be passed as the carry into the next bit position in both direct and complement forms. The most straightforward way to do this is to pass co through a 1-input NOR gate and label the output co′, but that would add a gate delay in the worst possible place, slowing down the rippling of carries from right to left. An additional 4-input NOR gate building the canonical form of co′ (out of the opposite minterms as co) solves this problem.

| |||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||

|

The trade-off to maintain full speed in this way includes an unexpected cost (in addition to having to use a bigger gate). If we'd just used that 1-input gate to complement co, there would have been no use for the minterm , and the gate that generated it could have been eliminated. Nevertheless, it is still a good trade.

Now we could have implemented those functions exactly according to their SoP and PoS canonical forms, by turning NOR gates into the functions specified. A NOR gate is made into an OR gate by passing its output through a 1-input NOR gate; and it is made into an AND gate by passing each of its inputs through a 1-input NOR gate. However, this approach not only increases the number of gates used, but also doubles the number of gate delays processing the signals, cutting the processing speed in half. Consequently, whenever performance is vital, going beyond canonical forms and doing the Boolean algebra to make the unenhanced NOR gates do the job is well worthwhile.

Top-down vs. bottom-up design

[edit]We have now seen how the minterm/maxterm tools can be used to design an adder stage in canonical form with the addition of some Boolean algebra, costing just 2 gate delays for each of the outputs. That's the "top-down" way to design the digital circuit for this function, but is it the best way? The discussion has focused on identifying "fastest" as "best," and the augmented canonical form meets that criterion flawlessly, but sometimes other factors predominate. The designer may have a primary goal of minimizing the number of gates, and/or of minimizing the fanouts of signals to other gates since big fanouts reduce resilience to a degraded power supply or other environmental factors. In such a case, a designer may develop the canonical-form design as a baseline, then try a bottom-up development, and finally compare the results.

The bottom-up development involves noticing that u = ci XOR (x XOR y), where XOR means eXclusive OR [true when either input is true but not when both are true], and that co = ci x + x y + y ci. One such development takes twelve NOR gates in all: six 2-input gates and two 1-input gates to produce u in 5 gate delays, plus three 2-input gates and one 3-input gate to produce co′ in 2 gate delays. The canonical baseline took eight 3-input NOR gates plus three 4-input NOR gates to produce u, co and co′ in 2 gate delays. If the circuit inventory actually includes 4-input NOR gates, the top-down canonical design looks like a winner in both gate count and speed. But if (contrary to our convenient supposition) the circuits are actually 3-input NOR gates, of which two are required for each 4-input NOR function, then the canonical design takes 14 gates compared to 12 for the bottom-up approach, but still produces the sum digit u considerably faster. The fanout comparison is tabulated as:

| Variables | Top-down | Bottom-up |

|---|---|---|

| x | 4 | 1 |

| x' | 4 | 3 |

| y | 4 | 1 |

| y' | 4 | 3 |

| ci | 4 | 1 |

| ci' | 4 | 3 |

| M or m | 4@1,4@2 | N/A |

| x XOR y | N/A | 2 |

| Misc | N/A | 5@1 |

| Max | 4 | 3 |

The description of the bottom-up development mentions co′ as an output but not co. Does that design simply never need the direct form of the carry out? Well, yes and no. At each stage, the calculation of co′ depends only on ci′, x′ and y′, which means that the carry propagation ripples along the bit positions just as fast as in the canonical design without ever developing co. The calculation of u, which does require ci to be made from ci′ by a 1-input NOR, is slower but for any word length the design only pays that penalty once (when the leftmost sum digit is developed). That's because those calculations overlap, each in what amounts to its own little pipeline without affecting when the next bit position's sum bit can be calculated. And, to be sure, the co′ out of the leftmost bit position will probably have to be complemented as part of the logic determining whether the addition overflowed. But using 3-input NOR gates, the bottom-up design is very nearly as fast for doing parallel addition on a non-trivial word length, cuts down on the gate count, and uses lower fanouts ... so it wins if gate count and/or fanout are paramount!

We'll leave the exact circuitry of the bottom-up design of which all these statements are true as an exercise for the interested reader, assisted by one more algebraic formula: u = ci(x XOR y) + ci′(x XOR y)′]′. Decoupling the carry propagation from the sum formation in this way is what elevates the performance of a carry-lookahead adder over that of a ripple carry adder.

Application in digital circuit design

[edit]One application of Boolean algebra is digital circuit design, with one goal to minimize the number of gates and another to minimize the settling time.

There are sixteen possible functions of two variables, but in digital logic hardware, the simplest gate circuits implement only four of them: conjunction (AND), disjunction (inclusive OR), and the respective complements of those (NAND and NOR).

Most gate circuits accept more than 2 input variables; for example, the spaceborne Apollo Guidance Computer, which pioneered the application of integrated circuits in the 1960s, was built with only one type of gate, a 3-input NOR, whose output is true only when all 3 inputs are false.[3][page needed][4]

See also

[edit]References

[edit]- ^ Peter J. Pahl; Rudolf Damrath (2012-12-06). Mathematical Foundations of Computational Engineering: A Handbook. Springer Science & Business Media. pp. 15–. ISBN 978-3-642-56893-0.

- ^ Lala, Parag K. (2007-07-16). Principles of Modern Digital Design. John Wiley & Sons. p. 78. ISBN 978-0-470-07296-7.

- ^ Hall, Eldon C. (1996). Journey to the Moon: The History of the Apollo Guidance Computer. AIAA. ISBN 1-56347-185-X.

- ^ "APOLLO GUIDANCE COMPUTER (AGC) Schematics". klabs.org. Rich Katz. Retrieved 2021-06-19.

To see how NOR gate logic was used in the Apollo Guidance Computer's ALU, select any of the 4-BIT MODULE entries in the Index to Drawings, and expand images as desired.

Further reading

[edit]- Bender, Edward A.; Williamson, S. Gill (2005). A Short Course in Discrete Mathematics. Mineola, NY: Dover Publications, Inc. ISBN 0-486-43946-1.

The authors demonstrate a proof that any Boolean (logic) function can be expressed in either disjunctive or conjunctive normal form (cf pages 5–6); the proof simply proceeds by creating all 2N rows of N Boolean variables and demonstrates that each row ("minterm" or "maxterm") has a unique Boolean expression. Any Boolean function of the N variables can be derived from a composite of the rows whose minterm or maxterm are logical 1s ("trues") - McCluskey, E. J. (1965). Introduction to the Theory of Switching Circuits. NY: McGraw–Hill Book Company. p. 78. LCCN 65-17394.

Canonical expressions are defined and described

- Hill, Fredrick J.; Peterson, Gerald R. (1974). Introduction to Switching Theory and Logical Design (2nd ed.). NY: John Wiley & Sons. p. 101. ISBN 0-471-39882-9.

Minterm and maxterm designation of functions

External links

[edit]- Boole, George (1848). "The Calculus of Logic". Cambridge and Dublin Mathematical Journal. III. Translated by Wilkins, David R.: 183–198.

Canonical normal form

View on GrokipediaFundamentals

Definition and overview

In Boolean algebra, a Boolean function represents a mapping from a set of binary input values to a binary output, where each input is composed of one or more variables that can take values 0 or 1.[6] For a function with variables, there are possible input combinations, each of which determines whether the function evaluates to 1 or 0.[7] A canonical normal form provides a standardized and unique representation of any Boolean function, expressed either as a sum-of-products (SOP) using all relevant minterms or as a product-of-sums (POS) using all relevant maxterms, thereby explicitly accounting for every possible combination of the variables.[8] This form ensures completeness by including terms for all variables in each product or sum, distinguishing it from general normal forms that may omit variables or include redundant terms.[7] Unlike non-canonical normal forms, which can vary in structure and allow for omissions or redundancies leading to multiple equivalent expressions, the canonical form is unique up to the ordering of variables and terms, facilitating direct comparison of function equivalence.[8] The general SOP canonical form is given bywhere the are the minterms corresponding to the input combinations for which .[7] Similarly, the POS canonical form is

where the are the maxterms corresponding to the input combinations for which .[6] Minterms and maxterms, which form the building blocks of these expressions, are detailed in subsequent sections.

Historical development

The foundations of canonical normal forms in Boolean algebra emerged in the mid-19th century alongside George Boole's formalization of logic as an algebraic system in his 1854 work An Investigation of the Laws of Thought, which laid the groundwork for expressing logical expressions systematically. However, the explicit development and popularization of canonical forms for Boolean functions occurred in the mid-20th century within digital logic and switching theory. Claude Shannon's 1938 master's thesis, A Symbolic Analysis of Relay and Switching Circuits, marked a pivotal advancement by applying Boolean algebra to electrical circuits and introducing the disjunctive normal form as a standard representation for relay networks, bridging theoretical logic to practical engineering.[9] During the 1930s and 1940s, canonical forms gained traction in switching theory for designing relay-based circuits, reflecting the era's focus on electromechanical computing devices. Post-World War II, these concepts were formalized in computer science literature, notably through Willard V. Quine's 1952 paper "The Problem of Simplifying Truth Functions" and Edward J. McCluskey's 1956 paper on Boolean function minimization, both of which utilized canonical sum-of-products representations as a starting point for optimization algorithms.[10] Concurrently, Maurice Karnaugh's 1953 introduction of the map method for combinational logic synthesis further embedded canonical forms in simplification techniques, emphasizing their role in efficient circuit design.[11] The term "canonical" in this context was borrowed from 19th-century mathematics, where it denoted unique standard representations, such as Camille Jordan's canonical form for matrices in the 1870s.[12] By the 1970s, canonical normal forms shifted from theoretical constructs to practical tools in very-large-scale integration (VLSI) design, enabling automated logic synthesis in integrated circuits as detailed in Carver Mead and Lynn Conway's influential 1980 textbook Introduction to VLSI Systems. From the 1960s onward, their uniqueness property was increasingly recognized in artificial intelligence for automated theorem proving, where conjunctive and disjunctive normal forms facilitate satisfiability checking and resolution-based inference.[13]Canonical Sum-of-Products Form

Minterms

In Boolean algebra, a minterm is defined as a product (logical AND) of all variables in a Boolean function, where each variable appears exactly once, either in its complemented form (denoted by a prime or overbar, e.g., ) or uncomplemented form (e.g., ), corresponding to a specific input combination that evaluates the function to true.[14] This representation ensures that the minterm is true only for that exact combination of variable values and false otherwise, serving as the fundamental building block for expressing Boolean functions in their most disaggregated product form.[14] A key property of minterms is that each one uniquely corresponds to a single row in the truth table of the Boolean function where the output is 1, capturing precisely those input conditions under which the function holds.[15] For a function with variables, there are exactly possible minterms, as each variable can be either complemented or not, exhausting all possible input combinations.[14] Minterms are typically notated using literals, which are the variables or their complements; for example, with two variables and , the minterms are expressed as , , , and . These literals are combined via the AND operation to form the product term. To illustrate, consider a two-variable case: the minterm is true only when both and ; when and ; when and ; and when and .[14] Minterms constitute the atomic conjunctions in the disjunctive normal form (DNF) of a Boolean function, where the full expression is a disjunction (logical OR) of selected minterms corresponding to the function's true outputs.[6]Indexing minterms

In Boolean functions with variables, minterms are systematically indexed using decimal numbers ranging from 0 to , where each index corresponds to a unique binary representation that determines the literal form of the minterm. The binary digits of the index indicate whether each variable is included in its uncomplemented or complemented form: a bit value of 1 includes the uncomplemented variable, while a bit value of 0 includes its complement. For instance, with three variables , , and (where is the most significant bit), the index 4 in binary is 100, yielding the minterm , as the first bit (1) selects , the second bit (0) selects , and the third bit (0) selects .[16][17] This indexing enables compact notation for expressing the canonical sum-of-products form using the summation symbol , where the function is written as , listing the decimal indices of the minterms that evaluate to 1. For example, if a function is true for input combinations corresponding to indices 0, 2, and 3, it is denoted as , equivalent to the disjunction . This shorthand directly maps to the rows of a truth table where the output is 1.[16][17] The decimal indexing follows the natural binary order, facilitating alignment with truth table rows and serving as a convenient shorthand in digital design tools for specifying functions without expanding full product terms. It also aligns with the positioning of minterms in Karnaugh maps, where cell labels reflect these binary-derived indices, though the map uses Gray code for adjacency. Formally, for an index with binary representation , the corresponding minterm is: where are the variables ordered from least to most significant bit. This system underpins the construction of canonical sum-of-products expressions.[16][17][18]Minterm canonical form

The minterm canonical form, also referred to as the canonical sum-of-products (SOP) form, is constructed directly from a Boolean function's truth table by identifying all input combinations where the function output is 1 and forming the corresponding minterms, then summing these minterms using the OR operation. Each minterm in this sum is a complete product term that incorporates every variable of the function exactly once, either in its uncomplemented or complemented form, based on the binary value of the input row (1 for uncomplemented, 0 for complemented); this guarantees no variables are omitted, providing a fully expanded and standardized representation.[7][6] The full expression for the minterm canonical form is written as , where the summation is over all indices corresponding to the truth table rows with output 1, and denotes the minterm associated with the binary encoding of (with the least significant bit typically assigned to the first variable). This notation compactly captures the disjunctive normal form (DNF) while explicitly linking back to the truth table for verification and implementation in logic circuits.[7] Every Boolean function has exactly one unique minterm canonical form, up to reordering of the summands, owing to the fact that the set of minterms where the function is 1 uniquely determines its truth table and thus its algebraic representation in this expanded SOP structure.[19][8] To illustrate, consider the two-variable exclusive-OR function , whose truth table yields 1 for inputs (0,1) and (1,0), corresponding to minterms and ; the canonical form is thus .[8] For a three-variable example, if based on the truth table, the expanded form is where each term fully specifies the variable polarities for the respective minterm indices (2: 010, 3: 011, 6: 110, 7: 111).[7]Canonical Product-of-Sums Form

Maxterms

In Boolean algebra, a maxterm is defined as a sum (logical OR) of all variables in a function, where each variable appears exactly once, either in its uncomplemented form or complemented form (denoted by a prime or overbar), such that the maxterm evaluates to false (0) for one specific input combination where the overall function is false.[6] This structure ensures that the maxterm represents the negation of a single minterm, capturing the conditions under which the function does not hold.[20] Each maxterm corresponds precisely to one row in the truth table of an -variable Boolean function where the output is 0, and there are exactly possible maxterms for variables, forming the complete set of atomic disjunctions that can express any such function's falsifying assignments.[7] These maxterms serve as the fundamental building blocks for the canonical product-of-sums (POS) form, also known as conjunctive normal form (CNF) in propositional logic, where the function is the product (logical AND) of selected maxterms.[6] Maxterms are typically denoted using literals, such as , (or ) for variable , combined with the OR operator. For example, with two variables and , the four maxterms are:- (true except when , )

- (true except when , )

- (true except when , )

- (true except when , )

These examples illustrate how each maxterm excludes exactly one input combination by being false only for that case.[21]

Indexing maxterms

Maxterms in a canonical product-of-sums expression for an n-variable Boolean function are indexed numerically from 0 to . The binary representation of each index k determines the literals in the corresponding maxterm : for each variable (with i from 0 to n-1, typically LSB to MSB), a bit value of 0 indicates the uncomplemented literal , while a bit value of 1 indicates the complemented literal . Thus, , where if the i-th bit of k is 1, and otherwise.[7][22] For n=3 variables A (MSB), B, C (LSB), the index 3 corresponds to binary 011, yielding . This maxterm evaluates to 0 only for the input combination A=0, B=1, C=1 (binary 011), aligning with the row in the truth table where the function value is 0 for that position.[7] The product-of-maxterms form is compactly notated as , where list enumerates the indices of the selected maxterms; for instance, denotes . This notation facilitates concise specification of the canonical POS expression.[7] This indexing scheme directly mirrors the truth table rows where the function equals 0, enabling straightforward selection of maxterms from the function's specification. It proves advantageous for dual representations, as the maxterm indices for F correspond to the minterm indices for the complementary function , with the specific relation accounting for the inverted binary encoding of literals between minterms and maxterms. Such duality supports transformations and verifications in Boolean function analysis.[22][7] The approach is detailed in standard treatments of digital logic design, where it underpins the construction of canonical forms.[22]Maxterm canonical form

The maxterm canonical form, also known as the canonical product-of-sums expression, represents a Boolean function as the logical product (AND) of all maxterms corresponding to the input combinations where the function output is 0. This form ensures that every maxterm includes all variables of the function, either in complemented or uncomplemented form, with no omissions, guaranteeing a complete and standardized representation.[7] Construction begins with the truth table of the Boolean function: for each row where the output is 0, identify the binary index of that input combination, and select the maxterm , which is a sum (OR) of literals that evaluates to 0 precisely for that input. The canonical form is then the product of these selected maxterms, ensuring the overall expression is 0 exactly when the function is 0 and 1 otherwise.[20][7] The full mathematical expression for a function with variables is where denotes the set of indices (from 0 to ) for which in the truth table, and each is the maxterm defined by the binary representation of .[7][20] This representation is unique for any given Boolean function, up to the commutative property of the product, as it exhaustively captures the function's zero locations without redundancy or ambiguity.[7] For example, consider the two-variable exclusive-OR function , with truth table outputs of 0 at inputs (0,0) and (1,1), corresponding to indices 0 and 3. The canonical form is .[20] To expand for three variables, suppose has outputs of 0 at indices 0, 1, 2, and 4 (binary 000, 001, 010, 100). The canonical form is where each maxterm is constructed by complementing literals according to the 1-bits in the index .[7]Properties and Transformations

Uniqueness and equivalence

The canonical sum-of-products (SOP) form of a Boolean function with variables is unique because it is constructed as the disjunction of exactly those minterms corresponding to the input combinations where , ensuring an exhaustive and non-redundant representation that directly mirrors the function's truth table without any omissions or duplicates.[7] Similarly, the canonical product-of-sums (POS) form is unique, comprising the conjunction of precisely those maxterms where , as each maxterm includes all variables in complemented or uncomplemented form, making the overall product distinct for the given function.[19] This uniqueness stems from the atomic nature of minterms and maxterms in Boolean algebra: for variables, there are exactly minterms, each true for one unique input assignment, allowing the canonical SOP to include only the relevant subset without overlap or redundancy.[7] The canonical forms are logically equivalent to the original function because they evaluate to the same output for all possible inputs; the SOP covers precisely the cases where by activating the corresponding minterm, while the POS enforces only at the specified maxterms.[19] To verify equivalence formally via truth table, consider any input vector . In the canonical SOP, exactly one minterm (the product of literals matching ) evaluates to 1, while all others are 0; thus, the disjunction equals 1 if and only if is included, which by construction occurs precisely when .[7] A parallel argument holds for the POS, where exactly one maxterm evaluates to 0 for , making the conjunction 0 if and only if that maxterm is included (i.e., when ). In canonical forms, Boolean properties such as idempotence (duplicating terms) and absorption (one term subsuming another) do not apply for simplification, as the full expansion into distinct minterms or maxterms eliminates any literal overlaps or redundancies present in non-canonical expressions.[7] The two forms are inter-related through De Morgan's laws: the canonical POS for is equivalent to the complemented and dualized canonical SOP for , preserving logical equivalence.[7] Notably, both forms exhibit exponential size complexity of in the worst case, underscoring the exponential growth inherent in exhaustive representations for large .[19]Conversion between forms

Converting between the canonical sum-of-products (SOP) form and the canonical product-of-sums (POS) form relies on the dual relationship inherent in Boolean algebra. The canonical POS form of a function is equivalent to the canonical SOP form of its complement , obtained by applying De Morgan's theorem to revert to the POS of .[8] This duality arises because minterms of correspond to the rows where , while maxterms of correspond to the rows where in the truth table.[7] To convert from canonical SOP to POS, first identify the minterms of by taking the complement of the indices used in the SOP of (i.e., the indices where ). These minterms of directly become the maxterms of , as the maxterm indices for are the positions where . The resulting POS form is the product of these maxterms. The reverse conversion from POS to SOP follows by identifying the maxterms of (complement indices) and treating them as minterms of .[7] For example, consider a two-variable function in canonical SOP form: . The minterms are at indices 1 and 2, so at indices 0 and 3. Thus, the canonical POS form is .[7] In general, if the canonical SOP form is for indices , then the canonical POS form is where (the complement set of indices).[7] This conversion preserves the canonicity of the forms, ensuring each represents the complete disjunctive or conjunctive normal form without simplification, though the resulting expression may not be minimal. It is particularly useful for verifying duality or checking equivalence between representations.[8]Minimization Techniques

Minimal SOP and POS forms

Minimal sum-of-products (SOP) and product-of-sums (POS) forms represent the most compact equivalent expressions for a Boolean function in their respective normal forms, achieved by eliminating redundant terms and literals from the canonical representations. These minimal forms retain the logical equivalence of the original function but use fewer product terms in SOP (or sum terms in POS) and reduced literal counts overall, making them preferable for practical implementations where efficiency is key.[23] In contrast to canonical SOP and POS forms, which exhaustively include all relevant minterms or maxterms and can require up to terms for variables, minimal forms significantly reduce complexity by merging overlapping terms through Boolean simplification principles. This reduction directly translates to fewer logic gates and interconnections in digital circuits, lowering costs, power consumption, and propagation delays. For instance, canonical forms ensure universality but are inefficient for synthesis, whereas minimal forms optimize hardware resource utilization without altering functionality. Key properties of minimal SOP and POS forms include the possibility of multiple equivalent minimal expressions for the same function, as different combinations of terms may achieve the same literal or term count minimization. Minimality is generally evaluated by the total number of literals (counting each variable appearance) or the number of terms, with the former often prioritized in circuit design to minimize gate inputs. Additionally, while canonical forms are unique up to term ordering, minimal forms may not be, requiring selection criteria based on implementation constraints. The challenge of obtaining the absolute minimal form is computationally intensive, as the exact minimization problem for Boolean functions in SOP or POS is NP-hard.[24] A representative example illustrates this reduction: the canonical SOP expression simplifies to the minimal SOP . Here, the original three-term form with five literals collapses to two terms with two literals by applying absorption and consensus theorems, demonstrating how redundancies in canonical expansions are removed. In terms of scale, for functions with variables, canonical forms scale exponentially with potential terms, whereas minimal forms can achieve linear complexity in the best case, highlighting their practical superiority despite the hardness of optimization.[25]Simplification methods

Simplification methods for canonical normal forms in Boolean algebra aim to reduce the sum-of-products (SOP) or product-of-sums (POS) expressions to more compact forms while preserving functionality. Algebraic techniques, such as the consensus theorem, provide a foundational approach by identifying and eliminating redundant terms. The consensus theorem states that for variables , , and , the expression , allowing the removal of the consensus term without altering the function's output. This theorem facilitates simplification by adding temporary consensus terms to enable absorption or further reduction, as demonstrated in examples where intermediate terms are introduced to absorb others.[26] Graphical methods like Karnaugh maps (K-maps) offer a visual aid for grouping adjacent minterms that differ by one literal, enabling intuitive simplification for up to four or five variables. Introduced by Maurice Karnaugh, K-maps arrange minterms in a grid where adjacency reflects single-variable changes, allowing groups of 2, 4, or 8 ones to form prime implicants by eliminating shared literals.[11] For more variables, the tabular Quine-McCluskey algorithm systematically combines minterms into implicants through iterative pairing and selection of prime implicants, suitable for computer implementation beyond manual limits. Developed from Willard V. Quine's theoretical framework on truth function simplification and extended by Edward J. McCluskey, this method ensures exhaustive coverage by generating all prime implicants and selecting a minimal set.[27][28] The general process involves identifying groups of minterms that share common literals to form larger implicants, then covering all required minterms with a minimal set of prime implicants to avoid redundancy. Prime implicants are the largest possible groups that cannot be further expanded, and the covering step selects a combination that fully represents the function with the fewest terms. For instance, consider a three-variable function , where the minterms are , , , and . Using a K-map:| B'C' | B'C | BC | BC' | |

|---|---|---|---|---|

| A' | 1 | 1 | 1 | 0 |

| A | 0 | 0 | 1 | 0 |

Applications

Digital circuit design

In digital circuit design, canonical sum-of-products (SOP) forms directly map to two-level AND-OR gate implementations, where each minterm is realized as an AND gate feeding into a multi-input OR gate, providing a straightforward hardware realization of Boolean functions derived from truth tables.[8] Similarly, canonical product-of-sums (POS) forms correspond to OR-AND structures, with maxterms implemented using OR gates followed by an AND gate, enabling dual representations for the same logic function.[8] These forms are commonly employed in hardware description languages (HDLs) such as Verilog for initial behavioral specifications, allowing designers to express complete logic coverage before optimization.[30] The systematic nature of canonical forms facilitates automation in synthesis tools, particularly for field-programmable gate arrays (FPGAs), where they enable algorithmic mapping of logic to lookup tables (LUTs) and ensure exhaustive coverage of input combinations during place-and-route processes.[31] This approach also verifies the completeness of a design by confirming that all minterms or maxterms align with the specified truth table, reducing errors in early-stage validation.[32] However, the exponential growth in the number of terms—with up to minterms for variables—renders canonical forms impractical for circuits with more than a few inputs, often necessitating subsequent minimization to control gate count and delay.[33] In application-specific integrated circuit (ASIC) design, canonical forms support formal verification techniques, such as logic equivalence checking, by providing a unique, exhaustive representation that allows tools to compare pre- and post-optimization netlists against a reference specification using canonical structures like reduced ordered binary decision diagrams (ROBDDs). Specifically, programmable logic array (PLA) and programmable array logic (PAL) devices leverage canonical SOP forms, as their architectures—with programmable AND planes generating product terms and OR planes summing them—naturally accommodate unminimized minterm expansions for implementing sum-of-products logic without additional restructuring.[34] This integration highlights the foundational role of canonical forms in programmable logic, though practical designs typically proceed to minimization for efficiency.Implementation examples

A canonical sum-of-products (SOP) form can be directly implemented using AND gates for each minterm followed by a single OR gate to combine them. For example, consider the function , which requires three 3-input AND gates—one for each minterm—and a 3-input OR gate. This two-level structure realizes the function, but high fan-in requirements for AND and OR gates (e.g., more than four inputs) often necessitate cascading multiple smaller gates, increasing propagation delay and complexity.[8] Implementing a canonical SOP form using only NOR gates involves applying De Morgan's theorems to transform the expression, typically resulting in a non-standard multi-level circuit rather than the direct two-level AND-OR structure. For instance, the SOP can be rewritten using double complementation as , forming a product-of-sums inverted structure, which requires NOR gates for the OR terms and an outer NOR for the AND equivalent—yielding three levels instead of two. A pure NOR-only realization often demands additional inversion levels, elevating the gate count and delay compared to native OR-AND. While hybrid NAND-NOR configurations can approximate SOP more efficiently, restricting to NOR gates alone complicates the canonical mapping.[35][31] Dually, a canonical product-of-sums (POS) form can be implemented using OR gates for each maxterm followed by a single AND gate, and when realized with only NAND gates, it mirrors the SOP-NOR transformation via De Morgan's theorems for efficiency in NAND-dominant technologies. For example, the POS simplifies in some cases, but in general, it corresponds to the function that is zero at specific inputs (e.g., 001 and 110), expressible as . This form allows implementation as a complemented SOP using a two-level NAND-NAND structure, with an additional inverter if the uncomplemented output is required, often achieving two levels with complemented inputs in NAND-optimized technologies. This duality makes POS with NANDs suitable for technologies where NAND gates are optimized, reducing transistor count in complementary setups.[31] In CMOS technology, canonical POS forms can favor balanced PMOS and NMOS transistor arrangements by distributing series and parallel configurations across OR-AND levels, potentially improving drive strength symmetry compared to unbalanced SOP implementations. Conversely, 1960s TTL logic preferred canonical SOP forms due to the foundational use of NAND gates in early TTL families like the 7400 series, enabling straightforward two-level NAND-NAND realizations.[36][37] For a concrete illustration, consider the canonical SOP for a two-variable XOR function, . This requires two 2-input AND gates (one for , one for ) feeding into a 2-input OR gate. A text-based diagram of the gate structure is as follows:Inputs: A ───┬─── NOT ─── A' ─── AND ───┐

│ │

│ B ──────────────────────┼─── OR ─── F

│ │

└─── AND ───────────────────┘

│

B' (from NOT on B)

Inputs: A ───┬─── NOT ─── A' ─── AND ───┐

│ │

│ B ──────────────────────┼─── OR ─── F

│ │

└─── AND ───────────────────┘

│

B' (from NOT on B)