Wikipedia

Impulse response

In signal processing and control theory, the impulse response, or impulse response function (IRF), of a dynamic system is its output when presented with a brief input signal, called an impulse (δ(t)). More generally, an impulse response is the reaction of any dynamic system in response to some external change. In both cases, the impulse response describes the reaction of the system as a function of time (or possibly as a function of some other independent variable that parameterizes the dynamic behavior of the system).

In all these cases, the dynamic system and its impulse response may be actual physical objects, or may be mathematical systems of equations describing such objects.

Since the impulse function contains all frequencies (see the Fourier transform of the Dirac delta function, which shows that the delta function has infinite bandwidth), the impulse response defines the response of a linear time-invariant system at all frequencies.

Mathematical considerations

Mathematically, how the impulse is described depends on whether the system is modeled in discrete or continuous time. The impulse can be modeled as a Dirac delta function for continuous-time systems, or as the discrete unit sample function for discrete-time systems. The Dirac delta represents the limiting case of a pulse made very short in time while maintaining its area or integral (thus giving an infinitely high peak). While this is impossible in any real system, it is a useful idealization. In Fourier analysis theory, such an impulse comprises equal portions of all possible excitation frequencies, which makes it a convenient test probe.

Any system in a large class known as linear, time-invariant (LTI) is completely characterized by its impulse response. That is, for any input, the output can be calculated in terms of the input and the impulse response. (See LTI system theory.) The impulse response of a linear transformation is the image of Dirac's delta function under the transformation, analogous to the fundamental solution of a partial differential operator.

It is usually easier to analyze systems using transfer functions as opposed to impulse responses. The transfer function is the Laplace transform of the impulse response. The Laplace transform of a system's output may be determined by the multiplication of the transfer function with the input's Laplace transform in the complex plane, also known as the frequency domain. An inverse Laplace transform of this result will yield the output in the time domain.

To determine an output directly in the time domain requires the convolution of the input with the impulse response. When the transfer function and the Laplace transform of the input are known, this convolution may be more complicated than the alternative of multiplying two functions in the frequency domain.
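The equivalence between time-domain convolution and frequency-domain multiplication can be illustrated with a discrete analogue. The sketch below uses the discrete Fourier transform in place of the Laplace transform, and the input `x` and impulse response `h` are arbitrary illustrative choices rather than anything taken from the article.

```python
import numpy as np

rng = np.random.default_rng(0)
x = rng.standard_normal(64)      # arbitrary input signal (illustrative)
h = 0.8 ** np.arange(16)         # example impulse response: decaying exponential

# Time domain: direct linear convolution of input and impulse response.
y_time = np.convolve(x, h)

# Frequency domain: zero-pad both signals to the full output length,
# multiply their spectra, and transform back.
n = len(x) + len(h) - 1
y_freq = np.fft.irfft(np.fft.rfft(x, n) * np.fft.rfft(h, n), n)

print(np.allclose(y_time, y_freq))
```

Zero-padding to `len(x) + len(h) - 1` samples is what makes the circular convolution implied by the DFT agree with the linear convolution.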

The impulse response, considered as a Green's function, can be thought of as an "influence function": how a point of input influences output.

Practical applications

In practice, it is not possible to perturb a system with a perfect impulse. One can use a brief pulse as a first approximation. Limitations of this approach include the duration of the pulse and its magnitude. The measured response approximates the ideal one provided the pulse is short compared with the time scale of the system's response. Additionally, in many systems, a pulse of large intensity may drive the system into the nonlinear regime. Other methods exist to construct an impulse response. The impulse response can be calculated from the input and output of a system driven with a pseudo-random sequence, such as maximum length sequences.[1] Another approach is to take a sine sweep measurement and process the result to get the impulse response.[2]
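A maximum length sequence measurement can be sketched in a few lines. The example below is a simulation under stated assumptions: `h_true` is a hypothetical system invented for the demonstration, the excitation is assumed to repeat periodically so that one recorded period equals a circular convolution, and there is no noise. It relies on the fact that a ±1 maximum length sequence of length N has a circular autocorrelation of N at lag zero and −1 at every other lag.

```python
import numpy as np
from scipy.signal import max_len_seq

# Hypothetical "unknown" system, used only to simulate a measurement.
h_true = np.array([1.0, 0.5, -0.25, 0.125])

nbits = 8
seq, _ = max_len_seq(nbits)      # 0/1 sequence of length 2**nbits - 1
s = 1.0 - 2.0 * seq              # map to a +/-1 excitation
N = len(s)

# One period of the steady-state response to the repeated MLS
# is the circular convolution of the excitation with h.
h_pad = np.zeros(N)
h_pad[: len(h_true)] = h_true
S = np.fft.fft(s)
y = np.fft.ifft(S * np.fft.fft(h_pad)).real

# Circular cross-correlation of the output with the excitation.
r = np.fft.ifft(np.fft.fft(y) * np.conj(S)).real

# Because of the MLS autocorrelation, r = (N + 1) * h - sum(h) and sum(r) = sum(h);
# undo the scaling and the constant offset.
h_est = (r + r.sum()) / (N + 1)

print(np.round(h_est[:6], 6))
```

In a real measurement the recovered response also contains noise and, if the system is not perfectly linear, distortion artifacts, so the exact recovery seen here should not be expected.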

Loudspeakers

Impulse response loudspeaker testing was first developed in the 1970s. Loudspeakers suffer from phase inaccuracy (delayed frequencies) which can be caused by passive crossovers, resonance, cone momentum, the internal volume, and vibrating enclosure panels.[3] The impulse response can be used to indicate when such inaccuracies can be improved by different materials, enclosures or crossovers.

Loudspeakers have a physical limit to their power output, thus the input amplitude must be limited to maintain linearity. This limitation led to the use of inputs like maximum length sequences in obtaining the impulse response.[4]

Electronic processing

Impulse response analysis is a major facet of radar, ultrasound imaging, and many areas of digital signal processing. An interesting example is found in broadband internet connections. Digital subscriber line service providers use adaptive equalization to compensate for signal distortion and interference from using copper phone lines for transmission.

Control systems

In control theory the impulse response is the response of a system to a Dirac delta input. This proves useful in the analysis of dynamic systems; the Laplace transform of the delta function is 1, so the impulse response is equivalent to the inverse Laplace transform of the system's transfer function.
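As a small numerical check of this relationship, the sketch below takes an illustrative first-order transfer function H(s) = 1/(s + a), whose inverse Laplace transform is e^{-at} for t ≥ 0, and compares that closed form with the impulse response computed by SciPy. The value of `a` and the time grid are arbitrary choices for the example.

```python
import numpy as np
from scipy import signal

a = 2.0                                           # illustrative pole location at s = -a
sys = signal.TransferFunction([1.0], [1.0, a])    # H(s) = 1 / (s + a)

t = np.linspace(0.0, 3.0, 301)
t_out, h_num = signal.impulse(sys, T=t)           # numerically computed impulse response

h_exact = np.exp(-a * t_out)                      # inverse Laplace transform of H(s)
print(np.max(np.abs(h_num - h_exact)))            # largest deviation between the two
```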

Acoustic and audio applications

In acoustic and audio settings, impulse responses can be used to capture the acoustic characteristics of many things. The reverb at a location, the body of an instrument, certain analog audio equipment, and amplifiers are all emulated by impulse responses. The impulse response is convolved with a dry signal in software, often to create the effect of a physical recording. Various packages containing impulse responses from specific locations are available online.[5]
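The convolution step can be sketched as follows. Both signals here are synthetic stand-ins: the "room" is exponentially decaying noise rather than a measured impulse response, and the dry signal is a short tone burst, so the code only illustrates the mechanics of convolution reverb.

```python
import numpy as np
from scipy.signal import fftconvolve

fs = 8000                                    # sample rate in Hz (illustrative)
rng = np.random.default_rng(1)

# Synthetic impulse response: 0.5 s of noise with an exponential decay.
t = np.arange(int(0.5 * fs)) / fs
ir = rng.standard_normal(t.size) * np.exp(-t / 0.1)
ir /= np.max(np.abs(ir))

# Dry signal: a 0.25 s burst of a 440 Hz tone.
n_dry = int(0.25 * fs)
dry = np.sin(2 * np.pi * 440 * np.arange(n_dry) / fs)

# Wet signal: dry signal convolved with the impulse response, then peak-normalized.
wet = fftconvolve(dry, ir, mode="full")
wet /= np.max(np.abs(wet))

print(len(dry), len(ir), len(wet))
```

The output is longer than the dry input by the length of the impulse response minus one sample, which is the reverberant tail.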

Economics

In economics, and especially in contemporary macroeconomic modeling, impulse response functions are used to describe how the economy reacts over time to exogenous impulses, which economists usually call shocks, and are often modeled in the context of a vector autoregression. Impulses that are often treated as exogenous from a macroeconomic point of view include changes in government spending, tax rates, and other fiscal policy parameters; changes in the monetary base or other monetary policy parameters; changes in productivity or other technological parameters; and changes in preferences, such as the degree of impatience. Impulse response functions describe the reaction of endogenous macroeconomic variables such as output, consumption, investment, and employment at the time of the shock and over subsequent points in time.[6][7] Recently, asymmetric impulse response functions have been suggested in the literature that separate the impact of a positive shock from a negative one.[8]
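For a first-order vector autoregression y_t = A y_{t-1} + e_t, the response k periods after a unit shock is given by the matrix power A^k. The sketch below assumes a two-variable model with an invented coefficient matrix `A` and computes non-orthogonalized responses; applied work often orthogonalizes the shocks first, which is not shown here.

```python
import numpy as np

# Hypothetical VAR(1) coefficient matrix (eigenvalues inside the unit circle).
A = np.array([[0.5, 0.1],
              [0.2, 0.3]])

horizons = 10
irf = np.empty((horizons + 1, 2, 2))
irf[0] = np.eye(2)                  # impact period: the shock itself
for k in range(1, horizons + 1):
    irf[k] = A @ irf[k - 1]         # propagate one period: irf[k] = A^k

# irf[k, i, j] is the response of variable i, k periods after a unit shock to variable j.
print(irf[:4, 0, 1])
```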

See also

- Convolution reverb

- Duhamel's principle

- Dynamic stochastic general equilibrium

- Frequency response

- Gibbs phenomenon

- Küssner effect

- Linear response function

- LTI system theory

- Point spread function

- Pre-echo

- Step response

- System analysis

- Time constant

- Transient (oscillation)

- Transient response

- Variation of parameters

References

- F. Alton Everest (2000). Master Handbook of Acoustics (Fourth ed.). McGraw-Hill Professional. ISBN 0-07-136097-2.

- Stan, Guy-Bart (April 2002). "Comparison of Different Impulse Response Measurement Techniques". Journal of the Audio Engineering Society. 50 (4): 249–262. Retrieved 2 May 2025.

- Mäkivirta, Aki; Liski, Juho; Välimäki, Vesa (2018). "Modeling and Delay-Equalizing Loudspeaker Responses" (PDF). Journal of the Audio Engineering Society. 66 (11): 922–934. doi:10.17743/jaes.2018.0053.

- "Monitor". 9 April 1976. Retrieved 9 April 2018 – via Google Books.

- http://www.acoustics.hut.fi/projects/poririrs/ the Concert Hall Impulse Responses from Pori, Finland

- Lütkepohl, Helmut (2008). "Impulse response function". The New Palgrave Dictionary of Economics (2nd ed.).

- Hamilton, James D. (1994). "Difference Equations". Time Series Analysis. Princeton University Press. p. 5. ISBN 0-691-04289-6.

- Hatemi-J, A. (2014). "Asymmetric generalized impulse responses with an application in finance". Economic Modelling. 36: 18–2. doi:10.1016/j.econmod.2013.09.014.

External links

Media related to Impulse response at Wikimedia Commons

Grokipedia

Impulse response

Mathematical Foundations

Dirac Delta Function

The Dirac delta function, denoted as $ \delta(t) $, is a generalized function or distribution in mathematical analysis, defined such that it is zero everywhere except at $ t = 0 $, where it is infinite in a manner that its integral over the entire real line equals unity: $ \int_{-\infty}^{\infty} \delta(t) \, dt = 1 $. This property ensures it acts as a unit measure concentrated at the origin. As a distribution, $ \delta $ is not a conventional function but is rigorously defined through its action on test functions, where for any smooth function $ \phi $ with compact support, the pairing is given by $ \langle \delta, \phi \rangle = \phi(0) $.[7]

A key attribute of the Dirac delta is its sifting property, which states that for a continuous function $ f $ and any real $ a $, $ \int_{-\infty}^{\infty} f(t) \, \delta(t - a) \, dt = f(a) $. This property allows $ \delta $ to "pick out" the value of a function at a specific point when integrated against it. Furthermore, the Dirac delta serves as the identity element under convolution: for any integrable function $ f $, the convolution $ (f * \delta)(t) = f(t) $, preserving the original function unchanged.

The concept was introduced by physicist Paul Dirac in his 1927 paper on quantum dynamics, where it provided a mathematical tool to handle point-like interactions and continuous spectra in quantum mechanics.[8] Dirac's formulation treated $ \delta $ heuristically as an idealized spike, which later found rigorous justification through Laurent Schwartz's theory of distributions in 1945, but its initial use facilitated breakthroughs in describing quantum states.[9]

In signal processing, the Dirac delta was adapted as the idealized unit impulse input for analyzing linear time-invariant systems, enabling the characterization of system behavior through response to this singular excitation.[10] Despite its mathematical elegance, the Dirac delta cannot be realized in physical systems due to constraints like finite bandwidth and energy limits, and is instead approximated by narrow pulses with unit area, such as Gaussian or rectangular functions with decreasing width.[10] These approximations converge to the true delta in the distributional sense as the pulse duration approaches zero while maintaining the integral value of 1.

Linear Time-Invariant Systems

Linear time-invariant (LTI) systems represent a fundamental class in signal processing and control theory, where the system's behavior is fully determined by its response to a unit impulse input. These systems adhere to both linearity and time-invariance properties, enabling powerful analytical tools like convolution and frequency-domain representations.[11]

Linearity in a system implies adherence to the superposition principle, meaning that the output to a scaled and summed set of inputs equals the scaled and summed outputs to each individual input. Formally, for inputs $ x_1(t) $ and $ x_2(t) $, and scalars $ a $ and $ b $, the system response satisfies $ \mathcal{S}\{a x_1(t) + b x_2(t)\} = a \, \mathcal{S}\{x_1(t)\} + b \, \mathcal{S}\{x_2(t)\} $. This property ensures that complex signals can be decomposed into simpler components for analysis.[3]

Time-invariance requires that a temporal shift in the input produces an identical shift in the output, without altering the system's inherent dynamics. If the output to input $ x(t) $ is $ y(t) $, then the output to $ x(t - t_0) $ is $ y(t - t_0) $ for any delay $ t_0 $. This axiom holds the system's parameters constant over time, a key assumption in many engineering applications.[12]

The impulse response fully characterizes LTI systems because any continuous-time input can be expressed as a superposition of scaled and shifted Dirac delta functions: $ x(t) = \int_{-\infty}^{\infty} x(\tau) \, \delta(t - \tau) \, d\tau $. By linearity and time-invariance, the corresponding output is the superposition of the system's responses to each delta input, as introduced in the prior discussion of the Dirac delta function.[2]

In discrete-time settings, LTI systems are analogously described by linear constant-coefficient difference equations, such as $ \sum_{k=0}^{N} a_k \, y[n - k] = \sum_{k=0}^{M} b_k \, x[n - k] $, with analysis facilitated by the z-transform, which converts these equations into algebraic forms in the z-domain.[13]

A prototypical example is the series RC circuit, where the capacitor voltage $ v_C(t) $ responds linearly to the input voltage source $ v_{in}(t) $ and invariantly to time shifts, governed by the differential equation $ RC \, \frac{dv_C(t)}{dt} + v_C(t) = v_{in}(t) $.
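The impulse response of the RC example can be derived in a few lines. The derivation below assumes the standard series RC model $ RC \, dv_C/dt + v_C = v_{in} $ with the capacitor voltage as output and zero initial charge; the symbols $ v_C $, $ v_{in} $, and $ u(t) $ (the unit step) are notation chosen for this sketch.

```latex
\begin{align*}
% Drive the assumed RC model with a unit impulse from rest.
RC \,\frac{dv_C(t)}{dt} + v_C(t) &= \delta(t), \qquad v_C(0^-) = 0 \\
% Integrate over [0^-, 0^+]: only the derivative term picks up the impulse.
RC \,\bigl[v_C(0^+) - v_C(0^-)\bigr] &= 1
  \;\Longrightarrow\; v_C(0^+) = \frac{1}{RC} \\
% For t > 0 the input is zero, so the voltage decays from v_C(0^+).
h(t) = v_C(t) &= \frac{1}{RC}\, e^{-t/(RC)}\, u(t)
\end{align*}
```

The result has units of inverse time and integrates to one over $ t \ge 0 $, so the corresponding step response settles at the input level.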
Ideal filters, such as a low-pass filter that attenuates frequencies above a cutoff while preserving lower ones, also exemplify LTI behavior, maintaining phase linearity across passed frequencies.[14][15]

Definition of Impulse Response

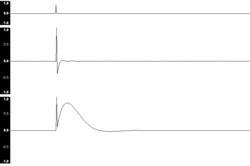

In the context of linear time-invariant (LTI) systems, the impulse response is formally defined as the output produced by the system when subjected to a unit impulse input under zero initial conditions. For a continuous-time LTI system, this is expressed as $ h(t) = \mathcal{S}\{\delta(t)\} $, where $ \mathcal{S} $ denotes the system operator and $ \delta(t) $ is the Dirac delta function.[2] This response encapsulates how the system reacts instantaneously and over time to an idealized instantaneous input. For discrete-time LTI systems, the analogous definition applies using the unit impulse sequence, yielding $ h[n] = \mathcal{S}\{\delta[n]\} $, where $ \delta[n] $ is the Kronecker delta.[3] In both cases, the impulse response serves as a complete characterization of the system's behavior, fully capturing its memory and dynamic properties, as any arbitrary input can be decomposed into scaled and shifted impulses to which the system responds linearly and invariantly.[16]

Regarding units and scaling, the impulse response $ h(t) $ in continuous time carries dimensions of output quantity per unit input impulse, reflecting the Dirac delta's integral of unity; for instance, if the input and output are both in volts, $ h(t) $ has units of inverse time (e.g., $ \text{s}^{-1} $) or equivalently volts per (volt-second).[17] In discrete time, $ h[n] $ typically shares the units of the output per unit input, as the Kronecker delta is dimensionless.

Visually, the impulse response of stable LTI systems often takes characteristic shapes, such as an initial peak followed by exponential decay to zero, illustrating the system's tendency to return to equilibrium after perturbation; for example, a first-order stable system exhibits $ h(t) = a e^{-bt} u(t) $ for positive constants $ a $ and $ b $, where $ u(t) $ is the unit step function.[18]

Properties and Derivations

Convolution Representation

The output $ y(t) $ of a continuous-time linear time-invariant (LTI) system, characterized by its impulse response $ h(t) $, to an arbitrary input $ x(t) $ is represented by the convolution integral

$$ y(t) = \int_{-\infty}^{\infty} x(\tau) \, h(t - \tau) \, d\tau $$

This form arises because the convolution operation captures the system's response to scaled and shifted impulses that compose the input signal. An equivalent expression interchanges the roles of the input and impulse response:

$$ y(t) = \int_{-\infty}^{\infty} h(\tau) \, x(t - \tau) \, d\tau $$

Both integrals yield the same result due to the commutative property of convolution, which holds for LTI systems such that $ h * x = x * h $.[16]

This convolution representation derives from the core properties of LTI systems. Any continuous-time input $ x(t) $ can be decomposed as a continuous superposition of Dirac delta functions: $ x(t) = \int_{-\infty}^{\infty} x(\tau) \, \delta(t - \tau) \, d\tau $.[20] By linearity, the system's output to this decomposition is the integral of the scaled responses: $ y(t) = \int_{-\infty}^{\infty} x(\tau) \, [\text{response to } \delta(t - \tau)] \, d\tau $. Time-invariance ensures that the response to $ \delta(t - \tau) $ is the shifted impulse response $ h(t - \tau) $, yielding the convolution integral.[19] This proof sketch demonstrates how the impulse response fully determines the system's behavior for any input via superposition.

In the discrete-time domain, the analogous representation is the convolution sum for the output $ y[n] $ of an LTI system with impulse response $ h[n] $ and input $ x[n] $:

$$ y[n] = \sum_{k=-\infty}^{\infty} h[k] \, x[n - k] $$

The derivation follows similarly by expressing $ x[n] $ as a sum of scaled and shifted unit impulses and applying linearity and time-invariance. Commutativity also applies here, allowing $ y[n] = \sum_{k=-\infty}^{\infty} x[k] \, h[n - k] $.[16]

A practical illustration is the step response $ s(t) $ of an LTI system to the unit step input $ u(t) $, which equals the time integral of the impulse response: $ s(t) = \int_{-\infty}^{t} h(\tau) \, d\tau $.[2] For causal systems, where $ h(t) = 0 $ for $ t < 0 $, this simplifies to $ s(t) = \int_{0}^{t} h(\tau) \, d\tau $, showing how the cumulative effect of the impulse response builds the response to a sudden onset.[2] In discrete time, the step response is the cumulative sum $ s[n] = \sum_{k=-\infty}^{n} h[k] $.[20]
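The discrete-time statements above can be checked numerically. The sketch below writes the convolution sum out as explicit loops for finite-length causal sequences, then confirms commutativity and the cumulative-sum form of the step response. The sequences `h` and `x` and the helper `convolve_sum` are illustrative choices, not part of the original text.

```python
import numpy as np

def convolve_sum(x, h):
    """Evaluate y[n] = sum_k h[k] * x[n - k] for finite causal sequences."""
    y = np.zeros(len(x) + len(h) - 1)
    for n in range(len(y)):
        for k in range(len(h)):
            if 0 <= n - k < len(x):
                y[n] += h[k] * x[n - k]
    return y

h = 0.5 ** np.arange(8)                  # truncated h[n] = 0.5^n u[n] (illustrative)
x = np.array([1.0, 2.0, 0.0, -1.0])      # arbitrary input

y = convolve_sum(x, h)
y_swapped = convolve_sum(h, x)           # roles of x and h interchanged

# Step response: convolve a unit step with h and keep the first len(h) samples,
# then compare with the running sum of h.
u = np.ones(len(h))
s_conv = convolve_sum(u, h)[: len(h)]
s_cumsum = np.cumsum(h)

print(np.allclose(y, y_swapped), np.allclose(s_conv, s_cumsum))
```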

Transfer Function Equivalence

The transfer function of a linear time-invariant (LTI) system in the frequency domain is directly related to its impulse response in the time domain through the Fourier transform. Specifically, the frequency response $ H(\omega) $ is obtained as the Fourier transform of the impulse response $ h(t) $:

$$ H(\omega) = \int_{-\infty}^{\infty} h(t) \, e^{-j \omega t} \, dt $$

Causality and Stability Implications

In linear time-invariant (LTI) systems, causality requires that the output at any time depends only on current and past inputs, which translates to the impulse response $ h(t) = 0 $ for all $ t < 0 $ in the continuous-time case.[26] Equivalently, in the discrete-time domain, the impulse response $ h[n] = 0 $ for all $ n < 0 $.[27] This condition ensures that the system's response does not anticipate future inputs. In the Laplace domain, the impulse response of a causal system is right-sided, so the region of convergence of its Laplace transform is a right half-plane; the region includes the imaginary axis only if the system is also stable.[3]

Bounded-input bounded-output (BIBO) stability, a key measure for practical systems, holds if every bounded input produces a bounded output. For LTI systems, this is equivalent to the impulse response being absolutely integrable: $ \int_{-\infty}^{\infty} |h(t)| \, dt < \infty $ in continuous time.[28] In the discrete-time case, the condition is $ \sum_{n=-\infty}^{\infty} |h[n]| < \infty $.[29] These criteria ensure that the convolution integral (or sum) with any bounded input remains finite.
For causal systems with rational transfer functions $ H(s) $, stability is closely tied to the pole locations: the system is asymptotically stable if all poles have negative real parts, lying in the left half of the s-plane.[30] This pole condition implies that the impulse response decays exponentially, satisfying the BIBO integrability requirement.[31]

Non-causal systems can arise in theoretical designs, such as ideal low-pass filters, where the impulse response is a sinc function $ h(t) = \frac{\sin(\omega_c t)}{\pi t} $ extending to $ t < 0 $.[25] Such responses violate causality because they require knowledge of future inputs, rendering them unrealizable in real-time applications, though they serve as benchmarks for filter design.[32]

The Paley-Wiener criterion gives a frequency-domain condition for realizability: a square-integrable magnitude response $ |H(j\omega)| $ can belong to a causal system if and only if $ \int_{-\infty}^{\infty} \frac{|\ln |H(j\omega)||}{1 + \omega^2} \, d\omega < \infty $.[33] The condition rules out magnitude responses that vanish over a band of frequencies, as the ideal low-pass filter does, and so links time-domain constraints directly to frequency behavior.[34]

Measurement and Computation

Experimental Techniques

In physical systems, the ideal Dirac delta function input cannot be realized exactly due to practical limitations, so experimental measurements approximate the impulse response $ h(t) $ using short-duration pulses that closely mimic its properties. For electronic circuits, an electrical spike generated by a function generator serves as the input, with the system's output captured directly on an oscilloscope to observe the transient response.[35] In acoustic environments, a brief click or pulse from a loudspeaker acts as the excitation, recorded via a microphone to capture the propagating response through the medium.[36] These approximations work best when the pulse width is much shorter than the system's characteristic time constants, ensuring the output closely represents the true impulse response.[37]

To improve signal-to-noise ratio (SNR) in noisy real-world settings, more advanced excitation signals replace simple pulses. Maximum length sequences (MLS), generated as pseudo-random binary signals using shift registers, provide flat spectral energy distribution and allow extraction of $ h(t) $ through cross-correlation of the output with the input, yielding up to 20-30 dB SNR gains over direct pulsing in low-signal conditions.[38] Exponentially swept sine waves, whose frequency rises exponentially with time, offer robust performance against ambient noise by concentrating energy at lower frequencies where SNR is often poorer; deconvolution is performed by convolving the output with an inverse filter, typically the time-reversed sweep with an amplitude envelope that compensates for the sweep's non-flat spectrum.[39] These methods are particularly effective in environments with background interference, as they enable averaging multiple measurements to suppress uncorrelated noise.[40]

Typical hardware setups for these measurements include signal generators or digital-to-analog converters (DACs) to produce the excitation, amplifiers to drive transducers, and acquisition devices for recording. In electronics, oscilloscopes with high bandwidth (e.g., >100 MHz) and pulse generators facilitate direct transient capture, often paired with probes for minimal loading effects.[41] For acoustics, omnidirectional or array microphones (such as cardioid condensers in tetrahedral configurations) paired with calibrated loudspeakers capture spatial responses, with preamplifiers ensuring low-noise amplification before digitization.[36] Synchronization between input and output channels is critical, typically achieved via shared clocks or reference signals to align recordings accurately.

Deconvolution is essential to isolate $ h(t) $ from measured input-output pairs, mathematically inverting the convolution operation $ y(t) = x(t) * h(t) $ where $ x(t) $ is the known excitation and $ y(t) $ the observed response. In the time domain, this involves iterative or correlation-based techniques; frequency-domain approaches divide the Fourier transforms, $ Y(\omega)/X(\omega) $, but require regularization to handle division by small values.[37] The resulting $ h(t) $ provides the system's characterization, validated by checking energy conservation or matching known benchmarks.

Several challenges arise in these experiments, including environmental noise that degrades SNR and necessitates longer averaging times or higher input levels. Nonlinearities in the system or transducers introduce harmonic distortions, which show up as spurious artifacts spread across an MLS-derived response, whereas exponential swept sines separate them into distinct time windows for analysis.[40] Finite bandwidth of hardware limits the resolvable frequency range, potentially truncating high-frequency details in $ h(t) $, and aliasing occurs if analog signals are undersampled during digitization, folding spurious components into the baseband.[39] These issues demand careful calibration, such as using anti-aliasing filters and verifying linearity through distortion metrics below -40 dB.[37]

Numerical Methods in DSP

In digital signal processing (DSP), numerical methods enable the computation and analysis of impulse responses for discrete-time systems, often leveraging frequency-domain transformations and optimization techniques to handle finite data lengths and computational constraints. These approaches are essential for simulating linear time-invariant (LTI) systems where the output is obtained via convolution of the input with the impulse response $ h[n] $.[42]

One fundamental technique involves deriving the discrete-time impulse response $ h[n] $ from the frequency response $ H(\omega) $ using the inverse discrete Fourier transform (IDFT), efficiently implemented via the inverse fast Fourier transform (IFFT). The IFFT computes $ h[n] = \frac{1}{N} \sum_{k=0}^{N-1} H(k) e^{j 2\pi kn / N} $, where $ N $ is the transform length, transforming measured or modeled frequency data into the time domain. To mitigate artifacts from finite truncation, such as the Gibbs phenomenon or non-causal ringing, a window such as the Hann or Blackman window is commonly applied, either to the truncated $ h[n] $ or as a taper on the band edges of $ H(\omega) $ before the IFFT; this smooths the abrupt cutoff at the cost of a wider transition band. This method is particularly useful in filter design and system characterization, where frequency measurements are more accessible than direct time-domain impulses.[43]

System identification techniques estimate $ h[n] $ from input-output data pairs, treating the unknown system as a black box. Least-squares fitting minimizes the error between observed outputs and those predicted by a finite impulse response (FIR) model, solving $ \hat{h} = (X^T X)^{-1} X^T y $ where $ X $ is the input convolution matrix and $ y $ the output vector, providing an unbiased estimate under white noise assumptions.
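The least-squares FIR estimate can be sketched as follows. The system `h_true`, the noise level, and the record length are invented for the demonstration, and the code calls `numpy.linalg.lstsq` rather than forming $ (X^T X)^{-1} $ explicitly, which solves the same least-squares problem in a numerically safer way.

```python
import numpy as np
from scipy.linalg import toeplitz

rng = np.random.default_rng(0)

h_true = np.array([0.8, -0.4, 0.2, 0.1])     # hypothetical FIR system to identify
M = len(h_true)

x = rng.standard_normal(500)                 # broadband excitation
noise = 0.01 * rng.standard_normal(len(x))   # small measurement noise
y = np.convolve(x, h_true)[: len(x)] + noise

# Convolution matrix with X[n, k] = x[n - k] (zero for n < k), so that y ~ X @ h.
X = toeplitz(x, np.r_[x[0], np.zeros(M - 1)])

# Least-squares estimate of the impulse response.
h_hat, *_ = np.linalg.lstsq(X, y, rcond=None)

print(np.round(h_hat, 3))
```

The model order `M` is assumed known here; in practice it has to be chosen, and too large a value lets the estimate fit noise.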
For infinite impulse response (IIR) systems, autoregressive moving average (ARMA) models parameterize $ h[n] $ compactly as $ y[n] = \sum_{i=1}^p a_i y[n-i] + \sum_{j=0}^q b_j u[n-j] + e[n] $, with coefficients estimated via iterative least-squares or prediction error minimization to capture both transient and steady-state behaviors. These methods excel in scenarios with noisy measurements, offering robustness through regularization to avoid overfitting.[44][45]

Simulation tools facilitate the generation and analysis of impulse responses by convolving test signals with system models. In MATLAB and Simulink, the impulse function or Discrete Impulse block applies a unit impulse to LTI models, computing responses via state-space or transfer function simulations; for example, impulse(sys) yields $ h[n] $ for discrete systems up to a specified length. Similarly, Python's SciPy library uses scipy.signal.convolve to perform direct convolution of an impulse with filter coefficients, supporting modes like 'full' for complete $ h[n] $ output, enabling rapid prototyping of DSP algorithms. These environments handle vectorized operations efficiently, allowing visualization and parameter sweeps without custom coding.[46][47]
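A minimal SciPy sketch of the approach described above: a unit impulse is convolved with FIR coefficients using `scipy.signal.convolve`, and the same impulse is passed through a recursive filter with `scipy.signal.lfilter` to obtain the leading samples of an IIR response. The coefficient values are arbitrary examples.

```python
import numpy as np
from scipy import signal

delta = np.zeros(8)
delta[0] = 1.0                                   # discrete unit impulse

# FIR case: convolving the impulse with the coefficients returns them,
# followed by zeros (output length 8 + 3 - 1 = 10 in 'full' mode).
b = np.array([0.25, 0.5, 0.25])                  # illustrative FIR coefficients
h_fir = signal.convolve(delta, b, mode="full")

# IIR case: y[n] = 0.5 * y[n-1] + x[n], whose impulse response is 0.5**n.
h_iir = signal.lfilter([1.0], [1.0, -0.5], delta)

print(h_fir)
print(h_iir)
```

For the IIR filter only the first eight samples are produced, since the true response never terminates.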

Discrete approximations of continuous-time impulse responses $ h(t) $ to $ h[n] $ must adhere to the sampling theorem to prevent aliasing, requiring a sampling frequency $ f_s > 2 f_{\max} $ where $ f_{\max} $ is the highest frequency present in $ h(t) $, so that the spectral replicas centered at multiples of $ f_s $ do not overlap. Undersampling folds components above $ f_s/2 $ back into the baseband, where they appear as spurious content in $ h[n] $ and corrupt subsequent convolutions; this is prevented by low-pass filtering $ h(t) $ with a cutoff at or below $ f_s/2 $ before sampling. With adequate sampling, the discretized $ h[n] $ retains the stability and frequency selectivity of the original system in digital implementations.[48]

For efficient computation of long convolutions involving extended impulse responses, the overlap-add method partitions the input into non-overlapping blocks, zero-pads each block to the FFT length, performs FFT-based multiplication with $ H(\omega) $, and adds the overlapping tails of adjacent IFFT outputs. With block size $ L $ and FFT length $ N = L + M - 1 $ (where $ M $ is the impulse response length), the cost per block drops from $ O(LM) $ for direct convolution to $ O(N \log N) $, which makes the method well suited to real-time DSP applications like audio processing. This segmented approach maintains linear convolution equivalence while minimizing memory usage.[49]
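A compact overlap-add sketch follows, written with NumPy FFTs and checked against direct convolution. The function name `overlap_add`, the default block length, and the test signals are choices made for this example.

```python
import numpy as np

def overlap_add(x, h, block_len=64):
    """Linear convolution of x with h using FFT-based overlap-add."""
    M = len(h)
    n_fft = block_len + M - 1                 # long enough to avoid circular wrap-around
    H = np.fft.rfft(h, n_fft)                 # spectrum of h, computed once
    y = np.zeros(len(x) + M - 1)
    for start in range(0, len(x), block_len):
        block = x[start:start + block_len]    # non-overlapping input block
        seg = np.fft.irfft(np.fft.rfft(block, n_fft) * H, n_fft)
        stop = min(start + n_fft, len(y))
        y[start:stop] += seg[: stop - start]  # tails overlap with the next block's output
    return y

rng = np.random.default_rng(0)
x = rng.standard_normal(1000)
h = rng.standard_normal(33)

y_ola = overlap_add(x, h)
print(np.allclose(y_ola, np.convolve(x, h)))
```

The FFT length here is not rounded up to a power of two; doing so is a common speed optimization and does not change the result. SciPy also ships a ready-made implementation as `scipy.signal.oaconvolve`.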