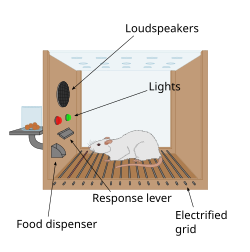

Operant conditioning chamber

An operant conditioning chamber (also known as a Skinner box) is a laboratory apparatus used to study animal behavior. The operant conditioning chamber was created by B. F. Skinner while he was a graduate student at Harvard University. The chamber can be used to study both operant conditioning and classical conditioning.[1][2]

Skinner created the operant conditioning chamber as a variation of the puzzle box originally created by Edward Thorndike.[3] While Skinner's early studies were done using rats, he later moved on to study pigeons.[4][5] The operant conditioning chamber may be used to observe or manipulate behavior. An animal is placed in the box, where it must learn to activate levers or respond to light or sound stimuli to obtain a reward. The reward may be food or the removal of noxious stimuli such as a loud alarm. The chamber is used to test specific hypotheses in a controlled setting.

Name

Skinner was noted to have expressed his distaste for becoming an eponym.[6] It is believed that psychologist Clark Hull and his Yale students coined the expression "Skinner box". Skinner said that he did not use the term himself; he went so far as to ask Howard Hunt to use "lever box" instead of "Skinner box" in a published document.[7]

History

In 1898, the American psychologist Edward Thorndike proposed the 'law of effect', which formed the basis of operant conditioning.[8] Thorndike conducted experiments to discover how cats learn new behaviors. His work involved monitoring cats as they attempted to escape from puzzle boxes. The puzzle box trapped the animals until they moved a lever or performed an action that triggered their release.[9] Thorndike ran several trials and recorded the time it took the cats to perform the actions necessary to escape. He discovered that the cats seemed to learn through trial and error rather than through insightful inspection of their environment. The animals learned that their actions led to an effect, and the type of effect influenced whether the behavior would be repeated. Thorndike's 'law of effect' contained the core elements of what would become known as operant conditioning.

B. F. Skinner expanded upon Thorndike's work.[9] Skinner theorized that if a behavior is followed by a reward, that behavior is more likely to be repeated, and added that if it is followed by some sort of punishment, it is less likely to be repeated. He introduced the word reinforcement into Thorndike's law of effect.[10] Through his experiments, Skinner discovered the law of operant learning, which included extinction, punishment and generalization.[10]

Skinner designed the operant conditioning chamber to allow for specific hypothesis testing and behavioral observation. He wanted a way to observe animals in a more controlled setting, as observation of behavior in nature can be unpredictable.[2]

Purpose

An operant conditioning chamber allows researchers to study animal behavior and response to conditioning. They do this by teaching an animal to perform certain actions (such as pressing a lever) in response to specific stimuli. When the correct action is performed, the animal receives positive reinforcement in the form of food or another reward. In some cases, the chamber may deliver positive punishment to discourage incorrect responses. For example, researchers have tested certain invertebrates' reaction to operant conditioning using a "heat box".[11] The box has two walls used for manipulation; one wall can undergo temperature change while the other cannot. As soon as the invertebrate crosses over to the side that can undergo temperature change, the researcher increases the temperature. Eventually, the invertebrate is conditioned to stay on the side that does not undergo a temperature change. After conditioning, even when the temperature is turned to its lowest setting, the invertebrate will avoid that side of the box.[11]
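The heat-box contingency described above can be illustrated with a minimal sketch. It assumes a one-dimensional track in which positions greater than a midline count as the punished side; the function name `run_heat_box`, the `midline` parameter, and the sample positions are all hypothetical and are not taken from the cited study.

```python
def run_heat_box(positions, midline=0.0):
    """Return, for each recorded position, whether the heat is switched on.

    Assumption: the animal's position is a single x coordinate, and the
    punished side is everything to the right of `midline`.
    """
    heater_log = []
    for x in positions:
        # Heat is applied only while the animal is on the punished side.
        heater_log.append(x > midline)
    return heater_log


# Hypothetical track: the animal crosses to the punished side, then retreats.
track = [-0.4, -0.1, 0.2, 0.3, -0.2, -0.5]
log = run_heat_box(track)
print(log)  # [False, False, True, True, False, False]

# Fraction of recorded samples spent on the punished side (2 of 6 here).
print(sum(log) / len(log))
```

In a sketch like this, a falling fraction of samples on the punished side across sessions would be one simple way to summarize avoidance.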

Skinner's pigeon studies involved a series of levers. When the lever was pressed, the pigeon would receive a food reward.[5] The setup was later made more complex as researchers studied animal learning behaviors. A pigeon would be placed in the conditioning chamber and another would be placed in an adjacent box separated by a plexiglass wall. The pigeon in the chamber would learn to press the lever to receive food as the other pigeon watched. The pigeons would then be switched, and researchers would observe them for signs of cultural learning.

Structure

The outside shell of an operant conditioning chamber is a box large enough to easily accommodate the animal being used as a subject. Commonly used animals include rodents (usually lab rats), pigeons, and primates. The chamber is often sound-proof and light-proof to avoid distracting stimuli.

Operant conditioning chambers have at least one response mechanism that can automatically detect the occurrence of a behavioral response or action (e.g., pecking, pressing, or pushing). This may be a lever or a series of lights to which the animal responds in the presence of a stimulus. Typical mechanisms for primates and rats are response levers; if the subject presses the lever, the opposite end closes a switch that is monitored by a computer or other programmed device.[12] Typical mechanisms for pigeons and other birds are response keys with a switch that closes if the bird pecks at the key with sufficient force.[5] The other minimal requirement of an operant conditioning chamber is a means of delivering a primary reinforcer such as a food reward.
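The monitoring logic of such a chamber can be sketched in a few lines. This is a toy model only, assuming one lever, one feeder, and a single cue light; the `Chamber` class and its fields are hypothetical names, not the interface of any real laboratory control system.

```python
from dataclasses import dataclass, field


@dataclass
class Chamber:
    """Toy model of a chamber with one response lever and one feeder."""
    stimulus_on: bool = False  # assumed cue, e.g. a light
    pellets_delivered: int = 0
    event_log: list = field(default_factory=list)

    def lever_press(self, t: float) -> bool:
        """Handle a switch closure at time t; return True if food is delivered."""
        # Assumed rule: a press is reinforced only while the stimulus is present.
        reinforced = self.stimulus_on
        if reinforced:
            self.pellets_delivered += 1
        # Every press is recorded, whether or not it was reinforced.
        self.event_log.append((t, "press", reinforced))
        return reinforced


chamber = Chamber()
chamber.lever_press(1.0)    # light off: recorded but not reinforced
chamber.stimulus_on = True
chamber.lever_press(2.5)    # light on: recorded and reinforced
print(chamber.pellets_delivered)  # 1
print(chamber.event_log)
```

Recording every response with a timestamp, rather than only the reinforced ones, reflects the requirement that the response mechanism detect each occurrence of the behavior automatically.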

A simple configuration, such as one response mechanism and one feeder, may be used to investigate a variety of psychological phenomena. Modern operant conditioning chambers may have multiple mechanisms, such as several response levers, two or more feeders, and a variety of devices capable of generating different stimuli including lights, sounds, music, figures, and drawings. Some configurations use an LCD panel to generate a variety of visual stimuli by computer, or a set of LED lights to display patterns that the subject is expected to replicate.[13]

Some operant conditioning chambers also have electrified nets or floors that deliver shocks to the animals as positive punishment, or lights of different colors that signal when food is available as positive reinforcement.[14]

Research impact

Operant conditioning chambers have become common in a variety of research disciplines, especially animal learning. The chamber's design allows for easy monitoring of the animal and provides a space in which to manipulate certain behaviors. This controlled environment may allow for research and experimentation that cannot be performed in the field.

There are a variety of applications for operant conditioning. For instance, a child's behavior is shaped by the compliments, comments, approval, and disapproval it receives.[15] An important feature of operant conditioning is its ability to explain learning in real-life situations. From an early age, parents nurture their children's behavior by offering reward and praise after an achievement (such as crawling or taking a first step), which reinforces that behavior. When a child misbehaves, punishment in the form of verbal discouragement or the removal of privileges is used to discourage the child from repeating the action.

Skinner's studies on animals and their behavior laid the framework needed for similar studies on human subjects. Based on his work, developmental psychologists were able to study the effect of positive and negative reinforcement. Skinner found that the environment influences behavior and that when the environment is manipulated, behavior changes. From this, developmental psychologists proposed theories on operant learning in children. That research was applied to education and the treatment of illness in young children.[10] Skinner's theory of operant conditioning played a key role in helping psychologists understand how behavior is learned. It explains why reinforcement can be used so effectively in the learning process, and how schedules of reinforcement can affect the outcome of conditioning.
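As an illustration of what a schedule of reinforcement is, the following sketch contrasts two standard schedule types that the text above does not name: a fixed-ratio schedule, which reinforces every n-th response, and a variable-ratio schedule, approximated here by a constant per-response probability (sometimes called a random-ratio schedule). The function names and parameters are hypothetical.

```python
import random


def fixed_ratio(n):
    """Return a response handler that reinforces every n-th response."""
    count = 0

    def respond():
        nonlocal count
        count += 1
        if count == n:
            count = 0
            return True
        return False

    return respond


def variable_ratio(mean_n, rng):
    """Return a handler that reinforces each response with probability 1/mean_n.

    Rewards then arrive after an unpredictable number of responses that
    averages mean_n (a simplifying assumption, not a lab protocol).
    """
    def respond():
        return rng.random() < 1.0 / mean_n

    return respond


fr5 = fixed_ratio(5)
fr_outcomes = [fr5() for _ in range(20)]
print(sum(fr_outcomes))  # 4 reinforcers in 20 responses

vr5 = variable_ratio(5, random.Random(0))  # seeded for repeatability
vr_outcomes = [vr5() for _ in range(1000)]
print(sum(vr_outcomes))  # close to 200 on average; exact count depends on the seed
```

Under the fixed-ratio schedule the subject could in principle predict which response will pay off, whereas under the variable-ratio approximation each response has the same chance of being reinforced.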

Commercial applications

Slot machines, online games, and dating apps are examples where sophisticated operant schedules of reinforcement are used to reinforce certain behaviors.[16][17][18][19]

Gamification, the technique of using game design elements in non-game contexts, has also been described as using operant conditioning and other behaviorist techniques to encourage desired user behaviors.[20]

References

- Carlson NR (2009). Psychology-the science of behavior. U.S: Pearson Education Canada; 4th edition. p. 207. ISBN 978-0-205-64524-4.

- Krebs JR (1983). "Animal behaviour. From Skinner box to the field". Nature. 304 (5922): 117. Bibcode:1983Natur.304..117K. doi:10.1038/304117a0. PMID 6866102. S2CID 5360836.

- Schacter DL, Gilbert DT, Wegner DM, Nock MK (January 2, 2014). "B. F. Skinner: The Role of Reinforcement and Punishment". Psychology (3rd ed.). Macmillan. pp. 278–80. ISBN 978-1-4641-5528-4.

- Kazdin A (2000). Encyclopedia of Psychology, Vol. 5. American Psychological Association.

- Sakagami T, Lattal KA (May 2016). "The Other Shoe: An Early Operant Conditioning Chamber for Pigeons". The Behavior Analyst. 39 (1): 25–39. doi:10.1007/s40614-016-0055-8. PMC 4883506. PMID 27606188.

- Skinner BF (1959). Cumulative record (1999 ed.). Cambridge, MA: B.F. Skinner Foundation. p. 620.

- Skinner BF (1983). A Matter of Consequences. New York, NY: Alfred A. Knopf, Inc. pp. 116, 164.

- Gray P (2007). Psychology. New York: Worth Publishers. pp. 108–109.

- "Edward Thorndike – Law of Effect | Simply Psychology". www.simplypsychology.org. Retrieved November 14, 2021.

- Schlinger H (January 17, 2021). "The Impact of B. F. Skinner's Science of Operant Learning on Early Childhood Research, Theory, Treatment, and Care". Early Child Development and Care. 191 (7–8): 1089–1106. doi:10.1080/03004430.2020.1855155. S2CID 234206521 – via Routledge.

- Brembs B (December 2003). "Operant conditioning in invertebrates" (PDF). Current Opinion in Neurobiology. 13 (6): 710–717. doi:10.1016/j.conb.2003.10.002. PMID 14662373. S2CID 2385291.

- Fernández-Lamo I, Delgado-García JM, Gruart A (March 2018). "When and Where Learning is Taking Place: Multisynaptic Changes in Strength During Different Behaviors Related to the Acquisition of an Operant Conditioning Task by Behaving Rats". Cerebral Cortex. 28 (3): 1011–1023. doi:10.1093/cercor/bhx011. PMID 28199479.

- Jackson K, Hackenberg TD (July 1996). "Token reinforcement, choice, and self-control in pigeons". Journal of the Experimental Analysis of Behavior. 66 (1): 29–49. doi:10.1901/jeab.1996.66-29. PMC 1284552. PMID 8755699.

- Craighead, W. Edward; Nemeroff, Charles B., eds. (2004). The Concise Corsini Encyclopedia of Psychology and Behavioral Science 3rd ed. Hoboken, New Jersey: John Wiley & Sons, Inc. p. 803. ISBN 0-471-22036-1.

- Shrestha P (November 17, 2017). "Operant Conditioning". Psychestudy. Retrieved November 14, 2021.

- Hopson, J. (April 2001). "Behavioral game design". Gamasutra. Retrieved April 27, 2019.

- Coon D (2005). Psychology: A modular approach to mind and behavior. Thomson Wadsworth. pp. 278–279. ISBN 0-534-60593-1.

- "The science behind those apps you can't stop using". Australian Financial Review. October 7, 2016. Retrieved January 23, 2024.

- "The scientists who make apps addictive". The Economist. ISSN 0013-0613. Retrieved January 23, 2024.

- Thompson A (May 6, 2015). "Slot machines perfected addictive gaming. Now, tech wants their tricks". The Verge.