Autostereoscopy


Autostereoscopy is any method of displaying stereoscopic images (adding binocular perception of 3D depth) without requiring the viewer to wear special headgear, glasses, or any other device over the eyes. Because no headgear is required, it is also called "glasses-free 3D" or "glassesless 3D".

There are two broad approaches currently used to accommodate motion parallax and wider viewing angles: eye tracking, and multiple views, which remove the need for the display to sense where the viewer's eyes are located.[1] Examples of autostereoscopic display technologies include lenticular lenses, parallax barriers, and integral imaging. Volumetric and holographic displays are also autostereoscopic, as they produce a different image for each eye,[2] although some authors distinguish between displays that create a vergence-accommodation conflict and those that do not.[3]

Autostereoscopic displays based on parallax barrier and lenticular methodologies have been known for about 100 years.[4]

Technology

Many organizations have developed autostereoscopic 3D displays, ranging from experimental displays in university departments to commercial products, using a range of different technologies.[5] The method of creating autostereoscopic flat-panel video displays using lenses was mainly developed in 1985 by Reinhard Boerner at the Heinrich Hertz Institute (HHI) in Berlin.[6] Prototypes of single-viewer displays were already being presented in the 1990s by Sega AM3 (Floating Image System)[7] and the HHI. Since then, the technology has been developed further, mainly by European and Japanese companies. One of the best-known 3D displays developed by HHI was the Free2C, which achieved very high resolution and good viewing comfort by combining an eye-tracking system with seamless mechanical adjustment of the lenses. Eye tracking has been used in a variety of systems to limit the number of displayed views to just two, or to enlarge the stereoscopic sweet spot. However, because this restricts the display to a single viewer, it is not favored for consumer products.

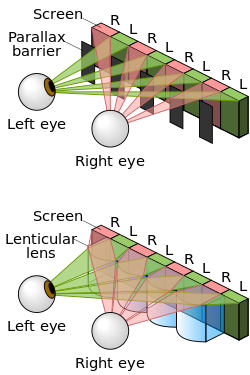

Most current autostereoscopic flat-panel displays employ lenticular lenses or parallax barriers that redirect imagery to several viewing regions; however, this comes at the cost of reduced image resolution. When the viewer's head is in a certain position, each eye sees a different image, giving a convincing illusion of 3D. Such displays can have multiple viewing zones, allowing multiple users to view the image at the same time, though they may also exhibit dead zones where only a non-stereoscopic or pseudoscopic image can be seen, if any.
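
The resolution cost of directing light to several viewing regions can be illustrated by building the panel image for a hypothetical display whose views are interleaved column by column. This is a minimal sketch under simplifying assumptions: grayscale images stored as nested lists, one whole pixel column per view, and no slant. The function names `interleave_views` and `columns_of_view` are illustrative and not taken from any product; real panels typically interleave at the subpixel level.

```python
from typing import List

Image = List[List[int]]  # rows of grayscale pixel values

def interleave_views(views: List[Image]) -> Image:
    """Compose a panel image by giving panel column c to view c % n.

    Simplifying assumption: whole-pixel columns, no slant, all views the
    same size as the panel. Each view therefore contributes only every
    n-th column of its own image.
    """
    n = len(views)
    height, width = len(views[0]), len(views[0][0])
    return [
        [views[col % n][row][col] for col in range(width)]
        for row in range(height)
    ]

def columns_of_view(panel_width: int, n_views: int, view: int) -> List[int]:
    """Panel columns that carry one view: its effective horizontal resolution."""
    return [col for col in range(panel_width) if col % n_views == view]

if __name__ == "__main__":
    # Four hypothetical 2x8 views, each filled with its own index.
    views = [[[k] * 8 for _ in range(2)] for k in range(4)]
    print(interleave_views(views)[0])        # [0, 1, 2, 3, 0, 1, 2, 3]
    print(len(columns_of_view(1920, 4, 0)))  # 480
```

With four views on a 1920-column panel, each view is left with 480 columns, a quarter of the panel's horizontal resolution.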

Parallax barrier


A parallax barrier is a device placed in front of an image source, such as a liquid crystal display, allowing it to show a stereoscopic or multiscopic image without the viewer needing to wear 3D glasses. The principle of the parallax barrier was independently invented by Auguste Berthier, who published first but produced no practical results,[8] and by Frederic E. Ives, who made and exhibited the first known functional autostereoscopic image in 1901.[9] About two years later, Ives began selling specimen images as novelties, the first known commercial use.
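
The geometry of a simple two-view parallax barrier follows from similar triangles. The sketch below assumes a thin barrier in front of the pixel plane, a viewer centred at distance D from the barrier, eye separation e and pixel-column pitch p, and it ignores refraction in any glass between panel and barrier. These symbols and the function name `two_view_barrier` are illustrative assumptions, not values from the sources cited here.

```python
from dataclasses import dataclass

@dataclass
class BarrierDesign:
    gap_mm: float    # distance from the pixel plane to the barrier
    pitch_mm: float  # period of the slit pattern on the barrier

def two_view_barrier(pixel_pitch_mm: float, eye_sep_mm: float,
                     view_dist_mm: float) -> BarrierDesign:
    """Thin two-view front barrier, paraxial, refraction ignored.

    Through one slit, two adjacent pixel columns (pitch p) a gap g behind
    the barrier must project onto the two eyes (separation e) at distance D
    in front of it:   p / g = e / D        =>  g = p * D / e
    Each left/right column pair (width 2p) must be seen through the next
    slit from the same eye, so projecting from the eye onto the barrier:
                      b = 2p * D / (D + g)
    """
    g = pixel_pitch_mm * view_dist_mm / eye_sep_mm
    b = 2 * pixel_pitch_mm * view_dist_mm / (view_dist_mm + g)
    return BarrierDesign(gap_mm=g, pitch_mm=b)

if __name__ == "__main__":
    # Hypothetical panel and viewing conditions.
    design = two_view_barrier(pixel_pitch_mm=0.1, eye_sep_mm=65.0, view_dist_mm=600.0)
    print(f"gap {design.gap_mm:.3f} mm, slit pitch {design.pitch_mm:.4f} mm")
```

For example, with a 0.1 mm pixel pitch, 65 mm eye separation and a 600 mm viewing distance, the gap comes out at roughly 0.92 mm and the slit pitch just under 0.2 mm, slightly less than two pixel columns so that the slits converge on the viewer.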

In the early 2000s, Sharp brought an electronic flat-panel application of this old technology to market, briefly selling two laptops with the world's only 3D LCD screens.[10] These displays are no longer available from Sharp but are still being manufactured and further developed by other companies. Similarly, Hitachi released the first 3D mobile phone for the Japanese market, distributed by KDDI.[11][12] In 2009, Fujifilm released the FinePix Real 3D W1 digital camera, which features a built-in autostereoscopic LCD measuring 2.8 in (71 mm) diagonally. The Nintendo 3DS video game console family uses a parallax barrier for 3D imagery; in a later revision, the New Nintendo 3DS, this is combined with an eye-tracking system to allow wider viewing angles.

Integral photography and lenticular arrays

The principle of integral photography, which uses a two-dimensional (X–Y) array of many small lenses to capture a 3D scene, was introduced by Gabriel Lippmann in 1908.[13][14] Integral photography is capable of creating window-like autostereoscopic displays that reproduce objects and scenes life-size, with full parallax and perspective shift and even the depth cue of accommodation, but fully realizing this potential requires a very large number of very small, high-quality optical systems and very high bandwidth. Only relatively crude photographic and video implementations have been produced so far.
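
The bandwidth problem can be made concrete with simple arithmetic. In a simplified model of an integral display, each lenslet acts as one image pixel and sits over a patch of display pixels, one per ray direction, so the panel's pixel count is the lenslet count multiplied by the number of directions. The figures in the example (a 1920 × 1080 lenslet array with 10 × 10 directions per lenslet, 24 bits per pixel, 60 frames per second) are hypothetical, chosen only for illustration.

```python
def integral_display_pixels(lenslets_x: int, lenslets_y: int,
                            dirs_x: int, dirs_y: int) -> int:
    """Panel pixels needed if every lenslet sits over a dirs_x by dirs_y patch
    of display pixels, one pixel per ray direction (full parallax when both
    dirs_x and dirs_y exceed 1)."""
    return lenslets_x * lenslets_y * dirs_x * dirs_y

def raw_data_rate_bps(pixels: int, bits_per_pixel: int, fps: int) -> int:
    """Uncompressed video data rate in bits per second."""
    return pixels * bits_per_pixel * fps

if __name__ == "__main__":
    # Hypothetical parameters, chosen only for illustration.
    full = integral_display_pixels(1920, 1080, 10, 10)
    horizontal_only = integral_display_pixels(1920, 1080, 10, 1)
    print(full, raw_data_rate_bps(full, 24, 60) / 1e9, "Gbit/s")
    print(horizontal_only, raw_data_rate_bps(horizontal_only, 24, 60) / 1e9, "Gbit/s")
```

Under those assumptions the panel needs about 207 million pixels and an uncompressed stream of roughly 300 Gbit/s; setting the vertical direction count to 1 gives horizontal-only parallax, as in lenticular displays, at a tenth of the cost.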

One-dimensional arrays of cylindrical lenses were patented by Walter Hess in 1912.[15] By replacing the line and space pairs in a simple parallax barrier with tiny cylindrical lenses, Hess avoided the light loss that dimmed images viewed by transmitted light and that made prints on paper unacceptably dark.[16] An additional benefit is that the position of the observer is less restricted, as the substitution of lenses is geometrically equivalent to narrowing the spaces in a line-and-space barrier.

Philips solved a significant problem with electronic displays in the mid-1990s by slanting the cylindrical lenses with respect to the underlying pixel grid, which spreads the resolution loss over both the horizontal and vertical directions and reduces the dark banding that vertical lenses produce by magnifying the gaps between pixel columns.[17] Based on this idea, Philips produced its WOWvx line until 2009, with resolutions up to 2160p (3840×2160 pixels) and 46 viewing angles.[18] Lenny Lipton's company, StereoGraphics, produced displays based on the same idea, citing a much earlier patent for the slanted lenticulars. Magnetic3d and Zero Creative have also been involved.[19]
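
When the lenses are slanted, each subpixel lies at a different horizontal position under its lens, so consecutive subpixels can be assigned to different views. The sketch below computes that assignment geometrically. It assumes square pixels made of three horizontal RGB subpixels (so moving down one row shifts the lens by 3·tan α subpixel widths), a lens pitch of X subpixel widths, and views spread evenly across one lens. The parameters and the function name `view_for_subpixel` are illustrative and are not taken from Philips' products; mappings of this general form appear in the slanted-lenticular literature, but exact conventions (slant direction, offsets, rounding) vary.

```python
def view_for_subpixel(k: int, row: int, lens_pitch_subpx: float,
                      n_views: int, tan_slant: float,
                      k_offset: float = 0.0) -> int:
    """View index for subpixel column k in a given row under slanted lenses.

    Assumptions (illustrative): square pixels of three horizontal RGB
    subpixels, so one row down shifts the lens by 3 * tan_slant subpixel
    widths (here assumed to shift it to the right); views spread evenly
    across one lens pitch.
    """
    # Position of the subpixel under its lens, in subpixel widths [0, pitch).
    x = (k + k_offset - 3.0 * row * tan_slant) % lens_pitch_subpx
    # Scale that position to a view number; the final modulo guards rounding.
    return int(x * n_views / lens_pitch_subpx) % n_views

if __name__ == "__main__":
    # Hypothetical design: 4.5 subpixels per lens, 9 views, tan(slant) = 1/6.
    for row in range(3):
        print([view_for_subpixel(k, row, 4.5, 9, 1 / 6) for k in range(9)])
```

With a hypothetical pitch of 4.5 subpixels and 9 views, the first nine subpixels of a row land on views 0, 2, 4, 6, 8, 1, 3, 5, 7, so every view is represented within a short horizontal run instead of only in every ninth column.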

Compressive light field displays

With rapid advances in optical fabrication, digital processing power, and computational models of human perception, a new generation of display technology is emerging: compressive light field displays. These architectures explore the co-design of optical elements and compressive computation while taking particular characteristics of the human visual system into account. Compressive display designs include dual-layer[20] and multilayer[21][22][23] devices driven by algorithms such as computed tomography, non-negative matrix factorization, and non-negative tensor factorization.
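
The factorization idea behind dual-layer compressive displays can be sketched in a few lines. In the formulation used for content-adaptive parallax barriers,[20] a 2D slice of the target light field is written as a matrix L whose rows index pixels on the rear layer and whose columns index pixels on the front layer; showing T time-multiplexed frames, each the product of a rear pattern and a front pattern, approximates L by a non-negative rank-T product F Gᵀ. The sketch below uses the standard Lee–Seung multiplicative updates for non-negative matrix factorization in pure Python. It is a simplification that ignores the [0, 1] transmittance limits, brightness scaling and viewer weighting a real display solver must handle, and the function names are illustrative.

```python
import random
from typing import List, Tuple

Matrix = List[List[float]]

def transpose(a: Matrix) -> Matrix:
    return [list(col) for col in zip(*a)]

def matmul(a: Matrix, b: Matrix) -> Matrix:
    bt = transpose(b)
    return [[sum(x * y for x, y in zip(row, col)) for col in bt] for row in a]

def factorize_light_field(L: Matrix, rank: int, iters: int = 300,
                          seed: int = 0, eps: float = 1e-12) -> Tuple[Matrix, Matrix]:
    """Non-negative factorization L ~= F @ G^T via Lee-Seung multiplicative updates.

    Rows of L index rear-layer pixels, columns index front-layer pixels;
    column t of F (rear) and column t of G (front) form the t-th frame.
    """
    rng = random.Random(seed)
    m, n = len(L), len(L[0])
    F = [[rng.random() + 0.1 for _ in range(rank)] for _ in range(m)]
    G = [[rng.random() + 0.1 for _ in range(rank)] for _ in range(n)]
    for _ in range(iters):
        # F <- F * (L G) / (F G^T G), elementwise; stays non-negative.
        num, den = matmul(L, G), matmul(F, matmul(transpose(G), G))
        F = [[F[i][t] * num[i][t] / (den[i][t] + eps) for t in range(rank)] for i in range(m)]
        # G <- G * (L^T F) / (G F^T F), elementwise; stays non-negative.
        num, den = matmul(transpose(L), F), matmul(G, matmul(transpose(F), F))
        G = [[G[j][t] * num[j][t] / (den[j][t] + eps) for t in range(rank)] for j in range(n)]
    return F, G

def relative_error(L: Matrix, F: Matrix, G: Matrix) -> float:
    """Frobenius-norm error of the reconstruction, relative to the target."""
    approx = matmul(F, transpose(G))
    diff = sum((a - b) ** 2 for ra, rb in zip(L, approx) for a, b in zip(ra, rb))
    norm = sum(a ** 2 for row in L for a in row)
    return (diff / norm) ** 0.5

if __name__ == "__main__":
    # Hypothetical 4x5 target built from two "frames", so a rank-2 fit exists.
    rear = [[1.0, 0.2], [0.5, 0.9], [0.0, 0.4], [0.8, 0.1]]
    front = [[0.3, 1.0], [0.7, 0.0], [0.2, 0.6], [1.0, 0.5], [0.4, 0.4]]
    target = matmul(rear, transpose(front))
    F, G = factorize_light_field(target, rank=2)
    print(f"relative error: {relative_error(target, F, G):.4f}")
```

Because each rank-one term corresponds to one displayed frame, increasing `rank` trades refresh rate for a closer match to the target light field.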

Autostereoscopic content creation and conversion

Tools for the instant conversion of existing 3D movies to autostereoscopic formats were demonstrated by Dolby, Stereolabs and Viva3D.[24][25][26]

Other

Dimension Technologies released a range of commercially available 2D/3D switchable LCDs in 2002 using a combination of parallax barriers and lenticular lenses.[27][28] SeeReal Technologies has developed a holographic display based on eye tracking.[29] CubicVue exhibited a color filter pattern autostereoscopic display at the Consumer Electronics Association's i-Stage competition in 2009.[30][31]

There are a variety of other autostereoscopic systems as well, such as volumetric displays, in which the reconstructed light field occupies a true volume of space, and integral imaging, which uses a fly's-eye lens array.

The term automultiscopic display has been introduced as a shorter synonym for the lengthy "multi-view autostereoscopic 3D display",[32] as well as for the earlier, more specific "parallax panoramagram". The latter term originally indicated a continuous sampling along a horizontal line of viewpoints, e.g., image capture using a very large lens or a moving camera and a shifting barrier screen, but it later came to include synthesis from a relatively large number of discrete views.

Sunny Ocean Studios, located in Singapore, has been credited with developing an automultiscopic screen that can display autostereo 3D images from 64 different reference points.[33]

A fundamentally new approach to autostereoscopy called HR3D has been developed by researchers at MIT's Media Lab. According to its developers, it would consume half as much power, doubling the battery life if used with devices like the Nintendo 3DS, without compromising screen brightness or resolution; other claimed advantages include a wider viewing angle and preservation of the 3D effect when the screen is rotated.[34]

Movement parallax: single view vs. multi-view systems

Movement parallax refers to the fact that the view of a scene changes as the head moves. Thus, different images of the scene are seen as the head is moved from left to right and up and down.

Many autostereoscopic displays are single-view displays and are thus not capable of reproducing the sense of movement parallax, except for a single viewer in systems capable of eye tracking.

Some autostereoscopic displays, however, are multi-view displays, and are thus capable of providing the perception of left–right movement parallax.[35] Eight and sixteen views are typical for such displays. While it is theoretically possible to simulate the perception of up–down movement parallax, no current display systems are known to do so, and the up–down effect is widely seen as less important than left–right movement parallax. The lack of parallax about both axes becomes more evident as objects are presented farther from the plane of the display: as the viewer moves closer to or farther away from the display, such objects more obviously exhibit perspective shift about one axis but not the other, appearing variously stretched or squashed to a viewer not positioned at the optimal distance from the display.[citation needed]
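
The behaviour of a multi-view display as the head moves sideways, including the pseudoscopic zones that occur at the edges of each viewing fan, can be modelled very simply. The sketch below assumes that on the optimal viewing plane each view occupies a zone of equal width, views are numbered from left to right, and the fan of views repeats periodically; real displays have blurred zone edges and crosstalk, which this model ignores. Zone width, eye separation and the function names are illustrative assumptions.

```python
import math
from typing import Tuple

def view_at(x_mm: float, zone_width_mm: float, n_views: int) -> int:
    """View seen at lateral position x on the optimal viewing plane.

    Simplified model: equal-width zones numbered left to right, with the
    fan of n_views zones repeating periodically (no crosstalk or blur).
    """
    return math.floor(x_mm / zone_width_mm) % n_views

def eye_views(head_x_mm: float, eye_sep_mm: float,
              zone_width_mm: float, n_views: int) -> Tuple[int, int]:
    """(left-eye view, right-eye view) for a head centred at head_x_mm."""
    half = eye_sep_mm / 2
    return (view_at(head_x_mm - half, zone_width_mm, n_views),
            view_at(head_x_mm + half, zone_width_mm, n_views))

def is_pseudoscopic(left: int, right: int) -> bool:
    """Depth is reversed when the left eye's view lies right of the right eye's.
    (Equal views mean a flat, non-stereoscopic image instead.)"""
    return left > right

if __name__ == "__main__":
    # Hypothetical display: 8 views, 65 mm zones, 65 mm eye separation.
    for head_x in (195.0, 260.0, 520.0):
        left, right = eye_views(head_x, 65.0, 65.0, 8)
        print(head_x, (left, right), "pseudoscopic" if is_pseudoscopic(left, right) else "ok")
```

With eight 65 mm zones, a head centred at 195 mm sees views 2 and 3; moving 65 mm to the right shifts this to views 3 and 4 (left–right movement parallax), while a head centred at 520 mm straddles the end of the fan and sees views 7 and 0, which the model flags as pseudoscopic.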

Vergence-accommodation conflict

Autostereoscopic displays present stereoscopic content without matching focal depth, and therefore exhibit the vergence-accommodation conflict.[3]
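
The size of the conflict can be estimated with similar triangles. Assuming the eyes focus on the screen at distance D while converging on a virtual point drawn with on-screen disparity s (positive when the point appears behind the screen) and eye separation e, the vergence distance is d = eD/(e − s), and the mismatch is commonly expressed in diopters as |1/D − 1/d| with distances in metres. The function names and example values below are illustrative.

```python
def vergence_distance_mm(screen_dist_mm: float, eye_sep_mm: float,
                         disparity_mm: float) -> float:
    """Distance at which the eyes converge on a point drawn with on-screen
    disparity s (positive = uncrossed, point appears behind the screen).

    Similar triangles: s / e = (d - D) / d   =>   d = e * D / (e - s)
    """
    if disparity_mm >= eye_sep_mm:
        raise ValueError("disparity must be smaller than the eye separation")
    return eye_sep_mm * screen_dist_mm / (eye_sep_mm - disparity_mm)

def conflict_diopters(focus_dist_mm: float, vergence_dist_mm: float) -> float:
    """Mismatch between accommodation (focus on the screen) and vergence, in diopters."""
    return abs(1000.0 / focus_dist_mm - 1000.0 / vergence_dist_mm)

if __name__ == "__main__":
    D, e = 500.0, 65.0  # hypothetical viewing distance and eye separation (mm)
    for s in (-13.0, 0.0, 13.0):
        d = vergence_distance_mm(D, e, s)
        print(f"disparity {s:+.0f} mm -> converge at {d:.0f} mm, "
              f"conflict {conflict_diopters(D, d):.2f} D")
```

For a screen at 500 mm, 65 mm eye separation and 13 mm of uncrossed disparity, the eyes converge at 625 mm while focusing at 500 mm, a mismatch of 0.4 diopters.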

References

1. Dodgson, N.A. (August 2005). "Autostereoscopic 3D Displays". IEEE Computer. 38 (8): 31–36. doi:10.1109/MC.2005.252. ISSN 0018-9162. S2CID 34507707.

2. Holliman, N.S.; Dodgson, N.A.; Favalora, G.E.; Pockett, L. (2011). "Three-dimensional displays: a review and applications analysis". IEEE Transactions on Broadcasting. 57 (2): 362–371.

3. "Resolving the Vergence-Accommodation Conflict in Head-Mounted Displays" (PDF). 22 September 2022. Archived from the original (PDF) on 22 September 2022. Retrieved 22 September 2022.

4. "Autostereoscopic display". XVRWiki. 1 December 2013. Retrieved 6 October 2024.

5. Holliman, N.S. (2006). Three-Dimensional Display Systems (PDF). CRC Press. ISBN 0-7503-0646-7. Archived from the original (PDF) on 4 July 2010. Retrieved 30 March 2010.

6. Boerner, R. (1985). "3D-Bildprojektion in Linsenrasterschirmen" [3D image projection in lenticular screens]. Fernseh- und Kinotechnik (in German).

7. Electronic Gaming Monthly. No. 93. April 1997. p. 22.

8. Berthier, Auguste (16 and 23 May 1896). "Images stéréoscopiques de grand format" [Large-format stereoscopic images]. Cosmos (in French). 34 (590, 591): 205–210, 227–233 (see 229–231).

9. Ives, Frederic E. (1902). "A novel stereogram". Journal of the Franklin Institute. 153: 51–52. doi:10.1016/S0016-0032(02)90195-X. Reprinted in Benton, "Selected Papers on Three-Dimensional Displays".

10. "2D/3D Switchable Displays" (PDF). Sharp white paper. Archived (PDF) from the original on 30 May 2008. Retrieved 19 June 2008.

11. "Woooケータイ H001 - 2009年 - 製品アーカイブ - au by KDDI" [Wooo mobile phone H001 - 2009 - Product archive - au by KDDI]. Au.kddi.com. Archived from the original on 4 May 2010. Retrieved 15 June 2010.

12. "Hitachi Comes Up with 3.1-Inch 3D IPS Display". News.softpedia.com. 12 April 2010. Retrieved 15 June 2010.

13. Lippmann, G. (2 March 1908). "Épreuves réversibles. Photographies intégrales" [Reversible prints. Integral photographs]. Comptes Rendus de l'Académie des Sciences. 146 (9): 446–451. Bibcode:1908BSBA...13A.245D. Reprinted in Benton, "Selected Papers on Three-Dimensional Displays".

14. Durand, Frédo; MIT CSAIL. "Reversible Prints. Integral Photographs" (PDF). Retrieved 17 February 2011. (This crude English translation of Lippmann's 1908 paper will be more comprehensible if the reader bears in mind that "dark room" and "darkroom" are the translator's mistaken renderings of "chambre noire", the French equivalent of the Latin "camera obscura", and should be read as "camera" in the thirteen places where this error occurs.)

15. US patent 1128979, Hess, Walter, "Stereoscopic picture", published 1915, filed 1 June 1912, patented 16 February 1915. Hess filed several similar patent applications in Europe in 1911 and 1912, which resulted in several patents issued in 1912 and 1913.

16. Benton, Stephen (2001). Selected Papers on Three-Dimensional Displays. Milestone Series. Vol. MS 162. SPIE Optical Engineering Press. pp. xx–xxi.

17. van Berkel, Cees (1997). Fisher, Scott S; Merritt, John O; Bolas, Mark T (eds.). "Characterisation and optimisation of 3D-LCD module design". Proc. SPIE. Stereoscopic Displays and Virtual Reality Systems IV. 3012: 179–186. Bibcode:1997SPIE.3012..179V. doi:10.1117/12.274456. S2CID 62223285.

18. Fermoso, Jose (1 October 2008). "Philips' 3D HDTV Might Destroy Space-Time Continuum, Wallets - Gadget Lab". Wired. Archived from the original on 3 June 2010. Retrieved 15 June 2010.

19. "xyZ 3D Displays - Autostereoscopic 3D TV - 3D LCD - 3D Plasma - No Glasses 3D". Xyz3d.tv. Archived from the original on 20 April 2010. Retrieved 15 June 2010.

20. Lanman, Douglas; Hirsch, Matthew; Kim, Yunhee; Raskar, Ramesh (2010). "Content-adaptive parallax barriers: optimizing dual-layer 3D displays using low-rank light field factorization". ACM Transactions on Graphics. 29 (6): 1–10. doi:10.1145/1882261.1866164.

21. Wetzstein, Gordon; Lanman, Douglas; Heidrich, Wolfgang; Raskar, Ramesh (2011). "Layered 3D: tomographic image synthesis for attenuation-based light field and high dynamic range displays". ACM Transactions on Graphics. 30 (4): 1–12. doi:10.1145/2010324.1964990.

22. Lanman, Douglas; Wetzstein, Gordon; Hirsch, Matthew; Heidrich, Wolfgang; Raskar, Ramesh (2011). "Polarization fields: dynamic light field display using multi-layer LCDs". ACM Transactions on Graphics. 30 (6): 1–10. doi:10.1145/2070781.2024220.

23. Wetzstein, Gordon; Lanman, Douglas; Hirsch, Matthew; Raskar, Ramesh (2012). "Tensor displays: compressive light field synthesis using multilayer displays with directional backlighting". ACM Transactions on Graphics. 31 (4): 1–11. doi:10.1145/2185520.2185576.

24. Chinnock, Chris (11 April 2014). "NAB 2014 – Dolby 3D Details Partnership with Stereolabs". Display Central. Archived from the original on 23 April 2014. Retrieved 19 July 2016.

25. "Viva3D autostereo output for glasses-free 3D monitors". ViewPoint 3D. Retrieved 19 July 2016.

26. Colclough, Robin C. "Viva3D Real-time Stereo Vision: Stereo conversion & depth determination with mixed 3D graphics" (PDF). ViewPoint 3D. Retrieved 19 July 2016.

27. Smith, Tom (14 June 2002). "Review: Dimension Technologies 2015XLS". BlueSmoke. Archived from the original on 1 May 2011. Retrieved 25 March 2010.

28. McAllister, David F. (February 2002). "Stereo & 3D Display Technologies, Display Technology" (PDF). In Hornak, Joseph P. (ed.). Encyclopedia of Imaging Science and Technology, 2 Volume Set (Hardcover). Vol. 2. New York: Wiley & Sons. pp. 1327–1344. ISBN 978-0-471-33276-3.

29. Ooshita, Junichi (25 October 2007). "SeeReal Technologies Exhibits Holographic 3D Video Display, Targeting Market Debut in 2009". TechOn!. Retrieved 23 March 2010.

30. "CubicVue LLC : i-stage". I-stage.ce.org. 22 February 1999. Retrieved 15 June 2010.

31. Heater, Brian (23 March 2010). "Nintendo Says Next-Gen DS Will Add a 3D Display". PC Magazine.

32. Akenine-Möller, Tomas (2006). Rendering Techniques 2006. A K Peters, Ltd. p. 73. ISBN 9781568813516.

33. Pop, Sebastian (3 February 2010). "Sunny Ocean Studios Fulfills No-Glasses 3D Dream". Softpedia.

34. Hardesty, Larry (4 May 2011). "Better glasses-free 3-D: A fundamentally new approach". Phys.org. Retrieved 4 March 2012.

35. Dodgson, N.A.; Moore, J. R.; Lang, S. R. (1999). "Multi-View Autostereoscopic 3D Display". IEEE Computer. 38 (8): 31–36. CiteSeerX 10.1.1.42.7623. doi:10.1109/MC.2005.252. ISSN 0018-9162. S2CID 34507707.

External links

- Tridelity

- Viva3D

- VisuMotion

- Explanation of 3D Autostereoscopic Monitors

- Overview of different Autostereoscopic LCD displays

- Rendering for an Interactive 360° Light Field Display, a demonstration of autostereoscopy using a spinning mirror, a holographic diffuser, and a high-speed video projector, shown at SIGGRAPH 2007

- Behind-the-scenes video about production for autostereoscopic displays

- 3D Without Glasses - The Future of 3D Technology?

- Diffraction Influence on the Field of View and Resolution of Three-Dimensional Integral Imaging