Marsh test

The Marsh test is a highly sensitive method for detecting arsenic, especially useful in forensic toxicology when arsenic was used as a poison. It was developed by the chemist James Marsh and first published in 1836.[1] The method continued to be used, with improvements, in forensic toxicology until the 1970s.[2]

Arsenic, in the form of white arsenic trioxide (As2O3), was a highly favored poison: it is odourless, easily incorporated into food and drink, and, before the advent of the Marsh test, was untraceable in the body. In France, it came to be known as poudre de succession ("inheritance powder"). To the untrained observer, the symptoms of arsenic poisoning resemble those of cholera.[citation needed]

Precursor methods

The first breakthrough in the detection of arsenic poisoning came in 1775, when Carl Wilhelm Scheele discovered a way to convert arsenic trioxide to garlic-smelling arsine gas (AsH3) by treating it with nitric acid (HNO3) and zinc:[3]

- As2O3 + 6 Zn + 12 HNO3 → 2 AsH3 + 6 Zn(NO3)2 + 3 H2O

In 1787, German physician Johann Metzger (1739-1805) discovered that if arsenic trioxide were heated in the presence of carbon, the arsenic would sublime.[4] This is the reduction of As2O3 by carbon:

- 2 As2O3 + 3 C → 3 CO2 + 4 As
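Both equations above can be checked for atom balance mechanically. The following is a minimal sketch, not part of any historical method; the helper names `count_atoms`, `side_totals` and `is_balanced` are hypothetical and introduced only for illustration.

```python
import re
from collections import Counter

# One token is either an element with an optional count, "(", or ")" with an optional count.
TOKEN = re.compile(r"([A-Z][a-z]?)(\d*)|(\()|(\))(\d*)")

def count_atoms(formula):
    """Return a Counter of element -> atom count for a formula such as 'Zn(NO3)2'."""
    stack = [Counter()]
    pos = 0
    while pos < len(formula):
        m = TOKEN.match(formula, pos)
        if m is None:
            raise ValueError(f"cannot parse {formula!r} at position {pos}")
        element, count, open_paren, close_paren, group_count = m.groups()
        if element:
            stack[-1][element] += int(count or 1)
        elif open_paren:
            stack.append(Counter())
        else:
            # Closing parenthesis: multiply the finished group and merge it upward.
            group = stack.pop()
            for el, n in group.items():
                stack[-1][el] += n * int(group_count or 1)
        pos = m.end()
    return stack[0]

def side_totals(side):
    """Sum atoms over a list of (coefficient, formula) pairs."""
    total = Counter()
    for coeff, formula in side:
        for el, n in count_atoms(formula).items():
            total[el] += coeff * n
    return total

def is_balanced(reactants, products):
    """True if every element appears in equal numbers on both sides."""
    return side_totals(reactants) == side_totals(products)

# Scheele's reaction and Metzger's reduction, as written in the text.
scheele = ([(1, "As2O3"), (6, "Zn"), (12, "HNO3")],
           [(2, "AsH3"), (6, "Zn(NO3)2"), (3, "H2O")])
metzger = ([(2, "As2O3"), (3, "C")],
           [(3, "CO2"), (4, "As")])

print(is_balanced(*scheele), is_balanced(*metzger))
```

The check covers atom counts only; it says nothing about whether a reaction actually proceeds as written.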

In 1806, Valentin Rose took the stomach of a victim suspected of being poisoned and treated it with potassium carbonate (K2CO3), calcium oxide (CaO) and nitric acid.[5] Any arsenic present would appear as arsenic trioxide and then could be subjected to Metzger's test.

The most common test, still used today in water test kits, was discovered by Samuel Hahnemann. It involves combining a sample fluid with hydrogen sulfide (H2S) in the presence of hydrochloric acid (HCl). If arsenic is present, a yellow precipitate of arsenic trisulfide (As2S3) forms.[6]

Circumstances and methodology

Though precursor tests existed, they had sometimes proven not to be sensitive enough. In 1832, a certain John Bodle was brought to trial for poisoning his grandfather by putting arsenic in his coffee. James Marsh, a chemist working at the Royal Arsenal in Woolwich, was called by the prosecution to try to detect its presence. He performed the standard test by passing hydrogen sulfide through the suspect fluid. While Marsh was able to detect arsenic, the yellow precipitate did not keep well, and by the time it was presented to the jury it had deteriorated. The jury was not convinced, and John Bodle was acquitted.

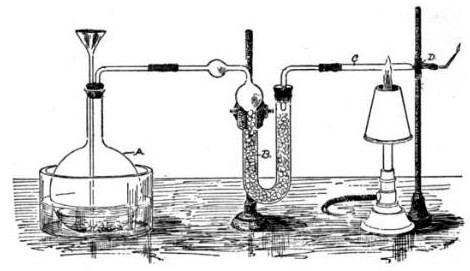

Angered and frustrated by this, especially when John Bodle later confessed that he had indeed killed his grandfather, Marsh decided to devise a better test to demonstrate the presence of arsenic. Taking Scheele's work as a basis, he constructed a simple glass apparatus capable not only of detecting minute traces of arsenic but also of measuring its quantity. Adding a sample of tissue or body fluid to a glass vessel with zinc and acid would produce arsine gas if arsenic was present, in addition to the hydrogen that the zinc would produce regardless by reacting with the acid. Igniting this gas mixture would oxidize any arsine present into arsenic and water vapor. A cold ceramic bowl held in the jet of the flame would then be stained with a silvery-black deposit of arsenic, physically similar to the result of Metzger's reaction. The intensity of the stain could then be compared to films produced using known amounts of arsenic.[7]

Not only could minute amounts of arsenic be detected (as little as 0.02 mg), but the test was also very specific for arsenic. Antimony (Sb) could give a false positive by forming stibine (SbH3) gas, which decomposes on heating to form a similar black deposit, but the antimony deposit would not dissolve in a solution of sodium hypochlorite (NaOCl), while an arsenic deposit would. Bismuth (Bi), which also gives a false positive by forming bismuthine (BiH3), can similarly be distinguished because its deposit resists attack by both NaOCl and ammonium polysulfide (the former attacks As, and the latter attacks Sb).[8]
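The solubility rules for telling arsenic, antimony and bismuth deposits apart amount to a small decision table. The sketch below encodes only what the paragraph above states. It assumes the deposit is exactly one of the three elements and that each solubility observation is clear-cut; the function name `identify_deposit` and its parameters are hypothetical.

```python
from typing import Optional

def identify_deposit(dissolves_in_hypochlorite: bool,
                     dissolves_in_polysulfide: Optional[bool] = None) -> str:
    """Classify a Marsh-test deposit from two solubility observations.

    Assumption: the deposit is pure As, Sb or Bi, and each test gives a
    clear yes/no answer. Real deposits may be mixed.
    """
    # Sodium hypochlorite attacks arsenic but not antimony or bismuth.
    if dissolves_in_hypochlorite:
        return "arsenic"
    # Without the second test, antimony and bismuth cannot be told apart.
    if dissolves_in_polysulfide is None:
        return "antimony or bismuth (polysulfide test needed)"
    # Ammonium polysulfide attacks antimony; bismuth resists both reagents.
    return "antimony" if dissolves_in_polysulfide else "bismuth"

print(identify_deposit(True))
print(identify_deposit(False, True))
print(identify_deposit(False, False))
```

The hypochlorite result is checked first because it alone settles the arsenic question; the polysulfide test matters only when the deposit survives hypochlorite.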

Specific reactions involved

The Marsh test treats the sample with sulfuric acid and arsenic-free zinc. Even when only minute amounts of arsenic are present, the zinc reduces the trivalent arsenic (As3+) to the −3 oxidation state. The two half-reactions are:

- Oxidation: Zn → Zn2+ + 2 e−

- Reduction: As2O3 + 12 e− + 6 H+ → 2 As3− + 3 H2O

They combine into this reaction:

- As2O3 + 6 Zn + 6 H+ → 2 As3− + 6 Zn2+ + 3 H2O
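The combination step works by scaling each half-reaction so that the electrons released equal the electrons consumed, then adding the two. A minimal sketch of that bookkeeping follows. The dictionary representation (reactants negative, products positive) and the names `combine` and `net_charge` are assumptions made for illustration.

```python
from math import gcd
from collections import Counter

# species -> coefficient; reactants are negative, products positive.
oxidation = {"Zn": -1, "Zn2+": 1, "e-": 2}
reduction = {"As2O3": -1, "e-": -12, "H+": -6, "As3-": 2, "H2O": 3}

# Charge carried by each species, used for the final charge check.
CHARGE = {"Zn": 0, "Zn2+": 2, "e-": -1, "As2O3": 0, "H+": 1, "As3-": -3, "H2O": 0}

def combine(ox, red):
    """Scale both half-reactions to a common electron count and add them."""
    released = ox["e-"]      # electrons produced by the oxidation
    consumed = -red["e-"]    # electrons used by the reduction
    common = released * consumed // gcd(released, consumed)
    total = Counter()
    for half, factor in ((ox, common // released), (red, common // consumed)):
        for species, coeff in half.items():
            total[species] += coeff * factor
    # Species whose coefficients cancel (here, the electrons) drop out.
    return {s: c for s, c in total.items() if c != 0}

def net_charge(reaction):
    """Product charge minus reactant charge; zero for a balanced reaction."""
    return sum(CHARGE[s] * c for s, c in reaction.items())

overall = combine(oxidation, reduction)
print(overall)
print(net_charge(overall))
```

Here the oxidation is multiplied by 6 and the reduction by 1, which reproduces the coefficients of the combined equation above.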

In an acidic medium, As3− is protonated to form arsine gas (AsH3). Writing the acid as sulfuric acid (H2SO4) on each side of the equation and cancelling the common ions gives:

- As2O3 + 6 Zn + 6 H2SO4 → 2 AsH3 + 6 ZnSO4 + 3 H2O
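The overall equation fixes the mass relationships between arsenic trioxide, arsine and zinc. The sketch below works them out for an arbitrary 1 mg sample. The rounded atomic masses and the function name `marsh_stoichiometry` are assumptions made for illustration. The zinc figure is a lower bound, since zinc also reacts with the acid to give hydrogen.

```python
# Rounded standard atomic masses in g/mol (assumed values for illustration).
ATOMIC_MASS = {"As": 74.922, "O": 15.999, "H": 1.008, "Zn": 65.38}

M_AS2O3 = 2 * ATOMIC_MASS["As"] + 3 * ATOMIC_MASS["O"]
M_ASH3 = ATOMIC_MASS["As"] + 3 * ATOMIC_MASS["H"]

def marsh_stoichiometry(as2o3_mg):
    """Masses (mg) implied by As2O3 + 6 Zn + 6 H2SO4 -> 2 AsH3 + 6 ZnSO4 + 3 H2O."""
    mmol = as2o3_mg / M_AS2O3  # mg divided by g/mol gives mmol
    return {
        "AsH3_mg": 2 * mmol * M_ASH3,              # arsine evolved
        "As_mg": 2 * mmol * ATOMIC_MASS["As"],     # arsenic available for the deposit
        "Zn_min_mg": 6 * mmol * ATOMIC_MASS["Zn"], # zinc used for arsine alone
    }

result = marsh_stoichiometry(1.0)  # 1 mg is an arbitrary example quantity
for name, value in result.items():
    print(f"{name}: {value:.3f}")
```

The `As_mg` value is an upper limit on the deposit, since it assumes all the arsenic is converted to arsine and then deposited.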

First notable application

The first publicly documented use of the Marsh test, and in fact the first time evidence from forensic toxicology was ever introduced in court, came in Tulle, France, in 1840 with the celebrated Lafarge poisoning case. Marie Lafarge was suspected of poisoning her husband Charles, a foundry owner, with arsenic. The circumstantial evidence was strong: it was shown that she had bought arsenic trioxide from a local chemist, supposedly to kill rats that infested their home. In addition, their maid swore that Marie had mixed a white powder into his drink. Although the food tested positive for the poison by the old methods as well as by the Marsh test, the chemists assigned to the case were unable to detect arsenic when the husband's body was exhumed and tested. Mathieu Orfila, the renowned toxicologist and an acknowledged authority on the Marsh test, examined the results. He performed the test again and demonstrated that the misleading results were the fault not of the Marsh test but of those who had performed it incorrectly. Orfila thus proved the presence of arsenic in Lafarge's body using the test. As a result, Marie Lafarge was found guilty and sentenced to life imprisonment.

Effects

The Lafarge case proved controversial, dividing the country into factions convinced of Mme. Lafarge's guilt or of her innocence; nevertheless, the impact of the Marsh test was great. The French press covered the trial and gave the test the publicity needed to establish the legitimacy of forensic toxicology, although in some ways the attention trivialized it: actual Marsh test assays were conducted in salons, public lectures and even in some plays that recreated the Lafarge case.[citation needed]

The existence of the Marsh test also had a deterrent effect: deliberate arsenic poisonings became rarer as the fear of discovery grew.[citation needed]

In fiction

The Marsh test is used in Bill Bergson Lives Dangerously to prove that a certain chocolate is poisoned with arsenic.[9]

Lord Peter Wimsey’s manservant Bunter uses Marsh’s test in Strong Poison to demonstrate that the culprit was secretly in possession of arsenic.[10]

In Alan Bradley's As Chimney Sweepers Come To Dust, 12-year-old sleuth and chemistry genius Flavia de Luce uses the Marsh test to determine that arsenic was the murderer's weapon.[11]

In the first episode of the 2017 BBC television series Taboo, a mirror test, referencing the Marsh test, is used to verify that the protagonist's father was killed by arsenic poisoning. As the series is set between 1814 and 1820, however, the test's appearance is anachronistic.[12]

In the episode "The King Came Calling" of the first season of Ripper Street, police surgeon Homer Jackson (Adam Rothenberg) performs Marsh's test on the contents of a poisoning victim and determines that the fatal poison was antimony, not arsenic, since the chemical residue deposited by the flames does not dissolve in sodium hypochlorite.[13]

In an episode of the 1957 television series Perry Mason, "The Case of the Fiery Fingers" (s01 ep31), a doctor testifying about the victim of a fatal poisoning is asked whether he performed a Marsh test to determine that the poison used was arsenic. The doctor confirms that he used the Marsh test and that it allowed him to identify the poison as arsenic.

References

- Marsh, James (1836). "Account of a method of separating small quantities of arsenic from substances with which it may be mixed". Edinburgh New Philosophical Journal. 21: 229–236.

- Hempel, Sandra (2013). "James Marsh and the poison panic". The Lancet. 381 (9885): 2247–2248. doi:10.1016/S0140-6736(13)61472-5. PMID 23819157. S2CID 36011702.

- Scheele, Carl Wilhelm (1775) "Om Arsenik och dess syra" Archived 2016-01-05 at the Wayback Machine (On arsenic and its acid), Kongliga Vetenskaps Academiens Handlingar (Proceedings of the Royal Scientific Academy [of Sweden]), 36 : 263-294. From p. 290: "Med Zinck. 30. (a) Denna år den endaste af alla så hela som halfva Metaller, som i digestion met Arsenik-syra effervescerar." (With zinc. 30. (a) This is the only [metal] of all whole- as well as semi-metals that effervesces on digestion with arsenic acid.) Scheele collected the arsine and put a mixture of arsine and air into a cylinder. From p. 291: "3:0, Då et tåndt ljus kom når o̊pningen, tåndes luften i kolfven med en småll, lågan for mot handen, denna blef o̊fvedragen med brun fårg, … " (3:0, Then as [the] lit candle came near the opening [of the cylinder], the gases in [the] cylinder ignited with a bang; [the] flame [rushed] towards my hand, which became coated with [a] brown color, … )

- Metzger, Johann Daniel, Kurzgefasstes System der gerichtlichen Arzneiwissenschaft (Concise system of forensic medicine), 2nd ed. (Königsberg and Leipzig, (Germany): Goebbels und Unzer, 1805), pp. 238–239. In a footnote on p. 238, Metzger mentions that if a sample that's suspected of containing arsenic trioxide (Arsenik) is heated on a copper plate (Kupferblech), then, when arsenic vapor lands on the plate, it will condense to form a shiny silver-white (weisse Silberglanz) patch. He also mentions that if a sample containing arsenic trioxide is large enough, metallic arsenic can be produced from it. From the footnote on p. 239: "b) Am besten geschieht sie, wenn mann den Arsenik mit einem fetten Oel zum Brey macht und in einer Retorte so lange distillirt, bis keine ölichte Dämpfe mehr übergehen, dann aber das Feuer verstärkt, wodurch der Arsenik - König sich sublimirt." ( b) It's best of all when one makes a paste of the arsenic trioxide with a fatty oil and distills it in a retort long enough until no more oily vapors pass over [and] then one intensifies the fire, whereby [metallic] arsenic is sublimated.)

- Valentin Rose (1806) "Ueber das zweckmäßigste Verfahren, um bei Vergiftungen mit Arsenik letzern aufzufinden und darzustellen" Archived 2019-12-16 at the Wayback Machine (On the most effective method, in cases of poisoning with arsenic, to discover and show the latter), Journal für Chemie und Physik, 2 : 665-671.

- Hahnemann, Samuel (1786). Ueber die Arsenikvergiftung, ihre Hülfe und gerichtliche Ausmittelung [On poisoning by arsenic: its treatment and forensic detection] (in German). Leipzig, (Germany): Siegfried Lebrecht Crusius. On p. 15, §34, and pp. 25–26, §67, Hahnemann noted that when hydrogen sulfide — Schwefelleberluft = gas (Luft) of liver (Leber) of sulfur (Schwefel); "liver of sulfur" is a mixture of sulfides of potassium; hydrogen sulfide was prepared by adding acid to liver of sulfur — dissolved in water was added to an acidified solution containing arsenic trioxide, a yellow precipitate — arsenic trisulfide, As2S3, which he called Operment (English: orpiment, yellow arsenic; German: Rauschgelb) — was produced. From pp. 25-26: "§67. Noch müssen wir der Schwefelleberluft erwähnen, die in Wasser aufgelöst, sich am innigsten mit dem Arsenikwasser verbindet, und als Operment mit ihm zu Boden fält." (We still must mention hydrogen sulfide, which [when it's] dissolved in water, binds most closely with arsenic [trioxide in] water, and falls to the bottom with it as arsenic trisulfide.) In Chapter 11 (Elftes Kapitel. Chemische Kennzeichen des Thatbestands (corporis delicti) einer Arsenikvergiftung [Ch. 11. Chemical indications of evidence of an arsenic poisoning]), Hahnemann explains how to identify arsenic in autopsy samples (e.g., stomach contents). On p. 239, §429, he explains how to distinguish mercury poisoning from arsenic poisoning. And on p. 246, §440, he describes the course of the reaction: "§440. Mit Schwefelleberluft gesättigtes Wasser bildet in einer wenig gesättigten Arsenikauflösung zuerst eine durchsichtige Gilbe, nach einigen Minuten begint die Flüssigkeit erst trübe zu werden und nach mehrern Stunden erscheint dann nach und nach der lokere pomeranzengelbe Niederschlag, den man mit einigen zugetröpfelten Tropfen Weinessig beschleunigen kan." (§440. With water saturated with hydrogen sulfide, [there] forms, in a little saturated solution of arsenic, at first a transparent yellow; after some minutes the fluid begins first to become cloudy, and after several hours [there] then appears bit by bit a fluffy orange-yellow precipitate, [the formation of] which one can accelerate with some drops of acetic acid added dropwise.)

- "Arsine - Molecule of the Month - January 2005 - HTML version". Archived from the original on 2008-10-07. Retrieved 2008-10-25.

- Holleman, A. F.; Wiberg, E. "Inorganic Chemistry" Academic Press: San Diego, 2001. ISBN 0-12-352651-5.

- Lindgren, Astrid (1951). Bill Bergson Lives Dangerously. Rabén & Sjögren.

- Sayers, Dorothy L. (1930). Strong Poison. Gollancz.

- Bradley, Alan (2016). As Chimney Sweepers Come to Dust. Flavia de Luce. New York: Bantam. ISBN 978-0-345-53994-6.

- Taboo (TV Series 2017– ), 10 January 2017, archived from the original on 2022-01-25, retrieved 2017-06-17

- "The King Came Calling" Ripper Street (TV Series 2012-2016), 30 December 2012

Further reading

- Marsh, James (1837). "Arsenic; nouveau procédé pour le découvrir dans les substances auxquelles il est mêlé". Journal de Pharmacie. 23: 553–562.

- Marsh, James (1837). "Beschreibung eines neuen Verfahrens, um kleine Quantitäten Arsenik von den Substanzen abzuscheiden, womit er gemischt ist". Liebigs Annalen der Chemie. 23 (2): 207–216. doi:10.1002/jlac.18370230217.

- Mohr, C. F. (1837). "Zusätze zu der von Marsh angegebenen Methode, den Arsenik unmittelbar im regulinischen Zustande aus jeder Flüssigkeit auszuscheiden" [Addenda to the method given by Marsh for separating arsenic immediately in the metallic state from any liquid]. Annalen der Chemie und Pharmacie. 23 (2): 217–225. doi:10.1002/jlac.18370230218.

- Lockemann, Georg (1905). "Über den Arsennachweis mit dem Marshschen Apparate" [On the detection of arsenic with the Marsh apparatus]. Angewandte Chemie. 18 (11): 416–429. Bibcode:1905AngCh..18..416L. doi:10.1002/ange.19050181104.

- Harkins, W. D. (1910). "The Marsh test and Excess Potential (First Paper.1) The Quantitative Determination of Arsenic". Journal of the American Chemical Society. 32 (4): 518–530. doi:10.1021/ja01922a008.

- Campbell, W. A. (1965). "Some landmarks in the history of arsenic testing". Chemistry in Britain. 1: 198–202.

- Bertomeu-Sánchez, José Ramón; Nieto-Galan, Agustí, eds. (2006). Chemistry, Medicine, and Crime: Mateu J. B. Orfila (1787–1853) and his times. Sagamore Beach (MA): Science History Publications. ISBN 0-88135-275-6.

- Watson, Katherine D. (2006). Bertomeu-Sánchez, José Ramón; Nieto-Galan, Agustí (eds.). Criminal poisoning in England and the origins of the Marsh test for arsenic. In: Chemistry, Medicine and Crime: Mateu J. B. Orfila (1787-1853) and his times. Sagamore Beach (MA): Science History Publications. pp. 183–206. ISBN 0-88135-275-6. Retrieved 2024-01-02.

External links

- McGuigan, Hugh (1921). An Introduction to Chemical Pharmacology. Philadelphia: P. Blakiston's Son & Co. pp. 396–397. Retrieved 2007-12-16.

- Wanklyn, James Alfred (1901). Arsenic. London: Kegan Paul, Trench, Trübner & Co. Ltd. pp. 39–57. Retrieved 2007-12-16.