Telecine

Telecine (/ˈtɛləsɪneɪ/ or /ˌtɛləˈsɪneɪ/), or TK, is the process of transferring film into video and is performed in a color suite. The term is also used to refer to the equipment used in this post-production process.[1]

Telecine enables a motion picture, captured originally on film stock, to be viewed with standard video equipment, such as television sets, video cassette recorders (VCR), DVD and Blu-ray players, or computers. Initially, this allowed television broadcasters to produce programs using film, usually 16-mm stock, but transmit them in the same format, and quality, as other forms of television production.[2] Furthermore, telecine allows film producers, television producers and film distributors to release their productions on video, and allows producers to use video production equipment to complete their filmmaking projects.

Within the film industry, it is also referred to as a TK, TC having already been used to designate timecode. Motion picture film scanners are similar to telecines.

History

With the advent of popular broadcast television, producers realized they needed more than live television programming. By turning to film-originated material, they would have access to the wealth of films made for the cinema in addition to recorded television programming on film that could be aired at different times. However, the difference in frame rates between film (generally 24 frames per second) and television (30 or 25 frames per second, interlaced) meant that simply playing a film into a television camera would result in flickering.

The kinescope was used to record the image from a television display to film, synchronized to the TV scan rate. The film could then be shown directly into a video camera for retransmission.[3] Non-live programming could also be filmed using the kinescope, edited mechanically as normal, and then played back for TV. As the film was run at the same speed as the television, the flickering was eliminated. Various displays, including projectors for these video rate films, slide projectors and film cameras were often combined into a film chain, allowing the broadcaster to cue up various forms of media and switch between them by moving a mirror or prism. Color was supported by using a multi-tube video camera, prisms, and filters to separate the original color signal and feed the red, green and blue to individual tubes.

However, this still left film shot at cinema frame rates as a problem. The obvious solution is to speed up the film to match the television frame rate, but this, at least in the case of NTSC, requires a change that is plainly apparent to the eye and ear. A simpler alternative is to periodically play a selected frame twice. For NTSC, the difference in frame rates can be corrected by showing every fourth frame of film twice, although this requires the sound to be handled separately. A more advanced technique is 2:3 pulldown, discussed below, which turns every second frame of the film into three fields of video and results in a slightly smoother display. PAL uses a similar system, 2:2 pulldown. However, during the analog broadcasting period, 24 frames per second film was shown at a slightly faster 25 frames per second to match the PAL video signal. This resulted in a fractionally higher-pitched soundtrack and a slightly shorter running time for feature films.

In recent decades, telecine has primarily been a film-to-storage process, as opposed to film-to-air. Changes since the 1950s have primarily been in terms of equipment and physical formats; the basic concept remains the same. Home movies originally on film may be transferred to video tape using this technique.

Frame rate differences

The most complex part of telecine is the synchronization of the mechanical film motion and the electronic video signal. Every time the video (tele) part of the telecine samples the light electronically, the film (cine) part of the telecine must have a frame in perfect registration and ready to photograph. This is relatively easy when the film is photographed at the same frame rate as the video camera will sample, but when video and film frame rates differ, a sophisticated procedure is required.

2:2 pulldown

In countries that use the PAL or SECAM video standards, film destined for television is photographed at 25 frames per second. The PAL video standard broadcasts at 25 frames per second, so the transfer from film to video is simple; for every film frame, one video frame is captured.

Theatrical features originally photographed at 24 frames per second are shown at 25 frames per second. While this is usually not noticed in the picture, the 4% increase in playback speed causes a slightly noticeable increase in audio pitch by about 0.707 semitones. This can be corrected using time stretching algorithms, which speed up audio while preserving pitch.
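The pitch and running-time figures follow from the 25⁄24 speed ratio. A minimal sketch of the arithmetic, in which the function names are illustrative and not taken from any library:

```python
import math

def pitch_shift_semitones(source_fps: float, playback_fps: float) -> float:
    """Pitch change, in equal-tempered semitones, when audio is sped up with the picture."""
    return 12 * math.log2(playback_fps / source_fps)

def running_time_seconds(film_seconds: float, source_fps: float, playback_fps: float) -> float:
    """Running time after material shot at source_fps is played at playback_fps."""
    return film_seconds * source_fps / playback_fps

shift = pitch_shift_semitones(24, 25)         # about 0.707 semitones
runtime = running_time_seconds(7200, 24, 25)  # a two-hour film runs 6912 s (1 h 55 min 12 s)
```

The same two functions apply to any constant speed change, such as the 1000⁄1001 slowdown used for NTSC.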

2:2 pulldown is also used to transfer shows and films photographed at 30 frames per second, like Friends and Oklahoma! (1955),[4] to NTSC video, which has ≈59.94 Hz scanning rate. This requires playback speed to be slowed by a tenth of a percent.

2:3 pulldown

In the United States and other countries where television uses the 59.94 Hz vertical scanning frequency, video is broadcast at ≈29.97 frames/s. For the film's motion to be accurately rendered on the video signal, a telecine must use a technique called the 2:3 pulldown, also known as 3:2 pulldown, to convert from 24 to ≈29.97 frames/s.

The term pulldown comes from the mechanical process of pulling (physically moving) the film downward within the film portion of the transport mechanism, to advance it from one frame to the next at a given rate (nominally 24 frames/s). The 2:3 conversion is accomplished in two steps. The first step is to slow down the film motion by NTSC's 1000⁄1001 ratio to 24,000⁄1001 (≈23.976) frames/s. The difference in speed is imperceptible to the viewer. For a two-hour film, play time is extended by 7.2 seconds. If the total playback time must be kept exact, a single frame can be dropped every 1000 frames.
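The slowdown figures can be checked with exact rational arithmetic. This is only a sketch of the calculation; the variable names are illustrative:

```python
from fractions import Fraction

NTSC_SLOWDOWN = Fraction(1000, 1001)

film_rate = Fraction(24) * NTSC_SLOWDOWN   # 24000/1001 frames/s, about 23.976
two_hours = 2 * 60 * 60                    # seconds of film at 24 frames/s
slowed = two_hours / NTSC_SLOWDOWN         # running time after the slowdown
extra_seconds = float(slowed - two_hours)  # 7.2
```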

The second step of the 2:3 pulldown is distributing cinema frames into video fields. At 23.976 frames/s, there are four frames of film for every five frames of 29.97 frame/s video:

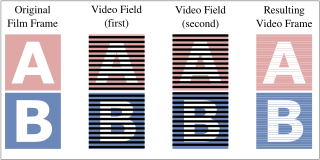

These four film frames are stretched into five video frames by exploiting the interlaced nature of 60 Hz video. For every video frame, there are actually two incomplete images or fields, one for the odd-numbered lines of the image, and one for the even-numbered lines. There are, therefore, ten fields for every four film frames, which are called A, B, C, and D. The telecine alternately places frame A across two fields, frame B across three fields, frame C across two fields and frame D across three fields. This can be written as A-A-B-B-B-C-C-D-D-D or 2-3-2-3 or simply 2–3. The cycle repeats itself completely after four film frames.
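The field distribution described above can be sketched in a few lines. The function names `pulldown_fields` and `weave` are hypothetical, and film frames are represented simply by their letters:

```python
from itertools import cycle

def pulldown_fields(frames, cadence=(2, 3)):
    """Repeat each film frame for the number of fields given by the cycled cadence."""
    fields = []
    for frame, count in zip(frames, cycle(cadence)):
        fields.extend([frame] * count)
    return fields

def weave(fields):
    """Pair consecutive fields into interlaced video frames."""
    return [(fields[i], fields[i + 1]) for i in range(0, len(fields) - 1, 2)]

fields = pulldown_fields("ABCD")  # A A B B B C C D D D
video_frames = weave(fields)      # AA, BB, BC, CD, DD
```

Four film frames yield ten fields and therefore five video frames, two of which (BC and CD) mix fields from different film frames.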

A 3:2 pulldown pattern is identical to the one described above except that it is shifted by one frame. For instance, a cycle that starts with film frame B yields a 3:2 pattern: B-B-B-C-C-D-D-D-A-A or 3-2-3-2 or simply 3–2. In other words, there is no difference between the 2-3 and 3-2 patterns. In fact, the 3-2 notation is misleading because according to SMPTE standards for every four-frame film sequence the first frame is scanned twice, not three times.[5]

The above method is the classic 2:3 pulldown, which was used before frame buffers allowed more than one frame to be held. The preferred method creates only one dirty frame (a video frame whose two fields come from different film frames) in every five (i.e. 3:3:2:2, 2:3:3:2 or 2:2:3:3); while this method has slightly more judder, it allows for easier upconversion (the dirty frame can be dropped without losing information) and better overall compression when encoding. The 2:3:3:2 pattern is supported by the Panasonic DVX-100B video camera under the name "Advanced Pulldown". Note that an interlaced display such as a CRT shows only fields, never whole frames, so no dirty frames are visible there. Dirty frames may appear with other methods of displaying interlaced video.
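The difference between the cadences can be counted directly. The sketch below assumes that a "dirty" frame is a video frame whose two fields come from different film frames; `dirty_frames` is a hypothetical helper:

```python
from itertools import cycle

def dirty_frames(cadence, frames="ABCD"):
    """Count video frames whose two fields come from different film frames."""
    fields = []
    for frame, count in zip(frames, cycle(cadence)):
        fields.extend([frame] * count)
    pairs = zip(fields[0::2], fields[1::2])
    return sum(1 for first, second in pairs if first != second)

classic = dirty_frames((2, 3, 2, 3))   # 2 dirty frames in every five
advanced = dirty_frames((2, 3, 3, 2))  # 1 dirty frame in every five
```

With 2:3:3:2 the single mixed frame pairs a field of B with a field of C, and both film frames also appear as clean video frames, which is why that frame can be dropped without losing information.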

Euro pulldown

A more recent method called 2:2:2:2:2:2:2:2:2:2:2:3, Euro, 12:1 or 24:1 pulldown,[6][7][8] can be used in order to convert 24 frame/s material to 25 frame/s.[9][10] Usually, this involves a film to PAL transfer without the aforementioned 4% speedup. For film at 24 frames/s, there are 24 frames of film for every 25 frames of PAL video. In order to accommodate this mismatch in frame rate, 24 frames of film have to be distributed over 50 PAL fields. This can be accomplished by inserting a pulldown field every 12 frames, thus effectively spreading 12 frames of film over 25 fields (or 12.5 frames) of PAL video.
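The field count can be verified with a short sketch. The helper name `euro_pulldown_fields` is hypothetical, and placing the extra field on every 12th frame is one possible choice of phase:

```python
def euro_pulldown_fields(frames):
    """Give every frame two fields and every 12th frame a third (pulldown) field."""
    fields = []
    for index, frame in enumerate(frames, start=1):
        fields.extend([frame] * (3 if index % 12 == 0 else 2))
    return fields

one_second = euro_pulldown_fields(range(24))  # 24 film frames become 50 PAL fields
```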

This method was born out of a frustration with the faster, higher-pitched soundtracks that traditionally accompanied films transferred for PAL and SECAM audiences. A few motion pictures are beginning to be telecined this way. It is particularly suited for films where the soundtrack is of special importance.

Other pulldown patterns

Similar techniques must be used for films shot at silent speeds of less than 24 frames/s, which includes home movie formats (the standard for Standard 8 mm film was 16 fps, and 18 fps for Super 8 mm film) as well as silent film (which in 35 mm format usually was 16 fps, 12 fps, or even lower).

- 16 frames/s (actually 15.984) to NTSC 30 frames/s (actually 29.97): pulldown should be 3:4:4:4 or the film may be run at 15 (actually 14.985) frames/s then pulldown should be 4:4. As motion pictures shot at this framerate are silent, there is no audio that is affected.

- 16 frames/s to PAL 25: pulldown should be 3:3:3:3:3:3:3:4 (if the film playback rate is increased to 16+2⁄3 frames/s [1,000 frames per minute], pulldown is simplified to 3:3)

- 18 frames/s (slowed to 17.982) to NTSC 30: pulldown should be 3:3:4

- 20 frames/s (slowed to 19.98) to NTSC 30: pulldown should be 3:3

- 20 frames/s to PAL 25: pulldown should be 3:2

- 27.5 frames/s to NTSC 30: pulldown should be 3:2:2:2:2

- 27.5 frames/s to PAL 25: pulldown should be 1:2:2:2:2
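A cadence of this kind can be derived from the ratio of the video field rate to the film frame rate. The following is a sketch of one way to do so, not a description of how any particular telecine works. The function name `cadence` is hypothetical, and nominal rates are used (60 fields/s for NTSC, 50 for PAL) because the 1000⁄1001 slowdown scales both sides equally:

```python
from fractions import Fraction
from math import floor

def cadence(film_fps, field_rate):
    """Fields per film frame over one repeating cycle, spread as evenly as possible."""
    ratio = Fraction(field_rate) / Fraction(film_fps)  # average fields per frame
    cycle_length = ratio.denominator                   # frames until the pattern repeats
    boundaries = [floor(i * ratio) for i in range(cycle_length + 1)]
    return [b - a for a, b in zip(boundaries, boundaries[1:])]

ntsc_16 = cadence(16, 60)  # [3, 4, 4, 4]
pal_16 = cadence(16, 50)   # [3, 3, 3, 3, 3, 3, 3, 4]
ntsc_18 = cadence(18, 60)  # [3, 3, 4]
```

The sketch reproduces the 16, 18 and 20 frames/s entries in the list above, up to a rotation of the cycle: `cadence(20, 50)` returns `[2, 3]`, which is the same repeating pattern as 3:2.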

Also, other patterns have been described that refer to the progressive frame rate conversion required to display 24 frame/s video (e.g., from a DVD player) on a progressive display (e.g., LCD or plasma):[11]

- 24 frames/s to 96 frames/s (4× frame repetition): pulldown is 4:4

- 24 frames/s to 120 frames/s (5× frame repetition): pulldown is 5:5

- 24 frames/s to 120 frames/s (3:2 pulldown followed by 2× deinterlacing): pulldown is 6:4
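The three progressive cases reduce to simple arithmetic, sketched here with hypothetical helper names. The second function assumes that "2× deinterlacing" turns every interlaced field into one progressive frame, so each count in the field cadence doubles:

```python
def frame_repetition(source_fps, display_hz):
    """Whole-number repetition factor, or None when the rates do not divide evenly."""
    factor, remainder = divmod(display_hz, source_fps)
    return factor if remainder == 0 else None

def doubled_cadence(field_cadence, factor=2):
    """Display-frame cadence after each field of a pulldown cadence is shown `factor` times."""
    return [count * factor for count in field_cadence]

at_96 = frame_repetition(24, 96)   # 4, i.e. 4:4
at_120 = frame_repetition(24, 120) # 5, i.e. 5:5
via_60i = doubled_cadence((3, 2))  # [6, 4], i.e. 6:4
```

A 60 Hz display gives `None` for 24 frames/s material, which is why an uneven cadence such as 3:2 is needed there.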

Mainframe Entertainment used a novel process for its TV shows. They are rendered at exactly 25.000 frames per second; then, for PAL/SECAM distribution, ordinary 2:2 pulldown is applied, but for NTSC distribution, 199 fields out of every 1001 are repeated. This brings the refresh rate from 25 frames/s to exactly 60,000⁄1001, or ≈59.94, fields per second, with no change whatsoever in speed, duration, or audio pitch.
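The field-rate claim can be checked exactly. In this sketch the function name is illustrative, and the 25 frames/s source is treated as 50 fields/s:

```python
from fractions import Fraction

def field_rate_after_repeats(field_rate, repeated, out_of):
    """Output field rate when `repeated` of every `out_of` source fields are shown twice."""
    return Fraction(field_rate) * Fraction(out_of + repeated, out_of)

ntsc_rate = field_rate_after_repeats(50, 199, 1001)  # exactly 60000/1001 fields/s
```

Because fields are only repeated and never retimed, the running time and audio pitch are unchanged.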

Telecine judder

Compared with the original film frames, the 2:3 pulldown telecine process creates a slight error in the video signal, which can be seen in the 2:3 pulldown diagram above. This is one reason why films viewed on typical NTSC home equipment may not appear as smooth as when viewed in a cinema or on PAL home equipment. The effect is particularly apparent in scenes that feature slow, steady camera movements. These appear slightly jerky when viewed in material that has been through the telecine process. The phenomenon is commonly referred to as telecine judder. Reversing the 2:3 pulldown telecine is discussed below.

PAL material to which Euro (2:2:2:2:2:2:2:2:2:2:2:3) pulldown has been applied suffers from a similar lack of smoothness, though this effect is not usually called telecine judder. Effectively, every 12th film frame is displayed for the duration of three PAL fields (60 milliseconds), whereas the other 11 frames are each displayed for the duration of two PAL fields (40 milliseconds). This causes a slight hiccup in the video about twice a second.

Reverse telecine

Some DVD players, line doublers, and personal video recorders are designed to detect and remove 2:3 pulldown from telecined video sources, thereby reconstructing the original 24 frame/s film frames. Many video editing programs such as AviSynth also have this ability. This technique is known as reverse telecine, inverse telecine, reverse pulldown or detelecine. Benefits of reverse telecine include high-quality non-interlaced display on compatible display devices and the elimination of redundant data.

Reverse telecine is crucial when acquiring film material into a digital non-linear editing system since these machines produce edit decision lists which refer to specific frames in the original film material. When video from a telecine is ingested into these systems, the operator usually has available a telecine trace, in the form of a text file, which gives the correspondence between the video material and film original. Alternatively, the video transfer may include telecine sequence markers burned in to the video image along with other identifying information such as time code.

It is also possible, but more difficult, to perform reverse telecine without prior knowledge of where each field of video lies in the 2:3 pulldown pattern. This is the task faced by most consumer equipment such as line doublers and personal video recorders. Ideally, only a single field needs to be identified, the rest following the pattern in lock-step. However, the 2:3 pulldown pattern does not necessarily remain consistent throughout an entire program. Edits performed on film material after it undergoes 2:3 pulldown, e.g. in NTSC format, can introduce jumps in the pattern if care is not taken to preserve the original frame sequence. Most reverse telecine algorithms attempt to follow the 2:3 pattern using image analysis techniques, e.g. by searching for repeated fields.
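Searching for repeated fields can be illustrated with a deliberately simplified sketch. It assumes that a repeated field is exactly equal to the field of the same parity two positions earlier; real implementations compare images against a noise threshold and must cope with static scenes, where every field looks repeated. The function name is hypothetical:

```python
def inverse_telecine(fields):
    """Drop fields that repeat the same-parity field two positions earlier, then pair the rest."""
    kept = []
    for i, field in enumerate(fields):
        if i >= 2 and field == fields[i - 2]:
            continue  # third field of a 3-field film frame: redundant
        kept.append(field)
    return [(kept[i], kept[i + 1]) for i in range(0, len(kept) - 1, 2)]

film = inverse_telecine(list("AABBBCCDDD"))  # AA, BB, CC, DD
```

Because the check is made per field rather than by assuming a fixed phase, the sketch tolerates a pattern that starts at a different point in the cycle, although it does nothing to repair broken cadences introduced by later edits.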

Algorithms that perform 2:3 pulldown removal also usually perform the task of deinterlacing. It is possible to algorithmically determine whether video contains a 2:3 pulldown pattern or not, and selectively do either reverse telecine (in the case of film-sourced video) or simpler deinterlacing (in the case of native video sources).

Telecine hardware

Flying spot scanner

In the United Kingdom, Rank Precision Industries was experimenting with the flying-spot scanner (FSS), which inverted the cathode-ray tube (CRT) concept of scanning using a television screen. Rank Precision-Cintel introduced the Mark series of FSS telecines. In 1950 the first Rank flying spot monochrome telecine was installed at the BBC's Lime Grove Studios.[12] The CRT in the FSS emits a pixel-sized electron beam which excites phosphors coating the envelope, causing them to glow in red, green, and blue. This dot of light is then focused by a lens onto the film's emulsion and finally collected by a special type of photo-electric cell known as a photomultiplier which converts the light into an electrical signal. This can be accomplished in real time, 24 frames per second (or in some cases faster). An advantage of the FSS is that color analysis is done after scanning, so there can be no registration errors as can be produced by vidicon tubes where scanning is done after color separation—it also allows simpler dichroics to be used.

The problem with flying-spot scanners was the difference in frequencies between television field rates and film frame rates. This was solved first by the Mark I Polygonal Prism system, which was optically synchronized to the television frame rate by the rotating prism and could be run at any frame rate. This was replaced by the Mark II Twin Lens, and then around 1975, by the Mark III Hopping Patch (jump scan). The Mark III series progressed from the original jump scan interlace scan to the Mark IIIB which used a progressive scan and included a digital scan converter (Digiscan) to output interlaced video. The Mark IIIC was the most popular of the series and used a next-generation Digiscan plus other improvements.

The Mark series was then replaced by the Ursa (1989), the first in Cintel's line of telecines capable of producing digital data in 4:2:2 color space. The Ursa Gold (1993) stepped this up to 4:4:4 and was followed by the Ursa Diamond (1997), which incorporated many third-party improvements on the Ursa system.[13]

Line array CCD

The Fernseh division of Robert Bosch GmbH[a] introduced the world's first charge-coupled device (CCD) telecine, the FDL 60, in 1979. The FDL 60, designed and made in Darmstadt, West Germany, was the first all-solid-state telecine. Rank Cintel (ADS telecine, 1982) and Marconi Company (1985) both made CCD telecines for a short time. The Marconi model B3410 telecine sold 84 units over a three-year period.[14]

In a line array CCD telecine, a white light is shone through the exposed film image into a prism, which separates out the image into the three primary colors, red, green and blue. Each beam of colored light is then projected at a different CCD, one for each color. The CCD converts the light into electrical impulses which the telecine electronics modulate into a video signal which can then be recorded onto video tape or broadcast.

Philips-BTS eventually evolved the FDL 60 into the FDL 90 (1989) and Quadra (1993). In 1996 Philips, working with Kodak, introduced the Spirit DataCine (SDC 2000), which was capable of scanning the film image at HDTV resolutions and approaching 2K (1920 Luminance and 960 Chrominance RGB) × 1556 RGB. With the data option, the Spirit DataCine can be used as a motion picture film scanner outputting 2K DPX data files as 2048 × 1556 RGB. In 2000 Philips introduced the Shadow Telecine (STE), a low-cost version of the Spirit with no Kodak parts. The Spirit DataCine, Cintel's C-Reality and ITK's Millennium opened the door to the technology of digital intermediates, wherein telecine tools were not just used for video outputs, but could now be used for high-resolution data that would later be recorded back out to film.[13] The DFT Digital Film Technology Spirit 4K/2K/HD (2004) replaced the Spirit 1 Datacine and uses both 2K and 4K line array CCDs.[b] DFT revealed its new scanner, Scanity, at the 2009 NAB Show.[15] The Scanity uses time delay integration (TDI) sensor technology for extremely fast and sensitive film scans.[c]

Pulsed LED/triggered three CCD camera system

With the manufacturing of new high-power LEDs came pulsed LED/triggered three-CCD camera systems. Flashing the LED light source for a very short time gives the full-frame CCD camera a stop action of the film, allowing continuous film motion. With CCD video cameras that have a trigger input, the camera can be electronically synced to the film transport framing.

An array of multiple high-power red, green and blue LEDs is pulsed just as the film frame is positioned in front of the optical lens. The camera sends the single, non-interlaced image of the film frame to a digital frame store, where the electronic picture is clocked out at the selected TV frame rate for PAL or NTSC or other standards. More advanced systems replace the sprocket wheel with laser or camera-based perforation detection and an image stabilization system.

Digital intermediate systems and virtual telecines

Telecine technology is increasingly merging with that of motion picture film scanners; high-resolution telecines, such as those mentioned above, can be regarded as film scanners that operate in real time.

As digital intermediate post-production becomes more common, the need to combine the traditional telecine functions of input devices, standards converters, and color grading systems is becoming less important as the post-production chain changes to tapeless and filmless operation.

However, the parts of the workflow associated with telecines still remain and are being pushed to the end, rather than the beginning, of the post-production chain, in the form of real-time digital grading systems and digital intermediate mastering systems, increasingly running in software on commodity computer systems. These are sometimes called virtual telecine systems.

Video cameras

Some video cameras and consumer camcorders are able to record in progressive 24 (or 23.976) frames/s. Such a video has cinema-like motion characteristics and is the major component of the so-called film look.

For most 24 frame/s cameras, a virtual 2:3 pulldown process happens inside the camera. Although the camera captures a progressive frame at the CCD, just like a film camera, it then imposes interlacing on the image to record it to tape so that it can be played back on any standard television. Not every camera handles 24 frame/s this way, but the majority of them do.[16]

Cameras that record 25 frames/s (PAL) or 29.97 frames/s (NTSC) do not need to employ 2:3 pulldown, because every progressive frame occupies exactly two video fields. In the video industry, this type of encoding is called progressive segmented frame (PsF). PsF is conceptually identical to 2:2 pulldown, only there is no film original to transfer from.
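Splitting one progressive frame into two fields, and weaving them back together, loses nothing. A minimal sketch, in which a frame is just a list of scan lines and the function names are hypothetical:

```python
def split_fields(frame_rows):
    """Split a progressive frame (a list of scan lines) into two fields."""
    first = frame_rows[0::2]   # lines 1, 3, 5, ... counting from 1
    second = frame_rows[1::2]  # lines 2, 4, 6, ...
    return first, second

def weave_fields(first, second):
    """Interleave two fields back into a full frame."""
    rows = []
    for a, b in zip(first, second):
        rows.extend([a, b])
    rows.extend(first[len(second):])  # an odd line count leaves one extra line in the first field
    return rows

frame = ["line1", "line2", "line3", "line4"]
restored = weave_fields(*split_fields(frame))  # identical to the original frame
```

Since both fields come from the same instant in time, a receiver that weaves them recovers the original progressive frame without deinterlacing artifacts.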

Digital television and high definition

Digital television and high-definition standards provide several methods for encoding film material. Fifty field/s formats such as 576i50 and 1080i50 can accommodate film content using a 4% speed-up like PAL. 59.94 field/s interlaced formats such as 480i60 and 1080i60 use the same 2:3 pulldown technique as NTSC. In 59.94 frame/s progressive formats such as 480p60 and 720p60, entire frames (rather than fields) are repeated in a 2:3 pattern, accomplishing the frame rate conversion without interlacing and its associated artifacts. Other formats such as 1080p24 can carry film material at its native rate of 24 or 23.976 frames/s.

All of these coding methods are in use to some extent. In PAL countries, 25 frame/s formats remain the norm. In NTSC countries, most digital broadcasts of 24 frame/s progressive material, both standard and high definition, continue to use interlaced formats with 2:3 pulldown, even though ATSC allows native 24 and 23.976 frame/s progressive formats which offer the greatest image quality and coding efficiency, and are widely used in motion picture and high definition video production.

Gate weave

Gate weave, known in this context as telecine weave or telecine wobble, is caused by the movement of the film in the telecine machine gate. It is a characteristic artifact of real-time telecine scanning. Numerous techniques have been devised to minimize gate weave, using both improvements in mechanical film handling and electronic post-processing. Line-scan telecines are less vulnerable to frame-to-frame alignment issues than machines with mechanical gates, and non-real-time machines are also less vulnerable to gate weave than real-time machines. Some gate weave is inherent in film cinematography, as it was introduced by the film handling within the original film camera. Modern digital image stabilization techniques can remove both this and telecine gate weave.

Soft and hard telecine

On DVDs, telecined material may be either hard telecined, or soft telecined. In the hard-telecined case, video is stored on the DVD at the playback framerate (29.97 frames/s for NTSC, 25 frames/s for PAL), using the telecined frames as shown above. In the soft-telecined case, the material is stored on the DVD at the film rate (24 or 23.976 frames/s) in the original progressive format, with special flags inserted into the MPEG-2 video stream that instruct the DVD player to repeat certain fields so as to accomplish the required pulldown during playback.[17] Progressive scan DVD players additionally offer output at 480p by using these flags to duplicate frames rather than fields, or if the TV supports it, to play the disc back at the native 24p rate.
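How a player might expand such flags into fields can be sketched as follows. The text above does not name the flags; this example assumes they are the per-picture `top_field_first` and `repeat_first_field` flags of MPEG-2 video, and the function name and the four-frame flag table are illustrative:

```python
def expand_flags(frames):
    """frames: iterable of (label, top_field_first, repeat_first_field) tuples."""
    fields = []
    for label, top_field_first, repeat_first_field in frames:
        order = ["top", "bottom"] if top_field_first else ["bottom", "top"]
        if repeat_first_field:
            order.append(order[0])  # show the first field once more
        fields.extend((label, parity) for parity in order)
    return fields

# One 2:3 cycle: stored progressively, expanded to ten fields at playback.
SOFT_2_3 = [
    ("A", True, False),
    ("B", True, True),
    ("C", False, False),
    ("D", False, True),
]
fields = expand_flags(SOFT_2_3)
```

Alternating the field order after each repeated field keeps top and bottom fields strictly alternating in the output, while the disc itself stores only four progressive frames per cycle.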

NTSC DVDs are often soft telecined, although lower-quality hard-telecined DVDs exist. In the case of PAL DVDs using 2:2 pulldown, the difference between soft and hard telecine vanishes, and the two may be regarded as equal. In the case of PAL DVDs using 2:3 pulldown, either soft or hard telecining may be applied.

Blu-ray offers native 24 frame/s support, allowing 5:5 cadence on most modern televisions.

Image gallery

- Bosch Fernseh FDL 60 telecine film deck and lens gate

- Quadra telecine film deck

- Rank Cintel Mark 3 telecine

- Cintel URSA Diamond telecine

- Cintel C-Reality telecine film deck

- Innovation TK Ltd Millennium telecine machine

- SDC-2000 Spirit DataCine functional control panel (FCP)

- Spirit Datacine 4k with the doors closed

- Spirit Datacine 4k with the doors open

- GCP control panel for a Spirit Datacine

- A telecine room

See also

- Color motion picture film

- Da Vinci Systems for color grading and video editing systems

- Display motion blur, factors causing motion blur on displays

- Display resolution

- Faroudja, inventors of reverse telecine technologies

- Film recorder

- Film preservation

- Gamma correction

- Hard disk recorder

- Image scanner

- Keykode

- Pandora International

- Sound follower

- Test film

Notes

References

1. NAB Engineering Handbook. Focal Press. 2007. pp. 1421-ff. ISBN 978-0-240-80751-5.

2. Ellis, John; Hall, Nick (April 11, 2018). "ADAPT". Figshare. doi:10.17637/rh.c.3925603.v2.

3. Pincus, Edward; Ascher, Steven (1984). The Filmmaker's Handbook. Plume. pp. 368–369. ISBN 0-452-25526-0.

4. "Home Theater and High Fidelity, Progressive Scan DVDs and deinterlacing".

5. Poynton, Charles (2003). Digital Video and HDTV: Algorithms and Interfaces. Morgan Kaufmann. p. 430. ISBN 9781558607927.

6. lorihollasch (June 3, 2021). "D3D11_1DDI_VIDEO_PROCESSOR_ITELECINE_CAPS (d3d10umddi.h) - Windows drivers". learn.microsoft.com. Retrieved June 16, 2023.

7. "2:2:2:2:2:2:2:2:2:2:2:3 Pulldown - AfterDawn: Glossary of technology terms & acronyms". www.afterdawn.com. Retrieved June 16, 2023.

8. Poynton, Charles (February 27, 2012). Digital Video and HD: Algorithms and Interfaces. Elsevier. p. 586. ISBN 978-0-12-391932-8.

9. "7.1. Making a high quality MPEG-4 ("DivX") rip of a DVD movie". mplayerhq.hu.

10. "The DVD-Video Bible, Written by @rlaphoenix". Gist. Retrieved June 16, 2023.

11. "1080/24 at 48Hz, 96Hz, or 120Hz". highdefdigest.com. Archived from the original on November 17, 2015.

12. "Some key dates in Cintel's history". Archived from the original on December 9, 2007. Retrieved July 15, 2019.

13. Holben, Jay (May 1999). "From Film to Tape". American Cinematographer Magazine, pp. 108–122.

14. "Digital Library". smpte.org.

15. DFT Scanity. Archived June 18, 2009, at the Wayback Machine.

16. "Jay Holben, More Detail on 24p". October 23, 2007. Archived from the original on October 25, 2007.

17. "Coming Soon To DVD – Find Out DVD Release Dates!". ComingSoon.net. October 6, 2015. Archived from the original on January 5, 2009. Retrieved December 21, 2008.