LMS color space

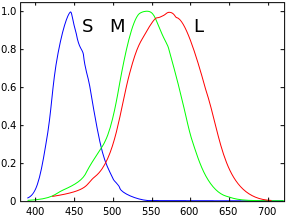

LMS (long, medium, short) is a color space that represents the response of the three types of cones of the human eye, named for their responsivity (sensitivity) peaks at long, medium, and short wavelengths.

The numerical range is generally not specified, except that the lower end is generally bounded by zero. It is common to use the LMS color space when performing chromatic adaptation (estimating the appearance of a sample under a different illuminant). It is also useful in the study of color blindness, when one or more cone types are defective.

Definition

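The cone response functions are the color matching functions (CMFs) for the LMS color space. The chromaticity coordinates (L, M, S) for a spectral distribution $J(\lambda)$ are defined as:

$$L = \int_0^\infty J(\lambda)\,\bar{l}(\lambda)\,d\lambda, \qquad M = \int_0^\infty J(\lambda)\,\bar{m}(\lambda)\,d\lambda, \qquad S = \int_0^\infty J(\lambda)\,\bar{s}(\lambda)\,d\lambda$$

where $\bar{l}(\lambda)$, $\bar{m}(\lambda)$ and $\bar{s}(\lambda)$ are the cone response functions.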

The cone response functions are normalized to have their maxima equal to unity.
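The integral definition can be approximated numerically by sampling the spectrum. The following is a minimal sketch, assuming toy Gaussian curves in place of real cone response functions; the names `gaussian`, `l_bar`, `m_bar`, `s_bar` and `lms_from_spectrum`, and the peak and width values, are illustrative assumptions rather than measured data.

```python
import math

def gaussian(peak_nm, width_nm):
    """Return a toy sensitivity curve with maximum 1 at peak_nm (NOT real cone data)."""
    return lambda lam: math.exp(-0.5 * ((lam - peak_nm) / width_nm) ** 2)

# Hypothetical stand-ins for the cone response functions; real work would
# load tabulated cone fundamentals instead.
l_bar = gaussian(566.0, 50.0)
m_bar = gaussian(541.0, 45.0)
s_bar = gaussian(441.0, 30.0)

def lms_from_spectrum(J, start_nm=390.0, stop_nm=830.0, step_nm=1.0):
    """Approximate L, M, S = integral of J(lambda) * cone(lambda) with a Riemann sum."""
    L = M = S = 0.0
    n = int(round((stop_nm - start_nm) / step_nm)) + 1
    for k in range(n):
        lam = start_nm + k * step_nm
        j = J(lam)
        L += j * l_bar(lam) * step_nm
        M += j * m_bar(lam) * step_nm
        S += j * s_bar(lam) * step_nm
    return L, M, S

# A flat (equal-energy) spectrum as a simple test input.
L, M, S = lms_from_spectrum(lambda lam: 1.0)
```

Because the integral is linear in $J(\lambda)$, doubling the spectrum doubles all three values, which is the property that later matrix-based transforms rely on.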

XYZ to LMS

Typically, colors to be adapted chromatically will be specified in a color space other than LMS (e.g. sRGB). The chromatic adaptation matrix in the diagonal von Kries transform method, however, operates on tristimulus values in the LMS color space. Since colors in most color spaces can be transformed to the XYZ color space, only one additional transformation matrix is required for any color space to be adapted chromatically: to transform colors from the XYZ color space to the LMS color space.[3]

In addition, many color adaptation methods, or color appearance models (CAMs), run a von Kries-style diagonal matrix transform in a slightly modified, LMS-like space instead. They may refer to it simply as LMS, as RGB, or as ργβ. The following text uses the "RGB" naming, but note that the resulting space has nothing to do with the additive color model called RGB.[3]

The chromatic adaptation transform (CAT) matrices for some CAMs in terms of CIEXYZ coordinates are presented here. The matrices, in conjunction with the XYZ data defined for the standard observer, implicitly define a "cone" response for each cell type.

Notes:

- All tristimulus values are normally calculated using the CIE 1931 2° standard colorimetric observer.[3]

- Unless specified otherwise, the CAT matrices are normalized (the elements in a row add up to 1) so the tristimulus values for an equal-energy illuminant (X=Y=Z), like CIE Illuminant E, produce equal LMS values.[3]
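The normalization note can be checked directly: if each row of a CAT matrix sums to 1, an equal-energy stimulus (X=Y=Z) maps to equal LMS values. The sketch below uses the commonly published Bradford matrix values as an example; the helper name `mat_vec` is an illustrative assumption.

```python
# Bradford matrix (rows give L, M, S as weighted sums of X, Y, Z).
M_BFD = [
    [0.8951, 0.2664, -0.1614],
    [-0.7502, 1.7135, 0.0367],
    [0.0389, -0.0685, 1.0296],
]

def mat_vec(m, v):
    """Multiply a 3x3 matrix (list of rows) by a length-3 vector."""
    return [sum(row[i] * v[i] for i in range(3)) for row in m]

# Each row should add up to (approximately) 1 ...
row_sums = [sum(row) for row in M_BFD]

# ... so an equal-energy stimulus X = Y = Z = 1 gives L = M = S = 1.
lms_equal_energy = mat_vec(M_BFD, [1.0, 1.0, 1.0])
```

The small deviation from exactly 1 in the first row comes from the four-decimal rounding of the published coefficients.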

Hunt, RLAB

The Hunt and RLAB color appearance models use the Hunt–Pointer–Estevez transformation matrix (MHPE) for conversion from CIE XYZ to LMS.[4][5][6] This is the transformation matrix which was originally used in conjunction with the von Kries transform method, and is therefore also called von Kries transformation matrix (MvonKries).

Bradford's spectrally sharpened matrix (LLAB, CIECAM97s)

The original CIECAM97s color appearance model uses the Bradford transformation matrix (MBFD) (as does the LLAB color appearance model).[3] This is a "spectrally sharpened" transformation matrix (i.e. the L and M cone response curves are narrower and more distinct from each other). The Bradford transformation matrix was supposed to work in conjunction with a modified von Kries transform method which introduced a small non-linearity in the S (blue) channel. However, outside of CIECAM97s and LLAB this is often neglected and the Bradford transformation matrix is used in conjunction with the linear von Kries transform method, explicitly so in ICC profiles.[8]
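The linear variant (Bradford matrix plus plain von Kries scaling) can be sketched as follows. This is an illustration under stated assumptions, not the ICC reference procedure: the white point values for D65 and D50 are commonly quoted 2° observer figures assumed here, and the helper names `mat_vec`, `inv3` and `bradford_adapt` are hypothetical.

```python
M_BFD = [
    [0.8951, 0.2664, -0.1614],
    [-0.7502, 1.7135, 0.0367],
    [0.0389, -0.0685, 1.0296],
]

# Assumed white points (XYZ, Y normalized to 1).
D65 = [0.95047, 1.0, 1.08883]
D50 = [0.96422, 1.0, 0.82521]

def mat_vec(m, v):
    """Multiply a 3x3 matrix (list of rows) by a length-3 vector."""
    return [sum(row[i] * v[i] for i in range(3)) for row in m]

def inv3(m):
    """Invert a 3x3 matrix using the adjugate formula."""
    a, b, c = m[0]
    d, e, f = m[1]
    g, h, i = m[2]
    det = a * (e * i - f * h) - b * (d * i - f * g) + c * (d * h - e * g)
    return [
        [(e * i - f * h) / det, (c * h - b * i) / det, (b * f - c * e) / det],
        [(f * g - d * i) / det, (a * i - c * g) / det, (c * d - a * f) / det],
        [(d * h - e * g) / det, (b * g - a * h) / det, (a * e - b * d) / det],
    ]

def bradford_adapt(xyz, white_src, white_dst):
    """Linear von Kries adaptation in the Bradford-sharpened space."""
    lms = mat_vec(M_BFD, xyz)
    lms_ws = mat_vec(M_BFD, white_src)
    lms_wd = mat_vec(M_BFD, white_dst)
    # Diagonal scaling: each channel is scaled by the ratio of white responses.
    scaled = [lms[k] * lms_wd[k] / lms_ws[k] for k in range(3)]
    return mat_vec(inv3(M_BFD), scaled)

adapted_white = bradford_adapt(D65, D65, D50)
```

By construction the source white maps onto the destination white, and adapting between identical white points leaves a color unchanged.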

A "spectrally sharpened" matrix is believed to improve chromatic adaptation especially for blue colors, but does not work as a real cone-describing LMS space for later human vision processing. Although the outputs are called "LMS" in the original LLAB incarnation, CIECAM97s uses a different "RGB" name to highlight that this space does not really reflect cone cells; hence the different names here.

LLAB proceeds by taking the post-adaptation XYZ values and performing a CIELAB-like treatment to get the visual correlates. On the other hand, CIECAM97s takes the post-adaptation XYZ value back into the Hunt LMS space, and works from there to model the vision system's calculation of color properties.

Later CIECAMs

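A revised version of CIECAM97s switches back to a linear transform method and introduces a corresponding transformation matrix (MCAT97s):[9]

$$M_{CAT97s} = \begin{bmatrix} 0.8562 & 0.3372 & -0.1934 \\ -0.8360 & 1.8327 & 0.0033 \\ 0.0357 & -0.0469 & 1.0112 \end{bmatrix}$$

The sharpened transformation matrix in CIECAM02 (MCAT02) is:[10][3]

$$M_{CAT02} = \begin{bmatrix} 0.7328 & 0.4296 & -0.1624 \\ -0.7036 & 1.6975 & 0.0061 \\ 0.0030 & 0.0136 & 0.9834 \end{bmatrix}$$

CAM16 uses a different matrix:[11]

$$M_{16} = \begin{bmatrix} 0.401288 & 0.650173 & -0.051461 \\ -0.250268 & 1.204414 & 0.045854 \\ -0.002079 & 0.048952 & 0.953127 \end{bmatrix}$$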

As in CIECAM97s, after adaptation, the colors are converted to the traditional Hunt–Pointer–Estévez LMS for final prediction of visual results.
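The hand-off from the sharpened adaptation space to Hunt–Pointer–Estévez LMS can be written as a single combined matrix. The sketch below assumes the commonly published CAT02 coefficients and the equal-energy-normalized HPE coefficients; the names `mat_mul`, `inv3` and `SHARP_TO_HPE` are illustrative, and the adaptation step itself is omitted.

```python
# Assumed coefficients: CAT02 (sharpened) and HPE (equal-energy normalized).
M_CAT02 = [
    [0.7328, 0.4296, -0.1624],
    [-0.7036, 1.6975, 0.0061],
    [0.0030, 0.0136, 0.9834],
]
M_HPE = [
    [0.38971, 0.68898, -0.07868],
    [-0.22981, 1.18340, 0.04641],
    [0.0, 0.0, 1.0],
]

def mat_vec(m, v):
    """Multiply a 3x3 matrix (list of rows) by a length-3 vector."""
    return [sum(row[i] * v[i] for i in range(3)) for row in m]

def mat_mul(a, b):
    """Multiply two 3x3 matrices."""
    return [[sum(a[i][k] * b[k][j] for k in range(3)) for j in range(3)]
            for i in range(3)]

def inv3(m):
    """Invert a 3x3 matrix using the adjugate formula."""
    a, b, c = m[0]
    d, e, f = m[1]
    g, h, i = m[2]
    det = a * (e * i - f * h) - b * (d * i - f * g) + c * (d * h - e * g)
    return [
        [(e * i - f * h) / det, (c * h - b * i) / det, (b * f - c * e) / det],
        [(f * g - d * i) / det, (a * i - c * g) / det, (c * d - a * f) / det],
        [(d * h - e * g) / det, (b * g - a * h) / det, (a * e - b * d) / det],
    ]

# Sharpened "RGB" -> XYZ -> HPE LMS, folded into one 3x3 matrix.
SHARP_TO_HPE = mat_mul(M_HPE, inv3(M_CAT02))

xyz = [0.4, 0.5, 0.3]                      # arbitrary example stimulus
rgb_sharp = mat_vec(M_CAT02, xyz)          # what the adaptation step works on
lms_via_sharp = mat_vec(SHARP_TO_HPE, rgb_sharp)
lms_direct = mat_vec(M_HPE, xyz)
```

With no adaptation applied in between, the two routes to HPE LMS agree, which confirms that the combined matrix is only a change of basis.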

Stockman & Sharpe (2000) physiological CMFs

From a physiological point of view, the LMS color space describes a more fundamental level of human visual response, so it makes more sense to define the physiopsychological XYZ by LMS, rather than the other way around.

A set of physiologically-based LMS functions was proposed by Stockman & Sharpe in 2000. The functions were published in a technical report by the CIE in 2006 (CIE 170).[12][13] The functions are derived from Stiles and Burch[1] RGB CMF data, combined with newer measurements about the contribution of each cone in the RGB functions. To adjust from the 10° data to 2°, assumptions about photopigment density difference and data about the absorption of light by pigment in the lens and the macula lutea are used.[14]

The Stockman & Sharpe functions can then be turned into a set of three color-matching functions similar to the CIE 1931 functions.[15]

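Let $\bar{l}(\lambda), \bar{m}(\lambda), \bar{s}(\lambda)$ be the three cone response functions, and let $\bar{x}_F(\lambda), \bar{y}_F(\lambda), \bar{z}_F(\lambda)$ be the new XYZ color matching functions. Then, by definition, the new XYZ color matching functions are:

$$\begin{bmatrix} \bar{x}_F(\lambda) \\ \bar{y}_F(\lambda) \\ \bar{z}_F(\lambda) \end{bmatrix} = T \begin{bmatrix} \bar{l}(\lambda) \\ \bar{m}(\lambda) \\ \bar{s}(\lambda) \end{bmatrix}$$

where the transformation matrix $T$ is defined as:

$$T = \begin{bmatrix} 1.94735469 & -1.41445123 & 0.36476327 \\ 0.68990272 & 0.34832189 & 0 \\ 0 & 0 & 1.93485343 \end{bmatrix}$$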

The derivation of this transformation is relatively straightforward.[16] The $\bar{y}_F(\lambda)$ CMF is the luminous efficiency function originally proposed by Sharpe et al. (2005),[17] but then corrected (Sharpe et al., 2011[18][a]). The $\bar{z}_F(\lambda)$ CMF is equal to the $\bar{s}(\lambda)$ cone fundamental originally proposed by Stockman, Sharpe & Fach (1999)[19] scaled to have an integral equal to that of the $\bar{y}_F(\lambda)$ CMF. The definition of the $\bar{x}_F(\lambda)$ CMF is derived from the following constraints:

- Like the other CMFs, the values of $\bar{x}_F(\lambda)$ are all positive.

- The integral of $\bar{x}_F(\lambda)$ is identical to the integrals for $\bar{y}_F(\lambda)$ and $\bar{z}_F(\lambda)$.

- The coefficients of the transformation that yields $\bar{x}_F(\lambda)$ are optimized to minimize the Euclidean differences between the resulting $\bar{x}_F(\lambda)$, $\bar{y}_F(\lambda)$ and $\bar{z}_F(\lambda)$ color matching functions and the CIE 1931 $\bar{x}(\lambda)$, $\bar{y}(\lambda)$ and $\bar{z}(\lambda)$ color matching functions.

— CVRL description for 'CIE (2012) 2-deg XYZ "physiologically-relevant" colour matching functions'[15]

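For any spectral distribution $J(\lambda)$, let $(L, M, S)$ be the LMS chromaticity coordinates for $J(\lambda)$, and let $(X_F, Y_F, Z_F)$ be the corresponding new XYZ chromaticity coordinates. Then, with $T$ the transformation matrix defined above:

$$\begin{bmatrix} X_F \\ Y_F \\ Z_F \end{bmatrix} = T \begin{bmatrix} L \\ M \\ S \end{bmatrix}$$

or, explicitly:

$$\begin{bmatrix} X_F \\ Y_F \\ Z_F \end{bmatrix} = \begin{bmatrix} 1.94735469 & -1.41445123 & 0.36476327 \\ 0.68990272 & 0.34832189 & 0 \\ 0 & 0 & 1.93485343 \end{bmatrix} \begin{bmatrix} L \\ M \\ S \end{bmatrix}$$

The inverse matrix is shown here for comparison with the ones for traditional XYZ:

$$\begin{bmatrix} L \\ M \\ S \end{bmatrix} = \begin{bmatrix} 0.210576 & 0.855098 & -0.039698 \\ -0.417076 & 1.177261 & 0.078628 \\ 0 & 0 & 0.516835 \end{bmatrix} \begin{bmatrix} X_F \\ Y_F \\ Z_F \end{bmatrix}$$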
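The relationship between the forward and inverse matrices can be verified numerically. The sketch below assumes the CIE (2012) LMS-to-XYZ coefficients as published by CVRL (1.94735469, −1.41445123, 0.36476327; 0.68990272, 0.34832189, 0; 0, 0, 1.93485343); the names `T_LMS_TO_XYZF`, `inv3` and `mat_vec` are illustrative.

```python
# Assumed CIE (2012) 2-degree matrix taking LMS to the new XYZ coordinates.
T_LMS_TO_XYZF = [
    [1.94735469, -1.41445123, 0.36476327],
    [0.68990272, 0.34832189, 0.0],
    [0.0, 0.0, 1.93485343],
]

def mat_vec(m, v):
    """Multiply a 3x3 matrix (list of rows) by a length-3 vector."""
    return [sum(row[i] * v[i] for i in range(3)) for row in m]

def inv3(m):
    """Invert a 3x3 matrix using the adjugate formula."""
    a, b, c = m[0]
    d, e, f = m[1]
    g, h, i = m[2]
    det = a * (e * i - f * h) - b * (d * i - f * g) + c * (d * h - e * g)
    return [
        [(e * i - f * h) / det, (c * h - b * i) / det, (b * f - c * e) / det],
        [(f * g - d * i) / det, (a * i - c * g) / det, (c * d - a * f) / det],
        [(d * h - e * g) / det, (b * g - a * h) / det, (a * e - b * d) / det],
    ]

T_XYZF_TO_LMS = inv3(T_LMS_TO_XYZF)

# Round trip: LMS -> XYZ_F -> LMS should return the starting values.
lms = [0.6, 0.5, 0.1]
round_trip = mat_vec(T_XYZF_TO_LMS, mat_vec(T_LMS_TO_XYZF, lms))
```

Because the third row of the forward matrix involves only S, the inverse keeps the same block structure: $Z_F$ alone determines S.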

The above development has the advantage of basing the new $X_F Y_F Z_F$ color matching functions on the physiologically-based LMS cone response functions. In addition, it offers a one-to-one relationship between the LMS chromaticity coordinates and the new $X_F Y_F Z_F$ chromaticity coordinates, which was not the case for the CIE 1931 color matching functions. The transformation for a particular color between LMS and the CIE 1931 XYZ space is not unique. Rather, it depends strongly on the particular form of the spectral distribution $J(\lambda)$ producing the given color. There is no fixed 3×3 matrix which will transform between the CIE 1931 XYZ coordinates and the LMS coordinates, even for a particular color, much less the entire gamut of colors. Any such transformation will be an approximation at best, generally requiring certain assumptions about the spectral distributions producing the color. For example, if the spectral distributions are constrained to be the result of mixing three monochromatic sources (as was done in the measurement of the CIE 1931 and the Stiles and Burch[1] color matching functions), then there will be a one-to-one relationship between the LMS and CIE 1931 XYZ coordinates of a particular color.

As of Nov 28, 2023, CIE 170-2 CMFs are proposals that have yet to be ratified by the full TC 1-36 committee or by the CIE.

Quantal CMF

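For theoretical purposes, it is often convenient to characterize radiation in terms of photons rather than energy. The energy E of a photon is given by the Planck relation

$$E = h\nu = \frac{hc}{\lambda}$$

where E is the energy per photon, h is the Planck constant, c is the speed of light, ν is the frequency of the radiation and λ is the wavelength. A spectral radiative quantity in terms of energy, $J_E(\lambda)$, is converted to its quantal form $J_Q(\lambda)$ by dividing by the energy per photon:

$$J_Q(\lambda) = \frac{J_E(\lambda)}{E} = \frac{\lambda}{hc}\,J_E(\lambda)$$

For example, if $J_E(\lambda)$ is spectral radiance with the unit W/m²/sr/m, then the quantal equivalent $J_Q(\lambda)$ characterizes that radiation with the unit photons/s/m²/sr/m.

If $C_{E\lambda i}(\lambda)$ (i = 1, 2, 3) are the three energy-based color matching functions for a particular color space (LMS color space for the purposes of this article), then the tristimulus values may be expressed in terms of the quantal radiative quantity by:

$$C_{Ei} = \int_0^\infty J_E(\lambda)\,C_{E\lambda i}(\lambda)\,d\lambda = \int_0^\infty \frac{hc}{\lambda}\,J_Q(\lambda)\,C_{E\lambda i}(\lambda)\,d\lambda$$

Define the quantal color matching functions:

$$C_{Q\lambda i}(\lambda) = \frac{\lambda_{i\,\max}}{\lambda}\,C_{E\lambda i}(\lambda)$$

where $\lambda_{i\,\max}$ is the wavelength at which $C_{E\lambda i}(\lambda)/\lambda$ is maximized. Define the quantal tristimulus values:

$$C_{Qi} = \int_0^\infty J_Q(\lambda)\,C_{Q\lambda i}(\lambda)\,d\lambda$$

Note that, as with the energy based functions, the peak value of $C_{Q\lambda i}(\lambda)$ will be equal to unity. Using the above equation for the energy tristimulus values $C_{Ei}$:

$$C_{Ei} = \frac{hc}{\lambda_{i\,\max}}\,C_{Qi}$$

For the LMS color space, $\lambda_{i\,\max}$ ≈ {566, 541, 441} nm and

$$\frac{hc}{\lambda_{i\,\max}} \approx \{3.51,\ 3.67,\ 4.50\} \times 10^{-19}\ \text{J/photon}$$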
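The energy-to-quantal conversion amounts to dividing by the photon energy hc/λ. The following minimal sketch uses the SI-defined values of h and c; the function names `photon_energy`, `energy_to_quantal` and `quantal_cmf` are illustrative assumptions, and the peak wavelengths are the approximate figures quoted above.

```python
H = 6.62607015e-34   # Planck constant in J*s (exact SI value)
C = 299792458.0      # speed of light in m/s (exact SI value)

def photon_energy(wavelength_m):
    """Energy of one photon, E = h*c/lambda, in joules."""
    return H * C / wavelength_m

def energy_to_quantal(j_e, wavelength_m):
    """Convert an energy-based spectral quantity to photons per second."""
    return j_e / photon_energy(wavelength_m)

def quantal_cmf(c_e, wavelength_m, peak_m):
    """Rescale an energy-based CMF value by lambda_max / lambda."""
    return c_e * peak_m / wavelength_m

# Scale factors hc / lambda_max for the approximate LMS quantal peaks.
peaks_nm = [566.0, 541.0, 441.0]
scale_j_per_photon = [photon_energy(p * 1e-9) for p in peaks_nm]
```

The scale factors are what link the energy-based and quantal tristimulus values, one factor per cone class.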

Applications

Color blindness

The LMS color space can be used to emulate the way color-blind people see color. An early emulation of dichromatic vision was produced by Brettel et al. (1997) and was rated favorably by actual patients. An example of a state-of-the-art method is Machado et al. (2009).[20]
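One way to picture a dichromat emulation in LMS is as a projection along the missing cone axis onto a plane of colors that the dichromat is assumed to see unchanged. The sketch below is a simplified, single-plane illustration for a missing L cone, not the published Brettel et al. procedure (which uses two half-planes and measured anchor stimuli); the function names and the anchor values are hypothetical.

```python
def cross(u, v):
    """Cross product of two length-3 vectors."""
    return [
        u[1] * v[2] - u[2] * v[1],
        u[2] * v[0] - u[0] * v[2],
        u[0] * v[1] - u[1] * v[0],
    ]

def simulate_missing_l(lms, neutral, anchor):
    """Replace L so that the result lies in the plane through the origin,
    the neutral stimulus and the anchor stimulus; M and S are kept."""
    n = cross(neutral, anchor)          # normal vector of the invariant plane
    _, m, s = lms
    l_new = -(n[1] * m + n[2] * s) / n[0]
    return [l_new, m, s]

# Hypothetical LMS values for a neutral stimulus and one invariant anchor.
NEUTRAL = [1.0, 1.0, 1.0]
ANCHOR = [0.1, 0.2, 1.0]

a = simulate_missing_l([0.3, 0.5, 0.2], NEUTRAL, ANCHOR)
b = simulate_missing_l([0.9, 0.5, 0.2], NEUTRAL, ANCHOR)
```

Two stimuli that differ only in their L response collapse to the same output, which is the confusion behavior the emulation is meant to reproduce, while the neutral and anchor stimuli are left untouched.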

A related application is making color filters for color-blind people to more easily notice differences in color, a process known as daltonization.[21]

Image processing

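JPEG XL uses an XYB color space derived from LMS. Its transform matrix is shown here:

$$\begin{bmatrix} X \\ Y \\ B \end{bmatrix} = \begin{bmatrix} 1 & -1 & 0 \\ 1 & 1 & 0 \\ 0 & 0 & 1 \end{bmatrix} \begin{bmatrix} L \\ M \\ S \end{bmatrix}$$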

This can be interpreted as a hybrid color theory where L and M are opponents but S is handled in a trichromatic way, justified by the lower spatial density of S cones. In practical terms, this allows for using less data for storing blue signals without losing much perceived quality.[22]
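The hybrid structure described here (L and M combined as an opponent pair, S passed through) can be sketched as a pair of linear maps. This is a simplified reading for illustration only; it is not the JPEG XL reference implementation, which applies further scaling and non-linear steps, and the function names are hypothetical.

```python
def lms_to_xyb_linear(l, m, s):
    """Opponent difference, sum, and pass-through of the S response."""
    x = l - m      # L versus M opponent signal
    y = l + m      # combined L and M signal
    b = s          # S kept as its own channel
    return (x, y, b)

def xyb_to_lms_linear(x, y, b):
    """Inverse of lms_to_xyb_linear."""
    return ((y + x) / 2.0, (y - x) / 2.0, b)

xyb = lms_to_xyb_linear(0.6, 0.4, 0.1)
lms_back = xyb_to_lms_linear(*xyb)
```

When L and M are equal the opponent channel X is zero, so only Y and B carry information for such stimuli.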

The colorspace originates from Guetzli's butteraugli metric[23] and was passed down to JPEG XL via Google's Pik project. Matt DesLauriers has produced a Gist with the relevant parts from the reference implementation of JPEG XL translated into JavaScript.[24]

References

- ^ 2011 correction is taken into account with the CIE (2012) matrix.

- ^ a b c Stiles, WS; Burch, JM (1959). "NPL colour-matching investigation: final report". Optica Acta. 6. doi:10.1080/713826267.

- ^ "Stockman, MacLeod & Johnson 2-deg cone fundamentals (description page)". data retrieval page

- ^ a b c d e f Fairchild, Mark D. (2005). Color Appearance Models (2E ed.). Wiley Interscience. pp. 182–183, 227–230. ISBN 978-0-470-01216-1.

- ^ Schanda, János, ed. (July 27, 2007). Colorimetry. p. 305. doi:10.1002/9780470175637. ISBN 9780470175637.

- ^ Moroney, Nathan; Fairchild, Mark D.; Hunt, Robert W.G.; Li, Changjun; Luo, M. Ronnier; Newman, Todd (November 12, 2002). "The CIECAM02 Color Appearance Model". IS&T/SID Tenth Color Imaging Conference. Scottsdale, Arizona: The Society for Imaging Science and Technology. ISBN 0-89208-241-0.

- ^ Ebner, Fritz (July 1, 1998). "Derivation and modelling hue uniformity and development of the IPT color space". Theses: 129.

- ^ "Welcome to Bruce Lindbloom's Web Site". brucelindbloom.com. Retrieved March 23, 2020.

- ^ Specification ICC.1:2010 (Profile version 4.3.0.0). Image technology colour management — Architecture, profile format, and data structure, Annex E.3, pp. 102.

- ^ Fairchild, Mark D. (2001). "A Revision of CIECAM97s for Practical Applications" (PDF). Color Research & Application. 26 (6). Wiley Interscience: 418–427. doi:10.1002/col.1061.

- ^ Fairchild, Mark. "Errata for COLOR APPEARANCE MODELS" (PDF).

The published MCAT02 matrix in Eq. 9.40 is incorrect (it is a version of the Hunt-Pointer-Estevez matrix). The correct MCAT02 matrix is as follows. It is also given correctly in Eq. 16.2.

- ^ Li, Changjun; Li, Zhiqiang; Wang, Zhifeng; Xu, Yang; Luo, Ming Ronnier; Cui, Guihua; Melgosa, Manuel; Brill, Michael H.; Pointer, Michael (2017). "Comprehensive color solutions: CAM16, CAT16, and CAM16-UCS". Color Research & Application. 42 (6): 703–718. doi:10.1002/col.22131.

- ^ "CIE 2006 "physiologically-relevant" LMS functions (2-deg LMS fundamentals based on the Stiles and Burch 10-deg CMFs adjusted to 2-deg)". Color & Vision Research Laboratory/. Institute of Ophthalmology. Retrieved October 27, 2023.

- ^ Stockman, Andrew (December 2019). "Cone fundamentals and CIE standards" (PDF). Current Opinion in Behavioral Sciences. 30: 87–93. doi:10.1016/j.cobeha.2019.06.005. Retrieved October 27, 2023.

- ^ "Photopigments". Color & Vision Research Laboratory/. Institute of Ophthalmology. Retrieved November 27, 2023.

- ^ a b "CIE 2-deg CMFs". cvrl.ucl.ac.uk.

- ^ "CIE (2012) 2-deg XYZ "physiologically-relevant" colour matching functions". Color & Vision Research Laboratory/. Institute of Ophthalmology. Retrieved November 27, 2023.

- ^ Sharpe, Lindsay T.; Stockman, Andrew; Jagla, Wolfgang; Jägle, Herbert (December 21, 2005). "A luminous efficiency function, V*(λ), for daylight adaptation". Journal of Vision. 5 (11): 3. doi:10.1167/5.11.3. S2CID 19361187.

- ^ Sharpe, L.T.; Stockman, A.; et al. (February 2011). "A Luminous Efficiency Function, V*D65(λ), for Daylight Adaptation: A Correction". COLOR Research and Application. 36 (1): 42–46. doi:10.1002/col.20602.

- ^ Stockman, A.; Sharpe, L.T.; Fach, C.C. (1999). "The spectral sensitivity of the human short-wavelength cones". Vision Research. 39 (17): 2901–2927. doi:10.1016/S0042-6989(98)00225-9. PMID 10492818. Retrieved November 28, 2023.

- ^ "Color Vision Deficiency Emulation". colorspace.r-forge.r-project.org.

- ^ Simon-Liedtke, Joschua Thomas; Farup, Ivar (February 2016). "Evaluating color vision deficiency daltonization methods using a behavioral visual-search method". Journal of Visual Communication and Image Representation. 35: 236–247. doi:10.1016/j.jvcir.2015.12.014. hdl:11250/2461824.

- ^ Alakuijala, Jyrki; van Asseldonk, Ruud; Boukortt, Sami; Szabadka, Zoltan; Bruse, Martin; Comsa, Iulia-Maria; Firsching, Moritz; Fischbacher, Thomas; Kliuchnikov, Evgenii; Gomez, Sebastian; Obryk, Robert; Potempa, Krzysztof; Rhatushnyak, Alexander; Sneyers, Jon; Szabadka, Zoltan; Vandervenne, Lode; Versari, Luca; Wassenberg, Jan (September 6, 2019). "JPEG XL next-generation image compression architecture and coding tools". In Tescher, Andrew G; Ebrahimi, Touradj (eds.). Applications of Digital Image Processing XLII. Vol. 11137. p. 20. Bibcode:2019SPIE11137E..0KA. doi:10.1117/12.2529237. ISBN 9781510629677.

- ^ "google/butteraugli". GitHub. Retrieved August 2, 2021.

- ^ DesLauriers, Matt. "rgb-to-xyb.js". Gist.

Physiological Foundations

Cone Sensitivity Functions

The human retina contains three types of cone photoreceptors, classified by their peak spectral sensitivities: long-wavelength-sensitive (L) cones, peaking at approximately 564 nm in the yellow-green region; medium-wavelength-sensitive (M) cones, peaking at approximately 534 nm in the green region; and short-wavelength-sensitive (S) cones, peaking at approximately 420 nm in the blue-violet region. These peaks represent the wavelengths at which each cone type exhibits maximum responsiveness to monochromatic light, with significant overlap in their sensitivity curves enabling trichromatic color vision.[4]

Early estimations of cone sensitivities date back to the 1940s, with P. J. Bouma providing one of the first quantitative approximations based on assumptions about color mixture and loss in dichromatic vision. Subsequent refinements incorporated psychophysical data, notably from W. S. Stiles and J. M. Burch's 1959 experiments, which measured color-matching functions for 49 observers using 10-degree fields and primaries at 444 nm, 526 nm, and 645 nm. These data allowed derivation of spectral sensitivity curves, or cone fundamentals, by transforming the observed matches into estimates of individual cone responses while accounting for ocular media absorption.[4]

Cone fundamentals are represented as spectral sensitivity functions, l(λ) for L-cones, m(λ) for M-cones, and s(λ) for S-cones, derived from such psychophysical experiments to approximate the quantal catch of each cone type. These curves describe the relative efficiency of light at wavelength λ in stimulating each cone, typically smoothed and extrapolated from experimental thresholds and matches.[5]

Normalization of these functions commonly sets their maxima to unity for comparability across models, ensuring that the peak sensitivity at the respective λ_max equals 1. The response of an L-cone to a spectral power distribution J(λ) is then given by:

$$L = \int J(\lambda)\,l(\lambda)\,d\lambda$$

with analogous integrals for M and S responses using m(λ) and s(λ). This linear integration models the quantal absorption under the assumption of equal photon efficacy, though actual responses incorporate post-receptoral factors in full vision models.

Relation to Human Color Vision

The LMS color space directly models the neural responses generated by the three classes of cone photoreceptors in the human retina, forming the foundation of trichromatic color vision theory. In this framework, light stimuli are transduced into electrical signals by specialized photopigments (opsins) within long-wavelength-sensitive (L or red), medium-wavelength-sensitive (M or green), and short-wavelength-sensitive (S or blue) cones, enabling the discrimination of spectral variations across the visible range.[6] These cone responses capture the initial stage of color encoding, where the relative activation levels of L, M, and S cones determine the perceived hue, saturation, and brightness of a visual scene.[6]

Beyond initial transduction, LMS signals undergo opponent processing in the post-receptoral visual pathway, transforming them into chromatic opponent channels that align with perceptual color categories. Specifically, the L-M difference encodes red-green opponency, while S-(L+M) supports blue-yellow opponency, complemented by a luminance channel derived from L+M for achromatic brightness perception.[7] This opponent organization, first physiologically demonstrated through single-unit recordings in the lateral geniculate nucleus (LGN), reveals neurons tuned to these contrasts, indicating that color information is recoded early in the pathway to enhance efficiency in representing hue differences.

Chromatic adaptation in the LMS domain further illustrates its tie to human vision by facilitating color constancy, the ability to perceive stable object colors across illuminant changes. Under the von Kries model, adaptation occurs via independent scaling of L, M, and S cone sensitivities to normalize responses relative to the ambient light, preserving relative color differences despite absolute shifts in spectral power.[8] Electrophysiological studies of retinal ganglion cells and LGN neurons provide evidence for this mechanism at post-receptoral stages, where opponent signals adjust dynamically to illuminant variations, supporting perceptual invariance in natural viewing conditions.[9]

Mathematical Transformations

From CIE XYZ to LMS

The CIE XYZ color space serves as a device-independent representation of colors, derived from the tristimulus values of the CIE 1931 standard colorimetric observer, which itself is a linear transformation of color matching functions measured with RGB primaries.[1] To convert these XYZ tristimulus values to LMS cone responses, which approximate the excitations of the long-wavelength (L), medium-wavelength (M), and short-wavelength (S) sensitive cones in the human retina, a linear transformation is applied. This transformation is expressed as a matrix multiplication:

$$\begin{bmatrix} L \\ M \\ S \end{bmatrix} = \mathbf{M} \begin{bmatrix} X \\ Y \\ Z \end{bmatrix}$$

where $\mathbf{M}$ is a 3×3 matrix that maps the XYZ coordinates to the cone response space by approximating the spectral sensitivities of the cones relative to the CIE standard observer.[1] The elements of $\mathbf{M}$ are determined such that the rows correspond to the cone fundamentals, ensuring the transformation aligns with physiological measurements of cone absorption spectra. Explicitly, the cone responses are computed as linear combinations:

$$\begin{aligned} L &= m_{11}X + m_{12}Y + m_{13}Z \\ M &= m_{21}X + m_{22}Y + m_{23}Z \\ S &= m_{31}X + m_{32}Y + m_{33}Z \end{aligned}$$

with $m_{ij}$ denoting the generic matrix coefficients calibrated to match empirical cone sensitivity data.[1] This linear form assumes a straightforward mapping without nonlinearities at the initial stage of cone excitation.

The transformation operates under the assumptions of the von Kries adaptation model, which posits that chromatic adaptation occurs through independent scaling of the L, M, and S cone responses, typically referenced to the D65 illuminant as the standard daylight white point. This linearity facilitates subsequent adaptations for varying viewing conditions by applying a diagonal scaling matrix to the LMS values, preserving perceptual constancy across illuminants.[1]

Variant-Specific Matrices

The Hunt matrix, introduced in 1987 and also used in the RLAB color appearance model, provides a transformation from CIE XYZ tristimulus values to LMS cone responses aimed at supporting accurate color appearance modeling under varying viewing conditions. This matrix is derived from physiological cone fundamentals and emphasizes perceptual uniformity in appearance predictions, particularly for luminance-dependent effects.[10]

The Bradford matrix, developed in 1994, represents a spectrally sharpened approach to the XYZ-to-LMS conversion, designed to improve chromatic adaptation modeling by reducing inter-channel correlations in cone responses.[11] Its sharpening enhances the transform's ability to handle illuminant changes more effectively, making it suitable for applications requiring precise color constancy.[12]

The CIECAM97s model uses the Bradford transformation matrix, incorporating a nonlinearity in the short-wavelength (blue) channel to model chromatic adaptation more accurately for predicting color appearance attributes like saturation and hue.[13]

Each cell below lists one row of the 3×3 matrix, i.e. the coefficients applied to X, Y and Z.

| Matrix | L row (X, Y, Z coefficients) | M row (X, Y, Z coefficients) | S row (X, Y, Z coefficients) |
|---|---|---|---|
| Hunt (1987) | 0.4002, 0.7076, -0.0808 | -0.2263, 1.1653, 0.0457 | 0.0000, 0.0000, 0.9182 |
| Bradford (1994) | 0.8951, 0.2664, -0.1614 | -0.7502, 1.7135, 0.0367 | 0.0389, -0.0685, 1.0296 |
| CIECAM97s | 0.8951, 0.2664, -0.1614 | -0.7502, 1.7135, 0.0367 | 0.0389, -0.0685, 1.0296 |
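The Hunt (1987) coefficients in the table appear to be normalized to the D65 white point rather than to an equal-energy stimulus. A minimal sketch of that check follows, assuming the commonly quoted D65 white point XYZ = (0.95047, 1.0, 1.08883); the names `M_HUNT_D65`, `D65_WHITE` and `mat_vec` are illustrative.

```python
# Hunt (1987) rows as listed in the table.
M_HUNT_D65 = [
    [0.4002, 0.7076, -0.0808],
    [-0.2263, 1.1653, 0.0457],
    [0.0, 0.0, 0.9182],
]

# Assumed D65 white point for the CIE 1931 2-degree observer (Y = 1).
D65_WHITE = [0.95047, 1.0, 1.08883]

def mat_vec(m, v):
    """Multiply a 3x3 matrix (list of rows) by a length-3 vector."""
    return [sum(row[i] * v[i] for i in range(3)) for row in m]

# If the matrix is D65-normalized, the white point maps to L = M = S = 1.
lms_white = mat_vec(M_HUNT_D65, D65_WHITE)
```

Under that assumption all three cone responses for the D65 white come out within rounding error of 1, whereas the Bradford rows instead sum to 1 (equal-energy normalization).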

Specific Models and Variants

Hunt and RLAB

The Hunt color appearance model, proposed by Robert W. G. Hunt in 1987, represents an early framework for predicting how colors appear under diverse viewing conditions, including changes in illumination and surround.[15] This model incorporates the LMS color space to simulate cone responses in the human visual system, applying von Kries chromatic adaptation to account for shifts in perceived color due to illuminant changes.[15] By transforming CIE XYZ tristimulus values into LMS coordinates via a specific matrix derived from cone fundamentals, Hunt's approach enabled predictions of attributes like hue, lightness, and saturation across photopic adaptation levels.[15]

Building on such foundational ideas, the RLAB color space emerged in the early 1990s as a rudimentary yet practical appearance model developed by Mark D. Fairchild and Robert S. Berns, primarily for cross-media color reproduction tasks.[16] RLAB first converts XYZ to LMS using a transformation matrix, then derives opponent-color representations with red-green and yellow-blue channels, facilitating perceptual uniformity in applications like image rendering.[16] Chromatic adaptation in RLAB employs a von Kries mechanism, expressed as the adapted cone responses $\mathrm{LMS}_a = \mathbf{A}\,\mathrm{LMS}$, where $\mathbf{A}$ is a diagonal matrix whose elements represent the relative adaptation factors for each cone type, computed from the illuminant's spectral power distribution.[16]

These models hold historical significance as pivotal bridges between the device-independent CIE XYZ system and more perceptually oriented frameworks, influencing early research in color science by demonstrating LMS's utility for appearance prediction without requiring full spectral data. Hunt's work laid groundwork for handling complex viewing scenarios, while RLAB's simplicity made it accessible for practical implementations in the pre-CIECAM era, underscoring LMS's role in advancing uniform color spaces.[16][15]

Bradford and CIECAM Adaptations

The Bradford method, introduced by K.M. Lam in 1985 based on experiments with M.G. Rigg at the University of Bradford, represents a key advancement in chromatic adaptation transforms for LMS-based models by incorporating a sharpened transformation matrix that reduces overlap between cone responses, thereby improving predictions of color constancy under varying illuminants.[18] This sharpening enhances the isolation of long (L), medium (M), and short (S) cone signals compared to earlier von Kries implementations, allowing for more accurate mapping of corresponding colors across illuminants while maintaining linearity in the adaptation process.

The method employs a specific 3×3 matrix $M_B$ to convert CIE XYZ tristimulus values to a sharpened LMS space, where adaptation occurs via diagonal scaling of the cone responses. Let the sharpened source responses be $\mathrm{LMS}_s = M_B\,\mathrm{XYZ}_s$. The forward adaptation from source to destination (typically D50) follows a von Kries model in the sharpened domain:

$$\mathrm{LMS}_d = \operatorname{diag}\!\left(\frac{L_{w,d}}{L_{w,s}},\ \frac{M_{w,d}}{M_{w,s}},\ \frac{S_{w,d}}{S_{w,s}}\right)\mathrm{LMS}_s$$

Here, $\mathrm{LMS}_d$ is the adapted sharpened LMS, subscripts $s$ and $d$ denote source and destination, and $w$ indicates the white point responses. The destination XYZ is then $\mathrm{XYZ}_d = M_B^{-1}\,\mathrm{LMS}_d$.[19] Surround effects are incorporated through parameters that adjust the degree of adaptation (D factor) and impact post-adaptation correlates: average surround assumes relative luminance around 20% of the adapting white (F=1.0), dim surround below 20% (F=0.9), and dark surround near zero (F=0.8), influencing chroma and brightness scaling.

CIECAM97s, adopted as the CIE interim standard in 1997, integrates the Bradford transform as its core chromatic adaptation mechanism within a comprehensive color appearance model, extending LMS predictions to perceptual attributes like lightness (J), chroma (C), and hue (h) in cylindrical JCh coordinates.[13] This model was later superseded by CIECAM02 in 2002, which uses a similar sharpened von Kries adaptation (CAT02) for improved performance. Post-adaptation, the model applies nonlinearities, including an exponential compression on the S channel to account for its lower sensitivity, and surround-specific adjustments to correlate signals for uniform appearance modeling across viewing conditions.[20]

The LLAB model, proposed by Luo and colleagues in 1996, likewise applies the Bradford transform, a modified von Kries transform in a sharpened RGB-like space, to achieve uniformity in a lightness-chroma-hue space suitable for imaging applications. This approach emphasizes practical color reproduction, incorporating incomplete adaptation scaling similar to later CIECAM implementations.

Despite its strengths, the Bradford method's emphasis on spectral sharpening can lead to inaccuracies in conditions involving extreme illuminants or incomplete adaptation, as the fixed sharpened sensors may overcorrect cone overlap deviations from physiological data.[21]

Stockman-Sharpe and Quantal CMFs

The Stockman-Sharpe LMS functions, developed in 2000, offer physiologically informed estimates of the spectral sensitivities for the long (L), medium (M), and short (S) wavelength-sensitive cones, incorporating data from individual observers to account for variability in cone responses. These functions were standardized in the CIE Technical Report 170-1:2006, providing tabulated values at 1 nm intervals from 390 to 830 nm for both 2° and 10° fields of view. The model improves upon earlier estimates by integrating psychophysical measurements from Stiles and Burch (1959) with direct physiological assessments of cone photopigments, enabling more accurate representations of human color matching.[22]

A key feature of the Stockman-Sharpe model is its transformation matrix between CIE XYZ tristimulus values and LMS cone responses, which facilitates integration with standard colorimetric frameworks. For the 2° observer, the matrix from LMS to XYZ is given by:

$$\begin{bmatrix} X \\ Y \\ Z \end{bmatrix} = \begin{bmatrix} 1.94735469 & -1.41445123 & 0.36476327 \\ 0.68990272 & 0.34832189 & 0 \\ 0 & 0 & 1.93485343 \end{bmatrix} \begin{bmatrix} L \\ M \\ S \end{bmatrix}$$

The inverse matrix converts from XYZ to LMS. These transformations align closely with retinal cone mosaics and account for effects like macular pigment density, offering advantages in modeling individual differences and spectral sensitivities over prior LMS variants.[23]

The quantal color matching functions (CMFs), derived from energy-based cone fundamentals such as those of Stockman-Sharpe, shift to photon-count-based sensitivities to better reflect quantum efficiency in cone responses. This conversion adjusts the energy sensitivity to quantal sensitivity using the photon energy relation $E = hc/\lambda$, where $h$ is Planck's constant, $c$ is the speed of light, and $\lambda$ is the wavelength in vacuum. Such quantal formulations are essential for applications requiring precise photon flux modeling, such as in vision science simulations. These functions are available through resources like the CVRL database based on CIE-endorsed fundamentals.[2]

Applications

Color Vision Deficiency Simulation

The LMS color space facilitates the simulation of congenital color vision deficiencies by directly modeling alterations to the cone sensitivity functions, providing a physiologically grounded approach that correlates with human visual perception. Simulations target two primary categories: dichromacy, where one cone type is absent (protanope lacking L-cones, deuteranope lacking M-cones, tritanope lacking S-cones), and anomalous trichromacy, where the sensitivity of one cone type is shifted or reduced (protanomaly with altered L-cone response, deuteranomaly with altered M-cone response). These deficiencies are emulated by modifying the LMS signals to reflect the reduced or absent cone contributions, ensuring that simulated colors align with empirical observations of confusion pairs, i.e. colors indistinguishable to affected individuals.[24]

A seminal method for dichromatic simulation, proposed by Brettel et al., transforms input colors to LMS space using cone fundamentals, then projects the LMS vector onto a two-dimensional confusion plane defined by anchor wavelengths that represent typical confusion lines. For protanopia, the L-cone response is replaced by a linear combination of the form $L' = aM + bS$, where coefficients $a$ and $b$ are computed from the LMS values of monochromatic anchors at 475 nm (blue) and 575 nm (yellow), ensuring the projection maintains perceptual validity within the display gamut. Similarly, for deuteranopia, the M-cone response is replaced by $M' = cL + dS$, where $c$ and $d$ (e.g., approximately 1.014 L + 0.986 S normalized) are derived from the same anchors; for tritanopia, the S-cone is adjusted via anchors at 485 nm (blue-green) and 660 nm (red). The modified LMS values are then inverse-transformed to RGB for display, preserving the dichromat's reduced color palette and confusion properties. This LMS-based projection outperforms direct RGB manipulations by decoupling responses tied to cone excitations, avoiding artifacts from correlated RGB channels.[24]

For anomalous trichromacy, simulations extend this framework by scaling or shifting the affected cone's sensitivity curve rather than nullifying it, as detailed by Machado et al.[25] The anomalous L- or M-cone fundamentals are modeled using spectral shifts (e.g., 4-10 nm for protanomaly), with the degree of anomaly parameterized by a severity factor that interpolates between normal trichromacy and dichromacy. The LMS transformation incorporates the anomalous fundamentals to compute shifted responses, followed by a similar projection onto a confusion surface, enabling graded simulations that capture partial discrimination abilities.[25]

These LMS-derived methods underpin standards in accessibility software, such as the Vischeck tool and extensions in image editing applications, where they enhance web and graphic design evaluation for color-deficient users by accurately emulating perceptual losses without introducing unnatural distortions common in RGB-based approximations. LMS's physiological alignment ensures simulations remain verifiably consistent with psychophysical data, supporting broader applications in user interface testing and medical visualization.[24][25]

Image Processing and Appearance Modeling

In digital image processing, the LMS color space plays a central role in modern codecs like JPEG XL, introduced in 2022, where an LMS-derived XYB encoding supports both lossless and lossy compression modes. This approach builds on the perceptual basis of LMS to handle wide color gamuts and high-dynamic-range (HDR) content, reducing compression artifacts such as banding and noise in bright or saturated regions compared to traditional RGB-based methods. By transforming images into XYB, where Y approximates luminance, X captures L-M differences, and B represents S responses, JPEG XL achieves up to 60% better compression efficiency for HDR images while preserving visual fidelity across diverse displays.[26][27]

For appearance modeling, LMS integration in the CIECAM16 model (published 2016) enables predictions of perceived color under complex conditions, including varying illuminants, surrounds, and background luminances. CIECAM16 employs the CAT16 chromatic adaptation transform, which first converts CIE XYZ to LMS-like cone responses using a sharpened matrix, then applies von Kries-style scaling weighted by a degree of adaptation; a non-linear response compression follows, yielding attributes like lightness (J), brightness (Q), and chroma (C) that correlate highly with human judgments (r > 0.95 in validation datasets). This LMS foundation outperforms earlier models like CIECAM02 in cross-media color reproduction, particularly for HDR workflows, by accounting for incomplete adaptation degrees up to 0.9.[28]

In artificial intelligence and computer vision, LMS spaces enhance color constancy for tasks like object detection, where illumination variations can degrade performance. Recent studies (2023–2025) have developed LMS-inspired color difference formulas, such as MLAB(LMS), which optimize CIELAB-like metrics in LMS for better perceptual uniformity, achieving STRESS indices below 20 on benchmark datasets. These approaches transform RGB inputs to LMS for invariance learning.
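The linear part of the CAT16 step mentioned above (matrix to an LMS-like space, per-channel scaling, matrix back to XYZ) can be sketched as follows. The matrix is the "16" matrix listed in this article; the function name `cat16_adapt`, the `degree` parameter, and the white point values for D65 and illuminant A are assumptions of this example, and the non-linear compression stage of the full appearance model is deliberately left out.

```python
import numpy as np

# CAT16 matrix (XYZ to sharpened, LMS-like "RGB"), as listed in this article.
M16 = np.array([
    [ 0.401288, 0.650173, -0.051461],
    [-0.250268, 1.204414,  0.045854],
    [-0.002079, 0.048952,  0.953127],
])

def cat16_adapt(xyz, white_src, white_dst, degree=1.0):
    """Von Kries-style adaptation from a source to a destination white.

    degree: 0.0 means no adaptation, 1.0 means complete adaptation.
    """
    rgb = M16 @ np.asarray(xyz, dtype=float)
    rgb_ws = M16 @ np.asarray(white_src, dtype=float)
    rgb_wd = M16 @ np.asarray(white_dst, dtype=float)

    # Per-channel gain, blended with identity by the degree of adaptation.
    gain = degree * rgb_wd / rgb_ws + (1.0 - degree)

    # Map the scaled responses back to XYZ (solve instead of explicit inverse).
    return np.linalg.solve(M16, gain * rgb)

# Assumed white points (XYZ with Y = 100) for D65 and illuminant A.
D65 = [95.047, 100.0, 108.883]
ILL_A = [109.850, 100.0, 35.585]

adapted = cat16_adapt([20.0, 30.0, 40.0], D65, ILL_A, degree=1.0)
```

With `degree=1.0` the source white maps exactly onto the destination white, and with `degree=0.0` the input is returned unchanged; intermediate values model incomplete adaptation.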
Complementing this, 2025 CIE updates have tested ΔE formulas in LMS spaces, like MLAB(XYZ) and MLAB(LMS), against visual experiments, showing superior performance (PF/3 < 45) for small differences under mixed lighting.[29][30]

LMS also facilitates gamut mapping in displays and HDR tone mapping by providing a cone-opponent framework that maintains perceptual uniformity after adaptation. Derived spaces like IPT, built from LMS, apply a non-linear compression to the cone responses for HDR handling, $c' = \operatorname{sign}(c)\,|c|^{0.43}$ for each cone value $c$, followed by a linear matrix to IPT coordinates, where I is intensity and P and T are opponent axes, for hue-linear chroma mapping. This reduces clipping artifacts in tone reproduction by 25% in HDR pipelines, enabling wide-gamut rendering on devices like OLED displays. LMS also underpins color management in ICC v4.5 profiles and HDR standards like Dolby Vision, enabling device-independent adaptation as of 2025.[31][32][33]

References

- https://onlinelibrary.wiley.com/doi/abs/10.1002/(SICI)1520-6378(199610)21:5<338::AID-COL3>3.0.CO;2-Z

![{\displaystyle {\begin{bmatrix}L\\M\\S\end{bmatrix}}_{\text{E}}=\left[{\begin{array}{lll}{\phantom {-}}0.38971&{\phantom {-}}0.68898&-0.07868\\-0.22981&{\phantom {-}}1.18340&{\phantom {-}}0.04641\\{\phantom {-}}0&{\phantom {-}}0&{\phantom {-}}1\end{array}}\right]{\begin{bmatrix}X\\Y\\Z\end{bmatrix}}}](https://wikimedia.org/api/rest_v1/media/math/render/svg/be54f50485e50a4e82b112eae95e5af6f542eae3)

![{\displaystyle {\begin{bmatrix}L\\M\\S\end{bmatrix}}_{\text{D65}}=\left[{\begin{array}{lll}{\phantom {-}}0.4002&{\phantom {-}}0.7076&-0.0808\\-0.2263&{\phantom {-}}1.1653&{\phantom {-}}0.0457\\{\phantom {-}}0&{\phantom {-}}0&{\phantom {-}}0.9182\end{array}}\right]{\begin{bmatrix}X\\Y\\Z\end{bmatrix}}}](https://wikimedia.org/api/rest_v1/media/math/render/svg/b1d1e134c0f36082be55dc9ec7bfd59690a5034b)

![{\displaystyle {\begin{bmatrix}R\\G\\B\end{bmatrix}}_{\text{BFD}}=\left[{\begin{array}{lll}{\phantom {-}}0.8951&{\phantom {-}}0.2664&-0.1614\\-0.7502&{\phantom {-}}1.7135&{\phantom {-}}0.0367\\{\phantom {-}}0.0389&-0.0685&{\phantom {-}}1.0296\end{array}}\right]{\begin{bmatrix}X\\Y\\Z\end{bmatrix}}}](https://wikimedia.org/api/rest_v1/media/math/render/svg/34aec12c6e041a42c88c05ea569a8cd5842e5aa1)

![{\displaystyle {\begin{bmatrix}R\\G\\B\end{bmatrix}}_{\text{97}}=\left[{\begin{array}{lll}{\phantom {-}}0.8562&{\phantom {-}}0.3372&-0.1934\\-0.8360&{\phantom {-}}1.8327&{\phantom {-}}0.0033\\{\phantom {-}}0.0357&-0.0469&{\phantom {-}}1.0112\end{array}}\right]{\begin{bmatrix}X\\Y\\Z\end{bmatrix}}}](https://wikimedia.org/api/rest_v1/media/math/render/svg/6688b1cdd805847f2eff87f6c318ec6d59408fe3)

![{\displaystyle {\begin{bmatrix}R\\G\\B\end{bmatrix}}_{\text{02}}=\left[{\begin{array}{lll}{\phantom {-}}0.7328&{\phantom {-}}0.4296&-0.1624\\-0.7036&{\phantom {-}}1.6975&{\phantom {-}}0.0061\\{\phantom {-}}0.0030&{\phantom {-}}0.0136&{\phantom {-}}0.9834\end{array}}\right]{\begin{bmatrix}X\\Y\\Z\end{bmatrix}}}](https://wikimedia.org/api/rest_v1/media/math/render/svg/2c1c1dd9a765de0075c5f8e6f8ff44cf47f84a47)

![{\displaystyle {\begin{bmatrix}R\\G\\B\end{bmatrix}}_{\text{16}}=\left[{\begin{array}{lll}{\phantom {-}}0.401288&{\phantom {-}}0.650173&-0.051461\\-0.250268&{\phantom {-}}1.204414&{\phantom {-}}0.045854\\-0.002079&{\phantom {-}}0.048952&{\phantom {-}}0.953127\end{array}}\right]{\begin{bmatrix}X\\Y\\Z\end{bmatrix}}}](https://wikimedia.org/api/rest_v1/media/math/render/svg/80475584c98ff53b4dec97f22c62c41e8d4fd68b)

![{\displaystyle T_{ij}=\left[\,{\begin{array}{lll}1.94735469&-1.41445123&{\phantom {-}}0.36476327\\0.68990272&{\phantom {-}}0.34832189&{\phantom {-}}0\\0&{\phantom {-}}0&{\phantom {-}}1.93485343\end{array}}\right]}](https://wikimedia.org/api/rest_v1/media/math/render/svg/10e4a951a2d3ff2711b830f5842f61096373e526)

![{\displaystyle {\begin{bmatrix}X\\Y\\Z\end{bmatrix}}_{\text{F}}=\left[\,{\begin{array}{lll}1.94735469&-1.41445123&{\phantom {-}}0.36476327\\0.68990272&{\phantom {-}}0.34832189&{\phantom {-}}0\\0&{\phantom {-}}0&{\phantom {-}}1.93485343\end{array}}\right]{\begin{bmatrix}L\\M\\S\end{bmatrix}}}](https://wikimedia.org/api/rest_v1/media/math/render/svg/ebf92385fc41f4ab50e37db7ee37c42a9fb9c6c6)

![{\displaystyle {\begin{bmatrix}L\\M\\S\end{bmatrix}}=\left[{\begin{array}{lll}{\phantom {-}}0.210576&{\phantom {-}}0.855098&-0.0396983\\-0.417076&{\phantom {-}}1.177260&{\phantom {-}}0.0786283\\{\phantom {-}}0&{\phantom {-}}0&{\phantom {-}}0.5168350\\\end{array}}\right]{\begin{bmatrix}X\\Y\\Z\end{bmatrix}}_{\text{F}}}](https://wikimedia.org/api/rest_v1/media/math/render/svg/1e64d6054442424cf4ca6b912b843680266735e2)