Experimental Breeder Reactor II

Coordinates: 43°35′42″N 112°39′26″W / 43.595039°N 112.657156°W

Experimental Breeder Reactor-II (EBR-II) was a sodium-cooled fast reactor designed, built and operated by Argonne National Laboratory at the National Reactor Testing Station in Idaho. It was shut down in 1994. Custody of the reactor was transferred to Idaho National Laboratory after its founding in 2005.

Initial operations began in July 1964 and it achieved criticality in 1965 at a total cost of more than US$32 million ($319 million in 2024 dollars). The original emphasis in the design and operation of EBR-II was to demonstrate a complete breeder-reactor power plant with on-site reprocessing of solid metallic fuel. Fuel elements enriched to about 67% uranium-235 were sealed in stainless steel tubes and removed when they reached about 65% enrichment. The tubes were unsealed and reprocessed to remove neutron poisons, mixed with fresh U-235 to increase enrichment, and placed back in the reactor.

Testing of the original breeder cycle ran until 1969, after which the reactor was used to test concepts for the Integral Fast Reactor (IFR). In this role, the high-energy neutron environment of the EBR-II core was used for testing fuels and materials for future, larger liquid-metal reactors. As part of these experiments, in 1986 EBR-II underwent an experimental shutdown simulating complete cooling pump failure. It demonstrated its ability to cool its fuel through natural convection of the sodium coolant during the decay heat period following the shutdown. It was used in the IFR support role, and for many other experiments, until it was shut down in September 1994.

At full power, which it reached in September 1969, EBR-II produced about 62.5 megawatts of heat and 20 megawatts of electricity through a conventional three-loop steam turbine system and tertiary forced-air cooling tower. Over its lifetime it generated more than two billion kilowatt-hours of electricity, providing most of the electricity, as well as heat, for the facilities of Argonne National Laboratory-West.
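The power figures above imply a gross thermal-to-electric efficiency and a rough lifetime utilisation. The following is a minimal sketch of that arithmetic, not an official performance calculation. The 30-year span and the use of the 20 MW rating for the whole period are assumptions made here for illustration; since full power was only reached in 1969, the resulting lifetime figure is best read as a coarse lower-bound estimate.

```python
# Figures quoted in the text.
THERMAL_POWER_MW = 62.5        # heat output at full power
ELECTRIC_POWER_MW = 20.0       # electrical output at full power
LIFETIME_ENERGY_KWH = 2.0e9    # "over two billion kilowatt-hours"

# Assumptions for illustration only (not stated in the text).
ASSUMED_YEARS = 30             # roughly 1964 to 1994
HOURS_PER_YEAR = 8766          # average year length, leap years included


def thermal_efficiency(electric_mw: float, thermal_mw: float) -> float:
    """Fraction of reactor heat converted to electricity."""
    return electric_mw / thermal_mw


def lifetime_utilisation(energy_kwh: float, rated_mw: float, years: float) -> float:
    """Energy actually generated divided by energy at continuous rated output."""
    max_energy_kwh = rated_mw * 1000.0 * years * HOURS_PER_YEAR
    return energy_kwh / max_energy_kwh


efficiency = thermal_efficiency(ELECTRIC_POWER_MW, THERMAL_POWER_MW)
utilisation = lifetime_utilisation(LIFETIME_ENERGY_KWH, ELECTRIC_POWER_MW, ASSUMED_YEARS)

if __name__ == "__main__":
    print(f"Gross efficiency: {efficiency:.1%}")
    print(f"Lifetime utilisation (assumed 30 years at 20 MW): {utilisation:.1%}")
```

Under these assumptions the efficiency comes out at about 32% and the lifetime utilisation at roughly 38%.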

Design

The fuel consists of uranium rods 5 millimetres (0.20 in) in diameter and 33 cm (13 in) long. Enriched to 67% uranium-235 when fresh, the fuel dropped to approximately 65% enrichment by the time it was removed. The rods also contained 10% zirconium. Each fuel element is placed inside a thin-walled stainless steel tube along with a small amount of sodium metal. The tube is welded shut at the top to form a unit 73 cm (29 in) long. The sodium functions as a heat-transfer agent. As more and more of the uranium undergoes fission, it develops fissures and the sodium enters the voids. It extracts an important fission product, caesium-137, and hence becomes intensely radioactive. The void above the uranium collects fission gases, mainly krypton-85. Clusters of the pins inside hexagonal stainless steel jackets 234 cm (92 in) long are assembled honeycomb-like; each unit has about 4.5 kg (9.9 lb) of uranium. Altogether, the core contains about 308 kg (679 lb) of uranium fuel, and this part is called the driver.
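The inventory figures above can be combined into a quick estimate of how many driver units the core holds and how much uranium-235 a fresh loading contains. This is only a sketch of the arithmetic: it assumes every unit carries the quoted 4.5 kg and that the whole driver is at the fresh 67% enrichment, neither of which the text states explicitly.

```python
# Figures quoted in the text.
CORE_URANIUM_KG = 308.0       # total uranium in the driver
URANIUM_PER_UNIT_KG = 4.5     # uranium in each hexagonal unit
FRESH_ENRICHMENT = 0.67       # U-235 fraction of fresh fuel


def driver_unit_count(core_kg: float, per_unit_kg: float) -> float:
    """Approximate number of driver units implied by the two masses."""
    return core_kg / per_unit_kg


def fresh_u235_mass_kg(core_kg: float, enrichment: float) -> float:
    """U-235 mass if the whole driver were freshly loaded (an assumption)."""
    return core_kg * enrichment


units = driver_unit_count(CORE_URANIUM_KG, URANIUM_PER_UNIT_KG)
u235_kg = fresh_u235_mass_kg(CORE_URANIUM_KG, FRESH_ENRICHMENT)

if __name__ == "__main__":
    print(f"Implied driver units: about {units:.0f}")
    print(f"U-235 in a fresh driver loading: about {u235_kg:.0f} kg")
```

With the quoted numbers this gives roughly 68 units and a little over 200 kg of uranium-235.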

The EBR-II core can accommodate as many as 65 experimental sub-assemblies for irradiation and operational reliability tests, fueled with a variety of metallic and ceramic fuels—the oxides, carbides, or nitrides of uranium and plutonium, and metallic fuel alloys such as uranium-plutonium-zirconium fuel. Other sub-assembly positions may contain structural-material experiments.

Passive safety

The pool-type reactor design of the EBR-II provides passive safety: the reactor core, its fuel handling equipment, and many other systems of the reactor are submerged under molten sodium. By providing a fluid which readily conducts heat from the fuel to the coolant, and which operates at relatively low temperatures, the EBR-II takes maximum advantage of expansion of the coolant, fuel, and structure during off-normal events which increase temperatures. The expansion of the fuel and structure in an off-normal situation causes the system to shut down even without human operator intervention. In April 1986, two special tests were performed on the EBR-II, in which the main primary cooling pumps were shut off with the reactor at full power (62.5 megawatts, thermal). With the normal shutdown systems prevented from interfering, the reactor power dropped to near zero within about 300 seconds. No damage to the fuel or the reactor resulted. The same day, this demonstration was followed by another important test. With the reactor again at full power, flow in the secondary cooling system was stopped. This test caused the temperature to increase, since there was nowhere for the reactor heat to go. As the primary (reactor) cooling system became hotter, the fuel, sodium coolant, and structure expanded, and the reactor shut down. This test showed that the reactor will shut down using inherent features such as thermal expansion, even if the ability to remove heat from the primary cooling system is lost.[1]

EBR-II is now defueled. The EBR-II shutdown activity also includes the treatment of its discharged spent fuel using an electrometallurgical fuel treatment process in the Fuel Conditioning Facility located next to the EBR-II.

The clean-up process for EBR-II includes the removal and processing of the sodium coolant, cleaning of the EBR-II sodium systems, removal and passivating of other chemical hazards and placing the deactivated components and structure in a safe condition.

Decommissioning

The reactor was shut down in September 1994. The initial phase of decommissioning activities, reactor de-fueling, was completed in December 1996. Removal and processing of the coolants began in 2000 and was completed in March 2001. The third and final phase of the decommissioning activity was "the placement of the reactor and non-reactor systems in a radiological and industrially safe condition".[2]

Between 2012 and 2015, some components of the below-ground reactor were removed. The cost of the removal actions in the reactor building was about $25.7 million.[3] The basement with the reactor was filled with grout. The three-year decontamination and entombment project cost $730 million. In a later stage, the large concrete dome that surrounds the EBR-II reactor was to be removed and a concrete cap placed over the remaining structure.[4]

In 2018, the plans were changed. The removal of the dome was stopped, and in 2019 a new floor was poured and the dome received a fresh coat of paint to prepare the building for industrial use.[5] The building will house a research facility on top of the entombed reactor. The dome is an integral part of the tomb, along with a "Site-Wide Long-Term Management and Control Program". The use of the site will be industrial in nature for a 100-year period and likely for the indefinite future thereafter.[3]

Related facilities

The objective of the EBR-II was to demonstrate the operation of a sodium-cooled fast reactor power plant with on-site reprocessing of metallic fuel. To meet this objective of on-site reprocessing, the EBR-II was part of a wider complex of facilities, consisting of:

- Fuel Conditioning Facility: facility for reprocessing and treating spent fuel from the EBR-II and other reactors, using an electrorefiner for electrometallurgical treatment of spent fuel

- Fuel Manufacturing Facility: facility for the manufacturing of metallic fuel elements

- Hot Fuels Examination Facility: a "hot-cell" complex for handling and examining highly radioactive materials remotely

- Sodium Processing Facility: facility for processing of reactive sodium into low-level waste

Integral Fast Reactor

The EBR-II served as the prototype of the Integral Fast Reactor (IFR), which was the intended successor to the EBR-II. The IFR program was started in 1983, but funding was withdrawn by the U.S. Congress in 1994, three years before the intended completion of the program.

Gallery

[edit]-

EBR-II

-

Electrorefiner

-

Cathode processor

-

Control room of the EBR-II in 1986

-

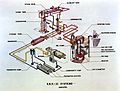

Schema of the EBR-II

-

Schema of the spent fuel treatment process

References

- Citations

- [1] "Passively safe reactors rely on nature to keep them cool". Reprinted from Argonne Logos, Winter 2002, vol. 20, no. 1.

- [2] Experimental Breeder Reactor-II. Argonne National Laboratory (accessed Feb 2023).

- [3] Removal Action Report for the Experimental Breeder Reactor II (EBR-II). U.S. Department of Energy (DOE), July 2022 (PDF, 3.3 MB).

- [4] USA's Experimental Breeder Reactor-II now permanently entombed. World Nuclear News, 1 July 2015.

- [5] Historic reactor dome gets a face-lift. Idaho National Laboratory, 3 Apr 2020.

- Bibliography

- Till, Charles; Chang, Yoon Il (2011). Plentiful energy : the story of the integral fast reactor, the complex history of a simple reactor technology, with emphasis on its scientific basis for non-specialists. Charles E. Till and Yoon Il Chang. ISBN 978-1466384606. Online PDF

External links

- EBR-II at "Reactors designed by Argonne National Laboratory" web site.

- Experimental Breeder Reactor-II (21 MB) Leonard J. Koch

- Westfall, Catherine (Feb 2004). "Vision and reality: The EBR-II story" (PDF). Nuclear News: 25–32.

- Passively safe reactors rely on nature to keep them cool

Experimental Breeder Reactor II

Historical Development

Construction and Commissioning

The construction of the Experimental Breeder Reactor II (EBR-II) commenced with groundbreaking in June 1958 at the National Reactor Testing Station (now Idaho National Laboratory) in Idaho, under the design and oversight of Argonne National Laboratory.[3] The project encompassed the reactor vessel, sodium cooling systems, fuel handling facilities, and an integrated steam-electric plant to demonstrate a self-sustaining breeder reactor cycle, with initial funding authorized at $14.85 million in July 1955 and later increased to $29.1 million by August 1957.[1] Construction progressed over five years, incorporating unmoderated fast neutron spectrum components and metallic uranium-plutonium alloy fuel assemblies, and was completed in May 1961 at a total cost of $32.5 million.[3][4]

Key pre-operational milestones included dry criticality on September 30, 1960, which validated core neutronics without coolant, followed by wet criticality in November 1962 after sodium filling and fuel loading.[1] Approach to power operations began on July 16, 1964, marking the transition from testing to controlled fission.[1] On August 14, 1964, EBR-II generated its first electricity at 8,000 kilowatts, powering site facilities and confirming the integrated primary-to-secondary loop functionality.[5]

Commissioning proceeded through phased power ascents, reaching 45 MW thermal by May 1965 and incorporating recycled fuel from initial operations, which demonstrated breeding capability.[1] Full design power of 62.5 MW thermal (producing 20 MW electric) was attained in September 1969 after extensive safety and performance validations, including sodium void and loss-of-flow simulations.[1] These steps established EBR-II as the first sodium-cooled fast reactor to operate as a complete power plant without external electricity dependence during startups.[5]

Early Operations and Milestones

The Experimental Breeder Reactor II (EBR-II) achieved dry criticality on September 30, 1960, during initial low-power testing without sodium coolant, confirming basic neutronics behavior in the core assembly.[1] Wet criticality followed in November 1962 after sodium filling, enabling validation of the liquid-metal cooling system's interaction with the reactor physics under operational conditions.[1] Approach to power commenced on July 16, 1964, marking the transition to full operational mode as a sodium-cooled fast breeder reactor capable of electricity generation.[1]

By August 1964, power levels reached 30 megawatts thermal (MWt), demonstrating stable heat transfer and turbine integration for net electrical output of approximately 20 megawatts electric (MWe) at full capacity.[1] This phase verified the reactor's integrated design, including on-site fuel reprocessing, as EBR-II operated continuously as a complete power plant prototype.

A key milestone occurred in May 1965 when EBR-II first utilized recycled fuel from its own spent assemblies, operating at 45 MWt and fulfilling its core objective of demonstrating closed-fuel-cycle viability in a breeder configuration.[1] By March 1965, the reactor had successfully completed its initial mission of safe, reliable power production using reprocessed plutonium-uranium fuel, breeding more fissile material than consumed.[6] Power ascension continued, achieving the design rating of 62.5 MWt in September 1969, with over 90% plant availability in early years underscoring the inherent stability of the sodium-cooled system.[1] These operations provided empirical data on fast-spectrum neutron economy and sodium handling, informing subsequent breeder reactor designs without major incidents.[7]

Technical Design

Core and Fuel Assembly

The core of the Experimental Breeder Reactor II (EBR-II) was a compact, unmoderated fast-spectrum assembly designed for plutonium breeding, featuring hexagonal subassemblies arranged in a hexagonal lattice within a pool-type sodium coolant configuration. It comprised 637 subassembly positions, including driver fuel for fission power generation, depleted uranium blankets for neutron capture and breeding, control rods, safety rods, reflectors, and experimental positions. The driver region occupied central rows 1 through 5 or 7, with an approximate equivalent diameter of 20 inches and active height of 14 inches, enabling a peak thermal power of 62.5 MWt.[8][9][10]

Driver fuel assemblies utilized metallic fuel pins sodium-bonded within stainless steel cladding to facilitate heat transfer and accommodate fuel swelling during irradiation. Initial Mark-IA assemblies contained 91 pins of uranium-fissium (U-5 wt% Fs) alloy, with 95 wt% uranium enriched to 45-67 wt% U-235, pin outer diameters of 0.174 inches, and active lengths of 14.22 inches. Subsequent Mark-II assemblies featured 61 pins, typically of U-10 wt% Zr or U-Pu-Zr alloys in later Integral Fast Reactor demonstrations, with cladding diameters around 0.44 cm, fuel slugs of 0.33 cm diameter, and active lengths of 62 cm; pins were wire-wrapped for subchannel flow and spaced in hexagonal arrays. Cladding materials included Type 304 or 316 stainless steel, with sodium bonding filling a small annular gap (e.g., 0.006 inches).[8][9][11]

Blanket assemblies, numbering 60-66 in the inner region (rows 6-7) and up to 510 in the outer (rows 8-16), employed 19 larger depleted uranium pins per assembly (outer diameter 1.25 cm, length 1.43 m) to maximize neutron economy and produce fissile plutonium via U-238 capture. Axial blankets at core ends, when present in early designs, used 18 pins each of 0.3165-inch diameter. These elements were similarly clad in stainless steel with sodium bonding and wire wraps, but optimized for lower power density and higher breeding capture. Later core designs omitted axial blankets to simplify fabrication and enhance performance.[8][9][10]

Reactivity control integrated moveable driver assemblies and dedicated control/safety subassemblies, with 61 fuel pins plus boron carbide absorbers in control types; these could be raised into or lowered from the core to modulate power without external electricity. The overall design supported high fuel burnup (up to 19 at% in metallic fuels) and breeding ratios exceeding 1.0, validated through decades of operation from 1964 to 1994.[12][9]

Sodium Cooling System

The sodium cooling system of the Experimental Breeder Reactor II (EBR-II) utilized liquid sodium in a pool-type primary circuit, submerging the reactor core, primary pumps, and intermediate heat exchangers (IHXs) within a large tank to enable compact design and passive heat dissipation. The primary tank measured 7.9 meters in diameter and 7.9 meters in height, containing approximately 89,000 US gallons (337 m³) of sodium at operating conditions.[13][14] This configuration leveraged the sodium pool's substantial heat capacity for natural circulation decay heat removal during transients, as demonstrated in inherent safety tests.[9]

Liquid sodium served as coolant due to its thermophysical advantages, including high thermal conductivity (about 80 W/m·K at operating temperatures), adequate specific heat (1.3 kJ/kg·K), boiling point of 883°C, and minimal neutron absorption cross-section, preserving the fast spectrum essential for plutonium breeding while facilitating efficient heat transfer at low pressure.[1] The system operated near atmospheric pressure, with sodium temperatures typically entering the core at around 360°C and exiting at 510°C under full power, yielding a core temperature rise of approximately 150°C.[15]

Two centrifugal primary pumps, each rated at 4,500 gallons per minute, circulated sodium at a nominal mass flow rate of 485 kg/s from the pool inlet, through the core's lower plenum, and to the IHXs for heat transfer to the intermediate loop.[14][10] The intermediate sodium loop, operating at 315 kg/s, isolated the primary system from the steam generators using three IHXs with double-walled, concentric tube designs to preclude sodium-water reactions in the event of leaks.[10][15]

Sodium purity was maintained via electromagnetic pumps and cold traps to remove impurities like oxides and hydrides, preventing corrosion of stainless steel components and ensuring long-term operational reliability over the reactor's 30-year lifespan from 1964 to 1994.[16] The system's design emphasized leak-tightness and argon cover gas blanketing to mitigate sodium's reactivity with air and moisture, though handling residual sodium post-shutdown required specialized passivation and carbonation processes due to its persistence as a pyrophoric liquid.[17]

Primary and Secondary Loops

The primary cooling loop of the Experimental Breeder Reactor II (EBR-II) employed a pool-type design, with the reactor core, primary pumps, and intermediate heat exchangers (IHXs) submerged in a large tank containing approximately 86,000 gallons (330 m³) of sodium coolant.[9][18] This configuration minimized external piping and enhanced safety by leveraging the thermal inertia of the sodium pool for passive heat removal during transients.[9] Two vertical centrifugal pumps circulated the sodium at a nominal flow rate of 9,000 gallons per minute (gpm) through the core, achieving an inlet temperature of 700°F (371°C) and an outlet temperature of 883–900°F (473–482°C), corresponding to a temperature rise of about 183–200°F.[14][9] The system operated at low pressures, with the high-pressure plenum at 61 psi and the low-pressure plenum at 22 psi, and featured components such as shutdown coolers using sodium-potassium eutectic alloy and an auxiliary pump for post-shutdown cooling.[9]

Heat from the primary sodium was transferred to three parallel secondary sodium loops via shell-and-tube IHXs, with the primary sodium on the shell side and secondary on the tube side to contain potential leaks within the primary system.[9][19] Each secondary loop used a linear induction electromagnetic pump to circulate non-radioactive sodium at a combined flow rate of approximately 315 kg/s, isolating the radioactive primary coolant from the steam generation system and reducing activation in the secondary sodium due to the IHXs' remote location from the core.[10][12] Secondary sodium entered the IHX at 588°F (308°C) and exited at 866°F (463°C), flowing to steam generators with duplex tubes for added leak tolerance, requiring dual failures for sodium-water contact.[9] The loops maintained low pressures around 10 psi normally, with relief valves at 100 psi, and included storage tanks for full system drainage.[9]

This dual-loop sodium system supported EBR-II's 62.5 MWth design power, later operated up to 69 MWth, enabling efficient heat transfer while demonstrating inherent safety features like natural circulation capability.[9][20]

Operational Performance
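The primary-loop figures quoted in the cooling-system sections can be cross-checked against the rated thermal power with a simple steady-state heat balance, Q = ṁ · c_p · ΔT. The sketch below uses only numbers that appear in the text (485 kg/s, 1.3 kJ/kg·K, and the two sets of inlet and outlet temperatures) and assumes a constant specific heat, which is a simplification. The function name is illustrative only.

```python
def thermal_power_mw(mass_flow_kg_s: float, cp_kj_per_kg_k: float, delta_t_k: float) -> float:
    """Steady-state heat pickup of the coolant, Q = m_dot * cp * dT, in MW."""
    return mass_flow_kg_s * cp_kj_per_kg_k * delta_t_k / 1000.0


PRIMARY_FLOW_KG_S = 485.0   # nominal primary mass flow quoted in the text
SODIUM_CP = 1.3             # kJ/kg.K, quoted specific heat (assumed constant)

# Temperature set quoted with the sodium cooling system: 360 C in, 510 C out.
power_set_a = thermal_power_mw(PRIMARY_FLOW_KG_S, SODIUM_CP, 510.0 - 360.0)

# Temperature set quoted with the primary loop: 371 C in, 473 to 482 C out.
power_set_b_low = thermal_power_mw(PRIMARY_FLOW_KG_S, SODIUM_CP, 473.0 - 371.0)
power_set_b_high = thermal_power_mw(PRIMARY_FLOW_KG_S, SODIUM_CP, 482.0 - 371.0)

if __name__ == "__main__":
    print(f"360 -> 510 C: {power_set_a:.1f} MW")
    print(f"371 -> 473 C: {power_set_b_low:.1f} MW")
    print(f"371 -> 482 C: {power_set_b_high:.1f} MW")
```

With these inputs the 371°C inlet and 473–482°C outlet figures give roughly 64 to 70 MW, close to the 62.5–69 MWth range stated for the plant, while the 360°C and 510°C figures give about 95 MW. The two temperature sets are therefore not interchangeable at the same flow rate, which is worth keeping in mind when reading the sections side by side.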

Power Generation and Efficiency

The Experimental Breeder Reactor II (EBR-II) was designed as an integrated fast reactor power plant with a nominal thermal power rating of 62.5 MWth, capable of generating approximately 20 MWe of gross electrical power through its steam cycle system.[21][22] This output supported on-site electricity needs at Argonne National Laboratory and demonstrated the feasibility of sodium-cooled fast reactor electricity production, with the primary sodium coolant transferring heat to a secondary sodium loop and then to steam generators for turbine drive.[23]

Operational performance highlighted high reliability, with annual plant capacity factors averaging 70.5% from 1975 to the late 1980s, including a peak of 77.4% in 1980.[24] Over the preceding six years to that period, capacity factors averaged 73.7%, comparable to commercial nuclear and fossil plants, reflecting effective maintenance and fuel management that minimized downtime.[6] In its final decade of operation before shutdown in 1994, EBR-II achieved capacity factors of up to 80%, underscoring the robustness of its design for sustained power generation despite experimental testing interruptions.[1]

Efficiency in power conversion was influenced by the fast spectrum's higher outlet temperatures compared to light-water reactors, enabling steam conditions suitable for turbine efficiencies around 30-35%, though specific thermodynamic efficiencies were not routinely reported beyond power output ratios. The closed fuel cycle integration further enhanced overall plant efficiency by recycling actinides, reducing fresh fuel needs and supporting extended operational runs at full power.[4]

Fuel Cycle Integration

The Experimental Breeder Reactor II (EBR-II) featured an integrated fuel cycle that combined power generation, fuel irradiation, on-site reprocessing, and refabrication within a single facility complex, minimizing external dependencies and enabling closed-loop operation. This approach utilized sodium-bonded metallic uranium-plutonium-zirconium alloy fuel, which was designed for high burnup and compatibility with fast neutron spectra to facilitate breeding.[9] The system's design allowed for the demonstration of both enriched uranium and plutonium-uranium metallic fuel cycles, with spent assemblies processed pyrometallurgically to recover actinides for recycling.[9][25]

Central to the integration was the development and application of electrochemical pyroprocessing, particularly electrorefining, where spent fuel was dissolved anodically in molten salt electrolytes to separate uranium, plutonium, and other transuranics from fission products.[1] Recovered actinides were then cast into ingots and fabricated into new fuel pins using injection casting techniques, achieving rapid turnaround times of weeks to months for reinsertion into the reactor core.[24] This on-site recycling reduced the need for large fuel inventories and supported economic operation by reusing fissile material, with blanket assemblies producing plutonium that was blended into driver fuel for subsequent cycles.[1][26]

EBR-II's fuel cycle demonstrated closure by recycling over 90% of the energy content in spent fuel, with irradiated metallic fuel achieving burnups up to 19 atomic percent and recycled fuel performing equivalently to fresh assemblies in terms of swelling and cladding integrity. The process isolated fission products as stable ceramic waste forms, minimizing high-level waste volume compared to aqueous reprocessing methods.[1] Operational data from 30 years of experience validated the cycle's reliability, including sustained breeding ratios greater than 1.0 in plutonium-fueled configurations, though full commercial scalability was curtailed by program termination in 1994.[27][26]

The integration extended to safety and efficiency, as the compact fuel cycle working inventory (typically subassemblies handled robotically) facilitated inherent safeguards against proliferation by keeping materials under continuous accounting within the reactor site.[1] Post-irradiation examinations confirmed that recycled fuel maintained ductilities and power densities suitable for extended core life, supporting the Integral Fast Reactor (IFR) vision of self-sustaining operations without long-term actinide accumulation in waste streams.[25]

Safety Demonstrations

Passive Shutdown Mechanisms

The Experimental Breeder Reactor II (EBR-II) featured passive shutdown mechanisms rooted in inherent negative reactivity feedbacks, enabling automatic power reduction without active intervention such as control rod insertion or external power. These feedbacks included the Doppler effect, where rising fuel temperatures broadened neutron absorption resonances in fissile and fertile materials like uranium-plutonium oxide or metal fuels, thereby increasing parasitic neutron capture and inserting negative reactivity on the order of -0.5 to -1.0 pcm/°C in the core.[28] Coolant feedback from sodium thermal expansion similarly reduced coolant density, diminishing neutron moderation and leakage reduction effects, contributing an additional negative reactivity coefficient of approximately -0.1 to -0.2 pcm/°C.[29] Axial expansion of fuel pins and core structural components, such as grid plates, further decoupled the core height from neutron flux, providing a small but cumulative negative reactivity insertion of about -0.05 pcm/°C.[28]

These mechanisms ensured self-regulation during transients like unprotected loss of flow (ULOF), where primary pump failure led to reduced flow rates below 10% of nominal without initiating a scram; reactor power decayed exponentially to decay heat levels (around 1-5% of full power) within seconds to minutes, preventing fuel melting or cladding breach.[29] Natural circulation in the pool-type primary system, driven by buoyancy differences in the sodium coolant (with density changes of ~0.2% per °C), maintained core inlet temperatures below 600°C even under full-power scrammed conditions, relying on the reactor vessel's thermal inertia and elevated inlet plenum design.[30] For sustained decay heat removal post-shutdown, passive air-cooled shutdown heat exchangers (two units penetrating the primary tank) transferred heat directly to the atmosphere via natural convection, achieving removal rates up to 1.5 MWth without auxiliary power or forced airflow.[31]

The design's effectiveness stemmed from the fast neutron spectrum and metallic sodium's high thermal conductivity (about 80 W/m·K), which minimized void coefficient risks compared to water-cooled reactors; sodium voids in the core introduced positive reactivity but were counteracted by upper plenum voiding effects and overall negative feedbacks in EBR-II's configuration.[9] Empirical validation occurred through integral tests simulating anticipated transient without scram (ATWS) events, confirming that peak fuel temperatures remained under 2000°C, well below the 2500°C melting point of the fuel, solely via these passive responses.[28][29] This approach prioritized causal chain integrity from neutronics to thermal hydraulics, avoiding reliance on diverse actuation systems prone to common-mode failures.

1986 Inherent Safety Tests

On April 3, 1986, Argonne National Laboratory conducted two landmark inherent safety demonstration tests in the Experimental Breeder Reactor II (EBR-II), simulating severe accident scenarios without reliance on active safety systems or operator intervention.[32] The first test involved a loss-of-flow (LOF) event without scram, where all primary pump power was abruptly terminated at full reactor power of approximately 62.5 MWth, leading to a rapid reduction in coolant flow.[33] Inherent negative reactivity feedbacks, primarily Doppler broadening from fuel temperature increase and radial thermal expansion of the core, passively shut down the reactor within seconds, reducing power to decay heat levels without fuel melting or cladding breach.[29]

The second test simulated a loss-of-heat-sink (LOHS) condition without scram, achieved by simultaneously tripping the electromagnetic pumps serving the intermediate heat exchanger and initiating a steam generator water dump, isolating the reactor from its heat sink at full power.[32] Natural circulation in the primary sodium pool and heat transfer through the reactor vessel wall to the reactor silo air maintained core temperatures below safety limits, with peak fuel temperatures reaching about 800°C but stabilizing via passive mechanisms.[31] No active components, such as pumps or control rods, were required for shutdown or cooldown, validating the pool-type liquid-metal fast reactor's design for inherent safety.[28]

These tests, part of the broader Shutdown Heat Removal Test series conducted between 1984 and 1986, provided empirical evidence that EBR-II could withstand unprotected transients (accidents without safety system actuation) without core damage, challenging prevailing assumptions about fast reactor vulnerabilities post-Chernobyl.[12] Post-test analysis confirmed that subassembly outlet temperatures peaked at around 650°C during LOF and that heat removal occurred via buoyancy-driven flows, with the reactor achieving stable natural convection cooling indefinitely.[10] The results underscored the role of metallic fuel's high thermal conductivity and the sodium coolant's properties in enabling such passive responses, influencing subsequent liquid-metal reactor designs.[33]

Breeding and Waste Management

Pyrometallurgical Reprocessing

The pyrometallurgical reprocessing, or pyroprocessing, developed for the Experimental Breeder Reactor II (EBR-II) formed a key component of its closed fuel cycle, enabling the recycling of metallic uranium-plutonium-zirconium alloy fuel directly at the site. This high-temperature electrochemical process utilized molten salts to separate recoverable actinides from fission products and cladding hulls, contrasting with aqueous methods by avoiding dilution and proliferation risks associated with pure plutonium separation.[34] The core technique involved electrorefining, where spent fuel was anodically dissolved in a LiCl-KCl eutectic salt bath at approximately 500°C, with uranium depositing on a solid cathode for recovery, while transuranic elements co-deposited on a liquid cadmium cathode.

Early implementation occurred in EBR-II's Fuel Cycle Facility, which from 1964 to 1969 reprocessed and refabricated approximately 35,000 spent fuel pins using an initial pyrochemical approach, demonstrating feasibility for fast reactor metallic fuels.[35] Under the Integral Fast Reactor (IFR) program, the process was refined to require only nine major pieces of equipment for complete recycling, including electrorefining, cathode processing, and injection casting for refabrication, allowing spent fuel to be returned to the reactor after irradiation to high burnup levels exceeding 10% fissile atom fraction.[36] Argonne National Laboratory researchers treated over four metric tons of EBR-II used fuel through this method, recovering uranium and plutonium for reuse while concentrating fission products into stable ceramic waste forms via zeolite incorporation and vitrification.

Post-shutdown in 1994, the Spent Fuel Treatment (SFT) program at Argonne-West (now Idaho National Laboratory) applied pilot-scale electrorefining to condition EBR-II's sodium-bonded driver fuel, successfully recovering uranium metal dendrites from the first operational electrorefiner in the mid-1990s. This MARK-V electrorefiner processed blanket and driver fuels separately, yielding over 99% uranium recovery efficiency and minimizing secondary waste through the compact, non-aqueous process.[37] The approach addressed waste management by partitioning actinides for potential transmutation, reducing long-lived radiotoxicity, though full-scale commercialization was halted by policy decisions in 1994.[38]
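The electrorefining step described above deposits uranium on a cathode in proportion to the charge passed, which Faraday's law of electrolysis relates to mass. The sketch below illustrates that relationship only; it is not a model of the EBR-II electrorefiners. The cell current, run time, and 100% current efficiency are hypothetical values chosen for illustration, and the three-electron transfer (uranium as U(III) in the chloride salt) and the natural-uranium molar mass are stated assumptions rather than figures from the text.

```python
FARADAY_C_PER_MOL = 96485.332      # Faraday constant, coulombs per mole of electrons
URANIUM_MOLAR_MASS_G = 238.03      # g/mol, natural uranium (assumed; enriched fuel is slightly lighter)


def deposited_mass_g(
    current_a: float,
    hours: float,
    electrons_per_atom: int = 3,          # assumption: U(III) -> U metal
    molar_mass_g: float = URANIUM_MOLAR_MASS_G,
    current_efficiency: float = 1.0,      # assumption: no parasitic reactions
) -> float:
    """Mass deposited at the cathode according to Faraday's law, in grams."""
    charge_c = current_a * hours * 3600.0
    moles = current_efficiency * charge_c / (electrons_per_atom * FARADAY_C_PER_MOL)
    return moles * molar_mass_g


# Hypothetical operating point, not an EBR-II specification.
example_mass_g = deposited_mass_g(current_a=100.0, hours=24.0)

if __name__ == "__main__":
    print(f"Uranium deposited at 100 A over 24 h: {example_mass_g / 1000.0:.1f} kg")
```

At the hypothetical 100 A for 24 hours this comes to roughly 7 kg of uranium, which gives a feel for why processing tonne-scale inventories requires high cell currents or long campaigns.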

Pyroprocessing's integration with EBR-II highlighted its suitability for sodium-cooled fast reactors, as it handled metallic fuels without oxide conversion and operated compatibly with sodium coolant residues. Independent validations confirmed the process's electrochemical kinetics and material balances, with no evidence of significant salt contamination or noble metal interference in scaled operations.[39] Despite technical successes, including demonstrated breeding ratios above 1.0 when coupled with recycling, the program's termination reflected non-technical factors rather than process limitations.[9]