
List of trigonometric identities



In trigonometry, trigonometric identities are equalities that involve trigonometric functions and are true for every value of the occurring variables for which both sides of the equality are defined. Geometrically, these are identities involving certain functions of one or more angles. They are distinct from triangle identities, which are identities potentially involving angles but also involving side lengths or other lengths of a triangle.

These identities are useful whenever expressions involving trigonometric functions need to be simplified. An important application is the integration of non-trigonometric functions: a common technique involves first using the substitution rule with a trigonometric function, and then simplifying the resulting integral with a trigonometric identity.

Pythagorean identities

[edit]

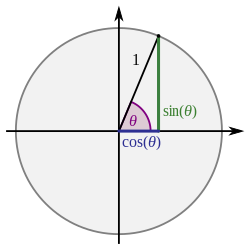

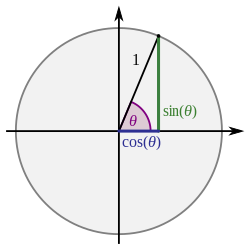

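The basic relationship between the sine and cosine is given by the Pythagorean identity:

$$\sin^2\theta + \cos^2\theta = 1,$$

where $\sin^2\theta$ means $(\sin\theta)^2$ and $\cos^2\theta$ means $(\cos\theta)^2$.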

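This can be viewed as a version of the Pythagorean theorem, and follows from the equation $x^2 + y^2 = 1$ for the unit circle. This equation can be solved for either the sine or the cosine:

$$\sin\theta = \pm\sqrt{1 - \cos^2\theta}, \qquad \cos\theta = \pm\sqrt{1 - \sin^2\theta},$$

where the sign depends on the quadrant of $\theta$.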

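Dividing this identity by $\sin^2\theta$, $\cos^2\theta$, or both yields the following identities:

$$1 + \cot^2\theta = \csc^2\theta, \qquad 1 + \tan^2\theta = \sec^2\theta, \qquad \sec^2\theta + \csc^2\theta = \sec^2\theta\,\csc^2\theta.$$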

Using these identities, it is possible to express any trigonometric function in terms of any other (up to a plus or minus sign):

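| in terms of | $\sin\theta$ | $\csc\theta$ | $\cos\theta$ | $\sec\theta$ | $\tan\theta$ | $\cot\theta$ |
|---|---|---|---|---|---|---|
| $\sin\theta =$ | $\sin\theta$ | $\dfrac{1}{\csc\theta}$ | $\pm\sqrt{1-\cos^2\theta}$ | $\pm\dfrac{\sqrt{\sec^2\theta-1}}{\sec\theta}$ | $\pm\dfrac{\tan\theta}{\sqrt{1+\tan^2\theta}}$ | $\pm\dfrac{1}{\sqrt{1+\cot^2\theta}}$ |
| $\csc\theta =$ | $\dfrac{1}{\sin\theta}$ | $\csc\theta$ | $\pm\dfrac{1}{\sqrt{1-\cos^2\theta}}$ | $\pm\dfrac{\sec\theta}{\sqrt{\sec^2\theta-1}}$ | $\pm\dfrac{\sqrt{1+\tan^2\theta}}{\tan\theta}$ | $\pm\sqrt{1+\cot^2\theta}$ |
| $\cos\theta =$ | $\pm\sqrt{1-\sin^2\theta}$ | $\pm\dfrac{\sqrt{\csc^2\theta-1}}{\csc\theta}$ | $\cos\theta$ | $\dfrac{1}{\sec\theta}$ | $\pm\dfrac{1}{\sqrt{1+\tan^2\theta}}$ | $\pm\dfrac{\cot\theta}{\sqrt{1+\cot^2\theta}}$ |
| $\sec\theta =$ | $\pm\dfrac{1}{\sqrt{1-\sin^2\theta}}$ | $\pm\dfrac{\csc\theta}{\sqrt{\csc^2\theta-1}}$ | $\dfrac{1}{\cos\theta}$ | $\sec\theta$ | $\pm\sqrt{1+\tan^2\theta}$ | $\pm\dfrac{\sqrt{1+\cot^2\theta}}{\cot\theta}$ |
| $\tan\theta =$ | $\pm\dfrac{\sin\theta}{\sqrt{1-\sin^2\theta}}$ | $\pm\dfrac{1}{\sqrt{\csc^2\theta-1}}$ | $\pm\dfrac{\sqrt{1-\cos^2\theta}}{\cos\theta}$ | $\pm\sqrt{\sec^2\theta-1}$ | $\tan\theta$ | $\dfrac{1}{\cot\theta}$ |
| $\cot\theta =$ | $\pm\dfrac{\sqrt{1-\sin^2\theta}}{\sin\theta}$ | $\pm\sqrt{\csc^2\theta-1}$ | $\pm\dfrac{\cos\theta}{\sqrt{1-\cos^2\theta}}$ | $\pm\dfrac{1}{\sqrt{\sec^2\theta-1}}$ | $\dfrac{1}{\tan\theta}$ | $\cot\theta$ |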

Reflections, shifts, and periodicity

By examining the unit circle, one can establish the following properties of the trigonometric functions.

Reflections

When the direction of a Euclidean vector is represented by an angle $\theta$, this is the angle determined by the free vector (starting at the origin) and the positive $x$-unit vector. The same concept may also be applied to lines in a Euclidean space, where the angle is that determined by a parallel to the given line through the origin and the positive $x$-axis. If a line (vector) with direction $\theta$ is reflected about a line with direction $\alpha$, then the direction angle $\theta'$ of this reflected line (vector) has the value

$$\theta' = 2\alpha - \theta.$$

The values of the trigonometric functions of these angles for specific angles satisfy simple identities: either they are equal, or have opposite signs, or employ the complementary trigonometric function. These are also known as reduction formulae.[2]

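| $\theta$ reflected in $\alpha = 0$[3] (odd/even identities) | $\theta$ reflected in $\alpha = \frac{\pi}{4}$ | $\theta$ reflected in $\alpha = \frac{\pi}{2}$ | $\theta$ reflected in $\alpha = \frac{3\pi}{4}$ | $\theta$ reflected in $\alpha = \pi$ (compare to $\alpha = 0$) |
|---|---|---|---|---|
| $\sin(-\theta) = -\sin\theta$ | $\sin(\frac{\pi}{2}-\theta) = \cos\theta$ | $\sin(\pi-\theta) = +\sin\theta$ | $\sin(\frac{3\pi}{2}-\theta) = -\cos\theta$ | $\sin(2\pi-\theta) = -\sin\theta = \sin(-\theta)$ |
| $\cos(-\theta) = +\cos\theta$ | $\cos(\frac{\pi}{2}-\theta) = \sin\theta$ | $\cos(\pi-\theta) = -\cos\theta$ | $\cos(\frac{3\pi}{2}-\theta) = -\sin\theta$ | $\cos(2\pi-\theta) = +\cos\theta = \cos(-\theta)$ |
| $\tan(-\theta) = -\tan\theta$ | $\tan(\frac{\pi}{2}-\theta) = \cot\theta$ | $\tan(\pi-\theta) = -\tan\theta$ | $\tan(\frac{3\pi}{2}-\theta) = +\cot\theta$ | $\tan(2\pi-\theta) = -\tan\theta = \tan(-\theta)$ |
| $\csc(-\theta) = -\csc\theta$ | $\csc(\frac{\pi}{2}-\theta) = \sec\theta$ | $\csc(\pi-\theta) = +\csc\theta$ | $\csc(\frac{3\pi}{2}-\theta) = -\sec\theta$ | $\csc(2\pi-\theta) = -\csc\theta = \csc(-\theta)$ |
| $\sec(-\theta) = +\sec\theta$ | $\sec(\frac{\pi}{2}-\theta) = \csc\theta$ | $\sec(\pi-\theta) = -\sec\theta$ | $\sec(\frac{3\pi}{2}-\theta) = -\csc\theta$ | $\sec(2\pi-\theta) = +\sec\theta = \sec(-\theta)$ |
| $\cot(-\theta) = -\cot\theta$ | $\cot(\frac{\pi}{2}-\theta) = \tan\theta$ | $\cot(\pi-\theta) = -\cot\theta$ | $\cot(\frac{3\pi}{2}-\theta) = +\tan\theta$ | $\cot(2\pi-\theta) = -\cot\theta = \cot(-\theta)$ |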

Shifts and periodicity

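| Shift by one quarter period | Shift by one half period | Shift by full periods[4] | Period |
|---|---|---|---|
| $\sin(\theta \pm \frac{\pi}{2}) = \pm\cos\theta$ | $\sin(\theta + \pi) = -\sin\theta$ | $\sin(\theta + k\cdot 2\pi) = +\sin\theta$ | $2\pi$ |
| $\cos(\theta \pm \frac{\pi}{2}) = \mp\sin\theta$ | $\cos(\theta + \pi) = -\cos\theta$ | $\cos(\theta + k\cdot 2\pi) = +\cos\theta$ | $2\pi$ |
| $\csc(\theta \pm \frac{\pi}{2}) = \pm\sec\theta$ | $\csc(\theta + \pi) = -\csc\theta$ | $\csc(\theta + k\cdot 2\pi) = +\csc\theta$ | $2\pi$ |
| $\sec(\theta \pm \frac{\pi}{2}) = \mp\csc\theta$ | $\sec(\theta + \pi) = -\sec\theta$ | $\sec(\theta + k\cdot 2\pi) = +\sec\theta$ | $2\pi$ |
| $\tan(\theta \pm \frac{\pi}{4}) = \dfrac{\tan\theta \pm 1}{1 \mp \tan\theta}$ | $\tan(\theta + \frac{\pi}{2}) = -\cot\theta$ | $\tan(\theta + k\cdot\pi) = +\tan\theta$ | $\pi$ |
| $\cot(\theta \pm \frac{\pi}{4}) = \dfrac{\cot\theta \mp 1}{1 \pm \cot\theta}$ | $\cot(\theta + \frac{\pi}{2}) = -\tan\theta$ | $\cot(\theta + k\cdot\pi) = +\cot\theta$ | $\pi$ |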

Signs

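The sign of a trigonometric function depends on the quadrant of the angle. If $-\pi < \theta \le \pi$ and sgn is the sign function,

$$\operatorname{sgn}(\sin\theta) = \operatorname{sgn}(\csc\theta) = \begin{cases} +1 & \text{if } 0 < \theta < \pi \\ -1 & \text{if } -\pi < \theta < 0 \\ 0 & \text{if } \theta \in \{0, \pi\} \end{cases}$$

$$\operatorname{sgn}(\cos\theta) = \operatorname{sgn}(\sec\theta) = \begin{cases} +1 & \text{if } -\tfrac{\pi}{2} < \theta < \tfrac{\pi}{2} \\ -1 & \text{if } -\pi < \theta < -\tfrac{\pi}{2} \text{ or } \tfrac{\pi}{2} < \theta < \pi \\ 0 & \text{if } \theta \in \{-\tfrac{\pi}{2}, \tfrac{\pi}{2}\} \end{cases}$$

$$\operatorname{sgn}(\tan\theta) = \operatorname{sgn}(\cot\theta) = \begin{cases} +1 & \text{if } -\pi < \theta < -\tfrac{\pi}{2} \text{ or } 0 < \theta < \tfrac{\pi}{2} \\ -1 & \text{if } -\tfrac{\pi}{2} < \theta < 0 \text{ or } \tfrac{\pi}{2} < \theta < \pi \\ 0 & \text{if } \theta \in \{-\tfrac{\pi}{2}, 0, \tfrac{\pi}{2}, \pi\} \end{cases}$$

The trigonometric functions are periodic with common period $2\pi$, so for values of $\theta$ outside the interval $(-\pi, \pi]$ they take repeating values (see § Shifts and periodicity above).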

Angle sum and difference identities

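These are also known as the angle addition and subtraction theorems (or formulae).

$$\begin{aligned}
\sin(\alpha + \beta) &= \sin\alpha\cos\beta + \cos\alpha\sin\beta \\
\sin(\alpha - \beta) &= \sin\alpha\cos\beta - \cos\alpha\sin\beta \\
\cos(\alpha + \beta) &= \cos\alpha\cos\beta - \sin\alpha\sin\beta \\
\cos(\alpha - \beta) &= \cos\alpha\cos\beta + \sin\alpha\sin\beta
\end{aligned}$$

The angle difference identities for $\sin(\alpha - \beta)$ and $\cos(\alpha - \beta)$ can be derived from the angle sum versions by substituting $-\beta$ for $\beta$ and using the facts that $\sin(-\beta) = -\sin\beta$ and $\cos(-\beta) = \cos\beta$. They can also be derived by using a slightly modified version of the figure for the angle sum identities, both of which are shown here.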

These identities are summarized in the first two rows of the following table, which also includes sum and difference identities for the other trigonometric functions.

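| Function | Identity |
|---|---|
| Sine | $\sin(\alpha \pm \beta) = \sin\alpha\cos\beta \pm \cos\alpha\sin\beta$[5][6] |
| Cosine | $\cos(\alpha \pm \beta) = \cos\alpha\cos\beta \mp \sin\alpha\sin\beta$[6][7] |
| Tangent | $\tan(\alpha \pm \beta) = \dfrac{\tan\alpha \pm \tan\beta}{1 \mp \tan\alpha\tan\beta}$[6][8] |
| Cosecant | $\csc(\alpha \pm \beta) = \dfrac{\sec\alpha\sec\beta\csc\alpha\csc\beta}{\sec\alpha\csc\beta \pm \csc\alpha\sec\beta}$[9] |
| Secant | $\sec(\alpha \pm \beta) = \dfrac{\sec\alpha\sec\beta\csc\alpha\csc\beta}{\csc\alpha\csc\beta \mp \sec\alpha\sec\beta}$[9] |
| Cotangent | $\cot(\alpha \pm \beta) = \dfrac{\cot\alpha\cot\beta \mp 1}{\cot\beta \pm \cot\alpha}$[6][10] |
| Arcsine | $\arcsin x \pm \arcsin y = \arcsin\left(x\sqrt{1-y^2} \pm y\sqrt{1-x^2}\right)$[11] |
| Arccosine | $\arccos x \pm \arccos y = \arccos\left(xy \mp \sqrt{(1-x^2)(1-y^2)}\right)$[12] |
| Arctangent | $\arctan x \pm \arctan y = \arctan\left(\dfrac{x \pm y}{1 \mp xy}\right)$[13] |
| Arccotangent | $\operatorname{arccot} x \pm \operatorname{arccot} y = \operatorname{arccot}\left(\dfrac{xy \mp 1}{y \pm x}\right)$ |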

Sines and cosines of sums of infinitely many angles

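When the series $\sum_{i=1}^\infty \theta_i$ converges absolutely then

$$\sin\left(\sum_{i=1}^\infty \theta_i\right) = \sum_{\text{odd } k \ge 1} (-1)^{\frac{k-1}{2}} \sum_{\substack{A \subseteq \{1,2,3,\dots\} \\ |A| = k}} \left(\prod_{i \in A} \sin\theta_i \prod_{i \notin A} \cos\theta_i\right)$$

$$\cos\left(\sum_{i=1}^\infty \theta_i\right) = \sum_{\text{even } k \ge 0} (-1)^{\frac{k}{2}} \sum_{\substack{A \subseteq \{1,2,3,\dots\} \\ |A| = k}} \left(\prod_{i \in A} \sin\theta_i \prod_{i \notin A} \cos\theta_i\right)$$

Because the series $\sum_{i=1}^\infty \theta_i$ converges absolutely, it is necessarily the case that $\lim_{i\to\infty}\theta_i = 0$, $\lim_{i\to\infty}\sin\theta_i = 0$, and $\lim_{i\to\infty}\cos\theta_i = 1$. In particular, in these two identities, an asymmetry appears that is not seen in the case of sums of finitely many angles: in each product, there are only finitely many sine factors but there are cofinitely many cosine factors. Terms with infinitely many sine factors would necessarily be equal to zero.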

When only finitely many of the angles are nonzero then only finitely many of the terms on the right side are nonzero because all but finitely many sine factors vanish. Furthermore, in each term all but finitely many of the cosine factors are unity.

Tangents and cotangents of sums

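Let $e_k$ (for $k = 0, 1, 2, 3, \ldots$) be the $k$th-degree elementary symmetric polynomial in the variables $x_i = \tan\theta_i$ for $i = 0, 1, 2, 3, \ldots$, that is,

$$\begin{aligned}
e_0 &= 1 \\
e_1 &= \sum_i x_i = \sum_i \tan\theta_i \\
e_2 &= \sum_{i<j} x_i x_j = \sum_{i<j} \tan\theta_i\tan\theta_j \\
e_3 &= \sum_{i<j<k} x_i x_j x_k = \sum_{i<j<k} \tan\theta_i\tan\theta_j\tan\theta_k \\
&\ \ \vdots
\end{aligned}$$

Then

$$\tan\left(\sum_i \theta_i\right) = \frac{e_1 - e_3 + e_5 - \cdots}{e_0 - e_2 + e_4 - \cdots}, \qquad \cot\left(\sum_i \theta_i\right) = \frac{e_0 - e_2 + e_4 - \cdots}{e_1 - e_3 + e_5 - \cdots}.$$

This can be shown by using the sine and cosine sum formulae above:

$$\tan\left(\sum_i \theta_i\right) = \frac{\sin\left(\sum_i\theta_i\right) / \prod_i \cos\theta_i}{\cos\left(\sum_i\theta_i\right) / \prod_i \cos\theta_i} = \frac{e_1 - e_3 + e_5 - \cdots}{e_0 - e_2 + e_4 - \cdots}.$$

The number of terms on the right side depends on the number of terms on the left side.

For example:

$$\begin{aligned}
\tan(\theta_1 + \theta_2) &= \frac{e_1}{e_0 - e_2} = \frac{x_1 + x_2}{1 - x_1 x_2} = \frac{\tan\theta_1 + \tan\theta_2}{1 - \tan\theta_1\tan\theta_2}, \\
\tan(\theta_1 + \theta_2 + \theta_3) &= \frac{e_1 - e_3}{e_0 - e_2} = \frac{(x_1 + x_2 + x_3) - (x_1 x_2 x_3)}{1 - (x_1 x_2 + x_1 x_3 + x_2 x_3)}, \\
\tan(\theta_1 + \theta_2 + \theta_3 + \theta_4) &= \frac{e_1 - e_3}{e_0 - e_2 + e_4},
\end{aligned}$$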

and so on. The case of only finitely many terms can be proved by mathematical induction.[14] The case of infinitely many terms can be proved by using some elementary inequalities.[15]
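As an illustration of the pattern for finitely many angles, the following Python sketch evaluates the tangent of a sum from the individual tangents, taking the alternating sum of odd-degree elementary symmetric polynomials over the alternating sum of even-degree ones. The helper names `elementary_symmetric` and `tan_of_sum` are illustrative (not from any library), and the brute-force enumeration of subsets is only meant for a handful of angles.

```python
import math
from itertools import combinations

def elementary_symmetric(xs, k):
    """e_k(xs): sum of all products of k distinct entries of xs (e_0 = 1)."""
    return sum(math.prod(c) for c in combinations(xs, k))

def tan_of_sum(angles):
    """tan(theta_1 + ... + theta_n) computed only from the tangents tan(theta_i)."""
    xs = [math.tan(a) for a in angles]
    n = len(xs)
    # Numerator: e_1 - e_3 + e_5 - ...
    num = sum((-1) ** ((k - 1) // 2) * elementary_symmetric(xs, k)
              for k in range(1, n + 1, 2))
    # Denominator: e_0 - e_2 + e_4 - ...
    den = sum((-1) ** (k // 2) * elementary_symmetric(xs, k)
              for k in range(0, n + 1, 2))
    return num / den

angles = [0.1, 0.2, 0.3, 0.4]
print(tan_of_sum(angles), math.tan(sum(angles)))
```

The two printed values should agree up to floating-point rounding; the function divides by zero when the sum of the angles is an odd multiple of π/2, where the tangent is undefined.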

Linear fractional transformations of tangents, related to tangents of sums

Suppose and and

and let be any number for which Suppose that so that the foregoing fraction cannot be . Then for all [16]

(In case the denominator of this fraction is 0, we take the value of the fraction to be $\infty$, where the symbol $\infty$ does not mean either $+\infty$ or $-\infty$, but is the $\infty$ that is approached by going in either the positive or the negative direction, making the completion of the line topologically a circle.)

From this identity it can be shown to follow quickly that the family of all Cauchy-distributed random variables is closed under linear fractional transformations, a result known since 1976.[17]

Secants and cosecants of sums

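$$\sec\left(\sum_i \theta_i\right) = \frac{\prod_i \sec\theta_i}{e_0 - e_2 + e_4 - \cdots}, \qquad \csc\left(\sum_i \theta_i\right) = \frac{\prod_i \sec\theta_i}{e_1 - e_3 + e_5 - \cdots},$$

where $e_k$ is the $k$th-degree elementary symmetric polynomial in the $n$ variables $x_i = \tan\theta_i$, $i = 1, \ldots, n$, and the number of terms in the denominator and the number of factors in the product in the numerator depend on the number of terms in the sum on the left.[18] The case of only finitely many terms can be proved by mathematical induction on the number of such terms.

For example,

$$\begin{aligned}
\sec(\alpha + \beta + \gamma) &= \frac{\sec\alpha\sec\beta\sec\gamma}{1 - \tan\alpha\tan\beta - \tan\alpha\tan\gamma - \tan\beta\tan\gamma}, \\
\csc(\alpha + \beta + \gamma) &= \frac{\sec\alpha\sec\beta\sec\gamma}{\tan\alpha + \tan\beta + \tan\gamma - \tan\alpha\tan\beta\tan\gamma}.
\end{aligned}$$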

Ptolemy's theorem

Ptolemy's theorem is important in the history of trigonometric identities, as it is how results equivalent to the sum and difference formulas for sine and cosine were first proved. It states that in a cyclic quadrilateral $ABCD$, as shown in the accompanying figure, the sum of the products of the lengths of opposite sides is equal to the product of the lengths of the diagonals. In the special cases of one of the diagonals or sides being a diameter of the circle, this theorem gives rise directly to the angle sum and difference trigonometric identities.[19] The relationship follows most easily when the circle is constructed to have a diameter of length one, as shown here.

By Thales's theorem, $\angle DAB$ and $\angle DCB$ are both right angles. The right-angled triangles $DAB$ and $DCB$ both share the hypotenuse $\overline{BD}$ of length 1. Thus, the side $\overline{AB} = \sin\alpha$, $\overline{AD} = \cos\alpha$, $\overline{BC} = \sin\beta$, and $\overline{CD} = \cos\beta$.

By the inscribed angle theorem, the central angle subtended by the chord $\overline{AC}$ at the circle's center is twice the angle $\angle ADC$, i.e. $2(\alpha + \beta)$. Therefore, the symmetrical pair of red triangles each has the angle $\alpha + \beta$ at the center. Each of these triangles has a hypotenuse of length $\frac{1}{2}$, so the length of $\overline{AC}$ is $2 \times \frac{1}{2}\sin(\alpha + \beta)$, i.e. simply $\sin(\alpha + \beta)$. The quadrilateral's other diagonal is the diameter of length 1, so the product of the diagonals' lengths is also $\sin(\alpha + \beta)$.

When these values are substituted into the statement of Ptolemy's theorem that $|\overline{AC}| \cdot |\overline{BD}| = |\overline{AB}| \cdot |\overline{CD}| + |\overline{AD}| \cdot |\overline{BC}|$, this yields the angle sum trigonometric identity for sine: $\sin(\alpha + \beta) = \sin\alpha\cos\beta + \cos\alpha\sin\beta$. The angle difference formula for $\sin(\alpha - \beta)$ can be similarly derived by letting the side $\overline{CD}$ serve as a diameter instead of $\overline{BD}$.[19]

Multiple-angle and half-angle formulae

| $T_n$ is the $n$th Chebyshev polynomial | $\cos(n\theta) = T_n(\cos\theta)$[20] |
|---|---|
| de Moivre's formula, $i$ is the imaginary unit | $\cos(n\theta) + i\sin(n\theta) = (\cos\theta + i\sin\theta)^n$[21] |

Multiple-angle formulae

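Double-angle formulae

Formulae for twice an angle.[22]

$$\begin{aligned}
\sin(2\theta) &= 2\sin\theta\cos\theta = (\sin\theta + \cos\theta)^2 - 1 = \frac{2\tan\theta}{1 + \tan^2\theta} \\
\cos(2\theta) &= \cos^2\theta - \sin^2\theta = 2\cos^2\theta - 1 = 1 - 2\sin^2\theta = \frac{1 - \tan^2\theta}{1 + \tan^2\theta} \\
\tan(2\theta) &= \frac{2\tan\theta}{1 - \tan^2\theta} \\
\cot(2\theta) &= \frac{\cot^2\theta - 1}{2\cot\theta} = \frac{1 - \tan^2\theta}{2\tan\theta} \\
\sec(2\theta) &= \frac{\sec^2\theta}{2 - \sec^2\theta} = \frac{1 + \tan^2\theta}{1 - \tan^2\theta} \\
\csc(2\theta) &= \frac{\sec\theta\csc\theta}{2} = \frac{1 + \tan^2\theta}{2\tan\theta}
\end{aligned}$$

Triple-angle formulae

Formulae for triple angles.[22]

$$\begin{aligned}
\sin(3\theta) &= 3\sin\theta - 4\sin^3\theta = 4\sin\theta\sin\left(\tfrac{\pi}{3} - \theta\right)\sin\left(\tfrac{\pi}{3} + \theta\right) \\
\cos(3\theta) &= 4\cos^3\theta - 3\cos\theta = 4\cos\theta\cos\left(\tfrac{\pi}{3} - \theta\right)\cos\left(\tfrac{\pi}{3} + \theta\right) \\
\tan(3\theta) &= \frac{3\tan\theta - \tan^3\theta}{1 - 3\tan^2\theta} = \tan\theta\tan\left(\tfrac{\pi}{3} - \theta\right)\tan\left(\tfrac{\pi}{3} + \theta\right) \\
\cot(3\theta) &= \frac{3\cot\theta - \cot^3\theta}{1 - 3\cot^2\theta} \\
\sec(3\theta) &= \frac{\sec^3\theta}{4 - 3\sec^2\theta} \\
\csc(3\theta) &= \frac{\csc^3\theta}{3\csc^2\theta - 4}
\end{aligned}$$

Multiple-angle formulae

Formulae for multiple angles.[23]

$$\begin{aligned}
\sin(n\theta) &= \sum_{k \text{ odd}} (-1)^{\frac{k-1}{2}} \binom{n}{k} \cos^{n-k}\theta\,\sin^k\theta \\
\cos(n\theta) &= \sum_{k \text{ even}} (-1)^{\frac{k}{2}} \binom{n}{k} \cos^{n-k}\theta\,\sin^k\theta \\
\tan(n\theta) &= \frac{\sum_{k \text{ odd}} (-1)^{\frac{k-1}{2}} \binom{n}{k} \tan^k\theta}{\sum_{k \text{ even}} (-1)^{\frac{k}{2}} \binom{n}{k} \tan^k\theta}
\end{aligned}$$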

Chebyshev method

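The Chebyshev method is a recursive algorithm for finding the $n$th multiple angle formula knowing the $(n-1)$th and $(n-2)$th values.[24]

$\cos(nx)$ can be computed from $\cos((n-1)x)$, $\cos((n-2)x)$, and $\cos x$ with

$$\cos(nx) = 2\cos x\cos((n-1)x) - \cos((n-2)x).$$

This can be proved by adding together the formulae

$$\begin{aligned}
\cos((n-1)x + x) &= \cos((n-1)x)\cos x - \sin((n-1)x)\sin x \\
\cos((n-1)x - x) &= \cos((n-1)x)\cos x + \sin((n-1)x)\sin x
\end{aligned}$$

It follows by induction that $\cos(nx)$ is a polynomial of $\cos x$, the so-called Chebyshev polynomial of the first kind, see Chebyshev polynomials#Trigonometric definition.

Similarly, $\sin(nx)$ can be computed from $\sin((n-1)x)$, $\sin((n-2)x)$, and $\cos x$ with $\sin(nx) = 2\cos x\sin((n-1)x) - \sin((n-2)x)$. This can be proved by adding formulae for $\sin((n-1)x + x)$ and $\sin((n-1)x - x)$.

Serving a purpose similar to that of the Chebyshev method, for the tangent we can write:

$$\tan(nx) = \frac{\tan((n-1)x) + \tan x}{1 - \tan((n-1)x)\tan x}.$$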
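The recurrence of the Chebyshev method, in which each new value is 2 cos x times the previous value minus the one before it, can be sketched in a few lines of Python. The function names `cos_multiples` and `sin_multiples` are illustrative choices, and the sketch assumes ordinary double-precision floats, so rounding error grows slowly with n.

```python
import math

def cos_multiples(x, n):
    """Return [cos(0*x), cos(1*x), ..., cos(n*x)] using cos(x) and the recurrence."""
    c = math.cos(x)
    values = [1.0, c]  # cos(0) and cos(x) seed the recurrence
    for _ in range(2, n + 1):
        # cos(kx) = 2 cos(x) cos((k-1)x) - cos((k-2)x)
        values.append(2 * c * values[-1] - values[-2])
    return values[: n + 1]

def sin_multiples(x, n):
    """Return [sin(0*x), sin(1*x), ..., sin(n*x)] using the same recurrence."""
    c = math.cos(x)
    values = [0.0, math.sin(x)]  # sin(0) and sin(x) seed the recurrence
    for _ in range(2, n + 1):
        # sin(kx) = 2 cos(x) sin((k-1)x) - sin((k-2)x)
        values.append(2 * c * values[-1] - values[-2])
    return values[: n + 1]

for k, value in enumerate(cos_multiples(0.3, 5)):
    print(k, value, math.cos(k * 0.3))
```

Only one cosine (and, for the sine list, one sine) is evaluated directly; every further multiple costs one multiplication and one subtraction.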

Half-angle formulae

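$$\begin{aligned}
\sin\frac{\theta}{2} &= \operatorname{sgn}\left(\sin\frac{\theta}{2}\right)\sqrt{\frac{1 - \cos\theta}{2}} \\
\cos\frac{\theta}{2} &= \operatorname{sgn}\left(\cos\frac{\theta}{2}\right)\sqrt{\frac{1 + \cos\theta}{2}} \\
\tan\frac{\theta}{2} &= \frac{1 - \cos\theta}{\sin\theta} = \frac{\sin\theta}{1 + \cos\theta} = \csc\theta - \cot\theta = \operatorname{sgn}(\sin\theta)\sqrt{\frac{1 - \cos\theta}{1 + \cos\theta}} \\
\cot\frac{\theta}{2} &= \frac{1 + \cos\theta}{\sin\theta} = \frac{\sin\theta}{1 - \cos\theta} = \csc\theta + \cot\theta = \operatorname{sgn}(\sin\theta)\sqrt{\frac{1 + \cos\theta}{1 - \cos\theta}}
\end{aligned}$$

Also

$$\begin{aligned}
\tan\frac{\eta \pm \theta}{2} &= \frac{\sin\eta \pm \sin\theta}{\cos\eta + \cos\theta} \\
\tan\left(\frac{\theta}{2} + \frac{\pi}{4}\right) &= \sec\theta + \tan\theta
\end{aligned}$$

Table

These can be shown by using either the sum and difference identities or the multiple-angle formulae.

| | Sine | Cosine | Tangent | Cotangent |
|---|---|---|---|---|
| Double-angle formula[27][28] | $\sin(2\theta) = 2\sin\theta\cos\theta = \dfrac{2\tan\theta}{1 + \tan^2\theta}$ | $\cos(2\theta) = \cos^2\theta - \sin^2\theta = 2\cos^2\theta - 1 = 1 - 2\sin^2\theta = \dfrac{1 - \tan^2\theta}{1 + \tan^2\theta}$ | $\tan(2\theta) = \dfrac{2\tan\theta}{1 - \tan^2\theta}$ | $\cot(2\theta) = \dfrac{\cot^2\theta - 1}{2\cot\theta}$ |
| Triple-angle formula[20][29] | $\sin(3\theta) = -\sin^3\theta + 3\cos^2\theta\sin\theta = -4\sin^3\theta + 3\sin\theta$ | $\cos(3\theta) = \cos^3\theta - 3\sin^2\theta\cos\theta = 4\cos^3\theta - 3\cos\theta$ | $\tan(3\theta) = \dfrac{3\tan\theta - \tan^3\theta}{1 - 3\tan^2\theta}$ | $\cot(3\theta) = \dfrac{3\cot\theta - \cot^3\theta}{1 - 3\cot^2\theta}$ |
| Half-angle formula[25][26] | $\sin\dfrac{\theta}{2} = \operatorname{sgn}\left(\sin\dfrac{\theta}{2}\right)\sqrt{\dfrac{1 - \cos\theta}{2}}$ | $\cos\dfrac{\theta}{2} = \operatorname{sgn}\left(\cos\dfrac{\theta}{2}\right)\sqrt{\dfrac{1 + \cos\theta}{2}}$ | $\tan\dfrac{\theta}{2} = \csc\theta - \cot\theta = \dfrac{\sin\theta}{1 + \cos\theta} = \dfrac{1 - \cos\theta}{\sin\theta}$ | $\cot\dfrac{\theta}{2} = \csc\theta + \cot\theta = \dfrac{\sin\theta}{1 - \cos\theta} = \dfrac{1 + \cos\theta}{\sin\theta}$ |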

The fact that the triple-angle formula for sine and cosine only involves powers of a single function allows one to relate the geometric problem of a compass and straightedge construction of angle trisection to the algebraic problem of solving a cubic equation, which allows one to prove that trisection is in general impossible using the given tools.

A formula for computing the trigonometric identities for the one-third angle exists, but it requires finding the zeroes of the cubic equation $4x^3 - 3x - d = 0$, where $x$ is the value of the cosine function at the one-third angle and $d$ is the known value of the cosine function at the full angle (this is the triple-angle formula $\cos 3\theta = 4\cos^3\theta - 3\cos\theta$ rearranged). However, the discriminant of this equation is positive, so this equation has three real roots (of which only one is the solution for the cosine of the one-third angle). None of these solutions are reducible to a real algebraic expression, as they use intermediate complex numbers under the cube roots.

Power-reduction formulae

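Obtained by solving the second and third versions of the cosine double-angle formula.

| Sine | Cosine | Other |
|---|---|---|
| $\sin^2\theta = \dfrac{1 - \cos(2\theta)}{2}$ | $\cos^2\theta = \dfrac{1 + \cos(2\theta)}{2}$ | $\sin^2\theta\cos^2\theta = \dfrac{1 - \cos(4\theta)}{8}$ |
| $\sin^3\theta = \dfrac{3\sin\theta - \sin(3\theta)}{4}$ | $\cos^3\theta = \dfrac{3\cos\theta + \cos(3\theta)}{4}$ | $\sin^3\theta\cos^3\theta = \dfrac{3\sin(2\theta) - \sin(6\theta)}{32}$ |
| $\sin^4\theta = \dfrac{3 - 4\cos(2\theta) + \cos(4\theta)}{8}$ | $\cos^4\theta = \dfrac{3 + 4\cos(2\theta) + \cos(4\theta)}{8}$ | $\sin^4\theta\cos^4\theta = \dfrac{3 - 4\cos(4\theta) + \cos(8\theta)}{128}$ |
| $\sin^5\theta = \dfrac{10\sin\theta - 5\sin(3\theta) + \sin(5\theta)}{16}$ | $\cos^5\theta = \dfrac{10\cos\theta + 5\cos(3\theta) + \cos(5\theta)}{16}$ | $\sin^5\theta\cos^5\theta = \dfrac{10\sin(2\theta) - 5\sin(6\theta) + \sin(10\theta)}{512}$ |

In general terms of powers of $\sin\theta$ or $\cos\theta$ the following is true, and can be deduced using De Moivre's formula, Euler's formula and the binomial theorem.

| if $n$ is ... | $\cos^n\theta$ | $\sin^n\theta$ |
|---|---|---|
| $n$ is odd | $\cos^n\theta = \dfrac{2}{2^n}\displaystyle\sum_{k=0}^{\frac{n-1}{2}} \binom{n}{k}\cos((n-2k)\theta)$ | $\sin^n\theta = \dfrac{2}{2^n}\displaystyle\sum_{k=0}^{\frac{n-1}{2}} (-1)^{\left(\frac{n-1}{2}-k\right)}\binom{n}{k}\sin((n-2k)\theta)$ |
| $n$ is even | $\cos^n\theta = \dfrac{1}{2^n}\dbinom{n}{n/2} + \dfrac{2}{2^n}\displaystyle\sum_{k=0}^{\frac{n}{2}-1} \binom{n}{k}\cos((n-2k)\theta)$ | $\sin^n\theta = \dfrac{1}{2^n}\dbinom{n}{n/2} + \dfrac{2}{2^n}\displaystyle\sum_{k=0}^{\frac{n}{2}-1} (-1)^{\left(\frac{n}{2}-k\right)}\binom{n}{k}\cos((n-2k)\theta)$ |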
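The general power-reduction pattern can be checked numerically. The Python sketch below writes cosⁿθ and sinⁿθ as binomial-weighted sums of cosines or sines of multiple angles, treating odd and even n separately; the function names `cos_power_reduced` and `sin_power_reduced` are illustrative, and the formulas coded here are the standard binomial expansions obtained from Euler's formula.

```python
import math

def cos_power_reduced(theta, n):
    """cos(theta)**n written as a sum of cosines of multiples of theta."""
    scale = 2 / 2 ** n
    if n % 2 == 1:
        return scale * sum(math.comb(n, k) * math.cos((n - 2 * k) * theta)
                           for k in range((n - 1) // 2 + 1))
    # Even n: constant term plus cosines of even multiples.
    return math.comb(n, n // 2) / 2 ** n + scale * sum(
        math.comb(n, k) * math.cos((n - 2 * k) * theta) for k in range(n // 2))

def sin_power_reduced(theta, n):
    """sin(theta)**n written as a sum of sines (odd n) or cosines (even n)."""
    scale = 2 / 2 ** n
    if n % 2 == 1:
        half = (n - 1) // 2
        return scale * sum((-1) ** (half - k) * math.comb(n, k)
                           * math.sin((n - 2 * k) * theta)
                           for k in range(half + 1))
    half = n // 2
    return math.comb(n, half) / 2 ** n + scale * sum(
        (-1) ** (half - k) * math.comb(n, k) * math.cos((n - 2 * k) * theta)
        for k in range(half))

theta = 0.37
for n in range(1, 6):
    print(n, cos_power_reduced(theta, n), math.cos(theta) ** n)
```

For n = 2 the even branch reduces to the familiar (1 ± cos 2θ)/2.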

Product-to-sum and sum-to-product identities


The product-to-sum identities[30] or prosthaphaeresis formulae can be proven by expanding their right-hand sides using the angle addition theorems. Historically, the first four of these were known as Werner's formulas, after Johannes Werner who used them for astronomical calculations.[31] See amplitude modulation for an application of the product-to-sum formulae, and beat (acoustics) and phase detector for applications of the sum-to-product formulae.

Product-to-sum identities

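The product of two sines or cosines of different angles can be converted to a sum of trigonometric functions of a sum and difference of those angles:

$$\begin{aligned}
\cos\theta\cos\varphi &= \tfrac{1}{2}\bigl(\cos(\theta - \varphi) + \cos(\theta + \varphi)\bigr) \\
\sin\theta\sin\varphi &= \tfrac{1}{2}\bigl(\cos(\theta - \varphi) - \cos(\theta + \varphi)\bigr) \\
\sin\theta\cos\varphi &= \tfrac{1}{2}\bigl(\sin(\theta + \varphi) + \sin(\theta - \varphi)\bigr) \\
\cos\theta\sin\varphi &= \tfrac{1}{2}\bigl(\sin(\theta + \varphi) - \sin(\theta - \varphi)\bigr)
\end{aligned}$$

As a corollary, the product or quotient of tangents can be converted to a quotient of sums of cosines or sines, respectively,

$$\tan\theta\tan\varphi = \frac{\cos(\theta - \varphi) - \cos(\theta + \varphi)}{\cos(\theta - \varphi) + \cos(\theta + \varphi)}, \qquad \frac{\tan\theta}{\tan\varphi} = \frac{\sin(\theta + \varphi) + \sin(\theta - \varphi)}{\sin(\theta + \varphi) - \sin(\theta - \varphi)}.$$

More generally, for a product of any number of sines or cosines,[citation needed]

$$\prod_{k=1}^n \cos\theta_k = \frac{1}{2^n}\sum_{e \in S} \cos(e_1\theta_1 + \cdots + e_n\theta_n), \qquad S = \{1, -1\}^n,$$

$$\prod_{k=1}^n \sin\theta_k = \frac{(-1)^{\lfloor n/2 \rfloor}}{2^n} \begin{cases} \displaystyle\sum_{e \in S} \cos(e_1\theta_1 + \cdots + e_n\theta_n)\prod_{j=1}^n e_j & \text{if } n \text{ is even}, \\ \displaystyle\sum_{e \in S} \sin(e_1\theta_1 + \cdots + e_n\theta_n)\prod_{j=1}^n e_j & \text{if } n \text{ is odd}. \end{cases}$$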
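For a product of several cosines, one way to make the product-to-sum conversion concrete is to sum the cosine of every signed combination of the angles and divide by 2ⁿ. The following Python sketch does this by enumerating all sign vectors in {1, −1}ⁿ; the function name `cosine_product_as_sum` is illustrative, and the enumeration costs 2ⁿ evaluations, so it is a check rather than an efficient method.

```python
import math
from itertools import product

def cosine_product_as_sum(angles):
    """Rewrite cos(a_1)*...*cos(a_n) as an average of cosines of signed sums."""
    n = len(angles)
    total = 0.0
    for signs in product((1, -1), repeat=n):
        # Each term is the cosine of e_1*a_1 + ... + e_n*a_n with e_i = +1 or -1.
        total += math.cos(sum(e * a for e, a in zip(signs, angles)))
    return total / 2 ** n

angles = [0.3, 1.1, 2.0]
print(cosine_product_as_sum(angles), math.prod(math.cos(a) for a in angles))
```

With two angles this reduces to the first product-to-sum identity, since the four sign vectors contribute cos(θ + φ) and cos(θ − φ) twice each.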

Sum-to-product identities

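The sum of sines or cosines of two angles can be converted to a product of sines or cosines of the mean and half the difference of the angles:[32]

$$\begin{aligned}
\sin\theta \pm \sin\varphi &= 2\sin\left(\frac{\theta \pm \varphi}{2}\right)\cos\left(\frac{\theta \mp \varphi}{2}\right) \\
\cos\theta + \cos\varphi &= 2\cos\left(\frac{\theta + \varphi}{2}\right)\cos\left(\frac{\theta - \varphi}{2}\right) \\
\cos\theta - \cos\varphi &= -2\sin\left(\frac{\theta + \varphi}{2}\right)\sin\left(\frac{\theta - \varphi}{2}\right)
\end{aligned}$$

The sum or difference of the tangents of two angles can be converted to the sine of the sum or difference of the angles divided by the product of their cosines:[32]

$$\tan\theta \pm \tan\varphi = \frac{\sin(\theta \pm \varphi)}{\cos\theta\cos\varphi}$$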

Hermite's cotangent identity

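Charles Hermite demonstrated the following identity.[33] Suppose $a_1, \ldots, a_n$ are complex numbers, no two of which differ by an integer multiple of π. Let

$$A_{n,k} = \prod_{\substack{1 \le j \le n \\ j \ne k}} \cot(a_k - a_j)$$

(in particular, $A_{1,1}$, being an empty product, is 1). Then

$$\cot(z - a_1)\cdots\cot(z - a_n) = \cos\frac{n\pi}{2} + \sum_{k=1}^n A_{n,k}\cot(z - a_k).$$

The simplest non-trivial example is the case n = 2:

$$\cot(z - a_1)\cot(z - a_2) = -1 + \cot(a_1 - a_2)\cot(z - a_1) + \cot(a_2 - a_1)\cot(z - a_2).$$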

Finite products of trigonometric functions

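For coprime integers n, m

$$\prod_{k=1}^n \left(2a + 2\cos\left(\frac{2\pi k m}{n} + x\right)\right) = 2\left(T_n(a) + (-1)^{n+m}\cos(nx)\right)$$

where $T_n$ is the Chebyshev polynomial.[citation needed]

The following relationship holds for the sine function

$$\prod_{k=1}^{n-1} \sin\left(\frac{k\pi}{n}\right) = \frac{n}{2^{n-1}}.$$

More generally for an integer n > 0[34]

$$\sin(nx) = 2^{n-1}\prod_{k=0}^{n-1} \sin\left(\frac{k\pi}{n} + x\right) = 2^{n-1}\prod_{k=1}^{n} \sin\left(\frac{k\pi}{n} - x\right),$$

or written in terms of the chord function $\operatorname{crd} x \equiv 2\sin\tfrac{1}{2}x$,

$$\operatorname{crd}(nx) = \prod_{k=1}^{n} \operatorname{crd}\left(\frac{2k\pi}{n} - x\right).$$

This comes from the factorization of the polynomial $z^n - 1$ into linear factors (cf. root of unity): For any complex z and an integer n > 0,

$$z^n - 1 = \prod_{k=1}^n \left(z - \exp\left(\frac{2k\pi i}{n}\right)\right).$$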
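The finite sine products in this section are easy to sanity-check numerically. The Python sketch below multiplies sin(kπ/n) for k = 1, …, n − 1, which should equal n/2ⁿ⁻¹, and also evaluates 2ⁿ⁻¹ times the product of sin(kπ/n + x) for k = 0, …, n − 1, which should equal sin(nx). The function names are illustrative, and agreement is only up to floating-point rounding.

```python
import math

def sine_product(n):
    """Product of sin(k*pi/n) for k = 1, ..., n-1."""
    return math.prod(math.sin(k * math.pi / n) for k in range(1, n))

def sin_multiple_via_product(n, x):
    """2**(n-1) times the product of sin(k*pi/n + x) for k = 0, ..., n-1."""
    return 2 ** (n - 1) * math.prod(
        math.sin(k * math.pi / n + x) for k in range(n))

for n in range(2, 8):
    print(n, sine_product(n), n / 2 ** (n - 1))
print(sin_multiple_via_product(5, 0.4), math.sin(5 * 0.4))
```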

Linear combinations

For some purposes it is important to know that any linear combination of sine waves of the same period or frequency but different phase shifts is also a sine wave with the same period or frequency, but a different phase shift. This is useful in sinusoid data fitting, because the measured or observed data are linearly related to the a and b unknowns of the in-phase and quadrature components basis below, resulting in a simpler Jacobian, compared to that of $c$ and $\varphi$.

Sine and cosine

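The linear combination, or harmonic addition, of sine and cosine waves is equivalent to a single sine wave with a phase shift and scaled amplitude,[35][36]

$$a\cos x + b\sin x = c\cos(x + \varphi)$$

where $c$ and $\varphi$ are defined as so:

$$c = \operatorname{sgn}(a)\sqrt{a^2 + b^2}, \qquad \varphi = \arctan\left(-\frac{b}{a}\right),$$

given that $a \ne 0$.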

Arbitrary phase shift

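More generally, for arbitrary phase shifts, we have

$$a\sin(x + \theta_a) + b\sin(x + \theta_b) = c\sin(x + \varphi)$$

where $c$ and $\varphi$ satisfy:

$$c^2 = a^2 + b^2 + 2ab\cos(\theta_a - \theta_b), \qquad \tan\varphi = \frac{a\sin\theta_a + b\sin\theta_b}{a\cos\theta_a + b\cos\theta_b}.$$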

More than two sinusoids

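The general case reads[36]

$$\sum_i a_i\sin(x + \theta_i) = a\sin(x + \theta),$$

where

$$a^2 = \sum_{i,j} a_i a_j\cos(\theta_i - \theta_j)$$

and

$$\tan\theta = \frac{\sum_i a_i\sin\theta_i}{\sum_i a_i\cos\theta_i}.$$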
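As a small numerical illustration of harmonic addition, the Python sketch below converts a·cos x + b·sin x into a single cosine c·cos(x + φ). It deliberately swaps in a non-negative amplitude from `math.hypot` and a phase from `math.atan2(-b, a)` rather than a signed amplitude with a plain arctangent; this is an equivalent parametrization chosen here because it also covers a = 0. The function name `harmonic_addition` is illustrative.

```python
import math

def harmonic_addition(a, b):
    """Return (c, phi) such that a*cos(x) + b*sin(x) == c*cos(x + phi) for all x."""
    c = math.hypot(a, b)      # amplitude sqrt(a^2 + b^2), always >= 0
    phi = math.atan2(-b, a)   # chosen so that c*cos(phi) = a and -c*sin(phi) = b
    return c, phi

a, b = 3.0, -4.0
c, phi = harmonic_addition(a, b)
for x in (0.0, 0.5, 2.0):
    print(a * math.cos(x) + b * math.sin(x), c * math.cos(x + phi))
```

Expanding c·cos(x + φ) = c cos φ cos x − c sin φ sin x shows why the phase must satisfy c cos φ = a and c sin φ = −b.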

Lagrange's trigonometric identities

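These identities, named after Joseph Louis Lagrange, are:[37][38][39] for $\theta \not\equiv 0 \pmod{2\pi}$

$$\sum_{k=0}^n \sin k\theta = \frac{\cos\tfrac{1}{2}\theta - \cos\left(\left(n + \tfrac{1}{2}\right)\theta\right)}{2\sin\tfrac{1}{2}\theta}, \qquad \sum_{k=0}^n \cos k\theta = \frac{\sin\tfrac{1}{2}\theta + \sin\left(\left(n + \tfrac{1}{2}\right)\theta\right)}{2\sin\tfrac{1}{2}\theta}.$$

A related function is the Dirichlet kernel:

$$D_n(\theta) = 1 + 2\sum_{k=1}^n \cos k\theta = \frac{\sin\left(\left(n + \tfrac{1}{2}\right)\theta\right)}{\sin\tfrac{1}{2}\theta}.$$

A similar identity is[40]

$$\sum_{k=1}^n \cos(2k-1)\alpha = \frac{\sin(2n\alpha)}{2\sin\alpha}.$$

The proof is the following. By using the angle sum and difference identities, $\sin(A + B) - \sin(A - B) = 2\cos A\sin B$. Then let's examine the following formula,

$$2\sin\alpha\sum_{k=1}^n \cos(2k-1)\alpha = 2\sin\alpha\cos\alpha + 2\sin\alpha\cos 3\alpha + \cdots + 2\sin\alpha\cos(2n-1)\alpha$$

and this formula can be written by using the above identity,

$$2\sin\alpha\sum_{k=1}^n \cos(2k-1)\alpha = \sum_{k=1}^n \bigl(\sin(2k\alpha) - \sin(2(k-1)\alpha)\bigr) = \sin(2n\alpha).$$

So, dividing this formula by $2\sin\alpha$ completes the proof.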
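The closed forms for the sums of sines and cosines of equally spaced angles can be compared against direct summation. The Python sketch below does so for Lagrange's two identities and for the sum of cosines of odd multiples; the function names are illustrative, and the closed forms assume sin(θ/2) ≠ 0 (respectively sin α ≠ 0).

```python
import math

def lagrange_sine_sum(theta, n):
    """Closed form for sin(0*theta) + sin(1*theta) + ... + sin(n*theta)."""
    return (math.cos(theta / 2) - math.cos((n + 0.5) * theta)) / (2 * math.sin(theta / 2))

def lagrange_cosine_sum(theta, n):
    """Closed form for cos(0*theta) + cos(1*theta) + ... + cos(n*theta)."""
    return (math.sin(theta / 2) + math.sin((n + 0.5) * theta)) / (2 * math.sin(theta / 2))

def odd_cosine_sum(alpha, n):
    """Closed form for cos(alpha) + cos(3*alpha) + ... + cos((2n-1)*alpha)."""
    return math.sin(2 * n * alpha) / (2 * math.sin(alpha))

theta, n = 0.7, 9
print(sum(math.sin(k * theta) for k in range(n + 1)), lagrange_sine_sum(theta, n))
print(sum(math.cos(k * theta) for k in range(n + 1)), lagrange_cosine_sum(theta, n))
```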

Certain linear fractional transformations

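If $f(x)$ is given by the linear fractional transformation

$$f(x) = \frac{(\cos\alpha)x - \sin\alpha}{(\sin\alpha)x + \cos\alpha},$$

and similarly

$$g(x) = \frac{(\cos\beta)x - \sin\beta}{(\sin\beta)x + \cos\beta},$$

then

$$f(g(x)) = g(f(x)) = \frac{(\cos(\alpha + \beta))x - \sin(\alpha + \beta)}{(\sin(\alpha + \beta))x + \cos(\alpha + \beta)}.$$

More tersely stated, if for all $\alpha$ we let $f_\alpha$ be what we called $f$ above, then

$$f_\alpha \circ f_\beta = f_{\alpha + \beta}.$$

If $x$ is the slope of a line, then $f(x)$ is the slope of its rotation through an angle of $-\alpha$.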

Relation to the complex exponential function

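Euler's formula states that, for any real number x:[41]

$$e^{ix} = \cos x + i\sin x,$$

where i is the imaginary unit. Substituting −x for x gives us:

$$e^{-ix} = \cos(-x) + i\sin(-x) = \cos x - i\sin x.$$

These two equations can be used to solve for cosine and sine in terms of the exponential function. Specifically,[42][43]

$$\cos x = \frac{e^{ix} + e^{-ix}}{2}, \qquad \sin x = \frac{e^{ix} - e^{-ix}}{2i}.$$

These formulae are useful for proving many other trigonometric identities. For example, that $e^{i(\theta + \varphi)} = e^{i\theta}e^{i\varphi}$ means that

$$\cos(\theta + \varphi) + i\sin(\theta + \varphi) = (\cos\theta + i\sin\theta)(\cos\varphi + i\sin\varphi) = (\cos\theta\cos\varphi - \sin\theta\sin\varphi) + i(\cos\theta\sin\varphi + \sin\theta\cos\varphi).$$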

That the real part of the left hand side equals the real part of the right hand side is an angle addition formula for cosine. The equality of the imaginary parts gives an angle addition formula for sine.
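The argument via complex exponentials can be replayed numerically with Python's standard `cmath` module: multiplying e^{iθ} by e^{iφ} and reading off the real and imaginary parts should reproduce cos(θ + φ) and sin(θ + φ). The function names below are illustrative, and agreement is up to floating-point rounding.

```python
import cmath
import math

def angle_addition_via_euler(theta, phi):
    """Return (cos(theta+phi), sin(theta+phi)) read off from e^{i theta} * e^{i phi}."""
    z = cmath.exp(1j * theta) * cmath.exp(1j * phi)
    return z.real, z.imag

def cos_from_exponentials(x):
    """cos x = (e^{ix} + e^{-ix}) / 2."""
    return ((cmath.exp(1j * x) + cmath.exp(-1j * x)) / 2).real

def sin_from_exponentials(x):
    """sin x = (e^{ix} - e^{-ix}) / (2i)."""
    return ((cmath.exp(1j * x) - cmath.exp(-1j * x)) / 2j).real

theta, phi = 0.7, 1.9
print(angle_addition_via_euler(theta, phi))
print(math.cos(theta + phi), math.sin(theta + phi))
```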

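The following table expresses the trigonometric functions and their inverses in terms of the exponential function and the complex logarithm.

| Function | Inverse function[44] |
|---|---|
| $\sin\theta = \dfrac{e^{i\theta} - e^{-i\theta}}{2i}$ | $\arcsin x = -i\ln\left(ix + \sqrt{1 - x^2}\right)$ |
| $\cos\theta = \dfrac{e^{i\theta} + e^{-i\theta}}{2}$ | $\arccos x = -i\ln\left(x + \sqrt{x^2 - 1}\right)$ |
| $\tan\theta = -i\,\dfrac{e^{i\theta} - e^{-i\theta}}{e^{i\theta} + e^{-i\theta}}$ | $\arctan x = \dfrac{i}{2}\ln\left(\dfrac{i + x}{i - x}\right)$ |
| $\csc\theta = \dfrac{2i}{e^{i\theta} - e^{-i\theta}}$ | $\operatorname{arccsc} x = -i\ln\left(\dfrac{i}{x} + \sqrt{1 - \dfrac{1}{x^2}}\right)$ |
| $\sec\theta = \dfrac{2}{e^{i\theta} + e^{-i\theta}}$ | $\operatorname{arcsec} x = -i\ln\left(\dfrac{1}{x} + i\sqrt{1 - \dfrac{1}{x^2}}\right)$ |
| $\cot\theta = i\,\dfrac{e^{i\theta} + e^{-i\theta}}{e^{i\theta} - e^{-i\theta}}$ | $\operatorname{arccot} x = \dfrac{i}{2}\ln\left(\dfrac{x - i}{x + i}\right)$ |
| $\operatorname{cis}\theta = e^{i\theta}$ | $\operatorname{arccis} x = -i\ln x$ |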

Relation to complex hyperbolic functions

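Trigonometric functions may be deduced from hyperbolic functions with complex arguments. The formulae for the relations are shown below.[45][46]

$$\begin{aligned}
\sin x &= -i\sinh(ix) & \cos x &= \cosh(ix) & \tan x &= -i\tanh(ix) \\
\cot x &= i\coth(ix) & \sec x &= \operatorname{sech}(ix) & \csc x &= i\operatorname{csch}(ix)
\end{aligned}$$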

Series expansion

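When using a power series expansion to define trigonometric functions, the following identities are obtained:[47]

$$\sin x = x - \frac{x^3}{3!} + \frac{x^5}{5!} - \frac{x^7}{7!} + \cdots = \sum_{n=0}^\infty \frac{(-1)^n x^{2n+1}}{(2n+1)!}, \qquad \cos x = 1 - \frac{x^2}{2!} + \frac{x^4}{4!} - \frac{x^6}{6!} + \cdots = \sum_{n=0}^\infty \frac{(-1)^n x^{2n}}{(2n)!}.$$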

Infinite product formulae

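For applications to special functions, the following infinite product formulae for trigonometric functions are useful:[48][49]

$$\begin{aligned}
\sin x &= x\prod_{n=1}^\infty \left(1 - \frac{x^2}{\pi^2 n^2}\right), & \cos x &= \prod_{n=1}^\infty \left(1 - \frac{x^2}{\pi^2\left(n - \frac{1}{2}\right)^2}\right), \\
\sinh x &= x\prod_{n=1}^\infty \left(1 + \frac{x^2}{\pi^2 n^2}\right), & \cosh x &= \prod_{n=1}^\infty \left(1 + \frac{x^2}{\pi^2\left(n - \frac{1}{2}\right)^2}\right).
\end{aligned}$$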

Inverse trigonometric functions

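The following identities give the result of composing a trigonometric function with an inverse trigonometric function.[50]

$$\begin{aligned}
\sin(\arcsin x) &= x & \cos(\arcsin x) &= \sqrt{1 - x^2} & \tan(\arcsin x) &= \frac{x}{\sqrt{1 - x^2}} \\
\sin(\arccos x) &= \sqrt{1 - x^2} & \cos(\arccos x) &= x & \tan(\arccos x) &= \frac{\sqrt{1 - x^2}}{x} \\
\sin(\arctan x) &= \frac{x}{\sqrt{1 + x^2}} & \cos(\arctan x) &= \frac{1}{\sqrt{1 + x^2}} & \tan(\arctan x) &= x \\
\sin(\operatorname{arccsc} x) &= \frac{1}{x} & \cos(\operatorname{arccsc} x) &= \frac{\sqrt{x^2 - 1}}{x} & \tan(\operatorname{arccsc} x) &= \frac{1}{\sqrt{x^2 - 1}} \\
\sin(\operatorname{arcsec} x) &= \frac{\sqrt{x^2 - 1}}{x} & \cos(\operatorname{arcsec} x) &= \frac{1}{x} & \tan(\operatorname{arcsec} x) &= \sqrt{x^2 - 1} \\
\sin(\operatorname{arccot} x) &= \frac{1}{\sqrt{1 + x^2}} & \cos(\operatorname{arccot} x) &= \frac{x}{\sqrt{1 + x^2}} & \tan(\operatorname{arccot} x) &= \frac{1}{x}
\end{aligned}$$

Taking the multiplicative inverse of both sides of each equation above results in the equations for $\csc = \frac{1}{\sin}$, $\sec = \frac{1}{\cos}$, and $\cot = \frac{1}{\tan}$. The right hand side of the formula above will always be flipped. For example, the equation for $\cot(\arcsin x)$ is:

$$\cot(\arcsin x) = \frac{1}{\tan(\arcsin x)} = \frac{\sqrt{1 - x^2}}{x},$$

while the equations for $\csc(\arccos x)$ and $\sec(\arccos x)$ are:

$$\csc(\arccos x) = \frac{1}{\sin(\arccos x)} = \frac{1}{\sqrt{1 - x^2}} \qquad\text{and}\qquad \sec(\arccos x) = \frac{1}{\cos(\arccos x)} = \frac{1}{x}.$$

The following identities are implied by the reflection identities. They hold whenever $x$, $r$, $s$, $-x$, $-r$, and $-s$ are in the domains of the relevant functions.

$$\begin{aligned}
\frac{\pi}{2} &= \arcsin x + \arccos x = \arctan r + \operatorname{arccot} r = \operatorname{arcsec} s + \operatorname{arccsc} s \\
\pi &= \arccos x + \arccos(-x) = \operatorname{arccot} r + \operatorname{arccot}(-r) = \operatorname{arcsec} s + \operatorname{arcsec}(-s) \\
0 &= \arcsin x + \arcsin(-x) = \arctan r + \arctan(-r) = \operatorname{arccsc} s + \operatorname{arccsc}(-s)
\end{aligned}$$

Also,[51]

$$\arctan x + \arctan\frac{1}{x} = \begin{cases} \frac{\pi}{2} & \text{if } x > 0 \\ -\frac{\pi}{2} & \text{if } x < 0 \end{cases} \qquad \arccos\frac{1}{x} = \operatorname{arcsec} x, \qquad \arcsin\frac{1}{x} = \operatorname{arccsc} x.$$

The arctangent function can be expanded as a series:[52]

$$\arctan(nx) = \sum_{m=1}^n \arctan\frac{x}{1 + (m-1)mx^2}$$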

Identities without variables

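In terms of the arctangent function we have[51]

$$\arctan\frac{1}{2} = \arctan\frac{1}{3} + \arctan\frac{1}{7}.$$

The curious identity known as Morrie's law,

$$\cos 20^\circ \cdot \cos 40^\circ \cdot \cos 80^\circ = \frac{1}{8},$$

is a special case of an identity that contains one variable:

$$\prod_{j=0}^{k-1} \cos(2^j x) = \frac{\sin(2^k x)}{2^k\sin x}.$$

Similarly, $\sin 20^\circ \cdot \sin 40^\circ \cdot \sin 80^\circ = \frac{\sqrt{3}}{8}$ is a special case of an identity with $x = 20^\circ$:

$$\sin x \cdot \sin(60^\circ - x) \cdot \sin(60^\circ + x) = \frac{\sin 3x}{4}.$$

For the case $x = 15^\circ$,

$$\sin 15^\circ \cdot \sin 45^\circ \cdot \sin 75^\circ = \frac{\sqrt{2}}{8}, \qquad \sin 15^\circ \cdot \sin 75^\circ = \frac{1}{4}.$$

For the case $x = 10^\circ$,

$$\sin 10^\circ \cdot \sin 50^\circ \cdot \sin 70^\circ = \frac{1}{8}.$$

The same cosine identity is

$$\cos x \cdot \cos(60^\circ - x) \cdot \cos(60^\circ + x) = \frac{\cos 3x}{4}.$$

Similarly,

$$\cos 10^\circ \cdot \cos 50^\circ \cdot \cos 70^\circ = \frac{\sqrt{3}}{8}, \qquad \cos 15^\circ \cdot \cos 45^\circ \cdot \cos 75^\circ = \frac{\sqrt{2}}{8}, \qquad \cos 15^\circ \cdot \cos 75^\circ = \frac{1}{4}.$$

Similarly,

$$\tan 50^\circ \cdot \tan 60^\circ \cdot \tan 70^\circ = \tan 80^\circ, \qquad \tan 40^\circ \cdot \tan 30^\circ \cdot \tan 20^\circ = \tan 10^\circ.$$

The following is perhaps not as readily generalized to an identity containing variables (but see explanation below):

$$\cos 24^\circ + \cos 48^\circ + \cos 96^\circ + \cos 168^\circ = \frac{1}{2}.$$

Degree measure ceases to be more felicitous than radian measure when we consider this identity with 21 in the denominators:

$$\cos\frac{2\pi}{21} + \cos\left(2\cdot\frac{2\pi}{21}\right) + \cos\left(4\cdot\frac{2\pi}{21}\right) + \cos\left(5\cdot\frac{2\pi}{21}\right) + \cos\left(8\cdot\frac{2\pi}{21}\right) + \cos\left(10\cdot\frac{2\pi}{21}\right) = \frac{1}{2}.$$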

The factors 1, 2, 4, 5, 8, 10 may start to make the pattern clear: they are those integers less than 21/2 that are relatively prime to (or have no prime factors in common with) 21. The last several examples are corollaries of a basic fact about the irreducible cyclotomic polynomials: the cosines are the real parts of the zeroes of those polynomials; the sum of the zeroes is the Möbius function evaluated at (in the very last case above) 21; only half of the zeroes are present above. The two identities preceding this last one arise in the same fashion with 21 replaced by 10 and 15, respectively.

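Other cosine identities include:[53]

$$2\cos\frac{\pi}{3} = 1, \qquad 2\cos\frac{\pi}{5} \times 2\cos\frac{2\pi}{5} = 1, \qquad 2\cos\frac{\pi}{7} \times 2\cos\frac{2\pi}{7} \times 2\cos\frac{3\pi}{7} = 1,$$

and so forth for all odd numbers, and hence

$$\cos\frac{\pi}{3} + \cos\frac{\pi}{5} \times \cos\frac{2\pi}{5} + \cos\frac{\pi}{7} \times \cos\frac{2\pi}{7} \times \cos\frac{3\pi}{7} + \cdots = 1.$$

Many of those curious identities stem from more general facts like the following:[54]

$$\prod_{k=1}^{n-1} \sin\frac{k\pi}{n} = \frac{n}{2^{n-1}}$$

and

$$\prod_{k=1}^{n-1} \cos\frac{k\pi}{n} = \frac{\sin\frac{\pi n}{2}}{2^{n-1}}.$$

Combining these gives us

$$\prod_{k=1}^{n-1} \tan\frac{k\pi}{n} = \frac{n}{\sin\frac{\pi n}{2}}.$$

If n is an odd number ($n = 2m + 1$) we can make use of the symmetries to get

$$\prod_{k=1}^{m} \tan\frac{k\pi}{2m+1} = \sqrt{2m+1}.$$

The transfer function of the Butterworth low pass filter can be expressed in terms of polynomial and poles. By setting the frequency as the cutoff frequency, the following identity can be proved:

$$\prod_{k=1}^n \sin\frac{(2k-1)\pi}{4n} = \prod_{k=1}^n \cos\frac{(2k-1)\pi}{4n} = \frac{\sqrt{2}}{2^n}.$$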

Computing π

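An efficient way to compute π to a large number of digits is based on the following identity without variables, due to Machin. This is known as a Machin-like formula:

$$\frac{\pi}{4} = 4\arctan\frac{1}{5} - \arctan\frac{1}{239}$$

or, alternatively, by using an identity of Leonhard Euler:

$$\frac{\pi}{4} = 5\arctan\frac{1}{7} + 2\arctan\frac{3}{79}$$

or by using Pythagorean triples:

$$\pi = \arccos\frac{4}{5} + \arccos\frac{5}{13} + \arccos\frac{16}{65} = \arcsin\frac{3}{5} + \arcsin\frac{12}{13} + \arcsin\frac{63}{65}.$$

Generally, for numbers $t_1, \ldots, t_{n-1} \in (-1, 1)$ for which $\theta_n = \sum_{k=1}^{n-1} \arctan t_k \in (\pi/4, 3\pi/4)$, let $t_n = \tan(\pi/2 - \theta_n) = \cot\theta_n$. This last expression can be computed directly using the formula for the cotangent of a sum of angles whose tangents are $t_1, \ldots, t_{n-1}$ and its value will be in $(-1, 1)$. In particular, the computed $t_n$ will be rational whenever all the $t_1, \ldots, t_{n-1}$ values are rational. With these values,

$$\begin{aligned}
\frac{\pi}{2} &= \sum_{k=1}^n \arctan(t_k) \\
\pi &= \sum_{k=1}^n \operatorname{sgn}(t_k)\arccos\left(\frac{1 - t_k^2}{1 + t_k^2}\right) \\
\pi &= \sum_{k=1}^n \arcsin\left(\frac{2t_k}{1 + t_k^2}\right) \\
\pi &= \sum_{k=1}^n \arctan\left(\frac{2t_k}{1 - t_k^2}\right),
\end{aligned}$$

where in all but the first expression, we have used tangent half-angle formulae. The first two formulae work even if one or more of the $t_k$ values is not within $(-1, 1)$. Note that if $t = p/q$ is rational, then the $(2t, 1 - t^2, 1 + t^2)$ values in the above formulae are proportional to the Pythagorean triple $(2pq, q^2 - p^2, q^2 + p^2)$.

For example, for n = 3 terms,

$$\frac{\pi}{2} = \arctan\left(\frac{a}{b}\right) + \arctan\left(\frac{c}{d}\right) + \arctan\left(\frac{bd - ac}{ad + bc}\right)$$

for any a, b, c, d > 0.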
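To show how a Machin-like formula turns into a computation, the Python sketch below evaluates π = 4·(4 arctan(1/5) − arctan(1/239)) using partial sums of the alternating series arctan x = x − x³/3 + x⁵/5 − ⋯ in exact rational arithmetic. The function names `arctan_inverse` and `machin_pi` and the default of 10 terms are illustrative assumptions; practical high-precision implementations use big-integer arithmetic and far more terms.

```python
import math
from fractions import Fraction

def arctan_inverse(n, terms):
    """Partial sum (first `terms` terms) of the series for arctan(1/n), as a Fraction."""
    x = Fraction(1, n)
    total = Fraction(0)
    for k in range(terms):
        # k-th term of arctan x = x - x^3/3 + x^5/5 - ...
        total += Fraction((-1) ** k, 2 * k + 1) * x ** (2 * k + 1)
    return total

def machin_pi(terms=10):
    """Approximate pi via pi/4 = 4*arctan(1/5) - arctan(1/239)."""
    return 4 * (4 * arctan_inverse(5, terms) - arctan_inverse(239, terms))

approx = machin_pi()
print(float(approx), math.pi)
```

The series converges quickly because the arguments 1/5 and 1/239 are small; each extra term of arctan(1/5) gains roughly 1.4 decimal digits.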

An identity of Euclid

Euclid showed in Book XIII, Proposition 10 of his Elements that the area of the square on the side of a regular pentagon inscribed in a circle is equal to the sum of the areas of the squares on the sides of the regular hexagon and the regular decagon inscribed in the same circle. In the language of modern trigonometry, this says:

$$\sin^2 18^\circ + \sin^2 30^\circ = \sin^2 36^\circ.$$

Ptolemy used this proposition to compute some angles in his table of chords in Book I, chapter 11 of Almagest.

Composition of trigonometric functions

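These identities involve a trigonometric function of a trigonometric function:[56]

$$\begin{aligned}
\cos(t\sin x) &= J_0(t) + 2\sum_{k=1}^\infty J_{2k}(t)\cos(2kx) \\
\sin(t\sin x) &= 2\sum_{k=0}^\infty J_{2k+1}(t)\sin((2k+1)x) \\
\cos(t\cos x) &= J_0(t) + 2\sum_{k=1}^\infty (-1)^k J_{2k}(t)\cos(2kx) \\
\sin(t\cos x) &= 2\sum_{k=0}^\infty (-1)^k J_{2k+1}(t)\cos((2k+1)x)
\end{aligned}$$

where $J_i$ are Bessel functions.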

Further "conditional" identities for the case α + β + γ = 180°

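A conditional trigonometric identity is a trigonometric identity that holds if specified conditions on the arguments to the trigonometric functions are satisfied.[57] The following formulae apply to arbitrary plane triangles and follow from $\alpha + \beta + \gamma = 180^\circ$, as long as the functions occurring in the formulae are well-defined (the latter applies only to the formulae in which tangents and cotangents occur).[58]

$$\begin{aligned}
\tan\alpha + \tan\beta + \tan\gamma &= \tan\alpha\tan\beta\tan\gamma \\
1 &= \cot\beta\cot\gamma + \cot\gamma\cot\alpha + \cot\alpha\cot\beta \\
\cot\frac{\alpha}{2} + \cot\frac{\beta}{2} + \cot\frac{\gamma}{2} &= \cot\frac{\alpha}{2}\cot\frac{\beta}{2}\cot\frac{\gamma}{2} \\
1 &= \tan\frac{\beta}{2}\tan\frac{\gamma}{2} + \tan\frac{\gamma}{2}\tan\frac{\alpha}{2} + \tan\frac{\alpha}{2}\tan\frac{\beta}{2} \\
\sin\alpha + \sin\beta + \sin\gamma &= 4\cos\frac{\alpha}{2}\cos\frac{\beta}{2}\cos\frac{\gamma}{2} \\
-\sin\alpha + \sin\beta + \sin\gamma &= 4\cos\frac{\alpha}{2}\sin\frac{\beta}{2}\sin\frac{\gamma}{2} \\
\cos\alpha + \cos\beta + \cos\gamma &= 4\sin\frac{\alpha}{2}\sin\frac{\beta}{2}\sin\frac{\gamma}{2} + 1 \\
-\cos\alpha + \cos\beta + \cos\gamma &= 4\sin\frac{\alpha}{2}\cos\frac{\beta}{2}\cos\frac{\gamma}{2} - 1 \\
\sin(2\alpha) + \sin(2\beta) + \sin(2\gamma) &= 4\sin\alpha\sin\beta\sin\gamma \\
-\sin(2\alpha) + \sin(2\beta) + \sin(2\gamma) &= 4\sin\alpha\cos\beta\cos\gamma \\
\cos(2\alpha) + \cos(2\beta) + \cos(2\gamma) &= -4\cos\alpha\cos\beta\cos\gamma - 1 \\
-\cos(2\alpha) + \cos(2\beta) + \cos(2\gamma) &= -4\cos\alpha\sin\beta\sin\gamma + 1
\end{aligned}$$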

Historical shorthands

The versine, coversine, haversine, and exsecant were used in navigation. For example, the haversine formula was used to calculate the distance between two points on a sphere. They are rarely used today.

Miscellaneous

Dirichlet kernel

The Dirichlet kernel $D_n(x)$ is the function occurring on both sides of the next identity:

$$1 + 2\cos x + 2\cos(2x) + 2\cos(3x) + \cdots + 2\cos(nx) = \frac{\sin\left(\left(n + \frac{1}{2}\right)x\right)}{\sin\left(\frac{1}{2}x\right)}.$$

The convolution of any integrable function of period $2\pi$ with the Dirichlet kernel coincides with the function's $n$th-degree Fourier approximation. The same holds for any measure or generalized function.

Tangent half-angle substitution

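If we set

$$t = \tan\frac{x}{2},$$

then[59]

$$\sin x = \frac{2t}{1 + t^2}; \qquad \cos x = \frac{1 - t^2}{1 + t^2}; \qquad e^{ix} = \frac{1 + it}{1 - it}; \qquad dx = \frac{2\,dt}{1 + t^2},$$

where $e^{ix} = \cos x + i\sin x$, sometimes abbreviated to cis x.

When this substitution of $t$ for $\tan\frac{x}{2}$ is used in calculus, it follows that $\sin x$ is replaced by $\frac{2t}{1 + t^2}$, $\cos x$ is replaced by $\frac{1 - t^2}{1 + t^2}$ and the differential dx is replaced by $\frac{2\,dt}{1 + t^2}$. Thereby one converts rational functions of $\sin x$ and $\cos x$ to rational functions of $t$ in order to find their antiderivatives.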
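The rational parametrization behind the tangent half-angle substitution can be checked directly: with t = tan(x/2), the expressions 2t/(1 + t²) and (1 − t²)/(1 + t²) should reproduce sin x and cos x. The short Python sketch below does this; the function name `sin_cos_from_t` is illustrative, and x must avoid odd multiples of π, where tan(x/2) is undefined.

```python
import math

def sin_cos_from_t(t):
    """Return (sin x, cos x) as rational functions of t = tan(x/2)."""
    denom = 1 + t * t
    return 2 * t / denom, (1 - t * t) / denom

for x in (-2.5, -1.0, 0.3, 2.0):
    t = math.tan(x / 2)
    s, c = sin_cos_from_t(t)
    print(x, (s, c), (math.sin(x), math.cos(x)))
```

Because both outputs are rational in t and satisfy s² + c² = 1, rational values of t give rational points on the unit circle, which is the link to Pythagorean triples.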

Viète's infinite product

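$$\cos\frac{\theta}{2}\cdot\cos\frac{\theta}{4}\cdot\cos\frac{\theta}{8}\cdots = \prod_{n=1}^\infty \cos\frac{\theta}{2^n} = \frac{\sin\theta}{\theta} = \operatorname{sinc}\theta.$$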

See also

- Aristarchus's inequality

- Derivatives of trigonometric functions

- Exact trigonometric values (values of sine and cosine expressed in surds)

- Exsecant

- Half-side formula

- Hyperbolic function

- Laws for solution of triangles:

- List of integrals of trigonometric functions

- Mnemonics in trigonometry

- Pentagramma mirificum

- Proofs of trigonometric identities

- Prosthaphaeresis

- Pythagorean theorem

- Tangent half-angle formula

- Trigonometric number

- Trigonometry

- Uses of trigonometry

- Versine and haversine

References

1. Abramowitz, Milton; Stegun, Irene Ann, eds. (1983) [June 1964]. "Chapter 4, eqn 4.3.45". Handbook of Mathematical Functions with Formulas, Graphs, and Mathematical Tables. Applied Mathematics Series. Vol. 55 (Ninth reprint with additional corrections of tenth original printing with corrections (December 1972); first ed.). Washington D.C.; New York: United States Department of Commerce, National Bureau of Standards; Dover Publications. p. 73. ISBN 978-0-486-61272-0. LCCN 64-60036. MR 0167642. LCCN 65-12253.
2. Selby 1970, p. 188
3. Abramowitz and Stegun, p. 72, 4.3.13–15
4. Abramowitz and Stegun, p. 72, 4.3.7–9
5. Abramowitz and Stegun, p. 72, 4.3.16
6. Weisstein, Eric W. "Trigonometric Addition Formulas". MathWorld.
7. Abramowitz and Stegun, p. 72, 4.3.17
8. Abramowitz and Stegun, p. 72, 4.3.18
9. "Angle Sum and Difference Identities". www.milefoot.com. Retrieved 2019-10-12.
10. Abramowitz and Stegun, p. 72, 4.3.19
11. Abramowitz and Stegun, p. 80, 4.4.32
12. Abramowitz and Stegun, p. 80, 4.4.33
13. Abramowitz and Stegun, p. 80, 4.4.34
14. Bronstein, Manuel (1989). "Simplification of real elementary functions". In Gonnet, G. H. (ed.). Proceedings of the ACM-SIGSAM 1989 International Symposium on Symbolic and Algebraic Computation. ISSAC '89 (Portland US-OR, 1989-07). New York: ACM. pp. 207–211. doi:10.1145/74540.74566. ISBN 0-89791-325-6.
15. Hardy, Michael (2016). "On Tangents and Secants of Infinite Sums". The American Mathematical Monthly. 123 (7): 701–703. https://doi.org/10.4169/amer.math.monthly.123.7.701
16. Hardy, Michael (2025). "Invariance of the Cauchy Family Under Linear Fractional Transformations". The American Mathematical Monthly. 132 (5): 453–455. doi:10.1080/00029890.2025.2459048.
17. Knight, F. B. "A characterization of the Cauchy type". Proceedings of the American Mathematical Society, 1976: 130–135.
18. Hardy, Michael (2016). "On Tangents and Secants of Infinite Sums". American Mathematical Monthly. 123 (7): 701–703. doi:10.4169/amer.math.monthly.123.7.701.
19. "Sine, Cosine, and Ptolemy's Theorem".
20. Weisstein, Eric W. "Multiple-Angle Formulas". MathWorld.
21. Abramowitz and Stegun, p. 74, 4.3.48
22. Selby 1970, p. 190
23. Weisstein, Eric W. "Multiple-Angle Formulas". mathworld.wolfram.com. Retrieved 2022-02-06.
24. Ward, Ken. "Multiple angles recursive formula". Ken Ward's Mathematics Pages.
25. Abramowitz, Milton; Stegun, Irene Ann, eds. (1983) [June 1964]. "Chapter 4, eqn 4.3.20-22". Handbook of Mathematical Functions with Formulas, Graphs, and Mathematical Tables. Applied Mathematics Series. Vol. 55 (Ninth reprint with additional corrections of tenth original printing with corrections (December 1972); first ed.). Washington D.C.; New York: United States Department of Commerce, National Bureau of Standards; Dover Publications. p. 72. ISBN 978-0-486-61272-0. LCCN 64-60036. MR 0167642. LCCN 65-12253.
26. Weisstein, Eric W. "Half-Angle Formulas". MathWorld.
27. Abramowitz and Stegun, p. 72, 4.3.24–26
28. Weisstein, Eric W. "Double-Angle Formulas". MathWorld.
29. Abramowitz and Stegun, p. 72, 4.3.27–28
30. Abramowitz and Stegun, p. 72, 4.3.31–33
31. Eves, Howard (1990). An introduction to the history of mathematics (6th ed.). Philadelphia: Saunders College Pub. p. 309. ISBN 0-03-029558-0. OCLC 20842510.
32. Abramowitz and Stegun, p. 72, 4.3.34–39
33. Johnson, Warren P. (Apr 2010). "Trigonometric Identities à la Hermite". American Mathematical Monthly. 117 (4): 311–327. doi:10.4169/000298910x480784. S2CID 29690311.
34. "Product Identity Multiple Angle".
35. Apostol, T. M. (1967). Calculus. 2nd edition. New York, NY: Wiley. pp. 334–335.
36. Weisstein, Eric W. "Harmonic Addition Theorem". MathWorld.
37. Ortiz Muñiz, Eddie (Feb 1953). "A Method for Deriving Various Formulas in Electrostatics and Electromagnetism Using Lagrange's Trigonometric Identities". American Journal of Physics. 21 (2): 140. Bibcode:1953AmJPh..21..140M. doi:10.1119/1.1933371.
38. Agarwal, Ravi P.; O'Regan, Donal (2008). Ordinary and Partial Differential Equations: With Special Functions, Fourier Series, and Boundary Value Problems (illustrated ed.). Springer Science & Business Media. p. 185. ISBN 978-0-387-79146-3.
39. Jeffrey, Alan; Dai, Hui-hui (2008). "Section 2.4.1.6". Handbook of Mathematical Formulas and Integrals (4th ed.). Academic Press. ISBN 978-0-12-374288-9.
40. Fay, Temple H.; Kloppers, P. Hendrik (2001). "The Gibbs' phenomenon". International Journal of Mathematical Education in Science and Technology. 32 (1): 73–89. doi:10.1080/00207390117151.
41. Abramowitz and Stegun, p. 74, 4.3.47
42. Abramowitz and Stegun, p. 71, 4.3.2
43. Abramowitz and Stegun, p. 71, 4.3.1
44. Abramowitz and Stegun, p. 80, 4.4.26–31
45. Hawkins, Faith Mary; Hawkins, J. Q. (March 1, 1969). Complex Numbers and Elementary Complex Functions. London: MacDonald Technical & Scientific London (published 1968). p. 122. ISBN 978-0356025056.
46. Markushevich, A. I. (1966). The Remarkable Sine Function. New York: American Elsevier Publishing Company, Inc. pp. 35–37, 81. ISBN 978-1483256313.
47. Abramowitz and Stegun, p. 74, 4.3.65–66
48. Abramowitz and Stegun, p. 75, 4.3.89–90
49. Abramowitz and Stegun, p. 85, 4.5.68–69
50. Abramowitz & Stegun 1972, p. 73, 4.3.45
51. Wu, Rex H. "Proof Without Words: Euler's Arctangent Identity". Mathematics Magazine 77 (3), June 2004, p. 189.
52. Abrarov, S. M.; Jagpal, R. K.; Siddiqui, R.; Quine, B. M. (2021). "Algorithmic determination of a large integer in the two-term Machin-like formula for π". Mathematics. 9 (17), 2162. arXiv:2107.01027. doi:10.3390/math9172162.
53. Humble, Steve (Nov 2004). "Grandma's identity". Mathematical Gazette. 88: 524–525. doi:10.1017/s0025557200176223. S2CID 125105552.
54. Weisstein, Eric W. "Sine". MathWorld.
55. Harris, Edward M. "Sums of Arctangents", in Roger B. Nelsen, Proofs Without Words (1993, Mathematical Association of America), p. 39.
56. Abramowitz, Milton; Stegun, Irene. Handbook of Mathematical Functions with Formulas, Graphs, and Mathematical Tables. Dover Publications, New York, 1972, formulae 9.1.42–9.1.45.
57. Joshi, Er. K. C. Krishna's IIT MATHEMATIKA. Krishna Prakashan Media. Meerut, India. p. 636.
58. Cagnoli, Antonio (1808). Trigonométrie rectiligne et sphérique, p. 27.
59. Abramowitz and Stegun, p. 72, 4.3.23

Bibliography

- Abramowitz, Milton; Stegun, Irene A., eds. (1972). Handbook of Mathematical Functions with Formulas, Graphs, and Mathematical Tables. New York: Dover Publications. ISBN 978-0-486-61272-0.

- Nielsen, Kaj L. (1966), Logarithmic and Trigonometric Tables to Five Places (2nd ed.), New York: Barnes & Noble, LCCN 61-9103

- Selby, Samuel M., ed. (1970), Standard Mathematical Tables (18th ed.), The Chemical Rubber Co.

External links

- Values of sin and cos, expressed in surds, for integer multiples of 3° and of 5+5/8°, and for the same angles csc and sec and tan

Fundamental Identities

Pythagorean identities

The Pythagorean identities express fundamental relationships between the sine, cosine, and their reciprocal functions, derived from the geometry of right triangles and the unit circle. They arise directly from the Pythagorean theorem, which states that in a right triangle with legs $a$ and $b$ and hypotenuse $c$, $a^2 + b^2 = c^2$. For an angle $\theta$ in such a triangle, where $\sin\theta = a/c$ and $\cos\theta = b/c$, dividing both sides of the theorem by $c^2$ yields the primary identity:

$$\sin^2\theta + \cos^2\theta = 1.$$

This relation holds for all angles when sine and cosine are defined via the unit circle, where a point on the circle has coordinates $(\cos\theta, \sin\theta)$, ensuring the distance from the origin is 1.

From this core identity, extensions follow by dividing both sides by appropriate powers of cosine or sine. Dividing by $\cos^2\theta$ (assuming $\cos\theta \ne 0$) gives:

$$\tan^2\theta + 1 = \sec^2\theta.$$

Similarly, dividing by $\sin^2\theta$ (assuming $\sin\theta \ne 0$) produces:

$$1 + \cot^2\theta = \csc^2\theta.$$

These forms link the tangent, cotangent, secant, and cosecant functions to sine and cosine, providing tools for simplifying expressions involving ratios.

The origins of these identities trace back to the Pythagorean theorem, developed by the Pythagorean school in ancient Greece around the 6th century BCE as a geometric principle for right triangles. The trigonometric formulation of these identities emerged during the Renaissance with the development of plane trigonometry. Leonhard Euler further connected them to exponential and series representations in his Introductio in analysin infinitorum (1748), emphasizing their algebraic utility.

In applications, the Pythagorean identities are essential for normalizing trigonometric expressions, such as reducing higher powers of sine or cosine to lower degrees or converting between sine-cosine pairs and tangent-secant forms, which simplifies solving equations and integrals in calculus and physics. For instance, integrating the identity over a full period gives $\int_0^{2\pi} \sin^2(nx)\,dx + \int_0^{2\pi} \cos^2(nx)\,dx = 2\pi$ for nonzero integers $n$, which is consistent with the normalization of the sine and cosine basis functions used in Fourier series.

Reciprocal and quotient identities

The reciprocal trigonometric functions are defined in terms of the primary functions sine and cosine. The secant function is the multiplicative inverse of the cosine function, given by $\sec\theta = \dfrac{1}{\cos\theta}$ provided that $\cos\theta \ne 0$. Similarly, the cosecant function is the multiplicative inverse of the sine function, $\csc\theta = \dfrac{1}{\sin\theta}$, where $\sin\theta \ne 0$.[2] The cotangent function is defined as the quotient of the cosine and sine functions, $\cot\theta = \dfrac{\cos\theta}{\sin\theta}$, with the condition that $\sin\theta \ne 0$; wherever the tangent is also defined and nonzero, it equals $\dfrac{1}{\tan\theta}$.

The quotient identities express the tangent and cotangent as ratios. The tangent function is the ratio of sine to cosine, $\tan\theta = \dfrac{\sin\theta}{\cos\theta}$, defined where $\cos\theta \ne 0$.

Domain restrictions are essential for these functions, as they are undefined where the denominators vanish. For $\tan\theta$ and $\sec\theta$, the domain excludes angles $\theta = \frac{\pi}{2} + k\pi$ for integers $k$, where cosine is zero. For $\cot\theta$ and $\csc\theta$, the domain excludes $\theta = k\pi$ for integers $k$, where sine is zero. These exclusions ensure the functions are well-defined over their respective domains in the real numbers. Stating these domains explicitly avoids common pitfalls in applications such as calculus and physics.

Symmetry and Periodicity

Reflections and even-odd properties

Each trigonometric function is either even or odd, which describes its behavior under negation of the argument. Even functions satisfy $f(-x) = f(x)$, while odd functions satisfy $f(-x) = -f(x)$. These properties follow from the definitions of the functions and are fundamental to understanding their symmetries.

The cosine and secant functions are even:

$$\cos(-\theta) = \cos\theta, \qquad \sec(-\theta) = \sec\theta.$$

The sine, tangent, cosecant, and cotangent functions are odd:

$$\sin(-\theta) = -\sin\theta, \qquad \tan(-\theta) = -\tan\theta, \qquad \csc(-\theta) = -\csc\theta, \qquad \cot(-\theta) = -\cot\theta.$$

These identities hold for all $\theta$ where the functions are defined.

Geometrically, these properties derive from the unit circle definition. For an angle $\theta$, the point on the unit circle is $(\cos\theta, \sin\theta)$. The angle $-\theta$ corresponds to the reflection across the x-axis, yielding the point $(\cos\theta, -\sin\theta)$. Thus, the x-coordinate (cosine) remains unchanged, making cosine even, while the y-coordinate (sine) changes sign, making sine odd. Tangent, as the ratio $\sin\theta/\cos\theta$ of an odd function to an even one, is odd. Reciprocal functions follow: secant, the reciprocal of the even cosine, is even, and cosecant, the reciprocal of the odd sine, is odd; cotangent, as $\cos\theta/\sin\theta$, is odd.[3]

Analytically, the even-odd nature is evident in the Taylor series expansions around zero. The Maclaurin series for cosine contains only even powers of $x$:

$$\cos x = \sum_{n=0}^\infty \frac{(-1)^n x^{2n}}{(2n)!}.$$

Substituting $-x$ yields the same series, confirming even parity. For sine, only odd powers appear:

$$\sin x = \sum_{n=0}^\infty \frac{(-1)^n x^{2n+1}}{(2n+1)!}.$$

Negating $x$ changes the sign of all terms, confirming odd parity. The series for tangent, cosecant, and cotangent, derived from these, preserve the respective parities.[4][5]

These identities simplify expressions involving negative angles by converting them to positive equivalents. For instance, $\sin(-\pi/6) = -\sin(\pi/6) = -\tfrac{1}{2}$, avoiding direct computation of negative rotations. Such reductions are useful in calculus for integration and differentiation of composite functions and in physics for modeling symmetric phenomena like waves.[6]

Shifts and periodicity

The trigonometric functions are periodic: their values repeat after fixed intervals known as periods. The sine and cosine functions have a fundamental period of $2\pi$, expressed by the identities $\sin(\theta + 2\pi) = \sin\theta$ and $\cos(\theta + 2\pi) = \cos\theta$ for all real $\theta$.[7][8] These relations arise from the unit circle definition, where advancing by $2\pi$ radians returns to the same point. The reciprocal functions follow suit: $\csc(\theta + 2\pi) = \csc\theta$ and $\sec(\theta + 2\pi) = \sec\theta$, since they are defined as the reciprocals of sine and cosine, respectively.[7][8]

In contrast, the tangent function has a smaller period of $\pi$, given by $\tan(\theta + \pi) = \tan\theta$.[7][8] A shift by $\pi$ radians sends the point $(\cos\theta, \sin\theta)$ to its antipode $(-\cos\theta, -\sin\theta)$, so the ratio of the coordinates is unchanged. The cotangent shares this period: $\cot(\theta + \pi) = \cot\theta$.[7][8] These periodic properties allow angles to be reduced modulo their respective periods to equivalent values within a principal interval, such as $[0, 2\pi)$ for sine and cosine, or $(-\pi/2, \pi/2)$ for tangent.

A related set of shift identities involves cofunctions, which connect complementary angles summing to $\pi/2$. Specifically, $\sin\left(\frac{\pi}{2} - \theta\right) = \cos\theta$, $\cos\left(\frac{\pi}{2} - \theta\right) = \sin\theta$, and $\tan\left(\frac{\pi}{2} - \theta\right) = \cot\theta$. These hold for all $\theta$ where the functions are defined and stem from the geometric symmetry of the unit circle. The reciprocal cofunctions align accordingly: $\sec\left(\frac{\pi}{2} - \theta\right) = \csc\theta$ and $\csc\left(\frac{\pi}{2} - \theta\right) = \sec\theta$.

More generally, periodicity extends to multiples of the fundamental period: for any integer $k$, $\sin(\theta + 2k\pi) = \sin\theta$, $\cos(\theta + 2k\pi) = \cos\theta$, $\tan(\theta + k\pi) = \tan\theta$, and similarly for the reciprocals.[7][8] This property facilitates the reduction of arbitrary angles to standard forms in computations. Unlike their circular counterparts, the hyperbolic functions $\sinh$ and $\cosh$ are not periodic on the real line; they grow exponentially without repetition.[9]

Signs in quadrants
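
The reduction of angles modulo a period, described above for the periodicity identities, can be sketched in Python as follows. This is only an illustration: the helper names `reduce_angle` and `reduce_tangent_angle` are hypothetical and not taken from any library, and floating-point rounding near interval endpoints is ignored.

```python
import math

def reduce_angle(theta: float) -> float:
    """Map theta into [0, 2*pi), relying on sin(theta + 2*pi*k) = sin(theta)."""
    return theta % (2 * math.pi)

def reduce_tangent_angle(theta: float) -> float:
    """Map theta into [-pi/2, pi/2), relying on tan(theta + pi*k) = tan(theta)."""
    return (theta + math.pi / 2) % math.pi - math.pi / 2
```

For example, `reduce_angle(100.0)` and `100.0` give the same sine up to rounding, since they differ by a whole number of periods.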

The signs of the trigonometric functions depend on the quadrant in which the terminal side of the angle lies in the unit circle.[10] In the first quadrant (0 to π/2), the primary trigonometric functions sine, cosine, and tangent are all positive, and consequently their reciprocals cosecant, secant, and cotangent are also positive.[11] In the second quadrant (π/2 to π), sine and cosecant are positive, while cosine, secant, tangent, and cotangent are negative.[10] In the third quadrant (π to 3π/2), tangent and cotangent are positive, but sine, cosecant, cosine, and secant are negative.[11] In the fourth quadrant (3π/2 to 2π), cosine and secant are positive, whereas sine, cosecant, tangent, and cotangent are negative.[10] These sign patterns can be summarized in the following table:

| Quadrant | sin θ | cos θ | tan θ | csc θ | sec θ | cot θ |
|---|---|---|---|---|---|---|
| I (0 to π/2) | + | + | + | + | + | + |
| II (π/2 to π) | + | - | - | + | - | - |
| III (π to 3π/2) | - | - | + | - | - | + |
| IV (3π/2 to 2π) | - | + | - | - | + | - |
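
The sign table above can be encoded as a small lookup, sketched here in Python. The names `QUADRANT_SIGNS` and `quadrant` are hypothetical helpers introduced for illustration; angles lying exactly on an axis are assigned, by assumption, to the quadrant that begins there.

```python
import math

# Signs of (sin, cos, tan) in quadrants I-IV; csc, sec and cot share the
# signs of sin, cos and tan respectively, being their reciprocals.
QUADRANT_SIGNS = {
    1: (+1, +1, +1),
    2: (+1, -1, -1),
    3: (-1, -1, +1),
    4: (-1, +1, -1),
}

def quadrant(theta: float) -> int:
    """Return the quadrant (1-4) containing the terminal side of theta (radians)."""
    reduced = theta % (2 * math.pi)          # reduce to [0, 2*pi)
    return min(int(reduced // (math.pi / 2)) + 1, 4)
```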

Angle Addition Formulas

Sum and difference for sine and cosine

The sum and difference formulas for sine and cosine express the sine or cosine of the sum or difference of two angles in terms of the sines and cosines of the individual angles. These identities are fundamental in trigonometry, enabling the computation of trigonometric functions for composite angles and serving as a basis for more advanced formulas.[13] The sine addition and subtraction formulas are given by

$$\sin(\alpha \pm \beta) = \sin\alpha\cos\beta \pm \cos\alpha\sin\beta.$$

Similarly, the cosine formulas are

$$\cos(\alpha \pm \beta) = \cos\alpha\cos\beta \mp \sin\alpha\sin\beta.$$

As a consistency check, setting $\beta = \alpha$ in the cosine difference formula recovers the Pythagorean identity $\cos^2\alpha + \sin^2\alpha = \cos 0 = 1$.[13]

One modern derivation employs Euler's formula, $e^{i\theta} = \cos\theta + i\sin\theta$, which links trigonometric functions to complex exponentials. Consider $e^{i(\alpha+\beta)} = e^{i\alpha}e^{i\beta}$. Expanding the right side yields $(\cos\alpha\cos\beta - \sin\alpha\sin\beta) + i(\sin\alpha\cos\beta + \cos\alpha\sin\beta)$. Equating real and imaginary parts with the left side, $\cos(\alpha+\beta) + i\sin(\alpha+\beta)$, directly gives the addition formulas; the subtraction formulas follow analogously by replacing $\beta$ with $-\beta$.[13]

These identities were part of the prosthaphaeresis methods developed in the late 16th century, with François Viète (1540–1603) contributing to their application in algebraic computations, such as approximating products through angle additions in trigonometry.[14]

The formulas extend to finite sums of angles through iterative application, yielding $\sin(\theta_1 + \theta_2 + \cdots + \theta_n)$ as a nested expansion whose number of terms grows with each added angle. In numerical computing, repeated recursive use of these sum formulas can accumulate floating-point rounding errors in intermediate terms, particularly when many additions are chained.

Sum and difference for tangent and cotangent
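
The Euler-formula derivation of the sine and cosine addition formulas described above can be checked numerically. The following Python sketch compares the real and imaginary parts of $e^{i\alpha}e^{i\beta}$ with the expanded formulas; the function names `sum_via_euler` and `sum_via_formulas` are hypothetical and chosen only for this illustration.

```python
import cmath
import math

def sum_via_euler(alpha: float, beta: float) -> tuple[float, float]:
    """Return (cos(alpha+beta), sin(alpha+beta)) as Re and Im of e^{i alpha} * e^{i beta}."""
    z = cmath.exp(1j * alpha) * cmath.exp(1j * beta)
    return z.real, z.imag

def sum_via_formulas(alpha: float, beta: float) -> tuple[float, float]:
    """Return the same pair from the cosine and sine addition formulas."""
    cos_sum = math.cos(alpha) * math.cos(beta) - math.sin(alpha) * math.sin(beta)
    sin_sum = math.sin(alpha) * math.cos(beta) + math.cos(alpha) * math.sin(beta)
    return cos_sum, sin_sum
```

Both functions should agree with `math.cos(alpha + beta)` and `math.sin(alpha + beta)` up to rounding.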

The sum and difference formulas for the tangent function express $\tan(\alpha \pm \beta)$ in terms of $\tan\alpha$ and $\tan\beta$. These identities are given by

$$\tan(\alpha \pm \beta) = \frac{\tan\alpha \pm \tan\beta}{1 \mp \tan\alpha\tan\beta}.$$

These formulas hold provided the denominators are nonzero and the individual tangents are defined.[13]

The tangent addition and subtraction formulas are derived by dividing the corresponding sine and cosine sum and difference formulas. Specifically, starting from $\tan(\alpha+\beta) = \frac{\sin(\alpha+\beta)}{\cos(\alpha+\beta)}$, dividing the numerator by the denominator yields $\frac{\sin\alpha\cos\beta + \cos\alpha\sin\beta}{\cos\alpha\cos\beta - \sin\alpha\sin\beta}$. Dividing both by $\cos\alpha\cos\beta$ simplifies the expression to the tangent form above, assuming $\cos\alpha \neq 0$ and $\cos\beta \neq 0$. A similar process applies to the difference formulas using $\sin(\alpha-\beta)$ and $\cos(\alpha-\beta)$.[13][15]

Analogous formulas exist for the cotangent function. They are

$$\cot(\alpha \pm \beta) = \frac{\cot\alpha\cot\beta \mp 1}{\cot\beta \pm \cot\alpha}.$$

These are obtained by dividing the cosine and sine sum and difference formulas, respectively, and simplifying by dividing numerator and denominator by $\sin\alpha\sin\beta$, assuming $\sin\alpha \neq 0$ and $\sin\beta \neq 0$.[13]

The right-hand side of the tangent sum formula is undefined when $\cos\alpha\cos\beta = 0$, as this makes the intermediate division invalid; this corresponds to $\alpha$ or $\beta$ (or both) being an odd multiple of $\pi/2$, where the tangent is itself undefined. Additionally, $\tan(\alpha+\beta)$ is undefined when $\cos(\alpha+\beta) = 0$, which, when both tangents are defined, occurs precisely when $\tan\alpha\tan\beta = 1$. Similar conditions apply to the difference and cotangent formulas, where denominators vanish when the overall cosine or sine is zero.[13][15]

These identities find applications in navigation for computing composite bearings and azimuths from individual angles, such as adjusting course deviations, and in physics for resolving vector components in angle compositions, as in projectile motion or force equilibria.[16][17]

Linear fractional transformations of tangents
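
The tangent addition formula and its singular case $\tan\alpha\tan\beta = 1$, discussed above, can be sketched as a guarded function. This is a minimal Python illustration; the name `tan_sum` and the tolerance `eps` are assumptions made here, not part of any standard API.

```python
import math
from typing import Optional

def tan_sum(tan_a: float, tan_b: float, eps: float = 1e-12) -> Optional[float]:
    """Return tan(a + b) from tan(a) and tan(b), or None when it is undefined.

    The denominator 1 - tan(a)*tan(b) vanishes exactly when cos(a + b) = 0,
    given that both input tangents are defined.
    """
    denom = 1.0 - tan_a * tan_b
    if abs(denom) < eps:
        return None
    return (tan_a + tan_b) / denom
```

For instance, `tan_sum(1.0, 1.0)` returns `None`, matching the fact that $\tan(\pi/4 + \pi/4)$ is undefined.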

The addition formula for the tangent function expresses $\tan(\alpha+\beta)$ as a linear fractional transformation (also known as a Möbius transformation) of $\tan\alpha$, with coefficients determined by $\tan\beta$:

$$\tan(\alpha+\beta) = \frac{\tan\alpha + \tan\beta}{1 - \tan\alpha\tan\beta},$$

provided that $\tan\alpha\tan\beta \neq 1$. This formula shows that the tangent of the sum of two angles is a rational function of degree one in each variable, reflecting the projective geometry inherent to the tangent's role in parametrizing points on the unit circle via stereographic projection.

In general, linear fractional transformations of tangents take the form $\frac{a\tan\theta + b}{c\tan\theta + d}$, where $a, b, c, d \in \mathbb{R}$, and the coefficients satisfy $ad - bc = 1$ as a normalization, corresponding to elements of the special linear group SL(2, $\mathbb{R}$). Adding a fixed angle $\beta$ corresponds to the rotation matrix with $a = d = \cos\beta$ and $b = -c = \sin\beta$. Compositions of such transformations arise naturally when considering multiple-angle sums or differences, such as $\tan(n\theta)$ for integer $n$, which can be obtained iteratively from the basic addition formula. This structure shows that the tangent of a sum is a rational function of the individual tangents alone, whereas the sine and cosine addition formulas each involve both functions.[18]

These transformations are closely related to the action of SL(2, $\mathbb{R}$) on the real projective line $\mathbb{RP}^1$, which is identified with a circle through stereographic projection, where $\tan\theta$ serves as the coordinate. The group PSL(2, $\mathbb{R}$) = SL(2, $\mathbb{R}$)/$\{\pm I\}$ acts transitively and faithfully on this circle by projective transformations, and the rotations among them are exactly the angle-addition maps above. This group-theoretic perspective connects the trigonometric identities with hyperbolic geometry and dynamical systems, where PSL(2, $\mathbb{R}$) acts by isometries of the hyperbolic plane with this circle as its boundary. Algebraic addition theorems of this kind also appear in the theory of elliptic functions, studied by Carl Friedrich Gauss in the early 19th century, where similar rational expressions govern the duplication and addition of arguments and reduce to the trigonometric formulas in degenerate cases.[19][20]

Secant and cosecant sums
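
The correspondence between angle addition on tangents and 2×2 matrices, described above for linear fractional transformations of tangents, can be illustrated with a short Python sketch. The helpers `rotation`, `mobius`, and `matmul` are hypothetical names introduced here; matrices are plain nested tuples rather than any library type.

```python
import math

Matrix = tuple[tuple[float, float], tuple[float, float]]

def rotation(beta: float) -> Matrix:
    """Determinant-1 matrix whose Mobius action on tan(alpha) gives tan(alpha + beta)."""
    return ((math.cos(beta), math.sin(beta)), (-math.sin(beta), math.cos(beta)))

def mobius(m: Matrix, t: float) -> float:
    """Apply t -> (a*t + b) / (c*t + d)."""
    (a, b), (c, d) = m
    return (a * t + b) / (c * t + d)

def matmul(m: Matrix, n: Matrix) -> Matrix:
    """Product of two 2x2 matrices; corresponds to composing the two Mobius maps."""
    (a, b), (c, d) = m
    (e, f), (g, h) = n
    return ((a * e + b * g, a * f + b * h), (c * e + d * g, c * f + d * h))
```

Multiplying `rotation(0.2)` by `rotation(0.5)` and applying the result to `tan(0.1)` should reproduce `tan(0.8)`, which is the group-composition view of repeated angle addition.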

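The addition formulas for secant and cosecant express these reciprocal trigonometric functions of angle sums and differences in terms of secant, cosecant, tangent, and cotangent. These identities are obtained by taking the reciprocals of the standard sine and cosine addition formulas and simplifying using reciprocal and quotient identities.[21]

Consider the secant addition identity, derived from the cosine sum formula:

$$\cos(\alpha+\beta) = \cos\alpha\cos\beta - \sin\alpha\sin\beta.$$

Taking the reciprocal gives:

$$\sec(\alpha+\beta) = \frac{1}{\cos\alpha\cos\beta - \sin\alpha\sin\beta}.$$

Dividing the numerator and denominator by $\cos\alpha\cos\beta$ yields the form in terms of secant and tangent:

$$\sec(\alpha+\beta) = \frac{\sec\alpha\sec\beta}{1 - \tan\alpha\tan\beta}.$$

For the difference, starting from $\cos(\alpha-\beta) = \cos\alpha\cos\beta + \sin\alpha\sin\beta$, the analogous simplification produces:

$$\sec(\alpha-\beta) = \frac{\sec\alpha\sec\beta}{1 + \tan\alpha\tan\beta}.$$

These hold wherever both sides are defined, provided $\cos\alpha\cos\beta \neq 0$ and the denominators are nonzero.[21]

For cosecant, begin with the sine sum formula:

$$\sin(\alpha+\beta) = \sin\alpha\cos\beta + \cos\alpha\sin\beta.$$

The reciprocal is:

$$\csc(\alpha+\beta) = \frac{1}{\sin\alpha\cos\beta + \cos\alpha\sin\beta}.$$

Multiplying the numerator and denominator by $\csc\alpha\csc\beta$ simplifies to:

$$\csc(\alpha+\beta) = \frac{\csc\alpha\csc\beta}{\cot\alpha + \cot\beta}.$$

For the difference, using $\sin(\alpha-\beta) = \sin\alpha\cos\beta - \cos\alpha\sin\beta$, the derivation yields:

$$\csc(\alpha-\beta) = \frac{\csc\alpha\csc\beta}{\cot\beta - \cot\alpha}.$$

These are valid where $\sin\alpha\sin\beta \neq 0$ and the denominators differ from zero.[21]

Secant and cosecant addition identities are less commonly applied than those for sine, cosine, tangent, or cotangent, owing to the poles of secant at odd multiples of $\pi/2$ and of cosecant at integer multiples of $\pi$, which introduce additional points of discontinuity in sums and differences. For the secant sum, singularities arise precisely when $\alpha + \beta = \frac{\pi}{2} + k\pi$ for integer $k$ (and for the cosecant sum when $\alpha + \beta = k\pi$), rendering the expressions undefined, and care must be taken to exclude cases where intermediate denominators vanish (e.g., $\tan\alpha\tan\beta = 1$ for the secant sum).[21]

Ptolemy's theorem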

Ptolemy's theorem provides a geometric relation that connects to trigonometric product identities through the properties of cyclic quadrilaterals. For a cyclic quadrilateral ABCD inscribed in a circle, the theorem states that the product of the lengths of the two diagonals equals the sum of the products of the lengths of the two pairs of opposite sides:

$$AC \cdot BD = AB \cdot CD + BC \cdot DA.$$

This relation holds specifically because the vertices lie on a common circle, distinguishing it from the inequality form for non-cyclic quadrilaterals.[22]

Named after the Greco-Egyptian mathematician and astronomer Claudius Ptolemy (c. 100–170 AD), the theorem appears in his work Almagest, where it facilitated the computation of chord lengths in a circle, forming the basis for his trigonometric table used in astronomical predictions.[23] Ptolemy applied this result extensively in modeling planetary motions and eclipses, bridging geometry with early trigonometry for practical celestial calculations.[23]

To derive a trigonometric form, consider the quadrilateral inscribed in a unit circle, where chord lengths are $2\sin(\theta/2)$ with $\theta$ the central angle. For points A, B, C, D on the circle with successive central angles $2\alpha, 2\beta, 2\gamma, 2\delta$ (so that $\alpha + \beta + \gamma + \delta = \pi$), substituting these into Ptolemy's theorem yields the identity:

$$\sin(\alpha+\beta)\,\sin(\beta+\gamma) = \sin\alpha\,\sin\gamma + \sin\beta\,\sin\delta, \qquad \sin\delta = \sin(\alpha+\beta+\gamma).$$

This derivation applies the law of sines to express all chords in terms of sines of half-central angles.[24] The identity highlights product relations among sines of related angles and, in special cases, reduces to angle sum formulas: when $\beta + \gamma = \pi/2$, so that BD is a diameter, $\sin\gamma = \cos\beta$ and $\sin\delta = \cos\alpha$, giving $\sin(\alpha+\beta) = \sin\alpha\cos\beta + \cos\alpha\sin\beta$.[25]

Multiple-Angle Formulas
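
Ptolemy's theorem for points on the unit circle, as discussed above, can be checked numerically using the chord-length formula $2\sin(\theta/2)$. The Python sketch below is illustrative only; `chord` and `ptolemy_sides` are hypothetical helper names, and the four angles are assumed to be given in increasing order within one full turn so that A, B, C, D are in cyclic order.

```python
import math

def chord(s: float, t: float) -> float:
    """Length of the chord between unit-circle points at angles s and t."""
    return 2 * abs(math.sin((t - s) / 2))

def ptolemy_sides(a: float, b: float, c: float, d: float) -> tuple[float, float]:
    """Return (AC*BD, AB*CD + BC*DA) for points A, B, C, D at angles a < b < c < d."""
    diagonals = chord(a, c) * chord(b, d)
    opposite_sides = chord(a, b) * chord(c, d) + chord(b, c) * chord(d, a)
    return diagonals, opposite_sides
```

For a cyclic quadrilateral the two returned values should coincide up to rounding.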

Double-angle formulas

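The double-angle formulas express trigonometric functions of twice an angle in terms of functions of the original angle and are derived by setting the second angle equal to the first in the angle addition formulas.[26] For sine, the formula

$$\sin 2\theta = 2\sin\theta\cos\theta$$

is obtained directly from the sum formula $\sin(\theta+\theta) = \sin\theta\cos\theta + \cos\theta\sin\theta$.[26][27] For cosine, the primary form

$$\cos 2\theta = \cos^2\theta - \sin^2\theta$$

is derived from $\cos(\theta+\theta) = \cos\theta\cos\theta - \sin\theta\sin\theta$.[26][27] Equivalent expressions include

$$\cos 2\theta = 2\cos^2\theta - 1 \qquad\text{and}\qquad \cos 2\theta = 1 - 2\sin^2\theta,$$

which follow from substituting the Pythagorean identity $\sin^2\theta = 1 - \cos^2\theta$ or $\cos^2\theta = 1 - \sin^2\theta$ into the primary form.[26][27][28] The double-angle formula for tangent,

$$\tan 2\theta = \frac{2\tan\theta}{1 - \tan^2\theta},$$

is derived from $\tan(\theta+\theta) = \frac{\tan\theta + \tan\theta}{1 - \tan\theta\tan\theta}$.[26][27]

These formulas facilitate iterative computations in trigonometric expressions and find applications in optics, particularly in nonlinear processes like second-harmonic generation, where terms such as $\cos^2(\omega t) = \tfrac{1}{2}\left(1 + \cos 2\omega t\right)$ arise from quadratic nonlinearities to produce doubled frequencies.[29] In mechanics, they aid analysis of vibrations and oscillations involving harmonic components at doubled frequencies, such as in nonlinear systems where squared displacement terms generate responses at twice the driving frequency.[30]

Triple-angle formulas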

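The triple-angle formulas provide expressions for the sine, cosine, and tangent of three times an angle in terms of powers of the trigonometric functions of the original angle. These identities are derived by applying the angle addition formulas to the sum of a double angle and a single angle, building on the double-angle formulas. They are particularly useful in simplifying expressions involving triple angles and in applications such as solving cubic equations by trigonometric substitution.

The formula for sine is given by

$$\sin 3\theta = 3\sin\theta - 4\sin^3\theta.$$

This can be derived starting from the angle addition formula:

$$\sin 3\theta = \sin(2\theta + \theta) = \sin 2\theta\cos\theta + \cos 2\theta\sin\theta.$$

Substituting the double-angle formulas $\sin 2\theta = 2\sin\theta\cos\theta$ and $\cos 2\theta = 1 - 2\sin^2\theta$ yields

$$\sin 3\theta = 2\sin\theta\cos^2\theta + (1 - 2\sin^2\theta)\sin\theta.$$

Further substituting $\cos^2\theta = 1 - \sin^2\theta$ simplifies to the final form.[31]

Similarly, the cosine formula is

$$\cos 3\theta = 4\cos^3\theta - 3\cos\theta.$$

The derivation follows analogously using $\cos(2\theta + \theta) = \cos 2\theta\cos\theta - \sin 2\theta\sin\theta$ with the double-angle substitutions $\cos 2\theta = 2\cos^2\theta - 1$ and $\sin 2\theta = 2\sin\theta\cos\theta$, leading to the cubic expression after algebraic simplification.[1]

For tangent, the formula is

$$\tan 3\theta = \frac{3\tan\theta - \tan^3\theta}{1 - 3\tan^2\theta}.$$

This is obtained by applying the tangent addition formula to $\tan(2\theta + \theta)$, where $\tan 2\theta = \frac{2\tan\theta}{1 - \tan^2\theta}$, and simplifying the resulting rational expression.[32]

These formulas appeared in early modern trigonometric developments, including the use of triple-angle identities for tangent in the construction of tables during the 15th century, as part of the advancements from Regiomontanus onward.[33]

General multiple-angle formulas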

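The general multiple-angle formulas express $\cos n\theta$ and $\sin n\theta$ for positive integer $n$ in terms of powers of $\cos\theta$ and $\sin\theta$. These identities arise from De Moivre's theorem, which states that

$$(\cos\theta + i\sin\theta)^n = \cos n\theta + i\sin n\theta.$$

Expanding the left side via the binomial theorem gives

$$(\cos\theta + i\sin\theta)^n = \sum_{k=0}^{n} \binom{n}{k}\, i^k \cos^{n-k}\theta\,\sin^{k}\theta.$$

The real part yields $\cos n\theta$ and the imaginary part yields $\sin n\theta$, resulting in explicit sums:

$$\cos n\theta = \sum_{j=0}^{\lfloor n/2 \rfloor} (-1)^j \binom{n}{2j} \cos^{n-2j}\theta\,\sin^{2j}\theta,$$

$$\sin n\theta = \sum_{j=0}^{\lfloor (n-1)/2 \rfloor} (-1)^j \binom{n}{2j+1} \cos^{n-2j-1}\theta\,\sin^{2j+1}\theta.$$

These expressions, while useful for small $n$, become cumbersome for larger values because the number of terms in the sums grows linearly with $n$.

An efficient alternative uses recurrence relations derived from angle addition formulas. Specifically,

$$\sin n\theta = 2\cos\theta\,\sin\big((n-1)\theta\big) - \sin\big((n-2)\theta\big),$$

with initial conditions $\sin 0 = 0$ and $\sin\theta$; a similar relation holds for cosine:

$$\cos n\theta = 2\cos\theta\,\cos\big((n-1)\theta\big) - \cos\big((n-2)\theta\big).$$

This allows computation in $O(n)$ steps, forward or backward; its accuracy depends on careful implementation, since rounding errors can accumulate over many steps.

The multiple-angle formulas connect directly to Chebyshev polynomials. The Chebyshev polynomial of the first kind satisfies

$$T_n(\cos\theta) = \cos n\theta,$$

while the Chebyshev polynomial of the second kind relates via

$$U_{n-1}(\cos\theta) = \frac{\sin n\theta}{\sin\theta}.$$

These polynomials provide a polynomial representation, enabling evaluation through their own recurrences or explicit forms, and are orthogonal on $[-1, 1]$ with respect to the weight functions $1/\sqrt{1-x^2}$ (first kind) and $\sqrt{1-x^2}$ (second kind).

For large $n$, direct evaluation via binomial expansion or basic recurrences can be inefficient or prone to overflow and precision loss in finite precision. Computational approaches in signal processing and numerical analysis use the fast Fourier transform (FFT) to evaluate many multiple-angle terms together within broader Fourier sums or Chebyshev series, at an amortized cost of $O(\log n)$ per term.

Half-angle formulas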
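
The three-term recurrences for $\cos n\theta$ and $\sin n\theta$ described above can be sketched in Python as follows. This is a minimal illustration, assuming a non-negative integer `n`; the function name `multiple_angle` is hypothetical, and no attempt is made to control rounding-error growth for large `n`.

```python
import math

def multiple_angle(theta: float, n: int) -> tuple[float, float]:
    """Return (cos(n*theta), sin(n*theta)) using f(n) = 2*cos(theta)*f(n-1) - f(n-2)."""
    if n < 0:
        raise ValueError("n must be a non-negative integer")
    c_prev, s_prev = 1.0, 0.0                          # cos(0), sin(0)
    if n == 0:
        return c_prev, s_prev
    c_curr, s_curr = math.cos(theta), math.sin(theta)  # cos(theta), sin(theta)
    two_cos = 2.0 * c_curr
    for _ in range(n - 1):
        # Advance both sequences by one step of the recurrence.
        c_prev, c_curr = c_curr, two_cos * c_curr - c_prev
        s_prev, s_curr = s_curr, two_cos * s_curr - s_prev
    return c_curr, s_curr
```

The cosine sequence computed here is the Chebyshev recurrence for $T_n(\cos\theta)$, so the same loop evaluates $T_n(x)$ at $x = \cos\theta$.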

Half-angle formulas express the trigonometric functions of half an angle in terms of the functions of the full angle. These identities are particularly useful for computing exact values of trigonometric functions at angles that are halves of known angles, often involving square roots, and they play a key role in simplifying expressions and solving equations in trigonometry.[34][35]

The half-angle formulas for sine and cosine can be derived from the double-angle formulas by substituting $\theta/2$ for the variable and solving for the half-angle terms using the Pythagorean identity $\sin^2 x + \cos^2 x = 1$. Starting with the double-angle formula for cosine, $\cos\theta = 1 - 2\sin^2(\theta/2)$, rearrange to isolate the sine term: $2\sin^2(\theta/2) = 1 - \cos\theta$, so $\sin^2(\theta/2) = \frac{1 - \cos\theta}{2}$, and thus

$$\sin\frac{\theta}{2} = \pm\sqrt{\frac{1 - \cos\theta}{2}}.$$

Similarly, from $\cos\theta = 2\cos^2(\theta/2) - 1$, rearrange to $\cos^2(\theta/2) = \frac{1 + \cos\theta}{2}$, yielding

$$\cos\frac{\theta}{2} = \pm\sqrt{\frac{1 + \cos\theta}{2}}.$$

The choice of sign in these formulas depends on the quadrant in which $\theta/2$ lies: sine is positive in quadrants I and II, negative in III and IV; cosine is positive in I and IV, negative in II and III.[35][36]

For tangent, the half-angle formula can be obtained by dividing the sine half-angle formula by the cosine half-angle formula, which gives $\tan\frac{\theta}{2} = \pm\sqrt{\frac{1 - \cos\theta}{1 + \cos\theta}}$ with the sign determined by the quadrant of $\theta/2$ (tangent positive in I and III, negative in II and IV), or by alternative manipulations of the double-angle identities. Two common equivalent forms are

$$\tan\frac{\theta}{2} = \frac{1 - \cos\theta}{\sin\theta} \qquad\text{and}\qquad \tan\frac{\theta}{2} = \frac{\sin\theta}{1 + \cos\theta}.$$

These two expressions avoid square roots, so no separate sign choice is needed, and they are especially useful in integral substitutions, such as the Weierstrass substitution.[34][35]

Reduction and Conversion Formulas
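
The sign selection in the half-angle formulas described above can be made concrete with a short Python sketch. The function name `half_angle` is a hypothetical helper; it computes the magnitudes from $\cos\theta$ alone and picks the signs from the quadrant of $\theta/2$.

```python
import math

def half_angle(theta: float) -> tuple[float, float]:
    """Return (sin(theta/2), cos(theta/2)) via the half-angle square-root formulas."""
    c = math.cos(theta)
    half = (theta / 2) % (2 * math.pi)        # locate theta/2 within one full turn
    # Sine is non-negative in quadrants I and II; cosine in quadrants I and IV.
    sin_sign = 1.0 if half <= math.pi else -1.0
    cos_sign = 1.0 if (half <= math.pi / 2 or half >= 3 * math.pi / 2) else -1.0
    sin_half = sin_sign * math.sqrt(max(0.0, (1 - c) / 2))
    cos_half = cos_sign * math.sqrt(max(0.0, (1 + c) / 2))
    return sin_half, cos_half
```

For $\theta = \pi/6$ the first component reproduces the exact value $\sin(\pi/12) = (\sqrt{6} - \sqrt{2})/4$ up to rounding.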

Power-reduction formulas

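Power-reduction formulas express powers of trigonometric functions, such as sine and cosine, in terms of multiple-angle functions, which simplifies integration, Fourier series expansions, and other analytical tasks in mathematics and physics.[37] These identities originate from rearranging double-angle formulas.[37]

The fundamental power-reduction formulas for squared terms are:

$$\sin^2\theta = \frac{1 - \cos 2\theta}{2}, \qquad \cos^2\theta = \frac{1 + \cos 2\theta}{2}.$$

These follow directly from solving the double-angle identities $\cos 2\theta = 1 - 2\sin^2\theta$ and $\cos 2\theta = 2\cos^2\theta - 1$ for the squared functions.[37]

For cubic powers, the formulas are:

$$\sin^3\theta = \frac{3\sin\theta - \sin 3\theta}{4}, \qquad \cos^3\theta = \frac{3\cos\theta + \cos 3\theta}{4}.$$

These derive from the triple-angle identities $\sin 3\theta = 3\sin\theta - 4\sin^3\theta$ and $\cos 3\theta = 4\cos^3\theta - 3\cos\theta$, rearranged to isolate the cubed terms.[38]

In general, higher powers can be reduced using multiple-angle expressions involving binomial coefficients. For even powers, $\cos^{2n}\theta$ and $\sin^{2n}\theta$ expand as a constant term plus a finite sum of cosines of even multiples of $\theta$:

$$\cos^{2n}\theta = \frac{1}{2^{2n}}\binom{2n}{n} + \frac{2}{2^{2n}}\sum_{k=0}^{n-1}\binom{2n}{k}\cos\big(2(n-k)\theta\big),$$

$$\sin^{2n}\theta = \frac{1}{2^{2n}}\binom{2n}{n} + \frac{2}{2^{2n}}\sum_{k=0}^{n-1}(-1)^{n-k}\binom{2n}{k}\cos\big(2(n-k)\theta\big).$$

For odd powers like $\sin^{2n+1}\theta$ or $\cos^{2n+1}\theta$, the expressions similarly reduce to sums of sines or cosines of odd multiples of the angle, often derived recursively from lower powers or via the binomial theorem applied to complex exponentials.[38]

Conversely, the multiple-angle formula for $\cos n\theta$ expresses it as a polynomial in powers of $\cos\theta$ using the binomial theorem on the identity $(\cos\theta + i\sin\theta)^n = \cos n\theta + i\sin n\theta$, yielding Chebyshev polynomials of the first kind: $\cos n\theta = T_n(\cos\theta)$, where $T_n$ is the $n$th-degree polynomial.[38] These bidirectional reductions extend to arbitrary higher even and odd powers, facilitating computations in advanced trigonometric analysis.[38]

Product-to-sum identities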
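
The general even-power reduction for cosine discussed above, $\cos^{2n}\theta = 2^{-2n}\left[\binom{2n}{n} + 2\sum_{k=0}^{n-1}\binom{2n}{k}\cos(2(n-k)\theta)\right]$, can be checked with a short Python sketch. The function name `cos_even_power` is hypothetical and introduced only for this illustration.

```python
from math import comb, cos

def cos_even_power(theta: float, n: int) -> float:
    """Evaluate cos(theta)**(2n) as a constant plus cosines of even multiples of theta."""
    total = float(comb(2 * n, n))                  # constant (zero-frequency) term
    for k in range(n):
        total += 2 * comb(2 * n, k) * cos(2 * (n - k) * theta)
    return total / 4 ** n                          # 2**(2n) == 4**n
```

With `n = 1` this reduces to the familiar $\cos^2\theta = (1 + \cos 2\theta)/2$.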

The product-to-sum identities express the product of two trigonometric functions (specifically sines and/or cosines) as a sum or difference of sines or cosines of sums and differences of the angles. These identities, historically known as prosthaphaeresis formulas, were instrumental in 16th-century computations for approximating products using trigonometric tables and later became essential in simplifying expressions in calculus and analysis. They are particularly fundamental in signal processing and Fourier analysis, where they enable the evaluation of integrals of products of sinusoidal functions to establish their orthogonality, a key property for decomposing signals into orthogonal basis functions.[39][40]

The four primary product-to-sum identities are:

$$\cos\alpha\cos\beta = \tfrac{1}{2}\big[\cos(\alpha-\beta) + \cos(\alpha+\beta)\big],$$

$$\sin\alpha\sin\beta = \tfrac{1}{2}\big[\cos(\alpha-\beta) - \cos(\alpha+\beta)\big],$$

$$\sin\alpha\cos\beta = \tfrac{1}{2}\big[\sin(\alpha+\beta) + \sin(\alpha-\beta)\big],$$

$$\cos\alpha\sin\beta = \tfrac{1}{2}\big[\sin(\alpha+\beta) - \sin(\alpha-\beta)\big].$$

These formulas can be derived directly from the angle addition and subtraction formulas by appropriate addition or subtraction of the relevant identities. For instance, consider the cosine addition and subtraction formulas:

$$\cos(\alpha+\beta) = \cos\alpha\cos\beta - \sin\alpha\sin\beta, \qquad \cos(\alpha-\beta) = \cos\alpha\cos\beta + \sin\alpha\sin\beta.$$

Adding these equations yields:

$$\cos(\alpha+\beta) + \cos(\alpha-\beta) = 2\cos\alpha\cos\beta.$$

Dividing by 2 gives the product-to-sum identity for $\cos\alpha\cos\beta$. The other identities follow analogously: subtracting the first equation from the second produces the identity for $\sin\alpha\sin\beta$, while similar manipulations of the sine addition and subtraction formulas yield the mixed sine-cosine identities.[39]

The prosthaphaeresis formulas originated in the 16th century; the sine product identity was discovered around 1510 by Johannes Werner, the cosine product by Joost Bürgi around 1585, and both were first published in 1588 by Nicolai Reymers Ursus in Fundamentum astronomicum.[40]

Sum-to-product identities
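
The use of product-to-sum identities to establish orthogonality of sinusoids, mentioned above, can be illustrated numerically. Since $\cos mx\cos nx = \tfrac12[\cos((m-n)x) + \cos((m+n)x)]$, the integral over $[0, 2\pi]$ is $\pi$ when $m = n \geq 1$ and $0$ when $m \neq n$. The Python sketch below approximates the integral with a uniform Riemann sum; the function name `cos_inner_product` and the sample count are assumptions made for this example.

```python
import math

def cos_inner_product(m: int, n: int, samples: int = 1024) -> float:
    """Approximate the integral of cos(m*x)*cos(n*x) over [0, 2*pi] by a uniform Riemann sum."""
    h = 2 * math.pi / samples
    return h * sum(math.cos(m * k * h) * math.cos(n * k * h) for k in range(samples))
```

For positive integers well below the sample count, the sum should be close to $\pi$ for equal frequencies and close to zero otherwise.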

Sum-to-product identities express the sum or difference of two sine or cosine functions as a product involving sine and cosine of average and half-difference angles. These identities are particularly useful for condensing trigonometric expressions, factoring trigonometric expressions, and solving equations by transforming sums into factorable products.[41]

The standard sum-to-product formulas are as follows. For sines:

$$\sin\alpha \pm \sin\beta = 2\sin\frac{\alpha \pm \beta}{2}\cos\frac{\alpha \mp \beta}{2}.$$

For cosines:

$$\cos\alpha + \cos\beta = 2\cos\frac{\alpha+\beta}{2}\cos\frac{\alpha-\beta}{2}, \qquad \cos\alpha - \cos\beta = -2\sin\frac{\alpha+\beta}{2}\sin\frac{\alpha-\beta}{2}.$$

These formulas can be derived from the sum and difference identities by substituting appropriate variables, such as letting $\alpha = A + B$ and $\beta = A - B$ (so that $A = \frac{\alpha+\beta}{2}$ and $B = \frac{\alpha-\beta}{2}$), which turns the sums into products.[41]

In applications, sum-to-product identities facilitate the simplification of trigonometric equations. For instance, to solve $\sin 3x + \sin x = 0$, apply the identity to rewrite it as $2\sin 2x\cos x = 0$, yielding solutions from $\sin 2x = 0$ or $\cos x = 0$. This approach often reduces the equation to more manageable forms, aiding in finding roots or verifying solutions.[41]

Linear Combinations
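
As an illustration of solving an equation with a sum-to-product identity (as discussed above), consider the example equation $\sin 3x + \sin x = 0$, chosen here for illustration. The identity gives $2\sin 2x\cos x = 0$, so the roots in $[0, 2\pi)$ come from $\sin 2x = 0$ or $\cos x = 0$. The Python sketch below collects them; the function name `roots_sin3x_plus_sinx` is hypothetical.

```python
import math

def roots_sin3x_plus_sinx() -> list[float]:
    """Roots of sin(3x) + sin(x) = 0 in [0, 2*pi), read off from 2*sin(2x)*cos(x) = 0."""
    from_sin_2x = [k * math.pi / 2 for k in range(4)]     # sin(2x) = 0  ->  x = k*pi/2
    from_cos_x = [math.pi / 2, 3 * math.pi / 2]           # cos(x) = 0
    # Round before deduplicating so that numerically equal roots merge.
    return sorted({round(r, 12) for r in from_sin_2x + from_cos_x})
```

Here the roots of $\cos x = 0$ already appear among those of $\sin 2x = 0$, so four distinct roots remain.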

Combinations of sines and cosines

Linear combinations of the form $a\sin x + b\cos x$, where $a$ and $b$ are constants, can be rewritten as a single sine or cosine function multiplied by an amplitude factor and shifted by a phase angle. This form simplifies analysis in applications such as wave superposition and harmonic motion, where multiple oscillatory terms need consolidation into an equivalent single oscillation. The process relies on the angle addition formula for sine: $\sin(x + \varphi) = \sin x\cos\varphi + \cos x\sin\varphi$.

To express $a\sin x + b\cos x$ as $R\sin(x + \varphi)$, equate coefficients by expanding the right side: $R\sin(x+\varphi) = R\cos\varphi\,\sin x + R\sin\varphi\,\cos x$. Thus, $a = R\cos\varphi$ and $b = R\sin\varphi$. Squaring and adding these equations yields $a^2 + b^2 = R^2$, so $R = \sqrt{a^2 + b^2}$ (taking the positive root for amplitude). Then, $\tan\varphi = b/a$, with $\varphi$ chosen in the appropriate quadrant based on the signs of $a$ and $b$ to match the coefficients. This derivation follows directly from the sine addition formula.

An alternative form is $R\cos(x - \alpha)$, using the cosine subtraction formula $\cos(x - \alpha) = \cos x\cos\alpha + \sin x\sin\alpha$. Equating gives $a = R\sin\alpha$ and $b = R\cos\alpha$, so again $R = \sqrt{a^2+b^2}$ and $\tan\alpha = a/b$, with quadrant adjustment. Both representations are equivalent up to the choice of phase, and the amplitude establishes the maximum value of the expression, which is $R$.

The auxiliary angle method provides a geometric interpretation: consider a right triangle with opposite side $b$ and adjacent side $a$ to angle $\varphi$, so $\tan\varphi = b/a$ and hypotenuse $R$. Dividing the original expression by $R$ yields $\frac{a}{R}\sin x + \frac{b}{R}\cos x = \cos\varphi\sin x + \sin\varphi\cos x = \sin(x+\varphi)$. This vector addition view treats $a$ and $b$ as components of a resultant phasor of length $R$ at phase $\varphi$. These identities apply specifically to two-term combinations and serve as a foundation for handling phase shifts in more general contexts.

Arbitrary phase shifts
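
The amplitude-phase conversion for $a\sin x + b\cos x$ described above can be sketched in Python. Using `math.atan2` handles the quadrant selection for the phase automatically; the function name `amplitude_phase` is a hypothetical helper introduced for illustration.

```python
import math

def amplitude_phase(a: float, b: float) -> tuple[float, float]:
    """Return (R, phi) such that a*sin(x) + b*cos(x) == R*sin(x + phi) for all x."""
    amplitude = math.hypot(a, b)      # R = sqrt(a^2 + b^2)
    phase = math.atan2(b, a)          # solves a = R*cos(phi), b = R*sin(phi)
    return amplitude, phase
```

For example, `amplitude_phase(-3.0, 4.0)` gives an amplitude of 5 with a phase in the second quadrant, which the plain ratio `b / a` alone could not distinguish from the fourth.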

Arbitrary phase shifts in trigonometric functions generalize the representation of sinusoidal waves by incorporating a phase angle φ, allowing for the expression of a sinusoid as sin(θ + φ) or cos(θ + φ), where θ is the primary angle and φ accounts for any offset. This formulation expands upon linear combinations of sines and cosines, treating the coefficients as cos φ and sin φ to capture the phase explicitly. These identities derive from the geometric properties of angles in triangles or algebraic manipulations of trigonometric definitions.[13]

The fundamental identities for sine and cosine with an arbitrary phase shift are:

$$\sin(\theta + \varphi) = \sin\theta\cos\varphi + \cos\theta\sin\varphi, \qquad \cos(\theta + \varphi) = \cos\theta\cos\varphi - \sin\theta\sin\varphi.$$

For the tangent function, the phase shift identity is:

$$\tan(\theta + \varphi) = \frac{\tan\theta + \tan\varphi}{1 - \tan\theta\tan\varphi}.$$

This follows from dividing the sine addition formula by the cosine addition formula, assuming the denominator is nonzero to avoid undefined points.[13]

In practical applications, these phase shift identities are essential for modeling periodic phenomena with offsets. In mechanical vibrations, the formulas represent the displacement of oscillating systems, such as springs or pendulums, where the phase φ accounts for initial conditions or damping effects that shift the waveform relative to the driving force.[42]

Superposition of multiple sinusoids

The superposition of multiple sinusoids commonly appears in the form

$$\sum_{k=1}^{n} a_k\cos(kx + \varphi_k),$$

where $a_k$ are amplitudes and $\varphi_k$ are phase shifts, representing the partial sum of a Fourier series for a periodic function. This expression generalizes the linear combinations of individual sinusoids and is fundamental in signal processing and harmonic analysis.[43]

For the specific case of harmonics with unit amplitudes and zero phase shifts (i.e., $\varphi_k = 0$), the partial sum has a closed form. The sum of cosines

$$\frac{1}{2} + \sum_{k=1}^{n}\cos kx = \frac{\sin\left(\left(n + \tfrac{1}{2}\right)x\right)}{2\sin(x/2)}$$

holds for $x$ not an integer multiple of $2\pi$, providing a closed-form expression for equally spaced frequencies. This identity is derived from the geometric series summation of complex exponentials and is central to the convergence properties of Fourier series.[44]

The function $D_n(x) = 1 + 2\sum_{k=1}^{n}\cos kx = \frac{\sin\left(\left(n + \frac{1}{2}\right)x\right)}{\sin(x/2)}$, known as the Dirichlet kernel, encapsulates this partial sum and serves as the convolution kernel of the Fourier partial sum operator. Introduced by Dirichlet in his 1829 analysis of trigonometric series convergence, it highlights how finite superpositions approximate periodic functions.[45]

Regarding convergence, partial sums using the Dirichlet kernel exhibit the Gibbs phenomenon near discontinuities of the target function, where overshoots of approximately 9% of the jump height persist regardless of $n$, preventing uniform convergence. This oscillatory behavior was first noted by Wilbraham in 1848 and later analyzed by Gibbs in 1899.[46] These finite superpositions form the basis for Fourier analysis, with extensions to the continuous Fourier transform for aperiodic signals via limiting processes on the kernel.[43]

Advanced Algebraic Identities
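
The closed form of the cosine partial sum discussed above can be compared against the direct sum. The Python sketch below uses the normalization $D_n(x) = 1 + 2\sum_{k=1}^{n}\cos kx = \sin((n+\tfrac12)x)/\sin(x/2)$, which is one common convention and is assumed here; the function names are hypothetical.

```python
import math

def dirichlet_sum(n: int, x: float) -> float:
    """Direct evaluation of 1 + 2*sum_{k=1..n} cos(k*x)."""
    return 1.0 + 2.0 * sum(math.cos(k * x) for k in range(1, n + 1))

def dirichlet_closed(n: int, x: float) -> float:
    """Closed form sin((n + 1/2)*x) / sin(x/2), valid when x is not a multiple of 2*pi."""
    return math.sin((n + 0.5) * x) / math.sin(x / 2)
```

As $x$ approaches a multiple of $2\pi$ the closed form becomes a 0/0 expression whose limit is $2n + 1$, which is the value the direct sum returns there.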

Lagrange's trigonometric identities

Lagrange's trigonometric identities relate the squared sums of sines and cosines to sums of cosines of angle differences, providing a useful tool for analyzing the aggregation of angular quantities. These identities are particularly valuable in contexts where angles represent directions or phases, allowing the magnitude of their vector sum to be expressed in terms of pairwise interactions. The core identity for $n$ arbitrary angles $\theta_k$ is

$$\left(\sum_{k=1}^{n}\cos\theta_k\right)^2 + \left(\sum_{k=1}^{n}\sin\theta_k\right)^2 = n + 2\sum_{1 \le i < j \le n}\cos(\theta_i - \theta_j).$$

This equation arises from expanding the left side using the product-to-sum formulas for sine and cosine, where the terms involving $\cos(\theta_i + \theta_j)$ cancel out, leaving the diagonal terms (which sum to $n$) and the $\cos(\theta_i - \theta_j)$ cross terms. The identity holds for any finite set of angles.

The formula admits a geometric interpretation as the squared length of the resultant vector obtained by adding $n$ unit vectors in the plane, with directions given by the angles $\theta_k$. Each unit vector can be represented as $v_k = (\cos\theta_k, \sin\theta_k)$, and the squared magnitude of their sum is the sum of squared magnitudes plus twice the sum of pairwise dot products:

$$\Big|\sum_k v_k\Big|^2 = \sum_k |v_k|^2 + 2\sum_{i<j} v_i \cdot v_j = n + 2\sum_{i<j}\cos(\theta_i - \theta_j),$$

since the dot product of two unit vectors is the cosine of the angle between them. This vector perspective highlights the identity's role in quantifying coherence or alignment among directions.

One derivation uses complex numbers, representing each angle by the unit complex number $e^{i\theta_k} = \cos\theta_k + i\sin\theta_k$. With the sum $S = \sum_k e^{i\theta_k}$, one has $|S|^2 = S\bar{S} = \sum_{k,j} e^{i(\theta_k - \theta_j)}$. The diagonal terms ($k = j$) contribute $n$, while the off-diagonal terms yield $2\sum_{i<j}\cos(\theta_i - \theta_j)$, as the imaginary parts (sines) cancel due to antisymmetry. This approach uses Euler's formula and properties of the exponential function.
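
The complex-number derivation above suggests a direct numerical check of the identity: compute $|S|^2$ for $S = \sum_k e^{i\theta_k}$ and compare it with $n + 2\sum_{i<j}\cos(\theta_i - \theta_j)$. The following Python sketch does this; the function names `resultant_squared` and `pairwise_form` are hypothetical.

```python
import cmath
import math
from itertools import combinations

def resultant_squared(thetas: list[float]) -> float:
    """Squared length of the sum of unit vectors with directions thetas."""
    return abs(sum(cmath.exp(1j * t) for t in thetas)) ** 2

def pairwise_form(thetas: list[float]) -> float:
    """n + 2 * sum over pairs i < j of cos(theta_i - theta_j)."""
    pair_sum = sum(math.cos(a - b) for a, b in combinations(thetas, 2))
    return len(thetas) + 2 * pair_sum
```

When all angles coincide, both expressions equal $n^2$, the maximum allowed by the Cauchy-Schwarz bound.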
Although the squared resultant can be bounded using the Cauchy-Schwarz inequality, $|\sum_k v_k|^2 \le n\sum_k |v_k|^2 = n^2$, with equality when all $\theta_k$ are equal, the identity itself is the exact expansion shown, not a direct application of the inequality. Named after the 18th-century mathematician Joseph-Louis Lagrange, who made significant contributions to trigonometric applications in mechanics and analysis during that era, these identities have found applications in statistics, particularly in circular and directional data analysis. In circular statistics, the left side divided by $n^2$ gives the square of the mean resultant length $\bar{R}$, a measure of angular concentration (values near zero indicate high dispersion); the right side expresses $\bar{R}^2 = \frac{1}{n} + \frac{2}{n^2}\sum_{i<j}\cos(\theta_i - \theta_j)$, facilitating inference on clustering or uniformity in datasets like wind directions or animal orientations.

Certain linear fractional transformations

Linear fractional transformations provide a framework for expressing trigonometric functions as rational functions, particularly through connections to stereographic projection and projective geometry. The tangent half-angle substitution $t = \tan(\theta/2)$, often attributed to Weierstrass, emerges from projecting the unit circle onto a line via stereographic projection. This maps the point $(\cos\theta, \sin\theta)$ to the coordinate $t$, enabling the representation of sine and cosine as rational functions of $t$. Specifically,

$$\sin\theta = \frac{2t}{1 + t^2}, \qquad \cos\theta = \frac{1 - t^2}{1 + t^2},$$

with the differential $d\theta = \frac{2\,dt}{1 + t^2}$. These formulas transform integrals or equations involving rational combinations of sine and cosine into algebraic forms in $t$, preserving the structure of trigonometric identities under substitution.[47]

Sine and cosine themselves are rational functions of degree two in $t$, while the complex exponential $e^{i\theta} = \frac{1 + it}{1 - it}$ is a linear fractional transformation of $t$. In general, if $f(t) = \frac{at + b}{ct + d}$ (with $ad - bc \neq 0$) is a linear fractional transformation, then replacing $t$ by $f(t)$ in the expressions for $\sin\theta$ and $\cos\theta$ yields rational functions of $t$ whose coefficients are determined by the originals and the quadratic denominator $1 + t^2$. For instance, the identity map $f(t) = t$ recovers the standard sine formula, while $f(t) = \frac{t + \tan(\varphi/2)}{1 - t\tan(\varphi/2)}$ corresponds to the phase shift $\theta \mapsto \theta + \varphi$. Compositions of such transformations are again Möbius transformations, which facilitates the derivation of new trigonometric relations from known ones. The Weierstrass substitution represents a specific instance of this broader mechanism, often used for integration, whereas the linear fractional view emphasizes transformations across the entire class of such functions.[47][48]

These transformations also connect to geometric invariants via the cross-ratio, preserved by all Möbius transformations. For four points on the unit circle at angles $\theta_1, \theta_2, \theta_3, \theta_4$, with $t_j = \tan(\theta_j/2)$, the cross-ratio can be expressed using sines of half-angle differences,

$$\frac{(t_1 - t_3)(t_2 - t_4)}{(t_1 - t_4)(t_2 - t_3)} = \frac{\sin\frac{\theta_1 - \theta_3}{2}\,\sin\frac{\theta_2 - \theta_4}{2}}{\sin\frac{\theta_1 - \theta_4}{2}\,\sin\frac{\theta_2 - \theta_3}{2}},$$

linking projective geometry to trigonometric measures of angular separations. This trigonometric form of the cross-ratio shows how stereographic projection translates circular geometries into linear ones, with applications in conformal mapping and non-Euclidean models where angles and distances are computed via such ratios.[49][50]

Hermite's cotangent identity
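
The tangent half-angle substitution $t = \tan(\theta/2)$ discussed above can be checked numerically. The Python sketch below recovers $\sin\theta$ and $\cos\theta$ from $t$, both through the rational formulas and through the complex expression $(1 + it)/(1 - it)$; the function names are hypothetical and introduced only for this illustration.

```python
import math

def from_half_angle_tangent(t: float) -> tuple[float, float]:
    """Return (sin(theta), cos(theta)) from t = tan(theta/2)."""
    denom = 1.0 + t * t
    return 2.0 * t / denom, (1.0 - t * t) / denom

def unit_complex_from_t(t: float) -> complex:
    """Return e^{i*theta} as the Mobius image (1 + i*t) / (1 - i*t) of t = tan(theta/2)."""
    return (1 + 1j * t) / (1 - 1j * t)
```

The real and imaginary parts of `unit_complex_from_t(t)` should match the cosine and sine returned by `from_half_angle_tangent(t)`.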

Hermite's cotangent identity provides a finite partial fraction decomposition for the product of cotangent functions, expressing it as a constant plus a linear combination of individual cotangents. Specifically, for distinct complex numbers $a_1, \ldots, a_n$ such that no two differ by an integer multiple of $\pi$,

$$\prod_{k=1}^{n}\cot(z - a_k) = \cos\frac{n\pi}{2} + \sum_{k=1}^{n} A_{n,k}\cot(z - a_k),$$

where the coefficients are given by $A_{n,k} = \prod_{j \neq k}\cot(a_k - a_j)$. This identity serves as a finite analog to the infinite partial fraction expansion of the cotangent function itself.

The identity is named after the French mathematician Charles Hermite, who discovered it in the 19th century and published it in 1872.[51] Hermite's work built on earlier developments in complex analysis, particularly the infinite series representation $\pi\cot(\pi z) = \frac{1}{z} + \sum_{m=1}^{\infty}\frac{2z}{z^2 - m^2}$, which originates from Eisenstein's summation formula and relates to the poles of the cotangent. The derivation relies on complex analysis techniques, such as considering the meromorphic function formed by the product of cotangents and applying Liouville's theorem after subtracting its principal parts at the poles $z = a_k + m\pi$. This approach is related to the reflection formula for the gamma function, $\Gamma(z)\Gamma(1 - z) = \frac{\pi}{\sin(\pi z)}$, whose logarithmic derivative yields the cotangent expansion.[52]

A notable special case arises in evaluating finite products of sines, obtained by suitable choices of the $a_k$ and taking limits or residues related to the cotangent poles. In particular, setting the points symmetrically leads to the identity

$$\prod_{k=1}^{n-1}\sin\frac{k\pi}{n} = \frac{n}{2^{n-1}}.$$

This result follows from applying Hermite's identity to the roots of unity in the complex plane, connecting the product's value to the residue at $z = 0$ in the cotangent expansion.

Finite products of trigonometric functions
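
Hermite's cotangent identity, as stated above in the form $\prod_k \cot(z - a_k) = \cos(n\pi/2) + \sum_k A_{n,k}\cot(z - a_k)$ with $A_{n,k} = \prod_{j\neq k}\cot(a_k - a_j)$, can be spot-checked for real arguments. The Python sketch below is only a numerical illustration; the helper names `cot`, `hermite_product`, and `hermite_expansion` are hypothetical, and the inputs are assumed to avoid the poles.

```python
import math

def cot(x: float) -> float:
    """Cotangent of a real argument (assumes sin(x) != 0)."""
    return math.cos(x) / math.sin(x)

def hermite_product(z: float, a: list[float]) -> float:
    """Left-hand side: product of cot(z - a_k)."""
    return math.prod(cot(z - ak) for ak in a)

def hermite_expansion(z: float, a: list[float]) -> float:
    """Right-hand side: cos(n*pi/2) + sum_k A_k * cot(z - a_k)."""
    n = len(a)
    total = math.cos(n * math.pi / 2)
    for k, ak in enumerate(a):
        # A_k is the product of cot(a_k - a_j) over all j different from k.
        coeff = math.prod(cot(ak - aj) for j, aj in enumerate(a) if j != k)
        total += coeff * cot(z - ak)
    return total
```

For two points the constant term is $\cos\pi = -1$, and the identity reduces to a rearrangement of the cotangent difference formula.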

Finite products of trigonometric functions encompass identities that express the product of sines (or cosines) evaluated at angles in arithmetic progression as a closed-form expression, often involving a multiple-angle trigonometric function. These identities typically derive from the factorization of polynomials in the complex plane, using the roots of unity, or from the reflection formula for the gamma function in limiting cases, though finite versions rely on exponential representations or inductive proofs from angle-addition formulas. Such products go beyond specific cases like Hermite's cotangent identity, which applies to cotangents, by focusing on sines with starting angle θ and common difference δ.[53]

A key identity for products of sines is:

$$\sin(n\theta) = 2^{n-1}\prod_{k=0}^{n-1}\sin\left(\theta + \frac{k\pi}{n}\right).$$

This holds for positive integer n and can be proved using De Moivre's theorem or by considering the product of complex exponentials corresponding to shifted angles.[54] Here the common difference is δ = π/n, which provides a direct link between the multiple-angle sine and the finite product. Variants arise by adjusting the phase or using cosine substitutions via Euler's formula, sin φ = (e^{iφ} - e^{-iφ})/(2i), where the product transforms into a ratio of polynomials in e^{iθ}.[55]

Dividing the identity by sin θ and letting θ → 0 yields the classical fixed-angle product:

$$\prod_{k=1}^{n-1}\sin\frac{k\pi}{n} = \frac{n}{2^{n-1}}.$$

This formula evaluates explicitly for small n; for instance, when n=3, the product sin(π/3) sin(2π/3) = (√3/2)(√3/2) = 3/4, matching 3/2^{2}.[56] For an arbitrary common difference δ, the product ∏_{k=0}^{n-1} sin(θ + kδ) does not in general reduce to a single multiple-angle sine. The analogous closed form with a summed argument applies to the sum rather than the product: ∑_{k=0}^{n-1} sin(θ + kδ) = sin(nδ/2) sin(θ + (n-1)δ/2) / sin(δ/2), obtained via summation of complex exponentials or the Dirichlet kernel in Fourier analysis.[55] More advanced finite products connect trigonometric evaluations to algebraic sequences.
For example, for odd n the Fibonacci number F_n can be written as a finite product of trigonometric terms; this stems from Binet's formula and polynomial matching at specific points like y = 1. Similar identities exist for Lucas numbers using cosine products.[55] Further forms are derived analogously from evaluating cyclotomic polynomials at roots of unity.[53]

These identities apply to finding the roots of polynomials by trigonometric means, as the product form relates to the factorization of sin(nz) or of the Chebyshev polynomials of the second kind, U_{n-1}(cos θ) = sin(nθ)/sin θ, whose roots are cos(kπ/n) for k = 1, …, n-1. A substitution x = cos φ likewise solves equations such as 8x^3 - 4x^2 - 4x + 1 = 0, whose roots are cos(π/7), cos(3π/7), and cos(5π/7).[57]

Such formulas are also easy to verify numerically, for example in MATLAB or Python's NumPy. For n = 4 and θ = π/12, sin(4·π/12) = sin(π/3) = √3/2 ≈ 0.866, while 2^{3} ∏ sin(π/12 + kπ/4) for k = 0 to 3 computes as 8 · sin(π/12) · sin(π/3) · sin(7π/12) · sin(5π/6) ≈ 8 · 0.259 · 0.866 · 0.966 · 0.500 ≈ 0.866, in agreement with the identity.[54]

Exponential and Hyperbolic Relations

Relation to complex exponentials

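The exponential forms of sine, cosine, and tangent, together with De Moivre's theorem, can be checked numerically with Python's standard `cmath` module. The sketch below is illustrative only: the helper names `cos_exp`, `sin_exp`, `tan_exp`, and `de_moivre_gap` are assumptions made for this example, and the comparison tolerances are arbitrary.

```python
import cmath
import math


def cos_exp(theta: float) -> complex:
    """cos(theta) written as (e^{i theta} + e^{-i theta}) / 2."""
    return (cmath.exp(1j * theta) + cmath.exp(-1j * theta)) / 2


def sin_exp(theta: float) -> complex:
    """sin(theta) written as (e^{i theta} - e^{-i theta}) / (2i)."""
    return (cmath.exp(1j * theta) - cmath.exp(-1j * theta)) / 2j


def tan_exp(theta: float) -> complex:
    """tan(theta) as the ratio of the two exponential forms above."""
    e_pos, e_neg = cmath.exp(1j * theta), cmath.exp(-1j * theta)
    return (e_pos - e_neg) / (1j * (e_pos + e_neg))


def de_moivre_gap(theta: float, n: int) -> float:
    """|(cos t + i sin t)^n - (cos nt + i sin nt)|, expected to be near zero."""
    lhs = complex(math.cos(theta), math.sin(theta)) ** n
    rhs = complex(math.cos(n * theta), math.sin(n * theta))
    return abs(lhs - rhs)


if __name__ == "__main__":
    t = 0.7
    print(abs(cos_exp(t) - math.cos(t)), abs(sin_exp(t) - math.sin(t)))
    print(abs(tan_exp(t) - math.tan(t)), de_moivre_gap(t, 5))
```
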
Trigonometric functions can be expressed in terms of complex exponentials through Euler's formula, which states that for any real number $\theta$,

$$e^{i\theta} = \cos\theta + i\sin\theta.$$

This relation, first established by Leonhard Euler in his 1748 treatise Introductio in analysin infinitorum, links the exponential function with trigonometric functions via the imaginary unit $i$, where $i^2 = -1$. It provides a powerful analytic tool for deriving identities and understanding the periodic nature of sine and cosine as the imaginary and real parts, respectively, of the complex exponential.[58][59]

From Euler's formula, the expressions for sine and cosine in terms of exponentials follow directly by isolating the imaginary and real components. Specifically,

$$\cos\theta = \frac{e^{i\theta} + e^{-i\theta}}{2}, \qquad \sin\theta = \frac{e^{i\theta} - e^{-i\theta}}{2i}.$$

These forms arise by adding and subtracting Euler's formula and its complex conjugate, $e^{-i\theta} = \cos\theta - i\sin\theta$, which highlights the even and odd symmetries of cosine and sine, respectively. Such representations facilitate proofs of addition formulas and other identities through properties of exponents.[59][60]

The tangent function can similarly be rewritten using complex exponentials:

$$\tan\theta = \frac{e^{i\theta} - e^{-i\theta}}{i\left(e^{i\theta} + e^{-i\theta}\right)}.$$

This identity is obtained by dividing the exponential form of sine by that of cosine and simplifying the factor involving the imaginary unit. It proves useful in contexts involving ratios of trigonometric functions, such as in partial fraction decompositions or solving differential equations.[59]

Euler's formula also underpins De Moivre's theorem, which states that for any integer $n$, $(\cos\theta + i\sin\theta)^n = \cos(n\theta) + i\sin(n\theta)$, or equivalently, $(e^{i\theta})^n = e^{in\theta}$. This exponential interpretation extends De Moivre's original result from 1722, enabling straightforward computation of multiple-angle formulas by raising the complex exponential to powers, thus avoiding recursive trigonometric identities.[59]

Relation to complex hyperbolic functions

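The relations $\cos z = \cosh(iz)$, $\sin z = -i\sinh(iz)$, and $\tan z = -i\tanh(iz)$ hold for complex arguments, so they can be spot-checked with Python's `cmath` module. This is a minimal sketch under that assumption; `trig_via_hyperbolic` and `max_gap` are hypothetical helper names introduced only for this illustration.

```python
import cmath


def trig_via_hyperbolic(z: complex):
    """Return (sin z, cos z, tan z) computed from sinh, cosh, tanh of i*z."""
    iz = 1j * z
    return (-1j * cmath.sinh(iz), cmath.cosh(iz), -1j * cmath.tanh(iz))


def max_gap(z: complex) -> float:
    """Largest absolute difference from cmath's own sin, cos, tan at z."""
    s, c, t = trig_via_hyperbolic(z)
    return max(abs(s - cmath.sin(z)),
               abs(c - cmath.cos(z)),
               abs(t - cmath.tan(z)))


if __name__ == "__main__":
    z = 0.7 + 0.3j
    s, c, _ = trig_via_hyperbolic(z)
    # The gap and the Pythagorean residual should both be close to zero.
    print(max_gap(z), abs(s * s + c * c - 1))
```
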
The trigonometric functions sine and cosine can be expressed in terms of the complex hyperbolic functions sinh and cosh through substitution of imaginary arguments, providing a direct link between circular and hyperbolic geometries in the complex plane. Specifically,

$$\cos\theta = \cosh(i\theta), \qquad \sin\theta = -i\sinh(i\theta).$$

These relations follow from the exponential definitions of both sets of functions and hold for complex arguments, enabling the translation of trigonometric identities into their hyperbolic counterparts.[61]

A key example is the Pythagorean identity $\sin^2\theta + \cos^2\theta = 1$, which, upon substitution, yields $\cosh^2(i\theta) - \sinh^2(i\theta) = 1$. This aligns with the fundamental hyperbolic identity $\cosh^2 x - \sinh^2 x = 1$ evaluated at $x = i\theta$, demonstrating how the imaginary unit bridges the two systems while preserving structural similarities. Similar transformations apply to other identities, such as those for tangent:

$$\tan\theta = -i\tanh(i\theta),$$

derived from the ratio of the sine and cosine expressions.[62]

These connections serve as a mathematical bridge in applications requiring mixed trigonometric and hyperbolic behaviors, particularly in solving partial differential equations (PDEs) where complex arguments unify wave propagation models across Euclidean and hyperbolic domains. In quantum mechanics, the relations appear in Wick rotations to imaginary time, facilitating the transition from oscillatory solutions to exponential decays in path integral formulations.

Infinite Expansions

Series expansions

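Partial sums of the Maclaurin series give a direct way to see how quickly the expansions approach the library values. The Python sketch below is illustrative: the function names and the default of 10 terms are assumptions, and the tangent coefficients 1, 1/3, 2/15, 17/315, 62/2835 are the standard first five, hard-coded rather than generated from Bernoulli numbers.

```python
import math


def sin_series(theta: float, terms: int = 10) -> float:
    """Partial sum of sum_n (-1)^n theta^(2n+1) / (2n+1)!."""
    total, term = 0.0, theta
    for n in range(terms):
        total += term
        # Ratio of consecutive terms: -theta^2 / ((2n+2)(2n+3)).
        term *= -theta * theta / ((2 * n + 2) * (2 * n + 3))
    return total


def cos_series(theta: float, terms: int = 10) -> float:
    """Partial sum of sum_n (-1)^n theta^(2n) / (2n)!."""
    total, term = 0.0, 1.0
    for n in range(terms):
        total += term
        # Ratio of consecutive terms: -theta^2 / ((2n+1)(2n+2)).
        term *= -theta * theta / ((2 * n + 1) * (2 * n + 2))
    return total


def tan_series(theta: float) -> float:
    """First five terms of the tangent series, valid only for |theta| < pi/2."""
    coeffs = (1.0, 1 / 3, 2 / 15, 17 / 315, 62 / 2835)
    return sum(c * theta ** (2 * k + 1) for k, c in enumerate(coeffs))


if __name__ == "__main__":
    print(sin_series(1.0), math.sin(1.0))
    print(cos_series(1.0), math.cos(1.0))
    print(tan_series(0.3), math.tan(0.3))
```
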
The Taylor series expansions, also known as Maclaurin series when centered at zero, provide power series representations of trigonometric functions that are useful for approximations, especially near θ = 0. These series arise from the general Taylor expansion formula and reflect the analytic nature of the functions.[63]

The sine function has the infinite series expansion

$$\sin\theta = \sum_{n=0}^{\infty} \frac{(-1)^n}{(2n+1)!}\,\theta^{2n+1} = \theta - \frac{\theta^3}{3!} + \frac{\theta^5}{5!} - \cdots,$$

which converges for all real θ, as sine is an entire function in the complex plane.[64] Similarly, the cosine function expands as

$$\cos\theta = \sum_{n=0}^{\infty} \frac{(-1)^n}{(2n)!}\,\theta^{2n} = 1 - \frac{\theta^2}{2!} + \frac{\theta^4}{4!} - \cdots,$$

and also converges for all real θ.[65] These expansions can be derived from the exponential series via Euler's formula, relating trigonometric functions to complex exponentials.

For the tangent function, the series is more involved and incorporates the Bernoulli numbers B_{2n}:

$$\tan\theta = \sum_{n=1}^{\infty} \frac{(-1)^{n-1}\,2^{2n}\left(2^{2n} - 1\right) B_{2n}}{(2n)!}\,\theta^{2n-1} = \theta + \frac{\theta^3}{3} + \frac{2\theta^5}{15} + \frac{17\theta^7}{315} + \cdots.$$

This series has a radius of convergence of π/2, determined by the nearest singularities at θ = ±π/2 where cosine vanishes.[66] The coefficients are the derivatives of tan θ at θ = 0 divided by the corresponding factorials, and their growth is governed by these poles.[67]

In physics, the leading terms of these series enable small-angle approximations, such as sin θ ≈ θ and cos θ ≈ 1 for |θ| ≪ 1 radian, which simplify analyses of oscillatory systems. For instance, in the simple pendulum, this approximation yields the period T ≈ 2π √(L/g), valid for small amplitudes and widely used in introductory mechanics.[68] Such approximations also appear in optics for paraxial ray tracing and in astronomy for estimating angular sizes when distances greatly exceed object dimensions.[69]

Infinite product formulas

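Truncating the infinite products for sine and cosine after finitely many factors gives a rough feel for their slow convergence. The following Python sketch is only illustrative: `sin_product`, `cos_product`, and the default of 100,000 factors are assumptions chosen for this example, and the truncation error shrinks only in proportion to one over the number of factors.

```python
import math


def sin_product(x: float, factors: int = 100_000) -> float:
    """Truncation of x * prod_{n>=1} (1 - x^2 / (n^2 pi^2))."""
    prod = x
    for n in range(1, factors + 1):
        prod *= 1.0 - (x / (n * math.pi)) ** 2
    return prod


def cos_product(x: float, factors: int = 100_000) -> float:
    """Truncation of prod_{n>=1} (1 - 4 x^2 / ((2n-1)^2 pi^2))."""
    prod = 1.0
    for n in range(1, factors + 1):
        prod *= 1.0 - (2.0 * x / ((2 * n - 1) * math.pi)) ** 2
    return prod


if __name__ == "__main__":
    x = 1.0
    print(sin_product(x), math.sin(x))
    print(cos_product(x), math.cos(x))
    # The tangent is the quotient of the two truncated products.
    print(sin_product(x) / cos_product(x), math.tan(x))
```
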
Infinite product formulas express trigonometric functions as infinite products over their zeros in the complex plane, derived from the Weierstrass factorization theorem for entire functions.[70] This theorem states that any entire function can be represented as a product involving factors corresponding to its zeros, along with exponential factors to ensure convergence.[70] Sine and cosine are entire functions of order 1 with simple real zeros, at integer multiples of π and at odd multiples of π/2 respectively. When the zeros are paired symmetrically as ±a, the exponential convergence factors of the canonical product cancel, so none remain in the final formulas.[70]

These representations were first discovered by Leonhard Euler in the 1730s, with the sine product appearing in his 1748 work Introductio in analysin infinitorum.[71] Euler derived the formula by comparing the Taylor series expansion of sine with its factorization into linear terms, inspired by earlier products such as Wallis's.[71] The products for sine and cosine converge absolutely and uniformly on compact sets, because the sum of 1/n² converges; the quotient giving the tangent is valid away from its poles.[70]

The infinite product for the sine function is

$$\sin\theta = \theta \prod_{n=1}^{\infty} \left(1 - \frac{\theta^2}{n^2\pi^2}\right),$$

where the zeros occur at integer multiples of π.[72] For the cosine function, the product is

$$\cos\theta = \prod_{n=1}^{\infty} \left(1 - \frac{4\theta^2}{(2n-1)^2\pi^2}\right),$$

reflecting zeros at odd multiples of π/2.[73] The tangent function, being the ratio of sine to cosine, has the product form

$$\tan\theta = \theta \prod_{n=1}^{\infty} \frac{1 - \dfrac{\theta^2}{n^2\pi^2}}{1 - \dfrac{4\theta^2}{(2n-1)^2\pi^2}},$$

with poles at odd multiples of π/2.[73] These formulas highlight the global structure of the functions through their zero sets and are foundational in complex analysis.[74]

Viète's infinite product

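Both the cosine-product form of Viète's identity and its nested-radical form are easy to evaluate numerically. The Python sketch below is a minimal illustration; `viete_sinc`, `viete_pi`, and the default factor counts are assumptions made for this example rather than anything prescribed by the identity.

```python
import math


def viete_sinc(x: float, factors: int = 30) -> float:
    """Truncation of prod_{n>=1} cos(x / 2^n), which tends to sin(x) / x."""
    prod = 1.0
    for n in range(1, factors + 1):
        prod *= math.cos(x / 2 ** n)
    return prod


def viete_pi(factors: int = 25) -> float:
    """Estimate pi from the nested-radical product for 2/pi."""
    a = math.sqrt(2.0)          # first radical: sqrt(2)
    prod = a / 2.0
    for _ in range(factors - 1):
        a = math.sqrt(2.0 + a)  # next radical: sqrt(2 + previous)
        prod *= a / 2.0
    return 2.0 / prod


if __name__ == "__main__":
    print(viete_sinc(1.0), math.sin(1.0) / 1.0)
    print(viete_sinc(math.pi / 2), 2.0 / math.pi)
    print(viete_pi(), math.pi)
```
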
Viète's infinite product is a seminal trigonometric identity that expresses the sinc function as an infinite product of cosines, discovered by the French mathematician François Viète in 1593. This formula predates Leonhard Euler's later work on infinite products and represents the earliest known infinite product in mathematics, originally used by Viète to approximate the value of π to ten decimal places through computations involving polygons with up to 393,216 sides. Published in his work Supplementum geometriae, the identity highlights the iterative nature of trigonometric functions and their connection to geometric limits.[75]

The core identity is given by

$$\frac{\sin\theta}{\theta} = \prod_{n=1}^{\infty} \cos\frac{\theta}{2^n},$$

valid for all real $\theta \ne 0$ (at $\theta = 0$ both sides equal 1 in the limit); the product converges for every real $\theta$. This formula arises from repeated application of the double-angle formula for sine, $\sin\theta = 2\sin(\theta/2)\cos(\theta/2)$. Starting with $\sin\theta$, substituting iteratively yields $\sin\theta = 2^n \sin(\theta/2^n) \prod_{k=1}^{n} \cos(\theta/2^k)$. Dividing by $\theta$ and taking the limit as $n \to \infty$, since $2^n \sin(\theta/2^n) \to \theta$, produces the infinite product.[76]

Setting $\theta = \pi/2$ specializes the identity to a representation of $2/\pi$:

$$\frac{2}{\pi} = \prod_{n=1}^{\infty} \cos\frac{\pi}{2^{n+1}}.$$

This product converges linearly, each additional factor reducing the error by about a factor of four, and allows numerical approximation of $\pi$ by truncating terms, as Viète demonstrated with high-sided polygons to achieve his decimal precision. An equivalent nested radical form, also derived by Viète, emerges from iterating the half-angle formula for cosine, $\cos(\theta/2) = \sqrt{(1 + \cos\theta)/2}$, starting from $\cos(\pi/4) = \sqrt{2}/2$:

$$\frac{2}{\pi} = \frac{\sqrt{2}}{2} \cdot \frac{\sqrt{2 + \sqrt{2}}}{2} \cdot \frac{\sqrt{2 + \sqrt{2 + \sqrt{2}}}}{2} \cdots$$

This nested structure underscores the formula's geometric origins in doubling polygon sides to approximate the circle.[77]

Inverse Trigonometric Identities

Basic identities for inverse functions

The inverse trigonometric functions, such as arcsine (arcsin), arccosine (arccos), and arctangent (arctan), are defined to return principal values within specific ranges to ensure they are single-valued functions, despite the periodic nature of the trigonometric functions. These principal ranges are chosen so that each function is a bijection from its domain onto its range. For example, the range of arcsin is $[-\pi/2, \pi/2]$, ensuring that for any $x$ in $[-1, 1]$, there is a unique angle in this interval whose sine is $x$.[78]

The domains and principal ranges of the inverse trigonometric functions named above are as follows:

| Function | Domain | Principal Range |
|---|---|---|
| arcsin x | −1 ≤ x ≤ 1 | −π/2 ≤ y ≤ π/2 |
| arccos x | −1 ≤ x ≤ 1 | 0 ≤ y ≤ π |
| arctan x | all real x | −π/2 < y < π/2 |

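The effect of the principal ranges can be seen directly with Python's `math` module, whose `asin`, `acos`, and `atan` return principal values. This is a small illustrative sketch; `principal_values` and `arcsin_of_sin` are hypothetical helper names introduced here.

```python
import math


def principal_values(x: float) -> dict:
    """Principal values of arcsin, arccos, arctan at x (requires -1 <= x <= 1)."""
    return {
        "arcsin": math.asin(x),  # lies in [-pi/2, pi/2]
        "arccos": math.acos(x),  # lies in [0, pi]
        "arctan": math.atan(x),  # lies in (-pi/2, pi/2)
    }


def arcsin_of_sin(theta: float) -> float:
    """asin(sin(theta)): equals theta only when theta is in [-pi/2, pi/2]."""
    return math.asin(math.sin(theta))


if __name__ == "__main__":
    print(principal_values(-1.0))
    # 2.5 lies outside [-pi/2, pi/2], so the result is pi - 2.5, not 2.5.
    print(arcsin_of_sin(2.5), math.pi - 2.5)
```
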
Composition of trigonometric and inverse functions

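Compositions such as $\sin(\arccos x) = \sqrt{1 - x^2}$, $\cos(\arcsin x) = \sqrt{1 - x^2}$, $\tan(\arcsin x) = x/\sqrt{1 - x^2}$, and $\arcsin x + \arccos x = \pi/2$ can be spot-checked numerically. The Python sketch below assumes $-1 < x < 1$ so that the tangent form is defined; `composition_gaps` is a hypothetical helper name used only for this illustration.

```python
import math


def composition_gaps(x: float) -> tuple:
    """Absolute differences between each composition and its algebraic form.

    Assumes -1 < x < 1 so that x / sqrt(1 - x^2) is defined.
    """
    root = math.sqrt(1.0 - x * x)
    return (
        abs(math.sin(math.acos(x)) - root),
        abs(math.cos(math.asin(x)) - root),
        abs(math.tan(math.asin(x)) - x / root),
        abs(math.asin(x) + math.acos(x) - math.pi / 2),
    )


if __name__ == "__main__":
    for x in (-0.9, -0.3, 0.0, 0.5, 0.99):
        # Every entry should be at the level of rounding error.
        print(x, composition_gaps(x))
```
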
The composition of trigonometric functions with inverse trigonometric functions of different types often yields simplified algebraic expressions, particularly when leveraging the principal ranges of the inverse functions. For instance, $\sin(\arccos x) = \sqrt{1 - x^2}$ holds for $-1 \le x \le 1$, where the positive square root is taken due to the range of $\arccos$, which lies in $[0, \pi]$, ensuring the sine is non-negative in that interval.[80] Similarly, $\cos(\arcsin x) = \sqrt{1 - x^2}$ for $-1 \le x \le 1$, as the range of $\arcsin$ is $[-\pi/2, \pi/2]$, where cosine is non-negative.[81] These identities arise from the Pythagorean theorem applied to the right triangle definitions implicit in the inverse functions.

Further compositions extend to tangent, such as $\tan(\arcsin x) = x/\sqrt{1 - x^2}$ for $-1 < x < 1$. This follows by setting $\theta = \arcsin x$, so $\sin\theta = x$ and $\cos\theta = \sqrt{1 - x^2}$, yielding $\tan\theta = x/\sqrt{1 - x^2}$.[82] The domain excludes the endpoints to avoid division by zero. A key identity is $\arcsin x + \arccos x = \pi/2$ for $-1 \le x \le 1$, following from $\cos(\pi/2 - \arcsin x) = \sin(\arcsin x) = x$ together with the fact that $\pi/2 - \arcsin x$ lies in $[0, \pi]$, the range of $\arccos$.

In general, for a trigonometric function $f$ and its inverse $f^{-1}$, the composition $f(f^{-1}(x)) = x$ holds within the domain of $f^{-1}$, such as $[-1, 1]$ for the inverses of sine and cosine. However, branch caveats apply due to the multi-valued nature of inverting trigonometric functions; the principal branch ensures uniqueness, but compositions with different functions (e.g., sine and arccosine) may introduce sign choices or restrictions based on the range.[83] For instance, the square root in the earlier identities reflects the positive branch selection.

These identities find applications in solving transcendental equations, such as those arising in optimization or physics, where a substitution such as $x = \sin\theta$ transforms trigonometric equations into algebraic ones. For example, an equation such as $\sin\theta = a$ with $|a| \le 1$ has the principal solution $\theta = \arcsin a$, from which explicit solutions follow.[82]

Geometric and Constant Identities

Identities without variables