Cloud computing

Cloud computing is "a paradigm for enabling network access to a scalable and elastic pool of shareable physical or virtual resources with self-service provisioning and administration on-demand," according to ISO.[1] It is commonly referred to as "the cloud".[2]

Characteristics

In 2011, the National Institute of Standards and Technology (NIST) identified five "essential characteristics" for cloud systems.[3] Below are the exact definitions according to NIST:[3]

- On-demand self-service: "A consumer can unilaterally provision computing capabilities, such as server time and network storage, as needed automatically without requiring human interaction with each service provider."

- Broad network access: "Capabilities are available over the network and accessed through standard mechanisms that promote use by heterogeneous thin or thick client platforms (e.g., mobile phones, tablets, laptops, and workstations)."

- Resource pooling: "The provider's computing resources are pooled to serve multiple consumers using a multi-tenant model, with different physical and virtual resources dynamically assigned and reassigned according to consumer demand."

- Rapid elasticity: "Capabilities can be elastically provisioned and released, in some cases automatically, to scale rapidly outward and inward commensurate with demand. To the consumer, the capabilities available for provisioning often appear unlimited and can be appropriated in any quantity at any time."

- Measured service: "Cloud systems automatically control and optimize resource use by leveraging a metering capability at some level of abstraction appropriate to the type of service (e.g., storage, processing, bandwidth, and active user accounts). Resource usage can be monitored, controlled, and reported, providing transparency for both the provider and consumer of the utilized service."
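The "measured service" characteristic can be pictured with a toy usage meter. The following is a minimal sketch, not any provider's actual metering system: the class name `UsageMeter`, the resource label `storage_gb_hours`, and the consumer names are all hypothetical and chosen only for illustration.

```python
from collections import defaultdict


class UsageMeter:
    """Toy meter: accumulates usage per (consumer, resource) pair."""

    def __init__(self):
        # Maps (consumer, resource) -> total amount consumed so far.
        self._usage = defaultdict(float)

    def record(self, consumer: str, resource: str, amount: float) -> None:
        """Add one metered usage event for a consumer."""
        if amount < 0:
            raise ValueError("usage amounts must be non-negative")
        self._usage[(consumer, resource)] += amount

    def report(self, consumer: str) -> dict:
        """Return the totals for one consumer, keyed by resource name."""
        return {
            resource: total
            for (owner, resource), total in self._usage.items()
            if owner == consumer
        }


meter = UsageMeter()
meter.record("team-a", "storage_gb_hours", 10)
meter.record("team-a", "storage_gb_hours", 5)
meter.record("team-b", "storage_gb_hours", 7)
print(meter.report("team-a"))
```

Because both the provider and the consumer could read the same report, a structure like this illustrates the transparency that the NIST definition describes.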

By 2023, the International Organization for Standardization (ISO) had expanded and refined the list.[4]

History

The history of cloud computing extends to the 1960s, with the initial concepts of time-sharing becoming popularized via remote job entry (RJE). The "data center" model, where users submitted jobs to operators to run on mainframes, was predominantly used during this era. This was a time of exploration and experimentation with ways to make large-scale computing power available to more users through time-sharing, optimizing the infrastructure, platform, and applications, and increasing efficiency for end users.[5]

The "cloud" metaphor for virtualized services dates to 1994, when it was used by General Magic for the universe of "places" that mobile agents in the Telescript environment could "go". The metaphor is credited to David Hoffman, a General Magic communications specialist, based on its long-standing use in networking and telecom.[6] The expression cloud computing became more widely known in 1996 when Compaq Computer Corporation drew up a business plan for future computing and the Internet. The company's ambition was to supercharge sales with "cloud computing-enabled applications". The business plan foresaw that online consumer file storage would likely be commercially successful. As a result, Compaq decided to sell server hardware to internet service providers.[7]

In the 2000s, the application of cloud computing began to take shape with the establishment of Amazon Web Services (AWS) in 2002, which allowed developers to build applications independently. In 2006, Amazon Simple Storage Service, known as Amazon S3, and the Amazon Elastic Compute Cloud (EC2) were released. In 2008, NASA developed the first open-source software for deploying private and hybrid clouds.[8][9]

The following decade saw the launch of various cloud services. In 2010, Microsoft launched Microsoft Azure, and Rackspace Hosting and NASA initiated an open-source cloud-software project, OpenStack. IBM introduced the IBM SmartCloud framework in 2011, and Oracle announced the Oracle Cloud in 2012. In December 2019, Amazon launched AWS Outposts, a service that extends AWS infrastructure, services, APIs, and tools to customer data centers, co-location spaces, or on-premises facilities.[10][11]

Value proposition

Cloud computing can enable shorter time to market by providing pre-configured tools, scalable resources, and managed services, allowing users to focus on their core business value instead of maintaining infrastructure. Cloud platforms can enable organizations and individuals to reduce upfront capital expenditures on physical infrastructure by shifting to an operational expenditure model, where costs scale with usage. Cloud platforms also offer managed services and tools, such as artificial intelligence, data analytics, and machine learning, which might otherwise require significant in-house expertise and infrastructure investment.[12][13][14]
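The shift from upfront capital expenditure to usage-based operating expenditure can be illustrated with a simple break-even calculation. This is a rough sketch under stated assumptions: the function names are hypothetical, all figures are invented for illustration, and real comparisons would also account for staffing, depreciation, discounts, and variable usage.

```python
from typing import Optional


def cumulative_on_prem_cost(months: int, upfront: int, monthly_running: int) -> int:
    """Upfront hardware purchase plus a fixed monthly running cost."""
    return upfront + monthly_running * months


def cumulative_cloud_cost(months: int, monthly_usage_cost: int) -> int:
    """Pay-as-you-go: no upfront cost, only a monthly usage bill."""
    return monthly_usage_cost * months


def break_even_month(upfront: int, monthly_running: int,
                     monthly_usage_cost: int, horizon: int = 120) -> Optional[int]:
    """First month in which cumulative cloud spend reaches on-premises spend.

    Returns None if the cloud stays cheaper for the whole horizon.
    """
    for month in range(1, horizon + 1):
        cloud = cumulative_cloud_cost(month, monthly_usage_cost)
        on_prem = cumulative_on_prem_cost(month, upfront, monthly_running)
        if cloud >= on_prem:
            return month
    return None


# Invented figures: 120,000 upfront and 2,000 per month on-premises,
# versus 7,000 per month in the cloud.
print(break_even_month(120_000, 2_000, 7_000))
```

With these invented figures the cloud option is cheaper for roughly the first two years; a workload with a short or uncertain lifetime would never reach the break-even point.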

While cloud computing can offer cost advantages through effective resource optimization, organizations often face challenges such as unused resources, inefficient configurations, and hidden costs without proper oversight and governance. Many cloud platforms provide cost management tools, such as AWS Cost Explorer and Azure Cost Management, and frameworks like FinOps have emerged to standardize financial operations in the cloud. Cloud computing also facilitates collaboration, remote work, and global service delivery by enabling secure access to data and applications from any location with an internet connection.[12][13][14]

Cloud providers offer various redundancy options for core services, such as managed storage and managed databases, though redundancy configurations often vary by service tier. Advanced redundancy strategies, such as cross-region replication or failover systems, typically require explicit configuration and may incur additional costs or licensing fees.[12][13][14]

Cloud environments operate under a shared responsibility model, where providers are typically responsible for infrastructure security, physical hardware, and software updates, while customers are accountable for data encryption, identity and access management (IAM), and application-level security. These responsibilities vary depending on the cloud service model—Infrastructure as a Service (IaaS), Platform as a Service (PaaS), or Software as a Service (SaaS)—with customers typically having more control and responsibility in IaaS environments and progressively less in PaaS and SaaS models, often trading control for convenience and managed services.[12][13][14]

Adoption and suitability

The decision to adopt cloud computing or maintain on-premises infrastructure depends on factors such as scalability, cost structure, latency requirements, regulatory constraints, and infrastructure customization.[15][16][17][18]

Organizations with variable or unpredictable workloads, limited capital for upfront investments, or a focus on rapid scalability benefit from cloud adoption. Startups, SaaS companies, and e-commerce platforms often prefer the pay-as-you-go operational expenditure (OpEx) model of cloud infrastructure. Additionally, companies prioritizing global accessibility, remote workforce enablement, disaster recovery, and leveraging advanced services such as AI/ML and analytics are well-suited for the cloud. In recent years, some cloud providers have started offering specialized services for high-performance computing and low-latency applications, addressing some use cases previously exclusive to on-premises setups.[15][16][17][18]

On the other hand, organizations with strict regulatory requirements, highly predictable workloads, or reliance on deeply integrated legacy systems may find cloud infrastructure less suitable. Businesses in industries like defense, government, or those handling highly sensitive data often favor on-premises setups for greater control and data sovereignty. Additionally, companies with ultra-low latency requirements, such as high-frequency trading (HFT) firms, rely on custom hardware (e.g., FPGAs) and physical proximity to exchanges, which most cloud providers cannot fully replicate despite recent advancements. Similarly, tech giants like Google, Meta, and Amazon build their own data centers due to economies of scale, predictable workloads, and the ability to customize hardware and network infrastructure for optimal efficiency. However, these companies also use cloud services selectively for certain workloads and applications where it aligns with their operational needs.[15][16][17][18]

In practice, many organizations are increasingly adopting hybrid cloud architectures, combining on-premises infrastructure with cloud services. This approach allows businesses to balance scalability, cost-effectiveness, and control, offering the benefits of both deployment models while mitigating their respective limitations.[15][16][17][18]

Challenges and limitations

One of the main challenges of cloud computing, in comparison to more traditional on-premises computing, is data security and privacy. Cloud users entrust their sensitive data to third-party providers, who may not have adequate measures to protect it from unauthorized access, breaches, or leaks. Cloud users also face compliance risks if they have to adhere to certain regulations or standards regarding data protection, such as GDPR or HIPAA.[19]

Another challenge of cloud computing is reduced visibility and control. Cloud users may not have full insight into how their cloud resources are managed, configured, or optimized by their providers. They may also have limited ability to customize or modify their cloud services according to their specific needs or preferences.[19] Complete understanding of all technology may be impossible, especially given the scale, complexity, and deliberate opacity of contemporary systems; however, there is a need for understanding complex technologies and their interconnections to have power and agency within them.[20] The metaphor of the cloud can be seen as problematic as cloud computing retains the aura of something noumenal and numinous; it is something experienced without precisely understanding what it is or how it works.[21]

Additionally, cloud migration is a significant challenge. This process involves transferring data, applications, or workloads from one cloud environment to another, or from on-premises infrastructure to the cloud. Cloud migration can be complicated, time-consuming, and expensive, particularly when there are compatibility issues between different cloud platforms or architectures. If not carefully planned and executed, cloud migration can lead to downtime, reduced performance, or even data loss.[22]

Cloud migration challenges

According to the 2024 State of the Cloud Report by Flexera, approximately 50% of respondents identified the following top challenges when migrating workloads to public clouds:[23]

- "Understanding application dependencies"

- "Comparing on-premise and cloud costs"

- "Assessing technical feasibility."

Implementation challenges

Applications hosted in the cloud are susceptible to the fallacies of distributed computing, a series of misconceptions that can lead to significant issues in software development and deployment.[24]
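One of these fallacies is the assumption that the network is reliable. A common way to avoid relying on it is to retry failed calls with exponential backoff. The sketch below is illustrative only: `call_with_retries` and its parameters are hypothetical names, and production code would usually add jitter, timeouts, and idempotency checks.

```python
import time
from typing import Callable, TypeVar

T = TypeVar("T")


def call_with_retries(operation: Callable[[], T],
                      max_attempts: int = 4,
                      base_delay: float = 0.1,
                      sleep: Callable[[float], None] = time.sleep) -> T:
    """Run `operation`, retrying on ConnectionError with doubling delays.

    The `sleep` parameter is injectable so the behaviour can be tested
    without actually waiting.
    """
    if max_attempts < 1:
        raise ValueError("max_attempts must be at least 1")
    for attempt in range(1, max_attempts + 1):
        try:
            return operation()
        except ConnectionError:
            if attempt == max_attempts:
                raise  # Out of attempts: surface the failure to the caller.
            # Delays grow as base_delay * 1, 2, 4, ...
            sleep(base_delay * 2 ** (attempt - 1))
    raise AssertionError("unreachable")


# Usage: a simulated remote call that fails twice before succeeding.
failures_left = [2]

def flaky_remote_call() -> str:
    if failures_left[0] > 0:
        failures_left[0] -= 1
        raise ConnectionError("simulated network failure")
    return "ok"

print(call_with_retries(flaky_remote_call, sleep=lambda seconds: None))
```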

Cloud cost overruns

In a report by Gartner, a survey of 200 IT leaders revealed that 69% experienced budget overruns in their organizations' cloud expenditures during 2023. Conversely, the 31% of IT leaders whose organizations stayed within budget attributed their success to accurate forecasting and budgeting, proactive monitoring of spending, and effective optimization.[25]

The 2024 Flexera State of Cloud Report identifies the top cloud challenges as managing cloud spend, followed by security concerns and lack of expertise. Public cloud expenditures exceeded budgeted amounts by an average of 15%. The report also reveals that cost savings is the top cloud initiative for 60% of respondents. Furthermore, 65% measure cloud progress through cost savings, while 42% prioritize shorter time-to-market, indicating that cloud's promise of accelerated deployment is often overshadowed by cost concerns.[23]

Service Level Agreements

Cloud providers' Service Level Agreements (SLAs) typically do not cover all forms of service interruption. Common exclusions include planned maintenance; downtime resulting from external factors such as network issues; human errors such as misconfigurations; natural disasters; force majeure events; and security breaches. Customers usually bear the responsibility of monitoring SLA compliance and must file claims for any unmet SLAs within a designated timeframe. Customers should be aware of how deviations from SLAs are calculated, as these parameters may vary by service. These requirements can place a considerable burden on customers. Additionally, SLA percentages and conditions can differ across various services within the same provider, with some services lacking any SLA altogether. When a service interruption is caused by a hardware failure on the provider's side, the provider typically does not offer monetary compensation. Instead, eligible users may receive credits as outlined in the corresponding SLA.[26][27][28][29]
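To make an SLA percentage concrete, it helps to convert it into a downtime budget. The sketch below assumes a simple calendar-based calculation over a 30-day period; the function names are hypothetical, and actual SLAs define their own measurement windows and exclusions, so the numbers are only indicative.

```python
def allowed_downtime_minutes(uptime_percent: float, period_days: int = 30) -> float:
    """Minutes of downtime that still satisfy the stated uptime percentage."""
    total_minutes = period_days * 24 * 60
    return total_minutes * (100 - uptime_percent) / 100


def measured_uptime_percent(downtime_minutes: float, period_days: int = 30) -> float:
    """Uptime percentage actually achieved, given observed downtime."""
    total_minutes = period_days * 24 * 60
    return 100 * (total_minutes - downtime_minutes) / total_minutes


# A 99.9% commitment over 30 days leaves about 43.2 minutes of downtime.
print(round(allowed_downtime_minutes(99.9), 1))

# One hour of qualifying downtime would fall short of that commitment.
print(measured_uptime_percent(60) < 99.9)
```

A customer monitoring compliance would compare the measured figure against the committed one, after removing whatever downtime the specific SLA excludes.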

Leaky abstractions

Cloud computing abstractions aim to simplify resource management, but leaky abstractions can expose underlying complexities. These variations in abstraction quality depend on the cloud vendor, service and architecture. Mitigating leaky abstractions requires users to understand the implementation details and limitations of the cloud services they utilize.[30][31][32]

Service lock-in within the same vendor

Service lock-in within the same vendor occurs when a customer becomes dependent on specific services within a cloud vendor, making it challenging to switch to alternative services within the same vendor when their needs change.[33][34]

Security and privacy

Cloud computing poses privacy concerns because the service provider can access the data that is in the cloud at any time. It could accidentally or deliberately alter or delete information.[35] Many cloud providers can share information with third parties if necessary for purposes of law and order without a warrant. That is permitted in their privacy policies, which users must agree to before they start using cloud services. Solutions to privacy include policy and legislation as well as end-users' choices for how data is stored.[35] Users can encrypt data that is processed or stored within the cloud to prevent unauthorized access.[35] Identity management systems can also provide practical solutions to privacy concerns in cloud computing. These systems distinguish between authorized and unauthorized users and determine the amount of data that is accessible to each entity.[36] The systems work by creating and describing identities, recording activities, and getting rid of unused identities.

According to the Cloud Security Alliance, the top three threats in the cloud are Insecure Interfaces and APIs, Data Loss & Leakage, and Hardware Failure, which accounted for 29%, 25% and 10% of all cloud security outages respectively. Together, these form shared technology vulnerabilities. In a cloud provider platform being shared by different users, there may be a possibility that information belonging to different customers resides on the same data server. Additionally, Eugene Schultz, chief technology officer at Emagined Security, said that hackers are spending substantial time and effort looking for ways to penetrate the cloud. "There are some real Achilles' heels in the cloud infrastructure that are making big holes for the bad guys to get into". Because data from hundreds or thousands of companies can be stored on large cloud servers, hackers can theoretically gain control of huge stores of information through a single attack, a process he called "hyperjacking". Examples include the Dropbox security breach and the 2014 iCloud leak.[37] Dropbox was breached in October 2014, when hackers stole the passwords of over seven million of its users in an effort to obtain payment in Bitcoin (BTC). With these passwords, attackers can read private data and have it indexed by search engines, making the information public.[37]

There is also the problem of legal ownership of the data: if a user stores some data in the cloud, can the cloud provider profit from it? Many Terms of Service agreements are silent on the question of ownership.[38] Physical control of the computer equipment (private cloud) is more secure than having the equipment off-site and under someone else's control (public cloud). This delivers great incentive to public cloud computing service providers to prioritize building and maintaining strong management of secure services.[39] Some small businesses that do not have expertise in IT security could find that it is more secure for them to use a public cloud. There is the risk that end users do not understand the issues involved when signing on to a cloud service (people sometimes do not read the many pages of the terms of service agreement, and just click "Accept"). This is important now that cloud computing is common and required for some services to work, for example for an intelligent personal assistant (Apple's Siri or Google Assistant). Fundamentally, private cloud is seen as more secure, with higher levels of control for the owner, whereas public cloud is seen as more flexible and as requiring less investment of time and money from the user.[40]

The attacks that can be made on cloud computing systems include man-in-the-middle attacks, phishing attacks, authentication attacks, and malware attacks. One of the largest threats is considered to be malware attacks, such as Trojan horses. Research conducted in 2022 found that the Trojan horse injection method is a serious problem with harmful impacts on cloud computing systems.[41]

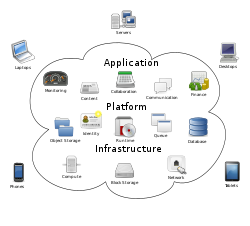

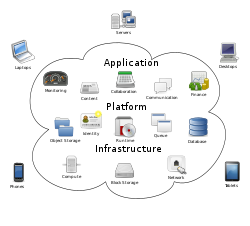

Service models

The National Institute of Standards and Technology recognized three cloud service models in 2011: Infrastructure as a Service (IaaS), Platform as a Service (PaaS), and Software as a Service (SaaS).[3] The International Organization for Standardization (ISO) later identified additional models in 2023, including "Network as a Service", "Communications as a Service", "Compute as a Service", and "Data Storage as a Service".[4]

Infrastructure as a service (IaaS)

Infrastructure as a service (IaaS) refers to online services that provide high-level APIs used to abstract various low-level details of underlying network infrastructure like physical computing resources, location, data partitioning, scaling, security, backup, etc. A hypervisor runs the virtual machines as guests. Pools of hypervisors within the cloud operational system can support large numbers of virtual machines and the ability to scale services up and down according to customers' varying requirements. Linux containers run in isolated partitions of a single Linux kernel running directly on the physical hardware. Linux cgroups and namespaces are the underlying Linux kernel technologies used to isolate, secure and manage the containers. The use of containers offers higher performance than virtualization because there is no hypervisor overhead. IaaS clouds often offer additional resources such as a virtual-machine disk-image library, raw block storage, file or object storage, firewalls, load balancers, IP addresses, virtual local area networks (VLANs), and software bundles.[42]

The NIST's definition of cloud computing describes IaaS as "where the consumer is able to deploy and run arbitrary software, which can include operating systems and applications. The consumer does not manage or control the underlying cloud infrastructure but has control over operating systems, storage, and deployed applications; and possibly limited control of select networking components (e.g., host firewalls)."[3]

IaaS-cloud providers supply these resources on-demand from their large pools of equipment installed in data centers. For wide-area connectivity, customers can use either the Internet or carrier clouds (dedicated virtual private networks). To deploy their applications, cloud users install operating-system images and their application software on the cloud infrastructure. In this model, the cloud user patches and maintains the operating systems and the application software. Cloud providers typically bill IaaS services on a utility computing basis: cost reflects the number of resources allocated and consumed.[43]

Platform as a service (PaaS)

The NIST's definition of cloud computing defines Platform as a Service as:[3]

The capability provided to the consumer is to deploy onto the cloud infrastructure consumer-created or acquired applications created using programming languages, libraries, services, and tools supported by the provider. The consumer does not manage or control the underlying cloud infrastructure including network, servers, operating systems, or storage, but has control over the deployed applications and possibly configuration settings for the application-hosting environment.

PaaS vendors offer a development environment to application developers. The provider typically develops a toolkit and standards for development, along with channels for distribution and payment. In the PaaS model, cloud providers deliver a computing platform, typically including an operating system, programming-language execution environment, database, and web server. Application developers develop and run their software on a cloud platform instead of directly buying and managing the underlying hardware and software layers. With some PaaS offerings, the underlying compute and storage resources scale automatically to match application demand so that the cloud user does not have to allocate resources manually.[44]
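Automatic scaling of this kind is often driven by a target-utilization rule. The following is a minimal sketch of one such proportional rule, not the algorithm of any particular platform: the function name, the percentage-based inputs, and the default limits are assumptions made for illustration.

```python
import math


def desired_instances(current_instances: int,
                      current_utilization_pct: int,
                      target_utilization_pct: int,
                      min_instances: int = 1,
                      max_instances: int = 10) -> int:
    """Scale the instance count so utilization moves toward the target.

    Utilization values are whole percentages (e.g. 90 for 90%).
    The result is clamped to the configured minimum and maximum.
    """
    if target_utilization_pct <= 0:
        raise ValueError("target utilization must be positive")
    raw = math.ceil(
        current_instances * current_utilization_pct / target_utilization_pct
    )
    return max(min_instances, min(max_instances, raw))


# Four instances running at 90% with a 60% target: scale out to six.
print(desired_instances(4, 90, 60))

# The same four instances at 30%: scale in to two.
print(desired_instances(4, 30, 60))
```

The clamp reflects a practical design choice: an upper bound limits cost if demand spikes unexpectedly, and a lower bound keeps the application available when demand is near zero.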

Some integration and data management providers also use specialized applications of PaaS as delivery models for data. Examples include iPaaS (Integration Platform as a Service) and dPaaS (Data Platform as a Service). iPaaS enables customers to develop, execute and govern integration flows.[45] Under the iPaaS integration model, customers drive the development and deployment of integrations without installing or managing any hardware or middleware.[46] dPaaS delivers integration—and data-management—products as a fully managed service.[47] Under the dPaaS model, the PaaS provider, not the customer, manages the development and execution of programs by building data applications for the customer. dPaaS users access data through data-visualization tools.[48]

Software as a service (SaaS)

The NIST's definition of cloud computing defines Software as a Service as:[3]

The capability provided to the consumer is to use the provider's applications running on a cloud infrastructure. The applications are accessible from various client devices through either a thin client interface, such as a web browser (e.g., web-based email), or a program interface. The consumer does not manage or control the underlying cloud infrastructure including network, servers, operating systems, storage, or even individual application capabilities, with the possible exception of limited user-specific application configuration settings.

In the software as a service (SaaS) model, users gain access to application software and databases. Cloud providers manage the infrastructure and platforms that run the applications. SaaS is sometimes referred to as "on-demand software" and is usually priced on a pay-per-use basis or using a subscription fee.[49] In the SaaS model, cloud providers install and operate application software in the cloud and cloud users access the software from cloud clients. Cloud users do not manage the cloud infrastructure and platform where the application runs. This eliminates the need to install and run the application on the cloud user's own computers, which simplifies maintenance and support. Cloud applications differ from other applications in their scalability—which can be achieved by cloning tasks onto multiple virtual machines at run-time to meet changing work demand.[50] Load balancers distribute the work over the set of virtual machines. This process is transparent to the cloud user, who sees only a single access-point. To accommodate a large number of cloud users, cloud applications can be multitenant, meaning that any machine may serve more than one cloud-user organization.
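The way a load balancer spreads requests over a set of virtual machines behind a single access point can be sketched with a round-robin policy. This is only one of several possible policies, and the class name and the `vm-1` style backend labels are hypothetical.

```python
from itertools import cycle
from typing import Iterable


class RoundRobinBalancer:
    """Hands out backends in a fixed rotating order."""

    def __init__(self, backends: Iterable[str]):
        backend_list = list(backends)
        if not backend_list:
            raise ValueError("at least one backend is required")
        # cycle() repeats the list endlessly: vm-1, vm-2, vm-3, vm-1, ...
        self._rotation = cycle(backend_list)

    def route(self) -> str:
        """Return the backend that should handle the next request."""
        return next(self._rotation)


balancer = RoundRobinBalancer(["vm-1", "vm-2", "vm-3"])
for request_number in range(5):
    print(request_number, balancer.route())
```

Callers only interact with `route()`, which mirrors how the cloud user sees a single access point while the work is distributed behind it.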

The pricing model for SaaS applications is typically a monthly or yearly flat fee per user,[51] so prices become scalable and adjustable if users are added or removed at any point. It may also be free.[52] Proponents claim that SaaS gives a business the potential to reduce IT operational costs by outsourcing hardware and software maintenance and support to the cloud provider. This enables the business to reallocate IT operations costs away from hardware/software spending and from personnel expenses, towards meeting other goals. In addition, with applications hosted centrally, updates can be released without the need for users to install new software. One drawback of SaaS comes with storing the users' data on the cloud provider's server. As a result, there could be unauthorized access to the data.[53] Examples of applications offered as SaaS are games and productivity software like Google Docs and Office Online. SaaS applications may be integrated with cloud storage or file hosting services, which is the case with Google Docs being integrated with Google Drive, and Office Online being integrated with OneDrive.[54]

Serverless computing

Serverless computing allows customers to use various cloud capabilities without the need to provision, deploy, or manage hardware or software resources, apart from providing their application code or data. ISO/IEC 22123-2:2023 classifies serverless alongside Infrastructure as a Service (IaaS), Platform as a Service (PaaS), and Software as a Service (SaaS) under the broader category of cloud service categories. Notably, while ISO refers to these classifications as cloud service categories, the National Institute of Standards and Technology (NIST) refers to them as service models.[3][4]

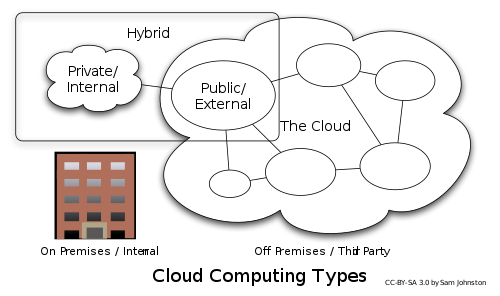

Deployment models

"A cloud deployment model represents the way in which cloud computing can be organized based on the control and sharing of physical or virtual resources."[4] Cloud deployment models define the fundamental patterns of interaction between cloud customers and cloud providers. They do not detail implementation specifics or the configuration of resources.[4]

Private

Private cloud is cloud infrastructure operated solely for a single organization, whether managed internally or by a third party, and hosted either internally or externally.[3] Undertaking a private cloud project requires significant engagement to virtualize the business environment, and requires the organization to reevaluate decisions about existing resources. It can improve business, but every step in the project raises security issues that must be addressed to prevent serious vulnerabilities. Self-run data centers[55] are generally capital intensive. They have a significant physical footprint, requiring allocations of space, hardware, and environmental controls. These assets have to be refreshed periodically, resulting in additional capital expenditures. They have attracted criticism because users "still have to buy, build, and manage them" and thus do not benefit from less hands-on management,[56] essentially "[lacking] the economic model that makes cloud computing such an intriguing concept".[57][58]

Public

Cloud services are considered "public" when they are delivered over the public Internet, and they may be offered as a paid subscription, or free of charge.[59] Architecturally, there are few differences between public- and private-cloud services, but security concerns increase substantially when services (applications, storage, and other resources) are shared by multiple customers. Most public-cloud providers offer direct-connection services that allow customers to securely link their legacy data centers to their cloud-resident applications.[60][61]

Several factors, such as the functionality of the solutions, cost, integration and organizational aspects, and safety and security, influence whether enterprises and organizations choose a public cloud or an on-premises solution.[62]

Hybrid

Hybrid cloud is a composition of a public cloud and a private environment, such as a private cloud or on-premises resources,[63][64] that remain distinct entities but are bound together, offering the benefits of multiple deployment models. Hybrid cloud can also mean the ability to connect colocation, managed or dedicated services with cloud resources.[3] Gartner defines a hybrid cloud service as a cloud computing service that is composed of some combination of private, public and community cloud services, from different service providers.[65] A hybrid cloud service crosses isolation and provider boundaries so that it cannot be simply put in one category of private, public, or community cloud service. It allows one to extend either the capacity or the capability of a cloud service, by aggregation, integration or customization with another cloud service.

Varied use cases for hybrid cloud composition exist. For example, an organization may store sensitive client data in house on a private cloud application, but interconnect that application to a business intelligence application provided on a public cloud as a software service.[66] This example of hybrid cloud extends the capabilities of the enterprise to deliver a specific business service through the addition of externally available public cloud services. Hybrid cloud adoption depends on a number of factors such as data security and compliance requirements, level of control needed over data, and the applications an organization uses.[67]

Another example of hybrid cloud is one where IT organizations use public cloud computing resources to meet temporary capacity needs that can not be met by the private cloud.[68] This capability enables hybrid clouds to employ cloud bursting for scaling across clouds.[3] Cloud bursting is an application deployment model in which an application runs in a private cloud or data center and "bursts" to a public cloud when the demand for computing capacity increases. A primary advantage of cloud bursting and a hybrid cloud model is that an organization pays for extra compute resources only when they are needed.[69] Cloud bursting enables data centers to create an in-house IT infrastructure that supports average workloads, and use cloud resources from public or private clouds, during spikes in processing demands.[70]
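The placement decision behind cloud bursting can be sketched in a few lines. This is a simplified illustration assuming demand and capacity are measured in the same abstract units; the function name and the returned keys are hypothetical, and real systems must also handle data locality, networking, and security between environments.

```python
def place_workload(demand_units: int, private_capacity: int) -> dict:
    """Fill private capacity first and send only the overflow to a public cloud."""
    if demand_units < 0 or private_capacity < 0:
        raise ValueError("demand and capacity must be non-negative")
    on_private = min(demand_units, private_capacity)
    on_public = demand_units - on_private  # Zero unless demand exceeds capacity.
    return {"private": on_private, "public": on_public}


# Average load fits entirely in the private environment.
print(place_workload(80, 100))

# A spike of 130 units "bursts" 30 units to the public cloud.
print(place_workload(130, 100))
```

Since the public share is zero under normal load, the organization pays for extra compute only during spikes, which is the cost advantage described above.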

Community

Community cloud shares infrastructure between several organizations from a specific community with common concerns (security, compliance, jurisdiction, etc.). It may be managed internally or by a third party and hosted internally or externally. The costs are distributed among fewer users than in a public cloud (but more than in a private cloud), so only a portion of the potential cost savings of cloud computing is achieved.[3]

Multi cloud

According to ISO/IEC 22123-1: "multi-cloud is a cloud deployment model in which a customer uses public cloud services provided by two or more cloud service providers".[71] Poly cloud refers to the use of multiple public clouds for the purpose of leveraging specific services that each provider offers. It differs from multi-cloud in that it is not designed to increase flexibility or mitigate against failures but is rather used to allow an organization to achieve more than could be done with a single provider.[72]

Market

According to International Data Corporation (IDC), global spending on cloud computing services has reached $706 billion and is expected to reach $1.3 trillion by 2025.[73] Gartner estimated that global public cloud services end-user spending would reach $600 billion by 2023.[74] According to a McKinsey & Company report, cloud cost-optimization levers and value-oriented business use cases put more than $1 trillion in run-rate EBITDA across Fortune 500 companies up for grabs in 2030.[75] In 2022, more than $1.3 trillion in enterprise IT spending was at stake from the shift to the cloud, growing to almost $1.8 trillion in 2025, according to Gartner.[76]

The European Commission's 2012 Communication identified several issues which were impeding the development of the cloud computing market:[77]: Section 3

- fragmentation of the digital single market across the EU

- concerns about contracts including reservations about data access and ownership, data portability, and change control

- variations in standards applicable to cloud computing

The Communication set out a series of "digital agenda actions" which the Commission proposed to undertake in order to support the development of a fair and effective market for cloud computing services.[77]: Pages 6–14

Cloud Computing Vendors

As of 2025, the three largest cloud computing providers by market share, commonly referred to as hyperscalers, are Amazon Web Services (AWS), Microsoft Azure, and Google Cloud.[78][79] These companies dominate the global cloud market due to their extensive infrastructure, broad service offerings, and scalability.

In recent years, organizations have increasingly adopted alternative cloud providers, which offer specialized services that distinguish them from hyperscalers. These providers may offer advantages such as lower costs, improved cost transparency and predictability, enhanced data sovereignty (particularly within regions such as the European Union to comply with regulations like the General Data Protection Regulation (GDPR)), stronger alignment with local regulatory requirements, or industry-specific services.[80]

Alternative cloud providers are often part of multi-cloud strategies, where organizations use multiple cloud services—both from hyperscalers and specialized providers—to optimize performance, compliance, and cost efficiency. However, they do not necessarily serve as direct replacements for hyperscalers, as their offerings are typically more specialized.[80]

Similar concepts

The goal of cloud computing is to allow users to benefit from all of these technologies without needing deep knowledge of, or expertise with, each one of them. The cloud aims to cut costs and help users focus on their core business instead of being impeded by IT obstacles.[81] The main enabling technology for cloud computing is virtualization. Virtualization software separates a physical computing device into one or more "virtual" devices, each of which can be easily used and managed to perform computing tasks. With operating system–level virtualization essentially creating a scalable system of multiple independent computing devices, idle computing resources can be allocated and used more efficiently. Virtualization provides the agility required to speed up IT operations and reduces cost by increasing infrastructure utilization. Autonomic computing automates the process through which the user can provision resources on demand. By minimizing user involvement, automation speeds up the process, reduces labor costs, and reduces the possibility of human error.[81]

Cloud computing uses concepts from utility computing to provide metrics for the services used. Cloud computing attempts to address QoS (quality of service) and reliability problems of other grid computing models.[81]
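The metering idea borrowed from utility computing can be illustrated with a minimal sketch: raw usage records are aggregated per tenant and resource, then priced per unit. The resource names, the unit prices in `PRICES`, and the functions `meter` and `invoice` are hypothetical and chosen only for illustration; they are not real provider rates or APIs.

```python
from collections import defaultdict

# Hypothetical unit prices (currency units per unit of usage); not real provider rates.
PRICES = {"cpu_hours": 0.04, "storage_gb_months": 0.02, "egress_gb": 0.09}


def meter(records):
    """Aggregate (tenant, resource, quantity) usage records per tenant and resource."""
    usage = defaultdict(lambda: defaultdict(float))
    for tenant, resource, quantity in records:
        if resource not in PRICES:
            raise ValueError(f"unmetered resource: {resource}")
        usage[tenant][resource] += quantity
    return usage


def invoice(tenant_usage):
    """Price one tenant's aggregated usage, rounded to two decimal places."""
    return round(sum(PRICES[res] * qty for res, qty in tenant_usage.items()), 2)


records = [
    ("acme", "cpu_hours", 100),
    ("acme", "storage_gb_months", 50),
    ("acme", "cpu_hours", 50),
    ("globex", "egress_gb", 10),
]
usage = meter(records)
bills = {tenant: invoice(per_resource) for tenant, per_resource in usage.items()}
```

Because charges follow recorded consumption, a tenant that uses nothing in a billing period owes nothing in this sketch, which is the sense in which metered cloud services resemble a public utility.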

Cloud computing shares characteristics with:

- Client–server model – Client–server computing refers broadly to any distributed application that distinguishes between service providers (servers) and service requestors (clients).[82]

- Computer bureau – A service bureau providing computer services, particularly from the 1960s to 1980s.

- Grid computing – A form of distributed and parallel computing, whereby a 'super and virtual computer' is composed of a cluster of networked, loosely coupled computers acting in concert to perform very large tasks.

- Fog computing – Distributed computing paradigm that provides data, compute, storage and application services closer to the client or near-user edge devices, such as network routers. Furthermore, fog computing handles data at the network level, on smart devices and on the end-user client-side (e.g. mobile devices), instead of sending data to a remote location for processing.

- Utility computing – The "packaging of computing resources, such as computation and storage, as a metered service similar to a traditional public utility, such as electricity."[83][84]

- Peer-to-peer – A distributed architecture without the need for central coordination. Participants are both suppliers and consumers of resources (in contrast to the traditional client-server model).

- Cloud sandbox – A live, isolated computer environment in which a program, code or file can run without affecting the application in which it runs.

See also

- Block-level storage

- Browser-based computing

- Category:Cloud computing providers

- Category:Cloud platforms

- Cloud computing architecture

- Cloud broker

- Cloud collaboration

- Cloud-computing comparison

- Cloud computing security

- Cloud gaming

- Cloud management

- Cloud-native computing

- Cloud research

- Cloud robotics

- Cloud storage

- Cloud-to-cloud integration

- Cloudlet

- Computer cluster

- Cooperative storage cloud

- Decentralized computing

- Desktop virtualization

- Dew computing

- Directory

- Distributed data store

- Distributed database

- Distributed computing

- Distributed networking

- e-Science

- Edge computing

- Edge device

- Exchange-traded fund

- File system

- Fog computing

- Fog robotics

- Green computing (environmentally sustainable computing)

- Grid computing

- In-memory database

- In-memory processing

- Internet of things

- IoT security device

- Knowledge as a service

- Microservices

- Mobile cloud computing

- Multi-access edge computing

- Multisite cloud

- Peer-to-peer

- Personal cloud

- Private cloud computing infrastructure

- Robot as a service

- Service-oriented architecture

- Time-sharing

- Ubiquitous computing

- Virtual private cloud

Notes

References

- ^ "ISO/IEC 22123-1:2023(E) - Information technology - Cloud computing - Part 1: Vocabulary". International Organization for Standardization. 2023.

- ^ "What is the cloud? | Microsoft Azure". azure.microsoft.com. Retrieved 2025-09-29.

- ^ a b c d e f g h i j k Mell, Peter; Timothy Grance (September 2011). The NIST Definition of Cloud Computing (Technical report). National Institute of Standards and Technology: U.S. Department of Commerce. doi:10.6028/NIST.SP.800-145. Special publication 800-145.

- ^ a b c d e "ISO/IEC 22123-2:2023(E) - Information technology — Cloud computing — Part 2: Concepts". International Organization for Standardization. September 2023.

- ^ James E. White (March 1971). Network Specifications for Remote Job Entry and Remote Job Output Retrieval at UCSB. Network Working Group. doi:10.17487/RFC0105. RFC 105. Status Unknown. Updated by RFC 217

- ^ Levy, Steven (April 1994). "Bill and Andy's Excellent Adventure II". Wired. Archived from the original on 2015-10-02.

- ^ Mosco, Vincent (2015). To the Cloud: Big Data in a Turbulent World. Taylor & Francis. p. 15. ISBN 978-1-317-25038-8.

- ^ "Announcing Amazon Elastic Compute Cloud (Amazon EC2) – beta". 24 August 2006. Archived from the original on 13 August 2014. Retrieved 31 May 2014.

- ^ Qian, Ling; Lou, Zhigou; Du, Yujian; Gou, Leitao. "Cloud Computing: An Overview". Retrieved 19 April 2021.

- ^ "Windows Azure General Availability". The Official Microsoft Blog. Microsoft. 2010-02-01. Archived from the original on 2014-05-11. Retrieved 2015-05-03.

- ^ "Announcing General Availability of AWS Outposts". Amazon Web Services, Inc. Archived from the original on 2021-01-21. Retrieved 2021-02-04.

- ^ a b c d Erl, Thomas; Puttini, Ricardo; Mahmood, Zaigham (2013). Cloud Computing: Concepts, Technology & Architecture. Pearson Education. ISBN 978-0-13-338752-0.

- ^ a b c d Ruparelia, Nayan B. (August 2023). Cloud Computing, revised and updated edition. MIT Press. ISBN 978-0-262-54647-8.

- ^ a b c d Cloud Computing. ISBN 978-1-284-23397-1.

- ^ a b c d Hurwitz, Judith S.; Bloor, Robin; Kaufman, Marcia; Halper, Fern (16 November 2009). Cloud Computing For Dummies. John Wiley & Sons. ISBN 978-0-470-48470-8.

- ^ a b c d Hybrid Cloud for Architects: Build robust hybrid cloud solutions using AWS and OpenStack. ISBN 978-1-78862-351-3.

- ^ a b c d Security Architecture for Hybrid Cloud: A Practical Method for Designing Security Using Zero Trust Principles. ISBN 978-1-0981-5777-7.

- ^ a b c d Kavis, Michael J. (28 January 2014). Architecting the Cloud: Design Decisions for Cloud Computing Service Models (SaaS, PaaS, and IaaS). John Wiley & Sons. ISBN 978-1-118-61761-8.

- ^ a b Marko, Kurt; Bigelow, Stephen J. (10 Nov 2022). "The pros and cons of cloud computing explained". TechTarget.

- ^ Bratton, Benjamin H. (2015). The stack: on software and sovereignty. Software studies. Cambridge, Mass. London: MIT press. ISBN 978-0-262-02957-5.

- ^ Bridle, James (2019). New dark age: technology and the end of the future. Verso.

- ^ Shurma, Ramesh (8 Mar 2023). "The Hidden Costs Of Cloud Migration". Forbes.

- ^ a b "2024 State of the Cloud Report". Flexera's State of the Cloud Report.

- ^ Fundamentals of Software Architecture: An Engineering Approach. O'Reilly Media. 2020. ISBN 978-1-4920-4345-4.

- ^ "2024 Cloud Spending: IT Balances Costs with GenAI Innovation". Gartner Peer Community. Retrieved Nov 16, 2024.

- ^ Cloud Security and Privacy An Enterprise Perspective on Risks and Compliance. O'Reilly Media. 4 September 2009. ISBN 978-1-4493-7951-3.

- ^ Requirements Engineering for Service and Cloud Computing. Springer International Publishing. 10 April 2017. ISBN 978-3-319-51310-2.

- ^ Srinivasan (14 May 2014). Cloud Computing Basics. Springer. ISBN 978-1-4614-7699-3.

- ^ Murugesan, San (August 2016). Encyclopedia of Cloud Computing. John Wiley & Sons. ISBN 978-1-118-82197-8.

- ^ Cloud Native Infrastructure: Patterns for Scalable Infrastructure and Applications in a Dynamic Environment. O'Reilly Media. 25 October 2017. ISBN 978-1-4919-8425-3.

- ^ Hausenblas, Michael (26 December 2023). Cloud Observability in Action. Simon and Schuster. ISBN 978-1-63343-959-7.

- ^ Jr, Cloves Carneiro; Schmelmer, Tim (10 December 2016). Microservices From Day One: Build robust and scalable software from the start. Apress. ISBN 978-1-4842-1937-9.

- ^ Jennings, Roger (29 December 2010). Cloud Computing with the Windows Azure Platform. John Wiley & Sons. ISBN 978-1-118-05875-6.

- ^ Cloud and Virtual Data Storage Networking. ISBN 978-1-4665-0844-6.

- ^ a b c Ryan, Mark D. (January 2011). "Cloud Computing Privacy Concerns on Our Doorstep". cacm.acm.org. Archived from the original on 2021-12-28. Retrieved 2021-05-21.

- ^ Indu, I.; Anand, P.M. Rubesh; Bhaskar, Vidhyacharan (August 1, 2018). "Identity and access management in cloud environment: Mechanisms and challenges". Engineering Science and Technology. 21 (4): 574–588. doi:10.1016/j.jestch.2018.05.010.

- ^ a b "Google Drive, Dropbox, Box and iCloud Reach the Top 5 Cloud Storage Security Breaches List". psg.hitachi-solutions.com. Archived from the original on 2015-11-23. Retrieved 2015-11-22.

- ^ Maltais, Michelle (26 April 2012). "Who owns your stuff in the cloud?". Los Angeles Times. Archived from the original on 2013-01-20. Retrieved 2012-12-14.

- ^ "Security of virtualization, cloud computing divides IT and security pros". Network World. 2010-02-22. Archived from the original on 2024-04-26. Retrieved 2010-08-22.

- ^ "The Bumpy Road to Private Clouds". 2010-12-20. Archived from the original on 2014-10-15. Retrieved 8 October 2014.

- ^ Kanaker, Hasan; Karim, Nader Abdel; Awwad, Samer A. B.; Ismail, Nurul H. A.; Zraqou, Jamal; Ali, Abdulla M. F. Al (2022-12-20). "Trojan Horse Infection Detection in Cloud Based Environment Using Machine Learning". International Journal of Interactive Mobile Technologies. 16 (24): 81–106. doi:10.3991/ijim.v16i24.35763. ISSN 1865-7923. S2CID 254960874.

- ^ Amies, Alex; Sluiman, Harm; Tong, Qiang Guo; Liu, Guo Ning (July 2012). "Infrastructure as a Service Cloud Concepts". Developing and Hosting Applications on the Cloud. IBM Press. ISBN 978-0-13-306684-5. Archived from the original on 2012-09-15. Retrieved 2012-07-19.

- ^ Nelson, Michael R. (2009). "The Cloud, the Crowd, and Public Policy". Issues in Science and Technology. 25 (4): 71–76. JSTOR 43314918.

- ^ Boniface, M.; et al. (2010). Platform-as-a-Service Architecture for Real-Time Quality of Service Management in Clouds. 5th International Conference on Internet and Web Applications and Services (ICIW). Barcelona, Spain: IEEE. pp. 155–160. doi:10.1109/ICIW.2010.91.

- ^ "Integration Platform as a Service (iPaaS)". Gartner IT Glossary. Gartner. Archived from the original on 2015-07-29. Retrieved 2015-07-20.

- ^ Gartner; Massimo Pezzini; Paolo Malinverno; Eric Thoo. "Gartner Reference Model for Integration PaaS". Archived from the original on 1 July 2013. Retrieved 16 January 2013.

- ^ Loraine Lawson (3 April 2015). "IT Business Edge". Archived from the original on 7 July 2015. Retrieved 6 July 2015.

- ^ Enterprise CIO Forum; Gabriel Lowy. "The Value of Data Platform-as-a-Service (dPaaS)". Archived from the original on 19 April 2015. Retrieved 6 July 2015.

- ^ "Definition of: SaaS". PC Magazine Encyclopedia. Ziff Davis. Archived from the original on 14 July 2014. Retrieved 14 May 2014.

- ^ Hamdaqa, Mohammad. A Reference Model for Developing Cloud Applications (PDF). Archived (PDF) from the original on 2012-10-05. Retrieved 2012-05-23.

- ^ Chou, Timothy. Introduction to Cloud Computing: Business & Technology. Archived from the original on 2016-05-05. Retrieved 2017-09-09.

- ^ "HVD: the cloud's silver lining" (PDF). Intrinsic Technology. Archived from the original (PDF) on 2 October 2012. Retrieved 30 August 2012.

- ^ Sun, Yunchuan; Zhang, Junsheng; Xiong, Yongping; Zhu, Guangyu (2014-07-01). "Data Security and Privacy in Cloud Computing". International Journal of Distributed Sensor Networks. 10 (7) 190903. doi:10.1155/2014/190903. ISSN 1550-1477. S2CID 13213544.

- ^ "Use OneDrive with Office". Microsoft Support. Archived from the original on 2022-10-15. Retrieved 2022-10-15.

- ^ "Self-Run Private Cloud Computing Solution – GovConnection". govconnection.com. 2014. Archived from the original on April 6, 2014. Retrieved April 15, 2014.

- ^ "Private Clouds Take Shape – Services – Business services – Informationweek". 2012-09-09. Archived from the original on 2012-09-09.

- ^ Haff, Gordon (2009-01-27). "Just don't call them private clouds". CNET News. Archived from the original on 2014-12-27. Retrieved 2010-08-22.

- ^ "There's No Such Thing As A Private Cloud – Cloud-computing -". 2013-01-26. Archived from the original on 2013-01-26.

- ^ Rouse, Margaret. "What is public cloud?". Definition from Whatis.com. Archived from the original on 16 October 2014. Retrieved 12 October 2014.

- ^ "Defining 'Cloud Services' and "Cloud Computing"". IDC. 2008-09-23. Archived from the original on 2010-07-22. Retrieved 2010-08-22.

- ^ "FastConnect | Oracle Cloud Infrastructure". cloud.oracle.com. Archived from the original on 2017-11-15. Retrieved 2017-11-15.

- ^ Schmidt, Rainer; Möhring, Michael; Keller, Barbara (2017). "Customer Relationship Management in a Public Cloud environment - Key influencing factors for European enterprises". HICSS. Proceedings of the 50th Hawaii International Conference on System Sciences (2017). doi:10.24251/HICSS.2017.513. hdl:10125/41673. ISBN 978-0-9981331-0-2.

- ^ "What is hybrid cloud? - Definition from WhatIs.com". SearchCloudComputing. Archived from the original on 2019-07-16. Retrieved 2019-08-10.

- ^ Butler, Brandon (2017-10-17). "What is hybrid cloud computing? The benefits of mixing private and public cloud services". Network World. Archived from the original on 2019-08-11. Retrieved 2019-08-11.

- ^ "Mind the Gap: Here Comes Hybrid Cloud – Thomas Bittman". Thomas Bittman. 24 September 2012. Archived from the original on 17 April 2015. Retrieved 22 April 2015.

- ^ "Business Intelligence Takes to Cloud for Small Businesses". CIO.com. 2014-06-04. Archived from the original on 2014-06-07. Retrieved 2014-06-04.

- ^ Désiré Athow (24 August 2014). "Hybrid cloud: is it right for your business?". TechRadar. Archived from the original on 7 July 2017. Retrieved 22 April 2015.

- ^ Metzler, Jim; Taylor, Steve. (2010-08-23) "Cloud computing: Reality vs. fiction" Archived 2013-06-19 at the Wayback Machine, Network World.

- ^ Rouse, Margaret. "Definition: Cloudbursting" Archived 2013-03-19 at the Wayback Machine, May 2011. SearchCloudComputing.com.

- ^ "How Cloudbursting "Rightsizes" the Data Center". 2012-06-22. Archived from the original on 2016-10-19. Retrieved 2016-10-19.

- ^ "ISO/IEC 22123-1:2023(E) - Information technology — Cloud computing — Part 1: Vocabulary". International Organization for Standardization: 2.

- ^ Gall, Richard (2018-05-16). "Polycloud: a better alternative to cloud agnosticism". Packt Hub. Archived from the original on 2019-11-11. Retrieved 2019-11-11.

- ^ "IDC Forecasts Worldwide "Whole Cloud" Spending to Reach $1.3 Trillion by 2025". Idc.com. 2021-09-14. Archived from the original on 2022-07-29. Retrieved 2022-07-30.

- ^ "Gartner Forecasts Worldwide Public Cloud End-User Spending to Reach Nearly $500 Billion in 2022". Archived from the original on 2022-07-25. Retrieved 2022-07-25.

- ^ "Cloud's trillion-dollar prize is up for grabs". McKinsey. Archived from the original on 2022-07-25. Retrieved 2022-07-30.

- ^ "Gartner Says More Than Half of Enterprise IT Spending in Key Market Segments Will Shift to the Cloud by 2025". Archived from the original on 2022-07-25. Retrieved 2022-07-25.

- ^ a b European Commission, Unleashing the Potential of Cloud Computing in Europe, COM(2012) 529 final, page 3, published 27 September 2012, accessed 26 April 2024

- ^ "Global cloud infrastructure market share 2024 | Statista". Statista. Archived from the original on 2025-01-18. Retrieved 2025-03-20.

- ^ "Gartner Says Worldwide IaaS Public Cloud Services Revenue Grew 16.2% i". Gartner. Retrieved 2025-03-20.

- ^ a b Linthicum, David (2022). AN INSIDER'S GUIDE TO CLOUD COMPUTING. Erscheinungsort nicht ermittelbar: ADDISON WESLEY. ISBN 978-0-13-793578-9.

- ^ a b c HAMDAQA, Mohammad (2012). Cloud Computing Uncovered: A Research Landscape (PDF). Elsevier Press. pp. 41–85. ISBN 978-0-12-396535-6. Archived (PDF) from the original on 2013-06-19. Retrieved 2013-03-19.

- ^ "Distributed Application Architecture" (PDF). Sun Microsystem. Archived (PDF) from the original on 2011-04-06. Retrieved 2009-06-16.

- ^ Vaquero, Luis M.; Rodero-Merino, Luis; Caceres, Juan; Lindner, Maik (December 2008). "A break in the clouds: Towards a cloud definition". ACM SIGCOMM Computer Communication Review. 39 (1): 50–55. doi:10.1145/1496091.1496100. S2CID 207171174.

- ^ Danielson, Krissi (2008-03-26). "Distinguishing Cloud Computing from Utility Computing". Ebizq.net. Archived from the original on 2017-11-10. Retrieved 2010-08-22.

Further reading

- Millard, Christopher (2013). Cloud Computing Law. Oxford University Press. ISBN 978-0-19-967168-7.

- Weisser, Alexander (2020). International Taxation of Cloud Computing. Editions Juridiques Libres, ISBN 978-2-88954-030-3.

- Singh, Jatinder; Powles, Julia; Pasquier, Thomas; Bacon, Jean (July 2015). "Data Flow Management and Compliance in Cloud Computing". IEEE Cloud Computing. 2 (4): 24–32. doi:10.1109/MCC.2015.69. S2CID 9812531.

- Armbrust, Michael; Stoica, Ion; Zaharia, Matei; Fox, Armando; Griffith, Rean; Joseph, Anthony D.; Katz, Randy; Konwinski, Andy; Lee, Gunho; Patterson, David; Rabkin, Ariel (1 April 2010). "A view of cloud computing". Communications of the ACM. 53 (4): 50. doi:10.1145/1721654.1721672. S2CID 1673644.

- Hu, Tung-Hui (2015). A Prehistory of the Cloud. MIT Press. ISBN 978-0-262-02951-3.

- Mell, P. (2011, September). The NIST Definition of Cloud Computing. Retrieved November 1, 2015, from National Institute of Standards and Technology website

Media related to Cloud computing at Wikimedia Commons

Cloud computing

Fundamentals

Definition and Essential Characteristics

Cloud computing is a way to use computer services, such as storing files, running apps, or using powerful computers, over the internet instead of on your own device. Compare it to electricity: you plug in and use it without knowing how the power plant works or owning one. The "cloud" refers to large remote servers in data centers managed by companies like Google, Amazon, or Microsoft. This allows access from any internet-connected device, makes things easier and often cheaper (pay only for what you use), and provides scalable power without buying hardware.

More formally, cloud computing is a model for enabling ubiquitous, convenient, on-demand network access to a shared pool of configurable computing resources (such as networks, servers, storage, applications, and services) that can be rapidly provisioned and released with minimal management effort or service provider interaction.[1] This definition, established by the National Institute of Standards and Technology (NIST) in 2011, emphasizes the delivery of computing resources over the internet without requiring users to own or manage underlying hardware.[1]

According to NIST, the model is defined by five essential characteristics: (1) on-demand self-service, whereby consumers provision resources unilaterally without human intervention; (2) broad network access, enabling access via standard mechanisms from diverse devices like laptops and mobiles; (3) resource pooling, where providers pool computing resources to serve multiple consumers, with resources dynamically assigned and reassigned according to demand; (4) rapid elasticity, allowing resources to scale out or in, in some cases automatically, to match demand; and (5) measured service, providing transparency in resource usage via metering for pay-per-use billing.[1]

However, many sources cite six characteristics by treating multi-tenancy (secure sharing of infrastructure among multiple users or organizations, with isolation to prevent interference) as a distinct feature, whereas the NIST definition incorporates it within resource pooling: (6) multi-tenancy, whereby multiple tenants share resources securely while maintaining data and process isolation. These traits enable scalability, as resources adjust in near real time to workload fluctuations, in contrast to rigid traditional setups.[1]

In distinction from traditional on-premises IT infrastructure, cloud computing shifts costs from capital expenditures (CapEx) on hardware purchases to operational expenditures (OpEx) for usage-based consumption, eliminating the need for upfront ownership of physical assets and enabling global scalability.[9] Typical intra-region network latency remains under 100 milliseconds, supporting responsive applications, while service level agreements (SLAs) from major providers guarantee up to 99.99% uptime, equating to at most about 4.38 minutes of downtime in an average month.[10][11]

Underlying Technologies

Virtualization forms the foundational abstraction layer in cloud computing, enabling the creation of multiple virtual machines (VMs) on a single physical server by emulating hardware resources through a hypervisor. This technology partitions physical compute, memory, and storage, allowing efficient resource utilization via time-sharing and isolation mechanisms. Type-1 hypervisors, which run directly on hardware, include proprietary solutions like VMware vSphere, introduced in the late 1990s for server consolidation, and open-source options such as Kernel-based Virtual Machine (KVM), integrated into the Linux kernel to leverage hardware-assisted virtualization extensions like Intel VT-x.[12][13][14]

Cloud data centers primarily utilize CPU-centric servers, such as x86 architectures, with virtualization supporting general-purpose workloads including web services, databases, and broad data processing; AI capabilities are supplementary via optional GPU instances like AWS EC2 P series. In contrast, AI data centers prioritize accelerators such as GPUs (e.g., NVIDIA H100 or Blackwell series) and TPUs (e.g., Google TPU), deployed in specialized systems including NVIDIA DGX servers and SuperPOD clusters, with high-density racks optimized for parallel processing and matrix computations essential to AI training and inference.[15][16][17][18]

Containerization extends virtualization principles with operating-system-level isolation, packaging applications and dependencies into lightweight, portable units without full OS emulation, thus reducing overhead compared to traditional VMs. Docker, released as open-source software in 2013, popularized this approach by standardizing container formats using Linux kernel features like cgroups and namespaces for process isolation and resource limits.
Container orchestration tools automate deployment, scaling, and management of these containers across clusters; Kubernetes, open-sourced by Google in 2014 based on its internal Borg system, provides declarative configuration for container lifecycle management, service discovery, and fault tolerance through components like pods, nodes, and controllers.[19][20]

Networking in cloud infrastructures relies on software-defined networking (SDN), which decouples the control plane, which handles routing decisions, from the data plane of physical switches, enabling centralized, programmable configuration via APIs for dynamic traffic management. SDN facilitates virtual overlays, such as VXLAN for Layer 2 extension across data centers, and integrates with load balancers that distribute incoming requests across backend instances using algorithms like round-robin or least connections to prevent bottlenecks.

Storage systems underpin data persistence with distinct paradigms: block storage, which exposes raw volumes for high-performance I/O suitable for databases via protocols like iSCSI; object storage, exemplified by Amazon S3, launched in 2006, which stores unstructured data as immutable objects with metadata for scalable, distributed access; and distributed file systems like Hadoop Distributed File System (HDFS) or cloud-native equivalents for shared file access, with POSIX compliance in some systems.[21][22]

Hyperscale data centers, housing millions of servers in facilities exceeding 100 megawatts, incorporate redundancy architectures such as N+1 configurations, where an additional power supply, cooling unit, or generator backs up the minimum required (N) components to tolerate single failures without downtime. These setups employ uninterruptible power supplies (UPS), diesel generators, and cooling systems like chillers in fault-tolerant topologies, ensuring continuous operation amid hardware faults or maintenance.
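The N+1 rule described above reduces to simple arithmetic, sketched below under simplifying assumptions: all units (for example UPS modules or chillers) are identical, load is expressed in kilowatts, and only one unit fails at a time. The function names and the example figures are hypothetical.

```python
import math


def units_for_n_plus_1(load_kw: float, unit_capacity_kw: float) -> int:
    """Return N + 1, where N is the minimum number of units that covers the load."""
    if load_kw <= 0 or unit_capacity_kw <= 0:
        raise ValueError("load and unit capacity must be positive")
    n = math.ceil(load_kw / unit_capacity_kw)
    return n + 1


def tolerates_single_failure(installed_units: int, unit_capacity_kw: float, load_kw: float) -> bool:
    """True if the load is still covered after any one installed unit fails."""
    if installed_units < 1:
        return False
    remaining_capacity_kw = (installed_units - 1) * unit_capacity_kw
    return remaining_capacity_kw >= load_kw


# Illustrative figures: a 900 kW load served by 250 kW units.
required = units_for_n_plus_1(load_kw=900, unit_capacity_kw=250)
ok_with_spare = tolerates_single_failure(required, 250, 900)
ok_without_spare = tolerates_single_failure(required - 1, 250, 900)
```

With these figures four units are the minimum (N), so five are installed; removing any one still leaves 1,000 kW available, whereas a four-unit installation would drop to 750 kW after a single failure.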
Automation via RESTful APIs, often following standards like OpenAPI, allows programmatic provisioning of these resources, integrating with infrastructure-as-code tools such as Terraform, which defines resources declaratively to support multiple cloud providers, and Pulumi, which uses general-purpose programming languages for cloud-agnostic infrastructure management.[23][24][25][26]

Historical Evolution

Precursors and Early Concepts

The concept of shared computing resources emerged in the early 1960s through time-sharing systems, which allowed multiple users to access a single mainframe computer interactively via terminals, in contrast to batch processing. This approach was pioneered by systems like the Compatible Time-Sharing System (CTSS), demonstrated in 1961 at MIT by Fernando Corbató and colleagues, enabling efficient resource utilization amid scarce hardware.[27] Time-sharing laid foundational principles for multiplexing compute power, influencing later distributed architectures.[28]

In 1961, John McCarthy proposed organizing computation as a public utility akin to electricity or telephony, suggesting that excess capacity could be sold on demand to optimize usage and reduce costs for users without dedicated machines.[29] Concurrently, J.C.R. Licklider envisioned interconnected networks of computers facilitating seamless data and resource sharing, as outlined in his work on man-computer symbiosis and intergalactic networks.[30] These ideas gained infrastructural support with ARPANET's first successful connection on October 29, 1969, establishing packet-switching networking as a precursor to wide-area resource distribution.[31]

By the 1990s, grid computing extended these principles to harness distributed, heterogeneous resources across networks for large-scale computations, often analogized to electrical grids for on-demand power. Projects like SETI@home, launched on May 17, 1999, exemplified volunteer computing by aggregating idle CPUs worldwide to analyze radio signals for extraterrestrial intelligence, demonstrating scalable resource pooling without centralized ownership.[32]

Early experiments foreshadowed commercial viability: Salesforce, founded in March 1999 by Marc Benioff, pivoted to a software-as-a-service (SaaS) model delivering customer relationship management via the internet, eliminating on-premises installations.[33] Similarly, Amazon developed internal infrastructure in the early 2000s, including virtualization and automated scaling to manage e-commerce traffic spikes, which evolved from proprietary tools into reusable components before external commercialization.[34]

Commercial Emergence (2006–2010)

Amazon Web Services (AWS) marked the commercial inception of modern cloud computing with the launch of Amazon Simple Storage Service (S3) on March 14, 2006, which provided developers with durable, scalable object storage accessible via web services APIs on a pay-per-use pricing model.[35] This service addressed longstanding challenges in data storage by eliminating the need for upfront hardware investments and enabling infinite scalability without capacity planning. Five months later, on August 25, 2006, AWS introduced Elastic Compute Cloud (EC2) in beta, offering resizable virtual machine instances that allowed users to rent computing resources on demand, further solidifying the infrastructure-as-a-service (IaaS) paradigm.[36] Together, S3 and EC2 demonstrated a viable economic model for commoditizing compute and storage, shifting from capital-intensive on-premises infrastructure to operational expenditure-based utility computing.[37] Competitive responses followed as major technology firms recognized the potential. Google launched App Engine on April 7, 2008, in limited preview, introducing a platform-as-a-service (PaaS) offering that enabled developers to build and host web applications on Google's infrastructure without managing underlying servers, initially supporting Python runtimes with automatic scaling.[38] Microsoft entered the fray with Windows Azure, announcing platform availability in November 2009 and reaching general availability on February 1, 2010, which provided a hybrid-compatible environment for deploying .NET and other applications across virtual machines and storage services.[39] These launches validated the market for abstracted cloud services, though adoption remained nascent, with AWS maintaining primacy in IaaS due to its earlier availability and developer-friendly APIs. 
A landmark validation of cloud reliability came with Netflix's migration to AWS, initiated in August 2008 after a severe database corruption incident exposed vulnerabilities in its on-premises systems.[40] By 2010, Netflix had moved substantial portions of its streaming and backend operations to EC2 and S3, achieving high availability through automated failover and elastic scaling that handled surging demand without downtime, thereby establishing empirical benchmarks for production-grade cloud workloads in media delivery.[41] The shift underscored the fault-tolerance and cost advantages of the cloud, as Netflix reported reduced infrastructure overhead while serving millions of subscribers, influencing enterprise perceptions of cloud viability.[42]

Rapid Expansion (2011–2020)

The period from 2011 to 2020 marked a phase of rapid scaling in cloud computing, driven by technological advances that enabled hybrid deployments and by the proliferation of Platform as a Service (PaaS) and Software as a Service (SaaS) models. Global end-user spending on cloud services rose from approximately $40.7 billion in 2011 to $241 billion by 2020, reflecting widespread enterprise adoption amid improving infrastructure reliability and cost efficiencies.[43] This growth was fueled by the integration of on-premises systems with public clouds in hybrid architectures, which allowed organizations to retain control over sensitive data while leveraging scalable external resources.[44]

Key open-source milestones facilitated this expansion. OpenStack, initially released in October 2010, gained traction for building private and hybrid clouds, with its modular components enabling customizable infrastructure management for enterprises wary of relying fully on public clouds.[45] Docker's launch in 2013 introduced lightweight containerization, simplifying application portability and deployment across hybrid environments, which accelerated microservices adoption and reduced virtualization overhead.[46] Complementing this, Kubernetes was announced by Google in June 2014, providing orchestration for containerized workloads; its integration into the Cloud Native Computing Foundation (CNCF), formed in July 2015, standardized cloud-native practices and boosted hybrid scalability.[20][47]

Major vendors advanced enterprise offerings during this decade. IBM introduced SmartCloud in April 2011, emphasizing secure, hybrid cloud services for business analytics and infrastructure.[48] Oracle followed with initial cloud platform services in June 2012, focusing on enterprise resource planning in PaaS formats to bridge legacy systems with cloud agility. These initiatives, alongside AWS and Azure expansions, shifted focus toward PaaS for developer productivity (evidenced by PaaS revenues surpassing $171 billion globally by the late 2010s) and SaaS for end-user applications, which dominated market segments with annual growth rates exceeding 30% in some regions.[49]

The COVID-19 pandemic in 2020 catalyzed a surge in adoption, as remote work required rapid scaling of cloud resources for collaboration and data access, with public cloud spending projected to grow 18% amid lockdowns.[50] Hybrid models proved resilient, enabling bursting to public clouds during peak loads while maintaining private data sovereignty, solidifying cloud computing's role in operational continuity.[51]

Maturation and Recent Advances (2021–2025)

Following the accelerated cloud migrations during the COVID-19 pandemic, the period from 2021 to 2025 saw refinements in cloud architectures emphasizing efficiency, scalability, and integration with emerging workloads. Global spending on cloud infrastructure services reached $106.9 billion in the third quarter of 2025, reflecting a 28% year-over-year increase primarily driven by AI and machine learning (AI/ML) demands. AI/ML-specific cloud services generated $47.3 billion in revenue for 2025, up 19.6% from the prior year, as enterprises shifted compute-intensive tasks to cloud platforms for faster model training and inference. This growth underscored a maturation where cloud providers optimized for generative AI, with hyperscalers like AWS, Microsoft Azure, and Google Cloud investing heavily in specialized accelerators and APIs.[52][53][54]

Serverless computing advanced significantly, with platforms like AWS Lambda evolving to support longer execution times, enhanced concurrency, and tighter integration with AI services; by 2025, serverless adoption grew 3-7% across major providers, enabling developers to deploy event-driven applications without infrastructure provisioning. Hybrid edge-cloud models emerged as a key refinement, processing data closer to sources via 5G and IoT integrations to reduce latency, with edge computing projected to expand rapidly for real-time applications in manufacturing and autonomous systems. Kubernetes solidified its dominance in container orchestration, with over 60% of enterprises adopting it by 2025 as the de facto standard for managing hybrid and multi-cloud workloads, supported by tools for AI-driven autoscaling and edge deployments.[55][56][57]

Multi-cloud strategies became ubiquitous, with 92-93% of organizations employing them across an average of 4.8 providers to mitigate vendor lock-in and optimize costs, though this complexity contributed to operational challenges. Gartner reported rising dissatisfaction, predicting that 25% of organizations would face significant issues with cloud adoption by 2028 due to unrealistic expectations and escalating cost pressures, prompting a focus on FinOps practices for better governance. These trends highlighted a shift toward pragmatic maturation, balancing innovation with sustainability and sovereignty concerns in regulated sectors.[58][59][60]

Technical Models

Service Models

[Figure: Comparison of on-premises, IaaS, PaaS, and SaaS]

Cloud computing service models delineate the degrees of abstraction provided by cloud providers, ranging from raw infrastructure to fully managed applications, with corresponding shifts in responsibility for management, configuration, and control between the provider and consumer. In its Special Publication 800-145, published on September 28, 2011, the U.S. National Institute of Standards and Technology (NIST) formalized three primary models: infrastructure as a service (IaaS), platform as a service (PaaS), and software as a service (SaaS).[1] These models embody a trade-off: greater abstraction eases operational burdens and accelerates deployment but diminishes user control over underlying components, potentially constraining customization and optimization while increasing dependency on provider capabilities.[61]

IaaS delivers fundamental computing resources such as virtual machines (VMs), storage, and networking on a pay-as-you-go basis, allowing consumers to provision and manage operating systems, applications, and data while the provider handles physical hardware and virtualization.[2] Prominent examples include Amazon Web Services (AWS) Elastic Compute Cloud (EC2), launched in 2006, Microsoft Azure Virtual Machines, and Google Compute Engine.[62] This model affords the highest degree of control over the software stack, enabling fine-tuned configurations akin to on-premises environments, yet it demands substantial expertise in system administration, patching, and scaling, which can increase complexity and resource overhead compared to higher abstractions.[63]

PaaS extends abstraction by supplying a managed runtime environment, including operating systems, middleware, databases, and development tools, permitting consumers to focus on application code and data without provisioning or maintaining underlying infrastructure.[2] Key providers include Heroku, acquired by Salesforce in 2010; Google App Engine, introduced in 2008; and AWS Elastic Beanstalk.[64] By offloading server management and auto-scaling to the provider, PaaS reduces deployment times and operational costs for developers, but it limits control over runtime specifics, potentially hindering integration with legacy systems or bespoke optimizations.[65]

SaaS furnishes complete, multi-tenant applications accessible via the internet, with the provider assuming responsibility for all layers from infrastructure to software updates, security, and scalability, leaving consumers to handle only user access and configuration.[2] Examples include Microsoft 365 (formerly Office 365) and Salesforce CRM, both widely adopted in enterprises.[66] This model maximizes ease and accessibility for end users, removing the need for hardware investments and maintenance, though it offers minimal latitude for customization and exposes users to vendor-specific limitations in functionality or data portability.[67]

Function as a service (FaaS), often termed serverless computing, represents an evolution beyond the traditional models by enabling event-driven code execution without provisioning or managing servers, with providers automatically handling invocation, scaling, and billing per execution duration.[3] AWS Lambda, which debuted in 2014, exemplifies this paradigm, alongside Azure Functions and Google Cloud Functions. Adoption surged in the 2020s, with the global serverless market valued at USD 24.51 billion in 2024 and projected to reach USD 52.13 billion by 2030, driven by cost efficiencies for sporadic workloads and microservices architectures.[68] FaaS further abstracts resource allocation, minimizing idle capacity costs but introducing cold-start latencies and constraints on execution timeouts, which can complicate stateful or long-running applications.[55]

Deployment Models

Public cloud deployment involves provisioning resources from third-party providers using shared, multi-tenant infrastructure accessible over the internet, exemplified by Amazon Web Services (AWS) public regions. This model achieves cost efficiency through pay-as-you-go pricing and resource pooling, making it well suited to workloads with variable or unpredictable demand, as elasticity allows scaling without overprovisioning dedicated hardware.[69][70]

Private cloud deployment dedicates infrastructure to a single organization, either on-premises via virtualization software like VMware or hosted by a vendor, ensuring isolated, single-tenant environments. It suits regulated sectors such as finance and healthcare, where compliance requirements demand granular control over data locality, security configurations, and auditability, though at higher upfront costs because the infrastructure is not shared across tenants.[71][72]

Hybrid cloud deployment orchestrates public and private clouds into an integrated system, enabling data transfer and workload orchestration across both, such as bursting non-sensitive tasks to public resources during demand spikes while retaining sensitive operations privately. This approach addresses trade-offs between cost and control, with 73% of organizations reported to have adopted it by 2024 to optimize for both scalability and regulatory adherence.[73][74]

Multi-cloud deployment spans multiple public cloud providers, such as combining AWS for compute with Microsoft Azure for analytics, to enhance redundancy against provider outages and mitigate lock-in risks through diversified dependencies. While it provides resilience, access to best-of-breed services, and bargaining power on pricing, it increases complexity in orchestration, interoperability, and skill requirements, necessitating robust governance to avoid fragmented operations.[75]

Economic and Operational Benefits

Core Value Propositions

Cloud computing's core economic value derives from shifting from capital expenditures (capex) for dedicated hardware to operational expenditures (opex) aligned with actual usage, thereby minimizing waste from underutilized on-premises servers. On-premises data centers typically achieve server utilization rates of 10-15%, as organizations provision for peak loads that occur infrequently, leaving capacity idle for extended periods.[76] In contrast, cloud providers leverage multi-tenancy and economies of scale to maintain utilization rates exceeding 70-80%, distributing costs across numerous customers and reducing per-unit expenses through efficient resource pooling.[77]

The pay-per-use model further enhances efficiency by eliminating payments for idle resources, while elasticity enables automatic scaling to match demand fluctuations, such as e-commerce traffic surges during Black Friday sales.[78][79] This capability prevents overprovisioning costs associated with anticipating unpredictable spikes, allowing systems to provision additional compute or storage capacity dynamically without manual intervention.[80]

Operationally, cloud environments accelerate resource provisioning from months required for on-premises hardware procurement and setup to minutes via self-service APIs and automation.[81][82] Providers also offer built-in global redundancy across distributed data centers, enhancing availability and disaster recovery compared to localized on-premises setups vulnerable to single-site failures.
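The utilization and pay-per-use argument above can be made concrete with a small calculation. The following is a minimal sketch, not a pricing tool: the hourly demand profiles and both per-server-hour prices are hypothetical placeholders chosen for easy arithmetic, and the model assumes ideal elasticity (the cloud bill tracks demand hour by hour). Under those assumptions, owned capacity sized for the peak costs `peak * hours * fixed_price`, while pay-per-use costs `sum(demand) * cloud_price`, so pay-per-use is cheaper whenever average utilization is below the ratio `fixed_price / cloud_price`.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CostModel:
    # Both prices are hypothetical placeholders, not vendor quotes.
    fixed_cost_per_server_hour: float  # amortized capex plus upkeep of an owned server
    cloud_cost_per_server_hour: float  # pay-per-use rate for an equivalent instance


def utilization(demand: list[int], capacity: int) -> float:
    """Average fraction of the provisioned capacity that is actually used."""
    return sum(demand) / (capacity * len(demand))


def fixed_capacity_cost(demand: list[int], model: CostModel) -> float:
    """Provision for the peak hour and pay for every server in every hour."""
    return max(demand) * len(demand) * model.fixed_cost_per_server_hour


def pay_per_use_cost(demand: list[int], model: CostModel) -> float:
    """Pay only for the servers needed in each hour (assumes ideal elasticity)."""
    return sum(demand) * model.cloud_cost_per_server_hour


def break_even_utilization(model: CostModel) -> float:
    """Utilization above which owning peak-sized capacity becomes cheaper."""
    return model.fixed_cost_per_server_hour / model.cloud_cost_per_server_hour


# Hypothetical day: 22 quiet hours at 10 servers, then a 2-hour spike at 100.
spiky_demand = [10] * 22 + [100] * 2
# Hypothetical steady workload: 50 servers around the clock.
flat_demand = [50] * 24

model = CostModel(fixed_cost_per_server_hour=0.25, cloud_cost_per_server_hour=0.5)

for name, demand in [("spiky", spiky_demand), ("flat", flat_demand)]:
    print(
        name,
        f"utilization={utilization(demand, max(demand)):.1%}",
        f"owned={fixed_capacity_cost(demand, model):.2f}",
        f"cloud={pay_per_use_cost(demand, model):.2f}",
    )
print(f"break-even utilization={break_even_utilization(model):.0%}")
```

With these made-up numbers the spiky workload runs well below the break-even utilization, so pay-per-use is cheaper even though its unit price is twice as high, whereas the flat workload is fully utilized and owning the capacity costs less. Real comparisons would also need to account for reserved-capacity discounts, data transfer, and staffing, none of which this sketch models.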
Empirical analyses support these propositions, with studies indicating total cost of ownership (TCO) reductions of 20-30% for migrated workloads suited to cloud architectures, driven by lower maintenance, energy, and staffing overheads.[83] Other independent research reports somewhat higher TCO savings of 30-40%, attributing them to optimized resource allocation and the avoidance of upfront infrastructure investments.[84] These gains hold for variable workloads but require careful workload selection, since fixed, predictable workloads can cost more in the cloud than on dedicated hardware.

Drivers of Adoption