Emotion recognition


Emotion recognition is the process of identifying human emotion. People vary widely in their accuracy at recognizing the emotions of others. Use of technology to help people with emotion recognition is a relatively nascent research area. Generally, the technology works best if it uses multiple modalities in context. To date, the most work has been conducted on automating the recognition of facial expressions from video, spoken expressions from audio, written expressions from text, and physiology as measured by wearables.

Human

Humans show a great deal of variability in their abilities to recognize emotion. A key point to keep in mind when learning about automated emotion recognition is that there are several sources of "ground truth", or truth about what the real emotion is. Suppose we are trying to recognize the emotions of Alex. One source is "what would most people say that Alex is feeling?" In this case, the 'truth' may not correspond to what Alex feels, but may correspond to what most people would say it looks like Alex feels. For example, Alex may actually feel sad, but he puts on a big smile and then most people say he looks happy. If an automated method achieves the same results as a group of observers it may be considered accurate, even if it does not actually measure what Alex truly feels. Another source of 'truth' is to ask Alex what he truly feels. This works if Alex has a good sense of his internal state, and wants to tell you what it is, and is capable of putting it accurately into words or a number. However, some people are alexithymic and do not have a good sense of their internal feelings, or they are not able to communicate them accurately with words and numbers. In general, getting to the truth of what emotion is actually present can take some work, can vary depending on the criteria that are selected, and will usually involve maintaining some level of uncertainty.
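The gap between the two kinds of ground truth can be made concrete with a small sketch. The observer labels and the self-report below are invented for illustration, and `observer_consensus` is a hypothetical helper, not part of any established toolkit.

```python
from collections import Counter


def observer_consensus(labels):
    """Return the most common observer label and the share of observers who chose it."""
    counts = Counter(labels)
    label, votes = counts.most_common(1)[0]
    return label, votes / len(labels)


# Hypothetical data: five observers rate a clip of Alex, who smiles while feeling sad.
observer_labels = ["happy", "happy", "happy", "neutral", "happy"]
self_report = "sad"

consensus, agreement = observer_consensus(observer_labels)

# A system trained on observer labels would be scored against "happy",
# while one evaluated against self-report would be scored against "sad".
matches_self_report = consensus == self_report
```

A system can therefore agree perfectly with the observers and still disagree with the person's own report, which is why evaluations usually state which source of labels they used.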

Automatic

Decades of scientific research have gone into developing and evaluating methods for automated emotion recognition. There is now an extensive literature proposing and evaluating hundreds of different kinds of methods, leveraging techniques from multiple areas, such as signal processing, machine learning, computer vision, and speech processing. Techniques employed to interpret emotion include Bayesian networks,[1] Gaussian mixture models,[2] hidden Markov models,[3] and deep neural networks.[4]

Approaches

The accuracy of emotion recognition usually improves when it combines the analysis of human expressions from several modalities, such as text, physiology, audio, or video.[5] Different emotion types are detected through the integration of information from facial expressions, body movement and gestures, and speech.[6] The technology is said to contribute to the emergence of the so-called emotional or emotive Internet.[7]
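One simple way to combine modalities is late fusion, in which each modality produces its own probability distribution over emotion labels and the distributions are averaged. The sketch below is only an illustration of that idea, not the method of the cited works; the function name, weights, and scores are assumptions.

```python
def late_fusion(modality_probs, weights=None):
    """Weighted average of per-modality probability distributions over emotion labels."""
    names = list(modality_probs)
    if weights is None:
        # Assumption: with no weights given, every modality counts equally.
        weights = {name: 1.0 for name in names}
    total = sum(weights[name] for name in names)
    labels = sorted({label for probs in modality_probs.values() for label in probs})
    return {
        label: sum(weights[n] * modality_probs[n].get(label, 0.0) for n in names) / total
        for label in labels
    }


# Hypothetical outputs of three single-modality classifiers for one clip.
scores = {
    "face": {"happy": 0.7, "sad": 0.2, "angry": 0.1},
    "voice": {"happy": 0.3, "sad": 0.6, "angry": 0.1},
    "text": {"happy": 0.2, "sad": 0.7, "angry": 0.1},
}

fused = late_fusion(scores)
predicted = max(fused, key=fused.get)
```

Here the face alone suggests "happy", but the voice and the text outweigh it once the three distributions are averaged.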

Existing approaches to classifying emotion types generally fall into three main categories: knowledge-based techniques, statistical methods, and hybrid approaches.[8]

Knowledge-based techniques

Knowledge-based techniques (sometimes referred to as lexicon-based techniques) use domain knowledge and the semantic and syntactic characteristics of text, and potentially spoken language, to detect certain emotion types.[9] In this approach, it is common to use knowledge-based resources during the emotion classification process, such as WordNet, SenticNet,[10] ConceptNet, and EmotiNet,[11] to name a few.[12] One advantage of this approach is the accessibility and economy brought about by the wide availability of such resources.[8] A limitation, on the other hand, is its inability to handle concept nuances and complex linguistic rules.[8]

Knowledge-based techniques can be mainly classified into two categories: dictionary-based and corpus-based approaches.[citation needed] Dictionary-based approaches find opinion or emotion seed words in a dictionary and search for their synonyms and antonyms to expand the initial list of opinions or emotions.[13] Corpus-based approaches, on the other hand, start with a seed list of opinion or emotion words and expand the database by finding other words with context-specific characteristics in a large corpus.[13] Although corpus-based approaches take context into account, their performance still varies across domains, since a word in one domain can have a different orientation in another.[14]
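The dictionary-based expansion described above can be sketched as follows. The toy thesaurus stands in for a real resource such as WordNet, and the function name, the `opposite` mapping, and the number of rounds are all assumptions made for illustration.

```python
def expand_seed_lexicon(seeds, synonyms, antonyms, opposite, rounds=2):
    """Grow an emotion lexicon from seed words using synonym and antonym links."""
    lexicon = dict(seeds)  # word -> emotion label
    for _ in range(rounds):
        added = {}
        for word, emotion in lexicon.items():
            # Synonyms inherit the emotion of the word they were reached from.
            for syn in synonyms.get(word, []):
                if syn not in lexicon:
                    added.setdefault(syn, emotion)
            # Antonyms receive the opposite emotion, when one is defined.
            for ant in antonyms.get(word, []):
                if ant not in lexicon and emotion in opposite:
                    added.setdefault(ant, opposite[emotion])
        if not added:
            break
        lexicon.update(added)
    return lexicon


# Toy thesaurus (hypothetical entries).
synonyms = {"happy": ["glad", "joyful"], "glad": ["pleased"], "sad": ["unhappy"]}
antonyms = {"happy": ["miserable"]}
opposite = {"joy": "sadness", "sadness": "joy"}

lexicon = expand_seed_lexicon(
    {"happy": "joy", "sad": "sadness"}, synonyms, antonyms, opposite
)
```

Starting from two seed words, two rounds of expansion yield a seven-word lexicon; "pleased" is only reached in the second round, through "glad".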

Statistical methods

Statistical methods commonly involve supervised machine learning algorithms, in which a large set of annotated data is fed into the algorithms so that the system learns to predict the appropriate emotion types.[8] Machine learning algorithms generally provide more reasonable classification accuracy than other approaches, but achieving good results requires a sufficiently large training set.[8]

Some of the most commonly used machine learning algorithms include Support Vector Machines (SVM), Naive Bayes, and Maximum Entropy.[15] Deep learning, a family of machine learning methods based on multi-layer neural networks, is also widely employed in emotion recognition.[16][17][18] Well-known deep learning algorithms include different architectures of Artificial Neural Network (ANN) such as Convolutional Neural Network (CNN), Long Short-term Memory (LSTM), and Extreme Learning Machine (ELM).[15] The popularity of deep learning approaches in emotion recognition may be mainly attributed to their success in related applications such as computer vision, speech recognition, and Natural Language Processing (NLP).[15]
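As an illustration of the supervised setting, the following is a minimal sketch of a multinomial Naive Bayes text classifier written from scratch. The four training sentences are invented, and add-one smoothing and whitespace tokenization are simplifying assumptions; a real system would need far more annotated data.

```python
import math
from collections import Counter, defaultdict


def train_naive_bayes(examples):
    """Fit a multinomial Naive Bayes model on (text, label) pairs."""
    label_counts = Counter()
    word_counts = defaultdict(Counter)
    vocab = set()
    for text, label in examples:
        tokens = text.lower().split()
        label_counts[label] += 1
        word_counts[label].update(tokens)
        vocab.update(tokens)
    return label_counts, word_counts, vocab


def predict(model, text):
    """Return the label with the highest log-posterior, using add-one smoothing."""
    label_counts, word_counts, vocab = model
    total_docs = sum(label_counts.values())
    best_label, best_score = None, -math.inf
    for label, doc_count in label_counts.items():
        score = math.log(doc_count / total_docs)  # log prior
        denom = sum(word_counts[label].values()) + len(vocab)
        for token in text.lower().split():
            if token in vocab:  # words never seen in training are ignored
                score += math.log((word_counts[label][token] + 1) / denom)
        if score > best_score:
            best_label, best_score = label, score
    return best_label


# Hypothetical annotated training set.
training = [
    ("i am so happy today", "joy"),
    ("what a wonderful happy surprise", "joy"),
    ("i feel sad and alone", "sadness"),
    ("this is a sad terrible day", "sadness"),
]
model = train_naive_bayes(training)
```

With this toy model, `predict(model, "such a happy day")` returns "joy" and `predict(model, "i feel terrible")` returns "sadness".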

Hybrid approaches

Hybrid approaches in emotion recognition are essentially a combination of knowledge-based techniques and statistical methods, exploiting complementary characteristics of both.[8] Works that have applied an ensemble of knowledge-driven linguistic elements and statistical methods include sentic computing and iFeel, both of which have adopted the concept-level knowledge-based resource SenticNet.[19][20] Such knowledge-based resources play an important role in the emotion classification process of hybrid approaches.[12] Because hybrid techniques gain the benefits of both knowledge-based and statistical approaches, they tend to classify better than either used independently.[citation needed] A downside of hybrid techniques, however, is the computational complexity of the classification process.[12]
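A hybrid system can be sketched, very roughly, as a blend of a lexicon-based score and a statistical classifier's output. This is not how sentic computing or iFeel work internally; the blending rule, the `alpha` parameter, the lexicon, and the classifier probabilities are all assumptions chosen to keep the example small.

```python
def lexicon_scores(text, lexicon, labels):
    """Count lexicon hits per emotion and normalise (uniform when nothing matches)."""
    hits = {label: 0 for label in labels}
    for token in text.lower().split():
        if token in lexicon and lexicon[token] in hits:
            hits[lexicon[token]] += 1
    total = sum(hits.values())
    if total == 0:
        return {label: 1.0 / len(labels) for label in labels}
    return {label: count / total for label, count in hits.items()}


def hybrid_predict(text, lexicon, classifier_probs, alpha=0.5):
    """Blend knowledge-based and statistical scores; alpha weights the lexicon."""
    labels = list(classifier_probs)
    lex = lexicon_scores(text, lexicon, labels)
    blended = {
        label: alpha * lex[label] + (1 - alpha) * classifier_probs[label]
        for label in labels
    }
    return max(blended, key=blended.get), blended


# Hypothetical lexicon and hypothetical output of a statistical classifier.
lexicon = {"furious": "anger", "delighted": "joy"}
classifier_probs = {"joy": 0.55, "anger": 0.45}

label, blended = hybrid_predict("I am furious", lexicon, classifier_probs)
```

In this example the classifier alone slightly prefers "joy", but the lexicon hit on "furious" tips the blended score toward "anger".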

Datasets

Data is an integral part of existing approaches to emotion recognition, and in most cases it is a challenge to obtain the annotated data needed to train machine learning algorithms.[13] For the task of classifying different emotion types from multimodal sources in the form of text, audio, video, or physiological signals, the following datasets are available:

- HUMAINE: provides natural clips with emotion words and context labels in multiple modalities[21]

- Belfast database: provides clips with a wide range of emotions from TV programs and interview recordings[22]

- SEMAINE: provides audiovisual recordings between a person and a virtual agent and contains emotion annotations such as anger, happiness, fear, disgust, sadness, contempt, and amusement[23]

- IEMOCAP: provides recordings of dyadic sessions between actors and contains emotion annotations such as happiness, anger, sadness, frustration, and neutral state[24]

- eNTERFACE: provides audiovisual recordings of subjects from seven nationalities and contains emotion annotations such as happiness, anger, sadness, surprise, disgust, and fear[25]

- DEAP: provides electroencephalography (EEG), electrocardiography (ECG), and face video recordings, as well as emotion annotations in terms of valence, arousal, and dominance of people watching film clips[26]

- DREAMER: provides electroencephalography (EEG) and electrocardiography (ECG) recordings, as well as emotion annotations in terms of valence, arousal, and dominance of people watching film clips[27]

- MELD: a multiparty conversational dataset in which each utterance is labeled with emotion and sentiment.[28] It provides conversations in video format and is therefore suitable for multimodal sentiment analysis and emotion recognition, dialogue systems, and emotion recognition in conversations.[29]

- MuSe: provides audiovisual recordings of natural interactions between a person and an object.[30] It has discrete and continuous emotion annotations in terms of valence, arousal and trustworthiness as well as speech topics useful for multimodal sentiment analysis and emotion recognition.

- UIT-VSMEC: a standard Vietnamese Social Media Emotion Corpus with 6,927 human-annotated sentences and six emotion labels, contributing to emotion recognition research in Vietnamese, which is a low-resource language in Natural Language Processing (NLP).[31]

- BED: provides valence and arousal of people watching images. It also includes electroencephalography (EEG) recordings of people exposed to various stimuli (SSVEP, resting with eyes closed, resting with eyes open, cognitive tasks) for the task of EEG-based biometrics.[32]
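Several of the datasets above use dimensional annotations (valence, arousal, dominance) rather than discrete emotion labels. A common simplification is to reduce valence and arousal ratings to four coarse quadrants. The sketch below assumes a 1 to 9 rating scale with a midpoint of 5 and ignores dominance; the scale, the midpoint, and the quadrant names are assumptions and should be checked against the documentation of whichever dataset is used.

```python
def va_quadrant(valence, arousal, midpoint=5.0):
    """Map a (valence, arousal) rating to one of four coarse quadrants."""
    high_valence = valence >= midpoint
    high_arousal = arousal >= midpoint
    if high_valence and high_arousal:
        return "HVHA"  # high valence, high arousal (for example, excitement)
    if high_valence:
        return "HVLA"  # high valence, low arousal (for example, calm)
    if high_arousal:
        return "LVHA"  # low valence, high arousal (for example, distress)
    return "LVLA"      # low valence, low arousal (for example, gloom)


# Hypothetical self-assessment ratings for four film clips.
ratings = [(7.5, 8.0), (8.0, 2.5), (2.0, 7.0), (3.0, 3.0)]
quadrants = [va_quadrant(v, a) for v, a in ratings]
```

Thresholding at a fixed midpoint discards information, so it is best treated as a convenience for turning continuous ratings into a classification task.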

Applications

Emotion recognition is used in society for a variety of reasons. Affectiva, which spun out of MIT, provides artificial intelligence software that makes it more efficient to do tasks previously done manually by people, mainly to gather facial expression and vocal expression information related to specific contexts where viewers have consented to share this information. For example, instead of filling out a lengthy survey about how you feel at each point watching an educational video or advertisement, you can consent to have a camera watch your face and listen to what you say, and note during which parts of the experience you show expressions such as boredom, interest, confusion, or smiling. (Note that this does not imply it is reading your innermost feelings; it only reads what you express outwardly.) Other uses by Affectiva include helping children with autism, helping people who are blind to read facial expressions, helping robots interact more intelligently with people, and monitoring signs of attention while driving in an effort to enhance driver safety.[33]

Academic research increasingly uses emotion recognition as a method to study social science questions around elections, protests, and democracy. Several studies focus on the facial expressions of political candidates on social media and find that politicians tend to express happiness.[34][35][36] However, this research finds that computer vision tools such as Amazon Rekognition are only accurate for happiness and are mostly reliable as 'happy detectors'.[37] Researchers examining protests, where negative affect such as anger is expected, have therefore developed their own models to more accurately study expressions of negativity and violence in democratic processes.[38]

A patent filed by Snapchat in 2015 describes a method of extracting data about crowds at public events by performing algorithmic emotion recognition on users' geotagged selfies.[39]

Emotient was a startup company that applied artificial intelligence to reading frowns, smiles, and other expressions on faces in order to predict "attitudes and actions based on facial expressions".[40] Apple bought Emotient in 2016 and uses emotion recognition technology to enhance the emotional intelligence of its products.[40]

nViso provides emotion recognition for web and mobile applications through a real-time API.[41] Visage Technologies AB offers emotion estimation as a part of their Visage SDK for marketing, scientific research, and similar purposes.[42]

Eyeris is an emotion recognition company that works with embedded system manufacturers including car makers and social robotic companies on integrating its face analytics and emotion recognition software; as well as with video content creators to help them measure the perceived effectiveness of their short and long form video creative.[43][44]

Many products also exist to aggregate information from emotions communicated online, including via "like" button presses and via counts of positive and negative phrases in text. Affect recognition is also increasingly used in some kinds of games and virtual reality, both for educational purposes and to give players more natural control over their social avatars.[citation needed]

Subfields

Emotion recognition is likely to achieve the best results when it combines multiple modalities, including text (conversation), audio, video, and physiology.

Emotion recognition in text

Text data is a favorable research object for emotion recognition because it is freely and widely available. Compared to other types of data, text is lighter to store and compresses well, owing to the frequent repetition of words and characters in languages. Emotions can be extracted from two essential text forms: written texts and conversations (dialogues).[45] For written texts, many scholars work at the sentence level to extract words and phrases that represent emotions.[46][47]

Emotion recognition in audio

In contrast to emotion recognition in text, audio-based recognition extracts emotions from vocal signals.[48] Unlike images and videos, which are typically two-dimensional or three-dimensional data capturing spatial or spatio-temporal features, audio is inherently one-dimensional time-series data that represents variations in sound amplitude over time. This fundamental difference makes emotion recognition from audio unique. Instead of relying on visual cues or textual semantics, audio-based emotion detection focuses on prosodic and acoustic features such as pitch, intensity, speech rate, and voice quality.[49]
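To show what frame-level acoustic features look like on a one-dimensional signal, the sketch below computes root-mean-square (RMS) energy, a simple measure of intensity, and zero-crossing rate, which is only a crude stand-in for frequency content and not a real pitch tracker. The synthetic sine waves, the sample rate, and the frame sizes are assumptions chosen for illustration.

```python
import math


def frame_features(samples, frame_size, hop):
    """Compute RMS energy and zero-crossing rate for each frame of a 1-D signal."""
    features = []
    for start in range(0, len(samples) - frame_size + 1, hop):
        frame = samples[start:start + frame_size]
        rms = math.sqrt(sum(x * x for x in frame) / frame_size)
        # Count sign changes between consecutive samples.
        crossings = sum(1 for a, b in zip(frame, frame[1:]) if (a >= 0) != (b >= 0))
        features.append((rms, crossings / (frame_size - 1)))
    return features


# Synthetic signal: a quiet low tone followed by a loud high tone.
sample_rate = 8000
quiet_low = [0.1 * math.sin(2 * math.pi * 100 * n / sample_rate) for n in range(800)]
loud_high = [0.8 * math.sin(2 * math.pi * 400 * n / sample_rate) for n in range(800)]

feats = frame_features(quiet_low + loud_high, frame_size=400, hop=400)
```

The frames taken from the louder, higher tone show both larger RMS energy and a higher zero-crossing rate than the frames from the quiet, low tone. Feature vectors of this kind are what a downstream classifier would consume.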

Emotion recognition in video

Video data is a combination of audio data, image data, and sometimes text (in the case of subtitles).[50]

Emotion recognition in conversation

Emotion recognition in conversation (ERC) extracts opinions between participants from massive conversational data on social platforms such as Facebook, Twitter, YouTube, and others.[29] ERC can take text, audio, video, or a combination of these as input to detect several emotions such as fear, lust, pain, and pleasure.

References

- ^ Miyakoshi, Yoshihiro, and Shohei Kato. "Facial Emotion Detection Considering Partial Occlusion Of Face Using Bayesian Network". Computers and Informatics (2011): 96–101.

- ^ Hari Krishna Vydana, P. Phani Kumar, K. Sri Rama Krishna and Anil Kumar Vuppala. "Improved emotion recognition using GMM-UBMs". 2015 International Conference on Signal Processing and Communication Engineering Systems

- ^ B. Schuller, G. Rigoll, M. Lang. "Hidden Markov model-based speech emotion recognition". ICME '03. Proceedings. 2003 International Conference on Multimedia and Expo, 2003.

- ^ Singh, Premjeet; Saha, Goutam; Sahidullah, Md (2021). "Non-linear frequency warping using constant-Q transformation for speech emotion recognition". 2021 International Conference on Computer Communication and Informatics (ICCCI). pp. 1–4. arXiv:2102.04029. doi:10.1109/ICCCI50826.2021.9402569. ISBN 978-1-7281-5875-4. S2CID 231846518.

- ^ Poria, Soujanya; Cambria, Erik; Bajpai, Rajiv; Hussain, Amir (September 2017). "A review of affective computing: From unimodal analysis to multimodal fusion". Information Fusion. 37: 98–125. doi:10.1016/j.inffus.2017.02.003. hdl:1893/25490. S2CID 205433041.

- ^ Caridakis, George; Castellano, Ginevra; Kessous, Loic; Raouzaiou, Amaryllis; Malatesta, Lori; Asteriadis, Stelios; Karpouzis, Kostas (19 September 2007). "Multimodal emotion recognition from expressive faces, body gestures and speech". Artificial Intelligence and Innovations 2007: From Theory to Applications. IFIP the International Federation for Information Processing. Vol. 247. pp. 375–388. doi:10.1007/978-0-387-74161-1_41. ISBN 978-0-387-74160-4.

- ^ Price (23 August 2015). "Tapping Into The Emotional Internet". TechCrunch. Retrieved 12 December 2018.

- ^ a b c d e f Cambria, Erik (March 2016). "Affective Computing and Sentiment Analysis". IEEE Intelligent Systems. 31 (2): 102–107. Bibcode:2016IISys..31b.102.. doi:10.1109/MIS.2016.31. S2CID 18580557.

- ^ Taboada, Maite; Brooke, Julian; Tofiloski, Milan; Voll, Kimberly; Stede, Manfred (June 2011). "Lexicon-Based Methods for Sentiment Analysis". Computational Linguistics. 37 (2): 267–307. doi:10.1162/coli_a_00049. ISSN 0891-2017.

- ^ Cambria, Erik; Liu, Qian; Decherchi, Sergio; Xing, Frank; Kwok, Kenneth (2022). "SenticNet 7: A Commonsense-based Neurosymbolic AI Framework for Explainable Sentiment Analysis" (PDF). Proceedings of LREC. pp. 3829–3839.

- ^ Balahur, Alexandra; Hermida, JesúS M; Montoyo, AndréS (1 November 2012). "Detecting implicit expressions of emotion in text: A comparative analysis". Decision Support Systems. 53 (4): 742–753. doi:10.1016/j.dss.2012.05.024. ISSN 0167-9236.

- ^ a b c Medhat, Walaa; Hassan, Ahmed; Korashy, Hoda (December 2014). "Sentiment analysis algorithms and applications: A survey". Ain Shams Engineering Journal. 5 (4): 1093–1113. doi:10.1016/j.asej.2014.04.011.

- ^ a b c Madhoushi, Zohreh; Hamdan, Abdul Razak; Zainudin, Suhaila (2015). "Sentiment analysis techniques in recent works". 2015 Science and Information Conference (SAI). pp. 288–291. doi:10.1109/SAI.2015.7237157. ISBN 978-1-4799-8547-0. S2CID 14821209.

- ^ Hemmatian, Fatemeh; Sohrabi, Mohammad Karim (18 December 2017). "A survey on classification techniques for opinion mining and sentiment analysis". Artificial Intelligence Review. 52 (3): 1495–1545. doi:10.1007/s10462-017-9599-6. S2CID 11741285.

- ^ a b c Sun, Shiliang; Luo, Chen; Chen, Junyu (July 2017). "A review of natural language processing techniques for opinion mining systems". Information Fusion. 36: 10–25. doi:10.1016/j.inffus.2016.10.004.

- ^ Majumder, Navonil; Poria, Soujanya; Gelbukh, Alexander; Cambria, Erik (March 2017). "Deep Learning-Based Document Modeling for Personality Detection from Text". IEEE Intelligent Systems. 32 (2): 74–79. Bibcode:2017IISys..32b..74M. doi:10.1109/MIS.2017.23. S2CID 206468984.

- ^ Mahendhiran, P. D.; Kannimuthu, S. (May 2018). "Deep Learning Techniques for Polarity Classification in Multimodal Sentiment Analysis". International Journal of Information Technology & Decision Making. 17 (3): 883–910. doi:10.1142/S0219622018500128.

- ^ Yu, Hongliang; Gui, Liangke; Madaio, Michael; Ogan, Amy; Cassell, Justine; Morency, Louis-Philippe (23 October 2017). "Temporally Selective Attention Model for Social and Affective State Recognition in Multimedia Content". Proceedings of the 25th ACM international conference on Multimedia. MM '17. ACM. pp. 1743–1751. doi:10.1145/3123266.3123413. ISBN 9781450349062. S2CID 3148578.

- ^ Cambria, Erik; Hussain, Amir (2015). Sentic Computing: A Common-Sense-Based Framework for Concept-Level Sentiment Analysis. Springer Publishing Company, Incorporated. ISBN 978-3319236537.

- ^ Araújo, Matheus; Gonçalves, Pollyanna; Cha, Meeyoung; Benevenuto, Fabrício (7 April 2014). "IFeel: A system that compares and combines sentiment analysis methods". Proceedings of the 23rd International Conference on World Wide Web. WWW '14 Companion. ACM. pp. 75–78. doi:10.1145/2567948.2577013. ISBN 9781450327459. S2CID 11018367.

- ^ Paolo Petta; Catherine Pelachaud; Roddy Cowie, eds. (2011). Emotion-oriented systems the humaine handbook. Berlin: Springer. ISBN 978-3-642-15184-2.

- ^ Douglas-Cowie, Ellen; Campbell, Nick; Cowie, Roddy; Roach, Peter (1 April 2003). "Emotional speech: towards a new generation of databases". Speech Communication. 40 (1–2): 33–60. CiteSeerX 10.1.1.128.3991. doi:10.1016/S0167-6393(02)00070-5. ISSN 0167-6393. S2CID 6421586.

- ^ McKeown, G.; Valstar, M.; Cowie, R.; Pantic, M.; Schroder, M. (January 2012). "The SEMAINE Database: Annotated Multimodal Records of Emotionally Colored Conversations between a Person and a Limited Agent". IEEE Transactions on Affective Computing. 3 (1): 5–17. Bibcode:2012ITAfC...3....5M. doi:10.1109/T-AFFC.2011.20. S2CID 2995377.

- ^ Busso, Carlos; Bulut, Murtaza; Lee, Chi-Chun; Kazemzadeh, Abe; Mower, Emily; Kim, Samuel; Chang, Jeannette N.; Lee, Sungbok; Narayanan, Shrikanth S. (5 November 2008). "IEMOCAP: interactive emotional dyadic motion capture database". Language Resources and Evaluation. 42 (4): 335–359. doi:10.1007/s10579-008-9076-6. ISSN 1574-020X. S2CID 11820063.

- ^ Martin, O.; Kotsia, I.; Macq, B.; Pitas, I. (3 April 2006). "The eNTERFACE'05 Audio-Visual Emotion Database". 22nd International Conference on Data Engineering Workshops (ICDEW'06). Icdew '06. IEEE Computer Society. pp. 8–. doi:10.1109/ICDEW.2006.145. ISBN 9780769525716. S2CID 16185196.

- ^ Koelstra, Sander; Muhl, Christian; Soleymani, Mohammad; Lee, Jong-Seok; Yazdani, Ashkan; Ebrahimi, Touradj; Pun, Thierry; Nijholt, Anton; Patras, Ioannis (January 2012). "DEAP: A Database for Emotion Analysis Using Physiological Signals". IEEE Transactions on Affective Computing. 3 (1): 18–31. Bibcode:2012ITAfC...3...18K. CiteSeerX 10.1.1.593.8470. doi:10.1109/T-AFFC.2011.15. ISSN 1949-3045. S2CID 206597685.

- ^ Katsigiannis, Stamos; Ramzan, Naeem (January 2018). "DREAMER: A Database for Emotion Recognition Through EEG and ECG Signals From Wireless Low-cost Off-the-Shelf Devices" (PDF). IEEE Journal of Biomedical and Health Informatics. 22 (1): 98–107. Bibcode:2018IJBHI..22...98K. doi:10.1109/JBHI.2017.2688239. ISSN 2168-2194. PMID 28368836. S2CID 23477696. Archived from the original (PDF) on 1 November 2022. Retrieved 1 October 2019.

- ^ Poria, Soujanya; Hazarika, Devamanyu; Majumder, Navonil; Naik, Gautam; Cambria, Erik; Mihalcea, Rada (2019). "MELD: A Multimodal Multi-Party Dataset for Emotion Recognition in Conversations". Proceedings of the 57th Annual Meeting of the Association for Computational Linguistics. Stroudsburg, PA, USA: Association for Computational Linguistics: 527–536. arXiv:1810.02508. doi:10.18653/v1/p19-1050. S2CID 52932143.

- ^ a b Poria, S., Majumder, N., Mihalcea, R., & Hovy, E. (2019). Emotion recognition in conversation: Research challenges, datasets, and recent advances. IEEE Access, 7, 100943-100953.

- ^ Stappen, Lukas; Schuller, Björn; Lefter, Iulia; Cambria, Erik; Kompatsiaris, Ioannis (2020). "Summary of MuSe 2020: Multimodal Sentiment Analysis, Emotion-target Engagement and Trustworthiness Detection in Real-life Media". Proceedings of the 28th ACM International Conference on Multimedia. Seattle, WA, USA: Association for Computing Machinery. pp. 4769–4770. arXiv:2004.14858. doi:10.1145/3394171.3421901. ISBN 9781450379885. S2CID 222278714.

- ^ Ho, Vong (2020). "Emotion Recognition for Vietnamese Social Media Text". Computational Linguistics. Communications in Computer and Information Science. Vol. 1215. pp. 319–333. arXiv:1911.09339. doi:10.1007/978-981-15-6168-9_27. ISBN 978-981-15-6167-2. S2CID 208202333.

- ^ Arnau-González, Pablo; Katsigiannis, Stamos; Arevalillo-Herráez, Miguel; Ramzan, Naeem (February 2021). "BED: A new dataset for EEG-based biometrics". IEEE Internet of Things Journal. (Early Access) (15): 12219. Bibcode:2021IITJ....812219A. doi:10.1109/JIOT.2021.3061727. ISSN 2327-4662. S2CID 233916681.

- ^ "Affectiva".

- ^ Bossetta, Michael; Schmøkel, Rasmus (2 January 2023). "Cross-Platform Emotions and Audience Engagement in Social Media Political Campaigning: Comparing Candidates' Facebook and Instagram Images in the 2020 US Election". Political Communication. 40 (1): 48–68. doi:10.1080/10584609.2022.2128949. ISSN 1058-4609.

- ^ Peng, Yilang (January 2021). "What Makes Politicians' Instagram Posts Popular? Analyzing Social Media Strategies of Candidates and Office Holders with Computer Vision". The International Journal of Press/Politics. 26 (1): 143–166. doi:10.1177/1940161220964769. ISSN 1940-1612. S2CID 225108765.

- ^ Haim, Mario; Jungblut, Marc (15 March 2021). "Politicians' Self-depiction and Their News Portrayal: Evidence from 28 Countries Using Visual Computational Analysis". Political Communication. 38 (1–2): 55–74. doi:10.1080/10584609.2020.1753869. ISSN 1058-4609. S2CID 219481457.

- ^ Bossetta, Michael; Schmøkel, Rasmus (2 January 2023). "Cross-Platform Emotions and Audience Engagement in Social Media Political Campaigning: Comparing Candidates' Facebook and Instagram Images in the 2020 US Election". Political Communication. 40 (1): 48–68. doi:10.1080/10584609.2022.2128949. ISSN 1058-4609.

- ^ Won, Donghyeon; Steinert-Threlkeld, Zachary C.; Joo, Jungseock (19 October 2017). "Protest Activity Detection and Perceived Violence Estimation from Social Media Images". Proceedings of the 25th ACM international conference on Multimedia. MM '17. New York, NY, USA: Association for Computing Machinery. pp. 786–794. arXiv:1709.06204. doi:10.1145/3123266.3123282. ISBN 978-1-4503-4906-2.

- ^ Bushwick, Sophie. "This Video Watches You Back". Scientific American. Retrieved 27 January 2020.

- ^ a b DeMuth Jr., Chris (8 January 2016). "Apple Reads Your Mind". M&A Daily. Seeking Alpha. Retrieved 9 January 2016.

- ^ "nViso". nViso.ch.

- ^ "Visage Technologies".

- ^ "Feeling sad, angry? Your future car will know".

- ^ Varagur, Krithika (22 March 2016). "Cars May Soon Warn Drivers Before They Nod Off". Huffington Post.

- ^ Shivhare, S. N., & Khethawat, S. (2012). Emotion detection from text. arXiv preprint arXiv:1205.4944

- ^ Ezhilarasi, R., & Minu, R. I. (2012). Automatic emotion recognition and classification. Procedia Engineering, 38, 21-26.

- ^ Krcadinac, U., Pasquier, P., Jovanovic, J., & Devedzic, V. (2013). Synesketch: An open source library for sentence-based emotion recognition. IEEE Transactions on Affective Computing, 4(3), 312-325.

- ^ Schmitt, M., Ringeval, F., & Schuller, B. W. (2016, September). At the Border of Acoustics and Linguistics: Bag-of-Audio-Words for the Recognition of Emotions in Speech. In Interspeech (pp. 495-499).

- ^ Jose, Jiby Mariya; Jeeva, Jose (June 2023). "Energy-Reduced Bio-Inspired 1D-CNN for Audio Emotion Recognition". International Journal of Scientific Research in Computer Science, Engineering and Information Technology. 11 (3): 1034–1054. doi:10.32628/CSEIT25113386. Retrieved 24 July 2025.

- ^ Dhall, A., Goecke, R., Lucey, S., & Gedeon, T. (2012). Collecting large, richly annotated facial-expression databases from movies. IEEE multimedia, (3), 34-41.

Emotion recognition

Conceptual Foundations

Definition and Historical Context

Emotion recognition is the process of identifying and interpreting emotional states in others through the analysis of multimodal cues, including facial expressions, vocal intonation, gestures, and physiological responses. This capability enables social coordination, empathy, and adaptive behavior, with empirical evidence indicating that humans reliably detect discrete basic emotions (such as joy, anger, fear, sadness, disgust, and surprise) under controlled conditions, achieving recognition accuracies often exceeding 70% in cross-cultural experiments.[9][10] Recognition accuracy varies by modality and context, declining for ambiguous or culturally modulated expressions, but core mechanisms appear rooted in evolved neural pathways rather than solely learned associations.[1]

The historical foundations of emotion recognition research originated with Charles Darwin's 1872 treatise The Expression of the Emotions in Man and Animals, which posited that emotional displays are innate, biologically adaptive signals shared across species, serving functions like threat signaling or affiliation. Darwin gathered evidence through direct observations of infants and animals, photographic documentation of expressions, and questionnaires sent to missionaries and travelers in remote regions, revealing consistent interpretations of expressions like smiling for happiness or frowning for displeasure across diverse populations. His work emphasized serviceable habits (instinctive actions retained from evolutionary utility) and antithesis, where opposite emotions produce contrasting expressions, laying empirical groundwork that anticipated modern evolutionary psychology.[11][12]

Mid-20th-century behaviorism marginalized emotional study by prioritizing observable stimuli over internal states, but revival occurred through Silvan Tomkins' 1962-1991 affect theory, which framed emotions as hardwired amplifiers of drives, and Paul Ekman's systematic investigations starting in the 1960s. Ekman's cross-cultural fieldwork, including experiments with the isolated South Fore people in Papua New Guinea in 1967-1968, demonstrated agreement rates above chance (often 80-90%) for eliciting and recognizing basic facial expressions, refuting strong cultural relativism claims dominant in mid-century anthropology. These findings, replicated in over 20 subsequent studies across illiterate and urban groups, established facial action coding systems like the Facial Action Coding System (FACS) developed by Ekman and Friesen in 1978, which dissect expressions into anatomically precise muscle movements (action units).[10][13]

While constructivist perspectives in psychology, emphasizing appraisal and cultural construction over discrete universals, gained traction amid institutional shifts toward relativism, they often underweight replicable perceptual data from non-Western samples; empirical syntheses affirm that biological universals underpin recognition, modulated but not wholly determined by culture or context. This historical progression from Darwin's naturalism to Ekman's experimental rigor shifted emotion recognition from speculative philosophy to a verifiable science, influencing fields from clinical assessment to machine learning despite persistent debates over innateness.[14][11]

Major Theories of Emotion

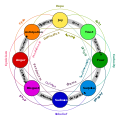

Charles Darwin's evolutionary theory, outlined in The Expression of the Emotions in Man and Animals (1872), proposes that emotions and their facial expressions evolved as adaptive mechanisms to enhance survival, signaling intentions and states to conspecifics, with evidence from cross-species similarities in displays like fear responses.[11] This framework underpins much of modern emotion recognition by emphasizing innate, universal expressive patterns, supported by subsequent cross-cultural studies validating recognition of basic expressions at above-chance levels.[11]

The James-Lange theory, articulated by William James (1884) and Carl Lange (1885), contends that emotional experiences result from awareness of bodily physiological changes, such as increased heart rate preceding the feeling of fear.[15] Experimental evidence includes manipulations of bodily signals, like holding a pencil in the teeth to simulate smiling, which elevate reported positive affect, suggesting peripheral feedback influences emotion.[15] However, autonomic patterns show limited specificity across emotions, challenging the theory's claim of distinct bodily signatures for each.[16]

In response, the Cannon-Bard theory (1927) argues that thalamic processing triggers simultaneous emotional experience and physiological response, independent of bodily feedback.[17] This addresses James-Lange shortcomings by noting identical autonomic arousal in diverse emotions, like fear and rage, but faces criticism for overemphasizing the thalamus while underplaying cortical integration and evidence of bodily influence on affect.[17][18]

The Schachter-Singer two-factor theory (1962) posits that undifferentiated physiological arousal requires cognitive labeling based on environmental cues to produce specific emotions.[19] Their epinephrine injection experiment aimed to demonstrate this via manipulated contexts eliciting euphoria or anger, yet data showed inconsistent labeling, with many participants not experiencing predicted shifts, and later analyses reveal methodological flaws undermining empirical support.[20]

Appraisal theories, notably Richard Lazarus's cognitive-motivational-relational model (1991), emphasize that emotions emerge from evaluations of events' relevance to personal goals, with primary appraisals assessing threat or benefit and secondary assessing coping potential.[21] Empirical validation includes studies linking specific appraisals, like goal obstruction to anger, to corresponding emotions, though cultural variations in appraisal patterns suggest incomplete universality.[21]

More recently, Lisa Feldman Barrett's theory of constructed emotion (2017) views emotions as predictive brain constructions from interoceptive signals, concepts, and context, rejecting innate "fingerprints" for basic emotions.[22] Neuroimaging shows distributed cortical activity rather than localized modules, but critics argue it dismisses cross-species and developmental evidence for core affective circuits, such as Panksepp's primal systems identified via deep brain stimulation in mammals.[22][23]

Robert Plutchik's psychoevolutionary model (1980) integrates discrete basic emotions (joy, trust, fear, surprise, sadness, disgust, anger, anticipation) arranged in a wheel denoting oppositions and dyads, with empirical backing from factor analyses of self-reports aligning with adaptive functions like protection and reproduction.[24] This contrasts constructionist views by positing evolved primaries, influencing recognition systems via categorical prototypes.

Human Emotion Recognition

Psychological Mechanisms

Humans recognize emotions in others through integrated perceptual, neural, and cognitive processes that decode cues from facial expressions, vocal prosody, body posture, and contextual information. These mechanisms enable rapid inference of affective states, supporting social interaction and adaptive behavior. Empirical studies indicate that recognition of basic emotions (such as happiness, sadness, anger, fear, surprise, and disgust) occurs with high accuracy, often exceeding 70% in controlled tasks, due to innate configural processing of facial features like eye and mouth movements.[25][26]

A core neural pathway involves subcortical routes for automatic detection, particularly for threat-related emotions. Visual input from the retina reaches the superior colliculus and pulvinar, bypassing primary cortical areas to activate the amygdala within 100-120 milliseconds, facilitating pre-conscious responses to fearful expressions even when masked from awareness.[27] This distributed network, including occipitotemporal cortex for feature extraction and orbitofrontal cortex for evaluation, processes emotions holistically rather than featurally, as evidenced by impaired recognition in prosopagnosia where face-specific deficits disrupt emotional decoding.[28][27]

Cognitive mechanisms overlay perceptual input with interpretive layers, including theory of mind (ToM), which infers mental states underlying expressed emotions. ToM deficits, as seen in autism spectrum disorders, correlate with reduced accuracy in recognizing subtle or context-dependent emotions, with mediation analyses showing ToM explaining up to 30% of variance in recognition performance beyond basic perception.[29][30] Appraisal processes further refine recognition by evaluating situational relevance, though these are slower and more variable across individuals.[31]

The mirror neuron system contributes to embodied simulation, where observed emotional expressions activate corresponding motor and affective representations, enhancing empathy and recognition of intentions. Neuroimaging reveals overlapping activations in inferior frontal gyrus and inferior parietal lobule during both execution and observation of emotional actions, supporting simulation-based understanding, though this mechanism's necessity remains debated as lesions in these areas impair but do not abolish recognition.[32][33]

Cultural modulation influences higher-level interpretation, with display rules altering expression intensity, yet core recognition of universals persists across societies, as confirmed in studies with preliterate Fore tribes achieving 80-90% agreement on basic emotion judgments.[34][2]

Empirical Capabilities and Limitations

Humans demonstrate moderate accuracy in recognizing basic emotions (typically anger, disgust, fear, happiness, sadness, and surprise) from static or posed facial expressions, with overall rates averaging 70-80% in controlled laboratory settings using prototypical stimuli. Happiness is recognized most reliably, often exceeding 90% accuracy, while fear and disgust show lower performance, around 50-70%, due to overlapping expressive features and subtlety. These figures derive from forced-choice tasks where participants select from predefined emotion labels, reflecting recognition above chance levels (16.7% for six categories) but highlighting variability across emotions.[35][36]

Cross-cultural studies support partial universality for basic facial signals, with recognition accuracies of 60-80% when Western participants judge non-Western faces or vice versa, though in-group cultural matching boosts performance by 10-20%. For instance, remote South Fore tribes in Papua New Guinea identified posed basic emotions from American photographs at rates comparable to Westerners, around 70%, suggesting innate perceptual mechanisms, yet accuracy declines for culturally specific displays or non-prototypical expressions. Individual factors modulate capability: higher empathy and fluid intelligence correlate positively with recognition accuracy (r ≈ 0.20-0.30), while aging impairs it, with older adults showing 10-15% deficits relative to younger ones across modalities.[37][38][39]

Key limitations arise from context independence in many paradigms; isolated facial cues yield accuracies dropping to 40-60% without situational information, as expressions are polysemous and modulated by surrounding events, gaze direction, or body posture. Spontaneous real-world expressions, unlike posed ones, exhibit greater variability and lower recognizability, with humans achieving only 50-65% accuracy for genuine micro-expressions or blended emotions, challenging assumptions of discrete, reliable signaling. Cultural divergences further constrain universality: East Asian displays emphasize context over facial extremity, leading to under-recognition by Western observers (e.g., 20-30% lower for surprise), while voluntary control allows deception, decoupling expressions from internal states in up to 70% of cases per lie detection studies. Multimodal integration, combining face with voice or gesture, elevates accuracy to 80-90%, underscoring facial-only recognition's inadequacy for causal inference about emotions.[40][41][42]

Automatic Emotion Recognition

Historical Milestones

The field of automatic emotion recognition began to formalize in the mid-1990s with the advent of affective computing, a discipline focused on enabling machines to detect, interpret, and respond to human emotions. In 1995, Rosalind Picard, a professor at MIT's Media Lab, introduced the concept in a foundational paper, emphasizing the need for computational systems to incorporate affective signals for more natural human-computer interaction.[43] This work built on psychological research, such as Paul Ekman's Facial Action Coding System (FACS) developed in the 1970s, which provided a framework for quantifying facial muscle movements associated with emotions and was later adapted for automated analysis.[44]

Early prototypes emerged shortly thereafter. In 1996, researchers demonstrated the first automatic speech emotion recognition system, using acoustic features like pitch and energy to classify emotions from voice samples.[45] Picard's 1997 book Affective Computing further solidified the theoretical groundwork, advocating for multimodal approaches integrating facial, vocal, and physiological data.[44] By 1998, IBM's BlueEyes project showcased preliminary emotion-sensing technology through eye-tracking and physiological monitoring, aiming to adjust computer interfaces based on user frustration or focus.[46]

The 2000s saw advancements in facial expression recognition driven by machine learning. Systems began employing computer vision techniques to detect action units from FACS in video footage, achieving initial accuracies for basic emotions like anger and happiness in controlled settings.[47] Commercialization accelerated in 2009 with the founding of Affectiva, co-founded by Picard and Rana el Kaliouby, which developed scalable emotion AI for analyzing real-time facial and voice data in applications like market research.[47] Subsequent milestones included the integration of deep learning in the 2010s, enabling higher precision across diverse populations despite challenges like cultural variations in expression.[48]

Core Methodological Approaches

Automatic emotion recognition systems typically follow a pipeline involving data acquisition from sensors, preprocessing to reduce noise and normalize inputs, feature extraction or representation learning, and classification or regression to infer emotional states. Early methodologies relied on handcrafted features, such as facial action units via landmark detection, mel-frequency cepstral coefficients (MFCCs) for speech prosody, or bag-of-words with TF-IDF for text, combined with traditional machine learning classifiers like support vector machines (SVM), random forests (RF), or k-nearest neighbors (KNN), achieving reported accuracies up to 96% on facial datasets but struggling with generalization across varied conditions.[49][50]

The dominance of deep learning since the 2010s has shifted paradigms toward end-to-end architectures that automate feature extraction, leveraging large labeled datasets for hierarchical representations. Convolutional neural networks (CNNs), such as VGG or ResNet variants, excel in spatial pattern recognition for visual modalities, with reported accuracies exceeding 99% on posed benchmark datasets such as CK+ by capturing micro-expressions and textures without manual engineering; results on in-the-wild sets such as FER2013 are markedly lower.[49][50] Recurrent neural networks (RNNs), particularly long short-term memory (LSTM) and gated recurrent unit (GRU) variants, handle sequential dependencies in audio or textual data, with hybrid CNN-LSTM models fusing spatial and temporal features to reach 95% accuracy in multimodal speech emotion recognition on datasets like IEMOCAP.[49][50] Transformer-based models, introduced around 2017 and refined in architectures like BERT or RoBERTa, have advanced contextual understanding through self-attention mechanisms, outperforming RNNs in text-based emotion detection with F1-scores up to 93% on social media corpora by modeling long-range dependencies and semantics.[50]

For multimodal integration, late fusion at the decision level or early feature-level concatenation via bilinear pooling enhances robustness, as seen in systems combining audiovisual cues to achieve 94-98% accuracy, though challenges persist in real-time deployment due to computational demands.[49] Generative adversarial networks (GANs) augment limited datasets by synthesizing emotional expressions, improving model generalization in underrepresented categories.[49] These approaches prioritize supervised learning on categorical (e.g., Ekman's six basic emotions) or dimensional (e.g., valence-arousal) models, evaluated via cross-validation with metrics like accuracy and F1-score, with ongoing emphasis on transfer learning to mitigate overfitting on small-scale data.[49][50]

Datasets and Evaluation
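
Accuracy and F1-score, the two metrics named above for evaluating categorical emotion classifiers, can diverge sharply when classes are imbalanced. The following is a minimal sketch in plain Python, not taken from any cited system: the helper names `accuracy` and `macro_f1` and the toy labels are illustrative assumptions. It shows a classifier that always predicts the majority emotion scoring well on accuracy while macro-averaged F1 exposes the missed minority class.

```python
def accuracy(y_true, y_pred):
    """Fraction of samples whose predicted label matches the reference label."""
    correct = sum(t == p for t, p in zip(y_true, y_pred))
    return correct / len(y_true)


def macro_f1(y_true, y_pred):
    """Unweighted mean of per-class F1 scores, so rare classes count equally."""
    labels = sorted(set(y_true) | set(y_pred))
    f1_scores = []
    for label in labels:
        pairs = list(zip(y_true, y_pred))
        tp = sum(t == label and p == label for t, p in pairs)  # true positives
        fp = sum(t != label and p == label for t, p in pairs)  # false positives
        fn = sum(t == label and p != label for t, p in pairs)  # false negatives
        denom = 2 * tp + fp + fn
        # F1 = 2TP / (2TP + FP + FN); defined as 0 when the class never appears.
        f1_scores.append(2 * tp / denom if denom else 0.0)
    return sum(f1_scores) / len(f1_scores)


# Hypothetical, deliberately imbalanced toy labels (not from any real dataset).
y_true = ["happy"] * 8 + ["disgust"] * 2
# A degenerate classifier that always predicts the majority class.
y_pred = ["happy"] * 10

acc = accuracy(y_true, y_pred)
f1 = macro_f1(y_true, y_pred)
print(f"accuracy={acc:.2f} macro_f1={f1:.2f}")  # accuracy=0.80 macro_f1=0.44
```

Macro averaging is only one possible choice; weighted or micro averaging would rank the same classifier differently, which is one reason to check which variant a reported score uses.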

Datasets for automatic emotion recognition primarily consist of annotated collections of facial videos, speech recordings, textual data, and physiological signals, often categorized by discrete emotions (e.g., anger, happiness) or continuous dimensions (e.g., valence-arousal). Facial datasets dominate due to accessibility, with the Extended Cohn-Kanade (CK+) providing 593 posed video sequences from 123 North American subjects depicting onset-to-apex transitions for seven expressions: anger, contempt, disgust, fear, happiness, sadness, and surprise.[51] The FER2013 dataset offers over 35,000 grayscale images scraped from the web, labeled for seven emotions, though it exhibits class imbalance and low resolution, limiting its utility for high-fidelity models.[52] In-the-wild datasets like AFEW (Acted Facial Expressions in the Wild) include 1,426 short video clips extracted from movies, covering six basic emotions plus neutral, introducing contextual variability but challenged by pose variations and partial occlusions.[53]

Speech emotion recognition datasets emphasize acoustic features, with IEMOCAP featuring approximately 12 hours of dyadic interactions from 10 English-speaking actors, annotated for four primary categorical emotions (angry, happy, sad, neutral) and dimensional attributes, blending scripted and improvised utterances for semi-natural expressiveness.[54] RAVDESS (Ryerson Audio-Visual Database of Emotional Speech and Song) contains 7,356 files from 24 Canadian actors performing eight emotions at varying intensities, primarily acted but including singing variants, with noted limitations in cultural homogeneity and elicitation naturalness.[55]

Multimodal datasets, such as CMU-MOSEI, integrate audio, video, and text from monologues by 1,000+ YouTube speakers, labeled for sentiment and six emotions, enabling fusion models but suffering from subjective annotations and domain-specific biases toward opinionated speech.[56] Overall, datasets often rely on laboratory-elicited or acted data, which underrepresent spontaneous real-world variability and demographic diversity, contributing to generalization failures in deployment.[53][57]

| Dataset | Modality | Emotions/Dimensions | Size | Key Limitations |
|---|---|---|---|---|
| CK+ | Facial video | 7 categorical | 593 sequences, 123 subjects | Posed expressions, lacks ecological validity[51] |
| FER2013 | Facial images | 7 categorical | 35,887 images | Imbalanced classes, low quality[52] |
| AFEW | Facial video | 7 categorical | 1,426 clips | Movie-sourced artifacts, alignment issues[53] |
| IEMOCAP | Speech (audio/video) | 4+ categorical, VAD | ~12 hours, 10 speakers | Small speaker pool, semi-acted[54] |
| RAVDESS | Speech (audio/video) | 8 categorical | 7,356 files, 24 actors | Acted, limited diversity[55] |