Rationing

Rationing is the controlled distribution of resources, goods, or services,[1] especially when they are scarce, or an artificial restriction of demand. Rationing controls the size of the ration, which is one's allowed portion of the resources being distributed on a particular day or at a particular time. There are many forms of rationing, although rationing by price is the most prevalent.[2]: 8–12

Rationing is often done to keep prices below the market-clearing price determined by the process of supply and demand in an unfettered market. Thus, rationing can be complementary to price controls. One example of rationing in the face of rising prices was the gasoline rationing introduced in various countries during the 1973 energy crisis.

A reason for setting the price lower than would clear the market may be that there is a shortage, which would drive the market price very high. High prices, especially for necessities, are undesirable for those who cannot afford them. However, economists point out that high prices act to reduce waste of the scarce resource, while also providing an incentive to produce more.

Rationing using ration stamps is only one kind of non-price rationing. Scarce products can also be rationed using queues, as at amusement parks, where visitors pay to get in and then need not pay anything to go on the rides. Similarly, in the absence of road pricing, access to roads is rationed by a first-come, first-served queueing process, which leads to congestion.

Authorities that introduce rationing often have to deal with the rationed goods being sold illegally on the black market. Although rationing is sometimes the only viable option for societies facing severe shortages of consumer goods, it is usually extremely unpopular with the general public, because it limits individual consumption.[3][4][5]

Civilian rationing

Rationing for civilians has most often been instituted during wartime. For example, each person may be given "ration coupons" which allow them to purchase a certain amount of a product each month. Rationing often includes food and other necessities for which there is a shortage, including materials needed for the war effort such as rubber tires, leather shoes, clothing, and fuel.

Rationing of food and water may also become necessary during an emergency, such as a natural disaster or terror attack.

In the U.S., the Federal Emergency Management Agency (FEMA) has established guidelines for rationing food and water when replacements are not available. According to FEMA standards, every person should have a minimum of 1 US quart (0.95 L) per day of water, and more for children, nursing mothers, and the ill.[6]

Origins

Military sieges have often resulted in shortages of food and other essentials. In such circumstances, the rations allocated to an individual have often been determined based on age, sex, race or social standing. During the Siege of Lucknow (part of the Indian Rebellion of 1857) women received three-quarters of a man's food ration. Children received only half.[7]: 71 During the Siege of Ladysmith in the early stages of the Boer War in 1900, white adults received the same food rations as soldiers while children received half that. Food rations for Indian people and black people were significantly smaller.[8]: 266–272

The first modern rationing systems were imposed during the First World War. In Germany, suffering from the effects of the British blockade, a rationing system was introduced in 1914 and was steadily expanded over the following years as the situation worsened.[9] Although Britain did not suffer from food shortages, as the sea lanes were kept open for food imports, panic buying towards the end of the war prompted the rationing of first sugar and then meat.[10] It is said to have benefited the overall health of the country,[11] through the "levelling of consumption of essential foodstuffs".[12] To assist with rationing, ration books were introduced on 15 July 1918 for butter, margarine, lard, meat, and sugar. During the war, average caloric intake decreased by only three percent, but protein intake by six percent.[11]

Food rationing appeared in Poland after the First World War, and ration stamps were in use until the end of the Polish–Soviet War.

Second World War

Rationing became common during the Second World War. Ration stamps were often used. These were redeemable stamps or coupons, and every family was issued a set number of each kind of stamp based on the size of the family, ages of children, and income. The British Ministry of Food refined the rationing process in the early 1940s to ensure the population did not starve when food imports were severely restricted and local production limited due to the large number of men fighting the war.[13]

Rationing on a scientific basis was pioneered by Elsie Widdowson and Robert McCance at the Department of Experimental Medicine, University of Cambridge. They worked on the chemical composition of the human body, and on the nutritional value of different flours used to make bread. Widdowson also studied the impact of infant diet on human growth. They studied the differing effects from deficiencies of salt and of water and produced the first tables to compare the nutritional contents of foods before and after cooking. They co-authored The Chemical Composition of Foods, first published in 1940 by the Medical Research Council.[14] Their book, "McCance and Widdowson", became known as the dietician's bible and formed the basis for modern nutritional thinking.[15]

In 1939, they tested whether the United Kingdom could survive with only domestic food production if U-boats ended all imports. Using 1938 food-production data, they fed themselves and other volunteers a limited diet, while simulating the strenuous wartime physical work Britons would likely have to perform. The scientists found that the subjects' health and performance remained very good after three months. They also led the first mandated addition of vitamins and minerals to food, beginning with adding calcium to bread. Their work became the basis of the wartime austerity diet promoted by the Minister of Food, Lord Woolton.[15]

The British public's wartime diet was never as severe as in the Cambridge study because German U-boats failed to halt trans-Atlantic supply,[16] but rationing improved the health of British people: infant mortality declined and life expectancy rose. This was because everyone had access to a varied diet with enough nutrients.[17][18]

The first commodity to be controlled was petrol. On 8 January 1940, bacon, butter and sugar were rationed. This was followed by successive rationing schemes for meat, tea, jam, biscuits, breakfast cereals, cheese, eggs, lard, milk, and canned and dried fruit. Fresh vegetables and fruit were not rationed, but supplies were limited. Many people grew their own vegetables, greatly encouraged by the highly successful "Dig for Victory" campaign.[19] Most controversial was bread; it was not rationed until after the war ended, but the "national loaf" of wholemeal bread replaced the ordinary white variety, to the distaste of most housewives, who found it mushy, grey, and easy to blame for digestive problems.[20] Fish was not rationed, but fish prices increased considerably as the war progressed.[21]

In May 1941, Woolton appealed to Americans to reduce consumption of certain foods (dairy, sugar, canned salmon and meat) so that more of those could go to the United Kingdom.[22] The Office of Price Administration (OPA) warned Americans of potential gasoline, steel, aluminum and electricity shortages.[23] It believed that with factories converting to military production and consuming many critical supplies, rationing would become necessary if the country entered the war. It established a rationing system after the attack on Pearl Harbor.[24] In June 1942 the Combined Food Board was set up to coordinate the worldwide supply of food to the Allies, with special attention to flows from the U.S. and Canada to Britain.

American civilians first received ration books—War Ration Book Number One, or the "Sugar Book"—on 4 May 1942,[25] through more than 100,000 school teachers, Parent-Teacher Associations, and other volunteers.[24] Sugar was the first consumer commodity rationed. Bakeries, ice cream makers, and other commercial users received rations of about 70% of normal usage.[25] Coffee was rationed on 27 November 1942 to 1 pound (0.45 kg) every five weeks.[26] By the end of 1942, ration coupons were used for nine other items.[24] Typewriters, gasoline, bicycles, footwear, silk, nylon, fuel oil, stoves, meat, lard, shortening and cooking oils, cheese, butter, margarine, processed foods (canned, bottled, and frozen), dried fruits, canned milk, firewood and coal, jams, jellies, and fruit butters were rationed by November 1943.[27]

The work of issuing ration books and exchanging used stamps for certificates was handled by some 5,500 local ration boards staffed mostly by volunteers. As a result of the gasoline rationing, all forms of automobile racing, including the Indianapolis 500, were banned.[28] All rationing in the United States ended in 1946.[29]

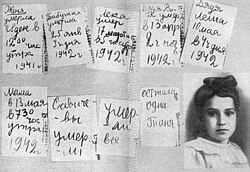

In the Soviet Union, food was rationed from 1941 to 1947. In besieged Leningrad in particular, daily bread rations were initially set at 800 grams (28 ounces). By the end of 1941 the bread rations had been reduced to 250 grams (8¾ ounces) for workers and 125 grams (4½ ounces) for everyone else, which resulted in a surge of deaths from starvation. Starting in 1942, daily bread rations were increased to 350 grams (12¼ ounces) for workers and 200 grams (7 ounces) for everyone else. One document of the period is the diary of Tanya Savicheva, who recorded the death of each member of her family during the siege.

Rationing was also introduced in a number of British dominions and colonies. Australia rationed clothing from 12 June 1942 and certain foodstuffs from 1943. Canada rationed tea, coffee, sugar, butter and mechanical spares between 1942 and 1947. The Cochin, Travancore and Madras states of British India elected to ration grain between the fall of 1943 and spring 1944. Egypt introduced a ration card-based subsidy of essential foodstuffs in 1945 that has persisted into the 21st century.[citation needed] Rationing in New Zealand began in 1942[30] and was abolished on most foods in 1948,[31] but continued on butter until 1950.[32]

Similarly, rationing was introduced across the Japanese empire, as commodities such as rice became scarce in its territories after the destruction of the transport infrastructure that had served the colonies.[33]

Many countries rationed gasoline, limiting how much could be put into a fuel tank depending on whether the driver was essential to the war effort.[citation needed]

Peacetime rationing

Civilian peacetime rationing of food has been employed after natural disasters, during contingencies, or after failed governmental economic policies regarding production or distribution, as well as due to extensive austerity programs implemented to cut or restrict public spending in countries where the rationed goods previously relied on government procurement or subsidies, as was the case in Israel.

In the United Kingdom, rationing remained for several years after the end of the war. Some aspects of rationing became stricter than they had been during the conflict: two major foodstuffs that were never rationed during the war, bread and potatoes, were rationed after it (bread from 1946 to 1948, and potatoes for a time from 1947). Tea remained rationed until 1952. In 1953 rationing of sugar and eggs ended, and in 1954 all other rationing was abolished when cheese and meats came off ration.[13] Sugar was rationed again in 1974 after Caribbean producers began selling to the more lucrative United States market.[34]

Some centrally planned economies introduced peacetime rationing systems due to food shortages in the postwar period. North Korea and China did so in the 1970s and 1980s, as did the Socialist Republic of Romania during Ceaușescu's rule in the 1980s, the Soviet Union in 1990–1991, and Cuba from 1962 to the present.[35]

From 1949 to 1959, Israel was under a regime of austerity, during which rationing was enforced. At first, only staple foods such as cooking oil, sugar, and margarine were rationed, but it was later expanded, and eventually included furniture and footwear. Every month, each citizen would get food coupons worth six Israeli pounds, and every family would be allotted food. The average Israeli diet was 2,800 calories a day, with additional calories for children, the elderly, and pregnant women.

Following the 1952 Reparations Agreement between Israel and West Germany, and the subsequent influx of foreign capital, Israel's economy was bolstered, and in 1953, most restrictions were cancelled. In 1958, the list of rationed goods was narrowed to just eleven, and in 1959, it was narrowed to only jam, sugar, and coffee.

Petroleum products were rationed in many countries following the 1973 oil crisis. The United States introduced odd–even rationing for fuels during the crisis, which allowed only vehicles with even-numbered numberplates to fill up on gas one day and odd-numbered ones on another.[36]
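
The odd–even rule amounts to a simple eligibility check. The sketch below is a minimal illustration, not a description of any particular state's regulations: it assumes that plates ending in an even digit may buy fuel on even-numbered days of the month and odd plates on odd-numbered days, reads the last digit on the plate, and treats plates with no digits as odd, which is a hypothetical choice.

```python
import datetime
from typing import Optional


def last_digit(plate: str) -> Optional[int]:
    """Return the last digit appearing on a plate, or None if it has none."""
    for ch in reversed(plate):
        if ch in "0123456789":
            return int(ch)
    return None


def may_buy_fuel(plate: str, day: datetime.date) -> bool:
    """Assumed odd-even rule: even plates on even-numbered dates, odd plates on odd dates.

    Plates with no digits are treated as odd; this is a hypothetical choice.
    """
    digit = last_digit(plate)
    plate_is_even = digit is not None and digit % 2 == 0
    date_is_even = day.day % 2 == 0
    return plate_is_even == date_is_even


print(may_buy_fuel("ABC 124", datetime.date(1974, 2, 4)))  # True
print(may_buy_fuel("ABC 123", datetime.date(1974, 2, 4)))  # False
```

Under this scheme each vehicle can refuel on roughly half the days of a month, which spreads demand at filling stations without setting any limit on the quantity bought.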

Poland enacted rationing in 1981 to cope with economic crisis. The rationing system initially encompassed most of the population's daily necessities, but was gradually phased out over time, with the last ration being abolished in 1989.[37]

Rationing in Cuba for basic goods was enacted in 1991 following the collapse of the Soviet Union, which had previously subsidised the island nation's economy. Rationing started being phased out in 2000 at the end of the "special period", as Cuba shifted to a more diversified and self-sustaining economy. Rationing, however, was not fully abolished; instead it became an alternative way to purchase goods alongside the markets. This was an unusual departure from classical rationing: during the 2001–2019 period, the rationing system supplemented regular markets rather than replacing them. Cubans could buy a set quantity of items at significantly reduced prices using ration coupons, while still being able to purchase more at regular market prices. This 'liberated' system persisted even during Cuba's period of economic growth and relative prosperity in the early and mid 2010s and enjoyed considerable popularity among the island's citizens. Cuba reintroduced a classical limiting rationing system in 2019, following the imposition of strict sanctions on the island by US President Donald Trump and the collapse of petroleum shipments from Venezuela, which was facing its own economic troubles at the time. Cuba's president presented the new system as significantly more lenient than the 1991–2000 "special period", while admitting that it would negatively affect consumption.[38][39][40][41]

Short-term rationing for gas and other fuels was introduced in the U.S. states of New Jersey and New York following Hurricane Sandy in 2012.[42]

In April 2019, Venezuela announced a 30-day electricity rationing regime in the face of power shortages.[43][44]

For a few years during a series of droughts in California (from 2015 to 2019), the California State Water Resources Control Board had mandatory water-use restrictions.[45][46][47]

In 2021, Sri Lanka, facing a major economic crisis, was considering introducing food rationing.[48][49][50][51] According to The Hindu, "President Gotabaya Rajapaksa has called in the army to manage the crisis by rationing the supply of various essential goods."[49]

In 2023, Iran began using the National Credit Network mechanism, which its Ministry of Labour described as an expansion of the country's coupon economy.[52]

As of 2024, peacetime rationing for basic foodstuffs and similar goods is in effect in Cuba and North Korea.[53]

Refugee aid rations

Aid agencies, such as the World Food Programme, provide food rations and other essentials to refugees or internally displaced persons who are registered with the UNHCR and are either living in refugee camps or are supported in urban centres. Every registered refugee is given a ration card upon registration which is used for collecting the rations from food distribution centres. The 2,100 calories allocated per person per day is based on minimal standards and is frequently not achieved, as has been the case in Kenya.[54][55]

According to Article 20 of the Convention Relating to the Status of Refugees, refugees shall be treated as national citizens in rationing schemes when there is a rationing system in place for the general population.

Other types

Health-care rationing

As the British Royal Commission on the National Health Service observed in 1979, "whatever the expenditure on health care, demand is likely to rise to meet and exceed it". Rationing health care to control costs is regarded[citation needed] as an explosive issue in the US, but in reality health care is rationed everywhere. Where a government provides health care, rationing is explicit. Elsewhere, people are denied treatment because they personally lack funds, or because of decisions made by insurance companies. The US Supreme Court approved paying doctors to ration care, stating that there must be "some incentive connecting physician reward with treatment rationing".[56] Shortages of donor organs force the rationing of organs for transplant even when funding is available.

Cultural rationing

Censorship, libraries and museums can function to limit, moderate and share out approved cultural goods, experiences and exposures to the general public.[57]

Credit rationing

Credit rationing describes a situation in which a bank limits the supply of loans even when it has enough funds to lend and the loans provided have not yet met the demand of prospective borrowers. Changing the price of loans (the interest rate) does not bring loan demand and supply into balance.[58]
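
A toy numerical example can show why the interest rate may fail to clear the loan market. Every function and number below is an illustrative assumption, not the model of the cited paper: the chance of repayment is assumed to fall as the rate rises (standing in for riskier borrowers being attracted), so the bank's expected return peaks at a moderate rate. At that rate demand exceeds what the bank lends, yet raising the rate to the level where demand equals the bank's funds would lower its expected return.

```python
# Toy model of credit rationing. All functional forms and numbers are
# illustrative assumptions, not estimates from the literature.

def repayment_probability(rate: float) -> float:
    """Assumed: higher rates attract riskier borrowers, so repayment becomes less likely."""
    return max(0.0, 1.0 - 10.0 * rate ** 2)


def expected_gross_return(rate: float) -> float:
    """Expected amount repaid to the bank per unit lent at this rate."""
    return (1.0 + rate) * repayment_probability(rate)


def loan_demand(rate: float) -> float:
    """Assumed downward-sloping demand for loans (arbitrary units)."""
    return max(0.0, 200.0 - 1000.0 * rate)


def bank_decision(funds: float) -> tuple[float, float, float]:
    """Choose the rate that maximises expected return, then lend at most `funds`."""
    candidate_rates = [i / 1000 for i in range(301)]  # 0.0% to 30.0% in 0.1-point steps
    best_rate = max(candidate_rates, key=expected_gross_return)
    demand = loan_demand(best_rate)
    return best_rate, demand, min(funds, demand)


funds = 100.0
rate, demand, lent = bank_decision(funds)
clearing_rate = 0.1  # where loan_demand(rate) == funds in this toy model
print(f"rate={rate:.3f} demand={demand:.1f} lent={lent:.1f} unmet={demand - lent:.1f}")
print(expected_gross_return(clearing_rate) < expected_gross_return(rate))  # True
```

In this sketch the bank settles near a 4.7% rate, some borrowers who would gladly pay more are turned away, and the unmet demand persists because a higher price would make lending less attractive to the bank.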

Carbon rationing

Personal carbon trading refers to proposed emissions trading schemes under which emissions credits are allocated to adult individuals on a (broadly) equal per capita basis, within national carbon budgets. Individuals then surrender these credits when buying fuel or electricity. Individuals wanting or needing to emit at a level above that permitted by their initial allocation would be able to engage in emissions trading and purchase additional credits. Conversely, individuals who emit at a level below that permitted by their initial allocation have the opportunity to sell their surplus credits. Thus, trading by individuals under personal carbon trading is similar to trading by companies under the EU Emissions Trading System (EU ETS).
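
The allocate, surrender and trade cycle described above can be sketched as a small ledger. This is a hypothetical design for illustration only: credits are counted in kilograms of CO2, the national budget is split equally among the listed adults, and the price at which credits change hands is left out.

```python
class PersonalCarbonLedger:
    """Hypothetical ledger for personal carbon trading (credits in kg CO2)."""

    def __init__(self, national_budget_kg: float, adults: list[str]) -> None:
        # Equal per-capita allocation of the national carbon budget.
        share = national_budget_kg / len(adults)
        self.balance = {name: share for name in adults}

    def surrender(self, person: str, emissions_kg: float) -> None:
        """Give up credits when buying fuel or electricity."""
        if self.balance[person] < emissions_kg:
            raise ValueError(f"{person} needs to buy more credits first")
        self.balance[person] -= emissions_kg

    def trade(self, seller: str, buyer: str, amount_kg: float) -> None:
        """Move surplus credits from a low emitter to a high emitter (price not modelled)."""
        if self.balance[seller] < amount_kg:
            raise ValueError(f"{seller} does not have {amount_kg} kg of credits to sell")
        self.balance[seller] -= amount_kg
        self.balance[buyer] += amount_kg


ledger = PersonalCarbonLedger(3000.0, ["ann", "bob", "cy"])  # 1000 kg each
ledger.trade(seller="bob", buyer="ann", amount_kg=400.0)     # bob emits little and sells surplus
ledger.surrender("ann", 1300.0)                             # ann's purchases exceed her own allocation
print(ledger.balance)  # {'ann': 100.0, 'bob': 600.0, 'cy': 1000.0}
```

Because trades only move credits between people, total emissions in this sketch can never exceed the national budget, however the credits end up being redistributed.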

Personal carbon trading is sometimes confused with carbon offsetting because both involve paying for emissions allowances, but it is quite a different concept: it is designed to be mandatory and to guarantee that nations achieve their domestic carbon emissions targets (rather than attempting to do so via international trading or offsetting).

Rationing mechanisms

The purpose of rationing is to guarantee a minimum of some resource or to impose a maximum limit on its use. (The latter is the case with carbon rationing, where the scarcity is artificial.) Usually, the government determines a fair ration, for example one proportional to the number of family members. If participants possess different rights to a portion (even when they have the same need) and there is not enough for everyone, then one of the many algorithms for solving the bankruptcy problem may apply.[59]
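
Two of the best-known rules for the bankruptcy problem are sketched below: the proportional rule, in which everyone receives the same fraction of their claim, and constrained equal awards, in which everyone receives the same amount unless their claim is smaller. They are chosen here only for illustration; the cited literature covers many other rules, and the claims and resource size in the example are hypothetical.

```python
def proportional_rule(estate: float, claims: list[float]) -> list[float]:
    """Every claimant receives the same fraction of their claim."""
    total = sum(claims)
    if total <= estate:
        return list(claims)  # no shortage: everyone is paid in full
    return [estate * c / total for c in claims]


def constrained_equal_awards(estate: float, claims: list[float]) -> list[float]:
    """Everyone receives min(claim, cap), with the cap set so awards sum to the estate."""
    if sum(claims) <= estate:
        return list(claims)
    awards = [0.0] * len(claims)
    remaining = estate
    # Serve the smallest claims first; each claimant gets either its full claim
    # or an equal share of what is left, whichever is smaller.
    for served, i in enumerate(sorted(range(len(claims)), key=lambda k: claims[k])):
        equal_share = remaining / (len(claims) - served)
        awards[i] = min(claims[i], equal_share)
        remaining -= awards[i]
    return awards


claims = [10.0, 40.0, 80.0]  # hypothetical rights to a resource of size 100
print(proportional_rule(100.0, claims))         # roughly [7.69, 30.77, 61.54]
print(constrained_equal_awards(100.0, claims))  # [10.0, 40.0, 50.0]
```

The two rules divide the same 100 units very differently: the proportional rule scales every claim down by the same factor, while constrained equal awards pays small claims in full and trims only the largest.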

Sweden (1919–1955), Finland (1944–1970) and Estonia (1 July 1920 to 31 December 1925) sought to limit the consumption of alcohol through rationing under the Bratt System. Each household was given a booklet (motbok in Sweden, viinakortti in Finland, tšekisüsteem in Estonia) in which a stamp was added after each purchase of alcoholic beverages, according to the amount of alcohol bought. A buyer who had reached the monthly ration had to wait until the next month to buy more.[60][61] The rations were based on gender, income, wealth and social status, and unemployed people and welfare recipients were not allowed to buy any alcohol at all. In addition, since the booklets were distributed per household rather than per person, wives had to share the household allowance with their husbands, and thus in practice got nothing at all.[citation needed] People often sought to circumvent the rationing by using the booklets of friends or even strangers, for example by treating a young woman to dinner in return for the alcohol being stamped in her booklet while the other party drank most or all of it. Alcohol rationing was eventually abolished in Sweden with the opening of state-owned Systembolaget liquor stores, where people could buy alcoholic beverages without limit.
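
The booklet's bookkeeping amounts to a per-household monthly cap. The sketch below is a hypothetical model rather than a description of any country's actual rules: the class name borrows the Swedish term motbok, quantities are measured in litres, and the monthly ration in the example is an invented figure (historically it varied with gender, income, wealth and social status).

```python
from dataclasses import dataclass, field


@dataclass
class Motbok:
    """Hypothetical household booklet under a Bratt-style monthly alcohol ration."""

    monthly_ration_litres: float
    # Litres already stamped, keyed by (year, month).
    stamps: dict[tuple[int, int], float] = field(default_factory=dict)

    def used(self, year: int, month: int) -> float:
        return self.stamps.get((year, month), 0.0)

    def buy(self, year: int, month: int, litres: float) -> bool:
        """Stamp the purchase if it fits within this month's ration; otherwise refuse it."""
        if self.used(year, month) + litres > self.monthly_ration_litres:
            return False  # the household must wait until next month
        self.stamps[(year, month)] = self.used(year, month) + litres
        return True


book = Motbok(monthly_ration_litres=3.0)  # invented figure
print(book.buy(1930, 5, 2.0))  # True: 2.0 of 3.0 litres used
print(book.buy(1930, 5, 1.5))  # False: would exceed the monthly ration
print(book.buy(1930, 6, 1.5))  # True: a new month starts a fresh ration
```

Keying stamps by household and month reflects the two features the text highlights: the allowance was shared within a household, and an exhausted ration could only be renewed by waiting for the next month.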

At other times, the ration can only be estimated by the beneficiary, such as a factory for which energy is to be rationed. In such cases, a mechanism is needed to discourage participants from misreporting their needs or wants; that is, the mechanism should be strategy-proof. Suppose every participant reports an ideal ration. Under so-called uniform rationing, each ration is set to the minimum of the participant's ideal ration and a cap, the cap being chosen so that the sum of the rations equals the amount available. Loosely speaking, then, the participant asking for the least is served first. This mechanism is strategy-proof, avoids unnecessary waste (Pareto optimality) and treats equals equally (anonymity). In fact, it is the only mechanism with all three properties.[62] (Anonymity in this statement can be replaced by envy-freeness.) For the redistribution of scarce goods to demanders by suppliers, see non-monetary microeconomies.
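
The uniform rule can be computed by searching numerically for the cap. The sketch below covers only the shortage case described in the text (reported ideals sum to at least the amount available) and finds the cap by bisection. It then runs a brute-force check that, in one small hypothetical example, no participant gets closer to their true ideal by misreporting. The check assumes single-peaked preferences, measured here as distance from the ideal, and illustrates strategy-proofness rather than proving it.

```python
def uniform_rule(amount: float, ideals: list[float], tol: float = 1e-9) -> list[float]:
    """Uniform rationing in a shortage: ration_i = min(ideal_i, cap), rations summing to `amount`.

    Only the shortage case is covered; the rule's surplus branch is not sketched here.
    """
    if sum(ideals) < amount:
        raise ValueError("no shortage: reported ideals sum to less than the amount available")
    lo, hi = 0.0, max(ideals)
    # Bisection on the cap: total rations never decrease as the cap rises.
    while hi - lo > tol:
        cap = (lo + hi) / 2
        if sum(min(p, cap) for p in ideals) > amount:
            hi = cap
        else:
            lo = cap
    return [min(p, lo) for p in ideals]


def gain_from_lying(amount: float, ideals: list[float], i: int, reports: list[float]) -> float:
    """How much closer to its true ideal participant i can get by misreporting.

    Assumes single-peaked preferences, measured as distance from the true ideal.
    """
    truthful_loss = abs(uniform_rule(amount, ideals)[i] - ideals[i])
    best_loss = truthful_loss
    for report in reports:
        reported = list(ideals)
        reported[i] = report
        if sum(reported) < amount:
            continue  # outside the shortage case handled above
        ration = uniform_rule(amount, reported)[i]
        best_loss = min(best_loss, abs(ration - ideals[i]))
    return truthful_loss - best_loss


ideals = [2.0, 4.0, 8.0]  # hypothetical reported needs; 10 units available
print([round(r, 6) for r in uniform_rule(10.0, ideals)])  # [2.0, 4.0, 4.0]
reports = [x / 2 for x in range(25)]  # try every report from 0 to 12 in steps of 0.5
print(max(gain_from_lying(10.0, ideals, i, reports) for i in range(3)) < 1e-6)  # True
```

In the example the cap settles at 4: the smallest request is met in full, and the participant wanting 8 cannot raise their ration by exaggerating, since any report above the cap is simply capped again.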

For smooth supply chain management, supplies may be rationed,[63] a practice sometimes referred to as the rationing game.[64] The references cited here are a small sample of the literature on rationing inventories.[65]
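
One common way to model the rationing game is to assume that a supplier short of capacity allocates stock in proportion to the quantities ordered. Under that assumption, made here for illustration along with all the numbers, a retailer can secure a larger share by inflating its order, which is one route by which shortages amplify swings in orders (the bullwhip effect).

```python
def proportional_allocation(capacity: float, orders: dict[str, float]) -> dict[str, float]:
    """Ship each retailer the same fraction of its order when capacity falls short."""
    total = sum(orders.values())
    if total <= capacity:
        return dict(orders)  # enough stock: every order is filled
    return {name: capacity * qty / total for name, qty in orders.items()}


capacity = 80.0  # hypothetical supplier capacity
honest = proportional_allocation(capacity, {"A": 60.0, "B": 60.0})
inflated = proportional_allocation(capacity, {"A": 120.0, "B": 60.0})  # A doubles its order
print(honest)    # {'A': 40.0, 'B': 40.0}
print(inflated)  # A gets about 53.3, B about 26.7
```

Since every retailer faces the same incentive, orders placed during a shortage can overstate real demand, and the supplier sees order swings larger than the underlying change in consumption.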

Ration stamp

A ration stamp, ration coupon, or ration card is a stamp or card issued by a government to allow the holder to obtain food or other commodities that are in short supply during wartime or in other emergencies when rationing is in force. Ration stamps were widely used during World War II by both sides after hostilities interrupted the normal supply of goods. They were also used after the end of the war while the economies of the belligerents gradually returned to normal. Ration stamps also limited the amount of food one person could hold at a time, so that no one would have more food than anyone else.

India

Rationing has been present in India since World War II. A ration card allows households to purchase highly subsidised food grain, sugar and kerosene from their local Public Distribution System (PDS) shop.

There are two types of ration cards:[66]

- Priority ration cards (which replaced the former above-poverty-line and below-poverty-line ration cards after the enactment of the National Food Security Act in 2013)

- Antyodaya (AAY) ration cards, issued to the "poorest of the poor"

United States

Rationing was used in the United States during World War II.

Government funds provided to poverty-stricken individuals through the Supplemental Nutrition Assistance Program are often colloquially called "food stamps". The parallels between these "food stamps" and wartime ration stamps are limited, however, since food can be bought on the regular market in the United States without stamps.

United Kingdom

Rationing was widespread in the United Kingdom during World War II and continued long after the end of the war. It has been credited with greatly improving public health. Fuel rationing did not end until 1950.[67]

Poland

Ration cards were used in the Polish People's Republic in two periods: April 1952 to January 1953 and August 1976 to July 1989.

Anyone who bought more food than the stamp allowed had to pay 2.5 times the price.
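
The surcharge works like a two-tier price. The sketch below assumes, since the text does not say, that only the quantity beyond the stamp's entitlement was charged at 2.5 times the price; the quantities and prices in the example are hypothetical.

```python
PENALTY_MULTIPLIER = 2.5  # stated in the text for purchases beyond the stamp


def purchase_cost(quantity: float, entitlement: float, unit_price: float) -> float:
    """Cost of buying `quantity` when `entitlement` units are covered by the stamp.

    Assumption: only the excess over the entitlement pays the 2.5x price.
    """
    within_ration = min(quantity, entitlement)
    excess = max(0.0, quantity - entitlement)
    return within_ration * unit_price + excess * unit_price * PENALTY_MULTIPLIER


# Hypothetical example: the stamp covers 2 kg at 10 units of currency per kg.
print(purchase_cost(1.0, 2.0, 10.0))  # 10.0
print(purchase_cost(3.0, 2.0, 10.0))  # 45.0 (2 kg at 10 plus 1 kg at 25)
```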

See also

- Basic income

- Colorado River Compact

- Food bank

- Food stamps

- Grain rationing in China

- Rationing in India

- 2007 Gas Rationing Plan in Iran

- Military rations

- Rationing in Nicaragua

- Rationing in the Soviet Union

- Rationing in the United Kingdom

- Rationing in the United States

- Road space rationing

- Salt lists

- Juntas de Abastecimientos y Precios, rationing in Chile under Allende

References

- ^ "Rationing". The New Encyclopaedia Britannica (15th ed.). 1994.

government policy consisting of the planned and restrictive allocation of scarce resources and consumer goods, usually practiced during times of war, famine or some other national emergency.

- ^ Cox, Stan (7 May 2013). Any Way You Slice It: The Past, Present, and Future of Rationing (Hardcover). New York: New Press. ISBN 978-1-59558-884-5. Retrieved 3 July 2021.

- ^ "Life on the Home Front; Rationing: A Necessary But Hated Sacrifice". Oregon Secretary of State. Retrieved 22 September 2019.

- ^ Charman, Terry (22 March 2018). "How the Ministry of Food managed food rationing in World War Two". Museum Crush. Retrieved 3 July 2021.

- ^ Williams, Zoe (24 December 2013). "Could rationing hold the key to today's food crises?". The Guardian. ISSN 0261-3077. Retrieved 22 September 2019.

- ^ "Food and Water in an Emergency" (PDF). FEMA.

- ^ Inglis, Julia Selina (1892). The Siege of Lucknow: A Diary. London: James R. Osgood, McIlvaine & Co.

- ^ Nevinson, Henry Wood (1900). Ladysmith: The Diary of a Siege. New Amsterdam Book Co.

- ^ Heyman, Neil M. (1997). World War I. Greenwood Publishing Group. p. 85. ISBN 978-0313298806.

- ^ Hurwitz, Samuel J. (1949). State Intervention in Great Britain: Study of Economic Control and Social Response, 1914–1919. Routledge. pp. 12–29. ISBN 978-1136931864.

- ^ a b Beckett, Ian F.W. (2007). The Great War (2 ed.). Longman. pp. 380–382. ISBN 978-1-4058-1252-8.

- ^ Beckett attributes this quotation (page 382) to Margaret Barnett, but does not give further details.

- ^ a b Nicol, Patricia (2010). Sucking Eggs. Vintage Books. ISBN 978-0099521129.

- ^ McCance, Robert Alexander; Widdowson, Elsie May (1940). The Chemical Composition of Foods. Medical Research Council (GB) Special Report Series, no. 235. London: H.M. Stationery Office. Retrieved 3 July 2021.

- ^ a b Elliott, Jane (25 March 2007). "Elsie – mother of the modern loaf". BBC News.

- ^ Dawes, Laura (24 September 2013). "Fighting fit: how dietitians tested if Britain would be starved into defeat". The Guardian. Retrieved 25 September 2013.

- ^ "Wartime Rationing helped the British get healthier than they had ever been". 21 June 2004. Retrieved 20 January 2013.

- ^ "History in Focus: War – Rationing in London WWII". Archived from the original on 3 March 2016. Retrieved 20 January 2013.

- ^ Regan, Geoffrey (1992). The Guinness Book of Military Anecdotes. Guinness Publishing. pp. 19–20. ISBN 0-85112-519-0.

- ^ Calder, Angus (1969). The People's War: Britain 1939–45. New York, Pantheon Books. pp. 276–277.

- ^ Ministry of Agriculture and Fisheries (1946). Fisheries in war time: report on the sea fisheries of England and Wales by the Ministry of Agriculture and Fisheries for the Years 1939–1944 inclusive. H.M. Stationery Office.

- ^ "Woolton Asks Sacrifices by U.S. To Build Food Surplus for Britain; Minister Urges Reduction in Use of Milk, Sugar, Cheese, Meat and Canned Salmon -Calls Situation Now 'Secure'". The New York Times. 31 May 1941. p. 4. Retrieved 14 August 2023.

- ^ ""Creamless Days?" / The Pinch". Life. 9 June 1941. p. 38. Retrieved 5 December 2012.

- ^ a b c Kennett 1985, pp. 133, 137–138.

- ^ a b "Sugar: U.S. consumers register for first ration books". Life. 11 May 1942. p. 19. Retrieved 17 November 2011.

- ^ "Coffee Rationing". Life. 30 November 1942. p. 64. Retrieved 23 November 2011.

- ^ "Rationed Goods in the USA During the Second World War". Ames Historical Society. Archived from the original on 10 October 2014.

- ^ "Historic Pittsburgh: Chronology". University of Pittsburgh.

- ^ "World War II Rationing". Online Highways.

- ^ "New Zealand Official Yearbook 1946".

- ^ "Rationing of New Zealand-Grown Foods | NZETC". nzetc.victoria.ac.nz. Retrieved 21 July 2022.

- ^ "Availability of Butter Coupons. Gisborne Herald". paperspast.natlib.govt.nz. 16 December 1949. Retrieved 21 July 2022.

- ^ "The Japanese occupation: Malayan economy before, during and after - Articles | Economic History Malaya". www.ehm.my. Retrieved 21 June 2024.

- ^ "Rationing starts as sugar shortage looms". The Guardian. 9 July 1974. p. 3.

- ^ "FE482/FE482: Overview of Cuba's Food Rationing System".

- ^ "Shortages: Gas Fever: Happiness Is a Full Tank". Time. 18 February 1974. ISSN 0040-781X. Retrieved 22 September 2019.

- ^ "Poland to End Rations And Food-Price Freeze". The New York Times. 31 July 1989. ISSN 0362-4331. Retrieved 22 September 2019.

- ^ Garth, Hanna (2009). "Things Became Scarce: Food Availability and Accessibility in Santiago de Cuba Then and Now". NAPA Bulletin. 32 (1): 178–192. doi:10.1111/j.1556-4797.2009.01034.x. ISSN 1556-4789.

- ^ Haven, Paul (7 November 2009). "Cuba cuts back on rationed products". Boston.com. Retrieved 22 September 2019.

- ^ "Cuba to widen food rationing as supply crisis bites". Deutsche Welle. 11 May 2019. Retrieved 22 September 2019.

- ^ "Cuba Rations Staple Foods and Soap in Face of Economic Crisis". The New York Times. Associated Press. 11 May 2019. ISSN 0362-4331. Retrieved 22 September 2019.

- ^ Hu, Winnie (18 November 2012). "New York City Decides to Extend Gas Rationing Through Friday". The New York Times. ISSN 0362-4331. Retrieved 22 September 2019.

- ^ "Venezuela's Maduro announces electricity rationing amid power cuts". South China Morning Post. 1 April 2019. Retrieved 22 September 2019.

- ^ IANS (1 April 2019). "Electricity rationing plan announced in Venezuela". Business Standard India. Retrieved 22 September 2019.

- ^ Nagourney, Adam (1 April 2015). "California Imposes First Mandatory Water Restrictions to Deal With Drought". The New York Times. ISSN 0362-4331. Retrieved 22 September 2019.

- ^ Koons, Stephanie (10 July 2019). "California's drought is over, but water conservation remains a 'way of life'". AccuWeather.

- ^ Romero, Ezra David (16 July 2021). "Drought-Stricken California Hasn't Mandated Statewide Water Restrictions. Here's Why". KQED.

- ^ Pandey, Samyak (5 September 2021). "How Sri Lanka's overnight flip to total organic farming has led to an economic disaster". ThePrint. Retrieved 6 September 2021.

- ^ a b Perumal, Prashanth (6 September 2021). "Explained - What caused the Sri Lankan economic crisis?". The Hindu. Retrieved 6 September 2021.

- ^ Jayasinghe, Amal (1 September 2021). "Sri Lanka organic revolution threatens tea disaster". Phys.org. Omicron Limited. Retrieved 6 September 2021.

- ^ "Sri Lanka walks back fertiliser ban over political fallout fears". France 24. 5 August 2021. Retrieved 6 September 2021.

- ^ "وزارت کار خبر داد: گسترش اقتصاد کوپنی در ایران" [The Ministry of Labor announced the expansion of the coupon economy in Iran]. Voice of America (in Persian). 27 June 2023.

- ^ "Cuba slashes size of daily bread ration as ingredients run thin". Reuters. 17 September 2024.

- ^ "FAQs | WFP | United Nations World Food Programme". Archived from the original on 18 August 2016. Retrieved 11 August 2016.

- ^ "WFP Forced To Reduce Food Rations To Refugees In Kenya". UN World Food Programme. 14 November 2014. Retrieved 3 July 2021.

- ^ "The Nation: The 'R' Word; Justice Souter Takes on a Health Care Taboo". The New York Times. 18 June 2000. Retrieved 19 May 2015.

- ^ Goldstone, Jack A., ed. (1994) [1986]. Revolutions: Theoretical, Comparative, and Historical Studies (2 ed.). Fort Worth: Harcourt Brace College Publishers. pp. 223–224. ISBN 9780155003859. Retrieved 12 December 2023.

[...] there is also cultural rationing, with strict official control over what is published and performed in China, so that, particularly in recent years, there is a very narrow range of cultural entertainment from which all strata of society have to pick.

- ^ Stiglitz, Joseph E.; Weiss, Andrew (1981). "Credit Rationing in Markets with Imperfect Information". The American Economic Review. 71 (3): 393–410. ISSN 0002-8282. JSTOR 1802787.

- ^ Moulin, Hervé (1 May 2000). "Priority Rules and Other Asymmetric Rationing Methods" (PDF). Econometrica. 68 (3): 643–684. doi:10.1111/1468-0262.00126. Archived from the original (PDF) on 18 September 2020. Retrieved 2 August 2020.

- ^ Bjorkman, Jenny (15 June 2018). "Motboken – hatad av många". Slakthistoria.se (in Swedish). Retrieved 29 March 2023.

- ^ "Alkoholikeeldudest Eestis". Postimees (in Estonian). 8 November 2008. Retrieved 27 March 2024.

- ^ Weymark, John A. (1999). "Sprumont's characterization of the uniform rule when all single-peaked preferences are admissible". Review of Economic Design. 4 (4). (January 2000): 389–393. doi:10.1007/s100580050044. S2CID 154970988.

- ^ Gilbert, Richard J.; Klemperer, Paul. "An equilibrium theory of rationing" (PDF). RAND Journal of Economics. 31: 1–21.

- ^ Rong, Ying; Snyder, Lawrence V.; Shen, Zuo-Jun Max (25 May 2017). "Bullwhip and Reverse Bullwhip Effects under the Rationing Game". SSRN. SSRN 1240173.

- ^ "Find Research Output". Eindhoven University of Technology. Retrieved 3 July 2021.[not specific enough to verify]

- ^ Government of India. "National Food Security Act (2013)" (PDF).

- ^ "1950: UK drivers cheer end of fuel rations". BBC. 26 May 1950. Retrieved 27 March 2009.

- Kennett, Lee (1985). For the duration...: the United States goes to war, Pearl Harbor – 1942. New York: Scribner. ISBN 0-684-18239-4 – via Internet Archive.

Further reading

- Allocation of Ventilators in an Influenza Pandemic, Report of New York State Task Force on Life and the Law, 2007.

- Matt Gouras. "Frist Defends Flu Shots for Congress." Associated Press. October 21, 2004.

- Elster, Jon, ed. (1995). Local justice in America. New York: Russell Sage Foundation. ISBN 978-0-87154-233-5. LCCN 94039623.

- Allen, Harold Don. Canada rationing, 1942-1947 : a numismatic record. OCLC 1007738043.

External links

- Are You Ready?: An In-depth Guide to Citizen Preparedness – FEMA

- short descriptions of World War I rationing – Spartacus Educational

- a short description of World War II rationing – Memories of the 1940s

- Ration Coupons on the Home Front, 1942–1945 – Duke University Libraries Digital Collections

- World War II Rationing on the U.S. homefront, illustrated – Ames Historical Society

- Links to 1940s newspaper clippings on rationing, primarily World War II War Ration Books – Genealogy Today

- Tax Rationing

- Recipe for Victory: Food and Cooking in Wartime[not specific enough to verify]

- war time rationing in UK