Regular number

View on Wikipedia

Regular numbers are numbers that evenly divide powers of 60 (or, equivalently, powers of 30). Equivalently, they are the numbers whose only prime divisors are 2, 3, and 5. As an example, 602 = 3600 = 48 × 75, so as divisors of a power of 60 both 48 and 75 are regular.

These numbers arise in several areas of mathematics and its applications, and have different names coming from their different areas of study.

- In number theory, these numbers are called 5-smooth, because they can be characterized as having only 2, 3, or 5 as their prime factors. This is a specific case of the more general k-smooth numbers, the numbers that have no prime factor greater than k.

- In the study of Babylonian mathematics, the divisors of powers of 60 are called regular numbers or regular sexagesimal numbers, and are of great importance in this area because of the sexagesimal (base 60) number system that the Babylonians used for writing their numbers, and that was central to Babylonian mathematics.

- In music theory, regular numbers occur in the ratios of tones in five-limit just intonation. In connection with music theory and related theories of architecture, these numbers have been called the harmonic whole numbers.

- In computer science, regular numbers are often called Hamming numbers, after Richard Hamming, who proposed the problem of finding computer algorithms for generating these numbers in ascending order. This problem has been used as a test case for functional programming.

Number theory

[edit]Formally, a regular number is an integer of the form , for nonnegative integers , , and . Such a number is a divisor of . The regular numbers are also called 5-smooth, indicating that their greatest prime factor is at most 5.[2] More generally, a k-smooth number is a number whose greatest prime factor is at most k.[3]

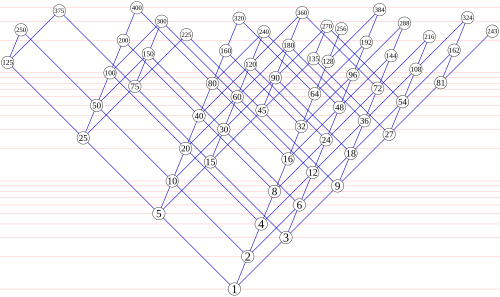

The first few regular numbers are[2]

Several other sequences at the On-Line Encyclopedia of Integer Sequences have definitions involving 5-smooth numbers.[4]

Although the regular numbers appear dense within the range from 1 to 60, they are quite sparse among the larger integers. A regular number is less than or equal to some threshold if and only if the point belongs to the tetrahedron bounded by the coordinate planes and the plane as can be seen by taking logarithms of both sides of the inequality . Therefore, the number of regular numbers that are at most can be estimated as the volume of this tetrahedron, which is Even more precisely, using big O notation, the number of regular numbers up to is and it has been conjectured that the error term of this approximation is actually .[2] A similar formula for the number of 3-smooth numbers up to is given by Srinivasa Ramanujan in his first letter to G. H. Hardy.[5]

Babylonian mathematics

[edit]

In the Babylonian sexagesimal notation, the reciprocal of a regular number has a finite representation. If divides , then the sexagesimal representation of is just that for , shifted by some number of places. This allows for easy division by these numbers: to divide by , multiply by , then shift.[6]

For instance, consider division by the regular number 54 = 2133. 54 is a divisor of 603, and 603/54 = 4000, so dividing by 54 in sexagesimal can be accomplished by multiplying by 4000 and shifting three places. In sexagesimal 4000 = 1×3600 + 6×60 + 40×1, or (as listed by Joyce) 1:6:40. Thus, 1/54, in sexagesimal, is 1/60 + 6/602 + 40/603, also denoted 1:6:40 as Babylonian notational conventions did not specify the power of the starting digit. Conversely 1/4000 = 54/603, so division by 1:6:40 = 4000 can be accomplished by instead multiplying by 54 and shifting three sexagesimal places.

The Babylonians used tables of reciprocals of regular numbers, some of which still survive.[7] These tables existed relatively unchanged throughout Babylonian times.[6] One tablet from Seleucid times, by someone named Inaqibıt-Anu, contains the reciprocals of 136 of the 231 six-place regular numbers whose first place is 1 or 2, listed in order. It also includes reciprocals of some numbers of more than six places, such as 323 (2 1 4 8 3 0 7 in sexagesimal), whose reciprocal has 17 sexagesimal digits. Noting the difficulty of both calculating these numbers and sorting them, Donald Knuth in 1972 hailed Inaqibıt-Anu as "the first man in history to solve a computational problem that takes longer than one second of time on a modern electronic computer!" (Two tables are also known giving approximations of reciprocals of non-regular numbers, one of which gives reciprocals for all the numbers from 56 to 80.)[8][9]

Although the primary reason for preferring regular numbers to other numbers involves the finiteness of their reciprocals, some Babylonian calculations other than reciprocals also involved regular numbers. For instance, tables of regular squares have been found[6] and the broken tablet Plimpton 322 has been interpreted by Neugebauer as listing Pythagorean triples generated by and both regular and less than 60.[10] Fowler and Robson discuss the calculation of square roots, such as how the Babylonians found an approximation to the square root of 2, perhaps using regular number approximations of fractions such as 17/12.[9]

Music theory

[edit]In music theory, the just intonation of the diatonic scale involves regular numbers: the pitches in a single octave of this scale have frequencies proportional to the numbers in the sequence 24, 27, 30, 32, 36, 40, 45, 48 of nearly consecutive regular numbers.[11] Thus, for an instrument with this tuning, all pitches are regular-number harmonics of a single fundamental frequency. This scale is called a 5-limit tuning, meaning that the interval between any two pitches can be described as a product 2i3j5k of powers of the prime numbers up to 5, or equivalently as a ratio of regular numbers.[12]

5-limit musical scales other than the familiar diatonic scale of Western music have also been used, both in traditional musics of other cultures and in modern experimental music: Honingh & Bod (2005) list 31 different 5-limit scales, drawn from a larger database of musical scales. Each of these 31 scales shares with diatonic just intonation the property that all intervals are ratios of regular numbers.[12] Euler's tonnetz provides a convenient graphical representation of the pitches in any 5-limit tuning, by factoring out the octave relationships (powers of two) so that the remaining values form a planar grid.[12] Some music theorists have stated more generally that regular numbers are fundamental to tonal music itself, and that pitch ratios based on primes larger than 5 cannot be consonant.[13] However the equal temperament of modern pianos is not a 5-limit tuning,[14] and some modern composers have experimented with tunings based on primes larger than five.[15]

In connection with the application of regular numbers to music theory, it is of interest to find pairs of regular numbers that differ by one. There are exactly ten such pairs and each such pair defines a superparticular ratio that is meaningful as a musical interval. These intervals are 2/1 (the octave), 3/2 (the perfect fifth), 4/3 (the perfect fourth), 5/4 (the just major third), 6/5 (the just minor third), 9/8 (the just major tone), 10/9 (the just minor tone), 16/15 (the just diatonic semitone), 25/24 (the just chromatic semitone), and 81/80 (the syntonic comma).[16]

In the Renaissance theory of universal harmony, musical ratios were used in other applications, including the architecture of buildings. In connection with the analysis of these shared musical and architectural ratios, for instance in the architecture of Palladio, the regular numbers have also been called the harmonic whole numbers.[17]

Algorithms

[edit]Algorithms for calculating the regular numbers in ascending order were popularized by Edsger Dijkstra. Dijkstra (1976, 1981) attributes to Hamming the problem of building the infinite ascending sequence of all 5-smooth numbers; this problem is now known as Hamming's problem, and the numbers so generated are also called the Hamming numbers. Dijkstra's ideas to compute these numbers are the following:

- The sequence of Hamming numbers begins with the number 1.

- The remaining values in the sequence are of the form , , and , where is any Hamming number.

- Therefore, the sequence may be generated by outputting the value 1, and then merging the sequences , , and .

This algorithm is often used to demonstrate the power of a lazy functional programming language, because (implicitly) concurrent efficient implementations, using a constant number of arithmetic operations per generated value, are easily constructed as described above. Similarly efficient strict functional or imperative sequential implementations are also possible whereas explicitly concurrent generative solutions might be non-trivial.[18]

In the Python programming language, lazy functional code for generating regular numbers is used as one of the built-in tests for correctness of the language's implementation.[19]

A related problem, discussed by Knuth (1972), is to list all -digit sexagesimal numbers in ascending order (see #Babylonian mathematics above). In algorithmic terms, this is equivalent to generating (in order) the subsequence of the infinite sequence of regular numbers, ranging from to .[8] See Gingerich (1965) for an early description of computer code that generates these numbers out of order and then sorts them;[20] Knuth describes an ad hoc algorithm, which he attributes to Bruins (1970), for generating the six-digit numbers more quickly but that does not generalize in a straightforward way to larger values of .[8] Eppstein (2007) describes an algorithm for computing tables of this type in linear time for arbitrary values of .[21]

Other applications

[edit]Heninger, Rains & Sloane (2006) show that, when is a regular number and is divisible by 8, the generating function of an -dimensional extremal even unimodular lattice is an th power of a polynomial.[22]

As with other classes of smooth numbers, regular numbers are important as problem sizes in computer programs for performing the fast Fourier transform, a technique for analyzing the dominant frequencies of signals in time-varying data. For instance, the method of Temperton (1992) requires that the transform length be a regular number.[23]

Book VIII of Plato's Republic involves an allegory of marriage centered on the highly regular number 604 = 12,960,000 and its divisors (see Plato's number). Later scholars have invoked both Babylonian mathematics and music theory in an attempt to explain this passage.[24]

Certain species of bamboo release large numbers of seeds in synchrony (a process called masting) at intervals that have been estimated as regular numbers of years, with different intervals for different species, including examples with intervals of 10, 15, 16, 30, 32, 48, 60, and 120 years.[25] It has been hypothesized that the biological mechanism for timing and synchronizing this process lends itself to smooth numbers, and in particular in this case to 5-smooth numbers. Although the estimated masting intervals for some other species of bamboo are not regular numbers of years, this may be explainable as measurement error.[25]

Notes

[edit]- ^ Inspired by similar diagrams by Erkki Kurenniemi in "Chords, scales, and divisor lattices".

- ^ a b c Sloane "A051037".

- ^ Pomerance (1995).

- ^ OEIS search for sequences involving 5-smoothness.

- ^ Berndt & Rankin (1995).

- ^ a b c Aaboe (1965).

- ^ Sachs (1947).

- ^ a b c Knuth (1972).

- ^ a b Fowler & Robson (1998).

- ^ See Conway & Guy (1996) for a popular treatment of this interpretation. Plimpton 322 has other interpretations, for which see its article, but all involve regular numbers.

- ^ Clarke (1877).

- ^ a b c Honingh & Bod (2005).

- ^ Asmussen (2001), for instance, states that "within any piece of tonal music" all intervals must be ratios of regular numbers, echoing similar statements by much earlier writers such as Habens (1889). In the modern music theory literature this assertion is often attributed to Longuet-Higgins (1962), who used a graphical arrangement closely related to the tonnetz to organize 5-limit pitches.

- ^ Kopiez (2003).

- ^ Wolf (2003).

- ^ Halsey & Hewitt (1972) note that this follows from Størmer's theorem (Størmer 1897), and provide a proof for this case; see also Silver (1971).

- ^ Howard & Longair (1982).

- ^ See, e.g., Hemmendinger (1988) or Yuen (1992).

- ^ Function m235 in test_generators.py.

- ^ Gingerich (1965).

- ^ Eppstein (2007).

- ^ Heninger, Rains & Sloane (2006).

- ^ Temperton (1992).

- ^ Barton (1908); McClain (1974).

- ^ a b Veller, Nowak & Davis (2015).

References

[edit]- Aaboe, Asger (1965), "Some Seleucid mathematical tables (extended reciprocals and squares of regular numbers)", Journal of Cuneiform Studies, 19 (3), The American Schools of Oriental Research: 79–86, doi:10.2307/1359089, JSTOR 1359089, MR 0191779, S2CID 164195082.

- Asmussen, Robert (2001), Periodicity of sinusoidal frequencies as a basis for the analysis of Baroque and Classical harmony: a computer based study (PDF), Ph.D. thesis, University of Leeds, archived from the original (PDF) on 2016-04-24, retrieved 2007-03-15.

- Barton, George A. (1908), "On the Babylonian origin of Plato's nuptial number", Journal of the American Oriental Society, 29, American Oriental Society: 210–219, doi:10.2307/592627, JSTOR 592627.

- Berndt, Bruce C.; Rankin, Robert Alexander, eds. (1995), Ramanujan: letters and commentary, History of mathematics, vol. 9, American Mathematical Society, p. 23, Bibcode:1995rlc..book.....B, ISBN 978-0-8218-0470-4.

- Bruins, E. M. (1970), "La construction de la grande table le valeurs réciproques AO 6456", in Finet, André (ed.), Actes de la XVIIe Rencontre Assyriologique Internationale, Comité belge de recherches en Mésopotamie, pp. 99–115.

- Clarke, A. R. (January 1877), "Just intonation", Nature, 15 (377): 253, Bibcode:1877Natur..15..253C, doi:10.1038/015253b0.

- Conway, John H.; Guy, Richard K. (1996), The Book of Numbers, Copernicus, pp. 172–176, ISBN 0-387-97993-X.

- Dijkstra, Edsger W. (1976), "17. An exercise attributed to R. W. Hamming", A Discipline of Programming, Prentice-Hall, pp. 129–134, ISBN 978-0132158718

- Dijkstra, Edsger W. (1981), Hamming's exercise in SASL (PDF), Report EWD792. Originally a privately circulated handwritten note.

- Eppstein, David (2007), The range-restricted Hamming problem.

- Fowler, David; Robson, Eleanor (1998), "Square Root Approximations in Old Babylonian Mathematics: YBC 7289 in Context" (PDF), Historia Mathematica, 25 (4): 366–378, doi:10.1006/hmat.1998.2209, page 375.

- Gingerich, Owen (1965), "Eleven-digit regular sexagesimals and their reciprocals", Transactions of the American Philosophical Society, 55 (8), American Philosophical Society: 3–38, doi:10.2307/1006080, JSTOR 1006080.

- Habens, Rev. W. J. (1889), "On the musical scale", Proceedings of the Musical Association, 16, Royal Musical Association: 16th Session, p. 1, JSTOR 765355.

- Halsey, G. D.; Hewitt, Edwin (1972), "More on the superparticular ratios in music", American Mathematical Monthly, 79 (10), Mathematical Association of America: 1096–1100, doi:10.2307/2317424, JSTOR 2317424, MR 0313189.

- Hemmendinger, David (1988), "The "Hamming problem" in Prolog", ACM SIGPLAN Notices, 23 (4): 81–86, doi:10.1145/44326.44335, S2CID 28906392.

- Heninger, Nadia; Rains, E. M.; Sloane, N. J. A. (2006), "On the integrality of nth roots of generating functions", Journal of Combinatorial Theory, Series A, 113 (8): 1732–1745, arXiv:math.NT/0509316, doi:10.1016/j.jcta.2006.03.018, MR 2269551, S2CID 15913795.

- Honingh, Aline; Bod, Rens (2005), "Convexity and the well-formedness of musical objects", Journal of New Music Research, 34 (3): 293–303, doi:10.1080/09298210500280612, S2CID 16321292.

- Howard, Deborah; Longair, Malcolm (May 1982), "Harmonic proportion and Palladio's Quattro Libri", Journal of the Society of Architectural Historians, 41 (2): 116–143, doi:10.2307/989675, JSTOR 989675

- Knuth, D. E. (1972), "Ancient Babylonian algorithms" (PDF), Communications of the ACM, 15 (7): 671–677, doi:10.1145/361454.361514, S2CID 7829945. A correction appears in CACM 19(2), 1976, stating that the tablet does not contain all 231 of the numbers of interest. The article (corrected) with a brief addendum appears in Selected Papers on Computer Science, CSLI Lecture Notes 59, Cambridge Univ. Press, 1996, pp. 185–203, but without the Appendix that was included in the original paper.

- Kopiez, Reinhard (2003), "Intonation of harmonic intervals: adaptability of expert musicians to equal temperament and just intonation", Music Perception, 20 (4): 383–410, doi:10.1525/mp.2003.20.4.383

- Longuet-Higgins, H. C. (1962), "Letter to a musical friend", Music Review (August): 244–248.

- McClain, Ernest G. (1974), "Musical "Marriages" in Plato's "Republic"", Journal of Music Theory, 18 (2), Duke University Press: 242–272, JSTOR 843638.

- Pomerance, Carl (1995), "The role of smooth numbers in number-theoretic algorithms", Proceedings of the International Congress of Mathematicians, Vol. 1, 2 (Zürich, 1994), Basel: Birkhäuser, pp. 411–422, MR 1403941.

- Sachs, A. J. (1947), "Babylonian mathematical texts. I. Reciprocals of regular sexagesimal numbers", Journal of Cuneiform Studies, 1 (3), The American Schools of Oriental Research: 219–240, doi:10.2307/1359434, JSTOR 1359434, MR 0022180, S2CID 163783242.

- Silver, A. L. Leigh (1971), "Musimatics or the nun's fiddle", American Mathematical Monthly, 78 (4), Mathematical Association of America: 351–357, doi:10.2307/2316896, JSTOR 2316896.

- Sloane, N. J. A. (ed.), "Sequence A051037 (5-smooth numbers)", The On-Line Encyclopedia of Integer Sequences, OEIS Foundation

- Størmer, Carl (1897), "Quelques théorèmes sur l'équation de Pell x2 − Dy2 = ±1 et leurs applications", Skrifter Videnskabs-selskabet (Christiania), Mat.-Naturv. Kl., I (2).

- Temperton, Clive (1992), "A generalized prime factor FFT algorithm for any N = 2p3q5r", SIAM Journal on Scientific and Statistical Computing, 13 (3): 676–686, doi:10.1137/0913039, S2CID 14764894.

- Veller, Carl; Nowak, Martin A.; Davis, Charles C. (May 2015), "Extended flowering intervals of bamboos evolved by discrete multiplication", Ecology Letters, 18 (7): 653–659, Bibcode:2015EcolL..18..653V, doi:10.1111/ele.12442, PMID 25963600

- Wolf, Daniel James (March 2003), "Alternative tunings, alternative tonalities", Contemporary Music Review, 22 (1–2): 3–14, doi:10.1080/0749446032000134715, S2CID 191457676

- Yuen, C. K. (1992), "Hamming numbers, lazy evaluation, and eager disposal", ACM SIGPLAN Notices, 27 (8): 71–75, doi:10.1145/142137.142151, S2CID 18283005.

External links

[edit]- Table of reciprocals of regular numbers up to 3600 from the web site of Professor David E. Joyce, Clark University.

- RosettaCode Generation of Hamming_numbers in ~ 50 programming languages

Regular number

View on GrokipediaDefinition and Properties

Definition

Regular numbers are positive integers whose only prime factors are 2, 3, and 5, expressible in the form , where , , and are non-negative integers.[5][6] These numbers form a subset of the natural numbers and are characterized by their limited prime factorization, making them particularly amenable to certain computational and historical contexts. Equivalently, regular numbers are the positive divisors of for any positive integer , since and increasing allows for arbitrarily high exponents in the prime factorization.[7] Examples of regular numbers include 1 (), 2, 3, 4 (), 5, 6 (), 8 (), 9 (), 10 (), 12 (), 15 (), 16 (), 18 (), 20 (), 24 (), 25 (), 27 (), and 30 ().[5] In number theory, regular numbers are synonymous with 5-smooth numbers, denoting integers with no prime factors exceeding 5.[5][8] In computer science, they are commonly referred to as Hamming numbers, a term originating from a problem posed by Richard Hamming on efficiently generating such numbers, which Edsger Dijkstra attributed to him in the 1976 book A Discipline of Programming.[9][10] Regular numbers hold historical significance in the Babylonian sexagesimal system, where their reciprocals have finite representations.[2]Basic Properties

Regular numbers, being positive integers of the form where are integers, form a multiplicative semigroup under integer multiplication. The product of any two such numbers is again a regular number, as the exponents add accordingly while preserving the restricted prime factors. This structure arises directly from the closure property inherent in the prime factorization limited to 2, 3, and 5.[11] The density of regular numbers up to a real number is given by the counting function , which enumerates the nonnegative integer solutions to . Due to the linear independence of , , and over the rationals, each regular number has a unique representation, and equals the number of lattice points in the tetrahedron defined by with . The asymptotic approximation is , obtained as the volume of this region divided by the factorial of the number of primes involved (here, 3). Lower-order terms are , ensuring the leading term dominates for large .[11] The regular numbers form a strictly increasing sequence , ordered by magnitude. This sequence can be generated recursively by starting with 1 and repeatedly adjoining the smallest integer greater than the current maximum that is a multiple of an earlier term by 2, 3, or 5, ensuring no duplicates due to unique factorizations. Equivalently, each subsequent term is the minimum among the sets , , and excluding previously appearing values, where is the current sequence length. This process yields the sequence 1, 2, 3, 4, 5, 6, 8, 9, 10, 12, ... .[11] A subset of regular numbers corresponds to highly composite numbers when restricted to the primes 2, 3, and 5. Specifically, those with non-increasing exponents in are the 5-smooth highly composite numbers, as this satisfies the condition that exponents decrease (or stay equal) with increasing primes, a defining property of highly composite numbers. Examples include 1 (), 2 (), 6 (), 12 (), and 60 (). These form a proper subsequence of all regular numbers.[12] The th regular number is defined as the smallest positive integer such that . Determining thus requires inverting the counting function, typically by solving asymptotically, yielding , though exact computation involves enumerating the lattice points up to the boundary. This inversion highlights the connection between the geometric volume and the ordering of the sequence.[11] These foundational properties underpin applications of regular numbers in number theory, such as addressing factorization challenges.[11]Historical Development

Babylonian Mathematics

The Babylonians utilized a sexagesimal (base-60) positional numeral system in their arithmetic from the Old Babylonian period, approximately 2000–1600 BCE, which inherently supported fractional computations through the use of reciprocals. Regular numbers—natural numbers whose prime factors are exclusively 2, 3, and 5—played a central role as denominators for unit fractions, as their reciprocals possess finite expansions in sexagesimal notation, permitting exact representations without recurring or infinite digits. Scribes compiled extensive tables of these reciprocals to streamline division, a fundamental operation in their mathematical practices, transforming it into multiplication by the precomputed inverse. Clay tablets from this era, such as those cataloged in collections like the Yale Babylonian Collection, demonstrate the systematic documentation of reciprocals for regular numbers up to 81, covering all one- and two-digit values in the system. A prominent example is the Plimpton 322 tablet, dated to around 1800 BCE and housed at Columbia University, which employs reciprocals of regular numbers in generating tables of Pythagorean triples for astronomical and geometric purposes. In this artifact, parameters like the reciprocals of 48 and 60 (both regular) facilitate the derivation of side ratios in right triangles, underscoring their utility in precise mensuration tasks. Specific reciprocals illustrate the system's elegance: the inverse of 2 is expressed as 0;30 (equivalent to 30/60 or 1/2), that of 3 as 0;20 (20/60 or 1/3), and that of 5 as 0;12 (12/60 or 1/5), all terminating after a single sexagesimal place. These finite forms enabled scribes to perform exact arithmetic on fractions that would require infinite series in base-10 systems, such as 1/7, by approximating with nearby regular denominators when necessary. The adoption of regular numbers in the sexagesimal framework offered a significant advantage over other ancient numeral systems, like the Egyptian base-10, by allowing divisions by non-divisors of 60 to be managed through finite approximations or exact reciprocals, thus enhancing accuracy in fields like land measurement and celestial tracking.[13] This approach, rooted in the factorization of 60 as , minimized computational errors and supported complex problem-solving evident in surviving cuneiform records.Modern Recognition

The rediscovery of regular numbers in Western mathematics gained momentum during the Renaissance, as scholars engaged with ancient Greek texts that transmitted Babylonian sexagesimal methods. Translations such as George of Trebizond's 1451 Latin version of Ptolemy's Almagest exposed mathematicians to sexagesimal computations, where regular numbers—those factorable solely by 2, 3, and 5—enabled finite fractional representations essential for astronomical tables. John Dee, a leading 16th-century figure, highlighted the value of these numerical systems in his preface to the 1570 English translation of Euclid's Elements, advocating their application in astronomy and navigation amid ongoing debates over decimal versus sexagesimal divisions.[14] In the 19th century, breakthroughs in cuneiform decipherment by Henry Rawlinson and Edward Hincks in the 1840s–1850s unlocked original Babylonian tablets, revealing extensive tables of reciprocals and multiplications centered on regular numbers. This direct evidence integrated the concept into European number theory, identifying them as 5-smooth numbers whose limited prime factors facilitated precise calculations. Their relevance emerged in Diophantine approximation studies, as exemplified by Joseph Liouville's 1844 work on transcendental numbers, where rationals with smooth denominators demonstrated exceptionally close approximations to irrationals, influencing subsequent theorems by Charles Hermite and others on the distribution and utility of such forms. The 20th century brought formalization through Otto Neugebauer's seminal analyses of Babylonian mathematics, where he coined "regular numbers" to describe their role in producing terminating sexagesimal expansions and enabling efficient reciprocal computations. Neugebauer's Quellen und Studien zur Geschichte der Mathematik (1935–1939) and The Exact Sciences in Antiquity (1957) established their foundational importance, drawing parallels to modern number-theoretic properties. Concurrently, in computational contexts, in the mid-20th century, Richard Hamming posed the challenge of efficiently generating the ordered sequence of these numbers without redundancy or overflow. Edsger Dijkstra's structured solution in A Discipline of Programming (1976) popularized it as a benchmark for algorithmic elegance, with the numbers thereafter known as Hamming numbers in computer science; the problem persists in programming contests like the International Olympiad in Informatics.Mathematical Applications

Number Theory

In number theory, regular numbers, also known as 5-smooth numbers, represent the extremal case within the broader theory of smooth numbers, where all prime factors are bounded by a fixed value; specifically, they consist solely of the primes 2, 3, and 5, making them the "smoothest" integers under this bound of 5.[11] This classification arises from the general study of y-smooth numbers, whose prime factors do not exceed y, and regular numbers exemplify the simplest multiplicative structure in this hierarchy, facilitating analysis in Diophantine problems and algorithmic efficiency.[11] The Dirichlet series associated with the indicator function of regular numbers connects directly to the Riemann zeta function through restricted Euler products. Formally, the generating function is given byMusic Theory

In music theory, regular numbers—products of the prime factors 2, 3, and 5—form the basis of consonant intervals derived from the harmonic series, where overtones produce simple frequency ratios that align with human perception of harmony. The octave corresponds to the ratio , the perfect fifth to , and the major third to , all of which are ratios of regular numbers and appear prominently in the lower partials of a vibrating string or air column. These ratios ensure minimal beating between simultaneously sounded tones, promoting a sense of purity and stability in chords, as opposed to intervals involving higher primes that introduce greater complexity and dissonance.[18][19] Just intonation scales are constructed as products of powers of 2, 3, and 5, limiting the prime factors to these three to maintain simple ratios and avoid the dissonant intervals arising from higher primes like 7 or 11, which yield more intricate fractions such as or . This 5-limit approach allows for scales like the major scale with ratios 1:1, 9:8, 5:4, 4:3, 3:2, 5:3, 15:8, and 2:1, where each step preserves harmonic consonance without accumulating irregularities from extraneous factors. By restricting to regular numbers, musicians achieve intervals that closely match the natural overtones, enhancing the acoustic coherence of polyphonic music.[20][19] Historically, Pythagorean tuning relied solely on powers of 2 and 3 to generate a diatonic scale through stacked perfect fifths, producing pure octaves and fifths but imperfect thirds like the major third at . This system, while consonant in two-voice counterpoint, revealed limitations in triadic harmony, prompting extensions in meantone temperaments that incorporated the prime 5 to refine major and minor thirds to and , respectively. Such adjustments, popularized in Renaissance keyboard practice, improved chordal sweetness at the expense of slight fifth tempering.[21][19] The mathematical foundation for these applications lies in the simplicity of regular number ratios, which minimizes the denominator and numerator values to foster acoustic consonance through low-beat frequencies. A key irregularity in Pythagorean tuning, the syntonic comma of —the discrepancy between the Pythagorean major third () and the just major third ()—arises from excluding 5, leading to a slight detuning of about 21.5 cents that disrupts triads. Including 5 resolves this comma by aligning ratios more closely with the harmonic series, enabling more stable harmonic progressions in just intonation systems.[20][21]Computational Aspects

Algorithms for Generation

One straightforward method for generating regular numbers involves enumerating all possible non-negative integer exponents , , and such that does not exceed a predefined upper bound, collecting these products, sorting them in ascending order, and removing any duplicates. Appropriate bounds for the exponents are determined by the logarithms: , , and , where is the limit, leading to approximately candidate numbers to generate and sort. To address the challenge of efficiently generating the ordered sequence of regular numbers without an explicit limit—known as Hamming's problem, posed by Richard Hamming for computing the 1000th such number—a more sophisticated approach uses a priority queue (min-heap) to produce terms incrementally while avoiding duplicates. This method, popularized by Edsger W. Dijkstra in his manuscript EWD 792, leverages the recursive property that every regular number greater than 1 is obtained by multiplying a smaller regular number by 2, 3, or 5. It initializes the queue with 1 and iteratively extracts the minimum value, generates its multiples by 2, 3, and 5 (if they do not exceed bounds and are unique), and inserts them back into the queue. Duplicate removal can be handled via a set tracking seen values. The resulting sequence is strictly increasing by construction.[22] The following pseudocode illustrates a heap-based implementation in Python-like syntax for generating the first regular numbers:import heapq

def generate_regular_numbers(n):

if n == 0:

return []

heap = [1]

seen = set([1])

result = []

while len(result) < n:

x = heapq.heappop(heap)

result.append(x)

for factor in [2, 3, 5]:

y = x * factor

if y not in seen:

seen.add(y)

heapq.heappush(heap, y)

return result

Implementations and Efficiency

Practical implementations of regular number generation commonly leverage priority queues to produce the sequence in ascending order while minimizing computational overhead. In Python, theheapq module facilitates this by maintaining a min-heap of candidate numbers derived from multiplying existing regular numbers by the primes 2, 3, and 5; duplicates are avoided either through a set to track seen values or by merging sorted iterator streams.[24]

A comparable approach in C++ employs std::priority_queue as a min-heap to store potential next values, paired with std::set for duplicate elimination, enabling scalable generation without redundant computations.[24]

Key optimizations focus on lazy evaluation, where the sequence is generated on-demand via generators in Python or iterators in C++, deferring computation until values are requested and conserving memory by avoiding full precomputation.[4] Performance benchmarks demonstrate that heap-based methods can generate the first 1,000,000 regular numbers in under 1 second on modern hardware, highlighting their suitability for large-scale applications.[24]

Extensions to k-smooth numbers involve expanding the prime set to the first k primes (e.g., including 7 for 7-smooth), then applying the same priority queue merging to incorporate multiples of these additional factors.[25]

Common pitfalls in these implementations include integer overflow from high exponents, mitigated by adopting arbitrary-precision arithmetic such as Python's built-in int or libraries like GMP in C++; ensuring duplicate-free output also necessitates rigorous set checks or monotonic merging, as naive multiplications can introduce redundancies otherwise.[24]

Other Uses

Timekeeping and Calendars

The division of time into units based on regular numbers has deep historical roots in systems designed for precise astronomical tracking. The modern sexagesimal structure of timekeeping—60 seconds per minute and 60 minutes per hour—originates from the ancient Babylonian adoption of a base-60 numeral system, where 60 factors as , a regular number with abundant divisors that permitted fractional divisions without complex remainders. This facilitated accurate observations of celestial bodies, as calculations in sexagesimal arithmetic allowed for straightforward subdivisions of angles and intervals in astronomy.[26][27] A full 24-hour day equates to 86,400 seconds, which factors as , another regular number whose extensive divisors (96 in total) support even partitioning for scheduling and scientific computations. This numerical property enhances the practicality of time divisions in observational contexts, enabling clean multiples and submultiples that align with periodic astronomical phenomena without introducing awkward fractions.[28][29] In calendar systems, regular numbers similarly underpin cyclical structures. The ancient Maya utilized a vigesimal (base-20) system in their Long Count and ritual calendars, with 20 factoring as , allowing for hierarchical counts that integrated solar, lunar, and ritual periods into a cohesive framework. Modern timekeeping incorporates approximations to handle irregularities, such as leap seconds added to Coordinated Universal Time (UTC) to reconcile the atomic second's regularity with the Earth's variable rotation, ensuring clocks remain aligned with solar observations while retaining the benefits of regular number-based units.[30][31] These applications underscore the advantages of regular numbers in timekeeping: their prime factors limited to 2, 3, and 5 yield highly divisible units ideal for fraction-free divisions in astronomical and calendrical contexts, promoting precision across diverse cultures.[26]Signal Processing

In digital signal processing for audio, sampling rates that are 5-smooth numbers—products of powers of 2, 3, and 5—facilitate efficient computation in tasks like rate conversion and buffering due to their divisibility by small integers, aligning well with hardware and algorithmic requirements.[32] For instance, the standard 48 kHz rate factors as , making it preferable over 44.1 kHz (which includes a factor of 7) for minimizing computational overhead in polyphase resampling filters and block processing. This property extends to higher rates like 192 kHz used in Blu-ray audio, which factors as and supports lossless high-resolution playback with optimized digital workflows.[33] Filter design in audio synthesis, particularly for just-intonation systems relying on 2-3-5 prime ratios for harmonic intervals, uses these ratios to generate tones with simple frequency relationships like 3:2 or 5:4.[34][35] Modern FFT implementations in signal processing libraries leverage 5-smooth lengths for input arrays to optimize performance via mixed-radix algorithms, which decompose the transform into efficient stages using factors of 2, 3, and 5. For example, functions like SciPy'snext_fast_len select the nearest 5-smooth number greater than or equal to a target length, ensuring faster execution on real-time audio data compared to prime or highly composite lengths requiring more complex factoring. This approach is rooted in libraries like FFTPACK and FFTW, where small-prime factorizations minimize multiplications and enable adaptive planning for spectral analysis in audio applications.[36][37]