Timeline of computing

Timeline of computing presents events in the history of computing organized by year and grouped into six topic areas: predictions and concepts, first use and inventions, hardware systems and processors, operating systems, programming languages, and new application areas.

Detailed computing timelines: before 1950, 1950–1979, 1980–1989, 1990–1999, 2000–2009, 2010–2019, 2020–present

See also

- History of compiler construction

- History of computing hardware – up to third generation (1960s)

- History of computing hardware (1960s–present) – third generation and later

- History of the graphical user interface

- History of the Internet

- History of the World Wide Web

- List of pioneers in computer science

- Timeline of electrical and electronic engineering

- Microprocessor chronology

Resources

- Stephen White, A Brief History of Computing

- The Computer History in time and space, Graphing Project, an attempt to build a graphical image of computer history, in particular operating systems.

- The Computer Revolution/Timeline at Wikibooks

- "File:Timeline.pdf - Engineering and Technology History Wiki" (PDF). ethw.org. 2012. Archived (PDF) from the original on 2017-10-31. Retrieved 2018-03-03.

External links

- Visual History of Computing 1944-2013 (archived)

Timeline of computing

Pre-Modern Foundations

Ancient and Medieval Devices

The abacus, one of the earliest known computing devices, emerged around 2400 BCE in Mesopotamia as a simple tool consisting of beads or stones slid along rods or grooves for arithmetic calculations.[11] This manual device facilitated addition, subtraction, multiplication, and division by representing numbers in a positional system, serving as a foundational aid for merchants and scribes in ancient trade and administration.[12] The abacus evolved across cultures, with the Chinese suanpan variant documented as early as the 2nd century BCE during the Han dynasty, featuring a framed structure with beads divided into upper and lower sections for decimal computations.[13] In the Roman Empire, the hand abacus adapted this concept into a portable bronze or metal plate with grooves and sliding counters, enabling efficient base-10 calculations for engineering and commerce from the 1st century BCE onward.[14] These iterations highlight the abacus's versatility; it remained in use for millennia thanks to its mechanical simplicity and portability.[15]

A remarkable advance in analog computation appeared with the Antikythera mechanism, an intricate Greek device dated to approximately 100 BCE and recovered from a shipwreck near the island of Antikythera.[16] This hand-powered orrery functioned as an astronomical calculator, using over 30 interlocking bronze gears, including epicyclic gearing, to predict celestial positions, eclipses, and planetary motions based on Babylonian and Greek cycles.[17] Its dials and inscriptions allowed users to set a date and read off the corresponding celestial positions, demonstrating early mechanical simulation of complex periodic phenomena for navigation and calendar-making.[18]

Medieval astrolabes, refined from Hellenistic prototypes, became essential instruments from the 8th to 16th centuries, particularly in the Islamic world, where scholars like al-Fazari adapted them for advanced astronomy and navigation.[19] Constructed from brass or other metals, these devices featured a fixed mater disk holding interchangeable plates (tympans) engraved with a stereographic projection of the celestial sphere for specific latitudes, a rotating rete marking star positions, and an alidade sighting rule for measuring altitudes.[20] Users aligned the alidade with stars or the sun to solve trigonometric problems, such as determining local time, latitude, or the qibla direction, by reading scales calibrated in degrees and astrological divisions.[21] The astrolabe's layered, rotating components thus integrated geometry and observation, aiding explorers such as the Portuguese mariners of the 15th century on cross-equatorial voyages.[22]

17th-19th Century Mechanical Innovations

The 17th to 19th centuries marked a pivotal shift in computing history through the invention of mechanical devices that automated arithmetic and introduced early concepts of programmability, laying essential foundations for later computational systems. These innovations, driven by mathematicians and engineers seeking to reduce human error in calculations, evolved from simple gear-based calculators to complex machines capable of conditional operations and data processing via punched cards. Precursors like John Napier's logarithmic tables from 1614 facilitated manual computations but lacked automation, highlighting the need for mechanical aids.[23]

In the early 17th century, Scottish mathematician John Napier introduced "Napier's bones" in his 1617 treatise Rabdologiae, a set of rectangular rods inscribed with multiplication tables to simplify multiplication and division.[24] Each rod, typically made of ivory or bone, bore a digit from 0 to 9 at the top and a table of that digit's multiples from 2 through 9 down its length, allowing users to align selected rods in a frame and sum adjacent values along the diagonals for rapid computation of large products without full manual arithmetic.[25] The device reduced multiplication to a series of additions, influencing later calculating devices.[26]

Building on logarithmic concepts, English mathematician William Oughtred devised the slide rule in the 1620s by combining two engraved logarithmic scales on sliding rulers, enabling multiplication and division by aligning the scales rather than summing rod entries.[27] The fixed and movable parts, often wooden with ivory or metal facing, carried markings placed at distances proportional to the logarithms of the numbers shown, so that sliding one scale against the other added or subtracted logarithms and the result could be read off directly for approximate calculations in engineering and science.[28] This analog instrument marked a shift toward more efficient manual computation, paving the way for 17th-century mechanical innovations.[29]

In 1642, French mathematician Blaise Pascal invented the Pascaline, a portable mechanical calculator designed to assist his father with tax computations by performing addition and subtraction through a series of interconnected gears and dials. The device featured numbered dials that advanced like an odometer, with carry-over mechanisms for multi-digit operations, though it was limited to fixed arithmetic and proved expensive to produce, resulting in only about 50 units built. Despite these constraints, the Pascaline represented the first practical mechanical aid for routine calculations, influencing subsequent designs.[30][31]

Building on Pascal's work, German philosopher and mathematician Gottfried Wilhelm Leibniz developed the Stepped Reckoner in 1673, a more advanced calculator capable of multiplication and division in addition to addition and subtraction. The machine employed a stepped drum mechanism, a cylinder bearing teeth of progressively increasing length, that allowed variable digit selection for operations, enabling products and quotients to be computed mechanically rather than by manually repeated additions. Although mechanical imperfections like friction prevented mass production, Leibniz's device introduced scalable arithmetic automation and foreshadowed geared computing principles.[23][32]

A significant leap toward programmability came in 1804 with Joseph Marie Jacquard's invention of the Jacquard loom, an automated weaving machine controlled by punched cards that directed the pattern of threads in silk production. Each card, linked in a chain, featured holes corresponding to specific warp thread lifts, allowing the loom to produce intricate designs without manual intervention and serving as a discrete control system for sequential operations. This card-based input method demonstrated how instructions could be stored and executed mechanically, profoundly influencing later computing pioneers by proving the feasibility of non-manual pattern control.[33][34]

In 1822, English mathematician Charles Babbage conceived the Difference Engine as a mechanical device to automate the calculation of mathematical tables, such as logarithms, approximating them with polynomials and using the method of finite differences to eliminate manual errors in astronomical and navigational data. The machine consisted of thousands of brass gears and levers to perform iterative additions, with a design scalable to produce up to seven-figure accuracy, though funding issues halted full construction during Babbage's lifetime. Evolving this concept, and inspired by the Jacquard loom's punched-card system, Babbage proposed the Analytical Engine in 1837, a general-purpose programmable computer that incorporated a "mill" for processing operations (analogous to a central processing unit) and a "store" for holding numbers (akin to memory), with punched cards for inputting both data and instructions, enabling conditional branching and loops.[33][35][36]

Augmenting Babbage's vision, Ada Lovelace, in her 1843 notes on an article about the Analytical Engine, described what is often regarded as the first computer program: an algorithm to compute Bernoulli numbers using the machine's operations. Her notes looked beyond mere number-crunching to symbolic manipulation and highlighted the potential for such machines to compose music or produce graphics. Lovelace's insights, including her recognition that the engine could repeat and vary sequences of operations through looping and conditional transfers, established foundational programming concepts.[36][35]

By the late 19th century, these ideas converged in practical data processing with Herman Hollerith's tabulating machine, introduced for the 1890 U.S. Census to handle the growing volume of population statistics. The system used punched cards, whose hole positions encoded demographic data, to feed information into an electromechanical reader that sorted and tallied results via electrical contacts and mercury pools, reducing census tabulation time from years to months and processing over 62 million cards. Hollerith's innovation, which combined punched-card storage with automated electromechanical output, directly built on Jacquard and Babbage's principles and formed the basis for modern data processing systems.[37][38]

Electronic Computing Emerges

1930s-1940s: Theoretical and Wartime Machines

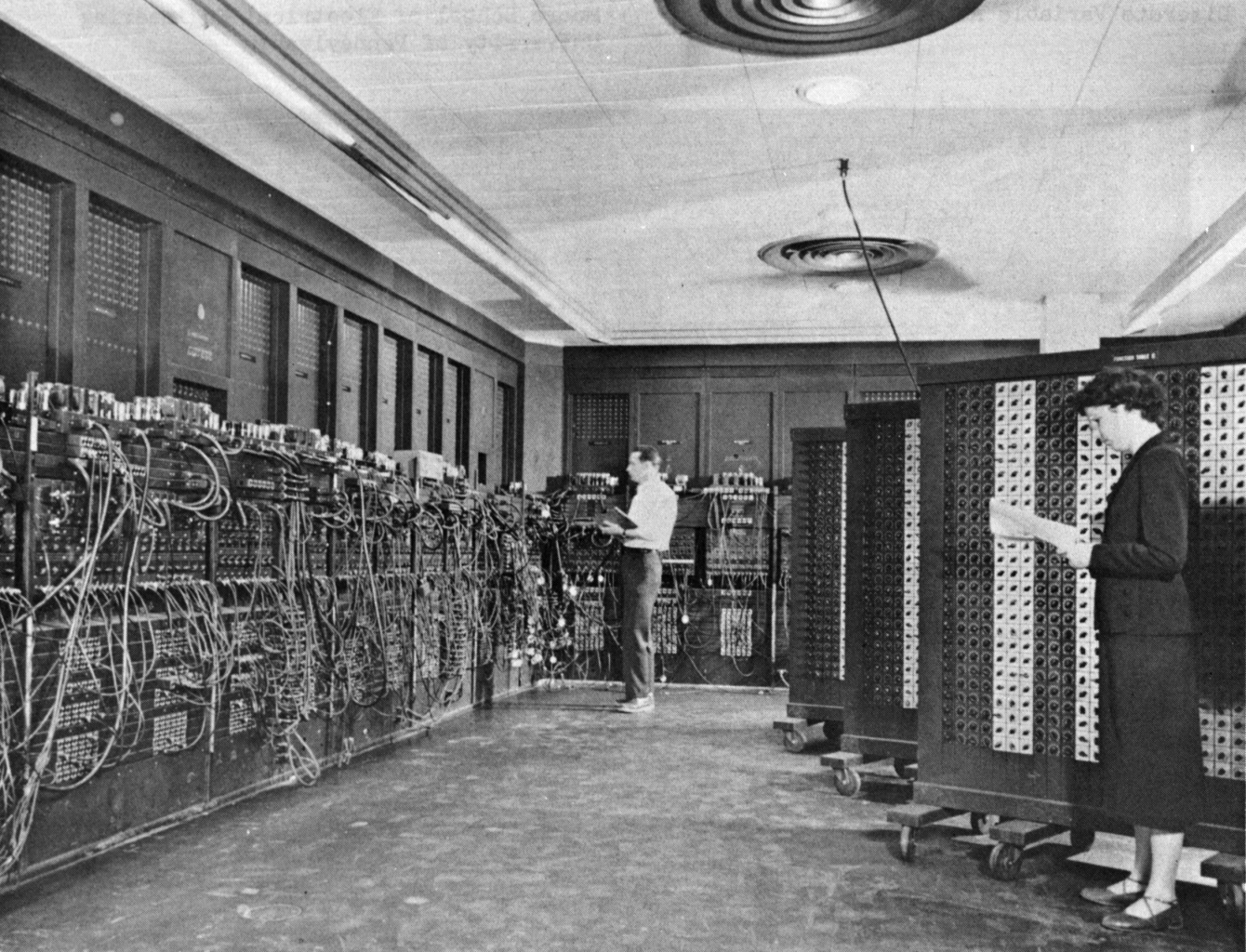

The 1930s marked a pivotal shift in computing from mechanical devices, such as Charles Babbage's Analytical Engine, to theoretical models that formalized the limits of computation. In 1936, Alan Turing published "On Computable Numbers, with an Application to the Entscheidungsproblem," introducing the Turing machine as an abstract model of computation. This device, conceptualized as an infinite tape divided into cells holding symbols, a read/write head, and a set of states with transition rules, defined a computable function as one that such a machine can calculate. Using this model, Turing proved the undecidability of the halting problem: no general algorithm exists to determine whether a given program will finish running or continue indefinitely.[39]

Practical electronic computing began with the Atanasoff-Berry Computer (ABC), designed by John Atanasoff and Clifford Berry at Iowa State College between 1937 and 1942. This specialized machine used 280 vacuum tubes for binary electronic computation to solve systems of up to 29 linear equations, though it lacked programmability and used rotating drums for memory. A U.S. court ruling in 1973 affirmed its status as the first electronic digital computer.[40]

Building on these theoretical foundations, the 1940s saw the emergence of practical machines amid World War II demands. In 1941, German engineer Konrad Zuse completed the Z3, the world's first functional programmable digital computer. Constructed using approximately 2,300 electromechanical relays for binary arithmetic and logic operations, the Z3 had a clock frequency of about 5 Hz, performing floating-point arithmetic including additions in under 1 second and multiplications in about 3 seconds, and was programmed via a punched film strip to solve engineering problems like aerodynamic simulations. Unlike later stored-program machines, the Z3 had no conditional branch instruction, so each program ran as a fixed sequence read from the film, and the machine remained electromechanical rather than fully electronic.[41]

Wartime secrecy drove further innovations in electronic computing for cryptanalysis. Between 1943 and 1944, British engineer Tommy Flowers designed and built Colossus at the Post Office Research Station at Dollis Hill for use at Bletchley Park, where it helped decipher German Lorenz cipher messages. The first Colossus used 1,500 vacuum tubes for parallel Boolean operations and counting, processing 5,000 characters per second from paper tape input to test wheel settings in the Tunny cipher system. Subsequent versions, up to ten machines by war's end, incorporated shift registers and photo-electric readers for greater speed and reliability, marking the first large-scale use of electronics for programmable digital tasks.[42]

At the war's end, the United States advanced general-purpose electronic computing with ENIAC, completed in 1945 by John Mauchly and J. Presper Eckert at the University of Pennsylvania. Intended for ballistic trajectory calculations, ENIAC employed 18,000 vacuum tubes, 7,200 crystal diodes, and 1,500 relays across 30 tons of equipment, performing 5,000 additions per second, about 1,000 times faster than mechanical calculators. Programming required physical reconfiguration via plugboards, switches, and cables, limiting flexibility but enabling it to solve diverse numerical problems beyond its initial military purpose.[43]

These machines influenced architectural paradigms, culminating in John von Neumann's 1945 "First Draft of a Report on the EDVAC." Drafted during discussions on the successor to ENIAC, the report outlined stored-program architecture, where instructions and data reside in the same modifiable memory, distinct from fixed wiring or plugs.
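
The stored-program idea described above can be sketched in a few lines of Python. This is a minimal illustration only: the four-instruction set (`LOAD`, `ADD`, `STORE`, `HALT`), the `run` function, and the memory layout are hypothetical and do not reproduce EDVAC's actual order code. The sketch shows instructions and data sharing one memory, so that changing the program means writing new words into memory rather than rewiring.

```python
# Toy stored-program machine: instructions and data live in the same list.
# The instruction set here is hypothetical, chosen only for illustration.

def run(memory):
    """Fetch and execute instructions from `memory` until HALT; return memory."""
    acc = 0  # accumulator register
    pc = 0   # program counter: address of the next instruction
    while True:
        op, arg = memory[pc]  # fetch: an instruction is just a memory word
        pc += 1
        if op == "LOAD":
            acc = memory[arg]
        elif op == "ADD":
            acc += memory[arg]
        elif op == "STORE":
            memory[arg] = acc
        elif op == "HALT":
            return memory
        else:
            raise ValueError(f"unknown opcode {op!r}")

memory = [
    ("LOAD", 4),   # address 0: acc = memory[4]
    ("ADD", 5),    # address 1: acc += memory[5]
    ("STORE", 6),  # address 2: memory[6] = acc
    ("HALT", 0),   # address 3: stop
    2,             # address 4: data
    3,             # address 5: data
    0,             # address 6: result goes here
]

run(memory)
print(memory[6])  # 5
```

Overwriting a single memory word, for example `memory[1] = ("ADD", 4)`, and calling `run` again changes the computation to 2 + 2 with no change to the machine itself.
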
This design separated hardware from software, allowing programs to be loaded and altered dynamically, and proposed a central processing unit with arithmetic and control logic to execute sequential instructions. Von Neumann's ideas, though not solely his, became foundational for modern computers by enabling versatility and scalability.[44]

The stored-program architecture was first practically demonstrated in 1948 with the Manchester Small-Scale Experimental Machine (SSEM, or "Baby"), developed by Frederic C. Williams, Tom Kilburn, and Geoff Tootill at the University of Manchester. Operational on 21 June 1948, it used a Williams-Kilburn cathode-ray tube for 32 words of memory and executed its initial program to find the highest proper factor of 2^18, proving the viability of electronic stored-program computing.[45]

1950s: First Commercial Systems

The 1950s marked a pivotal shift in computing from experimental and wartime machines to commercially produced systems, building on the stored-program concept set out in John von Neumann's 1945 report, which allowed instructions and data to be stored interchangeably in electronic memory. These early commercial computers relied primarily on vacuum tubes for processing, enabling batch processing for business and scientific applications, though reliability challenges persisted due to tube failures and limited memory capacities. The decade saw the debut of systems designed for civilian uses such as census data processing, alongside machines for defense calculations, laying the groundwork for broader adoption.

The UNIVAC I, developed by J. Presper Eckert and John Mauchly's Eckert-Mauchly Computer Corporation and delivered in 1951, became the first general-purpose commercial computer sold to a non-military customer, the U.S. Census Bureau.[46] It used magnetic tape reels for input and output, allowing efficient handling of large datasets at speeds up to 75 inches per second, a significant improvement over punch cards.[47] For main memory, the UNIVAC I employed mercury delay lines (tubes filled with liquid mercury in which data circulated as acoustic pulses), providing up to 1,000 words of capacity but prone to timing errors from temperature variations.[46]

In response to the UNIVAC's success, IBM entered the electronic computer market with the IBM 701 in 1952, initially nicknamed the Defense Calculator for its focus on scientific and defense computations.[48] Targeted at engineering and research tasks, it featured electrostatic storage tubes based on the Williams-Kilburn cathode-ray tube design, offering 4,096 words of high-speed memory but requiring constant refreshing to prevent data loss.[48] Input relied on punched cards read at up to 120 cards per minute, and the machine performed about 16,000 additions per second, which made it suitable for simulations but limited by its batch-oriented processing.[49]

The invention of the transistor in 1947 at Bell Laboratories by John Bardeen, Walter Brattain, and William Shockley revolutionized electronics by replacing bulky, power-hungry vacuum tubes with compact semiconductor devices capable of amplification and switching. Although transistors were not immediately adopted in commercial systems due to reliability issues, the first experimental transistorized computer emerged in 1953 at the University of Manchester, where a prototype using about 100 point-contact transistors for logic circuits demonstrated reliable operation for simple programs.[50] This was followed in 1954 by TRADIC (Transistorized Airborne Digital Computer), built by Bell Labs for the U.S. Air Force, which became the first fully transistorized production system with nearly 800 transistors, enabling compact, low-power computing for guidance applications without any vacuum tubes.[50]

Advancements in memory technology addressed the unreliability of delay lines and tubes, with magnetic core memory invented in 1951 by An Wang and further developed by Jay Forrester at MIT's Lincoln Laboratory.[51] This system used tiny ferrite rings arranged in a grid, each magnetized in one of two directions to represent a binary state, offering non-volatile, random-access storage that was far more robust against environmental factors. Early implementations, such as in the Whirlwind computer, provided up to 4 kilobytes of reliable RAM, dramatically improving data retention and access speeds over prior methods.[51]

Software innovations complemented hardware progress, exemplified by FORTRAN (Formula Translation), developed in 1957 by John Backus and his team at IBM as the first widely used high-level programming language.[52] Designed for scientific computing on the IBM 704, FORTRAN's compiler translated mathematical formulas into efficient machine code, reducing programming time from weeks of assembly-level coding to hours and enabling complex simulations like nuclear reactor modeling.[52] Its indexed DO loops and array handling features prioritized computational efficiency, establishing a standard for formulaic expression in code that influenced subsequent languages.[53]

Transistor and Miniaturization Era

1960s: Integrated Circuits and Time-Sharing

The 1960s marked a pivotal shift in computing through the advent of integrated circuits (ICs), which dramatically reduced the size and cost of electronic components by fabricating multiple transistors and other elements on a single semiconductor chip. Building on transistor foundations from the 1950s, Jack Kilby at Texas Instruments conceived the first IC in July 1958 and demonstrated a working prototype on September 12, 1958, using germanium to integrate a transistor, capacitors, and resistors into one unit.[54] This breakthrough addressed the "tyranny of numbers" in wiring discrete components, enabling more complex circuits. Independently, Robert Noyce at Fairchild Semiconductor conceived a monolithic IC in January 1959 that used silicon, the planar process, and photolithography for reliable mass production; he filed for a patent in July 1959, and Fairchild fabricated the first monolithic ICs by early 1960.[55] These innovations laid the groundwork for miniaturization, paving the way for smaller, more affordable computers.

The drive toward smaller, cheaper electronics was evident in the rise of minicomputers, exemplified by Digital Equipment Corporation's (DEC) PDP-8, introduced on March 22, 1965, as the first commercially successful minicomputer. Priced at $18,000, far below mainframe costs, the PDP-8 featured a 12-bit architecture, a modular design built from plug-in logic modules (discrete-transistor at first, with IC-based models such as the PDP-8/I following later in the decade), and a compact footprint suitable for laboratories and small businesses, selling over 50,000 units by the decade's end.[56] Its versatility supported applications from process control to scientific computing, extending access to computing power beyond large institutions.

Parallel to hardware advances, the decade introduced time-sharing, allowing multiple users to interactively access a single computer via remote terminals, in contrast with batch processing. J.C.R. Licklider advocated for this in his 1960 paper "Man-Computer Symbiosis," envisioning symbiotic human-machine interaction through rapid, shared computing resources.[57] MIT's Compatible Time-Sharing System (CTSS), demonstrated in November 1961 on a modified IBM 709 and later upgraded to an IBM 7094, supported up to 30 users simultaneously with features like virtual memory and file sharing, influencing future operating systems.[58] Building on CTSS, the Multics project, initiated in 1964 by MIT, Bell Labs, and General Electric, aimed for a more secure, scalable time-sharing system on the GE-645 computer, introducing hierarchical file systems and dynamic linking, though delays kept it from becoming operational until 1969.[59]

Time-sharing facilitated early networking experiments, culminating in the ARPANET's inception in 1969 under U.S. Department of Defense funding. On October 29, 1969, the first message, "LO" (intended as "LOGIN"), was transmitted from a UCLA Interface Message Processor to Stanford Research Institute, establishing the first node-to-node link in this packet-switching network and laying the foundation for the internet.[60]

To make programming accessible on time-shared systems, John Kemeny and Thomas Kurtz at Dartmouth College developed BASIC (Beginner's All-purpose Symbolic Instruction Code) in 1964, with the first program running on May 1, 1964, on their Dartmouth Time-Sharing System (DTSS) hosted on a GE-225. Designed for non-experts, BASIC used simple English-like syntax and fast turnaround, enabling students to write and run code interactively without batch-processing waits.[61]

1970s: Microprocessors and Personal Prototypes

The 1970s marked a pivotal shift in computing with the advent of the microprocessor, a single integrated circuit that combined the core functions of a central processing unit, enabling compact and affordable systems for individual use. In 1971, Intel introduced the 4004, the world's first microprocessor, designed by Ted Hoff, Federico Faggin, and Stanley Mazor as a 4-bit processor for Busicom's desktop calculators.[62] This chip, containing approximately 2,300 transistors, revolutionized design by replacing multiple custom logic chips with programmable logic, paving the way for smaller, cheaper computers beyond institutional mainframes.[63]

Building on this foundation, the decade saw the emergence of personal computer prototypes targeted at hobbyists, drawing from the modularity of 1960s minicomputers but adapted for single-user affordability. The Altair 8800, released in 1975 by Micro Instrumentation and Telemetry Systems (MITS), became the first commercially successful personal computer kit, powered by the Intel 8080 microprocessor and sold for $397 in kit form.[64] Featured on the cover of Popular Electronics in January 1975, it ignited the home computing movement by attracting thousands of enthusiasts to build and experiment with their own systems.[65] This sparked widespread interest, leading to homebrew clubs and innovations that democratized computing.

Early personal prototypes further advanced accessibility, with the Apple I in 1976 exemplifying a basic, user-assembled board designed by Steve Wozniak and marketed by Steve Jobs, featuring a MOS Technology 6502 CPU and 4 KB of RAM expandable to 8 KB.[66] The follow-up Apple II, launched in 1977, introduced groundbreaking features like built-in color graphics displayable on standard televisions and support for external floppy disk drives via a custom controller using just eight integrated circuits.[67] These designs emphasized expandability and visual appeal, setting standards for future personal systems.

Software ecosystems also took shape to support these machines, with Microsoft founded in 1975 by Bill Gates and Paul Allen specifically to develop and license Altair BASIC, an interpreter that made programming accessible without extensive hardware knowledge.[68] Complementing this, Gary Kildall created CP/M in 1974, the first commercially successful operating system for microcomputers, which standardized disk access through its BIOS layer and ran on diverse hardware like the Altair and later systems.[69] Meanwhile, networking innovations emerged at Xerox PARC, where in 1973 Robert Metcalfe and David Boggs invented Ethernet, a coaxial cable-based local area network protocol using carrier-sense multiple access with collision detection, influencing subsequent standards for connecting personal computers.[70]

Personal and Networked Computing

1980s: Mass-Market PCs and GUIs

The 1980s witnessed the democratization of computing through affordable, standardized personal computers that shifted from hobbyist prototypes to essential tools for businesses and households, fueled by advancements in hardware architecture and intuitive software interfaces. The decade's breakthroughs emphasized open designs for compatibility and expansion, alongside graphical user interfaces (GUIs) that simplified interaction, laying the groundwork for widespread adoption.[71] These developments built upon the microprocessor foundations of the previous decade, enabling scalable production and third-party innovation.

IBM's entry into the personal computer market with the IBM PC, model 5150, released on August 12, 1981, established a de facto industry standard through its open architecture, which permitted manufacturers to produce compatible clones without licensing restrictions.[72] Powered by a 4.77 MHz Intel 8088 microprocessor and initially equipped with 16 KB of RAM, the system supported Microsoft's MS-DOS operating system, a command-line interface that became ubiquitous for business applications.[73] This design choice spurred an ecosystem of peripherals and software; an estimated 1.24 million personal computers were already in workplaces by the time of its launch, and the number grew rapidly thereafter.[74]

Meanwhile, the Commodore 64, introduced in 1982, dominated the home market as the best-selling computer model ever, with an estimated 17 million units sold by 1993, thanks to its 64 KB of RAM and the MOS Technology SID chip that enabled advanced sound synthesis for gaming and multimedia.[75] Priced at around $595, it offered versatile BASIC programming and cartridge-based expansion, appealing to education and entertainment users.[76]

Apple's Macintosh, unveiled on January 24, 1984, popularized the GUI and mouse-driven interaction for non-technical users, drawing inspiration from Xerox PARC's Alto system developed in 1973, which first demonstrated windows, icons, and pointers.[77] Featuring a 7.83 MHz Motorola 68000 processor, 128 KB of RAM, and a 9-inch monochrome display, the Macintosh integrated these elements into a desktop metaphor that made file management intuitive, revolutionizing productivity software design.[8] Its "1984" Super Bowl advertisement underscored a vision of computing liberation from command-line rigidity.[78]

Complementing hardware advances, spreadsheet applications transformed business workflows. VisiCalc, released in 1979 for the Apple II but reaching peak influence in the early 1980s, automated recalculation of financial models, turning personal computers into indispensable tools for "what-if" analysis and boosting Apple II sales dramatically.[79] This paved the way for Lotus 1-2-3, launched on January 26, 1983, for the IBM PC, which integrated spreadsheet functions with basic graphics and database capabilities, generating over $53 million in its first year and dominating office computing as a "killer app."[80]

Networking protocols also matured, enabling interconnected systems beyond standalone use. The U.S. Department of Defense's DARPA mandated the adoption of TCP/IP on January 1, 1983, for the ARPANET, standardizing packet-switched communication and marking the operational birth of the modern Internet.[81] This facilitated reliable data transmission across diverse networks. Complementing it, the Domain Name System (DNS), specified by Paul Mockapetris in 1983 (RFCs 882 and 883) and brought into operation from 1984, when RFC 920 set out the initial top-level domains, replaced the centrally maintained HOSTS.TXT table with a distributed, hierarchical system that maps human-readable names to numeric IP addresses, supporting scalable global addressing.[82] These protocols, initially for military and academic use, set the stage for broader connectivity in the following decade.[83]

1990s: Internet Expansion and Open Source

The 1990s marked a pivotal era in computing, characterized by the rapid commercialization of the internet and the burgeoning open-source software movement, which democratized access to technology and fostered global collaboration. Building on foundational networking protocols like TCP/IP developed in the 1980s for ARPANET, the decade saw the internet evolve from an academic and military tool into a commercial infrastructure supporting e-commerce, multimedia, and distributed development.[83] This period's innovations not only accelerated personal computing but also laid the groundwork for the digital economy, with hardware advancements enabling richer user experiences and software paradigms emphasizing community-driven progress. In 1989, British computer scientist Tim Berners-Lee, while working at CERN, proposed the World Wide Web as a system for sharing hypertext documents across networks, with the first formal proposal submitted in March 1989 and a refined version in May 1990.[84] He developed key components including the Hypertext Transfer Protocol (HTTP) for data communication, Hypertext Markup Language (HTML) for structuring content, and the first web browser/editor, enabling seamless linking of information. The inaugural website, hosted at http://info.cern.ch, launched on August 6, 1991, providing details on the Web project itself and marking the Web's public debut.[85] The Web's accessibility exploded in 1993 with the release of NCSA Mosaic, the first widely available graphical browser developed at the University of Illinois, which integrated text and images, supported clickable hyperlinks, and ran on multiple platforms, popularizing web browsing for non-experts and spurring an internet revolution.[86] Parallel to the Web's rise, the open-source movement gained momentum, exemplified by the GNU project and Linux. 
Initiated in 1983 by Richard Stallman to create a free Unix-like operating system, the GNU project developed essential tools like compilers and utilities, but lacked a complete kernel until the 1990s.[87] In 1991, Finnish student Linus Torvalds released the initial Linux kernel version 0.01 on September 17, inviting global contributions under an open-source license, which combined with GNU components to form a fully functional, Unix-compatible OS distributed freely.[88] Linux's collaborative development model attracted developers worldwide, leading to rapid enhancements and widespread adoption in servers and embedded systems by the late 1990s, embodying the era's ethos of shared innovation. Hardware and software integrations further propelled internet expansion. Intel introduced the Pentium processor on March 22, 1993, featuring superscalar architecture with over 3.1 million transistors, which boosted performance for multimedia applications like web graphics and video, running at initial speeds of 60 MHz.[89] Microsoft's Windows 95, released on August 24, 1995, revolutionized personal computing by merging a graphical user interface with built-in networking support, including Plug and Play for devices and integration with The Microsoft Network for dial-up internet access, making online connectivity user-friendly for millions.[90] The mid-1990s ignited the dot-com boom, fueled by investor enthusiasm for internet ventures. 
Netscape's initial public offering on August 9, 1995, saw shares surge from the $28 offering price to as high as $75 on the first day, valuing the company at over $2 billion and signaling the Web's commercial viability, which catalyzed funding for online businesses.[91] E-commerce pioneers emerged, including Amazon, which Jeff Bezos founded on July 5, 1994, and launched as an online bookstore in 1995; it expanded into other product categories such as music and videos in 1998, leveraging secure transactions and vast inventories to redefine consumer shopping.[92] Similarly, eBay, launched by Pierre Omidyar on September 3, 1995, as AuctionWeb, facilitated person-to-person auctions, starting with the sale of a broken laser pointer, and grew into a global marketplace for diverse goods.[93]

Connectivity standards also advanced, with the Universal Serial Bus (USB) specification released in January 1996 by an industry consortium including Intel and Microsoft, standardizing plug-and-play peripheral connections at speeds up to 12 Mbps and eliminating proprietary cables for devices like keyboards and modems.[94] This simplification supported the proliferation of internet-enabled hardware, underpinning the decade's shift toward ubiquitous digital interaction.

Digital Ubiquity and Intelligence

2000s: Mobile Devices and Web 2.0

The 2000s marked a pivotal transition in computing from stationary personal computers to portable, always-connected devices, driven by advancements in mobile hardware and the evolution of the internet into a participatory platform. Mobile phones evolved into smartphones, enabling seamless integration of communication, computing, and entertainment on the go. Simultaneously, the web shifted from static information delivery to dynamic, user-driven experiences, fostering collaboration and content creation on a massive scale. This era laid the groundwork for ubiquitous digital access, with innovations in processors, storage, and cloud services addressing the growing demands of mobility and data intensity.

The rise of smartphones began with Research In Motion's BlackBerry devices, which popularized mobile email and productivity tools in the early 2000s. Although the first BlackBerry pager launched in 1999, the 2002 BlackBerry 5810 introduced integrated voice calling alongside push email, defining the smartphone era for business users with its physical QWERTY keyboard and secure messaging. By mid-decade, BlackBerry's market dominance highlighted the need for portable computing beyond desktops. Apple's iPhone, unveiled in 2007, revolutionized the category by combining a touchscreen interface, internet browsing, and multimedia capabilities into a single device. It introduced multitouch gestures and, once the App Store opened in 2008, an app ecosystem that encouraged third-party software development on what became the iOS platform. The iPhone's launch emphasized intuitive user interfaces over physical buttons, setting a new standard for consumer smartphones.

Google's Android platform further democratized mobile computing with its open-source model, debuting on the HTC Dream (also known as the T-Mobile G1) in 2008. This device featured a slide-out keyboard, full touchscreen, and access to the Android Market for apps, allowing manufacturers and developers broad customization and rapid innovation.
Android's flexibility spurred competition, leading to diverse hardware options and widespread adoption by the end of the decade. These mobile advancements built on 1990s HTML foundations for web rendering but extended them into app-based ecosystems.

The term Web 2.0, popularized by Tim O'Reilly in 2004, described this interactive web evolution, emphasizing user-generated content, collective intelligence, and perpetual beta software over rigid structures. A key enabler was AJAX (Asynchronous JavaScript and XML), a term coined by Jesse James Garrett in 2005 for techniques that let web pages update dynamically without full reloads, powering responsive applications like Google Maps. Wikipedia, launched in 2001 by Jimmy Wales and Larry Sanger, exemplified Web 2.0 as a collaborative encyclopedia where volunteers edited and expanded articles in real time, growing to millions of entries by decade's end. Social media platforms amplified this interactivity: Facebook, founded in 2004 by Mark Zuckerberg, evolved from a college network into a global site for sharing profiles, photos, and connections, reaching over 350 million users by 2009. YouTube, established in 2005 by Chad Hurley, Steve Chen, and Jawed Karim, transformed video distribution by enabling easy user uploads and streaming, amassing billions of views and influencing content creation.

Hardware innovations supported these software shifts by tackling power and performance constraints. Intel's Core 2 Duo processors, introduced in 2006, brought multi-core architecture to mainstream desktops and laptops, pairing two cores on one chip while improving energy efficiency through features like Intel Wide Dynamic Execution. This addressed the end of rapid single-core clock speed gains, enabling smoother multitasking for web and mobile applications.
Solid-state drives (SSDs), commercialized by Samsung in 2006, replaced mechanical hard disks with flash memory for faster read/write speeds and greater durability, initially in enterprise systems and soon afterward in consumer laptops, reducing boot times from minutes to seconds.

Early cloud services emerged as precursors to scalable infrastructure, with Amazon Simple Storage Service (S3) launching in 2006 to provide durable, on-demand object storage via a simple API. S3's pay-as-you-go model and its design for 99.999999999% durability of objects over a given year allowed developers to offload data management from local hardware, meeting the storage needs of Web 2.0 sites and mobile apps without upfront investment.

2010s-2020s: Cloud, AI, and Quantum Advances

The 2010s marked a significant expansion in cloud computing, building on the foundational launch of Amazon Web Services' Elastic Compute Cloud (EC2) in 2006, which provided resizable virtual servers and enabled developers to scale applications dynamically without managing physical hardware.[95] By the early 2010s, EC2 and competing platforms like Microsoft Azure (launched in 2010) and Google Cloud Platform (2011) drove widespread adoption, with global cloud spending surpassing $100 billion annually by 2015, facilitating the shift from on-premises data centers to distributed infrastructures.[96] This era saw innovations like serverless computing, exemplified by AWS Lambda's release in November 2014, which allowed developers to execute code in response to events without provisioning servers, reducing operational overhead and accelerating application development.

Parallel to cloud advancements, artificial intelligence experienced a resurgence through deep learning, catalyzed by the 2012 ImageNet Large Scale Visual Recognition Challenge. There AlexNet, a convolutional neural network trained on graphics processing units (GPUs), achieved a top-5 error rate of 15.3%, dramatically outperforming prior methods and demonstrating the power of deep architectures on large datasets.[97] GPUs, particularly NVIDIA's CUDA-enabled hardware, became essential for training these models due to their parallel processing capabilities, enabling the scaling of neural networks that had previously been computationally infeasible.[97] This breakthrough spurred the modern AI boom, culminating in the public release of ChatGPT by OpenAI on November 30, 2022. A chatbot built on the GPT-3.5 large language model (LLM), it popularized generative AI for conversational tasks, amassing over 100 million users within two months and influencing applications from content creation to software assistance.[98] In 2025, AI continued to advance with Anthropic's release of Claude 4 models, including Opus 4 and
Sonnet 4, on May 22, which set new benchmarks in coding and long-running tasks through improved hybrid reasoning capabilities.[99] Later that year, OpenAI launched ChatGPT Atlas on October 21, an AI-powered web browser integrating ChatGPT for automated tasks, summaries, and enhanced browsing, challenging traditional browsers like Google Chrome.[100]

Quantum computing progressed toward practical viability with key hardware milestones, including Google's demonstration of quantum supremacy using its 53-qubit Sycamore processor in October 2019, which completed a random circuit sampling task in 200 seconds that Google estimated would take a classical supercomputer 10,000 years.[101] IBM advanced qubit scaling with the 127-qubit Eagle processor unveiled in November 2021, featuring a heavy-hex lattice design to improve connectivity and reduce error rates in superconducting circuits.[102] Error correction emerged as a critical focus in the 2023–2025 period, with Google's 2023 experiment reducing error rates in a nine-qubit surface code below the threshold for fault tolerance, and further advances in 2024 via the Willow chip achieving below-threshold correction for larger logical qubits, paving the way for scalable, error-resistant quantum systems by the mid-2020s.[103][104] Building on this, in October 2025, Google announced the Quantum Echoes algorithm, leveraging the 105-qubit Willow processor to achieve verifiable quantum advantage in scientific simulations, marking a step toward practical applications.[105] In November 2025, IBM unveiled new quantum processors and software advancements, targeting quantum advantage by the end of 2026 and fault-tolerant systems by 2029.[106]

The rollout of 5G networks beginning in 2019 enhanced edge computing by providing ultra-low latency and high bandwidth, enabling real-time data processing closer to devices for applications like autonomous vehicles and IoT.[107] Commercial 5G services launched in markets such as the United States (Verizon in
April 2019) and South Africa (Rain in September 2019), with global shipments of 5G smartphones exceeding 6.7 million units that year.[108][109] Foldable smartphones reached the mainstream with the Samsung Galaxy Fold, announced in February 2019 and released that September after its planned April launch was postponed; its 7.3-inch flexible display unfolded into a tablet-like form, signaling innovations in device form factors for immersive computing.[110] Concurrently, standalone virtual reality advanced with the Oculus Quest's release on May 21, 2019, a wireless headset with inside-out tracking that democratized VR gaming and spatial experiences without external sensors.[111]

The COVID-19 pandemic in 2020 accelerated the adoption of remote collaboration tools, with Zoom's daily meeting participants surging from 10 million in December 2019 to over 300 million by April 2020, as businesses and schools shifted to virtual platforms amid lockdowns.[112] This rapid digital transformation highlighted cloud and AI's role in sustaining productivity, with video conferencing usage increasing 3–5 times globally in early 2020.[113]

By the mid-2020s, regulatory frameworks addressed AI's societal impacts, including the European Union's AI Act, which entered into force in August 2024, classifies AI systems by risk level, and mandates transparency for high-risk applications like biometrics.[114] In the United States, President Biden's Executive Order on the Safe, Secure, and Trustworthy Development and Use of Artificial Intelligence, issued October 30, 2023, directed federal agencies to develop standards for AI safety, equity, and cybersecurity, marking a coordinated push for responsible innovation.[115]

Visual and Reference Aids

Graphical Timeline

The graphical timeline offers a visual synthesis of major computing milestones, structured as an interactive infographic spanning from 1940 to 2025 along a horizontal year-based axis. Key events are plotted as markers with descriptive labels and era-specific icons, enabling quick reference to pivotal developments across hardware, software, and networking domains. For example, the ENIAC's completion in 1945 marks an early hardware milestone with a vacuum tube icon, the iPhone's launch in 2007 represents mobile hardware innovation via a touchscreen device symbol, ChatGPT's release in 2022 highlights AI software progress through a neural network emblem, and Microsoft's Majorana 1 quantum processor in 2025 signifies quantum hardware advancement with a topological qubit icon.[43][116][117][118]

Events are color-coded for clarity: blue for hardware advancements (e.g., the 1947 transistor invention and 1971 microprocessor debut), green for software breakthroughs (e.g., the 1969 UNIX operating system and 1989 World Wide Web proposal), and orange for networking milestones (e.g., the 1969 ARPANET connection and 1993 Mosaic browser release). Icons evolve symbolically from ancient tools like the abacus for pre-1940 precursors to contemporary representations such as quantum bits for 2019 supremacy demonstrations and topological structures for 2025 quantum processors, emphasizing the progression from mechanical to quantum computing paradigms.

Zoomable segments divide the timeline into three eras: Transistor and Miniaturization (1940s-1970s), Personal and Networked Computing (1980s-1990s), and Digital Ubiquity and Intelligence (2000s-2025). Each marker is hyperlinked to the corresponding article section anchor for deeper exploration, maintaining a non-narrative focus on visual navigation.
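The Moore's Law curve overlaid on this timeline follows a simple doubling rule, and a little arithmetic shows how its quoted endpoints fit together. The Python sketch below is illustrative only. It assumes a constant two-year doubling period, a starting point of roughly 60 components in 1965, and a target of 100 billion transistors; the function and constant names are hypothetical, and real transistor counts did not follow a single fixed rate.

```python
import math

# Assumed inputs for illustration: the base year is tied to Moore's 1965
# prediction, and the counts are the round figures quoted for the curve.
BASE_YEAR = 1965
BASE_COMPONENTS = 60
TARGET_TRANSISTORS = 100e9
DOUBLING_PERIOD_YEARS = 2.0  # the revised "every two years" rate


def doublings_needed(start: float, target: float) -> float:
    """Number of doublings required to grow from start to target."""
    return math.log2(target / start)


def projected_count(start: float, elapsed_years: float, period_years: float) -> float:
    """Component count after elapsed_years of doubling every period_years."""
    return start * 2 ** (elapsed_years / period_years)


doublings = doublings_needed(BASE_COMPONENTS, TARGET_TRANSISTORS)
years = doublings * DOUBLING_PERIOD_YEARS

print(f"doublings needed: {doublings:.1f}")                  # about 30.6
print(f"years at a 2-year period: {years:.0f}")              # about 61
print(f"projected crossing year: {BASE_YEAR + years:.0f}")   # about 2026
```

Under these assumptions about 30.6 doublings are needed, which at two years each takes roughly 61 years and lands in the mid-2020s, consistent with processors exceeding 100 billion transistors in that decade. At the original one-year rate the same count would have been reached in the mid-1990s, which shows how much the revised doubling period matters.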
An integrated line graph overlays the timeline to depict computing power growth via Moore's Law. It traces the exponential rise in transistors per chip from Gordon Moore's 1965 prediction of doubling every year (later revised to every two years), starting with roughly 60 components in early integrated circuits and reaching over 100 billion in advanced 2020s processors such as high-performance GPUs. The curve is plotted on a logarithmic scale and underscores the scaling that has driven computational density.[119][120]

Key Milestones Summary

| Year | Event | Category | Impact |
|---|---|---|---|
| 1837 | Charles Babbage designs the Analytical Engine | Hardware | Established the conceptual foundation for a general-purpose programmable computer, influencing modern computing architecture.[121] |
| 1945 | Completion of ENIAC by John Mauchly and J. Presper Eckert | Hardware | Demonstrated the first general-purpose electronic digital computer, accelerating scientific computations during and after World War II. |
| 1952 | Grace Hopper develops the A-0 compiler for UNIVAC | Software | Pioneered automatic translation of symbolic code to machine code, laying groundwork for high-level programming languages.[122] |
| 1964 | IBM announces System/360 family of computers | Hardware | Introduced compatibility across a range of machines, standardizing business computing and enabling scalable data processing. |
| 1969 | ARPANET connects four university nodes | Network | Created the precursor to the Internet, enabling packet-switched networking and remote collaboration. |
| 1971 | Intel releases the 4004 microprocessor | Hardware | Marked the beginning of the microprocessor era, allowing integration of CPU functions into single chips for smaller devices. |
| 1981 | IBM launches the IBM Personal Computer (PC) | Hardware | Standardized personal computing hardware, spurring the PC industry and widespread adoption in homes and offices. |
| 1985 | Radia Perlman invents the Spanning Tree Protocol (STP) | Network | Prevented loops in Ethernet networks, enabling reliable, scalable local area networks essential for modern infrastructure.[123] |
| 1989 | Tim Berners-Lee proposes the World Wide Web at CERN | Network | Facilitated hypertext-based information sharing over the Internet, transforming global communication and access to knowledge. |
| 1991 | Linus Torvalds releases the first Linux kernel | Software | Provided a free, open-source operating system kernel, fostering collaborative development and powering servers worldwide. |
| 2007 | Apple introduces the iPhone | Hardware | Revolutionized mobile computing by integrating phone, internet, and media player, popularizing touch interfaces and app ecosystems. |
| 2011 | IBM Watson wins Jeopardy! | Software | Showcased advanced natural language processing and question-answering AI, advancing applications in healthcare and business.[124] |
| 2022 | OpenAI releases ChatGPT | Software | Mainstreamed generative AI for conversational interactions, influencing education, productivity, and creative tools.[117] |
| 2023 | xAI launches Grok AI chatbot | Software | Introduced an AI model emphasizing truth-seeking and real-time knowledge integration via X platform, competing in the generative AI space.[125] |
| 2024 | Apple announces Apple Intelligence | Software | Integrated generative AI features into iOS, enhancing on-device privacy-focused tools like writing assistance and image generation.[126] |
| 2025 | Microsoft unveils Majorana 1 quantum processor | Hardware | Introduced the first processor powered by topological qubits using topoconductors, advancing scalable and error-resistant quantum computing.[118] |
| 2025 | NVIDIA releases DGX Spark AI supercomputer | Hardware | Launched the world's smallest AI supercomputer delivering 1 petaFLOP of AI performance, enabling accessible high-performance AI for developers.[127] |