Digital art

Digital art, or the digital arts, is artistic work that uses digital technology as part of the creative or presentational process. It can also refer to computational art that uses and engages with digital media.[2] Since the 1960s, various names have been used to describe digital art, including computer art, electronic art, multimedia art,[3] and new media art.[4][5] Digital art includes pieces stored on physical media, such as with digital painting, as well as digital galleries on websites. Digital art also extends to the field of visual computing.

History

In the early 1960s, John Whitney developed the first computer-generated art using mathematical operations.[6] In 1963, Ivan Sutherland invented Sketchpad, the first user-interactive computer-graphics interface.[7] Between 1974 and 1977, Salvador Dalí created two large canvases of Gala Contemplating the Mediterranean Sea which at a distance of 20 meters is transformed into the portrait of Abraham Lincoln (Homage to Rothko)[8] and prints of Lincoln in Dalivision, based on a portrait of Abraham Lincoln processed on a computer by Leon Harmon and published in "The Recognition of Faces".[9] The technique is similar to what later became known as photographic mosaics.

Andy Warhol created digital art using an Amiga when the computer was publicly introduced at Lincoln Center in July 1985. An image of Debbie Harry was captured in monochrome from a video camera and digitized into a graphics program called ProPaint. Warhol manipulated the image by adding color using flood fills.[10][11]

Art made for digital media

Artwork that is highly computational, presented through digital media, and explicitly engaged with digital technologies is categorized as "art made for digital media". This differs from art using digital tools, which incorporates digital technology in the creation process but may exist outside the digital world.

Digital art historian Christiane Paul writes that it "is highly problematic to classify all art that makes use of digital technologies somewhere in its production and dissemination process as digital art since it makes it almost impossible to arrive at any unifying statement about the art form".[12]

Art that uses digital tools

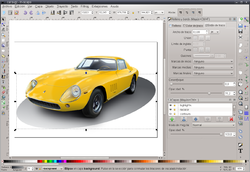

Digital art can be purely computer-generated (such as fractals and algorithmic art) or taken from other sources, such as a scanned photograph or an image drawn using vector graphics software using a mouse or graphics tablet. Artworks are considered digital paintings when created similarly to non-digital paintings but using software on a computer platform and digitally outputting the resulting image as painted on canvas.

Despite differing viewpoints on digital technology's impact on the arts, a consensus exists within the digital art community about its significant contribution to expanding the creative domain, i.e., that it has greatly broadened the creative opportunities available to professional and non-professional artists alike.[13]

Art theorists and art historians

Notable art theorists and historians in this field include: Oliver Grau, Jon Ippolito, Christiane Paul, Frank Popper, Jasia Reichardt, Mario Costa, Christine Buci-Glucksmann, Dominique Moulon, Roy Ascott, Catherine Perret, Margot Lovejoy, Edmond Couchot, Tina Rivers Ryan, Fred Forest and Edward A. Shanken.

Digital painting

Digital painting is either a physical painting made with digital electronics and spray-paint robotics within a fine-art context[14] or pictorial imagery made with pixels on a computer screen that mimics artworks from the traditional histories of painting and illustration.[15]

Artificial intelligence art

Artists have used artificial intelligence to create artwork since at least the 1960s.[16] Since generative adversarial networks (GANs) were designed in 2014, some artists have used them to create artwork. A GAN is a machine learning framework in which two "algorithms" compete with each other and iterate.[17][18] GANs can be used to generate pictures with visual effects similar to traditional fine art. The essential idea of text-to-image generators is that a user supplies a text description and the AI converts it into visual picture content. Anyone can turn a description into a painting with such a generator.[19]

Digital art education

Digital art education has become more common with the advancement of digital hardware and software. The hardware involved includes graphics tablets, styluses, tablet computers, 3D scanners, virtual reality headsets, and digital cameras. The software includes digital art software, 3D modeling software, 3D rendering, digital sculpting, 2D graphics software, digital painting, 3D terrain generation, 2D animation software, 3D animation software, raster graphics editors, vector graphics editors, mathematical art software, and video editing software.[20][21]

Scholarship and archives

In addition to the creation of original art, research methods that use AI have been developed to quantitatively analyze digital art collections. This has been made possible by the large-scale digitization of artwork over the past few decades.[22] Although the main goal of digitization was to make these collections accessible and explorable, the use of AI in analyzing them has brought about new research perspectives.[23]

Two computational methods, close reading and distant viewing, are the typical approaches used to analyze digitized art.[24] Close reading focuses on specific visual aspects of a single piece. Tasks performed by machines in close reading include computational artist authentication and analysis of brushstrokes or texture properties. In contrast, distant viewing analyzes large collections: the similarity across an entire collection for a specific feature can be statistically visualized. Common tasks relating to this method include automatic classification, object detection, multimodal tasks, knowledge discovery in art history, and computational aesthetics.[23]
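As a rough illustration of the distant viewing idea, the sketch below compares every pair of images in a small collection by one feature, a coarse color histogram, and scores their similarity with histogram intersection. The feature choice, the function names, and the toy pixel data are assumptions made for illustration; they are not taken from the cited studies, which use far richer features.

```python
from itertools import combinations
from typing import Dict, List, Sequence, Tuple

Pixel = Tuple[int, int, int]  # (red, green, blue), each 0..255


def color_histogram(pixels: Sequence[Pixel], bins: int = 4) -> List[float]:
    """Return a normalized histogram over bins**3 coarse color cells."""
    if not pixels:
        raise ValueError("an image needs at least one pixel")
    counts = [0] * (bins ** 3)
    for r, g, b in pixels:
        # Map each 0..255 channel value to a bin index in 0..bins-1.
        ri, gi, bi = r * bins // 256, g * bins // 256, b * bins // 256
        counts[ri * bins * bins + gi * bins + bi] += 1
    total = len(pixels)
    return [c / total for c in counts]


def histogram_intersection(a: Sequence[float], b: Sequence[float]) -> float:
    """Similarity in [0, 1]; 1 means identical normalized histograms."""
    return sum(min(x, y) for x, y in zip(a, b))


def pairwise_similarity(
    collection: Dict[str, Sequence[Pixel]]
) -> Dict[Tuple[str, str], float]:
    """Score every pair of works in the collection on the color feature."""
    hists = {name: color_histogram(px) for name, px in collection.items()}
    return {
        (m, n): histogram_intersection(hists[m], hists[n])
        for m, n in combinations(sorted(hists), 2)
    }


# Hypothetical three-image collection of flat color fields.
toy_collection = {
    "red_a": [(255, 0, 0)] * 4,
    "red_b": [(250, 10, 5)] * 4,
    "blue": [(0, 0, 255)] * 4,
}
similarities = pairwise_similarity(toy_collection)
```

The resulting table of pairwise scores is the kind of collection-level data that could then be clustered or plotted, whereas a close reading would examine one image in detail.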

Whilst 2D and 3D digital art is beneficial as it allows the preservation of history that would otherwise have been destroyed by events like natural disasters and war, there is the issue of who should own these 3D scans – i.e., who should own the digital copyrights.[25]

Computer demos

Computer demos are computer programs, usually non-interactive, that produce audiovisual presentations. They are a novel form of art, which emerged as a consequence of the home computer revolution in the early 1980s. In the classification of digital art, they can be best described as real-time procedurally generated animated audio-visuals.

This form of art concentrates not only on the aesthetics of the final presentation but also on the complexity and skill involved in creating it. As such, it can be fully enjoyed only by people with relatively high knowledge of the relevant computer technologies. As Hua Jin and Jie Yang put it, using computer-aided design software to present class content in art design teaching "is not to advocate computer-aided design instead of hand-drawn performance, but to make it serve the profession earlier through a more reasonable course arrangement."[26]

On the other hand, many of the created pieces of art are primarily aesthetic or amusing, and those can be enjoyed by the general public.
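To make "procedurally generated animated" visuals concrete, here is a minimal sketch of a plasma-style effect of the kind often associated with demos: each frame is computed from a formula of position and time instead of being stored. The specific formula, the constants, and the text-mode output are illustrative assumptions, not a reconstruction of any particular demo.

```python
import math

PALETTE = " .:-=+*#%@"  # dark to bright, for text-mode output


def plasma(x: float, y: float, t: float) -> float:
    """Return an intensity in [0, 1] for position (x, y) at time t."""
    v = (
        math.sin(x * 0.3 + t)
        + math.sin(y * 0.2 - t)
        + math.sin((x + y) * 0.1 + t * 0.5)
    )
    return (v + 3.0) / 6.0  # the sum of three sines lies in [-3, 3]


def render_frame(width: int, height: int, t: float) -> str:
    """Render one frame as text; calling it with increasing t animates it."""
    rows = []
    for y in range(height):
        chars = []
        for x in range(width):
            index = min(int(plasma(x, y, t) * len(PALETTE)), len(PALETTE) - 1)
            chars.append(PALETTE[index])
        rows.append("".join(chars))
    return "\n".join(rows)


if __name__ == "__main__":
    print(render_frame(60, 20, t=0.0))
```

Because nothing is precomputed, the whole animation is defined by a few lines of arithmetic, which is part of the craft that such works put on display.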

Digital installation art

Digital installation art constitutes a broad field of artistic practices and a variety of forms.

Some resemble video installations, especially large-scale works involving projections and live video capture. By using projection techniques that enhance an audience's impression of sensory envelopment, many digital installations attempt to create immersive environments.

Others go even further and attempt to facilitate complete immersion in virtual realms. This type of installation is generally site-specific, scalable, and without fixed dimensionality, meaning it can be reconfigured to accommodate different presentation spaces.[27]

Scott Snibbe's "Boundary Functions" is an example of augmented reality digital installation art, which responds to people who enter the installation by drawing lines between people, indicating their personal space.[1] Noah Wardrip-Fruin's "Screen" (2003) utilizes a Cave Automatic Virtual Environment (CAVE) to create an interactive, text-based digital experience that engages the viewer in a multi-sensory interaction.[28]

Internet art and net.art

Internet art is digital art that uses the specific characteristics of the Internet and is exhibited on the Internet. The broader term "net art" encompasses "internet art"; artists who use it assume that networks will be renewed over the course of history. The term "post-internet art" is therefore used to exclude artworks outside of the internet medium.[29]

A representative example is Protocols for Achievements, which is a digital photo frame that confronts the aesthetics of kitsch, and inserts individual artistic dynamics within institutional media.[30]

Digital art and blockchain

Blockchain, and more specifically non-fungible tokens (NFTs), have been a common tool for digital art since the NFT boom of 2020–2021.[31] By minting digital artworks as NFTs, artists can establish provable ownership.[32][33] However, the technology has received much criticism and has many flaws related to plagiarism and fraud, owing to its almost completely unregulated nature.[34]

Furthermore, auction houses, museums, and galleries around the world have started to integrate NFTs and collaborate with digital artists, exhibiting their artworks (associated with the respective NFTs) both in virtual galleries and real-life screens, monitors, and TVs.[35][36][37]

In March 2024, Sotheby's presented an auction highlighting significant contributions of digital artists over the previous decade,[38] one of many record-breaking auctions of digital artwork by the auction house. These auctions look broadly at the cultural impact of digital art in the 21st century and feature work by artists such as Jennifer & Kevin McCoy, Vera Molnár, Claudia Hart, Jonathan Monaghan, and Sarah Zucker.[39][40]

Computer-generated visual media

Digital visual art consists of either 2D visual information displayed on an electronic visual display or information mathematically translated into 3D information viewed through perspective projection on an electronic visual display. The simplest form, 2D computer graphics, reflects how one might draw with pencil and paper. In this case, however, the image is on the computer screen, and the drawing instrument might be a tablet stylus or a mouse. What is generated on the screen might appear to be drawn with a pencil, pen, or paintbrush. The second kind is 3D computer graphics, where the screen becomes a window into a virtual environment in which the artist arranges objects to be "photographed" by the computer.
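The perspective projection mentioned above can be sketched in a few lines. The example assumes a simple pinhole camera at the origin looking along the positive z axis; the function name `project` and the `focal_length` parameter are illustrative choices, and real renderers add view transforms, clipping, and rasterization on top of this step.

```python
from typing import Tuple

Point3D = Tuple[float, float, float]
Point2D = Tuple[float, float]


def project(point: Point3D, focal_length: float = 1.0) -> Point2D:
    """Project a camera-space point onto the image plane z = focal_length."""
    x, y, z = point
    if z <= 0:
        raise ValueError("point must be in front of the camera (z > 0)")
    # Similar triangles: screen coordinates shrink in proportion to depth.
    scale = focal_length / z
    return (x * scale, y * scale)


# The same corner of an object, placed at two different depths.
near_corner = project((1.0, 1.0, 2.0))  # (0.5, 0.5)
far_corner = project((1.0, 1.0, 4.0))   # (0.25, 0.25)
```

Doubling the depth halves the projected coordinates, which is what makes distant objects appear smaller when the scene is "photographed".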

Typically, 2D computer graphics use raster graphics as their primary means of source data representation, whereas 3D computer graphics use vector graphics in the creation of immersive virtual reality installations. A possible third paradigm is to generate art in 2D or 3D entirely through the execution of algorithms coded into computer programs. This can be considered the native art form of the computer; an introduction to its history is available in an interview with computer art pioneer Frieder Nake.[41] Fractal art, datamoshing, algorithmic art, and real-time generative art are examples.
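As one small instance of art generated entirely by an algorithm, the sketch below renders the Mandelbrot set, a well-known fractal, as text. The viewport bounds, the character palette, and the function names are assumptions chosen for illustration; fractal artists typically work with color mapping and much higher resolution.

```python
def mandelbrot_iterations(c: complex, max_iter: int = 50) -> int:
    """Count iterations of z -> z*z + c before |z| exceeds 2 (capped)."""
    z = 0j
    for i in range(max_iter):
        if abs(z) > 2.0:
            return i
        z = z * z + c
    return max_iter  # never escaped: treated as inside the set


def render_ascii(width: int = 60, height: int = 24, max_iter: int = 50) -> str:
    """Map each character cell to a point of the complex plane and shade it."""
    palette = " .:-=+*#%@"
    rows = []
    for row in range(height):
        y = -1.2 + 2.4 * row / (height - 1)
        line = []
        for col in range(width):
            x = -2.0 + 3.0 * col / (width - 1)
            n = mandelbrot_iterations(complex(x, y), max_iter)
            line.append(palette[n * (len(palette) - 1) // max_iter])
        rows.append("".join(line))
    return "\n".join(rows)


if __name__ == "__main__":
    print(render_ascii())
```

The entire image follows from one repeated formula, so changing a constant such as the viewport or `max_iter` yields a different picture without any manual drawing.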

Computer-generated 3D still imagery

3D graphics are created via the process of designing imagery from geometric shapes, polygons, or NURBS curves[42] to create three-dimensional objects and scenes for use in various media such as film, television, print, rapid prototyping, games/simulations, and special visual effects.

There are many software programs for doing this. The technology can enable collaboration, lending itself to sharing and augmenting by a creative effort similar to the open source movement and the creative commons in which users can collaborate on a project to create art.[43]

Computer-generated animated imagery

Computer-generated animations are animations created with a computer from digital models that are either made by 3D artists or procedurally generated. The term is usually applied to works created entirely with a computer. Movies make heavy use of computer-generated graphics, which are called computer-generated imagery (CGI) in the film industry. In the 1990s and early 2000s, CGI advanced enough that, for the first time, it was possible to create realistic 3D computer animation, although films had been using computer images extensively since the mid-1970s. A number of modern films have been noted for their heavy use of photo-realistic CGI.[44]

Generation process

Generally, the user sets the input, which describes in detail the picture the user wants: for example, the scene, characters, weather, relationships between characters, or specific items. The input can also specify an artist's style, screen style, image size in pixels, brightness, and so on. The picture generator then returns several similar pictures[18] generated from the input (typically four at present). After receiving the results, the user can select one picture as the desired result or have the generator redraw and return new pictures.

Awards and recognition

In both 1991 and 1992, Karl Sims won the Golden Nica award at Prix Ars Electronica for his 3D AI animated videos using artificial evolution.[45] In 2009, Eric Millikin won the Pulitzer Prize along with several other awards for his artificial intelligence art that was critical of government corruption in Detroit and resulted in the city's mayor being sent to jail.[46][47] In 2018, Christie's auction house in New York sold an artificial intelligence work, "Edmond de Bellamy", for US$432,500. It was created by a collective in Paris named "Obvious".[48]

In 2019, Stephanie Dinkins won the Creative Capital award for her creation of an evolving artificial intelligence based on the "interests and culture(s) of people of color."[49] In 2022, an amateur artist using Midjourney won the first-place $300 prize in a digital art competition at the Colorado State Fair.[50][19] Also in 2022, Refik Anadol created an artificial intelligence art installation at the Museum of Modern Art in New York, based on the museum's own collection.[51]

List of digital art software

List of digital art software[52][53][54]

| Software | Developer | Platform | License |
|---|---|---|---|
| 3D-Coat | Pilgway | Windows, macOS | Trialware |
| Adobe Fresco | Adobe Inc. | Windows, iOS, iPadOS | Freemium |
| Adobe Photoshop | Adobe Inc. | Windows, macOS | Proprietary |
| Adobe Illustrator | Adobe Inc. | Windows, macOS, iPadOS | Proprietary |
| Adobe Substance 3D Modeler | Adobe Inc. | Windows, macOS | Proprietary |
| Affinity Designer | Serif | Windows, macOS | Proprietary |
| ArtRage | Ambient Design Ltd | Windows, macOS, iOS, Android | Proprietary EULA |
| Artweaver | Boris Eyrich Software | Windows | Freemium |
| Autodesk SketchBook | Autodesk | Windows, macOS, iOS, Android | Freemium |
| Blender | Blender Foundation | Windows, macOS, Linux | GPLv2 |
| Corel Painter | Corel Corporation | Windows, macOS | Proprietary |
| Clip Studio Paint | Celsys, Inc. | Windows, macOS, iOS, Android | Proprietary |
| GIMP | GNU Image Manipulation Program | Windows, macOS, Linux | GPLv3 |
| Inkscape | Inkscape Developers | Windows, macOS, Linux | GPLv2 |
| Krita | Krita Foundation | Windows, macOS, Linux | GPLv3 |
| Mudbox | Autodesk | Windows, macOS | Proprietary |
| MyPaint | MyPaint Contributors | Windows, macOS, Linux, BSD | GPLv2 |
| Pencil2D | Pencil2D Team | Windows, macOS, Linux | GPLv2 |
| Procreate | Savage Interactive | iPadOS | Proprietary |
| Terragen | Planetside Software | Windows, macOS | Freeware |
| ZBrush | Pixologic | Windows, macOS | Proprietary |

List of 2D digital art repositories

Repositories for 2D and vector digital art offer pieces for download, either individually or in bulk. Proprietary repositories require a purchase to license or use any image, while those operating under freemium models, such as Flaticon and Vecteezy, provide some images for free and others for a fee based on tiers.[55][56]

List of 2D digital art repositories[55][57]

| Repository | Company | License |
|---|---|---|
| Vecteezy | Eezy LLC | Freemium |
| Flaticon | Freepik Company | Freemium |
| The Noun Project | Noun Project Inc. | Freemium |
| Openclipart | Community-driven | Public domain |
| Pixabay | Canva | Free use (Pixabay Content License) |
| Shutterstock | Shutterstock, Inc. | Proprietary |

Subtypes

- Art game

- ASCII art

- Chip art

- Computer art scene

- Computer music

- Crypto art

- Cyberarts

- Digital illustration

- Digital imaging

- Digital literature

- Digital painting

- Digital photography

- Digital poetry

- Digital sculpture

- Digital architecture

- Electronic music

- Evolutionary art

- Holography art

- Fractal art

- Generative art

- Generative music

- GIF art

- Immersion (virtual reality)

- Interactive art

- Internet art

- Motion graphics

- Music visualization

- Photo manipulation

- Pixel art

- Render art

- Software art

- Systems art

- Textures

References

- ^ a b Snibbe, Scott (1998). "Boundary Functions - Interactive Art".

- ^ Paul, Christiane (2016). "Introduction From Digital to Post-Digital—Evolutions of an Art Form". In Paul, Christiane (ed.). A Companion to Digital Art. Malden, MA: Wiley. pp. 1–2. ISBN 978-1-118-47520-1.

- ^ Reichardt, Jasia (1974). "Twenty years of symbiosis between art and science". Art and Science. XXIV (1): 41–53.

- ^ Christiane Paul (2006). Digital Art, pp. 7–8. Thames & Hudson.

- ^ Lieser, Wolf. Digital Art. Langenscheidt: h.f. ullmann. 2009, pp. 13–15

- ^ Grierson, Mick. "Creative Coding for Audiovisual Art: The CodeCircle Platform" (PDF).

- ^ "Sketchpad | computer program | Britannica". www.britannica.com. Retrieved 2022-12-01.

- ^ Pitxot, Antoni; Aguer, Montse (2022). "Cúpula". Guía - Teatro-Museo Dalí - Figueres (in Spanish). Barcelona: Fundació Gala-Salvador Dalí - Triangle Books. p. 97. ISBN 978-84-8478-714-3.

A la derecha llama la atención el inmenso óleo fotográfico "Gala desnuda mirando al mar que a 18 metros aparece el presidente Lincoln" (1975), nueva muestra anticipadora de Dalí que representa, en este caso, el primer ejemplo de utilización de imagen digitalizada en la pintura.

[On the right, attention is drawn to the immense photographic oil painting "Nude Gala Looking at the Sea that from 18 Meters Appears as Lincoln" (1975), another anticipatory work by Dalí that represents, in this case, the first example of the use of a digitized image in painting.]

- ^ Harmon, Leon D. (November 1973). "The Recognition of Faces". Scientific American. 229 (5): 70–82. Bibcode:1973SciAm.229e..70H. doi:10.1038/scientificamerican1173-70. PMID 4748120. Retrieved 4 September 2023.

- ^ Reimer, Jeremy (October 21, 2007). "A history of the Amiga, part 4: Enter Commodore". Arstechnica.com. Retrieved June 10, 2011.

- ^ YouTube. Archived from the original on 2009-05-07.

- ^ Christiane Paul, ed. (2016). A companion to digital art. Chichester, West Sussex: Wiley. pp. 1–2. ISBN 978-1-118-47521-8. OCLC 925426732.

- ^ Bessette, Juliette; Frederic Fol Leymarie; Glenn W. Smith (16 September 2019). "Trends and Anti-Trends in Techno-Art Scholarship: The Legacy of the Arts "Machine" Special Issues". Arts. 8 (3): 120. doi:10.3390/arts8030120.

- ^ Christiane Paul, Digital Art, Thames & Hudson World of Art, 2003, pp. 51-60

- ^ Christiane Paul Digital Art, Fourth Edition, Thames & Hudson Ltd, pp. 177-181

- ^ McCorduck, Pamela (1991). Aaron's Code: Meta-Art, Artificial Intelligence, and the Work of Harold Cohen. New York: W. H. Freeman and Company. p. 210. ISBN 0-7167-2173-2.

- ^ Karpathy, Andrej; Abbeel, Pieter; Brockman, Greg; Chen, Peter; Cheung, Vicki; Duan, Rock; Goodfellow, Ian; Kingma, Durk; Ho, Jonathan; Houthooft, Rein; Salimans, Tim; Schulman, John; Sutskever, Ilya; Zaremba, Wojciech (2016-06-16). "Generative Models, OpenAI". OpenAI. Retrieved 2022-10-09.

- ^ a b Ramesh, Aditya; Pavlov, Mikhail; Goh, Gabriel; Gray, Scott; Voss, Chelsea; Radford, Alec; Chen, Mark; Sutskever, Ilya (2021-02-26). "Zero-Shot Text-to-Image Generation". arXiv:2102.12092 [cs.CV].

- ^ a b Roose, Kevin (2022-09-02). "An A.I.-Generated Picture Won an Art Prize. Artists Aren't Happy". The New York Times. Retrieved 2022-10-04.

- ^ "How Tech is Reinventing Arts Education".

- ^ "Integrating Technology in Art Education: Tools and Techniques". 17 October 2024.

- ^ Lang, Sabine; Ommer, Björn (2021-08-21). "Transforming Information Into Knowledge: How Computational Methods Reshape Art History". Digital Humanities Quarterly. 015 (3). ISSN 1938-4122.

- ^ a b Cetinic, Eva; She, James (2022-02-16). "Understanding and Creating Art with AI: Review and Outlook". ACM Transactions on Multimedia Computing, Communications, and Applications. 18 (2): 66:1–66:22. arXiv:2102.09109. doi:10.1145/3475799. ISSN 1551-6857. S2CID 231951381.

- ^ Lang, Sabine; Ommer, Bjorn (2018). "Reflecting on How Artworks Are Processed and Analyzed by Computer Vision: Supplementary Material". Proceedings of the European Conference on Computer Vision (ECCV) Workshops – via Computer Vision Foundation.

- ^ Sydell, Laura (21 May 2018). "3D Scans Help Preserve History, But Who Should Own Them? 2018". NPR. Archived from the original on 2022-01-18. Retrieved 7 February 2021.

- ^ Jin, H; Yang, J (2021). "Using computer-aided design software in teaching environmental art design" (PDF). Computer-Aided Design and Applications. 19 (S1): 173–183. doi:10.14733/cadaps.2022.S1.173-183.

- ^ Paul, Christiane (2023). Digital art (4th ed.). London: Thames & Hudson. p. 71. ISBN 9780500204801.

- ^ Wardrip-Fruin, Noah (2002). "screen".

- ^ DANAE (2019-12-02). "Net Art, Post-internet Art, New Aesthetics: The Fundamentals of Art on the Internet". DANAE.IO.

- ^ GCC (2013). "Protocols for Achievements". Art Post-Internet.

- ^ Sestino, Andrea; Guido, Gianluigi; Peluso, Alessandro M. (2022). Non-Fungible Tokens (NFTs). Examining the Impact on Consumers and Marketing Strategies. Palgrave. p. 26 f. doi:10.1007/978-3-031-07203-1. ISBN 978-3-031-07202-4. S2CID 250238540.

- ^ Kugler, Logan (2021). "Non-Fungible Tokens and the Future of Art". Communications of the ACM. 64 (9): 19–20. doi:10.1145/3474355. S2CID 237283169.

There is nothing stopping someone online from viewing, copying, and sharing a digital art file, but thanks to NFTs, they cannot fake possession of the art. NFTs make it possible to have exclusive ownership of digital art — something that was previously impossible.

- ^ Trautman, Lawrence J. (2021). "Virtual Art and Non-fungible Tokens". SSRN Electronic Journal. doi:10.2139/ssrn.3814087. ISSN 1556-5068.

Trautman references Zittrain, Jonathan; Marks, Will (7 April 2021). "What Critics Don't Understand About NFTs. The complexity and arbitrariness of non-fungible tokens are a big part of their appeal". The Atlantic. Retrieved 11 January 2023. The buyer is not, however, acquiring anything that they alone can use. (...) an NFT buyer is not purchasing a work, but rather a publicly available token that links to a work. (...) The token itself is visible to all, as is the work to which it points, so anyone else can look at the work and download it. And most NFT transactions don't purport to convey copyright or other intellectual-property interests regarding the work in question (...) By these terms, many NFT purchases are akin to acquiring a piece of art that nevertheless remains in the gallery where it was sold, open all the time to members of the public, who may grab a free print of the work after their visit.

- ^ Lu, Fei (2022-01-06). "Does NFT Art Have A Place In The Museum In 2022?". Jing Daily Culture.

- ^ Trautman, Lawrence J. (2022). "Virtual Art and Non-Fungible Tokens". Hofstra Law Review. 50 (361): 371. doi:10.2139/ssrn.3814087. S2CID 234830426.

- ^ "Natively Digital: A Curated NFT Sale". sothebys.com. 2021-06-03.

- ^ Kastrenakes, Jacob (2021-03-11). "Beeple sold an NFT for $69 million". theverge.com.

- ^ "Evolutionaries Digital Art Through The Decade". sothebys.com. 2024-03-15.

- ^ Tremayne-Pengelly, Alexandra (2023-10-27). "Traditional and Digital Art Will Merge in Sotheby's ThankYouX Show". The New York Observer.

- ^ Escalante-De Mattei, Shanti (2022-04-13). "Sotheby's Is Launching Another Digital Art Auction, This Time on the Art Before NFTs". ARTnews.

- ^ Smith, Glenn (31 May 2019). "An Interview with Frieder Nake". Arts. 8 (2): 69. doi:10.3390/arts8020069.

- ^ Wands, Bruce (2006). Art of the Digital Age, pp. 15–16. Thames & Hudson.

- ^ Foundation, Blender. "About". blender.org. Retrieved 2021-02-25.

- ^ Lev Manovich (2001) The Language of New Media Cambridge, Massachusetts: The MIT Press.

- ^ "Golden Nicas". Ars Electronica Center. Retrieved 2023-02-26.

- ^ "Mayoral reporting: Free Press wins top honor". (April 1, 2009). Detroit Free Press, p. 5A.

- ^ "Free Press wins its 9th Pulitzer; Reporting led to downfall of mayor". (April 21, 2009). Detroit Free Press, p.1A.

- ^ Cohn, Gabe (2018-10-25). "AI Art at Christie's Sells for $432,500". The New York Times. ISSN 0362-4331. Retrieved 2022-10-04.

- ^ "Not the Only One". Creative Capital. Retrieved 2023-02-26.

- ^ "2022 Fine Arts Placings of the Colorado State Fair" (PDF).

- ^ "Refik Anadol: Unsupervised | MoMA". The Museum of Modern Art. Retrieved 2023-02-26.

- ^ "15 Best Free Drawing Software for 2024".

- ^ "7 Best Software for Drawing Tablets".

- ^ "Best Drawing Apps and Software in 2024 (Free & Paid)". 4 September 2019.

- ^ a b "13 Platforms to Get Icons for Your Website [Free and Paid]". 26 July 2020.

- ^ "10 Best Websites For Free Graphics & Vector Designs - PageTraffic". 2022-05-18. Retrieved 2024-08-25.

- ^ Estefani, Nikka (27 July 2023). "10 Best Free Vector Websites for Designers". UI Garage. Archived from the original on 23 May 2024.

External links

- Media related to Digital art at Wikimedia Commons

- Dreher, Thomas. "History of Computer Art"

- Zorich, Diane M. "Transitioning to a Digital World"

- Chitrakala "Digital Art"