Motion capture

Motion capture (sometimes referred to as mocap or mo-cap, for short) is the process of recording high-resolution movement of objects or people into a computer system. It is used in military, entertainment, sports, medical applications, and for validation of computer vision[3] and robots.[4]

In films, television shows and video games, motion capture refers to recording actions of human actors and using that information to animate digital character models in 2D or 3D computer animation.[5][6][7] When it includes face and fingers or captures subtle expressions, it is often referred to as performance capture.[8] In many fields, motion capture is sometimes called motion tracking, but in filmmaking and games, motion tracking usually refers more to match moving.

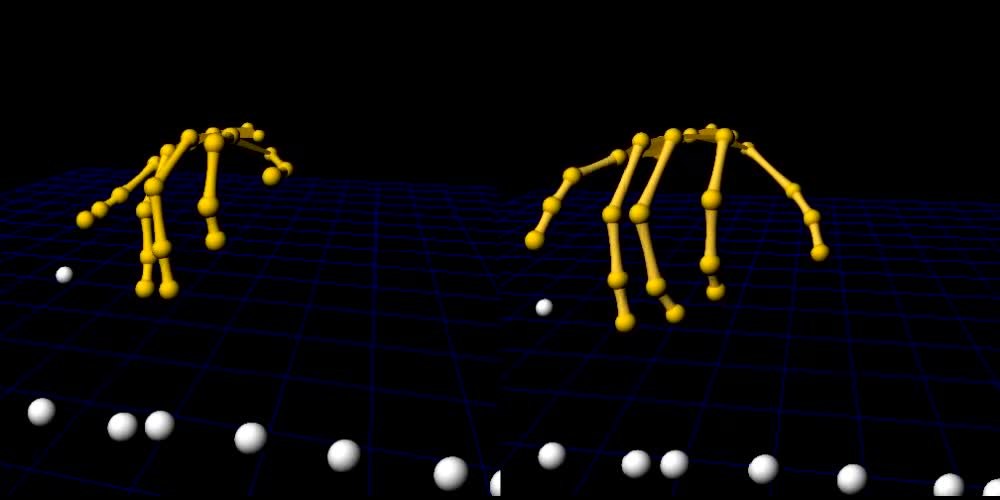

In motion capture sessions, movements of one or more actors are sampled many times per second. Whereas early techniques used images from multiple cameras to calculate 3D positions,[9] often the purpose of motion capture is to record only the movements of the actor, not their visual appearance. This animation data is mapped to a 3D model so that the model performs the same actions as the actor. This process may be contrasted with the older technique of rotoscoping.

Camera movements can also be motion captured so that a virtual camera in the scene will pan, tilt or dolly around the stage driven by a camera operator while the actor is performing. At the same time, the motion capture system can capture the camera and props as well as the actor's performance. This allows the computer-generated characters, images and sets to have the same perspective as the video images from the camera. A computer processes the data and displays the movements of the actor, providing the desired camera positions in terms of objects in the set. Retroactively obtaining camera movement data from the captured footage is known as match moving or camera tracking.

The first virtual actor animated by motion-capture was produced in 1993 by Didier Pourcel and his team at Gribouille. It involved "cloning" the body and face of French comedian Richard Bohringer, and then animating it with still-nascent motion-capture tools.

Advantages

Motion capture offers several advantages over traditional computer animation of a 3D model:

- Low-latency, near-real-time results can be obtained. In entertainment applications, this can reduce the costs of keyframe-based animation.[10] The Hand Over technique is an example of this.

- The amount of work does not vary with the complexity or length of the performance to the same degree as when using traditional techniques. This allows many tests to be done with different styles or deliveries, giving a distinct personality that is only limited by the talent of the actor.

- Complex movement and realistic physical interactions such as secondary motions, weight, and exchange of forces can be easily recreated in a physically accurate manner.[11]

- The amount of animation data that can be produced within a given time is extremely large when compared to traditional animation techniques. This contributes to both cost-effectiveness and meeting production deadlines.[12]

- Potential for free software and third-party solutions reducing its costs.

Disadvantages

- Specific hardware and special software programs are required to obtain and process the data.

- The cost of the software, equipment and personnel required can be prohibitive for small productions.

- The capture system may have specific requirements for the space in which it is operated, depending on camera field of view or magnetic distortion.

- When problems occur, it is easier to shoot the scene again rather than trying to manipulate the data. Only a few systems allow real-time viewing of the data to decide if the take needs to be redone.

- The initial results are limited to what can be performed within the capture volume without extra editing of the data.

- Movement that does not follow the laws of physics cannot be captured.

- Traditional animation techniques, such as added emphasis on anticipation and follow through, secondary motion or manipulating the shape of the character, as with squash and stretch animation techniques, must be added later.

- If the computer model has different proportions from the capture subject, artifacts may occur. For example, if a cartoon character has large, oversized hands, these may intersect the character's body if the human performer is not careful with their physical motion.

Applications

There are many applications of motion capture. The most common are video games, movies, and movement capture. The technology also has research applications, such as robotics development at Purdue University.

Video games

Video games often use motion capture to animate athletes, martial artists, and other in-game characters.[13][14] As early as 1988, an early form of motion capture was used to animate the 2D player characters of Martech's video game Vixen (performed by model Corinne Russell)[15] and Magical Company's 2D arcade fighting game Last Apostle Puppet Show (to animate digitized sprites).[16] Motion capture was later notably used to animate the 3D character models in the Sega Model arcade games Virtua Fighter (1993)[17][18] and Virtua Fighter 2 (1994).[19] In mid-1995, developer/publisher Acclaim Entertainment had its own in-house motion capture studio built into its headquarters.[14] Namco's 1995 arcade game Soul Edge used passive optical system markers for motion capture.[20] Motion capture of athletes has also been used as a basis for character animation in games such as Naughty Dog's Crash Bandicoot, Insomniac Games' Spyro the Dragon, and Rare's Dinosaur Planet.

Robotics

Indoor positioning is another application for optical motion capture systems. Robotics researchers often use motion capture systems when developing and evaluating control, estimation, and perception algorithms and hardware. In outdoor spaces, it is possible to achieve centimeter-level accuracy by using the Global Navigation Satellite System (GNSS) together with Real-Time Kinematics (RTK). However, this accuracy degrades significantly when there is no line of sight to the satellites, such as in indoor environments. The majority of vendors selling commercial optical motion capture systems provide accessible open source drivers that integrate with the popular Robot Operating System (ROS) framework, allowing researchers and developers to effectively test their robots during development.

In the field of aerial robotics research, motion capture systems are widely used for positioning as well. Regulations on airspace usage limit the feasibility of outdoor experiments with Unmanned Aerial Systems (UAS). Indoor tests can circumvent such restrictions. Many labs and institutions around the world have built indoor motion capture volumes for this purpose.

Purdue University houses the world's largest indoor motion capture system, inside the Purdue UAS Research and Test (PURT) facility. PURT is dedicated to UAS research, and provides a tracking volume of 600,000 cubic feet using 60 motion capture cameras.[21] The optical motion capture system is able to track targets in its volume with millimeter accuracy, effectively providing the true position of targets, which serves as the "ground truth" baseline in research and development. Results derived from other sensors and algorithms can then be compared to the ground truth data to evaluate their performance.

Movies

Movies use motion capture for CGI effects, in some cases replacing traditional cel animation, and for completely CGI creatures, such as Gollum, The Mummy, King Kong, Davy Jones from Pirates of the Caribbean, the Na'vi from the film Avatar, and Clu from Tron: Legacy. The Great Goblin, the three Stone-trolls, many of the orcs and goblins in the 2012 film The Hobbit: An Unexpected Journey, and Smaug were created using motion capture.

The film Batman Forever (1995) used some motion capture for certain visual effects. Warner Bros. had acquired motion capture technology from arcade video game company Acclaim Entertainment for use in the film's production.[22] Acclaim's 1995 video game of the same name also used the same motion capture technology to animate the digitized sprite graphics.[23]

The 1999 film Star Wars: Episode I – The Phantom Menace was the first feature-length film to include a main character created using motion capture (Jar Jar Binks, played by Ahmed Best). The 2000 Indian-American film Sinbad: Beyond the Veil of Mists was the first feature-length film made primarily with motion capture, although many character animators also worked on the film, which had a very limited release. 2001's Final Fantasy: The Spirits Within was the first widely released movie to be made with motion capture technology. Despite its poor box-office intake, supporters of motion capture technology took notice. Total Recall had already used the technique, in the scene of the x-ray scanner and the skeletons.

The Lord of the Rings: The Two Towers was the first feature film to utilize a real-time motion capture system. This method streamed the actions of actor Andy Serkis into the computer-generated imagery skin of Gollum / Smeagol as it was being performed.[24]

Storymind Entertainment, an independent Ukrainian studio, created a neo-noir third-person shooter video game called My Eyes On You, using motion capture to animate its main character, Jordan Adalien, along with non-playable characters.[25]

Of the three nominees for the 2006 Academy Award for Best Animated Feature, two of the nominees (Monster House and the winner Happy Feet) used motion capture, and only Disney·Pixar's Cars was animated without it. In the ending credits of Pixar's film Ratatouille, a stamp appears labelling the film as "100% Genuine Animation – No Motion Capture!"

Since 2001, motion capture has been used extensively to simulate or approximate the look of live-action theater, with nearly photorealistic digital character models. The Polar Express used motion capture to allow Tom Hanks to perform as several distinct digital characters (in which he also provided the voices). The 2007 adaptation of the saga Beowulf animated digital characters whose appearances were based in part on the actors who provided their motions and voices. James Cameron's highly popular Avatar used this technique to create the Na'vi that inhabit Pandora. The Walt Disney Company has produced Robert Zemeckis's A Christmas Carol using this technique. In 2007, Disney acquired Zemeckis' ImageMovers Digital (that produces motion capture films), but then closed it in 2011, after a box office failure of Mars Needs Moms.

Television series produced entirely with motion capture animation include Laflaque in Canada, Sprookjesboom and Cafe de Wereld [nl] in The Netherlands, and Headcases in the UK.

Movement capture

Virtual reality and augmented reality providers, such as uSens and Gestigon, allow users to interact with digital content in real time by capturing hand motions. This can be useful for training simulations, visual perception tests, or performing virtual walk-throughs in a 3D environment. Motion capture technology is frequently used in digital puppetry systems to drive computer-generated characters in real time.

Gait analysis is one application of motion capture in clinical medicine. Techniques allow clinicians to evaluate human motion across several biomechanical factors, often while streaming this information live into analytical software.

One innovative use is pose detection, which can empower patients during post-surgical recovery or rehabilitation after injuries. This approach enables continuous monitoring, real-time guidance, and individually tailored programs to enhance patient outcomes.[26]

Some physical therapy clinics utilize motion capture as an objective way to quantify patient progress.[27]

During the filming of James Cameron's Avatar, all of the scenes involving motion capture were directed in real time using Autodesk MotionBuilder software to render a screen image that allowed the director and the actor to see what they would look like in the movie, making it easier to direct the movie as it would be seen by the viewer. This method allowed views and angles not possible from a pre-rendered animation. Cameron was so proud of his results that he invited Steven Spielberg and George Lucas on set to view the system in action.

In Marvel's The Avengers, Mark Ruffalo used motion capture so he could play his character the Hulk, rather than have him be only CGI as in previous films, making Ruffalo the first actor to play both the human and the Hulk versions of Bruce Banner.

FaceRig software uses facial recognition technology from ULSee.Inc to map a player's facial expressions and the body tracking technology from Perception Neuron to map the body movement onto a 2D or 3D character's motion on-screen.[28][29]

During Game Developers Conference 2016 in San Francisco Epic Games demonstrated full-body motion capture live in Unreal Engine. The whole scene, from the upcoming game Hellblade about a woman warrior named Senua, was rendered in real-time. The keynote[30] was a collaboration between Unreal Engine, Ninja Theory, 3Lateral, Cubic Motion, IKinema and Xsens.

In 2020, the two-time Olympic figure skating champion Yuzuru Hanyu graduated from Waseda University. In his thesis, he analysed his jumps using data provided by 31 sensors placed on his body. He evaluated the use of technology both to improve the scoring system and to help skaters improve their jumping technique.[31][32] In March 2021, a summary of the thesis was published in an academic journal.[33]

Methods and systems

Motion tracking or motion capture started as a photogrammetric analysis tool in biomechanics research in the 1970s and 1980s, and expanded into education, training, sports and, more recently, computer animation for television, cinema, and video games as the technology matured. Since the 20th century, the performer has had to wear markers near each joint so that the motion can be identified from the positions of, or angles between, the markers. Acoustic, inertial, LED, magnetic or reflective markers, or combinations of any of these, are tracked, ideally at a rate of at least twice the frequency of the desired motion. Both the spatial and the temporal resolution of the system are important, as motion blur causes almost the same problems as low resolution. Since the beginning of the 21st century, because of the rapid growth of technology, new methods have been developed. Most modern systems can extract the silhouette of the performer from the background. All joint angles are then calculated by fitting a mathematical model to the silhouette. For movements that do not change the silhouette, hybrid systems are available that combine markers and silhouettes while using fewer markers.[citation needed] In robotics, some motion capture systems are based on simultaneous localization and mapping.[34]

Optical systems

Optical systems utilize data captured from image sensors to triangulate the 3D position of a subject between two or more cameras calibrated to provide overlapping projections. Data acquisition is traditionally implemented using special markers attached to an actor; however, more recent systems are able to generate accurate data by tracking surface features identified dynamically for each particular subject. Tracking a large number of performers or expanding the capture area is accomplished by the addition of more cameras. These systems produce data with three degrees of freedom for each marker, and rotational information must be inferred from the relative orientation of three or more markers; for instance shoulder, elbow and wrist markers providing the angle of the elbow. Newer hybrid systems are combining inertial sensors with optical sensors to reduce occlusion, increase the number of users and improve the ability to track without having to manually clean up data.[35]
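
As a minimal sketch of how rotational information might be inferred from positional markers, the following Python computes the elbow angle from shoulder, elbow and wrist marker positions. The function name `joint_angle` and the sample coordinates are illustrative assumptions, not part of any particular system.

```python
import math

def joint_angle(a, b, c):
    """Return the angle at marker b, in degrees, between segments b->a and b->c."""
    u = [a[i] - b[i] for i in range(3)]
    v = [c[i] - b[i] for i in range(3)]
    dot = sum(ui * vi for ui, vi in zip(u, v))
    norm_u = math.sqrt(sum(ui * ui for ui in u))
    norm_v = math.sqrt(sum(vi * vi for vi in v))
    if norm_u == 0 or norm_v == 0:
        raise ValueError("markers coincide; angle is undefined")
    # Clamp to [-1, 1] to guard against rounding error before acos.
    cos_theta = max(-1.0, min(1.0, dot / (norm_u * norm_v)))
    return math.degrees(math.acos(cos_theta))

# Hypothetical marker positions in metres (x, y, z).
shoulder = (0.0, 1.4, 0.0)
elbow = (0.0, 1.1, 0.0)
wrist = (0.3, 1.1, 0.0)
elbow_angle = joint_angle(shoulder, elbow, wrist)  # a right angle in this pose
```

Three positions give only the bend at the joint; recovering a full 3D orientation of a limb segment would need additional markers or constraints.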

Passive markers

Passive optical systems use markers coated with a retroreflective material to reflect light that is generated near the camera's lens. The camera's threshold can be adjusted so only the bright reflective markers will be sampled, ignoring skin and fabric.

The centroid of the marker is estimated as a position within the two-dimensional image that is captured. The grayscale value of each pixel can be used to provide sub-pixel accuracy by finding the centroid of the Gaussian.
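
The idea of using grayscale values for sub-pixel accuracy can be sketched as follows. This example uses a plain intensity-weighted centroid (a centre-of-mass calculation) rather than an explicit Gaussian fit, and the function name, threshold and sample image are assumptions made for illustration.

```python
def weighted_centroid(image, threshold):
    """Return the intensity-weighted (x, y) centroid of pixels >= threshold.

    `image` is a list of rows of grayscale values. Returns None if no pixel
    passes the threshold.
    """
    total = 0.0
    sum_x = 0.0
    sum_y = 0.0
    for y, row in enumerate(image):
        for x, value in enumerate(row):
            if value >= threshold:
                total += value
                sum_x += x * value
                sum_y += y * value
    if total == 0:
        return None
    return (sum_x / total, sum_y / total)

# Hypothetical 4x4 image of a marker that is brighter on its right-hand side.
blob = [
    [0,   0,   0, 0],
    [0, 100, 200, 0],
    [0, 100, 200, 0],
    [0,   0,   0, 0],
]
cx, cy = weighted_centroid(blob, threshold=50)
# cx lies between pixel columns 1 and 2, pulled toward the brighter column.
```

Because brighter pixels contribute more weight, the estimate can fall between pixel centres, which is what gives the sub-pixel resolution.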

An object with markers attached at known positions is used to calibrate the cameras and obtain their positions, and the lens distortion of each camera is measured. If two calibrated cameras see a marker, a three-dimensional fix can be obtained. Typically a system will consist of around 2 to 48 cameras. Systems of over three hundred cameras exist to try to reduce marker swap. Extra cameras are required for full coverage around the capture subject and multiple subjects.
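
A three-dimensional fix from two cameras can be illustrated with a simple ray-midpoint method. The sketch assumes calibration has already turned each camera's 2D marker observation into a ray (camera position plus viewing direction); the function name and the camera layout are hypothetical, and production systems may use other triangulation methods.

```python
def triangulate_midpoint(o1, d1, o2, d2):
    """Estimate a 3D point as the midpoint of the shortest segment joining two rays.

    o1, o2 are camera positions; d1, d2 are viewing directions toward the marker.
    """
    def dot(p, q):
        return sum(pi * qi for pi, qi in zip(p, q))

    w = [o1[i] - o2[i] for i in range(3)]
    a, b, c = dot(d1, d1), dot(d1, d2), dot(d2, d2)
    d, e = dot(d1, w), dot(d2, w)
    denom = a * c - b * b
    if abs(denom) < 1e-12:
        raise ValueError("rays are parallel; no unique fix")
    s = (b * e - c * d) / denom  # distance parameter along ray 1
    t = (a * e - b * d) / denom  # distance parameter along ray 2
    p1 = [o1[i] + s * d1[i] for i in range(3)]
    p2 = [o2[i] + t * d2[i] for i in range(3)]
    return tuple((p1[i] + p2[i]) / 2 for i in range(3))

# Two hypothetical cameras 2 m apart, both seeing a marker at (0, 0, 5).
marker = triangulate_midpoint(
    (-1.0, 0.0, 0.0), (1.0, 0.0, 5.0),
    (1.0, 0.0, 0.0), (-1.0, 0.0, 5.0),
)
```

With noisy measurements the two rays rarely intersect exactly, which is why the midpoint of their closest approach is used here as the estimate.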

Vendors have constraint software to reduce the problem of marker swapping since all passive markers appear identical. Unlike active marker systems and magnetic systems, passive systems do not require the user to wear wires or electronic equipment.[36] Instead, hundreds of rubber balls covered with reflective tape are attached, and the tape needs to be replaced periodically. The markers are usually attached directly to the skin (as in biomechanics), or they are velcroed to a performer wearing a full-body spandex/lycra suit designed specifically for motion capture. This type of system can capture large numbers of markers at frame rates usually around 120 to 160 fps, although by lowering the resolution and tracking a smaller region of interest they can track at rates as high as 10,000 fps.

Active marker

Active optical systems triangulate positions by illuminating one LED at a time very quickly or multiple LEDs with software to identify them by their relative positions, somewhat akin to celestial navigation. Rather than reflecting light back that is generated externally, the markers themselves are powered to emit their own light. Since the inverse square law provides one quarter of the power at two times the distance, this can increase the distances and volume for capture. This also enables a high signal-to-noise ratio, resulting in very low marker jitter and a resulting high measurement resolution (often down to 0.1 mm within the calibrated volume).

The TV series Stargate SG1 produced episodes using an active optical system for the VFX allowing the actor to walk around props that would make motion capture difficult for other non-active optical systems.[citation needed]

ILM used active markers in Van Helsing to allow capture of Dracula's flying brides on very large sets similar to Weta's use of active markers in Rise of the Planet of the Apes. The power to each marker can be provided sequentially in phase with the capture system providing a unique identification of each marker for a given capture frame at a cost to the resultant frame rate. The ability to identify each marker in this manner is useful in real-time applications. The alternative method of identifying markers is to do it algorithmically requiring extra processing of the data.

There are also possibilities to find the position by using colored LED markers. In these systems, each color is assigned to a specific point of the body.

One of the earliest active marker systems, in the 1980s, was a hybrid passive-active mocap system that used rotating mirrors, colored glass reflective markers and masked linear array detectors.

Time modulated active marker

Active marker systems can further be refined by strobing one marker on at a time, or tracking multiple markers over time and modulating the amplitude or pulse width to provide marker ID. 12-megapixel spatial resolution modulated systems show more subtle movements than 4-megapixel optical systems by having both higher spatial and temporal resolution. Directors can see the actor's performance in real-time, and watch the results on the motion capture-driven CG character. The unique marker IDs reduce the turnaround, by eliminating marker swapping and providing much cleaner data than other technologies. LEDs with onboard processing and radio synchronization allow motion capture outdoors in direct sunlight while capturing at 120 to 960 frames per second due to a high-speed electronic shutter. Computer processing of modulated IDs allows less hand cleanup or filtered results for lower operational costs. This higher accuracy and resolution requires more processing than passive technologies, but the additional processing is done at the camera to improve resolution via subpixel or centroid processing, providing both high resolution and high speed. These motion capture systems typically cost $20,000 for an eight-camera, 12-megapixel spatial resolution 120-hertz system with one actor.
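
To illustrate how modulation over time can carry a marker ID, the sketch below decodes an ID from a marker's brightness across consecutive frames. The encoding (one bit per frame, most significant bit first, bright meaning 1) is a hypothetical scheme chosen for illustration and is not the protocol of any specific product.

```python
def decode_marker_id(brightness_per_frame, threshold):
    """Decode a marker ID from per-frame brightness samples.

    Assumed scheme: each frame carries one bit, most significant bit first;
    a sample at or above `threshold` is read as 1, otherwise as 0.
    """
    marker_id = 0
    for brightness in brightness_per_frame:
        bit = 1 if brightness >= threshold else 0
        marker_id = (marker_id << 1) | bit
    return marker_id

# Four frames of normalised brightness for one tracked marker: bits 1, 0, 1, 1.
samples = [0.9, 0.2, 0.8, 0.85]
marker_id = decode_marker_id(samples, threshold=0.5)  # binary 1011
```

A code of n frames distinguishes up to 2^n markers, at the price of needing n frames before an ID is confirmed.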

Semi-passive imperceptible marker

One can reverse the traditional approach based on high-speed cameras. Systems such as Prakash use inexpensive multi-LED high-speed projectors. The specially built multi-LED IR projectors optically encode the space. Instead of retro-reflective or active light emitting diode (LED) markers, the system uses photosensitive marker tags to decode the optical signals. By attaching tags with photo sensors to scene points, the tags can compute not only the location of each point, but also their own orientation, incident illumination, and reflectance.

These tracking tags work in natural lighting conditions and can be imperceptibly embedded in attire or other objects. The system supports an unlimited number of tags in a scene, with each tag uniquely identified to eliminate marker reacquisition issues. Since the system eliminates a high-speed camera and the corresponding high-speed image stream, it requires significantly lower data bandwidth. The tags also provide incident illumination data which can be used to match scene lighting when inserting synthetic elements. The technique appears ideal for on-set motion capture or real-time broadcasting of virtual sets but has yet to be proven.

Underwater motion capture system

Motion capture technology has been available for researchers and scientists for a few decades, which has given new insight into many fields.

Underwater cameras

The vital part of the system, the underwater camera, has a waterproof housing. The housing has a finish that withstands corrosion and chlorine, which makes it suitable for use in basins and swimming pools. There are two types of cameras: infrared cameras and industrial high-speed cameras, which can also be used as infrared cameras. Infrared underwater cameras come with a cyan light strobe instead of the typical IR light, for minimum fall-off underwater, while high-speed cameras come with an LED light or with the option of using image processing.

Measurement volume

An underwater camera is typically able to measure at distances of 15–20 meters, depending on the water quality, the camera and the type of marker used. The best range is achieved when the water is clear, and, as always, the measurement volume also depends on the number of cameras. A range of underwater markers is available for different circumstances.

Tailored

Different pools require different mountings and fixtures. Therefore, all underwater motion capture systems are uniquely tailored to suit each specific pool installation. For cameras placed in the center of the pool, specially designed tripods with suction cups are provided.

Markerless

Emerging techniques and research in computer vision are leading to the rapid development of the markerless approach to motion capture. Markerless systems, such as those developed at Stanford University, the University of Maryland, MIT, and the Max Planck Institute, do not require subjects to wear special equipment for tracking. Special computer algorithms are designed to allow the system to analyze multiple streams of optical input and identify human forms, breaking them down into constituent parts for tracking. ESC Entertainment, a subsidiary of Warner Brothers Pictures created especially to enable virtual cinematography, used a technique called Universal Capture that utilized a seven-camera setup and tracked the optical flow of all pixels over all the 2-D planes of the cameras for motion, gesture and facial expression capture, leading to photorealistic results.

Traditional systems

Traditionally, markerless optical motion tracking is used to keep track of various objects, including airplanes, launch vehicles, missiles and satellites. Many such optical motion tracking applications occur outdoors, requiring differing lens and camera configurations. High-resolution images of the target being tracked can thereby provide more information than just motion data. The image obtained from NASA's long-range tracking system on the space shuttle Challenger's fatal launch provided crucial evidence about the cause of the accident. Optical tracking systems are also used to identify known spacecraft and space debris, although they have a disadvantage compared to radar in that the objects must be reflecting or emitting sufficient light.[37]

An optical tracking system typically consists of three subsystems: the optical imaging system, the mechanical tracking platform and the tracking computer.

The optical imaging system is responsible for converting the light from the target area into a digital image that the tracking computer can process. Depending on the design of the optical tracking system, the optical imaging system can vary from as simple as a standard digital camera to as specialized as an astronomical telescope on the top of a mountain. The specification of the optical imaging system determines the upper limit of the effective range of the tracking system.

The mechanical tracking platform holds the optical imaging system and is responsible for manipulating the optical imaging system in such a way that it always points to the target being tracked. The dynamics of the mechanical tracking platform combined with the optical imaging system determines the tracking system's ability to keep the lock on a target that changes speed rapidly.

The tracking computer is responsible for capturing the images from the optical imaging system, analyzing the image to extract the target position and controlling the mechanical tracking platform to follow the target. There are several challenges. First, the tracking computer has to be able to capture the image at a relatively high frame rate. This places a requirement on the bandwidth of the image-capturing hardware. The second challenge is that the image processing software has to be able to extract the target image from its background and calculate its position. Several textbook image-processing algorithms are designed for this task. This problem can be simplified if the tracking system can expect certain characteristics that are common to all the targets it will track. The next problem down the line is controlling the tracking platform to follow the target. This is a typical control system design problem rather than a challenge, and involves modeling the system dynamics and designing controllers to control it. It will, however, become a challenge if the tracking platform the system has to work with is not designed for real-time operation.
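
One iteration of such a tracking loop can be sketched in a few lines. The example assumes the target is brighter than its background, so that a simple threshold separates the two, and it uses a plain proportional controller; the function names, gain and image are illustrative assumptions, and a real platform would need a controller designed around its own dynamics.

```python
def find_target(image, threshold):
    """Return the (x, y) centroid of pixels at or above threshold, or None."""
    xs, ys = [], []
    for y, row in enumerate(image):
        for x, value in enumerate(row):
            if value >= threshold:
                xs.append(x)
                ys.append(y)
    if not xs:
        return None
    return (sum(xs) / len(xs), sum(ys) / len(ys))

def pan_tilt_command(target, image_size, gain):
    """Proportional control: command is gain times the pixel offset from centre."""
    width, height = image_size
    centre_x = (width - 1) / 2
    centre_y = (height - 1) / 2
    return (gain * (target[0] - centre_x), gain * (target[1] - centre_y))

# Hypothetical 5x5 frame with a single bright target at the right-hand edge.
frame = [[0] * 5 for _ in range(5)]
frame[2][4] = 255
target = find_target(frame, threshold=128)
command = pan_tilt_command(target, image_size=(5, 5), gain=0.1)
# The target is right of centre, so the pan command is positive and tilt is zero.
```

Repeating this for every captured frame drives the platform so that the target drifts back toward the image centre.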

The software that runs such systems is also customized for the corresponding hardware components. One example of such software is OpticTracker, which controls computerized telescopes to track moving objects at great distances, such as planes and satellites. Another option is the software SimiShape, which can also be used in a hybrid mode in combination with markers.

RGB-D cameras

RGB-D cameras such as Kinect capture both the color and depth images. By fusing the two images, 3D colored voxels can be captured, allowing motion capture of 3D human motion and human surface in real-time.

Because of the use of a single-view camera, motions captured are usually noisy. Machine learning techniques have been proposed to automatically reconstruct such noisy motions into higher quality ones, using methods such as lazy learning[38] and Gaussian models.[39] Such methods generate motion that is accurate enough for serious applications like ergonomic assessment.[40]

Non-optical systems

Inertial systems

Inertial motion capture[41] technology is based on miniature inertial sensors, biomechanical models and sensor fusion algorithms.[42] The motion data of the inertial sensors (inertial guidance system) is often transmitted wirelessly to a computer, where the motion is recorded or viewed. Most inertial systems use inertial measurement units (IMUs) containing a combination of gyroscope, magnetometer, and accelerometer, to measure rotational rates. These rotations are translated to a skeleton in the software. Much like optical markers, the more IMU sensors the more natural the data. No external cameras, emitters or markers are needed for relative motions, although they are required to give the absolute position of the user if desired. Inertial motion capture systems capture the full six degrees of freedom body motion of a human in real-time and can give limited direction information if they include a magnetic bearing sensor, although these are much lower resolution and susceptible to electromagnetic noise. Benefits of using inertial systems include capturing in a variety of environments including tight spaces, no solving, portability, and large capture areas. Disadvantages include lower positional accuracy and positional drift, which can compound over time. These systems are similar to the Wii controllers but are more sensitive and have greater resolution and update rates. They can accurately measure the direction to the ground to within a degree. The popularity of inertial systems is rising amongst game developers,[10] mainly because of the quick and easy setup resulting in a fast pipeline. A range of suits is now available from various manufacturers, and base prices range from US$1,000 to US$80,000.
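
The way positional drift compounds can be shown with a small numerical sketch. It assumes a stationary sensor whose accelerometer reports a small constant bias, and integrates that reading twice to obtain position; the bias value, sample rate and function name are assumptions chosen for illustration.

```python
def integrate_position(accelerations, dt):
    """Integrate acceleration samples twice (simple Euler steps) to get position."""
    velocity = 0.0
    position = 0.0
    for a in accelerations:
        velocity += a * dt
        position += velocity * dt
    return position

bias = 0.01   # assumed constant accelerometer bias, in m/s^2
dt = 0.01     # 100 Hz sample rate

drift_10s = integrate_position([bias] * 1000, dt)  # roughly 0.5 m after 10 s
drift_20s = integrate_position([bias] * 2000, dt)  # roughly 2 m after 20 s
```

Doubling the duration roughly quadruples the error, because a constant acceleration error grows with the square of time once integrated twice. This is one reason an external reference is needed when absolute position matters.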

Mechanical motion

Mechanical motion capture systems directly track body joint angles and are often referred to as exoskeleton motion capture systems, due to the way the sensors are attached to the body. A performer attaches the skeletal-like structure to their body and as they move so do the articulated mechanical parts, measuring the performer's relative motion. Mechanical motion capture systems are real-time, relatively low-cost, free from occlusion, and wireless (untethered) systems that have unlimited capture volume. Typically, they are rigid structures of jointed, straight metal or plastic rods linked together with potentiometers that articulate at the joints of the body. These suits tend to be in the $25,000 to $75,000 range plus an external absolute positioning system. Some suits provide limited force feedback or haptic input.

Magnetic systems

[edit]Magnetic systems calculate position and orientation by the relative magnetic flux of three orthogonal coils on both the transmitter and each receiver.[43] The relative intensity of the voltage or current of the three coils allows these systems to calculate both range and orientation by meticulously mapping the tracking volume. The sensor output is six degrees of freedom (6DOF), which provides useful results obtained with two-thirds the number of markers required in optical systems; one on upper arm and one on lower arm for elbow position and angle.[citation needed] The markers are vulnerable to magnetic and electrical interference from metal objects in the environment, like rebar (steel reinforcing bars in concrete) or wiring, which affect the magnetic field, and electrical sources such as monitors, lights, cables and computers.

The sensor response is nonlinear, especially toward the edges of the capture area. The wiring from the sensors tends to preclude extreme performance movements.[43] With magnetic systems, it is possible to monitor the results of a motion capture session in real time.[43] The capture volumes for magnetic systems are dramatically smaller than they are for optical systems. Magnetic systems are divided into alternating-current (AC) and direct-current (DC) systems: DC systems use square pulses, whereas AC systems use sine waves.
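
One way to see why the response is nonlinear and the capture volume small is to model the transmitter as an ideal magnetic dipole, whose field strength falls off with the cube of distance. That model is an assumption made here for illustration, not something stated in the source, and real systems calibrate against a mapped tracking volume instead. The function names are hypothetical.

```python
def relative_signal(distance_m, reference_m=1.0):
    """Field strength relative to its value at reference_m for an ideal dipole (1/r^3)."""
    return (reference_m / distance_m) ** 3

def range_from_signal(signal_ratio, reference_m=1.0):
    """Invert the dipole law to estimate distance from a measured relative signal."""
    return reference_m / signal_ratio ** (1.0 / 3.0)

for d in (1.0, 2.0, 4.0):
    print(f"{d:.0f} m -> relative signal {relative_signal(d):.4f}")
```

Under this model, doubling the distance cuts the signal to one eighth, so small amounts of noise or interference translate into large range errors near the edge of the volume.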

Stretch sensors

Stretch sensors are flexible parallel plate capacitors that measure stretch, bend, shear, or pressure and are typically produced from silicone. When the sensor stretches or squeezes, its capacitance value changes. This data can be transmitted via Bluetooth or direct input and used to detect minute changes in body motion. Stretch sensors are unaffected by magnetic interference and are free from occlusion. The stretchable nature of the sensors also means they do not suffer from positional drift, which is common with inertial systems. On the other hand, because of the material properties of their substrates and conducting materials, stretchable sensors suffer from a relatively low signal-to-noise ratio, requiring filtering or machine learning to make them usable for motion capture. These solutions result in higher latency when compared to alternative sensors.
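
The link between stretch and capacitance can be sketched with the ideal parallel-plate formula C = ε0·εr·A/d. The example assumes an incompressible dielectric stretched along its length, so that width and thickness each shrink by the inverse square root of the stretch ratio. The dimensions and the relative permittivity of 3.0 are illustrative guesses, not values from the source.

```python
EPSILON_0 = 8.854e-12  # vacuum permittivity in F/m

def capacitance(length_m, width_m, gap_m, rel_permittivity=3.0):
    """Ideal parallel-plate capacitance: C = eps0 * eps_r * area / gap."""
    return EPSILON_0 * rel_permittivity * length_m * width_m / gap_m

def stretched_capacitance(length_m, width_m, gap_m, stretch, rel_permittivity=3.0):
    """Capacitance after uniaxial stretch of an incompressible dielectric (assumed)."""
    shrink = stretch ** -0.5  # width and thickness both contract to conserve volume
    return capacitance(length_m * stretch, width_m * shrink,
                       gap_m * shrink, rel_permittivity)

rest = capacitance(0.05, 0.01, 0.0005)
pulled = stretched_capacitance(0.05, 0.01, 0.0005, stretch=1.2)
ratio = pulled / rest

print(f"rest: {rest * 1e12:.1f} pF, stretched 20%: {pulled * 1e12:.1f} pF")
```

Under these assumptions capacitance scales linearly with the stretch ratio, which is what makes the reading usable as a length measurement. The absolute values are tens of picofarads, which hints at why the signal-to-noise ratio is a concern.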

Related techniques

Facial motion capture

Most traditional motion capture hardware vendors provide for some type of low-resolution facial capture utilizing anywhere from 32 to 300 markers with either an active or passive marker system. All of these solutions are limited by the time it takes to apply the markers, calibrate the positions and process the data. Ultimately the technology also limits their resolution and raw output quality levels.

High-fidelity facial motion capture, also known as performance capture, is the next generation of fidelity and is used to record the more complex movements of a human face in order to capture higher degrees of emotion. Facial capture is currently arranging itself into several distinct camps, including traditional motion capture data, blend shape-based solutions, capture of the actual topology of an actor's face, and proprietary systems.

The two main techniques are stationary systems with an array of cameras that capture the facial expressions from multiple angles, using software such as the stereo mesh solver from OpenCV to create a 3D surface mesh, and systems that additionally use light arrays to calculate the surface normals from the variance in brightness as the light source, camera position, or both are changed. The feature resolution of these techniques tends to be limited only by the camera resolution, apparent object size and number of cameras. If the user's face fills 50 percent of the working area of the camera and the camera has megapixel resolution, then submillimeter facial motions can be detected by comparing frames. Recent work focuses on increasing frame rates and using optical flow so that the motions can be retargeted to other computer-generated faces, rather than just making a 3D mesh of the actor and their expressions.
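
The submillimeter claim can be checked with rough arithmetic. This sketch assumes a square 1000 × 1000 pixel (one megapixel) sensor, a face about 150 mm wide, and reads "50 percent of the working area" as an area fraction, so the face spans √0.5 of the image width. All three are assumptions made for illustration.

```python
import math

def mm_per_pixel(face_width_mm, sensor_width_px, face_fraction_of_width):
    """Physical size covered by one pixel across the face."""
    pixels_on_face = sensor_width_px * face_fraction_of_width
    return face_width_mm / pixels_on_face

# Face covers half the image area, so it spans sqrt(0.5) of the image width.
width_fraction = math.sqrt(0.5)
resolution = mm_per_pixel(150.0, 1000, width_fraction)

print(f"about {resolution:.2f} mm per pixel")
```

Under these assumptions one pixel covers roughly a fifth of a millimeter, so a feature that shifts by a single pixel between frames corresponds to a submillimeter motion.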

Radio frequency positioning

Radio frequency positioning systems are becoming more viable[citation needed] as higher frequency radio frequency devices allow greater precision than older technologies such as radar. The speed of light is 30 centimeters per nanosecond (billionth of a second), so a 10 gigahertz (billion cycles per second) radio frequency signal enables an accuracy of about 3 centimeters. By measuring amplitude to a quarter wavelength, it is possible to improve the resolution down to about 8 mm. To achieve the resolution of optical systems, frequencies of 50 gigahertz or higher are needed, which are almost as dependent on line of sight and as easy to block as optical systems. Multipath and reradiation of the signal are likely to cause additional problems, but these technologies will be ideal for tracking larger volumes with reasonable accuracy, since the required resolution at 100 meter distances is not likely to be as high. Many scientists[who?] believe that radio frequency will never produce the accuracy required for motion capture.
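
The figures in this paragraph follow from the relation wavelength = speed of light / frequency. The short sketch below reproduces them; the function names are illustrative.

```python
SPEED_OF_LIGHT_M_PER_S = 299_792_458

def wavelength_mm(freq_hz):
    """Free-space wavelength in millimeters for a given frequency."""
    return SPEED_OF_LIGHT_M_PER_S / freq_hz * 1000.0

def quarter_wavelength_mm(freq_hz):
    """Resolution obtained when the signal is resolved to a quarter wavelength."""
    return wavelength_mm(freq_hz) / 4.0

for ghz in (10, 50):
    freq = ghz * 1e9
    print(f"{ghz} GHz: wavelength {wavelength_mm(freq):.1f} mm, "
          f"quarter wavelength {quarter_wavelength_mm(freq):.1f} mm")
```

At 10 GHz the wavelength is about 30 mm and a quarter wavelength is about 7.5 mm, which matches the rounded 3 cm and 8 mm figures quoted above. At 50 GHz the quarter wavelength drops to about 1.5 mm.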

Researchers at the Massachusetts Institute of Technology said in 2015 that they had made a system that tracks motion by radio frequency signals.[44][45]

Non-traditional systems

An alternative approach was developed where the actor is given an unlimited walking area through the use of a rotating sphere, similar to a hamster ball, which contains internal sensors recording the angular movements, removing the need for external cameras and other equipment. Even though this technology could potentially lead to much lower costs for motion capture, the basic sphere is only capable of recording a single continuous direction. Additional sensors worn on the person would be needed to record anything more.

Another alternative is to use a six-degrees-of-freedom (6DOF) motion platform with an integrated omnidirectional treadmill and high-resolution optical motion capture to achieve the same effect. The captured person can walk in an unlimited area, negotiating different uneven terrains. Applications include medical rehabilitation for balance training, biomechanical research and virtual reality.[citation needed]

3D pose estimation

In 3D pose estimation, an actor's pose can be reconstructed from an image or depth map.[46]
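
A common first step when working from a depth map is to back-project pixels into 3D points with a pinhole camera model. The sketch below shows only that step and does not reproduce the cited method. The intrinsic parameters (`fx`, `fy`, `cx`, `cy`) are hypothetical values for a 640 × 480 depth camera.

```python
def backproject(u, v, depth_m, fx, fy, cx, cy):
    """Convert a pixel (u, v) with known depth into a 3D point in the camera frame."""
    x = (u - cx) * depth_m / fx
    y = (v - cy) * depth_m / fy
    return (x, y, depth_m)

# Hypothetical intrinsics: focal lengths in pixels and the principal point.
FX, FY, CX, CY = 525.0, 525.0, 319.5, 239.5

# A pixel at the image center, 2 m away, lies on the optical axis.
center_point = backproject(319.5, 239.5, 2.0, FX, FY, CX, CY)
# A pixel 105 px to the right of center at the same depth.
offset_point = backproject(424.5, 239.5, 2.0, FX, FY, CX, CY)

print(center_point, offset_point)
```

A pose estimator would then fit a skeleton or body model to the resulting point cloud; that fitting stage is outside the scope of this sketch.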

See also

- Animation database

- Finger tracking

- Gesture recognition

- Inverse kinematics (a different way of making CGI effects realistic)

- Kinect (created by Microsoft Corporation)

- List of motion and gesture file formats

- Motiongram

- Motion-capture acting

- Motion History Images

- Video tracking

- VR positional tracking

References

- ^ Goebl, Werner; Palmer, Caroline (2013). Balasubramaniam, Ramesh (ed.). "Temporal Control and Hand Movement Efficiency in Skilled Music Performance". PLOS ONE. 8 (1) e50901. Bibcode:2013PLoSO...850901G. doi:10.1371/journal.pone.0050901. PMC 3536780. PMID 23300946.

- ^ Olsen, Niels Lundtorp; Markussen, Bo; Raket, Lars Lau (2018). "Simultaneous inference for misaligned multivariate functional data". Journal of the Royal Statistical Society, Series C. 67 (5): 1147–76. arXiv:1606.03295. doi:10.1111/rssc.12276. S2CID 88515233.

- ^ Noonan, David P.; Mountney, Peter; Elson, Daniel S.; Darzi, Ara; Yang, Guang-Zhong (May 2009). "A stereoscopic fibroscope for camera motion and 3D depth recovery during Minimally Invasive Surgery". 2009 IEEE International Conference on Robotics and Automation. pp. 4463–4468. doi:10.1109/ROBOT.2009.5152698. ISBN 978-1-4244-2788-8.

- ^ Yamane, Katsu; Hodgins, Jessica (October 2009). "Simultaneous tracking and balancing of humanoid robots for imitating human motion capture data". 2009 IEEE/RSJ International Conference on Intelligent Robots and Systems. pp. 2510–2517. doi:10.1109/IROS.2009.5354750. ISBN 978-1-4244-3803-7. S2CID 18042804.

- ^ Gatt, Joe (2013-09-13). "Motion Capture Actors: Body Movement Tells the Story". NYCastings - DirectSubmit. Archived from the original on 2025-03-22. Retrieved 2025-06-14.

- ^ Salomon, Andrew Harris (2013-02-22). "Growth In Performance Capture Helping Gaming Actors Weather Slump". www.backstage.com. Retrieved 2025-06-14.

- ^ Child, Ben (2011-08-12). "Andy Serkis: why won't Oscars go ape over motion-capture acting?". The Guardian. ISSN 0261-3077. Retrieved 2025-06-14.

- ^ Hart, Hugh (2012-01-24). "When Will a Motion-Capture Actor Win an Oscar?". Wired. ISSN 1059-1028. Retrieved 2025-06-14.

- ^ Cheung, German K.M.; Kanade, Takeo; Bouguet, Jean-Yves; Holler, Mark (2000). "A real time system for robust 3D voxel reconstruction of human motions" (PDF). Proceedings IEEE Conference on Computer Vision and Pattern Recognition. CVPR 2000 (Cat. No.PR00662). Vol. 2. IEEE Comput. Soc. pp. 714–720. doi:10.1109/CVPR.2000.854944. ISBN 978-0-7695-0662-3.

- ^ a b "Xsens MVN Animate – Products". Xsens 3D motion tracking. Retrieved 2019-01-22.

- ^ "The Next Generation 1996 Lexicon A to Z: Motion Capture". Next Generation. No. 15. Imagine Media. March 1996. p. 37.

- ^ "Motion Capture". Next Generation (10). Imagine Media: 50. October 1995.

- ^ Radoff, Jon (2008-08-22). "Anatomy of an MMORPG". Archived from the original on 2009-12-13. Retrieved 2009-11-30.

- ^ a b "Hooray for Hollywood! Acclaim Studios". GamePro. No. 82. IDG. July 1995. pp. 28–29.

- ^ Mason, Graeme. "Martech Games - The Personality People". Retro Gamer. No. 133. p. 51.

- ^ "Pre-Street Fighter II Fighting Games". Hardcore Gaming 101. p. 8. Retrieved 26 November 2021.

- ^ "Sega Saturn exclusive! Virtua Fighter: fighting in the third dimension" (PDF). Computer and Video Games. No. 158 (January 1995). Future plc. 15 December 1994. pp. 12–3, 15–6, 19.

- ^ "Virtua Fighter". Maximum: The Video Game Magazine (1). Emap International Limited: 142–3. October 1995.

- ^ Wawro, Alex (October 23, 2014). "Yu Suzuki Recalls Using Military Tech to Make Virtua Fighter 2". Gamasutra. Retrieved 18 August 2016.

- ^ "History of Motion Capture". Motioncapturesociety.com. Archived from the original on 2018-10-23. Retrieved 2013-08-10.

- ^ "Purdue's Home for Drone Systems Engineering". Aerogram Magazine - 2021-2022. Purdue University. Retrieved 2023-09-18.

- ^ "Coin-Op News: Acclaim technology tapped for "Batman" movie". Play Meter. Vol. 20, no. 11. October 1994. p. 22.

- ^ "Acclaim Stakes its Claim". RePlay. Vol. 20, no. 4. January 1995. p. 71.

- ^ Savage, Annaliza (12 July 2012). "Gollum Actor: How New Motion-Capture Tech Improved The Hobbit". Wired. Retrieved 29 January 2017.

- ^ "INTERVIEW: Storymind Entertainment Talks About Upcoming 'My Eyes On You'". That Moment In. 29 October 2017. Archived from the original on 2018-09-26. Retrieved 2022-09-24.

- ^ "AI based pose detection for physical rehabilitation software".

- ^ "Markerless Motion Capture | EuMotus". Markerless Motion Capture | EuMotus. Retrieved 2018-10-12.

- ^ Corriea, Alexa Ray (30 June 2014). "This facial recognition software lets you be Octodad". Polygon. Retrieved 4 January 2017.

- ^ Plunkett, Luke (27 December 2013). "Turn Your Human Face Into A Video Game Character". kotaku.com. Retrieved 4 January 2017.

- ^ Seymour, Mike (24 April 2016). "Put your (digital) game face on". fxguide.com. Retrieved 4 January 2017.

- ^ "羽生結弦"動いたこと"は卒論完成 24時間テレビにリモート出演 (Yuzuru Hanyu completes graduation thesis on "moving things" and appears remotely on TV 24 hours a day)" (in Japanese). Retrieved 2 September 2023.

- ^ "羽生結弦が卒業論文を公開 24時間テレビに出演 (Yuzuru Hanyu publishes graduation thesis and appears on 24-hour TV)" (in Japanese). Retrieved 2 September 2023.

- ^ Hanyu, Yuzuru; 羽生, 結弦 (18 March 2021). "無線・慣性センサー式モーションキャプチャシステムのフィギュアスケートでの利活用に関するフィージビリティスタディ (A feasibility study on the use of wireless and inertial sensor motion capture systems in figure skating)". 人間科学研究 (Waseda Journal of Human Sciences) (in Japanese). 2021. Waseda University: 1–7. hdl:2065/00080605. Retrieved 2 September 2023.

- ^ Sturm, Jürgen; Engelhard, Nikolas; Endres, Felix; Burgard, Wolfram; Cremers, Daniel (October 2012). "A benchmark for the evaluation of RGB-D SLAM systems" (PDF). 2012 IEEE/RSJ International Conference on Intelligent Robots and Systems. pp. 573–580. doi:10.1109/IROS.2012.6385773. ISBN 978-1-4673-1736-8.

- ^ Li, Jian; Yang, Jiushan; Xu, Zhanwang; Peng, Jingliang (November 2012). "Computer-assisted hand rehabilitation assessment using an optical motion capture system". 2012 International Conference on Image Analysis and Signal Processing. pp. 1–5. doi:10.1109/IASP.2012.6425069. ISBN 978-1-4673-2546-2.

- ^ "Motion Capture: Optical Systems". Next Generation (10). Imagine Media: 53. October 1995.

- ^ Veis, George (1963). "Optical tracking of artificial satellites". Space Science Reviews. 2 (2): 250–296. Bibcode:1963SSRv....2..250V. doi:10.1007/BF00216781. S2CID 121533715.

- ^ Shum, Hubert P. H.; Ho, Edmond S. L.; Jiang, Yang; Takagi, Shu (2013). "Real-Time Posture Reconstruction for Microsoft Kinect" (PDF). IEEE Transactions on Cybernetics. 43 (5): 1357–1369. Bibcode:2013ITCyb..43.1357S. doi:10.1109/TCYB.2013.2275945. PMID 23981562. S2CID 14124193.

- ^ Liu, Zhiguang; Zhou, Liuyang; Leung, Howard; Shum, Hubert P. H. (2016). "Kinect Posture Reconstruction based on a Local Mixture of Gaussian Process Models". IEEE Transactions on Visualization and Computer Graphics. 22 (11): 2437–2450. Bibcode:2016ITVCG..22.2437L. doi:10.1109/TVCG.2015.2510000. PMID 26701789. S2CID 216076607.

- ^ Plantard, Pierre; Shum, Hubert P. H.; Pierres, Anne-Sophie Le; Multon, Franck (2017). "Validation of an Ergonomic Assessment Method using Kinect Data in Real Workplace Conditions". Applied Ergonomics. 65: 562–569. doi:10.1016/j.apergo.2016.10.015. PMID 27823772. S2CID 13658487.

- ^ "Full 6DOF Human Motion Tracking Using Miniature Inertial Sensors" (PDF). Archived from the original (PDF) on 2013-04-04. Retrieved 2013-04-03.

- ^ "A history of motion capture". Xsens 3D motion tracking. Archived from the original on 2019-01-22. Retrieved 2019-01-22.

- ^ a b c "Motion Capture: Magnetic Systems". Next Generation (10). Imagine Media: 51. October 1995.

- ^ Alba, Alejandro (November 2015). "MIT researchers create device that can recognize, track people through walls". nydailynews.com. Retrieved 2019-12-09.

- ^ Adib, Fadel; Hsu, Chen-Yu; Mao, Hongzi; Katabi, Dina; Durand, Frédo (2015-11-04). "Capturing the human figure through a wall" (PDF). ACM Transactions on Graphics. 34 (6): 1–13. doi:10.1145/2816795.2818072. hdl:1721.1/111588. ISSN 0730-0301.

- ^ Ye, Mao; Wang, Xianwang; Yang, Ruigang; Ren, Liu; Pollefeys, Marc (November 2011). "Accurate 3D pose estimation from a single depth image" (PDF). 2011 International Conference on Computer Vision. pp. 731–738. doi:10.1109/ICCV.2011.6126310. ISBN 978-1-4577-1102-2.

External links

- The fascination for motion capture Archived 2016-03-04 at the Wayback Machine, an introduction to the history of motion capture technology

Motion capture

Fundamentals

Definition and Principles

Motion capture, often abbreviated as MoCap, is the process of recording the movements of objects or people in physical space and translating that data into a digital format for 3D reconstruction and analysis.[12] This involves digitizing real-world motion to create accurate representations suitable for applications in animation, simulation, and research.[13] At its core, the technology approximates the human body or object as a rigid-body model with a defined set of degrees of freedom (DOF), enabling the capture of complex dynamics through structured data.[14]

The basic principles of motion capture revolve around tracking designated points or features on a subject, reconstructing their paths in three-dimensional space, and mapping these paths onto digital models such as avatars or skeletal rigs.[15] Tracking occurs by monitoring the positions of these points over time, often using specialized hardware to detect changes in location and orientation. 3D reconstruction is achieved through methods like triangulation, where multiple viewpoints intersect to determine spatial coordinates, or sensor fusion, which integrates data from various sources to refine positional estimates and reduce errors.[16] The resulting trajectories are then processed to align with a digital skeleton, preserving the natural flow and constraints of the captured motion.[13]

Key components of a motion capture system include sensors, such as reflective markers or wearable devices, which serve as the primary points of detection on the subject.[16] Tracking hardware, including cameras for optical detection or inertial measurement units (IMUs) for body-worn systems, captures raw data on these sensors' movements.[14] Software plays a crucial role in data processing, involving calibration, noise filtering, and animation retargeting to convert the captured signals into usable 3D models.[15]

To fully represent body poses, motion capture systems aim to record six degrees of freedom (6DOF) for each relevant marker or joint, comprising three translational components (position along x, y, and z axes) and three rotational components (yaw, pitch, and roll).[17] These DOF are defined within a coordinate system, typically a global frame for the capture volume and local frames for individual body segments, allowing precise reconstruction of spatial orientation and movement. This 6DOF approach ensures that the digital model captures both the location and attitude of limbs or objects, facilitating realistic pose estimation.[18]

Motion Capture vs. Keyframe Animation

Motion capture (mocap) and keyframe animation are two fundamental techniques in 3D computer animation for creating character and object movements in films, video games, and other media.

Motion capture records real-world movements using sensors, markers, or markerless systems, mapping them to digital characters for highly realistic, nuanced performances with natural subtleties like weight shifts and micro-expressions. Advantages of motion capture include superior realism, faster production for lifelike human motion, efficiency with large volumes of data, and authentic actor performances. Disadvantages include high costs for equipment and setup, the need for cleanup of noisy data, limited flexibility for stylized or impossible movements, and potential for an uncanny or slippery feel without polishing.

Keyframe animation involves manually setting key poses at specific frames, with software interpolating in-betweens. It provides complete creative control for exaggeration, stylization, physically impossible actions, and non-human characters. Advantages include artistic freedom, suitability for cartoonish or fantasy styles, no special hardware needed, and precise timing adjustments. Disadvantages include the time-consuming process, difficulty achieving convincing realism, and risk of unnatural floaty motion if poorly executed.

Direct comparison:

- Control: Keyframe offers full freedom; mocap is constrained to captured data.

- Realism: Mocap excels at natural human motion; keyframe depends on skill and can be stylized.

- Speed: Mocap faster for realistic sequences; keyframe slower but no setup overhead.

- Cost: Keyframe lower upfront; mocap higher due to tech and talent.

- Best uses: Mocap for photorealistic humans, dialogue, performances (e.g., Avatar, Planet of the Apes); keyframe for stylized, creatures, exaggerated action (e.g., Pixar films, fighting games).

- Hybrid approaches: Common practice uses mocap as a base for realism, then keyframe refinements for fixes or stylization, prevalent in AAA games and VFX.
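
The interpolation of in-betweens and the hybrid workflow described in this comparison can be sketched in a few lines. The example assumes linear interpolation on a single joint-angle channel and an additive offset layer on top of captured samples. Production tools typically use spline curves and richer layering, and all names and values here are hypothetical.

```python
from bisect import bisect_right

def interpolate(keyframes, frame):
    """Linearly interpolate a value at `frame` from sorted (frame, value) keyframes."""
    frames = [f for f, _ in keyframes]
    if frame <= frames[0]:
        return keyframes[0][1]
    if frame >= frames[-1]:
        return keyframes[-1][1]
    i = bisect_right(frames, frame)            # index of the first key after `frame`
    (f0, v0), (f1, v1) = keyframes[i - 1], keyframes[i]
    t = (frame - f0) / (f1 - f0)
    return v0 + t * (v1 - v0)

def apply_offset_layer(mocap_curve, offset_keys):
    """Hybrid approach: add a keyframed correction on top of per-frame mocap samples."""
    return [value + interpolate(offset_keys, frame)
            for frame, value in enumerate(mocap_curve)]

# Pure keyframing: three key poses for an elbow angle, in degrees.
elbow_keys = [(0, 0.0), (10, 90.0), (20, 45.0)]
in_between = interpolate(elbow_keys, 5)

# Hybrid: captured samples plus an animator's offset that ramps from 0 to 8 degrees.
mocap_samples = [10.0, 12.0, 15.0, 14.0, 11.0]
adjusted = apply_offset_layer(mocap_samples, [(0, 0.0), (4, 8.0)])

print(in_between, adjusted)
```

Keeping the correction in a separate layer leaves the captured data untouched, so the animator's adjustment can be changed or removed without recapturing.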

Historical Development

The historical development of motion capture traces its roots to early 20th-century analog techniques aimed at capturing human movement for animation. In 1915, animator Max Fleischer invented rotoscoping, a pioneering method that involved projecting live-action film footage onto a drawing surface to trace character outlines frame by frame, enabling more fluid and realistic motion in animated sequences.[19] This technique debuted in Fleischer's Out of the Inkwell series and influenced subsequent animation, including Disney's use in Snow White and the Seven Dwarfs (1937).[20] During the 1940s to 1960s, analog puppet systems advanced the field, with mechanical setups incorporating potentiometers to record joint angles for direct puppet control and early computer animation experiments.[21][22]

The transition to digital motion capture occurred in the 1970s, driven by applications in aerospace and medicine. NASA's biomechanics research in the early 1970s utilized early electrogoniometers and film-based systems to analyze astronaut movements, laying groundwork for precise 3D tracking.[23] By the late 1970s, commercial optical systems emerged, such as the SELSPOT system developed in 1976 by Northern Digital Inc., which employed infrared cameras to track reflective markers on performers for real-time 3D data capture in sports and engineering.[22] In the 1980s, motion capture integrated with computer-generated imagery (CGI) in films, exemplified by early experiments in Tron (1982) and The Abyss (1989), where digitized suit data informed fluid creature animations.[24]

The 1990s marked a boom in adoption across entertainment, fueled by hardware improvements and software accessibility. Video games pioneered widespread use, with Namco's System 21 arcade hardware in 1994 employing optical motion capture for realistic fighter animations in titles like Tekken.[23] In film, Jurassic Park (1993) leveraged motion-captured human references to guide dinosaur behaviors in ILM's CGI sequences, enhancing lifelike movement despite relying on keyframe animation.[20] The decade also saw the introduction of active markers (LED-equipped reflectors that emit light for precise tracking), deployed in systems like Vicon's 1990s models, reducing occlusion issues and enabling multi-actor captures.[22]

Advancements in the 2000s emphasized portability, accuracy, and integration. Inertial measurement unit (IMU)-based suits gained traction, with Xsens launching its MVN suit in 2005, using gyroscopes and accelerometers for wireless, markerless-like full-body tracking suitable for on-location shoots.[24] Markerless prototypes emerged, such as Microsoft's Kinect sensor (2010, building on 2000s research), which employed depth-sensing cameras for vision-based pose estimation without physical markers.[25] Real-time rendering integration accelerated, allowing captured data to drive immediate CGI previews, as seen in production pipelines for films like The Lord of the Rings trilogy (2001–2003).[23]

From the 2010s onward, motion capture shifted toward AI-assisted and portable systems, expanding accessibility for virtual and augmented reality. The 2009 film Avatar popularized facial motion capture through James Cameron's performance capture rigs, using head-mounted cameras to record nuanced expressions for Na'vi characters.[20] AI-driven markerless solutions proliferated, with DeepMotion's 2018 platform employing deep learning models to reconstruct 3D poses from monocular video, democratizing the technology.[25] Portable systems for VR/AR, such as HTC Vive's tracker ecosystem (2016) and Xsens' wireless expansions, enabled untethered, real-time tracking in immersive environments.[24]

In the 2020s, as of 2025, motion capture has further integrated with consumer hardware and AI, including spatial computing devices like the Apple Vision Pro (released 2023), which supports hand and body tracking for immersive simulations without dedicated mocap setups.[26] This era emphasizes hybrid systems combining inertial and vision-based methods for broader applications in real-time telepresence and metaverse environments.[27]

Benefits and Challenges

Advantages

Motion capture technology excels in delivering high fidelity by recording nuanced, natural human movements that are challenging to replicate through manual keyframing alone. This approach captures subtle details, such as micro-expressions or fluid limb articulations, resulting in highly realistic animations unattainable with traditional techniques. For instance, optical systems commonly achieve sub-millimeter precision (typically 0.3 to 1 mm) in controlled settings, serving as the gold standard for applications requiring photorealistic motion. In contrast, IMU-based systems like Rebocap offer affordable full-body tracking using 15 inertial measurement unit sensors, relying on accelerometers and gyroscopes for motion data without external base stations or markers, enhancing accessibility and portability despite potential drift and lower immediate accuracy.[28][29][30]

In terms of time efficiency, motion capture substantially accelerates the animation production pipeline compared to conventional keyframing, often reducing the time needed for motion creation significantly. This efficiency stems from the ability to generate vast amounts of animation data rapidly, enabling real-time previews and iterative refinements during virtual production workflows. Such approaches allow creators to focus on creative enhancements rather than labor-intensive frame-by-frame work.[31]

The versatility of motion capture extends its utility across diverse scenarios, including complex crowd simulations and physics-based interactions that demand synchronized, lifelike behaviors from multiple entities. By leveraging pre-recorded motion data, it democratizes high-quality animation for non-experts, facilitating applications in fields beyond entertainment, such as scientific modeling and industrial design. This adaptability supports scalable implementations, where motion data can be repurposed for varied contexts without starting from scratch.[1][32]

Long-term cost savings are a key advantage, primarily through the creation of reusable motion libraries that minimize the need for repeated live shoots or manual recreations. These libraries enable efficient asset sharing across projects, while integration with AI tools further amplifies scalability by generating virtual actors from existing data, reducing overall production expenditures.[33][34]

Disadvantages and Limitations

Motion capture systems often entail significant initial investments, with professional multi-camera optical setups typically costing between $50,000 and $200,000 or more, depending on the number of cameras, software licenses, and additional hardware like suits and markers. However, as of 2025, more affordable entry-level systems starting at around $5,000 have emerged, lowering barriers for smaller-scale use.[35][36] These expenses are compounded by the need for dedicated studio spaces to accommodate calibration and capture volumes, as well as the requirement for skilled operators to handle complex setup and calibration processes, which can demand specialized training in biomechanics or computer vision.[37]

Data processing in motion capture presents substantial demands, particularly in optical systems where occlusion errors, caused by markers being blocked from camera views, frequently necessitate manual cleanup that can take several hours per capture session to resolve mislabeling, jitter, or missing data points.[38][39] In non-optical inertial systems, such as the Rebocap system that employs 15 IMU sensors relying on accelerometers and gyroscopes for full-body motion tracking without external equipment, gyroscope drifts can accumulate over time, resulting in lower immediate accuracy compared to optical systems, which provide higher precision through base stations and markers but introduce greater complexity and cost.[40][41] Sensor noise from accelerations or drifts requires application of sophisticated filtering algorithms to achieve usable trajectories, adding computational overhead and expertise needs.[42]

Environmental constraints further limit motion capture deployment, as optical systems are highly sensitive to lighting variations and reflections that can distort marker detection, while magnetic systems suffer interference from nearby metal objects or electromagnetic fields.[43][44] Most setups operate within capture volumes typically measuring 3 to 8 meters per side to maintain accuracy, making them unsuitable for large-scale or unstructured outdoor environments where uncontrolled lighting, weather, and occlusions exacerbate tracking failures.[29][45]

Accuracy limitations persist in capturing nuanced details, such as subtle facial expressions or rapid limb motions, where marker-based systems may lose fidelity due to small-scale movements below sensor resolution or high-speed blurring that exceeds frame rates.[46][28] In applications involving video surveillance or wearable tracking, motion capture technologies can raise ethical concerns regarding privacy and consent, potentially leading to misuse in profiling or data breaches.[47] Recent AI-driven methods offer partial mitigation for some of these issues, such as automated occlusion handling.[48]

Applications

Entertainment

Motion capture has transformed entertainment by enabling creators to translate human performances into digital realms, fostering realistic animations and immersive narratives in video games, films, animation, theater, and virtual/augmented reality. This technology captures subtle movements, expressions, and interactions, allowing for seamless blending of live action with computer-generated elements to heighten emotional depth and visual spectacle. Its adoption has streamlined creative workflows, from pre-visualization to final rendering, while emphasizing actor-driven storytelling over purely manual animation.

In video games, motion capture facilitates real-time character controls and procedural animation blending with player input, creating responsive and lifelike gameplay. EA's FIFA series has employed this since the early 2000s, with Sol Campbell providing motion capture data for player actions in FIFA 2000, marking an early integration of authentic soccer movements into digital simulations. Subsequent advancements, such as Real Player Motion Technology in FIFA 18, utilized extensive motion capture sessions with professional athletes to animate new movements, enhancing immersion by combining captured data with algorithmic variations for dynamic on-field interactions. This approach not only replicates professional-level realism but also adapts to user inputs, as seen in HyperMotion Technology for FIFA 22, which processed data from 22 tracked players to generate over 4,000 new animations.

Films and animation leverage performance capture to infuse digital characters with human nuance, particularly for non-human roles that demand complex emotional ranges. Andy Serkis's portrayal of Gollum in The Lord of the Rings trilogy (2001–2003) pioneered this by capturing full-body and facial motions in a skintight suit, allowing subtle expressions like trembling fingers and shifting gazes to convey the character's tormented psyche, which added profound relatability to the CGI entity. In Avatar (2009), James Cameron advanced full-body performance capture for the Na'vi aliens, outfitting actors in suits with over 120 markers to record movements on a virtual set, preserving performative authenticity while enabling expansive blue-screen integration for Pandora's environments. Virtual production in The Mandalorian (2019) further innovated by pairing motion capture with massive LED walls via Industrial Light & Magic's StageCraft, providing actors real-time digital backdrops that react to performances, thus reducing post-production compositing and enhancing on-set immersion. Optical systems predominate in these film applications for their precision in tracking intricate motions.

Theatrical productions and VR/AR experiences employ live motion capture for interactive mapping, extending narrative possibilities beyond traditional stages. The Royal Shakespeare Company's Dream project (2016) fused motion capture with gaming tech to overlay digital characters onto live actors, creating hybrid performances that explore augmented storytelling for theater audiences. In VR/AR, real-time facial and body capture drives expressive avatars, enabling natural gestures like smiling or nodding in metaverse interactions, which fosters emotional connectivity in social platforms and collaborative virtual spaces.

Motion capture's commercial impact is evident in case studies of high-grossing projects, where it has elevated visual storytelling to drive box office success. The Lord of the Rings trilogy, bolstered by Gollum's groundbreaking performance capture, amassed approximately $2.96 billion worldwide (as of November 2025), with the technology's role in authentic creature animation contributing to 17 Academy Awards and widespread acclaim for its effects.[49] Similarly, Avatar's innovative full-body capture propelled it to approximately $2.92 billion in global earnings (as of November 2025), the highest for any film at the time, underscoring how mocap-enabled visuals can captivate audiences on an unprecedented scale.[50] The shift to on-set real-time feedback, pioneered by Weta Digital in projects like The Hobbit (2012), evolved from post-production mocap to live previews of digital performances, accelerating workflows and allowing directors immediate adjustments for narrative fidelity.

Scientific and Industrial Uses

Motion capture technologies play a crucial role in sports biomechanics, enabling precise gait analysis for injury prevention and performance optimization. Systems like Vicon, recognized as a gold standard for 3D motion tracking, are employed in elite sports such as football and rugby to quantify joint kinematics and external loads during activities like jumping and sprinting.[51] For instance, Vicon-based analysis has been used to assess vertical jump mechanics and braking squats, identifying asymmetries that inform training protocols to reduce injury risk in athletes.[52] This 3D joint tracking allows coaches to optimize techniques, as seen in studies validating motion data against force plates for curve sprinting force profiles.[53]

In medical rehabilitation, motion capture facilitates tracking of patient recovery, particularly for post-stroke motor deficits, with applications dating back to the 1990s through early virtual reality integrations. Interactive motion capture systems, such as those using gesture-controlled virtual environments, support functional retraining in inpatient settings, yielding improvements in balance and arm function comparable to conventional therapy.[54] A 2017 randomized controlled trial demonstrated that motion capture-based rehabilitation enhanced standing balance by approximately 4 cm in functional reach tests among subacute stroke patients, without adverse effects.[54] Additionally, virtual reality combined with motion capture aids motor skills therapy by providing immersive feedback, promoting neuroplasticity and better outcomes in upper limb recovery when adjunct to standard care.[55]

Industrial ergonomics leverages motion capture for worker posture assessment to mitigate injury risks, especially in high-repetition environments like automotive assembly lines.
Marker-based and inertial systems capture dynamic joint motions during tasks such as material handling, enabling ergonomic evaluations that identify high-risk postures and reduce musculoskeletal disorder incidence.[56] In automotive plants, motion capture has been integrated into assessments of exoskeleton use, measuring joint angles to optimize assembly workflows and lower strain on upper limbs.[57] For robotics training, human demonstration capture via motion tracking allows robots to learn complex manipulations, such as bimanual skills, by mapping human trajectories to robotic actuators, enhancing task automation in manufacturing.[58]

In military and research contexts, motion capture supports soldier movement simulation for training and tactical analysis. Optical and inertial tracking systems capture real-time postures in virtual simulators, improving targeting accuracy by accounting for weapon sway during dynamic motions like running.[59] This data informs immersive environments where soldiers practice maneuvers without physical risk.[60] For animal locomotion studies, advanced 3D surface motion capture enables quantitative analysis of freely moving subjects, revealing insights into gait patterns, social interactions, and terrain adaptations in species like rodents.[61] Furthermore, motion capture integrates with computer-aided design (CAD) tools and virtual environments to test product ergonomics, simulating factory layouts for injury prevention during design phases.[62]

Core Technologies

Optical Systems

Optical systems in motion capture rely on camera-based tracking of markers that reflect or emit light, enabling precise 3D reconstruction of subject movements through visual line-of-sight observation. These systems typically employ multiple synchronized cameras equipped with infrared illuminators to detect markers without interfering with visible light environments, making them suitable for controlled indoor setups. The core principle involves capturing 2D projections of markers from various angles and reconstructing their 3D positions via geometric algorithms, achieving high fidelity in dynamic scenarios.[63]

Passive markers consist of retro-reflective spheres or beads coated with materials that reflect infrared light back toward the camera lenses, illuminated by rings of IR LEDs surrounding each camera. This design minimizes ambient light interference and allows for the simultaneous tracking of numerous markers across multiple subjects, as the reflective property enables detection from a distance without power sources on the markers themselves. Systems like Vicon, originating in the 1970s for biomechanical analysis, popularized this approach by leveraging passive markers for gait studies and early animation applications. The advantages include scalability for multi-person captures and reduced setup complexity compared to powered alternatives, though they require line-of-sight to avoid occlusions.[64][63]

Active markers, in contrast, use light-emitting diodes (LEDs) that emit infrared pulses at controlled frequencies, providing unique temporal signatures for identification. This precise timing allows the system to distinguish individual markers even during partial occlusions, as each LED's blink pattern serves as a unique ID, facilitating robust tracking in complex scenes with overlapping subjects.
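The idea of a blink pattern acting as a marker ID can be illustrated with a small sketch. It assumes a purely hypothetical encoding in which each marker repeats its ID as a fixed-length binary code, one bit per camera frame, and in which an occluded frame is recorded as `None`. The function name `match_marker_id` and this encoding are illustrative only and do not describe any vendor's actual protocol.

```python
def match_marker_id(observed, known_ids, bits=8):
    """Return the single known ID consistent with the observed blink pattern.

    observed:  list of length `bits`, most significant bit first, holding
               True (LED lit), False (LED dark) or None (frame occluded).
    known_ids: IDs assigned to the markers in the capture volume.
    Returns the matching ID, or None if zero or several IDs fit.
    """
    if len(observed) != bits:
        raise ValueError("expected one observation per bit of the code")

    candidates = []
    for marker_id in known_ids:
        # Expand the ID into its expected on/off sequence (hypothetical encoding).
        pattern = [((marker_id >> (bits - 1 - i)) & 1) == 1 for i in range(bits)]
        # Occluded frames (None) are compatible with any expected bit.
        if all(o is None or o == p for o, p in zip(observed, pattern)):
            candidates.append(marker_id)

    # Only an unambiguous match counts as an identification.
    return candidates[0] if len(candidates) == 1 else None
```

With markers 5, 9 and 12 on a 4-bit code, the pattern `[False, True, None, True]` still resolves to marker 5 despite one occluded frame, whereas `[True, None, None, None]` fits both 9 and 12 and is rejected as ambiguous.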
OptiTrack systems exemplify active-marker technology, integrating active markers with high-speed cameras to achieve low-latency data acquisition suitable for real-time applications like virtual reality. The LED-based emission ensures consistent signal strength regardless of distance, enhancing reliability in larger volumes.[65][66]

Underwater variants adapt optical principles for aquatic environments using specialized cameras housed in waterproof enclosures, often paired with high-power LED strobes to counteract light attenuation in water. These strobes synchronize with camera shutters to illuminate retro-reflective or active markers, enabling clear detection despite refraction and scattering effects. Applications include marine biology research for tracking fish locomotion and swim analysis in sports science, where systems capture full-body kinematics during strokes or dives. Qualisys underwater cameras, for instance, support ranges up to 30 meters with integrated strobes, allowing seamless transitions between above- and below-water tracking.[67][68]

The architecture of optical systems centers on multi-camera arrays, typically 6 to 20 units, calibrated to a shared coordinate frame using reference objects like checkerboard patterns or calibration wands. Calibration establishes intrinsic parameters (e.g., lens distortion) and extrinsic ones (e.g., camera positions), ensuring accurate 2D-to-3D mapping. Triangulation then computes marker positions by intersecting rays from at least two cameras viewing the same point, yielding accuracy of roughly 0.1 to 1 mm in optimal conditions. Post-capture processing involves software such as Autodesk MotionBuilder for retargeting captured data onto digital skeletons, adjusting for anatomical differences without altering the original motion intent. These systems can integrate with inertial sensors for hybrid setups to mitigate occlusions, though optical tracking remains dominant where precision matters most.[69][70]

Non-Optical Systems

Non-optical systems in motion capture rely on wearable sensors and environmental technologies to track body movements without cameras, enabling untethered operation in diverse settings such as outdoor environments or areas with occlusions that hinder optical methods. These approaches prioritize direct measurement of motion parameters like orientation, acceleration, and joint angles through physical sensors attached to the body or integrated into garments, offering advantages in portability and robustness to visual obstructions.

Inertial measurement units (IMUs) form a cornerstone of non-optical motion capture, typically comprising triaxial gyroscopes to detect angular velocity and triaxial accelerometers to measure linear acceleration, which together estimate pose and trajectory over time. Integration of these raw signals, however, introduces drift errors due to noise and bias accumulation, necessitating fusion algorithms like complementary filters or Kalman filters to combine data from multiple sensors for improved accuracy and drift correction. Commercial suits such as the Xsens MVN Link exemplify this technology, using a network of 17-21 IMUs worn on the body to reconstruct full 3D kinematics in real time with sub-degree orientation precision after fusion processing. Similarly, the Rebocap system provides a low-cost IMU-based solution utilizing 15 sensors, each equipped with accelerometers, gyroscopes, and magnetometers, for full-body tracking without requiring external equipment like base stations or cameras.
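The drift correction that sensor fusion provides can be illustrated with a complementary filter, the simpler of the two algorithm families named above. The following is a minimal single-axis sketch, not the method of any particular product: it assumes pitch is the only angle of interest, that the accelerometer measures gravity alone (no linear acceleration), and that the axis convention makes `atan2(accel_x, accel_z)` the tilt angle. The function name, the sample layout and the blend weight `alpha` are all illustrative choices.

```python
import math


def complementary_filter(samples, dt, alpha=0.98):
    """Estimate pitch in radians from gyroscope and accelerometer samples.

    samples: iterable of (gyro_rate, accel_x, accel_z) tuples, where
             gyro_rate is angular velocity about the pitch axis in rad/s.
    dt:      sampling interval in seconds.
    alpha:   weight given to the integrated gyro path (assumed value).
    """
    pitch = 0.0
    estimates = []
    for gyro_rate, accel_x, accel_z in samples:
        # Gyro path: integrating angular velocity is smooth but accumulates bias.
        gyro_pitch = pitch + gyro_rate * dt
        # Accelerometer path: the gravity direction gives an absolute, noisy tilt.
        accel_pitch = math.atan2(accel_x, accel_z)
        # Blend: trust the gyro short-term and the accelerometer long-term.
        pitch = alpha * gyro_pitch + (1.0 - alpha) * accel_pitch
        estimates.append(pitch)
    return estimates
```

For a stationary sensor with a constant gyro bias of 0.01 rad/s sampled at 100 Hz, plain integration would drift by 1 rad over 100 s, while this filter settles at a bounded error of about 0.005 rad. A Kalman filter addresses the same problem with an explicit noise model instead of a fixed blend weight.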
In comparison to optical systems, which achieve higher precision through marker and camera setups but involve greater complexity and cost, Rebocap enables affordable, untethered motion capture, albeit with potential drift over extended periods and lower immediate accuracy.[40]

In consumer VR, inertial systems such as Sony mocopi, which uses six lightweight sensors with smartphone-based processing and has supported VRChat on standalone Quest headsets via Bluetooth and OSC since 2023, provide portable full-body motion capture without cameras or base stations. The HTC VIVE Ultimate Tracker, released in 2023, takes a different approach: each tracker carries its own inside-out cameras and tracks itself in six degrees of freedom (6DoF), enabling wireless full-body setups. It is compatible with SteamVR headsets and has partial Quest support, which often requires a PC bridge, and it works natively with HTC standalone headsets such as the XR Elite. These products enable full-body tracking in social VR platforms with little or no PC setup.

Mechanical systems employ exoskeletons or goniometers to directly quantify joint angles through physical linkages and potentiometers, providing precise, low-latency measurements without reliance on external fields or computations. These devices, often lightweight and portable for gait analysis, constrain motion to predefined ranges to ensure sensor alignment with anatomical joints, limiting their use to controlled rehabilitation or biomechanical studies rather than free-form activities. For instance, wearable goniometers integrated into braces can track knee flexion-extension with errors below 2 degrees during walking, supporting applications in physical therapy where simplicity and direct feedback are paramount.[71][72]

Magnetic systems utilize electromagnetic fields generated by a base transmitter to determine the position and orientation of receiver sensors attached to the performer, leveraging principles of induced currents for 6-degree-of-freedom tracking without line-of-sight requirements.
Introduced in the early 1990s, systems like the Polhemus Fastrak employed alternating current fields to achieve millimeter-level accuracy in controlled spaces, though they remain susceptible to distortions from nearby ferromagnetic materials, which can introduce positional errors of up to 10-20% in metal-rich environments. Despite these limitations, magnetic trackers have historically facilitated animation pipelines in studios without camera setups, with modern variants incorporating calibration to mitigate interference.[73][2]

Stretch sensors integrated into e-textiles represent an emerging non-optical paradigm, embedding piezoresistive or capacitive elements into fabrics to detect deformations from body movements, enabling full-body capture through clothing without rigid attachments. These fabric-based systems measure strain across joints and limbs, converting elongation into electrical signals for pose estimation, and are particularly suited for sports tracking due to their washability and comfort during dynamic activities like running or cycling. Prototypes such as textile-embedded sensor networks have demonstrated correlation coefficients above 0.95 for upper-body kinematics in loose garments, paving the way for unobtrusive monitoring in athletic performance analysis.[74][75]

Advanced and Related Techniques

Markerless and AI-Driven Methods