Recent from talks

Nothing was collected or created yet.

Multiple instruction, multiple data

View on Wikipedia| Flynn's taxonomy |

|---|

| Single data stream |

| Multiple data streams |

| SIMD subcategories[1] |

| See also |

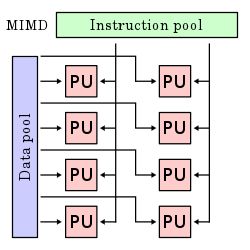

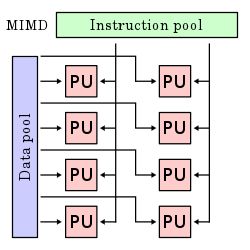

In computing, multiple instruction, multiple data (MIMD) is a technique employed to achieve parallelism. Machines using MIMD have a number of processor cores that function asynchronously and independently. At any time, different processors may be executing different instructions on different pieces of data.

MIMD architectures may be used in a number of application areas such as computer-aided design/computer-aided manufacturing, simulation, modeling, and as communication switches. MIMD machines can be of either shared memory or distributed memory categories. These classifications are based on how MIMD processors access memory. Shared memory machines may be of the bus-based, extended, or hierarchical type. Distributed memory machines may have hypercube or mesh interconnection schemes.

Examples

[edit]An example of MIMD system is Intel Xeon Phi, descended from Larrabee microarchitecture.[2] These processors have multiple processing cores (up to 61 as of 2015) that can execute different instructions on different data.

Most parallel computers, as of 2013, are MIMD systems.[3]

Shared memory model

[edit]In shared memory model the processors are all connected to a "globally available" memory, via either software or hardware means. The operating system usually maintains its memory coherence.[4]

From a programmer's point of view, this memory model is better understood than the distributed memory model. Another advantage is that memory coherence is managed by the operating system and not the written program. Two known disadvantages are: scalability beyond thirty-two processors is difficult, and the shared memory model is less flexible than the distributed memory model.[4]

There are many examples of shared memory (multiprocessors): UMA (uniform memory access), COMA (cache-only memory access).[5]

Bus-based

[edit]MIMD machines with shared memory have processors which share a common, central memory. In the simplest form, all processors are attached to a bus which connects them to memory. This means that every machine with shared memory shares a specific CM, common bus system for all the clients.

For example, if we consider a bus with clients A, B, C connected on one side and P, Q, R connected on the opposite side, any one of the clients will communicate with the other by means of the bus interface between them.

Hierarchical

[edit]MIMD machines with hierarchical shared memory use a hierarchy of buses (as, for example, in a "fat tree") to give processors access to each other's memory. Processors on different boards may communicate through inter-nodal buses. Buses support communication between boards. With this type of architecture, the machine may support over nine thousand processors.

Distributed memory

[edit]In distributed memory MIMD (multiple instruction, multiple data) machines, each processor has its own individual memory location. Each processor has no direct knowledge about other processor's memory. For data to be shared, it must be passed from one processor to another as a message. Since there is no shared memory, contention is not as great a problem with these machines. It is not economically feasible to connect a large number of processors directly to each other. A way to avoid this multitude of direct connections is to connect each processor to just a few others. This type of design can be inefficient because of the added time required to pass a message from one processor to another along the message path. The amount of time required for processors to perform simple message routing can be substantial. Systems were designed to reduce this time loss and hypercube and mesh are among two of the popular interconnection schemes.

Examples of distributed memory (multiple computers) include MPP (massively parallel processors), COW (clusters of workstations) and NUMA (non-uniform memory access). The former is complex and expensive: Many super-computers coupled by broad-band networks. Examples include hypercube and mesh interconnections. COW is the "home-made" version for a fraction of the price.[5]

Hypercube interconnection network

[edit]In an MIMD distributed memory machine with a hypercube system interconnection network containing four processors, a processor and a memory module are placed at each vertex of a square. The diameter of the system is the minimum number of steps it takes for one processor to send a message to the processor that is the farthest away. So, for example, the diameter of a 2-cube is 2. In a hypercube system with eight processors and each processor and memory module being placed in the vertex of a cube, the diameter is 3. In general, a system that contains 2^N processors with each processor directly connected to N other processors, the diameter of the system is N. One disadvantage of a hypercube system is that it must be configured in powers of two, so a machine must be built that could potentially have many more processors than is really needed for the application.

Mesh interconnection network

[edit]In an MIMD distributed memory machine with a mesh interconnection network, processors are placed in a two-dimensional grid. Each processor is connected to its four immediate neighbors. Wrap around connections may be provided at the edges of the mesh. One advantage of the mesh interconnection network over the hypercube is that the mesh system need not be configured in powers of two. A disadvantage is that the diameter of the mesh network is greater than the hypercube for systems with more than four processors.

See also

[edit]References

[edit]- ^ Flynn, Michael J. (September 1972). "Some Computer Organizations and Their Effectiveness" (PDF). IEEE Transactions on Computers. C-21 (9): 948–960. doi:10.1109/TC.1972.5009071.

- ^ "The Perils of Parallel: Larrabee vs. Nvidia, MIMD vs. SIMD". 19 September 2008.

- ^ "MIMD | Intel® Developer Zone". Archived from the original on 2013-10-16. Retrieved 2013-10-16.

- ^ a b Ibaroudene, Djaffer. "Parallel Processing, EG6370G: Chapter 1, Motivation and History." Lecture Slides. St Mary's University, San Antonio, Texas. Spring 2008.

- ^ a b Andrew S. Tanenbaum (1997). Structured Computer Organization (4 ed.). Prentice-Hall. pp. 559–585. ISBN 978-0130959904. Archived from the original on 2013-12-01. Retrieved 2013-03-15.

Multiple instruction, multiple data

View on GrokipediaOverview and Classification

Definition in Flynn's Taxonomy

Flynn's taxonomy, proposed in 1966, classifies computer architectures based on the number of instruction streams and data streams they process simultaneously. The taxonomy divides systems into four categories: single instruction, single data (SISD), which represents conventional sequential processors handling one instruction on one data item at a time; single instruction, multiple data (SIMD), where a single instruction stream operates on multiple data streams in lockstep; multiple instruction, single data (MISD), involving multiple instruction streams processing a single data stream, though this class is rarely implemented; and multiple instruction, multiple data (MIMD). MIMD architectures feature multiple autonomous processors, each capable of executing independent instruction streams on separate data streams concurrently. This setup enables asynchronous parallelism, where processors operate without a global synchronization clock, allowing flexible execution of diverse tasks.[5] In contrast to SIMD systems, which require synchronized operations across processors, MIMD supports greater heterogeneity in workloads. The von Neumann bottleneck, inherent in SISD architectures, arises from the sequential access to a shared memory bus for both instructions and data, limiting performance as processor speeds outpace memory bandwidth.[6] MIMD addresses this limitation by distributing computation across multiple processors, each potentially accessing distinct memory regions or sharing resources in parallel, thereby reducing contention on any single pathway and enhancing overall throughput through concurrent operations.[6]Key Characteristics

MIMD architectures enable asynchronous execution, where multiple processors operate independently without reliance on a global clock, allowing each to follow distinct instruction streams on separate data streams.[5] This independence supports non-deterministic behavior, making MIMD suitable for tasks requiring varied processing rates across processors.[7] Scalability in MIMD systems ranges from small-scale multiprocessors to large clusters comprising thousands of nodes, though communication overhead between processors can limit efficiency as the number of units grows.[5] Key advantages include high flexibility for handling irregular workloads that do not follow uniform patterns, inherent fault tolerance via processor redundancy in distributed setups, and the ability to execute heterogeneous tasks simultaneously across processors.[5] However, these systems introduce disadvantages such as increased programming complexity due to the need for explicit synchronization and data management, non-uniform memory access times that complicate load distribution, and the risk of load imbalance where some processors idle while others are overburdened.[5] Performance in MIMD systems is fundamentally constrained by Amdahl's law, which quantifies the theoretical speedup achievable through parallelism.[8] The law states that the maximum speedup for a program with a parallelizable fraction executed on processors is given by where the serial portion bottlenecks overall gains, emphasizing the importance of minimizing sequential code in MIMD applications.[5]Historical Development

Origins in Parallel Computing

The conceptual foundations of multiple instruction, multiple data (MIMD) architectures in parallel computing trace back to John von Neumann's explorations of self-replicating systems during the late 1940s. In his seminal, posthumously published work on cellular automata, von Neumann conceptualized a theoretical framework for universal constructors capable of self-replication, which necessitated massively parallel operations across independent computational elements to mimic biological processes. This vision of decentralized, concurrent processing units challenged the dominant sequential von Neumann bottleneck model and inspired subsequent ideas in distributed computation.[9] Early efforts in the 1950s further advanced parallel processing concepts through experimental machines that hinted at vector-like operations for scientific computations. By the 1960s, the ILLIAC IV project, initiated in 1965 under Daniel Slotnick—who had collaborated with von Neumann at the Institute for Advanced Study—emerged as a pivotal design. Although primarily SIMD-oriented with its 64-processor array, the ILLIAC IV demonstrated scalable parallel execution and influenced MIMD by addressing synchronization across autonomous processing elements.[10][11] The U.S. Advanced Research Projects Agency (ARPA), established in 1958 amid Cold War pressures following the Sputnik launch, played a critical role in funding such parallel computing initiatives to bolster national security through technological superiority in simulations and cryptography. ARPA's support for the ILLIAC IV exemplified this strategic investment in high-performance computing research during the era.[12] As transistor densities began surging in the mid-1960s—doubling roughly every 18 months per Gordon Moore's observation—the inefficiencies of sequential architectures became evident, driving the transition to parallel paradigms like MIMD to harness the expanding hardware capabilities without proportional power increases. This shift addressed the impending plateau in single-processor clock speeds and enabled more flexible, asynchronous execution across multiple streams. The formal classification of MIMD within Flynn's 1966 taxonomy marked its conceptual maturation as a distinct category.[13][14]Major Milestones and Systems

One of the earliest commercial implementations of MIMD architecture was the Denelcor HEP, introduced in 1978 as a pipelined multiprocessor system capable of supporting up to 16 processors with dynamic scheduling to handle thread synchronization and resource allocation.[15] This design emphasized non-blocking operations and rapid context switching, achieving high throughput for parallel tasks by overlapping instruction execution across multiple streams.[16] In the 1980s, the Intel iPSC, launched in 1985, represented a significant advancement in scalable MIMD systems through its hypercube topology, connecting up to 128 nodes each equipped with an Intel 80286 processor and local memory. This distributed-memory architecture enabled efficient message-passing for scientific computing applications, marking a shift toward commercially viable parallel processing at scale.[17] Building on this momentum, the Connection Machine CM-5, announced in 1991 by Thinking Machines Corporation, introduced a scalable MIMD cluster design with up to thousands of vector processing nodes interconnected via a fat-tree network, supporting both SIMD and MIMD modes for flexible workload distribution.[18] Its modular structure allowed configurations from 32 to over 16,000 processors, facilitating terascale performance in simulations and data-intensive computations.[19] The 1990s saw a pivotal democratization of MIMD computing with the emergence of workstation clusters, exemplified by the Beowulf project initiated at NASA's Goddard Space Flight Center in 1994, which assembled off-the-shelf PCs into high-performance MIMD systems using standard Ethernet for interconnection.[20] This approach drastically reduced costs compared to proprietary hardware, enabling widespread adoption in research and enabling scalable parallel processing without specialized components. The ongoing impact of Moore's Law, which doubled transistor densities roughly every two years, profoundly influenced MIMD evolution by sustaining exponential growth in processing capabilities through the 1990s, but its slowdown in single-core performance around the early 2000s—due to diminishing returns in clock speeds and power efficiency—drove the widespread integration of multicore processors as a natural extension of MIMD principles within commodity CPUs.[13] This transition, evident in designs like Intel's dual-core Pentium D released in 2005, allowed multiple independent instruction streams to execute on shared silicon, perpetuating MIMD scalability in mainstream computing.[21]Architectural Models

Shared Memory Architectures

In shared memory architectures for MIMD systems, multiple processors access a unified address space, enabling implicit communication through load and store operations without explicit message passing. This model simplifies programming compared to distributed alternatives but introduces challenges in maintaining data consistency across processors.[5][22] Uniform Memory Access (UMA) architectures provide all processors with equal access times to the shared memory, typically via a single bus or crossbar interconnect. In UMA systems, processors are symmetric, and memory is centralized, ensuring uniform latency for reads and writes regardless of the requesting processor. This design supports small-scale parallelism effectively but is constrained by the shared interconnect.[23][24] Non-Uniform Memory Access (NUMA) extends shared memory to larger scales by distributing memory banks locally to processor nodes, resulting in faster access to local memory and slower access to remote banks. Access times vary based on the physical proximity of the processor to specific memory modules, often organized in clusters connected by a scalable interconnect like a directory network. NUMA mitigates some UMA bottlenecks while preserving a single address space.[23][25] To ensure data consistency in these architectures, cache coherence protocols maintain that all processors observe a single, valid copy of shared data across private caches. Snooping protocols rely on broadcast mechanisms where each cache monitors bus traffic to detect and resolve inconsistencies, suitable for bus-based UMA systems with few processors. Directory-based protocols, in contrast, use a centralized or distributed directory to track cache line states, avoiding broadcasts and scaling better for NUMA systems with many nodes.[26][27] A widely adopted snooping protocol is MESI, which defines four states for each cache line: Modified (dirty data unique to this cache), Exclusive (clean data unique to this cache), Shared (clean data potentially in multiple caches), and Invalid (stale or unused). Transitions between states occur on read or write misses, ensuring coherence through invalidate or update actions propagated via snoops.[28][29] UMA architectures face scalability limits due to bus contention, where increasing processors beyond 16-32 intensifies competition for the shared interconnect, leading to bandwidth saturation and performance degradation. This bottleneck arises as memory requests serialize on the bus, reducing effective throughput despite added processing power.[23][30] Coherence overhead further impacts performance, modeled approximately as the expected time per access equaling local latency plus the product of remote access probability and remote latency: Here, is the latency for local cache or memory hits, is the fraction of accesses requiring remote involvement, and accounts for directory lookups or snoops. This formula highlights how sharing intensity amplifies delays in larger systems.[31] In contrast to distributed memory architectures, shared memory approaches centralize the address space to facilitate easier synchronization but demand robust coherence mechanisms to handle access disparities.[22]Distributed Memory Architectures

In distributed memory architectures for MIMD systems, each processor maintains its own private local memory without a shared address space, necessitating explicit data exchange between processors to coordinate computations.[32] This design contrasts with shared memory models by eliminating implicit data access, instead relying on programmer-managed communication to transfer data and synchronize operations across nodes.[33] Such systems, often termed multicomputers, enable the construction of large-scale parallel machines by replicating processor-memory pairs connected via an interconnection network.[34] The predominant communication paradigm in these architectures is message passing, exemplified by the Message Passing Interface (MPI) standard released in 1994, which defines a portable interface for distributed memory environments. MPI supports point-to-point operations, such as send and receive primitives for direct data transfer between two processes, as well as collective operations like broadcast, reduce, and all-to-all exchanges that involve multiple processes for efficient group communication. For hybrid approaches that blend message passing with a global view of memory, Partitioned Global Address Space (PGAS) models partition the address space across nodes while allowing one-sided remote access. Unified Parallel C (UPC), an extension of ISO C, implements PGAS by providing shared data structures with affinity to specific threads, facilitating locality-aware parallelism without explicit message coordination.[35] Similarly, Coarray Fortran extends Fortran 95 with coarrays—distributed arrays accessible via remote references—enabling SPMD-style programming where each image (process) owns a portion of the data.[36] A key advantage of distributed memory architectures lies in their scalability, supporting systems with thousands of nodes by avoiding the global memory coherence overhead that limits shared memory designs to smaller scales.[33][32] This scalability arises because memory bandwidth and access contention grow linearly with the number of processors, without centralized bottlenecks. However, communication introduces trade-offs in performance, commonly modeled using the Hockney approximation where the time to transfer a message of size is , with representing startup latency and the per-word transfer cost.[37] This model highlights how latency dominates small messages, while bandwidth limits larger ones, influencing algorithm design in large MIMD clusters.Interconnection Networks

Hypercube Topology

The hypercube topology, also known as the binary n-cube, forms an n-dimensional interconnection network comprising 2^n nodes, with each node directly connected to exactly n neighboring nodes that differ by a single bit in their binary address labels.[38] For example, a 3-dimensional hypercube features 8 nodes (2^3), where each node maintains 3 bidirectional links to its neighbors.[38] This recursive structure allows lower-dimensional hypercubes to be embedded within higher ones, facilitating scalable expansion in distributed MIMD systems by doubling the node count and link degree at each dimension increase.[38] A key advantage of the hypercube lies in its diameter of n hops, representing the longest shortest path between any two nodes and enabling logarithmic communication latency relative to the system size, which supports efficient message passing in large-scale MIMD configurations.[38] Routing algorithms exploit the topology's binary labeling, with dimension-order routing—often termed e-cube routing—directing packets along dimensions in a predetermined sequence (e.g., from least to most significant bit), thereby avoiding cycles and reducing contention in wormhole-routed networks.[39] This deterministic approach ensures minimal paths of length at most n, making it suitable for the fault-tolerant, decentralized control typical of MIMD architectures.[39] Early MIMD systems prominently adopted the hypercube for its balance of connectivity and scalability, including the Intel iPSC/1 introduced in 1985, which interconnected up to 128 nodes in a 7-dimensional hypercube for scalable parallel processing.[40] Similarly, the nCUBE/ten series, launched around the same period, scaled to 1024 processors (10-dimensional) using custom MIMD nodes linked via hypercube topology to deliver up to 500 MFLOPS aggregate performance for scientific workloads.[41] These implementations highlighted the topology's suitability for message-passing paradigms in distributed memory MIMD environments.[41] The hypercube exhibits a bisection width of 2^{n-1} links, which partitions the network into two equal halves of 2^{n-1} nodes each while maintaining high aggregate bandwidth across the cut, underscoring its balanced and fault-resilient properties for MIMD load distribution.[38]Mesh Topology

In mesh interconnection networks for MIMD systems, processing elements are organized in a k-dimensional grid structure, with each node connected directly to up to 2k nearest neighbors along the grid dimensions.[42] For instance, in a 4×4 two-dimensional (2D) mesh, interior nodes have a degree of 4, connecting to north, south, east, and west neighbors, while boundary nodes have fewer connections.[42] This regular, planar layout facilitates scalable implementation in hardware, particularly for applications involving local data dependencies, such as image processing or scientific simulations on large grids.[43] The diameter of a k-dimensional mesh with m nodes per dimension is k × (m - 1), resulting in communication latency that scales linearly with system size and can become a bottleneck for global operations in large-scale MIMD configurations. Toroidal variants address this by adding wraparound links at the grid edges, effectively forming a closed loop in each dimension and reducing the diameter by approximately half—for example, from 2(m - 1) to m in a 2D case—while maintaining the same node degree.[43] This modification enhances overall network efficiency without increasing hardware complexity, making toroidal meshes suitable for distributed MIMD architectures requiring balanced communication.[44] Meshes offer flexibility in algorithm porting through embeddability, allowing hypercube-based parallel algorithms—known for logarithmic diameter—to be mapped onto the mesh with a dilation factor of O(√N in 2D grids of N nodes, where dilation measures the maximum stretch of each communication edge.[45] This embedding enables the execution of hypercube-optimized MIMD programs on mesh hardware with moderate slowdown, preserving much of the algorithmic efficiency for tasks like collective operations.[46] Mesh topologies have been deployed in prominent MIMD supercomputers, including the Cray T3D system introduced in 1993, which utilized a 3D toroidal mesh to interconnect up to 2,048 Alpha processors, achieving 300 MB/s in each direction (600 MB/s bidirectional) per link for scalable parallel processing.[47] In contemporary GPU clusters, NVIDIA's DGX platforms employ NVLink interconnects in a cube-mesh topology among multiple GPUs, providing high-bandwidth, low-latency communication—up to 300 GB/s all-to-all in eight-GPU configurations—to support MIMD-style workloads in AI training and high-performance computing.[48]Programming and Synchronization

Parallel Programming Paradigms

Parallel programming paradigms for MIMD architectures provide software models that enable the execution of independent instruction streams on separate data sets, facilitating scalable computation across multiple processors. These paradigms address task distribution, coordination, and synchronization at a high level, adapting to the inherent flexibility of MIMD systems where processors can operate asynchronously.[49] Thread-based paradigms, such as OpenMP, are designed for shared-memory MIMD environments, where multiple threads access a common address space. OpenMP employs compiler directives to specify parallelism, with constructs like#pragma omp parallel for distributing loop iterations across threads for concurrent execution. This approach simplifies parallelization by incrementally adding directives to sequential code, promoting portability across shared-memory multiprocessors.[50]

Process-based paradigms, exemplified by the Message Passing Interface (MPI), target distributed-memory MIMD systems, where each process maintains private memory and communicates via explicit messages. Core functions such as MPI_Send and MPI_Recv enable point-to-point data exchange between processes, supporting the single-program multiple-data (SPMD) model common in MIMD applications. MPI's standardized interface ensures interoperability across heterogeneous clusters, making it foundational for large-scale distributed computing.[51]

Dataflow models offer an alternative for MIMD programming by emphasizing explicit parallelism through data dependencies rather than traditional control flow, avoiding locks and enabling fine-grained execution in early MIMD prototypes. In these models, computations activate only when input data arrives, as demonstrated in dataflow architectures where operations are represented as nodes in a graph, fostering inherent concurrency without global synchronization. This paradigm influenced subsequent MIMD designs by highlighting demand-driven scheduling for irregular workloads.[52]

Hybrid paradigms combine elements of shared- and distributed-memory approaches, such as integrating MPI for inter-node communication with OpenMP for intra-node thread parallelism in cluster-based MIMD systems. This layered strategy leverages MPI's scalability across nodes while using OpenMP to exploit multi-core processors within each node, reducing communication overhead in hierarchical environments. Hybrid models have become prevalent in high-performance computing for optimizing resource utilization in mixed architectures.[53]

Load balancing techniques in MIMD paradigms mitigate workload imbalances through dynamic scheduling, ensuring even distribution of tasks across processors to maximize utilization. In OpenMP, dynamic scheduling via schedule(dynamic) assigns work chunks to threads at runtime based on availability, adapting to varying computation times. For distributed MIMD, diffusion-based or receiver-initiated methods reallocate tasks by monitoring processor loads and migrating work, as explored in strategies that minimize migration costs while maintaining performance. These techniques are essential for irregular MIMD applications, where static partitioning often leads to inefficiencies.[54][55]