Orthogonality

Orthogonality is a term with various meanings depending on the context.

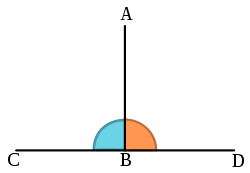

In mathematics, orthogonality is the generalization of the geometric notion of perpendicularity. Although many authors use the two terms perpendicular and orthogonal interchangeably, the term perpendicular is more specifically used for lines and planes that intersect to form a right angle, whereas orthogonal is used in generalizations, such as orthogonal vectors or orthogonal curves.[1][2]

The term is also used in other fields like physics, art, computer science, statistics, and economics.

Etymology

The word comes from the Ancient Greek ὀρθός (orthós), meaning "upright",[3] and γωνία (gōnía), meaning "angle".[4]

The Ancient Greek ὀρθογώνιον (orthogṓnion) and Classical Latin orthogonium originally denoted a rectangle.[5] Later, they came to mean a right triangle. In the 12th century, the post-classical Latin word orthogonalis came to mean a right angle or something related to a right angle.[6]

Mathematics

In mathematics, orthogonality is the generalization of the geometric notion of perpendicularity to the linear algebra of bilinear forms.

Two elements $u$ and $v$ of a vector space with bilinear form $B$ are orthogonal when $B(u, v) = 0$. Depending on the bilinear form, the vector space may contain null vectors, that is, non-zero self-orthogonal vectors, in which case perpendicularity is replaced with hyperbolic orthogonality.
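As a small illustration of the definition above, the following sketch evaluates a diagonal bilinear form on pairs of vectors. The function name `bilinear` and the two signature tuples are assumptions chosen for this example, not notation from the article. Under these assumptions it shows that (1, 1) is an ordinary vector for the Euclidean form but a null (self-orthogonal) vector for an indefinite form.

```python
def bilinear(u, v, signature):
    """Diagonal bilinear form B(u, v) = sum_i s_i * u_i * v_i."""
    return sum(s * a * b for s, a, b in zip(signature, u, v))


# Hypothetical signatures used only for this illustration.
EUCLIDEAN = (1, 1)    # ordinary dot product in the plane
INDEFINITE = (1, -1)  # indefinite form that admits null vectors

e1, e2 = (1, 0), (0, 1)
u = (1, 1)

euclid_e1_e2 = bilinear(e1, e2, EUCLIDEAN)  # 0: perpendicular axes
euclid_u_u = bilinear(u, u, EUCLIDEAN)      # 2: u is not self-orthogonal
indef_u_u = bilinear(u, u, INDEFINITE)      # 0: u is a null vector

print(euclid_e1_e2, euclid_u_u, indef_u_u)
```

The only thing that changes between the two cases is the form itself, which is why orthogonality is a property of the pair (vector space, bilinear form) rather than of the vectors alone.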

In the case of function spaces, families of functions are used to form an orthogonal basis, such as in the contexts of orthogonal polynomials, orthogonal functions, and combinatorics.

Physics

Optics

In optics, polarization states are said to be orthogonal when they propagate independently of each other, as in vertical and horizontal linear polarization or right-handed and left-handed circular polarization.

Special relativity

In special relativity, a time axis determined by a rapidity of motion is hyperbolic-orthogonal to a space axis of simultaneous events, also determined by the rapidity. The theory features relativity of simultaneity.

Hyperbolic orthogonality

In geometry, given a pair of conjugate hyperbolas, two conjugate diameters are hyperbolically orthogonal. This relationship of diameters was described by Apollonius of Perga and has been modernized using analytic geometry. Hyperbolically orthogonal lines appear in special relativity as temporal and spatial directions that show the relativity of simultaneity.
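The relationship between a boosted time axis and its space axis can be checked numerically. The sketch below is a minimal example under stated assumptions: one space dimension, units with c = 1, a Minkowski product of signature (+, -) on (t, x), and the hypothetical helper names `minkowski` and `boosted_axes`. For a frame moving with rapidity a, the time axis points along (cosh a, sinh a) and the axis of simultaneous events along (sinh a, cosh a).

```python
import math


def minkowski(u, v):
    """Minkowski product on (t, x) with signature (+, -), in units where c = 1."""
    return u[0] * v[0] - u[1] * v[1]


def euclidean(u, v):
    """Ordinary dot product, for comparison."""
    return u[0] * v[0] + u[1] * v[1]


def boosted_axes(rapidity):
    """Directions of the time and space axes of a frame with the given rapidity."""
    time_axis = (math.cosh(rapidity), math.sinh(rapidity))
    space_axis = (math.sinh(rapidity), math.cosh(rapidity))
    return time_axis, space_axis


for a in (0.0, 0.5, 1.5):
    t_axis, x_axis = boosted_axes(a)
    print(a, minkowski(t_axis, x_axis), euclidean(t_axis, x_axis))
```

The Minkowski product of the two axes stays at zero for every rapidity, while the Euclidean dot product grows as sinh(2a), so the axes look increasingly "squeezed" in a diagram even though they remain hyperbolically orthogonal.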

Keeping time and space axes hyperbolically orthogonal, as in Minkowski space, gives a constant result when measurements are taken of the speed of light.

Quantum mechanics

In quantum mechanics, a sufficient (but not necessary) condition that two eigenstates of a Hermitian operator, $\psi_m$ and $\psi_n$, are orthogonal is that they correspond to different eigenvalues. This means, in Dirac notation, that $\langle \psi_m | \psi_n \rangle = 0$ if $\psi_m$ and $\psi_n$ correspond to different eigenvalues. This follows from the fact that Schrödinger's equation is a Sturm–Liouville equation (in Schrödinger's formulation) or that observables are given by Hermitian operators (in Heisenberg's formulation).
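A two-level example makes the statement concrete. The sketch below uses a hypothetical 2×2 Hermitian matrix `H` and hand-computed eigenvectors (both are assumptions made for illustration, not taken from the article). It checks that each vector is an eigenvector and that the Dirac bracket of the two, which belong to different eigenvalues, vanishes.

```python
def inner(bra, ket):
    """Dirac bracket <bra|ket>: conjugate-linear in the first argument."""
    return sum(a.conjugate() * b for a, b in zip(bra, ket))


def apply(matrix, vec):
    """Matrix-vector product for a matrix given as a list of rows."""
    return [sum(m * x for m, x in zip(row, vec)) for row in matrix]


# Hypothetical Hermitian operator: it equals its own conjugate transpose.
H = [[2, 1j],
     [-1j, 2]]

psi_m = [1j, 1]   # eigenvector with eigenvalue 3
psi_n = [-1j, 1]  # eigenvector with eigenvalue 1

H_psi_m = apply(H, psi_m)       # equals 3 * psi_m
H_psi_n = apply(H, psi_n)       # equals 1 * psi_n
overlap = inner(psi_m, psi_n)   # 0: the eigenstates are orthogonal

print(H_psi_m, H_psi_n, overlap)
```

The condition is sufficient but not necessary: eigenstates sharing a degenerate eigenvalue can still be chosen orthogonal, but nothing forces an arbitrary pair of them to be.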

Art

In art, the perspective (imaginary) lines pointing to the vanishing point are referred to as "orthogonal lines". The term "orthogonal line" often has a quite different meaning in the literature of modern art criticism. Many works by painters such as Piet Mondrian and Burgoyne Diller are noted for their exclusive use of "orthogonal lines", not with reference to perspective but rather referring to lines that are straight and exclusively horizontal or vertical, forming right angles where they intersect. For example, an essay of the Thyssen-Bornemisza Museum states that "Mondrian [...] dedicated his entire oeuvre to the investigation of the balance between orthogonal lines and primary colours."[8]

Computer science

Orthogonality in programming language design is the ability to use various language features in arbitrary combinations with consistent results.[9] This usage was introduced by Van Wijngaarden in the design of ALGOL 68:

The number of independent primitive concepts has been minimized in order that the language be easy to describe, to learn, and to implement. On the other hand, these concepts have been applied “orthogonally” in order to maximize the expressive power of the language while trying to avoid deleterious superfluities.[10]

Orthogonality is a system design property which guarantees that modifying the technical effect produced by a component of a system neither creates nor propagates side effects to other components of the system. Typically this is achieved through the separation of concerns and encapsulation, and it is essential for feasible and compact designs of complex systems. The emergent behavior of a system consisting of components should be controlled strictly by formal definitions of its logic and not by side effects resulting from poor integration, i.e., non-orthogonal design of modules and interfaces. Orthogonality reduces testing and development time because it is easier to verify designs that neither cause side effects nor depend on them.

Orthogonal instruction set

An instruction set is said to be orthogonal if it lacks redundancy (i.e., there is only a single instruction that can be used to accomplish a given task)[11] and is designed such that instructions can use any register in any addressing mode. This terminology results from considering an instruction as a vector whose components are the instruction fields. One field identifies the registers to be operated upon and another specifies the addressing mode. An orthogonal instruction set uniquely encodes all combinations of registers and addressing modes.[12]

Telecommunications

In telecommunications, multiple access schemes are orthogonal when an ideal receiver can completely reject arbitrarily strong unwanted signals from the desired signal using different basis functions. One such scheme is time-division multiple access (TDMA), where the orthogonal basis functions are nonoverlapping rectangular pulses ("time slots").

Orthogonal frequency-division multiplexing

Another scheme is orthogonal frequency-division multiplexing (OFDM), which refers to the use, by a single transmitter, of a set of frequency multiplexed signals with the exact minimum frequency spacing needed to make them orthogonal so that they do not interfere with each other. Well-known examples include the a, g, and n versions of 802.11 Wi-Fi; WiMAX; ITU-T G.hn; DVB-T, the terrestrial digital TV broadcast system used in most of the world outside North America; and DMT (Discrete Multi Tone), the standard form of ADSL.

In OFDM, the subcarrier frequencies are chosen so that the subcarriers are orthogonal to each other, meaning that crosstalk between the subchannels is eliminated and intercarrier guard bands are not required. This greatly simplifies the design of both the transmitter and the receiver. In conventional FDM, a separate filter for each subchannel is required.
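The choice of subcarrier frequencies can be illustrated with sampled complex exponentials. The sketch below is an idealized model under stated assumptions: one symbol of duration T is sampled `n_samples` times, subcarrier k has frequency k/T (so neighbouring subcarriers are spaced 1/T apart), and the helper names `subcarrier` and `correlate` are hypothetical. Channel effects, cyclic prefixes, and pulse shaping are ignored.

```python
import cmath


def subcarrier(k, n_samples):
    """Samples of exp(2*pi*j*k*t/T) over one symbol period T."""
    return [cmath.exp(2j * cmath.pi * k * n / n_samples) for n in range(n_samples)]


def correlate(a, b):
    """Normalized inner product of two sampled signals."""
    return sum(x * y.conjugate() for x, y in zip(a, b)) / len(a)


N = 64
same = correlate(subcarrier(3, N), subcarrier(3, N))        # magnitude close to 1
on_grid = correlate(subcarrier(3, N), subcarrier(5, N))     # magnitude close to 0
off_grid = correlate(subcarrier(3, N), subcarrier(3.5, N))  # clearly nonzero

print(abs(same), abs(on_grid), abs(off_grid))
```

Subcarriers whose frequencies differ by an integer multiple of 1/T correlate to zero over the symbol, which is why a receiver can separate them without guard bands; a subcarrier placed off that grid leaks into its neighbours.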

Statistics, econometrics, and economics

When performing statistical analysis, independent variables that affect a particular dependent variable are said to be orthogonal if they are uncorrelated,[13] since the covariance forms an inner product. In this case the same results are obtained for the effect of any of the independent variables upon the dependent variable, regardless of whether one models the effects of the variables individually with simple regression or simultaneously with multiple regression. If correlation is present, the factors are not orthogonal and different results are obtained by the two methods. This usage arises from the fact that if centered by subtracting the expected value (the mean), uncorrelated variables are orthogonal in the geometric sense discussed above, both as observed data (i.e., vectors) and as random variables (i.e., density functions). One econometric formalism that is an alternative to the maximum likelihood framework, the Generalized Method of Moments, relies on orthogonality conditions. In particular, the Ordinary Least Squares estimator may be easily derived from an orthogonality condition between the explanatory variables and model residuals.
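The claim about simple versus multiple regression can be checked on a tiny data set. The following sketch uses made-up numbers and hypothetical helper names (`simple_slope`, `multiple_slopes`); it fits a two-regressor least-squares model by solving the 2×2 normal equations on centered data, which is one standard way to obtain the slopes when an intercept is included.

```python
def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def center(v):
    mean = sum(v) / len(v)
    return [x - mean for x in v]


def simple_slope(x, y):
    """Slope of y regressed on x alone (with intercept)."""
    xc, yc = center(x), center(y)
    return dot(xc, yc) / dot(xc, xc)


def multiple_slopes(x1, x2, y):
    """Slopes of y regressed on x1 and x2 jointly, via the normal equations."""
    a, b, yc = center(x1), center(x2), center(y)
    g11, g12, g22 = dot(a, a), dot(a, b), dot(b, b)
    r1, r2 = dot(a, yc), dot(b, yc)
    det = g11 * g22 - g12 * g12
    return ((r1 * g22 - g12 * r2) / det, (g11 * r2 - g12 * r1) / det)


# Illustrative data only.
y = [1.0, 4.0, 2.0, 9.0]
x1 = [-1, -1, 1, 1]
x2 = [-1, 1, -1, 1]       # uncorrelated with x1
x2_corr = [-1, 0, 0, 1]   # correlated with x1

orth_simple = (simple_slope(x1, y), simple_slope(x2, y))            # (1.5, 2.5)
orth_multiple = multiple_slopes(x1, x2, y)                          # (1.5, 2.5)
corr_simple = (simple_slope(x1, y), simple_slope(x2_corr, y))       # (1.5, 4.0)
corr_multiple = multiple_slopes(x1, x2_corr, y)                     # (-1.0, 5.0)

print(orth_simple, orth_multiple, corr_simple, corr_multiple)
```

With orthogonal regressors the off-diagonal term of the normal equations is zero, so each slope is determined by its own variable alone; once the regressors are correlated, that term couples the two estimates and the simple and multiple fits disagree.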

Taxonomy

In taxonomy, an orthogonal classification is one in which no item is a member of more than one group, that is, the classifications are mutually exclusive.

Chemistry and biochemistry

In chemistry and biochemistry, an orthogonal interaction occurs when there are two pairs of substances and each substance can interact with its respective partner, but does not interact with either substance of the other pair. For example, DNA has two orthogonal pairs: cytosine and guanine form a base-pair, and adenine and thymine form another base-pair, but other base-pair combinations are strongly disfavored. As a chemical example, tetrazine reacts with trans-cyclooctene and azide reacts with cyclooctyne without any cross-reaction, so these are mutually orthogonal reactions and can therefore be performed simultaneously and selectively.[14]

Organic synthesis

In organic synthesis, orthogonal protection is a strategy allowing the deprotection of functional groups independently of each other.

Bioorthogonal chemistry

Supramolecular chemistry

In supramolecular chemistry, the notion of orthogonality refers to the possibility of two or more supramolecular, often non-covalent, interactions being compatible, that is, forming reversibly without interference from one another.

Analytical chemistry

In analytical chemistry, analyses are "orthogonal" if they make a measurement or identification in completely different ways, thus increasing the reliability of the measurement. Orthogonal testing thus can be viewed as "cross-checking" of results, and the "cross" notion corresponds to the etymologic origin of orthogonality. Orthogonal testing is often required as a part of a new drug application.

System reliability

In the field of system reliability, orthogonal redundancy is the form of redundancy in which the backup device or method is completely different from the error-prone device or method it protects. The failure mode of an orthogonally redundant backup device or method does not intersect with, and is completely different from, the failure mode of the device or method in need of redundancy, which safeguards the total system against catastrophic failure.

Neuroscience

In neuroscience, a sensory map in the brain which has overlapping stimulus coding (e.g. location and quality) is called an orthogonal map.

Philosophy

In philosophy, two topics, authors, or pieces of writing are said to be "orthogonal" to each other when they do not substantively cover what could be considered potentially overlapping or competing claims. Thus, texts in philosophy can support and complement one another, offer competing explanations or systems, or be orthogonal to each other in cases where the scope, content, and purpose of the pieces of writing are entirely unrelated.

Gaming

In board games such as chess which feature a grid of squares, 'orthogonal' is used to mean "in the same row/'rank' or column/'file'". This is the counterpart to squares which are "diagonally adjacent".[28] In the ancient Chinese board game Go, a player can capture the stones of an opponent by occupying all orthogonally adjacent points.

Law

In law, orthogonality can refer to interests in a proceeding that are not aligned, but also bear no correlation or effect on each other, so as not to create a conflict of interest.

Other examples

Stereo vinyl records encode both the left and right stereo channels in a single groove. The V-shaped groove in the vinyl has walls that are 90 degrees to each other, with variations in each wall separately encoding one of the two analogue channels that make up the stereo signal. The cartridge senses the motion of the stylus following the groove in two orthogonal directions: 45 degrees from vertical to either side.[29] A pure horizontal motion corresponds to a mono signal, equivalent to a stereo signal in which both channels carry identical (in-phase) signals.

References

1. "perpendicular". Merriam-Webster.com Dictionary. Merriam-Webster.

2. "orthogonal". Merriam-Webster.com Dictionary. Merriam-Webster.

3. Liddell and Scott, A Greek–English Lexicon s.v. ὀρθός

4. Liddell and Scott, A Greek–English Lexicon s.v. γωνία

5. Liddell and Scott, A Greek–English Lexicon s.v. ὀρθογώνιον

6. "orthogonal". Oxford English Dictionary (3rd ed.). Oxford University Press. September 2004.

7. J.A. Wheeler; C. Misner; K.S. Thorne (1973). Gravitation. W.H. Freeman & Co. p. 58. ISBN 0-7167-0344-0.

8. "New York City, 3 (unfinished)". Archived from the original on 2009-01-31.

9. Michael L. Scott, Programming Language Pragmatics, p. 228.

10. 1968, Adriaan van Wijngaarden et al., Revised Report on the Algorithmic Language ALGOL 68, section 0.1.2, Orthogonal design

11. Null, Linda & Lobur, Julia (2006). The essentials of computer organization and architecture (2nd ed.). Jones & Bartlett Learning. p. 257. ISBN 978-0-7637-3769-6.

12. Linda Null (2010). The Essentials of Computer Organization and Architecture (PDF). Jones & Bartlett Publishers. pp. 287–288. ISBN 978-1449600068. Archived (PDF) from the original on 2015-10-10.

13. Athanasios Papoulis; S. Unnikrishna Pillai (2002). Probability, Random Variables and Stochastic Processes. McGraw-Hill. p. 211. ISBN 0-07-366011-6.

14. Karver, Mark R.; Hilderbrand, Scott A. (2012). "Bioorthogonal Reaction Pairs Enable Simultaneous, Selective, Multi-Target Imaging". Angewandte Chemie International Edition. 51 (4): 920–2. Bibcode:2012ACIE...51..920K. doi:10.1002/anie.201104389. PMC 3304098. PMID 22162316.

15. Sletten, Ellen M.; Bertozzi, Carolyn R. (2009). "Bioorthogonal Chemistry: Fishing for Selectivity in a Sea of Functionality". Angewandte Chemie International Edition. 48 (38): 6974–98. Bibcode:2009ACIE...48.6974S. doi:10.1002/anie.200900942. PMC 2864149. PMID 19714693.

16. Prescher, Jennifer A.; Dube, Danielle H.; Bertozzi, Carolyn R. (2004). "Chemical remodelling of cell surfaces in living animals". Nature. 430 (7002): 873–7. Bibcode:2004Natur.430..873P. doi:10.1038/nature02791. PMID 15318217. S2CID 4371934.

17. Prescher, Jennifer A; Bertozzi, Carolyn R (2005). "Chemistry in living systems". Nature Chemical Biology. 1 (1): 13–21. doi:10.1038/nchembio0605-13. PMID 16407987. S2CID 40548615.

18. Hang, Howard C.; Yu, Chong; Kato, Darryl L.; Bertozzi, Carolyn R. (2003-12-09). "A metabolic labeling approach toward proteomic analysis of mucin-type O-linked glycosylation". Proceedings of the National Academy of Sciences. 100 (25): 14846–14851. Bibcode:2003PNAS..10014846H. doi:10.1073/pnas.2335201100. ISSN 0027-8424. PMC 299823. PMID 14657396.

19. Sletten, Ellen M.; Bertozzi, Carolyn R. (2011). "From Mechanism to Mouse: A Tale of Two Bioorthogonal Reactions". Accounts of Chemical Research. 44 (9): 666–676. doi:10.1021/ar200148z. PMC 3184615. PMID 21838330.

20. Plass, Tilman; Milles, Sigrid; Koehler, Christine; Schultz, Carsten; Lemke, Edward A. (2011). "Genetically Encoded Copper-Free Click Chemistry". Angewandte Chemie International Edition. 50 (17): 3878–3881. Bibcode:2011ACIE...50.3878P. doi:10.1002/anie.201008178. PMC 3210829. PMID 21433234.

21. Neef, Anne B.; Schultz, Carsten (2009). "Selective Fluorescence Labeling of Lipids in Living Cells". Angewandte Chemie International Edition. 48 (8): 1498–500. Bibcode:2009ACIE...48.1498N. doi:10.1002/anie.200805507. PMID 19145623.

22. Baskin, J. M.; Prescher, J. A.; Laughlin, S. T.; Agard, N. J.; Chang, P. V.; Miller, I. A.; Lo, A.; Codelli, J. A.; Bertozzi, C. R. (2007). "Copper-free click chemistry for dynamic in vivo imaging". Proceedings of the National Academy of Sciences. 104 (43): 16793–7. Bibcode:2007PNAS..10416793B. doi:10.1073/pnas.0707090104. PMC 2040404. PMID 17942682.

23. Ning, Xinghai; Temming, Rinske P.; Dommerholt, Jan; Guo, Jun; Blanco-Ania, Daniel; Debets, Marjoke F.; Wolfert, Margreet A.; Boons, Geert-Jan; Van Delft, Floris L. (2010). "Protein Modification by Strain-Promoted Alkyne-Nitrone Cycloaddition". Angewandte Chemie International Edition. 49 (17): 3065–8. Bibcode:2010ACIE...49.3065N. doi:10.1002/anie.201000408. PMC 2871956. PMID 20333639.

24. Yarema, K. J.; Mahal, LK; Bruehl, RE; Rodriguez, EC; Bertozzi, CR (1998). "Metabolic Delivery of Ketone Groups to Sialic Acid Residues. Application to Cell Surface Glycoform Engineering". Journal of Biological Chemistry. 273 (47): 31168–79. doi:10.1074/jbc.273.47.31168. PMID 9813021.

25. Blackman, Melissa L.; Royzen, Maksim; Fox, Joseph M. (2008). "The Tetrazine Ligation: Fast Bioconjugation based on Inverse-electron-demand Diels-Alder Reactivity". Journal of the American Chemical Society. 130 (41): 13518–9. doi:10.1021/ja8053805. PMC 2653060. PMID 18798613.

26. Stöckmann, Henning; Neves, André A.; Stairs, Shaun; Brindle, Kevin M.; Leeper, Finian J. (2011). "Exploring isonitrile-based click chemistry for ligation with biomolecules". Organic & Biomolecular Chemistry. 9 (21): 7303–5. doi:10.1039/C1OB06424J. PMID 21915395.

27. Sletten, Ellen M.; Bertozzi, Carolyn R. (2011). "A Bioorthogonal Quadricyclane Ligation". Journal of the American Chemical Society. 133 (44): 17570–3. Bibcode:2011JAChS.13317570S. doi:10.1021/ja2072934. PMC 3206493. PMID 21962173.

28. "chessvariants.org chess glossary".

29. For an illustration, see YouTube.

Orthogonality

Origins and General Concept

Etymology

The term "orthogonality" originates from Ancient Greek, combining ὀρθός (orthós), meaning "straight," "right," or "upright," with γωνία (gōnía), meaning "angle," to literally denote "right-angled."[12] This etymological root reflects the geometric notion of perpendicularity at its core. The adjective form "orthogonal" evolved through Medieval Latin orthogōnālis and orthogōnius, both signifying "right-angled," before entering Middle French as orthogonal in the sense of pertaining to right angles.[13]

In English, "orthogonal" first appeared in 1571 in a mathematical context, in Thomas Digges's "A Geometrical Practise, named Pantometria," where it described right angles.[14] The noun "orthogonality," specifically denoting the property or state of being orthogonal, emerged later in the 19th century, with its earliest documented use in 1872 within Philosophical Transactions of the Royal Society, marking a shift toward more abstract mathematical applications.[15]

Although the concept of right angles (implicitly orthogonal) was foundational in Euclid's Elements (c. 300 BCE), where perpendicular lines were defined as those forming equal adjacent angles, the Greek term itself was absent; Euclid relied on descriptive phrases rather than the compound word.[16] By the 19th century, mathematicians like Carl Friedrich Gauss incorporated "orthogonal" into advanced geometric frameworks, such as in his 1827 Disquisitiones generales circa superficies curvas, where he discussed orthogonal coordinate systems on curved surfaces, establishing the term's modern mathematical connotation.[17] In the 20th century, "orthogonal" extended beyond geometry to non-spatial senses, such as unrelatedness in statistics and independence in computing, influenced by its perpendicular origin but applied to abstract structures like vector spaces and functions.

Fundamental Principles

Orthogonality serves as a foundational relation in abstract mathematical and scientific contexts, denoting a form of independence or non-interference between elements, where their interactions yield a null effect under a defined metric. This concept generalizes the geometric idea of perpendicularity, extending it to diverse structures beyond physical lines or planes, such that two elements are orthogonal if they do not influence or overlap in their contributions to a system.[18] In essence, orthogonality embodies mutual exclusivity, ensuring that the properties or behaviors of one element remain unaltered by the presence or variation of another.[1]

A key property of orthogonality is its promotion of decomposability and simplicity within complex systems, as orthogonal elements can be analyzed or modified independently without propagating effects across the whole. This mirrors the behavior of perpendicular lines in Euclidean space, which intersect at a right angle but maintain distinct directions thereafter, providing an intuitive analogy for the abstract principle.[19] Such independence facilitates efficient representations and computations, as seen in the construction of bases or frameworks where orthogonal components span the space without redundancy.[18]

Understanding orthogonality requires a preliminary grasp of elements like vectors within a structured space, where the space defines the framework for assessing relations such as alignment or separation. In engineering and scientific design, this principle manifests in non-interfering components (for instance, modular systems where altering one subsystem leaves others unaffected), enhancing reliability and scalability across disciplines.[20] This broad applicability underscores orthogonality's role as a prerequisite for more specialized interpretations in various fields.[19]

Mathematics

Geometric and Vector Orthogonality

In Euclidean geometry, two lines are orthogonal if they intersect at a right angle of 90 degrees, and this concept extends to planes that intersect such that their normal vectors are perpendicular.[1] Orthogonality in this context captures the idea of perpendicularity, fundamental to constructing geometric figures like rectangles and cubes.[21] For vectors in Euclidean space, two vectors $\mathbf{u}$ and $\mathbf{v}$ are orthogonal if their dot product satisfies $\mathbf{u} \cdot \mathbf{v} = 0$, which geometrically means the vectors are perpendicular and form a 90-degree angle.[22] This condition arises from the dot product formula $\mathbf{u} \cdot \mathbf{v} = \|\mathbf{u}\| \, \|\mathbf{v}\| \cos\theta$, where $\theta = 90^\circ$ yields $\cos\theta = 0$.[22]

Orthogonal vectors exhibit key properties that simplify computations in Euclidean spaces: their dot product is zero, nonzero orthogonal vectors are linearly independent, and the squared norm of the sum of two orthogonal vectors equals the sum of their squared norms, which is the Pythagorean theorem extended to vectors: $\|\mathbf{u} + \mathbf{v}\|^2 = \|\mathbf{u}\|^2 + \|\mathbf{v}\|^2$.[23] These properties ensure that orthogonal bases, such as the standard unit vectors in Cartesian coordinates, preserve vector lengths and angles during projections and transformations, forming the foundation for orthogonal coordinate systems like the 2D xy-plane or 3D xyz-space where axes are mutually perpendicular.
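The dot-product condition and the vector form of the Pythagorean theorem stated above can be verified directly. This is a minimal sketch with illustrative vectors and hypothetical helper names (`dot`, `norm`, `angle_degrees`); floating-point results are compared approximately rather than exactly.

```python
import math


def dot(u, v):
    return sum(a * b for a, b in zip(u, v))


def norm(u):
    return math.sqrt(dot(u, u))


def angle_degrees(u, v):
    """Angle between two nonzero vectors, from u.v = |u||v|cos(theta)."""
    return math.degrees(math.acos(dot(u, v) / (norm(u) * norm(v))))


u = (3.0, 0.0, 0.0)
v = (0.0, 4.0, 0.0)
w = tuple(a + b for a, b in zip(u, v))  # u + v

dot_uv = dot(u, v)                      # 0.0: the vectors are orthogonal
angle = angle_degrees(u, v)             # approximately 90 degrees
lhs = norm(w) ** 2                      # |u + v|^2
rhs = norm(u) ** 2 + norm(v) ** 2       # |u|^2 + |v|^2

print(dot_uv, angle, lhs, rhs)
```

For non-orthogonal vectors the two sides differ by the cross term 2 u·v, which is exactly what the orthogonality condition removes.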
Examples of geometric and vector orthogonality abound in spatial representations: in 2D, the x- and y-axes are spanned by the orthogonal vectors $\mathbf{i} = (1, 0)$ and $\mathbf{j} = (0, 1)$ with $\mathbf{i} \cdot \mathbf{j} = 0$; in 3D, adding the z-axis maintains mutual orthogonality for defining positions and directions.[22] In crystallography, orthogonal lattice structures, such as those in cubic or orthorhombic crystals, rely on perpendicular axes to describe repeating unit cells where lattice vectors meet at 90 degrees, enabling symmetric arrangements of atoms.[25]

The historical roots of orthogonality trace to ancient geometry, where the Pythagorean theorem (circa 500 BCE) established the relationship for perpendicular lines in right triangles, later generalized to vectors.[23] Modern vector orthogonality emerged in the late 19th century through the independent work of J. Willard Gibbs and Oliver Heaviside, who formalized vector analysis with the dot product to quantify perpendicularity in physical applications. Gibbs's Elements of Vector Analysis, privately printed in 1881 and 1884, defined the scalar (dot) product for orthogonal unit vectors, solidifying orthogonality as a core tool in multidimensional geometry.

Linear Algebra and Inner Product Spaces

In linear algebra, an orthogonal basis for a finite-dimensional inner product space is a basis consisting of vectors that are pairwise orthogonal, meaning the inner product of any two distinct vectors is zero. This property simplifies computations such as finding coordinates of vectors in the basis, as the coefficients are simply the inner products divided by the squared norms of the basis vectors. An orthonormal basis extends this by requiring each vector to have unit length, so the norm of every basis vector is 1; such bases are particularly useful for preserving lengths and angles in transformations and for efficient matrix representations like the QR decomposition.

The Gram-Schmidt process provides a constructive method to obtain an orthogonal or orthonormal basis from any linearly independent set of vectors in an inner product space. Given vectors $v_1, \dots, v_n$, the algorithm proceeds iteratively: set $u_1 = v_1$; for $k = 2$ to $n$, define $u_k = v_k - \sum_{j=1}^{k-1} \operatorname{proj}_{u_j}(v_k)$, where the projection subtracts the components of $v_k$ along the previous orthogonal vectors to ensure $u_k$ is orthogonal to $u_1, \dots, u_{k-1}$. To obtain an orthonormal basis, normalize each $u_k$ by dividing by its norm, $e_k = u_k / \|u_k\|$. This process, originally formalized by Erhard Schmidt in 1907, behaves well numerically for well-conditioned inputs and underlies many algorithms in numerical linear algebra.

Central to these concepts is the orthogonal projection, which maps a vector $v$ onto the subspace spanned by another vector $u$ (with $u \neq 0$) as the unique vector in that direction closest to $v$. The formula is $\operatorname{proj}_u(v) = \frac{\langle v, u \rangle}{\langle u, u \rangle}\, u$, where $\langle \cdot, \cdot \rangle$ is the inner product; the remainder $v - \operatorname{proj}_u(v)$ is orthogonal to $u$. For projections onto subspaces with orthogonal bases, the operation extends linearly, enabling decompositions like the least-squares approximation in overdetermined systems.

Inner product spaces abstract the notion of orthogonality beyond finite-dimensional Euclidean spaces to infinite-dimensional settings, such as spaces of continuous functions, where the inner product generalizes the dot product.
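The Gram-Schmidt process and the projection formula described above can be sketched in a few lines. This is a minimal illustration of the classical variant using the standard dot product on real vectors; the function names (`project`, `gram_schmidt`, `normalize`) and the sample vectors are assumptions for the example, and no attempt is made to handle linearly dependent input or to improve floating-point robustness.

```python
import math


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def project(v, u):
    """Orthogonal projection of v onto the line spanned by nonzero u."""
    coeff = dot(v, u) / dot(u, u)
    return [coeff * x for x in u]


def gram_schmidt(vectors):
    """Classical Gram-Schmidt: returns pairwise orthogonal vectors u_1..u_n."""
    ortho = []
    for v in vectors:
        u = list(v)
        for q in ortho:
            # Subtract the component of the original v along each earlier u_j.
            u = [ui - pi for ui, pi in zip(u, project(v, q))]
        ortho.append(u)
    return ortho


def normalize(v):
    length = math.sqrt(dot(v, v))
    return [x / length for x in v]


basis = [(1.0, 1.0, 0.0), (1.0, 0.0, 1.0), (0.0, 1.0, 1.0)]
orthogonal = gram_schmidt(basis)
orthonormal = [normalize(u) for u in orthogonal]

for u in orthogonal:
    print(u)
```

For this input the second vector comes out as (0.5, -0.5, 1.0), the remainder of (1, 0, 1) after removing its component along (1, 1, 0). In floating-point practice the modified variant, which projects the running remainder instead of the original vector, is commonly preferred.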
Two functions $f$ and $g$ are orthogonal if their inner product $\langle f, g \rangle = \int_a^b f(x)\, g(x)\, dx$ over the interval $[a, b]$ is zero, allowing bases of orthogonal functions to represent elements via series expansions similar to vector coordinates. A key application is the Fourier series, where the set $\{1, \cos(nx), \sin(nx) : n \geq 1\}$ forms an orthogonal basis for the space of square-integrable functions on $[-\pi, \pi]$ under the inner product $\langle f, g \rangle = \int_{-\pi}^{\pi} f(x)\, g(x)\, dx$; orthogonality follows from integral identities like $\int_{-\pi}^{\pi} \sin(mx) \cos(nx)\, dx = 0$ for all positive integers $m, n$, enabling the decomposition of periodic functions into sums of these basis elements with coefficients given by inner products.

Advanced Mathematical Applications

In advanced mathematical contexts, orthogonal matrices play a central role in preserving geometric structures and facilitating numerical computations. An orthogonal matrix is a square matrix $Q$ satisfying $Q^\top Q = I$, where $Q^\top$ is the transpose and $I$ is the identity matrix, ensuring that the columns (and rows) form an orthonormal basis.[26] This property implies that orthogonal matrices preserve the Euclidean norm of vectors, as $\|Qx\| = \|x\|$ for any vector $x$, making them ideal for representing rotations and reflections in Euclidean space.[27] A key application is the QR decomposition, where any invertible matrix $A$ factors as $A = QR$ with $Q$ orthogonal and $R$ upper triangular, enabling stable solutions to linear systems and eigenvalue problems through algorithms like the QR iteration.[28]

In combinatorics, orthogonality addresses the independence of combinatorial structures, notably through orthogonal Latin squares and Hadamard matrices, which maximize informational content in designs. Two Latin squares of order $n$ are orthogonal if, when superimposed, every ordered pair of symbols appears exactly once, enabling the construction of mutually orthogonal sets of up to $n - 1$ squares for applications in experimental design theory.[29] Hadamard matrices, $\pm 1$ matrices $H$ of order $n$ (where $n = 1$, $2$, or a multiple of 4), satisfy $H H^\top = n I$, achieving the maximal determinant bound of $n^{n/2}$, which quantifies the highest possible "volume" spanned by their rows in the context of combinatorial optimization.[30] These matrices are pivotal in coding theory and block designs, where their orthogonality ensures minimal interference among components.[31]

Orthogonal polynomials in number theory provide a framework for approximating functions and solving differential equations, characterized by their orthogonality with respect to a weight function over an interval. Classical examples include Legendre polynomials on $[-1, 1]$ with weight 1, and Hermite polynomials on $(-\infty, \infty)$ with weight $e^{-x^2}$, both forming complete orthogonal bases for the corresponding weighted $L^2$ spaces.[32] These sequences satisfy a three-term recurrence relation, such as $(n + 1) P_{n+1}(x) = (2n + 1)\, x\, P_n(x) - n\, P_{n-1}(x)$ for Legendre polynomials, which facilitates efficient computation and reveals their role in spectral methods and quantum mechanics approximations.[33]

Recent developments since 2000 have extended orthogonality to category theory, where it defines independence between functors or morphisms, enhancing the study of accessible categories. Two morphisms $f$ and $g$ are orthogonal, denoted $f \perp g$, if every commutative square with $f$ on one side and $g$ on the opposite side admits a unique diagonal morphism making both resulting triangles commute, capturing "non-interference" in categorical compositions.[34] In accessible categories, definable orthogonality classes (sets of objects or morphisms closed under certain operations) are shown to be small (of bounded cardinality), with the required large-cardinal assumptions depending on the Lévy hierarchy complexity of the defining formulas, impacting model theory and higher-dimensional algebra.[35] This framework unifies prior notions of independence across mathematics, with applications in homotopy theory and logic.

Physics

Optics

In optics, orthogonality describes the perpendicular relationship between light rays and wavefronts, a foundational principle in geometric optics. Light rays, defined as lines perpendicular to the surfaces of constant phase (wavefronts), propagate in the direction normal to these wavefronts in homogeneous media. This orthogonality facilitates ray tracing simulations, where rays are modeled as straight paths that bend at interfaces according to Snell's law, with angles measured relative to the surface normal, the line perpendicular to the interface. For instance, at orthogonal (normal) incidence, where the ray is perpendicular to the boundary, refraction occurs without angular deviation, as the sine of zero degrees yields no bending term in the law.[36][37][38]

Polarization further exemplifies orthogonality through the mutual perpendicularity of electric field components. Unpolarized light can be decomposed into two orthogonal linear polarizations, such as s- (senkrecht, perpendicular to the plane of incidence) and p- (parallel) components, which experience distinct reflection and transmission behaviors at interfaces. In birefringent materials, like calcite, an incident ray splits into ordinary and extraordinary rays polarized along orthogonal principal axes, each propagating with a different refractive index due to the material's anisotropic structure. Polarizing filters exploit this by transmitting vibrations aligned with their axis while absorbing the orthogonal component, enabling applications like glare reduction.[39][40][41][42]

In interferometry, orthogonality supports the independence of resonant modes in Fabry-Pérot cavities, enhancing spectral resolution. These cavities, formed by two parallel mirrors, sustain transverse electromagnetic (TEM) modes whose field distributions are orthogonal, meaning their overlap integrals vanish, preventing energy transfer between modes. This allows the cavity to resolve closely spaced wavelengths based on the free spectral range between orthogonal or higher-order modes, crucial for precision spectroscopy. The geometric orthogonality here echoes vector perpendicularity in defining field directions orthogonal to propagation.[43]

This application of orthogonality traces to the 17th century, when Christiaan Huygens proposed his wave principle in Traité de la Lumière (1678), positing that every point on a wavefront emits secondary spherical wavelets, with the new wavefront as their envelope and rays perpendicular to it, thereby incorporating orthogonality to explain diffraction and propagation.[44]

Special Relativity and Hyperbolic Orthogonality

In special relativity, spacetime is modeled by Minkowski space, a four-dimensional manifold equipped with the Minkowski metric $ds^2 = -c^2\,dt^2 + dx^2 + dy^2 + dz^2$, where $c$ is the speed of light.[45] This indefinite metric distinguishes it from the positive-definite Euclidean metric used in classical geometry, leading to a pseudo-Riemannian structure where the inner product of two four-vectors $u$ and $v$ (with time component $u^0 = c\,t$) is given by $u \cdot v = -u^0 v^0 + u^1 v^1 + u^2 v^2 + u^3 v^3$.[46] Two four-vectors are orthogonal if their Minkowski inner product vanishes, $u \cdot v = 0$; for spacelike vectors (those with positive norm), this orthogonality aligns with intuitive perpendicularity in spatial directions, but the indefinite signature means that a timelike vector (negative norm) can be orthogonal only to spacelike vectors, never to another timelike vector.[47]

Hyperbolic orthogonality arises in this framework due to the hyperbolic geometry of the timelike sector, where the set of unit timelike vectors forms a hyperboloid rather than a sphere.[48] A timelike vector and a spacelike vector are hyperbolically orthogonal if their inner product is zero; in a spacetime diagram the two directions are mirror images of each other across the light cone, and their tilt is set by the rapidity $\varphi$, which parameterizes boosts via $\tanh\varphi = v/c$.[47] For instance, the worldline of a particle at rest (along the time axis) is hyperbolically orthogonal to the spatial axes, which contain the events that are simultaneous in the particle's rest frame; because a boost tilts both the worldline and its orthogonal spatial axes, simultaneity is relative across inertial frames.[48]

Lorentz transformations, which map between inertial frames while preserving the speed of light, maintain the Minkowski metric and thus all orthogonal relations between four-vectors.[46] These transformations form the Lorentz group, satisfying $\Lambda^{\mathsf{T}} \eta \Lambda = \eta$, where $\eta$ is the metric tensor, ensuring that if $u \cdot v = 0$ in one frame, it remains zero in another.[46] In particle physics, successive boosts in perpendicular directions (e.g., along the x and y axes) combine into a boost together with a rotation (the Thomas-Wigner rotation); the composite is still a Lorentz transformation and therefore still preserves every orthogonality relation between four-vectors.[46]

Null vectors, with zero norm ($u \cdot u = 0$), represent lightlike paths and are orthogonal to themselves; they form the boundaries of light cones, separating timelike (causal) from spacelike (acausal) intervals and defining the causal structure of spacetime.[49]

The four-vector formalism, introduced by Minkowski building on Einstein's 1905 theory, uses the Minkowski inner product to express energy-momentum conservation in a frame-independent way: the total four-momentum $P = \sum_i p_i$ (where $p_i = (E_i/c, \mathbf{p}_i)$ for each particle) is a timelike four-vector conserved in collisions, with its norm yielding the invariant rest mass via $P \cdot P = -M^2 c^2$.[50][45] Because the inner product is invariant, splitting a four-momentum into energy (its component along an observer's timelike four-velocity) and spatial momentum (the part orthogonal to that four-velocity) is well defined in every frame.[46]

Quantum Mechanics

In quantum mechanics, the state of a physical system is described by a vector in a complex Hilbert space, where orthogonality plays a central role in defining non-overlapping possibilities. The bra-ket notation, introduced by Paul Dirac in a 1939 paper and adopted in later editions of his monograph The Principles of Quantum Mechanics, provides a compact way to express the inner product between states |ψ⟩ and |φ⟩ as ⟨ψ|φ⟩.[51] Two quantum states are orthogonal if their inner product vanishes, ⟨ψ|φ⟩ = 0, which means that the overlap integral of their wavefunctions is zero (the wavefunctions may still occupy the same region of space); according to the Born rule, a system prepared in one state then has zero probability of being found in the other upon measurement.[52] This property ensures that orthogonal states represent mutually exclusive outcomes, forming the foundation for probabilistic interpretations in quantum theory.[53]

A complete orthonormal basis in the Hilbert space allows any quantum state to be expanded as a linear superposition of basis vectors with complex coefficients. For instance, the position eigenstates |x⟩, satisfying ⟨x|x'⟩ = δ(x - x'), form a continuous basis of this kind, normalized to a Dirac delta rather than to unity, enabling the representation of wavefunctions ψ(x) = ⟨x|ψ⟩; similarly, momentum eigenstates |p⟩ provide another complete set, related to the position basis by Fourier transform.[54] These bases, drawn from the inner product structure of Hilbert spaces as in linear algebra, underpin the expansion of arbitrary states and the resolution of the identity operator, ∫ |x⟩⟨x| dx = I.[55] Orthogonality here guarantees that the expansion coefficients are the projections ⟨φ|ψ⟩ onto the basis states |φ⟩, so that the squared magnitudes of the coefficients of a normalized state sum to one.[56]

Observables, such as position or energy, are represented by self-adjoint (Hermitian) operators whose spectral decomposition features orthogonal eigenspaces for distinct eigenvalues. This orthogonality follows from the properties of Hermitian operators, which have real eigenvalues and allow orthonormal eigenvectors to be chosen within degenerate subspaces.[57] If two Hermitian operators A and B commute, [A, B] = 0, they share a common orthonormal basis of simultaneous eigenstates, so the corresponding observables can be measured together without one measurement disturbing the outcome of the other.[58]

Representative examples illustrate these principles: for a spin-1/2 particle, the spin-up |↑⟩ and spin-down |↓⟩ states along the z-axis are orthogonal, with ⟨↑|↓⟩ = 0, forming a basis for the two-dimensional Hilbert space.[59] In quantum entanglement, the four Bell states, such as |Φ⁺⟩ = (1/√2)(|00⟩ + |11⟩), constitute a complete orthonormal basis for the two-qubit Hilbert space, demonstrating orthogonality among maximally entangled pure states, though mixtures of such states need not be mutually orthogonal.[60]

Computer Science and Information Technology

Orthogonal Instruction Sets

In computer architecture, an orthogonal instruction set is an instruction set architecture (ISA) in which the choice of operation is independent of the choice of operands: instructions carry no implicit dependencies on specific registers, modes, or states, so every instruction can use every register and every addressing mode without restrictions.[61] This design ensures that instructions operate in isolation, avoiding side effects that could complicate program behavior or hardware implementation.[61]

The primary benefits of orthogonal instruction sets include simplified compiler design and optimization, as the uniformity reduces the need to handle irregular interactions between instructions, leading to fewer bugs and more predictable code generation.[61] In contrast, non-orthogonal ISAs, which include some complex instruction set computing (CISC) designs, often impose limitations, such as restricted register access for certain operations, which can increase compilation complexity and error rates. Additionally, orthogonality facilitates hardware pipelining and parallel execution by minimizing inter-instruction dependencies, enhancing overall system performance and ease of verification.[61]

Historically, the IBM System/360, announced in 1964, represented an early milestone in orthogonal design by separating addressing modes from functional operations, enabling a unified ISA across a family of compatible machines and influencing subsequent architectures.[62] This approach evolved through the reduced instruction set computing (RISC) paradigm in the 1980s, with architectures like MIPS and ARM emphasizing orthogonality through uniform register files accessible by all instructions (32 general-purpose registers in MIPS, 16 in the original 32-bit ARM) and fixed-length formats to further eliminate irregularities.[63] Modern extensions of this principle appear in graphics processing units (GPUs), such as AMD's RDNA3, where orthogonal ALU operations support efficient parallel workloads without stalling dependencies.[64]

The degree of orthogonality in an ISA is typically assessed by the extent to which all possible combinations of operations, operands, and addressing modes are supported without exclusions or special cases.[61] For instance, highly orthogonal RISC designs like ARM's A64 achieve near-complete uniformity, with every arithmetic instruction able to use any of the 31 general-purpose registers, contrasting with less orthogonal systems where such flexibility is limited to specific subsets.[65]

Programming and System Design

In software engineering, orthogonality refers to the design of APIs where functions operate independently, with parameters that do not produce hidden interactions or side effects, allowing changes to one component without impacting others. This principle aligns with the Unix philosophy, which advocates for small, modular tools that compose orthogonally to form complex systems, as exemplified by command-line utilities like grep and sort that process streams without assuming specific formats.[66][67]
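The composition idea can be sketched in a few lines of Python. This is only an illustration under assumptions: `grep`, `sort_lines`, and `pipeline` are hypothetical helpers written for this example, not the Unix utilities themselves, and the log lines are made up. Each stage takes a stream of lines and returns a stream of lines, so stages can be combined or reordered without knowing about each other.

```python
from typing import Callable, Iterable, Iterator, List

# A stage maps a stream of lines to a stream of lines (hypothetical type alias).
Stage = Callable[[Iterable[str]], Iterator[str]]


def grep(pattern: str) -> Stage:
    """Return a stage that keeps only the lines containing `pattern`."""
    def stage(lines: Iterable[str]) -> Iterator[str]:
        return (line for line in lines if pattern in line)
    return stage


def sort_lines(reverse: bool = False) -> Stage:
    """Return a stage that sorts the lines it receives."""
    def stage(lines: Iterable[str]) -> Iterator[str]:
        return iter(sorted(lines, reverse=reverse))
    return stage


def pipeline(lines: Iterable[str], *stages: Stage) -> List[str]:
    """Feed `lines` through each stage in turn, like a shell pipe."""
    for stage in stages:
        lines = stage(lines)
    return list(lines)


# Made-up input for illustration.
log = ["warn: disk", "error: net", "info: ok", "error: cpu"]

filtered_then_sorted = pipeline(log, grep("error"), sort_lines())
sorted_then_filtered = pipeline(log, sort_lines(), grep("error"))
```

Because neither stage depends on the other's internals, both orderings produce the same list here, which is the practical meaning of composing orthogonally.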

Key design principles incorporating orthogonality include separation of concerns, where distinct aspects of a system—such as data management, user interface, and business logic—are isolated to minimize interdependencies. In languages like Rust, orthogonal error handling achieves this through the Result<T, E> type and Error trait, which separate error propagation from core logic without introducing exceptions or implicit control flow, enabling explicit and composable error management.[68][69]
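The idea of returning errors as ordinary values can be approximated in Python, with the caveat that this is a loose analogy and not Rust's actual API. The `Ok` and `Err` classes and the `parse_port` and `describe` functions below are hypothetical names introduced for this sketch. The point is that the core logic returns a value describing success or failure, and the caller decides what to do with it, with no hidden control flow.

```python
from dataclasses import dataclass
from typing import Generic, TypeVar, Union

T = TypeVar("T")
E = TypeVar("E")


@dataclass(frozen=True)
class Ok(Generic[T]):
    """Successful outcome carrying a value (hypothetical stand-in for Rust's Ok)."""
    value: T


@dataclass(frozen=True)
class Err(Generic[E]):
    """Failed outcome carrying an error description (stand-in for Rust's Err)."""
    error: E


def parse_port(text: str) -> Union[Ok[int], Err[str]]:
    """Parse a TCP port; failures are returned as values, not raised."""
    if not text.isdecimal():
        return Err(f"not a number: {text!r}")
    port = int(text)
    if not 0 < port < 65536:
        return Err(f"out of range: {port}")
    return Ok(port)


def describe(result: Union[Ok[int], Err[str]]) -> str:
    """Error reporting lives here, separate from the parsing logic."""
    if isinstance(result, Ok):
        return f"listening on {result.value}"
    return f"config error: {result.error}"
```

For example, `describe(parse_port("8080"))` yields a success message while `describe(parse_port("abc"))` yields an error message, and `parse_port` itself never needs to know how errors are presented.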

Representative examples illustrate these principles in practice. In Model-View-Controller (MVC) frameworks, orthogonality is promoted by decoupling the model (data and logic), view (presentation), and controller (input handling), ensuring modifications to the user interface do not affect data processing, as seen in implementations like Ruby on Rails or Spring MVC. Orthogonal persistence in databases extends this to storage, treating persistent and transient objects uniformly regardless of type or lifetime, as in systems like Napier88, where reachability from persistent roots automatically manages data longevity without explicit save operations.[70][71]
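A toy version of the MVC decoupling might look like the following. It is a minimal sketch, not how Ruby on Rails or Spring MVC are implemented; `CounterModel`, `text_view`, `html_view`, and `CounterController` are hypothetical names chosen for illustration. The view can be swapped without touching the model or the controller.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class CounterModel:
    """Model: holds data and the logic that changes it."""
    count: int = 0

    def increment(self, step: int = 1) -> None:
        self.count += step


def text_view(model: CounterModel) -> str:
    """View: plain-text presentation of the model."""
    return f"Count: {model.count}"


def html_view(model: CounterModel) -> str:
    """View: alternative HTML presentation of the same model."""
    return f"<p>Count: <b>{model.count}</b></p>"


class CounterController:
    """Controller: translates input commands into model updates."""

    def __init__(self, model: CounterModel, view: Callable[[CounterModel], str]):
        self.model = model
        self.view = view

    def handle(self, command: str) -> str:
        if command == "inc":
            self.model.increment()
        # Unknown commands leave the model unchanged and just re-render.
        return self.view(self.model)


model = CounterModel()
as_text = CounterController(model, text_view).handle("inc")
as_html = CounterController(model, html_view).handle("show")
```

Replacing `text_view` with `html_view` changes only the presentation; the model's state and the controller's command handling are unaffected.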

Challenges arise in balancing orthogonality with performance, as highly independent components can introduce overhead from communication or indirection, potentially degrading efficiency in resource-constrained environments; for instance, excessive modularity may increase latency in real-time systems. A case study is Lisp's orthogonal syntax, which uses a minimal, homoiconic structure where code and data share the same form, enabling powerful macros and metaprogramming without syntactic exceptions, though this uniformity can complicate readability for non-Lisp programmers.[72][73]

In the 2020s, modern trends emphasize orthogonality in microservices architectures, where each service handles a distinct domain independently and is packaged with containerization tools like Docker, which isolate deployments without altering the underlying application logic. This approach facilitates independent scaling and updates, though it requires careful management of inter-service communication to maintain performance.[74][75]

Communications and Signal Processing

Orthogonal Frequency-Division Multiplexing

Orthogonal frequency-division multiplexing (OFDM) is a digital modulation technique that divides a high-rate data stream into multiple parallel low-rate streams, each modulated onto a distinct subcarrier frequency. These subcarriers are closely spaced orthogonal sinusoids, with frequency spacing equal to the inverse of the symbol duration $T$, ensuring that the signals do not interfere with each other at the receiver despite overlapping spectra. Under ideal synchronization, this orthogonality eliminates inter-carrier interference (ICI), allowing efficient use of the available bandwidth. In practice, the transmitter employs an inverse fast Fourier transform (IFFT) to generate the time-domain OFDM symbol from frequency-domain data symbols, while the receiver uses a fast Fourier transform (FFT) for demodulation, making the system computationally efficient.[76]

The mathematical foundation of OFDM's orthogonality lies in the property of exponential functions over the symbol interval. Specifically, for subcarrier indices $k$ and $l$, and fundamental frequency $f_0 = 1/T$, the condition holds that

$$\frac{1}{T}\int_0^T e^{j 2\pi k f_0 t}\,\left(e^{j 2\pi l f_0 t}\right)^{*}\,dt = \begin{cases} 1, & k = l \\ 0, & k \neq l \end{cases}$$

This integral represents the inner product of the subcarrier signals, yielding zero for distinct frequencies, which confirms their mutual orthogonality and enables perfect recovery of each subcarrier's data without crosstalk.[76]

The technique was pioneered by S. B. Weinstein and P. M. Ebert in 1971, who demonstrated that the discrete Fourier transform (DFT) could efficiently implement multicarrier modulation and demodulation for data transmission, including the addition of a guard interval to preserve orthogonality in dispersive channels.[76] OFDM gained practical traction in the 1990s through standardization efforts, with the European Telecommunications Standards Institute (ETSI) adopting it in the Digital Audio Broadcasting (DAB) standard (EN 300 401) in 1995, marking one of the first commercial deployments for robust audio transmission over multipath environments.
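The subcarrier orthogonality described above can be checked numerically in its sampled form. The sketch below is illustrative only: it uses a direct (slow) DFT instead of an FFT, an assumed symbol length of 8 samples, and made-up QPSK-style data symbols. The function names `subcarrier_inner_product`, `idft`, and `dft` are hypothetical helpers, not part of any standard or library.

```python
import cmath
from typing import List, Sequence


def subcarrier_inner_product(k: int, l: int, n: int) -> complex:
    """Sampled inner product of subcarriers k and l over one n-sample symbol."""
    return sum(
        cmath.exp(2j * cmath.pi * k * t / n) * cmath.exp(-2j * cmath.pi * l * t / n)
        for t in range(n)
    ) / n


def idft(symbols: Sequence[complex]) -> List[complex]:
    """Transmitter side: map frequency-domain symbols to time-domain samples."""
    n = len(symbols)
    return [
        sum(symbols[k] * cmath.exp(2j * cmath.pi * k * t / n) for k in range(n)) / n
        for t in range(n)
    ]


def dft(samples: Sequence[complex]) -> List[complex]:
    """Receiver side: project the samples back onto each subcarrier."""
    n = len(samples)
    return [
        sum(samples[t] * cmath.exp(-2j * cmath.pi * k * t / n) for t in range(n))
        for k in range(n)
    ]


# Made-up data symbols, one per subcarrier (assumed 8 subcarriers).
data = [1 + 1j, -1 + 1j, -1 - 1j, 1 - 1j, 1 + 1j, 1 - 1j, -1 + 1j, -1 - 1j]
time_signal = idft(data)      # all subcarriers overlap in time
recovered = dft(time_signal)  # orthogonality separates them again

same = subcarrier_inner_product(3, 3, 8)       # close to 1
different = subcarrier_inner_product(2, 5, 8)  # close to 0
```

Over an ideal channel the recovered symbols match the transmitted ones up to floating-point error; a practical system would use an FFT and add channel estimation, which this sketch omits.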
OFDM has become integral to modern wireless communications, underpinning standards such as IEEE 802.11a (introduced in 1999) and subsequent Wi-Fi amendments (802.11g/n/ac/ax), where it supports high data rates in the 2.4 GHz and 5 GHz bands. It is also central to 4G LTE and 5G NR cellular networks, as specified by 3GPP, enabling broadband mobile data over wide channels. A primary advantage is its inherent resistance to multipath fading: because each subcarrier occupies a narrow band and has a long symbol duration, a frequency-selective channel appears approximately flat across any single subcarrier, so equalization reduces to one-tap frequency-domain processing per subcarrier.

Despite these benefits, OFDM exhibits significant drawbacks, including a high peak-to-average power ratio (PAPR), where the composite signal's amplitude peaks can be much higher than the average, necessitating linear amplifiers that operate inefficiently and increase power consumption.[77] To maintain orthogonality and mitigate inter-symbol interference in multipath channels while aiding synchronization, a cyclic prefix (a copy of the tail of the OFDM symbol) is prepended to each symbol. It typically comprises 10-25% of the symbol length, which incurs a bandwidth overhead and reduces overall spectral efficiency.[76][77]

Orthogonal Codes and Modulation

Orthogonal codes are binary sequences engineered to exhibit zero cross-correlation between distinct codes, allowing multiple signals to coexist in the same frequency band with minimal interference. This property enables efficient multiple access in communication systems by decorrelating user signals at the receiver. A classic example is the Walsh-Hadamard codes, derived from Hadamard matrices of order $2^n$, which maintain perfect orthogonality when time-aligned and are widely used in downlink CDMA systems to assign unique spreading sequences to users.[78][79][80]

In orthogonal modulation techniques, signals are transmitted over mutually orthogonal dimensions to maximize spectral efficiency and reduce crosstalk. Quadrature Amplitude Modulation (QAM) exemplifies this by employing independent in-phase (I) and quadrature (Q) channels, which are phase-shifted by 90 degrees and thus orthogonal, permitting the simultaneous modulation of two data streams onto a single carrier without mutual interference. This approach doubles the data rate compared to single-channel modulation while preserving signal integrity in additive white Gaussian noise channels.[81][82]

Spread-spectrum systems leverage orthogonal codes to achieve robust multiple access and anti-jamming capabilities. In direct-sequence spread spectrum (DSSS), these codes spread the signal across a wider bandwidth, enhancing resistance to interference. The Global Positioning System (GPS) utilizes pseudo-random noise (PRN) codes, particularly Gold codes for the coarse/acquisition (C/A) signal, which feature low cross-correlation values approximating orthogonality among the 32 satellite codes, enabling precise signal separation and acquisition even in multipath environments.[83][84][85]

The performance benefits of orthogonal codes include significant bit error rate (BER) reduction through effective decorrelation, which suppresses multi-user interference in CDMA setups.
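The decorrelation property can be illustrated with a short sketch. It assumes the Sylvester construction of Hadamard matrices (one standard way to obtain Walsh-Hadamard codes), an ideal noiseless and time-aligned channel, and two made-up users; `hadamard` and `correlate` are hypothetical helper names.

```python
from typing import List, Sequence


def hadamard(order: int) -> List[List[int]]:
    """Build a Hadamard matrix by the Sylvester construction (order = power of two)."""
    if order < 1 or order & (order - 1):
        raise ValueError("order must be a power of two")
    matrix = [[1]]
    while len(matrix) < order:
        # Doubling step: [[H, H], [H, -H]]
        matrix = [row + row for row in matrix] + [
            row + [-x for x in row] for row in matrix
        ]
    return matrix


def correlate(a: Sequence[int], b: Sequence[int]) -> int:
    """Zero-lag correlation (dot product) of two chip sequences."""
    return sum(x * y for x, y in zip(a, b))


codes = hadamard(8)  # eight mutually orthogonal spreading codes of length 8

# Two hypothetical users send one bit each, spread with different codes.
code_a, code_b = codes[1], codes[2]
bit_a, bit_b = +1, -1
channel = [bit_a * ca + bit_b * cb for ca, cb in zip(code_a, code_b)]

# Each receiver correlates with its own code; the other user's signal cancels.
decoded_a = correlate(channel, code_a) // len(code_a)
decoded_b = correlate(channel, code_b) // len(code_b)
```

With time-aligned codes the cross terms vanish exactly, so each bit is recovered from the summed signal; with timing offsets or multipath the cancellation would no longer be perfect.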
For instance, in additive white Gaussian noise (AWGN) channels, Walsh-Hadamard codes yield lower BER compared to non-orthogonal sequences at equivalent signal-to-noise ratios, as validated by simulations showing BER improvements by orders of magnitude for multiuser scenarios. These gains approach the Shannon capacity limits for multiple-access channels, where orthogonal signaling achieves near-optimal rates by partitioning the channel into independent subchannels, bounded by $\tfrac{1}{2}\log_2(1 + \mathrm{SNR})$ bits per real dimension, with minimal interference penalty.[86][87][88]

Advances in the 2010s and 2020s have integrated orthogonal principles with chaotic sequences for enhanced physical layer security. Chaos-based orthogonal modulation schemes, such as those embedding chaotic maps into orthogonal time-frequency spaces or non-orthogonal multiple access (NOMA) frameworks, scramble signals to thwart eavesdroppers while maintaining low BER and high secrecy rates over fading channels. These methods, often applied in satellite and wireless systems, exploit the broadband nature of chaos for key generation and signal masking, outperforming traditional encryption in resource-constrained environments.[89][90][91]

Quantitative Analysis

Statistics and Econometrics

In statistics and econometrics, orthogonality refers to the independence or uncorrelatedness between components of a model, which facilitates estimation, inference, and interpretation by ensuring that effects are separable without interference. This property is foundational in linear models, where orthogonal elements simplify computations and enhance efficiency. For instance, orthogonal designs and estimators leverage this to partition variance and avoid biases from correlated predictors or errors.[92]

Orthogonal polynomials serve as a basis for polynomial regression, particularly for modeling continuous quantitative predictors, where they ensure that higher-degree terms are uncorrelated with lower-degree ones, thus stabilizing coefficient estimates and reducing multicollinearity. Legendre polynomials, defined over the interval $[-1, 1]$ with respect to the uniform weight function, are commonly used for continuous data in least squares regression, as the product of any two distinct polynomials integrates to zero over that interval, allowing sequential testing of polynomial degrees without confounding.[93] In analysis of variance (ANOVA) for balanced experimental designs, orthogonal polynomials enable the decomposition of total variation into orthogonal components, such as linear and quadratic trends, facilitating hypothesis tests on specific patterns. This approach simplifies ordinary least squares (OLS) estimation by decorrelating the design matrix columns, improving numerical stability and interpretability.[92][94]

A key application in econometrics is the instrumental variables (IV) method, which addresses endogeneity in regression models by imposing an orthogonality condition between instruments $Z$ and error terms $\varepsilon$, formally $E[Z'\varepsilon] = 0$, ensuring consistent estimation of causal effects.
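The sample analogue of this orthogonality condition can be sketched for the simplest case of one regressor, one instrument, and an intercept. The numbers below are made up purely for illustration, and `mean` and `iv_fit` are hypothetical helpers; the sketch shows only that the just-identified IV estimate is the one that makes the fitted residuals exactly orthogonal to the instrument in the sample.

```python
from typing import List, Sequence, Tuple


def mean(values: Sequence[float]) -> float:
    return sum(values) / len(values)


def iv_fit(y: Sequence[float], x: Sequence[float], z: Sequence[float]) -> Tuple[float, float]:
    """Just-identified IV estimate of y = alpha + beta * x + error, using instrument z."""
    y_bar, x_bar, z_bar = mean(y), mean(x), mean(z)
    cov_zy = sum((zi - z_bar) * (yi - y_bar) for zi, yi in zip(z, y))
    cov_zx = sum((zi - z_bar) * (xi - x_bar) for zi, xi in zip(z, x))
    beta = cov_zy / cov_zx          # ratio of instrument covariances
    alpha = y_bar - beta * x_bar    # intercept from the sample means
    return alpha, beta


# Made-up illustrative data: instrument z, regressor x, outcome y.
z = [1.0, 2.0, 3.0, 4.0, 5.0, 6.0]
x = [1.5, 1.9, 3.4, 3.8, 5.6, 5.8]
y = [2.1, 3.3, 5.2, 6.9, 9.4, 9.1]

alpha, beta = iv_fit(y, x, z)
residuals: List[float] = [yi - alpha - beta * xi for yi, xi in zip(y, x)]

# Sample moment corresponding to E[Z' e] = 0; zero up to rounding error.
moment = sum(zi * ei for zi, ei in zip(z, residuals)) / len(z)
```

The moment is zero here by construction of the estimator; whether the population condition actually holds for a given instrument is an assumption that the data alone cannot confirm in the just-identified case.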
This condition, first formalized in the context of errors-in-variables models, allows valid instruments to correlate with endogenous regressors while remaining exogenous to the disturbance, as in two-stage least squares procedures.[95]

Orthogonal contrasts in experimental design further exemplify this: linear combinations of group means are constructed so that each contrast's coefficients sum to zero and the products of corresponding coefficients of any two contrasts also sum to zero, partitioning the sum of squares in ANOVA into independent single-degree-of-freedom tests for balanced data. Principal component analysis (PCA) extracts orthogonal factors by transforming correlated variables into uncorrelated principal components via eigenvectors of the covariance matrix, maximizing variance explanation while minimizing redundancy.[96][97]

These properties underpin the efficiency of OLS under classical assumptions, as articulated in the Gauss-Markov theorem, which states that if errors are uncorrelated (orthogonal) with mean zero and constant variance, OLS yields the best linear unbiased estimator (BLUE) among all linear unbiased estimators. Developed in the early 19th century, this theorem relies on the orthogonality of residuals to the regressors in the projection sense, ensuring minimal variance without requiring normality. By avoiding multicollinearity through orthogonal bases or instruments, these techniques enhance model reliability in high-dimensional settings.[98]

Economics

In economics, orthogonality refers to the independence or uncorrelated nature of variables, shocks, or factors, which facilitates the isolation of causal effects in modeling and policy analysis. A key application is in structural vector autoregression (SVAR) models, where economic disturbances are decomposed into orthogonal shocks, that is, uncorrelated innovations that represent underlying structural forces such as monetary policy changes or supply disruptions.[99] These orthogonal shocks enable impulse response analysis to trace how independent disturbances, like a contractionary monetary policy shock, affect variables such as interest rates, economic activity, and bond premiums over time.[100] By assuming orthogonality among shocks, policymakers can identify the isolated impact of fiscal or monetary interventions without confounding correlations, as seen in evaluations of U.S. monetary transmission mechanisms.[101]

In growth theory, orthogonality appears in factor models extending the Solow model, where latent variables representing technology or productivity are treated as orthogonal to observable inputs like capital accumulation and labor growth. This assumption allows empirical tests to attribute cross-country output differences to independent factors, such as investment rates, without bias from correlated technology residuals. For instance, in augmented Solow frameworks, orthogonal latent technology differences explain long-run per capita income variations, isolating their role from proximate causes like savings rates.[102] Such decompositions enhance the model's ability to interpret both temporal growth patterns and international disparities by ensuring factors like human capital accumulation operate independently of physical capital dynamics.[103]

Examples of orthogonality include the decomposition of gross domestic product (GDP) into orthogonal components via factor models, which separate macroeconomic risks and explain variations in economic activity. In these approaches, an optimal orthogonalization isolates the contributions of variables like consumption and investment to GDP fluctuations, revealing the relative importance of domestic versus global drivers in GDP-at-risk forecasts.[104] Similarly, in auction theory, orthogonality underpins assumptions of independent private values, where bidders' signals or valuations are uncorrelated, enabling efficient mechanism design and revenue predictions in sealed-bid formats.[105]

Policy implications arise from the orthogonality principle in fiscal-monetary interactions, where shocks from one policy are assumed independent of the other to avoid biases in impact assessments, as in the Tanzi effect of the 1980s, which highlighted inflation's erosive role on tax revenues amid delayed fiscal adjustments.[106] This independence supports targeted interventions, such as orthogonalizing fiscal shocks to monetary policy in VAR analyses, ensuring unbiased estimates of effects like government spending on output.[107]

Recent developments in behavioral economics incorporate orthogonality for utility separation, particularly through the concept of orthogonal independence, which restricts additivity axioms to cases where alternatives are orthogonal (uncorrelated in attributes). Post-2010 studies show this property holds for spherical preferences, where indifference curves form spheres, allowing clean separation of utility components like risk attitudes from consumption bundles without violating behavioral anomalies.[108] This framework advances utility modeling by accommodating empirical deviations from expected utility while maintaining independence in orthogonal dimensions.[109]

Biological and Chemical Sciences

Taxonomy and Classification

In biological taxonomy, classification systems use non-overlapping, mutually exclusive categories to ensure distinct and unique evolutionary lineages for organisms, particularly within cladistic frameworks. This approach aligns with the core tenet of cladistics, where monophyletic groups (clades) form hierarchical structures without membership overlap at equivalent levels, reflecting shared derived characters (synapomorphies) that trace independent evolutionary paths from common ancestors. Such structures prevent ambiguity in classification by maintaining clear boundaries between taxa, allowing researchers to reconstruct phylogenies based on non-redundant evidence.[110]

The principles underlying classification in the Linnaean system structure taxa into hierarchical ranks with independent diagnostic criteria, meaning that attributes at levels like genus and species operate without correlation or redundancy. For instance, genus-level traits often encompass broader anatomical or ecological features, while species-level distinctions focus on finer reproductive or genetic incompatibilities, enabling a nested yet non-intersecting framework where taxa at the same rank remain mutually exclusive. This independence facilitates precise identification and evolutionary inference, as emphasized in traditional taxonomic practice.[111]

Practical examples include identification guides employing matrix-based or multi-access keys, such as those utilizing sets of independent morphological characters scored separately to pinpoint taxa without sequential bias. A prominent modern application is DNA barcoding, where complementary genetic loci like the mitochondrial COI gene combined with nuclear ITS regions provide non-correlated data for species authentication, enhancing accuracy in complex floras or faunas. These methods ensure robust discrimination by leveraging traits that evolve along distinct pathways.[112]

Challenges arise from convergent evolution, where unrelated lineages develop similar traits due to analogous selective pressures, introducing homoplasy that blurs evolutionary boundaries and misleads cladistic analyses by simulating false synapomorphies. For example, wing structures in bats, birds, and insects exemplify convergence, complicating tree reconstruction if independence assumptions fail. Contemporary solutions incorporate Bayesian models, which probabilistically integrate prior knowledge of trait evolution and account for non-independence via Markov chain Monte Carlo sampling, yielding more reliable hierarchical inferences despite homoplasy.[113][114]

Historically, Charles Darwin's 1859 On the Origin of Species laid foundational influence by advocating classification via multiple independent traits to capture natural affinities, moving beyond artificial systems toward evolutionary independence in character assessment. This perspective evolved into cladistics, formalized by Willi Hennig in the 1950s and 1960s, who rigorously defined monophyletic taxa as non-overlapping units bound by unique synapomorphies, establishing clear boundaries as a cornerstone of phylogenetic systematics.

Chemistry and Organic Synthesis

In organic synthesis, orthogonality refers to the use of protecting groups that can be selectively removed under specific conditions without impacting others, enabling precise control in multi-step reactions. This concept is fundamental to constructing complex molecules, particularly in peptide and natural product synthesis, where multiple functional groups must be manipulated independently. Orthogonal protecting groups minimize side reactions and improve overall yield by allowing sequential deprotections tailored to the reaction sequence.[115]

A landmark strategy emerged in the 1960s with Robert Bruce Merrifield's solid-phase peptide synthesis (SPPS), which used the tert-butoxycarbonyl (Boc) group for temporary Nα-amino protection, removable by mild acid treatment, alongside benzyl-based groups for side chains, which in SPPS are removed at the end of the synthesis with strong acid such as anhydrous hydrogen fluoride. Because both classes are acid-labile, this scheme relies on graduated acid lability rather than strict orthogonality. The approach revolutionized peptide assembly by anchoring the growing chain to a resin, facilitating iterative coupling and deprotection cycles. Later advancements enhanced orthogonality; in 1972, Louis A. Carpino introduced the 9-fluorenylmethoxycarbonyl (Fmoc) group, which is base-labile and thus compatible with acid-labile groups such as Boc, allowing dual protection schemes in SPPS. The Fmoc/Boc pair exemplifies orthogonality, as Fmoc deprotection with piperidine leaves Boc intact, while trifluoroacetic acid removes Boc and leaves Fmoc intact, enabling efficient synthesis of peptides up to 50 residues long with high purity.[116][117][115]

In total synthesis, orthogonal protecting groups facilitate selective functionalization of polyfunctional substrates, such as in the assembly of alkaloids or polyketides, where distinct alcohol or amine groups are unmasked stepwise to direct regioselective couplings. For instance, combinations like allyl esters (removable by palladium catalysis) with silyl ethers (fluoride-labile) allow targeted modifications in carbohydrate or terpene syntheses without global deprotection. Click chemistry further exemplifies orthogonality through modular ligation reactions; the copper-catalyzed azide-alkyne cycloaddition (CuAAC) operates selectively alongside strain-promoted azide-alkyne cycloaddition (SPAAC) or inverse electron-demand Diels-Alder (IEDDA) reactions, enabling multiple orthogonal conjugations in a single pot for dendrimer or conjugate construction. These methods achieve near-quantitative yields under mild conditions, streamlining the synthesis of architecturally complex targets.[118]

The advantages of orthogonal strategies include enhanced efficiency in multi-step sequences, as they reduce purification needs and error propagation from incomplete deprotections, often boosting overall yields by 20-50% in complex syntheses compared to non-orthogonal schemes. Recent developments in the 2010s have introduced light-orthogonal catalysis, where photoremovable protecting groups, such as nitrobenzyl or coumarin derivatives, are cleaved by specific wavelengths of light without thermal or chemical interference, complementing traditional orthogonal sets. This photolabile approach has been applied in spatiotemporal control of reactions, as reviewed in advancements enabling precise release in polymer or small-molecule synthesis.[119]

Bioorthogonal and Supramolecular Chemistry

Bioorthogonal chemistry encompasses chemical reactions that proceed selectively within living organisms without disrupting endogenous biochemical pathways, enabling precise modifications of biomolecules such as proteins, glycans, and lipids. The concept was introduced by Carolyn R. Bertozzi in 2003 to describe transformations involving non-native functional groups that are inert to biological nucleophiles and electrophiles.[120] A foundational example is the strain-promoted azide-alkyne cycloaddition (SPAAC), which facilitates efficient ligation between azides and strained cyclooctynes under physiological conditions, avoiding the toxicity of copper catalysts required in traditional click chemistry. This reaction, first reported by Bertozzi's group in 2004, has rates on the order of 1 M⁻¹ s⁻¹, allowing real-time imaging of cellular processes in vivo. The biocompatibility and high selectivity of SPAAC stem from the orthogonal reactivity of the azide and alkyne moieties, which do not cross-react with abundant biomolecules like thiols or amines.[121] These bioorthogonal reactions have transformed applications in drug delivery and molecular imaging by permitting targeted conjugation of therapeutic payloads or fluorescent probes to specific cellular targets. For instance, SPAAC has been employed to label sialic acids on cell surfaces for tracking tumor glycans in live mice, demonstrating minimal off-target reactivity and enabling high-resolution visualization. The field's impact was recognized with the 2022 Nobel Prize in Chemistry, awarded to Bertozzi, Morten Meldal, and K. Barry Sharpless for pioneering click chemistry and its bioorthogonal extensions, which have accelerated advancements in targeted therapies. Kinetic orthogonality in these systems ensures that multiple reactions can coexist without interference, enhancing modularity in complex biological environments. 
In supramolecular chemistry, orthogonality manifests through the independent operation of distinct non-covalent interactions, such as hydrogen bonding and π-π stacking, within host-guest assemblies to construct hierarchical structures. These interactions are designed to be mutually exclusive, allowing precise control over self-assembly without competitive binding; for example, crown ether-based host-guest complexation can pair orthogonally with ureido-pyrimidinone hydrogen bonds to form dynamic polymers. Such orthogonal motifs enable the creation of responsive materials with tunable properties, including stimuli-responsive disassembly for controlled release. In metal-organic frameworks (MOFs), orthogonal ligands, which feature perpendicular binding arms, facilitate the synthesis of intricate topologies, such as twisted frameworks that enhance porosity and catalytic sites while maintaining structural integrity.[122][123]

The biocompatibility of these supramolecular systems arises from their reliance on weak, reversible bonds under mild aqueous conditions, mirroring biological recognition events and minimizing cytotoxicity. Applications extend to drug delivery, where orthogonal host-guest interactions encapsulate therapeutics for site-specific release, and to imaging, via self-assembling probes that aggregate selectively at disease sites. Overall, the orthogonality of these non-covalent forces, reflected in dissociation constants that differ by orders of magnitude, ensures robust, interference-free functionality in vivo, paralleling the selectivity principles of bioorthogonal covalent reactions.[124]

Analytical and Biochemical Applications

In analytical chemistry, orthogonal methods integrate complementary separation techniques that operate on independent principles to enhance resolution and detection of complex mixtures. For instance, high-performance liquid chromatography coupled with mass spectrometry (HPLC-MS) combines chromatographic separation based on hydrophobicity or charge with mass spectrometric identification by mass-to-charge ratio, allowing for the orthogonal analysis of impurities and degradation products in pharmaceuticals.[125] This approach is particularly valuable in medicinal chemistry for screening and purifying compounds, as the independence of the mechanisms minimizes overlap and improves overall analytical specificity.[126]

In biochemistry, orthogonality extends to techniques like two-dimensional gel electrophoresis (2D-GE), where proteins are separated in the first dimension by isoelectric focusing (based on charge) and in the second by sodium dodecyl sulfate-polyacrylamide gel electrophoresis (based on molecular weight), providing orthogonal dimensions for high-resolution proteomics.[127] Similarly, orthogonal tags in proteomics, such as bioorthogonal noncanonical amino acid tagging (BONCAT), enable selective labeling of newly synthesized proteins without interference from endogenous processes, facilitating quantitative analysis of dynamic proteomes.[128] These methods yield benefits including enhanced resolution of analytes, reduced false positives, and improved quantitative accuracy in identifying low-abundance species.[129]

Orthogonal enzymes play a key role in metabolic engineering by enabling non-interfering pathways that avoid competition with host metabolism, such as orthogonal fatty acid biosynthesis systems in Escherichia coli that redirect flux toward desired products like oleochemicals.[130]

Recent advances in orthogonal spectroscopy, particularly the integration of infrared (IR) and nuclear magnetic resonance (NMR) since the early 2000s, provide complementary structural insights (IR for vibrational modes identifying functional groups and NMR for atomic connectivity), boosting automated structure elucidation in complex biomolecules.[131] Multimodal fusion models combining these spectra have achieved high accuracy in verifying molecular structures, with IR adding orthogonal vibrational data to resolve ambiguities in NMR predictions.[132]

Other Disciplines

Art and Design

In visual arts and architecture, orthogonality refers to the use of perpendicular lines and planes to create structured compositions, often enhancing spatial depth and balance. During the Renaissance, artists employed orthogonal lines as visual rays converging toward vanishing points to simulate three-dimensional space on a two-dimensional surface, a technique pioneered in linear perspective drawing. For instance, Leonardo da Vinci used orthogonals to guide the viewer's eye from the edges of the canvas to a central vanishing point, achieving realistic depictions of architecture and landscapes.[133][134]

In modernist design principles of the early 20th century, orthogonal grids became foundational for abstract compositions, emphasizing harmony through perpendicular arrangements. The De Stijl movement, emerging in the 1910s in the Netherlands, championed these grids as a means to purify form, reducing visual elements to horizontal and vertical lines intersecting at right angles. Piet Mondrian's compositions exemplify this, featuring bold perpendicular black lines dividing colored rectangles into asymmetrical yet balanced fields, as seen in works like Composition with Red, Blue, and Yellow (1930), where orthogonality underscores universal harmony over representational content.[135][136] Similarly, orthogonal symmetry appears in Islamic geometric tiles, where repeating patterns on square grids create intricate, perpendicular motifs that evoke infinite extension and spiritual order, such as the star-and-polygon designs in the Alhambra's decorations.[137][138]

Conceptually, orthogonality in abstract art facilitates the independence of form from narrative content, allowing perpendicular elements to stand as autonomous structures that prioritize relational dynamics over depiction. This separation enables artists to explore pure visual relationships, where lines and shapes interact without symbolic burden.
The Bauhaus school in the 1920s further amplified this influence, promoting orthogonal modularity in design to foster functional, adaptable systems; Walter Gropius and László Moholy-Nagy integrated perpendicular grids into furniture and architecture, viewing them as modular building blocks for industrialized production and spatial organization.[139][140]

System Reliability

Orthogonal redundancy in system reliability refers to the use of independent backup mechanisms designed to fail in uncorrelated ways, ensuring that a single fault does not propagate across all redundant components. This approach enhances fault tolerance by minimizing common-mode failures, where multiple systems succumb to the same error source. In engineering contexts, orthogonal redundancy is achieved through diverse implementation strategies, such as varying algorithms, hardware, or development processes, to promote failure independence.

A seminal example is N-version programming, where multiple functionally equivalent software versions are developed independently from the same specification to tolerate design faults. The core principle is failure independence: faults in different versions are assumed to occur randomly and not to coincide, so that a voting mechanism can select the correct output. This technique has been applied in safety-critical applications, including flight control software, to achieve high reliability without single points of failure. Experimental results are mixed: some studies report low correlated failure rates under independent development conditions, while others have found coincident failures more often than statistical independence would predict, and complete orthogonality remains challenging, in part because of shared specifications.[141]

In safety-critical design, fault orthogonality emphasizes the separation of detection, isolation, and recovery mechanisms to ensure robust error handling, particularly in aviation software where failures can have catastrophic consequences. Principles include using diverse verification techniques, such as structural coverage analysis, requirements-based testing, and formal methods, to orthogonally confirm system behavior and detect latent faults.
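As a brief illustration of the voting step in N-version programming described above, the following sketch runs several versions on the same input and accepts a result only when a strict majority agrees. The three `square_*` functions, the injected fault, and the helper names are hypothetical and exist only for this example; real voters also have to handle timeouts and inexact (for example, floating-point) comparisons.

```python
from collections import Counter
from typing import Callable, Hashable, Sequence


def majority_vote(outputs: Sequence[Hashable]) -> Hashable:
    """Return the output shared by a strict majority of versions."""
    if not outputs:
        raise ValueError("at least one version output is required")
    value, count = Counter(outputs).most_common(1)[0]
    if 2 * count <= len(outputs):
        raise RuntimeError("no strict majority among version outputs")
    return value


def run_n_versions(versions: Sequence[Callable[[int], int]], x: int) -> int:
    """Run every version on the same input and vote on the results."""
    return majority_vote([version(x) for version in versions])


# Three hypothetical, independently written versions of "square an integer".
def square_a(x: int) -> int:
    return x * x


def square_b(x: int) -> int:
    return x ** 2


def square_c(x: int) -> int:
    # Deliberate design fault for illustration: wrong answer for x == 3.
    return 10 if x == 3 else x * x


versions = [square_a, square_b, square_c]
print(run_n_versions(versions, 3))  # prints 9: the faulty version is outvoted
```

If two of the versions shared the same fault, the voter would return the wrong value without any sign of disagreement, which is why the technique depends on failures being uncorrelated.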
This orthogonality in verification reduces the risk of undetected errors escaping into deployment, aligning with guidelines that mandate independence between development and verification activities to avoid bias.[142]

Triple modular redundancy (TMR) exemplifies orthogonal checks in hardware fault tolerance, employing three identical modules with a majority voter to mask single faults, augmented by independent monitoring circuits for error detection. In aerospace guidance systems, TMR with orthogonal redundancy, such as triple-component setups that vote across independent sensors, provides one-failure fault tolerance by isolating failures through diverse signal paths. Similarly, Byzantine fault tolerance (BFT) in distributed systems uses orthogonal replicas to reach consensus on state despite arbitrary faults, tolerating malicious or erroneous behavior in fewer than one-third of the nodes via independent execution and agreement protocols.[143]

A key metric for evaluating orthogonal detection is the coverage factor, defined as the probability that a fault-tolerant system successfully detects and recovers from a fault before it impacts the output. This factor quantifies the effectiveness of redundancy, with values approaching 1 indicating high orthogonality in fault handling; for instance, in nuclear safety analyses, coverage factors above 0.99 are targeted for critical modules to ensure system performability.[144]

Standards like RTCA DO-178C, released in 2011, incorporate orthogonality requirements for aerospace software certification by mandating diverse and independent methods for verification at higher design assurance levels (A and B), including structural code coverage and traceability to mitigate common faults. These guidelines ensure that fault-tolerant architectures, such as those using orthogonal redundancy, meet stringent safety objectives without over-reliance on any single technique.

Neuroscience

In neuroscience, orthogonality refers to the independent encoding of information across distinct neural pathways or populations, allowing the brain to process multiple dimensions of stimuli without interference. This concept is evident in the segregation of sensory modalities, where visual, auditory, and somatosensory cortices maintain distinct neural codes to prevent cross-talk. For instance, the visual cortex (V1) processes orientation and motion independently from auditory processing in the temporal lobe, enabling parallel computation of sensory inputs. Such orthogonal representations facilitate efficient information integration while preserving modality-specific fidelity.[145]

Seminal work by David Hubel and Torsten Wiesel in the 1960s demonstrated orthogonal-like organization in the primary visual cortex through orientation-selective receptive fields. They identified simple and complex cells with elongated fields tuned to specific stimulus orientations, such as horizontal or vertical edges, which collectively span a continuum of orientations (e.g., 0° to 180°). This arrangement implies functional orthogonality, as neurons with perpendicular tuning preferences (e.g., 0° and 90°) respond independently to orthogonal stimulus components, forming the basis for edge detection and feature binding in vision. Their findings, derived from cat and monkey electrophysiology, revealed columnar structures where adjacent neurons share similar orientations, further supporting modular, non-overlapping processing.[146]

Population coding exemplifies orthogonality at the ensemble level, where groups of neurons encode multidimensional stimuli through nearly independent firing patterns. In the visual system, neural populations in area V4 represent object position orthogonally from background features like depth or rotation, maintaining decoding accuracy (correlation coefficients ~0.66–0.70) across variations.
This separation minimizes distortion when decoding multiple stimuli simultaneously, as orthogonal subspaces allow additive vector representations without crosstalk. Similarly, in the second somatosensory cortex (S2), sensory (e.g., texture) and contextual (e.g., category) responses occupy orthogonal neural subspaces, enabling faithful signal processing amid behavioral demands.[147][148][145]

Specific examples illustrate these principles in navigation and sensory transmission. Hippocampal place cells generate orthogonal firing patterns across environments, with uncorrelated activity maps in CA3 for different rooms (e.g., only 6% of cells active in ≥6 of 11 similar rooms), supporting high-capacity episodic memory by decorrelating representations to avoid interference. In the optic nerve, retinal ganglion cells (RGCs) transmit orthogonal signals via diverse subtypes, such as ON/OFF center-surround and direction-selective cells, each carrying independent visual features (e.g., contrast vs. motion) without overlap in their spike trains, ensuring lossless relay to the lateral geniculate nucleus.[149][150]

Functional magnetic resonance imaging (fMRI) studies from the 2000s onward have confirmed orthogonality through task designs that isolate cognitive processes. By presenting orthogonal stimuli (e.g., concurrent verbal and spatial tasks), researchers observed independent activation in prefrontal and parietal modules, with minimal overlap in BOLD signals, underscoring functional independence in higher cognition. These designs reveal how brain regions process attributes like scene temperature or sound only when task-relevant, aligning with modular theories.[151][152]

Orthogonality underpins cognitive modularity, allowing specialized processing while enabling integration, but disruptions lead to disorders like synesthesia.
In grapheme-color synesthesia, cross-activation between adjacent sensory areas (e.g., grapheme-recognition and color-processing regions of the fusiform gyrus) erodes the boundaries between processing streams, causing involuntary blending (e.g., letters evoking colors) due to hyperconnectivity or reduced inhibition. This non-orthogonality highlights the brain's reliance on segregated pathways for typical perception, with implications for understanding conditions involving sensory overflow.[153]

Philosophy