Analog television

Analog television (or analogue television), the original television technology, uses analog signals to transmit video and audio.[1] In an analog television broadcast, brightness, color, and sound are represented by the amplitude, phase, and frequency of the signal.

Because an analog signal can take any value within a continuous range, electronic noise and interference picked up along the way become part of the received signal. Thus, a moderately weak signal becomes snowy and subject to interference. In contrast, picture quality from a digital television (DTV) signal remains good until the signal level drops below a certain threshold (the "digital cliff"), where reception is either no longer possible or becomes intermittent.

Analog television may be wireless (as in terrestrial and satellite television) or distributed over a cable network (cable television).

All broadcast television systems traditionally used analog signals. Beginning in the 2000s, motivated by the lower bandwidth requirements of compressed digital signals, a digital television transition has been underway in most of the world, with different deadlines for the cessation of analog broadcasts. Countries that still primarily use analog systems are mostly in Africa, Asia, and South America.

Development

The earliest systems of analog television were mechanical television systems that used spinning discs with patterns of holes punched into them to scan an image. A similar disc reconstructed the image at the receiver. Synchronization of the receiver disc's rotation was handled through sync pulses broadcast with the image information. Camera systems used similar spinning discs and required intensely bright illumination of the subject for the light detector to work. The reproduced images from these mechanical systems were dim, very low resolution, and flickered severely.

Analog television did not begin in earnest as an industry until the development of the cathode ray tube (CRT), which uses a focused electron beam to trace lines across a phosphor-coated surface. The electron beam could be swept across the screen much faster than any mechanical disc system, allowing for more closely spaced scan lines and much higher image resolution. Also, far less maintenance was required of an all-electronic system compared to a mechanical spinning disc system. All-electronic systems became popular with households after World War II.

Standards

Broadcasters of analog television encode their signal using different systems. The official systems of transmission were defined by the ITU in 1961 as: A, B, C, D, E, F, G, H, I, K, K1, L, M and N.[2] These systems determine the number of scan lines, frame rate, channel width, video bandwidth, video-audio separation, and so on. A color encoding scheme (NTSC, PAL, or SECAM) could be added to the base monochrome signal.[3] The resulting signal is then modulated onto a very high frequency (VHF) or ultra high frequency (UHF) carrier wave (RF modulation).

Each frame of a television image is composed of scan lines drawn on the screen. The lines are of varying brightness; the whole set of lines is drawn quickly enough that the human eye perceives it as one image. The process repeats and the next sequential frame is displayed, allowing the depiction of motion. The analog television signal contains timing and synchronization information so that the receiver can reconstruct a two-dimensional moving image from a one-dimensional time-varying signal.
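
As a toy illustration of rebuilding a two-dimensional image from a one-dimensional signal, the sketch below treats an idealized signal as a flat list of brightness samples and cuts it into equal-length lines. It assumes a fixed, hypothetical number of active samples per line and ignores blanking, sync pulses, and interlace; a real receiver finds the line boundaries from the synchronization information instead.

```python
from typing import List, Sequence


def rebuild_frame(samples: Sequence[float], samples_per_line: int) -> List[List[float]]:
    """Cut a one-dimensional brightness stream into consecutive scan lines.

    Idealized model: every line carries exactly `samples_per_line` samples and
    the stream starts at the top-left corner of the picture.
    """
    if samples_per_line <= 0:
        raise ValueError("samples_per_line must be positive")
    if len(samples) % samples_per_line != 0:
        raise ValueError("stream length is not a whole number of lines")
    return [
        list(samples[start:start + samples_per_line])
        for start in range(0, len(samples), samples_per_line)
    ]


# Three lines of four samples each: a bright vertical bar in the second column.
stream = [0.0, 1.0, 0.0, 0.0] * 3
frame = rebuild_frame(stream, samples_per_line=4)
for row in frame:
    print(row)  # [0.0, 1.0, 0.0, 0.0] on every line
```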

The first commercial television systems were black-and-white; the beginning of color television was in the 1950s.[4]

A practical television system needs to take luminance, chrominance (in a color system), synchronization (horizontal and vertical), and audio signals, and broadcast them over a radio transmission. The transmission system must include a means of television channel selection.

Analog broadcast television systems come in a variety of frame rates and resolutions. Further differences exist in the frequency and modulation of the audio carrier. The monochrome combinations still existing in the 1950s were standardized by the International Telecommunication Union (ITU) as capital letters A through N. When color television was introduced, the chrominance information was added to the monochrome signals in a way that black and white televisions ignore. In this way backward compatibility was achieved.

There are three standards for the way the additional color information can be encoded and transmitted. The first was the American NTSC system. The European and Australian PAL and the French and former Soviet Union SECAM standards were developed later and attempt to cure certain defects of the NTSC system. PAL's color encoding is similar to NTSC's. SECAM, though, uses a different modulation approach than PAL or NTSC. PAL had a late evolution called PALplus, allowing widescreen broadcasts while remaining fully compatible with existing PAL equipment.

In principle, all three color encoding systems can be used with any scan line/frame rate combination. Therefore, in order to describe a given signal completely, it is necessary to quote the color system plus the broadcast standard as a capital letter. For example, the United States, Canada, Mexico and South Korea used (or use) NTSC-M,[a] Japan used NTSC-J,[b] the UK used PAL-I,[c] France used SECAM-L,[d] much of Western Europe and Australia used (or use) PAL-B/G,[e] most of Eastern Europe uses SECAM-D/K or PAL-D/K and so on.

Not all of the possible combinations exist. NTSC is only used with system M, even though there were experiments with NTSC-A (405 line) in the UK and NTSC-N (625 line) in part of South America. PAL is used with a variety of 625-line standards (B, G, D, K, I, N) but also with the North American 525-line standard, accordingly named PAL-M. Likewise, SECAM is used with a variety of 625-line standards.

For this reason, many people refer to any 625/25 type signal as PAL and to any 525/30 signal as NTSC, even when referring to digital signals; for example, on DVD-Video, which does not contain any analog color encoding, and thus no PAL or NTSC signals at all.
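
The 625/25 and 525/30 shorthand carries enough information to derive the horizontal line frequency, which is simply lines per frame multiplied by frames per second. The sketch below does that arithmetic with exact fractions. The 30000/1001 frame rate used for color NTSC is a widely used value that this article does not state, so treat it as an added assumption.

```python
from fractions import Fraction


def line_frequency_hz(lines_per_frame: int, frames_per_second: Fraction) -> Fraction:
    """Horizontal scan frequency: lines per frame times frames per second."""
    return lines_per_frame * frames_per_second


pal_625_25 = line_frequency_hz(625, Fraction(25))
mono_525_30 = line_frequency_hz(525, Fraction(30))
# Assumption: color NTSC runs at 30000/1001 (about 29.97) frames per second.
ntsc_color = line_frequency_hz(525, Fraction(30000, 1001))

print(float(pal_625_25))              # 15625.0
print(float(mono_525_30))             # 15750.0
print(round(float(ntsc_color), 2))    # 15734.27
print(1_000_000 / float(pal_625_25))  # 64.0 (microseconds per line)
```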

Although a number of different broadcast television systems are in use worldwide, the same principles of operation apply.[5]

Displaying an image

A CRT television displays an image by scanning a beam of electrons across the screen in a pattern of horizontal lines known as a raster. At the end of each line, the beam returns to the start of the next line; at the end of the last line, the beam returns to the beginning of the first line at the top of the screen. As it passes each point, the intensity of the beam is varied, varying the luminance of that point. A color television system is similar except there are three beams that scan together and an additional signal known as chrominance controls the color of the spot.

When analog television was developed, no affordable technology for storing video signals existed; the luminance signal had to be generated and transmitted at the same time as it was displayed on the CRT. It was therefore essential to keep the raster scanning in the camera (or other device for producing the signal) in exact synchronization with the scanning in the television.

The physics of the CRT require that a finite time interval be allowed for the spot to move back to the start of the next line (horizontal retrace) or the start of the screen (vertical retrace). The timing of the luminance signal must allow for this.

The human visual system exhibits the phi phenomenon: quickly displaying successive scan images creates the illusion of smooth motion. Flickering of the image can be partially solved using a long-persistence phosphor coating on the CRT so that successive images fade slowly. However, slow phosphor has the negative side effect of causing image smearing and blurring when rapid on-screen motion occurs.

The maximum frame rate depends on the bandwidth of the electronics and the transmission system, and on the number of horizontal scan lines in the image. A frame rate of 25 or 30 hertz is a satisfactory compromise, and each frame is built from two interlaced video fields. Interlacing doubles the apparent number of video frames per second and further reduces flicker and other defects in transmission.
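
The sketch below shows, in deliberately simplified form, which picture lines each field carries under 2:1 interlacing and how interlacing doubles the refresh rate. Real 525- and 625-line systems have an odd number of lines, so each field actually holds a whole number of lines plus a half line; that half-line split is not modeled, and the line numbering is illustrative only.

```python
from typing import List, Tuple


def interlace_fields(lines_per_frame: int) -> Tuple[List[int], List[int]]:
    """Split frame lines, numbered 1..N from the top, into two fields.

    Simplified 2:1 interlace: the first field scans the odd-numbered lines and
    the second field fills in the even-numbered lines between them.
    """
    first_field = list(range(1, lines_per_frame + 1, 2))
    second_field = list(range(2, lines_per_frame + 1, 2))
    return first_field, second_field


first, second = interlace_fields(10)
print(first)   # [1, 3, 5, 7, 9]
print(second)  # [2, 4, 6, 8, 10]

# Two fields per frame: a 25 frame/s picture is refreshed 50 times per second.
frames_per_second = 25
fields_per_second = 2 * frames_per_second
print(fields_per_second)  # 50
```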

Receiving signals

The television system for each country will specify a number of television channels within the UHF or VHF frequency ranges. A channel actually consists of two signals: the picture information is transmitted using amplitude modulation on one carrier frequency, and the sound is transmitted on a second carrier at a fixed offset (typically 4.5 to 6.5 MHz) from the picture carrier, in most systems using frequency modulation.

The channel frequencies chosen represent a compromise between allowing enough bandwidth for video (and hence satisfactory picture resolution) and allowing enough channels to be packed into the available frequency band. In practice, a technique called vestigial sideband is used to reduce the channel spacing, which would be nearly twice the video bandwidth if pure AM were used.
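
To put rough numbers on this, the sketch below lays out a 6 MHz channel using commonly cited System M figures that are assumptions here, not values given in this article: the picture carrier 1.25 MHz above the lower channel edge, about 4.2 MHz of video bandwidth, and the sound carrier 4.5 MHz above the picture carrier. It also simplifies the vestige to everything between the lower channel edge and the picture carrier, ignoring filter slopes.

```python
# Assumed System M channel layout, in MHz, measured from the channel's lower edge.
CHANNEL_WIDTH = 6.0
PICTURE_CARRIER = 1.25   # picture carrier offset from the lower edge
VIDEO_BANDWIDTH = 4.2    # highest video frequency carried
SOUND_OFFSET = 4.5       # sound carrier above the picture carrier


def double_sideband_width(video_bw: float) -> float:
    """Plain AM: a full copy of the video spectrum on each side of the carrier."""
    return 2 * video_bw


def vestigial_sideband_width(video_bw: float, vestige: float) -> float:
    """VSB: the full upper sideband plus only a vestige of the lower one."""
    return video_bw + vestige


dsb = double_sideband_width(VIDEO_BANDWIDTH)
vsb = vestigial_sideband_width(VIDEO_BANDWIDTH, PICTURE_CARRIER)
sound_carrier = PICTURE_CARRIER + SOUND_OFFSET

print(f"plain AM occupies {dsb:.2f} MHz")                # 8.40, wider than the channel
print(f"VSB video reaches {vsb:.2f} MHz above the edge")  # 5.45
print(f"sound carrier sits at {sound_carrier:.2f} MHz")   # 5.75, still inside 6 MHz
```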

Signal reception is invariably done via a superheterodyne receiver: the first stage is a tuner, which selects a television channel and frequency-shifts it to a fixed intermediate frequency (IF). The IF amplifier stages then raise the signal from the microvolt range to fractions of a volt.

Extracting the sound

At this point, the IF signal consists of a video carrier signal at one frequency and the sound carrier at a fixed offset in frequency. A demodulator recovers the video signal. Also at the output of the same demodulator is a new frequency-modulated sound carrier at the offset frequency. In some sets made before 1948, this was filtered out, and the sound IF of about 22 MHz was sent to an FM demodulator to recover the basic sound signal. In newer sets, this new carrier at the offset frequency was allowed to remain as intercarrier sound, and it was sent to an FM demodulator to recover the basic sound signal. One particular advantage of intercarrier sound is that when the front panel fine-tuning knob is adjusted, the sound carrier frequency does not change with the tuning, but stays at the above-mentioned offset frequency. Consequently, it is easier to tune the picture without losing the sound.
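
The intercarrier argument can be checked numerically for a high-side local oscillator, where each IF is the oscillator frequency minus the received carrier frequency. The 55.25 MHz picture carrier (US VHF channel 2), the 4.5 MHz sound offset, and the 45.75 MHz nominal picture IF are assumed System M values used only for illustration; the tuning error stands in for a mistuned fine-tuning knob.

```python
def intermediate_frequencies(picture_mhz: float, sound_mhz: float, lo_mhz: float):
    """High-side conversion: each IF is the local oscillator minus the carrier."""
    return lo_mhz - picture_mhz, lo_mhz - sound_mhz


PICTURE_MHZ = 55.25              # assumed channel 2 picture carrier
SOUND_MHZ = PICTURE_MHZ + 4.5    # assumed System M sound offset
PICTURE_IF_MHZ = 45.75           # assumed nominal picture IF

for tuning_error in (-0.5, 0.0, 0.3):
    lo = PICTURE_MHZ + PICTURE_IF_MHZ + tuning_error
    picture_if, sound_if = intermediate_frequencies(PICTURE_MHZ, SOUND_MHZ, lo)
    beat = picture_if - sound_if  # intercarrier frequency out of the video detector
    print(f"error {tuning_error:+.1f} MHz: picture IF {picture_if:.2f}, "
          f"sound IF {sound_if:.2f}, intercarrier {beat:.2f} MHz")
```

Both IFs move with the tuning error, but their difference stays at 4.5 MHz, which is why an intercarrier set keeps its sound while the picture is being fine-tuned.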

The recovered sound signal is then amplified and used to drive a loudspeaker. Until the advent of the NICAM and MTS systems, television sound transmissions were monophonic.

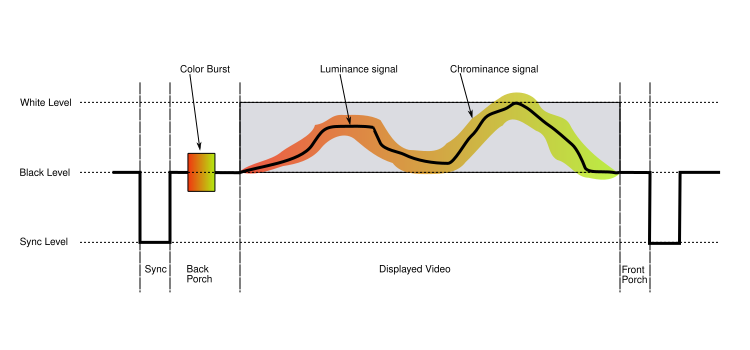

Structure of a video signal

The video carrier is demodulated to give a composite video signal[f] containing luminance, chrominance and synchronization signals.[6] The result is identical to the composite video format used by analog video devices such as VCRs or CCTV cameras. To ensure good linearity and thus fidelity, consistent with affordable manufacturing costs of transmitters and receivers, the video carrier is never modulated to the extent that it is shut off altogether. When intercarrier sound was introduced later in 1948, not completely shutting off the carrier had the side effect of allowing intercarrier sound to be economically implemented.

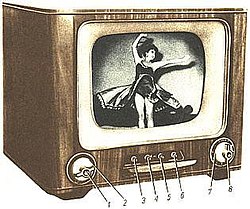

Each line of the displayed image is transmitted using a signal as shown above. The same basic format (with minor differences mainly related to timing and the encoding of color) is used for PAL, NTSC, and SECAM television systems. A monochrome signal is identical to a color one, with the exception that the elements shown in color in the diagram (the colorburst, and the chrominance signal) are not present.

The front porch is a brief (about 1.5 microsecond) period inserted between the end of each transmitted line of picture and the leading edge of the next line's sync pulse. Its purpose was to allow voltage levels to stabilize in older televisions, preventing interference between picture lines. The front porch is the first component of the horizontal blanking interval, which also contains the horizontal sync pulse and the back porch.[7][8][9]

The back porch is the portion of each scan line between the end (rising edge) of the horizontal sync pulse and the start of active video. It is used to restore the black level (300 mV) reference in analog video. In signal processing terms, it compensates for the fall time and settling time following the sync pulse.[7][8]

In color television systems such as PAL and NTSC, this period also includes the colorburst signal. In the SECAM system, it contains the reference subcarrier for each consecutive color difference signal in order to set the zero-color reference.

In some professional systems, particularly satellite links between locations, the digital audio is embedded within the line sync pulses of the video signal, to save the cost of renting a second channel. The name for this proprietary system is Sound-in-Syncs.

Monochrome video signal extraction

The luminance component of a composite video signal varies between 0 V and approximately 0.7 V above the black level. In the NTSC system, there is a blanking signal level used during the front porch and back porch, and a black signal level 75 mV above it; in PAL and SECAM these are identical.

In a monochrome receiver, the luminance signal is amplified to drive the control grid in the electron gun of the CRT. This changes the intensity of the electron beam and therefore the brightness of the spot being scanned. Brightness and contrast controls determine the DC shift and amplification, respectively.
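
A rough numeric model of those two controls, using the levels quoted elsewhere in this article (sync tip at 0 V, black at 0.3 V, peak white at 1 V): contrast scales the video above black, and brightness adds a DC offset. The normalized 0 to 1 "beam drive" and the hard clipping are hypothetical simplifications of what happens at the CRT's control grid, and the small NTSC offset between blanking and black is ignored.

```python
BLACK_LEVEL_V = 0.3  # black level, with the sync tip at 0 V
WHITE_LEVEL_V = 1.0  # peak white


def luminance(signal_v: float) -> float:
    """Map a composite-signal voltage to 0.0 (black) .. 1.0 (white).

    Sync pulses lie below black ("blacker than black"), so they clip to 0.
    """
    level = (signal_v - BLACK_LEVEL_V) / (WHITE_LEVEL_V - BLACK_LEVEL_V)
    return min(max(level, 0.0), 1.0)


def beam_drive(signal_v: float, contrast: float = 1.0, brightness: float = 0.0) -> float:
    """Hypothetical normalized beam drive: contrast is gain, brightness is a DC shift."""
    return min(max(contrast * luminance(signal_v) + brightness, 0.0), 1.0)


print(round(beam_drive(0.65), 3))                  # 0.5  (mid grey)
print(round(beam_drive(0.65, contrast=1.5), 3))    # 0.75 (more gain)
print(round(beam_drive(0.65, brightness=0.2), 3))  # 0.7  (DC shift)
print(round(beam_drive(0.0), 3))                   # 0.0  (sync tip)
```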

Color video signal extraction

U and V signals

A color signal conveys picture information for each of the red, green, and blue components of an image. However, these are not simply transmitted as three separate signals, because such a signal would not be compatible with monochrome receivers, an important consideration when color broadcasting was first introduced. It would also occupy three times the bandwidth of existing television, requiring a decrease in the number of television channels available.

Instead, the RGB signals are converted into YUV form, where the Y signal represents the luminance of the colors in the image. Because the rendering of colors in this way is the goal of both monochrome film and television systems, the Y signal is ideal for transmission as the luminance signal. This ensures a monochrome receiver will display a correct picture in black and white, where a given color is reproduced by a shade of gray that correctly reflects how light or dark the original color is.

The U and V signals are color difference signals. The U signal is a scaled version of the difference between the B signal and the Y signal, also known as B minus Y (B-Y), and the V signal is a scaled version of the difference between the R signal and the Y signal, also known as R minus Y (R-Y). The U signal then represents how purplish-blue or its complementary color, yellowish-green, the color is, and the V signal how purplish-red or its complementary, greenish-cyan, it is. The advantage of this scheme is that the U and V signals are zero when the picture has no color content. Since the human eye is more sensitive to detail in luminance than in color, the U and V signals can be transmitted with reduced bandwidth with acceptable results.
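
A minimal sketch of this encoding step. The luma weights (those of ITU-R BT.601) and the U and V scale factors (the values commonly quoted for PAL) are assumptions, not figures given in this article; the point of the example is that any gray input yields U = V = 0.

```python
from typing import Tuple

# Assumed coefficients: BT.601 luma weights and PAL-style U/V scaling.
KR, KG, KB = 0.299, 0.587, 0.114
U_SCALE, V_SCALE = 0.492, 0.877


def rgb_to_yuv(r: float, g: float, b: float) -> Tuple[float, float, float]:
    """Convert R, G, B in the range 0..1 to luminance Y and color differences U, V."""
    y = KR * r + KG * g + KB * b
    u = U_SCALE * (b - y)  # scaled B-Y
    v = V_SCALE * (r - y)  # scaled R-Y
    return y, u, v


for name, rgb in [("white", (1.0, 1.0, 1.0)), ("grey", (0.5, 0.5, 0.5)),
                  ("red", (1.0, 0.0, 0.0)), ("blue", (0.0, 0.0, 1.0))]:
    y, u, v = rgb_to_yuv(*rgb)
    print(f"{name:5s}  Y={y:.3f}  U={u:+.3f}  V={v:+.3f}")
```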

In the receiver, a single demodulator can extract an additive combination of U plus V. An example is the X demodulator used in the X/Z demodulation system. In that same system, a second demodulator, the Z demodulator, also extracts an additive combination of U plus V, but in a different ratio. The X and Z color difference signals are further matrixed into three color difference signals, (R-Y), (B-Y), and (G-Y). The combinations of usually two, but sometimes three demodulators were:

- (I) / (Q), as used in the 1954 RCA CTC-2, the 1985 RCA "Colortrak" series, the 1954 Arvin, and some professional color monitors in the 1990s,

- (R-Y) / (Q), as used in the 1955 RCA 21-inch color receiver,

- (R-Y) / (B-Y), used in the first color receiver on the market (Westinghouse, not RCA),

- (R-Y) / (G-Y), as used in the RCA Victor CTC-4 chassis,

- (R-Y) / (B-Y) / (G-Y),

- (X) / (Z), as used in many receivers of the late '50s and throughout the '60s.

In the end, further matrixing of the above color-difference signals yielded the three color-difference signals (R-Y), (B-Y), and (G-Y).

The R, G, and B signals needed by the receiver's display device (CRT, plasma display, or LCD) are electronically derived by matrixing as follows: R is the additive combination of (R-Y) with Y, G is the additive combination of (G-Y) with Y, and B is the additive combination of (B-Y) with Y. In this combining process, the low-resolution portion of the Y signal cancels out, leaving R, G, and B signals able to render a low-resolution image in full color. However, the higher-resolution portion of the Y signal does not cancel out, and so is equally present in R, G, and B, producing the higher-resolution image detail in monochrome, although it appears to the human eye as a full-color and full-resolution picture.
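
The receiver-side matrixing can be sketched as below. Deriving (G-Y) from the other two difference signals relies on the luma weights summing to one; the BT.601 weights used here are an assumption, and the inputs are taken to be unscaled (R-Y) and (B-Y).

```python
from typing import Tuple

KR, KG, KB = 0.299, 0.587, 0.114  # assumed BT.601 luma weights (they sum to 1)


def derive_g_minus_y(r_minus_y: float, b_minus_y: float) -> float:
    """Since KR*(R-Y) + KG*(G-Y) + KB*(B-Y) = 0, G-Y follows from the other two."""
    return -(KR * r_minus_y + KB * b_minus_y) / KG


def matrix_to_rgb(y: float, r_minus_y: float, b_minus_y: float) -> Tuple[float, float, float]:
    """Add Y back to each color-difference signal to recover R, G and B."""
    g_minus_y = derive_g_minus_y(r_minus_y, b_minus_y)
    return y + r_minus_y, y + g_minus_y, y + b_minus_y


# Round trip for an arbitrary test color.
r, g, b = 0.2, 0.6, 0.9
y = KR * r + KG * g + KB * b
print(tuple(round(c, 6) for c in matrix_to_rgb(y, r - y, b - y)))  # (0.2, 0.6, 0.9)
```

With zero color difference (a gray input), all three outputs equal Y, which is how a color set reproduces a black-and-white picture.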

NTSC and PAL systems

In the NTSC and PAL color systems, U and V are transmitted by using quadrature amplitude modulation of a subcarrier. This kind of modulation applies two independent signals to one subcarrier, with the idea that both signals will be recovered independently at the receiving end. For NTSC, the subcarrier is at 3.58 MHz.[g] For the PAL system it is at 4.43 MHz.[h] The subcarrier itself is not included in the modulated signal (suppressed carrier); it is the subcarrier sidebands that carry the U and V information. The usual reason for using a suppressed carrier is that it saves transmitter power. In this application, a more important advantage is that the color signal disappears entirely in black and white scenes. The subcarrier is within the bandwidth of the main luminance signal and consequently can cause undesirable artifacts on the picture, all the more noticeable in black and white receivers.

A small sample of the subcarrier, the colorburst, is included in the horizontal blanking portion, which is not visible on the screen. This is necessary to give the receiver a phase reference for the modulated signal. Under quadrature amplitude modulation the modulated chrominance signal changes phase as compared to its subcarrier and also changes amplitude. The chrominance amplitude (when considered together with the Y signal) represents the approximate saturation of a color, and the chrominance phase against the subcarrier reference approximately represents the hue of the color. For particular test colors found in the test color bar pattern, exact amplitudes and phases are sometimes defined for test and troubleshooting purposes only.
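
The amplitude and phase view of the chrominance signal can be sketched as below. Putting U on the cosine and V on the sine of the subcarrier is a convention chosen here for simplicity; the broadcast standards define the axis orientation and the burst phase precisely, and the reference phase is assumed to be already recovered.

```python
import math
from typing import Tuple


def chroma_polar(u: float, v: float) -> Tuple[float, float]:
    """Amplitude and phase of u*cos(wt) + v*sin(wt), written as A*cos(wt - phase).

    Amplitude tracks saturation; phase (degrees, relative to the reference) tracks hue.
    """
    amplitude = math.hypot(u, v)
    phase_deg = math.degrees(math.atan2(v, u))
    return amplitude, phase_deg


for u, v in [(0.3, 0.4), (0.6, 0.8), (0.0, 0.0)]:
    amp, phase = chroma_polar(u, v)
    print(f"U={u:+.1f} V={v:+.1f} -> amplitude {amp:.3f}, phase {phase:.1f} deg")
```

Doubling U and V doubles the amplitude (a more saturated version of the same color) but leaves the phase, and hence the hue, unchanged; with no color content the chroma amplitude is zero.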

Due to the nature of the quadrature amplitude modulation process that created the chrominance signal, at certain times the signal represents only the U signal, and 70 nanoseconds (NTSC) later, it represents only the V signal. About 70 nanoseconds later still, it represents -U, and another 70 nanoseconds later, -V. To extract U, a synchronous demodulator uses the subcarrier to briefly gate the chroma every 280 nanoseconds, so that the output is only a train of discrete pulses, each having an amplitude that is the same as the original U signal at the corresponding time. In effect, these pulses are discrete-time analog samples of the U signal. The pulses are then low-pass filtered so that the original analog continuous-time U signal is recovered. For V, a 90-degree shifted subcarrier briefly gates the chroma signal every 280 nanoseconds, and the rest of the process is identical to that used for the U signal.
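
The gating described above can be checked numerically. This sketch assumes, for simplicity, that U rides on the cosine and V on the sine of the subcarrier (the standards fix the exact phases), and uses the nominal 3.579545 MHz NTSC subcarrier that this article rounds to 3.58 MHz. Samples a quarter cycle (about 70 ns) apart give U, V, -U and -V in turn; sampling at the same phase once per cycle (about 280 ns) gives a steady train of U values; and sampling at an off-axis phase gives a mixture of U and V.

```python
import math

F_SC_HZ = 3.579545e6        # assumed nominal NTSC color subcarrier
PERIOD_S = 1.0 / F_SC_HZ    # about 279 ns
QUARTER_S = PERIOD_S / 4    # about 70 ns


def chroma(t: float, u: float, v: float) -> float:
    """Suppressed-carrier QAM chroma in this sketch's convention: U*cos + V*sin."""
    w = 2 * math.pi * F_SC_HZ
    return u * math.cos(w * t) + v * math.sin(w * t)


u, v = 0.25, -0.4
print(f"quarter cycle = {QUARTER_S * 1e9:.1f} ns")  # quarter cycle = 69.8 ns

# Four gates a quarter cycle apart see U, V, -U, -V in turn.
print([round(chroma(k * QUARTER_S, u, v), 6) for k in range(4)])  # [0.25, -0.4, -0.25, 0.4]

# Gating once per cycle at the U phase gives a train of U samples,
# which a low-pass filter would smooth back into a continuous U signal.
print([round(chroma(n * PERIOD_S, u, v), 6) for n in range(5)])

# Gating at an off-axis phase (45 degrees here) gives a mixture of U and V.
print(round(chroma(PERIOD_S / 8, u, v), 6), round((u + v) / math.sqrt(2), 6))
```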

Gating at any other time than those mentioned above yields an additive mixture of two of U, V, -U, and -V. Demodulating along such off-axis phases (that is, off the U and V axes) is the basis of I/Q demodulation and of the X/Z demodulation system; X/Z was much more popular and was the most common demodulator scheme throughout the 1960s. In both cases, further matrixing recovers the original U and V signals.

The process above requires the subcarrier. However, as previously mentioned, the subcarrier is suppressed before transmission and only the chroma is transmitted, so the receiver must reconstitute it. For this purpose, a short burst of the subcarrier, known as the colorburst, is transmitted during the back porch (retrace blanking period) of each scan line. A subcarrier oscillator in the receiver locks onto this signal (see phase-locked loop) to achieve a phase reference, so that the oscillator produces the reconstituted subcarrier.[i]

NTSC uses this process unmodified. Unfortunately, this often results in poor color reproduction due to phase errors in the received signal, sometimes caused by multipath but mostly by poor implementation at the studio end. With the advent of solid-state receivers, cable TV, and digital studio equipment for conversion to an over-the-air analog signal, these NTSC problems have been largely fixed, leaving operator error at the studio end as the sole color rendition weakness of the NTSC system.[citation needed] In any case, the PAL D (delay) system mostly corrects these kinds of errors by reversing the phase of the V component on each successive line and averaging the results over pairs of lines. This is done with a delay line whose duration is one line period (1H).[j] Phase shift errors between successive lines are therefore canceled out, and the wanted signal amplitude is increased when the two in-phase signals are recombined.
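
A small numerical sketch of why the phase alternation helps. It treats the chroma on each line as a complex number U + jV, models a transmission phase error as the same rotation on two consecutive lines carrying the same color (an assumption), and averages the current line with the delayed one. Without alternation the hue rotates by the full error; with alternation the two rotations run in opposite directions and cancel, leaving the hue correct and the saturation reduced by the cosine of the error.

```python
import cmath
import math


def pal_line(u: float, v: float, alternate: bool) -> complex:
    """Transmitted chroma as U + jV, with V inverted on alternate (PAL) lines."""
    return complex(u, -v if alternate else v)


def receive(chroma: complex, alternate: bool, phase_error_rad: float) -> complex:
    """Apply the path's phase error, then undo the V inversion on alternate lines."""
    rotated = chroma * cmath.exp(1j * phase_error_rad)
    return rotated.conjugate() if alternate else rotated


u, v = 0.3, 0.4
error = math.radians(20)

current = receive(pal_line(u, v, alternate=False), False, error)
delayed = receive(pal_line(u, v, alternate=True), True, error)  # previous line, via the 1H delay
pal_average = (current + delayed) / 2
# Without alternation (as in NTSC) the receiver would only see `current`.

for label, c in [("sent", complex(u, v)), ("no alternation", current), ("PAL average", pal_average)]:
    print(f"{label:15s} hue {math.degrees(cmath.phase(c)):6.2f} deg, saturation {abs(c):.3f}")
```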

NTSC is more spectrum efficient than PAL, giving more picture detail for a given bandwidth. This is because sophisticated comb filters in receivers are more effective with NTSC's 4-field color sequence than with PAL's 8-field sequence. However, the larger channel width of most PAL systems in Europe still gives PAL systems the edge in transmitting more picture detail.

SECAM system

In the SECAM television system, U and V are transmitted on alternate lines, using simple frequency modulation of two different color subcarriers.
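
The line alternation can be sketched as below. SECAM receivers are generally described as pairing each line's color-difference signal with the other one, held over from the previous line in a one-line delay; that receiver detail, the choice of which signal goes on the first line, and the list-of-tuples representation are assumptions of this sketch, and the FM subcarriers themselves are not modeled.

```python
from typing import List, Optional, Sequence, Tuple

ColorDiff = Tuple[float, float]  # (U, V) for one scan line


def secam_transmit(lines: Sequence[ColorDiff]) -> List[Tuple[str, float]]:
    """Send only one color-difference signal per line, alternating V and U."""
    return [("V", v) if i % 2 == 0 else ("U", u) for i, (u, v) in enumerate(lines)]


def secam_receive(sent: Sequence[Tuple[str, float]]) -> List[Optional[ColorDiff]]:
    """Rebuild (U, V) per line from the current line plus the one-line-delayed line."""
    rebuilt: List[Optional[ColorDiff]] = []
    previous: Optional[Tuple[str, float]] = None
    for name, value in sent:
        if previous is None:
            rebuilt.append(None)  # first line: nothing in the delay line yet
        else:
            both = {name: value, previous[0]: previous[1]}
            rebuilt.append((both["U"], both["V"]))
        previous = (name, value)
    return rebuilt


# Four lines of one color, then four lines of another.
picture = [(0.1, 0.2)] * 4 + [(-0.3, 0.05)] * 4
print(secam_receive(secam_transmit(picture)))
```

In uniform areas each rebuilt line matches the original, but at the color boundary (line 4 here) the rebuilt line combines U from the line above with V from its own line, so color detail is only resolved over pairs of lines.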

In some analog color CRT displays, starting in 1956, the brightness control signal (luminance) is fed to the cathode connections of the electron guns, and the color difference signals (chrominance signals) are fed to the control grid connections. In later solid-state designs, this simple CRT matrix mixing technique was replaced by the original matrixing method used in the 1954 and 1955 color TV receivers.

Synchronization

Synchronizing pulses added to the video signal at the end of every scan line and video frame ensure that the sweep oscillators in the receiver remain locked in step with the transmitted signal so that the image can be reconstructed on the receiver screen.[7][8][10]

A sync separator circuit detects the sync voltage levels and sorts the pulses into horizontal and vertical sync.

Horizontal synchronization

The horizontal sync pulse separates the scan lines. The horizontal sync signal is a single short pulse that indicates the start of every line. The rest of the scan line follows, with the signal ranging from 0.3 V (black) to 1 V (white), until the next horizontal or vertical synchronization pulse.

The format of the horizontal sync pulse varies. In the 525-line NTSC system it is a 4.85 μs pulse at 0 V. In the 625-line PAL system the pulse is 4.7 μs at 0 V. This is lower than the amplitude of any video signal (blacker than black) so it can be detected by the level-sensitive sync separator circuit of the receiver.
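
The sketch below mimics a level-sensitive sync separator in software: anything below a slice level halfway between the 0 V sync tip and the 0.3 V black level counts as sync, and pulse widths sort the pulses into the kinds described in this article. The slice level, the width thresholds, and the flat test signal (no porches) are illustrative assumptions; hardware separators use analog clippers and RC networks rather than counting samples.

```python
from typing import List, Sequence, Tuple

SLICE_LEVEL_V = 0.15  # assumed threshold, halfway between sync tip (0 V) and black (0.3 V)


def find_sync_pulses(samples: Sequence[float], dt_us: float) -> List[Tuple[float, float]]:
    """Return (start_us, width_us) for every run of samples below the slice level."""
    pulses: List[Tuple[float, float]] = []
    start = None
    for i, level in enumerate(samples):
        if level < SLICE_LEVEL_V and start is None:
            start = i                      # falling edge: pulse begins
        elif level >= SLICE_LEVEL_V and start is not None:
            pulses.append((start * dt_us, (i - start) * dt_us))
            start = None                   # rising edge: pulse ends
    if start is not None:                  # pulse still running at the end
        pulses.append((start * dt_us, (len(samples) - start) * dt_us))
    return pulses


def classify(width_us: float) -> str:
    """Hypothetical width thresholds for the pulse kinds described in this article."""
    if width_us < 3.0:
        return "equalizing"
    if width_us < 10.0:
        return "horizontal"
    return "vertical (long)"


def make_line(sync_us: float, line_us: float = 64.0, video_v: float = 0.65,
              dt_us: float = 0.1) -> List[float]:
    """Illustrative test line: sync tip at 0 V followed by flat grey video."""
    n_sync = round(sync_us / dt_us)
    return [0.0] * n_sync + [video_v] * (round(line_us / dt_us) - n_sync)


signal = make_line(4.7) + make_line(4.7) + make_line(30.0)
for start, width in find_sync_pulses(signal, dt_us=0.1):
    print(f"at {start:5.1f} us: {width:4.1f} us wide -> {classify(width)}")
```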

Two timing intervals are defined – the front porch between the end of the displayed video and the start of the sync pulse, and the back porch after the sync pulse and before the displayed video. These and the sync pulse itself are called the horizontal blanking (or retrace) interval and represent the time that the electron beam in the CRT is returning to the start of the next display line.

Vertical synchronization

Vertical synchronization separates the video fields. In PAL and NTSC, the vertical sync pulse occurs within the vertical blanking interval. The vertical sync pulses are made by prolonging the length of horizontal sync pulses through almost the entire length of the scan line.

The vertical sync signal is a series of much longer pulses, indicating the start of a new field. The sync pulses occupy the whole line interval of a number of lines at the beginning and end of a scan; no picture information is transmitted during vertical retrace. The pulse sequence is designed to allow horizontal sync to continue during vertical retrace; it also indicates whether each field represents even or odd lines in interlaced systems (depending on whether it begins at the start of a horizontal line, or midway through).

The format of such a signal in 525-line NTSC and 625-line PAL is:

- pre-equalizing pulses (6 to start scanning odd lines, 5 to start scanning even lines)

- long-sync pulses (5 pulses)

- post-equalizing pulses (5 to start scanning odd lines, 4 to start scanning even lines)

Each pre- or post-equalizing pulse consists of half a scan line of black signal: 2 μs at 0 V, followed by 30 μs at 0.3 V. Each long sync pulse consists of an equalizing pulse with timings inverted: 30 μs at 0 V, followed by 2 μs at 0.3 V.
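
The pulse sequence listed above can be assembled from those simplified timings as below. The sketch only checks the bookkeeping: each equalizing or long pulse spans half of a 64 µs line (an assumed line period), so the counts translate directly into a duration. It does not model the half-line offset that actually distinguishes the two fields, which depends on where the sequence starts relative to the preceding line.

```python
from typing import List, Tuple

Segment = Tuple[float, float]  # (duration in microseconds, level in volts)

HALF_LINE_US = 32.0  # assumes a 64 us line
EQUALIZING: List[Segment] = [(2.0, 0.0), (30.0, 0.3)]  # short sync, then black
LONG_SYNC: List[Segment] = [(30.0, 0.0), (2.0, 0.3)]   # the same timings inverted


def vertical_interval(odd_field: bool) -> List[Segment]:
    """Pre-equalizing, long-sync and post-equalizing pulses, using the counts above."""
    pre = 6 if odd_field else 5
    post = 5 if odd_field else 4
    return EQUALIZING * pre + LONG_SYNC * 5 + EQUALIZING * post


for odd in (True, False):
    train = vertical_interval(odd)
    total_us = sum(duration for duration, _ in train)
    pulses = len(train) // 2
    print(f"{'odd' if odd else 'even'} field: {pulses} pulses, {total_us:.0f} us, "
          f"{total_us / HALF_LINE_US:.0f} half-lines")
```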

In video production and computer graphics, changes to the image are often performed during the vertical blanking interval to avoid visible discontinuity of the image. If the image in the framebuffer is updated with a new image while the display is being refreshed, the display shows a mishmash of both frames, producing page tearing partway down the image.
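
A toy model of the tearing effect: the display reads the framebuffer one row at a time, so if the stored image is replaced while a given row is being scanned, the rows above come from the old image and the rest from the new one. Representing rows as strings and the update as a single instant are simplifications made for this sketch.

```python
from typing import List


def displayed_rows(old_image: List[str], new_image: List[str], update_row: int) -> List[str]:
    """Rows shown in one refresh when the framebuffer is replaced as `update_row` is scanned.

    update_row == 0 or len(old_image) corresponds to an update made during
    vertical blanking, before or after the visible scan.
    """
    return old_image[:update_row] + new_image[update_row:]


old = ["A"] * 6
new = ["B"] * 6
print(displayed_rows(old, new, 3))  # ['A', 'A', 'A', 'B', 'B', 'B']  torn image
print(displayed_rows(old, new, 6))  # ['A', 'A', 'A', 'A', 'A', 'A']  clean; 'B' shows next refresh
print(displayed_rows(old, new, 0))  # ['B', 'B', 'B', 'B', 'B', 'B']  clean
```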

Horizontal and vertical hold

The sweep (or deflection) oscillators were designed to run without a signal from the television station (or VCR, computer, or other composite video source). This allows the receiver to display a raster even when no signal is present, so that a picture can still appear while the antenna is being positioned. With sufficient signal strength, the receiver's sync separator circuit would split timebase pulses from the incoming video and use them to reset the horizontal and vertical oscillators at the appropriate time to synchronize with the signal from the station.

The free-running oscillation of the horizontal circuit is especially critical, as the horizontal deflection circuits typically power the flyback transformer (which provides acceleration potential for the CRT) as well as the filaments for the high voltage rectifier tube and sometimes the filament(s) of the CRT itself. Without the operation of the horizontal oscillator and output stages in these television receivers, there would be no illumination of the CRT's face.

The lack of precision timing components in early equipment meant that the timebase circuits occasionally needed manual adjustment. If their free-run frequencies were too far from the actual line and field rates, the circuits would not be able to follow the incoming sync signals. Loss of horizontal synchronization usually resulted in an unwatchable picture; loss of vertical synchronization would produce an image rolling up or down the screen.

Older analog television receivers often provide manual controls to adjust horizontal and vertical timing. The adjustment takes the form of horizontal hold and vertical hold controls, usually on the front panel along with other common controls. These adjust the free-run frequencies of the corresponding timebase oscillators.

A slowly rolling vertical picture demonstrates that the vertical oscillator is nearly synchronized with the television station but is not locking to it, often due to a weak signal or a failure in the sync separator stage not resetting the oscillator.
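
A toy model of this behavior, not a circuit simulation: an oscillator that retraces on its own after its free-run period, or earlier if a sync pulse arrives within a short capture window before that point. The capture window and the integer time units are hypothetical. With the free-run period set slightly longer than the sync period, every retrace is triggered by sync and the picture holds; set too short, the oscillator fires before each sync pulse, the retrace drifts relative to the signal, and the picture rolls. Turning a hold control corresponds to changing `free_period`.

```python
from typing import List


def retrace_times(sync_period: int, free_period: int, capture_window: int, count: int) -> List[int]:
    """Toy triggered sweep oscillator; returns the times at which it retraces.

    Left alone, the ramp retraces `free_period` after the previous retrace.
    A sync pulse arriving within `capture_window` before that point triggers
    the retrace early. Sync pulses arrive at every multiple of `sync_period`.
    Integer time units avoid floating-point noise.
    """
    if not 0 < capture_window < free_period:
        raise ValueError("need 0 < capture_window < free_period")
    t = 0
    times: List[int] = []
    for _ in range(count):
        natural = t + free_period
        earliest = natural - capture_window
        next_sync = -(-earliest // sync_period) * sync_period  # first sync at or after `earliest`
        t = next_sync if next_sync <= natural else natural
        times.append(t)
    return times


def phase_errors(times: List[int], sync_period: int) -> List[int]:
    """Offset of each retrace from the preceding sync pulse: constant 0 means locked."""
    return [t % sync_period for t in times]


# Free-run set slightly slow: every retrace is triggered by sync, so the picture holds.
print(phase_errors(retrace_times(1000, 1050, 100, 10), 1000))  # [0, 0, 0, 0, 0, 0, 0, 0, 0, 0]
# Free-run set too fast: the oscillator fires before sync arrives and the picture rolls.
print(phase_errors(retrace_times(1000, 900, 100, 10), 1000))
# [900, 800, 700, 600, 500, 400, 300, 200, 0, 900]
```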

Horizontal sync errors cause the image to be torn diagonally and repeated across the screen as if it were wrapped around a screw or a barber's pole; the greater the error, the more copies of the image will be seen at once wrapped around the barber pole.

By the early 1980s the efficacy of the synchronization circuits, plus the inherent stability of the sets' oscillators, had been improved to the point where these controls were no longer necessary. Integrated circuits that eliminated the horizontal hold control were starting to appear as early as 1969.[11]

The final generations of analog television receivers used IC-based designs where the receiver's timebases were derived from accurate crystal oscillators. With these sets, adjustment of the free-running frequency of either sweep oscillator was unnecessary and unavailable.

Horizontal and vertical hold controls were rarely used in CRT-based computer monitors, as the quality and consistency of components were quite high by the advent of the computer age, but might be found on some composite monitors used with the 1970s–80s home or personal computers.

Other technical information

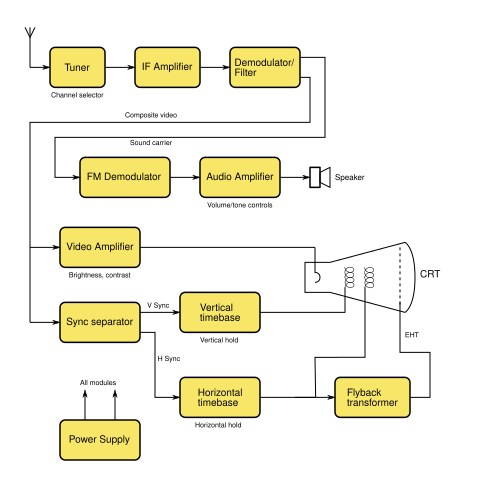

Components of a television system

The tuner is the component that, with the aid of an antenna, isolates the television signals received over the air. There are two types of tuners in analog television: VHF and UHF tuners. The VHF tuner selects a VHF television channel, which consists of a 4 MHz video bandwidth and an audio bandwidth of about 100 kHz. It then amplifies the signal and converts it to a 45.75 MHz amplitude-modulated video intermediate frequency (IF) and a 41.25 MHz frequency-modulated audio IF carrier.

The IF amplifiers are centered at 44 MHz for optimal frequency transference of the audio and video carriers.[k] Like radio, television has automatic gain control (AGC). This controls the gain of the IF amplifier stages and the tuner.

The video amplifier and output amplifier are implemented using a pentode or a power transistor. The filter and demodulator separate the 45.75 MHz video from the 41.25 MHz audio, and a simple diode then detects the video signal. After the video detector, the video is amplified and sent to the sync separator and then to the picture tube.

The audio signal goes to a 4.5 MHz amplifier, which prepares the signal for the 4.5 MHz detector; it then passes through a 4.5 MHz IF transformer to the detector. Television receivers used two common ways of detecting FM signals. One is the ratio detector, which is simple but very hard to align. The other is the quadrature detector, which is also relatively simple and was invented in 1954; the first tube designed for this purpose was the 6BN6 type. It is easy to align and simple in circuitry, and it was such a good design that it is still used today in integrated-circuit form. After the detector, the signal goes to the audio amplifier.

Image synchronization is achieved by transmitting negative-going pulses.[l] The horizontal sync signal is a single short pulse that indicates the start of every line. Two timing intervals are defined: the front porch between the end of the displayed video and the start of the sync pulse, and the back porch after the sync pulse and before the displayed video. These and the sync pulse itself are called the horizontal blanking (or retrace) interval and represent the time that the electron beam in the CRT is returning to the start of the next display line.

The vertical sync signal is a series of much longer pulses, indicating the start of a new field. The vertical sync pulses occupy the whole line interval of a number of lines at the beginning and end of a scan; no picture information is transmitted during vertical retrace. The pulse sequence is designed to allow horizontal sync to continue during vertical retrace.[m]

A sync separator circuit detects the sync voltage levels and extracts and conditions signals that the horizontal and vertical oscillators can use to keep in sync with the video. It also forms the AGC voltage.
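One common way for a sync separator to tell the long vertical pulses from the short horizontal ones is to pass the separated sync through an RC integrator: short pulses barely charge it, while the broad vertical pulses drive it past a threshold. The sketch below assumes a 625-line-style sequence (five equalizing pulses, five broad pulses, and five more equalizing pulses at half-line spacing) with nominal widths of 2.35 µs and 27.3 µs; the 50 µs time constant and the 0.5 threshold are hypothetical values chosen for illustration.

```python
import math

DT_US = 0.05  # simulation time step


def sync_train() -> list[float]:
    """Separated sync (1.0 = pulse present) around one field-sync period."""
    pulses = []  # (start_us, width_us)
    t = 0.0
    for _ in range(10):                       # ordinary lines: 4.7 us every 64 us
        pulses.append((t, 4.7))
        t += 64.0
    for width in [2.35] * 5 + [27.3] * 5 + [2.35] * 5:
        pulses.append((t, width))             # equalizing/broad pulses, half a line apart
        t += 32.0
    for _ in range(10):
        pulses.append((t, 4.7))
        t += 64.0
    samples = [0.0] * round(t / DT_US)
    for start, width in pulses:
        for i in range(round(start / DT_US), round((start + width) / DT_US)):
            samples[i] = 1.0
    return samples


def vertical_triggers(samples: list[float], tau_us: float = 50.0,
                      threshold: float = 0.5) -> list[float]:
    """Times (us) at which the integrator output rises through the threshold."""
    decay = math.exp(-DT_US / tau_us)
    v, above, triggers = 0.0, False, []
    for i, x in enumerate(samples):
        v = x + (v - x) * decay               # exact first-order RC step
        if v >= threshold and not above:
            triggers.append(i * DT_US)
        above = v >= threshold
    return triggers


print(vertical_triggers(sync_train()))
```

With these values the only trigger lands early in the second broad pulse, about 840 µs into the sequence; the horizontal and equalizing pulses never lift the integrator past the threshold.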

The horizontal and vertical oscillators form the raster on the CRT and are driven by the sync separator. There are many ways to build these oscillators. The earliest is the thyratron oscillator; although it is known to drift, it produces such a good sawtooth wave that no linearity control is needed. This oscillator was designed for electrostatically deflected CRTs but also found some use with electromagnetically deflected CRTs. The next oscillator developed was the blocking oscillator, which uses a transformer to create a sawtooth wave; it was used only briefly and never became very popular. Finally, the multivibrator was probably the most successful design. It needed more adjustment than the other oscillators, but it is very simple and effective, and it was so popular that it has been used from the early 1950s to the present day.

Two deflection amplifiers are needed, one driven by each oscillator. The vertical amplifier directly drives the yoke; since it operates at 50 or 60 Hz and drives an electromagnet, it is similar to an audio amplifier. Because of the rapid deflection required, the horizontal output stage requires a high-power flyback transformer driven by a high-powered tube or transistor. Additional windings on this flyback transformer typically power other parts of the system.

Loss of horizontal synchronization usually results in a scrambled and unwatchable picture; loss of vertical synchronization produces an image rolling up or down the screen.

Timebase circuits

In an analog receiver with a CRT display, sync pulses are fed to horizontal and vertical timebase circuits (commonly called sweep circuits in the United States), each consisting of an oscillator and an amplifier. These generate modified sawtooth and parabolic current waveforms to scan the electron beam. Engineered waveform shapes are necessary to compensate for the varying distance between the electron beam source and the screen surface. The oscillators are designed to free-run at frequencies very close to the field and line rates, but the sync pulses cause them to reset at the beginning of each scan line or field, synchronizing the beam sweep with the originating signal. The output waveforms from the timebase amplifiers are fed to the horizontal and vertical deflection coils wrapped around the CRT. These coils produce magnetic fields proportional to the changing current, and these fields deflect the electron beam across the screen.
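The way sync pulses lock a free-running oscillator can be illustrated with a simple triggered-ramp model. In this idealized sketch (not a circuit simulation), the ramp resets on its own after `free_period`, but a sync pulse can reset it early if the ramp is already within a fraction `boost` of its natural firing point. The 64 µs sync period matches a 625-line system; the free-running periods and the `boost` value are hypothetical.

```python
import math


def reset_times(free_period: float, sync_period: float, boost: float,
                cycles: int = 50) -> list[float]:
    """Return the times at which a sync-triggered sawtooth oscillator resets.

    Sync pulses occur at every multiple of sync_period. A pulse can trigger
    the reset only once the ramp has covered (1 - boost) of its free-running
    period; otherwise the oscillator resets on its own at free_period.
    """
    resets = [0.0]
    for _ in range(cycles):
        start = resets[-1]
        earliest = start + (1.0 - boost) * free_period  # sync can fire from here on
        natural = start + free_period                   # free-running reset
        k = math.ceil(earliest / sync_period - 1e-9)    # index of next usable sync pulse
        sync_time = k * sync_period
        resets.append(sync_time if sync_time < natural else natural)
    return resets


def periods(resets: list[float]) -> list[float]:
    return [b - a for a, b in zip(resets, resets[1:])]


# Oscillator set slightly slow (66 us) versus set slightly fast (60 us).
print(sorted(set(round(p, 6) for p in periods(reset_times(66.0, 64.0, 0.1)))))
print(sorted(set(round(p, 6) for p in periods(reset_times(60.0, 64.0, 0.1)))))
```

In this model a sync pulse can only shorten a cycle, never lengthen it. An oscillator that free-runs slightly slow therefore locks, with every period equal to 64 µs, while one that runs slightly fast keeps resetting itself before the sync pulse arrives and is only occasionally caught by one.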

In the 1950s, the power for these circuits was derived directly from the mains supply. A simple circuit consisted of a series voltage-dropper resistance and a rectifier. This avoided the cost of a large high-voltage mains (50 or 60 Hz) supply transformer, but it was inefficient and produced a lot of heat.
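The heat problem is easy to quantify with a simplified estimate. The sketch below ignores the rectifier and assumes a purely resistive load, so that the RMS voltages across the dropper and the load simply add; the figures (240 V mains, a load needing 180 V at 0.3 A) and the function name are hypothetical.

```python
def dropper(mains_v: float, load_v: float, load_a: float) -> tuple[float, float, float]:
    """Size a series dropper resistor for a resistive load on the mains.

    Returns (resistance in ohms, power dissipated in the dropper in watts,
    fraction of the input power that reaches the load).
    """
    drop_v = mains_v - load_v          # voltage the resistor must absorb
    resistance = drop_v / load_a       # Ohm's law
    wasted_w = drop_v * load_a         # dissipated as heat inside the set
    efficiency = load_v / mains_v      # the same current flows through both
    return resistance, wasted_w, efficiency


r, heat, eff = dropper(mains_v=240.0, load_v=180.0, load_a=0.3)
print(f"{r:.0f} ohm dropper, {heat:.0f} W of heat, {eff:.0%} efficient")
```

Even this modest example needs a 200 Ω resistor that turns about 18 W into heat inside the cabinet, a quarter of the power drawn.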

In the 1960s, semiconductor technology was introduced into timebase circuits. During the late 1960s in the UK, synchronous (with the scan line rate) power generation was introduced into solid-state receiver designs.[12]

In the UK, the simple (50 Hz) types of power circuit were discontinued as thyristor-based switching circuits were introduced. The design changes arose from electricity supply contamination caused by EMI, and from supply loading problems caused by drawing energy from only the positive half-cycle of the mains supply waveform.[13]

CRT flyback power supply

Most of the receiver's circuitry (at least in transistor- or IC-based designs) operates from a comparatively low-voltage DC power supply. However, the anode connection for a CRT requires a very high voltage (typically 10–30 kV) for correct operation.

This voltage is not produced directly by the main power supply circuitry; instead, the receiver makes use of the circuitry used for horizontal scanning. Direct current (DC) is switched through the line output transformer, and alternating current (AC) is induced into the scan coils. At the end of each horizontal scan line, the magnetic field that the current has built up in both the transformer and the scan coils is a source of latent electromagnetic energy, and this stored energy can be captured as the field collapses. The short-duration reverse current (lasting about 10% of the line scan time) from both the line output transformer and the horizontal scan coil is discharged back into the primary winding of the flyback transformer through a rectifier that blocks this counter-electromotive force. A small-value capacitor connected across the scan-switching device tunes the circuit inductances to resonate at a frequency much higher than the line rate. This lengthens the flyback time compared with the extremely rapid decay that would occur if the inductances were electrically isolated during this short period. One of the secondary windings on the flyback transformer then feeds this brief high-voltage pulse to a voltage multiplier of Cockcroft–Walton design, which produces the required high-voltage supply. A flyback converter is a power supply circuit operating on similar principles.
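The resonant flyback can be estimated with an idealized, lossless model. It assumes that during the trace the scan-switching device holds a constant supply voltage across the combined inductance (so the current ramps linearly), and that the flyback is half a cycle of that inductance resonating with the tuning capacitor, so the flyback time is π√(LC). The 1 mH inductance and 100 V supply are hypothetical; the 64 µs line period is that of a 625-line system, and the flyback fraction is the roughly 10% mentioned above.

```python
import math


def flyback(inductance_h: float, supply_v: float,
            line_period_s: float, flyback_fraction: float) -> dict[str, float]:
    """Idealized (lossless) horizontal output stage estimates."""
    flyback_s = flyback_fraction * line_period_s
    trace_s = line_period_s - flyback_s
    # Flyback is half a resonant cycle: flyback_s = pi * sqrt(L * C).
    capacitance_f = (flyback_s / math.pi) ** 2 / inductance_h
    # During the trace the supply ramps the current linearly: di/dt = V / L.
    peak_current_a = supply_v * trace_s / (2 * inductance_h)
    # The stored energy swings into the capacitor: V_pk = I_pk * sqrt(L / C).
    pulse_v = peak_current_a * math.sqrt(inductance_h / capacitance_f)
    return {
        "capacitance_nF": capacitance_f * 1e9,
        "peak_current_A": peak_current_a,
        "pulse_V": pulse_v,
        "switch_peak_V": supply_v + pulse_v,   # supply plus the resonant pulse
    }


print(flyback(inductance_h=1e-3, supply_v=100.0,
              line_period_s=64e-6, flyback_fraction=0.1))
```

Working through the algebra, the pulse equals V·(π/2)·(trace time / flyback time), so a 9:1 ratio of trace to flyback gives a pulse of roughly 14 times the supply voltage, about 1.4 kV here. In this model that ratio, not the absolute inductance, sets the pulse amplitude, and it is this pulse that the secondary windings step up further.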

A typical modern design incorporates the flyback transformer and rectifier circuitry into a single unit with a captive output lead, known as a diode split line output transformer or an Integrated High Voltage Transformer (IHVT),[14] so that all high-voltage parts are enclosed. Earlier designs used a separate line output transformer and a well-insulated high-voltage multiplier unit. The high frequency (15 kHz or so) of the horizontal scanning allows reasonably small components to be used.
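The "15 kHz or so" figure follows from the number of lines per frame and the frame rate. This sketch uses exact fractions and relies on two well-known System M relationships (the color line rate equals 4.5 MHz divided by 286, and the NTSC color subcarrier is 455/2 times the line rate); the helper name is hypothetical.

```python
from fractions import Fraction


def line_rate_hz(lines_per_frame: int, frames_per_second: Fraction) -> Fraction:
    """Horizontal scanning frequency: each frame is made of this many lines."""
    return lines_per_frame * frames_per_second


pal_625 = line_rate_hz(625, Fraction(25))               # 625-line, 25 frames/s
ntsc_mono = line_rate_hz(525, Fraction(30))             # original 525-line, 30 frames/s
ntsc_color = line_rate_hz(525, Fraction(30000, 1001))   # 525-line color, ~29.97 frames/s

assert ntsc_color == Fraction(4_500_000, 286)   # tied to the 4.5 MHz sound offset
subcarrier = Fraction(455, 2) * ntsc_color      # NTSC color subcarrier

for name, f in [("625/25", pal_625), ("525/30", ntsc_mono), ("525/29.97", ntsc_color)]:
    print(f"{name}: {float(f):,.2f} Hz, line period {float(1_000_000 / f):.3f} us")
print(f"NTSC color subcarrier: {float(subcarrier) / 1e6:.6f} MHz")
```

All three line rates fall between about 15.6 and 15.75 kHz, which is why a single "15 kHz" figure describes horizontal scanning in both the 525-line and 625-line families.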

Transition to digital

In many countries, over-the-air broadcast television of analog audio and analog video signals has been discontinued to allow the re-use of the television broadcast radio spectrum for other services.

The first country to make a wholesale switch to digital over-the-air (terrestrial television) broadcasting was Luxembourg in 2006, followed later in 2006 by the Netherlands.[15] The digital television transition in the United States for high-powered transmission was completed on 12 June 2009, the date set by the Federal Communications Commission (FCC). Almost two million households could no longer watch television because they had not prepared for the transition. The switchover had been delayed by the DTV Delay Act.[16] While the majority of viewers of over-the-air broadcast television in the U.S. watch full-power stations (which number about 1,800), there are three other categories of television stations in the U.S.: low-power broadcasting stations, class A stations, and television translator stations. These were given later deadlines.

In Japan, the switch to digital began in northeastern Ishikawa Prefecture on 24 July 2010 and ended in 43 of the country's 47 prefectures (including the rest of Ishikawa) on 24 July 2011, but in Fukushima, Iwate, and Miyagi prefectures, the conversion was delayed to 31 March 2012, due to complications from the 2011 Tōhoku earthquake and tsunami and its related nuclear accidents.[17]

In Canada, most of the larger cities turned off analog broadcasts on 31 August 2011.[18]

China turned off analog broadcasting between 2015 and 2021.[19][needs update]

Brazil switched to digital television on 2 December 2007 in São Paulo and planned to end analog broadcasting nationwide by 30 June 2016; however, the Ministry of Communications announced in 2012 that the deadline would be delayed.[20] As of 2024, Brazil is in the process of implementing its next-generation digital television system, known as TV 3.0.[21][22] In July 2024, the ATSC 3.0 standard was officially selected for the country's next-generation digital television system.[21] The transition to TV 3.0 began in 2025, with initial deployments planned for key cities such as São Paulo, Rio de Janeiro, and Brasília.[23][needs update]

In Malaysia, the Malaysian Communications and Multimedia Commission advertised for tender bids, to be submitted in the third quarter of 2009, for the 470 through 742 MHz UHF allocation, to enable Malaysia's broadcast system to move to DTV. The new broadcast band allocation would require Malaysia to build an infrastructure for all broadcasters, using a single digital terrestrial television broadcast channel.[citation needed] Large portions of Malaysia are covered by television broadcasts from Singapore, Thailand, Brunei, and Indonesia (from Borneo and Batam). Since 1 November 2019, no region of Malaysia has used the analog system; the states of Sabah and Sarawak were the last to switch it off, on 31 October 2019.[24]

In Singapore, digital television under DVB-T2 began on 16 December 2013. The switchover was delayed many times until analog TV was switched off at midnight on 2 January 2019.[25]

In the Philippines, the National Telecommunications Commission (NTC) required all broadcasting companies to end analog broadcasting on 31 December 2015 at 11:59 p.m. Because the release of the implementing rules and regulations for digital television broadcasting was delayed, the target date was moved to 2020. Full digital broadcasting was expected in 2021, and all analog TV services were to be shut down by the end of 2023.[26] However, in February 2023, the NTC postponed the analog switch-off (ASO) and DTV transition to 2025 because many provincial television stations were not ready to start their digital TV transmissions.[27]

In the Russian Federation, the Russian Television and Radio Broadcasting Network (RTRS) disabled analog broadcasting of federal channels in five stages, shutting down broadcasting in multiple federal subjects at each stage. The first region to have analog broadcasting disabled was Tver Oblast, on 3 December 2018, and the switchover was completed on 14 October 2019.[28] During the transition, DVB-T2 receivers, and monetary compensation for the purchase of terrestrial or satellite digital TV reception equipment, were provided to disabled people, World War II veterans, certain categories of retirees, and households with income per member below the living wage.[29]

Notes

- ^ Many of these countries have transitioned or are transitioning to digital

- ^ Discontinued in 2012, when Japan transitioned to digital (ISDB)

- ^ Discontinued in 2012, when the UK transitioned to digital (DVB-T)

- ^ Discontinued in 2011, when France transitioned to digital (DVB-T)

- ^ Many of these have transitioned or are transitioning to DVB-T as their digital television standard.

- ^ The RF signal modulation is inverted compared to the conventional AM – the minimum video signal level corresponds to maximum carrier amplitude, and vice versa.

- ^ Their exact frequencies were chosen such that (for NTSC), they are midway between two harmonics of the frame repetition rate, thus ensuring that the majority of the power of the luminance signal does not overlap with the power of the chrominance signal.

- ^ In the British PAL (D) system, the actual chrominance center frequency, with equal lower and upper sidebands, is 4.43361875 MHz, equal to (1135/4 + 1/625) × 15,625 Hz: a quarter-line offset from a multiple of the line scan frequency, plus 25 Hz. This frequency was chosen to minimize the chrominance beat interference pattern that would be visible in areas of high color saturation in the transmitted picture.

- ^ In more expensive or newer receiver models, the colorburst has a second use as the reference for an AGC system that compensates for chroma gain imperfections in reception.

- ^ A typical circuit used with this device converts the low-frequency color signal to ultrasound and back again.

- ^ Most early television sets (1939–45) used four-stage IF amplifiers with specially designed video amplifier tubes (type 1852/6AC7). In 1946 RCA introduced an innovation in television, the RCA 630TS. Instead of the 1852 octal tube, it used the 6AG5 7-pin miniature tube. It still had four stages, but it was half the size. Soon all manufacturers followed RCA and designed better IF stages, developing higher-amplification tubes and using fewer stages with more amplification each. By the time the tube era ended in the mid-1970s, IF stages had shrunk to one or two (depending on the set) with the same amplification as the four-stage 1852 tube sets.

- ^ In a composite video signal of 1-volt amplitude, these are approximately 0.3 V below the black level.

- ^ The pattern of vertical sync pulses also indicates whether each field represents even or odd lines in interlaced systems depending on whether a pulse begins at the start of a horizontal line, or midway through.

References

- ^ "Television Technical Performance Code" (PDF). Ofcom – office of Communications. December 2006. Archived (PDF) from the original on 4 July 2011. Retrieved 24 November 2010.

- ^ Final acts of the European Broadcasting Conference in the VHF and UHF bands. Stockholm, 1961. Archived 29 July 2013 at the Wayback Machine

- ^ "TV Technology PAL". Publication date unknown. Thinkbox. Archived from the original on 5 December 2010. Retrieved 24 November 2010.

- ^ "Color Television History". Publication date unknown. About.com. Archived from the original on 20 July 2012. Retrieved 24 November 2010.

- ^ "Color subcarrier frequency and TV Standards/TV Systems". Published 2002–2005; last updated 15 December 2005. Paradiso Design. Retrieved 24 November 2010.

- ^ "Pal systems – Television measurements" (PDF). Tektronix Incorporated. September 1999. Archived from the original (PDF) on 18 July 2011. Retrieved 25 November 2010.

- ^ a b c Gupta, R. G. (2006). Television Engineering and Video Systems. Tata McGraw-Hill. p. 62. ISBN 0-07-058596-2.

- ^ a b c Pemberton, Alan (30 November 2008). "World Analogue Television Standards and Waveforms". Pembers' Ponderings. Sheffield, England. Archived from the original on 20 February 2008. Retrieved 25 September 2010.

- ^ "Basics of Analog Video". maximintegrated.com. Maxim. Retrieved 21 May 2021.

- ^ Wharton, W.; Douglas Howorth (1971). Principles of Television Reception (illustrated ed.). Pitman Publishing. ISBN 0-273-36103-1. OCLC 16244216.

- ^ Mills, Thomas. "A five function IC for television receivers". ResearchGate. IEEE. Retrieved 11 May 2019.

- ^ "TACKLING THE POWER SUPPLY". Publication date unknown. Old Tellys.co.uk. Archived from the original on 3 March 2012. Retrieved 24 November 2010.

- ^ "An Investigation into the EMC Emissions From Switched Mode Power Supplies and Similar Switched Electronic Load Controllers Operating at Various Loading Conditions" (PDF). York EMC.co.uk. Archived from the original (PDF) on 15 March 2012. Retrieved 24 November 2010.

- ^ "Technical note 77 – Diode Split for E.H.T. generation" (PDF). Mullard. 1976. Archived from the original (PDF) on 21 July 2011. Retrieved 24 November 2010.

- ^ "How Television went Digital in The Netherlands" (PDF). Open Society Foundations. September 2011. Archived from the original (PDF) on 20 April 2013. Retrieved 4 February 2013.

- ^ Stephanie Condon (26 January 2009). "Senate OKs delay of digital television transition". CNET News. Archived from the original on 25 October 2012. Retrieved 14 June 2009.

- ^ 岩手、宮城、福島の3県のアナログ放送は平成24年3月31日に終了しました [Analog broadcast ended in Iwate, Miyagi and Fukushima on 31 March 2012 (year Heisei 24)] (in Japanese). Tokyo: Ministry of Internal Affairs and Communications. 31 March 2012. Archived from the original on 20 March 2012. Retrieved 1 April 2012.

- ^ "The digital television (DTV) change in Canada happens August 31, 2011 | CRTC". Archived from the original on 11 April 2009. Retrieved 5 May 2009.

- ^ cnBeta (15 July 2020). "广电总局发文关停地面模拟信号 "模拟电视"时代即将结束" (in Chinese (China)). Sina Corp. Retrieved 30 August 2020.

- ^ "BNamericas - Government's 2016 analog TV switch-off suffe..." BNamericas.com. Retrieved 1 January 2025.

- ^ a b Miller, Lee (23 July 2024). "Brazil Adopts ATSC 3.0 for Next-Generation Digital TV". Broadcasting Alliance. Retrieved 1 January 2025.

- ^ "MainConcept Partners with Videodata to Support Brazil's Transition to SBTVD TV 3.0 Standard". www.mainconcept.com. Retrieved 1 January 2025.

- ^ Deborah D. McAdams (24 December 2024). "Brazil Set to Redefine Broadcasting with TV 3.0". TVTechnology. Retrieved 1 January 2025.

- ^ "Malaysia to turn off analogue TV completely on 31 Oct". 25 September 2019.

- ^ "Singapore pulls the plug on analogue TV transmission | Broadcast | News | Rapid TV News".

- ^ Cabuenas, Jon Viktor D. (14 February 2017). "Gov't wants analog TV switched off by 2023". Retrieved 18 March 2023.

- ^ "Philippines to begin Analog TV Shutdown in Metro Manila by late 2024 - LionhearTV". 6 November 2024. Retrieved 23 September 2025.

- ^ "When analog TV channels will be turned off". Russian Television and Radio Broadcasting Network. Archived from the original on 7 March 2021. Retrieved 14 October 2019.

- ^ Plotnikova, Elena (17 February 2019). "Compensation for digital TV. How to get 2000 rubles for buying a digital TV receiver". Argumenty i Fakty. Retrieved 14 October 2019.

External links

- Video signal measurement and generation

- Television synchronisation at the Wayback Machine (archived 2010-03-26)

- Video broadcast standard frequencies and country listings at the Wayback Machine (archived 2010-12-27)

- EDN magazine describing design of a 1958 transistorized television receiver at the Wayback Machine (archived 2006-07-16)

- Designing the color television signal in the early 1950s as described by two engineers working directly with the NTSC at archive.today (archived 2014-09-23)