Recent from talks

Nothing was collected or created yet.

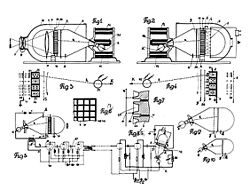

Video camera tube


Video camera tubes are devices based on the cathode-ray tube that were used in television cameras to capture television images, prior to the introduction of charge-coupled device (CCD) image sensors in the 1980s. Several different types of tubes were in use from the early 1930s, and as late as the 1990s.

In these tubes, an electron beam is scanned across an image of the scene to be broadcast, which is focused onto a target. This generates a current that depends on the brightness of the image on the target at the scan point. The beam's spot is tiny compared with the size of the target, allowing 480–486 horizontal scan lines per image in the NTSC format, 576 lines in PAL,[1] and as many as 1035 lines in Hi-Vision.
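The scanning principle can be illustrated with a toy model. This is a minimal sketch assuming an ideal beam that reads one discrete picture element at a time, with output directly proportional to brightness; it ignores interlacing, blanking intervals, and sync pulses, and the function names are hypothetical.

```python
def raster_scan(image):
    """Turn a 2D brightness map into a 1D signal, line by line.

    image: list of scan lines, each a list of brightness values.
    The beam visits each line left to right and the lines top to bottom;
    the output at each instant is proportional to the brightness under the
    beam (the proportionality constant is taken as 1 here).
    """
    signal = []
    for line in image:            # one horizontal scan line
        for brightness in line:   # successive beam positions along the line
            signal.append(brightness)
    return signal


def reconstruct(signal, samples_per_line):
    """What a display does: cut the 1D signal back into scan lines."""
    return [signal[i:i + samples_per_line]
            for i in range(0, len(signal), samples_per_line)]


frame = [[0, 5, 9],
         [1, 7, 3]]
video = raster_scan(frame)          # [0, 5, 9, 1, 7, 3]
assert reconstruct(video, 3) == frame
```

Because the picture travels as a single time-varying signal, the receiver can only rebuild it if it scans in the same order and at the same rate as the camera.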

Cathode-ray tube

Any vacuum tube that operates using a focused beam of electrons, originally called cathode rays, is known as a cathode-ray tube (CRT). CRTs are best known as the display devices used in older (i.e., non-flat-panel) television receivers and computer displays. The camera pickup tubes described in this article are also CRTs, but they display no image.[2]

Early research

In June 1908, the scientific journal Nature published a letter in which Alan Archibald Campbell-Swinton, fellow of the Royal Society (UK), discussed how a fully electronic television system could be realized by using cathode-ray tubes (or "Braun" tubes, after their inventor, Karl Braun) as both imaging and display devices.[3] He noted that the "real difficulties lie in devising an efficient transmitter", and that it was possible that "no photoelectric phenomenon at present known will provide what is required".[3] A cathode-ray tube was successfully demonstrated as a display device by the German Professor Max Dieckmann in 1906; his experimental results were published by the journal Scientific American in 1909.[4] Campbell-Swinton later expanded on his vision in a presidential address given to the Röntgen Society in November 1911. The photoelectric screen in the proposed transmitting device was a mosaic of isolated rubidium cubes.[5][6] His concept for a fully electronic television system was later popularized as the "Campbell-Swinton Electronic Scanning System" by Hugo Gernsback and H. Winfield Secor in the August 1915 issue of the popular magazine Electrical Experimenter[7] and by Marcus J. Martin in the 1921 book The Electrical Transmission of Photographs.[8][9][10]

In a letter to Nature published in October 1926, Campbell-Swinton also announced the results of some "not very successful experiments" he had conducted with G. M. Minchin and J. C. M. Stanton. They had attempted to generate an electrical signal by projecting an image onto a selenium-coated metal plate that was simultaneously scanned by a cathode ray beam.[11][12] These experiments were conducted before March 1914, when Minchin died,[13] but they were later repeated by two different teams in 1937, by H. Miller and J. W. Strange from EMI,[14] and by H. Iams and A. Rose from RCA.[15] Both teams succeeded in transmitting "very faint" images with Campbell-Swinton's original selenium-coated plate, but much better images were obtained when the metal plate was covered with zinc sulphide or selenide,[14] or with aluminum or zirconium oxide treated with caesium.[15] These experiments would form the basis of the future vidicon. A description of a CRT imaging device also appeared in a patent application filed by Edvard-Gustav Schoultz in France in August 1921, and published in 1922,[16] although a working device was not demonstrated until some years later.[15]

Experiments with image dissectors


An image dissector is a camera tube that creates an "electron image" of a scene from photocathode emissions (electrons) which pass through a scanning aperture to an anode, which serves as an electron detector.[17][1] Among the first to design such a device were German inventors Max Dieckmann and Rudolf Hell,[12][18] who had titled their 1925 patent application Lichtelektrische Bildzerlegerröhre für Fernseher (Photoelectric Image Dissector Tube for Television).[19] The term may apply specifically to a dissector tube employing magnetic fields to keep the electron image in focus,[1] an element lacking in Dieckmann and Hell's design, and in the early dissector tubes built by American inventor Philo Farnsworth.[12][20]

Dieckmann and Hell submitted their application to the German patent office in April 1925, and a patent was issued in October 1927.[19] Their experiments on the image dissector were announced in the September 1927 issue of the popular magazine Discovery[21][22] and in the May 1928 issue of the magazine Popular Radio.[23] However, they never transmitted a clear and well focused image with such a tube.[citation needed]

In January 1927, American inventor and television pioneer Philo T. Farnsworth applied for a patent for his Television System that included a device for "the conversion and dissecting of light".[20] Its first moving image was successfully transmitted on September 7, 1927,[24] and a patent was issued in 1930.[20] Farnsworth quickly made improvements to the device, among them introducing an electron multiplier made of nickel[25][26] and using a "longitudinal magnetic field" in order to sharply focus the electron image.[27] The improved device was demonstrated to the press in early September 1928.[12][28][29] The introduction of a multipactor in October 1933[30][31] and a multi-dynode "electron multiplier" in 1937[32][33] made Farnsworth's image dissector the first practical version of a fully electronic imaging device for television.[34] It had very poor light sensitivity and was therefore useful primarily where illumination was exceptionally high (typically over 685 cd/m²).[35][36][37] However, it was ideal for industrial applications, such as monitoring the bright interior of an industrial furnace. Because of their poor light sensitivity, image dissectors were rarely used in television broadcasting, except to scan film and other transparencies.[citation needed]

In April 1933, Farnsworth submitted a patent application also entitled Image Dissector, but which actually detailed a CRT-type camera tube.[38] This is among the first patents to propose the use of a "low-velocity" scanning beam and RCA had to buy it in order to sell image orthicon tubes to the general public.[39] However, Farnsworth never transmitted a clear and well focused image with such a tube.[40][41]

Dissectors were used only briefly for research in television systems before being replaced during the 1930s by much more sensitive tubes based on the charge-storage phenomenon, such as the iconoscope. Although camera tubes based on image dissector technology quickly and completely fell out of use in television broadcasting, they continued to be used for imaging in early weather satellites and the lunar lander, and for star attitude tracking in the Space Shuttle and the International Space Station.

Operation

The optical system of the image dissector focuses an image onto a photocathode mounted inside a high vacuum. As light strikes the photocathode, electrons are emitted in proportion to the intensity of the light (see photoelectric effect). The entire electron image is deflected and a scanning aperture permits only those electrons emanating from a very small area of the photocathode to be captured by the detector at any given time. The output from the detector is an electric current whose magnitude is a measure of the brightness of the corresponding area of the image. The electron image is periodically deflected horizontally and vertically ("raster scanning") such that the entire image is read by the detector many times per second, producing an electrical signal that can be conveyed to a display device, such as a CRT monitor, to reproduce the image.[17][1]
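The dissector's readout can be sketched as a toy model in which the aperture sits over each photocathode area for an equal share of the frame time and collects only the electrons emitted during that dwell. The function name, the photon rates, and the quantum efficiency below are hypothetical illustration values, not figures from any real tube.

```python
def dissect(photon_rates, frame_time, quantum_efficiency):
    """Toy image dissector with no charge storage.

    photon_rates: 2D list, photons per second striking each photocathode area.
    The aperture sits over each area for only 1/N of the frame (N = number of
    areas), so only the electrons emitted during that dwell time are counted.
    Returns (signal, wasted): collected electrons per area, and the total
    emitted electrons that the aperture excluded during the frame.
    """
    n_areas = sum(len(row) for row in photon_rates)
    dwell = frame_time / n_areas
    signal, wasted = [], 0.0
    for row in photon_rates:
        for rate in row:
            emitted_per_s = quantum_efficiency * rate       # photoelectric emission
            signal.append(emitted_per_s * dwell)            # reaches the detector
            wasted += emitted_per_s * (frame_time - dwell)  # blocked by the aperture
    return signal, wasted


signal, wasted = dissect([[100, 200], [300, 400]], frame_time=1.0,
                         quantum_efficiency=0.5)
# signal == [12.5, 25.0, 37.5, 50.0]; wasted == 375.0
```

Even in this four-element example, three quarters of the emitted electrons never reach the detector; with hundreds of thousands of picture elements, almost all of them are lost, which is the inefficiency the following paragraph describes.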

The image dissector has no "charge storage" characteristic; the vast majority of electrons emitted by the photocathode are excluded by the scanning aperture,[18] and thus wasted rather than being stored on a photo-sensitive target.

Charge-storage tubes

Iconoscope


The early electronic camera tubes (like the image dissector) suffered from a fatal flaw: they scanned the subject, and the signal at each point came only from the tiny amount of light arriving at the instant the scanning system passed over it. A practical camera tube needed a different technological approach, which later became known as the charge-storage camera tube. It was based on a new physical phenomenon that was discovered and patented in Hungary in 1926, but became widely understood and recognised only from around 1930.[43]

An iconoscope is a camera tube that projects an image on a special charge storage plate containing a mosaic of electrically isolated photosensitive granules separated from a common plate by a thin layer of isolating material, somewhat analogous to the human eye's retina and its arrangement of photoreceptors. Each photosensitive granule constitutes a tiny capacitor that accumulates and stores electrical charge in response to the light striking it. An electron beam periodically sweeps across the plate, effectively scanning the stored image and discharging each capacitor in turn such that the electrical output from each capacitor is proportional to the average intensity of the light striking it between each discharge event.[44][45]
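The benefit of charge storage can be sketched by comparing one mosaic granule, which integrates photoemission for the whole frame, with the same area read by a non-storing dissector. This is an idealized model under stated assumptions: names and numbers are hypothetical, and it ignores the secondary-electron losses that, in practice, left real iconoscopes far below this theoretical maximum.

```python
def granule_readout(photon_rates, frame_time, quantum_efficiency):
    """Charge a mosaic granule holds when the scanning beam reaches it.

    photon_rates: photon rate (photons/s) sampled at evenly spaced instants
    across one frame. Photoemission continues for the whole frame and the
    resulting positive charge stays on the granule's tiny capacitor, so the
    readout is proportional to the average intensity over the frame.
    """
    dt = frame_time / len(photon_rates)
    charge = 0.0
    for rate in photon_rates:
        charge += quantum_efficiency * rate * dt   # electrons leave, charge builds
    return charge   # the beam discharges the granule; this is the signal


def dissector_readout(photon_rate, frame_time, quantum_efficiency,
                      picture_elements):
    """The same area read by a non-storing dissector: only its dwell time counts."""
    return quantum_efficiency * photon_rate * frame_time / picture_elements


steady = granule_readout([100, 100, 100, 100], frame_time=1.0, quantum_efficiency=0.5)
flicker = granule_readout([0, 200, 0, 200], frame_time=1.0, quantum_efficiency=0.5)
dissected = dissector_readout(100, frame_time=1.0, quantum_efficiency=0.5,
                              picture_elements=4)
# steady == flicker == 50.0; dissected == 12.5
```

Two points fall out of the model: a flickering light with the same average gives the same stored charge, and the ideal advantage over the dissector equals the number of picture elements (4 here, hundreds of thousands in a real raster).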

After studying Maxwell's equations, Hungarian engineer Kálmán Tihanyi discovered a hitherto unknown physical phenomenon that led to a breakthrough in the development of electronic imaging devices. He called it the charge-storage principle. The low light sensitivity of transmitting (camera) tubes, and the resulting low electrical output, would be overcome by the charge-storage technology Tihanyi introduced in early 1925.[46] His solution was a camera tube that accumulated and stored electrical charges (photoelectrons) within the tube throughout each scanning cycle. The device was first described in a patent application he filed in Hungary in March 1926 for a television system he dubbed Radioskop.[42] After further refinements included in a 1928 patent application,[46] Tihanyi's patent was declared void in Great Britain in 1930,[47] and so he applied for patents in the United States. Tihanyi's charge storage idea remains a basic principle in the design of imaging devices for television to the present day.

In 1924, while employed by the Westinghouse Electric Corporation in Pittsburgh, Pennsylvania, Russian-born American engineer Vladimir Zworykin presented a project for a totally electronic television system to the company's general manager.[48][49] In July 1925, Zworykin submitted a patent application titled Television System that included a charge storage plate constructed of a thin layer of isolating material (aluminum oxide) sandwiched between a screen (300 mesh) and a colloidal deposit of photoelectric material (potassium hydride) consisting of isolated globules.[50] The following description appears on page 2, lines 1 to 9: "The photoelectric material, such as potassium hydride, is evaporated on the aluminum oxide, or other insulating medium, and treated so as to form a colloidal deposit of potassium hydride consisting of minute globules. Each globule is very active photoelectrically and constitutes, to all intents and purposes, a minute individual photoelectric cell". Its first image was transmitted in the late summer of 1925,[12] and a patent was issued in 1928.[50] However, the quality of the transmitted image failed to impress H.P. Davis, the general manager of Westinghouse, and Zworykin was asked "to work on something useful".[12] A patent for a television system was also filed by Zworykin in 1923, but this filing is not a definitive reference because extensive revisions were made before a patent was issued fifteen years later[39] and the file itself was divided into two patents in 1931.[51][52]

The first practical iconoscope was constructed in 1931 by Sanford Essig, when he accidentally left a silvered mica sheet in the oven too long. Upon examination with a microscope, he noticed that the silver layer had broken up into a myriad of tiny isolated silver globules.[53] He also noticed that, "the tiny dimension of the silver droplets would enhance the image resolution of the iconoscope by a quantum leap".[18] As head of television development at Radio Corporation of America (RCA), Zworykin submitted a patent application in November 1931, and it was issued in 1935.[45] Nevertheless, Zworykin's team was not the only engineering group working on devices that used a charge storage plate. In 1932, the EMI engineers Tedham and McGee under the supervision of Isaac Shoenberg applied for a patent for a new device they dubbed the "Emitron".[54] A 405-line broadcasting service employing the Emitron began at studios in Alexandra Palace in 1936, and patents were issued in the United Kingdom in 1934 and in the US in 1937.[55]

The iconoscope was presented to the general public at a press conference in June 1933,[56] and two detailed technical papers were published in September and October of the same year.[57][58][59] Compared with the Farnsworth image dissector, the Zworykin iconoscope was much more sensitive, being usable with an illumination on the target of 40 to 215 lux (4–20 ft-c). It was also easier to manufacture and produced a very clear image.[citation needed] The iconoscope was the primary camera tube used by RCA broadcasting from 1936 until 1946, when it was replaced by the image orthicon tube.[60][61]

Super-Emitron and image iconoscope

The original iconoscope was noisy, had a high ratio of interference to signal, and ultimately gave disappointing results, especially when compared to the high definition mechanical scanning systems then becoming available.[62][63] The EMI team under the supervision of Isaac Shoenberg analyzed how the Emitron (or iconoscope) produces an electronic signal and concluded that its real efficiency was only about 5% of the theoretical maximum. This is because secondary electrons released from the mosaic of the charge storage plate when the scanning beam sweeps across it may be attracted back to the positively charged mosaic, thus neutralizing many of the stored charges.[64] Lubszynski, Rodda, and McGee realized that the best solution was to separate the photo-emission function from the charge-storage function, and communicated their results to Zworykin.[63][64]

The new video camera tube developed by Lubszynski, Rodda and McGee in 1934 was dubbed "the super-Emitron". This tube is a combination of the image dissector and the Emitron. It has an efficient photocathode that transforms the scene light into an electron image; the latter is then accelerated towards a target specially prepared for the emission of secondary electrons. Each individual electron from the electron image produces several secondary electrons after reaching the target, so that an amplification effect is produced. The target is constructed of a mosaic of electrically isolated metallic granules separated from a common plate by a thin layer of isolating material, so that the positive charge resulting from the secondary emission is stored in the granules. Finally, an electron beam periodically sweeps across the target, effectively scanning the stored image, discharging each granule, and producing an electronic signal like in the iconoscope.[65][66][67]

The super-Emitron was between ten and fifteen times more sensitive than the original Emitron and iconoscope tubes and, in some cases, considerably more.[64] The BBC first used it for an outside broadcast on Armistice Day 1937, when the general public could watch on television as the King laid a wreath at the Cenotaph. This was the first time that a live street scene had been broadcast from cameras installed on the roofs of neighboring buildings.[68]

Meanwhile, in 1934, Zworykin shared some patent rights with the German licensee company Telefunken.[69] The image iconoscope (Superikonoskop in Germany) was produced as a result of the collaboration. This tube is essentially identical to the super-Emitron, but its target is constructed of a thin layer of isolating material placed on top of a conductive base; the mosaic of metallic granules is absent. The production and commercialization of the super-Emitron and image iconoscope in Europe were not affected by the patent war between Zworykin and Farnsworth, because Dieckmann and Hell had priority in Germany for the invention of the image dissector, having submitted a patent application for their Lichtelektrische Bildzerlegerröhre für Fernseher (Photoelectric Image Dissector Tube for Television) in Germany in 1925,[19] two years before Farnsworth did the same in the United States.[20]

The image iconoscope (Superikonoskop) became the industrial standard for public broadcasting in Europe from 1936 until 1960, when it was replaced by the vidicon and Plumbicon tubes. Indeed, it represented the European tradition in electronic tubes, competing against the American tradition represented by the image orthicon.[70][71] The German company Heimann produced the Superikonoskop for the 1936 Berlin Olympic Games[72] and later produced and commercialized it from 1940 to 1955. Finally, the Dutch company Philips produced and commercialized the image iconoscope and multicon from 1952 until 1963,[71][73] when they were replaced by the much better Plumbicon.[74][75]

Operation

The super-Emitron is a combination of the image dissector and the Emitron. The scene image is projected onto an efficient, semitransparent, continuous-film photocathode that transforms the scene light into an electron image; the latter is then accelerated (and focused) by electromagnetic fields towards a target specially prepared for the emission of secondary electrons. Each individual electron from the electron image produces several secondary electrons after reaching the target, so that an amplification effect is produced, and the resulting positive charge is proportional to the integrated intensity of the scene light. The target is constructed of a mosaic of electrically isolated metallic granules separated from a common plate by a thin layer of isolating material, so that the positive charge resulting from the secondary emission is stored in the capacitor formed by the metallic granule and the common plate. Finally, an electron beam periodically sweeps across the target, effectively scanning the stored image and discharging each capacitor in turn such that the electrical output from each capacitor is proportional to the average intensity of the scene light between each discharge event (as in the iconoscope).[65][66][67]
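The amplification on the target can be written as simple charge bookkeeping. This minimal sketch assumes every secondary electron escapes the granule and is collected, ignoring any that fall back onto the mosaic; the function name and the yield of 5 are hypothetical illustration values standing in for the text's "several" secondary electrons.

```python
def net_target_charge(photoelectrons, secondary_yield):
    """Net positive charge (in units of e) left on one target granule.

    Each photoelectron landing on the granule brings -1 e, but knocks out
    `secondary_yield` secondary electrons on average, which are carried away
    and leave +secondary_yield e behind. The net gain per photoelectron is
    therefore (secondary_yield - 1); a yield below 1 would leave a net
    negative charge instead.
    """
    return photoelectrons * (secondary_yield - 1)


# Hypothetical numbers: 1,000 photoelectrons reach one granule during a frame.
iconoscope_like = 1000                             # charge stored directly, no gain
super_emitron_like = net_target_charge(1000, 5)    # 4000
```

The same photoelectrons thus leave several times more stored charge for the scanning beam to read, which is the source of the super-Emitron's extra sensitivity in this simplified picture.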

The image iconoscope is essentially identical to the super-Emitron, but its target is constructed of a thin layer of isolating material placed on top of a conductive base; the mosaic of metallic granules is absent. Therefore, secondary electrons are emitted from the surface of the isolating material when the electron image reaches the target, and the resulting positive charges are stored directly on the surface of the isolating material.[70]

Orthicon and CPS Emitron

The original iconoscope was very noisy[62] because of the secondary electrons released from the photoelectric mosaic of the charge storage plate when the scanning beam swept across it.[64] An obvious solution was to scan the mosaic with a low-velocity electron beam, which delivered so little energy near the plate that no secondary electrons were emitted at all. That is, an image is projected onto the photoelectric mosaic of a charge storage plate, so that positive charges are produced there by photo-emission and stored by capacitance. These stored charges are then gently discharged by a low-velocity electron scanning beam, preventing the emission of secondary electrons.[76][18] Not all the electrons in the scanning beam are absorbed by the mosaic, because each point absorbs only enough electrons to neutralize its stored positive charge, which is proportional to the integrated intensity of the scene light. The remaining electrons are then deflected back into the anode,[38][44] captured by a special grid,[77][78][79] or deflected back into an electron multiplier.[80]
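The split between absorbed and returned beam electrons can be sketched as follows. This is a simplified model assuming the beam delivers a fixed number of electrons to each spot and that a spot absorbs electrons only until its stored positive charge is neutralized; the function name and the numbers are hypothetical.

```python
def low_velocity_scan(stored_charges, beam_electrons_per_spot):
    """Read a line of stored positive charges with a low-velocity beam.

    At each spot the slow beam electrons land gently (no secondary emission)
    and are absorbed only until the stored positive charge is neutralized;
    the rest are turned away. Returns (absorbed, returned) lists in units of e.
    """
    absorbed, returned = [], []
    for q in stored_charges:
        taken = min(q, beam_electrons_per_spot)   # beam can only give what it carries
        absorbed.append(taken)
        returned.append(beam_electrons_per_spot - taken)
    return absorbed, returned


absorbed, returned = low_velocity_scan([0, 300, 900], beam_electrons_per_spot=1000)
# absorbed == [0, 300, 900]; returned == [1000, 700, 100]
```

The `min` clip reflects a design constraint: the beam must carry at least as many electrons per spot as the brightest area has stored, otherwise that area is only partly discharged and its signal saturates.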

Low-velocity scanning beam tubes have several advantages: spurious signals are low and the conversion of light into signal is highly efficient, so that the signal output is maximized. However, there are serious problems as well: when the beam scans the borders and corners of the image, it spreads and accelerates in a direction parallel to the target, producing secondary electrons, so that the image is well focused in the center but blurry at the borders.[41][81] In 1929, Henroteau was among the first inventors to propose the use of low-velocity electrons for stabilizing the potential of a charge storage plate,[82] but Lubszynski and the EMI team were the first engineers to transmit a clear and well focused image with such a tube.[40] Another improvement is the use of a semitransparent charge storage plate. The scene image is then projected onto the back side of the plate, while the low-velocity electron beam scans the photoelectric mosaic on the front side. This configuration allows the use of a straight camera tube, because the scene to be transmitted, the charge storage plate, and the electron gun can be aligned one after the other.[18]

The first fully functional low-velocity scanning beam tube, the CPS Emitron, was invented and demonstrated by the EMI team under the supervision of Sir Isaac Shoenberg.[83] In 1934, the EMI engineers Blumlein and McGee filed for patents for television transmitting systems in which a charge storage plate was shielded by a pair of special grids: a negative (or slightly positive) grid lay very close to the plate, and a positive one was placed farther away.[77][78][79] The velocity and energy of the electrons in the scanning beam were reduced to zero by the decelerating electric field generated by this pair of grids, and so a low-velocity scanning beam tube was obtained.[76][84] The EMI team kept working on these devices, and Lubszynski discovered in 1936 that a clear image could be produced if the trajectory of the low-velocity scanning beam was nearly perpendicular (orthogonal) to the charge storage plate in its immediate vicinity.[40][85] The resulting device was dubbed the cathode potential stabilized Emitron, or CPS Emitron.[76][86] The industrial production and commercialization of the CPS Emitron had to wait until the end of the Second World War;[84] it was widely used in the UK until 1963, when it was replaced by the much better Plumbicon.[74][75]

On the other side of the Atlantic, the RCA team led by Albert Rose began working in 1935 on a low-velocity scanning beam device they came to dub the orthicon.[87][88] Iams and Rose solved the problem of guiding the beam and keeping it in focus by installing specially designed deflection plates and deflection coils near the charge storage plate to provide a uniform axial magnetic field.[41][80][89] The orthicon's performance was similar to that of the image iconoscope,[90] but it was also unstable under sudden flashes of bright light, producing "the appearance of a large drop of water evaporating slowly over part of the scene".[18]

Image orthicon


The image orthicon (sometimes abbreviated IO) was common in American broadcasting from 1946 until 1968.[61] A combination of the image dissector and orthicon technologies, it replaced in the United States the iconoscope, which required a great deal of light to work adequately.[91]

The image orthicon tube was developed at RCA by Albert Rose, Paul K. Weimer, and Harold B. Law. It represented a considerable advance in the television field, and after further development work, RCA created original models between 1939 and 1940.[61] The National Defense Research Committee entered into a contract with RCA where the NDRC paid for its further development. Upon RCA's development of the more sensitive image orthicon tube in 1943, RCA entered into a production contract with the U.S. Navy, the first tubes being delivered in January 1944.[92] RCA began production of image orthicons for civilian use in the second quarter of 1946.[61][93]

While the iconoscope and the intermediate orthicon used capacitance between a multitude of small but discrete light-sensitive collectors and an isolated signal plate for reading video information, the image orthicon employed direct charge readings from a continuous electronically charged collector. The resultant signal was immune to most extraneous signal crosstalk from other parts of the target, and could yield extremely detailed images. NASA was still using image orthicon cameras to capture Apollo/Saturn rockets nearing orbit after the television networks had phased the cameras out.[94][failed verification]

An image orthicon camera can take television pictures by candlelight because of the more ordered light-sensitive area and the presence of an electron multiplier at the base of the tube, which operates as a high-efficiency amplifier. It also has a logarithmic light sensitivity curve similar to that of the human eye. However, it tends to flare in bright light, causing a dark halo to be seen around the object; this anomaly was referred to as blooming in the broadcast industry when image orthicon tubes were in operation.[95] Image orthicons were used extensively in the early color television cameras such as the RCA TK-40/41, where the increased sensitivity of the tube was essential to overcome the very inefficient, beam-splitting optical system of the camera.[95][96]

The image orthicon tube was at one point colloquially referred to as an Immy. Harry Lubcke, the then-President of the Academy of Television Arts & Sciences, decided to have their award named after this nickname. Since the statuette was female, it was feminized into Emmy.[97] The image orthicon was used until the end of black-and-white television production in the 1960s.[98]

Operation

An image orthicon consists of three parts: a photocathode with an image store (target), a scanner that reads this image (an electron gun), and a multistage electron multiplier.[99]

In the image store, light falls upon the photocathode, a photosensitive plate at a very negative potential (approx. -600 V), and is converted into an electron image (a principle borrowed from the image dissector). This electron rain is then accelerated towards the target (a very thin glass plate acting as a semi-isolator) at ground potential (0 V), passing through a very fine wire mesh (nearly 200 or 390[100] wires per cm) that lies very near (a few hundredths of a cm) and parallel to the target and acts as a screen grid at a slightly positive voltage (approx. +2 V). Once the image electrons reach the target, they cause a splash of electrons by secondary emission. On average, each image electron ejects several splash electrons (thus adding amplification by secondary emission), and these excess electrons are soaked up by the positive mesh, effectively removing electrons from the target and leaving a positive charge on it that corresponds to the light incident on the photocathode. The result is an image painted in positive charge, with the brightest portions having the largest positive charge.[101]

A sharply focused beam of electrons (a cathode ray) is generated by the electron gun at ground potential and accelerated by the anode (the first dynode of the electron multiplier) surrounding the gun at a high positive voltage (approx. +1500 V). Once it exits the electron gun, its inertia carries the beam away from the dynode towards the back side of the target. There the electrons lose speed and are deflected by the horizontal and vertical deflection coils, effectively scanning the target. Because of the axial magnetic field of the focusing coil, this deflection does not follow a straight line, so the electrons reach the target perpendicularly, without a sideways component. The target is nearly at ground potential with a small positive charge, so electrons arriving at low speed are absorbed without ejecting more electrons. This adds negative charge to the positive charge until the region being scanned reaches some threshold negative charge, at which point the scanning electrons are reflected by the negative potential rather than absorbed (in this process the target recovers the electrons needed for the next scan). These reflected electrons return down the cathode-ray tube toward the first dynode of the electron multiplier surrounding the electron gun, which is at high potential. The number of reflected electrons falls linearly as the target's original positive charge rises, so it is a linear (inverted) measure of that charge, which in turn is a measure of brightness.[102]
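The readout chain can be summarized in a toy model: the low-velocity beam neutralizes each spot's positive charge, and only the reflected remainder reaches the multistage electron multiplier. The function name, the per-stage gain of 4, and the 5 stages are hypothetical illustration values, and the model assumes an ideal, noise-free multiplier.

```python
def image_orthicon_signal(stored_charges, beam_electrons_per_spot,
                          dynode_gain, stages):
    """Electron-multiplier output for each scanned spot (units of e).

    stored_charges: positive charge on each target spot.
    The low-velocity beam is absorbed until a spot's positive charge is
    neutralized; the remainder is reflected back to the first dynode and
    multiplied by `dynode_gain` at each of `stages` stages.
    """
    gain = dynode_gain ** stages
    out = []
    for q in stored_charges:
        return_beam = beam_electrons_per_spot - min(q, beam_electrons_per_spot)
        out.append(return_beam * gain)
    return out


# Dark spot (no charge), mid-grey spot, and bright spot.
print(image_orthicon_signal([0, 500, 1000], 1000, 4, 5))
# [1024000, 512000, 0]
```

In this model the darkest spot produces the largest output and the brightest the smallest, matching the inverted but linear relationship between return beam and stored charge described above.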

Dark halo


The dark "orthicon halo" around bright objects in an orthicon-captured image (also known as "blooming") arises because the IO relies on the emission of photoelectrons, and very bright illumination can produce more of them locally than the device can handle. At a very bright point in the image, a great preponderance of electrons is ejected from the photosensitive plate. So many may be ejected that the corresponding point on the collection mesh can no longer soak them up, and they fall back onto nearby spots on the target instead, much as water splashes in a ring when a rock is thrown into it. Since these splashed electrons do not have enough energy to eject further electrons where they land, they instead neutralize any positive charge that has built up in that region. Since darker areas produce less positive charge on the target, the excess electrons deposited by the splash are read as a dark region by the scanning electron beam.[citation needed]
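The splash mechanism can be sketched in one dimension. This toy model rests on assumptions not stated in the text: each target spot is capped at a hypothetical `mesh_capacity`, the excess electrons are shared equally among neighbours within a hypothetical `radius`, and a spot's positive charge cannot go below zero.

```python
def orthicon_halo(ideal_charge, mesh_capacity, radius=1):
    """1D toy model of the dark halo around a very bright spot.

    ideal_charge: positive charge each target spot would hold if the mesh
    could soak up every splash electron. A spot cannot exceed
    `mesh_capacity`; the excess electrons fall back on the neighbours within
    `radius` spots, shared equally, cancelling their positive charge.
    """
    n = len(ideal_charge)
    result = [min(q, mesh_capacity) for q in ideal_charge]
    for i, q in enumerate(ideal_charge):
        excess = q - mesh_capacity
        if excess <= 0:
            continue
        neighbours = [j for j in range(i - radius, i + radius + 1)
                      if 0 <= j < n and j != i]
        if not neighbours:
            continue
        share = excess / len(neighbours)
        for j in neighbours:
            result[j] = max(0.0, result[j] - share)   # neutralized charge reads as dark
    return result


line = [10, 10, 10, 100, 10, 10, 10]   # one very bright point on a grey line
print(orthicon_halo(line, mesh_capacity=50))
# [10, 10, 0.0, 50, 0.0, 10, 10]
```

The grey spots on either side of the bright point lose their charge and read as black, which is the ring-shaped halo; spots below the mesh capacity are left untouched.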

This effect was actually cultivated by tube manufacturers to a certain extent, as a small, carefully controlled amount of dark halo crispens the visual image through the contrast effect (that is, it gives the illusion that the image is more sharply focused than it actually is). The later vidicon tube and its descendants (see below) do not exhibit this effect, and so could not be used for broadcast purposes until special detail-correction circuitry had been developed.[103]

Vidicon

A vidicon tube is a video camera tube design in which the target material is a photoconductor. The vidicon was developed in 1950 at RCA by P. K. Weimer, S. V. Forgue and R. R. Goodrich as a simple alternative to the structurally and electrically complex image orthicon.[98][104][105][106] While the initial photoconductor used was selenium, other targets, including silicon diode arrays, have been used. Vidicons with these targets are known as Si-vidicons or Ultricons.[107][108]

The vidicon is a storage-type camera tube in which a charge-density pattern is formed by the imaged scene radiation on a photoconductive surface, which is then scanned by a beam of low-velocity electrons. This surface is on a glass plate and is also called the target.[100][109] More specifically, the glass plate is coated with a transparent, electrically conductive indium tin oxide (ITO) layer, on top of which the photoconductive surface is formed by depositing photoconductive material, which can be applied as small squares with insulation between them. The photoconductor is normally an insulator but becomes partially conductive when struck by light.[100] The output of the tube comes from the ITO layer.[107]

The target is kept at a positive voltage of 30 volts and the cathode in the tube at negative 30 volts. The cathode releases electrons, which are modulated by grid G1 and accelerated by grid G2 to form an electron beam. Magnetic coils deflect, focus, and align the electron beam so that it can scan the surface of the target. The beam deposits electrons on the target, and when enough photons strike the target, a difference in current is produced between the two electrically conductive layers of the target; because the target is connected to a resistor, this difference is output as a voltage. The fluctuating voltage created in the target is coupled to a video amplifier[100] and used to reproduce the scene being imaged; in other words, it is the video output. The electrical charge produced by an image remains in the face plate until it is scanned or until the charge dissipates. Special Vidicons can have resolutions of up to 5,000 TV lines.[110]
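
The storage behavior described above (charge accumulates until the beam scans an element, and the charge the beam replaces becomes the signal) can be sketched by modeling each target element as a leaky capacitor. This is a simplified illustration under stated assumptions: the class name `TargetElement`, the linear relationship between illuminance and photocurrent, and every numeric value are hypothetical rather than data for any real tube.

```python
from dataclasses import dataclass


@dataclass
class TargetElement:
    """One picture element of a vidicon target, modeled as a leaky capacitor.

    Simplified, hypothetical model: each beam pass charges the element to
    `bias_v` volts; between passes, light makes the photoconductor leak
    charge at a rate proportional to illuminance.
    """

    capacitance_f: float = 1e-12          # storage capacitance (hypothetical)
    bias_v: float = 30.0                  # voltage the beam restores
    sensitivity_a_per_lux: float = 1e-12  # photocurrent per lux (hypothetical)
    lost_charge_c: float = 0.0            # charge leaked since the last scan

    def expose(self, illuminance_lux: float, frame_time_s: float) -> None:
        """Integrate leakage over one frame; charge accumulates until scanned."""
        leaked = self.sensitivity_a_per_lux * illuminance_lux * frame_time_s
        full_charge = self.capacitance_f * self.bias_v
        # An element cannot lose more charge than it stored (saturation).
        self.lost_charge_c = min(self.lost_charge_c + leaked, full_charge)

    def scan(self) -> float:
        """Beam recharges the element; the replaced charge is the signal.

        In the tube this recharging current flows through the load resistor
        on the signal (ITO) plate, producing the video voltage.
        """
        signal_c = self.lost_charge_c
        self.lost_charge_c = 0.0
        return signal_c


frame_s = 1 / 30  # hypothetical frame time
for lux in (0.0, 100.0, 200.0, 10_000.0):
    element = TargetElement()
    element.expose(lux, frame_s)
    print(f"{lux:>8.0f} lux -> {element.scan():.3e} C")
```

In this model the 10,000 lux element saturates at C × V = 30 pC, and an element exposed for two frames without being scanned returns twice the charge of a single exposure, mirroring the statement that the charge remains until it is scanned.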

By using a pyroelectric material such as triglycine sulfate (TGS) as the target, it is possible to make a vidicon sensitive over a broad portion of the infrared spectrum.[111] This technology was a precursor to modern microbolometer technology, and such tubes were mainly used in firefighting thermal cameras.[112]

Until the design and construction of the Galileo probe to Jupiter in the late 1970s and early 1980s, NASA used vidicon cameras on nearly all of its uncrewed deep-space probes that had remote sensing capability.[113] Vidicon tubes were also used aboard the first three Landsat Earth-imaging satellites, the first of which was launched in 1972, as part of each spacecraft's Return Beam Vidicon (RBV) imaging system.[114][115][116] The Uvicon, an ultraviolet variant of the Vidicon, was also used by NASA for ultraviolet imaging.[117]

Vidicon tubes were popular in the 1970s and 1980s, after which they were rendered obsolete by solid-state image sensors: first the charge-coupled device (CCD) and then the CMOS sensor.

All vidicon and similar tubes are prone to image lag, also known as ghosting, smearing, burn-in, comet tails, luma trails and luminance blooming. Image lag appears as (usually white or colored) trails that follow a bright object (such as a light or reflection) after it has moved and eventually fade into the image.[118] It cannot be avoided or eliminated, as it is inherent to the technology. How strongly the image generated by a Vidicon is affected depends on the properties of the target material, the capacitance of the target (known as the storage effect), and the resistance of the electron beam used to scan the target. The higher the capacitance of the target, the more charge it can hold and the longer the trail takes to disappear. The remaining charges on the target eventually dissipate, and the trail disappears.[119]
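
A crude way to see why higher target capacitance lengthens the trail is to treat the scanning beam as a resistance that discharges each target element for a short dwell time, so that every scan removes only part of the stored charge. The sketch below assumes a simple RC discharge with a constant beam resistance, which real tubes do not exhibit exactly; the function names and the example values are hypothetical.

```python
import math


def residual_fraction(beam_resistance_ohm: float, capacitance_f: float,
                      dwell_time_s: float) -> float:
    """Fraction of an element's charge left after one beam pass.

    Assumes the beam discharges the element like a constant resistor, so
    the remaining charge decays as exp(-t / RC).
    """
    return math.exp(-dwell_time_s / (beam_resistance_ohm * capacitance_f))


def lag_trail(stored_charge: float, remain: float, frames: int) -> list[float]:
    """Signal read on successive frames after a bright object has moved away."""
    signals = []
    q = stored_charge
    for _ in range(frames):
        read = q * (1.0 - remain)  # charge the beam removes on this pass
        signals.append(read)
        q -= read                  # the rest appears as lag in later frames
    return signals


def frames_to_fade(remain: float, threshold: float = 0.01) -> int:
    """Frames until the trail falls to or below `threshold` of its first reading.

    Successive readings shrink by the factor `remain` (0 < remain < 1).
    """
    return math.ceil(math.log(threshold) / math.log(remain))


# Hypothetical values: 1 megohm beam resistance, 1 microsecond dwell time.
for cap_f in (1e-12, 2e-12):
    remain = residual_fraction(1e6, cap_f, 1e-6)
    print(f"C = {cap_f:.0e} F: about {frames_to_fade(remain)} frames to fade")
```

Doubling the capacitance in this model raises the residual fraction per scan, so the trail persists for roughly twice as many frames.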

Vidicons can be damaged by exposure to high-intensity light.[120] Image burn-in occurs when a Vidicon captures the same image for a long time: a persistent outline of that image remains visible after the scene changes and disappears only over time. Direct exposure to the sun can damage Vidicons, causing them to develop dark spots.[121][122] Vidicons often used antimony trisulfide as the photoconductive material.[107] They were not very successful because of image lag, which was evident in the RCA TK-42 color camera.[106]

Si-vidicon (1969)

Si-vidicons, silicon vidicons[123] or Epicons,[124] which are Vidicons using arrays of silicon diodes for the target, were introduced in 1969 for the Picturephone.[125] They are very resistant to burn-in and have low image lag and very high sensitivity, but are not considered suitable for broadcast TV production because they suffer from high image blooming and image non-uniformity. The targets in these tubes are made on silicon substrates using semiconductor device fabrication processes and require 10 volts to operate.[124] These tubes could be combined with an image intensifier, in which case they were known as silicon intensified tubes (SITs). SITs had an additional photocathode in front of the target that produced large numbers of electrons when struck by photons, and these electrons were accelerated to the target by several hundred volts. These tubes were used for tracking satellite debris.[107]

Plumbicon (1965)

Plumbicon is a trademark registered by Philips in 1963 for its lead(II) oxide (PbO) target vidicons.[126] It was demonstrated in 1965 at the NAB Show.[127][128] Used frequently in broadcast camera applications, these tubes have low output but a high signal-to-noise ratio. They have excellent resolution compared with image orthicons, but lack the artificially sharp edges of IO tubes, which caused some viewers to perceive their images as softer. CBS Labs invented the first outboard edge enhancement circuits to sharpen the edges of Plumbicon-generated images.[129][130][131] Philips received the 1966 Technology & Engineering Emmy Award for the Plumbicon.[132] Targets in Plumbicons have two layers: a pure PbO layer and a doped PbO layer. The pure PbO is an intrinsic (i-type) semiconductor, and a layer of it is doped to create a p-type PbO semiconductor, thus forming a semiconductor junction.[133] The PbO is in crystalline form.[134]

Plumbicons were the first commercially successful version of the Vidicon. They were smaller and had lower noise, higher sensitivity and resolution, and less image lag than Vidicons,[106] and were a defining factor in the development of color TV cameras.[98] The most widely used camera tubes in TV production were the Plumbicon and the Saticon.[107] Compared with Saticons, Plumbicons have much higher resistance to burn-in and to comet and trailing artifacts from bright lights in the shot. Saticons, though, usually have slightly higher resolution. After 1980 and the introduction of the diode-gun Plumbicon tube, the resolution of both types was so high, compared with the maximum limits of the broadcasting standard, that the Saticon's resolution advantage became moot. While broadcast cameras migrated to solid-state charge-coupled devices, Plumbicon tubes remained a staple imaging device in the medical field.[129][130][131] High-resolution Plumbicons were made for the HD-MAC standard.[135] Since PbO is not stable in air, depositing PbO on the target is challenging.[136] Vistacons developed by RCA[137] and Leddicons made by EEV[138] also use PbO in their targets.[98]

Until 2016, Narragansett Imaging was the last company making Plumbicons, using factories Philips had built in Rhode Island, USA. While still part of Philips, the company purchased the lead-oxide camera tube business of EEV (English Electric Valve) and gained a monopoly in lead-oxide tube production.[129][130][131] Lead-oxide tubes were also made by Matsushita.[139][140]

Saticon (1973)

Saticon is a trademark registered by Hitachi in 1973; Saticons were also produced by Thomson and Sony. The tube was developed in a joint effort by Hitachi and NHK Science & Technology Research Laboratories (NHK is the Japan Broadcasting Corporation). Introduced in 1973,[141][142] the Saticon has a surface of selenium with trace amounts of arsenic and tellurium added (SeAsTe) to make the signal more stable; the "SAT" in the name is derived from SeAsTe.[143] Saticon tubes have an average light sensitivity equivalent to that of 64 ASA film.[144] Compared with the Plumbicon, the Saticon has a less favorable operating temperature range and more image lag.[107] The target in a Saticon consists of a transparent, electrically conductive tin oxide layer, followed by a SeAsTe layer, a SeAs layer, and an antimony trisulfide layer that faces the electron beam.[141]

A high-gain avalanche rushing amorphous photoconductor (HARP) made of amorphous selenium (a-Se) can be used to increase light sensitivity to as much as 10 times that of conventional Saticons; Saticons with this kind of target are known as HARPICONs. The target in HARPICONs is made up of ITO (indium tin oxide), CeO2 (cerium oxide), selenium doped with arsenic and lithium fluoride, selenium doped with arsenic and tellurium, amorphous selenium doped with arsenic, and antimony trisulfide.[145][146][147][144] Saticons were made for the Sony HDVS system, which was used to produce early analog high-definition television using multiple sub-Nyquist sampling encoding (MUSE).[144]

Pasecon (1972)

Originally developed by Toshiba in 1972 as the Chalnicon, Pasecon is a trademark registered by Heimann GmbH in 1977. Its surface consists of cadmium selenide trioxide (CdSeO3). Because of its wide spectral response, it is described as a panchromatic selenium vidicon, hence the acronym "Pasecon".[143][148] It is not considered suitable for broadcast TV production, as it suffers from high image lag.[107]

Newvicon (1974)

Newvicon is a trademark registered by Matsushita in 1973.[149] Introduced in 1974,[150][151] Newvicon tubes were characterized by high light sensitivity. Their surface consists of a combination of zinc selenide (ZnSe) and zinc cadmium telluride (ZnCdTe).[143] The Newvicon is not considered suitable for broadcast TV production, as it suffers from high image lag and non-uniformity.[107]

Trinicon (1971)

Trinicon is a trademark registered by Sony in 1971.[152] It uses a vertically striped RGB color filter over the faceplate of an otherwise standard vidicon imaging tube to divide the scan into corresponding red, green and blue segments. Only one tube was used in the camera, instead of one tube for each color as was standard for color cameras used in television broadcasting. It was used mostly in low-end consumer cameras, such as the HVC-2200 and HVC-2400 models, though Sony also used it in some moderate-cost professional cameras in the 1970s and 1980s, such as the DXC-1600 series.[153]

Although the idea of using color stripe filters over the target was not new, the Trinicon was the only tube to use the primary RGB colors. This necessitated an additional electrode buried in the target to detect where the scanning electron beam was relative to the stripe filter. Previous color stripe systems had used color combinations that allowed the color circuitry to separate the colors purely from the relative amplitudes of the signals.[154] As a result, the Trinicon featured a larger dynamic range of operation.
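
With primary-color stripes, a single video sample cannot say which color it belongs to, because a high level could come from a bright red, green or blue stripe; hence the Trinicon's buried index electrode. Given that position information, separating the colors reduces to routing each sample by stripe, as in the following sketch. The function name and the list-based interface are hypothetical, and the index signal is simply taken as an input rather than derived from any model of the electrode.

```python
def demultiplex_stripes(samples, stripe_index):
    """Split one scan line into R, G and B sample lists.

    `samples` holds the video level at each sample point along the line;
    `stripe_index` says, for the same points, which stripe ("R", "G" or
    "B") the beam was over. In a Trinicon-style tube that position would be
    derived from the index electrode; here it is simply supplied.
    """
    if len(samples) != len(stripe_index):
        raise ValueError("samples and stripe_index must have the same length")
    channels = {"R": [], "G": [], "B": []}
    for level, stripe in zip(samples, stripe_index):
        channels[stripe].append(level)  # an unknown stripe label raises KeyError
    return channels


# A reddish area: high under red stripes, low under green and blue.
line = [0.9, 0.1, 0.2, 0.8, 0.1, 0.3]
index = ["R", "G", "B", "R", "G", "B"]
print(demultiplex_stripes(line, index))
```

Shifting `index` by one position would send the same samples to the wrong channels, which is the error the index electrode exists to prevent.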

Sony later combined the Saticon tube with the Trinicon's RGB color filter, providing low-light sensitivity and superior color. This type of tube was known as the SMF Trinicon tube, or Saticon Mixed Field. SMF Trinicon tubes were used in the HVC-2800 and HVC-2500 consumer cameras, the DXC-1800 and BVP-1 professional cameras, as well as the first Betamovie camcorders. Toshiba offered a similar tube in 1974,[155] and Hitachi also developed a similar Saticon with a color filter in 1981.[156]

Light biasing

All the vidicon-type tubes except the vidicon itself could use a light biasing technique to improve sensitivity and contrast. The photosensitive target in these tubes had the limitation that the light level had to rise to a particular level before any video output resulted. Light biasing was a method whereby the photosensitive target was illuminated by a light source just enough that no appreciable output was obtained, but such that a slight increase in light from the scene was enough to produce discernible output. The light came either from an illuminator mounted around the target or, in more professional cameras, from a light source on the base of the tube, guided to the target by a light pipe. The technique would not work with the basic vidicon because its target was fundamentally an insulator: the constant low light level built up a charge that manifested itself as a form of fogging. The other types had semiconducting targets, which did not have this problem.
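
The effect of light biasing can be illustrated with a hypothetical threshold-linear response: no output until the total light on the target passes a threshold, then output rising in proportion to the excess. The function name, threshold and gain below are illustrative assumptions in arbitrary units, not measured characteristics of any tube.

```python
def target_output(scene_light: float, bias_light: float = 0.0,
                  threshold: float = 1.0, gain: float = 1.0) -> float:
    """Hypothetical threshold-linear target response (arbitrary light units).

    No output is produced until the total light on the target exceeds
    `threshold`; above it, output rises in proportion to the excess.
    """
    total = scene_light + bias_light
    return gain * max(0.0, total - threshold)


dim_scene = 0.3  # well below the threshold on its own
print(target_output(dim_scene))                   # no bias light
print(target_output(0.0, bias_light=0.95))        # bias alone: still no output
print(target_output(dim_scene, bias_light=0.95))  # bias + scene: visible output
```

The bias is chosen just below the threshold so that it produces no appreciable output by itself, matching the description above.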

Color cameras

Early color cameras used the obvious technique of separate red, green and blue image tubes in conjunction with a color separator, a technique still used in 3CCD solid-state cameras today. It was also possible to construct a color camera that used a single image tube. One technique has already been described (Trinicon, above). A more common technique, and a simpler one from the standpoint of tube construction, was to overlay the photosensitive target with a color-striped filter having a fine pattern of vertical green, cyan and clear stripes (passing green; green and blue; and green, blue and red, respectively) repeating across the target. The advantage of this arrangement was that for virtually every color, the video level of the green component was never greater than that of the cyan, and likewise the cyan was never greater than the white. Thus the contributing images could be separated without any reference electrodes in the tube. If the three levels were the same, then that part of the scene was green. This method had the disadvantage that the light levels under the three filters were almost certain to be different, with the green filter passing no more than one third of the available light.

Variations on this scheme exist, the principal one being to use two filters with color stripes overlaid so that the colors form vertically oriented lozenge shapes over the target. The method of extracting the color is similar, however.
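
Because the green, cyan and clear stripes pass G, G + B and G + B + R respectively, the three primaries can be recovered by simple subtraction, with no reference electrode needed. The sketch below assumes idealized filters with equal sensitivity under each stripe and no cross-talk, which real stripe filters did not provide; the function name is hypothetical.

```python
def decode_stripes(green: float, cyan: float, white: float) -> tuple[float, float, float]:
    """Recover (R, G, B) from levels under green, cyan and clear stripes.

    Idealized model: the green stripe passes G, cyan passes G + B and clear
    passes G + B + R, all with equal sensitivity and no cross-talk.
    """
    r = white - cyan
    g = green
    b = cyan - green
    return r, g, b


print(decode_stripes(0.6, 0.6, 0.6))  # equal levels: a pure green area
print(decode_stripes(0.3, 0.6, 0.9))  # equal steps: a neutral gray area
```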

Field-sequential color system

During the 1930s and 1940s, field-sequential color systems were developed that used synchronized motor-driven color-filter disks at the camera's image tube and at the television receiver. Each disk consisted of red, blue, and green transparent color filters. In the camera, the disk was in the optical path, and in the receiver, it was in front of the CRT. Disk rotation was synchronized with vertical scanning so that each successive vertical scan was for a different primary color. This method allowed regular black-and-white image tubes and CRTs to generate and display color images. A field-sequential system developed by Peter Goldmark for CBS was demonstrated to the press on September 4, 1940,[157][158][159] and was first shown to the general public on January 12, 1950.[160] Guillermo González Camarena independently developed a field-sequential color disk system in Mexico in the early 1940s, for which he requested a patent in Mexico on August 19, 1940, and in the US in 1941.[161] González Camarena produced his color television system in his laboratory, Gon-Cam, for the Mexican market and exported it to the Columbia College of Chicago, which regarded it as the best system in the world.[162][163]
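
The synchronization requirement amounts to the camera and receiver agreeing on which filter segment is in place for each field. The sketch below assumes a three-segment disk whose red, blue and green segments pass in that order (the order is an assumption) and an arbitrary field rate; the function names and the 120 Hz figure are hypothetical, not parameters of the CBS or González Camarena systems. It also shows the cost of the method: a complete color image needs three fields, so the full-color image rate is one third of the field rate.

```python
# Hypothetical three-segment disk; the segment order is an assumption.
FILTER_ORDER = ("red", "blue", "green")


def filter_for_field(field_number: int) -> str:
    """Filter in the optical path (camera) or in front of the CRT (receiver)
    during a given vertical scan. Camera and receiver must use the same
    field count, or the colors are reproduced in the wrong order."""
    return FILTER_ORDER[field_number % len(FILTER_ORDER)]


def full_color_rate(field_rate_hz: float) -> float:
    """Complete color images per second when each field carries one primary."""
    return field_rate_hz / len(FILTER_ORDER)


print([filter_for_field(n) for n in range(6)])
print(full_color_rate(120.0))  # hypothetical field rate in Hz
```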

Magnetic focusing in typical camera tubes

The phenomenon known as magnetic focusing was discovered by A. A. Campbell-Swinton in 1896. He found that a longitudinal magnetic field generated by an axial coil can focus an electron beam.[164] This phenomenon was immediately corroborated by J. A. Fleming, and Hans Busch gave a complete mathematical interpretation in 1926.[165]

Diagrams in this article show that the focus coil surrounds the camera tube; it is much longer than the focus coils for earlier TV CRTs. Camera-tube focus coils, by themselves, have essentially parallel lines of force, very different from the localized semi-toroidal magnetic field geometry inside a TV receiver CRT focus coil. The latter is essentially a magnetic lens; it focuses the "crossover" (between the CRT's cathode and G1 electrode, where the electrons pinch together and diverge again) onto the screen.

The electron optics of camera tubes differ considerably. Electrons inside these long focus coils follow helical paths as they travel along the length of the tube. The center (the local axis) of each helix follows a line of force of the magnetic field. Apart from this circling motion, the helices essentially do not matter: electrons that start from a point will focus to a point again at a distance determined by the strength of the field. Focusing a tube with this kind of coil is simply a matter of trimming the coil's current. In effect, the electrons travel along the lines of force, although, in detail, helically.
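
The dependence of the focus distance on field strength can be made concrete. In a uniform axial field every electron circles its line of force once per cyclotron period, 2πm/(eB), regardless of its transverse velocity, so electrons leaving a point with the same axial speed meet again one period later. The sketch below computes that distance for a given accelerating voltage and flux density, and the inverse: the field needed to focus at a given distance. It assumes non-relativistic electrons, a perfectly uniform field and no electrostatic lens action; the function names and the 300 V and 4 mT example values are hypothetical.

```python
import math

ELECTRON_CHARGE_C = 1.602176634e-19  # elementary charge (exact SI value)
ELECTRON_MASS_KG = 9.1093837015e-31  # CODATA 2018 electron mass


def axial_speed(accel_voltage_v: float) -> float:
    """Non-relativistic speed of an electron accelerated through `accel_voltage_v`."""
    return math.sqrt(2.0 * ELECTRON_CHARGE_C * accel_voltage_v / ELECTRON_MASS_KG)


def focal_distance(accel_voltage_v: float, field_t: float) -> float:
    """Axial distance at which electrons from one point first refocus.

    Each electron completes one helical turn in the cyclotron period
    2*pi*m/(e*B), independent of its transverse velocity, so electrons
    with the same axial speed meet again after axial_speed * period.
    """
    period_s = 2.0 * math.pi * ELECTRON_MASS_KG / (ELECTRON_CHARGE_C * field_t)
    return axial_speed(accel_voltage_v) * period_s


def field_for_focus(accel_voltage_v: float, distance_m: float) -> float:
    """Uniform axial flux density (tesla) that puts the first focus at `distance_m`."""
    return 2.0 * math.pi * ELECTRON_MASS_KG * axial_speed(accel_voltage_v) / (
        ELECTRON_CHARGE_C * distance_m
    )


distance = focal_distance(300.0, 0.004)
print(f"first focus after {distance * 100:.1f} cm")
print(f"field for that distance: {field_for_focus(300.0, distance):.4f} T")
```

With these hypothetical values the first focus falls roughly 9 cm from the starting point. Because the coil's flux density is proportional to its current, `field_for_focus` corresponds to the adjustment that trimming the focus current performs by hand.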

These focus coils are essentially as long as the tubes themselves, and surround the deflection yoke (coils). Deflection fields bend the lines of force (with negligible defocusing), and the electrons follow the lines of force.

In a conventional magnetically deflected CRT, such as in a TV receiver or computer monitor, the vertical deflection coils are essentially equivalent to coils wound around a horizontal axis. That axis is perpendicular to the neck of the tube, and the lines of force are essentially horizontal. (In detail, the coils in a deflection yoke extend some distance beyond the neck of the tube and lie close to the flare of the bulb; they have a truly distinctive appearance.)

In a magnetically focused camera tube (there are also electrostatically focused vidicons), the vertical deflection coils are above and below the tube, instead of on both sides of it. One might say that this sort of deflection starts to create S-bends in the lines of force, though the bending is nowhere near that extreme.

Size

The size of a video camera tube is simply the overall outside diameter of its glass envelope. This differs from the size of the sensitive area of the target, which is typically two thirds of the overall diameter. Tube sizes are always expressed in inches for historical reasons. A one-inch camera tube has a sensitive area of approximately two thirds of an inch on the diagonal, or about 16 mm.

Although the video camera tube is now technologically obsolete, the size of solid-state image sensors is still expressed as the equivalent size of a camera tube. For this purpose a new term, the optical format, was coined. The optical format is approximately the true diagonal of the sensor multiplied by 3⁄2. The result is expressed in inches and is usually, though not always, rounded to a convenient fraction (hence the approximation). For instance, a 6.4 mm × 4.8 mm (0.25 in × 0.19 in) sensor has a diagonal of 8.0 mm (0.31 in) and therefore an optical format of 8.0 × 3⁄2 = 12 mm (0.47 in), which is rounded to the convenient imperial fraction of 1⁄2 inch (13 mm). The parameter is also the source of the "Four Thirds" in the Four Thirds system and its Micro Four Thirds extension: the imaging area of the sensor in these cameras is approximately that of a 4⁄3-inch (3.4 cm) video camera tube, with a diagonal of approximately 22 millimetres (0.87 in).[166]
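
The rule of thumb above can be written as a short calculation that reproduces the 6.4 mm × 4.8 mm example. The list of convenient fractions is an illustrative assumption: manufacturers choose format names themselves, and the rounding is not always to the nearest fraction. The function names are hypothetical.

```python
import math
from fractions import Fraction

MM_PER_INCH = 25.4

# Illustrative subset of "convenient" format fractions (an assumption).
COMMON_FORMATS = [Fraction(1, 4), Fraction(1, 3), Fraction(1, 2),
                  Fraction(2, 3), Fraction(1), Fraction(4, 3)]


def optical_format_from_diagonal(diagonal_mm: float) -> float:
    """Optical format in inches: the sensor diagonal multiplied by 3/2."""
    return diagonal_mm * 1.5 / MM_PER_INCH


def optical_format_inches(width_mm: float, height_mm: float) -> float:
    """Optical format in inches for a sensor of the given width and height."""
    return optical_format_from_diagonal(math.hypot(width_mm, height_mm))


def nearest_common_format(format_in: float) -> Fraction:
    """Round an optical format to the closest entry in COMMON_FORMATS."""
    return min(COMMON_FORMATS, key=lambda f: abs(float(f) - format_in))


fmt = optical_format_inches(6.4, 4.8)
print(f"{fmt:.2f} in -> {nearest_common_format(fmt)} inch")
print(nearest_common_format(optical_format_from_diagonal(22.0)))
```

The second line applies the same rule to the roughly 22 mm diagonal of a Four Thirds sensor, which rounds to 4/3 inch.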

Although the optical format does not correspond directly to any physical dimension of the sensor, its use means that a lens that would have been used with (say) a 4⁄3-inch camera tube will give roughly the same angle of view when used with a solid-state sensor with an optical format of 4⁄3 inch.

Late use and decline

Video camera tube technology remained in use into the 1990s, when high-definition 1035-line tubes were used in the early MUSE HD broadcasting system. While CCDs were tested for this application, as of 1993 broadcasters still found them inadequate because of difficulties in achieving the necessary high resolution without undesirable side effects that compromised image quality.[167]

Modern charge-coupled device (CCD) and CMOS-based sensors offer many advantages over their tube counterparts. These include a lack of image lag, high overall picture quality, high light sensitivity and dynamic range, a better signal-to-noise ratio, and significantly higher reliability and ruggedness. Other advantages include the elimination of the respective high- and low-voltage power supplies required for the electron beam and heater filament, the elimination of the drive circuitry for the focusing coils, no warm-up time, and significantly lower overall power consumption. Despite these advantages, solid-state sensors were not immediately accepted and incorporated into television and video cameras. Early sensors had lower resolution and performance than camera tubes and were initially relegated to consumer-grade video recording equipment.[167]

Also, video tubes had progressed to a high standard of quality and were standard-issue equipment for networks and production companies. These organizations had invested substantially not only in tube cameras but also in the ancillary equipment needed to correctly process tube-derived video. Because that equipment was optimized for tube-sourced video, switching to solid-state image sensors rendered much of it (and the investment behind it) obsolete and required new equipment optimized for solid-state sensors.

Because they are relatively insensitive to radiation compared with semiconductor-based devices, video camera tubes are still occasionally used in high-radiation environments such as nuclear power plants.[citation needed]

References

- ^ a b c d Jack, K.; Tsatsoulin, V. (2002). Dictionary of Video and Television Technology. Amsterdam: Newnes Press. pp. 143, 148. ISBN 978-1-878707-99-4. OCLC 50761489.

- ^ Patrick, N. W. (2005). "Cathode-ray tube". McGraw-Hill Concise Encyclopedia of Science and Technology (5th ed.). New York: McGraw-Hill. pp. 382–383. ISBN 978-0-07-142957-3. OCLC 56198760. OL 9254941M.

- ^ a b Campbell-Swinton, A. A. (1908-06-18). "Distant Electric Vision". Nature. 78 (2016): 151. Bibcode:1908Natur..78..151S. doi:10.1038/078151a0. S2CID 3956737.

- ^ Dieckmann, M. (1909-07-24). "The Problem of Television; A Partial Solution". Scientific American Supplement. 68 (1751): 61–62. doi:10.1038/scientificamerican07241909-61supp.

- ^ Abramson, A. (1955). Electronic Motion Pictures: A History of the Television Camera. Berkeley: University of California Press. p. 31. OCLC 1282602.

- ^ Magoun, A. B. (2007). Television: The Life Story of a Technology. Westport: Greenwood Press. p. 12. ISBN 978-0-313-33128-2. OCLC 85828932. OL 10420449M.

- ^ Secor, H. W. (August 1915). "Television, or The Projection of Pictures Over a Wire" (PDF). The Electrical Experimenter. Vol. III, no. 4. New York: Experimenter Publishing Company. pp. 131–132 (in work pp. 5–6).

- ^ Martin, M. J. (1921). The Electrical Transmission of Photographs. London: Sir Isaac Pitman & Sons. pp. 102–106. OCLC 1110454. OL 7057092M.

- ^ Gernsback, H.; Secor, H. W., eds. (July 1928). "Vacuum Cameras to Speed Up Television" (PDF). Television. Vol. I, no. 2. New York: Experimenter Publishing Company. pp. 25–26.

- ^ Gernsback, H.; Secor, H. W., eds. (July 1928). "Campbell Swinton Television System" (PDF). Television. Vol. I, no. 2. New York: Experimenter Publishing Company. pp. 27–28.

- ^ Campbell-Swinton, A. A. (1926-10-23). "Electric Television". Nature. 118 (2973): 590. Bibcode:1926Natur.118..590S. doi:10.1038/118590a0. S2CID 4081053.

- ^ a b c d e f Burns, R. W. (1998). Television: An International History of the Formative Years. London: The Institution of Electrical Engineers. pp. 123, 358–361, 383. ISBN 978-0-85296-914-4. OCLC 38435423. OL 3542553M.

- ^ Gregory, R. A. (1914-04-02). "Prof. G. M. Minchin, F.R.S." Nature. 93 (2318): 115–116. Bibcode:1914Natur..93..115R. doi:10.1038/093115a0.

- ^ a b Miller, H.; Strange, J. W. (1938). "The Electrical Reproduction of Images by the Photoconductive Effect". Proceedings of the Physical Society. 50 (3): 374–384. Bibcode:1938PPS....50..374M. doi:10.1088/0959-5309/50/3/307.

- ^ a b c Iams, H.; Rose, A. (August 1937). "Television Pickup Tubes with Cathode-Ray Beam Scanning". Proceedings of the Institute of Radio Engineers. 25 (8): 1048–1070. Bibcode:1937PIRE...25.1048I. doi:10.1109/JRPROC.1937.228423. ISSN 0731-5996. S2CID 51668505.

- ^ Schoultz, E.-G. (1922) [1921]. Brevet d'invention No. 539,613: Procédé et appareillage pour la transmission des images mobiles à distance [Method and apparatus for remote transmission of moving images]. Paris: Office National de la Propriété industrielle. Archived from the original on 2018-11-22. Retrieved 2009-07-28.

- ^ a b Horowitz, P.; Hill, W. (1989). The Art of Electronics (2nd ed.). Cambridge University Press. pp. 1000–1001. ISBN 978-0-521-37095-0. OCLC 19125711.

- ^ a b c d e f Webb, R. C. (2005). Tele-visionaries: The People Behind the Invention of Television. Hoboken: Wiley-Interscience. pp. 30, 34, 65. ISBN 978-0-471-71156-8. OCLC 61916360. OL 22379634M.

- ^ a b c Dieckmann, M.; Hell, R. (1927) [1925]. Patentschrift Nr. 450 187: Lichtelektrische Bildzerlegerröhre für Fernseher [Photoelectric Image Dissector Tube for Television]. Berlin: Reichspatentamt.

- ^ a b c d Farnsworth, Philo T. (1930) [1927]. "Television System". Patent No. 1,773,980. United States Patent Office. Retrieved 2009-07-28.

- ^ Brittain, B. J. (September 1927). "Television on the Continent". Discovery: A Monthly Popular Journal of Knowledge. Vol. 8. London: John Murray. pp. 283–285. hdl:2027/mdp.39015031957916.

- ^ Hartley, J. (1999). Uses of Television. London: Routledge. p. 72. ISBN 978-0-415-08509-0. OCLC 40534751. OL 24271926M.

- ^ Yates, R. F., ed. (May 1928). "Cathode Ray Television" (PDF). In the World's Laboratories. Popular Radio. Vol. XIII, no. 5. pp. 397, 406.

- ^ Postman, Neil (1999-03-29). "Philo Farnsworth". The TIME 100: Scientists & Thinkers. Time. Archived from the original on May 31, 2000. Retrieved 2009-07-28.

- ^ Farnsworth, Philo T. (1934) [1928]. "Photoelectric Apparatus". Patent No. 1,970,036. United States Patent Office. Retrieved 2010-01-15.

- ^ Farnsworth, Philo T. (1939) [1928]. "Television Method". Patent No. 2,168,768. United States Patent Office. Retrieved 2010-01-15.

- ^ Farnsworth, Philo T. (1935) [1928]. "Electrical Discharge Apparatus". Patent No. 1,986,330. United States Patent Office. Archived from the original on 2012-02-25. Retrieved 2009-07-29.

- ^ Farnsworth, E. G. (1990). Distant Vision: Romance and Discovery on an Invisible Frontier. Salt Lake City: PemberlyKent. pp. 108–109. ISBN 978-0-9623276-0-5. OCLC 19971738. OL 26320909M.

- ^ "Philo Taylor Farnsworth (1906–1971)". The Virtual Museum of the City of San Francisco. Archived from the original on June 22, 2011. Retrieved 2009-07-15.

- ^ Farnsworth, Philo T. "Electron Multiplying Device". Patent No. 2,071,515. filed 1933, patented 1937. United States Patent Office. Retrieved 2010-02-22.

- ^ Farnsworth, Philo T. "Multipactor Phase Control". Patent No. 2,071,517. filed 1935, patented 1937. United States Patent Office. Retrieved 2010-02-22.

- ^ Farnsworth, Philo T. "Two-stage Electron Multiplier". Patent No. 2,161,620. filed 1937, patented 1939. United States Patent Office. Retrieved 2010-02-22.

- ^ Gardner, Bernard C. "Image Analyzing and Dissecting Tube". Patent No. 2,200,166. filed 1937, patented 1940. United States Patent Office. Retrieved 2010-02-22.

- ^ Abramson, A. (1987). The History of Television, 1880 to 1941. Jefferson: McFarland & Company. p. 159. ISBN 978-0-89950-284-7. OCLC 15366931. OL 2740120M.

- ^ ITT Industrial Laboratories. (December 1964). "Vidissector - Image Dissector, page 1". Tentative Data-sheet. ITT. Archived from the original on 2010-09-15. Retrieved 2010-02-22.

- ^ ITT Industrial Laboratories. (December 1964). "Vidissector - Image Dissector, page 2". Tentative Data-sheet. ITT. Archived from the original on 2010-09-15. Retrieved 2010-02-22.

- ^ ITT Industrial Laboratories. (December 1964). "Vidissector - Image Dissector, page 3". Tentative Data-sheet. ITT. Archived from the original on 2010-09-15. Retrieved 2010-02-22.

- ^ a b Farnsworth, Philo T. "Image Dissector". Patent No. 2,087,683. filed 1933, patented 1937, reissued 1940. United States Patent Office. Archived from the original on 2011-07-22. Retrieved 2010-01-10.

- ^ a b Schatzkin, Paul. "The Farnsworth Chronicles, Who Invented What -- and When??". Retrieved 2010-01-10.

- ^ a b c Abramson, A. (1995). Zworykin, Pioneer of Television. Urbana: University of Illinois Press. p. 282. ISBN 978-0-252-02104-6. OCLC 29954436. OL 1083768M.

- ^ a b c Rose, A.; Iams, H. A. (September 1939). "Television Pickup Tubes Using Low-Velocity Electron-Beam Scanning". Proceedings of the IRE. 27 (9): 547–555. Bibcode:1939PIRE...27..547R. doi:10.1109/JRPROC.1939.228710. ISSN 0096-8390. S2CID 51670303.

- ^ a b "Kalman Tihanyi's 1926 Patent Application Radioskop". UNESCO Memory of the World. Retrieved 2025-04-22.

- ^ Williams, J. B. (2017). The Electronics Revolution: Inventing the Future. Cham: Springer Nature. p. 29. doi:10.1007/978-3-319-49088-5. ISBN 978-3-319-49088-5. OCLC 999399256.

- ^ a b Tihanyi, Kalman. "Television Apparatus". Patent No. 2,158,259. filed in Germany 1928, filed in USA 1929, patented 1939. United States Patent Office. Archived from the original on 2011-07-22. Retrieved 2010-01-10.

- ^ a b Zworykin, V. K. "Method of and Apparatus for Producing Images of Objects". Patent No. 2,021,907. filed 1931, patented 1935. United States Patent Office. Retrieved 2010-01-10.

- ^ a b "Kálmán Tihanyi (1897–1947)", IEC Techline[permanent dead link], International Electrotechnical Commission (IEC), 2009-07-15.

- ^ Tihanyi, Koloman, Improvements in television apparatus Archived 2022-12-04 at the Wayback Machine. European Patent Office, Patent No. GB313456. Convention date UK application: 1928-06-11, declared void and published: 1930-11-11, retrieved: 2013-04-25.

- ^ Rajchman, J. (2006). "Vladimir Kosma Zworykin". Biographical Memoirs: Volume 88. Biographical Memoirs of the National Academy of Sciences. Vol. 88. Washington, D.C.: The National Academies Press. p. 371. Bibcode:2006nap..book11807N. doi:10.17226/11807. ISBN 978-0-309-10389-3.

- ^ "Vladimir Kosma Zworykin". Encyclopædia Britannica. Retrieved 2018-01-25.

- ^ a b Zworykin, V. K. "Television System". Patent No. 1,691,324. filed 1925, patented 1928. United States Patent Office. Retrieved 2010-01-10.

- ^ Zworykin, Vladimir K. "Television System". Patent No. 2,022,450. filed 1923, issued 1935. United States Patent Office. Retrieved 2010-01-10.

- ^ Zworykin, Vladimir K. "Television System". Patent No. 2,141,059. filed 1923, issued 1938. United States Patent Office. Retrieved 2010-01-10.

- ^ Burns, R. W. (2004). Communications: An International History of the Formative Years. London: The Institution of Electrical Engineers. p. 534. ISBN 978-0-86341-327-8. OCLC 52921676. OL 9576009M.

- ^ EMI LTD; Tedham, William F. & McGee, James D. "Improvements in or relating to cathode ray tubes and the like". Patent No. GB 406,353. filed May 1932, patented 1934. United Kingdom Intellectual Property Office. Archived from the original on 2021-11-22. Retrieved 2010-02-22.

- ^ Tedham, William F. & McGee, James D. "Cathode Ray Tube". Patent No. 2,077,422. filed in Great Britain 1932, filed in USA 1933, patented 1937. United States Patent Office. Retrieved 2010-01-10.

- ^ Laurence, W. L. (1933-06-27). "Human-like Eye Made by Engineers to Televise Images...". The New York Times. p. 1.

- ^ Pocock, H. S., ed. (1933-09-01). "The Iconoscope; America's Latest Television Favourite" (PDF). Wireless World. Vol. XXXIII, no. 9 (731). London: Iliffe & Sons. p. 197.

- ^ Zworykin, V. K. (September 1933). "Television with cathode-ray tubes". Institution of Electrical Engineers - Proceedings of the Wireless Section of the Institution. 8 (24): 219–233. doi:10.1049/pws.1933.0024. ISSN 2050-2613.

- ^ Zworykin, V. K. (October 1933). "Television with cathode-ray tubes". Journal of the Institution of Electrical Engineers. 73 (442): 437–451. doi:10.1049/jiee-1.1933.0150. ISSN 0099-2887.

- ^ "R.C.A. Officials Continue to Be Vague Concerning Future of Television". The Washington Post. 1936-11-15. p. B2.

- ^ a b c d Abramson, A. (2003). The History of Television, 1942 to 2000. Jefferson: McFarland & Company. pp. 7–8, 18, 124. ISBN 978-0-7864-1220-4. OCLC 48837571. OL 9798525M.

- ^ a b Winston, B. (1986). Misunderstanding Media. London: Routledge & Kegan Paul. pp. 60–61. ISBN 978-0-7102-0002-0. OCLC 15222064. OL 2499006M.

- ^ a b Winston, B. (1998). Media Technology and Society, a History: From the Telegraph to the Internet. London: Routledge. p. 105. ISBN 978-0-415-14230-4. OCLC 37567233. OL 687811M.

- ^ a b c d Alexander, R. C. (2000) [1999]. The Inventor of Stereo: The Life and Works of Alan Dower Blumlein. Oxford: Focal Press. pp. 217–219. ISBN 978-0-240-51628-8. OCLC 166482305.

- ^ a b Lubszynski, Hans Gerhard & Rodda, Sydney. "Improvements in or relating to television". Patent No. GB 442,666. filed May 1934, patented February 1936. United Kingdom Intellectual Property Office. Archived from the original on 2021-11-22. Retrieved 2010-01-15.

- ^ a b Lubszynski, Hans Gerhard & Rodda, Sydney. "Improvements in and relating to television". Patent No. GB 455,085. filed February 1935, patented October 1936. United Kingdom Intellectual Property Office. Retrieved 2010-01-15.

- ^ a b EMI LTD; Lubszynski, Hans Gerhard. "Improvements in or relating to television". Patent No. GB 475,928. filed May 1936, patented November 1937. United Kingdom Intellectual Property Office. Archived from the original on 2021-11-22. Retrieved 2010-01-15.

- ^ Howett, D. (2006). Television Innovations: 50 Technological Developments. Tiverton: Kelly Publications. p. 114. ISBN 978-1-903053-22-5. OCLC 312624263.

- ^ Inglis, A. F. (1990). Behind the Tube: A History of Broadcasting Technology and Business. Boston: Focal Press. p. 172. ISBN 978-0-240-80043-1. OCLC 20220579. OL 2215220M.

- ^ a b De Vries, M. J.; Cross, Nigel; Grant, D. P. (1993). Design Methodology and Relationships with Science. NATO Science Series D. Dordrecht: Kluwer Academic Publishers. p. 222. doi:10.1007/978-94-015-8220-9. ISBN 978-0-7923-2191-0. OCLC 27642302.

- ^ a b Smith, H. (July 1953). "Multicon – A New TV Camera Tube" (PDF). Tele-Tech & Electronic Industries. Vol. 12, no. 7. Bristol: Caldwell-Clements. pp. 57, 125.

- ^ "Prewar Camera Tubes". Hilliard: Early Television Foundation and Museum. Archived from the original on 2011-06-17. Retrieved 2010-01-15.

- ^ Image Iconoscope 5854 (PDF). Koninklijke Philips. 1952–1958. 939 4097, 939 4098, 939 4099, 939 4100, 939 4101. Archived (PDF) from the original on 2006-09-03.

- ^ a b De Haan, E. F. (1962-12-05). "The "Plumbicon", a New Television Camera Tube" (PDF). Philips Technical Review. 24 (2): 57–58.

- ^ a b De Haan, E. F.; Van der Drift, A.; Schampers, P. P. M. (1964-07-07). "The "Plumbicon", a New Television Camera Tube" (PDF). Philips Technical Review. 25 (6/7): 133–151.

- ^ a b c Burns, R. W. (2000). The Life and Times of A. D. Blumlein. London: the Institution of Electrical Engineers. p. 181. ISBN 978-0-85296-773-7. OCLC 43501972.

- ^ a b Blumlein, Alan Dower & McGee, James Dwyer. "Improvements in or relating to television transmitting systems". Patent No. GB 446,661. filed August 1934, patented May 1936. United Kingdom Intellectual Property Office. Archived from the original on 2021-11-22. Retrieved 2010-03-09.

- ^ a b McGee, James Dwyer. "Improvements in or relating to television transmitting systems". Patent No. GB 446,664. filed September 1934, patented May 1936. United Kingdom Intellectual Property Office. Archived from the original on 2021-11-22. Retrieved 2010-03-09.

- ^ a b Blumlein, Alan Dower & McGee, James Dwyer. "Television Transmitting System". Patent No. 2,182,578. filed in Great Britain August 1934, filed in USA August 1935, patented December 1939. United States Patent Office. Retrieved 2010-03-09.

- ^ a b Iams, Harley A. "Television Transmitting Tube". Patent No. 2,288,402. filed January 1941, patented June 1942. United States Patent Office. Retrieved 2010-03-09.

- ^ McGee, J. D. (November 1950). "A review of some television pick-up tubes". Proceedings of the IEE - Part III: Radio and Communication Engineering. 97 (50): 380–381. doi:10.1049/pi-3.1950.0073. ISSN 0369-8947.

- ^ Henroteau, François Charles Pierre. "Television". Patent No. 1,903,112 A. filed 1929, patented 1933. United States Patent Office. Retrieved 2013-01-15.

- ^ "Sir Isaac Shoenberg". Encyclopædia Britannica. Retrieved 2020-07-22.

- ^ a b Gibbons, D. J. (1960). McGee, J. D.; Wilcock, W. L. (eds.). "The Tri-alkali Stabilized C.P.S. Emitron: A New Television Camera Tube of High Sensitivity". Advances in Electronics and Electron Physics. XII. New York: Academic Press: 204. Bibcode:1960AEEP...12..203G. doi:10.1016/S0065-2539(08)60635-6. ISBN 978-0-12-014512-6.

- ^ Lubszynski, Hans Gerhard. "Improvements in and relating to television and like systems". Patent No. GB 468,965. filed January 1936, patented July 1937. United Kingdom Intellectual Property Office. Retrieved 2010-03-09.

- ^ McLean, T. P.; Schagen, P., eds. (1979). Electronic Imaging. The Rank Prize Funds opto-electronics biennial symposia. London: Academic Press. pp. 46, 53. hdl:2027/uc1.b4164703. ISBN 978-0-12-485050-7. OCLC 5724473.

- ^ Weimer, P. K. (1993). "Albert Rose". Memorial Tributes: Volume 6. Memorial Tributes of the National Academy of Engineering. Vol. 6. Washington, D.C.: The National Academies Press. p. 196. Bibcode:1993nap..book.2231N. doi:10.17226/2231. ISBN 978-0-309-04847-7.

- ^ Johnson, W.; Weimer, P. K.; Williams, R. (December 1991). "Albert Rose". Physics Today. 44 (12): 98. Bibcode:1991PhT....44l..98J. doi:10.1063/1.2810377.

- ^ Rose, Albert. "Television Transmitting Apparatus and Method of Operation". Patent No. 2,407,905. filed 1942, patented 1946. United States Patent Office. Retrieved 2010-01-15.

- ^ Rose, A. (1948). Marton, L. (ed.). "Television Pickup Tubes and the Problem of Vision". Advances in Electronics. Advances in Electronics and Electron Physics. I. New York: Academic Press: 153. Bibcode:1948AEEP....1..131R. doi:10.1016/S0065-2539(08)61102-6. ISBN 978-0-12-014501-0.

- ^ "Television". Microsoft Encarta Online Encyclopedia 2000. Microsoft Corporation. 1997–2000. Archived from the original on October 4, 2009. Retrieved 29 June 2012.

- ^ Remington Rand Inc., v. U.S., 120 F. Supp. 912, 913 (1944).

- ^ "RCA 2P23, One of the earliest image orthicons". aade.com. Archived from the original on January 29, 2012.

- ^ "Telescopic Tracking of the Apollo Lunar Missions". The University of Alabama.

- ^ a b "Westinghouse Non-blooming Image Orthicon". dtic.mil.

- ^ "Non-blooming Image Orthicon". oai.dtic.mil. Archived from the original on 2015-02-20.

- ^ Parker, Sandra (August 12, 2013). "History of the Emmy Statuette". Emmys. Academy of Television Arts and Sciences. Retrieved March 14, 2017.

- ^ a b c d Todorovic, Aleksandar Louis (August 7, 2014). Television Technology Demystified: A Non-technical Guide. CRC Press. ISBN 978-1-136-06853-9 – via Google Books.

- ^ "3-inch image orthicon camera project". roysvintagevideo.741.com. Archived from the original on 2021-01-19.

- ^ a b c d Biswas, Sambunath. Basic Electronics. Khanna Publishing House. ISBN 978-81-87522-16-4 – via Google Books.

- ^ "The Image Orthicon (Television Camera) Tube c. 1940–1960". acmi.net.au. Archived from the original on April 4, 2004.

- ^ "The Image Converter". fazano.pro.br. Archived from the original on 2020-08-07.

- ^ "4.5.1 Camera Tubes". Morpheus Technology. morpheustechnology.com.

- ^ Rose, Albert (June 29, 2013). Vision: Human and Electronic. Springer Science & Business Media. ISBN 978-1-4684-2037-1 – via Google Books.

- ^ Weimer, P. K.; Forgue, S. V.; Goodrich, R. R. (May 1950). "The vidicon-photoconductive camera tube". Electronics.

- ^ a b c Vries, Marc J. de; Cross, Nigel; Grant, D. P. (March 31, 1993). Design Methodology and Relationships with Science. Springer Science & Business Media. ISBN 978-0-7923-2191-0 – via Google Books.

- ^ a b c d e f g h Webster, John G.; Eren, Halit (December 19, 2017). Measurement, Instrumentation, and Sensors Handbook: Electromagnetic, Optical, Radiation, Chemical, and Biomedical Measurement. CRC Press. ISBN 978-1-4398-4893-7 – via Google Books.

- ^ Newcomer, C. D. (October 1981). The RCA Ultricon: An Improved Vidicon Camera Tube for General Closed-Circuit Television Applications (PDF). Lancaster: Solid State Division, Radio Corporation of America. Electro-Optics AN-6994. Archived (PDF) from the original on 20 September 2021.

- ^ Modern Television Practice: Principles, Technology and Servicing (2nd ed.). New Age International. ISBN 978-81-224-1360-1 – via Google Books.

- ^ Biberman, Lucien (November 11, 2013). Photoelectronic Imaging Devices: Devices and Their Evaluation. Springer Science & Business Media. ISBN 978-1-4684-2931-2 – via Google Books.

- ^ Goss, A. J.; Nixon, R. D.; Watton, R.; Wreathall, W. M. (1985). "Progress in IR Television Using the Pyroelectric Vidicon". In Mollicone, Richard A.; Spiro, Irving J. (eds.). Infrared Technology X. Vol. 510. p. 154. Bibcode:1985SPIE..510..154G. doi:10.1117/12.945018. S2CID 111164581.

- ^ "Heritage TICs EEV P4428 & P4430 Cameras".

- ^ Spacecraft Imaging III: First Voyage into the Planetary Data System (PPT). The Planetary Society. 2001. Archived from the original on 2012-01-26. Retrieved 2011-11-23.

- ^ Bell, E. (ed.). "Return Beam Vidicon Camera (RBV)". NASA Space Science Data Coordinated Archive. National Aeronautics and Space Administration. 1978-026A-01. Retrieved 2017-07-09.

- ^ Rocchio, L. (ed.). "Landsat 1". Landsat Science. National Aeronautics and Space Administration. Archived from the original on 2015-09-08. Retrieved 2016-03-25.

- ^ "Landsat 2 History". United States Geological Survey. Archived from the original on 2016-04-28. Retrieved 2007-01-16.

- ^ "Detector, Uvicon, Celescope". National Air and Space Museum, Smithsonian Institution. A19740052001. Archived from the original on 2019-04-11. Retrieved 2018-10-30.

- ^ Zuech, N.; Miller, R. K. (1987). Machine Vision. Lilburn: the Fairmont Press. p. 9. ISBN 978-0-88173-017-3. OCLC 13760379. OL 2552098M.

- ^ "Image Lag". AVAA.

- ^ Miller, Richard K.; Zuech, Nello (August 31, 1989). Machine Vision. Springer Science & Business Media. ISBN 978-0-442-23737-0 – via Google Books.

- ^ Fennelly, Lawrence J. (12 May 2014). Museum, Archive, and Library Security. Butterworth-Heinemann. ISBN 978-1-4832-2103-8.

- ^ Inoue, Shinya (11 November 2013). Video Microscopy. Springer. ISBN 978-1-4757-6925-8.

- ^ "Pick-up Tube, the heart of TV Camera" (PDF). lampes-et-tubes.info.

- ^ a b Gulati, R. R. (December 4, 2005). Monochrome and Colour Television. New Age International. ISBN 978-81-224-1776-0 – via Google Books.

- ^ Crowell, Merton H.; Labuda, Edward F. (May 6, 1969). "The Silicon Diode Array Camera Tube". Bell System Technical Journal. 48 (5): 1481–1528. Bibcode:1969BSTJ...48.1481C. doi:10.1002/j.1538-7305.1969.tb04277.x – via CrossRef.

- ^ "PLUMBICON Trademark - Registration Number 0770662 - Serial Number 72173123 :: Justia Trademarks". trademarks.justia.com.

- ^ Vries, Marc J. de; Cross, Nigel; Grant, D. P. (March 31, 1993). Design Methodology and Relationships with Science. Springer Science & Business Media. ISBN 978-0-7923-2191-0 – via Google Books.

- ^ Inglis, Andrew F. (December 22, 2023). Behind the Tube: A History of Broadcasting Technology and Business. Taylor & Francis. ISBN 978-1-003-81974-5 – via Google Books.

- ^ a b c "History of Narragansett Imaging". Narragansett Imaging. 2004. Archived from the original on 17 August 2016. Retrieved 29 June 2012.

- ^ a b c "Camera Tubes". Narragansett Imaging. 2004. Archived from the original on 31 May 2016. Retrieved 29 June 2012.

- ^ a b c "Plumbicon Broadcast Tubes". Narragansett Imaging. 2004. Archived from the original on 15 July 2016. Retrieved 29 June 2012.

- ^ "Emmy, 1966 Technology & Engineering Emmy Award" (PDF). Archived from the original (PDF) on July 20, 2019.

- ^ Gulati, R. R. (December 6, 2005). Monochrome and Colour Television. New Age International. ISBN 978-81-224-1776-0 – via Google Books.

- ^ Whitaker, Jerry C. (October 3, 2018). The Electronics Handbook. CRC Press. ISBN 978-1-4200-3666-4 – via Google Books.

- ^ Tejerina, J. L.; Visintin, F. (1993). "The HDTV Demonstrations at Expo 92" (PDF). EBU Technical Review. 254. European Broadcasting Union: 25–32. ISSN 1019-6587.

- ^ Biberman, Lucien (November 11, 2013). Photoelectronic Imaging Devices: Devices and Their Evaluation. Springer Science & Business Media. ISBN 978-1-4684-2931-2 – via Google Books.

- ^ Howett, Dicky (2006). Television Innovations: 50 Technological Developments. Kelly Publications. ISBN 978-1-903053-22-5.

- ^ "EEV Leddicons" (PDF). frank.pocnet.net.

- ^ Howett, Dicky (February 6, 2006). Television Innovations: 50 Technological Developments. Kelly Publications. ISBN 978-1-903053-22-5 – via Google Books.

- ^ "Journal of Electronic Engineering". Dempa Publications. February 6, 1984 – via Google Books.

- ^ a b Goto, N.; Isozaki, Y.; Shidara, K.; Maruyama, E.; Hirai, T.; Fujita, T. (1974). "SATICON: A new photoconductive camera tube with Se-As-Te target". IEEE Transactions on Electron Devices. 21 (11): 662–666. Bibcode:1974ITED...21..662G. doi:10.1109/T-ED.1974.17991.

- ^ "Journal of Electronic Engineering". Dempa Publications. February 6, 1992 – via Google Books.

- ^ a b c Dhake, A. M. (1995). Television and Video Engineering (2nd ed.). New Delhi: McGraw-Hill. ISBN 978-0-07-460105-1. OCLC 731971346.

- ^ a b c Cianci, P. J. (2012). High Definition Television: The Creation, Development, and Implementation of the Technology. Jefferson: McFarland & Company. pp. 41, 67, 321. ISBN 978-0-7864-4975-0. OCLC 760531886.

- ^ Tanioka, K. (2009). "High-Gain Avalanche Rushing amorphous Photoconductor (HARP) detector". Nuclear Instruments and Methods in Physics Research Section A: Accelerators, Spectrometers, Detectors and Associated Equipment. 608 (1): S15–S17. Bibcode:2009NIMPA.608S..15T. doi:10.1016/j.nima.2009.05.066.

- ^ Huang, Heyuan; Abbaszadeh, Shiva (2020). "Recent Developments of Amorphous Selenium-Based X-Ray Detectors: A Review". IEEE Sensors Journal. 20 (4): 1694–1704. Bibcode:2020ISenJ..20.1694H. doi:10.1109/JSEN.2019.2950319.

- ^ Mikla, Victor I.; Mikla, Victor V. (September 26, 2011). Amorphous Chalcogenides: The Past, Present and Future. Elsevier. ISBN 978-0-12-388429-9 – via Google Books.

- ^ Csorba, I. P. (1985). Image Tubes. Indianapolis: Howard W. Sams. p. 320. ISBN 978-0-672-22023-4. OCLC 12366280.

- ^ "NEWVICON Trademark - Registration Number 1079721 - Serial Number 73005338 :: Justia Trademarks". trademarks.justia.com.

- ^ Clifford, Martin (February 6, 1989). The Camcorder: Use, Care, and Repair. Prentice Hall. ISBN 978-0-13-113689-2 – via Google Books.

- ^ White, Gordon (February 6, 1988). Video Techniques. Heinemann Professional Pub. ISBN 978-0-434-92290-1 – via Google Books.