Year 2000 problem

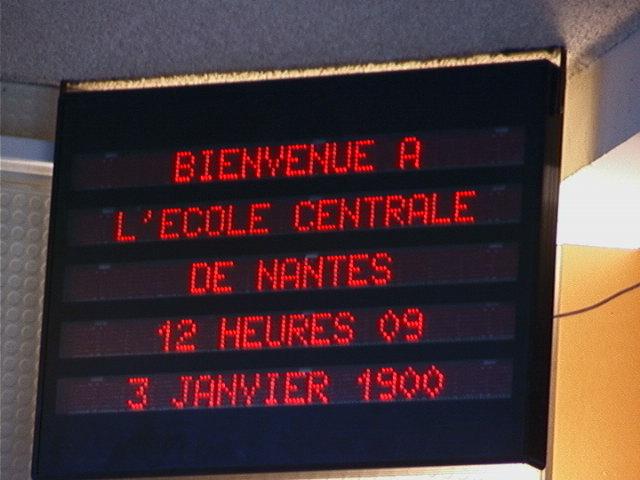

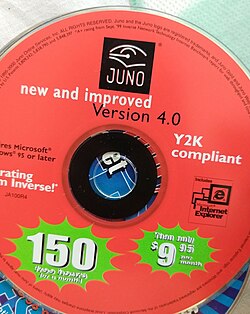

The term year 2000 problem,[1] or simply Y2K, refers to potential computer errors related to the formatting and storage of calendar data for dates in and after the year 2000. Many programs represented four-digit years with only the final two digits, making the year 2000 indistinguishable from 1900. Computer systems' inability to distinguish dates correctly had the potential to bring down worldwide infrastructures for computer-reliant industries.

In the years leading up to the turn of the millennium, the public gradually became aware of the "Y2K scare", and individual companies predicted the global damage caused by the bug would require anything between $400 million and $600 billion to rectify.[2] A lack of clarity regarding the potential dangers of the bug led some to stock up on food, water, and firearms, purchase backup generators, and withdraw large sums of money in anticipation of a computer-induced apocalypse.[3]

Contrary to published expectations, few major errors occurred in 2000. Supporters of the Y2K remediation effort argued that this was primarily due to the pre-emptive action of many computer programmers and information technology experts. Companies and organizations in some countries, but not all, had checked, fixed, and upgraded their computer systems to address the problem.[4][5] Then-U.S. president Bill Clinton, who organized efforts to minimize the damage in the United States, labelled Y2K as "the first challenge of the 21st century successfully met",[6] and retrospectives on the event typically commend the programmers who worked to avert the anticipated disaster.

Critics argued that even in countries where very little had been done to fix software, problems were minimal. The same was true in sectors such as schools and small businesses where compliance with Y2K policies was inconsistent at best.

Background

Y2K is a numeronym and was the common abbreviation for the year 2000 software problem. The abbreviation combines the letter Y for "year", the number 2 and a capitalized version of k for the SI unit prefix kilo meaning 1000; hence, 2K signifies 2000. It was also named the "millennium bug" because it was associated with the popular (rather than literal) rollover of the millennium, even though most of the problems could have occurred at the end of any century.

Computerworld's 1993 three-page "Doomsday 2000" article by Peter de Jager was called "the information-age equivalent of the midnight ride of Paul Revere" by The New York Times.[7][8][9]

The problem was the subject of the early book Computers in Crisis by Jerome and Marilyn Murray (Petrocelli, 1984; reissued by McGraw-Hill under the title The Year 2000 Computing Crisis in 1996). Its first recorded mention on a Usenet newsgroup is from 18 January 1985 by Spencer Bolles.[10]

The acronym Y2K has been attributed to Massachusetts programmer David Eddy[11] in an e-mail sent on 12 June 1995. He later said, "People were calling it CDC (Century Date Change), FADL (Faulty Date Logic). There were other contenders. Y2K just came off my fingertips."[12]

The problem started because on both mainframe computers and later personal computers, memory was expensive, from as low as $10 per kilobyte to more than US$100 per kilobyte in 1975.[13][14] It was therefore very important for programmers to minimize usage. Since computers only gained wide usage in the 20th century, programs could simply prefix "19" to the year of a date, allowing them to only store the last two digits of the year instead of four. As space on disc and tape storage was also expensive, these strategies saved money by reducing the size of stored data files and databases in exchange for becoming unusable past the year 2000.[15]

This meant that programs facing two-digit years could not distinguish between dates in 1900 and 2000. Dire warnings at times were in the mode of:

The Y2K problem is the electronic equivalent of the El Niño and there will be nasty surprises around the globe.

- — John Hamre, United States Deputy Secretary of Defense[16]

Options on the De Jager Year 2000 Index, "the first index enabling investors to manage risk associated with the ... computer problem linked to the year 2000" began trading mid-March 1997.[17]

Special committees were set up by governments to monitor remedial work and contingency planning, particularly by crucial infrastructures such as telecommunications, to ensure that the most critical services had fixed their own problems and were prepared for problems with others. While some commentators and experts argued that the coverage of the problem largely amounted to scaremongering,[18] it was only the safe passing of the main event itself, 1 January 2000, that fully quelled public fears.[citation needed]

Some experts who argued that scaremongering was occurring, such as Ross Anderson, professor of security engineering at the University of Cambridge Computer Laboratory, have since claimed that despite sending out hundreds of press releases about research results suggesting that the problem was not likely to be as big as some had suggested, they were largely ignored by the media.[18] In a similar vein, the Microsoft Press book Running Office 2000 Professional, published in May 1999, accurately predicted that most personal computer hardware and software would be unaffected by the year 2000 problem.[19] Authors Michael Halvorson and Michael Young characterized most of the worries as popular hysteria, an opinion echoed by Microsoft Corp.[20]

Programming problem

The practice of using two-digit dates for convenience predates computers, but was never a problem until stored dates were used in calculations.
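A minimal sketch of why calculations expose the problem, assuming a hypothetical record that stores only the last two digits of each year. The function names are illustrative and not taken from any real system.

```python
def years_between_two_digit(start_yy: int, end_yy: int) -> int:
    """Elapsed years computed from two-digit years, as many legacy records did."""
    return end_yy - start_yy


def years_between_four_digit(start_year: int, end_year: int) -> int:
    """Elapsed years computed from full four-digit years."""
    return end_year - start_year


# A person born in 1965, evaluated in 1999 and again in 2000.
age_1999 = years_between_two_digit(65, 99)             # 34
age_2000 = years_between_two_digit(65, 0)              # -65: "00" is read as 1900
age_2000_fixed = years_between_four_digit(1965, 2000)  # 35
```

The stored value "00" is harmless on its own; the error only appears once it is subtracted from, compared with, or sorted against another year.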

Bit conservation need

I'm one of the culprits who created this problem. I used to write those programs back in the 1960s and 1970s, and was proud of the fact that I was able to squeeze a few elements of space out of my program by not having to put a 19 before the year. Back then, it was very important. We used to spend a lot of time running through various mathematical exercises before we started to write our programs so that they could be very clearly delimited with respect to space and the use of capacity. It never entered our minds that those programs would have lasted for more than a few years. As a consequence, they are very poorly documented. If I were to go back and look at some of the programs I wrote 30 years ago, I would have one terribly difficult time working my way through step-by-step.

Business data processing was done using unit record equipment and punched cards, most commonly the 80-column variety employed by IBM, which dominated the industry. Many tricks were used to squeeze needed data into fixed-field 80-character records. Saving two digits for every date field was significant in this effort.

In the 1960s, computer memory and mass storage were scarce and expensive. Early core memory cost one dollar per bit. Popular commercial computers, such as the IBM 1401, shipped with as little as 2 kilobytes of memory.[a] Programs often mimicked card processing techniques. Commercial programming languages of the time, such as COBOL and RPG, processed numbers in their character representations. Over time, the punched cards were converted to magnetic tape and then disc files, but the structure of the data usually changed very little.

Data was still input using punched cards until the mid-1970s. Machine architectures, programming languages and application designs were evolving rapidly. Neither managers nor programmers of that time expected their programs to remain in use for many decades, and the possibility that these programs would both remain in use and cause problems when interacting with databases – a new type of program with different characteristics – went largely uncommented upon.

Early attention

The first person known to publicly address this issue was Bob Bemer, who had noticed it in 1958 as a result of work on genealogical software. He spent the next twenty years fruitlessly trying to raise awareness of the problem with programmers, IBM, the government of the United States and the International Organization for Standardization. This included the recommendation that the COBOL picture clause should be used to specify four-digit years for dates.[23]

In the 1980s, the brokerage industry began to address this issue, mostly because of bonds with maturity dates beyond the year 2000. By 1987 the New York Stock Exchange had reportedly spent over $20 million on Y2K, including hiring 100 programmers.[24]

Despite magazine articles on the subject from 1970 onward, the majority of programmers and managers only started recognizing Y2K as a looming problem in the mid-1990s, but even then, inertia and complacency caused it to be mostly unresolved until the last few years of the decade. In 1989, Erik Naggum was instrumental in ensuring that internet mail used four digit representations of years by including a strong recommendation to this effect in the internet host requirements document RFC 1123.[25] On April Fools' Day 1998, some companies set their mainframe computer dates to 2001, so that "the wrong date will be perceived as good fun instead of bad computing" while having a full day of testing.[26]

While some used 3-digit years and 3-digit dates within that year, others chose to use the number of days since a fixed date, such as 1 January 1900.[27] Inaction was not an option, and risked major failure. Embedded systems with similar date logic were expected to malfunction and cause utilities and other crucial infrastructure to fail.

Saving space on stored dates persisted into the Unix era, with most systems storing dates in a single 32-bit word, typically as elapsed seconds from some fixed date, which causes the similar Y2K38 problem.[28]

Resulting bugs from date programming

.getYear() method problem, which depicts the year 2000 problem

Storage of a combined date and time within a fixed binary field is often considered a solution, but the possibility for software to misinterpret dates remains because such date and time representations must be relative to some known origin. Rollover of such systems is still a problem but can happen at varying dates and can fail in various ways. For example:

- Credit card systems had problems with machines that did not correctly process cards expiring in the new millennium, and with customers being charged incorrect compound interest.[29] In 1997, credit cards with year 2000 expiration dates repeatedly crashed an upscale grocer's 10 cash registers, which led to the first Y2K-related lawsuit.[30]

- The Microsoft Excel spreadsheet program had a very elementary Y2K problem: Excel (in both Windows and Mac versions, when they are set to start at 1900) incorrectly set the year 1900 as a leap year for compatibility with Lotus 1-2-3.[31] In addition, the years 2100, 2200, and so on, were regarded as leap years. This bug was fixed in later versions, but since the epoch of the Excel timestamp was set to the meaningless date of 0 January 1900 in previous versions, the year 1900 is still regarded as a leap year to maintain backward compatibility.

- The C programming language's standard library's date and time handling header defines a struct type, struct tm, whose year member represents the year minus 1900. Perl's localtime and gmtime functions, derived from their C equivalents, as well as Java's Date class's getYear() method, treat the year the same way. This led to a "popular misconception" that these functions returned the year as a two-digit number.[32][33][34] Many programs written in Perl or Java, two programming languages widely used in web development, incorrectly treated this value as the last two digits of the year. On the web this was usually a harmless presentation bug, but it did cause many dynamically generated web pages to display 1 January 2000 as "1/1/19100", "1/1/100", or other variants, depending on the display format.[citation needed]

- JavaScript was changed due to concerns over the Y2K bug. As a result, the return value for years differed between versions, sometimes being a four-digit representation and sometimes a two-digit representation, which forced programmers to rewrite already working code to make sure web pages worked in all versions.[35][36]

- Older applications written for the commonly used UNIX Source Code Control System failed to handle years that began with the digit "2".

- In the Windows 3.x file manager, dates displayed as 1/1/19:0 for 1/1/2000 (because the colon is the character after "9" in the ASCII character set). An update was available.

- Some software, such as Math Blaster Episode I: In Search of Spot which only treats years as two-digit values instead of four, will give a given year as "1900", "1901", and so on, depending on the last two digits of the present year.
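Two of the display bugs in the list above can be reproduced in a short sketch. It mimics the C struct tm convention (year minus 1900) in Python. The formatting functions are hypothetical reconstructions of the kind of code that would produce "19100" and "19:0", not the actual source of any affected program.

```python
import datetime


def c_style_tm_year(date: datetime.date) -> int:
    """Mimic the struct tm convention: the year minus 1900."""
    return date.year - 1900


def buggy_concat(date: datetime.date) -> str:
    """Wrongly assumes the value is two digits and prefixes "19" as text."""
    return f"{date.month}/{date.day}/19{c_style_tm_year(date)}"


def buggy_digit_arithmetic(date: datetime.date) -> str:
    """Builds each digit by adding to the character code of "0".

    With a value of 100 the "tens digit" is 10, which lands on ":",
    the character after "9" in ASCII.
    """
    tm_year = c_style_tm_year(date)
    tens = chr(ord("0") + tm_year // 10)
    ones = chr(ord("0") + tm_year % 10)
    return f"{date.month}/{date.day}/19{tens}{ones}"


def correct(date: datetime.date) -> str:
    """Adds 1900 numerically, as the convention intends."""
    return f"{date.month}/{date.day}/{c_style_tm_year(date) + 1900}"


y2k = datetime.date(2000, 1, 1)
print(buggy_concat(y2k))            # 1/1/19100
print(buggy_digit_arithmetic(y2k))  # 1/1/19:0
print(correct(y2k))                 # 1/1/2000
```

Both buggy variants give correct output for every date up to 31 December 1999, which is why such code passed testing for years.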

Similar date bugs

4 January 1975

The date of 4 January 1975 overflowed the 12-bit field that had been used in the DECsystem-10 operating systems. There were numerous problems and crashes related to this bug while an alternative format was developed.[37]

9 September 1999

Even before 1 January 2000 arrived, there were also some worries about 9 September 1999 (albeit fewer than those generated by Y2K). Because this date could also be written in the numeric format 9/9/99, it could have conflicted with the date value 9999, frequently used to specify an unknown date. It was thus possible that database programs might act on the records containing unknown dates on that day. Data entry operators commonly entered 9999 into required fields for an unknown future date (e.g., a termination date for cable television or telephone service) in order to process computer forms using CICS software.[38] Somewhat similar to this is the end-of-file code 9999, used in older programming languages. While fears arose that some programs might unexpectedly terminate on that date, the bug was more likely to confuse computer operators than machines.

Leap years

Normally, a year is a leap year if it is evenly divisible by four. A year divisible by 100 is not a leap year in the Gregorian calendar unless it is also divisible by 400. For example, 1600 was a leap year, but 1700, 1800 and 1900 were not. Some programs may have relied on the oversimplified rule that "a year divisible by four is a leap year". This method works fine for the year 2000 (because it is a leap year), and will not become a problem until 2100, when older legacy programs will likely have long since been replaced. Other programs contained incorrect leap year logic, assuming for instance that no year divisible by 100 could be a leap year. An assessment of this leap year problem including a number of real-life code fragments appeared in 1998.[39] For information on why century years are treated differently, see Gregorian calendar.
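The three rules described above can be compared side by side. This is an illustrative sketch; the function names are made up for the example, and the standard library's calendar.isleap is used only as a cross-check.

```python
import calendar


def is_leap_gregorian(year: int) -> bool:
    """Full Gregorian rule: divisible by 4, except centuries not divisible by 400."""
    return year % 4 == 0 and (year % 100 != 0 or year % 400 == 0)


def is_leap_divisible_by_four(year: int) -> bool:
    """Oversimplified rule: right for 2000, wrong for 1900 and 2100."""
    return year % 4 == 0


def is_leap_no_century(year: int) -> bool:
    """Incorrect rule that rejects every century year, including 2000."""
    return year % 4 == 0 and year % 100 != 0


for year in (1900, 2000, 2100):
    print(
        year,
        is_leap_gregorian(year),
        is_leap_divisible_by_four(year),
        is_leap_no_century(year),
    )
# 1900 False True False
# 2000 True True False
# 2100 False True False

# Cross-check the full rule against the standard library.
assert all(is_leap_gregorian(y) == calendar.isleap(y) for y in range(1600, 2401))
```

Only the third rule fails in 2000 itself, which matches the software that treated 2000 as a 365-day year.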

Year 2010 problem

Some systems had problems once the year rolled over to 2010. This was dubbed by some in the media as the "Y2K+10" or "Y2.01K" problem.[40]

The main source of problems was confusion between hexadecimal number encoding and binary-coded decimal encodings of numbers. Both hexadecimal and BCD encode the numbers 0–9 as 0x0–0x9. BCD encodes the number 10 as 0x10, while hexadecimal encodes the number 10 as 0x0A; 0x10 interpreted as a hexadecimal encoding represents the number 16.

For example, because the SMS protocol uses BCD for dates, some mobile phone software incorrectly reported dates of SMSes as 2016 instead of 2010. Windows Mobile is the first software reported to have been affected by this glitch; in some cases WM6 changes the date of any incoming SMS message sent after 1 January 2010 from the year 2010 to 2016.[41][42]
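The confusion can be shown with a single byte holding a two-digit year. This is a simplified sketch of the encoding mismatch only; it does not reproduce the actual SMS wire format, and the function names are illustrative.

```python
def to_bcd(value: int) -> int:
    """Encode 0..99 as one BCD byte: tens digit in the high nibble, ones in the low."""
    if not 0 <= value <= 99:
        raise ValueError("value must be between 0 and 99")
    return ((value // 10) << 4) | (value % 10)


def from_bcd(byte: int) -> int:
    """Decode a BCD byte correctly."""
    return (byte >> 4) * 10 + (byte & 0x0F)


def misread_as_binary(byte: int) -> int:
    """Buggy decoder: treats the BCD byte as a plain binary number."""
    return byte


for yy in (9, 10):
    encoded = to_bcd(yy)
    print(
        f"year {2000 + yy}: byte 0x{encoded:02X}, "
        f"correct {2000 + from_bcd(encoded)}, "
        f"misread {2000 + misread_as_binary(encoded)}"
    )
# year 2009: byte 0x09, correct 2009, misread 2009
# year 2010: byte 0x10, correct 2010, misread 2016
```

Because the two encodings agree for 0 through 9, a decoder with this mistake would have worked for every year from 2000 to 2009.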

Other systems affected include EFTPOS terminals,[43] and the PlayStation 3 (except the Slim model).[44]

The most important occurrences of such a glitch were in Germany, where up to 20 million bank cards became unusable, and with Citibank Belgium, whose Digipass customer identification chips failed.[45]

Year 2022 problem

When the year 2022 began, many systems using 32-bit integers encountered problems, which are now collectively known as the Y2K22 bug. The maximum value of a signed 32-bit integer, as used in many computer systems, is 2147483647. Systems using an integer to represent a 10-character date-based field, where the leftmost two characters are the 2-digit year, ran into an issue on 1 January 2022 when the leftmost characters needed to be '22', i.e. values from 2200000001 needed to be represented.

Microsoft Exchange Server was one of the more significant systems affected by the Y2K22 bug. The problem caused emails to be stuck on transport queues on Exchange Server 2016 and Exchange Server 2019, reporting the following error:

The FIP-FS "Microsoft" Scan Engine failed to load. PID: 23092, Error Code: 0x80004005. Error Description: Can't convert "2201010001" to long.[46]
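A minimal sketch of the overflow follows. The field layout (two-digit year, month, day, then a four-digit sequence number) is an assumption inferred from the value in the error message, and both function names are hypothetical.

```python
INT32_MAX = 2**31 - 1  # 2147483647
INT32_MIN = -(2**31)


def version_stamp(yy: int, mm: int, dd: int, seq: int) -> str:
    """Build a 10-character stamp: YYMMDD followed by a 4-digit sequence (assumed layout)."""
    return f"{yy:02d}{mm:02d}{dd:02d}{seq:04d}"


def to_int32(text: str) -> int:
    """Parse decimal text, rejecting values a signed 32-bit integer cannot hold."""
    value = int(text)
    if not INT32_MIN <= value <= INT32_MAX:
        raise OverflowError(f"cannot convert {text!r} to a signed 32-bit integer")
    return value


print(to_int32(version_stamp(21, 12, 31, 1)))  # 2112310001 fits
try:
    to_int32(version_stamp(22, 1, 1, 1))       # 2201010001 does not
except OverflowError as err:
    print(err)
```

Any stamp beginning with 21 and a valid month stays below 2147483647, so the scheme worked through 31 December 2021 and failed with the first stamp of 2022.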

Year 2038 problem

Many systems use Unix time and store it in a signed 32-bit integer. This data type is only capable of representing integers between −(2^31) and (2^31)−1, treated as the number of seconds since the epoch at 1 January 1970 at 00:00:00 UTC. These systems can only represent times between 13 December 1901 at 20:45:52 UTC and 19 January 2038 at 03:14:07 UTC. If these systems are not updated and fixed, then dates all across the world that rely on Unix time will wrongfully display the year as 1901 beginning at 03:14:08 UTC on 19 January 2038.[citation needed]
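The limits quoted above can be checked with a short calculation. The sketch simulates 32-bit two's-complement wraparound in Python, whose own integers do not overflow; wrap_int32 is an illustrative helper, not a library function.

```python
from datetime import datetime, timedelta, timezone

EPOCH = datetime(1970, 1, 1, tzinfo=timezone.utc)
INT32_MIN = -(2**31)
INT32_MAX = 2**31 - 1


def from_unix(seconds: int) -> datetime:
    """Convert seconds since the Unix epoch to a UTC datetime."""
    return EPOCH + timedelta(seconds=seconds)


def wrap_int32(value: int) -> int:
    """Simulate two's-complement wraparound of a signed 32-bit integer."""
    return (value + 2**31) % 2**32 - 2**31


earliest = from_unix(INT32_MIN)
latest = from_unix(INT32_MAX)
after_rollover = from_unix(wrap_int32(INT32_MAX + 1))

print(earliest)        # 1901-12-13 20:45:52+00:00
print(latest)          # 2038-01-19 03:14:07+00:00
print(after_rollover)  # 1901-12-13 20:45:52+00:00
```

One second past the maximum, the counter wraps to its most negative value, which is why an unpatched system would jump from 2038 back to 1901.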

Programming solutions

Several very different approaches were used to solve the year 2000 problem in legacy systems.

- Date expansion

- Two-digit years were expanded to include the century (becoming four-digit years) in programs, files, and databases. This was considered the "purest" solution, resulting in unambiguous dates that are permanent and easy to maintain. This method was costly, requiring massive testing and conversion efforts, and usually affecting entire systems.

- Date windowing

- Two-digit years were retained, and programs determined the century value only when needed for particular functions, such as date comparisons and calculations. (The century "window" refers to the 100-year period to which a date belongs.) This technique, which required installing small patches of code into programs, was simpler to test and implement than date expansion, thus much less costly. While not a permanent solution, windowing fixes were usually designed to work for many decades. This was thought acceptable, as older legacy systems tend to eventually get replaced by newer technology.[47]

- Date compression

- Dates can be compressed into binary 14-bit numbers. This allows retention of data structure alignment, using an integer value for years. Such a scheme is capable of representing 16384 different years; the exact scheme varies by the selection of epoch.

- Date re-partitioning

- In legacy databases whose size could not be economically changed, six-digit year/month/day codes were converted to three-digit years (with 1999 represented as 099 and 2001 represented as 101, etc.) and three-digit days (ordinal date in year). Only input and output instructions for the date fields had to be modified, but most other date operations and whole record operations required no change. This delays the eventual roll-over problem to the end of the year 2899.

- Software kits

- Software kits, such as those listed in CNN.com's Top 10 Y2K fixes for your PC:[48] ("most ... free") which was topped by the $50 Millennium Bug Kit.[49]

- Real Time Clock Upgrades

- One unique solution found prominence. While other fixes worked at the BIOS level as TSRs (Terminate and Stay Resident), intercepting BIOS calls, Y2000RTC was the only product that worked as a device driver and replaced the functionality of the faulty RTC with a compliant equivalent. This driver was rolled out in the years before the 1999/2000 deadline onto millions of PCs.

- Bridge programs

- Date servers where Call statements are used to access, add or update date fields.[50][51][52]
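Date windowing and date re-partitioning, as described in the list above, can be sketched as follows. The pivot value of 50 and all function names are assumptions chosen for illustration; real systems picked their own windows and field layouts.

```python
import datetime


def window_year(yy: int, pivot: int = 50) -> int:
    """Expand a two-digit year with a fixed century window.

    Values below the pivot are read as 20yy, the rest as 19yy.
    The pivot of 50 is a hypothetical choice.
    """
    if not 0 <= yy <= 99:
        raise ValueError("two-digit year must be between 0 and 99")
    return 2000 + yy if yy < pivot else 1900 + yy


def repartition(date: datetime.date) -> str:
    """Pack a date into six digits: 3-digit year offset from 1900, then 3-digit ordinal day."""
    return f"{date.year - 1900:03d}{date.timetuple().tm_yday:03d}"


def unpartition(code: str) -> datetime.date:
    """Recover the date from a six-digit re-partitioned code."""
    year = 1900 + int(code[:3])
    day_of_year = int(code[3:])
    return datetime.date(year, 1, 1) + datetime.timedelta(days=day_of_year - 1)


print(window_year(99), window_year(0))             # 1999 2000
print(repartition(datetime.date(1999, 12, 31)))    # 099365
print(repartition(datetime.date(2001, 1, 1)))      # 101001
print(unpartition("100366"))                       # 2000-12-31
```

The windowed form still fails once real dates fall outside the chosen 100-year span, while the re-partitioned form keeps the six-digit field width and runs out only after year offset 999, that is, at the end of 2899.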

Documented errors

Before 2000

- In late 1998, Commonwealth Edison reported that a computer upgrade intended to prevent the Y2K glitch caused them to send the village of Oswego, Illinois an erroneous electric bill for $7 million.[53]

- On 1 January 1999, taxi meters in Singapore stopped working, while in Sweden, incorrect taxi fares were given.[54]

- At midnight on 1 January 1999, at three airports in Sweden, computers used by police to generate temporary passports stopped working.[55]

- On 8 February 1999, while testing Y2K compliance in a computer system monitoring nuclear core rods at Peach Bottom Nuclear Generating Station, Pennsylvania, instead of resetting the time on the external computer meant to simulate the date rollover a technician accidentally changed the time on the operation systems computer. This computer had not yet been upgraded, and the date change caused all the computers at the station to crash. It took approximately seven hours to restore all normal functions, during which time workers had to use obsolete manual equipment to monitor plant operations.[53]

- In November 1999, approximately 500 residents in Philadelphia received jury duty summonses for dates in 1900.[56]

- In December 1999, in the United Kingdom, a software upgrade intended to make computers Y2K compliant prevented social services in Bedfordshire from finding if anyone in their care was over 100 years old, since computers failed to recognize the dates of birth being searched.[57][58]

- In late December 1999, Telecom Italia (now Gruppo TIM), Italy's largest telecom company, sent a bill for January and February 1900. The company stated this was a one-time error and that it had recently ensured its systems would be compatible with the year rollover.[59][60]

- On 28 December 1999, 10,000 card swipe machines issued by HSBC and manufactured by Racal stopped processing credit and debit card transactions.[18] This was limited to machines in the United Kingdom, and was the result of the machines being designed to ensure transactions had been completed within four business days; from 28 to 31 December they interpreted the future dates to be in the year 1900.[61] Stores with these machines relied on paper transactions until they started working again on 1 January.[62]

- On 31 December 1999, at 7:00 pm EST, as a direct result of a patch intended to prevent the Y2K glitch, computers at a ground control station in Fort Belvoir, Virginia crashed and ceased processing information from five spy satellites, including three KH-11 satellites. The military implemented a contingency plan within 3 hours by diverting their feeds and manually decoding the scrambled information, from which they were able to produce a limited dataset. All normal functionality was restored at 11:45 pm on 2 January 2000.[63][64][65]

On 1 January 2000

Problems that occurred on 1 January 2000 were generally regarded as minor.[66] Consequences did not always result exactly at midnight. Some programs were not active at that moment and problems would only show up when they were invoked. Not all recorded problems were directly caused by Y2K programming; minor technological glitches occur on a regular basis.

Reported problems include:

- In Australia, bus ticket validation machines in two states failed to operate.[66]

- In Japan:

- machines in 13 train stations stopped dispensing tickets for a short time.[67]

- in Ishikawa, the Shika Nuclear Power Plant reported that radiation monitoring equipment failed at a few seconds after midnight. Officials said there was no risk to the public, and no excess radiation was found at the plant.[68][69]

- at two minutes past midnight, the telecommunications carrier Osaka Media Port found date management mistakes in their network. A spokesman said they had resolved the issue by 02:43 and that it did not interfere with operations.[70]

- NTT Mobile Communications Network (NTT Docomo), Japan's largest cellular operator, reported that some models of mobile telephones were deleting new messages received, rather than the older messages, as the memory filled up.[70]

- Fifteen securities companies discovered glitches in programs used for trading. Officials said that fourteen of them resolved all issues within a day.[71]

- In South Korea:

- at midnight, 902 ondol heating systems and water heating failed at an apartment building near Seoul; the ondol systems were down for 19 hours and would only work when manually controlled, while the water heating took 24 hours to restart.[72]

- two hospitals in Gyeonggi Province reported malfunctions with equipment measuring bone marrow and patient intake forms, with one accidentally registering a newborn as having been born in 1900, and four people in the city of Daegu received medical bills with dates in 1900.[72][73][74]

- a court in Suwon sent out notifications containing a trial date for 4 January 1900.[74]

- a video store in Gwangju accidentally generated a late fee of approximately 8 million won (approximately US$7,000) because the store's computer determined a tape rental to be 100 years overdue. South Korean authorities stated the computer was a model anticipated to be incompatible with the year rollover, and had not undergone the software upgrades necessary to make it compliant.[71]

- Korea University sent graduation certificates dated 13 January 1900.[71]

- In Hong Kong, police breathalyzers failed at midnight.[75]

- In Jiangsu, China, taxi meters failed at midnight.[76]

- In Egypt, three dialysis machines briefly failed.[67]

- In Greece, approximately 30,000 cash registers, amounting to around 10% of the country's total, printed receipts with dates in 1900.[77]

- In Denmark, the first baby born on 1 January was recorded as being 100 years old.[78]

- In France, the national weather forecasting service, Météo-France, said a Y2K bug made the date on a webpage show a map with Saturday's weather forecast as "01/01/19100".[66] Additionally, the government reported that a Y2K glitch rendered one of their Syracuse satellite systems incapable of recognizing onboard malfunctions.[72][79]

- In Germany:

- at the Deutsche Oper Berlin, the payroll system interpreted the new year to be 1900 and determined the ages of employees' children by the last two digits of their years of birth, causing it to wrongly withhold government childcare subsidies in paychecks. To reinstate the subsidies, accountants had to reset the operating system's year to 1999.[80]

- a bank accidentally transferred 12 million Deutsche Marks (equivalent to $6.2 million) to a customer and presented a statement with the date 30 December 1899. The bank quickly fixed the incorrect transfer.[78][81]

- In Italy, courthouse computers in Venice and Naples showed an upcoming release date for some prisoners as 10 January 1900, while other inmates wrongly showed up as having 100 additional years on their sentences.[76][75]

- In Mali, a program for tracking trains throughout the country failed.[82]

- In Norway, a day care center for kindergarteners in Oslo offered a spot to a 105-year-old woman because the citizen's registry only showed the last two digits of citizens' years of birth.[83]

- In Spain, a worker received a notice for an industrial tribunal in Murcia which listed the event date as 3 February 1900.[66]

- In Sweden, the main hospital in Uppsala, a hospital in Lund, and two regional hospitals in Karlstad and Linköping reported that machines used for reading electrocardiogram information failed to operate, although the hospitals stated it had no effect on patient health.[72][84]

- In Sheffield, United Kingdom, a Y2K bug that was not discovered and fixed until 24 May caused computers to miscalculate the ages of pregnant mothers, which led to 154 patients receiving incorrect risk assessments for having a child with Down syndrome. As a direct result two abortions were carried out, and four babies with Down syndrome were also born to mothers who had been told they were in the low-risk group.[85]

- In Brazil, at the Port of Santos, computers which had been upgraded in July 1999 to be Y2K compliant could not read three-year customs registrations generated in their previous system once the year rolled over. Santos said this affected registrations from before June 1999 that companies had not updated, which Santos estimated was approximately 20,000, and that when the problem became apparent on 10 January they were able to fix individual registrations, "in a matter of minutes".[86] A computer at Viracopos International Airport in São Paulo state also experienced this glitch, which temporarily halted cargo unloading.[86]

- In Jamaica, in the Kingston and St. Andrew Corporation, 8 computerized traffic lights at major intersections stopped working. Officials stated these lights were part of a set of 35 traffic lights known to be Y2K non-compliant, and that all 35 were already slated for replacement.[87]

- In the United States:

- the US Naval Observatory, which runs the master clock that keeps the country's official time, gave the date on its website as 1 Jan 19100.[88]

- the Bureau of Alcohol, Tobacco, Firearms and Explosives could not register new firearms dealers for 5 days because their computers failed to recognize dates on applications.[89][90]

- 150 Delaware Lottery racino slot machines stopped working.[66]

- In New York, a video store accidentally generated a $91,250 late fee because the store computer determined a tape rental was 100 years overdue.[91]

- In Tennessee, the Y-12 National Security Complex stated that a Y2K glitch caused an unspecified malfunction in a system for determining the weight and composition of nuclear substances at a nuclear weapons plant, although the United States Department of Energy stated they were still able to keep track of all material. It was resolved within three hours, no one at the plant was injured, and the plant continued carrying out its normal functions.[91][92]

- In Chicago, for one day the Chicago Federal Reserve Bank could not transfer $700,000 from tax revenue; the problem was fixed the following day. Additionally, another bank in Chicago could not handle electronic Medicare payments until January 6, during which time the bank had to rely on sending processed claims on diskettes.[93]

- In New Mexico, the New Mexico Motor Vehicle Division was temporarily unable to issue new driver's licenses.[94]

- The campaign website for United States presidential candidate Al Gore gave the date as 3 January 19100 for a short time.[94]

- Godiva Chocolatier reported that cash registers in its American outlets failed to operate. They first became aware of and determined the source of the problem on 2 January, and immediately began distributing a patch. A spokesman reported that they restored all functionality to most of the affected registers by the end of that day and had fixed the rest by noon on 3 January.[95][96]

- The credit card companies MasterCard and Visa reported that, as a direct result of the Y2K glitch, for weeks after the year rollover a small percentage of customers were being charged multiple times for transactions.[97]

- Microsoft reported that, after the year rolled over, Hotmail e-mails sent in October 1999 or earlier showed up as having been sent in 2099, although this did not affect the e-mail's contents or the ability to send and receive e-mails.[98]

After January 2000

On 29 February and 1 March 2000

Problems were reported on 29 February 2000, Y2K's first leap year day, and 1 March 2000. These were mostly minor.[99][100][101]

- In New Zealand, an estimated 4,000 electronic terminals could not properly authenticate transactions.

- In Japan, around five percent of post office cash dispensers failed to work, although it was unclear if this was the result of the Y2K glitch. In addition, six observatories failed to recognize 29 February, over 20 seismographs incorrectly interpreted the date 29 February to be 1 March, and data from 43 weather bureau computers that had not been updated for compliance was corrupted, causing them to release inaccurate readings on 1 March.

- In Singapore, on 29 February subway terminals would not accept some passenger cards.

- In Bulgaria, police documents were issued with expiration dates of 29 February 2005 and 29 February 2010 (which are not leap years) and the police computer system defaulted to 1900.

- In Canada, on 29 February a program for tax collecting and information in the city of Montreal interpreted the date to be 1 March 1900; although it remained possible to pay taxes, computers miscalculated interest rates for delinquent taxes and residents could not access tax bills or property evaluations. Although it was the day before taxes were due, authorities had to shut down the city's tax system entirely to fix the glitch.[102][103]

- In the United States, on 29 February the archiving system of the Coast Guard's message processing system was affected.

- At Reagan National Airport, on 29 February a computer program for curbside baggage handling initially failed to recognize the date, forcing passengers to use standard check-in stations and causing significant delays.[102]

- At Offutt Air Force Base south of Omaha, Nebraska, on 29 February records of aircraft maintenance and parts could not be accessed or updated by computer. Workers continued normal operations and relied on paper records for the day.

On 31 December 2000 or 1 January 2001

Some software did not correctly recognize 2000 as a leap year, and so worked on the basis of the year having 365 days. On the last day of 2000 (day 366) and the first day of 2001, these systems exhibited various errors. Some computers also treated the new year 2001 as 1901, causing errors. These were generally minor.

- The Swedish bank Nordbanken reported that its online and physical banking systems went down five times between 27 December 2000 and 3 January 2001, which was believed to be due to the Y2K glitch.[104]

- In Norway, on 31 December 2000, the Norwegian State Railways reported that all 29 of its new Signatur trains failed to run because their onboard computers considered the date invalid, causing some delays. As an interim measure, engineers restarted the trains by resetting their clocks back by a month and used older trains to cover some routes.[104][105][106]

- In Hungary, computers at over 1000 drug stores stopped working on 1 January 2001 because they did not recognize the new year as a valid date.[107]

- In South Africa, on 1 January 2001 computers at the First National Bank interpreted the new year to be 1901, affecting approximately 16,000 transactions and causing customers to be charged incorrect interest rates on credit cards. First National Bank first became aware of the problem on 4 January and fixed it the same day.[108]

- A large number of cash registers at the convenience store chain 7-Eleven stopped working for card transactions on 1 January 2001 because they interpreted the new year to be 1901, despite not having had any prior glitches. 7-Eleven reported the registers had been restored to complete functionality within two days.[104]

- In Connecticut, in early January the Connecticut Department of Motor Vehicles sent duplicate motor vehicle tax bills for vehicles that had their registrations renewed between 2 October 1999 and 30 November 1999, affecting 23,000 residents. A spokesman stated the Y2K glitch caused these vehicles to be double-entered in their system.[109]

- In Multnomah County, Oregon, in early January approximately 3,000 residents received jury duty summonses for dates in 1901. Because courthouse employees entered summons dates with two-digit years, they had not noticed that the computer had rolled the year over incorrectly.[104]
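The leap-year failures described above come down to the Gregorian rule: a year divisible by 4 is a leap year, except that century years must also be divisible by 400. The Python sketch below contrasts the full rule with a hypothetical faulty variant that omits the 400-year exception. The function names are illustrative and are not taken from any affected system.

```python
def is_leap_gregorian(year: int) -> bool:
    """Full Gregorian rule: divisible by 4, except centuries not divisible by 400."""
    return year % 4 == 0 and (year % 100 != 0 or year % 400 == 0)


def is_leap_without_400_rule(year: int) -> bool:
    """Hypothetical faulty rule: every century year is treated as a common year."""
    return year % 4 == 0 and year % 100 != 0


def days_in_year(year: int, leap_rule=is_leap_gregorian) -> int:
    """Number of days in `year` according to the given leap-year rule."""
    return 366 if leap_rule(year) else 365


if __name__ == "__main__":
    print(days_in_year(2000))                            # full rule
    print(days_in_year(2000, is_leap_without_400_rule))  # faulty rule
```

Under the faulty rule the year 2000 has only 365 days, so both 29 February and day 366 (31 December) fall outside the range such a system accepts.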

Since 2000

Since 2000, various issues have occurred due to errors involving overflows. An issue with time tagging led to the loss of the NASA Deep Impact spacecraft.[110]

Some software used a process called date windowing to fix the issue by interpreting years 00–19 as 2000–2019 and 20–99 as 1920–1999. As a result, a new wave of problems started appearing in 2020, including parking meters in New York City refusing to accept credit cards, issues with Novitus point of sale units, and some utility companies printing bills listing the year 1920. The video game WWE 2K20 also began crashing when the year rolled over, although a patch was distributed later that day.[111]
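As a minimal sketch of the windowing scheme just described, the following Python function expands a two-digit year using a fixed pivot of 20, matching the 00–19 and 20–99 split given above. The function name and the `pivot` parameter are assumptions made for illustration; real systems chose different pivots.

```python
def expand_two_digit_year(yy: int, pivot: int = 20) -> int:
    """Expand a two-digit year with a fixed window.

    Values below `pivot` map to 20xx; values from `pivot` up map to 19xx.
    """
    if not 0 <= yy <= 99:
        raise ValueError(f"two-digit year out of range: {yy}")
    return 2000 + yy if yy < pivot else 1900 + yy


if __name__ == "__main__":
    for yy in (0, 19, 20, 99):
        print(f"{yy:02d} -> {expand_two_digit_year(yy)}")
```

With this pivot, a two-digit "20" is read as 1920 rather than 2020, which is the failure mode that surfaced when the window ran out.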

The iPhone is not completely immune to the Millennium Bug either: it struggles with birthdate changes around Y2K.[112]

Government responses

Bulgaria

Although the Bulgarian national identification number allocates only two digits for the birth year, the year 1900 problem and subsequently the Y2K problem were addressed by the use of unused values above 12 in the month range. For all persons born before 1900, the month is stored as the calendar month plus 20, and for all persons born in or after 2000, the month is stored as the calendar month plus 40.[113]
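The month-offset scheme can be sketched in Python as follows. The function takes the two-digit year and the stored month field and returns the full year and calendar month. The function name is hypothetical, and the sketch assumes that "before 1900" means the 1800s and that "in or after 2000" means the 2000s.

```python
from typing import Tuple


def decode_year_and_month(yy: int, stored_month: int) -> Tuple[int, int]:
    """Recover (full_year, calendar_month) from a two-digit year and stored month.

    Stored month 1-12  -> born 1900-1999
    Stored month 21-32 -> born before 1900 (assumed here to be 1800-1899)
    Stored month 41-52 -> born in or after 2000 (assumed here to be 2000-2099)
    """
    if not 0 <= yy <= 99:
        raise ValueError(f"two-digit year out of range: {yy}")
    if 1 <= stored_month <= 12:
        return 1900 + yy, stored_month
    if 21 <= stored_month <= 32:
        return 1800 + yy, stored_month - 20
    if 41 <= stored_month <= 52:
        return 2000 + yy, stored_month - 40
    raise ValueError(f"invalid stored month: {stored_month}")


if __name__ == "__main__":
    print(decode_year_and_month(75, 3))   # 20th-century birth
    print(decode_year_and_month(95, 23))  # month offset by 20
    print(decode_year_and_month(5, 41))   # month offset by 40
```

Because the century is carried in a field that previously had unused values, the overall length of the number did not need to change.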

Canada

Canadian Prime Minister Jean Chrétien's most important cabinet ministers were ordered to remain in the capital, Ottawa, and gathered at 24 Sussex Drive, the prime minister's residence, to watch the clock.[7] In addition, 13,000 Canadian troops were put on standby.[7]

Netherlands

The Dutch Government promoted Y2K Information Sharing and Analysis Centers (ISACs) to share readiness between industries, without threat of antitrust violations or liability based on information shared.[citation needed]

Norway and Finland

Norway and Finland changed their national identification numbers to indicate a person's century of birth. In both countries, the birth year was historically indicated by two digits only. This numbering system had already given rise to a similar problem, the "Year 1900 problem", which arose due to problems distinguishing between people born in the 19th and 20th centuries. Y2K fears thus drew attention to an older issue while prompting a solution to a new one. In Finland, the problem was solved by replacing the hyphen ("-") in the number with the letter "A" for people born in the 21st century (for people born before 1900, the sign was already "+").[114] In Norway, the range of the individual numbers following the birth date was changed from 0–499 to 500–999 for people born in the new century.[citation needed]
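The Finnish century sign can be illustrated with a short Python sketch that combines the two-digit year with the sign character. The mapping and function name are assumptions for illustration: the text above only says that "+" marks births before 1900, which is taken here to mean the 1800s.

```python
# Century implied by the sign character between the birth date and the
# individual number. "+" is assumed to mean the 1800s.
CENTURY_BY_SIGN = {"+": 1800, "-": 1900, "A": 2000}


def finnish_birth_year(yy: int, century_sign: str) -> int:
    """Return the four-digit birth year for a two-digit year and century sign."""
    if not 0 <= yy <= 99:
        raise ValueError(f"two-digit year out of range: {yy}")
    if century_sign not in CENTURY_BY_SIGN:
        raise ValueError(f"unknown century sign: {century_sign!r}")
    return CENTURY_BY_SIGN[century_sign] + yy


if __name__ == "__main__":
    for sign in ("+", "-", "A"):
        print(sign, finnish_birth_year(3, sign))
```

The same two digits, "03", resolve to three different birth years depending only on the sign character.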

Romania

Romania also changed its national identification number in response to the Y2K problem, due to the birth year being represented by only two digits. Before 2000, the first digit, which shows the person's sex, was 1 for males and 2 for females. Individuals born since 1 January 2000 have a number starting with 5 if male or 6 if female.[citation needed]
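A minimal Python sketch of this first-digit scheme follows. It covers only the four values mentioned above (1, 2, 5 and 6) and rejects everything else; the names are hypothetical, and mapping 1 and 2 to the 1900s is an assumption based on the "before 2000" wording.

```python
# First digit -> (sex, century). Only the values described in the text are
# included; 1 and 2 are assumed to denote births in 1900-1999.
FIRST_DIGIT_MEANING = {
    1: ("male", 1900),
    2: ("female", 1900),
    5: ("male", 2000),
    6: ("female", 2000),
}


def decode_sex_and_birth_year(first_digit: int, yy: int):
    """Return (sex, four_digit_year) from the leading digit and two-digit year."""
    if not 0 <= yy <= 99:
        raise ValueError(f"two-digit year out of range: {yy}")
    if first_digit not in FIRST_DIGIT_MEANING:
        raise ValueError(f"first digit not covered by this sketch: {first_digit}")
    sex, century = FIRST_DIGIT_MEANING[first_digit]
    return sex, century + yy


if __name__ == "__main__":
    print(decode_sex_and_birth_year(2, 85))
    print(decode_sex_and_birth_year(5, 4))
```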

Uganda

The Ugandan government responded to the Y2K threat by setting up a Y2K Task Force.[115] In August 1999, an independent international assessment by the World Bank International Y2K Cooperation Centre found that Uganda's website was in the top category as "highly informative". This put Uganda in the "top 20" out of 107 national governments, on a par with the United States, United Kingdom, Canada, Australia and Japan, and ahead of Germany, Italy, Austria and Switzerland, which were rated as only "somewhat informative". The report said that "Countries which disclose more Y2K information will be more likely to maintain public confidence in their own countries and in the international markets."[116]

United States

In 1998, the United States government responded to the Y2K threat by passing the Year 2000 Information and Readiness Disclosure Act, which set limits on certain potential liabilities of companies with respect to disclosures about their year 2000 programs; by working with private sector counterparts to ensure readiness; and by creating internal continuity of operations plans in the event of problems.[117][118] The effort was coordinated by the President's Council on Year 2000 Conversion, headed by John Koskinen, in coordination with the Federal Emergency Management Agency (FEMA) and an interim Critical Infrastructure Protection Group within the Department of Justice.[119][120]

The US government followed a three-part approach to the problem: (1) outreach and advocacy, (2) monitoring and assessment, and (3) contingency planning and regulation.[121]

A feature of US government outreach was Y2K websites, including y2k.gov, many of which have become inaccessible in the years since 2000. Some of these websites have been archived by the National Archives and Records Administration or the Wayback Machine.[122][123]

Each federal agency had its own Y2K task force which worked with its private sector counterparts; for example, the FCC had the FCC Year 2000 Task Force.[121][124]

Most industries had contingency plans that relied upon the internet for backup communications. As no federal agency had clear authority with regard to the internet at this time (it had passed from the Department of Defense to the National Science Foundation and then to the Department of Commerce), no agency was assessing the readiness of the internet itself. Therefore, on 30 July 1999, the White House held the White House Internet Y2K Roundtable.[125]

The U.S. government also established the Center for Year 2000 Strategic Stability as a joint operation with the Russian Federation. It was a liaison operation designed to mitigate the possibility of false positive readings in each nation's nuclear attack early warning systems.[126]

International cooperation

The International Y2K Cooperation Center (IY2KCC) was established at the behest of national Y2K coordinators from over 120 countries when they met at the First Global Meeting of National Y2K Coordinators at the United Nations in December 1998.[127] IY2KCC established an office in Washington, D.C., in March 1999. Funding was provided by the World Bank, and Bruce W. McConnell was appointed as director.

IY2KCC's mission was to "promote increased strategic cooperation and action among governments, peoples, and the private sector to minimize adverse Y2K effects on the global society and economy." Activities of IY2KCC were conducted in six areas:

- National Readiness: Promoting Y2K programs worldwide

- Regional Cooperation: Promoting and supporting co-ordination within defined geographic areas

- Sector Cooperation: Promoting and supporting co-ordination within and across defined economic sectors

- Continuity and Response Cooperation: Promoting and supporting co-ordination to ensure essential services and provisions for emergency response

- Information Cooperation: Promoting and supporting international information sharing and publicity

- Facilitation and Assistance: Organizing global meetings of Y2K coordinators and identifying resources

IY2KCC closed down in March 2000.[127]

Private sector response

- The United States enacted the Year 2000 Information and Readiness Disclosure Act, which limited the liability of businesses that had properly disclosed their Y2K readiness.

- Insurance companies sold insurance policies covering failure of businesses due to Y2K problems.

- Attorneys organized and mobilized for Y2K class action lawsuits (which were not pursued).[128]

- Survivalist-related businesses (gun dealers, surplus and sporting goods) anticipated increased business in the final months of 1999 in an event known as the Y2K scare.[129]

- The Long Now Foundation, which (in their words) "seeks to promote 'slower/better' thinking and to foster creativity in the framework of the next 10,000 years", has a policy of anticipating the Year 10,000 problem by writing all years with five digits. For example, they list "01996" as their year of founding.

- While there was no one comprehensive internet Y2K effort, multiple internet trade associations and organisations banded together to form the Internet Year 2000 Campaign.[130] This effort partnered with the White House's Internet Y2K Roundtable.

The Y2K issue was a major topic of discussion in the late 1990s and as such showed up in much popular media. A number of "Y2K disaster" books were published such as Deadline Y2K by Mark Joseph. Movies such as Y2K: Year to Kill capitalized on the currency of Y2K, as did numerous TV shows, comic strips, and computer games.

Fringe group responses

A variety of fringe groups and individuals, such as those within some fundamentalist religious organizations, survivalists, cults, anti-social movements, self-sufficiency enthusiasts and those attracted to conspiracy theories, called attention to Y2K fears and claimed that they provided evidence for their respective theories. End-of-the-world scenarios and apocalyptic themes were common in their communication.

Interest in the survivalist movement peaked in 1999 in its second wave for that decade, triggered by Y2K fears. In the time before extensive efforts were made to rewrite computer programming codes to mitigate the possible impacts, some writers such as Gary North, Ed Yourdon, James Howard Kunstler,[131] and Ed Yardeni anticipated widespread power outages, food and gasoline shortages, and other emergencies. North and others raised the alarm because they thought Y2K code fixes were not being made quickly enough. While a range of authors responded to this wave of concern, two of the most survival-focused texts to emerge were Boston on Y2K (1998) by Kenneth W. Royce and Mike Oehler's The Hippy Survival Guide to Y2K.

Y2K also appeared in the communication of some fundamentalist and charismatic Christian leaders throughout the Western world, particularly in North America and Australia. Their promotion of the perceived risks of Y2K was combined with end times thinking and apocalyptic prophecies, allegedly in an attempt to influence followers.[132] The New York Times reported in late 1999, "The Rev. Jerry Falwell suggested that Y2K would be the confirmation of Christian prophecy – God's instrument to shake this nation, to humble this nation. The Y2K crisis might incite a worldwide revival that would lead to the rapture of the church. Along with many survivalists, Mr. Falwell advised stocking up on food and guns".[133] Adherents in these movements were encouraged to engage in food hoarding and take lessons in self-sufficiency, and the more extreme elements planned for a total collapse of modern society. The Chicago Tribune reported that some large fundamentalist churches, motivated by Y2K, were the sites of flea market-like sales of paraphernalia, ranging from gold coins to wood-burning stoves, designed to help people survive a social order crisis.[134] Betsy Hart wrote in the Deseret News that many of the more extreme evangelicals used Y2K to promote a political agenda in which the downfall of the government was a desired outcome in order to usher in Christ's reign. She also said, "the cold truth is that preaching chaos is profitable and calm doesn't sell many tapes or books".[135] Y2K fears were described dramatically by New Zealand-based Christian prophetic author and preacher Barry Smith in his publication "I Spy with my Little Eye," where he dedicated an entire chapter to Y2K.[136] Some expected, at times through so-called prophecies, that Y2K would be the beginning of a worldwide Christian revival.[137]

In the aftermath, it became clear that leaders of these fringe groups and churches had manufactured fears of apocalyptic outcomes to manipulate their followers into dramatic scenes of mass repentance or renewed commitment to their groups, as well as urging additional giving of funds. The Baltimore Sun claimed this in their article "Apocalypse Now – Y2K spurs fears", noting the increased call for repentance in the populace in order to avoid God's wrath.[138] Christian leader Col Stringer wrote, "Fear-creating writers sold over 45 million books citing every conceivable catastrophe from civil war, planes dropping from the sky to the end of the civilized world as we know it. Reputable preachers were advocating food storage and a 'head for the caves' mentality. No banks failed, no planes crashed, no wars or civil war started. And yet not one of these prophets of doom has ever apologized for their scare-mongering tactics."[137] Critics, such as Christian journalist Rob Boston in his article "False Prophets, Real Profits", argue that some prominent North American Christian ministries and leaders generated huge personal and corporate profits through sales of Y2K preparation kits, generators, survival guides, published prophecies and a wide range of other associated merchandise.[132] However, Pat Robertson, founder of the global Christian Broadcasting Network, gave equal time to pessimists and optimists alike and granted that people should at least expect "serious disruptions".[139]

Cost

The total cost of the work done in preparation for Y2K likely surpassed US$300 billion ($548 billion as of May 2025, once inflation is taken into account).[140][141] IDC calculated that the US spent an estimated $134 billion ($245 billion) preparing for Y2K, and another $13 billion ($24 billion) fixing problems in 2000 and 2001. Worldwide, $308 billion ($562 billion) was estimated to have been spent on Y2K remediation.[142]

Remedial work organization

Remedial work was driven by customer demand for solutions.[143] Software suppliers, mindful of their potential legal liability,[128] responded with remedial effort. Software subcontractors were required to certify that their software components were free of date-related problems, which drove further work down the supply chain.

By 1999, many corporations required their suppliers to certify that their software was all Y2K-compliant. Some signed after accepting merely remedial updates. Many businesses or even whole countries suffered only minor problems despite spending little effort themselves.[citation needed]

Results

There are two ways to view the events of 2000 from the perspective of its aftermath:

Supporting view

This view holds that the vast majority of problems were fixed correctly, and the money spent was at least partially justified. The situation was essentially one of preemptive alarm. Those who hold this view claim that the lack of problems at the date change reflects the completeness of the project, and that many computer applications would not have continued to function into the 21st century without correction or remediation.

Expected problems that were not seen by small businesses and small organizations were prevented by Y2K fixes embedded in routine updates to operating system and utility software[144] that were applied several years before 31 December 1999.

The extent to which larger industry and government fixes averted issues that would have had more significant impacts had they not been fixed was typically not disclosed or widely reported.[145][unreliable source?]

It has been suggested that on 11 September 2001, infrastructure in New York City (including subways, phone service, and financial transactions) was able to continue operation because of the redundant networks established in the event of Y2K bug impact[146] and the contingency plans devised by companies.[147] The terrorist attacks and the following prolonged blackout to lower Manhattan had minimal effect on global banking systems.[148] Backup systems were activated at various locations around the region, many of which had been established to deal with a possible complete failure of networks in Manhattan's Financial District on 31 December 1999.[149]

Opposing view

The contrary view asserts that there were no, or very few, critical problems to begin with. This view also asserts that there would have been only a few minor mistakes and that a "fix on failure" approach would have been the most efficient and cost-effective way to solve these problems as they occurred.

International Data Corporation estimated that the US might have wasted $40 billion.[150]

Skeptics of the need for a massive effort pointed to the absence of Y2K-related problems occurring before 1 January 2000, even though the 2000 financial year commenced in 1999 in many jurisdictions, and a wide range of forward-looking calculations involved dates in 2000 and later years. Estimates undertaken in the leadup to 2000 suggested that around 25% of all problems should have occurred before 2000.[151] Critics of large-scale remediation argued during 1999 that the absence of significant reported problems in non-compliant small firms was evidence that there had been, and would be, no serious problems needing to be fixed in any firm, and that the scale of the problem had therefore been severely overestimated.[152]

Countries such as South Korea, Italy, and Russia invested little to nothing in Y2K remediation,[133][150] yet had the same negligible Y2K problems as countries that spent enormous sums of money. Western countries anticipated such severe problems in Russia that many issued travel advisories and evacuated non-essential staff.[153]

Critics also cite the lack of Y2K-related problems in schools, many of which undertook little or no remediation effort. By 1 September 1999, only 28% of US schools had achieved compliance for mission critical systems, and a government report predicted that "Y2K failures could very well plague the computers used by schools to manage payrolls, student records, online curricula, and building safety systems".[154]

Similarly, there were few Y2K-related problems in an estimated 1.5 million small businesses that undertook no remediation effort. On 3 January 2000 (the first weekday of the year), the Small Business Administration received an estimated 40 calls from businesses with computer issues, similar to the average. None of the problems were critical.[155]

Legacy

The 2024 CrowdStrike incident, a global IT system outage, was compared to the Y2K bug by several news outlets, as its scale and impact recalled the fears that had surrounded Y2K.[156][157]

In 2022, the clocks in Honda and Acura cars from model years 2004–2012 that were equipped with a screen rolled back to 2002.[158]

See also

- Year 2038 problem: a time formatting bug in computer systems that affects the representation of times after 03:14:07 UTC on 19 January 2038

- GPS week number rollover: time keeping integer rollover caused by the design of the Global Positioning System, which occurs every 19.6 years

- 512k day: an event in 2014, involving a software limitation in network routers

- IPv4 address exhaustion, problems caused by the limited allocation size for numeric internet addresses

- ISO 8601, an international standard for representing dates and times, which mandates the use of (at least) four digits for the year

- "Da Boom", the third episode of the second season of Family Guy, featuring the Y2K bug causing a nuclear holocaust.

- "Life's a Glitch, Then You Die", a Treehouse of Horror segment from the eleventh season of The Simpsons, in which Homer forgets to make his company's computers Y2K-compliant, causing a virus to be unleashed upon the world.

- Perpetual calendar, a calendar valid for many years, including before and after 2000

- Y2K, a 1999 American made-for-television science fiction-thriller film directed by Dick Lowry

- Y2K, a 2024 American apocalyptic science fiction comedy horror film directed by Kyle Mooney

- YEAR2000, a configuration setting supported by some versions of DR-DOS to overcome Year 2000 BIOS bugs

- Millennium celebrations, a worldwide, coordinated series of events to celebrate and commemorate the end of 1999 and the start of the year 2000 in the Gregorian calendar.

Notes

- ^ Also commonly known as the year 2000 bug, Y2K problem, Y2K scare, millennium bug, Y2K bug, Y2K glitch, or Y2K error.

References

- ^ Committee on Government Reform and Oversight (26 October 1998). The Year 2000 Problem: Fourth Report by the Committee on Government Reform and Oversight, Together with Additional Views (PDF). U.S. Government Printing Office. p. 3. Archived (PDF) from the original on 2021-07-20. Retrieved 2021-06-07.

- ^ Uenuma, Francine (30 December 2019). "20 Years Later, the Y2K Bug Seems Like a Joke—Because Those Behind the Scenes Took It Seriously". Time Magazine. Archived from the original on 2021-09-30. Retrieved 2021-06-07.

- ^ "Leap Day Tuesday Last Y2K Worry". Wired. 25 February 2000. Archived from the original on 2021-04-30. Retrieved 2016-10-16.

- ^ Carrington, Damian (4 January 2000). "Was Y2K bug a boost?". BBC News. Archived from the original on 2004-04-22. Retrieved 2009-09-19.

- ^ Loeb, Zachary (30 December 2019). "The lessons of Y2K, 20 years later". The Washington Post. Archived from the original on 2020-12-02. Retrieved 2021-06-07.

- ^ a b c Eric Andrew-Gee (28 December 2019). "Y2K: The strange, true history of how Canada prepared for an apocalypse that never happened, but changed us all". The Globe and Mail. Archived from the original on 2020-01-01. Retrieved 2020-02-27.

- ^ Cory Johnson (29 December 1999). "Y2K Crier's Crisis". TheStreet. Archived from the original on 2020-02-28. Retrieved 2020-02-28.

- ^ Barnaby J. Feder (11 October 1998). "The Town Crier for the Year 2000". The New York Times. Archived from the original on 2020-04-14. Retrieved 2020-02-28.

- ^ Bolles, Spencer (19 January 1985). "Computer bugs in the year 2000". Newsgroup: net.bugs. Usenet: 820@reed.UUCP. Archived from the original on 2011-01-22. Retrieved 2019-08-15.

- ^ American RadioWorks, Y2K Notebook Problems – The Surprising Legacy of Y2K. Archived 2011-07-27 at the Wayback Machine. Retrieved 22 April 2007.

- ^ Rose, Ted (22 December 1999). "Who invented Y2K and why did it become so universally popular?". Baltimore Sun. Archived from the original on 2022-09-26. Retrieved 2022-09-26.

- ^ A web search on images for "computer memory ads 1975" returns advertisements showing pricing for 8K of memory at $990 and 64K of memory at $1495.

- ^ McCallum, John C. (2022). "Computer Memory and Data Storage". Global Change Data Lab. Archived from the original on 2023-10-04. Retrieved 2023-10-21.

- ^ Kappelman, Leon; Scott, Phil (25 November 1996). "Accrued Savings of the Year 2000 Computer Date Problem". Computerworld. Archived from the original on 2017-12-18. Retrieved 2017-02-13.

- ^ Looking at the Y2K bug, portal on CNN.com Archived 7 February 2006 at the Wayback Machine

- ^ Piskora, Beth (1 March 1997). "The Dow decimal system". The New York Post. p. 26.

- ^ a b c Presenter: Stephen Fry (3 October 2009). "In the beginning was the nerd". Archive on 4. BBC Radio 4. Archived from the original on 2009-10-13. Retrieved 2018-02-13.

- ^ Halvorson, Michael (1999). Running Microsoft Office 2000. Young, Michael J. Redmond, Wash.: Microsoft Press. ISBN 1-57231-936-4. OCLC 40174922.

- ^ Halvorson, Michael; Young, Michael (1999). Running Microsoft Office 2000 Professional. Redmond, WA: Microsoft Press. pp. xxxix. ISBN 1572319364.

As you learn about the year 2000 problem, and prepare for its consequences, there are a number of points we'd like you to consider. First, despite dire predictions, there is probably no good reason to prepare for the new millennium by holing yourself up in a mine shaft with sizable stocks of water, grain, barter goods, and ammunition. The year 2000 will not disable most computer systems, and if your personal computer was manufactured after 1996, it's likely that your hardware and systems software will require little updating or customizing.

- ^ Testimony by Alan Greenspan, ex-Chairman of the Federal Reserve before the Senate Banking Committee, 25 February 1998, ISBN 978-0-16-057997-4

- ^ "IBM 1401 Reference manual" (PDF). Archived from the original (PDF) on 2010-08-09.

- ^ "Key computer coding creator dies". The Washington Post. 25 June 2004. Archived from the original on 2017-09-19. Retrieved 2011-09-25.

- ^ Andrew-Gee, Eric (28 December 2019). "Y2K: The strange, true history of how Canada prepared for an apocalypse that never happened, but changed us all". The Globe and Mail. Archived from the original on 2020-01-01. Retrieved 2020-02-27.

- ^ R. Braden, ed. (October 1989). Requirements for Internet Hosts -- Application and Support. Network Working Group. doi:10.17487/RFC1123. STD 3. RFC 1123. Internet Standard 3. Updated by RFC 1349, 2181, 5321, 5966 and 7766.

- ^ D. Kolstedt (15 November 1997). "Helpful Year 2000 hint". CIO magazine. p. 12.

- ^ "Thinking Ahead". InformationWeek. 28 October 1996. p. 8.

extends .. the 23rd century

- ^ "Epoch Time". unixtutoria. 15 March 2019. Archived from the original on 2023-04-13. Retrieved 2023-04-13.

- ^ Thomas, Martyn (31 December 2019). "The millennium bug was real – and 20 years later we face the same threats". The Guardian. Retrieved 2024-06-08.

- ^ Patrizio, Andy (15 September 1997). "Visa Debits The Vendors". InformationWeek. p. 50.

- ^ "Microsoft Knowledge Base article 214326". Microsoft Support. 17 December 2015. Archived from the original on 2008-04-08. Retrieved 2016-10-16.

- ^ Christiansen, Tom (1 January 1999). "Y2K Compliance". Perl.com. Retrieved 2025-10-21.

- ^ "localtime - Perldoc Browser". perldoc.perl.org. Retrieved 2025-10-21.

- ^ "Date (Java Platform SE 8 )". docs.oracle.com. Retrieved 2025-10-21.

- ^ "JavaScript Reference Javascript 1.2". Sun Microsystems. Archived from the original on 2007-06-08. Retrieved 2009-06-07.

- ^ "JavaScript Reference Javascript 1.3". Sun. Archived from the original on 2009-04-20. Retrieved 2009-06-07.

- ^ Neumann, Peter G. (2 February 1987). "The RISKS Digest, Volume 4 Issue 45". The Risks Digest. 4 (45). Archived from the original on 2014-12-28. Retrieved 2014-12-28.

- ^ Stockton, J.R., "Critical and Significant Dates Archived 2015-09-07 at the Wayback Machine" Merlyn.

- ^ A. van Deursen, "The Leap Year Problem Archived 2022-05-20 at the Wayback Machine" The Year/2000 Journal 2(4):65–70, July/August 1998.

- ^ "Bank of Queensland hit by "Y2.01k" glitch". CRN. 4 January 2010. Archived from the original on 2010-03-15. Retrieved 2016-10-16.

- ^ "Windows Mobile glitch dates 2010 texts 2016". 5 January 2010. Archived from the original on 2013-01-19.

- ^ "Windows Mobile phones suffer Y2K+10 bug". 4 January 2010. Archived from the original on 2013-10-23. Retrieved 2010-01-04.

- ^ "Bank of Queensland vs Y2K – an update". 4 January 2010. Archived from the original on 2010-01-08. Retrieved 2010-01-04.

- ^ "Error: 8001050F Takes Down PlayStation Network". Gizmodo. March 2010. Archived from the original on 2020-08-09. Retrieved 2020-03-16.

- ^ "2010 Bug in Germany" (in French). RTL. 5 January 2010. Archived from the original on 2010-01-07. Retrieved 2016-10-16.

- ^ "Remember the Y2K bug? Microsoft confirms new Y2K22 issue". Sky news. Archived from the original on 2022-01-04. Retrieved 2022-01-02.

- ^ Raymond B. Howard. "The Case for Windowing: Techniques That Buy 60 Years". Year/2000 Journal (Mar/Apr 1998).

Windowing is a long-term fix that should keep legacy systems working fine until the software is redesigned...

- ^ Green, Max. "CNN - Top 10 Y2K fixes for your PC - September 22, 1999". CNN. Archived from the original on 2001-05-08.

- ^ "Millennium Bug Kit". Archived from the original on 2000-04-11.

- ^ "The Year 2000 FAQ". 5 May 1998. Archived from the original on 2021-03-08. Retrieved 2020-03-01.

- ^ Ellen Friedman; Jerry Rosenberg. "Countdown to the Millennium: Issues to Consider in the Final Year" (PDF). Archived (PDF) from the original on 2021-08-18. Retrieved 2020-03-01.

- ^ Peter Kruskopfs. "The Date Dilemma". Information Builders. Archived from the original on 1996-12-27. Retrieved 2020-03-15.

Bridge programs such as a date server are another option. These servers handle record format conversions from two to four-digit years.

- ^ a b Chandrasekaran, Rajiv (7 March 1999). "Big Glitch at Nuclear Plant Shows Perils of Y2K Tests". The Washington Post. ISSN 0190-8286. Archived from the original on 2023-03-14. Retrieved 2023-05-12.

- ^ "Y2K bug rears its ugly head". New York: CNN. 12 January 1999. Archived from the original on 2021-08-17. Retrieved 2019-12-30.

- ^ "Y2K bug strikes airports". Archived from the original on 2023-03-08. Retrieved 2023-03-08.

- ^ "Philly Not Fully Y2K-Ready, as 1900 Jury Notices Prove". 28 November 1999. Archived from the original on 2023-03-08. Retrieved 2023-03-08.

- ^ Becket, Andy (23 April 2000). "The bug that didn't bite". The Guardian. Archived from the original on 2023-03-07. Retrieved 2023-03-07.

- ^ Gibbs, Thom (19 December 2019). "The millennium bug myth, 20 years on: Why you're probably wrong about Y2K". The Telegraph. ISSN 0307-1235. Archived from the original on 2023-03-07. Retrieved 2023-03-07.

- ^ "Telecom Italia bills for 1900". Archived from the original on 2023-03-15. Retrieved 2023-03-15.

- ^ Fitzpatrick, Pat (14 November 2019). "Remember Y2K? Pat Fitzpatrick remembers when we all thought planes would fall out of the sky". Archived from the original on 2023-03-15. Retrieved 2023-03-15.

- ^ "Y2K Behind Credit Card Machine Failure". Archived from the original on 2023-02-03. Retrieved 2023-02-03.

- ^ "Millennium bug hits retailers". BBC News. 29 December 1999. Archived 2017-08-12 at the Wayback Machine.

- ^ "US satellites safe after Y2K glitch". BBC News. Archived from the original on 2021-07-01. Retrieved 2021-01-16.

- ^ "Y2K glitch hobbled top secret spy sats". United Press International. Archived from the original on 2022-09-06. Retrieved 2023-03-24.

- ^ "Report: Y2K fix disrupts U.S. spy satellites for days, not hours". CNET. 2 January 2002. Archived from the original on 2023-03-24. Retrieved 2023-03-24.

- ^ a b c d e "Minor bug problems arise". BBC News. 1 January 2000. Archived from the original on 2009-01-11. Retrieved 2017-07-08.

- ^ a b "What Y2K bug? Global computers are A-OK". Deseret. 2 January 2000. Archived from the original on 2023-05-09. Retrieved 2023-05-09.

- ^ "Japan nuclear power plants malfunction". BBC News. 31 December 1999. Archived from the original on 2021-03-22. Retrieved 2017-10-04.

- ^ "Y2K Problem Strikes Japanese Plant". Archived from the original on 2023-02-04. Retrieved 2023-02-04.

- ^ a b Martyn Williams (3 January 2000). "Computer problems hit three nuclear plants in Japan". CNN. IDG Communications. Archived from the original on 2004-12-07.

- ^ a b c "World-Wide, the Y2K Bug Had Little Bite in the End". 3 January 2000. Archived from the original on 2023-02-23. Retrieved 2023-02-23.

- ^ a b c d "Will Monday be the real Y2K day?". Archived from the original on 2023-02-07. Retrieved 2023-02-07.

- ^ "Y2K bug hits heating system in Korean apartments". 3 January 2000. Archived from the original on 2022-11-27. Retrieved 2023-02-07.

- ^ a b "S.Korea declares success against Y2K bug". Archived from the original on 2020-11-11. Retrieved 2023-02-07.

- ^ a b Allen, Frederick E. "Apocalypse Then: When Y2K Didn't Lead To The End Of Civilization". Archived from the original on 2023-03-18. Retrieved 2023-03-18.

- ^ a b Reguly, Eric. "Opinion: The Y2K bug turned out to be a non-event, Eric Reguly says". The Globe and Mail. Archived from the original on 2023-02-07. Retrieved 2023-02-07.

- ^ "30,000 Cash Registers In Greece Hit By Y2K Bug". 6 January 2000. Archived from the original on 2023-03-09. Retrieved 2023-03-09.

- ^ a b Samuel, Lawrence R. (1 June 2009). Future: A Recent History. University of Texas Press. p. 179. ISBN 978-0-292-71914-9. Archived from the original on 2023-02-03. Retrieved 2023-02-03.

- ^ "Y2K glitch knocks out satellite spying system". Flight Global. 7 March 2023. Archived from the original on 2023-10-21. Retrieved 2023-03-07.

- ^ "Y2K bug bites German opera". USA Today. Archived from the original on 2000-06-08. Retrieved 2023-02-03.

- ^ "Y2K bug blamed for 4m banking blunder". Archived from the original on 2023-03-07. Retrieved 2023-02-07.

- ^ "Y2K bug briefly affected U.S. terrorist-monitoring effort, Pentagon says". www.cnn.com. Archived from the original on 2023-04-21. Retrieved 2023-09-20.

- ^ "Y2K bug bites 105-year-old". Independent Online. 4 February 2000. Archived from the original on 2023-04-24. Retrieved 2023-04-24.

- ^ "Pentagon Reports Failure In Satellite Intelligence System". 8 March 2023. Archived from the original on 2023-03-08. Retrieved 2023-03-08.

- ^ Wainwright, Martin (13 September 2001). "NHS faces huge damages bill after millennium bug error". The Guardian. UK. Archived from the original on 2021-08-18. Retrieved 2011-09-25.

The health service is facing big compensation claims after admitting yesterday that failure to spot a millennium bug computer error led to incorrect Down's syndrome test results being sent to 154 pregnant women. ...

- ^ a b "Brazil port hassled by Y2K glitch, but no delays". Reuters. 10 January 2000. Archived from the original on 2023-03-24. Retrieved 2023-03-24.

- ^ "Y2K bug hits traffic lights". The Gleaner. 3 January 2000. Archived from the original on 2021-09-20. Retrieved 2023-05-16.

- ^ Marsha Walton; Miles O'Brien (1 January 2000). "Preparation pays off; world reports only tiny Y2K glitches". CNN. Archived from the original on 2004-12-07.

- ^ Leeds, Jeff (4 January 2000). "Year 2000 Bug Triggers Few Disruptions". Archived from the original on 2023-04-18. Retrieved 2023-04-18.

- ^ "Y2K bug briefly affected U.S. terrorist-monitoring effort, Pentagon says". CNN. 5 January 2000. Archived from the original on 2023-04-18. Retrieved 2023-04-18.

- ^ a b "Y2K Glitch Reported At Nuclear Weapons Plant". Archived from the original on 2023-01-28. Retrieved 2023-01-28.

- ^ "Y2K briefly hits nuke plant". Associated Press. 4 January 2000. Archived from the original on 2023-03-28. Retrieved 2023-03-28.

- ^ "Y2K Council reports Y2K annoyances | Computerworld". www.computerworld.com. 7 January 2000. Archived from the original on 2023-07-23. Retrieved 2023-07-23.

- ^ a b Davidson, Lee (4 January 2000). "Y2K bug squashed U.S. claims victory, ends all-day operation at command center". Deseret News. Archived from the original on 2023-06-09. Retrieved 2023-06-09.

- ^ Barr, Stephen (3 January 2000). "Y2K Chief Waiting for Market Close to Sound All-Clear". The Washington Post. ISSN 0190-8286. Archived from the original on 2022-11-22. Retrieved 2023-06-18.

- ^ Michaud, Chris (4 January 2023). "Y2K bug bites into gourmet chocolates". Independent Online. Archived from the original on 2023-06-18. Retrieved 2023-06-18.

- ^ Sanders, Edmund (7 January 2000). "Y2K Glitch Is Causing Multiple Charges for Some Cardholders". Archived from the original on 2023-01-27. Retrieved 2023-01-27.

- ^ "Two glitches hit Microsoft Internet services as New Year rolls over". CNN. 3 January 2000. Archived from the original on 2006-02-11. Retrieved 2023-04-24.

- ^ "HK Leap Year Free of Y2K Glitches". Wired. 29 February 2000. Archived from the original on 2021-04-30. Retrieved 2016-10-16.

- ^ "Leap Day arrives with few computer glitches worldwide". 29 February 2000. Archived from the original on 2023-02-03. Retrieved 2023-02-03.

- ^ "Leap Day Had Its Glitches". Wired. 1 March 2000. Archived from the original on 2021-06-08. Retrieved 2020-02-25.

- ^ a b "Computer glitches minor on Leap Day". 1 March 2000. Archived from the original on 2023-03-07. Retrieved 2023-03-07.

- ^ "Leap Day bug infests tax system". CBC News. 1 March 2000. Archived from the original on 2023-03-07. Retrieved 2023-03-07.

- ^ a b c d "The last bite of the bug". BBC News. 5 January 2001. Archived from the original on 2003-02-03. Retrieved 2014-12-31.

- ^ "7-Eleven Systems Hit by Y2k-like Glitch". Archived from the original on 2023-03-10. Retrieved 2023-03-10.

- ^ "Y2K Bug Hits Norway's Railroad At End Of Year". 1 January 2001. Archived from the original on 2023-03-10. Retrieved 2023-03-10.

- ^ Horvath, John (6 January 2001). "Y2K Strikes Back" (in German). Archived from the original on 2023-10-21. Retrieved 2023-10-10.

- ^ Meintjies, Marvin (11 January 2001). "Y2K glitch gives bank a new year's shock". Independent Online. Archived from the original on 2023-03-13. Retrieved 2023-03-12.

- ^ Valenta, Kaaren (4 January 2001). "Tax Collector: Car Tax Bills Are Correct". The Newtown Bee. Archived from the original on 2023-04-28. Retrieved 2023-04-28.

- ^ "NASA's Deep Space Comet Hunter Mission Comes to an End". Jet Propulsion Laboratory. 20 September 2013. Archived from the original on 2013-10-14. Retrieved 2022-07-09.

- ^ Stokel-Walker, Chris. "A lazy fix 20 years ago means the Y2K bug is taking down computers now". New Scientist. Archived from the original on 2020-01-12. Retrieved 2020-01-12.

- ^ Nìgito, Adalberto (11 June 2025). "E sì! Non sono più gli Iphone di una volta..." [Oh yes! Iphones just aren't what they used to be...] (in Italian).[dead link]

- ^ Kohler, Iliana V.; Kaltchev, Jordan; Dimova, Mariana (14 May 2002). "Integrated Information System for Demographic Statistics 'ESGRAON-TDS' in Bulgaria" (PDF). Demographic Research. 6 (Article 12): 325–354. doi:10.4054/DemRes.2002.6.12. Archived (PDF) from the original on 2010-12-04. Retrieved 2011-01-15.

- ^ "The personal identity code: Frequently asked questions". Digital and Population Data Services Agency, Finland. Archived from the original on 2020-11-26. Retrieved 2020-11-29.

- ^ "Uganda National Y2k Task Force End-June 1999 Public Position Statement". 30 June 1999. Archived from the original on 2012-08-10. Retrieved 2012-01-11.

- ^ "Y2K Center urges more information on Y2K readiness". 3 August 1999. Archived from the original on 2013-06-03. Retrieved 2012-01-11.

- ^ "Year 2000 Information and Readiness Disclosure Act". FindLaw. Archived from the original on 2019-05-13. Retrieved 2019-05-14.

- ^ "Y2K bug: Definition, Hysteria, & Facts". Encyclopædia Britannica. 10 May 2019. Archived from the original on 2019-05-20. Retrieved 2019-05-14.

- ^ DeBruce, Orlando; Jones, Jennifer (23 February 1999). "White House shifts Y2K focus to states". CNN. Archived from the original on 2006-12-20. Retrieved 2016-10-16.

- ^ Atlee, Tom. "The President's Council on Year 2000 Conversion". The Co-Intelligence Institute. Archived from the original on 2021-03-08. Retrieved 2019-05-14.

- ^ a b "FCC Y2K Communications Sector Report (March 1999) copy available at WUTC" (PDF). Archived from the original (PDF) on 2007-06-05. Retrieved 2007-05-29.

- ^ "Statement by President on Y2K Information and Readiness". Clinton Presidential Materials Project. National Archives and Records Administration. 19 October 1998. Archived from the original on 2020-04-14. Retrieved 2020-03-16.

- ^ "Home". National Y2K Clearinghouse. General Services Administration. Archived from the original on 2000-12-05. Retrieved 2020-03-16.

- ^ Robert J. Butler; Anne E. Hoge (September 1999). "Federal Communications Commission Spearheads Oversight of the U.S. Communications Industries' Y2K Preparedness". Messaging Magazine. Wiley, Rein & Fielding. Archived from the original on 2008-10-09. Retrieved 2016-10-16 – via The Open Group.

- ^ "Basic Internet Structures Expected to be Y2K Ready, Telecom News, NCS (1999 Issue 2)" (PDF). Archived from the original (PDF) on 2007-05-08. Retrieved 2007-05-29. (799 KB)

- ^ "U.S., Russia Shutter Joint Y2k Bug Center". Chicago Tribune. 16 January 2000. Archived from the original on 2017-02-02. Retrieved 2017-01-28.

- ^ a b "Collection: International Y2K Cooperation Center records | University of Minnesota Archival Collections Guides". archives.lib.umn.edu. Archived from the original on 2019-09-08. Retrieved 2020-03-16.

- ^ a b Kirsner, Scott (1 November 1997). "Fly in the Legal Eagles". CIO magazine. p. 38.

- ^ "quetek.com". quetek.com. Archived from the original on 2011-08-28. Retrieved 2011-09-25.

- ^ "Internet Year 2000 Campaign". Cybertelecom. Archived 2007-12-04 at the Wayback Machine.

- ^ Kunstler, Jim (1999). "My Y2K—A Personal Statement". Kunstler, Jim. Archived from the original on 2007-09-27. Retrieved 2006-12-12.

- ^ a b "False Prophets, Real Profits - Americans United". Archived from the original on 2016-09-27. Retrieved 2016-11-09.

- ^ a b Dutton, Denis (31 December 2009). "It's Always the End of the World as We Know It". The New York Times. Archived from the original on 2017-02-27. Retrieved 2017-02-26.

- ^ Coen, J., 1 March 1999, "Some Christians Fear End, It's just a day to others" Chicago Tribune

- ^ Hart, B., 12 February 1999 Deseret News, "Christian Y2K Alarmists Irresponsible" Scripps Howard News Service

- ^ Smith, B. (1999). "chapter 24 - Y2K Bug". I Spy with my Little Eye. MS Life Media. Archived from the original on 2016-11-06.

- ^ a b "Col Stringer Ministries - Newsletter Vol.1 : No.4". Archived from the original on 2012-03-20. Retrieved 2016-11-09.

- ^ Rivera, J., 17 February 1999, "Apocalypse Now – Y2K spurs fears", The Baltimore Sun

- ^ "Washingtonpost.com: Business Y2K Computer Bug". www.washingtonpost.com. Archived from the original on 2012-12-19. Retrieved 2023-09-27.

- ^ 1634–1699: McCusker, J. J. (1997). How Much Is That in Real Money? A Historical Price Index for Use as a Deflator of Money Values in the Economy of the United States: Addenda et Corrigenda (PDF). American Antiquarian Society. 1700–1799: McCusker, J. J. (1992). How Much Is That in Real Money? A Historical Price Index for Use as a Deflator of Money Values in the Economy of the United States (PDF). American Antiquarian Society. 1800–present: Federal Reserve Bank of Minneapolis. "Consumer Price Index (estimate) 1800–". Retrieved 2024-02-29.