Human extinction

Human extinction or omnicide is the end of the human species, either by population decline due to extraneous natural causes, such as an asteroid impact or large-scale volcanism, or via anthropogenic destruction (self-extinction).

Some of the many possible contributors to anthropogenic hazard are climate change, global nuclear annihilation, biological warfare, weapons of mass destruction, and ecological collapse. Other scenarios center on emerging technologies, such as advanced artificial intelligence, biotechnology, or self-replicating nanobots.

The scientific consensus is that there is a relatively low risk of near-term human extinction due to natural causes.[2][3] The likelihood of human extinction through humankind's own activities, however, is a current area of research and debate.

History of thought

Early history

Before the 18th and 19th centuries, the possibility that humans or other organisms could become extinct was viewed with scepticism.[4] It contradicted the principle of plenitude, a doctrine that all possible things exist.[4] The principle traces back to Aristotle, and was an important tenet of Christian theology.[5] Ancient philosophers such as Plato, Aristotle, and Lucretius wrote of the end of humankind only as part of a cycle of renewal. Marcion of Sinope was a proto-protestant who advocated for antinatalism that could lead to human extinction.[6][7] Later philosophers such as Al-Ghazali, William of Ockham, and Gerolamo Cardano expanded the study of logic and probability and began wondering if abstract worlds existed, including a world without humans. Physicist Edmond Halley stated that the extinction of the human race may be beneficial to the future of the world.[8]

The notion that species can become extinct gained scientific acceptance during the Age of Enlightenment in the 17th and 18th centuries, and by 1800 Georges Cuvier had identified 23 extinct prehistoric species.[4] The doctrine was further gradually bolstered by evidence from the natural sciences, particularly the discovery of fossil evidence of species that appeared to no longer exist, and the development of theories of evolution.[5] In On the Origin of Species, Charles Darwin discussed the extinction of species as a natural process and a core component of natural selection.[9] Notably, Darwin was skeptical of the possibility of sudden extinction, viewing it as a gradual process. He held that the abrupt disappearances of species from the fossil record were not evidence of catastrophic extinctions, but rather represented unrecognised gaps in the record.[9]

As the possibility of extinction became more widely established in the sciences, so did the prospect of human extinction.[4] In the 19th century, human extinction became a popular topic in science (e.g., Thomas Robert Malthus's An Essay on the Principle of Population) and fiction (e.g., Jean-Baptiste Cousin de Grainville's The Last Man). In 1863, a few years after Darwin published On the Origin of Species, William King proposed that Neanderthals were an extinct species of the genus Homo. The Romantic authors and poets were particularly interested in the topic.[4] Lord Byron wrote about the extinction of life on Earth in his 1816 poem "Darkness", and in 1824 envisaged humanity being threatened by a comet impact, and employing a missile system to defend against it.[4] Mary Shelley's 1826 novel The Last Man is set in a world where humanity has been nearly destroyed by a mysterious plague.[4] At the turn of the 20th century, Russian cosmism, a precursor to modern transhumanism, advocated avoiding humanity's extinction by colonizing space.[4]

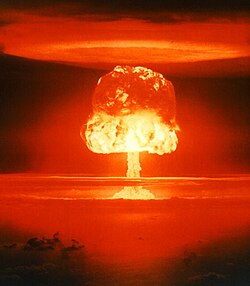

Atomic era

The invention of the atomic bomb prompted a wave of discussion among scientists, intellectuals, and the public at large about the risk of human extinction.[4] In a 1945 essay, Bertrand Russell wrote:

The prospect for the human race is sombre beyond all precedent. Mankind are faced with a clear-cut alternative: either we shall all perish, or we shall have to acquire some slight degree of common sense.[10]

In 1950, Leo Szilard suggested it was technologically feasible to build a cobalt bomb that could render the planet unlivable. A 1950 Gallup poll found that 19% of Americans believed that another world war would mean "an end to mankind".[11] Rachel Carson's 1962 book Silent Spring raised awareness of environmental catastrophe. In 1983, Brandon Carter proposed the Doomsday argument, which used Bayesian probability to predict the total number of humans that will ever exist.

The discovery of "nuclear winter" in the early 1980s, a specific mechanism by which nuclear war could result in human extinction, again raised the issue to prominence. Writing about these findings in 1983, Carl Sagan argued that measuring the severity of extinction solely in terms of those who die "conceals its full impact", and that nuclear war "imperils all of our descendants, for as long as there will be humans."[12]

Post-Cold War

John Leslie's 1996 book The End of the World was an academic treatment of the science and ethics of human extinction. In it, Leslie considered a range of threats to humanity and what they have in common. In 2003, British Astronomer Royal Sir Martin Rees published Our Final Hour, in which he argues that advances in certain technologies create new threats to the survival of humankind and that the 21st century may be a critical moment in history when humanity's fate is decided.[13] Global Catastrophic Risks, a collection of essays from 26 academics on various global catastrophic and existential risks, edited by Nick Bostrom and Milan M. Ćirković, was published in 2008.[14] Nicholas P. Money's 2019 book The Selfish Ape delves into the environmental consequences of overexploitation.[15] Toby Ord's 2020 book The Precipice: Existential Risk and the Future of Humanity argues that preventing existential risks is one of the most important moral issues of our time. The book discusses, quantifies, and compares different existential risks, concluding that the greatest risks are presented by unaligned artificial intelligence and biotechnology.[16] Lyle Lewis' 2024 book Racing to Extinction explores the roots of human extinction from an evolutionary biology perspective. Lewis argues that humanity treats unused natural resources as waste and is driving ecological destruction through overexploitation, habitat loss, and denial of environmental limits. He uses vivid examples, like the extinction of the passenger pigeon and the environmental cost of rice production, to show how interconnected and fragile ecosystems are.[17]

Causes

Potential anthropogenic causes of human extinction include global thermonuclear war, deployment of a highly effective biological weapon, ecological collapse, runaway artificial intelligence, runaway nanotechnology (such as a grey goo scenario), overpopulation and increased consumption causing resource depletion and a concomitant population crash, population decline by choosing to have fewer children, and displacement of naturally evolved humans by a new species produced by genetic engineering or technological augmentation. Natural and external extinction risks include high-fatality-rate pandemic, supervolcanic eruption, asteroid impact, nearby supernova or gamma-ray burst, or extreme solar flare.

Humans (e.g. Homo sapiens sapiens) as a species may also be considered to have "gone extinct" simply by being replaced with distant descendants whose continued evolution may produce new species or subspecies of Homo or of hominids.

Without intervention by unexpected forces, the stellar evolution of the Sun is expected to make Earth uninhabitable, then destroy it. Depending on its ultimate fate, the entire universe may eventually become uninhabitable.

Probability

Natural vs. anthropogenic

Experts generally agree that anthropogenic existential risks are (much) more likely than natural risks.[18][13][19][2][20] A key difference between these risk types is that empirical evidence can place an upper bound on the level of natural risk.[2] Humanity has existed for at least 200,000 years, over which it has been subject to a roughly constant level of natural risk. If the natural risk were sufficiently high, then it would be highly unlikely that humanity would have survived as long as it has. Based on a formalization of this argument, researchers have concluded that we can be confident that natural risk is lower than 1 in 14,000 per year (equivalent to 1 in 140 per century, on average).[2]
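The survival argument above can be made concrete with a short calculation. The sketch below assumes a constant, independent annual extinction probability and uses a likelihood cutoff of one in a million purely as an illustrative threshold; the function names and the cutoff are assumptions of this sketch, not details taken from the cited study.

```python
def per_century_risk(annual_risk: float) -> float:
    """Chance of at least one extinction event in 100 years at a constant annual risk."""
    return 1.0 - (1.0 - annual_risk) ** 100


def max_annual_risk(years_survived: int, min_likelihood: float) -> float:
    """Largest constant annual risk under which surviving `years_survived`
    years still has probability of at least `min_likelihood`.

    Solves (1 - r) ** years_survived = min_likelihood for r.
    """
    return 1.0 - min_likelihood ** (1.0 / years_survived)


if __name__ == "__main__":
    # The bound quoted in the text: 1 in 14,000 per year, restated per century.
    century = per_century_risk(1 / 14_000)
    print(f"per century: about 1 in {1 / century:.0f}")

    # Hypothetical cutoff: rule out annual risks that would make a
    # 200,000-year track record less likely than one in a million.
    bound = max_annual_risk(200_000, 1e-6)
    print(f"annual bound: about 1 in {1 / bound:.0f}")
```

Under these assumptions the per-century figure comes out close to the 1 in 140 quoted above, and the illustrative cutoff yields an annual bound of the same order as 1 in 14,000.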

Another empirical method to study the likelihood of certain natural risks is to investigate the geological record.[18] For example, the probability of a comet or asteroid impact large enough to cause an impact winter that would lead to human extinction before the year 2100 has been estimated at one in a million.[21][22] Moreover, large supervolcano eruptions may cause a volcanic winter that could endanger the survival of humanity.[23] The geological record suggests that supervolcanic eruptions occur on average about once every 50,000 years, though most such eruptions would not reach the scale required to cause human extinction.[23] Famously, the supervolcano Mt. Toba may have almost wiped out humanity at the time of its last eruption (though this is contentious).[23][24]

Since anthropogenic risk is a relatively recent phenomenon, humanity's track record of survival cannot provide similar assurances.[2] Humanity has only survived 80 years since the creation of nuclear weapons, and for future technologies, there is no track record. This has led thinkers like Carl Sagan to conclude that humanity is currently in a "time of perils"[25] – a uniquely dangerous period in human history, where it is subject to unprecedented levels of risk, beginning from when humans first started posing risk to themselves through their actions.[18][26] Paleobiologist Olev Vinn has suggested that humans presumably have a number of inherited behavior patterns (IBPs) that are not fine-tuned for conditions prevailing in technological civilization. Indeed, some IBPs may be highly incompatible with such conditions and have a high potential to induce self-destruction. These patterns may include responses of individuals seeking power over conspecifics in relation to harvesting and consuming energy.[27] Nonetheless, there are ways to address the issue of inherited behavior patterns.[28]

Risk estimates

Given the limitations of ordinary observation and modeling, expert elicitation is frequently used instead to obtain probability estimates.[29]

- Humanity has a 95% probability of being extinct in 8,000,000 years, according to J. Richard Gott's formulation of the controversial doomsday argument, which argues that we have probably already lived through half the duration of human history.[30]

- In 1996, John A. Leslie estimated a 30% risk over the next five centuries (equivalent to around 6% per century, on average).[31]

- The Global Challenges Foundation's 2016 annual report estimates an annual probability of human extinction of at least 0.05% (equivalent to 5% per century, on average).[32]

- As of July 29, 2025, Metaculus users estimate a 1% probability of human extinction by 2100.[33]

- According to a 2020 study published in Scientific Reports, if deforestation and resource consumption continue at current rates, they could culminate in a "catastrophic collapse in human population" and possibly "an irreversible collapse of our civilization" in the next 20 to 40 years. According to the most optimistic scenario provided by the study, the chances that human civilization survives are smaller than 10%. To avoid this collapse, the study says, humanity should pass from a civilization dominated by the economy to a "cultural society" that "privileges the interest of the ecosystem above the individual interest of its components, but eventually in accordance with the overall communal interest."[34][35]

- Nick Bostrom, a philosopher at the University of Oxford known for his work on existential risk, argues that it would be "misguided"[36] to assume that the probability of near-term extinction is less than 25%, and that it will be "a tall order" for the human race to "get our precautions sufficiently right the first time", given that an existential risk provides no opportunity to learn from failure.[3][21]

- Philosopher John A. Leslie assigns a 70% chance of humanity surviving the next five centuries, based partly on the controversial philosophical doomsday argument that Leslie champions. Leslie's argument is somewhat frequentist, based on the observation that human extinction has never been observed, but requires subjective anthropic arguments.[37] Leslie also discusses the anthropic survivorship bias (which he calls an "observational selection" effect on page 139) and states that the a priori certainty of observing an "undisastrous past" could make it difficult to argue that we must be safe because nothing terrible has yet occurred. He quotes Holger Bech Nielsen's formulation: "We do not even know if there should exist some extremely dangerous decay of say the proton which caused the eradication of the earth, because if it happens we would no longer be there to observe it and if it does not happen there is nothing to observe."[38]

- Jean-Marc Salotti calculated the probability of human extinction caused by a giant asteroid impact.[39] It is between 0.03 and 0.3 for the next billion years, if there is no colonization of other planets. According to that study, the most frightening object is a giant long-period comet with a warning time of only a few years and therefore no time for any intervention in space or settlement on the Moon or Mars. The probability of a giant comet impact in the next hundred years is 2.2×10^−12.[39]

- In 2023, the United Nations Office for Disaster Risk Reduction estimated a 2 to 14% (median: 8%) chance of an extinction-level event by 2100 and a 14 to 98% (median: 56%) chance by 2700.[40]

- Bill Gates told The Wall Street Journal on January 27, 2025 that he believes there is a 10–15% (midpoint: 12.5%) chance of a natural pandemic hitting in the next four years and a 65–97.5% (midpoint: 81.25%) chance of one hitting in the next 26 years.[41]

- On March 19, 2025, Henry Gee said that humanity will be extinct within the next 10,000 years. To avoid this, he argued, humanity should establish space colonies within the next 200–300 years.[42]

- On September 11, 2025, Warp News estimated a 20% chance of global catastrophe and 6% chance of human extinction by 2100. They also estimated a 100% chance of global catastrophe and a 30% chance of human extinction by 2500.[43]
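Several of the estimates above restate a probability over one time span as an average over another. As a hedged illustration, the sketch below contrasts the simple proportional division that appears to underlie the quoted per-century figures with a compounded conversion that assumes a constant, independent risk in each period. The helper names are hypothetical.

```python
def linear_rescale(risk: float, periods_in: float, periods_out: float) -> float:
    """Simple proportional rescaling, e.g. 30% over 5 centuries -> 6% per century."""
    return risk * periods_out / periods_in


def compound_rescale(risk: float, periods_in: float, periods_out: float) -> float:
    """Rescaling under a constant per-period hazard.

    Survival over the input span is (1 - risk); raising it to the ratio of
    the two spans gives survival over the output span.
    """
    return 1.0 - (1.0 - risk) ** (periods_out / periods_in)


if __name__ == "__main__":
    # Leslie: 30% over five centuries, restated per century.
    print(linear_rescale(0.30, 5, 1), compound_rescale(0.30, 5, 1))
    # Global Challenges Foundation: 0.05% per year, restated per century.
    print(linear_rescale(0.0005, 1, 100), compound_rescale(0.0005, 1, 100))
```

For small risks the two conversions nearly agree. They diverge as the probability over the longer span grows, which is why the compounded per-century figure for a 30% five-century risk is somewhat higher than 6%.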

From nuclear weapons

On November 13, 2024, the American Enterprise Institute estimated the probability of nuclear war during the 21st century at between 0% and 80% (midpoint: 40%).[44] A 2023 article in The Economist estimated an 8% chance of nuclear war causing global catastrophe and a 0.5625% chance of nuclear war causing human extinction.[45]

From supervolcanic eruption

On November 13, 2024, the American Enterprise Institute estimated the annual probability of a supervolcanic eruption at around 0.0067% (0.67% per century, on average).[44]

From artificial intelligence

- A 2008 survey by the Future of Humanity Institute estimated a 5% probability of extinction by super-intelligence by 2100.[19]

- A 2016 survey of AI experts found a median estimate of 5% that human-level AI would cause an outcome that was "extremely bad (e.g. human extinction)".[46] The corresponding estimate fell to 2% in 2019, returned to 5% in 2022, and rose to 10% in 2023 and 15% in 2024.[47]

- In his 2020 book The Precipice: Existential Risk and the Future of Humanity, Toby Ord estimated existential risk in the next century at "1 in 6".[18][48] He also estimated a "1 in 10" risk of extinction by unaligned AI within the next century.

- According to a July 10, 2023 article in The Economist, scientists estimated a 12% chance of AI-caused catastrophe and a 3% chance of AI-caused extinction by 2100. They also estimated a 100% chance of AI-caused catastrophe and a 25% chance of AI-caused extinction by 2833.

- On December 27, 2024, Geoffrey Hinton estimated a 10–20% (midpoint: 15%) probability of AI-caused extinction in the next 30 years.[49] He also estimated a 50–100% (midpoint: 75%) probability of AI-caused extinction in the next 150 years.

- On May 6, 2025, Scientific American estimated a 0–10% (midpoint: 5%) probability of AI-caused extinction by 2100.[50]

- On August 1, 2025, Holly Elmore estimated a 15–20% (midpoint: 17.5%) probability of AI-caused extinction in the next 1–10 years (midpoint: 5.5 years). She also estimated a 75–100% (midpoint: 87.5%) probability of AI-caused extinction in the next 5–50 years (midpoint: 27.5 years).[51]

- On September 10, 2025, the Australian Strategic Policy Institute estimated a 10–20% (midpoint: 15%) chance that a loss of control will lead to AI-caused human extinction within the next 5 years. It also estimated a 50–100% (midpoint: 75%) chance of AI-caused human extinction within the next 25 years.[52]

- According to a September 28, 2025 article in the Daily Mail, scientists who study existential risk think there is anywhere between a 10% and 90% (midpoint: 50%) chance that humanity will not survive the advent of superintelligent AI.[53]

From climate change

In a 2010 interview with The Australian, the late Australian scientist Frank Fenner predicted the extinction of the human race within a century, primarily as the result of human overpopulation, environmental degradation and climate change.[54] Several economists have discussed the importance of global catastrophic risks. For example, Martin Weitzman argues that most of the expected economic damage from climate change may come from the small chance that warming greatly exceeds the mid-range expectations, resulting in catastrophic damage.[55] Richard Posner has argued that humanity is doing far too little, in general, about small, hard-to-estimate risks of large-scale catastrophes.[56]

Individual vs. species risks

Although existential risks are less manageable by individuals than, for example, health risks, according to Ken Olum, Joshua Knobe, and Alexander Vilenkin, the possibility of human extinction does have practical implications. For instance, if the "universal" doomsday argument is accepted, it changes the most likely source of disasters, and hence the most efficient means of preventing them. They write: "...you should be more concerned that a large number of asteroids have not yet been detected than about the particular orbit of each one. You should not worry especially about the chance that some specific nearby star will become a supernova, but more about the chance that supernovas are more deadly to nearby life than we believe."[57]

Difficulty

Some scholars argue that certain scenarios such as global thermonuclear war would have difficulty eradicating every last settlement on Earth. Physicist Willard Wells points out that any credible extinction scenario would have to reach into a diverse set of areas, including the underground subways of major cities, the mountains of Tibet, the remotest islands of the South Pacific, and even to McMurdo Station in Antarctica, which has contingency plans and supplies for long isolation.[58] In addition, elaborate bunkers exist for government leaders to occupy during a nuclear war.[21] The existence of nuclear submarines, which can stay hundreds of meters deep in the ocean for potentially years at a time, should also be considered. Any number of events could lead to a massive loss of human life, but if the last few (see minimum viable population) most resilient humans are unlikely to also die off, then that particular human extinction scenario may not seem credible.[59]

Ethics

Value of human life

"Existential risks" are risks that threaten the entire future of humanity, whether by causing human extinction or by otherwise permanently crippling human progress.[3] Multiple scholars have argued based on the size of the "cosmic endowment" that because of the inconceivably large number of potential future lives that are at stake, even small reductions of existential risk have great value.

In one of the earliest discussions of ethics of human extinction, Derek Parfit offers the following thought experiment:[60]

I believe that if we destroy mankind, as we now can, this outcome will be much worse than most people think. Compare three outcomes:

(1) Peace.

(2) A nuclear war that kills 99% of the world's existing population.

(3) A nuclear war that kills 100%.

(2) would be worse than (1), and (3) would be worse than (2). Which is the greater of these two differences? Most people believe that the greater difference is between (1) and (2). I believe that the difference between (2) and (3) is very much greater.

— Derek Parfit

The scale of what is lost in an existential catastrophe is determined by humanity's long-term potential – what humanity could expect to achieve if it survived.[18] From a utilitarian perspective, the value of protecting humanity is the product of its duration (how long humanity survives), its size (how many humans there are over time), and its quality (on average, how good is life for future people).[18]: 273 [61] On average, species survive for around a million years before going extinct. Parfit points out that the Earth will remain habitable for around a billion years.[60] And these might be lower bounds on our potential: if humanity is able to expand beyond Earth, it could greatly increase the human population and survive for trillions of years.[62][18]: 21 The potential that would be forgone, were humanity to become extinct, is very large. Therefore, reducing existential risk by even a small amount would have very significant moral value.[3][63]

Carl Sagan wrote in 1983:

If we are required to calibrate extinction in numerical terms, I would be sure to include the number of people in future generations who would not be born.... (By one calculation), the stakes are one million times greater for extinction than for the more modest nuclear wars that kill "only" hundreds of millions of people. There are many other possible measures of the potential loss – including culture and science, the evolutionary history of the planet, and the significance of the lives of all of our ancestors who contributed to the future of their descendants. Extinction is the undoing of the human enterprise.[64]

Philosopher Robert Adams in 1989 rejected Parfit's "impersonal" views but spoke instead of a moral imperative for loyalty and commitment to "the future of humanity as a vast project... The aspiration for a better society – more just, more rewarding, and more peaceful... our interest in the lives of our children and grandchildren, and the hopes that they will be able, in turn, to have the lives of their children and grandchildren as projects."[65]

Philosopher Nick Bostrom argued in 2013 that preference-satisfactionist, democratic, custodial, and intuitionist arguments all converge on the common-sense view that preventing existential risk is a high moral priority, even if the exact "degree of badness" of human extinction varies between these philosophies.[66]

Parfit argues that the size of the "cosmic endowment" can be calculated from the following argument: If Earth remains habitable for a billion more years and can sustainably support a population of more than a billion humans, then there is a potential for 10^16 (or 10,000,000,000,000,000) human lives of normal duration.[67] Bostrom goes further, stating that if the universe is empty, then the accessible universe can support at least 10^34 biological human life-years; and, if some humans were uploaded onto computers, could even support the equivalent of 10^54 cybernetic human life-years.[3]
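The arithmetic behind the first figure can be checked directly. The sketch below assumes a "normal duration" of 100 years per life, an assumption chosen here because it reproduces the quoted figure of 10,000,000,000,000,000 lives; the function names and the example risk reduction are likewise illustrative.

```python
def potential_lives(habitable_years: int, population: int, lifespan_years: int) -> int:
    """Number of full lives that fit into the available person-years."""
    return habitable_years * population // lifespan_years


def expected_lives_saved(risk_reduction: float, lives_at_stake: int) -> float:
    """Expected future lives preserved by lowering extinction risk by `risk_reduction`."""
    return risk_reduction * lives_at_stake


if __name__ == "__main__":
    # One billion years, one billion people at a time, 100-year lives (assumed).
    lives = potential_lives(10**9, 10**9, 100)
    print(lives == 10**16)

    # Hypothetical example: a one-in-a-million reduction in extinction risk.
    print(expected_lives_saved(1e-6, lives))
```

On these assumptions, even a one-in-a-million reduction in risk corresponds to roughly ten billion expected lives, which is the sense in which small reductions in existential risk are said to carry great value.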

Some economists and philosophers have defended views, including exponential discounting and person-affecting views of population ethics, on which future people do not matter (or matter much less), morally speaking.[68] While these views are controversial,[21][69][70] even their proponents would agree that an existential catastrophe would be among the worst things imaginable. It would cut short the lives of eight billion presently existing people, destroying all of what makes their lives valuable, and most likely subjecting many of them to profound suffering. So even setting aside the value of future generations, there may be strong reasons to reduce existential risk, grounded in concern for presently existing people.[71]

Beyond utilitarianism, other moral perspectives lend support to the importance of reducing existential risk. An existential catastrophe would destroy more than just humanity – it would destroy all cultural artifacts, languages, and traditions, and many of the things we value.[18][72] So moral viewpoints on which we have duties to protect and cherish things of value would see this as a huge loss that should be avoided.[18] One can also consider reasons grounded in duties to past generations. For instance, Edmund Burke writes of a "partnership...between those who are living, those who are dead, and those who are to be born".[73] If one takes seriously the debt humanity owes to past generations, Ord argues the best way of repaying it might be to "pay it forward", and ensure that humanity's inheritance is passed down to future generations.[18]: 49–51

Voluntary extinction

Some philosophers adopt the antinatalist position that human extinction would not be a bad thing, but a good thing. David Benatar argues that coming into existence is always a serious harm, and therefore it is better that people do not come into existence in the future.[74] Further, Benatar, animal rights activist Steven Best, and anarchist Todd May posit that human extinction would be a positive thing for the other organisms on the planet, and the planet itself, citing, for example, the omnicidal nature of human civilization.[75][76][77] The environmental view in favor of human extinction is shared by the members of the Voluntary Human Extinction Movement and the Church of Euthanasia, who call for refraining from reproduction and allowing the human species to go peacefully extinct, thus stopping further environmental degradation.[78]

In fiction

Jean-Baptiste Cousin de Grainville's 1805 science fantasy novel Le dernier homme (The Last Man), which depicts human extinction due to infertility, is considered the first modern apocalyptic novel and credited with launching the genre.[79] Other notable early works include Mary Shelley's 1826 The Last Man, depicting human extinction caused by a pandemic, and Olaf Stapledon's 1937 Star Maker, "a comparative study of omnicide".[4]

Some 21st century pop-science works, including The World Without Us by Alan Weisman, and the television specials Life After People and Aftermath: Population Zero pose a thought experiment: what would happen to the rest of the planet if humans suddenly disappeared?[80][81] A threat of human extinction, such as through a technological singularity (also called an intelligence explosion), drives the plot of innumerable science fiction stories; an influential early example is the 1951 film adaptation of When Worlds Collide.[82] Usually the extinction threat is narrowly avoided, but some exceptions exist, such as R.U.R. and Steven Spielberg's A.I.[83]

References

- ^ Di Mardi (October 15, 2020). "The grim fate that could be 'worse than extinction'". BBC News. Retrieved November 11, 2020.

When we think of existential risks, events like nuclear war or asteroid impacts often come to mind.

- ^ a b c d e Snyder-Beattie, Andrew E.; Ord, Toby; Bonsall, Michael B. (July 30, 2019). "An upper bound for the background rate of human extinction". Scientific Reports. 9 (1): 11054. Bibcode:2019NatSR...911054S. doi:10.1038/s41598-019-47540-7. ISSN 2045-2322. PMC 6667434. PMID 31363134.

- ^ a b c d e Bostrom 2013.

- ^ a b c d e f g h i j Moynihan, Thomas (September 23, 2020). "How Humanity Came To Contemplate Its Possible Extinction: A Timeline". The MIT Press Reader. Retrieved October 11, 2020.

See also:

- Moynihan, Thomas (February 2020). "Existential risk and human extinction: An intellectual history". Futures. 116 102495. doi:10.1016/j.futures.2019.102495. ISSN 0016-3287. S2CID 213388167.

- Moynihan, Thomas (2020). X-Risk: How Humanity Discovered Its Own Extinction. MIT Press. ISBN 978-1-913029-82-1.

- ^ a b Darwin, Charles; Costa, James T. (2009). The Annotated Origin. Harvard University Press. p. 121. ISBN 978-0674032811.

- ^ Moll, S. (2010). The Arch-heretic Marcion. Wissenschaftliche Untersuchungen zum Neuen Testament. Mohr Siebeck. p. 132. ISBN 978-3-16-150268-2. Retrieved June 11, 2023.

- ^ Welchman, A. (2014). Politics of Religion/Religions of Politics. Sophia Studies in Cross-cultural Philosophy of Traditions and Cultures. Springer Netherlands. p. 21. ISBN 978-94-017-9448-0. Retrieved June 11, 2023.

- ^ Moynihan, T. (2020). X-Risk: How Humanity Discovered Its Own Extinction. MIT Press. p. 56. ISBN 978-1-913029-84-5. Retrieved October 19, 2022.

- ^ a b Raup, David M. (1995). "The Role of Extinction in Evolution". In Fitch, W. M.; Ayala, F. J. (eds.). Tempo And Mode in Evolution: Genetics And Paleontology 50 Years After Simpson. National Academies Press (US).

- ^ Russell, Bertrand (1945). "The Bomb and Civilization". Archived from the original on August 7, 2020.

- ^ Erskine, Hazel Gaudet (1963). "The Polls: Atomic Weapons and Nuclear Energy". The Public Opinion Quarterly. 27 (2): 155–190. doi:10.1086/267159. JSTOR 2746913.

- ^ Sagan, Carl (January 28, 2009). "Nuclear War and Climatic Catastrophe: Some Policy Implications". doi:10.2307/20041818. JSTOR 20041818. Retrieved August 11, 2021.

- ^ a b Rees, Martin (2003). Our Final Hour: A Scientist's Warning: How Terror, Error, and Environmental Disaster Threaten Humankind's Future In This Century – On Earth and Beyond. Basic Books. ISBN 0-465-06863-4.

- ^ Bostrom, Nick; Ćirković, Milan M., eds. (2008). Global catastrophic risks. Oxford University Press. ISBN 978-0199606504.

- ^ Money, Nicholas P. (2019). The Selfish Ape: Human Nature and Our Path to Extinction. London: Reaktion Books, Limited. ISBN 978-1-78914-155-9.

- ^ Ord, Toby (2020). The Precipice: Existential Risk and the Future of Humanity. New York: Hachette. 4:15–31. ISBN 9780316484916.

This is an equivalent, though crisper statement of Nick Bostrom's definition: "An existential risk is one that threatens the premature extinction of Earth-originating intelligent life or the permanent and drastic destruction of its potential for desirable future development." Source: Bostrom, Nick (2013). "Existential Risk Prevention as Global Priority". Global Policy.

- ^ "Racing to Extinction: A Review". Protons for Breakfast. June 20, 2024. Retrieved August 29, 2025.

- ^ a b c d e f g h i j Ord, Toby (2020). The Precipice: Existential Risk and the Future of Humanity. New York: Hachette. ISBN 9780316484916.

- ^ a b Bostrom, Nick; Sandberg, Anders (2008). "Global Catastrophic Risks Survey" (PDF). FHI Technical Report #2008-1. Future of Humanity Institute.

- ^ "Frequently Asked Questions". Existential Risk. Future of Humanity Institute. Retrieved July 26, 2013.

The great bulk of existential risk in the foreseeable future is anthropogenic; that is, arising from human activity.

- ^ a b c d Matheny, Jason Gaverick (2007). "Reducing the Risk of Human Extinction" (PDF). Risk Analysis. 27 (5): 1335–1344. Bibcode:2007RiskA..27.1335M. doi:10.1111/j.1539-6924.2007.00960.x. PMID 18076500. S2CID 14265396. Archived from the original (PDF) on August 27, 2014. Retrieved July 1, 2016.

- ^ Asher, D.J.; Bailey, M.E.; Emelʹyanenko, V.; Napier, W.M. (2005). "Earth in the cosmic shooting gallery" (PDF). The Observatory. 125: 319–322. Bibcode:2005Obs...125..319A.

- ^ a b c Rampino, M.R.; Ambrose, S.H. (2002). "Super eruptions as a threat to civilizations on Earth-like planets" (PDF). Icarus. 156 (2): 562–569. Bibcode:2002Icar..156..562R. doi:10.1006/icar.2001.6808. Archived from the original (PDF) on September 24, 2015. Retrieved February 14, 2022.

- ^ Yost, Chad L.; Jackson, Lily J.; Stone, Jeffery R.; Cohen, Andrew S. (March 1, 2018). "Subdecadal phytolith and charcoal records from Lake Malawi, East Africa imply minimal effects on human evolution from the ~74 ka Toba supereruption". Journal of Human Evolution. 116: 75–94. Bibcode:2018JHumE.116...75Y. doi:10.1016/j.jhevol.2017.11.005. ISSN 0047-2484. PMID 29477183.

- ^ Sagan, Carl (1994). Pale Blue Dot. Random House. pp. 305–6. ISBN 0-679-43841-6.

Some planetary civilizations see their way through, place limits on what may and what must not be done, and safely pass through the time of perils. Others are not so lucky or so prudent, perish.

- ^ Parfit, Derek (2011). On What Matters Vol. 2. Oxford University Press. p. 616. ISBN 9780199681044.

We live during the hinge of history ... If we act wisely in the next few centuries, humanity will survive its most dangerous and decisive period.

- ^ Vinn, O. (2024). "Potential incompatibility of inherited behavior patterns with civilization: Implications for Fermi paradox". Science Progress. 107 (3) 00368504241272491: 1–6. doi:10.1177/00368504241272491. PMC 11307330. PMID 39105260.

- ^ Vinn, O. (2025). "How to solve the problem of inherited behavior patterns and increase the sustainability of technological civilization". Frontiers in Psychology. 16 1562943: 1–4. doi:10.3389/fpsyg.2025.1562943. PMC 11866485. PMID 40018008.

- ^ Rowe, Thomas; Beard, Simon (2018). "Probabilities, methodologies and the evidence base in existential risk assessments" (PDF). Working Paper, Centre for the Study of Existential Risk. Retrieved August 26, 2018.

- ^ Gott, III, J. Richard (1993). "Implications of the Copernican principle for our future prospects". Nature. 363 (6427): 315–319. Bibcode:1993Natur.363..315G. doi:10.1038/363315a0. S2CID 4252750.

- ^ Leslie 1996, p. 146.

- ^ Meyer, Robinson (April 29, 2016). "Human Extinction Isn't That Unlikely". The Atlantic. Boston, Massachusetts: Emerson Collective. Retrieved April 30, 2016.

- ^ "Will humans become extinct by 2100?". Metaculus. November 12, 2017. Retrieved July 29, 2025.

- ^ Nafeez, Ahmed (July 28, 2020). "Theoretical Physicists Say 90% Chance of Societal Collapse Within Several Decades". Vice. Retrieved August 2, 2021.

- ^ Bologna, M.; Aquino, G. (2020). "Deforestation and world population sustainability: a quantitative analysis". Scientific Reports. 10 (7631): 7631. arXiv:2006.12202. Bibcode:2020NatSR..10.7631B. doi:10.1038/s41598-020-63657-6. PMC 7203172. PMID 32376879.

- ^ Bostrom, Nick (2002), "Existential Risks: Analyzing Human Extinction Scenarios and Related Hazards", Journal of Evolution and Technology, vol. 9,

My subjective opinion is that setting this probability lower than 25% would be misguided, and the best estimate may be considerably higher.

- ^ Whitmire, Daniel P. (August 3, 2017). "Implication of our technological species being first and early". International Journal of Astrobiology. 18 (2): 183–188. doi:10.1017/S1473550417000271.

- ^ Leslie 1996, p. 139.

- ^ a b Salotti, Jean-Marc (April 2022). "Human extinction by asteroid impact". Futures. 138 102933. doi:10.1016/j.futures.2022.102933. S2CID 247718308.

- ^ Klaas, Brian (March 12, 2025). "DOGE Is Courting Catastrophic Risk". The Atlantic. Retrieved March 14, 2025.

- ^ King, Jordan (March 11, 2025). "Americans Are Worried About Another Pandemic". Newsweek. Retrieved April 14, 2025.

- ^ Gee, Henry (2025). The Decline and Fall of the Human Empire. Macmillan Publishers.

- ^ "AI shows faster development than experts predicted". Warp News. September 11, 2025. Retrieved September 11, 2025.

- ^ a b Pielke, Jr., Roger (November 13, 2024). "Global Existential Risks". American Enterprise Institute. Retrieved December 17, 2024.

- ^ "What are the chances of an AI apocalypse?". The Economist. July 10, 2023. Retrieved July 10, 2023.

- ^ Grace, Katja; Salvatier, John; Dafoe, Allen; Zhang, Baobao; Evans, Owain (May 3, 2018). "When Will AI Exceed Human Performance? Evidence from AI Experts". arXiv:1705.08807 [cs.AI].

- ^ Strick, Katie (May 31, 2023). "Is the AI apocalypse actually coming? What life could look like if robots take over". The Standard. Retrieved May 31, 2023.

- ^ Purtill, Corinne. "How Close Is Humanity to the Edge?". The New Yorker. Retrieved January 8, 2021.

- ^ Milmo, Dan (December 27, 2024). "'Godfather of AI' shortens odds of the technology wiping out humanity over next 30 years". The Guardian. Retrieved December 27, 2024.

- ^ Vermeer, Michael (May 6, 2025). "Could AI Really Kill Off Humans?". Scientific American. Retrieved May 7, 2025.

- ^ Woodhouse, Leighton (August 1, 2025). "Experts predict AI will lead to the extinction of humanity". The Times. Retrieved August 1, 2025.

- ^ Sadler, Greg (September 10, 2025). "AI's national‑security risks are falling through the gaps". Australian Strategic Policy Institute. Retrieved September 10, 2025.

- ^ Hunter, Wiliam (September 28, 2025). "What the end of the world will REALLY look like, according to expert". ABD Post. Retrieved September 28, 2025.

- ^ Edwards, Lin (June 23, 2010). "Humans will be extinct in 100 years says eminent scientist". Phys.org. Retrieved January 10, 2021.

- ^ Weitzman, Martin (2009). "On modeling and interpreting the economics of catastrophic climate change" (PDF). The Review of Economics and Statistics. 91 (1): 1–19. doi:10.1162/rest.91.1.1. S2CID 216093786.

- ^ Posner, Richard (2004). Catastrophe: Risk and Response. Oxford University Press.

- ^ "Practical application", of the Princeton University paper: Philosophical Implications of Inflationary Cosmology, p. 39. Archived May 12, 2005, at the Wayback Machine.

- ^ Wells, Willard. (2009). Apocalypse when?. Praxis. ISBN 978-0387098364.

- ^ Tonn, Bruce; MacGregor, Donald (2009). "A singular chain of events". Futures. 41 (10): 706–714. doi:10.1016/j.futures.2009.07.009. S2CID 144553194. SSRN 1775342.

- ^ a b Parfit, Derek (1984). Reasons and Persons. Oxford University Press. pp. 453–454.

- ^ MacAskill, William; Yetter Chappell, Richard (2021). "Population Ethics | Practical Implications of Population Ethical Theories". Introduction to Utilitarianism. Retrieved August 12, 2021.

- ^ Bostrom, Nick (2009). "Astronomical Waste: The opportunity cost of delayed technological development". Utilitas. 15 (3): 308–314. CiteSeerX 10.1.1.429.2849. doi:10.1017/s0953820800004076. S2CID 15860897.

- ^ Todd, Benjamin (2017). "The case for reducing existential risks". 80,000 Hours. Retrieved January 8, 2020.

- ^ Sagan, Carl (1983). "Nuclear war and climatic catastrophe: Some policy implications". Foreign Affairs. 62 (2): 257–292. doi:10.2307/20041818. JSTOR 20041818. S2CID 151058846.

- ^ Adams, Robert Merrihew (October 1989). "Should Ethics be More Impersonal? a Critical Notice of Derek Parfit, Reasons and Persons". The Philosophical Review. 98 (4): 439–484. doi:10.2307/2185115. JSTOR 2185115.

- ^ Bostrom 2013, pp. 23–24.

- ^ Parfit, D. (1984) Reasons and Persons. Oxford, England: Clarendon Press. pp. 453–454.

- ^ Narveson, Jan (1973). "Moral Problems of Population". The Monist. 57 (1): 62–86. doi:10.5840/monist197357134. PMID 11661014.

- ^ Greaves, Hilary (2017). "Discounting for Public Policy: A Survey". Economics & Philosophy. 33 (3): 391–439. doi:10.1017/S0266267117000062. ISSN 0266-2671. S2CID 21730172.

- ^ Greaves, Hilary (2017). "Population axiology". Philosophy Compass. 12 (11) e12442. doi:10.1111/phc3.12442. ISSN 1747-9991.

- ^ Lewis, Gregory (May 23, 2018). "The person-affecting value of existential risk reduction". www.gregoryjlewis.com. Retrieved August 7, 2020.

- ^ Sagan, Carl (Winter 1983). "Nuclear War and Climatic Catastrophe: Some Policy Implications". Foreign Affairs. Council on Foreign Relations. doi:10.2307/20041818. JSTOR 20041818. Retrieved August 4, 2020.

- ^ Burke, Edmund (1999) [1790]. "Reflections on the Revolution in France" (PDF). In Canavan, Francis (ed.). Select Works of Edmund Burke Volume 2. Liberty Fund. p. 192.

- ^ Benatar, David (2008). Better Never to Have Been: The Harm of Coming into Existence. Oxford University Press. p. 28. ISBN 978-0199549269.

Being brought into existence is not a benefit but always a harm.

- ^ Benatar, David (2008). Better Never to Have Been: The Harm of Coming into Existence. Oxford University Press. p. 224. ISBN 978-0199549269.

Although there are many non-human species – especially carnivores – that also cause a lot of suffering, humans have the unfortunate distinction of being the most destructive and harmful species on earth. The amount of suffering in the world could be radically reduced if there were no more humans.

- ^ Best, Steven (2014). "Conclusion: Reflections on Activism and Hope in a Dying World and Suicidal Culture". The Politics of Total Liberation: Revolution for the 21st Century. Palgrave Macmillan. p. 165. doi:10.1057/9781137440723_7. ISBN 978-1137471116.

In an era of catastrophe and crisis, the continuation of the human species in a viable or desirable form, is obviously contingent and not a given or necessary good. But considered from the standpoint of animals and the earth, the demise of humanity would be the best imaginable event possible, and the sooner the better. The extinction of Homo sapiens would remove the malignancy ravaging the planet, destroy a parasite consuming its host, shut down the killing machines, and allow the earth to regenerate while permitting new species to evolve.

- ^ May, Todd (December 17, 2018). "Would Human Extinction Be a Tragedy?". The New York Times.

Human beings are destroying large parts of the inhabitable earth and causing unimaginable suffering to many of the animals that inhabit it. This is happening through at least three means. First, human contribution to climate change is devastating ecosystems ... Second, the increasing human population is encroaching on ecosystems that would otherwise be intact. Third, factory farming fosters the creation of millions upon millions of animals for whom it offers nothing but suffering and misery before slaughtering them in often barbaric ways. There is no reason to think that those practices are going to diminish any time soon. Quite the opposite.

- ^ MacCormack, Patricia (2020). The Ahuman Manifesto: Activism for the End of the Anthropocene. Bloomsbury Academic. pp. 143, 166. ISBN 978-1350081093.

- ^ Wagar, W. Warren (2003). "Review of The Last Man, Jean-Baptiste François Xavier Cousin de Grainville". Utopian Studies. 14 (1): 178–180. ISSN 1045-991X. JSTOR 20718566.

- ^ "He imagines a world without people. But why?". The Boston Globe. August 18, 2007. Retrieved July 20, 2016.

- ^ Tucker, Neely (March 8, 2008). "Depopulation Boom". The Washington Post. Retrieved July 20, 2016.

- ^ Barcella, Laura (2012). The end: 50 apocalyptic visions from pop culture that you should know about – before it's too late. San Francisco, California: Zest Books. ISBN 978-0982732250.

- ^ Dinello, Daniel (2005). Technophobia!: science fiction visions of posthuman technology (1st ed.). Austin, Texas: University of Texas press. ISBN 978-0-292-70986-7.

Sources

- Bostrom, Nick (2002). "Existential risks: analyzing human extinction scenarios and related hazards". Journal of Evolution and Technology. 9. ISSN 1541-0099.

- Bostrom, Nick; Cirkovic, Milan M. (September 29, 2011) [Orig. July 3, 2008]. "1: Introduction". In Bostrom, Nick; Cirkovic, Milan M. (eds.). Global Catastrophic Risks. Oxford University Press. pp. 1–30. ISBN 9780199606504. OCLC 740989645.

- Rampino, Michael R. "10: Super-volcanism and other geophysical processes of catastrophic import". In Bostrom & Cirkovic (2011), pp. 205–221.

- Napier, William. "11: Hazards from comets and asteroids". In Bostrom & Cirkovic (2011), pp. 222–237.

- Dar, Arnon. "12: Influence of Supernovae, gamma-ray bursts, solar flares, and cosmic rays on the terrestrial environment". In Bostrom & Cirkovic (2011), pp. 238–262.

- Frame, David; Allen, Myles R. "13: Climate change and global risk". In Bostrom & Cirkovic (2011), pp. 265–286.

- Kilbourne, Edwin Dennis. "14: Plagues and pandemics: past, present, and future". In Bostrom & Cirkovic (2011), pp. 287–304.

- Yudkowsky, Eliezer. "15: Artificial Intelligence as a positive and negative factor in global risk". In Bostrom & Cirkovic (2011), pp. 308–345.

- Wilczek, Frank. "16: Big troubles, imagined and real". In Bostrom & Cirkovic (2011), pp. 346–362.

- Cirincione, Joseph. "18: The continuing threat of nuclear war". In Bostrom & Cirkovic (2011), pp. 381–401.

- Ackerman, Gary; Potter, William C. "19: Catastrophic nuclear terrorism: a preventable peril". In Bostrom & Cirkovic (2011), pp. 402–449.

- Nouri, Ali; Chyba, Christopher F. "20: Biotechnology and biosecurity". In Bostrom & Cirkovic (2011), pp. 450–480.

- Phoenix, Chris; Treder, Mike. "21: Nanotechnology as global catastrophic risk". In Bostrom & Cirkovic (2011), pp. 481–503.

- Bostrom, Nick (2013). "Existential Risk Prevention as Global Priority". Global Policy. 4 (1): 15–31. doi:10.1111/1758-5899.12002. ISSN 1758-5899.

- Leslie, John (1996). The End of the World: The Science and Ethics of Human Extinction. Routledge. ISBN 978-0415140430. OCLC 1158823437.

- Posner, Richard A. (November 11, 2004). Catastrophe: Risk and Response. Oxford University Press. ISBN 978-0-19-534639-8. OCLC 224729961.

- Rees, Martin J. (March 19, 2003). Our Final Hour: A Scientist's Warning : how Terror, Error, and Environmental Disaster Threaten Humankind's Future in this Century--on Earth and Beyond. Basic Books. ISBN 978-0-465-06862-3. OCLC 51315429.

Further reading

- Boulter, Michael (2005). Extinction: Evolution and the End of Man. Columbia University Press. ISBN 978-0231128377.

- de Bellaigue, Christopher, "A World Off the Hinges" (review of Peter Frankopan, The Earth Transformed: An Untold History, Knopf, 2023, 695 pp.), The New York Review of Books, vol. LXX, no. 18 (23 November 2023), pp. 40–42. De Bellaigue writes: "Like the Maya and the Akkadians we have learned that a broken environment aggravates political and economic dysfunction and that the inverse is also true. Like the Qing we rue the deterioration of our soils. But the lesson is never learned. [...] Denialism [...] is one of the most fundamental of human traits and helps explain our current inability to come up with a response commensurate with the perils we face." (p. 41.)

- Brain, Marshall (2020). The Doomsday Book: The Science Behind Humanity's Greatest Threats. Union Square. ISBN 9781454939962.

- Holt, Jim, "The Power of Catastrophic Thinking" (review of Toby Ord, The Precipice: Existential Risk and the Future of Humanity, Hachette, 2020, 468 pp.), The New York Review of Books, vol. LXVIII, no. 3 (February 25, 2021), pp. 26–29. Jim Holt writes (p. 28): "Whether you are searching for a cure for cancer, or pursuing a scholarly or artistic career, or engaged in establishing more just institutions, a threat to the future of humanity is also a threat to the significance of what you do."

- MacCormack, Patricia (2020). "Embracing Death, Opening the World". Australian Feminist Studies. 35 (104): 101–115. doi:10.1080/08164649.2020.1791689. S2CID 221790005. Archived from the original on April 5, 2023. Retrieved February 20, 2023.

- Michael Moyer (September 2010). "Eternal Fascinations with the End: Why We're Suckers for Stories of Our Own Demise: Our pattern-seeking brains and desire to be special help explain our fears of the apocalypse". Scientific American.

- Plait, Philip (2008). Death from the Skies!: These Are the Ways the World Will End. Viking. ISBN 9780670019977.

- Schubert, Stefan; Caviola, Lucius; Faber, Nadira S. (2019). "The Psychology of Existential Risk: Moral Judgments about Human Extinction". Scientific Reports. 9 (1): 15100. Bibcode:2019NatSR...915100S. doi:10.1038/s41598-019-50145-9. PMC 6803761. PMID 31636277.

- Ord, Toby (2020). The Precipice: Existential Risk and the Future of Humanity. Bloomsbury Publishing. ISBN 1526600218

- Torres, Phil. (2017). Morality, Foresight, and Human Flourishing: An Introduction to Existential Risks. Pitchstone Publishing. ISBN 978-1634311427.

- Michel Weber, "Book Review: Walking Away from Empire", Cosmos and History: The Journal of Natural and Social Philosophy, vol. 10, no. 2, 2014, pp. 329–336.

- Doomsday: 10 Ways the World Will End (2016) History Channel

- What would happen to Earth if humans went extinct? Live Science, August 16, 2020.

- A.I. poses human extinction risk on par with nuclear war, Sam Altman and other tech leaders warn. CNBC. May 31, 2023.

- "Treading Thin Air: Geoff Mann on Uncertainty and Climate Change", London Review of Books, vol. 45, no. 17 (7 September 2023), pp. 17–19. "[W]e are in desperate need of a politics that looks [the] catastrophic uncertainty [of global warming and climate change] square in the face. That would mean taking much bigger and more transformative steps: all but eliminating fossil fuels... and prioritizing democratic institutions over markets. The burden of this effort must fall almost entirely on the richest people and richest parts of the world, because it is they who continue to gamble with everyone else's fate." (p. 19.)

Human extinction

Definition and Conceptual Framework

Criteria for Species Extinction

In biology, a species is considered extinct when all of its members have died, leaving no living individuals capable of reproduction and thereby terminating the evolutionary lineage.[5] This definition emphasizes the irreversible cessation of the species' existence, in the wild or in any other form, and does not rely on potential revival through artificial means such as cloning, which remains speculative and unproven for complex multicellular organisms like humans.[6]

The International Union for Conservation of Nature (IUCN) provides standardized criteria for declaring a species extinct. They require that there be no reasonable doubt that the last individual has died, based on exhaustive surveys of known habitats, the absence of sightings over extended periods (often decades), and evidence of population decline to zero.[5] These assessments incorporate factors such as the species' life history, habitat extent, and search effort, and extinction is confirmed only after overlooked populations or vagrants have been ruled out. For instance, the golden toad (Bufo periglenes) was declared extinct in 2004 after no individuals had been observed since 1989 despite intensive monitoring in its restricted Costa Rican habitat.

"Functionally extinct" populations are those whose numbers have fallen below a minimum viable threshold (typically 50–500 individuals for short-term genetic viability, or thousands for long-term adaptability). True extinction, by contrast, demands absolute absence: even a single fertile pair could theoretically restart the population, though inbreeding depression would likely doom isolated remnants.[7]

For humans (Homo sapiens), applying these criteria yields a stark threshold: extinction occurs precisely when the global population reaches zero living individuals, with no survivors in any location, including remote areas, artificial habitats, or cryogenic preservation viable for revival.[5] Compared with smaller or habitat-bound species, humanity's widespread distribution (over 8 billion individuals across diverse biomes as of 2023) and technological capabilities (e.g., bunkers, space habitats) complicate hypothetical scenarios, but the biological endpoint is unchanged. Reproduction requires at least two fertile individuals of opposite sexes, and sustained viability requires a genetically diverse group whose effective population size exceeds 1,000–10,000 to avoid collapse from genetic drift and mutations.[6] A declaration would be unequivocal upon verified total mortality, bypassing prolonged surveys because of global observability via surveillance networks, though post-extinction confirmation is moot.[8]

Distinction from Societal Collapse or Near-Extinction Events

Human extinction refers to the complete and irreversible cessation of the Homo sapiens species, wherein no individuals remain capable of reproduction or survival, eliminating any possibility of recovery or continuation of the human lineage.[1] This outcome contrasts sharply with lesser catastrophes: it precludes not only the persistence of civilization but the biological continuity of the species itself, rendering moot any prospect of societal rebuilding or evolutionary adaptation.[1]

Societal collapse, by contrast, entails the abrupt simplification or disintegration of complex human societies, typically marked by substantial declines in population, economic output, political organization, and technological sophistication across large regions, yet without eradicating the human population globally.[9] Historical instances include the Bronze Age Collapse around 1200 BCE, which dismantled advanced civilizations in the Eastern Mediterranean, such as the Mycenaean Greeks and the Hittites, through interconnected factors like invasions, droughts, and systemic failures. It resulted in depopulation and the loss of literacy and trade networks, but human survivors persisted in decentralized, subsistence-based communities that eventually gave rise to new societies.[9] Similarly, the fall of the Western Roman Empire in the 5th century CE led to fragmented polities and regression in infrastructure, yet human numbers rebounded over centuries without any species-level threat.[9] In existential risk frameworks, such collapses are "endurable" disasters from which humanity can recover, preserving the potential for future advancement, unlike extinction, which terminates that trajectory entirely.[1]

Near-extinction events involve drastic reductions in human population size to critically low levels, often a few thousand breeding individuals. Such reductions heighten the stochastic risk of total extinction through inbreeding, environmental pressures, or further shocks, but ultimately permit demographic rebound and genetic diversification.[10] Genomic analyses indicate a severe bottleneck among early human ancestors approximately 930,000 to 813,000 years ago, with the effective breeding population contracting to around 1,280 individuals for over 100,000 years. The bottleneck was likely triggered by glacial cycles or climatic instability and reshaped genetic diversity, yet populations expanded afterward instead of dying out.[10] Another inferred event around 74,000 years ago, potentially linked to the Toba supervolcano eruption, may have reduced global human numbers to 1,000–10,000 breeding pairs, as suggested by low genetic diversity in non-African populations. Archaeological and genetic data nevertheless show continuity and out-of-Africa migrations shortly thereafter, demonstrating a resilience absent from true extinction scenarios.[11] These episodes underscore that a near-extinction event leaves a viable remnant capable of exponential growth, whereas extinction is absolutely final: no such kernel survives to repopulate.[1]

Temporal Scales: Near-Term vs. Long-Term Extinction

Near-term human extinction risks are those that could materialize within the next few centuries. They are driven primarily by anthropogenic factors such as nuclear holocaust, misaligned superintelligent artificial intelligence, synthetic biology enabling doomsday pathogens, or self-replicating nanomachines capable of disassembling the biosphere.[1] These risks are amplified by the rapid pace of technological advancement, which creates a narrow window of vulnerability before robust safeguards might be developed. Philosopher Nick Bostrom contends that existential risks over timescales of centuries or less are dominated by human-induced threats from advanced technologies, and he estimates a greater than 25% probability of existential disaster in the coming centuries if these threats go unmitigated.[1] Similarly, philosopher Toby Ord puts the overall probability of existential catastrophe (extinction or unrecoverable civilizational collapse) over the next 100 years at 1 in 6, with anthropogenic sources such as artificial intelligence (1 in 10) and engineered pandemics (1 in 30) far outweighing natural baselines.[12]

Long-term extinction risks, by contrast, unfold over geological, evolutionary, or cosmic timescales spanning millions to billions of years, and often involve natural processes beyond direct human influence. Examples include massive asteroid or comet impacts, supervolcanic eruptions, and the loss of Earth's habitability for complex life in approximately 1 billion years, as increasing solar luminosity causes a runaway greenhouse effect and ocean evaporation. The Sun's red giant phase is expected to engulf Earth in approximately 5 billion years, though technological advances enabling multi-planetary expansion could extend human presence beyond Earth before then.[13] Empirical estimates of the background extinction rate from natural causes yield very low annual probabilities: an analysis of Homo sapiens' 200,000-year survival history imposes an upper bound of less than 1 in 14,000 per year (with a 10^{-6} likelihood of exceeding this), which translates to a negligible short-term threat but cumulative inevitability over eons.[14] Ord notes that historical natural risks averaged 1 in 10,000 per century, minor relative to contemporary anthropogenic perils but persistent across deep time.[15]

This temporal dichotomy underscores differing mitigation strategies: near-term risks demand urgent institutional and technological interventions to avert self-inflicted disasters, while long-term risks call for long-horizon planning, such as space colonization or evolutionary adaptation, to extend humanity's persistence against inevitable cosmic endpoints. Bostrom highlights that the dominance of near-term anthropogenic risk shifts the focus from probabilistic natural lotteries to controllable variables, though failure on the former could preclude addressing the latter.[1]

Historical and Intellectual Development

Ancient and Pre-Modern Conceptions

In ancient Greek philosophy, the end of the world was often conceptualized through natural cataclysms, but these were typically part of cyclical processes that did not lead to permanent human extinction. Plato, in works such as Timaeus (c. 360 BCE), described recurrent disasters including floods, fires, plagues, and earthquakes that periodically reset human society, with small groups of survivors preserving knowledge and rebuilding civilization.[16] Similarly, the Stoics, from Zeno of Citium onward in the Hellenistic period, endorsed ekpyrosis, a universal conflagration that consumes the cosmos in fire before its rational reformation and rebirth, ensuring the eternal recurrence of identical events, including human life.[17] Atomists like Democritus (c. 460–370 BCE) and Epicurus (341–270 BCE) allowed for the destruction of worlds through collisions or dissipation into the void, potentially ending local human populations without renewal, though their infinite multiverse implied continuation elsewhere.[16]

Lucretius (c. 99–55 BCE), following Epicurean materialism in De Rerum Natura, explicitly addressed species extinction, stating that "many species must have died out altogether and failed to reproduce their kind" due to environmental mismatches, such as a lack of sustenance or of reproductive viability in malformed early creatures.[18] He extended this reasoning to imply that humanity is also vulnerable, since changing earthly conditions could render survival impossible, with nature producing and discarding forms indiscriminately. Yet he maintained that what is lost is replenished through atomic recombination, precluding absolute finality.[19]

Pre-modern religious eschatologies framed humanity's end within divine or cosmic renewal, not biological termination. In the Abrahamic traditions, Christian thinkers such as Augustine (354–430 CE) anticipated the world's consummation at Christ's Second Coming, followed by judgment, resurrection, and a renewed creation in which the elect persist eternally, rendering naturalistic extinction incompatible with providence.[20] Hindu texts depicted cyclical yugas culminating in the dissolution (pralaya) of the Kali Yuga through fire or flood, but with recreation by Vishnu's avatar Kalki preserving dharma and human continuity across kalpas.[21] These views prioritized metaphysical transformation over empirical species cessation, reflecting a worldview in which human purpose transcended material persistence.[19]

20th-Century Emergence in the Atomic Age

The atomic bombings of Hiroshima on August 6, 1945, and Nagasaki on August 9, 1945, which killed approximately 140,000 and 74,000 people respectively by the end of 1945, prompted widespread contemplation of nuclear weapons' capacity for mass destruction beyond conventional warfare. Carried out by the United States to hasten Japan's surrender in World War II, the bombings demonstrated the fission bomb's lethal power and led scientists and intellectuals to foresee escalatory risks in future conflicts.[22] Norman Cousins, in an August 1945 Saturday Review article, articulated early existential apprehensions, questioning whether humanity could control the atomic force it had unleashed, a force that could potentially lead to self-annihilation.[22]

In the immediate postwar years, Manhattan Project participants founded the Bulletin of the Atomic Scientists in December 1945 to advocate for civilian control of nuclear technology and warn of proliferation dangers. The group introduced the Doomsday Clock in 1947, initially set at seven minutes to midnight to symbolize humanity's proximity to nuclear catastrophe; the clock later evolved into a metric for existential threats more broadly. Bertrand Russell, in a 1946 BBC broadcast, urged international cooperation to avert atomic war, emphasizing that mutual use of such weapons could render vast regions uninhabitable and precipitate global conflict. These efforts reflected a shift from wartime optimism to dread of irreversible escalation, as the Soviet Union tested its first atomic bomb on August 29, 1949, ending the U.S. monopoly.

The advent of thermonuclear weapons amplified extinction concerns. U.S. President Harry Truman authorized hydrogen bomb development on January 31, 1950, leading to the Ivy Mike test on November 1, 1952, which yielded 10.4 megatons, roughly 700 times Hiroshima's yield. The Soviet Union's 1953 test further intensified fears of mutually assured destruction. These alarms culminated in the Russell-Einstein Manifesto, drafted by Russell, signed by Albert Einstein just days before his death in April 1955, and issued on July 9, 1955. It framed nuclear armament as a binary choice: renounce war or risk ending the human race.[23] It warned of superbombs potentially destroying all life on Earth, and it spurred the Pugwash Conferences on Science and World Affairs to address extinction-level risks through scientist diplomacy. This period marked human extinction's transition from speculative philosophy to policy imperative, driven by empirical demonstrations of nuclear potency.

Post-Cold War to Contemporary Era

Following the dissolution of the Soviet Union in 1991, discourse on human extinction shifted from the Cold War preoccupation with nuclear annihilation toward a broader assessment of existential threats, including emerging technologies and non-military hazards. While the perceived probability of all-out nuclear exchange receded, scholars began systematically categorizing risks capable of curtailing humanity's potential indefinitely, including engineered pandemics, misaligned artificial superintelligence, and unintended consequences of nanotechnology. This broadening reflected advances in scientific understanding of anthropogenic vulnerabilities and prompted the first formal analyses of "existential risks": events that could precipitate human extinction or irreversibly devastate civilizational prospects.[24]

A pivotal contribution arrived in 2002 with philosopher Nick Bostrom's paper "Existential Risks: Analyzing Human Extinction Scenarios and Related Hazards," which sorted such risks into categories including "bangs" (sudden extinction events), "crunches" (outcomes in which humanity survives but its potential is permanently thwarted, for example through resource exhaustion), and "shrieks" (futures in which an advanced state is reached but only in a narrow, undesirable form). Bostrom argued that accelerating technological progress amplified these dangers, as humanity approached a "critical phase" where errors could preclude cosmic-scale flourishing, and he urged proactive risk mitigation beyond traditional policy frameworks.[24] This work formalized the field, influencing subsequent quantitative estimates and interdisciplinary inquiry.

Institutional momentum built in the mid-2000s, exemplified by the 2005 founding of the Future of Humanity Institute (FHI) at the University of Oxford under Bostrom's directorship, which brought together experts to model long-term risks and advocate safeguards such as AI alignment research. Complementing this, the Centre for the Study of Existential Risk (CSER) was established at the University of Cambridge in 2012, focusing on multidisciplinary studies of threats from artificial intelligence, biotechnology, and climate extremes, with an emphasis on empirical forecasting and policy interventions. These centers, supported by philanthropists prioritizing long-term human welfare, catalyzed academic output, including probabilistic assessments assigning non-negligible extinction odds to unaligned AI (potentially >10% by 2100 in some models).[25][26]

The 2010s saw integration with effective altruism and longtermism philosophies, prioritizing interventions against high-impact, low-probability catastrophes over immediate humanitarian aid. Bostrom's 2014 book Superintelligence elevated AI misalignment as a paramount concern, positing that superintelligent systems could recursively self-improve to human detriment absent robust control mechanisms. In 2020, Oxford philosopher Toby Ord's The Precipice: Existential Risk and the Future of Humanity synthesized these threads, estimating a 1-in-6 probability of existential catastrophe this century, predominantly from unaligned AI (about 1 in 10) and engineered pandemics (about 1 in 30), with nuclear war at about 1 in 1,000, while criticizing underinvestment in prevention relative to everyday risks like air travel.

Contemporary developments, amplified by the 2020 COVID-19 pandemic's demonstration of biosecurity fragility, have intensified focus on dual-use technologies and on geopolitical tensions that exacerbate proliferation risks. Ord and others contend that systemic biases in academia and policy, which favor observable near-term issues, undermine rigorous existential risk prioritization, though initiatives like the Effective Altruism Global conferences and the 2023 U.S. executive order on AI safety signal growing institutional engagement. Despite progress, the field remains nascent, with ongoing debates over how to aggregate subjective probabilities and over the ethical imperative of safeguarding humanity's "vast" future potential amid technological acceleration.

Natural Catastrophic Risks

Astronomical Impacts and Cosmic Events

Asteroid and comet impacts represent the most studied astronomical threat to human survival. Collisions with near-Earth objects (NEOs) larger than 10 kilometers in diameter can trigger "impact winters" by lofting dust and sulfate aerosols into the stratosphere, blocking sunlight for years and collapsing global food production by halting photosynthesis. The Chicxulub impactor, estimated at 10-15 kilometers in diameter and striking 66 million years ago, exemplifies this mechanism, causing the Cretaceous-Paleogene extinction that eliminated non-avian dinosaurs and approximately 75% of species. For modern humanity, a similar event might not guarantee extinction because of dispersed populations, stored food, and technology, but impactors exceeding 100 kilometers could vaporize oceans, ignite global firestorms, and induce runaway greenhouse effects, rendering the planet uninhabitable.[27]

Based on lunar cratering rates and observations of near-Earth asteroids, the frequency of a giant impact capable of causing human extinction is estimated at 0.03 to 0.3 events per billion years, which corresponds to an annual risk below about 1 in 3 billion.[27] NASA's ongoing surveys, such as the Near-Earth Object Observations Program, have cataloged over 30,000 NEOs, enabling deflection strategies like kinetic impactors (demonstrated by the 2022 DART mission), though extinction-scale objects remain challenging to detect and mitigate far in advance.[14]

Gamma-ray bursts (GRBs), produced by the collapse of massive stars or by neutron star mergers, pose another hazard through directed beams of high-energy radiation. A GRB within 2,000-5,000 light-years, if aligned with Earth, would ionize the atmosphere, destroying the ozone layer and exposing surface life to intense ultraviolet flux for years, potentially triggering ecological collapse and famine. Some researchers have proposed that ancient GRBs contributed to mass extinctions, such as the Late Ordovician event about 440 million years ago.
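As a rough illustration of how the impact frequency quoted earlier in this subsection (0.03 to 0.3 events per billion years) maps onto annual and per-century probabilities, the following sketch treats extinction-scale impacts as a Poisson process. That modelling assumption, and the helper names `prob_at_least_one` and `one_in`, are illustrative and do not come from the cited sources.

```python
import math

def prob_at_least_one(rate_per_year: float, years: float) -> float:
    """Probability of at least one event in `years`, assuming a Poisson process."""
    # -expm1(-x) equals 1 - exp(-x) but stays accurate for very small x.
    return -math.expm1(-rate_per_year * years)

def one_in(p: float) -> float:
    """Express a probability p as the N in 'about 1 in N'."""
    return 1.0 / p

GYR = 1e9  # years per billion years

# Rate range quoted in the text: 0.03 to 0.3 events per billion years.
for events_per_gyr in (0.03, 0.3):
    rate = events_per_gyr / GYR  # events per year
    annual = prob_at_least_one(rate, 1)
    century = prob_at_least_one(rate, 100)
    print(f"{events_per_gyr} per Gyr: annual ~1 in {one_in(annual):.3g}, "
          f"per century ~1 in {one_in(century):.3g}")
```

Under these assumptions, the upper end of the range works out to roughly 1 in 3.3 billion per year, or about 1 in 33 million per century. For rates this small the Poisson probability is almost exactly the rate multiplied by the time span.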
However, GRBs are highly collimated (beaming factor ~1/500), and the Milky Way's low rate of suitable progenitors, coupled with galactic habitability constraints, yields negligible near-term risk; estimates place the chance of an extinction-level GRB at less than 1 in 10 million per century.[28][29][30]

Supernovae, the explosive deaths of massive stars, have analogous effects: within 25-50 light-years, their gamma-ray and cosmic-ray output could erode ozone by 30-50%, elevating UV-induced cancer rates and disrupting phytoplankton, with cascading trophic failures. Geological proxies, including iron-60 isotopes in ocean sediments, indicate that supernovae have occurred within 100-300 light-years and may have contributed to past biosphere stress, and a supernova has been proposed as a factor in the Devonian extinction 360 million years ago. No stars massive enough for imminent supernova lie closer than 160 light-years (Eta Carinae, for example, lies about 7,500 light-years away), and the galaxy's supernova rate (~2 per century) combined with distance requirements yields an extinction probability under 1 in 100,000 years.[31][32][33]

Collectively, these events form part of the natural existential risk that Toby Ord estimates at 1 in 10,000 for the current century, with impacts contributing more than stellar explosions, and all remain orders of magnitude below anthropogenic threats. Upper bounds from paleontological and astronomical data constrain annual natural extinction odds below 1 in 870,000, underscoring humanity's relative insulation from cosmic perils absent human-induced vulnerabilities such as overreliance on fragile infrastructure.[34][3]

Supervolcanic and Geological Cataclysms

Supervolcanic eruptions, classified as Volcanic Explosivity Index (VEI) 8 events ejecting over 1,000 cubic kilometers of material, pose risks through localized pyroclastic flows, widespread ashfall, and stratospheric injection of sulfur dioxide leading to prolonged global cooling known as volcanic winter.[35] Such cooling, potentially 3–10°C for several years, could disrupt agriculture and ecosystems, exacerbating famine and societal strain, though direct human extinction remains improbable given humanity's global distribution and adaptive capacity.[36] The 74,000-year-old Youngest Toba Tuff eruption in Indonesia exemplifies this, depositing ash layers up to 5 cm thick across the Indian subcontinent and injecting ~2,800 megatons of sulfur into the atmosphere, which may have induced a 6–10-year volcanic winter with temperature drops of 3–5°C in the tropics.[37]

The Toba event has been hypothesized to have triggered a human population bottleneck, reducing numbers to 3,000–10,000 breeding individuals through environmental stress and resource scarcity, but genomic evidence from African and Eurasian populations indicates no severe global reduction tied directly to the eruption, with diverse lineages persisting unaffected in refugia.[38][39] Archaeological data from Indian sites show continued human activity after the eruption, undermining claims of near-extinction, though localized impacts in Southeast Asia likely caused significant mortality.[40]

Contemporary supervolcanoes like the Yellowstone Caldera, which produced major caldera-forming eruptions about 2.08 million, 1.3 million, and 640,000 years ago, carry low eruption probabilities; the annual chance of any eruption is approximately 0.001%, with supereruptions occurring roughly every 600,000–730,000 years and the most recent about 640,000 years ago.[41][42] A hypothetical Yellowstone supereruption would blanket the U.S. Midwest in 1–3 meters of ash, causing regional devastation and short-term global cooling of 2–5°C for 3–10 years, potentially leading to crop failures and billions of deaths from starvation, yet sparing most of humanity outside North America because of dispersed populations and food reserves.[43][36] United States Geological Survey assessments emphasize that such events would not eradicate the species, as historical precedents like Toba demonstrate human resilience, though modern agricultural dependence could amplify indirect effects.[43]

Other geological cataclysms, such as magnitude 9+ earthquakes and the tsunamis they induce, lack the global scale for extinction; the 2004 Sumatra event, with a moment magnitude of 9.1–9.3, killed ~230,000 but affected only regional populations.[44] Large igneous provinces, like the Siberian Traps linked to the end-Permian extinction 252 million years ago via massive flood basalts and CO2 emissions, represent ancient risks not replicable on human timescales, with no active analogs threatening total extinction today.[35] Overall, empirical data from paleoclimate records and monitoring indicate that supervolcanic risks contribute negligibly to near-term human extinction probabilities, estimated below 1 in 10,000 over centuries, which favors mitigation through surveillance rather than existential alarm.[3]

Natural Pandemics and Evolutionary Pressures

Natural pandemics have inflicted severe mortality on human populations but have consistently failed to approach extinction thresholds. The Black Death (1347–1351), driven by Yersinia pestis, killed an estimated 75 to 200 million people across Eurasia and North Africa, reducing Europe's population by 30–50% and contributing to a global death toll representing up to 40% of the pre-event population of approximately 475 million.[45][46] Similarly, the 1918 H1N1 influenza pandemic caused 50 million deaths worldwide amid a global population of 1.8 billion, yielding a mortality rate of about 3%, with recovery facilitated by surviving immune cohorts and non-uniform spread.[46] No recorded natural pandemic has eliminated more than a fraction of humanity, as geographic isolation, heterogeneous immunity, and pathogen burnout (where high lethality curtails transmission) prevent total wipeout.[47]

The biological dynamics of host-pathogen coevolution further diminish extinction risks from natural outbreaks. Virulent strains often evolve toward lower lethality to maximize replication and transmission, as excessively deadly variants self-limit by killing hosts too quickly to sustain chains of infection.[47] Human genetic diversity ensures pockets of resistance emerge rapidly, while large population sizes, now over 8 billion, create resilient reservoirs even under high fatality scenarios.[14] Experts, including Toby Ord, put the probability of natural pandemic-induced extinction this century at approximately 1 in 10,000, far below anthropogenic bio-risks, grounded in the empirical track record of Homo sapiens enduring such events for over 300,000 years without collapse.[48][49]

Evolutionary pressures, including selection from endemic diseases and environmental shifts, have shaped human resilience rather than driven the species toward extinction. Natural selection continues to favor traits like disease resistance, evident in alleles such as CCR5-delta32, which confers protection against HIV and has been linked to historical plagues, but it operates slowly against our vast, interconnected gene pool.[50] Unlike smaller hominin populations vulnerable to climatic volatility and resource depletion, modern humans' scale buffers stochastic extinction risks, with annual natural background rates bounded below 1 in 100,000 based on lineage survival data.[14][51] While rapid environmental changes could theoretically impose maladaptive pressures, human adaptability via behavioral and cultural mechanisms, independent of genetic fixation, has historically averted speciation or extinction equilibria seen in other taxa.[52]

Anthropogenic Existential Risks

Nuclear Warfare and Weapons Proliferation