Water supply network

A water supply network or water supply system is a system of engineered hydrologic and hydraulic components that provide water supply. A water supply system typically includes the following:

- A drainage basin (see water purification – sources of drinking water)

- A raw water collection point (above or below ground) where the water accumulates, such as a lake, a river, or groundwater from an underground aquifer. Raw water may be transferred using uncovered ground-level aqueducts, covered tunnels, or underground pipes to water purification facilities.

- Water purification facilities. Treated water is transferred using water pipes (usually underground).

- Water storage facilities such as reservoirs, water tanks, or water towers. Smaller water systems may store the water in cisterns or pressure vessels. Tall buildings may also need to store water locally in pressure vessels in order for the water to reach the upper floors.

- Additional water pressurizing components such as pumping stations may need to be situated at the outlet of underground or aboveground reservoirs or cisterns (if gravity flow is impractical).

- A pipe network for distribution of water to consumers (which may be private houses or industrial, commercial, or institutional establishments) and other usage points (such as fire hydrants).

- Connections to the sewers (underground pipes, or aboveground ditches in some developing countries) are generally found downstream of the water consumers, but the sewer system is considered to be a separate system, rather than part of the water supply system.

Water supply networks are often run by public utilities of the water industry.

Water extraction and raw water transfer

Raw (untreated) water is drawn from a surface water source (such as an intake on a lake or a river) or from a groundwater source (such as a water well drawing from an underground aquifer) within the watershed that provides the water resource.

The raw water is transferred to the water purification facilities using uncovered aqueducts, covered tunnels or underground water pipes.

Water treatment

Virtually all large systems must treat the water, a requirement that is tightly regulated by global, state and federal agencies, such as the World Health Organization (WHO) or the United States Environmental Protection Agency (EPA). Water treatment must occur before the product reaches the consumer and again afterwards (when it is discharged). Water purification usually occurs close to the final delivery points to reduce pumping costs and the chances of the water becoming contaminated after treatment.

Traditional surface water treatment plants generally consist of three steps: clarification, filtration and disinfection. Clarification refers to the separation of particles (dirt, organic matter, etc.) from the water stream. Chemical addition (e.g. alum, ferric chloride) destabilizes the particle charges and prepares them for clarification either by settling or floating out of the water stream. Sand, anthracite or activated carbon filters refine the water stream, removing smaller particulate matter. While other methods of disinfection exist, the preferred method is chlorine addition. Chlorine effectively kills bacteria and most viruses and maintains a residual to protect the water supply through the supply network.

Water distribution network

The product, delivered to the point of consumption, is called potable water if it meets the water quality standards required for human consumption.

The water in the supply network is maintained at positive pressure to ensure that water reaches all parts of the network, that a sufficient flow is available at every take-off point and to ensure that untreated water in the ground cannot enter the network. The water is typically pressurised by pumping the water into storage tanks constructed at the highest local point in the network. One network may have several such service reservoirs.
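The link between tank elevation and network pressure can be sketched with the hydrostatic relation (pressure equals density times gravity times height of the water column). The snippet below is a minimal illustration that ignores friction losses; the elevations are hypothetical values, and the function name is an assumption made for this example.

```python
RHO_WATER = 1000.0  # kg/m^3, approximate density of fresh water
G = 9.81            # m/s^2, rounded standard gravity

def static_pressure_kpa(tank_level_m: float, tap_level_m: float) -> float:
    """Gauge pressure at a tap due to the water column above it (no friction losses)."""
    head_m = tank_level_m - tap_level_m
    return RHO_WATER * G * head_m / 1000.0  # Pa converted to kPa

# Hypothetical example: water surface at 60 m elevation, tap at 25 m elevation.
pressure = static_pressure_kpa(60.0, 25.0)
print(f"Static pressure at the tap: {pressure:.1f} kPa")
```

A tap located above the tank's water surface would give a negative result, which is why service reservoirs are placed at the highest local point.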

In small domestic systems, the water may be pressurised by a pressure vessel or supplied from an underground cistern (although the latter needs additional pressurizing). This eliminates the need for a water tower or any other elevated water reserve to supply the water pressure.

These systems are usually owned and maintained by local governments such as cities or other public entities, but are occasionally operated by a commercial enterprise (see water privatization). Water supply networks are part of the master planning of communities, counties, and municipalities. Their planning and design requires the expertise of city planners and civil engineers, who must consider many factors, such as location, current demand, future growth, leakage, pressure, pipe size, pressure loss, fire fighting flows, etc.—using pipe network analysis and other tools.
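To illustrate how pipe size and pressure loss interact in such an analysis, the sketch below uses the Hazen-Williams formula in SI units, one common empirical relation for friction head loss. The article does not name a specific formula, so this choice, the roughness coefficient of 130, and the pipe data are all assumptions made for illustration.

```python
def hazen_williams_head_loss(length_m: float, flow_m3s: float,
                             diameter_m: float, c_factor: float = 130.0) -> float:
    """Friction head loss in metres (Hazen-Williams, SI form)."""
    return (10.67 * length_m * flow_m3s ** 1.852
            / (c_factor ** 1.852 * diameter_m ** 4.8704))

# Hypothetical main: 1000 m long, carrying 0.05 m^3/s, for two candidate diameters.
loss_250mm = hazen_williams_head_loss(1000.0, 0.05, 0.25)
loss_300mm = hazen_williams_head_loss(1000.0, 0.05, 0.30)
print(f"250 mm pipe: {loss_250mm:.2f} m, 300 mm pipe: {loss_300mm:.2f} m")
```

The larger diameter loses less head for the same flow, which is the trade-off between pipe cost and delivered pressure that the designer has to weigh.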

As water passes through the distribution system, the water quality can degrade by chemical reactions and biological processes. Corrosion of metal pipe materials in the distribution system can cause the release of metals into the water with undesirable aesthetic and health effects. Release of iron from unlined iron pipes can result in customer reports of "red water" at the tap. Release of copper from copper pipes can result in customer reports of "blue water" and/or a metallic taste. Release of lead can occur from the solder used to join copper pipe together or from brass fixtures. Copper and lead levels at the consumer's tap are regulated to protect consumer health.

Utilities will often adjust the chemistry of the water before distribution to minimize its corrosiveness. The simplest adjustment involves control of pH and alkalinity to produce a water that tends to passivate corrosion by depositing a layer of calcium carbonate. Corrosion inhibitors are often added to reduce release of metals into the water. Common corrosion inhibitors added to the water are phosphates and silicates.

Maintaining biologically safe drinking water is another goal in water distribution. Typically, a chlorine-based disinfectant, such as sodium hypochlorite or monochloramine, is added to the water as it leaves the treatment plant. Booster stations can be placed within the distribution system to ensure that all areas of the distribution system have adequate sustained levels of disinfection.

Topologies

Like electric power lines, roads, and microwave radio networks, water systems may have a loop or branch network topology, or a combination of both. The piping networks are circular or rectangular. In a looped network, if any one section of water distribution main fails or needs repair, that section can be isolated without disrupting all users on the network.
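The effect of topology on isolation can be sketched by treating the network as a graph and checking which nodes remain connected to the source once a pipe is closed. The two small networks and the function name below are hypothetical and serve only to illustrate the idea.

```python
from collections import deque

def served_nodes(pipes, source, closed_pipe=None):
    """Return the set of nodes still reachable from the source when one pipe is closed."""
    adjacency = {}
    for a, b in pipes:
        if closed_pipe is not None and {a, b} == set(closed_pipe):
            continue  # skip the isolated section
        adjacency.setdefault(a, set()).add(b)
        adjacency.setdefault(b, set()).add(a)
    reached = {source}
    queue = deque([source])
    while queue:  # breadth-first search from the source
        node = queue.popleft()
        for neighbour in adjacency.get(node, ()):
            if neighbour not in reached:
                reached.add(neighbour)
                queue.append(neighbour)
    return reached

# Hypothetical networks fed from source "S".
branch = [("S", "A"), ("A", "B"), ("B", "C"), ("A", "D")]
loop = [("S", "A"), ("A", "B"), ("B", "C"), ("C", "D"), ("D", "A")]

branch_served = served_nodes(branch, "S", closed_pipe=("A", "B"))
loop_served = served_nodes(loop, "S", closed_pipe=("A", "B"))
print(sorted(branch_served), sorted(loop_served))
```

In the branch layout, closing pipe A-B cuts off nodes B and C, while in the looped layout every node is still reached through the alternative path.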

Most systems are divided into zones.[1] Factors determining the extent or size of a zone can include hydraulics, telemetry systems, history, and population density. Sometimes systems are designed for a specific area and are then modified to accommodate development. Terrain affects hydraulics and some forms of telemetry. While each zone may operate as a stand-alone system, there is usually some arrangement to interconnect zones in order to manage equipment failures or system failures.

Water network maintenance

Water supply networks usually represent the majority of assets of a water utility. Systematic documentation of maintenance works using a computerized maintenance management system (CMMS) is key to the successful operation of a water utility.[why?]

Sustainable urban water supply

A sustainable urban water supply network covers all the activities related to provision of potable water. Sustainable development is of increasing importance for the water supply to urban areas. Incorporating innovative water technologies into water supply systems improves water supply from sustainable perspectives. The development of innovative water technologies provides flexibility to the water supply system, generating a fundamental and effective means of sustainability based on an integrated real options approach.[2]

Water is an essential natural resource for human existence. It is needed in every industrial and natural process. For example, it is used for oil refining, for liquid-liquid extraction in hydro-metallurgical processes, for cooling, for scrubbing in the iron and steel industry, and for several operations in food processing facilities.

It is necessary to adopt a new approach to design urban water supply networks; water shortages are expected in the forthcoming decades and environmental regulations for water utilization and waste-water disposal are increasingly stringent.

To achieve a sustainable water supply network, new sources of water must be developed and environmental pollution must be reduced.

The price of water is increasing, so less water must be wasted and actions must be taken to prevent pipeline leakage. Shutting down the supply service to fix leaks is less and less tolerated by consumers. A sustainable water supply network must monitor the freshwater consumption rate and the waste-water generation rate.

Many of the urban water supply networks in developing countries face problems related to population increase, water scarcity, and environmental pollution.

Population growth

In 1900 just 13% of the global population lived in cities. By 2005, 49% of the global population lived in urban areas. This share is predicted to rise to 60% by 2030.[3] Attempts by governments to expand water supply are costly and often not sufficient. The building of new illegal settlements makes it hard to map, and make connections to, the water supply, and leads to inadequate water management.[4] In 2002, there were 158 million people with inadequate water supply.[5] An increasing number of people live in slums, in inadequate sanitary conditions, and are therefore at risk of disease.

Water scarcity

Potable water is not well distributed in the world. 1.8 million deaths are attributed to unsafe water supplies every year, according to the WHO.[6] Many people have no access to potable water, or no access to potable water of adequate quality and quantity, though water itself is abundant. Poor people in developing countries can be close to major rivers, or be in high rainfall areas, yet not have access to potable water at all. There are also people living where lack of water causes millions of deaths every year.

Where the water supply system cannot reach the slums, people use hand pumps, pit wells, rivers, canals, swamps and any other source of water. In most cases the water quality is unfit for human consumption. The principal cause of water scarcity is the growth in demand. Water is taken from remote areas to satisfy the needs of urban areas. Another reason for water scarcity is climate change: precipitation patterns have changed; rivers have decreased their flow; lakes are drying up; and aquifers are being emptied.

Governmental issues

In developing countries many governments are corrupt and poor, and they respond to these problems with frequently changing policies and unclear agreements.[7] Water demand exceeds supply, and household and industrial water supplies are prioritised over other uses, which leads to water stress.[8] Potable water has a price in the market; water often becomes a business for private companies, which earn a profit by putting a higher price on water, which imposes a barrier for lower-income people. The Millennium Development Goals propose the changes required.

Goal 6 of the United Nations' Sustainable Development Goals is to "Ensure availability and sustainable management of water and sanitation for all".[9] This is in recognition of the human right to water and sanitation, which was formally acknowledged at the United Nations General Assembly in 2010, that "clean drinking water and sanitation are essential to the recognition of all human rights".[10] Sustainable water supply includes ensuring availability, accessibility, affordability and quality of water for all individuals.

In advanced economies, the problems are about optimising existing supply networks. These economies have usually had continuing evolution, which allowed them to construct infrastructure to supply water to people. The European Union has developed a set of rules and policies to overcome expected future problems.

There are many international documents with interesting, but not very specific, ideas and therefore they are not put into practice.[11] Recommendations have been made by the United Nations, such as the Dublin Statement on Water and Sustainable Development.

Optimizing the water supply network

The yield of a system can be measured by either its value or its net benefit. For a water supply system, the true value or the net benefit is a reliable water supply service having adequate quantity and good quality of the product. For example, if the existing water supply of a city needs to be extended to supply a new municipality, the new branch of the system must be designed to supply the new needs while maintaining supply to the old system.

Single-objective optimization

The design of a system is governed by multiple criteria, one being cost. If the benefit is fixed, the least-cost design results in maximum benefit. However, the least-cost approach normally results in a minimum capacity for a water supply network. A minimum cost model usually searches for the least-cost solution (in pipe sizes) while satisfying hydraulic constraints such as required output pressures, maximum pipe flow rates and pipe flow velocities. The cost is a function of pipe diameters; therefore the optimization problem consists of finding a minimum cost solution by optimising pipe sizes to provide the minimum acceptable capacity.
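A least-cost search of this kind can be sketched for a tiny series network by enumerating every combination of commercial diameters and keeping the cheapest one that meets the hydraulic constraints. Everything in the example is assumed for illustration: the pipe catalogue and prices, the two-pipe layout, the constraint limits, and the use of the Hazen-Williams formula for head loss. Real design tools use far more efficient search methods than brute force.

```python
import math
from itertools import product

# Hypothetical catalogue: diameter in metres -> cost per metre of pipe.
CATALOGUE = {0.10: 20.0, 0.15: 35.0, 0.20: 55.0, 0.25: 80.0}

# Hypothetical series network: source -> node 1 -> node 2.
PIPES = [
    {"length_m": 800.0, "flow_m3s": 0.030},  # carries the demand of nodes 1 and 2
    {"length_m": 600.0, "flow_m3s": 0.012},  # carries the demand of node 2 only
]
SOURCE_HEAD_M = 50.0     # hydraulic head available at the source
MIN_NODE_HEAD_M = 30.0   # required head at every node
MAX_VELOCITY_MS = 2.0    # upper limit on pipe flow velocity

def head_loss_m(length_m, flow_m3s, diameter_m, c_factor=130.0):
    """Hazen-Williams friction head loss (SI form), assumed here for illustration."""
    return (10.67 * length_m * flow_m3s ** 1.852
            / (c_factor ** 1.852 * diameter_m ** 4.8704))

def is_feasible(diameters):
    """Check the velocity limit in each pipe and the minimum head at each node."""
    head = SOURCE_HEAD_M
    for pipe, d in zip(PIPES, diameters):
        velocity = pipe["flow_m3s"] / (math.pi * d ** 2 / 4.0)
        if velocity > MAX_VELOCITY_MS:
            return False
        head -= head_loss_m(pipe["length_m"], pipe["flow_m3s"], d)
        if head < MIN_NODE_HEAD_M:
            return False
    return True

def cost(diameters):
    """Total pipe cost: unit price of each chosen diameter times pipe length."""
    return sum(CATALOGUE[d] * pipe["length_m"] for pipe, d in zip(PIPES, diameters))

feasible = [combo for combo in product(CATALOGUE, repeat=len(PIPES)) if is_feasible(combo)]
best = min(feasible, key=cost)
print("Least-cost feasible diameters:", best, "cost:", cost(best))
```

The result is the smallest set of pipes that just satisfies the constraints, which is exactly the minimum-capacity outcome described above.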

Multi-objective optimization

However, according to the authors of the paper entitled, “Method for optimizing design and rehabilitation of water distribution systems”, “the least capacity is not a desirable solution to a sustainable water supply network in a long term, due to the uncertainty of the future demand”.[12] It is preferable to provide extra pipe capacity to cope with unexpected demand growth and with water outages. The problem changes from a single objective optimization problem (minimizing cost), to a multi-objective optimization problem (minimizing cost and maximizing flow capacity).

Weighted sum method

To solve a multi-objective optimization problem, it is necessary to convert the problem into a single-objective optimization problem by using adjustments such as a weighted sum of objectives or an ε-constraint method. The weighted sum approach assigns a weight to each objective and then combines the weighted objectives into a single objective function that can be solved by single-objective optimization. This method is not entirely satisfactory, because the weights are difficult to choose correctly, so this approach cannot guarantee a solution that is optimal for all the original objectives.
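The sensitivity to the chosen weights can be seen in a small sketch. The three candidate designs, their costs and capacities, and the normalisation by the maximum value are all hypothetical choices made for this example.

```python
# Hypothetical candidate designs: (name, cost, flow capacity).
DESIGNS = [
    ("small", 100.0, 40.0),
    ("medium", 150.0, 70.0),
    ("large", 230.0, 90.0),
]

def weighted_sum_choice(designs, cost_weight):
    """Pick the design minimising a weighted sum of normalised cost and (negated) capacity."""
    max_cost = max(cost for _, cost, _ in designs)
    max_capacity = max(capacity for _, _, capacity in designs)

    def score(design):
        _, cost, capacity = design
        # Cost is minimised, capacity is maximised, hence the minus sign.
        return (cost_weight * cost / max_cost
                - (1.0 - cost_weight) * capacity / max_capacity)

    return min(designs, key=score)[0]

for weight in (0.9, 0.5, 0.1):
    print(weight, weighted_sum_choice(DESIGNS, weight))
```

Each weight selects a different design, so the answer reflects the analyst's weighting as much as the underlying trade-off.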

The constraint method

The second approach (the constraint method) chooses one of the objective functions as the single objective and treats the other objective functions as constraints with a limited value. However, the optimal solution depends on the pre-defined constraint limits.
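A minimal sketch of the constraint method follows: cost is kept as the objective and capacity becomes a constraint with a lower limit. The candidate designs and the limits are hypothetical.

```python
# Hypothetical candidate designs: (name, cost, flow capacity).
DESIGNS = [
    ("small", 100.0, 40.0),
    ("medium", 150.0, 70.0),
    ("large", 230.0, 90.0),
]

def epsilon_constraint_choice(designs, min_capacity):
    """Minimise cost subject to capacity >= min_capacity; return None if nothing qualifies."""
    feasible = [d for d in designs if d[2] >= min_capacity]
    if not feasible:
        return None
    return min(feasible, key=lambda d: d[1])[0]

for limit in (60.0, 80.0, 100.0):
    print(limit, epsilon_constraint_choice(DESIGNS, limit))
```

Raising the capacity limit changes the selected design, and a limit that no design can meet leaves the problem without a solution, which shows how strongly the result depends on the pre-defined limits.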

Sensitivity analysis

Multi-objective optimization problems involve computing the tradeoff between costs and benefits, resulting in a set of solutions that can be used for sensitivity analysis and tested in different scenarios. There is no single optimal solution that satisfies the global optimality of both objectives. As both objectives are to some extent contradictory, it is not possible to improve one objective without sacrificing the other. In some cases it is necessary to use a different approach (e.g. Pareto analysis) and choose the best combination.
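The set of tradeoff solutions can be sketched as a Pareto front: the designs for which no other design is at least as good in both objectives and strictly better in one. The candidate designs below are hypothetical.

```python
# Hypothetical candidate designs: (name, cost, flow capacity).
CANDIDATES = [
    ("A", 100.0, 40.0),
    ("B", 150.0, 70.0),
    ("C", 160.0, 60.0),  # costs more than B and delivers less
    ("D", 230.0, 90.0),
]

def pareto_front(designs):
    """Return names of designs not dominated in (lower cost, higher capacity)."""
    front = []
    for name, cost, capacity in designs:
        dominated = any(
            other_cost <= cost and other_capacity >= capacity
            and (other_cost < cost or other_capacity > capacity)
            for _, other_cost, other_capacity in designs
        )
        if not dominated:
            front.append(name)
    return front

print(pareto_front(CANDIDATES))
```

Design C drops out because B is both cheaper and larger. The remaining designs are the ones among which a decision maker still has to choose.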

Operational constraints

Returning to the cost objective function, any solution that minimizes it must not violate any of the operational constraints. Generally this cost is dominated by the energy cost for pumping. “The operational constraints include the standards of customer service, such as: the minimum delivered pressure, in addition to the physical constraints such as the maximum and the minimum water levels in storage tanks to prevent overtopping and emptying respectively.”[13]

In order to optimize the operational performance of the water supply network, at the same time as minimizing the energy costs, it is necessary to predict the consequences of different pump and valve settings on the behavior of the network.
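As a rough sketch of this kind of prediction, the example below simulates a single pump filling a single tank over six periods and searches all on/off schedules for the cheapest one that keeps the tank within its limits. The tariff, demands, pump data and tank limits are invented for illustration, and the model is a simple volume balance rather than a hydraulic simulation.

```python
from itertools import product

# All figures below are hypothetical.
TARIFF = [0.05, 0.05, 0.12, 0.20, 0.20, 0.08]     # energy price per kWh in each period
DEMAND_M3 = [20.0, 30.0, 50.0, 60.0, 50.0, 30.0]  # water drawn from the tank per period
PUMP_VOLUME_M3 = 80.0    # volume delivered per period when the pump runs
PUMP_ENERGY_KWH = 40.0   # energy used per period when the pump runs
TANK_MIN_M3 = 50.0       # below this the tank is considered empty
TANK_MAX_M3 = 200.0      # above this the tank would overtop
INITIAL_VOLUME_M3 = 100.0

def simulate(schedule):
    """Return the energy cost of a schedule, or None if the tank leaves its limits."""
    volume = INITIAL_VOLUME_M3
    total_cost = 0.0
    for pump_on, price, demand in zip(schedule, TARIFF, DEMAND_M3):
        if pump_on:
            volume += PUMP_VOLUME_M3
            total_cost += PUMP_ENERGY_KWH * price
        volume -= demand
        if not TANK_MIN_M3 <= volume <= TANK_MAX_M3:
            return None
    return total_cost

# Enumerate every on/off schedule and keep those that respect the tank limits.
results = {s: simulate(s) for s in product((0, 1), repeat=len(TARIFF))}
feasible = {s: c for s, c in results.items() if c is not None}
best_schedule = min(feasible, key=feasible.get)
best_cost = feasible[best_schedule]
print("Cheapest feasible schedule:", best_schedule, "cost:", round(best_cost, 2))
```

Pumping only in the cheapest periods is not always possible: running the pump in both of the first two periods would overtop the tank in this example, so the search has to accept one more expensive period.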

Apart from Linear and Non-linear Programming, there are other methods and approaches to design, to manage and operate a water supply network to achieve sustainability—for instance, the adoption of appropriate technology coupled with effective strategies for operation and maintenance. These strategies must include effective management models, technical support to the householders and industries, sustainable financing mechanisms, and development of reliable supply chains. All these measures must ensure the following: system working lifespan; maintenance cycle; continuity of functioning; down time for repairs; water yield and water quality.

Sustainable development

In an unsustainable system there is insufficient maintenance of the water networks, especially in the major pipe lines in urban areas. The system deteriorates and then needs rehabilitation or renewal.

Householders and sewage treatment plants can both make the water supply networks more efficient and sustainable. Major improvements in eco-efficiency are gained through systematic separation of rainfall and wastewater. Membrane technology can be used for recycling wastewater.

The municipal government can develop a “Municipal Water Reuse System”, which is a current approach to managing rainwater. It applies a water reuse scheme for treated wastewater, on a municipal scale, to provide non-potable water for industry, household and municipal uses. This technology consists of separating the urine fraction of sanitary wastewater and collecting it to recycle its nutrients.[14] The feces and graywater fraction is collected, together with organic wastes from the households, using a gravity sewer system, continuously flushed with non-potable water. The water is treated anaerobically and the biogas is used for energy production.

One effective way to achieve sustainable water management is to shift emphasis towards decentralized water projects, such as drip irrigation diffusion in India.[15] This project covers large spatial areas while relying on individual technological adoption decisions, offering scalable solutions that can mitigate water scarcity and enhance agricultural productivity.

Another method is to promote community engagement and resistance against unsustainable water infrastructure projects. Grassroots movements, as observed in anti-dam protests in various countries, play a crucial role in challenging dominant development narratives and advocating for more socially and ecologically just water management practices.[15]

Municipalities and other forms of local governments should also invest in innovative technologies, such as membrane technology for wastewater recycling, and develop policy frameworks that incentivize eco-efficient practices. Municipal water reuse systems, as demonstrated in implementation, offer promising avenues for integrating wastewater treatment and resource recovery into urban water networks.[15]

The sustainable water supply system is an integrated system including water intake, water utilization, wastewater discharge and treatment and water environmental protection. It requires reducing freshwater and groundwater usage in all sectors of consumption. Developing sustainable water supply systems is a growing trend, because it serves people's long-term interests.[16] There are several ways to reuse and recycle the water, in order to achieve long-term sustainability, such as:

- Gray water re-use and treatment: gray water is wastewater coming from baths, showers, sinks and washbasins. If this water is treated it can be used as a source of water for uses other than drinking. Depending on the type of gray water and its level of treatment, it can be re-used for irrigation and toilet flushing. According to an investigation about the impacts of domestic grey water reuse on public health, carried out by the New South Wales Health Centre in Australia in the year 2000[citation needed], grey water contains less nitrogen and fecal pathogenic organisms than sewage, and the organic content of grey water decomposes more rapidly.

- Ecological treatment systems use little energy: there are many applications in gray water re-use, such as reed beds, soil treatment systems and plant filters. This process is ideal for gray water re-use, because of easier maintenance and higher removal rates of organic matter, ammonia, nitrogen and phosphorus.

Other possible approaches to scoping models for water supply, applicable to any urban area, include the following:

- Sustainable drainage system

- Borehole extraction

- Intercluster groundwater flow

- Canal and river extraction

- Aquifer storage

- A more user-friendly indoor water use[clarification needed]

The Dublin Statement on Water and Sustainable Development is a good example of the new trend to overcome water supply problems. This statement, suggested by advanced economies, has come up with some principles that are of great significance to urban water supply. These are:

- Fresh water is a finite and vulnerable resource, essential to sustain life, development and the environment.

- Water development and management should be based on a participatory approach, involving users, planners and policy-makers at all levels.

- Women play a central part in the provision, management and safeguarding of water. Institutional arrangements should reflect the role of women in water provision and protection.

- Water has an economic value in all its competing uses and should be recognized as an economic good.[17]

From these statements, developed in 1992, several policies have been created to give importance to water and to move urban water system management towards sustainable development. The Water Framework Directive by the European Commission is a good example of a policy that has been built on such earlier policies.

Future approaches

There is a great need for more sustainable water supply systems. To achieve sustainability several factors must be tackled at the same time: climate change, rising energy cost, and rising populations. All of these factors provoke change and put pressure on management of available water resources.[18]

An obstacle to transforming conventional water supply systems is the amount of time needed to achieve the transformation. More specifically, transformation must be implemented by municipal legislation bodies, which always need short-term solutions too.[citation needed] Another obstacle to achieving sustainability in water supply systems is the insufficient practical experience with the technologies required, and the missing know-how about the organization and the transition process.

Urban water infrastructure faces several challenges that undermine its sustainability and resilience. One critical issue highlighted in recent research is the vulnerability of water networks to climate variability and extreme weather events. Poor seasonal rains, as observed in the case of the Panama Canal's lock and dam infrastructure, exemplify how inadequate water supply can strain water-intensive infrastructure, raising questions about engineering legitimacy and the reliability of water systems.[19]

Another key challenge is the unequal development associated with large-scale water infrastructure projects such as dams and canals. Such projects, while aimed at promoting economic growth, often actually reproduce social and economic inequalities by displacing rural communities and marginalizing indigenous populations.[19] This phenomenon of "accumulation by dispossession" further emphasizes the need for more equitable and inclusive approaches to water infrastructure development.[19]

Possible ways to improve this situation are simulating the network, implementing pilot projects, and learning from the costs involved and the benefits achieved.

References

- ^ Herrera, Manuel (2011). Improving water network management by efficient division into supply clusters. Riunet (Tesis doctoral). PhD thesis, Universitat Politecnica de Valencia. doi:10.4995/Thesis/10251/11233. hdl:10251/11233.

- ^ Zhang, Stephen X, Babovic, Vladan (2012). "A real options approach to the design and architecture of water supply systems using innovative water technologies under uncertainty". Journal of Hydroinformatics. 14 (1): 13–29. doi:10.2166/hydro.2011.078.

- ^ "World Urbanization Prospects: The 2005 Revision". www.un.org. Retrieved 2018-02-26.

- ^ Water : a shared responsibility. UNESCO World Water Assessment Programme (United Nations). Paris: United Nations Educational, Scientific and Cultural Organization (UNESCO). 2006. ISBN 9231040065. OCLC 69021428.

- ^ "Reports | JMP". washdata.org. Retrieved 2018-02-26.

- ^ "Water, sanitation and hygiene links to health". WHO. Retrieved 2018-02-26.

- ^ Escolero, O.; Kralisch, S.; Martínez, S.E.; Perevochtchikova, M. (2016). "Diagnóstico y análisis de los factores que influyen en la vulnerabilidad de las fuentes de abastecimiento de agua potable a la Ciudad de México, México". Boletín de la Sociedad Geológica Mexicana (in Spanish). 68 (3): 409–427. doi:10.18268/BSGM2016v68n3a3.

- ^ Vairavamoorthy, Kala; Gorantiwar, Sunil D.; Pathirana, Assela (2008). "Managing urban water supplies in developing countries – Climate change and water scarcity scenarios". Physics and Chemistry of the Earth, Parts A/B/C. 33 (5): 330–339. Bibcode:2008PCE....33..330V. doi:10.1016/j.pce.2008.02.008.

- ^ "Goal 6 | Department of Economic and Social Affairs". sdgs.un.org. Retrieved 2020-11-20.

- ^ Solon, Pablo (28 July 2010). "The Human Right to Water and Sanitation". United Nations General Assembly.

- ^ van der Steen, Peter. (2006). “Integrated Urban Water Management: towards sustainability”. Environmental Resources Department. UNESCO-IHE Institute for Water Education. SWITCH.

- ^ "Method for optimizing design and rehabilitation of water distribution systems". Zheng Y. Wu, Thomas M. Walski, Robert F. Mankowski, Gregg A. Herrin, Wayne R. Hartell, Jonathan DeCarlo. 2003-03-04.

- ^ Martínez, Fernando; Hernández, Vicente; Alonso, José Miguel; Rao, Zhengfu; Alvisi, Stefano (2007-01-01). "Optimizing the operation of the Valencia water-distribution network". Journal of Hydroinformatics. 9 (1): 65–78. doi:10.2166/hydro.2006.018. ISSN 1464-7141.

- ^ Craddock Consulting Engineering. “Recycling treated municipal wastewater for industrial water use”. (2007).

- ^ a b c Birkenholtz, Trevor (2023). "Geographies of big water infrastructure: Contemporary insights and future research opportunities". Geography Compass Journal. 17 (8).

- ^ Qiang, He. Li Zhai Jun, Huang. “Application of Sustainable Water System the Demonstration in Chengdu (China)”. (2008).

- ^ International Conference on Water and the Environment. "The Dublin Statement on Water and Sustainable Development – UN Documents: Gathering a body of global agreements". www.un-documents.net. Retrieved 2018-02-26.

- ^ Last, Ewan. Mackay, Rae. Developing a New Scoping Model for Urban Sustainability. (2007). [ISBN missing] [page needed]

- ^ a b c Birkenholtz, Trevor (2023). "Geographies of big water infrastructure: Contemporary insights and future research opportunities". Geography Compass Journal. 17 (8).

Historical Development

Ancient and Pre-Industrial Systems

In ancient Mesopotamia, communities developed early water management infrastructure around 3000 BC, constructing levees, canals, and ditches to channel water from the Tigris and Euphrates rivers for both irrigation and urban supply, mitigating seasonal floods while enabling settlement growth in arid regions.[9] These systems relied on gravity-fed channels and manual labor for maintenance, with vertical shafts sometimes used for waste removal into cesspools, marking rudimentary urban water handling.[10]

The Indus Valley Civilization, flourishing circa 2500 BC, featured advanced urban water networks including wells, reservoirs, and brick-lined drains in cities like Mohenjo-Daro, where households accessed groundwater via stepped wells up to 12 meters deep and interconnected drainage channels facilitated wastewater removal, supporting populations of tens of thousands without centralized treatment.[11] In parallel, ancient Egypt harnessed the Nile's annual floods through basins and canals dating to around 3000 BC, diverting water for fields and settlements, though urban supply often drew directly from the river or shallow wells rather than extensive piping.[12]

On Minoan Crete during the Bronze Age (circa 2000–1450 BC), water supply advanced with terracotta pipes, cisterns, and spring-fed conduits in palaces like Knossos, where covered drainage and distribution systems delivered rainwater and groundwater to multiple buildings, incorporating settling tanks for basic filtration and demonstrating sustainable harvesting in a Mediterranean climate.[13] These networks prioritized small-scale, gravity-driven flow over long distances, with evidence of dams and aqueduct-like channels for irrigation augmentation.[14]

Ancient Greek cities, from the 6th century BC, expanded on these foundations with cisterns, wells, and early aqueducts; Athens, for instance, constructed underground conduits sloping gently to transport spring water across neighborhoods, serving public fountains and private needs while integrating rainwater collection in urban planning.[15] Hellenistic engineering further refined tunneling and pressure management, as seen in Pergamon's multi-level system combining siphons and arches to elevate water supply.[16]

The Roman Empire achieved the era's pinnacle in scale and precision, beginning with the Aqua Appia aqueduct in 312 BC, which spanned 16 kilometers to deliver spring water to Rome's cattle market and public basins using covered channels and minimal elevation drops of 1:4000 for gravity flow.[17] By the 3rd century AD, eleven aqueducts supplied the city, totaling capacities exceeding 1 million cubic meters daily across lengths up to 92 kilometers, incorporating stone arches, lead pipes for branching distribution, and valves for pressure control, sustaining a population of over 1 million with public fountains, baths, and private lead-lined conduits.[18] Engineering feats like the Pont du Gard exemplified inverted siphons to navigate valleys, with maintenance via regular inspections ensuring longevity.[19]

Following Rome's fall in the 5th century AD, European water networks declined, with aqueducts often abandoned due to lack of centralized authority and repair capacity, shifting reliance to local wells, rivers, and hand-carried supplies in urban areas.[20] Medieval innovations emerged sporadically, such as London's 13th-century conduit system drawing from Tyburn springs 4 kilometers away to central cisterns via wooden pipes and lead channels, distributing to public conduits for household fetching, though contamination risks persisted without systematic treatment.[21] Monasteries and larger cities like Paris developed spring-fed lead pipes and gravity mains by the 14th century, but coverage remained limited to elites, with most populations dependent on polluted streams or groundwater accessed via communal pumps.[22] These pre-industrial systems emphasized localized extraction over expansive grids, constrained by material limitations like wood and lead prone to corrosion and breakage.[23]

Industrial Era Innovations

The rapid urbanization accompanying the Industrial Revolution in the late 18th and 19th centuries overwhelmed traditional gravity-fed aqueducts and local wells, prompting innovations in pressurized distribution systems to deliver water reliably to growing populations in cities like London and New York.[24] These advancements shifted water supply from intermittent, low-pressure conduits to continuous networks capable of serving multi-story buildings and factories, reducing reliance on hand pumps and contaminated sources that exacerbated epidemics such as cholera.[25]

A pivotal development was the widespread adoption of cast-iron pipes, which, unlike brittle wooden or lead alternatives, could withstand the pressures required for elevated distribution.[26] Originating from earlier limited uses, such as the 1664 Versailles installation, cast-iron mains proliferated in the early 19th century; for instance, New York City laid its first in 1799, and London water companies systematically replaced wooden networks with iron by the 1820s to enable pressurized delivery from central stations.[26][27] This material's durability, including resistance to corrosion and to bursting under pressures of 100-200 psi, facilitated branching networks with service connections to individual properties, marking a transition to modern grid-like topologies.[28]

Steam-powered pumping stations emerged as the mechanical backbone, harnessing Newcomen and Watt engines to lift water from rivers or wells to reservoirs and mains.[25] The first U.S. application occurred in 1774 in Manhattan, but industrial-scale deployment accelerated after 1820, with British cities installing engines by the 1840s to combat sanitary crises; these stations could pump millions of gallons daily, as in London's Thames-derived systems serving over 2 million residents by mid-century.[24][25] Innovations like rotary pumps improved efficiency over atmospheric engines, enabling constant pressure and reducing downtime from manual labor.

Early water treatment innovations addressed contamination from industrial effluents and sewage, with slow sand filtration proving effective against turbidity and pathogens. John Gibb installed the first public sand filter in Paisley, Scotland, in 1804 for his bleachery, filtering 1.8 million liters daily through gravel and sand beds that relied on biological layers for purification.[29] By 1829, London adopted similar systems at the Chelsea Water Works, treating Thames water and halving impurity levels, which influenced mandatory filtration laws in Britain by 1854 amid cholera outbreaks.[29] These gravity-driven filters, with head losses of 1-2 meters, represented a significant advance in quality control, actively removing suspended particles rather than relying on settling alone.[29]

20th Century Standardization and Expansion

By the start of the 20th century, rapid urbanization in the United States had driven significant expansion of municipal water supply networks, with the number of public water systems increasing from approximately 600 in 1880 to over 3,000 by 1900, reflecting a shift toward public ownership that surpassed private systems.[30] This growth continued through the century, fueled by population increases in cities and suburbs, necessitating longer distribution mains and more service connections to deliver pressurized water for residential, industrial, and firefighting uses.[30] By mid-century, post-World War II suburban development further accelerated network extension, incorporating standardized grid-like topologies to serve expanding peripheries efficiently.[31]

Standardization efforts advanced concurrently, beginning with the American Water Works Association (AWWA) issuing its first consensus standards in 1908 for cast-iron pipe castings and related components, which established uniform specifications for materials, dimensions, and testing to ensure reliability and interoperability across systems.[32] A pivotal development was the adoption of chlorination as a routine disinfection method, first implemented on a large scale in Jersey City, New Jersey, in 1908, which dramatically reduced waterborne diseases like typhoid and set a precedent for widespread treatment integration into distribution networks.[33] The U.S. Public Health Service formalized quality standards in 1914, influencing design practices for filtration, pressure maintenance, and contamination prevention.[30]

Pipe material innovations further supported standardization and scalability. Cast iron remained dominant until the mid-20th century, when ductile iron, which offers greater tensile strength and flexibility, was introduced for water mains in 1955, with standardized thickness classes defined by 1965 to replace brittle predecessors and accommodate higher pressures in expanding urban grids.[34][35] Asbestos-cement and reinforced concrete pipes also gained traction for smaller diameters during this period, enabling cost-effective extensions while adhering to emerging AWWA guidelines for corrosion resistance and hydraulic performance.[36] These advancements, combined with federal policies like the 1974 Safe Drinking Water Act, institutionalized uniform engineering practices, reducing variability in network design and facilitating large-scale projects such as regional aqueducts and reservoir interconnections.[30]

Core Components

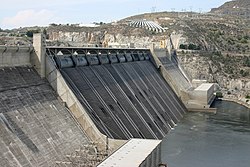

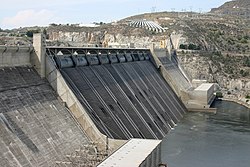

Water Sources and Extraction

Water supply networks primarily draw from surface water sources such as rivers, lakes, and reservoirs, which account for about 74% of total water withdrawals in the United States.[37] Globally, large urban areas obtain approximately 78% of their water from surface sources, often transported over significant distances to meet demand.[38] These sources are preferred in many regions due to their higher recharge rates from precipitation and runoff compared to groundwater.[39]

Surface water extraction typically involves intake structures positioned in rivers or lakes to capture water while excluding large debris through screens or grates.[40] For reservoir-based supplies, dams impound river flows to create storage, enabling controlled release and withdrawal via outlet works or spillways, as exemplified by large-scale facilities like the Grand Coulee Dam.[41] Pumps or gravity flow then convey the raw water through pipelines to treatment facilities, with intake designs often incorporating velocity caps to minimize sediment intake and fish entrainment.[42]

Groundwater, sourced from aquifers (porous geologic formations of soil, sand, and rock that store and transmit water), supplies the remaining portion, constituting about 26% of U.S. withdrawals and roughly half of global domestic use.[37][43] Extraction occurs via drilled wells, which penetrate the aquifer and use submersible pumps to lift water to the surface, with well types varying by depth: shallow wells for unconfined aquifers near the surface, and deeper artesian wells tapping confined aquifers under pressure.[44][45] Wellfields, comprising multiple wells, are commonly employed for municipal supplies to ensure redundancy and sustainable yields, though excessive pumping can lead to aquifer depletion and subsidence.[46]

In arid or coastal regions, supplementary sources like desalinated seawater or treated wastewater may contribute, but these represent less than 1% of global urban supply volumes as of 2023, limited by high energy costs and infrastructure requirements.[47] Sustainable management of both surface and groundwater extraction is critical, as over-abstraction from aquifers has caused groundwater levels to decline by over 1 meter per year in parts of India and the United States since the 1980s.[48]

Treatment Processes

Water treatment processes in municipal supply networks transform raw water from sources such as rivers, lakes, or groundwater into potable water by removing physical, chemical, and biological contaminants through a series of engineered steps. These processes adhere to standards like the U.S. Environmental Protection Agency's (EPA) Surface Water Treatment Rules, which mandate effective filtration and disinfection for surface water to control pathogens, requiring at least 99.9% removal or inactivation of Giardia cysts and 99.99% of viruses, with later amendments adding requirements for Cryptosporidium.[49] Collectively, conventional treatment plants process billions of gallons daily; a large individual facility might handle 50-200 million gallons per day, depending on the population served.[50]

The initial stage involves coagulation, where chemicals such as aluminum sulfate (alum) or ferric chloride are added to raw water to neutralize the negative charges on suspended particles like clay, silt, and organic matter, allowing them to aggregate. Dosages typically range from 10-50 mg/L, determined by jar testing to optimize turbidity removal, which can reduce initial turbidity levels from hundreds of NTU to below 10 NTU.[50] This step is critical for surface water, which often contains higher organic loads than groundwater, and it prevents filter clogging downstream.[51]

Following coagulation, flocculation entails gentle mixing in baffled basins or paddle flocculators to form larger, pinhead-sized flocs from the destabilized particles, enhancing settleability over 20-45 minutes of detention time. Shear rates are controlled at 10-75 s⁻¹ to avoid breaking fragile flocs, with polymeric aids sometimes added for improved bridging.[50] Effective flocculation can achieve 70-90% removal of total suspended solids before sedimentation.[52]

Sedimentation then occurs in large basins where gravity settles the flocs, typically over 2-4 hours, removing 50-90% of remaining turbidity and associated contaminants like heavy metals bound to particulates.
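
The dosage, shear-rate, and detention-time ranges quoted above can be turned into rough sizing numbers. The following is a minimal sketch, not a design procedure: the function names and the 100 MGD example plant are hypothetical, and the factor 8.34 is the usual U.S. conversion (pounds of chemical per million gallons at 1 mg/L).

```python
# Rough sizing arithmetic for coagulation and flocculation (illustrative only).

LB_PER_MG_PER_MGL = 8.34   # lb of chemical per million gallons at 1 mg/L
MINUTES_PER_DAY = 1440


def daily_chemical_lb(flow_mgd, dose_mg_per_l):
    """Pounds of coagulant per day for a flow in MGD and a dose in mg/L."""
    return flow_mgd * dose_mg_per_l * LB_PER_MG_PER_MGL


def basin_volume_gal(flow_mgd, detention_min):
    """Basin volume (gallons) needed to hold the flow for detention_min minutes."""
    return flow_mgd * 1_000_000 / MINUTES_PER_DAY * detention_min


def camp_number(shear_rate_per_s, detention_min):
    """Dimensionless product G*t of shear rate (1/s) and detention time."""
    return shear_rate_per_s * detention_min * 60


if __name__ == "__main__":
    # Hypothetical plant: 100 MGD, 30 mg/L alum, 30 min flocculation at 50 1/s.
    print(daily_chemical_lb(100, 30))
    print(basin_volume_gal(100, 30))
    print(camp_number(50, 30))
```

For the hypothetical plant, the alum demand works out to roughly 25,000 lb per day and the flocculation basin to about 2.1 million gallons, which gives a sense of why jar testing to trim the dose matters at this scale.
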
Clarifiers are designed with surface overflow rates of 0.5-2.0 gallons per minute per square foot to balance efficiency and footprint. Sludge from the bottom, comprising 1-2% solids, is periodically removed and dewatered.[50] Sedimentation is omitted in direct filtration systems for low-turbidity waters, which skip extended settling to reduce costs.[53]

Subsequent filtration passes clarified water through media beds of sand, gravel, and anthracite coal, or advanced membranes, to trap residual particles, achieving effluent turbidity below 0.3 NTU as required by EPA rules for effective disinfection. Rapid sand filters operate at rates of 2-6 gallons per minute per square foot and are backwashed every 24-72 hours, or when head loss exceeds 6-10 feet.[50] Granular activated carbon filters may integrate adsorption for taste, odor, or organic removal, such as trihalomethane precursors.[53]

Final disinfection eliminates microbial pathogens, with chlorination being the predominant method, injecting free chlorine (0.2-4 mg/L residual) to provide continuous protection in distribution, inactivating 99.99% of bacteria and viruses via oxidation of cell walls.[54] Alternatives include ozonation, which generates reactive oxygen species for rapid disinfection (contact times of 5-10 minutes at 0.1-2 mg/L) but lacks residual activity, and ultraviolet (UV) irradiation at doses of 20-40 mJ/cm², effective against Cryptosporidium without chemical byproducts.[55] Combined chlorine (chloramines) provides a longer-lasting residual but is a weaker, slower-acting disinfectant than free chlorine.[56]

Additional unit processes, such as aeration for volatile organic compound stripping or iron/manganese oxidation, pH adjustment with lime or soda ash to prevent corrosion (targeting 7.5-8.5 pH), and optional fluoridation (0.7 mg/L) for dental health, tailor treatment to source water quality.[53] Groundwater often bypasses coagulation-sedimentation if low in particulates, relying primarily on disinfection under the EPA's Ground Water Rule.[57] Overall efficacy is validated by continuous monitoring, ensuring compliance with maximum contaminant levels for over 90 regulated parameters.[58]

Distribution Infrastructure

The distribution infrastructure of a water supply network consists of an interconnected system of pipes, pumping stations, valves, storage facilities, fire hydrants, and service connections that transport treated water from purification plants to consumers while ensuring sufficient pressure, flow rates, and reliability.[1] These components maintain hydraulic integrity, provide redundancy against failures, and support fire protection demands, typically requiring minimum pressures of 20-40 psi for domestic use and higher flows for emergencies.[2]

Pipes form the backbone, categorized as transmission mains (large-diameter for bulk transport) and distribution mains (smaller for local delivery). Common materials include ductile iron for mains, valued for its high tensile strength and a service life exceeding 100 years when properly coated; polyvinyl chloride (PVC), valued for its corrosion resistance, lightweight installation, and cost-effectiveness in diameters up to 48 inches; and high-density polyethylene (HDPE), valued for its flexibility in seismic areas and fusion-welded joints that minimize leaks.[59][60] Ductile iron pipes, governed by AWWA C151 standards, offer durability against external loads but require protective linings like cement mortar to prevent tuberculation; PVC, per AWWA C900, provides smooth interiors that reduce friction losses but is susceptible to brittleness under UV exposure or improper jointing; HDPE, covered by the updated AWWA C901-25, excels in corrosion resistance and joint integrity but demands specialized fusion equipment.[61] Pipes are typically buried at depths of 3-6 feet to protect against freezing and traffic loads, with diameters ranging from 4 inches for laterals to over 72 inches for feeders.[2]

Pumping stations boost pressure in areas of elevation gain or long-distance transport, using centrifugal pumps powered by electricity, with diesel backups, to achieve heads of 100-500 feet.[62] Booster pumps maintain system pressures, are often automated with variable frequency drives for energy efficiency, and are sited near treatment plants or high-demand zones.

Storage facilities, including elevated tanks, standpipes, and ground-level reservoirs, equalize diurnal demand fluctuations, store 1-2 days' supply for resilience, and provide surge capacity for fire flows up to 5,000 gallons per minute in urban areas. Elevated steel or concrete tanks, raised 50-200 feet, leverage gravity for pressure without constant pumping, while reservoirs incorporate overflow and mixing to prevent stagnation.[1]

Valves, such as gate, butterfly, and check types, enable flow control, isolation for repairs, and backflow prevention, with hydrants spaced 300-500 feet apart for firefighting access.[2] Service connections link mains to customer meters, incorporating corporation stops and curb valves for shutoff.

Network design favors looped topologies over dead-end branches to reduce head losses and enhance redundancy, with flows in the loops analyzed by techniques such as the Hardy Cross method, and adheres to EPA guidelines for cross-connection control and AWWA standards for material integrity.[5] Maintenance considerations include corrosion monitoring and pressure testing to sustain infrastructure lifespan, with U.S. systems averaging pipe ages of 25-50 years amid ongoing replacement needs.[1]

Network Topologies and Design

Water supply networks are configured in topologies that determine hydraulic efficiency, reliability, and vulnerability to failures, with designs optimized through hydraulic modeling to meet demand while minimizing energy loss and costs.

Branched topologies, also known as dead-end or tree-like systems, feature a hierarchical structure where pipes extend from main lines to endpoints without interconnections, resulting in simpler construction and lower initial costs due to reduced pipe lengths.[63][64] However, they suffer from pressure drops at extremities (often exceeding 10-15 meters of head loss over long branches) and promote water stagnation in dead ends, increasing risks of contamination and reduced chlorine residuals.[65][66]

Looped or gridiron topologies interconnect mains and laterals to form closed circuits, enabling multiple flow paths that maintain uniform pressures (typically 20-50 psi minimum) and facilitate water circulation to prevent stagnation.[63][67] This redundancy enhances reliability during pipe breaks or high-demand events like firefighting, where flows can reach 1,000-2,500 gallons per minute per hydrant, but requires 20-30% more piping, elevating capital and maintenance expenses.[68][69]

Radial systems distribute from a central elevated source outward in spokes, leveraging gravity for pressure in hilly terrains but limiting scalability in flat areas without pumps.[70] Ring topologies encircle districts with circumferential mains fed by cross-connections, offering balanced supply in compact urban zones yet complicating expansions due to fixed loops.[63]

| Topology | Description | Advantages | Disadvantages |
|---|---|---|---|
| Branched (Dead-End) | Hierarchical pipes from mains to terminals without loops | Lower construction costs; easier to isolate sections for repairs | Uneven pressure distribution; stagnation and quality degradation at ends; poor redundancy for outages[63][65] |
| Looped (Gridiron) | Interconnected mains and branches forming meshes | Uniform pressure; multiple paths for reliability; reduced stagnation | Higher pipe volumes and costs; complex valve management[67][68] |
| Radial | Spoke-like extension from central reservoir | Gravity-driven efficiency in topography-suited areas; simple zoning | Dependent on elevation; limited to specific terrains; pressure variability[70] |
| Ring | Circular mains around areas with radial feeds | Balanced district supply; fault tolerance in loops | Expansion challenges; potential for uneven flows in imbalanced rings[63] |
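
Because a looped layout offers more than one path between two points, the split of flow between those paths has to be solved for rather than read off the drawing. As a rough illustration, the sketch below applies the classic Hardy Cross loop correction to a single loop. It assumes a head loss law of the form h = K·Q·|Q|^(n−1); the function name, the resistance coefficients K, and the flows are hypothetical values chosen for the example, not data from any real network.

```python
# Hardy Cross correction for one closed loop (illustrative sketch).
# Flows are signed: clockwise positive, counter-clockwise negative.
# The initial guess must already satisfy continuity at every junction.


def hardy_cross_loop(k, q, n=1.852, tol=1e-9, max_iter=100):
    """Balance one loop so that the signed head losses sum to zero.

    k -- resistance coefficient of each pipe in the loop
    q -- signed initial flows, one per pipe
    n -- flow exponent (1.852 for Hazen-Williams, 2.0 for Darcy-Weisbach)
    """
    q = list(q)
    for _ in range(max_iter):
        # Signed head loss in each pipe: h = K * Q * |Q|^(n-1)
        head = [ki * qi * abs(qi) ** (n - 1) for ki, qi in zip(k, q)]
        # n * sum(|h / Q|), written without dividing by Q
        denom = n * sum(ki * abs(qi) ** (n - 1) for ki, qi in zip(k, q))
        if denom == 0:
            break
        dq = -sum(head) / denom
        # The same correction is applied to every pipe in the loop,
        # which leaves the flow balance at each junction unchanged.
        q = [qi + dq for qi in q]
        if abs(dq) < tol:
            break
    return q


if __name__ == "__main__":
    # Two parallel pipes carrying a total of 3 flow units between two nodes.
    flows = hardy_cross_loop(k=[2.0, 8.0], q=[1.5, -1.5], n=2.0)
    print(flows)
```

In this two-pipe case with n = 2, the balanced solution can be checked by hand: equal head loss requires 2·Q₁² = 8·Q₂² with Q₁ + Q₂ = 3, so the lower-resistance pipe carries 2 units and the other carries 1. Real networks contain many interacting loops and are normally solved with dedicated hydraulic modeling software rather than a hand-rolled loop like this one.
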