Natural logarithm


| Natural logarithm | |
|---|---|
| Figure | Graph of part of the natural logarithm function. The function slowly grows to positive infinity as x increases, and slowly goes to negative infinity as x approaches 0 ("slowly" as compared to any power law of x). |
| General information | |
| General definition | $\ln x = \log_e x$ |
| Motivation of invention | hyperbola quadrature |
| Fields of application | Pure and applied mathematics |
| Domain, codomain and image | |
| Domain | $(0, +\infty)$ |
| Codomain | $\mathbb{R}$ |
| Image | $\mathbb{R}$ |
| Specific values | |
| Value at +∞ | +∞ |
| Value at e | 1 |
| Value at 1 | 0 |
| Value at 0 | −∞ |
| Specific features | |
| Asymptote | $x = 0$ |
| Root | 1 |
| Inverse | $\exp x$ |
| Derivative | $\dfrac{1}{x}$ |
| Antiderivative | $x \ln x - x + C$ |


The natural logarithm of a number is its logarithm to the base of the mathematical constant e, which is an irrational and transcendental number approximately equal to 2.718281828459.[1] The natural logarithm of x is generally written as ln x, $\log_e x$, or sometimes, if the base e is implicit, simply log x.[2][3] Parentheses are sometimes added for clarity, giving ln(x), $\log_e(x)$, or log(x). This is done particularly when the argument to the logarithm is not a single symbol, so as to prevent ambiguity.

The natural logarithm of x is the power to which e would have to be raised to equal x. For example, ln 7.5 is 2.0149..., because $e^{2.0149\ldots} = 7.5$. The natural logarithm of e itself, ln e, is 1, because $e^1 = e$, while the natural logarithm of 1 is 0, since $e^0 = 1$.

The natural logarithm can be defined for any positive real number a as the area under the curve y = 1/x from 1 to a[4] (with the area being negative when 0 < a < 1). The simplicity of this definition, which is matched in many other formulas involving the natural logarithm, leads to the term "natural". The definition of the natural logarithm can then be extended to give logarithm values for negative numbers and for all non-zero complex numbers, although this leads to a multi-valued function: see complex logarithm for more.

The natural logarithm function, if considered as a real-valued function of a positive real variable, is the inverse function of the exponential function, leading to the identities:

$$e^{\ln x} = x \quad \text{if } x > 0, \qquad \ln e^{x} = x \quad \text{for all real } x.$$

Like all logarithms, the natural logarithm maps multiplication of positive numbers into addition:[5]

$$\ln(x \cdot y) = \ln x + \ln y.$$

Logarithms can be defined for any positive base other than 1, not only e. However, logarithms in other bases differ only by a constant multiplier from the natural logarithm, and can be defined in terms of the latter: $\log_b x = \ln x / \ln b = \ln x \cdot \log_b e$.
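The identities above can be checked numerically. The following is a minimal Python sketch; the helper name `log_base` is chosen here for illustration and is not part of any library. It computes a logarithm in an arbitrary base from natural logarithms and confirms the product rule for one pair of values.

```python
import math

def log_base(x: float, b: float) -> float:
    """Logarithm of x to base b, computed as ln(x) / ln(b) (hypothetical helper)."""
    if x <= 0 or b <= 0 or b == 1:
        raise ValueError("need x > 0 and a positive base other than 1")
    return math.log(x) / math.log(b)

# ln 7.5 is about 2.0149, the example value quoted in the text
print(math.log(7.5))

# log to base 2 of 8, obtained through natural logarithms
print(log_base(8.0, 2.0))

# product rule: ln(2 * 3) agrees with ln 2 + ln 3 up to rounding
print(math.isclose(math.log(6.0), math.log(2.0) + math.log(3.0)))
```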

Logarithms are useful for solving equations in which the unknown appears as the exponent of some other quantity. For example, logarithms are used to solve for the half-life, decay constant, or unknown time in exponential decay problems. They are important in many branches of mathematics and scientific disciplines, and are used to solve problems involving compound interest.

History

The concept of the natural logarithm was worked out by Gregoire de Saint-Vincent and Alphonse Antonio de Sarasa before 1649.[6] Their work involved quadrature of the hyperbola with equation xy = 1, by determination of the area of hyperbolic sectors. Their solution generated the requisite "hyperbolic logarithm" function, which had the properties now associated with the natural logarithm.

An early mention of the natural logarithm was by Nicholas Mercator in his work Logarithmotechnia, published in 1668,[7] although the mathematics teacher John Speidell had already compiled a table of what in fact were effectively natural logarithms in 1619.[8] It has been said that Speidell's logarithms were to the base e, but this is not entirely true due to complications with the values being expressed as integers.[8]: 152

Notational conventions

The notations ln x and $\log_e x$ both refer unambiguously to the natural logarithm of x, and log x without an explicit base may also refer to the natural logarithm. This usage is common in mathematics, along with some scientific contexts as well as in many programming languages.[nb 1] In some other contexts such as chemistry, however, log x can be used to denote the common (base 10) logarithm. It may also refer to the binary (base 2) logarithm in the context of computer science, particularly in the context of time complexity.

Generally, the notation for the logarithm to base b of a number x is shown as $\log_b x$. So the log of 8 to the base 2 would be $\log_2 8 = 3$.

Definitions

The natural logarithm can be defined in several equivalent ways.

Inverse of exponential

The most general definition is as the inverse function of $e^x$, so that $e^{\ln(x)} = x$. Because $e^x$ is positive and invertible for any real input $x$, this definition of $\ln(x)$ is well defined for any positive x.

Integral definition

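The natural logarithm of a positive, real number a may be defined as the area under the graph of the hyperbola with equation y = 1/x between x = 1 and x = a. This is the integral[4]

$$\ln a = \int_1^a \frac{1}{x}\,dx.$$

If a is in $(0, 1)$, then the region has negative area, and the logarithm is negative.

This function is a logarithm because it satisfies the fundamental multiplicative property of a logarithm:[5]

$$\ln(ab) = \ln a + \ln b.$$

This can be demonstrated by splitting the integral that defines ln ab into two parts, and then making the variable substitution x = at (so dx = a dt) in the second part, as follows:

$$\ln(ab) = \int_1^{ab} \frac{1}{x}\,dx = \int_1^{a} \frac{1}{x}\,dx + \int_a^{ab} \frac{1}{x}\,dx = \int_1^{a} \frac{1}{x}\,dx + \int_1^{b} \frac{1}{at}\,a\,dt = \int_1^{a} \frac{1}{x}\,dx + \int_1^{b} \frac{1}{t}\,dt = \ln a + \ln b.$$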

In elementary terms, this is simply scaling by 1/a in the horizontal direction and by a in the vertical direction. Area does not change under this transformation, but the region between a and ab is reconfigured. Because the function a/(ax) is equal to the function 1/x, the resulting area is precisely ln b.

The number e can then be defined to be the unique real number a such that ln a = 1.
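As a rough illustration of the integral definition, the sketch below approximates the area under y = 1/x from 1 to a with the midpoint rule. The function name `ln_by_quadrature` and the step count are assumptions made for this example; it is not how library logarithms are computed.

```python
import math

def ln_by_quadrature(a: float, steps: int = 100_000) -> float:
    """Approximate ln(a) as the signed area under y = 1/x from 1 to a (midpoint rule)."""
    if a <= 0:
        raise ValueError("a must be positive")
    h = (a - 1.0) / steps  # negative when 0 < a < 1, which makes the area negative
    return math.fsum(h / (1.0 + (i + 0.5) * h) for i in range(steps))

print(ln_by_quadrature(2.0), math.log(2.0))
print(ln_by_quadrature(0.5), math.log(0.5))   # negative area for 0 < a < 1

# multiplicative property: the area for 6 matches the areas for 2 and 3 combined
print(ln_by_quadrature(6.0), ln_by_quadrature(2.0) + ln_by_quadrature(3.0))
```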

Properties

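The natural logarithm has the following mathematical properties:

- $\ln 1 = 0$
- $\ln e = 1$
- $\ln(xy) = \ln x + \ln y$ for $x > 0$ and $y > 0$
- $\ln(x/y) = \ln x - \ln y$ for $x > 0$ and $y > 0$
- $\ln(x^y) = y \ln x$ for $x > 0$
- $\ln x < \ln y$ for $0 < x < y$
- $\lim_{x \to 0} \dfrac{\ln(1+x)}{x} = 1$
- $\lim_{\alpha \to 0} \dfrac{x^\alpha - 1}{\alpha} = \ln x$ for $x > 0$
- $\dfrac{x-1}{x} \le \ln x \le x - 1$ for $x > 0$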

Derivative

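The derivative of the natural logarithm as a real-valued function on the positive reals is given by[4]

$$\frac{d}{dx} \ln x = \frac{1}{x}.$$

How this derivative is established depends on how the natural logarithm is defined. If the natural logarithm is defined as the integral

$$\ln x = \int_1^x \frac{1}{t}\,dt,$$

then the derivative immediately follows from the first part of the fundamental theorem of calculus.

On the other hand, if the natural logarithm is defined as the inverse of the (natural) exponential function, then the derivative (for x > 0) can be found by using the properties of the logarithm and a definition of the exponential function.

From the definition of the number $e = \lim_{u \to 0} (1+u)^{1/u}$, the exponential function can be defined as

$$e^x = \lim_{u \to 0} (1+u)^{x/u} = \lim_{h \to 0} (1 + hx)^{1/h},$$

where $u = hx$, $h = u/x$.

The derivative can then be found from first principles.

$$\frac{d}{dx} \ln x = \lim_{h \to 0} \frac{\ln(x+h) - \ln x}{h} = \lim_{h \to 0} \left[ \frac{1}{h} \ln\left(\frac{x+h}{x}\right) \right] = \lim_{h \to 0} \left[ \ln\left(1 + \frac{h}{x}\right)^{1/h} \right] = \ln e^{1/x} = \frac{1}{x}.$$

Also, we have:

$$\frac{d}{dx} \ln(ax) = \frac{d}{dx} \left(\ln a + \ln x\right) = \frac{d}{dx} \ln a + \frac{d}{dx} \ln x = \frac{1}{x},$$

so, unlike its inverse function $e^{ax}$, a constant in the function doesn't alter the differential.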

Series

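Since the natural logarithm is undefined at 0, $\ln(x)$ itself does not have a Maclaurin series, unlike many other elementary functions. Instead, one looks for Taylor expansions around other points. For example, if $|x - 1| \le 1$ and $x \ne 0$, then[9]

$$\ln x = \int_1^x \frac{1}{t}\,dt = \int_0^{x-1} \frac{1}{1+u}\,du = \int_0^{x-1} \left(1 - u + u^2 - u^3 + \cdots\right) du = (x-1) - \frac{(x-1)^2}{2} + \frac{(x-1)^3}{3} - \cdots = \sum_{k=1}^{\infty} \frac{(-1)^{k-1} (x-1)^k}{k}.$$

This is the Taylor series for $\ln x$ around 1. A change of variables yields the Mercator series:

$$\ln(1+x) = \sum_{k=1}^{\infty} \frac{(-1)^{k-1}}{k} x^k = x - \frac{x^2}{2} + \frac{x^3}{3} - \cdots,$$

valid for $|x| \le 1$ and $x \ne -1$.

Leonhard Euler,[10] disregarding $x \ne -1$, nevertheless applied this series to $x = -1$ to show that the harmonic series equals the natural logarithm of $\frac{1}{1-1}$; that is, the logarithm of infinity. Nowadays, more formally, one can prove that the harmonic series truncated at N is close to the logarithm of N, when N is large, with the difference converging to the Euler–Mascheroni constant.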
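The relationship between the truncated harmonic series and ln N can be observed directly. The sketch below (the function name `harmonic` is chosen for this example) prints the difference for increasing N; the values settle near 0.5772, the Euler–Mascheroni constant.

```python
import math

def harmonic(n: int) -> float:
    """Sum of 1/k for k = 1..n, accumulated with fsum to limit rounding error."""
    return math.fsum(1.0 / k for k in range(1, n + 1))

for n in (10, 1_000, 100_000):
    # the difference shrinks toward the Euler-Mascheroni constant as n grows
    print(n, harmonic(n) - math.log(n))
```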

The figure is a graph of ln(1 + x) and some of its Taylor polynomials around 0. These approximations converge to the function only in the region −1 < x ≤ 1; outside this region, the higher-degree Taylor polynomials devolve to worse approximations for the function.
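The convergence behaviour of the Taylor polynomials of ln(1 + x) around 0, that is, partial sums of x − x²/2 + x³/3 − …, can be reproduced with a few lines of Python. This is a sketch for illustration only; `mercator` is a name chosen here.

```python
import math

def mercator(x: float, terms: int) -> float:
    """Partial sum of x - x^2/2 + x^3/3 - ... using the given number of terms."""
    total = 0.0
    power = 1.0
    for k in range(1, terms + 1):
        power *= x                                # power now holds x**k
        total += power / k if k % 2 else -power / k
    return total

print(mercator(0.5, 50), math.log(1.5))    # inside the region: fast convergence
print(mercator(1.0, 1000), math.log(2.0))  # at the endpoint x = 1: slow convergence
print(mercator(2.0, 40))                   # outside the region: partial sums blow up
```

Inside the interval the partial sums approach ln(1 + x); at x = 1 roughly a thousand terms are needed for three correct digits, and for x = 2 adding terms makes the approximation worse.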

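A useful special case for positive integers n, taking $x = \tfrac{1}{n}$, is:

$$\ln\left(\frac{n+1}{n}\right) = \sum_{k=1}^{\infty} \frac{(-1)^{k-1}}{k n^k} = \frac{1}{n} - \frac{1}{2n^2} + \frac{1}{3n^3} - \frac{1}{4n^4} + \cdots$$

If $\operatorname{Re}(x) \ge 1/2$, then

$$\ln(x) = -\ln\left(\frac{1}{x}\right) = -\sum_{k=1}^{\infty} \frac{(-1)^{k-1} \left(\frac{1}{x} - 1\right)^k}{k} = \sum_{k=1}^{\infty} \frac{(x-1)^k}{k x^k} = \frac{x-1}{x} + \frac{(x-1)^2}{2x^2} + \frac{(x-1)^3}{3x^3} + \cdots$$

Now, taking $x = \tfrac{n+1}{n}$ for positive integers n, we get:

$$\ln\left(\frac{n+1}{n}\right) = \sum_{k=1}^{\infty} \frac{1}{k (n+1)^k} = \frac{1}{n+1} + \frac{1}{2(n+1)^2} + \frac{1}{3(n+1)^3} + \cdots$$

If $\operatorname{Re}(x) \ge 0$ and $x \ne 0$, then

$$\ln(x) = \ln\left(\frac{2x}{2}\right) = \ln\left(\frac{1 + \frac{x-1}{x+1}}{1 - \frac{x-1}{x+1}}\right) = \ln\left(1 + \frac{x-1}{x+1}\right) - \ln\left(1 - \frac{x-1}{x+1}\right).$$

Since

$$\ln(1+y) - \ln(1-y) = \sum_{i=1}^{\infty} \frac{1}{i} \left( (-1)^{i-1} y^i - (-1)^{i-1} (-y)^i \right) = \sum_{i=1}^{\infty} \frac{y^i}{i} \left( (-1)^{i-1} + 1 \right) = 2y \sum_{k=0}^{\infty} \frac{y^{2k}}{2k+1},$$

we arrive at

$$\ln(x) = \frac{2(x-1)}{x+1} \sum_{k=0}^{\infty} \frac{1}{2k+1} \left( \frac{(x-1)^2}{(x+1)^2} \right)^k = \frac{2(x-1)}{x+1} \left( \frac{1}{1} + \frac{1}{3} \frac{(x-1)^2}{(x+1)^2} + \frac{1}{5} \left( \frac{(x-1)^2}{(x+1)^2} \right)^2 + \cdots \right).$$

Using the substitution $x = \tfrac{n+1}{n}$ again for positive integers n, we get:

$$\ln\left(\frac{n+1}{n}\right) = \frac{2}{2n+1} \sum_{k=0}^{\infty} \frac{1}{(2k+1) \left( (2n+1)^2 \right)^k} = 2 \left( \frac{1}{2n+1} + \frac{1}{3(2n+1)^3} + \frac{1}{5(2n+1)^5} + \cdots \right).$$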

This is, by far, the fastest converging of the series described here.
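To give a feel for how quickly this last series converges, the following sketch sums ln x = 2(y + y³/3 + y⁵/5 + …) with y = (x − 1)/(x + 1). The function name and the default term count are assumptions for illustration.

```python
import math

def ln_artanh_series(x: float, terms: int = 20) -> float:
    """Sum 2 * (y + y^3/3 + y^5/5 + ...) with y = (x - 1) / (x + 1), for real x > 0."""
    if x <= 0:
        raise ValueError("x must be positive")
    y = (x - 1.0) / (x + 1.0)
    y_squared = y * y
    total = 0.0
    power = y                      # holds y**(2k + 1)
    for k in range(terms):
        total += power / (2 * k + 1)
        power *= y_squared
    return 2.0 * total

print(ln_artanh_series(2.0, terms=5), math.log(2.0))   # five terms already agree to about six digits
print(ln_artanh_series(2.0), math.log(2.0))
```

For x = 2 the ratio between successive terms is about 1/9, compared with a ratio approaching 1 for the Mercator series at the same point.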

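The natural logarithm can also be expressed as an infinite product:[11]

$$\ln(x) = (x - 1) \prod_{k=1}^{\infty} \frac{2}{1 + \sqrt[2^k]{x}}.$$

Two examples might be:

$$\ln(2) = \left( \frac{2}{1 + \sqrt{2}} \right) \left( \frac{2}{1 + \sqrt[4]{2}} \right) \left( \frac{2}{1 + \sqrt[8]{2}} \right) \left( \frac{2}{1 + \sqrt[16]{2}} \right) \cdots$$

$$\pi = (2i + 2) \left( \frac{2}{1 + \sqrt{i}} \right) \left( \frac{2}{1 + \sqrt[4]{i}} \right) \left( \frac{2}{1 + \sqrt[8]{i}} \right) \left( \frac{2}{1 + \sqrt[16]{i}} \right) \cdots$$

From this identity, we can easily get that:

$$\frac{1}{\ln(x)} = \frac{x}{x-1} - \sum_{k=1}^{\infty} \frac{2^{-k} x^{2^{-k}}}{1 + x^{2^{-k}}}.$$

For example:

$$\frac{1}{\ln(2)} = 2 - \frac{\sqrt{2}}{2 + 2\sqrt{2}} - \frac{\sqrt[4]{2}}{4 + 4\sqrt[4]{2}} - \frac{\sqrt[8]{2}}{8 + 8\sqrt[8]{2}} - \cdots$$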
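The infinite product ln x = (x − 1) · ∏ 2 / (1 + x^(1/2^k)) can be evaluated by repeated square roots. The sketch below is illustrative only; `ln_product` and its default number of factors are choices made for this example.

```python
import math

def ln_product(x: float, factors: int = 40) -> float:
    """Truncated product (x - 1) * prod_{k=1..factors} 2 / (1 + x**(1 / 2**k)) for x > 0."""
    if x <= 0:
        raise ValueError("x must be positive")
    result = x - 1.0
    root = x
    for _ in range(factors):
        root = math.sqrt(root)          # root is now x**(1 / 2**k)
        result *= 2.0 / (1.0 + root)
    return result

print(ln_product(2.0, factors=10), ln_product(2.0), math.log(2.0))
```

Each extra factor roughly halves the error, so this product converges linearly rather than rapidly.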

The natural logarithm in integration

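The natural logarithm allows simple integration of functions of the form $g(x) = \frac{f'(x)}{f(x)}$: an antiderivative of g(x) is given by $\ln(|f(x)|)$. This is the case because of the chain rule and the following fact:

$$\frac{d}{dx} \ln|x| = \frac{1}{x}, \quad x \ne 0.$$

In other words, when integrating over an interval of the real line that does not include $x = 0$, then

$$\int \frac{1}{x}\,dx = \ln|x| + C,$$

where C is an arbitrary constant of integration.[12]

Likewise, when the integral is over an interval where $f(x) \ne 0$,

$$\int \frac{f'(x)}{f(x)}\,dx = \ln|f(x)| + C.$$

For example, consider the integral of $\tan(x)$ over an interval that does not include points where $\tan(x)$ is infinite:

$$\int \tan x\,dx = \int \frac{\sin x}{\cos x}\,dx = -\int \frac{\frac{d}{dx} \cos x}{\cos x}\,dx = -\ln|\cos x| + C = \ln|\sec x| + C.$$

The natural logarithm can be integrated using integration by parts:

$$\int \ln x\,dx = x \ln x - x + C.$$

Let:

$$u = \ln x \Rightarrow du = \frac{dx}{x}, \qquad dv = dx \Rightarrow v = x,$$

then:

$$\int \ln x\,dx = x \ln x - \int \frac{x}{x}\,dx = x \ln x - \int 1\,dx = x \ln x - x + C.$$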
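The antiderivative x ln x − x can be sanity-checked against a numerical integral of ln x. The sketch below uses a simple midpoint rule on the interval [1, e], where the exact value is 1; the helper names are assumptions made for this example.

```python
import math

def antiderivative_ln(x: float) -> float:
    """x ln x - x, an antiderivative of ln x for x > 0."""
    return x * math.log(x) - x

def midpoint_integral(f, a: float, b: float, steps: int = 100_000) -> float:
    """Midpoint-rule approximation of the integral of f over [a, b]."""
    h = (b - a) / steps
    return h * math.fsum(f(a + (i + 0.5) * h) for i in range(steps))

exact = antiderivative_ln(math.e) - antiderivative_ln(1.0)   # (e - e) - (0 - 1)
approx = midpoint_integral(math.log, 1.0, math.e)
print(exact, approx)
```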

Efficient computation

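For $\ln(x)$ where x > 1, the closer the value of x is to 1, the faster the rate of convergence of its Taylor series centered at 1. The identities associated with the logarithm can be leveraged to exploit this:

$$\ln 123.456 = \ln(1.23456 \cdot 10^2) = \ln 1.23456 + \ln(10^2) = \ln 1.23456 + 2 \ln 10 \approx \ln 1.23456 + 2 \cdot 2.3025851.$$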

Such techniques were used before calculators, by referring to numerical tables and performing manipulations such as those above.

Natural logarithm of 10

The natural logarithm of 10, approximately equal to 2.30258509,[13] plays a role for example in the computation of natural logarithms of numbers represented in scientific notation, as a mantissa multiplied by a power of 10:

$$\ln(a \cdot 10^n) = \ln a + n \ln 10.$$

This means that one can effectively calculate the logarithms of numbers with very large or very small magnitude using the logarithms of a relatively small set of decimals in the range [1, 10).
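A small sketch of this reduction, splitting a number into a mantissa in [1, 10) and a power of 10 so that only the logarithm of the mantissa has to be evaluated. The names `LN10` and `ln_scientific` are chosen for this example, and `math.log` stands in for whatever table or series would supply the logarithm of the mantissa.

```python
import math

LN10 = 2.302585092994046  # ln 10, precomputed once

def ln_scientific(mantissa: float, exponent: int) -> float:
    """ln(mantissa * 10**exponent) computed as ln(mantissa) + exponent * ln(10)."""
    if not 1.0 <= mantissa < 10.0:
        raise ValueError("mantissa is expected in [1, 10)")
    return math.log(mantissa) + exponent * LN10

# 123.456 = 1.23456 * 10**2, the example used in the text
print(ln_scientific(1.23456, 2), math.log(123.456))

# very large and very small magnitudes reduce to the same small range of mantissas
print(ln_scientific(6.02, 23), ln_scientific(1.6, -19))
```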

High precision

To compute the natural logarithm with many digits of precision, the Taylor series approach is not efficient since the convergence is slow. Especially if x is near 1, a good alternative is to use Halley's method or Newton's method to invert the exponential function, because the series of the exponential function converges more quickly. For finding the value of y to give $\exp(y) - x = 0$ using Halley's method, or equivalently to give $\exp(y/2) - x \exp(-y/2) = 0$ using Newton's method, the iteration simplifies to

$$y_{n+1} = y_n + 2 \cdot \frac{x - \exp(y_n)}{x + \exp(y_n)},$$

which has cubic convergence to $\ln(x)$.
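The iteration y ← y + 2(x − exp(y)) / (x + exp(y)) can be sketched in double precision as follows. This only illustrates the update rule; the starting guess of 0, the stopping tolerance and the name `ln_halley` are assumptions of this example, and a real high-precision implementation would use multiple-precision arithmetic and a better initial estimate.

```python
import math

def ln_halley(x: float, tol: float = 1e-15, max_iter: int = 50) -> float:
    """Solve exp(y) = x for y with the update y += 2 * (x - exp(y)) / (x + exp(y))."""
    if x <= 0:
        raise ValueError("x must be positive")
    y = 0.0                                  # crude starting guess (assumption)
    for _ in range(max_iter):
        ey = math.exp(y)
        step = 2.0 * (x - ey) / (x + ey)     # always smaller than 2 in magnitude
        y += step
        if abs(step) <= tol * max(1.0, abs(y)):
            break
    return y

for value in (0.001, 0.5, 2.0, 10.0, 12345.678):
    print(value, ln_halley(value), math.log(value))
```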

Another alternative for extremely high precision calculation is the formula[14][15]

$$\ln x \approx \frac{\pi}{2 M(1, 4/s)} - m \ln 2,$$

where M denotes the arithmetic-geometric mean of 1 and 4/s, and

$$s = x\,2^m > 2^{p/2},$$

with m chosen so that p bits of precision is attained. (For most purposes, the value of 8 for m is sufficient.) In fact, if this method is used, Newton inversion of the natural logarithm may conversely be used to calculate the exponential function efficiently. (The constants $\ln 2$ and π can be pre-computed to the desired precision using any of several known quickly converging series.) Or, the following formula can be used:

$$\ln x = \frac{\pi}{M\left( \theta_2^2(1/x), \theta_3^2(1/x) \right)}, \quad x \in (1, \infty),$$

where

$$\theta_2(x) = \sum_{n \in \mathbb{Z}} x^{(n + 1/2)^2}, \qquad \theta_3(x) = \sum_{n \in \mathbb{Z}} x^{n^2}$$

are the Jacobi theta functions.[16]
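The first arithmetic-geometric-mean formula, ln x ≈ π / (2 M(1, 4/s)) − m ln 2 with s = x·2^m > 2^(p/2), can be tried out in ordinary double precision (p = 53). The sketch below is only a demonstration of the formula: the function names, the fixed iteration count and the way m is chosen from the binary exponent are assumptions of this example, and ln 2 is taken from the standard library as the precomputed constant.

```python
import math

def agm(a: float, b: float, iterations: int = 40) -> float:
    """Arithmetic-geometric mean of a and b (fixed iteration count for simplicity)."""
    for _ in range(iterations):
        a, b = 0.5 * (a + b), math.sqrt(a * b)
    return a

def ln_agm(x: float, p: int = 53) -> float:
    """Approximate ln(x) as pi / (2 * M(1, 4/s)) - m * ln 2, with s = x * 2**m."""
    if x <= 0:
        raise ValueError("x must be positive")
    _, exponent = math.frexp(x)     # x = mantissa * 2**exponent, 0.5 <= mantissa < 1
    m = p // 2 + 2 - exponent       # ensures s >= 2**(p // 2 + 1) > 2**(p / 2)
    s = math.ldexp(x, m)            # s = x * 2**m, computed exactly
    ln2 = math.log(2.0)             # stands in for a precomputed constant
    return math.pi / (2.0 * agm(1.0, 4.0 / s)) - m * ln2

for value in (0.001, 0.5, 2.0, 10.0, 1e6):
    print(value, ln_agm(value), math.log(value))
```

In double precision this offers no advantage over the library logarithm; the point of the method is that the number of AGM steps grows only logarithmically with the number of digits requested.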

Based on a proposal by William Kahan and first implemented in the Hewlett-Packard HP-41C calculator in 1979 (referred to under "LN1" in the display, only), some calculators, operating systems (for example Berkeley UNIX 4.3BSD[17]), computer algebra systems and programming languages (for example C99[18]) provide a special natural logarithm plus 1 function, alternatively named LNP1,[19][20] or log1p[18] to give more accurate results for logarithms close to zero by passing arguments x, also close to zero, to a function log1p(x), which returns the value ln(1+x), instead of passing a value y close to 1 to a function returning ln(y).[18][19][20] The function log1p avoids in the floating point arithmetic a near cancelling of the absolute term 1 with the second term from the Taylor expansion of the natural logarithm. This keeps the argument, the result, and intermediate steps all close to zero where they can be most accurately represented as floating-point numbers.[19][20]

In addition to base e, the IEEE 754-2008 standard defines similar logarithmic functions near 1 for binary and decimal logarithms: log2(1 + x) and log10(1 + x).

Similar inverse functions named "expm1",[18] "expm"[19][20] or "exp1m" exist as well, all with the meaning of expm1(x) = exp(x) − 1.[nb 2]

An identity in terms of the inverse hyperbolic tangent,

$$\operatorname{log1p}(x) = \log(1 + x) = 2 \operatorname{artanh}\left( \frac{x}{2 + x} \right),$$

gives a high precision value for small values of x on systems that do not implement log1p(x).
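The loss of accuracy that log1p avoids is easy to reproduce. The sketch below uses Python's `math.log1p` and `math.atanh` (both part of the standard library) and compares three ways of evaluating ln(1 + x) for a small x; the variable names are chosen for this example.

```python
import math

x = 1e-10

naive = math.log(1.0 + x)                    # 1.0 + x is rounded before the logarithm is taken
accurate = math.log1p(x)                     # dedicated function, no premature rounding
via_atanh = 2.0 * math.atanh(x / (2.0 + x))  # inverse hyperbolic tangent identity

reference = x - x * x / 2.0                  # first two Taylor terms, ample for such a small x

print(naive, accurate, via_atanh, reference)
print(abs(naive - reference) / reference)     # relative error of the naive form
print(abs(accurate - reference) / reference)  # relative error of log1p
```

The naive form loses roughly half of the significant digits here, because the addition 1.0 + x discards most of the low-order bits of x.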

Computational complexity

The computational complexity of computing the natural logarithm using the arithmetic-geometric mean (for both of the above methods) is $O(M(n) \ln n)$. Here, n is the number of digits of precision at which the natural logarithm is to be evaluated, and M(n) is the computational complexity of multiplying two n-digit numbers.

Continued fractions

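While no simple continued fractions are available, several generalized continued fractions exist, including:

$$\ln(1 + x) = \cfrac{x}{1 - 0x + \cfrac{1^2 x}{2 - 1x + \cfrac{2^2 x}{3 - 2x + \cfrac{3^2 x}{4 - 3x + \ddots}}}}$$

$$\ln\left(1 + \frac{x}{y}\right) = \cfrac{x}{y + \cfrac{1x}{2 + \cfrac{1x}{3y + \cfrac{2x}{2 + \cfrac{2x}{5y + \cfrac{3x}{2 + \ddots}}}}}} = \cfrac{2x}{2y + x - \cfrac{(1x)^2}{3(2y + x) - \cfrac{(2x)^2}{5(2y + x) - \cfrac{(3x)^2}{7(2y + x) - \ddots}}}}$$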

These continued fractions—particularly the last—converge rapidly for values close to 1. However, the natural logarithms of much larger numbers can easily be computed, by repeatedly adding those of smaller numbers, with similarly rapid convergence.

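For example, since $2 = 1.25^3 \times 1.024$, the natural logarithm of 2 can be computed as:

$$\ln 2 = 3 \ln\left(1 + \frac{1}{4}\right) + \ln\left(1 + \frac{3}{125}\right) = \cfrac{6}{9 - \cfrac{1^2}{27 - \cfrac{2^2}{45 - \cfrac{3^2}{63 - \ddots}}}} + \cfrac{6}{253 - \cfrac{3^2}{759 - \cfrac{6^2}{1265 - \cfrac{9^2}{1771 - \ddots}}}}.$$

Furthermore, since $10 = 1.25^{10} \times 1.024^3$, even the natural logarithm of 10 can be computed similarly as:

$$\ln 10 = 10 \ln\left(1 + \frac{1}{4}\right) + 3 \ln\left(1 + \frac{3}{125}\right) = \cfrac{20}{9 - \cfrac{1^2}{27 - \cfrac{2^2}{45 - \cfrac{3^2}{63 - \ddots}}}} + \cfrac{18}{253 - \cfrac{3^2}{759 - \cfrac{6^2}{1265 - \cfrac{9^2}{1771 - \ddots}}}}.$$

The reciprocal of the natural logarithm can be also written in this way:

$$\frac{1}{\ln(x)} = \frac{2x}{x^2 - 1} \sqrt{\frac{1}{2} + \frac{x^2 + 1}{4x}} \sqrt{\frac{1}{2} + \frac{1}{2} \sqrt{\frac{1}{2} + \frac{x^2 + 1}{4x}}} \cdots$$

For example:

$$\frac{1}{\ln(2)} = \frac{4}{3} \sqrt{\frac{1}{2} + \frac{5}{8}} \sqrt{\frac{1}{2} + \frac{1}{2} \sqrt{\frac{1}{2} + \frac{5}{8}}} \cdots$$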

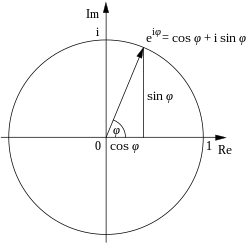
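The generalized continued fraction ln(1 + x/y) = 2x / (2y + x − (1x)² / (3(2y + x) − (2x)² / (5(2y + x) − …))) can be evaluated from the innermost level outward. The sketch below truncates it at a fixed depth; the function name and the default depth are assumptions of this example. It then combines the values for 1 + 1/4 and 1 + 3/125 to obtain ln 2 and ln 10 from the factorizations 2 = 1.25³ × 1.024 and 10 = 1.25¹⁰ × 1.024³.

```python
import math

def ln1p_ratio_cf(x: float, y: float, depth: int = 12) -> float:
    """Truncated continued fraction for ln(1 + x/y), evaluated bottom-up."""
    base = 2.0 * y + x
    tail = (2 * depth + 1) * base              # innermost denominator after truncation
    for k in range(depth, 0, -1):
        # level k has denominator (2k - 1)(2y + x) - (k x)^2 / (next level)
        tail = (2 * k - 1) * base - (k * x) ** 2 / tail
    return 2.0 * x / tail

ln_2 = 3 * ln1p_ratio_cf(1, 4) + ln1p_ratio_cf(3, 125)
ln_10 = 10 * ln1p_ratio_cf(1, 4) + 3 * ln1p_ratio_cf(3, 125)
print(ln_2, math.log(2.0))
print(ln_10, math.log(10.0))
```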

Complex logarithms

The exponential function can be extended to a function which gives a complex number as $e^z$ for any arbitrary complex number z; simply use the infinite series with x = z complex. This exponential function can be inverted to form a complex logarithm that exhibits most of the properties of the ordinary logarithm. There are two difficulties involved: no x has $e^x = 0$; and it turns out that $e^{2i\pi} = 1 = e^0$. Since the multiplicative property still works for the complex exponential function, $e^z = e^{z + 2ki\pi}$, for all complex z and integers k.

So the logarithm cannot be defined for the whole complex plane, and even then it is multi-valued: any complex logarithm can be changed into an "equivalent" logarithm by adding any integer multiple of 2iπ at will. The complex logarithm can only be single-valued on the cut plane. For example, ln i = iπ/2 or 5iπ/2 or −3iπ/2, etc.; and although $i^4 = 1$, 4 ln i can be defined as 2iπ, or 10iπ or −6iπ, and so on.
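The multi-valued behaviour can be seen with Python's standard `cmath` module, whose `cmath.log` returns the principal value. The sketch below lists a few of the values of ln i, which differ by multiples of 2πi, and checks that each one exponentiates back to i; the variable names are chosen for this example.

```python
import cmath
import math

principal = cmath.log(1j)   # principal value of ln i, equal to i*pi/2
branches = [principal + 2j * math.pi * k for k in (-1, 0, 1)]

for w in branches:
    # every branch value is a valid logarithm: exp(w) returns i up to rounding
    print(w, cmath.exp(w))
```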

Figure: plots of the natural logarithm function on the complex plane (principal branch). Panels: z = Re(ln(x + yi)); z = |Im(ln(x + yi))|; z = |ln(x + yi)|; superposition of the previous three graphs.

See also

- Iterated logarithm

- Napierian logarithm

- List of logarithmic identities

- Logarithm of a matrix

- Logarithmic coordinates of an element of a Lie group.

- Logarithmic differentiation

- Logarithmic integral function

- Nicholas Mercator – first to use the term natural logarithm

- Polylogarithm

- Von Mangoldt function

Notes

- [nb 1] Including C, C++, Java, SAS, MATLAB, Mathematica, Fortran, and some BASIC dialects.

- [nb 2] For a similar approach to reduce round-off errors of calculations for certain input values see trigonometric functions like versine, vercosine, coversine, covercosine, haversine, havercosine, hacoversine, hacovercosine, exsecant and excosecant.

References

- [1] Sloane, N. J. A. (ed.). "Sequence A001113 (Decimal expansion of e)". The On-Line Encyclopedia of Integer Sequences. OEIS Foundation.
- [2] Hardy, G.H.; Wright, E.M. (1975). An Introduction to the Theory of Numbers (4th ed.). Oxford. Footnote to paragraph 1.7: "log x is, of course, the 'Naperian' logarithm of x, to base e. 'Common' logarithms have no mathematical interest".
- [3] Mortimer, Robert G. (2005). Mathematics for physical chemistry (3rd ed.). Academic Press. p. 9. ISBN 0-12-508347-5.
- [4] Weisstein, Eric W. "Natural Logarithm". mathworld.wolfram.com. Retrieved 2020-08-29.
- [5] "Rules, Examples, & Formulas". Logarithm. Encyclopedia Britannica. Retrieved 2020-08-29.
- [6] Burn, R.P. (2001). "Alphonse Antonio de Sarasa and logarithms". Historia Mathematica. 28: 1–17. doi:10.1006/hmat.2000.2295.
- [7] O'Connor, J. J.; Robertson, E. F. (September 2001). "The number e". The MacTutor History of Mathematics archive. Retrieved 2009-02-02.
- [8] Cajori, Florian (1991). A History of Mathematics (5th ed.). AMS Bookstore. p. 152. ISBN 0-8218-2102-4.
- [9] "Logarithmic Expansions" at Math2.org.
- [10] Leonhard Euler, Introductio in Analysin Infinitorum. Tomus Primus. Bousquet, Lausanne 1748. Exemplum 1, p. 228; quoque in: Opera Omnia, Series Prima, Opera Mathematica, Volumen Octavum, Teubner 1922.
- [11] Ruffa, Anthony. "A Procedure for Generating Infinite Series Identities" (PDF). International Journal of Mathematics and Mathematical Sciences. Retrieved 2022-02-27. (Page 3654, equation 2.6)
- [12] For a detailed proof see for instance: George B. Thomas, Jr and Ross L. Finney, Calculus and Analytic Geometry, 5th edition, Addison-Wesley 1979, Section 6-5 pages 305-306.
- [13] Sloane, N. J. A. (ed.). "Sequence A002392 (Decimal expansion of natural logarithm of 10)". The On-Line Encyclopedia of Integer Sequences. OEIS Foundation.
- [14] Sasaki, T.; Kanada, Y. (1982). "Practically fast multiple-precision evaluation of log(x)". Journal of Information Processing. 5 (4): 247–250. Retrieved 2011-03-30.
- [15] Ahrendt, Timm (1999). "Fast Computations of the Exponential Function". Stacs 99. Lecture Notes in Computer Science. Vol. 1564. pp. 302–312. doi:10.1007/3-540-49116-3_28. ISBN 978-3-540-65691-3.
- [16] Borwein, Jonathan M.; Borwein, Peter B. (1987). Pi and the AGM: A Study in Analytic Number Theory and Computational Complexity (First ed.). Wiley-Interscience. ISBN 0-471-83138-7. Page 225.
- [17] Beebe, Nelson H. F. (2017-08-22). "Chapter 10.4. Logarithm near one". The Mathematical-Function Computation Handbook - Programming Using the MathCW Portable Software Library (1 ed.). Salt Lake City, UT, USA: Springer International Publishing AG. pp. 290–292. doi:10.1007/978-3-319-64110-2. ISBN 978-3-319-64109-6. LCCN 2017947446. S2CID 30244721. Quote: "In 1987, Berkeley UNIX 4.3BSD introduced the log1p() function".
- [18] Beebe, Nelson H. F. (2002-07-09). "Computation of expm1 = exp(x)−1" (PDF). 1.00. Salt Lake City, Utah, USA: Department of Mathematics, Center for Scientific Computing, University of Utah. Retrieved 2015-11-02.
- [19] HP 48G Series – Advanced User's Reference Manual (AUR) (4 ed.). Hewlett-Packard. December 1994 [1993]. HP 00048-90136, 0-88698-01574-2. Retrieved 2015-09-06.
- [20] HP 50g / 49g+ / 48gII graphing calculator advanced user's reference manual (AUR) (2 ed.). Hewlett-Packard. 2009-07-14 [2005]. HP F2228-90010. Retrieved 2015-10-10.

Natural logarithm

Definitions and Notation

Inverse of the exponential function

The exponential function, denoted as $e^x$ or $\exp(x)$, where $e$ is the base of the natural logarithm (approximately 2.71828), maps real numbers to positive real numbers and serves as the counterpart to the natural logarithm. This function is continuous, strictly increasing, and one-to-one, ensuring it has an inverse over its range.[4][5]

The natural logarithm, denoted $\ln x$, is defined as the inverse of the exponential function: it is the unique real number $y$ such that $e^y = x$ for any $x > 0$. This definition establishes the natural logarithm as the function that "undoes" the exponential operation, solving equations of the form $e^y = x$ for $y$.[4][6][7]

Graphically, the natural logarithm function has a domain of all positive real numbers $(0, \infty)$, a range of all real numbers $(-\infty, \infty)$, and is strictly increasing. It has a vertical asymptote at $x = 0$, where $\ln x \to -\infty$ as $x \to 0^+$, and passes through the point $(1, 0)$. The curve starts near the y-axis on the left and rises ever more slowly to the right; it is concave down throughout its domain, so it flattens as $x$ grows but never becomes linear.[8][9][10]

Basic examples illustrate this inverse relationship: $\ln 1 = 0$ because $e^0 = 1$, and $\ln e = 1$ because $e^1 = e$. These values highlight the function's behavior at key points.[4][6]

The inverse properties are expressed as

$$e^{\ln x} = x \quad \text{for } x > 0$$

and

$$\ln(e^x) = x \quad \text{for all real } x.$$

These identities confirm the bidirectional inverse relationship between the functions.[7][5][11]

Integral representation

The natural logarithm of a positive real number $x$ can be defined as the definite integral

$$\ln x = \int_1^x \frac{1}{t}\,dt.$$

This definition arises from recognizing the natural logarithm as the antiderivative of $1/t$, normalized such that $\ln 1 = 0$.[12]

Geometrically, this integral represents the net signed area between the curve $y = 1/t$, the t-axis, and the vertical lines at $t = 1$ and $t = x$. The function describes the rectangular hyperbola $ty = 1$, so the area corresponds to the region bounded by this hyperbola from 1 to $x$. For $x > 1$, the area is positive; for $0 < x < 1$, the integral evaluates to a negative value, because the upper limit lies to the left of the lower limit even though the curve itself stays above the axis. At $x = 1$, the integral from 1 to 1 is zero, confirming $\ln 1 = 0$.[12]

An equivalent form is

$$\ln x = -\int_x^1 \frac{1}{t}\,dt,$$

which reverses the limits and introduces a negative sign; this is particularly convenient when $0 < x < 1$, as the integral then measures a positive area from $x$ to 1.[12]

This integral definition establishes the natural logarithm as the inverse of the exponential function $e^x$, where $e^x$ is the unique function satisfying $f'(x) = f(x)$ with $f(0) = 1$. To verify, apply the Fundamental Theorem of Calculus to the integral definition: $\frac{d}{dx} \ln x = \frac{1}{x}$. Now consider $\ln(e^x) = \int_1^{e^x} \frac{1}{t}\,dt$. Substitute $t = e^u$, so $dt = e^u\,du$; the limits change from $t = 1, t = e^x$ to $u = 0, u = x$, yielding

$$\int_0^x \frac{e^u}{e^u}\,du = \int_0^x du = x.$$

Thus, $\ln(e^x) = x$. Similarly, $e^{\ln x} = x$, confirming the inverse relationship.[12]

Notational conventions

The natural logarithm is most commonly denoted by $\ln x$ in mathematical writing, particularly within physics and engineering contexts, where it explicitly signifies the logarithm with base $e$.[1] In pure mathematics, especially in advanced texts and research papers, $\log x$ is frequently used as an alternative to denote the natural logarithm, with the base $e$ assumed by convention in specialized fields like number theory.[13] This usage of $\log$ contrasts with applied sciences, where $\log$ without subscript often implies the common logarithm (base 10).[1]

The notation $\ln$ derives from the French phrase logarithme naturel (natural logarithm) and was first introduced in print by Irving Stringham in his 1893 text Uniplanar Algebra.[14] Its adoption marked a historical shift from the generic "log" notation, which had previously encompassed various bases, to clearly distinguish the base-$e$ logarithm from the base-10 common logarithm amid growing use of both in calculations.[15] Less common alternatives include $\log_e x$, which explicitly specifies the base but is verbose and rarely employed in modern writing.[1]

For the complex logarithm, the principal branch is conventionally denoted by $\operatorname{Log} z$, with a capital "L" to indicate the primary value, typically defined for $z$ in the complex plane excluding the non-positive real axis.[16] In typography and typesetting, such as in LaTeX, the symbols "ln" and "Log" are rendered in upright (roman) font to signify they are operator names rather than italicized variables. LaTeX provides `\ln` and `\log` for this purpose; a capitalized "Log" has no built-in command and is typically produced with `\operatorname{Log}` from the amsmath package.[17] For real-valued functions, the domain is invariably restricted to $x > 0$, as the natural logarithm is undefined for non-positive reals.[1]

Historical Development

Origins in logarithmic concepts

The concept of logarithms originated as a computational tool to simplify multiplication and division, particularly for astronomical and navigational calculations. In 1614, Scottish mathematician John Napier published Mirifici Logarithmorum Canonis Descriptio, introducing logarithms as a method to transform products into sums, thereby easing complex arithmetic. Napier's logarithms were not based on a fixed base but were designed around the geometry of proportional parts, inspired by the need to compute sines and tangents efficiently. This innovation dramatically reduced the labor of calculations that previously required extensive manual effort.[18]

Building on Napier's work, English mathematician Henry Briggs refined the system by proposing a base-10 logarithm in correspondence with Napier around 1616–1617, leading to the publication of Arithmetica Logarithmica in 1624. Briggs's tables provided common logarithms (base 10) for numbers from 1 to 20,000 and from 90,000 to 100,000, computed to 14 decimal places, which became widely adopted for practical use in science and engineering. These tables marked a shift toward standardized logarithmic computation, emphasizing decimal convenience over Napier's more geometric approach.[19]

The foundation for the natural logarithm emerged from investigations into continuous growth processes. In 1683, Swiss mathematician Jacob Bernoulli explored compound interest with infinitesimally small intervals, deriving the limit $\lim_{n \to \infty} \left(1 + \frac{1}{n}\right)^n$, later known as the constant $e$, without recognizing its full significance. This limit provided the basis for the exponential function central to natural logarithms. Earlier, in 1668, German mathematician Nicolaus Mercator had developed an infinite series approximation for the natural logarithm, $\ln(1 + x) = x - \frac{x^2}{2} + \frac{x^3}{3} - \cdots$ for small $x$, published in Logarithmotechnia, linking it to the area under the hyperbola $y = \frac{1}{1+x}$. This series represented an early analytic expression for the function.[20]

The natural logarithm gained recognition in early calculus through the integral of $1/x$. In 1668, Scottish mathematician James Gregory demonstrated that the quadrature (area) under the curve $y = 1/x$ from 1 to $x$ yields a logarithmic function, using geometric methods to establish its properties. Concurrently, Isaac Newton incorporated this integral into his method of fluxions around 1669–1671, treating it as the inverse of the exponential and using it to solve differential equations in physics. These developments highlighted the natural logarithm's role as the antiderivative of $1/x$, distinguishing it from common logarithms by its direct connection to continuous change.[21]

Formalization and naming

The term "hyperbolic logarithm" originated with the work of Grégoire de Saint-Vincent in his 1647 publication Opus geometricum quadraturae circuli et sectionum coni, where he demonstrated that the area under the rectangular hyperbola $xy = 1$ from $x = a$ to $x = b$ depends only on the ratio $b/a$, so that abscissas in geometric progression mark off equal areas, establishing a geometric foundation for what would later be recognized as the natural logarithm.[22] This approach, further elaborated by his pupil Alphonse Antonio de Sarasa, shifted focus from arithmetic logarithms to an integral-based definition tied to hyperbolic areas, laying the groundwork for its transition to the "natural" designation in analytical mathematics.

Leonhard Euler advanced the formalization of this function beginning in 1728, when he first employed the letter $e$ to denote the base of the logarithm in an unpublished manuscript, defining it through its infinite series expansion and linking it to exponential growth.[23] In his 1736 treatise Mechanica sive motus scientia analytice exposita, Euler incorporated $e$ as the base of natural logarithms in integral expressions for motion under resistance, marking its earliest printed appearance and demonstrating its utility in solving differential equations of physical systems.[24] Euler justified the "natural" label by emphasizing the base $e$'s emergence from limits such as continuous compounding (the limit of $\left(1 + \frac{1}{n}\right)^n$ as $n$ approaches infinity) and its central role in differential equations like $\frac{dy}{dx} = y$, where the exponential function is its own derivative, simplifying calculus operations without arbitrary constants.[20]

Euler solidified this framework in his 1748 Introductio in analysin infinitorum, providing a rigorous series definition of $e$ and explicitly naming logarithms to this base as "natural or hyperbolic," with the latter term retaining the hyperbolic area connection while "natural" highlighted its intrinsic analytical properties.[25] By the 19th century, the natural logarithm achieved widespread standardization in mathematical texts and applications, as seen in Carl Friedrich Gauss's astronomical computations in Theoria motus corporum coelestium (1809), where it facilitated precise orbital calculations, and in subsequent analytic works that integrated it as the standard for calculus and number theory.[26] This adoption reflected its foundational status in rigorous analysis, supplanting earlier terminological variations.

Fundamental Properties

Algebraic and order properties

The natural logarithm, denoted $\ln x$, is defined for all positive real numbers, so its domain is the open interval $(0, \infty)$. The range of $\ln x$ is the entire set of real numbers $\mathbb{R}$, meaning that for every real number $y$, there exists a unique $x > 0$ such that $\ln x = y$. This surjectivity onto $\mathbb{R}$ follows from the function's continuous and unbounded behavior on its domain.[27][28]

As $x$ approaches the boundaries of its domain, $\ln x$ exhibits divergent limits: $\lim_{x \to 0^+} \ln x = -\infty$ and $\lim_{x \to \infty} \ln x = \infty$. These limits underscore the function's asymptotic properties, with $\ln x$ decreasing without bound near 0 and increasing without bound as $x$ grows large. The natural logarithm is strictly increasing on $(0, \infty)$, satisfying $\ln a < \ln b$ if and only if $a < b$ for positive $a$ and $b$. This monotonicity is a direct consequence of the first derivative $\frac{1}{x} > 0$ for all $x > 0$, ensuring the function preserves order on the positive reals.[27][29][30]

A fundamental inequality for the natural logarithm is $\ln x \le x - 1$ for all $x > 0$, with equality holding if and only if $x = 1$. This inequality captures the function's growth relative to its tangent line at $x = 1$, where the derivative is 1. Applications of Bernoulli's inequality, which states that $(1 + x)^n \ge 1 + nx$ for natural numbers $n$ and $x \ge -1$, extend to bounding logarithmic expressions; for instance, it aids in deriving log-concavity-based estimates for sequences and means involving $\ln x$, such as in generalizations of Maclaurin's inequality.[31][32]

The concavity of $\ln x$ is evident from its second derivative, $-\frac{1}{x^2} < 0$ for all $x > 0$, confirming that the function is strictly concave down on $(0, \infty)$. This negative second derivative implies that the graph of $\ln x$ lies below any of its tangent lines, reinforcing the inequality $\ln x \le x - 1$ and enabling Jensen's inequality applications for convex combinations in the logarithm's domain.[27][33]

Change of base and identities

The change of base formula expresses the natural logarithm in terms of logarithms with any other positive base $b \ne 1$:

$$\ln x = \frac{\log_b x}{\log_b e}$$

for $x > 0$. This identity, which holds for all valid bases, facilitates computation and theoretical analysis by converting between different logarithmic systems.[34] It underscores the natural logarithm's role as a universal reference, since any logarithm can be reduced to it via the base $e$.[35]

The natural logarithm obeys key functional identities that reflect its algebraic structure. The product rule states that $\ln(xy) = \ln x + \ln y$ for all $x, y > 0$, allowing the logarithm of a product to be decomposed into a sum.[35] Similarly, the power rule gives $\ln(x^r) = r \ln x$ for $x > 0$ and real $r$, which extends the function's behavior under exponentiation.[34] These properties derive from the definition of the logarithm as the inverse of exponentiation and are foundational for simplifying expressions in analysis.[35]

As the inverse of the exponential function with base $e$, the natural logarithm satisfies $\ln(e^x) = x$ for all real $x$ and $e^{\ln x} = x$ for $x > 0$. These reciprocal relations highlight the bijective correspondence between the natural logarithm and the exponential on the positive reals.[1] A specific case of the change of base relates it to the common logarithm (base 10): $\ln x = \ln(10) \cdot \log_{10} x$ for $x > 0$, providing a practical link for numerical evaluations.[34]

Calculus Aspects

Derivative

The derivative of the natural logarithm function $f(x) = \ln x$, defined for $x > 0$, is given by

$$\frac{d}{dx} \ln x = \frac{1}{x}.$$

This result follows from the inverse function theorem, as $\ln x$ is the inverse of the exponential function $e^x$, whose derivative is itself. To derive it, let $y = \ln x$, so $e^y = x$. Differentiating both sides implicitly with respect to $x$ yields $e^y \frac{dy}{dx} = 1$, and solving for the derivative gives $\frac{dy}{dx} = \frac{1}{e^y} = \frac{1}{x}$.[5][36] Alternatively, using the integral definition $\ln x = \int_1^x \frac{1}{t}\,dt$, the fundamental theorem of calculus implies that the derivative is the integrand evaluated at the upper limit, yielding $\frac{1}{x}$.[27][37]

The higher-order derivatives of $\ln x$ follow a pattern: the $n$th derivative for $n \ge 1$ is

$$\frac{d^n}{dx^n} \ln x = \frac{(-1)^{n-1} (n-1)!}{x^n}.$$

This can be verified by successive differentiation, starting from the first derivative $x^{-1}$ and applying the power rule repeatedly.[38]

In applications, the derivative interprets the rate of change of $\ln x$ as the reciprocal of the input, which models relative growth rates in exponential processes. For instance, if a quantity grows exponentially as $y = C e^{kt}$, then the relative growth rate $\frac{y'}{y} = k$ equals the derivative of $\ln y$ with respect to $t$, providing a measure of proportional change independent of scale.[39]

Integrals involving the natural logarithm

The natural logarithm serves as the antiderivative of the reciprocal function, providing a fundamental result in calculus. Specifically, the indefinite integral of $1/x$ is given by

$$\int \frac{dx}{x} = \ln|x| + C,$$

where $C$ is the constant of integration, and the absolute value ensures the expression is defined for $x \neq 0$.[40] This formula arises because the derivative of $\ln|x|$ is $1/x$ for $x > 0$ and $x < 0$, confirming the antiderivative relationship.[40]

For definite integrals over positive intervals, the natural logarithm evaluates the accumulated area under the curve $y = 1/x$. The integral from $a$ to $b$, where $0 < a < b$, yields

$$\int_a^b \frac{dx}{x} = \ln b - \ln a = \ln\frac{b}{a},$$

which measures the change in logarithmic scale between the bounds.[41] This result follows directly from applying the Fundamental Theorem of Calculus to the antiderivative.[41]

A broader class of integrals leverages substitution to reveal the natural logarithm's role. For a differentiable function $f$ with $f(x) \neq 0$, the integral $\int \dfrac{f'(x)}{f(x)}\,dx = \ln|f(x)| + C$ holds, as the substitution $u = f(x)$ transforms the integrand into $1/u$.[42] For instance, $\int \dfrac{2x}{x^2+1}\,dx$ substitutes $u = x^2 + 1$, yielding $\ln(x^2+1) + C$.[42]

In applications, the natural logarithm quantifies areas and measures uncertainty. The definite integral $\int_1^a \frac{dx}{x} = \ln a$ represents the area under the hyperbola $y = 1/x$ from $1$ to $a$, a geometric interpretation dating to 17th-century discoveries.[43] In probability, differential entropy for a continuous random variable $X$ with density $f$, defined as $h(X) = -\int f(x) \ln f(x)\,dx$, uses the natural logarithm to measure average uncertainty in nats.[44]

Improper integrals highlight the logarithm's behavior at infinity. The integral $\int_1^\infty \frac{dx}{x}$ diverges, as $\lim_{b \to \infty} \ln b = \infty$, which mirrors the divergence of the harmonic series.[45]

Series and Approximations

Taylor series expansion

The Taylor series expansion of the natural logarithm function, centered at $x = 1$ (or equivalently, for $\ln(1+x)$ centered at $x = 0$), provides a power series representation that converges to the function within a specific interval. This expansion is particularly useful for approximating $\ln(1+x)$ near $x = 0$, where $\ln 1 = 0$. The series takes the form

$$\ln(1+x) = \sum_{n=1}^{\infty} \frac{(-1)^{n+1}}{n} x^n = x - \frac{x^2}{2} + \frac{x^3}{3} - \frac{x^4}{4} + \cdots$$[1]

This representation, known historically as the Mercator series, was first published by the German mathematician Nicolaus Mercator in his 1668 treatise Logarithmotechnia.[46]

One standard derivation of this series integrates the geometric series expansion of $\frac{1}{1+x}$. The geometric series $\frac{1}{1+t} = \sum_{n=0}^{\infty} (-1)^n t^n$ for $|t| < 1$ is integrated term by term from 0 to $x$, yielding

$$\ln(1+x) = \sum_{n=0}^{\infty} \frac{(-1)^n}{n+1} x^{n+1},$$

with the constant of integration zero since $\ln 1 = 0$.[47] Alternatively, the series can be obtained directly via the Taylor theorem by computing successive derivatives of $\ln(1+x)$ at $x = 0$: the $n$th derivative (for $n \geq 1$) is $\dfrac{(-1)^{n-1}(n-1)!}{(1+x)^n}$, so evaluating at 0 and dividing by $n!$ gives coefficients $\dfrac{(-1)^{n-1}}{n}$.[1]

The radius of convergence is 1, determined by the ratio test on the series terms, ensuring absolute convergence for $|x| < 1$. At the endpoints $x = 1$ (where the series becomes the alternating harmonic series summing to $\ln 2$) and $x = -1$ (the negative harmonic series, which diverges), conditional convergence or divergence applies, respectively.[1] As an alternating series for $0 < x \leq 1$, it allows error bounds via the alternating series estimation theorem, where the remainder after $n$ terms is less than the next term's magnitude, facilitating practical approximations like $\ln(1+x) \approx x - \frac{x^2}{2}$ for small $x$.[47]

Continued fraction representations

The natural logarithm admits a continued fraction representation through its connection to the inverse hyperbolic tangent function, which provides an effective means for approximation in the real domain. The inverse hyperbolic tangent is given by $\operatorname{artanh} z = \frac{1}{2} \ln\frac{1+z}{1-z}$ for $|z| < 1$. Consequently, $\ln\frac{1+z}{1-z} = 2 \operatorname{artanh} z$. This yields the continued fraction

$$\ln\frac{1+z}{1-z} = \cfrac{2z}{1 - \cfrac{z^2}{3 - \cfrac{4z^2}{5 - \cfrac{9z^2}{7 - \ddots}}}}$$

for $|z| < 1$, where the partial numerators are successive squares multiplied by $z^2$ and the partial denominators are odd integers starting from 1.[48] This representation converges more rapidly than the corresponding Taylor series expansion for values of $z$ away from zero, offering superior accuracy with fewer terms in practical computations.[49]

Continued fraction expansions for the natural logarithm trace their development to the 18th century, particularly through Leonhard Euler's foundational work on transforming series into such forms, building upon 17th-century advancements in continued fractions by William Brouncker and others.[50][51]

As an illustration, the value $\ln 2$ can be approximated by setting $z = 1/3$, since $\frac{1 + 1/3}{1 - 1/3} = 2$, yielding $\ln 2 = 2 \operatorname{artanh}(1/3)$. Substituting into the continued fraction and truncating after a few levels produces rational approximants that converge quickly to $\ln 2 \approx 0.693147$.[48]

Numerical Computation
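As a numerical illustration of the continued fraction $\ln\frac{1+z}{1-z} = 2z / (1 - z^2/(3 - 4z^2/(5 - \cdots)))$ discussed in the preceding section, the following minimal sketch evaluates a truncated fraction from the innermost level outward. It compares the result with the same number of terms of the series $2\sum_k z^{2k+1}/(2k+1)$ at $z = 1/3$. The function names `ln_ratio_cf` and `ln_ratio_series` and the `depth` parameter are illustrative choices, not taken from any library.

```python
import math
from fractions import Fraction


def ln_ratio_cf(z, depth):
    """Truncated continued fraction for ln((1+z)/(1-z)), |z| < 1.

    `depth` is the number of odd partial denominators (1, 3, 5, ...) kept.
    """
    z2 = z * z
    # Start at the innermost partial denominator and work outward.
    tail = Fraction(2 * depth - 1)
    for k in range(depth - 1, 0, -1):
        tail = (2 * k - 1) - (k * k * z2) / tail
    return 2 * z / tail


def ln_ratio_series(z, terms):
    """Partial sum of 2 * sum_{k>=0} z^(2k+1) / (2k+1)."""
    return 2 * sum(z ** (2 * k + 1) / (2 * k + 1) for k in range(terms))


z = Fraction(1, 3)  # (1 + z) / (1 - z) = 2, so both functions approximate ln 2
for depth in (1, 2, 3, 4):
    cf = ln_ratio_cf(z, depth)
    ts = ln_ratio_series(z, depth)
    print(depth, cf, abs(float(cf) - math.log(2)), abs(float(ts) - math.log(2)))
```

Exact rational arithmetic via `fractions.Fraction` keeps the approximants as fractions, which makes the truncation error visible without floating-point noise; passing a `float` for `z` also works if only a decimal value is needed.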

Algorithms and methods

Computing the natural logarithm $\ln(x)$ for $x > 0$ in numerical libraries generally begins with range reduction to bring the argument into a convenient interval, typically $[1/2, 2]$ or $[\sqrt{2}/2, \sqrt{2}]$, where approximations are more efficient. For any $x > 0$, this is achieved by expressing $x = 2^k \cdot y$ with $y \in [1, 2)$ and $k = \lfloor \log_2 x \rfloor$, yielding $\ln(x) = k \ln(2) + \ln(y)$.[52] This step relies on a precomputed or efficiently calculable value of $\ln(2)$ and reduces the problem to evaluating $\ln(y)$ near 1, minimizing errors in subsequent approximations.[53]

The arithmetic-geometric mean (AGM) iteration provides a rapidly converging method for computing $\ln(2)$ and generalizes to arbitrary $x$ through connections to complete elliptic integrals. For $\ln(2)$, the method uses appropriate initial values derived from elliptic integral relations, where the iteration defines $a_n = (a_{n-1} + b_{n-1})/2$ and $b_n = \sqrt{a_{n-1} b_{n-1}}$, converging quadratically to enable high-precision evaluation after $O(\log p)$ steps for $p$ bits.[54] Generalizations for $\ln(x)$ scale the argument via powers of 2 and apply the AGM to compute intervals bounding elliptic integral expressions like $I(1, b) = \int_0^{\pi/2} dt / \sqrt{1 - b \sin^2 t}$, relating to $\log((1 + \sqrt{b})/(1 - \sqrt{b}))$ via asymptotic properties, at a cost of about $5 \log p$ multiplications and $2 \log p$ square roots for $p$-bit precision.[55]

Binary splitting accelerates the evaluation of series expansions for $\ln(x)$, particularly for high-precision computations of constants like $\ln(2)$ or $\ln(p)$ for primes $p$. This technique recursively divides the series sum into a binary tree, computing partial numerators and denominators so that large intermediate values are combined only near the root, yielding $O(M(d) \log^2 d)$ time for $d$ digits, where $M(d)$ is the multiplication time, and outperforming naive summation by orders of magnitude for $d > 10^6$.[56] It is especially effective for hypergeometric-type series tailored to logarithms, such as Ramanujan-style identities, enabling multiprecision $\ln(x)$ via argument reduction followed by accelerated summation.[57]

Newton's method offers quadratic convergence for solving $e^y = x$ to find $y = \ln(x)$, starting from an initial guess like $y_0 = \log_2(x) \cdot \ln(2)$ approximated via bit manipulation. The iteration is $y_{n+1} = y_n - (e^{y_n} - x)/e^{y_n} = y_n + (x e^{-y_n} - 1)$; some implementations replace it with cubically convergent variants such as Halley's method. After range reduction, few steps (typically 3-5) are required for double-precision accuracy in floating-point arithmetic.[58] This approach is favored in software libraries for its simplicity and efficiency when combined with hardware exponentiation.

IEEE 754-compliant implementations of $\ln(x)$ in hardware often combine range reduction with polynomial approximations or table lookups for the mantissa, aiming for results accurate to within about one ulp (IEEE 754 recommends, but does not require, correct rounding for the logarithm). For single precision, architectures decompose the IEEE 754 format into exponent $E$ and mantissa $m \in [1, 2)$, compute $\ln(m)$ via minimax polynomials over subintervals, and add $E \ln(2)$ with fused operations to meet the accuracy target, achieving latencies of 10-20 cycles on modern FPUs.[59] Double-precision variants extend this with higher-degree approximations or iterative refinement, prioritizing fused multiply-add for error control.[53] Taylor series may serve as a baseline for arguments near 1, but are typically augmented by these methods for broader ranges.[56]

Special values and precision
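Before looking at specific constants, the range reduction and Newton iteration described under algorithms and methods can be sketched in a few lines and applied to the arguments 2 and 10. This is a minimal illustration under stated assumptions, not how any particular library implements its logarithm: the name `ln_newton`, the fixed step count, and the crude starting guess $y - 1$ are choices made here, and `math.exp` stands in for the hardware exponential the text mentions.

```python
import math

LN2 = 0.6931471805599453  # precomputed ln(2), assumed available as in the text


def ln_newton(x, steps=6):
    """Approximate ln(x) by range reduction plus Newton's method on e^t = y."""
    if x <= 0.0:
        raise ValueError("ln_newton requires x > 0")
    # frexp gives x = m * 2**e with m in [0.5, 1); rescale so that y is in [1, 2).
    m, e = math.frexp(x)
    y, k = 2.0 * m, e - 1
    # Starting guess: first Taylor term of ln(y) around y = 1.
    t = y - 1.0
    for _ in range(steps):
        # Newton step for f(t) = e^t - y, written as t + (y * e^(-t) - 1).
        t += y * math.exp(-t) - 1.0
    return k * LN2 + t


for value in (2.0, 10.0):
    print(value, ln_newton(value), math.log(value))
```

Because the reduced argument lies in $[1, 2)$, the starting error is below about 0.31, and the quadratic convergence of Newton's method brings it to the level of double-precision rounding within roughly five steps.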

The natural logarithm of 10, denoted $\ln 10$, is approximately 2.302585092994045684. This value is crucial for converting between natural and common (base-10) logarithms, as $\log_{10} x = \ln x / \ln 10$, enabling efficient computation across logarithmic bases in numerical applications. One efficient method to compute $\ln 10$ to high precision involves the arithmetic-geometric mean (AGM) iteration, which relates the logarithm to elliptic integrals and converges quadratically; starting from suitably scaled initial values $a_0$ and $b_0$, the iteration yields tight bounds around $\ln 10$ after a number of steps on the order of $\log_2 p$ for $p$ bits of precision.

Similarly, the natural logarithm of 2, $\ln 2$, is approximately 0.693147180559945. High-precision evaluation of $\ln 2$ employs binary splitting on series expansions with rational terms, using a divide-and-conquer approach on rational sums to achieve $O(M(p) \log^2 p)$ time complexity, where $p$ is the bit precision and $M(p)$ is the multiplication time for $p$-bit numbers.

For arbitrary-precision computation beyond standard floating-point limits, libraries like mpmath in Python support natural logarithms to thousands of decimal digits at a user-selected working precision; for instance, `mpmath.log(2)` gives $\ln 2$ to 50 decimal places as 0.69314718055994530941723212145817656807550013436025 (truncated). The Arb library (now integrated into FLINT), a C library for ball arithmetic, computes logarithms with rigorous error bounds using strategies like Ziv's method, increasing the number of guard bits exponentially until the desired precision is met, and supports computations up to millions of bits while tracking numerical uncertainty.

In standard double-precision floating-point arithmetic (IEEE 754), the natural logarithm is typically computed with a small relative error, bounded by a few multiples of the machine epsilon $\varepsilon$; for $\ln(1+x)$ with small $x$, the relative error is at most $5\varepsilon$ under conditions including a guard digit and computation of the logarithm to within half a unit in the last place (ulp). More generally, the relative error in floating-point representation and operations, including logarithms, remains on the order of $\varepsilon$, ensuring that the computed value satisfies $\widehat{\ln}(x) = \ln(x)\,(1 + \delta)$ with $|\delta| = O(\varepsilon)$ for well-conditioned inputs, that is, for $x$ not too close to 1.
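The sensitivity of $\ln(1+x)$ for small $x$ can be seen directly in double precision. The sketch below contrasts the naive expression `math.log(1 + x)` with a classical rewriting that divides out the rounding error committed in forming $1 + x$, using the standard library's `math.log1p` as the reference. The function name `log1p_via_ratio` is an illustrative choice, and the rewriting is assumed here to be the kind of formula the error bound above refers to.

```python
import math


def log1p_via_ratio(x):
    """Compute ln(1 + x) for small x >= 0 using only log, *, / and -."""
    w = 1.0 + x
    if w == 1.0:
        # x is below the rounding threshold of 1.0; ln(1 + x) rounds to x.
        return x
    # w - 1.0 is exact here, and the ratio log(w) / (w - 1) varies slowly,
    # so the rounding error made in forming w largely cancels.
    return x * math.log(w) / (w - 1.0)


x = 1e-10
reference = math.log1p(x)
naive = math.log(1.0 + x)
print("naive relative error:   ", abs(naive - reference) / reference)
print("rewritten relative error:", abs(log1p_via_ratio(x) - reference) / reference)
```

In practice `math.log1p` is the function to call; the rewriting is shown only to make the source of the error, and its remedy, explicit.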

The computational complexity of evaluating the natural logarithm to $p$ bits of precision is $O(M(p) \log p)$, where $M(p)$ denotes the time for $p$-bit multiplication, achieved via AGM-based methods requiring $O(\log p)$ iterations of arithmetic operations whose cost scales with $M(p)$. This bound applies to constants such as $\ln 10$ computed via the AGM; binary splitting, as used for $\ln 2$, costs $O(M(p) \log^2 p)$, a logarithmic factor more. In either case, high-precision logarithm computation is feasible on modern hardware for very large digit counts.


![{\displaystyle {\begin{aligned}\ln ab=\int _{1}^{ab}{\frac {1}{x}}\,dx&=\int _{1}^{a}{\frac {1}{x}}\,dx+\int _{a}^{ab}{\frac {1}{x}}\,dx\\[5pt]&=\int _{1}^{a}{\frac {1}{x}}\,dx+\int _{1}^{b}{\frac {1}{at}}a\,dt\\[5pt]&=\int _{1}^{a}{\frac {1}{x}}\,dx+\int _{1}^{b}{\frac {1}{t}}\,dt\\[5pt]&=\ln a+\ln b.\end{aligned}}}](https://wikimedia.org/api/rest_v1/media/math/render/svg/d7210259ed243c3b86451e39eb2b50dccc7832e1)

![{\displaystyle \ln({\sqrt[{y}]{x}})=(\ln x)/y\quad {\text{for }}\;x>0\;{\text{and }}\;y\neq 0}](https://wikimedia.org/api/rest_v1/media/math/render/svg/196be723b66e16fed1cf00d43cc450d2e93468d8)

![{\displaystyle {\begin{aligned}{\frac {d}{dx}}\ln x&=\lim _{h\to 0}{\frac {\ln(x+h)-\ln x}{h}}\\&=\lim _{h\to 0}\left[{\frac {1}{h}}\ln \left({\frac {x+h}{x}}\right)\right]\\&=\lim _{h\to 0}\left[\ln \left(1+{\frac {h}{x}}\right)^{\frac {1}{h}}\right]\quad &&{\text{all above for logarithmic properties}}\\&=\ln \left[\lim _{h\to 0}\left(1+{\frac {h}{x}}\right)^{\frac {1}{h}}\right]\quad &&{\text{for continuity of the logarithm}}\\&=\ln e^{1/x}\quad &&{\text{for the definition of }}e^{x}=\lim _{h\to 0}(1+hx)^{1/h}\\&={\frac {1}{x}}\quad &&{\text{for the definition of the ln as inverse function.}}\end{aligned}}}](https://wikimedia.org/api/rest_v1/media/math/render/svg/8e12dece20904dd40cd69415a900d0ee66e36793)

![{\displaystyle \ln(x)=(x-1)\prod _{k=1}^{\infty }\left({\frac {2}{1+{\sqrt[{2^{k}}]{x}}}}\right)}](https://wikimedia.org/api/rest_v1/media/math/render/svg/19a685fc50f560fbfd45cd8c625c839137a0cb42)

![{\displaystyle \ln(2)=\left({\frac {2}{1+{\sqrt {2}}}}\right)\left({\frac {2}{1+{\sqrt[{4}]{2}}}}\right)\left({\frac {2}{1+{\sqrt[{8}]{2}}}}\right)\left({\frac {2}{1+{\sqrt[{16}]{2}}}}\right)...}](https://wikimedia.org/api/rest_v1/media/math/render/svg/b7b509b3f7ce72ef554df0dfb48f10dbdc772055)

![{\displaystyle \pi =(2i+2)\left({\frac {2}{1+{\sqrt {i}}}}\right)\left({\frac {2}{1+{\sqrt[{4}]{i}}}}\right)\left({\frac {2}{1+{\sqrt[{8}]{i}}}}\right)\left({\frac {2}{1+{\sqrt[{16}]{i}}}}\right)...}](https://wikimedia.org/api/rest_v1/media/math/render/svg/438561a80d9075271b2dcd15bea94412d2683e69)

![{\displaystyle {\frac {1}{\ln(2)}}=2-{\frac {\sqrt {2}}{2+2{\sqrt {2}}}}-{\frac {\sqrt[{4}]{2}}{4+4{\sqrt[{4}]{2}}}}-{\frac {\sqrt[{8}]{2}}{8+8{\sqrt[{8}]{2}}}}\cdots }](https://wikimedia.org/api/rest_v1/media/math/render/svg/def29e93c87165f8547949c7e10896c45dc22d2f)

![{\displaystyle {\begin{aligned}\ln(1+x)&={\frac {x^{1}}{1}}-{\frac {x^{2}}{2}}+{\frac {x^{3}}{3}}-{\frac {x^{4}}{4}}+{\frac {x^{5}}{5}}-\cdots \\[5pt]&={\cfrac {x}{1-0x+{\cfrac {1^{2}x}{2-1x+{\cfrac {2^{2}x}{3-2x+{\cfrac {3^{2}x}{4-3x+{\cfrac {4^{2}x}{5-4x+\ddots }}}}}}}}}}\end{aligned}}}](https://wikimedia.org/api/rest_v1/media/math/render/svg/92f9f9bda019d60b5ac5d5fd29ea2dd952c5b90a)

![{\displaystyle {\begin{aligned}\ln \left(1+{\frac {x}{y}}\right)&={\cfrac {x}{y+{\cfrac {1x}{2+{\cfrac {1x}{3y+{\cfrac {2x}{2+{\cfrac {2x}{5y+{\cfrac {3x}{2+\ddots }}}}}}}}}}}}\\[5pt]&={\cfrac {2x}{2y+x-{\cfrac {(1x)^{2}}{3(2y+x)-{\cfrac {(2x)^{2}}{5(2y+x)-{\cfrac {(3x)^{2}}{7(2y+x)-\ddots }}}}}}}}\end{aligned}}}](https://wikimedia.org/api/rest_v1/media/math/render/svg/90abfa2132828fc8eea5d3551dfa4df25dbdfa87)

![{\displaystyle {\begin{aligned}\ln 2&=3\ln \left(1+{\frac {1}{4}}\right)+\ln \left(1+{\frac {3}{125}}\right)\\[8pt]&={\cfrac {6}{9-{\cfrac {1^{2}}{27-{\cfrac {2^{2}}{45-{\cfrac {3^{2}}{63-\ddots }}}}}}}}+{\cfrac {6}{253-{\cfrac {3^{2}}{759-{\cfrac {6^{2}}{1265-{\cfrac {9^{2}}{1771-\ddots }}}}}}}}.\end{aligned}}}](https://wikimedia.org/api/rest_v1/media/math/render/svg/bc10de9595aca079ef56e7b76a2a23af56e453da)

![{\displaystyle {\begin{aligned}\ln 10&=10\ln \left(1+{\frac {1}{4}}\right)+3\ln \left(1+{\frac {3}{125}}\right)\\[10pt]&={\cfrac {20}{9-{\cfrac {1^{2}}{27-{\cfrac {2^{2}}{45-{\cfrac {3^{2}}{63-\ddots }}}}}}}}+{\cfrac {18}{253-{\cfrac {3^{2}}{759-{\cfrac {6^{2}}{1265-{\cfrac {9^{2}}{1771-\ddots }}}}}}}}.\end{aligned}}}](https://wikimedia.org/api/rest_v1/media/math/render/svg/931b5e1a786450547bd77e466677d9a983974886)