Maxwell's equations


Maxwell's equations, or Maxwell–Heaviside equations, are a set of coupled partial differential equations that, together with the Lorentz force law, form the foundation of classical electromagnetism, classical optics, and electric and magnetic circuits. The equations provide a mathematical model for electric, optical, and radio technologies, such as power generation, electric motors, wireless communication, lenses, and radar. They describe how electric and magnetic fields are generated by charges, currents, and changes of the fields.[note 1] The equations are named after the physicist and mathematician James Clerk Maxwell, who, in 1861 and 1862, published an early form of the equations that included the Lorentz force law. Maxwell first used the equations to propose that light is an electromagnetic phenomenon. The modern form of the equations in their most common formulation is credited to Oliver Heaviside.[1]

Maxwell's equations may be combined to demonstrate how fluctuations in electromagnetic fields (waves) propagate at a constant speed in vacuum, c (299792458 m/s[2]). Known as electromagnetic radiation, these waves occur at various wavelengths to produce a spectrum of radiation from radio waves to gamma rays.

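In partial differential equation form and a coherent system of units, Maxwell's microscopic equations can be written as (top to bottom: Gauss's law, Gauss's law for magnetism, Faraday's law, Ampère–Maxwell law)

$$\begin{aligned} \nabla\cdot\mathbf{E} &= \frac{\rho}{\varepsilon_0} \\ \nabla\cdot\mathbf{B} &= 0 \\ \nabla\times\mathbf{E} &= -\frac{\partial\mathbf{B}}{\partial t} \\ \nabla\times\mathbf{B} &= \mu_0\left(\mathbf{J} + \varepsilon_0\frac{\partial\mathbf{E}}{\partial t}\right) \end{aligned}$$

with $\mathbf{E}$ the electric field, $\mathbf{B}$ the magnetic field, $\rho$ the electric charge density and $\mathbf{J}$ the current density. $\varepsilon_0$ is the vacuum permittivity and $\mu_0$ the vacuum permeability.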

The equations have two major variants:

- The microscopic equations have universal applicability but are unwieldy for common calculations. They relate the electric and magnetic fields to total charge and total current, including the complicated charges and currents in materials at the atomic scale.

- The macroscopic equations define two new auxiliary fields that describe the large-scale behaviour of matter without having to consider atomic-scale charges and quantum phenomena like spins. However, their use requires experimentally determined parameters for a phenomenological description of the electromagnetic response of materials.

The term "Maxwell's equations" is often also used for equivalent alternative formulations. Versions of Maxwell's equations based on the electric and magnetic scalar potentials are preferred for explicitly solving the equations as a boundary value problem, analytical mechanics, or for use in quantum mechanics. The covariant formulation (on spacetime rather than space and time separately) makes the compatibility of Maxwell's equations with special relativity manifest. Maxwell's equations in curved spacetime, commonly used in high-energy and gravitational physics, are compatible with general relativity.[note 2] In fact, Albert Einstein developed special and general relativity to accommodate the invariant speed of light, a consequence of Maxwell's equations, with the principle that only relative movement has physical consequences.

The publication of the equations marked the unification of a theory for previously separately described phenomena: magnetism, electricity, light, and associated radiation. Since the mid-20th century, it has been understood that Maxwell's equations do not give an exact description of electromagnetic phenomena, but are instead a classical limit of the more precise theory of quantum electrodynamics.

History of the equations

Conceptual descriptions

Gauss's law

Gauss's law describes the relationship between an electric field and electric charges: an electric field points away from positive charges and towards negative charges, and the net outflow of the electric field through a closed surface is proportional to the enclosed charge, including bound charge due to polarization of material. The coefficient of the proportion is the permittivity of free space.
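The statement that the net outflow is proportional to the enclosed charge can be checked numerically. The following is a minimal sketch, assuming a single point charge in vacuum and a cube as the closed surface; the function name `flux_through_cube`, the charge positions, and the grid resolution are illustrative choices, not part of the original text.

```python
import math

EPSILON_0 = 8.8541878128e-12  # vacuum permittivity in F/m (CODATA 2018 value)

def flux_through_cube(q, charge_pos, half_side=1.0, n=100):
    """Midpoint-rule estimate of the outward flux of E through the cube
    [-half_side, half_side]^3 for a point charge q located at charge_pos."""
    k = q / (4 * math.pi * EPSILON_0)
    h = 2 * half_side / n              # side length of one square surface patch
    total = 0.0
    for axis in range(3):              # faces with normal along x, y, z
        for sign in (-1.0, 1.0):       # the two opposite faces
            for i in range(n):
                for j in range(n):
                    point = [0.0, 0.0, 0.0]
                    point[axis] = sign * half_side
                    point[(axis + 1) % 3] = -half_side + (i + 0.5) * h
                    point[(axis + 2) % 3] = -half_side + (j + 0.5) * h
                    r = [point[m] - charge_pos[m] for m in range(3)]
                    dist = math.sqrt(r[0] ** 2 + r[1] ** 2 + r[2] ** 2)
                    # Coulomb field dotted with the outward normal sign * e_axis
                    total += k * r[axis] / dist ** 3 * sign * h * h
    return total

q = 1e-9  # 1 nC, an arbitrary test charge
inside = flux_through_cube(q, (0.2, 0.1, -0.3))   # charge inside the cube
outside = flux_through_cube(q, (2.5, 0.0, 0.0))   # charge outside the cube
print(inside * EPSILON_0 / q)    # close to 1: flux equals q / epsilon_0
print(outside * EPSILON_0 / q)   # close to 0: no enclosed charge
```

The flux does not depend on where the charge sits inside the cube, only on whether it is enclosed.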

Gauss's law for magnetism

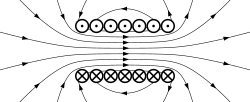

Gauss's law for magnetism states that electric charges have no magnetic analogues, called magnetic monopoles; no north or south magnetic poles exist in isolation.[3] Instead, the magnetic field of a material is attributed to a dipole, and the net outflow of the magnetic field through a closed surface is zero. Magnetic dipoles may be represented as loops of current or inseparable pairs of equal and opposite "magnetic charges". Precisely, the total magnetic flux through a Gaussian surface is zero, and the magnetic field is a solenoidal vector field.[note 3]

Faraday's law

The Maxwell–Faraday version of Faraday's law of induction describes how a time-varying magnetic field corresponds to the negative curl of an electric field.[3] In integral form, it states that the work per unit charge required to move a charge around a closed loop equals the rate of change of the magnetic flux through the enclosed surface.

Electromagnetic induction is the operating principle behind many electric generators: for example, a rotating bar magnet creates a changing magnetic field and generates an electric field in a nearby wire.
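As a rough illustration of the generator principle, the sketch below uses a simplified stand-in for the rotating magnet: a flat loop rotating in a uniform field, so that the flux is Φ(t) = B·A·cos(ωt). The field strength, loop area, and rotation frequency are assumed values chosen only for the example. The induced EMF is estimated as −dΦ/dt by a finite difference and compared with the closed-form result.

```python
import math

B_FIELD = 0.5                 # T, assumed uniform field
AREA = 0.01                   # m^2, assumed loop area
OMEGA = 2 * math.pi * 50      # rad/s, assumed 50 Hz rotation

def flux(t):
    """Magnetic flux (Wb) through the rotating loop at time t."""
    return B_FIELD * AREA * math.cos(OMEGA * t)

def emf_numeric(t, dt=1e-7):
    """EMF = -dPhi/dt, estimated with a central difference."""
    return -(flux(t + dt) - flux(t - dt)) / (2 * dt)

def emf_analytic(t):
    """Closed-form EMF for the same loop: B * A * omega * sin(omega * t)."""
    return B_FIELD * AREA * OMEGA * math.sin(OMEGA * t)

t = 0.003
print(emf_numeric(t), emf_analytic(t))  # the two values agree closely
```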

Ampère–Maxwell law

The original law of Ampère states that magnetic fields relate to electric current. Maxwell's addition states that magnetic fields also relate to changing electric fields, which Maxwell called displacement current. The integral form states that electric and displacement currents are associated with a proportional magnetic field along any enclosing curve.

Maxwell's modification of Ampère's circuital law is important because the laws of Ampère and Gauss must otherwise be adjusted for static fields.[4] As a consequence, it predicts that a rotating magnetic field occurs with a changing electric field.[3][5] A further consequence is the existence of self-sustaining electromagnetic waves which travel through empty space.
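A standard textbook case shows why the displacement current is needed: between the plates of a charging capacitor no charge flows, yet the changing electric flux carries the same "current" as the wire. The sketch below assumes an ideal parallel-plate capacitor in vacuum charged by a constant current; the plate area and current are illustrative values.

```python
EPSILON_0 = 8.8541878128e-12  # vacuum permittivity in F/m
CURRENT = 2e-3                # A, assumed constant charging current
PLATE_AREA = 1e-2             # m^2, assumed plate area

def electric_flux(t):
    """Electric flux E * A between the plates at time t (capacitor empty at t = 0)."""
    charge = CURRENT * t
    e_field = charge / (EPSILON_0 * PLATE_AREA)   # uniform field of an ideal capacitor
    return e_field * PLATE_AREA

def displacement_current(t, dt=1e-6):
    """epsilon_0 * dPhi_E/dt, estimated with a central difference."""
    return EPSILON_0 * (electric_flux(t + dt) - electric_flux(t - dt)) / (2 * dt)

print(displacement_current(1e-3))  # close to the conduction current, 2e-3 A
```

Because the displacement current in the gap equals the conduction current in the wire, the magnetic field along an enclosing curve is the same whichever spanning surface is used.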

The speed calculated for electromagnetic waves, which could be predicted from experiments on charges and currents,[note 4] matches the speed of light; indeed, light is one form of electromagnetic radiation (as are X-rays, radio waves, and others). Maxwell understood the connection between electromagnetic waves and light in 1861, thereby unifying the theories of electromagnetism and optics.

Formulation in terms of electric and magnetic fields (microscopic or in vacuum version)

In the electric and magnetic field formulation there are four equations that determine the fields for given charge and current distribution. A separate law of nature, the Lorentz force law, describes how the electric and magnetic fields act on charged particles and currents. By convention, a version of this law in the original equations by Maxwell is no longer included. The vector calculus formalism below, the work of Oliver Heaviside,[6][7] has become standard. It is rotationally invariant, and therefore mathematically more transparent than Maxwell's original 20 equations in x, y and z components. The relativistic formulations are more symmetric and Lorentz invariant. For the same equations expressed using tensor calculus or differential forms, see § Alternative formulations.

The differential and integral formulations are mathematically equivalent; both are useful. The integral formulation relates fields within a region of space to fields on the boundary and can often be used to simplify and directly calculate fields from symmetric distributions of charges and currents. On the other hand, the differential equations are purely local and are a more natural starting point for calculating the fields in more complicated (less symmetric) situations, for example using finite element analysis.[8]

Key to the notation

Symbols in bold represent vector quantities, and symbols in italics represent scalar quantities, unless otherwise indicated. The equations introduce the electric field, E, a vector field, and the magnetic field, B, a pseudovector field, each generally having a time and location dependence. The sources are

- the total electric charge density (total charge per unit volume), ρ, and

- the total electric current density (total current per unit area), J.

The universal constants appearing in the equations (the first two explicitly only in the SI formulation) are:

- the permittivity of free space, ε0,

- the permeability of free space, μ0, and

- the speed of light, $c = 1/\sqrt{\varepsilon_0\mu_0}$.

Differential equations

In the differential equations,

- the nabla symbol, ∇, denotes the three-dimensional gradient operator, del,

- the ∇⋅ symbol (pronounced "del dot") denotes the divergence operator,

- the ∇× symbol (pronounced "del cross") denotes the curl operator.

Integral equations

In the integral equations,

- Ω is any volume with closed boundary surface ∂Ω, and

- Σ is any surface with closed boundary curve ∂Σ,

The equations are a little easier to interpret with time-independent surfaces and volumes. Time-independent surfaces and volumes are "fixed" and do not change over a given time interval. For example, since the surface is time-independent, we can bring the differentiation under the integral sign in Faraday's law:

$$\frac{d}{dt}\iint_\Sigma \mathbf{B}\cdot d\mathbf{S} = \iint_\Sigma \frac{\partial\mathbf{B}}{\partial t}\cdot d\mathbf{S}$$

Maxwell's equations can be formulated with possibly time-dependent surfaces and volumes by using the differential version and using Gauss' and Stokes' theorems as appropriate.

- $\oiint_{\partial\Omega}$ is a surface integral over the boundary surface ∂Ω, with the loop indicating the surface is closed,

- $\iiint_\Omega$ is a volume integral over the volume Ω,

- $\oint_{\partial\Sigma}$ is a line integral around the boundary curve ∂Σ, with the loop indicating the curve is closed,

- $\iint_\Sigma$ is a surface integral over the surface Σ.

- The total electric charge Q enclosed in Ω is the volume integral over Ω of the charge density ρ (see the "macroscopic formulation" section below): $Q = \iiint_\Omega \rho\,dV$, where dV is the volume element.

- The net magnetic flux ΦB is the surface integral of the magnetic field B passing through a fixed surface, Σ: $\Phi_B = \iint_\Sigma \mathbf{B}\cdot d\mathbf{S}$.

- The net electric flux ΦE is the surface integral of the electric field E passing through Σ: $\Phi_E = \iint_\Sigma \mathbf{E}\cdot d\mathbf{S}$.

- The net electric current I is the surface integral of the electric current density J passing through Σ: $I = \iint_\Sigma \mathbf{J}\cdot d\mathbf{S}$, where dS denotes the differential vector element of surface area S, normal to surface Σ. (Vector area is sometimes denoted by A rather than S, but this conflicts with the notation for magnetic vector potential.)

Formulation in the SI

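| Name | Integral equations | Differential equations |
|---|---|---|
| Gauss's law | $\oiint_{\partial\Omega}\mathbf{E}\cdot d\mathbf{S} = \frac{1}{\varepsilon_0}\iiint_\Omega \rho\,dV$ | $\nabla\cdot\mathbf{E} = \frac{\rho}{\varepsilon_0}$ |
| Gauss's law for magnetism | $\oiint_{\partial\Omega}\mathbf{B}\cdot d\mathbf{S} = 0$ | $\nabla\cdot\mathbf{B} = 0$ |
| Maxwell–Faraday equation (Faraday's law of induction) | $\oint_{\partial\Sigma}\mathbf{E}\cdot d\boldsymbol{\ell} = -\frac{d}{dt}\iint_\Sigma \mathbf{B}\cdot d\mathbf{S}$ | $\nabla\times\mathbf{E} = -\frac{\partial\mathbf{B}}{\partial t}$ |
| Ampère–Maxwell law | $\oint_{\partial\Sigma}\mathbf{B}\cdot d\boldsymbol{\ell} = \mu_0\left(\iint_\Sigma \mathbf{J}\cdot d\mathbf{S} + \varepsilon_0\frac{d}{dt}\iint_\Sigma \mathbf{E}\cdot d\mathbf{S}\right)$ | $\nabla\times\mathbf{B} = \mu_0\left(\mathbf{J} + \varepsilon_0\frac{\partial\mathbf{E}}{\partial t}\right)$ |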

Formulation in the Gaussian system

The definitions of charge, electric field, and magnetic field can be altered to simplify theoretical calculation, by absorbing dimensioned factors of ε0 and μ0 into the units (and thus redefining these). With a corresponding change in the values of the quantities for the Lorentz force law this yields the same physics, i.e. trajectories of charged particles, or work done by an electric motor. These definitions are often preferred in theoretical and high energy physics where it is natural to take the electric and magnetic field with the same units, to simplify the appearance of the electromagnetic tensor: the Lorentz covariant object unifying electric and magnetic field would then contain components with uniform unit and dimension.[9]: vii Such modified definitions are conventionally used with the Gaussian (CGS) units. Using these definitions, colloquially "in Gaussian units",[10] the Maxwell equations become:[11]

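| Name | Integral equations | Differential equations |
|---|---|---|
| Gauss's law | $\oiint_{\partial\Omega}\mathbf{E}\cdot d\mathbf{S} = 4\pi\iiint_\Omega \rho\,dV$ | $\nabla\cdot\mathbf{E} = 4\pi\rho$ |
| Gauss's law for magnetism | $\oiint_{\partial\Omega}\mathbf{B}\cdot d\mathbf{S} = 0$ | $\nabla\cdot\mathbf{B} = 0$ |
| Maxwell–Faraday equation (Faraday's law of induction) | $\oint_{\partial\Sigma}\mathbf{E}\cdot d\boldsymbol{\ell} = -\frac{1}{c}\frac{d}{dt}\iint_\Sigma \mathbf{B}\cdot d\mathbf{S}$ | $\nabla\times\mathbf{E} = -\frac{1}{c}\frac{\partial\mathbf{B}}{\partial t}$ |
| Ampère–Maxwell law | $\oint_{\partial\Sigma}\mathbf{B}\cdot d\boldsymbol{\ell} = \frac{1}{c}\left(4\pi\iint_\Sigma \mathbf{J}\cdot d\mathbf{S} + \frac{d}{dt}\iint_\Sigma \mathbf{E}\cdot d\mathbf{S}\right)$ | $\nabla\times\mathbf{B} = \frac{1}{c}\left(4\pi\mathbf{J} + \frac{\partial\mathbf{E}}{\partial t}\right)$ |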

The equations simplify slightly when a system of quantities is chosen in which the speed of light, c, is used for nondimensionalization, so that, for example, seconds and lightseconds are interchangeable, and c = 1.

Further changes are possible by absorbing factors of 4π. This process, called rationalization, affects whether Coulomb's law or Gauss's law includes such a factor (see Heaviside–Lorentz units, used mainly in particle physics).

Relationship between differential and integral formulations

The equivalence of the differential and integral formulations is a consequence of the Gauss divergence theorem and the Kelvin–Stokes theorem.

Flux and divergence

According to the (purely mathematical) Gauss divergence theorem, the electric flux through the boundary surface ∂Ω can be rewritten as

$$\oiint_{\partial\Omega}\mathbf{E}\cdot d\mathbf{S} = \iiint_\Omega \nabla\cdot\mathbf{E}\,dV$$

The integral version of Gauss's law can thus be rewritten as

$$\iiint_\Omega\left(\nabla\cdot\mathbf{E} - \frac{\rho}{\varepsilon_0}\right)dV = 0$$

Since Ω is arbitrary (e.g. an arbitrarily small ball with arbitrary center), this is satisfied if and only if the integrand is zero everywhere. This is the differential formulation of Gauss's law up to a trivial rearrangement.

Similarly, rewriting the magnetic flux in Gauss's law for magnetism in integral form gives

$$\oiint_{\partial\Omega}\mathbf{B}\cdot d\mathbf{S} = \iiint_\Omega \nabla\cdot\mathbf{B}\,dV = 0$$

which is satisfied for all Ω if and only if $\nabla\cdot\mathbf{B} = 0$ everywhere.

Circulation and curl

By the Kelvin–Stokes theorem we can rewrite the line integrals of the fields around the closed boundary curve ∂Σ as an integral of the "circulation of the fields" (i.e. their curls) over a surface it bounds, i.e.

$$\oint_{\partial\Sigma}\mathbf{B}\cdot d\boldsymbol{\ell} = \iint_\Sigma (\nabla\times\mathbf{B})\cdot d\mathbf{S}$$

Hence the Ampère–Maxwell law, the modified version of Ampère's circuital law, in integral form can be rewritten as

$$\iint_\Sigma\left(\nabla\times\mathbf{B} - \mu_0\left(\mathbf{J} + \varepsilon_0\frac{\partial\mathbf{E}}{\partial t}\right)\right)\cdot d\mathbf{S} = 0$$

Since Σ can be chosen arbitrarily, e.g. as an arbitrarily small, arbitrarily oriented, and arbitrarily centered disk, we conclude that the integrand is zero if and only if the Ampère–Maxwell law in differential form is satisfied. The equivalence of Faraday's law in differential and integral form follows likewise.

The line integrals and curls are analogous to quantities in classical fluid dynamics: the circulation of a fluid is the line integral of the fluid's flow velocity field around a closed loop, and the vorticity of the fluid is the curl of the velocity field.
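The circulation picture can be tried out on the simplest magnetostatic case. The sketch below assumes the well-known field of an infinite straight wire carrying a steady current along z, B = μ0 I / (2π r) in the azimuthal direction, and evaluates the line integral of B around a rectangular loop with a midpoint rule. The loop corners, the current, and the helper names are illustrative assumptions.

```python
import math

MU_0 = 4e-7 * math.pi  # H/m; the pre-2019 defined value, adequate for a sketch

def b_field_of_wire(x, y, current):
    """(Bx, By) of an infinite straight wire along z through the origin."""
    r2 = x * x + y * y
    factor = MU_0 * current / (2 * math.pi * r2)
    return (-factor * y, factor * x)

def circulation(corners, current, n=2000):
    """Midpoint-rule line integral of B . dl around a closed polygon."""
    total = 0.0
    for (x0, y0), (x1, y1) in zip(corners, corners[1:] + corners[:1]):
        dx, dy = (x1 - x0) / n, (y1 - y0) / n
        for k in range(n):
            bx, by = b_field_of_wire(x0 + (k + 0.5) * dx, y0 + (k + 0.5) * dy, current)
            total += bx * dx + by * dy
    return total

# Counter-clockwise rectangle around the wire, deliberately off-centre.
enclosing = [(-0.7, -0.8), (1.3, -0.8), (1.3, 1.2), (-0.7, 1.2)]
# The same rectangle shifted so that it no longer encloses the wire.
shifted = [(x + 3.0, y) for x, y in enclosing]

current = 5.0  # A
print(circulation(enclosing, current) / MU_0)  # close to 5: the enclosed current
print(circulation(shifted, current) / MU_0)    # close to 0: no enclosed current
```

The result depends only on the current threading the loop, not on the loop's shape or position, which is the content of the integral form for steady currents.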

Charge conservation

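The invariance of charge can be derived as a corollary of Maxwell's equations. The left-hand side of the Ampère–Maxwell law has zero divergence by the div–curl identity. Expanding the divergence of the right-hand side, interchanging derivatives, and applying Gauss's law gives:

$$0 = \nabla\cdot(\nabla\times\mathbf{B}) = \nabla\cdot\left(\mu_0\left(\mathbf{J} + \varepsilon_0\frac{\partial\mathbf{E}}{\partial t}\right)\right) = \mu_0\left(\nabla\cdot\mathbf{J} + \varepsilon_0\frac{\partial}{\partial t}\nabla\cdot\mathbf{E}\right) = \mu_0\left(\nabla\cdot\mathbf{J} + \frac{\partial\rho}{\partial t}\right)$$

i.e.,

$$\frac{\partial\rho}{\partial t} + \nabla\cdot\mathbf{J} = 0.$$

By the Gauss divergence theorem, this means the rate of change of charge in a fixed volume equals the net current flowing through the boundary:

$$\frac{d}{dt}Q_\Omega = \frac{d}{dt}\iiint_\Omega \rho\,dV = -\oiint_{\partial\Omega}\mathbf{J}\cdot d\mathbf{S} = -I_{\partial\Omega}.$$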

In particular, in an isolated system the total charge is conserved.
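The continuity equation can be illustrated with a small one-dimensional model. The sketch below assumes a charge density carried along at a constant velocity on a periodic grid (so the system is isolated) and updates each cell by the net current through its two faces; the upwind choice of face current and all numerical values are illustrative assumptions.

```python
import math

def continuity_step(rho, velocity, dx, dt):
    """One conservative update of d(rho)/dt + d(J)/dx = 0 on a periodic grid.
    face_current[i] is the current density through the left face of cell i,
    taken from the upwind (left) cell, which is valid for velocity > 0."""
    n = len(rho)
    face_current = [rho[(i - 1) % n] * velocity for i in range(n)]
    return [rho[i] - dt / dx * (face_current[(i + 1) % n] - face_current[i])
            for i in range(n)]

dx, dt, velocity = 0.01, 0.005, 1.0          # velocity * dt / dx = 0.5 keeps it stable
rho = [math.exp(-((i * dx - 0.5) / 0.05) ** 2) for i in range(100)]

charge_before = sum(rho) * dx
for _ in range(200):
    rho = continuity_step(rho, velocity, dx, dt)
charge_after = sum(rho) * dx

print(charge_before, charge_after)  # equal up to rounding error
```

Whatever leaves one cell through a face enters its neighbour, so the total charge can change only through the outer boundary, which a periodic domain does not have.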

Vacuum equations, electromagnetic waves and speed of light

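In a region with no charges (ρ = 0) and no currents (J = 0), such as in vacuum, Maxwell's equations reduce to:

$$\begin{aligned} \nabla\cdot\mathbf{E} &= 0, & \nabla\times\mathbf{E} + \frac{\partial\mathbf{B}}{\partial t} &= 0, \\ \nabla\cdot\mathbf{B} &= 0, & \nabla\times\mathbf{B} - \mu_0\varepsilon_0\frac{\partial\mathbf{E}}{\partial t} &= 0. \end{aligned}$$

Taking the curl (∇×) of the curl equations, and using the curl of the curl identity, we obtain

$$\begin{aligned} \mu_0\varepsilon_0\frac{\partial^2\mathbf{E}}{\partial t^2} - \nabla^2\mathbf{E} &= 0, \\ \mu_0\varepsilon_0\frac{\partial^2\mathbf{B}}{\partial t^2} - \nabla^2\mathbf{B} &= 0. \end{aligned}$$

The quantity $\mu_0\varepsilon_0$ has the dimension (T/L)². Defining $c = (\mu_0\varepsilon_0)^{-1/2}$, the equations above have the form of the standard wave equations

$$\begin{aligned} \frac{1}{c^2}\frac{\partial^2\mathbf{E}}{\partial t^2} - \nabla^2\mathbf{E} &= 0, \\ \frac{1}{c^2}\frac{\partial^2\mathbf{B}}{\partial t^2} - \nabla^2\mathbf{B} &= 0. \end{aligned}$$

Already during Maxwell's lifetime, it was found that the known values for $\varepsilon_0$ and $\mu_0$ give $c \approx 2.998\times10^{8}$ m/s, then already known to be the speed of light in free space. This led him to propose that light and radio waves were propagating electromagnetic waves, since amply confirmed. In the old SI system of units, the values of $\mu_0 = 4\pi\times10^{-7}$ H/m and $c = 299792458$ m/s are defined constants (which means that by definition $\varepsilon_0 = 8.8541878\ldots\times10^{-12}$ F/m) that define the ampere and the metre. In the new SI system, only c keeps its defined value, and the electron charge gets a defined value.

In materials with relative permittivity, εr, and relative permeability, μr, the phase velocity of light becomes

$$v_\text{p} = \frac{1}{\sqrt{\mu_0\mu_\text{r}\varepsilon_0\varepsilon_\text{r}}},$$

which is usually[note 5] less than c.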
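The relations between the constants are easy to evaluate directly. The sketch below uses the old-SI defined values of μ0 and c to obtain ε0, then computes the phase velocity in a medium; the function name `phase_velocity` and the sample material values (εr = 2.25, μr = 1, roughly a common optical glass) are assumptions made for the example.

```python
import math

MU_0 = 4 * math.pi * 1e-7        # H/m, defined value in the old SI
C = 299_792_458.0                # m/s, defined value
EPSILON_0 = 1 / (MU_0 * C ** 2)  # F/m, follows from c = 1 / sqrt(mu_0 * epsilon_0)

def phase_velocity(eps_r, mu_r):
    """Phase velocity 1 / sqrt(mu_0 mu_r epsilon_0 epsilon_r) in a linear medium."""
    return 1 / math.sqrt(MU_0 * mu_r * EPSILON_0 * eps_r)

print(EPSILON_0)                  # about 8.854e-12 F/m
print(phase_velocity(1.0, 1.0))   # about 2.998e8 m/s, i.e. c
print(phase_velocity(2.25, 1.0))  # about c / 1.5
```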

In addition, E and B are perpendicular to each other and to the direction of wave propagation, and are in phase with each other. A sinusoidal plane wave is one special solution of these equations. Maxwell's equations explain how these waves can physically propagate through space. The changing magnetic field creates a changing electric field through Faraday's law. In turn, that electric field creates a changing magnetic field through Maxwell's modification of Ampère's circuital law. This perpetual cycle allows these waves, now known as electromagnetic radiation, to move through space at velocity c.
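The mutual regeneration of the two fields can be watched in a one-dimensional finite-difference time-domain (Yee) model. The sketch below is a simplified illustration in normalized units (c = 1, wave impedance = 1, time step equal to the grid spacing), with a Gaussian pulse as an assumed initial condition; the grid size, pulse width, and function name are illustrative choices.

```python
import math

def propagate_pulse(n_cells=400, start=100, width=10.0, steps=200):
    """1D Yee update for E_z and H_y in normalized units with c * dt / dx = 1."""
    e = [math.exp(-((i - start) / width) ** 2) for i in range(n_cells)]
    # h[i] sits between e[i] and e[i + 1], half a time step earlier.
    # Choosing H = -E (shifted by one cell) launches a purely right-going pulse.
    h = [-e[i + 1] for i in range(n_cells - 1)]
    for _ in range(steps):
        for i in range(n_cells - 1):        # Faraday's law: changing E curl drives H
            h[i] += e[i + 1] - e[i]
        for i in range(1, n_cells - 1):     # Ampere-Maxwell law: changing H curl drives E
            e[i] += h[i] - h[i - 1]
    return e

field = propagate_pulse()
peak = max(range(len(field)), key=lambda i: field[i])
print(peak)  # 300: the pulse moved 200 cells in 200 steps, one cell per step
```

One cell per time step with dt = dx / c is exactly propagation at speed c; neither update alone moves the pulse, only the alternation of the two laws does.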

Macroscopic formulation

The above equations are the microscopic version of Maxwell's equations, expressing the electric and the magnetic fields in terms of the (possibly atomic-level) charges and currents present. This is sometimes called the "general" form, but the macroscopic version below is equally general, the difference being one of bookkeeping.

The microscopic version is sometimes called "Maxwell's equations in vacuum": this refers to the fact that the material medium is not built into the structure of the equations, but appears only in the charge and current terms. The microscopic version was introduced by Lorentz, who tried to use it to derive the macroscopic properties of bulk matter from its microscopic constituents.[12]: 5

"Maxwell's macroscopic equations", also known as Maxwell's equations in matter, are more similar to those that Maxwell introduced himself.

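| Name | Integral equations (SI) | Differential equations (SI) | Differential equations (Gaussian system) |
|---|---|---|---|
| Gauss's law | $\oiint_{\partial\Omega}\mathbf{D}\cdot d\mathbf{S} = \iiint_\Omega \rho_\text{f}\,dV$ | $\nabla\cdot\mathbf{D} = \rho_\text{f}$ | $\nabla\cdot\mathbf{D} = 4\pi\rho_\text{f}$ |
| Ampère–Maxwell law | $\oint_{\partial\Sigma}\mathbf{H}\cdot d\boldsymbol{\ell} = \iint_\Sigma \mathbf{J}_\text{f}\cdot d\mathbf{S} + \frac{d}{dt}\iint_\Sigma \mathbf{D}\cdot d\mathbf{S}$ | $\nabla\times\mathbf{H} = \mathbf{J}_\text{f} + \frac{\partial\mathbf{D}}{\partial t}$ | $\nabla\times\mathbf{H} = \frac{1}{c}\left(4\pi\mathbf{J}_\text{f} + \frac{\partial\mathbf{D}}{\partial t}\right)$ |
| Gauss's law for magnetism | $\oiint_{\partial\Omega}\mathbf{B}\cdot d\mathbf{S} = 0$ | $\nabla\cdot\mathbf{B} = 0$ | $\nabla\cdot\mathbf{B} = 0$ |
| Maxwell–Faraday equation (Faraday's law of induction) | $\oint_{\partial\Sigma}\mathbf{E}\cdot d\boldsymbol{\ell} = -\frac{d}{dt}\iint_\Sigma \mathbf{B}\cdot d\mathbf{S}$ | $\nabla\times\mathbf{E} = -\frac{\partial\mathbf{B}}{\partial t}$ | $\nabla\times\mathbf{E} = -\frac{1}{c}\frac{\partial\mathbf{B}}{\partial t}$ |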

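In the macroscopic equations, the influence of bound charge $Q_\text{b}$ and bound current $I_\text{b}$ is incorporated into the displacement field D and the magnetizing field H, while the equations depend only on the free charges $Q_\text{f}$ and free currents $I_\text{f}$. This reflects a splitting of the total electric charge Q and current I (and their densities ρ and J) into free and bound parts:

$$\begin{aligned} Q &= Q_\text{f} + Q_\text{b} = \iiint_\Omega (\rho_\text{f} + \rho_\text{b})\,dV = \iiint_\Omega \rho\,dV, \\ I &= I_\text{f} + I_\text{b} = \iint_\Sigma (\mathbf{J}_\text{f} + \mathbf{J}_\text{b})\cdot d\mathbf{S} = \iint_\Sigma \mathbf{J}\cdot d\mathbf{S}. \end{aligned}$$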

The cost of this splitting is that the additional fields D and H need to be determined through phenomenological constitutive equations relating these fields to the electric field E and the magnetic field B, together with the bound charge and current.

See below for a detailed description of the differences between the microscopic equations, dealing with total charge and current including material contributions, useful in air/vacuum;[note 6] and the macroscopic equations, dealing with free charge and current, practical to use within materials.

Bound charge and current

When an electric field is applied to a dielectric material its molecules respond by forming microscopic electric dipoles – their atomic nuclei move a tiny distance in the direction of the field, while their electrons move a tiny distance in the opposite direction. This produces a macroscopic bound charge in the material even though all of the charges involved are bound to individual molecules. For example, if every molecule responds the same, similar to that shown in the figure, these tiny movements of charge combine to produce a layer of positive bound charge on one side of the material and a layer of negative charge on the other side. The bound charge is most conveniently described in terms of the polarization P of the material, its dipole moment per unit volume. If P is uniform, a macroscopic separation of charge is produced only at the surfaces where P enters and leaves the material. For non-uniform P, a charge is also produced in the bulk.[13]

Somewhat similarly, in all materials the constituent atoms exhibit magnetic moments that are intrinsically linked to the angular momentum of the components of the atoms, most notably their electrons. The connection to angular momentum suggests the picture of an assembly of microscopic current loops. Outside the material, an assembly of such microscopic current loops is not different from a macroscopic current circulating around the material's surface, despite the fact that no individual charge is traveling a large distance. These bound currents can be described using the magnetization M.[14]

The very complicated and granular bound charges and bound currents, therefore, can be represented on the macroscopic scale in terms of P and M, which average these charges and currents on a sufficiently large scale so as not to see the granularity of individual atoms, but also sufficiently small that they vary with location in the material. As such, Maxwell's macroscopic equations ignore many details on a fine scale that can be unimportant to understanding matters on a gross scale by calculating fields that are averaged over some suitable volume.

Auxiliary fields, polarization and magnetization

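The definitions of the auxiliary fields are:

$$\begin{aligned} \mathbf{D}(\mathbf{r}, t) &= \varepsilon_0\mathbf{E}(\mathbf{r}, t) + \mathbf{P}(\mathbf{r}, t), \\ \mathbf{H}(\mathbf{r}, t) &= \frac{1}{\mu_0}\mathbf{B}(\mathbf{r}, t) - \mathbf{M}(\mathbf{r}, t), \end{aligned}$$

where P is the polarization field and M is the magnetization field, which are defined in terms of microscopic bound charges and bound currents respectively. The macroscopic bound charge density $\rho_\text{b}$ and bound current density $\mathbf{J}_\text{b}$ in terms of polarization P and magnetization M are then defined as

$$\begin{aligned} \rho_\text{b} &= -\nabla\cdot\mathbf{P}, \\ \mathbf{J}_\text{b} &= \nabla\times\mathbf{M} + \frac{\partial\mathbf{P}}{\partial t}. \end{aligned}$$

If we define the total, bound, and free charge and current density by

$$\begin{aligned} \rho &= \rho_\text{b} + \rho_\text{f}, \\ \mathbf{J} &= \mathbf{J}_\text{b} + \mathbf{J}_\text{f}, \end{aligned}$$

and use the defining relations above to eliminate D and H, the "macroscopic" Maxwell's equations reproduce the "microscopic" equations.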

Constitutive relations

In order to apply 'Maxwell's macroscopic equations', it is necessary to specify the relations between displacement field D and the electric field E, as well as the magnetizing field H and the magnetic field B. Equivalently, we have to specify the dependence of the polarization P (hence the bound charge) and the magnetization M (hence the bound current) on the applied electric and magnetic field. The equations specifying this response are called constitutive relations. For real-world materials, the constitutive relations are rarely simple, except approximately, and usually determined by experiment. See the main article on constitutive relations for a fuller description.[15]: 44–45

For materials without polarization and magnetization, the constitutive relations are (by definition)[9]: 2

$$\mathbf{D} = \varepsilon_0\mathbf{E}, \quad \mathbf{H} = \frac{1}{\mu_0}\mathbf{B},$$

where ε0 is the permittivity of free space and μ0 the permeability of free space. Since there is no bound charge, the total and the free charge and current are equal.

An alternative viewpoint on the microscopic equations is that they are the macroscopic equations together with the statement that vacuum behaves like a perfect linear "material" without additional polarization and magnetization. More generally, for linear materials the constitutive relations are[15]: 44–45

$$\mathbf{D} = \varepsilon\mathbf{E}, \quad \mathbf{H} = \frac{1}{\mu}\mathbf{B},$$

where ε is the permittivity and μ the permeability of the material. For the displacement field D the linear approximation is usually excellent because for all but the most extreme electric fields or temperatures obtainable in the laboratory (high power pulsed lasers) the interatomic electric fields of materials, of the order of $10^{11}$ V/m, are much higher than the external field. For the magnetizing field H, however, the linear approximation can break down in common materials like iron, leading to phenomena like hysteresis. Even the linear case can have various complications, however.

- For homogeneous materials, ε and μ are constant throughout the material, while for inhomogeneous materials they depend on location within the material (and perhaps time).[16]: 463

- For isotropic materials, ε and μ are scalars, while for anisotropic materials (e.g. due to crystal structure) they are tensors.[15]: 421 [16]: 463

- Materials are generally dispersive, so ε and μ depend on the frequency of any incident EM waves.[15]: 625 [16]: 397
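For the simplest case (linear, homogeneous, isotropic, non-dispersive), the bookkeeping between D, E, P and the bound charge can be sketched as follows. The example assumes an ideal parallel-plate capacitor completely filled with a dielectric slab; the function name `slab_fields` and the sample values are illustrative, and all quantities are signed magnitudes along the plate normal.

```python
EPSILON_0 = 8.8541878128e-12  # vacuum permittivity in F/m

def slab_fields(free_surface_charge, eps_r):
    """Return (D, E, P, bound surface charge) inside a linear dielectric slab
    filling an ideal parallel-plate capacitor (assumed geometry)."""
    d = free_surface_charge              # macroscopic Gauss's law: D = sigma_f
    e = d / (eps_r * EPSILON_0)          # linear constitutive relation D = eps * E
    p = d - EPSILON_0 * e                # definition D = eps_0 * E + P
    sigma_bound = p                      # magnitude of P . n on the slab faces
    return d, e, p, sigma_bound

d, e, p, sigma_bound = slab_fields(free_surface_charge=1e-6, eps_r=4.0)
print(e)                   # field reduced by a factor eps_r compared with vacuum
print(sigma_bound / 1e-6)  # 0.75, i.e. 1 - 1/eps_r of the free charge is screened
```

Only the free charge on the plates enters the calculation as a source; the bound charge is recovered afterwards from P, which is the practical advantage of the macroscopic form.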

Even more generally, in the case of non-linear materials (see for example nonlinear optics), D and P are not necessarily proportional to E, similarly H or M is not necessarily proportional to B. In general D and H depend on both E and B, on location and time, and possibly other physical quantities.

In applications one also has to describe how the free currents and charge density behave in terms of E and B possibly coupled to other physical quantities like pressure, and the mass, number density, and velocity of charge-carrying particles. E.g., the original equations given by Maxwell (see History of Maxwell's equations) included Ohm's law in the form

$$\mathbf{J}_\text{f} = \sigma\mathbf{E}.$$

Alternative formulations

Following are some of the several other mathematical formalisms of Maxwell's equations, with the columns separating the two homogeneous Maxwell equations from the two inhomogeneous ones. Each formulation has versions directly in terms of the electric and magnetic fields, and indirectly in terms of the electrical potential φ and the vector potential A. Potentials were introduced as a convenient way to solve the homogeneous equations, but it was thought that all observable physics was contained in the electric and magnetic fields (or relativistically, the Faraday tensor). The potentials play a central role in quantum mechanics, however, and act quantum mechanically with observable consequences even when the electric and magnetic fields vanish (Aharonov–Bohm effect).

Each table describes one formalism. See the main article for details of each formulation.

The direct spacetime formulations make manifest that the Maxwell equations are relativistically invariant, where space and time are treated on equal footing. Because of this symmetry, the electric and magnetic fields are treated on equal footing and are recognized as components of the Faraday tensor. This reduces the four Maxwell equations to two, which simplifies the equations, although we can no longer use the familiar vector formulation. Maxwell equations in formulation that do not treat space and time manifestly on the same footing have Lorentz invariance as a hidden symmetry. This was a major source of inspiration for the development of relativity theory. Indeed, even the formulation that treats space and time separately is not a non-relativistic approximation and describes the same physics by simply renaming variables. For this reason the relativistic invariant equations are usually called the Maxwell equations as well.

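| Formulation (tensor calculus) | Homogeneous equations | Inhomogeneous equations |
|---|---|---|
| Fields, Minkowski space | $\partial_{[\alpha}F_{\beta\gamma]} = 0$ | $\partial_\alpha F^{\alpha\beta} = \mu_0 J^\beta$ |
| Potentials (any gauge), Minkowski space | $F_{\alpha\beta} = 2\partial_{[\alpha}A_{\beta]}$ | $2\partial_\alpha\partial^{[\alpha}A^{\beta]} = \mu_0 J^\beta$ |
| Potentials (Lorenz gauge), Minkowski space | $F_{\alpha\beta} = 2\partial_{[\alpha}A_{\beta]}$, $\partial_\alpha A^\alpha = 0$ | $\partial_\alpha\partial^\alpha A^\beta = \mu_0 J^\beta$ |
| Fields, any spacetime | $\partial_{[\alpha}F_{\beta\gamma]} = \nabla_{[\alpha}F_{\beta\gamma]} = 0$ | $\frac{1}{\sqrt{-g}}\partial_\alpha\left(\sqrt{-g}\,F^{\alpha\beta}\right) = \nabla_\alpha F^{\alpha\beta} = \mu_0 J^\beta$ |
| Potentials (any gauge), any spacetime (with topological restrictions) | $F_{\alpha\beta} = 2\partial_{[\alpha}A_{\beta]} = 2\nabla_{[\alpha}A_{\beta]}$ | $\frac{2}{\sqrt{-g}}\partial_\alpha\left(\sqrt{-g}\,g^{\alpha\mu}g^{\beta\nu}\partial_{[\mu}A_{\nu]}\right) = 2\nabla_\alpha\nabla^{[\alpha}A^{\beta]} = \mu_0 J^\beta$ |
| Potentials (Lorenz gauge), any spacetime (with topological restrictions) | $F_{\alpha\beta} = 2\partial_{[\alpha}A_{\beta]} = 2\nabla_{[\alpha}A_{\beta]}$, $\nabla_\alpha A^\alpha = 0$ | $\nabla_\alpha\nabla^\alpha A^\beta - R^\beta{}_\alpha A^\alpha = \mu_0 J^\beta$ |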

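| Formulation (differential forms) | Homogeneous equations | Inhomogeneous equations |
|---|---|---|
| Fields, any spacetime | $dF = 0$ | $d{\star}F = \mu_0 J$ |
| Potentials (any gauge), any spacetime (with topological restrictions) | $F = dA$ | $d{\star}dA = \mu_0 J$ |
| Potentials (Lorenz gauge), any spacetime (with topological restrictions) | $F = dA$, $d{\star}A = 0$ | ${\star}\Box A = \mu_0 J$ |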

- In the tensor calculus formulation, the electromagnetic tensor $F_{\alpha\beta}$ is an antisymmetric covariant order 2 tensor; the four-potential, $A_\alpha$, is a covariant vector; the current, $J^\alpha$, is a vector; the square brackets, [ ], denote antisymmetrization of indices; $\partial_\alpha$ is the partial derivative with respect to the coordinate, $x^\alpha$. In Minkowski space coordinates are chosen with respect to an inertial frame; $(x^\alpha) = (ct, x, y, z)$, so that the metric tensor used to raise and lower indices is $\eta_{\alpha\beta} = \mathrm{diag}(1, -1, -1, -1)$. The d'Alembert operator on Minkowski space is $\Box = \partial_\alpha\partial^\alpha$ as in the vector formulation. In general spacetimes, the coordinate system $x^\alpha$ is arbitrary; the covariant derivative $\nabla_\alpha$, the Ricci tensor $R_{\alpha\beta}$, and raising and lowering of indices are defined by the Lorentzian metric $g_{\alpha\beta}$; and the d'Alembert operator is defined as $\Box = \nabla_\alpha\nabla^\alpha$. The topological restriction is that the second real cohomology group of the space vanishes (see the differential form formulation for an explanation). This is violated for Minkowski space with a line removed, which can model a (flat) spacetime with a point-like monopole on the complement of the line.

- In the differential form formulation on arbitrary spacetimes, $F = \tfrac{1}{2}F_{\alpha\beta}\,dx^\alpha\wedge dx^\beta$ is the electromagnetic tensor considered as a 2-form, $A = A_\alpha\,dx^\alpha$ is the potential 1-form, $J$ is the current 3-form, $d$ is the exterior derivative, and ${\star}$ is the Hodge star on forms defined (up to its orientation, i.e. its sign) by the Lorentzian metric of spacetime. In the special case of 2-forms such as F, the Hodge star depends on the metric tensor only for its local scale. This means that, as formulated, the differential form field equations are conformally invariant, but the Lorenz gauge condition breaks conformal invariance. The operator $\Box$ is the d'Alembert–Laplace–Beltrami operator on 1-forms on an arbitrary Lorentzian spacetime. The topological condition is again that the second real cohomology group is 'trivial' (meaning that its form follows from a definition). By the isomorphism with the second de Rham cohomology this condition means that every closed 2-form is exact.
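The role of the electromagnetic tensor as a single Lorentz-covariant object can be made concrete with a few lines of arithmetic. The sketch below assembles the contravariant components $F^{\mu\nu}$ from E and B using one common sign convention for signature (+, −, −, −), which is an assumption since conventions differ between textbooks, and checks that the scalar $F_{\mu\nu}F^{\mu\nu} = 2(B^2 - E^2/c^2)$ is unchanged by a boost along x. The field values and function names are illustrative.

```python
import math

C = 299_792_458.0              # speed of light in m/s
ETA = [1.0, -1.0, -1.0, -1.0]  # diagonal of the Minkowski metric

def faraday_tensor(e, b):
    """Contravariant F^{mu nu} built from E (V/m) and B (T); assumed convention."""
    ex, ey, ez = (component / C for component in e)
    bx, by, bz = b
    return [
        [0.0, -ex, -ey, -ez],
        [ex, 0.0, -bz, by],
        [ey, bz, 0.0, -bx],
        [ez, -by, bx, 0.0],
    ]

def invariant(f):
    """F_{mu nu} F^{mu nu}; indices are lowered with the diagonal metric."""
    return sum(ETA[m] * ETA[n] * f[m][n] ** 2 for m in range(4) for n in range(4))

def boost_x(f, beta):
    """Transform F^{mu nu} under a Lorentz boost with velocity beta * c along x."""
    g = 1.0 / math.sqrt(1.0 - beta * beta)
    lam = [[g, -g * beta, 0.0, 0.0], [-g * beta, g, 0.0, 0.0],
           [0.0, 0.0, 1.0, 0.0], [0.0, 0.0, 0.0, 1.0]]
    return [[sum(lam[m][a] * lam[n][c] * f[a][c] for a in range(4) for c in range(4))
             for n in range(4)] for m in range(4)]

e_field = (3e5, -2e5, 1e5)       # V/m, arbitrary
b_field = (1e-3, 2e-3, -5e-4)    # T, arbitrary
f = faraday_tensor(e_field, b_field)
f_boosted = boost_x(f, 0.6)

print(invariant(f), invariant(f_boosted))  # equal up to rounding error
```

The individual components of E and B mix under the boost, while the contracted scalar does not, which is what treating them as parts of one tensor expresses.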

Other formalisms include the geometric algebra formulation and a matrix representation of Maxwell's equations. Historically, a quaternionic formulation[17][18] was used.

Solutions

Maxwell's equations are partial differential equations that relate the electric and magnetic fields to each other and to the electric charges and currents. Often, the charges and currents are themselves dependent on the electric and magnetic fields via the Lorentz force equation and the constitutive relations. These all form a set of coupled partial differential equations which are often very difficult to solve: the solutions encompass all the diverse phenomena of classical electromagnetism. Some general remarks follow.

As for any differential equation, boundary conditions[19][20][21] and initial conditions[22] are necessary for a unique solution. For example, even with no charges and no currents anywhere in spacetime, there are the obvious solutions for which E and B are zero or constant, but there are also non-trivial solutions corresponding to electromagnetic waves. In some cases, Maxwell's equations are solved over the whole of space, and boundary conditions are given as asymptotic limits at infinity.[23] In other cases, Maxwell's equations are solved in a finite region of space, with appropriate conditions on the boundary of that region, for example an artificial absorbing boundary representing the rest of the universe,[24][25] or periodic boundary conditions, or walls that isolate a small region from the outside world (as with a waveguide or cavity resonator).[26]

Jefimenko's equations (or the closely related Liénard–Wiechert potentials) are the explicit solution to Maxwell's equations for the electric and magnetic fields created by any given distribution of charges and currents. It assumes specific initial conditions to obtain the so-called "retarded solution", where the only fields present are the ones created by the charges. However, Jefimenko's equations are unhelpful in situations when the charges and currents are themselves affected by the fields they create.

Numerical methods for differential equations can be used to compute approximate solutions of Maxwell's equations when exact solutions are impossible. These include the finite element method and finite-difference time-domain method.[19][21][27][28][29] For more details, see Computational electromagnetics.

Overdetermination of Maxwell's equations

Maxwell's equations seem overdetermined, in that they involve six unknowns (the three components of E and B) but eight equations (one for each of the two Gauss's laws, three vector components each for Faraday's and Ampère's circuital laws). (The currents and charges are not unknowns, being freely specifiable subject to charge conservation.) This is related to a certain limited kind of redundancy in Maxwell's equations: It can be proven that any system satisfying Faraday's law and Ampère's circuital law automatically also satisfies the two Gauss's laws, as long as the system's initial condition does, and assuming conservation of charge and the nonexistence of magnetic monopoles.[30][31] This explanation was first introduced by Julius Adams Stratton in 1941.[32]

Although it is possible to simply ignore the two Gauss's laws in a numerical algorithm (apart from the initial conditions), the imperfect precision of the calculations can lead to ever-increasing violations of those laws. By introducing dummy variables characterizing these violations, the four equations become not overdetermined after all. The resulting formulation can lead to more accurate algorithms that take all four laws into account.[33]

Both identities ∇ ⋅ (∇ × B) ≡ 0 and ∇ ⋅ (∇ × E) ≡ 0, which reduce eight equations to six independent ones, are the true reason for the overdetermination.[34][35]

Equivalently, the overdetermination can be viewed as implying conservation of electric and magnetic charge, as they are required in the derivation described above but implied by the two Gauss's laws.

For linear algebraic equations, one can make 'nice' rules to rewrite the equations and unknowns, and the equations can be linearly dependent. For differential equations, and especially partial differential equations (PDEs), one also needs appropriate boundary conditions, which depend in non-obvious ways on the equations. Moreover, if one rewrites Maxwell's equations in terms of the vector and scalar potentials, the equations are underdetermined because of gauge freedom, until a gauge is fixed.

Maxwell's equations as the classical limit of QED

Maxwell's equations and the Lorentz force law (along with the rest of classical electromagnetism) are extraordinarily successful at explaining and predicting a variety of phenomena. However, they do not account for quantum effects, and so their domain of applicability is limited. Maxwell's equations are thought of as the classical limit of quantum electrodynamics (QED).

Some observed electromagnetic phenomena cannot be explained with Maxwell's equations if the sources of the electromagnetic fields are classical distributions of charge and current. These include photon–photon scattering and many other phenomena related to photons or virtual photons, "nonclassical light" and quantum entanglement of electromagnetic fields (see Quantum optics). For example, quantum cryptography cannot be described by Maxwell theory, not even approximately. The approximate nature of Maxwell's equations becomes more and more apparent when going into the extremely strong field regime (see Euler–Heisenberg Lagrangian) or to extremely small distances.

Finally, Maxwell's equations cannot explain any phenomenon involving individual photons interacting with quantum matter, such as the photoelectric effect, Planck's law, the Duane–Hunt law, and single-photon light detectors. However, many such phenomena may be explained using a halfway theory of quantum matter coupled to a classical electromagnetic field, either as an external field or with the expectation value of the charge and current density on the right-hand side of Maxwell's equations. This is known as semiclassical theory or self-field QED and was initially discovered by de Broglie and Schrödinger and later fully developed by E.T. Jaynes and A.O. Barut.

Variations

Popular variations on the Maxwell equations as a classical theory of electromagnetic fields are relatively scarce because the standard equations have stood the test of time remarkably well.

Magnetic monopoles

Maxwell's equations posit that there is electric charge, but no magnetic charge (also called magnetic monopoles), in the universe. Indeed, magnetic charge has never been observed, despite extensive searches,[note 7] and may not exist. If magnetic monopoles did exist, both Gauss's law for magnetism and Faraday's law would need to be modified, and the resulting four equations would be fully symmetric under the interchange of electric and magnetic fields.[9]: 273–275

Explanatory notes

- ^ Electric and magnetic fields, according to the theory of relativity, are the components of a single electromagnetic field.

- ^ In general relativity, however, they must enter, through its stress–energy tensor, into Einstein field equations that include the spacetime curvature.

- ^ The absence of sinks/sources of the field does not imply that the field lines must be closed or escape to infinity. They can also wrap around indefinitely, without self-intersections. Moreover, around points where the field is zero (which cannot be intersected by field lines, because their direction would not be defined), some lines can begin while others end. This happens, for instance, midway between two identical cylindrical magnets whose north poles face each other. At that midpoint the field is zero and the axial field lines coming from the magnets end. At the same time, an infinite number of divergent lines emanate radially from this point. The simultaneous presence of lines which end and begin around the point preserves the divergence-free character of the field. For a detailed discussion of non-closed field lines, see L. Zilberti "The Misconception of Closed Magnetic Flux Lines", IEEE Magnetics Letters, vol. 8, art. 1306005, 2017.

- ^ The quantity we would now call (ε0μ0)−1/2, with units of velocity, was directly measured before Maxwell's equations, in an 1855 experiment by Wilhelm Eduard Weber and Rudolf Kohlrausch. They charged a leyden jar (a kind of capacitor), and measured the electrostatic force associated with the potential; then, they discharged it while measuring the magnetic force from the current in the discharge wire. Their result was 3.107×108 m/s, remarkably close to the speed of light. See Joseph F. Keithley, The story of electrical and magnetic measurements: from 500 B.C. to the 1940s, p. 115.

- ^ There are cases (anomalous dispersion) where the phase velocity can exceed c, but the "signal velocity" will still be ≤ c

- ^ In some books—e.g., in U. Krey and A. Owen's Basic Theoretical Physics (Springer 2007)—the term effective charge is used instead of total charge, while free charge is simply called charge.

- ^ See magnetic monopole for a discussion of monopole searches. Recently, scientists have discovered that some types of condensed matter, including spin ice and topological insulators, display emergent behavior resembling magnetic monopoles. (See sciencemag.org and nature.com.) Although these were described in the popular press as the long-awaited discovery of magnetic monopoles, they are only superficially related. A "true" magnetic monopole is something where ∇ ⋅ B ≠ 0, whereas in these condensed-matter systems, ∇ ⋅ B = 0 while only ∇ ⋅ H ≠ 0.

References

- ^ Hampshire, Damian P. (29 October 2018). "A derivation of Maxwell's equations using the Heaviside notation". Philosophical Transactions of the Royal Society A: Mathematical, Physical and Engineering Sciences. 376 (2134). arXiv:1510.04309. Bibcode:2018RSPTA.37670447H. doi:10.1098/rsta.2017.0447. ISSN 1364-503X. PMC 6232579. PMID 30373937.

- ^ "2022 CODATA Value: speed of light in vacuum". The NIST Reference on Constants, Units, and Uncertainty. NIST. May 2024. Retrieved 2024-05-18.

- ^ a b c Jackson, John. "Maxwell's equations". Science Video Glossary. Berkeley Lab. Archived from the original on 2019-01-29. Retrieved 2016-06-04.

- ^ J. D. Jackson, Classical Electrodynamics, section 6.3

- ^ Principles of physics: a calculus-based text, by R. A. Serway, J. W. Jewett, page 809.

- ^ Bruce J. Hunt (1991) The Maxwellians, chapter 5 and appendix, Cornell University Press

- ^ "Maxwell's Equations". Engineering and Technology History Wiki. 29 October 2019. Retrieved 2021-12-04.

- ^ Šolín, Pavel (2006). Partial differential equations and the finite element method. John Wiley and Sons. p. 273. ISBN 978-0-471-72070-6.

- ^ a b c J. D. Jackson (1975-10-17). Classical Electrodynamics (3rd ed.). Wiley. ISBN 978-0-471-43132-9.

- ^ Littlejohn, Robert (Fall 2007). "Gaussian, SI and Other Systems of Units in Electromagnetic Theory" (PDF). Physics 221A, University of California, Berkeley lecture notes. Retrieved 2008-05-06.

- ^ David J Griffiths (1999). Introduction to electrodynamics (Third ed.). Prentice Hall. pp. 559–562. ISBN 978-0-13-805326-0.

- ^ Kimball Milton; J. Schwinger (18 June 2006). Electromagnetic Radiation: Variational Methods, Waveguides and Accelerators. Springer Science & Business Media. ISBN 978-3-540-29306-4.

- ^ See David J. Griffiths (1999). "4.2.2". Introduction to Electrodynamics (third ed.). Prentice Hall. ISBN 9780138053260. for a good description of how P relates to the bound charge.

- ^ See David J. Griffiths (1999). "6.2.2". Introduction to Electrodynamics (third ed.). Prentice Hall. ISBN 9780138053260. for a good description of how M relates to the bound current.

- ^ a b c d Andrew Zangwill (2013). Modern Electrodynamics. Cambridge University Press. ISBN 978-0-521-89697-9.

- ^ a b c Kittel, Charles (2005), Introduction to Solid State Physics (8th ed.), USA: John Wiley & Sons, Inc., ISBN 978-0-471-41526-8

- ^ Jack, P. M. (2003). "Physical Space as a Quaternion Structure I: Maxwell Equations. A Brief Note". arXiv:math-ph/0307038.

- ^ A. Waser (2000). "On the Notation of Maxwell's Field Equations" (PDF). AW-Verlag.

- ^ a b Peter Monk (2003). Finite Element Methods for Maxwell's Equations. Oxford UK: Oxford University Press. p. 1 ff. ISBN 978-0-19-850888-5.

- ^ Thomas B. A. Senior & John Leonidas Volakis (1995-03-01). Approximate Boundary Conditions in Electromagnetics. London UK: Institution of Electrical Engineers. p. 261 ff. ISBN 978-0-85296-849-9.

- ^ a b T Hagstrom (Björn Engquist & Gregory A. Kriegsmann, Eds.) (1997). Computational Wave Propagation. Berlin: Springer. p. 1 ff. ISBN 978-0-387-94874-4.

- ^ Henning F. Harmuth & Malek G. M. Hussain (1994). Propagation of Electromagnetic Signals. Singapore: World Scientific. p. 17. ISBN 978-981-02-1689-4.

- ^ David M Cook (2002). The Theory of the Electromagnetic Field. Mineola NY: Courier Dover Publications. p. 335 ff. ISBN 978-0-486-42567-2.

- ^ Jean-Michel Lourtioz (2005-05-23). Photonic Crystals: Towards Nanoscale Photonic Devices. Berlin: Springer. p. 84. ISBN 978-3-540-24431-8.

- ^ S. G. Johnson, Notes on Perfectly Matched Layers, online MIT course notes (Aug. 2007).

- ^ S. F. Mahmoud (1991). Electromagnetic Waveguides: Theory and Applications. London UK: Institution of Electrical Engineers. Chapter 2. ISBN 978-0-86341-232-5.

- ^ John Leonidas Volakis, Arindam Chatterjee & Leo C. Kempel (1998). Finite element method for electromagnetics : antennas, microwave circuits, and scattering applications. New York: Wiley IEEE. p. 79 ff. ISBN 978-0-7803-3425-0.

- ^ Bernard Friedman (1990). Principles and Techniques of Applied Mathematics. Mineola NY: Dover Publications. ISBN 978-0-486-66444-6.

- ^ Taflove A & Hagness S C (2005). Computational Electrodynamics: The Finite-difference Time-domain Method. Boston MA: Artech House. Chapters 6 & 7. ISBN 978-1-58053-832-9.

- ^ H Freistühler & G Warnecke (2001). Hyperbolic Problems: Theory, Numerics, Applications. Springer. p. 605. ISBN 9783764367107.

- ^ J Rosen (1980). "Redundancy and superfluity for electromagnetic fields and potentials". American Journal of Physics. 48 (12): 1071. Bibcode:1980AmJPh..48.1071R. doi:10.1119/1.12289.

- ^ J. A. Stratton (1941). Electromagnetic Theory. McGraw-Hill Book Company. pp. 1–6. ISBN 9780470131534.

- ^ B Jiang & J Wu & L. A. Povinelli (1996). "The Origin of Spurious Solutions in Computational Electromagnetics". Journal of Computational Physics. 125 (1): 104. Bibcode:1996JCoPh.125..104J. doi:10.1006/jcph.1996.0082. hdl:2060/19950021305.

- ^ Weinberg, Steven (1972). Gravitation and Cosmology. John Wiley. pp. 161–162. ISBN 978-0-471-92567-5.

- ^ Courant, R. & Hilbert, D. (1962), Methods of Mathematical Physics: Partial Differential Equations, vol. II, New York: Wiley-Interscience, pp. 15–18, ISBN 9783527617241


Further reading

- Imaeda, K. (1995), "Biquaternionic Formulation of Maxwell's Equations and their Solutions", in Ablamowicz, Rafał; Lounesto, Pertti (eds.), Clifford Algebras and Spinor Structures, Springer, pp. 265–280, doi:10.1007/978-94-015-8422-7_16, ISBN 978-90-481-4525-6

Historical publications

- On Faraday's Lines of Force – 1855/56. Maxwell's first paper (Part 1 & 2) – Compiled by Blaze Labs Research (PDF).

- On Physical Lines of Force – 1861. Maxwell's 1861 paper describing magnetic lines of force – Predecessor to 1873 Treatise.

- James Clerk Maxwell, "A Dynamical Theory of the Electromagnetic Field", Philosophical Transactions of the Royal Society of London 155, 459–512 (1865). (This article accompanied a December 8, 1864 presentation by Maxwell to the Royal Society.)

- A Dynamical Theory Of The Electromagnetic Field – 1865. Maxwell's 1865 paper describing his 20 equations, link from Google Books.

- J. Clerk Maxwell (1873), "A Treatise on Electricity and Magnetism":

- Developments before the theory of relativity

- Larmor Joseph (1897). . Phil. Trans. R. Soc. 190: 205–300.

- Lorentz Hendrik (1899). . Proc. Acad. Science Amsterdam. I: 427–443.

- Lorentz Hendrik (1904). . Proc. Acad. Science Amsterdam. IV: 669–678.

- Henri Poincaré (1900) "La théorie de Lorentz et le Principe de Réaction" (in French), Archives Néerlandaises, V, 253–278.

- Henri Poincaré (1902) "La Science et l'Hypothèse" (in French).

- Henri Poincaré (1905) "Sur la dynamique de l'électron" (in French), Comptes Rendus de l'Académie des Sciences, 140, 1504–1508.

- Catt, Walton and Davidson. "The History of Displacement Current" Archived 2008-05-06 at the Wayback Machine. Wireless World, March 1979.

External links

- "Maxwell equations", Encyclopedia of Mathematics, EMS Press, 2001 [1994]

- maxwells-equations.com — An intuitive tutorial of Maxwell's equations.

- The Feynman Lectures on Physics Vol. II Ch. 18: The Maxwell Equations

- Wikiversity Page on Maxwell's Equations

Modern treatments

- Electromagnetism (ch. 11), B. Crowell, Fullerton College

- Lecture series: Relativity and electromagnetism, R. Fitzpatrick, University of Texas at Austin

- Electromagnetic waves from Maxwell's equations on Project PHYSNET.

- MIT Video Lecture Series (36 × 50 minute lectures) (in .mp4 format) – Electricity and Magnetism Taught by Professor Walter Lewin.

Other

- Silagadze, Z. K. (2002). "Feynman's derivation of Maxwell equations and extra dimensions". Annales de la Fondation Louis de Broglie. 27: 241–256. arXiv:hep-ph/0106235.

- Nature Milestones: Photons – Milestone 2 (1861) Maxwell's equations

Maxwell's equations

Historical Development

Maxwell's Original Formulation

James Clerk Maxwell developed his theory of electromagnetism in the mid-19th century, building on key empirical discoveries that linked electricity and magnetism. In 1820, Hans Christian Ørsted observed that an electric current deflects a magnetic needle, establishing the magnetic effects of electric currents.[5] Shortly thereafter, André-Marie Ampère formulated a mathematical law describing the magnetic field produced by steady currents, providing a foundational relation between current and magnetism.[5] Michael Faraday advanced these ideas through experiments in the 1830s, discovering electromagnetic induction (a changing magnetic field induces an electric current) and conceptualizing fields as continuous lines of force rather than action-at-a-distance.[5] Maxwell sought to unify these phenomena mathematically, interpreting Faraday's qualitative field concepts in terms of precise equations.

Maxwell's initial formulation appeared in his 1861–1862 paper "On Physical Lines of Force," published in the Philosophical Magazine, where he proposed a mechanical model of the electromagnetic field using molecular vortices to explain magnetic phenomena and saturation.[6] This work translated Faraday's lines of force into a dynamical framework, introducing the idea of a pervasive medium (the luminiferous ether) that transmits electromagnetic effects.[7] In this paper, Maxwell began deriving equations for electric and magnetic interactions, laying the groundwork for a comprehensive theory without yet fully incorporating optics.

Maxwell refined and expanded his ideas in the 1865 paper "A Dynamical Theory of the Electromagnetic Field," presented to the Royal Society, where he achieved the unification of electricity, magnetism, and light.[3] To resolve inconsistencies in Ampère's law for time-varying fields, Maxwell introduced the concept of displacement current, a term representing the rate of change of electric displacement, which allows changing electric fields to generate magnetic fields even in the absence of conduction currents.[5] This addition enabled the prediction of self-sustaining electromagnetic waves propagating through space at a speed of approximately 310,000,000 meters per second, closely matching the known velocity of light (about 3 × 10^8 m/s).[3] Maxwell concluded that light itself must be an electromagnetic wave, thus linking optics to electromagnetism.[3]

Maxwell's original formulation, as systematically presented in his 1873 two-volume "A Treatise on Electricity and Magnetism," comprised around 20 equations expressed in component form using scalar and vector potentials.[8] These equations captured the full dynamics of the electromagnetic field but were cumbersome due to their expanded notation. In 1884–1885, Oliver Heaviside reformulated them into a more compact set of four vector equations, enhancing their elegance and applicability while preserving Maxwell's core insights.

Standardization and Modern Form

Following James Clerk Maxwell's original formulation of electromagnetism in approximately 20 equations within his 1873 Treatise on Electricity and Magnetism, subsequent refinements in the 1880s and 1890s transformed these into the compact, vector-based set recognized today.[9] Oliver Heaviside played a pivotal role in this standardization, independently developing a system of vector notation and, in 1884–1885, condensing Maxwell's equations into four principal vector equations that emphasized the electric field E and magnetic field H without relying on potentials.[9] This reformulation shifted away from the quaternion-based approach Maxwell had employed, which Heaviside criticized as overly complex and "antiphysical," toward a more physically intuitive vector calculus suitable for engineering and physics applications.[9]

Concurrently, J. Willard Gibbs contributed foundational advancements in vector analysis during the 1880s, producing lecture notes in 1881 and 1884 that formalized operations like the dot and cross products, drawing from Grassmann's ideas but tailored for physical contexts.[10] Gibbs's work, published in 1901 as Vector Analysis by Edwin Bidwell Wilson, provided the mathematical framework that complemented Heaviside's efforts and facilitated the widespread adoption of vector methods in electromagnetism, including applications to Maxwell's theory in Gibbs's own papers from 1882 to 1889.[10]

Heaviside's vector equations explicitly incorporated Maxwell's displacement current (introduced in his earlier papers and carried into the 1873 Treatise as a term accounting for changing electric fields), formalizing its essential role in ensuring continuity of current and enabling wave propagation.[9] Independently of Heaviside, Heinrich Hertz also derived a simplified vector formulation of Maxwell's equations in the late 1880s. Through experiments conducted from 1886 to 1888, Hertz generated and detected electromagnetic waves propagating at the speed of light, providing empirical confirmation of Maxwell's predictions and accelerating the theory's acceptance.[11]

In 1895, Hendrik Lorentz further refined the equations in his monograph Versuch einer Theorie der electrischen und optischen Erscheinungen in bewegten Körpern, adjusting them to maintain invariance under transformations for bodies in motion relative to the luminiferous ether, which laid groundwork for special relativity.[12] Lorentz integrated the Lorentz force law, describing the force on charged particles in electromagnetic fields, ensuring compatibility with relativistic principles while preserving the equation structure. These developments culminated in a symmetric form of the equations, particularly evident in vacuum, that highlighted the duality between electric and magnetic fields, foreshadowing deeper symmetries in electromagnetic theory.[9]

Conceptual Descriptions

Gauss's Law for Electricity

Gauss's law for electricity states that the electric field originates from electric charges, with field lines emerging from positive charges and terminating on negative charges, thereby quantifying the relationship between these charges and the surrounding electric field. This principle underscores that the total electric flux through any closed surface is directly proportional to the net charge enclosed within that surface, providing a fundamental measure of how charges "source" the electric field. The concept emphasizes the conservation of field lines, where the net number of lines leaving a closed surface equals the enclosed charge, scaled by the permittivity of free space. The law was first formulated by Joseph-Louis Lagrange in 1773, and independently derived by Carl Friedrich Gauss from Coulomb's inverse-square law of electrostatic force in 1835, though it remained unpublished until 1867.[13] This integral formulation served as a foundational step, enabling later developments into differential forms that describe local behavior of fields. Gauss's insight built upon earlier observations of electric forces, transforming them into a symmetric expression for flux, which proved essential for broader electromagnetic theory. A classic illustration involves a point charge at the center of a spherical surface, where the symmetric electric field results in uniform flux outward, directly linking the field's strength to the enclosed charge. Similarly, for a charged parallel-plate capacitor, a Gaussian surface enclosing one plate captures the flux through its faces, revealing the uniform field between plates without needing detailed force calculations. These examples highlight the law's utility in symmetric charge distributions, such as spherical symmetry in uniformly charged spheres, where the enclosed charge determines the field's radial dependence. 
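The point-charge illustration can be checked numerically: by Gauss's law, the flux of the Coulomb field through any closed surface around a charge q should equal q/ε0, whatever the surface's shape. The sketch below uses a cube rather than a sphere and a simple midpoint rule; the function name `flux_through_cube`, the grid resolution, and the test positions are illustrative assumptions.

```python
import math

EPS0 = 8.8541878128e-12  # vacuum permittivity in F/m (CODATA 2018 value)


def flux_through_cube(q, pos, half=1.0, n=200):
    """Midpoint-rule flux of a point charge's field through a cube.

    The cube is centered at the origin with side 2*half; `pos` is the
    position of the charge (assumed not to lie on the cube's surface).
    """
    k = q / (4.0 * math.pi * EPS0)
    h = 2.0 * half / n
    total = 0.0
    for axis in range(3):            # faces perpendicular to x, y, z
        for sign in (-1.0, 1.0):     # outward normal is sign * unit vector
            for i in range(n):
                for j in range(n):
                    p = [0.0, 0.0, 0.0]
                    p[axis] = sign * half
                    p[(axis + 1) % 3] = -half + (i + 0.5) * h
                    p[(axis + 2) % 3] = -half + (j + 0.5) * h
                    r = [p[m] - pos[m] for m in range(3)]
                    r3 = (r[0] ** 2 + r[1] ** 2 + r[2] ** 2) ** 1.5
                    # E . n dA for the Coulomb field k * r / |r|^3
                    total += sign * k * r[axis] / r3 * h * h
    return total
```

For a charge placed off-center inside the cube the result should agree with `q / EPS0` to within the discretization error, while for a charge outside the cube it should be close to zero, since the enclosed charge vanishes.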
In quantitative terms, the law states that the surface integral of the electric field over a closed surface equals the enclosed charge divided by the vacuum permittivity:

$$\oint_{S} \mathbf{E} \cdot d\mathbf{A} = \frac{Q_{\text{enc}}}{\varepsilon_0}$$

This relation captures the net flux without regard to the surface's shape, as long as it fully encloses the charge, distinguishing electric fields as uniquely sourced by charges unlike other field types. The integral form relates to the divergence in differential descriptions, where local charge density governs field spreading, though detailed analysis appears in subsequent formulations.

Gauss's Law for Magnetism

Gauss's law for magnetism asserts that there are no magnetic charges in nature, meaning that the net magnetic flux through any closed surface is always zero. This principle implies that the divergence of the magnetic field vector B is zero everywhere, indicating that magnetic fields have no sources or sinks. Unlike the corresponding law for electricity, where electric fields originate from charges, magnetic fields cannot be produced by isolated magnetic poles.[14][15]

This law was inferred from centuries of experiments demonstrating that magnets always exhibit both north and south poles together, with no evidence of isolated poles, and was formalized as part of James Clerk Maxwell's synthesis of electromagnetic theory in his 1865 paper "A Dynamical Theory of the Electromagnetic Field." Maxwell's equations incorporated this observation to describe how magnetic fields behave consistently with experimental findings, such as those from Michael Faraday on field lines. The absence of magnetic monopoles underscores the law's foundational role in unifying electricity and magnetism.[16][5]

A classic example is the magnetic field around a bar magnet, where field lines emerge from the north pole and loop back to the south pole externally, forming continuous closed paths without beginning or ending. Similarly, Earth's magnetic field approximates a giant dipole, with lines forming closed loops that extend from the southern magnetic pole through space to the northern pole, protecting the planet from solar wind. Even during geomagnetic reversals, which have occurred hundreds of times over millions of years (the last one approximately 780,000 years ago), the field weakens and becomes multipolar but maintains its sourceless nature, never producing isolated poles.[17][18][19]

The law's implications extend to the fundamental origin of magnetism, which emerges as a relativistic effect arising from the motion of electric charges, rather than from independent magnetic sources. In special relativity, the magnetic field observed in one frame corresponds to transformations of electric fields due to relative velocities, explaining why moving charges produce magnetic effects alongside electric ones. This perspective, highlighted in analyses of electromagnetic interactions, reinforces that all magnetic phenomena ultimately trace back to electric charge dynamics.[20]

Faraday's Law of Induction

Faraday discovered electromagnetic induction in 1831 through experiments showing that moving a magnet near a wire coil or varying current in one coil could produce a transient current in a nearby coil, without direct electrical connection.[21] These findings, detailed in his 1832 paper "Experimental Researches in Electricity," established that a changing magnetic field generates an electric current, laying the groundwork for understanding dynamic electromagnetic interactions.[22] James Clerk Maxwell quantified this phenomenon mathematically in his 1865 paper "A Dynamical Theory of the Electromagnetic Field," integrating it as a core equation in his unified theory of electromagnetism.[3]

Faraday's law asserts that a time-varying magnetic flux through a closed loop induces an electromotive force (EMF) equal to the negative rate of change of that flux. In integral form, this is given by

$$\oint_{C} \mathbf{E} \cdot d\boldsymbol{\ell} = -\frac{d\Phi_B}{dt} = -\frac{d}{dt} \int_{S} \mathbf{B} \cdot d\mathbf{A}$$

where $\Phi_B$ represents the magnetic flux through the surface $S$ bounded by the loop $C$, $\mathbf{E}$ is the electric field, $\mathbf{B}$ the magnetic field, and $d\boldsymbol{\ell}$, $d\mathbf{A}$ are differential elements along the path and surface, respectively.[2] The negative sign reflects Lenz's law, indicating that the induced EMF opposes the flux change, conserving energy in the system.

The induced electric field from a changing magnetic field is non-conservative, meaning the line integral around a closed loop can be nonzero, unlike static electric fields from charges.[2] This non-conservative nature arises because the curl of $\mathbf{E}$ is proportional to the time derivative of $\mathbf{B}$, leading to circulatory electric fields that drive currents in loops.
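A small numerical sketch can make the flux rule concrete. It assumes a fixed flat loop in a uniform field perpendicular to it, with the field varying sinusoidally in time, so that the flux is B0·A·sin(ωt) and the EMF should be −B0·A·ω·cos(ωt). The function names and the parameter values (0.2 T, 0.01 m², 50 Hz) are hypothetical choices for illustration only.

```python
import math


def flux(t, b0=0.2, area=0.01, omega=2 * math.pi * 50):
    """Magnetic flux (Wb) through a fixed loop in a uniform field B0*sin(omega*t)."""
    return b0 * area * math.sin(omega * t)


def emf(t, dt=1e-7, **params):
    """EMF (V) as minus the time derivative of the flux, by central difference."""
    return -(flux(t + dt, **params) - flux(t - dt, **params)) / (2 * dt)
```

Evaluating `emf` at t = 0 should reproduce −B0·A·ω, and a quarter period later, when the flux peaks, the EMF should pass through zero; the minus sign in `emf` is where Lenz's law enters.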
In practical terms, this principle enables induced currents in moving conductors, such as a metal rod sliding on rails in a magnetic field, where motion alters the flux and generates a motional EMF.[23] Electric generators exemplify Faraday's law, converting mechanical energy to electrical energy by rotating coils in a magnetic field to produce alternating flux changes and thus an AC EMF.[23] Transformers rely on mutual induction, where an alternating current in a primary coil creates a varying magnetic field that induces an EMF in a secondary coil, facilitating voltage transformation without direct connection.[24] These applications highlight the law's role in powering modern electrical systems, from energy generation to signal transmission.[25]

Ampère's Circuital Law with Displacement Current

Ampère's circuital law originally described the relationship between electric currents and the magnetic fields they produce in steady-state conditions. Formulated by André-Marie Ampère in 1826, the law states that the line integral of the magnetic field around a closed loop is proportional to the total electric current passing through the surface bounded by that loop:

$$\oint_{C} \mathbf{B} \cdot d\boldsymbol{\ell} = \mu_0 I_{\text{enc}}$$

where $\mu_0$ is the permeability of free space.[26] This relation holds for steady currents where charge distribution does not change with time, providing a foundational tool for calculating magnetic fields from known current distributions.[27]

However, this original form was inconsistent with the continuity equation, which expresses local conservation of charge: $\nabla \cdot \mathbf{J} + \partial \rho / \partial t = 0$, where $\mathbf{J}$ is the current density and $\rho$ is the charge density. In time-varying situations, such as a charging capacitor, the law failed to account for changing electric fields between the plates where no conduction current flows, leading to discontinuities in the predicted magnetic field.[27] To resolve this, James Clerk Maxwell introduced the concept of displacement current in his 1865 paper, extending Ampère's law to include a term proportional to the rate of change of the electric flux:

$$\oint_{C} \mathbf{B} \cdot d\boldsymbol{\ell} = \mu_0 I_{\text{enc}} + \mu_0 \varepsilon_0 \frac{d\Phi_E}{dt}$$

where $\varepsilon_0$ is the permittivity of free space and $\Phi_E$ is the electric flux through the surface.[3] This modification, known as the Ampère-Maxwell law, ensures consistency with charge conservation by treating the displacement current density $\varepsilon_0\, \partial \mathbf{E} / \partial t$ as an effective current that generates magnetic fields even in the absence of conduction currents.[28]

A classic example of the original law's application is the magnetic field inside a long solenoid, where steady current flows through tightly wound coils. By choosing an Amperian loop as a rectangle with one side along the solenoid's axis, the law yields a uniform magnetic field $B = \mu_0 n I$ inside, where $n$ is the number of turns per unit length and $I$ is the current, demonstrating how enclosed current directly determines field strength.[29] In contrast, the displacement current becomes crucial in scenarios without conduction currents, such as the propagation of electromagnetic waves in vacuum. Here, oscillating electric fields produce changing magnetic fields via the displacement term, and vice versa, allowing self-sustaining waves to travel through empty space at the speed of light without any material medium.[30]

Maxwell's addition of the displacement current was pivotal, as it not only rectified the theoretical inconsistency but also enabled the prediction of electromagnetic waves, unifying electricity, magnetism, and optics into a coherent framework.[3] This extension transformed Ampère's static relation into a dynamic law essential for understanding time-dependent phenomena in electromagnetism.

Microscopic Formulation in Vacuum

Differential Equations

The differential form of Maxwell's equations provides a local, point-wise description of electromagnetic fields in terms of their divergence and curl at every point in space and time. This formulation, which emerged from Oliver Heaviside's vectorial reformulation of James Clerk Maxwell's original scalar equations in the late 19th century, expresses the relationships between electric and magnetic fields, charge density, and current density using partial differential operators. It is particularly suited for microscopic analyses in vacuum, where fields arise directly from charges and currents without material effects like polarization or magnetization.

In SI units, the four differential equations for the electric field $\mathbf{E}$ and magnetic field $\mathbf{B}$ in vacuum are:

$$\nabla \cdot \mathbf{E} = \frac{\rho}{\varepsilon_0}$$

$$\nabla \cdot \mathbf{B} = 0$$

$$\nabla \times \mathbf{E} = -\frac{\partial \mathbf{B}}{\partial t}$$

$$\nabla \times \mathbf{B} = \mu_0 \mathbf{J} + \mu_0 \varepsilon_0 \frac{\partial \mathbf{E}}{\partial t}$$

where $\rho$ is the total charge density, $\mathbf{J}$ is the total current density, $\varepsilon_0$ is the vacuum permittivity, $\mu_0$ is the vacuum permeability, and the partial derivative with respect to time accounts for the dynamic evolution of the fields. These equations describe classical relativistic electrodynamics in flat spacetime, focusing on classical field behavior without quantum or gravitational influences.[31]

This differential representation offers key advantages over integral forms, as it describes field variations instantaneously at any location, enabling straightforward derivations of broader phenomena such as the electromagnetic wave equation by taking curls of the curl equations. For instance, combining the curl equations yields the wave equation in source-free regions, highlighting the propagation of fields at the speed of light $c = 1/\sqrt{\mu_0 \varepsilon_0}$.

Integral Equations

The integral forms of Maxwell's equations describe the global behavior of electromagnetic fields in vacuum by relating the flux of the fields through closed surfaces and their circulation around closed loops to enclosed charges, currents, and time-varying fields. These formulations are particularly useful for problems exhibiting high symmetry, such as spherical charge distributions or long solenoids, where the integrals simplify because the field has a uniform magnitude and direction relative to the surface or path. Unlike local point-wise descriptions, the integral forms are global statements that apply to finite regions of space. The four integral equations, stated in SI units for vacuum, are as follows.

Gauss's law for electricity states that the total electric flux through any closed surface equals the enclosed charge divided by the vacuum permittivity:

$$\oint_{\partial V} \mathbf{E} \cdot d\mathbf{A} = \frac{Q_\text{enc}}{\varepsilon_0}$$

where $\mathbf{E}$ is the electric field, $\partial V$ is a closed surface enclosing volume $V$, and $Q_\text{enc}$ is the total charge within $V$.[32]

Gauss's law for magnetism asserts that the magnetic flux through any closed surface is zero, implying no magnetic monopoles:

$$\oint_{\partial V} \mathbf{B} \cdot d\mathbf{A} = 0$$

with $\mathbf{B}$ the magnetic field.[32]

Faraday's law of induction relates the electromotive force around a closed loop to the negative rate of change of magnetic flux through the surface bounded by that loop:

$$\oint_{C} \mathbf{E} \cdot d\boldsymbol{\ell} = -\frac{d}{dt} \int_{S} \mathbf{B} \cdot d\mathbf{A}$$

where $C$ is the closed contour bounding the surface $S$, and the surface integral defines the magnetic flux $\Phi_B$. This equation captures the generation of electric fields by changing magnetic fields.[32]

Ampère's circuital law, augmented by Maxwell's displacement current term, equates the magnetic circulation around a closed loop to $\mu_0$ times the enclosed current plus $\mu_0 \varepsilon_0$ times the rate of change of electric flux:

$$\oint_{C} \mathbf{B} \cdot d\boldsymbol{\ell} = \mu_0 I_\text{enc} + \mu_0 \varepsilon_0 \frac{d}{dt} \int_{S} \mathbf{E} \cdot d\mathbf{A}$$

where $I_\text{enc}$ is the total current piercing the surface $S$, and the electric flux term accounts for time-varying electric fields.[32]

Physically, the surface integrals represent net flux, quantifying how much field "escapes" a volume, while the line integrals measure circulation, akin to the work done by the field along a closed path.

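Gauss's law holds for any closed surface, not only for surfaces on which the field is constant. As an illustrative sketch (not taken from the cited sources), the following estimates the flux of a point charge's field through a cube centered on the charge and compares it with $Q_\text{enc}/\varepsilon_0$. The function names, the charge value, the grid size, and the use of cube symmetry to integrate only one face are all assumptions made for this example.

```python
import math

EPS0 = 8.8541878128e-12  # vacuum permittivity in F/m (CODATA 2018 value)

def point_charge_field(q, x, y, z):
    """Electric field (Ex, Ey, Ez) of a point charge q located at the origin."""
    r2 = x * x + y * y + z * z
    k = q / (4.0 * math.pi * EPS0 * r2 * math.sqrt(r2))
    return k * x, k * y, k * z

def flux_through_cube(q, half_side=1.0, n=200):
    """Midpoint-rule estimate of the outward flux of E through a cube centered on the charge."""
    h = 2.0 * half_side / n
    face = 0.0
    # Integrate E_z over the top face z = +half_side.
    for i in range(n):
        x = -half_side + (i + 0.5) * h
        for j in range(n):
            y = -half_side + (j + 0.5) * h
            face += point_charge_field(q, x, y, half_side)[2] * h * h
    # With the charge at the center, all six faces carry the same flux.
    return 6.0 * face

q = 1e-9  # hypothetical 1 nC test charge
flux = flux_through_cube(q)
expected = q / EPS0
print(flux, expected)
```

The two printed values should agree closely even though the field strength varies across each face, which is the content of the flux statement: only the enclosed charge matters, not the shape of the surface.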
These forms embody the flux and circulation theorems, directly linking field behavior to the sources in enclosed regions. For instance, in symmetric cases like a uniformly charged sphere, Gauss's law yields the field strength by assuming that $|\mathbf{E}|$ is constant over a concentric spherical Gaussian surface. Similarly, for an ideal solenoid, Ampère's law simplifies to relate $B$ inside to the current, with zero field outside.[33]

These integral equations are directly testable through experiments mirroring the original discoveries: Gauss's law via measurements of electric flux from charged conductors, as in early electrostatic experiments with isolated spheres; Gauss's law for magnetism by the absence of isolated magnetic poles in experimental searches; Faraday's law by currents induced in coils by varying magnetic fields, as in dynamo setups; and the Ampère-Maxwell law by magnetic fields around steady currents in wires or solenoids, with displacement-current effects observed in capacitor-charging experiments that show a consistent $\mathbf{B}$ even where no conduction current flows. Many such verifications, from 19th-century setups to modern precision tests, uphold their validity.[34][2]

The assumptions underlying these integral forms mirror those of the differential versions (validity in vacuum with no material media, no magnetic monopoles, and relativistic consistency) but emphasize application over arbitrary finite volumes, surfaces, and loops, where boundary conditions are implicitly incorporated. These global statements are mathematically equivalent to the local differential forms via the divergence and Stokes theorems, facilitating transitions between perspectives.[28]

Formulation in SI Units

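The formulation of Maxwell's equations in the International System of Units (SI) applies to fields in vacuum and incorporates two fundamental constants: the vacuum permittivity $\varepsilon_0$, approximately $8.85 \times 10^{-12}$ F/m, and the vacuum permeability $\mu_0$, approximately $4\pi \times 10^{-7}$ H/m (an exact value before the 2019 SI revision, now determined experimentally).[35][36] These constants relate the equations to the SI base units of length (meter), mass (kilogram), time (second), and electric current (ampere), ensuring dimensional consistency. The SI version is the standard in modern engineering and scientific practice due to its coherence and alignment with practical measurements in electrical systems.[37]

In differential form, the equations describe local relationships between the electric field $\mathbf{E}$, magnetic field $\mathbf{B}$, charge density $\rho$, and current density $\mathbf{J}$:

$$\nabla \cdot \mathbf{E} = \frac{\rho}{\varepsilon_0}$$

$$\nabla \cdot \mathbf{B} = 0$$

$$\nabla \times \mathbf{E} = -\frac{\partial \mathbf{B}}{\partial t}$$

$$\nabla \times \mathbf{B} = \mu_0 \mathbf{J} + \mu_0 \varepsilon_0 \frac{\partial \mathbf{E}}{\partial t}$$

These forms follow from their integral counterparts by applying the divergence theorem and Stokes' theorem, and they reduce to the source-free case when $\rho = 0$ and $\mathbf{J} = 0$.[38]

The integral forms express global relations over closed surfaces and closed paths, relating flux and circulation to enclosed charges and currents:

$$\oint_{\partial V} \mathbf{E} \cdot d\mathbf{A} = \frac{Q_\text{enc}}{\varepsilon_0}$$

$$\oint_{\partial V} \mathbf{B} \cdot d\mathbf{A} = 0$$

$$\oint_{C} \mathbf{E} \cdot d\boldsymbol{\ell} = -\frac{d}{dt} \int_{S} \mathbf{B} \cdot d\mathbf{A}$$

$$\oint_{C} \mathbf{B} \cdot d\boldsymbol{\ell} = \mu_0 I_\text{enc} + \mu_0 \varepsilon_0 \frac{d}{dt} \int_{S} \mathbf{E} \cdot d\mathbf{A}$$

Here, $Q_\text{enc}$ is the enclosed charge, $I_\text{enc}$ is the enclosed current, and the surface integrals on the right-hand sides of the last two equations are the magnetic and electric flux, respectively.[38]

A key consequence of this formulation is the prediction of electromagnetic wave propagation at speed $c = 1/\sqrt{\mu_0 \varepsilon_0}$, which is exactly 299,792,458 m/s in vacuum, a value fixed by the SI definition of the metre since 1983 and retained in the 2019 SI revision. This speed emerges directly from the coupled curl equations, unifying electricity, magnetism, and optics.[38] The SI system's advantages for engineering stem from its rationalized structure, which removes explicit factors of $4\pi$ from Maxwell's equations, facilitating calculations in circuits, antennas, and devices.[37][39]

Formulation in Gaussian Units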

The Gaussian unit system, also known as the cgs Gaussian system, formulates Maxwell's equations in a manner that emphasizes theoretical symmetry and elegance, particularly in vacuum, by incorporating the speed of light explicitly and avoiding the vacuum permittivity and permeability found in SI units. This system uses centimeter-gram-second base units and defines electromagnetic quantities such that the electric field $\mathbf{E}$ and magnetic field $\mathbf{B}$ share the same dimensions, typically expressed in statvolts per centimeter for $\mathbf{E}$ and gauss for $\mathbf{B}$. Developed in the 19th century building on the work of Carl Friedrich Gauss and others, it became a standard for theoretical electromagnetism due to its simplification of fundamental relations.[37]

In differential form, Maxwell's equations in Gaussian units for the microscopic fields in vacuum are:

$$\nabla \cdot \mathbf{E} = 4\pi \rho$$

$$\nabla \cdot \mathbf{B} = 0$$

$$\nabla \times \mathbf{E} = -\frac{1}{c} \frac{\partial \mathbf{B}}{\partial t}$$

$$\nabla \times \mathbf{B} = \frac{4\pi}{c} \mathbf{J} + \frac{1}{c} \frac{\partial \mathbf{E}}{\partial t}$$

Here, $\rho$ is the charge density, $\mathbf{J}$ is the current density, and $c$ is the speed of light in vacuum. These forms introduce factors of $4\pi$ arising from the non-rationalized nature of the system, which stems from defining the unit of charge so that two unit charges one centimeter apart exert a force of exactly 1 dyne on each other.[40] The explicit presence of $c$ in the curl equations highlights the relativistic structure of the theory, with no additional dimensional constants required.[41]

A key advantage of Gaussian units is the dimensional equivalence of $\mathbf{E}$ and $\mathbf{B}$, which aligns with their symmetric roles in the Lorentz force law and facilitates relativistic formulations where electric and magnetic fields transform into each other. This symmetry simplifies derivations in theoretical physics, such as those involving electromagnetic waves, where the wave speed emerges as $1/\sqrt{\mu_0 \varepsilon_0}$ in SI but appears explicitly as $c$ here.
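As a worked illustration of how $c$ enters the Gaussian form directly, the source-free wave equation follows in a few lines. This is a standard sketch rather than a derivation quoted from the cited sources, and it assumes $\rho = 0$, $\mathbf{J} = 0$, and fields smooth enough to exchange the order of derivatives.

```latex
\begin{align}
\nabla \times (\nabla \times \mathbf{E})
  &= -\frac{1}{c} \frac{\partial}{\partial t} (\nabla \times \mathbf{B})
   = -\frac{1}{c^2} \frac{\partial^2 \mathbf{E}}{\partial t^2}, \\
\nabla \times (\nabla \times \mathbf{E})
  &= \nabla (\nabla \cdot \mathbf{E}) - \nabla^2 \mathbf{E}
   = -\nabla^2 \mathbf{E}, \\
\Rightarrow \quad \nabla^2 \mathbf{E}
  &= \frac{1}{c^2} \frac{\partial^2 \mathbf{E}}{\partial t^2}.
\end{align}
```

The first line takes the curl of Faraday's law and substitutes the source-free Ampère-Maxwell law; the second uses the vector identity for the curl of a curl together with $\nabla \cdot \mathbf{E} = 0$. The same steps applied to $\mathbf{B}$ give an identical equation.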
The absence of $\varepsilon_0$ and $\mu_0$ also reduces clutter in the equations, aiding conceptual clarity in fundamental interactions.[37][39][42]

Historically, Gaussian units dominated 20th-century theoretical physics literature, including seminal texts like Jackson's Classical Electrodynamics and Landau and Lifshitz's Electrodynamics of Continuous Media, due to their prevalence in atomic and nuclear physics research where cgs mechanical units were standard. They remain common in graduate-level physics courses and high-energy physics for their alignment with natural units in quantum field theory. Conversion to SI units involves scaling factors derived from the definitions: for example, charge density transforms as $\rho_\text{G} = \rho_\text{SI} / \sqrt{4\pi \varepsilon_0}$, electric field as $\mathbf{E}_\text{G} = \sqrt{4\pi \varepsilon_0}\, \mathbf{E}_\text{SI}$, and current density as $\mathbf{J}_\text{G} = \mathbf{J}_\text{SI} / \sqrt{4\pi \varepsilon_0}$, ensuring consistency across systems.[43][41][44]

A related variant, the Heaviside-Lorentz unit system, achieves even greater symmetry by rationalizing the equations (removing the $4\pi$ factors) while retaining the explicit $c$ and the equal status of $\mathbf{E}$ and $\mathbf{B}$; it is particularly favored in quantum electrodynamics for perturbative calculations. In this system, Gauss's law becomes $\nabla \cdot \mathbf{E} = \rho$, and Ampère's law becomes $\nabla \times \mathbf{B} = \frac{1}{c} \left( \mathbf{J} + \frac{\partial \mathbf{E}}{\partial t} \right)$, with the unit of charge adjusted accordingly. This variant, named after Oliver Heaviside and Hendrik Lorentz, bridges Gaussian units and natural units in relativistic quantum theories.[45][46]

Relationships Between Formulations

Linking Differential and Integral Forms

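The differential and integral formulations of Maxwell's equations in vacuum are mathematically equivalent and interconnected through two fundamental theorems of vector calculus: Gauss's divergence theorem and Stokes' theorem. These theorems enable the translation between local descriptions of electromagnetic fields, expressed as point-wise relations involving derivatives, and global descriptions involving integrals over surfaces and volumes. This linkage assumes that the electromagnetic fields are sufficiently smooth and that the domains of integration are bounded regions with well-defined boundaries, allowing the theorems to apply without singularities.[47]

Gauss's divergence theorem states that for a vector field $\mathbf{F}$ that is continuously differentiable in a volume $V$ bounded by a closed surface $\partial V$,

$$\int_{V} (\nabla \cdot \mathbf{F}) \, dV = \oint_{\partial V} \mathbf{F} \cdot d\mathbf{A}$$

where $d\mathbf{A}$ is the outward-pointing area element on $\partial V$. This theorem directly links the differential forms of the divergence equations to their integral counterparts. To derive the integral form of Gauss's law for electricity from its differential version $\nabla \cdot \mathbf{E} = \rho / \varepsilon_0$, integrate both sides over the volume $V$:

$$\int_{V} (\nabla \cdot \mathbf{E}) \, dV = \frac{1}{\varepsilon_0} \int_{V} \rho \, dV = \frac{Q_\text{enc}}{\varepsilon_0}$$

Applying the divergence theorem to the left side yields $\oint_{\partial V} \mathbf{E} \cdot d\mathbf{A} = Q_\text{enc} / \varepsilon_0$, which is the integral statement that the electric flux through $\partial V$ equals the enclosed charge divided by $\varepsilon_0$. Similarly, for Gauss's law for magnetism, $\nabla \cdot \mathbf{B} = 0$, the integration and application of the theorem produce $\oint_{\partial V} \mathbf{B} \cdot d\mathbf{A} = 0$, indicating zero magnetic flux through any closed surface. These derivations hold for arbitrary volumes, provided the fields are smooth and the charge density is integrable.[48]

Stokes' theorem complements this by relating the curl equations to line and surface integrals. It asserts that for a vector field $\mathbf{F}$ and an oriented surface $S$ bounded by a closed curve $C$,

$$\int_{S} (\nabla \times \mathbf{F}) \cdot d\mathbf{A} = \oint_{C} \mathbf{F} \cdot d\boldsymbol{\ell}$$

where $d\mathbf{A}$ aligns with the right-hand rule orientation of $C$. Applying this to Faraday's law in differential form, $\nabla \times \mathbf{E} = -\partial \mathbf{B} / \partial t$, integrate over $S$:

$$\int_{S} (\nabla \times \mathbf{E}) \cdot d\mathbf{A} = -\int_{S} \frac{\partial \mathbf{B}}{\partial t} \cdot d\mathbf{A}$$

The left side becomes $\oint_{C} \mathbf{E} \cdot d\boldsymbol{\ell}$ by Stokes' theorem. For a surface that is fixed in time, the time derivative on the right can be taken outside the integral, yielding the integral form $\oint_{C} \mathbf{E} \cdot d\boldsymbol{\ell} = -d\Phi_B / dt$, where $\Phi_B = \int_{S} \mathbf{B} \cdot d\mathbf{A}$ is the magnetic flux.
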
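Stokes' theorem, used in the step above, can be checked numerically for a simple case. The following is a minimal sketch under stated assumptions: the planar field $\mathbf{F} = (-y^3, x^3, 0)$, the unit disk as the surface $S$, and the midpoint-rule grid sizes are all choices made for illustration and do not come from the cited sources. Both integrals should approximate the analytic value $3\pi/2$.

```python
import math

def field(x, y):
    """Illustrative planar vector field F = (-y^3, x^3)."""
    return -y**3, x**3

def curl_z(x, y):
    """z-component of curl F for the field above: dFy/dx - dFx/dy = 3x^2 + 3y^2."""
    return 3.0 * x * x + 3.0 * y * y

def circulation(n=2000):
    """Line integral of F counterclockwise around the unit circle (midpoint rule in angle)."""
    dt = 2.0 * math.pi / n
    total = 0.0
    for k in range(n):
        t = (k + 0.5) * dt
        x, y = math.cos(t), math.sin(t)
        fx, fy = field(x, y)
        # The tangent vector along the circle is (-sin t, cos t) dt.
        total += (-fx * math.sin(t) + fy * math.cos(t)) * dt
    return total

def curl_flux(nr=400, nt=400):
    """Surface integral of (curl F)_z over the unit disk, using polar coordinates."""
    dr, dt = 1.0 / nr, 2.0 * math.pi / nt
    total = 0.0
    for i in range(nr):
        r = (i + 0.5) * dr
        for k in range(nt):
            t = (k + 0.5) * dt
            # The area element in polar coordinates is r dr dt.
            total += curl_z(r * math.cos(t), r * math.sin(t)) * r * dr * dt
    return total

line = circulation()
surface = curl_flux()
print(line, surface, 1.5 * math.pi)
```

The counterclockwise traversal of the circle pairs with the $+z$ surface normal, matching the right-hand rule orientation stated for the theorem.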
For Ampère's law with Maxwell's correction, $\nabla \times \mathbf{B} = \mu_0 \mathbf{J} + \mu_0 \varepsilon_0 \, \partial \mathbf{E} / \partial t$, the same process gives $\oint_{C} \mathbf{B} \cdot d\boldsymbol{\ell} = \mu_0 I_\text{enc} + \mu_0 \varepsilon_0 \, d\Phi_E / dt$, with $I_\text{enc} = \int_{S} \mathbf{J} \cdot d\mathbf{A}$ the enclosed current and $\Phi_E = \int_{S} \mathbf{E} \cdot d\mathbf{A}$ the electric flux. These steps assume the surface is piecewise smooth and the fields satisfy the necessary continuity conditions.[49][50]

Conversely, the differential forms can be recovered from the integral forms by considering limits over shrinking domains, leveraging the arbitrary nature of the integration regions and the smoothness of the fields. For the divergence equations, dividing the flux through a closed surface by the enclosed volume and letting the volume shrink to a point gives the local divergence; a similar localization applies to the curl equations, where the circulation around a loop divided by the enclosed area gives the component of the curl normal to the loop as the loop shrinks to a point. This ensures consistency across formulations under standard boundary conditions where fields vanish at infinity or match across interfaces. This bidirectional equivalence underscores the robustness of Maxwell's equations in describing electromagnetism.[51][52]

Physical Interpretations of Flux and Circulation