Electrical engineering


[Image: a long row of disconnectors]

| Occupation | |
|---|---|
| Names | Electrical engineer |
| Activity sectors | Electronics, electrical circuits, electromagnetics, power engineering, electrical machines, telecommunications, control systems, signal processing, optics, photonics, and electrical substations |
| Competencies | Technical knowledge, advanced mathematics, systems design, physics, science, abstract thinking, analytical thinking (see also Glossary of electrical and electronics engineering) |
| Fields of employment | Technology, science, exploration, military, industry and society |


Electrical engineering is an engineering discipline concerned with the study, design, and application of equipment, devices, and systems that use electricity, electronics, and electromagnetism. It emerged as an identifiable occupation in the latter half of the 19th century after the commercialization of the electric telegraph, the telephone, and electrical power generation, distribution, and use.

Electrical engineering is divided into a wide range of fields, including computer engineering, systems engineering, power engineering, telecommunications, radio-frequency engineering, signal processing, instrumentation, control engineering, photovoltaic cells, electronics, and optics and photonics. Many of these disciplines overlap with other engineering branches, spanning a huge number of specializations including hardware engineering, power electronics, electromagnetics and waves, microwave engineering, nanotechnology, electrochemistry, renewable energies, mechatronics/control, and electrical materials science.[a] Electrical engineers also study machine learning and computer science techniques because of the significant overlap between these areas and their own.

Electrical engineers typically hold a degree in electrical engineering, electronic engineering, or electrical and electronic engineering. Practicing engineers may have professional certification and be members of a professional body or an international standards organization. These include the International Electrotechnical Commission (IEC), the National Society of Professional Engineers (NSPE), the Institute of Electrical and Electronics Engineers (IEEE) and the Institution of Engineering and Technology (IET, formerly the IEE).

Electrical engineers work in a very wide range of industries and the skills required are likewise variable. These range from circuit theory to the management skills of a project manager. The tools and equipment that an individual engineer may need are similarly variable, ranging from a simple voltmeter to sophisticated design and manufacturing software.

History

Electricity has been a subject of scientific interest since at least the early 17th century. William Gilbert was a prominent early electrical scientist, and was the first to draw a clear distinction between magnetism and static electricity. He is credited with establishing the term "electricity".[1] He also designed the versorium: a device that detects the presence of statically charged objects. In 1762, Swedish professor Johan Wilcke invented a device, later named the electrophorus, that produced a static electric charge.[2] By 1800 Alessandro Volta had developed the voltaic pile, a forerunner of the electric battery.

19th century


In the 19th century, research into the subject started to intensify. Notable developments in this century include the work of Hans Christian Ørsted, who discovered in 1820 that an electric current produces a magnetic field that will deflect a compass needle; of William Sturgeon, who in 1825 invented the electromagnet; of Joseph Henry and Edward Davy, who invented the electrical relay in 1835; of Georg Ohm, who in 1827 quantified the relationship between the electric current and potential difference in a conductor; of Michael Faraday, the discoverer of electromagnetic induction in 1831; and of James Clerk Maxwell, who in 1873 published a unified theory of electricity and magnetism in his Treatise on Electricity and Magnetism.[3]

In 1782, Georges-Louis Le Sage developed and presented in Berlin probably the world's first form of electric telegraphy, using 24 different wires, one for each letter of the alphabet. This telegraph connected two rooms. It was an electrostatic telegraph that moved gold leaf through electrical conduction.

In 1795, Francisco Salva Campillo proposed an electrostatic telegraph system. Between 1803 and 1804, he worked on electrical telegraphy, and in 1804 he presented his report at the Royal Academy of Natural Sciences and Arts of Barcelona. Salva's electrolyte telegraph system was highly innovative, though it was heavily influenced by and based upon two discoveries made in Europe in 1800: Alessandro Volta's electric battery for generating an electric current, and William Nicholson and Anthony Carlisle's electrolysis of water.[4]

Electrical telegraphy may be considered the first example of electrical engineering.[5] Electrical engineering became a profession in the later 19th century. Practitioners had created a global electric telegraph network, and the first professional electrical engineering institutions were founded in the UK and the US to support the new discipline. Francis Ronalds created an electric telegraph system in 1816 and documented his vision of how the world could be transformed by electricity.[6][7] Over 50 years later, he joined the new Society of Telegraph Engineers (soon to be renamed the Institution of Electrical Engineers), where he was regarded by other members as the first of their cohort.[8] By the end of the 19th century, the world had been forever changed by the rapid communication made possible by the engineering development of land-lines, submarine cables, and, from about 1890, wireless telegraphy.

Practical applications and advances in such fields created an increasing need for standardized units of measure. They led to the international standardization of the units volt, ampere, coulomb, ohm, farad, and henry. This was achieved at an international conference in Chicago in 1893.[9] The publication of these standards formed the basis of future advances in standardization in various industries, and in many countries, the definitions were immediately recognized in relevant legislation.[10]

During these years, the study of electricity was largely considered to be a subfield of physics, since early electrical technology was considered electromechanical in nature. The Technische Universität Darmstadt founded the world's first department of electrical engineering in 1882 and introduced the first degree course in electrical engineering in 1883.[11] The first electrical engineering degree program in the United States was started at the Massachusetts Institute of Technology (MIT) in the physics department under Professor Charles Cross,[12] though it was Cornell University that produced the world's first electrical engineering graduates in 1885.[13] The first course in electrical engineering was taught in 1883 in Cornell's Sibley College of Mechanical Engineering and Mechanic Arts.[14]

In about 1885, Cornell President Andrew Dickson White established the first Department of Electrical Engineering in the United States.[15] In the same year, University College London founded the first chair of electrical engineering in Great Britain.[16] Professor Mendell P. Weinbach at the University of Missouri established the electrical engineering department in 1886.[17] Afterwards, universities and institutes of technology around the world gradually began to offer electrical engineering programs.

During these decades the use of electrical engineering increased dramatically. In 1882, Thomas Edison switched on the world's first large-scale electric power network, which provided 110 volts of direct current (DC) to 59 customers on Manhattan Island in New York City. In 1884, Sir Charles Parsons invented the steam turbine, allowing for more efficient electric power generation. Alternating current, with its ability to transmit power more efficiently over long distances via the use of transformers, developed rapidly in the 1880s and 1890s with transformer designs by Károly Zipernowsky, Ottó Bláthy and Miksa Déri (later called ZBD transformers), Lucien Gaulard, John Dixon Gibbs and William Stanley Jr. Practical AC motor designs, including induction motors, were independently invented by Galileo Ferraris and Nikola Tesla and further developed into a practical three-phase form by Mikhail Dolivo-Dobrovolsky and Charles Eugene Lancelot Brown.[18] Charles Steinmetz and Oliver Heaviside contributed to the theoretical basis of alternating current engineering.[19][20] The spread in the use of AC set off in the United States what has been called the war of the currents between a George Westinghouse-backed AC system and a Thomas Edison-backed DC power system, with AC being adopted as the overall standard.[21]

Early 20th century


During the development of radio, many scientists and inventors contributed to radio technology and electronics. The mathematical work of James Clerk Maxwell during the 1850s had shown the relationship of different forms of electromagnetic radiation including the possibility of invisible airborne waves (later called "radio waves"). In his classic physics experiments of 1888, Heinrich Hertz proved Maxwell's theory by transmitting radio waves with a spark-gap transmitter, and detected them by using simple electrical devices. Other physicists experimented with these new waves and in the process developed devices for transmitting and detecting them. In 1895, Guglielmo Marconi began work on a way to adapt the known methods of transmitting and detecting these "Hertzian waves" into a purpose-built commercial wireless telegraphic system. Early on, he sent wireless signals over a distance of one and a half miles. In December 1901, he sent wireless waves that were not affected by the curvature of the Earth. Marconi later transmitted the wireless signals across the Atlantic between Poldhu, Cornwall, and St. John's, Newfoundland, a distance of 2,100 miles (3,400 km).[22]

Millimetre wave communication was first investigated by Jagadish Chandra Bose during 1894–1896, when he reached an extremely high frequency of up to 60 GHz in his experiments.[23] He also introduced the use of semiconductor junctions to detect radio waves,[24] when he patented the radio crystal detector in 1901.[25][26]

In 1897, Karl Ferdinand Braun introduced the cathode-ray tube as part of an oscilloscope, a crucial enabling technology for electronic television.[27] John Fleming invented the first radio tube, the diode, in 1904. Two years later, Robert von Lieben and Lee De Forest independently developed the amplifier tube, called the triode.[28]

In 1920, Albert Hull developed the magnetron which would eventually lead to the development of the microwave oven in 1946 by Percy Spencer.[29][30] In 1934, the British military began to make strides toward radar (which also uses the magnetron) under the direction of Dr Wimperis, culminating in the operation of the first radar station at Bawdsey in August 1936.[31]

In 1941, Konrad Zuse presented the Z3, the world's first fully functional and programmable computer using electromechanical parts. In 1943, Tommy Flowers designed and built the Colossus, the world's first fully functional, electronic, digital and programmable computer.[32][33] In 1946, the ENIAC (Electronic Numerical Integrator and Computer) of John Presper Eckert and John Mauchly followed, beginning the computing era. The arithmetic performance of these machines allowed engineers to develop completely new technologies and achieve new objectives.[34]

In 1948, Claude Shannon published "A Mathematical Theory of Communication" which mathematically describes the passage of information with uncertainty (electrical noise).
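Shannon's theory is often summarized by the Shannon–Hartley theorem, which gives the maximum error-free data rate C = B log2(1 + S/N) of a channel of bandwidth B disturbed by additive white Gaussian noise at a signal-to-noise power ratio S/N. The short Python sketch below evaluates that bound; the function names and the 3 kHz / 30 dB example are illustrative assumptions, not figures from Shannon's paper.

```python
import math


def db_to_linear(ratio_db: float) -> float:
    """Convert a power ratio in decibels to a linear ratio."""
    return 10.0 ** (ratio_db / 10.0)


def shannon_capacity_bps(bandwidth_hz: float, snr_linear: float) -> float:
    """Shannon-Hartley capacity C = B * log2(1 + S/N), in bits per second.

    Assumes an additive white Gaussian noise channel; snr_linear is the
    signal-to-noise power ratio as a plain number, not in decibels.
    """
    if bandwidth_hz <= 0 or snr_linear < 0:
        raise ValueError("bandwidth must be positive and SNR non-negative")
    return bandwidth_hz * math.log2(1.0 + snr_linear)


# Hypothetical example: a 3 kHz channel with a 30 dB signal-to-noise ratio.
capacity = shannon_capacity_bps(3_000.0, db_to_linear(30.0))
print(f"{capacity:,.0f} bit/s")
```

Because capacity grows only logarithmically with S/N but linearly with B, doubling the bandwidth generally buys more data rate than doubling the transmitted power.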

Solid-state electronics


The first working transistor was a point-contact transistor invented by John Bardeen and Walter Houser Brattain while working under William Shockley at the Bell Telephone Laboratories (BTL) in 1947.[35] They then invented the bipolar junction transistor in 1948.[36] While early junction transistors were relatively bulky devices that were difficult to manufacture on a mass-production basis,[37] they opened the door for more compact devices.[38]

The first integrated circuits were the hybrid integrated circuit invented by Jack Kilby at Texas Instruments in 1958 and the monolithic integrated circuit chip invented by Robert Noyce at Fairchild Semiconductor in 1959.[39]

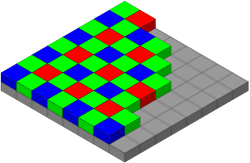

The MOSFET (metal–oxide–semiconductor field-effect transistor, or MOS transistor) was invented by Mohamed Atalla and Dawon Kahng at BTL in 1959.[40][41][42] It was the first truly compact transistor that could be miniaturised and mass-produced for a wide range of uses.[37] It revolutionized the electronics industry,[43][44] becoming the most widely used electronic device in the world.[41][45][46]

The MOSFET made it possible to build high-density integrated circuit chips.[41] The earliest experimental MOS IC chip to be fabricated was built by Fred Heiman and Steven Hofstein at RCA Laboratories in 1962.[47] MOS technology enabled Moore's law, the doubling of transistors on an IC chip every two years, predicted by Gordon Moore in 1965.[48] Silicon-gate MOS technology was developed by Federico Faggin at Fairchild in 1968.[49] Since then, the MOSFET has been the basic building block of modern electronics.[42][50][51] The mass-production of silicon MOSFETs and MOS integrated circuit chips, along with the continuous scaling (miniaturization) of MOSFETs at the exponential pace predicted by Moore's law, has since led to revolutionary changes in technology, economy, culture and thinking.[52]
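The two-year doubling rule stated above can be written as N(t) = N0 · 2^(t/2), with t in years. The sketch below applies it; the starting count of 2,300 transistors and the 20-year horizon are hypothetical inputs chosen for illustration, and real-world scaling has not followed the curve exactly.

```python
def projected_transistors(initial_count: int, years: float,
                          doubling_period_years: float = 2.0) -> float:
    """Project a transistor count forward under an idealized Moore's law.

    The two-year doubling period mirrors the text; treat the result as a
    trend estimate rather than a prediction for any specific chip.
    """
    return initial_count * 2.0 ** (years / doubling_period_years)


# Hypothetical starting point: a chip with 2,300 transistors, 20 years later.
print(f"{projected_transistors(2300, 20):,.0f}")
```

Twenty years is ten doublings, so the count grows by a factor of 2^10 = 1,024.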

The Apollo program, which culminated in landing astronauts on the Moon with Apollo 11 in 1969, was enabled by NASA's adoption of advances in semiconductor electronic technology, including MOSFETs in the Interplanetary Monitoring Platform (IMP)[53][54] and silicon integrated circuit chips in the Apollo Guidance Computer (AGC).[55]

The development of MOS integrated circuit technology in the 1960s led to the invention of the microprocessor in the early 1970s.[56][57] The first single-chip microprocessor was the Intel 4004, released in 1971.[56] The Intel 4004 was designed and realized by Federico Faggin at Intel with his silicon-gate MOS technology,[56] along with Intel's Marcian Hoff and Stanley Mazor and Busicom's Masatoshi Shima.[58] The microprocessor led to the development of microcomputers and personal computers, and the microcomputer revolution.

Electrical engineering and artificial intelligence

In recent times, machine learning (including speech systems, computer vision and reinforcement learning) has overlapped significantly with electrical engineering fields such as signal processing, image processing and control engineering, and as a result it is often studied by electrical engineers. Machine learning techniques are also used within electrical engineering, in subfields such as electronic design automation, stochastic and adaptive control, smart grids and adaptive signal processing.

Subfields

Electricity is very useful for transmitting both energy and information, and these were also the first areas in which electrical engineering developed. Today, electrical engineering has many subdisciplines, the most common of which are listed below. Although some electrical engineers focus exclusively on one of these subdisciplines, many deal with a combination of them. Some fields, such as electronic engineering and computer engineering, are sometimes considered disciplines in their own right.

Power and energy


Power and energy engineering deals with the generation, transmission, and distribution of electricity as well as the design of a range of related devices.[59] These include transformers, electric generators, electric motors, high voltage engineering, and power electronics. In many regions of the world, governments maintain an electrical network called a power grid that connects a variety of generators together with users of their energy. Users purchase electrical energy from the grid, avoiding the costly exercise of having to generate their own. Power engineers may work on the design and maintenance of the power grid as well as the power systems that connect to it.[60] Such systems are called on-grid power systems and may supply the grid with additional power, draw power from the grid, or do both. Power engineers may also work on systems that do not connect to the grid, called off-grid power systems, which in some cases are preferable to on-grid systems.
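One reason transmission networks operate at high voltage is resistive loss. For a fixed power P carried at voltage V, the line current is I = P/V, and the power dissipated in a line of resistance R is I²R, so raising the voltage tenfold cuts this loss a hundredfold. The sketch below shows the arithmetic under simplifying assumptions (a purely resistive line, unity power factor, voltage drop ignored); the 10 MW load and 2 ohm line resistance are hypothetical figures.

```python
def line_loss_w(power_w: float, voltage_v: float, line_resistance_ohm: float) -> float:
    """Resistive I^2 R loss when power_w is carried at voltage_v.

    Simplifying assumptions: a purely resistive line, unity power factor,
    and line current I = P / V (the voltage drop along the line is ignored).
    """
    current_a = power_w / voltage_v
    return current_a ** 2 * line_resistance_ohm


# Hypothetical figures: 10 MW carried over a line with 2 ohms of resistance.
LOAD_W = 10e6
for kilovolts in (10, 100):
    loss = line_loss_w(LOAD_W, kilovolts * 1e3, 2.0)
    print(f"{kilovolts:>3} kV: {loss / 1e3:,.0f} kW lost ({loss / LOAD_W:.1%} of the load)")
```

With these numbers the loss falls from 2 MW (20% of the load) at 10 kV to 20 kW (0.2%) at 100 kV, which is why transformers step voltage up for long-distance transmission and back down near consumers.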

Telecommunications


Telecommunications engineering focuses on the transmission of information across a communication channel such as a coaxial cable, optical fiber or free space.[61] Transmissions across free space require information to be encoded in a carrier signal to shift the information to a carrier frequency suitable for transmission; this is known as modulation. Popular analog modulation techniques include amplitude modulation and frequency modulation.[62] The choice of modulation affects the cost and performance of a system, and the engineer must balance these two factors carefully.
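The two analog schemes named above can be sketched in discrete time. In amplitude modulation the message x[n] scales the carrier's envelope, s[n] = (1 + m·x[n]) cos(2π fc n / fs); in frequency modulation it shifts the carrier's instantaneous frequency, so the phase is accumulated sample by sample. The Python below is a minimal illustration assuming a message normalized to [-1, 1]; the function names, the modulation index m = 0.5, and the sample-rate, carrier, and deviation values are all hypothetical.

```python
import math


def am_modulate(message, fs_hz, fc_hz, mod_index=0.5):
    """Full-carrier AM: s[n] = (1 + m * x[n]) * cos(2*pi*fc*n/fs).

    `message` holds samples x[n] normalized to [-1, 1]; the information
    rides on the amplitude envelope of the carrier.
    """
    return [
        (1.0 + mod_index * x) * math.cos(2.0 * math.pi * fc_hz * n / fs_hz)
        for n, x in enumerate(message)
    ]


def fm_modulate(message, fs_hz, fc_hz, deviation_hz):
    """FM: the instantaneous frequency is fc + deviation * x[n].

    The phase is accumulated sample by sample (a discrete-time integral of
    the instantaneous frequency), so the amplitude stays constant.
    """
    phase = 0.0
    samples = []
    for x in message:
        samples.append(math.cos(phase))
        phase += 2.0 * math.pi * (fc_hz + deviation_hz * x) / fs_hz
    return samples


# Hypothetical parameters: 48 kHz sampling, 10 kHz carrier, 200 Hz test tone.
fs, fc = 48_000, 10_000
tone = [math.sin(2.0 * math.pi * 200 * n / fs) for n in range(480)]
am = am_modulate(tone, fs, fc)
fm = fm_modulate(tone, fs, fc, deviation_hz=2_000)
print(f"AM peak {max(am):.2f}, FM peak {max(abs(s) for s in fm):.2f}")
```

The AM envelope swings between 1 - m and 1 + m, while the FM output keeps a constant amplitude of 1, one reason FM is less sensitive to noise that disturbs the signal's amplitude.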

Once the transmission characteristics of a system are determined, telecommunication engineers design the transmitters and receivers needed for such systems. The two are sometimes combined to form a two-way communication device known as a transceiver. A key consideration in the design of transmitters is their power consumption, as this is closely related to their signal strength.[63][64] If the signal is too weak by the time it arrives at the receiver's antenna(s), the information it carries will be corrupted by noise, specifically static.
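The link between transmitted power and the signal that reaches the receiver can be made concrete with a simple free-space link budget: received power in dBm equals transmitted power plus antenna gains minus the Friis free-space path loss, 20 log10(4πdf/c), and the result is compared with the thermal noise floor, roughly -174 dBm/Hz at room temperature plus 10 log10(B) and the receiver's noise figure. This sketch assumes unobstructed line-of-sight propagation with no fading or cable losses; all names and parameter values in it are hypothetical.

```python
import math

SPEED_OF_LIGHT_M_S = 299_792_458.0
THERMAL_NOISE_DBM_PER_HZ = -174.0  # approximate kTB noise density at about 290 K


def free_space_path_loss_db(distance_m: float, freq_hz: float) -> float:
    """Friis free-space path loss between isotropic antennas, in dB."""
    return 20.0 * math.log10(4.0 * math.pi * distance_m * freq_hz / SPEED_OF_LIGHT_M_S)


def noise_floor_dbm(bandwidth_hz: float, noise_figure_db: float = 0.0) -> float:
    """Thermal noise power in the receiver bandwidth, plus the receiver noise figure."""
    return THERMAL_NOISE_DBM_PER_HZ + 10.0 * math.log10(bandwidth_hz) + noise_figure_db


def received_snr_db(tx_power_dbm, distance_m, freq_hz, bandwidth_hz,
                    tx_gain_dbi=0.0, rx_gain_dbi=0.0, noise_figure_db=0.0):
    """Signal-to-noise ratio at the receiver for an unobstructed free-space link."""
    rx_power_dbm = (tx_power_dbm + tx_gain_dbi + rx_gain_dbi
                    - free_space_path_loss_db(distance_m, freq_hz))
    return rx_power_dbm - noise_floor_dbm(bandwidth_hz, noise_figure_db)


# Hypothetical link: 1 W (30 dBm) at 100 MHz, 200 kHz bandwidth, 5 dB noise figure.
for km in (10, 20, 40):
    snr = received_snr_db(30.0, km * 1e3, 100e6, 200e3, noise_figure_db=5.0)
    print(f"{km:>2} km: SNR {snr:.1f} dB")
```

Each doubling of distance adds about 6 dB of path loss, so in free space the transmitter must supply four times the power to keep the same signal-to-noise ratio.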

Control engineering


Control engineering focuses on the modeling of a diverse range of dynamic systems and the design of controllers that will cause these systems to behave in the desired manner.[65] To implement such controllers, electronics control engineers may use electronic circuits, digital signal processors, microcontrollers, and programmable logic controllers (PLCs). Control engineering has a wide range of applications from the flight and propulsion systems of commercial airliners to the cruise control present in many modern automobiles.[66] It also plays an important role in industrial automation.

Control engineers often use feedback when designing control systems. For example, in an automobile with cruise control the vehicle's speed is continuously monitored and fed back to the system which adjusts the motor's power output accordingly.[67] Where there is regular feedback, control theory can be used to determine how the system responds to such feedback.
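The cruise-control feedback loop described above can be simulated in a few lines. The sketch below assumes a hypothetical first-order vehicle model, m·dv/dt = F - b·v (linear drag on a flat road), and a proportional-integral (PI) controller, which is one common choice rather than the method any particular vehicle uses; every numerical value and name is illustrative.

```python
def simulate_cruise_control(setpoint_mps=25.0, initial_speed_mps=20.0,
                            mass_kg=1000.0, drag_n_per_mps=50.0,
                            kp=800.0, ki=40.0, max_force_n=3000.0,
                            dt_s=0.1, duration_s=300.0):
    """Simulate a PI cruise controller on a hypothetical first-order vehicle.

    Plant model (an assumption): mass * dv/dt = F - drag * v on a flat road.
    Each step the speed is measured and fed back, the error to the setpoint
    is computed, and the engine force F is set by a proportional-integral law.
    Returns the speed at the end of every time step.
    """
    speed = initial_speed_mps
    integral = 0.0
    speeds = []
    for _ in range(round(duration_s / dt_s)):
        error = setpoint_mps - speed               # feedback: target minus measurement
        force = kp * error + ki * integral
        if 0.0 <= force <= max_force_n:
            integral += error * dt_s               # integrate only when unsaturated (anti-windup)
        force = min(max(force, 0.0), max_force_n)  # engine force is limited
        speed += (force - drag_n_per_mps * speed) / mass_kg * dt_s  # forward-Euler step
        speeds.append(speed)
    return speeds


pi_speeds = simulate_cruise_control()
p_only_speeds = simulate_cruise_control(ki=0.0)
print(f"PI: {pi_speeds[-1]:.2f} m/s, P only: {p_only_speeds[-1]:.2f} m/s")
```

The integral term is what lets the speed settle at the setpoint despite drag. With `ki=0.0` (proportional control only) the same sketch settles below the target, at about 23.5 m/s with these parameters, because a nonzero error is needed to produce the force that balances drag.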

Control engineers also work in robotics to design autonomous systems using control algorithms which interpret sensory feedback to control actuators that move robots such as autonomous vehicles, autonomous drones and others used in a variety of industries.[68]

Electronics


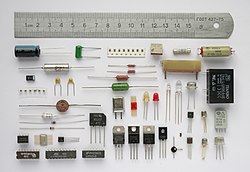

Electronic engineering involves the design and testing of electronic circuits that use the properties of components such as resistors, capacitors, inductors, diodes, and transistors to achieve a particular functionality.[60] The tuned circuit, which allows the user of a radio to filter out all but a single station, is just one example of such a circuit. Another example is a pneumatic signal conditioner.
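A tuned circuit of the kind mentioned above is commonly built from an inductor L and a capacitor C, which resonate at f0 = 1/(2π√(LC)); adjusting C moves the resonance onto the wanted station. The sketch below computes this for an ideal, lossless LC circuit; the 250 µH coil, the three station frequencies, and the function names are hypothetical.

```python
import math


def resonant_frequency_hz(inductance_h: float, capacitance_f: float) -> float:
    """Resonant frequency of an ideal LC circuit: f0 = 1 / (2*pi*sqrt(L*C))."""
    return 1.0 / (2.0 * math.pi * math.sqrt(inductance_h * capacitance_f))


def tuning_capacitance_f(inductance_h: float, freq_hz: float) -> float:
    """Capacitance that makes an ideal LC circuit resonate at freq_hz."""
    return 1.0 / ((2.0 * math.pi * freq_hz) ** 2 * inductance_h)


# Hypothetical receiver front end: a 250 uH coil tuned to three stations.
COIL_H = 250e-6
for station_hz in (540e3, 1000e3, 1600e3):
    c_pf = tuning_capacitance_f(COIL_H, station_hz) * 1e12
    print(f"{station_hz / 1e3:>6.0f} kHz needs {c_pf:6.1f} pF")
```

Because f0 scales with 1/√C, covering a frequency range of about 3:1 requires a capacitance range of about 9:1.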

Prior to the Second World War, the subject was commonly known as radio engineering and basically was restricted to aspects of communications and radar, commercial radio, and early television.[60] Later, in post-war years, as consumer devices began to be developed, the field grew to include modern television, audio systems, computers, and microprocessors. In the mid-to-late 1950s, the term radio engineering gradually gave way to the name electronic engineering.

Before the invention of the integrated circuit in 1959,[69] electronic circuits were constructed from discrete components that could be manipulated by humans. These discrete circuits consumed much space and power and were limited in speed, although they are still common in some applications. By contrast, integrated circuits packed a large number—often millions—of tiny electrical components, mainly transistors,[70] into a small chip around the size of a coin. This allowed for the powerful computers and other electronic devices we see today.

Microelectronics and nanoelectronics


Microelectronics engineering deals with the design and microfabrication of very small electronic circuit components for use in an integrated circuit or sometimes for use on their own as a general electronic component.[71] The most common microelectronic components are semiconductor transistors, although all main electronic components (resistors, capacitors etc.) can be created at a microscopic level.

Nanoelectronics is the further scaling of devices down to nanometer levels. Modern devices are already in the nanometer regime, with sub-100 nm processes having been standard since around 2002.[72]

Microelectronic components are created by chemically fabricating wafers of semiconductors such as silicon (at higher frequencies, compound semiconductors like gallium arsenide and indium phosphide) to obtain the desired transport of electronic charge and control of current. The field of microelectronics involves a significant amount of chemistry and material science and requires the electronic engineer working in the field to have a very good working knowledge of the effects of quantum mechanics.[73]

Signal processing


Signal processing deals with the analysis and manipulation of signals.[74] Signals can be either analog, in which case the signal varies continuously according to the information, or digital, in which case the signal varies according to a series of discrete values representing the information. For analog signals, signal processing may involve the amplification and filtering of audio signals for audio equipment or the modulation and demodulation of signals for telecommunications. For digital signals, signal processing may involve the compression, error detection and error correction of digitally sampled signals.[75]
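Error correction works by adding redundant bits that let the receiver locate and undo errors. As one classic illustration (not a scheme the text singles out), the Hamming(7,4) code below protects 4 data bits with 3 parity bits and can correct any single flipped bit: the three parity checks recomputed at the receiver form a syndrome whose binary value is the position of the error. The function names are hypothetical.

```python
def hamming74_encode(data):
    """Encode 4 data bits as a 7-bit Hamming(7,4) codeword.

    Positions 1..7 follow the classic layout p1 p2 d1 p4 d2 d3 d4, and each
    parity bit makes the XOR over the positions it covers equal to zero.
    """
    d1, d2, d3, d4 = data
    p1 = d1 ^ d2 ^ d4  # covers positions 1, 3, 5, 7
    p2 = d1 ^ d3 ^ d4  # covers positions 2, 3, 6, 7
    p4 = d2 ^ d3 ^ d4  # covers positions 4, 5, 6, 7
    return [p1, p2, d1, p4, d2, d3, d4]


def hamming74_decode(code):
    """Correct up to one flipped bit; return (data_bits, error_position).

    error_position is 0 when no error is detected, otherwise the 1-based
    position of the bit that was corrected.
    """
    c = list(code)
    s1 = c[0] ^ c[2] ^ c[4] ^ c[6]
    s2 = c[1] ^ c[2] ^ c[5] ^ c[6]
    s4 = c[3] ^ c[4] ^ c[5] ^ c[6]
    error_position = s1 + 2 * s2 + 4 * s4  # the syndrome spells out the bad position
    if error_position:
        c[error_position - 1] ^= 1
    return [c[2], c[4], c[5], c[6]], error_position


word = hamming74_encode([1, 0, 1, 1])
corrupted = word.copy()
corrupted[4] ^= 1  # simulate noise flipping the bit at position 5
print(hamming74_decode(corrupted))  # ([1, 0, 1, 1], 5)
```

The flipped bit is located and the original data recovered. Two simultaneous errors would be miscorrected, which is why practical systems often use stronger codes.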

Signal processing is a mathematically intensive area and forms the core of digital signal processing, which is expanding rapidly with new applications in every field of electrical engineering, such as communications, control, radar, audio engineering, broadcast engineering, power electronics, and biomedical engineering, as many existing analog systems are replaced with their digital counterparts. Analog signal processing is still important in the design of many control systems.

Digital signal processor (DSP) ICs are found in many types of modern electronic devices, such as digital television sets,[76] radios, hi-fi audio equipment, mobile phones, multimedia players, camcorders and digital cameras, automobile control systems, noise-cancelling headphones, digital spectrum analyzers, missile guidance systems, radar systems, and telematics systems. In such products, DSP may be responsible for noise reduction, speech recognition or synthesis, encoding or decoding digital media, wirelessly transmitting or receiving data, determining positions using GPS, and other kinds of image processing, video processing, audio processing, and speech processing.[77]
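Noise reduction, one of the DSP tasks listed above, can be as simple as a finite impulse response (FIR) filter. The sketch below implements a moving-average filter, which reduces random noise at the cost of also smoothing away some genuine signal detail; the window length, the synthetic noisy input, and the function name are illustrative assumptions, and production DSP code would typically use a purpose-designed filter.

```python
import random
from statistics import pvariance


def moving_average(samples, window):
    """Causal moving-average FIR filter: y[n] = mean of the last `window` inputs.

    A running sum keeps the cost constant per sample. Until `window` samples
    have arrived, the average is taken over the samples seen so far.
    """
    if window < 1:
        raise ValueError("window must be at least 1")
    out = []
    running_sum = 0.0
    for n, x in enumerate(samples):
        running_sum += x
        if n >= window:
            running_sum -= samples[n - window]  # drop the sample leaving the window
        out.append(running_sum / min(n + 1, window))
    return out


# Synthetic test input: a constant level of 1.0 plus uniform random noise.
random.seed(0)
noisy = [1.0 + random.uniform(-0.5, 0.5) for _ in range(1000)]
smoothed = moving_average(noisy, 16)
print(f"variance before: {pvariance(noisy):.4f}, after: {pvariance(smoothed[16:]):.4f}")
```

Averaging 16 independent noise samples divides the noise variance by roughly 16, while a slowly varying signal passes through largely unchanged.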

Instrumentation


Instrumentation engineering deals with the design of devices to measure physical quantities such as pressure, flow, and temperature.[78] The design of such instruments requires a good understanding of physics that often extends beyond electromagnetic theory. For example, flight instruments measure variables such as wind speed and altitude so that pilots can control the aircraft analytically. Similarly, thermocouples use the Peltier-Seebeck effect to measure the temperature difference between two points.[79]

Often instrumentation is not used by itself, but instead as the sensors of larger electrical systems. For example, a thermocouple might be used to help ensure a furnace's temperature remains constant.[80] For this reason, instrumentation engineering is often viewed as the counterpart of control.
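The thermocouple-and-furnace example can be sketched as a measurement step followed by a simple control decision. The code below assumes a linear thermocouple with a constant sensitivity of 41 µV/°C (approximately that of a type K thermocouple near room temperature; real conversions use standard reference tables) and a known reference-junction temperature. It then drives the heater with on/off control plus a hysteresis band so the heater does not switch rapidly around the setpoint. The names, setpoint, and readings are hypothetical.

```python
SEEBECK_UV_PER_C = 41.0  # assumed constant sensitivity, roughly type K near room temperature


def thermocouple_temp_c(voltage_uv: float, reference_temp_c: float) -> float:
    """Estimate the hot-junction temperature from the thermocouple voltage.

    Linear approximation V = S * (T_hot - T_ref); real thermocouples are
    nonlinear and are normally converted with standard reference tables.
    """
    return reference_temp_c + voltage_uv / SEEBECK_UV_PER_C


def heater_command(temp_c: float, setpoint_c: float, heater_on: bool,
                   band_c: float = 5.0) -> bool:
    """On/off (bang-bang) control with hysteresis to avoid rapid switching."""
    if temp_c < setpoint_c - band_c:
        return True
    if temp_c > setpoint_c + band_c:
        return False
    return heater_on  # inside the dead band: keep the previous state


# Hypothetical furnace: 520 C setpoint, reference junction at 25 C.
heater = False
for reading_uv in (15_000, 20_200, 20_700, 21_000):
    temp = thermocouple_temp_c(reading_uv, reference_temp_c=25.0)
    heater = heater_command(temp, setpoint_c=520.0, heater_on=heater)
    print(f"{temp:6.1f} C -> heater {'on' if heater else 'off'}")
```

The dead band trades a small temperature ripple for far fewer switching cycles, which spares relays and heating elements.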

Computers


Computer engineering deals with the design of computers and computer systems. This may involve the design of new hardware. Computer engineers may also work on a system's software. However, the design of complex software systems is often the domain of software engineering, which is usually considered a separate discipline.[81] Desktop computers represent a tiny fraction of the devices a computer engineer might work on, as computer-like architectures are now found in a range of embedded devices including video game consoles and DVD players. Computer engineers are involved in many hardware and software aspects of computing.[82] Robots are one of the applications of computer engineering.

Photonics and optics


Photonics and optics deals with the generation, transmission, amplification, modulation, detection, and analysis of electromagnetic radiation. The application of optics deals with design of optical instruments such as lenses, microscopes, telescopes, and other equipment that uses the properties of electromagnetic radiation. Other prominent applications of optics include electro-optical sensors and measurement systems, lasers, fiber-optic communication systems, and optical disc systems (e.g. CD and DVD). Photonics builds heavily on optical technology, supplemented with modern developments such as optoelectronics (mostly involving semiconductors), laser systems, optical amplifiers and novel materials (e.g. metamaterials).

Related disciplines


Mechatronics is an engineering discipline that deals with the convergence of electrical and mechanical systems. Such combined systems are known as electromechanical systems and have widespread adoption. Examples include automated manufacturing systems,[83] heating, ventilation and air-conditioning systems,[84] and various subsystems of aircraft and automobiles.[85] Electronic systems design is the subject within electrical engineering that deals with the multi-disciplinary design issues of complex electrical and mechanical systems.[86]

The term mechatronics is typically used to refer to macroscopic systems but futurists have predicted the emergence of very small electromechanical devices. Already, such small devices, known as microelectromechanical systems (MEMS), are used in automobiles to tell airbags when to deploy,[87] in digital projectors to create sharper images, and in inkjet printers to create nozzles for high definition printing. In the future it is hoped the devices will help build tiny implantable medical devices and improve optical communication.[88]

In aerospace engineering and robotics, examples include recent developments in electric propulsion and ion propulsion.

Education


Electrical engineers typically possess an academic degree with a major in electrical engineering, electronics engineering, electronics and computer engineering, electrical engineering technology,[89] or electrical and electronic engineering.[90][91] The same fundamental principles are taught in all programs, though emphasis may vary according to title. The length of study for such a degree is usually four or five years and the completed degree may be designated as a Bachelor of Science in Electrical/Electronics Engineering Technology, Bachelor of Engineering, Bachelor of Science, Bachelor of Technology, or Bachelor of Applied Science, depending on the university. The bachelor's degree generally includes units covering physics, mathematics, computer science, project management, and a variety of topics in electrical engineering.[92] Initially such topics cover most, if not all, of the subdisciplines of electrical engineering.

At many schools, electronic engineering is included as part of an electrical award, sometimes explicitly, such as a Bachelor of Engineering (Electrical and Electronic), but in others, electrical and electronic engineering are both considered to be sufficiently broad and complex that separate degrees are offered.[93]

Some electrical engineers choose to study for a postgraduate degree such as a Master of Engineering/Master of Science (MEng/MSc), a Master of Engineering Management, a Doctor of Philosophy (PhD) in Engineering, an Engineering Doctorate (Eng.D.), or an Engineer's degree. The master's and engineer's degrees may consist of either research, coursework or a mixture of the two. The Doctor of Philosophy and Engineering Doctorate degrees consist of a significant research component and are often viewed as the entry point to academia. In the United Kingdom and some other European countries, Master of Engineering is often considered to be an undergraduate degree of slightly longer duration than the Bachelor of Engineering rather than a standalone postgraduate degree.[94]

Professional practice


In most countries, a bachelor's degree in engineering represents the first step towards professional certification, and the degree program itself is certified by a professional body.[95] After completing a certified degree program, the engineer must satisfy a range of requirements (including work experience requirements) before being certified. Once certified, the engineer is given the title of Professional Engineer (in the United States, Canada and South Africa), Chartered Engineer or Incorporated Engineer (in India, Pakistan, the United Kingdom, Ireland and Zimbabwe), Chartered Professional Engineer (in Australia and New Zealand) or European Engineer (in much of the European Union).

The advantages of licensure vary depending upon location. For example, in the United States and Canada "only a licensed engineer may seal engineering work for public and private clients".[96] This requirement is enforced by state and provincial legislation such as Quebec's Engineers Act.[97] In other countries, no such legislation exists. Practically all certifying bodies maintain a code of ethics that they expect all members to abide by or risk expulsion.[98] In this way these organizations play an important role in maintaining ethical standards for the profession. Even in jurisdictions where certification has little or no legal bearing on work, engineers are subject to contract law. In cases where an engineer's work fails, the engineer may be liable under the tort of negligence and, in extreme cases, face a charge of criminal negligence. An engineer's work must also comply with numerous other rules and regulations, such as building codes and legislation pertaining to environmental law.

Professional bodies of note for electrical engineers include the Institute of Electrical and Electronics Engineers (IEEE) and the Institution of Engineering and Technology (IET). The IEEE claims to produce 30% of the world's literature in electrical engineering, has over 360,000 members worldwide and holds over 3,000 conferences annually.[99] The IET publishes 21 journals, has a worldwide membership of over 150,000, and claims to be the largest professional engineering society in Europe.[100][101] Obsolescence of technical skills is a serious concern for electrical engineers. Membership and participation in technical societies, regular reviews of periodicals in the field and a habit of continued learning are therefore essential to maintaining proficiency. An MIET (Member of the Institution of Engineering and Technology) is recognised in Europe as an electrical and computer (technology) engineer.[102]

In Australia, Canada, and the United States, electrical engineers make up around 0.25% of the labor force.[b]

Tools and work

From the Global Positioning System to electric power generation, electrical engineers have contributed to the development of a wide range of technologies. They design, develop, test, and supervise the deployment of electrical systems and electronic devices. For example, they may work on the design of telecommunications systems, the operation of electric power stations, the lighting and wiring of buildings, the design of household appliances, or the electrical control of industrial machinery.[106]

Fundamental to the discipline are the sciences of physics and mathematics as these help to obtain both a qualitative and quantitative description of how such systems will work. Today most engineering work involves the use of computers and it is commonplace to use computer-aided design programs when designing electrical systems. Nevertheless, the ability to sketch ideas is still invaluable for quickly communicating with others.

Although most electrical engineers will understand basic circuit theory (that is, the interactions of elements such as resistors, capacitors, diodes, transistors, and inductors in a circuit), the theories employed by engineers generally depend upon the work they do. For example, quantum mechanics and solid state physics might be relevant to an engineer working on VLSI (the design of integrated circuits), but are largely irrelevant to engineers working with macroscopic electrical systems. Even circuit theory may not be relevant to a person designing telecommunications systems that use off-the-shelf components. Perhaps the most important technical skills for electrical engineers are reflected in university programs, which emphasize strong numerical skills, computer literacy, and the ability to understand the technical language and concepts that relate to electrical engineering.[107]

A wide range of instrumentation is used by electrical engineers. For simple control circuits and alarms, a basic multimeter measuring voltage, current, and resistance may suffice. Where time-varying signals need to be studied, the oscilloscope is also a ubiquitous instrument. In RF engineering and high-frequency telecommunications, spectrum analyzers and network analyzers are used. In some disciplines, safety can be a particular concern with instrumentation. For instance, medical electronics designers must take into account that much lower voltages than normal can be dangerous when electrodes are directly in contact with internal body fluids.[108] Power transmission engineering also has great safety concerns due to the high voltages used; although voltmeters may in principle be similar to their low-voltage equivalents, safety and calibration issues make them very different.[109] Many disciplines of electrical engineering use test equipment specific to their field. Audio electronics engineers use audio test sets consisting of a signal generator and a meter, principally to measure level but also other parameters such as harmonic distortion and noise. Likewise, information technology engineers have their own test sets, often specific to a particular data format, and the same is true of television broadcasting.
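The point about medical electronics follows from Ohm's law, I = V/R: when an electrode bypasses the skin, the resistance of the current path drops sharply, so the same voltage drives a much larger current. The minimal sketch below illustrates only that proportionality. The two resistance values and the 10 V source are hypothetical round numbers chosen for the example; they are not measured body resistances, safety limits, or figures taken from the cited sources.

```python
def current_amps(voltage_volts: float, resistance_ohms: float) -> float:
    """Ohm's law for a purely resistive path: I = V / R."""
    if resistance_ohms <= 0:
        raise ValueError("resistance must be positive")
    return voltage_volts / resistance_ohms


# Hypothetical path resistances, for illustration only (not safety data).
SKIN_CONTACT_OHMS = 100_000.0  # assumed: current must pass through dry skin
INTERNAL_CONTACT_OHMS = 500.0  # assumed: electrode touching internal body fluids

voltage = 10.0  # same applied voltage in both cases (assumed value)
i_skin = current_amps(voltage, SKIN_CONTACT_OHMS)
i_internal = current_amps(voltage, INTERNAL_CONTACT_OHMS)

# Report both currents in milliamperes and how many times larger the internal one is.
print(f"via skin:      {i_skin * 1000:.3f} mA")
print(f"via electrode: {i_internal * 1000:.3f} mA")
print(f"ratio:         {i_internal / i_skin:.0f}x")
```

With these assumed values the script prints 0.100 mA and 20.000 mA, a 200-fold increase for the same voltage. Real body impedance is frequency-dependent and far from a single resistor, so this only conveys why a voltage that is harmless through the skin can be hazardous at an internal electrode.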

For many engineers, technical work accounts for only a fraction of the work they do. A lot of time may also be spent on tasks such as discussing proposals with clients, preparing budgets and determining project schedules.[110] Many senior engineers manage a team of technicians or other engineers and for this reason project management skills are important. Most engineering projects involve some form of documentation and strong written communication skills are therefore very important.

The workplaces of engineers are as varied as the types of work they do. Electrical engineers may be found in the pristine lab environment of a fabrication plant, on board a naval ship, in the offices of a consulting firm, or on site at a mine. During their working lives, electrical engineers may supervise a wide range of individuals including scientists, electricians, computer programmers, and other engineers.[111]

Electrical engineering has an intimate relationship with the physical sciences. For instance, the physicist Lord Kelvin played a major role in the engineering of the first transatlantic telegraph cable.[112] Conversely, the engineer Oliver Heaviside produced major work on the mathematics of transmission on telegraph cables.[113] Electrical engineers are often required on major science projects. For instance, large particle accelerators such as those at CERN need electrical engineers to deal with many aspects of the project, including the power distribution, the instrumentation, and the manufacture and installation of the superconducting electromagnets.[114][115]

See also

- Barnacle (slang)

- Comparison of EDA software

- Electrical Technologist

- Electronic design automation

- Glossary of electrical and electronics engineering

- Index of electrical engineering articles

- Information engineering

- International Electrotechnical Commission (IEC)

- List of electrical engineers

- List of electrical engineering journals

- List of engineering branches

- List of mechanical, electrical and electronic equipment manufacturing companies by revenue

- List of Russian electrical engineers

- Occupations in electrical/electronics engineering

- Outline of electrical engineering

- Timeline of electrical and electronic engineering

Notes

- ^ For more see glossary of electrical and electronics engineering.

- ^ In May 2014 there were around 175,000 people working as electrical engineers in the US.[103] In 2012, Australia had around 19,000[104] while in Canada, there were around 37,000 (as of 2007), constituting about 0.2% of the labour force in each of the three countries. Australia and Canada reported that 96% and 88% of their electrical engineers respectively are male.[105]

References

- ^ Martinsen & Grimnes 2011, p. 411.

- ^ "The Voltaic Pile | Distinctive Collections Spotlights". libraries.mit.edu. Retrieved 16 December 2022.

- ^ Lambourne 2010, p. 11.

- ^ "Francesc Salvà i Campillo : Biography". ethw.org. 25 January 2016. Retrieved 25 March 2019.

- ^ Roberts, Steven. "Distant Writing: A History of the Telegraph Companies in Britain between 1838 and 1868: 2. Introduction".

Using these discoveries a number of inventors or rather 'adapters' appeared, taking this new knowledge, transforming it into useful ideas with commercial utility; the first of these 'products' was the use of electricity to transmit information between distant points, the electric telegraph.

- ^ Ronalds, B.F. (2016). Sir Francis Ronalds: Father of the Electric Telegraph. London: Imperial College Press. ISBN 978-1-78326-917-4.

- ^ Ronalds, B.F. (2016). "Sir Francis Ronalds and the Electric Telegraph". International Journal for the History of Engineering & Technology. 86: 42–55. doi:10.1080/17581206.2015.1119481. S2CID 113256632.

- ^ Ronalds, B.F. (July 2016). "Francis Ronalds (1788–1873): The First Electrical Engineer?". Proceedings of the IEEE. 104 (7): 1489–1498. doi:10.1109/JPROC.2016.2571358. S2CID 20662894.

- ^ Rosenberg 2008, p. 9.

- ^ Tunbridge 1992.

- ^ Darmstadt, Technische Universität. "Historie". Technische Universität Darmstadt. Retrieved 12 October 2019.

- ^ Wildes & Lindgren 1985, p. 19.

- ^ "History". School of Electrical and Computer Engineering, Cornell. Spring 1994 [Later updated]. Archived from the original on 6 June 2013.

- ^ Roger Segelken, H. (2009). A tradition of leadership and innovation: a history of Cornell Engineering (PDF). Ithaca, NY. ISBN 978-0-918531-05-6. OCLC 455196772. Archived from the original (PDF) on 3 March 2016.

- ^ "Andrew Dickson White | Office of the President". president.cornell.edu.

- ^ The Electrical Engineer. 1911. p. 54.

- ^ "Department History – Electrical & Computer Engineering". Archived from the original on 17 November 2015. Retrieved 5 November 2015.

- ^ Heertje & Perlman 1990, p. 138.

- ^ Grattan-Guinness, I. (1 January 2003). Companion Encyclopedia of the History and Philosophy of the Mathematical Sciences. JHU Press. ISBN 9780801873973 – via Google Books.

- ^ Suzuki, Jeff (27 August 2009). Mathematics in Historical Context. MAA. ISBN 9780883855706 – via Google Books.

- ^ Severs & Leise 2011, p. 145.

- ^ Marconi's biography at Nobelprize.org retrieved 21 June 2008.

- ^ "Milestones: First Millimeter-wave Communication Experiments by J.C. Bose, 1894–96". List of IEEE milestones. Institute of Electrical and Electronics Engineers. Retrieved 1 October 2019.

- ^ Emerson, D. T. (1997). "The work of Jagadis Chandra Bose: 100 years of mm-wave research". 1997 IEEE MTT-S International Microwave Symposium Digest. Vol. 45. IEEE Transactions on Microwave Theory and Techniques. pp. 2267–2273. Bibcode:1997imsd.conf..553E. CiteSeerX 10.1.1.39.8748. doi:10.1109/MWSYM.1997.602853. ISBN 9780986488511. S2CID 9039614. reprinted in Igor Grigorov, Ed., Antentop, Vol. 2, No.3, pp. 87–96.

- ^ "Timeline". The Silicon Engine. Computer History Museum. Retrieved 22 August 2019.

- ^ "1901: Semiconductor Rectifiers Patented as "Cat's Whisker" Detectors". The Silicon Engine. Computer History Museum. Retrieved 23 August 2019.

- ^ Abramson 1955, p. 22.

- ^ Huurdeman 2003, p. 226.

- ^ "Albert W. Hull (1880–1966)". IEEE History Center. Archived from the original on 2 June 2002. Retrieved 22 January 2006.

- ^ "Who Invented Microwaves?". Archived from the original on 12 December 2017. Retrieved 22 January 2006.

- ^ "Early Radar History". Peneley Radar Archives. Retrieved 22 January 2006.

- ^ Rojas, Raúl (2002). "The history of Konrad Zuse's early computing machines". In Rojas, Raúl; Hashagen, Ulf (eds.). The First Computers—History and Architectures History of Computing. MIT Press. p. 237. ISBN 978-0-262-68137-7.

- ^ Sale, Anthony E. (2002). "The Colossus of Bletchley Park". In Rojas, Raúl; Hashagen, Ulf (eds.). The First Computers—History and Architectures History of Computing. MIT Press. pp. 354–355. ISBN 978-0-262-68137-7.

- ^ "The ENIAC Museum Online". Retrieved 18 January 2006.

- ^ "1947: Invention of the Point-Contact Transistor". Computer History Museum. Retrieved 10 August 2019.

- ^ "1948: Conception of the Junction Transistor". The Silicon Engine. Computer History Museum. Retrieved 8 October 2019.

- ^ a b Moskowitz, Sanford L. (2016). Advanced Materials Innovation: Managing Global Technology in the 21st century. John Wiley & Sons. p. 168. ISBN 9780470508923.

- ^ "Electronics Timeline". Greatest Engineering Achievements of the Twentieth Century. Retrieved 18 January 2006.

- ^ Saxena, Arjun N. (2009). Invention of Integrated Circuits: Untold Important Facts. World Scientific. p. 140. ISBN 9789812814456.

- ^ "1960 – Metal Oxide Semiconductor (MOS) Transistor Demonstrated". The Silicon Engine. Computer History Museum.

- ^ a b c "Who Invented the Transistor?". Computer History Museum. 4 December 2013. Retrieved 20 July 2019.

- ^ a b "Triumph of the MOS Transistor". YouTube. Computer History Museum. 6 August 2010. Archived from the original on 28 October 2021. Retrieved 21 July 2019.

- ^ Chan, Yi-Jen (1992). Studies of InAlAs/InGaAs and GaInP/GaAs heterostructure FET's for high speed applications. University of Michigan. p. 1.

The Si MOSFET has revolutionized the electronics industry and as a result impacts our daily lives in almost every conceivable way.

- ^ Grant, Duncan Andrew; Gowar, John (1989). Power MOSFETS: theory and applications. Wiley. p. 1. ISBN 9780471828679.

The metal–oxide–semiconductor field-effect transistor (MOSFET) is the most commonly used active device in the very large-scale integration of digital integrated circuits (VLSI). During the 1970s these components revolutionized electronic signal processing, control systems and computers.

- ^ Golio, Mike; Golio, Janet (2018). RF and Microwave Passive and Active Technologies. CRC Press. pp. 18–2. ISBN 9781420006728.

- ^ "13 Sextillion & Counting: The Long & Winding Road to the Most Frequently Manufactured Human Artifact in History". Computer History Museum. 2 April 2018. Retrieved 28 July 2019.

- ^ "Tortoise of Transistors Wins the Race – CHM Revolution". Computer History Museum. Retrieved 22 July 2019.

- ^ Franco, Jacopo; Kaczer, Ben; Groeseneken, Guido (2013). Reliability of High Mobility SiGe Channel MOSFETs for Future CMOS Applications. Springer Science & Business Media. pp. 1–2. ISBN 9789400776630.

- ^ "1968: Silicon Gate Technology Developed for ICs". Computer History Museum. Retrieved 22 July 2019.

- ^ McCluskey, Matthew D.; Haller, Eugene E. (2012). Dopants and Defects in Semiconductors. CRC Press. p. 3. ISBN 9781439831533.

- ^ Daniels, Lee A. (28 May 1992). "Dr. Dawon Kahng, 61, Inventor in Field of Solid-State Electronics". The New York Times. Retrieved 1 April 2017.

- ^ Feldman, Leonard C. (2001). "Introduction". Fundamental Aspects of Silicon Oxidation. Springer Science & Business Media. pp. 1–11. ISBN 9783540416821.

- ^ Butler, P. M. (29 August 1989). Interplanetary Monitoring Platform (PDF). NASA. pp. 1, 11, 134. Retrieved 12 August 2019.

- ^ White, H. D.; Lokerson, D. C. (1971). "The Evolution of IMP Spacecraft Mosfet Data Systems". IEEE Transactions on Nuclear Science. 18 (1): 233–236. Bibcode:1971ITNS...18..233W. doi:10.1109/TNS.1971.4325871. ISSN 0018-9499.

- ^ "Apollo Guidance Computer and the First Silicon Chips". National Air and Space Museum. Smithsonian Institution. 14 October 2015. Retrieved 1 September 2019.

- ^ a b c "1971: Microprocessor Integrates CPU Function onto a Single Chip". Computer History Museum. Retrieved 22 July 2019.

- ^ Colinge, Jean-Pierre; Greer, James C. (2016). Nanowire Transistors: Physics of Devices and Materials in One Dimension. Cambridge University Press. p. 2. ISBN 9781107052406.

- ^ Faggin, Federico (2009). "The Making of the First Microprocessor". IEEE Solid-State Circuits Magazine. 1: 8–21. doi:10.1109/MSSC.2008.930938. S2CID 46218043.

- ^ Grigsby 2012.

- ^ a b c Engineering: Issues, Challenges and Opportunities for Development. UNESCO. 2010. pp. 127–8. ISBN 978-92-3-104156-3.

- ^ Tobin 2007, p. 15.

- ^ Chandrasekhar 2006, p. 21.

- ^ Smith 2007, p. 19.

- ^ Zhang, Hu & Luo 2007, p. 448.

- ^ Bissell 1996, p. 17.

- ^ McDavid & Echaore-McDavid 2009, p. 95.

- ^ Åström & Murray 2021, p. 108.

- ^ Fairman 1998, p. 119.

- ^ Thompson 2006, p. 4.

- ^ Merhari 2009, p. 233.

- ^ Bhushan 1997, p. 581.

- ^ Mook 2008, p. 149.

- ^ Sullivan 2012.

- ^ Tuzlukov 2010, p. 20.

- ^ Manolakis & Ingle 2011, p. 17.

- ^ Bayoumi & Swartzlander 1994, p. 25.

- ^ Khanna 2009, p. 297.

- ^ Grant & Bixley 2011, p. 159.

- ^ Fredlund, Rahardjo & Fredlund 2012, p. 346.

- ^ Manual on the Use of Thermocouples in Temperature Measurement. ASTM International. 1 January 1993. p. 154. ISBN 978-0-8031-1466-1.

- ^ Jalote 2006, p. 22.

- ^ Lam, Herman; O'Malley, John R. (26 April 1988). Fundamentals of Computer Engineering: Logic Design and Microprocessors. Wiley. ISBN 0471605018.

- ^ Mahalik 2003, p. 569.

- ^ Leondes 2000, p. 199.

- ^ Shetty & Kolk 2010, p. 36.

- ^ J. Lienig; H. Bruemmer (2017). Fundamentals of Electronic Systems Design. Springer International Publishing. p. 1. doi:10.1007/978-3-319-55840-0. ISBN 978-3-319-55839-4.

- ^ Maluf & Williams 2004, p. 3.

- ^ Iga & Kokubun 2010, p. 137.

- ^ "Electrical and Electronic Engineer". Occupational Outlook Handbook, 2012–13 Edition. Bureau of Labor Statistics, U.S. Department of Labor. Retrieved 15 November 2014.

- ^ Chaturvedi 1997, p. 253.

- ^ "What is the difference between electrical and electronic engineering?". FAQs – Studying Electrical Engineering. Archived from the original on 10 November 2005. Retrieved 20 March 2012.

- ^ Computerworld. IDG Enterprise. 25 August 1986. p. 97.

- ^ "Electrical and Electronic Engineering". Archived from the original on 28 November 2011. Retrieved 8 December 2011.

- ^ Various including graduate degree requirements at MIT Archived 16 January 2006 at the Wayback Machine, study guide at UWA, the curriculum at Queen's Archived 4 August 2012 at the Wayback Machine and unit tables at Aberdeen Archived 22 August 2006 at the Wayback Machine

- ^ Occupational Outlook Handbook, 2008–2009. U S Department of Labor, Jist Works. 1 March 2008. p. 148. ISBN 978-1-59357-513-7.

- ^ "Why Should You Get Licensed?". National Society of Professional Engineers. Archived from the original on 4 June 2005. Retrieved 11 July 2005.

- ^ "Engineers Act". Quebec Statutes and Regulations (CanLII). Retrieved 24 July 2005.

- ^ "Codes of Ethics and Conduct". Online Ethics Center. Archived from the original on 2 February 2016. Retrieved 24 July 2005.

- ^ "About the IEEE". IEEE. Retrieved 11 July 2005.

- ^ "About the IET". The IET. Retrieved 11 July 2005.

- ^ "Journal and Magazines". The IET. Archived from the original on 24 August 2007. Retrieved 11 July 2005.

- ^ "Electrical and Electronics Engineers, except Computer". Occupational Outlook Handbook. Archived from the original on 13 July 2005. Retrieved 16 July 2005.

- ^ "Electrical Engineers". www.bls.gov. Retrieved 30 November 2015.

- ^ "Electrical Engineer Career Information for Migrants | Victoria, Australia". www.liveinvictoria.vic.gov.au. Archived from the original on 8 December 2015. Retrieved 30 November 2015.

- ^ "Electrical Engineers". Bureau of Labor Statistics. Archived from the original on 19 February 2006. Retrieved 13 March 2009. See also: "Work Experience of the Population in 2006". Bureau of Labor Statistics. Retrieved 20 June 2008. and "Electrical and Electronics Engineers". Australian Careers. Archived from the original on 23 October 2009. Retrieved 13 March 2009. and "Electrical and Electronics Engineers". Canadian jobs service. Archived from the original on 6 March 2009. Retrieved 13 March 2009.

- ^ "Electrical and Electronics Engineers, except Computer". Occupational Outlook Handbook. Archived from the original on 13 July 2005. Retrieved 16 July 2005.

- ^ Taylor 2008, p. 241.

- ^ Leitgeb 2010, p. 122.

- ^ Naidu & Kamaraju 2009, p. 210.

- ^ Trevelyan, James (2005). "What Do Engineers Really Do?" (PDF). University of Western Australia.

- ^ McDavid & Echaore-McDavid 2009, p. 87.

- ^ Huurdeman 2003, pp. 95–96.

- ^ Huurdeman 2003, p. 90.

- ^ Schmidt 2004, p. 218.

- ^ Martini 2001, p. 179.

Bibliography

- Abramson, Albert (1955). Electronic Motion Pictures: A History of the Television Camera. University of California Press.

- Åström, K.J.; Murray, R.M. (2021). Feedback Systems: An Introduction for Scientists and Engineers, Second Edition. Princeton University Press. p. 108. ISBN 978-0-691-21347-7.

- Bayoumi, Magdy A.; Swartzlander, Earl E. Jr. (31 October 1994). VLSI Signal Processing Technology. Springer. ISBN 978-0-7923-9490-7.

- Bhushan, Bharat (1997). Micro/Nanotribology and Its Applications. Springer. ISBN 978-0-7923-4386-8.

- Bissell, Chris (25 July 1996). Control Engineering, 2nd Edition. CRC Press. ISBN 978-0-412-57710-9.

- Chandrasekhar, Thomas (1 December 2006). Analog Communication (Jntu). Tata McGraw-Hill Education. ISBN 978-0-07-064770-1.

- Chaturvedi, Pradeep (1997). Sustainable Energy Supply in Asia: Proceedings of the International Conference, Asia Energy Vision 2020, Organised by the Indian Member Committee, World Energy Council Under the Institution of Engineers (India), During November 15–17, 1996 at New Delhi. Concept Publishing Company. ISBN 978-81-7022-631-4.

- Dodds, Christopher; Kumar, Chandra; Veering, Bernadette (March 2014). Oxford Textbook of Anaesthesia for the Elderly Patient. Oxford University Press. ISBN 978-0-19-960499-9.

- Fairman, Frederick Walker (11 June 1998). Linear Control Theory: The State Space Approach. John Wiley & Sons. ISBN 978-0-471-97489-5.

- Fredlund, D. G.; Rahardjo, H.; Fredlund, M. D. (30 July 2012). Unsaturated Soil Mechanics in Engineering Practice. Wiley. ISBN 978-1-118-28050-8.

- Grant, Malcolm Alister; Bixley, Paul F (1 April 2011). Geothermal Reservoir Engineering. Academic Press. ISBN 978-0-12-383881-0.

- Grigsby, Leonard L. (16 May 2012). Electric Power Generation, Transmission, and Distribution, Third Edition. CRC Press. ISBN 978-1-4398-5628-4.

- Heertje, Arnold; Perlman, Mark (1990). Evolving technology and market structure: studies in Schumpeterian economics. University of Michigan Press. ISBN 978-0-472-10192-4.

- Huurdeman, Anton A. (31 July 2003). The Worldwide History of Telecommunications. John Wiley & Sons. ISBN 978-0-471-20505-0.

- Iga, Kenichi; Kokubun, Yasuo (12 December 2010). Encyclopedic Handbook of Integrated Optics. CRC Press. ISBN 978-1-4200-2781-5.

- Jalote, Pankaj (31 January 2006). An Integrated Approach to Software Engineering. Springer. ISBN 978-0-387-28132-2.

- Khanna, Vinod Kumar (1 January 2009). Digital Signal Processing. S. Chand. ISBN 978-81-219-3095-6.

- Lambourne, Robert J. A. (1 June 2010). Relativity, Gravitation and Cosmology. Cambridge University Press. ISBN 978-0-521-13138-4.

- Leitgeb, Norbert (6 May 2010). Safety of Electromedical Devices: Law – Risks – Opportunities. Springer. ISBN 978-3-211-99683-6.

- Leondes, Cornelius T. (8 August 2000). Energy and Power Systems. CRC Press. ISBN 978-90-5699-677-2.

- Mahalik, Nitaigour Premchand (2003). Mechatronics: Principles, Concepts and Applications. Tata McGraw-Hill Education. ISBN 978-0-07-048374-3.

- Maluf, Nadim; Williams, Kirt (1 January 2004). Introduction to Microelectromechanical Systems Engineering. Artech House. ISBN 978-1-58053-591-5.

- Manolakis, Dimitris G.; Ingle, Vinay K. (21 November 2011). Applied Digital Signal Processing: Theory and Practice. Cambridge University Press. ISBN 978-1-139-49573-8.

- Martini, L. (2001). "BSCCO-2233 multilayered conductors". In Superconducting Materials for High Energy Colliders. World Scientific. pp. 173–181. ISBN 981-02-4319-7.

- Martinsen, Orjan G.; Grimnes, Sverre (29 August 2011). Bioimpedance and Bioelectricity Basics. Academic Press. ISBN 978-0-08-056880-5.

- McDavid, Richard A.; Echaore-McDavid, Susan (1 January 2009). Career Opportunities in Engineering. Infobase Publishing. ISBN 978-1-4381-1070-7.

- Merhari, Lhadi (3 March 2009). Hybrid Nanocomposites for Nanotechnology: Electronic, Optical, Magnetic and Biomedical Applications. Springer. ISBN 978-0-387-30428-1.

- Mook, William Moyer (2008). The Mechanical Response of Common Nanoscale Contact Geometries. ISBN 978-0-549-46812-7.

- Naidu, S. M.; Kamaraju, V. (2009). High Voltage Engineering. Tata McGraw-Hill Education. ISBN 978-0-07-066928-4.

- Obaidat, Mohammad S.; Denko, Mieso; Woungang, Isaac (9 June 2011). Pervasive Computing and Networking. John Wiley & Sons. ISBN 978-1-119-97043-9.

- Rosenberg, Chaim M. (2008). America at the Fair: Chicago's 1893 World's Columbian Exposition. Arcadia Publishing. ISBN 978-0-7385-2521-1.

- Schmidt, Rüdiger (2004). "The LHC accelerator and its challenges". In Kramer, M.; Soler, F. J. P. (eds.). Large Hadron Collider Phenomenology. CRC Press. pp. 217–250. ISBN 0-7503-0986-5.

- Severs, Jeffrey; Leise, Christopher (24 February 2011). Pynchon's Against the Day: A Corrupted Pilgrim's Guide. Lexington Books. ISBN 978-1-61149-065-7.

- Shetty, Devdas; Kolk, Richard (14 September 2010). Mechatronics System Design, SI Version. Cengage Learning. ISBN 978-1-133-16949-9.

- Smith, Brian W. (January 2007). Communication Structures. Thomas Telford. ISBN 978-0-7277-3400-6.

- Sullivan, Dennis M. (24 January 2012). Quantum Mechanics for Electrical Engineers. John Wiley & Sons. ISBN 978-0-470-87409-7.

- Taylor, Allan (2008). Energy Industry. Infobase Publishing. ISBN 978-1-4381-1069-1.

- Thompson, Marc (12 June 2006). Intuitive Analog Circuit Design. Newnes. ISBN 978-0-08-047875-3.

- Tobin, Paul (1 January 2007). PSpice for Digital Communications Engineering. Morgan & Claypool Publishers. ISBN 978-1-59829-162-9.

- Tunbridge, Paul (1992). Lord Kelvin, His Influence on Electrical Measurements and Units. IET. ISBN 978-0-86341-237-0.

- Tuzlukov, Vyacheslav (12 December 2010). Signal Processing Noise. CRC Press. ISBN 978-1-4200-4111-8.

- Walker, Denise (2007). Metals and Non-metals. Evans Brothers. ISBN 978-0-237-53003-7.

- Wildes, Karl L.; Lindgren, Nilo A. (1 January 1985). A Century of Electrical Engineering and Computer Science at MIT, 1882–1982. MIT Press. p. 19. ISBN 978-0-262-23119-0.

- Zhang, Yan; Hu, Honglin; Luo, Jijun (27 June 2007). Distributed Antenna Systems: Open Architecture for Future Wireless Communications. CRC Press. ISBN 978-1-4200-4289-4.

Further reading

- Adhami, Reza; Meenen, Peter M.; Hite, Denis (2007). Fundamental Concepts in Electrical and Computer Engineering with Practical Design Problems. Universal-Publishers. ISBN 978-1-58112-971-7.

- Bober, William; Stevens, Andrew (27 August 2012). Numerical and Analytical Methods with MATLAB for Electrical Engineers. CRC Press. ISBN 978-1-4398-5429-7.

- Bobrow, Leonard S. (1996). Fundamentals of Electrical Engineering. Oxford University Press. ISBN 978-0-19-510509-4.

- Chen, Wai Kai (16 November 2004). The Electrical Engineering Handbook. Academic Press. ISBN 978-0-08-047748-0.

- Ciuprina, G.; Ioan, D. (30 May 2007). Scientific Computing in Electrical Engineering. Springer. ISBN 978-3-540-71980-9.

- Faria, J. A. Brandao (15 September 2008). Electromagnetic Foundations of Electrical Engineering. John Wiley & Sons. ISBN 978-0-470-69748-1.

- Jones, Lincoln D. (July 2004). Electrical Engineering: Problems and Solutions. Dearborn Trade Publishing. ISBN 978-1-4195-2131-7.

- Karalis, Edward (18 September 2003). 350 Solved Electrical Engineering Problems. Dearborn Trade Publishing. ISBN 978-0-7931-8511-5.

- Krawczyk, Andrzej; Wiak, S. (1 January 2002). Electromagnetic Fields in Electrical Engineering. IOS Press. ISBN 978-1-58603-232-6.

- Laplante, Phillip A. (31 December 1999). Comprehensive Dictionary of Electrical Engineering. Springer. ISBN 978-3-540-64835-2.

- Leon-Garcia, Alberto (2008). Probability, Statistics, and Random Processes for Electrical Engineering. Prentice Hall. ISBN 978-0-13-147122-1.

- Malaric, Roman (2011). Instrumentation and Measurement in Electrical Engineering. Universal-Publishers. ISBN 978-1-61233-500-1.

- Sahay, Kuldeep; Pathak, Shivendra (1 January 2006). Basic Concepts of Electrical Engineering. New Age International. ISBN 978-81-224-1836-1.

- Srinivas, Kn (1 January 2007). Basic Electrical Engineering. I. K. International Pvt Ltd. ISBN 978-81-89866-34-1.

External links

- International Electrotechnical Commission (IEC)

- MIT OpenCourseWare (archived 26 January 2008 at the Wayback Machine): an in-depth look at electrical engineering, with online courses and video lectures.

- IEEE Global History Network A wiki-based site with many resources about the history of IEEE, its members, their professions and electrical and informational technologies and sciences.

Electrical engineering

Overview

Definition and scope

Electrical engineering is a technical discipline concerned with the study, design, and application of equipment, devices, and systems that use electricity, electronics, and electromagnetism.[9] This field applies principles from physics, mathematics, and materials science to harness electrical energy for practical purposes, focusing on phenomena such as electric current, voltage, resistance, and electromagnetic fields.[10]

The scope of electrical engineering is broad, encompassing the generation, transmission, distribution, and utilization of electric power, as well as the design of electronic circuits and systems for signal processing and control.[9] It includes the development of control systems that regulate processes in industries like manufacturing and aerospace, and the integration of electrical technologies with computing and communications infrastructure, such as in telecommunications networks and embedded systems.[10] For instance, electrical engineers contribute to power grids that deliver electricity to homes and businesses, as well as to semiconductors that enable modern computing devices.[11]

Electrical engineering is distinguished from related fields by its primary emphasis on electrical and electromagnetic phenomena, rather than mechanical forces, thermal dynamics, or pure software algorithms.[12] In contrast to mechanical engineering, which centers on the design and analysis of machines and mechanical systems involving motion and energy transfer through physical components, electrical engineering prioritizes the behavior of electrons and fields in circuits and devices.[12] Similarly, while computer engineering overlaps in areas like hardware design and integrates elements of electrical engineering with computer science, it focuses more on the architecture of computing systems and software-hardware interfaces, whereas electrical engineering addresses broader electrical power and signal applications beyond computation.[13]

The term "electrical engineering" originated in the mid- to late 19th century, emerging from early work on electrical telegraphy and the distribution of electric power, which formalized the need for specialized professionals to handle these technologies.[14] This etymology reflects the field's roots in practical innovations that transformed communication and energy systems during the Industrial Revolution.[14]

Importance in modern society

Electrical engineering underpins modern economies through several key industries. The semiconductor sector, a cornerstone of electrical engineering, is projected to generate $697 billion in worldwide sales in 2025, fueling advances in computing, communications, and consumer electronics.[15] Similarly, the renewable energy industry, which relies on electrical systems for generation and distribution, drove 10 percent of global GDP growth in 2023, and investment in it reached $728 billion in 2024 to support clean energy infrastructure.[16][17] These sectors show how electrical engineering enables economic expansion by powering high-tech manufacturing and sustainable technologies.

In daily life, electrical engineering supports essential societal functions, including widespread electrification and medical and transportation applications. As of 2025, about 92 percent of the world's population has access to electricity for lighting, education, and economic activity, largely through engineered grid expansions and off-grid solutions.[18] Medical devices such as MRI machines, which rely on electrical engineering for magnetic field generation and signal processing, have revolutionized diagnostics by enabling non-invasive imaging for millions of patients annually. In transportation, electrical engineering powers the rise of electric vehicles, which are projected to exceed 40 percent of global car sales by 2030, reducing reliance on fossil fuels and improving urban mobility.[19]

Electrical engineering also addresses pressing global challenges by advancing sustainable energy transitions and connectivity.
Smart grids, which apply electrical engineering principles such as real-time monitoring and automation, can reduce energy distribution losses by as much as 20 to 25 percent through optimized power flow and demand management.[20] 5G networks, with deployments covering 55 percent of the global population as of 2025, enable connectivity for telemedicine, smart cities, and industrial automation, while emerging 6G technologies are expected to deepen that integration by 2030.[21] Looking ahead, the integration of electrical engineering with artificial intelligence is expected to amplify its impact on autonomous systems and climate mitigation. AI-enhanced electrical systems can improve renewable energy forecasting and grid stability, support autonomous vehicles and drones for efficient logistics, and reduce carbon emissions through predictive maintenance and energy-efficient designs.[22][23] This convergence positions electrical engineering as a vital force in achieving net-zero goals and fostering resilient societies.

History

Precursors and 19th-century foundations

The earliest observations of electrical phenomena date back to antiquity: around 600 BCE, the Greek philosopher Thales of Miletus noted that amber, when rubbed with fur, could attract lightweight objects such as feathers, an effect now understood as static electricity.[24] These early observations laid the groundwork for later inquiries into electric forces, though they remained qualitative and disconnected from practical applications for centuries.[25] At the close of the 16th century, the English physician William Gilbert advanced the study by systematically investigating these attractions in his 1600 treatise De Magnete, where he coined the New Latin term electricus (from the Greek ēlektron, amber), the root of the word "electric", and distinguished the effect from magnetism, establishing electricity as a separate phenomenon through experiments with various materials.[26] Building on this, the French chemist Charles François de Cisternay du Fay proposed in 1733 that electricity consisted of two opposing fluids, vitreous (produced by rubbing glass) and resinous (produced by rubbing amber), after observing that like-charged substances repelled while opposites attracted, refining the understanding of electric charge polarity.[27] In the mid-18th century, the American polymath Benjamin Franklin conducted pivotal experiments, including his 1752 kite experiment during a thunderstorm, which demonstrated that lightning is an electrical discharge; he replaced du Fay's two fluids with a single-fluid theory, introducing the concepts of positive and negative charge that persist in modern electrostatics.

The 19th century marked the transition from curiosity-driven science to engineering foundations, beginning with the Italian physicist Alessandro Volta's invention of the voltaic pile in 1800. As the first reliable chemical battery, it produced a steady electric current, enabling sustained experiments and devices beyond fleeting static charges.[28] In 1820, the Danish physicist Hans Christian Ørsted discovered electromagnetism when he observed that a current-carrying wire deflected a compass needle, revealing the intimate link between electricity and magnetism and inspiring subsequent inventions.[29] This breakthrough led to the English scientist Michael Faraday's 1831 demonstration of electromagnetic induction, in which a changing magnetic field induced an electric current in a nearby circuit, a principle essential to generators and transformers.[29] Concurrently, the American physicist Joseph Henry developed the electromagnetic relay in 1835, a device that lets a weak signal switch a stronger circuit; by passing signals from one circuit to the next, relays extended the distance over which signals could be sent and facilitated long-range communication.[30]

Key milestones of the era included practical devices such as the Russian-German physicist Moritz Jacobi's 1834 electric motor, which converted electrical energy into mechanical motion using electromagnetic principles and was later used to drive a paddle-wheel boat, demonstrating its viability for propulsion.[31] The American inventor Samuel F. B. Morse refined the telegraph between 1837 and 1844, culminating in the first public demonstration on May 24, 1844, when he transmitted the message "What hath God wrought" from Washington, D.C., to Baltimore using electromagnetic relays and Morse code, revolutionizing instant communication.[32]

The institutionalization of electrical engineering followed late in the century. The American Institute of Electrical Engineers (AIEE) was founded on October 9, 1884, in New York by a group that included Thomas Edison, providing a forum for professionals to share knowledge and standardize practices amid the growing electric power industry.[33] Universities began offering dedicated courses in the 1880s and 1890s; the University of Glasgow, for instance, modified its engineering curriculum around this time to include specialized instruction for electrical engineers, integrating theoretical principles with practical training in dynamo design and transmission.[34] These developments established electrical engineering as a distinct discipline, bridging scientific discovery and technological application.

20th-century advancements

The early 20th century marked a pivotal era for power engineering, driven by the widespread adoption of the alternating current (AC) systems pioneered by Nikola Tesla and George Westinghouse. Building on the success of the Niagara Falls hydroelectric plant, which began operating in 1895 and expanded through the 1900s to transmit power over long distances, AC technology enabled efficient large-scale electricity distribution and supplanted direct current (DC) networks.[35][36] This shift facilitated the industrialization of urban centers and the growth of manufacturing, as AC motors and transformers allowed reliable power delivery across previously unelectrified regions. By the 1910s, AC systems had become the standard for new installations worldwide, powering factories, streetlights, and electric railways.[37]

The expansion of electrical grids accelerated in the interwar period, particularly through government initiatives aimed at rural areas. In the United States, the Rural Electrification Act of 1936, part of President Franklin D. Roosevelt's New Deal, authorized the Rural Electrification Administration (REA), created by executive order the previous year, to provide low-interest loans to cooperatives building distribution lines; the share of farms with electricity rose from less than 10% in 1935 to nearly 90% by 1950.[38][39] Similar efforts in Europe and other industrialized nations extended grids to agricultural and remote communities, boosting farm productivity through electric pumps, lighting, and appliances. This infrastructure boom not only supported economic recovery but also laid the foundation for postwar suburban growth, with global electricity generation rising from about 66 TWh in 1900 to over 1,000 TWh by 1950.[40]

Advances in early electronics complemented these power developments. Lee de Forest's 1906 invention of the Audion, a triode vacuum tube, revolutionized signal amplification: by inserting a control grid between the cathode and the anode, de Forest created the first device capable of amplifying weak electrical signals, enabling practical applications in telephony and wireless communication.[41][42] This breakthrough underpinned the rise of radio broadcasting in the 1920s; stations such as KDKA in Pittsburgh began scheduled commercial broadcasts in 1920, and amplified transmissions soon reached millions of listeners, establishing radio as a new mass medium for news and entertainment.[43] By the 1930s, triode-based amplifiers supported early television broadcasts, such as the BBC's high-definition service starting in 1936, which used cathode-ray tubes to convert images into electrical signals for transmission.[44]

The World Wars catalyzed rapid innovation in electrical engineering, particularly in detection technologies. During World War II, the U.S. Navy accelerated sonar development to counter submarine threats, building on earlier piezoelectric transducer work to field active systems that emitted sound pulses for underwater ranging, which significantly reduced U-boat effectiveness in the Atlantic.[45] In parallel, the MIT Radiation Laboratory, established in 1940, advanced microwave radar using the British cavity magnetron and produced over 100 radar variants, including ground-based and airborne units for air defense and navigation, that accounted for nearly half of the Allied radar systems deployed by the war's end.[46] These efforts reflected the field's wartime urgency, with interdisciplinary teams combining electromagnetics and circuit theory to achieve real-time signal processing. The era culminated in ENIAC, completed in 1945 at the University of Pennsylvania as the first general-purpose electronic digital computer; it used about 18,000 vacuum tubes to perform ballistic calculations roughly 1,000 times faster than its electromechanical predecessors.[47][48]

Professional institutions grew alongside these technical strides, reflecting the field's maturation. The Institute of Radio Engineers (IRE), founded in 1912 to advance wireless technologies, merged with the American Institute of Electrical Engineers (AIEE, established 1884) in 1963 to form the Institute of Electrical and Electronics Engineers (IEEE), uniting over 150,000 members in a single organization for standards and research.[49][50] By mid-century, electrification was widespread in industrialized nations, and the U.S. was approaching universal access; worldwide, however, fewer than 20% of households had electricity in 1950, a share that continued to climb through cooperative and public investment, enabling broader societal integration of electrical systems.

Post-1950 developments and digital revolution

The post-1950 era in electrical engineering was defined by the solid-state revolution, which began with the invention of the transistor at Bell Laboratories in 1947 by John Bardeen, Walter Brattain, and William Shockley; the device was publicly announced in 1948 and commercialized in the 1950s in products such as the first transistor radio in 1954.[51][52] Transistors replaced bulky vacuum tubes, enabling smaller, more efficient electronic devices and laying the foundation for modern computing. The revolution accelerated with the integrated circuit (IC), first demonstrated by Jack Kilby at Texas Instruments in 1958 as a hybrid circuit on germanium, followed in 1959 by Robert Noyce's monolithic silicon IC at Fairchild Semiconductor, which allowed multiple transistors to be fabricated on a single chip.[53][54][55] Gordon Moore's 1965 observation, later known as Moore's Law, predicted that the number of transistors on an IC would double every year, a rate he revised in 1975 to approximately every two years; the resulting exponential gains in performance and reductions in cost broadly continued through 2025, with advanced chips reaching around 10^11 transistors.[56][57]

The digital shift emerged in the 1970s with the microprocessor, exemplified by Intel's 4004 of 1971, the first complete CPU on a single chip, which was initially designed for calculators but enabled broader computing applications.[58][59] Microprocessors paved the way for personal computers in the 1970s and 1980s, starting with the Altair 8800 kit in 1975 and followed by the Apple II in 1977 and the IBM PC in 1981, which brought computing to consumers and businesses.[60] Concurrently, internet protocols advanced through Vint Cerf and Bob Kahn's 1974 design of TCP/IP, which the ARPANET adopted as its standard in 1983 and which became the backbone of global networking by enabling interoperable packet-switched communication.[61][62]

Recent milestones include the integration of renewables into power systems via smart grids, which gained momentum in the 2000s through U.S. Department of Energy initiatives emphasizing distributed energy resources, demand response, and grid modernization to accommodate variable solar and wind generation. In telecommunications, commercial 5G deployment began in 2019 with early launches in South Korea and the U.S., expanding to over 2.25 billion connections worldwide by 2025 and enabling ultra-low latency for applications such as autonomous vehicles.[63] Quantum computing prototypes advanced with IBM's Osprey processor in 2022, which featured 433 superconducting qubits and showed that such processors could scale to hundreds of qubits; in late 2025, IBM introduced the Nighthawk processor, which has fewer qubits (120) but enhanced connectivity between them, as a further step toward practical fault-tolerant quantum computing.[64][65]

Globalization reshaped the field, with Asia dominating semiconductor production; Taiwan Semiconductor Manufacturing Company (TSMC) held approximately 60% of the global foundry market by 2025, underscoring the region's control over advanced-node fabrication.[66] This concentration highlighted the supply chain vulnerabilities exposed by the 2020-2022 chip shortages, prompting diversification efforts such as U.S. CHIPS Act investments to mitigate geopolitical risks.[67]

Fundamental principles

Electricity, circuits, and basic laws