History of physics

Physics is a branch of science in which the primary objects of study are matter and energy. These topics were discussed across many cultures in ancient times by philosophers, but they had no means to distinguish causes of natural phenomena from superstitions.

The Scientific Revolution of the 17th century, especially the discovery of the law of gravity, began a process of knowledge accumulation and specialization that gave rise to the field of physics.

Mathematical advances of the 18th century gave rise to classical mechanics, and the increased use of the experimental method led to a new understanding of thermodynamics.

In the 19th century, the basic laws of electromagnetism and statistical mechanics were discovered.

At the beginning of the 20th century, physics was transformed by the discoveries of quantum mechanics, relativity, and atomic theory.

Physics today may be divided loosely into classical physics and modern physics.

Ancient history

Elements of what became physics were drawn primarily from the fields of astronomy, optics, and mechanics, which were methodologically united through the study of geometry. These mathematical disciplines began in antiquity with the Babylonians and with Hellenistic writers such as Archimedes and Ptolemy. Ancient philosophy, meanwhile, included what was called "Physics".

Greek concept

The move towards a rational understanding of nature began at least as early as the Archaic period in Greece (650–480 BCE) with the Pre-Socratic philosophers. The philosopher Thales of Miletus (7th and 6th centuries BCE), dubbed "the Father of Science" for refusing to accept various supernatural, religious or mythological explanations for natural phenomena, proclaimed that every event had a natural cause.[1] Around 580 BCE, Thales also suggested that water is the basic element, experimented with the attraction between magnets and rubbed amber, and formulated the first recorded cosmologies. Anaximander, developer of a proto-evolutionary theory, disputed Thales' ideas and proposed that, rather than water, a substance called apeiron was the building block of all matter. Around 500 BCE, Heraclitus proposed that the only basic law governing the Universe was the principle of change and that nothing remains in the same state indefinitely. He and his contemporary Parmenides were among the first scholars to contemplate the role of time in the universe, a key concept that is still an issue in modern physics.

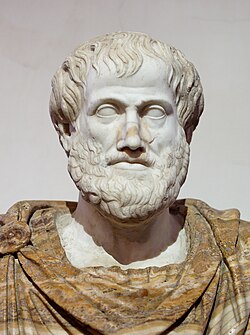

During the classical period in Greece (6th, 5th and 4th centuries BCE) and in Hellenistic times, natural philosophy developed into a field of study. Aristotle (Greek: Ἀριστοτέλης, Aristotélēs) (384–322 BCE), a student of Plato, promoted the concept that observation of physical phenomena could ultimately lead to the discovery of the natural laws governing them.[citation needed] Aristotle's writings cover physics, metaphysics, poetry, theater, music, logic, rhetoric, linguistics, politics, government, ethics, biology and zoology. He wrote the first work which refers to that line of study as "Physics" – in the 4th century BCE, Aristotle founded the system known as Aristotelian physics. He attempted to explain ideas such as motion (and gravity) with the theory of four elements. Aristotle believed that all matter was made of aether, or some combination of four elements: earth, water, air, and fire. According to Aristotle, these four terrestrial elements are capable of inter-transformation and move toward their natural place, so a stone falls downward toward the center of the cosmos, but flames rise upward toward the circumference. Eventually, Aristotelian physics became popular for many centuries in Europe, informing the scientific and scholastic developments of the Middle Ages. It remained the mainstream scientific paradigm in Europe until the time of Galileo Galilei and Isaac Newton.

Early in Classical Greece, knowledge that the Earth is spherical ("round") was common. Around 240 BCE, as the result of a seminal experiment, Eratosthenes (276–194 BCE) accurately estimated its circumference. In contrast to Aristotle's geocentric views, Aristarchus of Samos (Greek: Ἀρίσταρχος; c. 310 – c. 230 BCE) presented an explicit argument for a heliocentric model of the Solar System, i.e. for placing the Sun, not the Earth, at its centre. Seleucus of Seleucia, a follower of Aristarchus' heliocentric theory, stated that the Earth rotated around its own axis, which, in turn, revolved around the Sun. Though the arguments he used were lost, Plutarch stated that Seleucus was the first to prove the heliocentric system through reasoning.

In the 3rd century BCE, the Greek mathematician Archimedes of Syracuse (Greek: Ἀρχιμήδης; 287–212 BCE), generally considered to be the greatest mathematician of antiquity and one of the greatest of all time, laid the foundations of hydrostatics and statics and worked out the underlying mathematics of the lever. A scientist of classical antiquity, Archimedes also developed elaborate systems of pulleys to move large objects with a minimum of effort. Archimedes' screw underpins modern hydroengineering, and his machines of war helped to hold back the armies of Rome in the Second Punic War. Archimedes even tore apart the arguments of Aristotle and his metaphysics, pointing out that it was impossible to separate mathematics and nature, and proved it by converting mathematical theories into practical inventions. Furthermore, in his work On Floating Bodies, around 250 BCE, Archimedes developed the law of buoyancy, also known as Archimedes' principle. In mathematics, Archimedes used the method of exhaustion to calculate the area under the arc of a parabola with the summation of an infinite series, and gave a remarkably accurate approximation of pi. He also defined the spiral bearing his name, derived formulae for the volumes of solids of revolution, and devised an ingenious system for expressing very large numbers. He also developed the principles of equilibrium states and centers of gravity, ideas that would influence future scholars like Galileo and Newton.
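
In modern notation, which Archimedes did not use, his quadrature of the parabola can be sketched as a geometric series. Here $T$ stands for the area of the triangle inscribed in the parabolic segment and $A$ for the area of the segment itself; both symbols are introduced only for illustration.

```latex
% Each round of smaller inscribed triangles adds one quarter of the
% area added in the previous round, so the total is a geometric series.
A \;=\; T\left(1 + \frac{1}{4} + \frac{1}{4^{2}} + \frac{1}{4^{3}} + \cdots\right)
  \;=\; T \sum_{n=0}^{\infty} \left(\frac{1}{4}\right)^{n}
  \;=\; \frac{T}{1 - \tfrac{1}{4}}
  \;=\; \frac{4}{3}\,T
```

Archimedes' own argument worked with finite partial sums and a proof by exhaustion rather than an explicit limit, so the infinite sum above is a modern restatement of his result.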

Hipparchus (190–120 BCE), focusing on astronomy and mathematics, used sophisticated geometrical techniques to map the motion of the stars and planets, even predicting the times that Solar eclipses would happen. He added calculations of the distance of the Sun and Moon from the Earth, based upon his improvements to the observational instruments used at that time. Another of the early physicists was Ptolemy (90–168 CE) during the time of the Roman Empire. Ptolemy was the author of several scientific treatises, at least three of which were of continuing importance to later Islamic and European science. The first is the astronomical treatise now known as the Almagest (in Greek, Ἡ Μεγάλη Σύνταξις, "The Great Treatise", originally Μαθηματικὴ Σύνταξις, "Mathematical Treatise"). The second is the Geography, which is a thorough discussion of the geographic knowledge of the Greco-Roman world.

Much of the accumulated knowledge of the ancient world was lost. Even of the works of the many respectable thinkers, few fragments survive. Although he wrote at least fourteen books, almost nothing of Hipparchus' direct work survived. Of the 150 reputed Aristotelian works, only 30 exist, and some of those are "little more than lecture notes".[according to whom?]

India and China

Important physical and mathematical traditions also existed in ancient Indian and Chinese sciences.

In Indian philosophy, Maharishi Kanada was the first to systematically develop a theory of atomism around 200 BCE,[3] though some authors have allotted him an earlier era in the 6th century BCE.[4][5] It was further elaborated by the Buddhist atomists Dharmakirti and Dignāga during the 1st millennium CE.[6] Pakudha Kaccayana, a 6th-century BCE Indian philosopher and contemporary of Gautama Buddha, had also propounded ideas about the atomic constitution of the material world. The Vaisheshika school of philosophers believed that an atom was a mere point in space. It was also the first to depict the relation between motion and applied force. Indian theories about the atom are highly abstract and enmeshed in philosophy, as they were based on logic and not on personal experience or experimentation.

In Indian astronomy, Aryabhata's Aryabhatiya (499 CE) proposed the Earth's rotation, while Nilakantha Somayaji (1444–1544) of the Kerala school of astronomy and mathematics proposed a semi-heliocentric model resembling the Tychonic system.

The study of magnetism in Ancient China dates to the 4th century BCE (in the Book of the Devil Valley Master).[7] A main contributor to this field was Shen Kuo (1031–1095), a polymath and statesman who was the first to describe the magnetic-needle compass used for navigation, as well as establishing the concept of true north. In optics, Shen Kuo independently developed a camera obscura.[8]

Islamic world

In the 7th to 15th centuries, scientific progress occurred in the Muslim world. Many classic works in Indian, Assyrian, Sassanian (Persian) and Greek, including the works of Aristotle, were translated into Arabic.[9] Important contributions were made by Ibn al-Haytham (965–1040), an Arab[10] or Persian[11] scientist, considered to be a founder of modern optics. Ptolemy and Aristotle theorised that light either shone from the eye to illuminate objects or that "forms" emanated from objects themselves, whereas al-Haytham (known by the Latin name "Alhazen") suggested that light travels to the eye in rays from different points on an object. The works of Ibn al-Haytham and al-Biruni (973–1050), a Persian scientist, eventually passed on to Western Europe where they were studied by scholars such as Roger Bacon and Vitello.[12]

Ibn al-Haytham used controlled experiments in his work on optics, although the extent to which his approach differed from Ptolemy's is debated.[13][14] Arabic mechanicians such as Bīrūnī and Al-Khazini developed a sophisticated "science of weight", carrying out measurements of specific weights and volumes.[15]

Ibn Sīnā (980–1037), known as "Avicenna", was a polymath from Bukhara (in present-day Uzbekistan) responsible for important contributions to physics, optics, philosophy and medicine. He published his theory of motion in Book of Healing (1020), where he argued that an impetus is imparted to a projectile by the thrower. He viewed it as persistent, requiring external forces such as air resistance to dissipate it.[16][17][18] Ibn Sina made a distinction between 'force' and 'inclination' (called "mayl"), and argued that an object gained mayl when the object is in opposition to its natural motion. He concluded that continuation of motion is attributed to the inclination that is transferred to the object, and that object will be in motion until the mayl is spent. This conception of motion is consistent with Newton's first law of motion, inertia, which states that an object in motion will stay in motion unless it is acted on by an external force.[16] This idea which dissented from the Aristotelian view was later described as "impetus" by John Buridan, who was likely influenced by Ibn Sina's Book of Healing.[19]

Hibat Allah Abu'l-Barakat al-Baghdaadi (c. 1080 – c. 1165) adopted and modified Ibn Sina's theory on projectile motion. In his Kitab al-Mu'tabar, Abu'l-Barakat stated that the mover imparts a violent inclination (mayl qasri) on the moved and that this diminishes as the moving object distances itself from the mover.[20] He also proposed an explanation of the acceleration of falling bodies by the accumulation of successive increments of power with successive increments of velocity.[21] According to Shlomo Pines, al-Baghdaadi's theory of motion was "the oldest negation of Aristotle's fundamental dynamic law [namely, that a constant force produces a uniform motion], [and is thus an] anticipation in a vague fashion of the fundamental law of classical mechanics [namely, that a force applied continuously produces acceleration]."[22] Jean Buridan and Albert of Saxony later referred to Abu'l-Barakat in explaining that the acceleration of a falling body is a result of its increasing impetus.[20]

Ibn Bajjah (c. 1085–1138), known as "Avempace" in Europe, proposed that for every force there is always a reaction force. Ibn Bajjah was a critic of Ptolemy and he worked on creating a new theory of velocity to replace the one theorized by Aristotle. Two future philosophers supported the theories Avempace created, known as Avempacean dynamics. These philosophers were Thomas Aquinas, a Catholic priest, and John Duns Scotus.[23] Galileo went on to adopt Avempace's formula "that the velocity of a given object is the difference of the motive power of that object and the resistance of the medium of motion".[23]
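
Historians often contrast the two accounts of motion in modern symbols, which neither author used. Writing $V$ for velocity, $P$ for the motive power and $M$ for the resistance of the medium (all three symbols are assumptions made here for illustration), the formula quoted above and the proportionality commonly attributed to Aristotle can be sketched as:

```latex
% Avempace: velocity is the difference of motive power and resistance.
V_{\text{Avempace}} \;=\; P - M
% Aristotle (as commonly reconstructed): velocity varies as their ratio.
\qquad\text{versus}\qquad
V_{\text{Aristotle}} \;\propto\; \frac{P}{M}
```

On the first reading, a body moving with no resisting medium ($M = 0$) still has the finite velocity $V = P$, whereas the ratio form gives no finite value in that case.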

Nasir al-Din al-Tusi (1201–1274), a Persian astronomer and mathematician who died in Baghdad, introduced the Tusi couple, an important mathematical theorem, and founded the Maragha School of astronomy. Geocentric (but not heliocentric) astronomical models developed by the Maragha School have many striking parallels with models developed by Nicolaus Copernicus. The possibility that Maragha results may have influenced Copernicus has been investigated in some detail.[24]

Medieval Europe

Awareness of ancient works re-entered the West through translations from Arabic to Latin. Their re-introduction, combined with Judeo-Islamic theological commentaries, had a great influence on Medieval philosophers such as Thomas Aquinas. Scholastic European scholars, who sought to reconcile the philosophy of the ancient classical philosophers with Christian theology, proclaimed Aristotle the greatest thinker of the ancient world. In cases where they did not directly contradict the Bible, Aristotelian physics became the foundation for the physical explanations of the European Churches. Quantification became a core element of medieval physics.[25]

Based on Aristotelian physics, Scholastic physics described things as moving according to their essential nature. Celestial objects were described as moving in circles, because perfect circular motion was considered an innate property of objects that existed in the uncorrupted realm of the celestial spheres. Motions below the lunar sphere were seen as imperfect, and thus could not be expected to exhibit consistent motion. More idealized motion in the "sublunary" realm could only be achieved through artifice, and prior to the 17th century, many did not view artificial experiments as a valid means of learning about the natural world. Physical explanations in the sublunary realm revolved around tendencies. Stones contained the element earth, and earthly objects tended to move in a straight line toward the centre of the earth (and the universe in the Aristotelian geocentric view) unless otherwise prevented from doing so.[26]

Aristotle's physics was not scrutinized until John Philoponus, who, unlike Aristotle, relied on observation rather than verbal argument.[27] Philoponus' criticism of Aristotelian principles of physics served as an inspiration for Galileo Galilei ten centuries later,[28] during the Scientific Revolution. Galileo cited Philoponus substantially in his works when arguing that Aristotelian physics was flawed.[29][30] In the 1300s Jean Buridan, a teacher in the faculty of arts at the University of Paris, developed the concept of impetus. It was a step toward the modern ideas of inertia and momentum.[31]

Scientific Revolution

During the 16th and 17th centuries, a major advance in scientific understanding known as the Scientific Revolution took place in Europe. Dissatisfaction with older philosophical approaches had begun earlier and had produced other changes in society, such as the Protestant Reformation, but the revolution in science began when natural philosophers mounted a sustained attack on the Scholastic philosophical programme and supposed that mathematical descriptive schemes adopted from such fields as mechanics and astronomy could actually yield universally valid characterizations of motion and other concepts.

Nicolaus Copernicus

A breakthrough in astronomy was made by Renaissance astronomer Nicolaus Copernicus (1473–1543) when, in 1543, he gave strong arguments for the heliocentric model of the Solar System, ostensibly as a means to render tables charting planetary motion more accurate and to simplify their production. In heliocentric models of the Solar System, the Earth orbits the Sun along with the other planets. This contradicted the system of the Greek-Egyptian astronomer Ptolemy (2nd century CE; see above), which placed the Earth at the center of the Universe and had been accepted for over 1,400 years. The Greek astronomer Aristarchus of Samos (c. 310 – c. 230 BCE) had suggested that the Earth revolves around the Sun, but Copernicus's reasoning led to lasting general acceptance of this "revolutionary" idea. Copernicus's book presenting the theory (De revolutionibus orbium coelestium, "On the Revolutions of the Celestial Spheres") was published just before his death in 1543 and, as it is now generally considered to mark the beginning of modern astronomy, is also considered to mark the beginning of the Scientific Revolution.[citation needed] Copernicus's new perspective, along with the accurate observations made by Tycho Brahe, enabled German astronomer Johannes Kepler (1571–1630) to formulate his laws regarding planetary motion that remain in use today.

Galileo Galilei

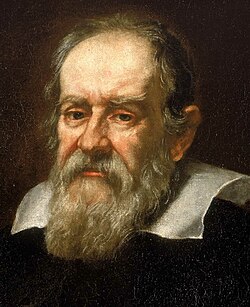

The Italian mathematician, astronomer, and physicist Galileo Galilei (1564–1642) was a supporter of Copernicanism who made numerous astronomical discoveries, carried out empirical experiments and improved the telescope. As a mathematician, Galileo's role in the university culture of his era was subordinated to the three major topics of study: law, medicine, and theology (which was closely allied to philosophy). Galileo, however, felt that the descriptive content of the technical disciplines warranted philosophical interest, particularly because mathematical analysis of astronomical observations – notably, Copernicus's analysis of the relative motions of the Sun, Earth, Moon, and planets – indicated that philosophers' statements about the nature of the universe could be shown to be in error. Galileo also performed mechanical experiments, insisting that motion itself – regardless of whether it was produced "naturally" or "artificially" (i.e. deliberately) – had universally consistent characteristics that could be described mathematically.

Galileo's early studies at the University of Pisa were in medicine, but he was soon drawn to mathematics and physics. At age 19, he discovered (and, subsequently, verified) the isochronal nature of the pendulum when, using his pulse, he timed the oscillations of a swinging lamp in Pisa's cathedral and found that the duration of each swing remained the same regardless of the swing's amplitude. He soon became known through his invention of a hydrostatic balance and for his treatise on the center of gravity of solid bodies. While teaching at the University of Pisa (1589–1592), he initiated his experiments concerning the laws of bodies in motion, which brought results so contradictory to the accepted teachings of Aristotle that strong antagonism was aroused. He found that bodies do not fall with velocities proportional to their weights. The story in which Galileo is said to have dropped weights from the Leaning Tower of Pisa is apocryphal, but he did find that the path of a projectile is a parabola and is credited with conclusions that anticipated Newton's laws of motion (e.g. the notion of inertia). Among these is what is now called Galilean relativity, the first precisely formulated statement about properties of space and time outside three-dimensional geometry.[citation needed]

Galileo has been called the "father of modern observational astronomy",[32] the "father of modern physics", the "father of science",[33] and "the father of modern science".[34] According to Stephen Hawking, "Galileo, perhaps more than any other single person, was responsible for the birth of modern science."[35] As religious orthodoxy decreed a geocentric or Tychonic understanding of the Solar system, Galileo's support for heliocentrism provoked controversy and he was tried by the Inquisition. Found "vehemently suspect of heresy", he was forced to recant and spent the rest of his life under house arrest.

The contributions that Galileo made to observational astronomy include the telescopic confirmation of the phases of Venus; his discovery, in 1610, of Jupiter's four largest moons (subsequently given the collective name of the "Galilean moons"); and the observation and analysis of sunspots. Galileo also pursued applied science and technology, inventing, among other instruments, a military compass. His discovery of the Jovian moons was published that same year, enabling him to obtain the position of mathematician and philosopher to the Medici court. As such, he was expected to engage in debates with philosophers in the Aristotelian tradition and received a large audience for his own publications such as the Discourses and Mathematical Demonstrations Concerning Two New Sciences (published abroad following his arrest for the publication of Dialogue Concerning the Two Chief World Systems) and The Assayer.[36][37] Galileo's interest in experimenting with and formulating mathematical descriptions of motion established experimentation as an integral part of natural philosophy. This tradition, combining with the non-mathematical emphasis on the collection of "experimental histories" by philosophical reformists such as William Gilbert and Francis Bacon, drew a significant following in the years leading to and following Galileo's death, including Evangelista Torricelli and the participants in the Accademia del Cimento in Italy; Marin Mersenne and Blaise Pascal in France; Christiaan Huygens in the Netherlands; and Robert Hooke and Robert Boyle in England.

Johannes Kepler

Johannes Kepler (1571–1630) was a German astronomer, mathematician, astrologer, natural philosopher and a key figure in the 17th-century Scientific Revolution. He is best known for his laws of planetary motion and for his books Astronomia nova, Harmonice Mundi, and Epitome Astronomiae Copernicanae, which influenced Isaac Newton, among others, and provided one of the foundations for Newton's theory of universal gravitation.[38] The variety and impact of his work made Kepler one of the founders of modern astronomy, the scientific method, natural and modern science.[39][40][41]

Kepler was partly driven by his belief that there is an intelligible plan that is accessible through reason.[42] Kepler described his new astronomy as "celestial physics",[43] as "an excursion into Aristotle's Metaphysics",[44] and as "a supplement to Aristotle's On the Heavens",[45] treating astronomy as part of a universal mathematical physics.[46]

René Descartes

The French philosopher René Descartes (1596–1650) was well-connected to, and influential within, experimental philosophy networks. Descartes had an agenda, however, which was geared toward replacing the Scholastic philosophical tradition. Questioning the reality interpreted through the senses, Descartes sought to re-establish philosophical explanations by reducing all phenomena to the motion of an invisible sea of "corpuscles". (Notably, he reserved human thought and God from his scheme, holding these to be separate from the physical universe). In proposing this philosophical framework, Descartes supposed that different kinds of motion, such as that of planets versus that of terrestrial objects, were not fundamentally different, but were manifestations of an endless chain of corpuscular motions obeying universal principles. Particularly influential were his explanations for circular astronomical motions in terms of the vortex motion of corpuscles in space (Descartes argued, in accord with the beliefs, if not the methods, of the Scholastics, that a vacuum could not exist), and his explanation of gravity in terms of corpuscles pushing objects downward.[47][48][49]

Descartes, like Galileo, was convinced of the importance of mathematical explanation, and he and his followers were key figures in the development of mathematics and geometry in the 17th century. Cartesian mathematical descriptions of motion held that all mathematical formulations had to be justifiable in terms of direct physical action, a position held by Christiaan Huygens and the German philosopher Gottfried Leibniz, who, while following in the Cartesian tradition, developed his own philosophical alternative to Scholasticism, which he outlined in his 1714 work, the Monadology. Descartes has been dubbed the "Father of Modern Philosophy", and much subsequent Western philosophy is a response to his writings, which are studied closely to this day. In particular, his Meditations on First Philosophy continues to be a standard text at most university philosophy departments. Descartes' influence in mathematics is equally apparent; the Cartesian coordinate system – allowing algebraic equations to be expressed as geometric shapes in a two-dimensional coordinate system – was named after him. He is credited as the father of analytical geometry, the bridge between algebra and geometry, important to the discovery of calculus and analysis.

Christiaan Huygens

The Dutch physicist, mathematician, astronomer and inventor Christiaan Huygens (1629–1695) was the leading scientist in Europe between Galileo and Newton. Huygens came from a family of nobility that had an important position in the Dutch society of the 17th century; a time in which the Dutch Republic flourished economically and culturally. This period – roughly between 1588 and 1702 – of the history of the Netherlands is also referred to as the Dutch Golden Age, an era during the Scientific Revolution when Dutch science was among the most acclaimed in Europe. At this time, intellectuals and scientists like René Descartes, Baruch Spinoza, Pierre Bayle, Antonie van Leeuwenhoek, John Locke and Hugo Grotius resided in the Netherlands. It was in this intellectual environment that Christiaan Huygens grew up. Christiaan's father, Constantijn Huygens, was, apart from an important poet, the secretary and diplomat for the Princes of Orange. He knew many scientists of his time because of his contacts and intellectual interests, including René Descartes and Marin Mersenne, and it was because of these contacts that Christiaan Huygens became aware of their work, especially Descartes, whose mechanistic philosophy was going to have a huge influence on Huygens' own work. Descartes was later impressed by the skills Huygens showed in geometry, as was Mersenne, who christened him "the new Archimedes" (which led Constantijn to refer to his son as "my little Archimedes").

A child prodigy, Huygens began his correspondence with Marin Mersenne when he was 17 years old. Huygens became interested in games of chance when he encountered the work of Fermat, Blaise Pascal and Girard Desargues. It was Pascal who encouraged him to write Van Rekeningh in Spelen van Gluck, which Frans van Schooten translated and published as De Ratiociniis in Ludo Aleae in 1657. The book is the earliest known scientific treatment of the subject, and at the time the most coherent presentation of a mathematical approach to games of chance. Two years later Huygens derived geometrically the now standard formulae in classical mechanics for the centripetal and centrifugal force in his work De vi Centrifuga (1659). Around the same time Huygens' research in horology resulted in the invention of the pendulum clock, a breakthrough in timekeeping and the most accurate timekeeper for almost 300 years. The theoretical research of the way the pendulum works eventually led to the publication of one of his most important achievements: the Horologium Oscillatorium. This work was published in 1673 and became one of the three most important 17th-century works on mechanics (the other two being Galileo's Discourses and Mathematical Demonstrations Relating to Two New Sciences (1638) and Newton's Philosophiæ Naturalis Principia Mathematica (1687)[50]). The Horologium Oscillatorium is the first modern treatise in which a physical problem (the accelerated motion of a falling body) is idealized by a set of parameters and then analyzed mathematically, and it constitutes one of the seminal works of applied mathematics.[51][52] For this reason, Huygens has been called the first theoretical physicist and one of the founders of modern mathematical physics.[53][54] Huygens' Horologium Oscillatorium influenced the work of Isaac Newton, who admired the work. For instance, the laws Huygens described in the Horologium Oscillatorium are structurally the same as Newton's first two laws of motion.[55]
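
For reference, the standard formulae mentioned above are usually written today as follows. This is the modern algebraic form, not the geometric form in which Huygens derived them; the symbols $m$ (mass), $v$ (speed), $r$ (radius of the circle) and $\omega$ (angular speed) are introduced here for illustration.

```latex
% Uniform circular motion: speed and angular speed are related by v = \omega r.
a_{c} \;=\; \frac{v^{2}}{r} \;=\; \omega^{2} r,
\qquad
F_{c} \;=\; m\,a_{c} \;=\; \frac{m v^{2}}{r} \;=\; m\,\omega^{2} r
```

The centripetal force points toward the centre of the circle; the centrifugal force has the same magnitude and appears, directed outward, when the motion is described in the rotating frame.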

Five years after the publication of his Horologium Oscillatorium, Huygens described his wave theory of light. Though proposed in 1678, it was not published until 1690 in his Traité de la Lumière. His mathematical theory of light was initially rejected in favour of Newton's corpuscular theory of light, until Augustin-Jean Fresnel adopted Huygens' principle to give a complete explanation of the rectilinear propagation and diffraction effects of light in 1821. Today this principle is known as the Huygens–Fresnel principle.

As an astronomer, Huygens began grinding lenses with his brother Constantijn Jr. to build telescopes for astronomical research. He was the first to identify the rings of Saturn as "a thin, flat ring, nowhere touching, and inclined to the ecliptic," and discovered the first of Saturn's moons, Titan, using a refracting telescope.

Huygens was also the first to bring mathematical rigor to the description of physical phenomena. Because of this, and the fact that he developed institutional frameworks for scientific research on the continent, he has been referred to as "the leading actor in 'the making of science in Europe'".[56]

Isaac Newton

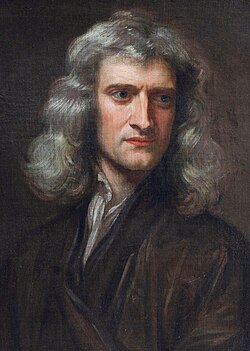

Cambridge University physicist and mathematician Sir Isaac Newton (1642–1727), a fellow of the Royal Society, created a single system for describing the workings of the universe. Newton formulated three laws of motion, which describe the relationship between objects and their motion, and the law of universal gravitation, which could be used to explain the behavior not only of falling bodies on the earth but also of planets and other celestial bodies. To arrive at his results, Newton invented one form of an entirely new branch of mathematics: calculus (also invented independently by Gottfried Leibniz), which was to become an essential tool in much of the later development in most branches of physics. Newton's findings were set forth in his Philosophiæ Naturalis Principia Mathematica ("Mathematical Principles of Natural Philosophy"), the publication of which in 1687 marked the beginning of the modern period of mechanics and astronomy.

Newton refuted the Cartesian mechanical tradition that all motions should be explained with respect to the immediate force exerted by corpuscles. Using his three laws of motion and law of universal gravitation, Newton removed the idea that objects followed paths determined by natural shapes and instead demonstrated that all the future motions of any body could be deduced mathematically based on knowledge of their existing motion, their mass, and the forces acting upon them. However, observed celestial motions did not precisely conform to a Newtonian treatment, and Newton, who was also deeply interested in theology, imagined that God intervened to ensure the continued stability of the solar system.

Newton's principles (but not his mathematical treatments) proved controversial with Continental philosophers, who found his lack of metaphysical explanation for movement and gravitation philosophically unacceptable. Beginning around 1700, a bitter rift opened between the Continental and British philosophical traditions, which was stoked by heated, ongoing, and viciously personal disputes between the followers of Newton and Leibniz concerning priority over the analytical techniques of calculus, which each had developed independently. Initially, the Cartesian and Leibnizian traditions prevailed on the Continent (leading to the dominance of the Leibnizian calculus notation everywhere except Britain). Newton himself remained privately disturbed at the lack of a philosophical understanding of gravitation while insisting in his writings that none was necessary to infer its reality. As the 18th century progressed, Continental natural philosophers increasingly accepted the Newtonians' willingness to forgo ontological metaphysical explanations for mathematically described motions.[57][58][59]

Newton built the first functioning reflecting telescope[60] and developed a theory of color, published in Opticks, based on the observation that a prism decomposes white light into the many colours forming the visible spectrum. While Newton explained light as being composed of tiny particles, a rival theory of light which explained its behavior in terms of waves was presented in 1690 by Christiaan Huygens. However, the belief in the mechanistic philosophy coupled with Newton's reputation meant that the wave theory saw relatively little support until the 19th century. Newton also formulated an empirical law of cooling, studied the speed of sound, investigated power series, demonstrated the generalised binomial theorem and developed a method for approximating the roots of a function. His work on infinite series was inspired by Simon Stevin's decimals.[61] Most importantly, Newton showed that the motions of objects on Earth and of celestial bodies are governed by the same set of natural laws, which were neither capricious nor malevolent. By demonstrating the consistency between Kepler's laws of planetary motion and his own theory of gravitation, Newton also removed the last doubts about heliocentrism. By bringing together all the ideas set forth during the Scientific Revolution, Newton effectively established the foundation for modern society in mathematics and science.

Other achievements

Other branches of physics also received attention during the period of the Scientific Revolution. William Gilbert, court physician to Queen Elizabeth I, described how the earth itself behaves like a giant magnet. Robert Boyle (1627–1691) studied the behavior of gases enclosed in a chamber and formulated the gas law named for him; he also contributed to physiology and to the founding of modern chemistry.

Another factor in the Scientific Revolution was the rise of learned societies and academies in various countries. The earliest of these were in Italy and Germany and were short-lived. More influential were the Royal Society of England (1660) and the Academy of Sciences in France (1666). The former was a private institution in London and included John Wallis, William Brouncker, Thomas Sydenham, John Mayow, and Christopher Wren (who contributed not only to architecture but also to astronomy and anatomy); the latter, in Paris, was a government institution and included as a foreign member the Dutchman Huygens. In the 18th century, important royal academies were established at Berlin (1700) and at St. Petersburg (1724). The societies and academies provided the principal opportunities for the publication and discussion of scientific results during and after the scientific revolution. In 1690, James Bernoulli showed that the cycloid is the solution to the tautochrone problem; and the following year, in 1691, Johann Bernoulli showed that a chain freely suspended from two points will form a catenary, the curve with the lowest possible center of gravity available to any chain hung between two fixed points. He then showed, in 1696, that the cycloid is the solution to the brachistochrone problem.

Early thermodynamics

A precursor of the engine was designed by the German scientist Otto von Guericke who, in 1650, designed and built the world's first vacuum pump to create a vacuum as demonstrated in the Magdeburg hemispheres experiment. He was driven to make a vacuum to disprove Aristotle's long-held supposition that 'Nature abhors a vacuum'. Shortly thereafter, the Irish physicist and chemist Boyle learned of Guericke's designs and, in 1656, in coordination with English scientist Robert Hooke, built an air pump. Using this pump, Boyle and Hooke noticed the pressure-volume correlation for a gas: PV = k, where P is pressure, V is volume and k is a constant; this relationship is known as Boyle's law. At that time, air was assumed to be a system of motionless particles, and was not interpreted as a system of moving molecules. The concept of thermal motion came two centuries later. Therefore, Boyle's publication in 1660 speaks about a mechanical concept: the air spring.[62] Later, after the invention of the thermometer, the property of temperature could be quantified. This tool gave Joseph Louis Gay-Lussac the opportunity to derive his law, which led shortly afterwards to the ideal gas law. Even before the establishment of the ideal gas law, however, an associate of Boyle's named Denis Papin built in 1679 a bone digester, which is a closed vessel with a tightly fitting lid that confines steam until a high pressure is generated.
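Boyle's law as stated above, PV = k, can be illustrated numerically. The following is a minimal sketch rather than anything drawn from Boyle's own work: the function name `boyle_pressure` and the sample values are illustrative assumptions, and the gas is assumed to stay at constant temperature.

```python
def boyle_pressure(p1: float, v1: float, v2: float) -> float:
    """Pressure after a volume change at constant temperature (P1*V1 = P2*V2).

    Units are arbitrary but must be consistent (e.g. kPa and litres).
    """
    if v1 <= 0 or v2 <= 0:
        raise ValueError("volumes must be positive")
    k = p1 * v1  # the constant k in PV = k
    return k / v2


# Halving the volume of a gas held at 100 kPa doubles its pressure.
print(boyle_pressure(100.0, 2.0, 1.0))  # 200.0
```

The same constant k applies to every state of the enclosed gas, which is why a single measurement of pressure and volume is enough to predict the pressure at any other volume.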

Later designs implemented a steam release valve to keep the machine from exploding. By watching the valve rhythmically move up and down, Papin conceived of the idea of a piston and cylinder engine. He did not, however, follow through with his design. Nevertheless, in 1697, based on Papin's designs, engineer Thomas Savery built the first engine. Although these early engines were crude and inefficient, they attracted the attention of the leading scientists of the time. Before 1698 and the invention of the Savery Engine, horses were used to power pulleys, attached to buckets, which lifted water out of flooded salt mines in England. In the years to follow, more variations of steam engines were built, such as the Newcomen Engine, and later the Watt Engine. In time, these early engines would replace horses. Thus, each engine began to be associated with a certain amount of "horse power" depending upon how many horses it had replaced. The main problem with these first engines was that they were slow and clumsy, converting less than 2% of the input fuel into useful work. In other words, large quantities of coal (or wood) had to be burned to yield a small fraction of work output; the need for a new science of engine dynamics was born.

18th-century developments

During the 18th century, the mechanics founded by Newton was developed by several scientists as more mathematicians learned calculus and elaborated upon its initial formulation. The application of mathematical analysis to problems of motion was known as rational mechanics, or mixed mathematics (and was later termed classical mechanics).

Mechanics

In 1714, Brook Taylor derived the fundamental frequency of a stretched vibrating string in terms of its tension and mass per unit length by solving a differential equation. The Swiss mathematician Daniel Bernoulli (1700–1782) made important mathematical studies of the behavior of gases, anticipating the kinetic theory of gases developed more than a century later, and has been referred to as the first mathematical physicist.[63] In 1733, Daniel Bernoulli derived the fundamental frequency and harmonics of a hanging chain by solving a differential equation. In 1734, Bernoulli solved the differential equation for the vibrations of an elastic bar clamped at one end. Bernoulli's treatment of fluid dynamics and his examination of fluid flow were introduced in his 1738 work Hydrodynamica.

Rational mechanics dealt primarily with the development of elaborate mathematical treatments of observed motions, using Newtonian principles as a basis, and emphasized improving the tractability of complex calculations and developing legitimate means of analytical approximation. A representative contemporary textbook was published by Johann Baptiste Horvath. By the end of the century analytical treatments were rigorous enough to verify the stability of the Solar System solely on the basis of Newton's laws without reference to divine intervention – even as deterministic treatments of systems as simple as the three body problem in gravitation remained intractable.[64] In 1705, Edmond Halley predicted the periodicity of Halley's Comet; in 1781, William Herschel discovered Uranus; and in 1798, Henry Cavendish measured the gravitational constant and determined the mass of the Earth. In 1783, John Michell suggested that some objects might be so massive that not even light could escape from them.

In 1739, Leonhard Euler solved the ordinary differential equation for a forced harmonic oscillator and noticed the resonance phenomenon. In 1742, Colin Maclaurin discovered his uniformly rotating self-gravitating spheroids. In 1742, Benjamin Robins published his New Principles in Gunnery, establishing the science of aerodynamics. British work, carried on by mathematicians such as Taylor and Maclaurin, fell behind Continental developments as the century progressed. Meanwhile, work flourished at scientific academies on the Continent, led by such mathematicians as Bernoulli and Euler, as well as Joseph-Louis Lagrange, Pierre-Simon Laplace, and Adrien-Marie Legendre. In 1743, Jean le Rond d'Alembert published his Traité de dynamique, in which he introduced the concept of generalized forces for accelerating systems and systems with constraints, and applied the new idea of virtual work to solve dynamical problems, an approach now known as D'Alembert's principle, as a rival to Newton's second law of motion. In 1747, Pierre Louis Maupertuis applied minimum principles to mechanics. In 1759, Euler solved the partial differential equation for the vibration of a rectangular drum. In 1764, Euler examined the partial differential equation for the vibration of a circular drum and found one of the Bessel function solutions. In 1776, John Smeaton published a paper on experiments relating power, work, momentum and kinetic energy, and supporting the conservation of energy. In 1788, Lagrange presented his equations of motion in Mécanique analytique, in which the whole of mechanics was organized around the principle of virtual work. In 1789, Antoine Lavoisier stated the law of conservation of mass. The rational mechanics developed in the 18th century received expositions in both Lagrange's Mécanique analytique and Laplace's Traité de mécanique céleste (1799–1825).

Thermodynamics and static electricity

During the 18th century, thermodynamics was developed through the theories of weightless "imponderable fluids", such as heat ("caloric"), electricity, and phlogiston (which was rapidly overthrown as a concept following Antoine Lavoisier's identification of oxygen gas late in the century). Assuming that these concepts were real fluids, their flow could be traced through a mechanical apparatus or chemical reactions. This tradition of experimentation led to the development of new kinds of experimental apparatus, such as the Leyden jar; and new kinds of measuring instruments, such as the calorimeter, and improved versions of old ones, such as the thermometer. Experiments also produced new concepts, such as the University of Glasgow experimenter Joseph Black's notion of latent heat and Philadelphia intellectual Benjamin Franklin's characterization of electrical fluid as flowing between places of excess and deficit (a concept later reinterpreted in terms of positive and negative charges). Franklin also showed that lightning is electricity in 1752.

The accepted theory of heat in the 18th century viewed it as a kind of fluid, called caloric; although this theory was later shown to be erroneous, a number of scientists adhering to it nevertheless made important discoveries useful in developing the modern theory, including Joseph Black (1728–1799) and Henry Cavendish (1731–1810). Opposed to this caloric theory, which had been developed mainly by the chemists, was the less accepted theory dating from Newton's time that heat is due to the motions of the particles of a substance. This mechanical theory gained support in 1798 from the cannon-boring experiments of Count Rumford (Benjamin Thompson), who found a direct relationship between heat and mechanical energy.

While it was recognized early in the 18th century that finding absolute theories of electrostatic and magnetic force akin to Newton's principles of motion would be an important achievement, none were forthcoming. This impossibility only slowly disappeared as experimental practice became more widespread and more refined in the early years of the 19th century in places such as the newly established Royal Institution in London. Meanwhile, the analytical methods of rational mechanics began to be applied to experimental phenomena, most influentially with the French mathematician Joseph Fourier's analytical treatment of the flow of heat, as published in 1822.[65][66][67] Joseph Priestley proposed an electrical inverse-square law in 1767, and Charles-Augustin de Coulomb introduced the inverse-square law of electrostatics in 1785.

At the end of the century, the members of the French Academy of Sciences had attained clear dominance in the field.[59][68][69][70] At the same time, the experimental tradition established by Galileo and his followers persisted. The Royal Society and the French Academy of Sciences were major centers for the performance and reporting of experimental work. Experiments in mechanics, optics, magnetism, static electricity, chemistry, and physiology were not clearly distinguished from each other during the 18th century, but significant differences in explanatory schemes and, thus, experiment design were emerging. Chemical experimenters, for instance, defied attempts to enforce a scheme of abstract Newtonian forces onto chemical affinities, and instead focused on the isolation and classification of chemical substances and reactions.[71]

19th century

Mechanics

In 1821, William Hamilton began his analysis of Hamilton's characteristic function. In 1835, he stated Hamilton's canonical equations of motion.

In 1813, Peter Ewart supported the idea of the conservation of energy in his paper On the measure of moving force. In 1829, Gaspard Coriolis introduced the terms work (force times distance) and kinetic energy with the meanings they have today. In 1841, Julius Robert von Mayer, an amateur scientist, wrote a paper on the conservation of energy, although his lack of academic training led to its rejection. In 1847, Hermann von Helmholtz formally stated the law of conservation of energy.

Electromagnetism

In 1800, Alessandro Volta invented the electric battery (known as the voltaic pile) and thus improved the way electric currents could be studied. A year later, Thomas Young demonstrated the wave nature of light – which received strong experimental support from the work of Augustin-Jean Fresnel – and the principle of interference. In 1820, Hans Christian Ørsted found that a current-carrying conductor gives rise to a magnetic force surrounding it, and within a week after Ørsted's discovery reached France, André-Marie Ampère discovered that two parallel electric currents will exert forces on each other. In 1821, Michael Faraday built an electricity-powered motor, while Georg Ohm stated his law of electrical resistance in 1826, expressing the relationship between voltage, current, and resistance in an electric circuit.

In 1831, Faraday (and independently Joseph Henry) discovered the reverse effect, the production of an electric potential or current through magnetism – known as electromagnetic induction; these two discoveries are the basis of the electric motor and the electric generator, respectively.

In 1873, James Clerk Maxwell published A Treatise on Electricity and Magnetism, which described the transmission of energy in wave form through a "luminiferous ether", and suggested that light was such a wave. This was confirmed in 1888 when Helmholtz student Heinrich Hertz generated and detected electromagnetic radiation in the laboratory.[72][73][74][75]

Laws of thermodynamics

In the 19th century, the connection between heat and mechanical energy was established quantitatively by Julius Robert von Mayer and James Prescott Joule, who measured the mechanical equivalent of heat in the 1840s. In 1849, Joule published results from his series of experiments (including the paddlewheel experiment) which showed that heat is a form of energy, a fact that was accepted in the 1850s. The relation between heat and energy was important for the development of steam engines, and in 1824 the experimental and theoretical work of Sadi Carnot was published. Carnot captured some of the ideas of thermodynamics in his discussion of the efficiency of an idealized engine. Sadi Carnot's work provided a basis for the formulation of the first law of thermodynamics – a restatement of the law of conservation of energy – which was stated around 1850 by William Thomson, later known as Lord Kelvin, and Rudolf Clausius. Lord Kelvin, who had extended the concept of absolute zero from gases to all substances in 1848, drew upon the engineering theory of Lazare Carnot, Sadi Carnot, and Émile Clapeyron as well as the experimentation of James Prescott Joule on the interchangeability of mechanical, chemical, thermal, and electrical forms of work to formulate the first law.

Kelvin and Clausius also stated the second law of thermodynamics, which was originally formulated in terms of the fact that heat does not spontaneously flow from a colder body to a warmer one. Other formulations followed quickly (for example, the second law was expounded in Thomson and Peter Guthrie Tait's influential work Treatise on Natural Philosophy) and Kelvin in particular understood some of the law's general implications. The kinetic theory of gases – the idea that gases consist of molecules in motion – had been discussed in some detail by Daniel Bernoulli in 1738, but had fallen out of favor, and was revived by Clausius in 1857. In 1850, Hippolyte Fizeau and Léon Foucault measured the speed of light in water and found that it is slower than in air, in support of the wave model of light. In 1852, Joule and Thomson demonstrated that a rapidly expanding gas cools, an effect later named the Joule–Thomson effect or Joule–Kelvin effect. Hermann von Helmholtz put forward the idea of the heat death of the universe in 1854, the same year that Clausius established the importance of dQ/T (Clausius's theorem), though he did not yet name the quantity.

Statistical mechanics

In 1860, James Clerk Maxwell worked out the mathematics of the distribution of velocities of the molecules of a gas, known today as the Maxwell–Boltzmann distribution.

The atomic theory of matter had been proposed again in the early 19th century by the chemist John Dalton and became one of the hypotheses of the kinetic-molecular theory of gases developed by Clausius and James Clerk Maxwell to explain the laws of thermodynamics.

The kinetic theory in turn led to a revolutionary approach to science, the statistical mechanics of Ludwig Boltzmann (1844–1906) and Josiah Willard Gibbs (1839–1903), which studies the statistics of microstates of a system and uses statistics to determine the state of a physical system. Interrelating the statistical likelihood of certain states of organization of these particles with the energy of those states, Clausius reinterpreted the dissipation of energy to be the statistical tendency of molecular configurations to pass toward increasingly likely, increasingly disorganized states (coining the term "entropy" to describe the disorganization of a state). The statistical versus absolute interpretations of the second law of thermodynamics set up a dispute that would last for several decades (producing arguments such as "Maxwell's demon"), and that would not be held to be definitively resolved until the behavior of atoms was firmly established in the early 20th century.[76][77] In 1902, James Jeans found the length scale required for gravitational perturbations to grow in a static nearly homogeneous medium.

Other developments

In 1827, botanist Robert Brown discovered Brownian motion: pollen grains in water undergoing movement resulting from their bombardment by the fast-moving atoms or molecules in the liquid.

In 1834, Carl Jacobi discovered his uniformly rotating self-gravitating ellipsoids (the Jacobi ellipsoid).

In 1834, John Russell observed a nondecaying solitary water wave (soliton) in the Union Canal near Edinburgh, Scotland, and used a water tank to study the dependence of solitary water wave velocities on wave amplitude and water depth. In 1835, Gaspard Coriolis examined theoretically the mechanical efficiency of waterwheels, and deduced the Coriolis effect. In 1842, Christian Doppler proposed the Doppler effect.

In 1851, Léon Foucault showed the Earth's rotation with a huge pendulum (Foucault pendulum).

There were important advances in continuum mechanics in the first half of the century, namely the formulation of laws of elasticity for solids and the discovery of the Navier–Stokes equations for fluids.

20th century: birth of modern physics

Marie Curie (1867–1934) received Nobel prizes in physics (1903) and chemistry (1911).

At the end of the 19th century, physics had evolved to the point at which classical mechanics could cope with highly complex problems involving macroscopic situations; thermodynamics and kinetic theory were well established; geometrical and physical optics could be understood in terms of electromagnetic waves; and the conservation laws for energy and momentum (and mass) were widely accepted. So profound were these and other developments that it was generally accepted that all the important laws of physics had been discovered and that, henceforth, research would be concerned with clearing up minor problems and particularly with improvements of method and measurement.

However, around 1900 serious doubts arose about the completeness of the classical theories – the triumph of Maxwell's theories, for example, was undermined by inadequacies that had already begun to appear – and their inability to explain certain physical phenomena, such as the energy distribution in blackbody radiation and the photoelectric effect, while some of the theoretical formulations led to paradoxes when pushed to the limit. Prominent physicists such as Hendrik Lorentz, Emil Cohn, Ernst Wiechert and Wilhelm Wien believed that some modification of Maxwell's equations might provide the basis for all physical laws. These shortcomings of classical physics were never to be resolved and new ideas were required. At the beginning of the 20th century, a major revolution shook the world of physics, which led to a new era, generally referred to as modern physics.[78]

Radiation experiments

In the 19th century, experimenters began to detect unexpected forms of radiation: Wilhelm Röntgen caused a sensation with his discovery of X-rays in 1895; in 1896, Henri Becquerel discovered that certain kinds of matter emit radiation of their own accord. In 1897, J. J. Thomson discovered the electron, and new radioactive elements found by Marie and Pierre Curie raised questions about the supposedly indestructible atom and the nature of matter. Marie and Pierre coined the term "radioactivity" to describe this property of matter, and isolated the radioactive elements radium and polonium. Ernest Rutherford and Frederick Soddy identified two of Becquerel's forms of radiation with electrons and the element helium. Rutherford identified and named two types of radioactivity and in 1911 interpreted experimental evidence as showing that the atom consists of a dense, positively charged nucleus surrounded by negatively charged electrons. Classical theory, however, predicted that this structure should be unstable. Classical theory had also failed to explain successfully two other experimental results that appeared in the late 19th century. One of these was the demonstration by Albert A. Michelson and Edward W. Morley – known as the Michelson–Morley experiment – which showed there did not seem to be a preferred frame of reference, at rest with respect to the hypothetical luminiferous ether, for describing electromagnetic phenomena. Studies of radiation and radioactive decay continued to be a preeminent focus for physical and chemical research through the 1930s, when the discovery of nuclear fission by Lise Meitner and Otto Frisch opened the way to the practical exploitation of what came to be called "atomic" energy.

Albert Einstein's theory of relativity

In 1905, a 26-year-old German physicist named Albert Einstein (then a patent clerk in Bern, Switzerland) showed how measurements of time and space are affected by motion between an observer and what is being observed. Einstein's radical theory of relativity revolutionized science. Although Einstein made many other important contributions to science, the theory of relativity alone is one of the greatest intellectual achievements of all time. Although the concept of relativity was not introduced by Einstein, he recognised that the speed of light in vacuum is constant, i.e., the same for all observers, and an absolute upper limit to speed. This does not impact a person's day-to-day life since most objects travel at speeds much slower than light speed. For objects travelling near light speed, however, the theory of relativity shows that clocks associated with those objects will run more slowly and that the objects shorten in length according to measurements of an observer on Earth. Einstein also derived the equation E = mc², which expresses the equivalence of mass and energy.
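The size of the effects described above can be estimated with the Lorentz factor, γ = 1/√(1 − v²/c²), the standard quantity of special relativity (it is not named in the text above). The sketch below is illustrative only; the function names are assumptions chosen for this example.

```python
import math

C = 299_792_458.0  # speed of light in vacuum, m/s (exact by SI definition)


def lorentz_factor(v: float) -> float:
    """Lorentz factor for a relative speed v (m/s), valid for |v| < c."""
    if abs(v) >= C:
        raise ValueError("speed must be below the speed of light")
    return 1.0 / math.sqrt(1.0 - (v / C) ** 2)


def dilated_time(proper_time: float, v: float) -> float:
    """Interval measured by the Earth observer for a moving clock's interval."""
    return proper_time * lorentz_factor(v)


def contracted_length(proper_length: float, v: float) -> float:
    """Length of a moving object as measured by the Earth observer."""
    return proper_length / lorentz_factor(v)


def rest_energy(mass_kg: float) -> float:
    """Energy equivalent of a mass at rest, E = m * c**2, in joules."""
    return mass_kg * C ** 2


# At 60% of light speed the factor is 1.25: a moving clock's second lasts
# 1.25 s for the Earth observer, and a 1 m rod measures 0.8 m.
print(round(lorentz_factor(0.6 * C), 6))  # 1.25
```

At everyday speeds the factor differs from 1 by far less than any ordinary measurement could detect, which is why the effects go unnoticed in day-to-day life.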

Special relativity

Einstein argued that the speed of light was a constant in all inertial reference frames and that electromagnetic laws should remain valid independent of reference frame – assertions which rendered the ether "superfluous" to physical theory, and that held that observations of time and length varied relative to how the observer was moving with respect to the object being measured (what came to be called the "special theory of relativity"). It also followed that mass and energy were interchangeable quantities according to the equation E = mc². In another paper published the same year, Einstein asserted that electromagnetic radiation was transmitted in discrete quantities ("quanta"), according to a constant that the theoretical physicist Max Planck had posited in 1900 to arrive at an accurate theory for the distribution of blackbody radiation – an assumption that explained the strange properties of the photoelectric effect.

The special theory of relativity is a formulation of the relationship between physical observations and the concepts of space and time. The theory arose out of contradictions between electromagnetism and Newtonian mechanics and had great impact on both those areas. The original historical issue was whether it was meaningful to discuss the electromagnetic wave-carrying "ether" and motion relative to it and also whether one could detect such motion, as was unsuccessfully attempted in the Michelson–Morley experiment. Einstein demolished these questions and the ether concept in his special theory of relativity. However, his basic formulation does not involve detailed electromagnetic theory. It arises out of the question: "What is time?" Newton, in the Principia (1687), had given an unambiguous answer: "Absolute, true, and mathematical time, of itself, and from its own nature, flows equably without relation to anything external, and by another name is called duration." This definition is basic to all classical physics.

Einstein had the genius to question it, and found that it was incomplete. Instead, each "observer" necessarily makes use of his or her own scale of time, and for two observers in relative motion, their time-scales will differ. This induces a related effect on position measurements. Space and time become intertwined concepts, fundamentally dependent on the observer. Each observer presides over his or her own space-time framework or coordinate system. There being no absolute frame of reference, all observers of given events make different but equally valid (and reconcilable) measurements. What remains absolute is stated in Einstein's relativity postulate: "The basic laws of physics are identical for two observers who have a constant relative velocity with respect to each other."

Special relativity had a profound effect on physics: started as a rethinking of the theory of electromagnetism, it found a new symmetry law of nature, now called Poincaré symmetry, that replaced Galilean symmetry.

Special relativity exerted another long-lasting effect on dynamics. Although initially it was credited with the "unification of mass and energy", it became evident that relativistic dynamics established a distinction between rest mass, which is an invariant (observer independent) property of a particle or system of particles, and the energy and momentum of a system. The latter two are separately conserved in all situations but not invariant with respect to different observers. The term mass in particle physics underwent a semantic change, and since the late 20th century it almost exclusively denotes the rest (or invariant) mass.

General relativity

By 1916, Einstein was able to generalize this further, to deal with all states of motion including non-uniform acceleration, which became the general theory of relativity. In this theory, Einstein also specified a new concept, the curvature of space-time, which described the gravitational effect at every point in space. The curvature of space-time replaced Newton's universal law of gravitation. According to Einstein, gravitational force in the normal sense is an illusion caused by the geometry of space. The presence of a mass causes a curvature of space-time in the vicinity of the mass, and this curvature dictates the space-time path that all freely-moving objects follow. It was also predicted from this theory that light should be subject to gravity – all of which was verified experimentally. This aspect of relativity explained the phenomena of light bending around the sun, predicted black holes as well as properties of the Cosmic microwave background radiation – a discovery rendering fundamental anomalies in the classic Steady-State hypothesis. For his work on relativity, the photoelectric effect and blackbody radiation, Einstein received the Nobel Prize in 1921.

The gradual acceptance of Einstein's theories of relativity and the quantized nature of light transmission, and of Niels Bohr's model of the atom created as many problems as they solved, leading to a full-scale effort to reestablish physics on new fundamental principles. Expanding relativity to cases of accelerating reference frames (the "general theory of relativity") in the 1910s, Einstein posited an equivalence between the inertial force of acceleration and the force of gravity, leading to the conclusion that space is curved and finite in size, and the prediction of such phenomena as gravitational lensing and the distortion of time in gravitational fields.

Quantum mechanics

Although relativity resolved the electromagnetic phenomena conflict demonstrated by Michelson and Morley, a second theoretical problem was the explanation of the distribution of electromagnetic radiation emitted by a black body; experiment showed that at shorter wavelengths, toward the ultraviolet end of the spectrum, the energy approached zero, but classical theory predicted it should become infinite. This glaring discrepancy, known as the ultraviolet catastrophe, was solved by the new theory of quantum mechanics. Quantum mechanics is the theory of atoms and subatomic systems. Approximately the first 30 years of the 20th century represent the time of the conception and evolution of the theory. The basic ideas of quantum theory were introduced in 1900 by Max Planck (1858–1947), who was awarded the Nobel Prize for Physics in 1918 for his discovery of the quantized nature of energy. The quantum theory (which previously relied on the "correspondence" at large scales between the quantized world of the atom and the continuities of the "classical" world) was accepted when the Compton Effect established that light carries momentum and can scatter off particles, and when Louis de Broglie asserted that matter can be seen as behaving as a wave in much the same way as electromagnetic waves behave like particles (wave–particle duality).
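The ultraviolet catastrophe can be made concrete by comparing the classical prediction with Planck's formula. The sketch below assumes the classical theory in question is the Rayleigh–Jeans law, which the text above does not name; the function names and the 5000 K example temperature are likewise illustrative choices.

```python
import math

H = 6.62607015e-34   # Planck constant, J*s
K_B = 1.380649e-23   # Boltzmann constant, J/K
C = 299_792_458.0    # speed of light in vacuum, m/s


def rayleigh_jeans(wavelength: float, temperature: float) -> float:
    """Classical spectral radiance; grows without bound as wavelength -> 0."""
    return 2.0 * C * K_B * temperature / wavelength ** 4


def planck(wavelength: float, temperature: float) -> float:
    """Planck spectral radiance; falls toward zero at short wavelengths."""
    x = H * C / (wavelength * K_B * temperature)
    if x > 700.0:  # exp(x) would overflow a float; the radiance is ~0 here
        return 0.0
    return 2.0 * H * C ** 2 / wavelength ** 5 / math.expm1(x)


# Compare the two laws for a 5000 K body, from far infrared to ultraviolet.
for wavelength in (1e-3, 1e-5, 1e-6, 1e-7):
    print(wavelength, rayleigh_jeans(wavelength, 5000.0), planck(wavelength, 5000.0))
```

At long wavelengths the two expressions nearly coincide, while toward the ultraviolet the classical value keeps climbing and the Planck value collapses, matching the experimental behavior described above.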

In 1905, Einstein used the quantum theory to explain the photoelectric effect, and in 1913 the Danish physicist Niels Bohr used the same constant to explain the stability of Rutherford's atom as well as the frequencies of light emitted by hydrogen gas. The quantized theory of the atom gave way to a full-scale quantum mechanics in the 1920s. New principles of a "quantum" rather than a "classical" mechanics, formulated in matrix form by Werner Heisenberg, Max Born, and Pascual Jordan in 1925, were based on the probabilistic relationship between discrete "states" and denied the possibility of causality. Quantum mechanics was extensively developed by Heisenberg, Wolfgang Pauli, Paul Dirac, and Erwin Schrödinger, who established an equivalent theory based on waves in 1926; but Heisenberg's 1927 "uncertainty principle" (indicating the impossibility of precisely and simultaneously measuring position and momentum) and the "Copenhagen interpretation" of quantum mechanics (named after Bohr's home city) continued to deny the possibility of fundamental causality, though opponents such as Einstein would metaphorically assert that "God does not play dice with the universe".[79] The new quantum mechanics became an indispensable tool in the investigation and explanation of phenomena at the atomic level. Also in the 1920s, the Indian scientist Satyendra Nath Bose's work on photons and quantum mechanics provided the foundation for Bose–Einstein statistics and the theory of the Bose–Einstein condensate.

The spin–statistics theorem established that any particle in quantum mechanics may be either a boson (statistically Bose–Einstein) or a fermion (statistically Fermi–Dirac). It was later found that the fundamental gauge bosons transmit forces; the photon, for example, transmits electromagnetism.

Fermions are particles "like electrons and nucleons" and are the usual constituents of matter. Fermi–Dirac statistics later found numerous other uses, from astrophysics (see Degenerate matter) to semiconductor design.

Division into classical and modern

The conceptual differences between physics theories discussed in the 19th century and those that were most historically prominent in the first decades of the 20th century led to a characterization of the earlier sciences as "classical physics", while the work based on quantum and relativity theories became known as "modern physics". Initially applied to mechanics, as in "classical mechanics", the divide eventually came to characterize quantum and relativistic effects.[80]: 411 This characterization was driven initially by physicists like Max Planck and Hendrik Lorentz, established scientists who nevertheless saw issues that established theories could not explain. Their involvement in and contributions to the 1911 Solvay Conference led to the introduction of this split as a concept.[81]: 558

This division is reflected in the titles of many physics textbooks. For example, the preface of Goldstein's Classical mechanics explains why the topic is still relevant for physics students.[82] In Concepts of Modern Physics Arthur Beiser starts with a definition of modern physics:[83]

Modern physics began in 1900 with Max Planck’s discovery of the role of energy quantization in blackbody radiation, a revolutionary idea soon followed by Albert Einstein’s equally revolutionary theory of relativity and quantum theory of light.

Kenneth Krane's Modern physics begins a text on quantum and relativity theories with a few pages on deficiencies of classical physics.[84]: 3 E.T. Whittaker's two-volume History of the Theories of Aether and Electricity subtitles volume one The Classical Theories and volume two The Modern Theories (1900–1926).[85]

Contemporary physics

Quantum field theory

As the philosophically inclined continued to debate the fundamental nature of the universe, quantum theories continued to be produced, beginning with Paul Dirac's formulation of a relativistic quantum theory in 1928. However, attempts to quantize electromagnetic theory entirely were stymied throughout the 1930s by theoretical formulations yielding infinite energies. This situation was not considered adequately resolved until after World War II, when Julian Schwinger, Richard Feynman and Sin-Itiro Tomonaga independently posited the technique of renormalization, which allowed for an establishment of a robust quantum electrodynamics (QED).[86]

Meanwhile, new theories of fundamental particles proliferated with the rise of the idea of the quantization of fields through "exchange forces" regulated by an exchange of short-lived "virtual" particles, which were allowed to exist according to the laws governing the uncertainties inherent in the quantum world. Notably, Hideki Yukawa proposed that the positive charges of the nucleus were kept together courtesy of a powerful but short-range force mediated by a particle with a mass between that of the electron and proton. This particle, the "pion", was identified in 1947 as part of what became a slew of particles discovered after World War II. Initially, such particles were found as ionizing radiation left by cosmic rays, but increasingly came to be produced in newer and more powerful particle accelerators.[87]

Outside particle physics, significant advances of the time were:

- the invention of the laser (1964 Nobel Prize in Physics);

- the theoretical and experimental research of superconductivity, especially the phenomenological Ginzburg–Landau theory of superconductivity by Vitaly Ginzburg and Lev Landau (Landau received the 1962 Nobel Prize in Physics for his theories of condensed matter; Ginzburg shared the 2003 prize) and, later, its microscopic explanation via Cooper pairs (1972 Nobel Prize in Physics). The Cooper pair was an early example of quasiparticles.

Unified field theories

Einstein believed that all fundamental interactions in nature could be explained by a single theory. Unified field theories were numerous attempts to "merge" several interactions. One of many formulations of such theories (as well as of field theories in general) is a gauge theory, a generalization of the idea of symmetry. Eventually the Standard Model (see below) succeeded in unifying the strong, weak, and electromagnetic interactions. All attempts to unify gravitation with the other interactions have so far failed.

Particle physics and the Standard Model

After Chien-Shiung Wu's experiment demonstrated that parity is violated in weak interactions, a series of discoveries followed.[89] The interaction of these particles by scattering and decay provided a key to new fundamental quantum theories. Murray Gell-Mann and Yuval Ne'eman brought some order to these new particles by classifying them according to certain qualities, beginning with what Gell-Mann referred to as the "Eightfold Way". Its further development, the quark model, at first seemed inadequate to describe strong nuclear forces, allowing the temporary rise of competing theories such as the S-Matrix. The establishment of quantum chromodynamics in the 1970s finalized a set of fundamental and exchange particles, which allowed for the establishment of a "standard model" based on the mathematics of gauge invariance. This model successfully described all forces except for gravitation, and it remains generally accepted within its domain of application.[79]

The Standard Model, based on the Yang–Mills theory,[90] groups the electroweak interaction theory and quantum chromodynamics into a structure denoted by the gauge group SU(3)×SU(2)×U(1). The formulation of the unification of the electromagnetic and weak interactions in the standard model is due to Abdus Salam, Steven Weinberg and, subsequently, Sheldon Glashow. Electroweak theory was later confirmed experimentally (by observation of neutral weak currents),[91][92][93][94] and recognized by the 1979 Nobel Prize in Physics.[95]

Since the 1970s, fundamental particle physics has provided insights into early universe cosmology, particularly the Big Bang theory proposed as a consequence of Einstein's general theory of relativity. However, starting in the 1990s, astronomical observations have also provided new challenges, such as the need for new explanations of galactic stability ("dark matter") and the apparent acceleration in the expansion of the universe ("dark energy").

While accelerators have confirmed most aspects of the Standard Model by detecting expected particle interactions at various collision energies, no theory reconciling general relativity with the Standard Model has yet been found, although supersymmetry and string theory were believed by many theorists to be promising avenues forward. The Large Hadron Collider, however, which began operating in 2008, has not found any evidence supporting supersymmetry or string theory.[96]

Cosmology

Cosmology may be said to have become a serious research question with the publication of Einstein's General Theory of Relativity in 1915, although it did not enter the scientific mainstream until the period known as the "Golden age of general relativity".

About a decade later, in the 1920s, in the midst of what was dubbed the "Great Debate", Edwin Hubble and Vesto Slipher discovered the expansion of the universe by measuring the redshifts in the spectra of galactic nebulae. Using Einstein's general relativity, Georges Lemaître and George Gamow formulated what would become known as the Big Bang theory. A rival, called the steady state theory, was devised by Fred Hoyle, Thomas Gold, Jayant Narlikar and Hermann Bondi.

Cosmic microwave background radiation was verified in the 1960s by Arno Allan Penzias and Robert Woodrow Wilson, and this discovery favoured the big bang at the expense of the steady state scenario. Later work by George Smoot et al. (1989), among other contributors, using data from the Cosmic Background Explorer (COBE) and the Wilkinson Microwave Anisotropy Probe (WMAP) satellites, refined these observations. The 1980s (the same decade as the COBE measurements) also saw the proposal of inflation theory by Alan Guth.

Recently the problems of dark matter and dark energy have risen to the top of the cosmology agenda.

Higgs boson

On July 4, 2012, physicists working at CERN's Large Hadron Collider announced that they had discovered a new subatomic particle greatly resembling the Higgs boson, a potential key to an understanding of why elementary particles have mass and indeed to the existence of diversity and life in the universe.[97] For now, some physicists are calling it a "Higgslike" particle.[97] Joe Incandela, of the University of California, Santa Barbara, said, "It's something that may, in the end, be one of the biggest observations of any new phenomena in our field in the last 30 or 40 years, going way back to the discovery of quarks, for example."[97] Michael Turner, a cosmologist at the University of Chicago and the chairman of the physics center board, said:

"This is a big moment for particle physics and a crossroads – will this be the high water mark or will it be the first of many discoveries that point us toward solving the really big questions that we have posed?"

— Michael Turner, University of Chicago[97]

Peter Higgs was one of six physicists, working in three independent groups, who, in 1964, invented the notion of the Higgs field ("cosmic molasses"). The others were Tom Kibble of Imperial College, London; Carl Hagen of the University of Rochester; Gerald Guralnik of Brown University; and François Englert and Robert Brout, both of Université libre de Bruxelles.[97]

Although they have never been seen, Higgslike fields play an important role in theories of the universe and in string theory. Under certain conditions, according to the strange accounting of Einsteinian physics, they can become suffused with energy that exerts an antigravitational force. Such fields have been proposed as the source of an enormous burst of expansion, known as inflation, early in the universe and, possibly, as the secret of the dark energy that now seems to be accelerating the expansion of the universe.[97]

Physical sciences

With increased accessibility to and elaboration upon advanced analytical techniques in the 19th century, physics was defined as much, if not more, by those techniques than by the search for universal principles of motion and energy, and the fundamental nature of matter. Fields such as acoustics, geophysics, astrophysics, aerodynamics, plasma physics, low-temperature physics, and solid-state physics joined optics, fluid dynamics, electromagnetism, and mechanics as areas of physical research. In the 20th century, physics also became closely allied with such fields as electrical, aerospace and materials engineering, and physicists began to work in government and industrial laboratories as much as in academic settings. Following World War II, the population of physicists increased dramatically, and came to be centered on the United States, while, in more recent decades, physics has become a more international pursuit than at any time in its previous history.

Articles on the history of physics

On branches of physics

- History of astronomy (timeline)

- History of condensed matter (timeline)

- History of computational physics (timeline)

- History of electromagnetic theory (timeline)

- History of geophysics

- History of gravity, spacetime and cosmology

- History of classical mechanics (timeline)

- History of nuclear physics

- History of quantum mechanics (timeline)

- Atomic theory

- History of molecular theory

- History of quantum field theory

- History of quantum information (timeline)

- History of subatomic physics (timeline)

- History of thermodynamics (timeline)

On specific discoveries

- Discovery of cosmic microwave background radiation

- History of graphene

- First observation of gravitational waves

- Subatomic particles (timeline)